Detecting Large-Scale Urban Land Cover Changes from Very High Resolution Remote Sensing Images Using CNN-Based Classification

Abstract

1. Introduction

2. Methodology

2.1. Network for Land Cover Segmentation

2.2. Change Detection

3. Study Site

4. Experiments and Results

4.1. Experimental Design

4.2. Classification Results

4.3. Change Detection Results

5. Discussion

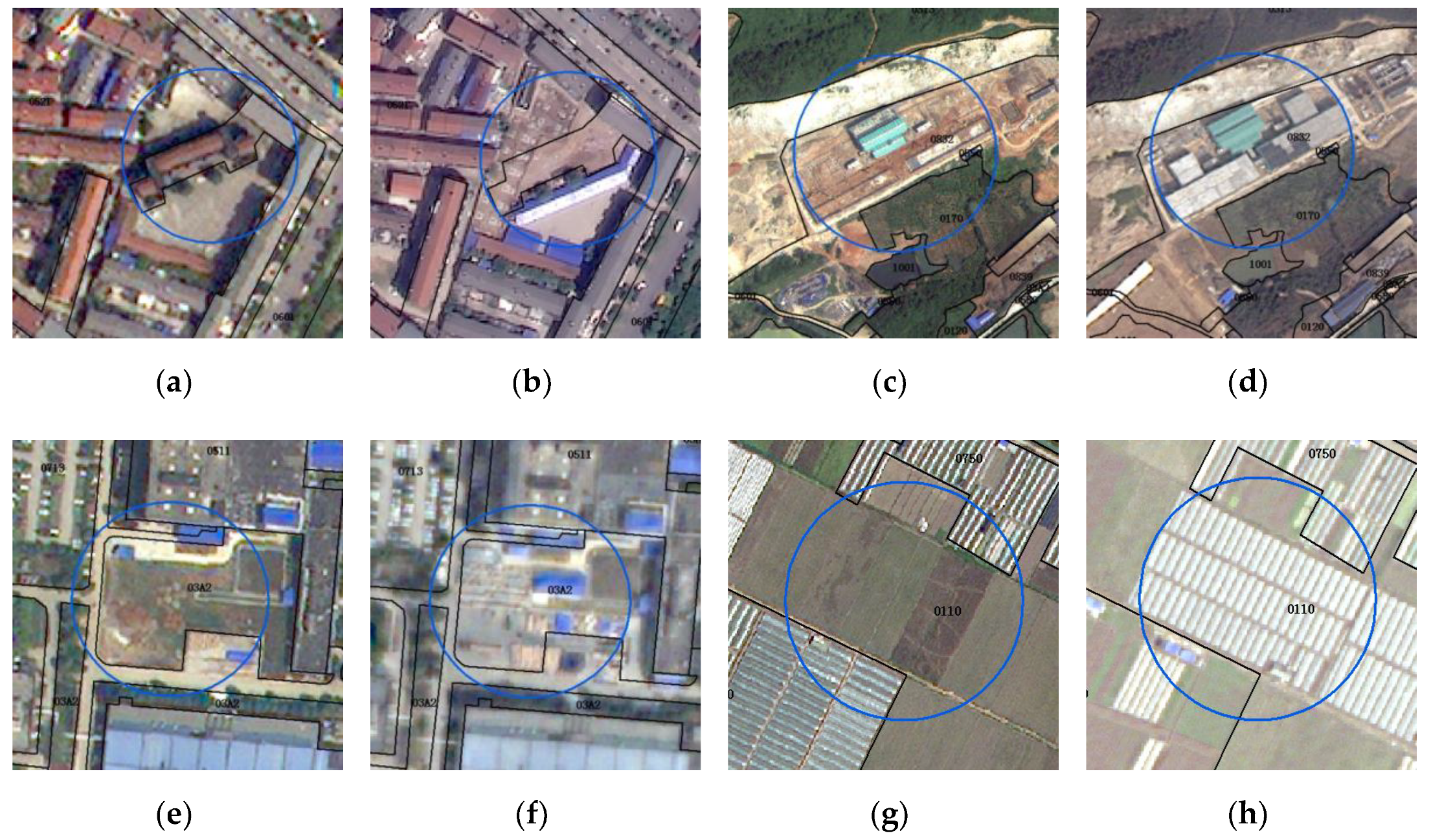

5.1. Interpretation Inconsistency between a Deep Learning Algorithm and a Human

5.2. Usage of Polygon-Based and Object-Based Change Maps

6. Conclusions

Author Contributions

Acknowledgments

Conflicts of Interest

| Method | Crop | Vegetation | Building | Railway and Road | Structure | Construction Site | Water | Kappa | OA |
|---|---|---|---|---|---|---|---|---|---|
| FCN-16 | 0.606 | 0.531 | 0.719 | 0.667 | 0.661 | 0.632 | 0.883 | 0.666 | 0.655 |
| U-Net | 0.647 | 0.594 | 0.722 | 0.711 | 0.678 | 0.717 | 0.888 | 0.704 | 0.695 |
| Dense-Net | 0.664 | 0.605 | 0.748 | 0.678 | 0.709 | 0.691 | 0.884 | 0.711 | 0.702 |
| DeepLabv3 | 0.673 | 0.627 | 0.740 | 0.742 | 0.686 | 0.715 | 0.885 | 0.720 | 0.711 |
| SR-FCN | 0.663 | 0.610 | 0.761 | 0.776 | 0.714 | 0.708 | 0.889 | 0.723 | 0.713 |
| Ours | 0.685 | 0.628 | 0.779 | 0.786 | 0.756 | 0.720 | 0.896 | 0.741 | 0.732 |

| Type | Crop | Tree and Grass | Building | Railway and Road | Structure | Construction Site | Water |
|---|---|---|---|---|---|---|---|
| Crop | 0.723 | 0.111 | 0.011 | 0.012 | 0.033 | 0.079 | 0.031 |
| Tree and grass | 0.107 | 0.753 | 0.026 | 0.018 | 0.006 | 0.058 | 0.032 |
| Building | 0.016 | 0.085 | 0.796 | 0.012 | 0.058 | 0.029 | 0.004 |
| Railway and road | 0.048 | 0.037 | 0.075 | 0.678 | 0.078 | 0.071 | 0.013 |
| Structure | 0.072 | 0.089 | 0.082 | 0.024 | 0.645 | 0.071 | 0.017 |
| Construction site | 0.044 | 0.095 | 0.062 | 0.037 | 0.026 | 0.730 | 0.006 |
| Water | 0.071 | 0.056 | 0.007 | 0.008 | 0.021 | 0.009 | 0.828 |

Overall Accuracy = 0.732; Kappa = 0.741

| Type | Crop | Tree and Grass | Building | Railway and Road | Structure | Construction Site | Water |
|---|---|---|---|---|---|---|---|
| Crop | 0.718 | 0.147 | 0.006 | 0.007 | 0.033 | 0.030 | 0.059 |
| Tree and grass | 0.086 | 0.626 | 0.068 | 0.058 | 0.063 | 0.068 | 0.031 |
| Building | 0.011 | 0.054 | 0.803 | 0.021 | 0.061 | 0.050 | 0.001 |
| Railway and road | 0.033 | 0.072 | 0.037 | 0.743 | 0.059 | 0.051 | 0.007 |
| Structure | 0.053 | 0.077 | 0.204 | 0.098 | 0.495 | 0.062 | 0.010 |
| Construction site | 0.074 | 0.052 | 0.052 | 0.028 | 0.039 | 0.732 | 0.023 |
| Water | 0.047 | 0.053 | 0.002 | 0.002 | 0.006 | 0.008 | 0.880 |
| Precision | 0.703 | 0.579 | 0.686 | 0.776 | 0.655 | 0.732 | 0.870 |

Overall Accuracy = 0.766; Kappa = 0.744

| Pixel | Precision | Recall | F1 Score |
|---|---|---|---|
| Unchanged | 0.982 | 0.978 | 0.980 |
| Changed | 0.677 | 0.378 | 0.485 |

Overall Accuracy = 0.963; Kappa = 0.329

| Type | Number | GT | Predicted | Recall | Precision | Omission | F1 |
|---|---|---|---|---|---|---|---|
| Polygon | 65,438 | 4,230 | 4,609 | 63.4% | 58.1% | 36.6% | 60.6% |
| Object | / | 1,839 | 2,392 | 96.4% | 74.1% | 3.6% | 83.8% |
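
The F1 and omission columns in the two change detection tables above can be cross-checked from the reported precision and recall. The sketch below assumes the standard definitions, with F1 as the harmonic mean of precision and recall and omission as one minus recall; these definitions are an assumption, not taken from the text here. The helper names `f1_score` and `omission_rate` are hypothetical, and the numbers are copied from the tables.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (assumed definition of F1)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def omission_rate(recall: float) -> float:
    """Share of true changes that were missed (assumed to be 1 - recall)."""
    return 1.0 - recall


# (precision, recall, reported F1), copied from the tables above.
reported = {
    "pixel, unchanged": (0.982, 0.978, 0.980),
    "pixel, changed": (0.677, 0.378, 0.485),
    "polygon": (0.581, 0.634, 0.606),
    "object": (0.741, 0.964, 0.838),
}

for name, (p, r, f1) in reported.items():
    print(
        f"{name}: F1 = {f1_score(p, r):.3f} (reported {f1:.3f}), "
        f"omission = {omission_rate(r):.3f}"
    )
```

Under these assumptions the recomputed values agree with the reported ones to three decimals. The gap between the polygon-level and object-level rows comes almost entirely from recall (63.4% against 96.4%).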

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Zhang, C.; Wei, S.; Ji, S.; Lu, M. Detecting Large-Scale Urban Land Cover Changes from Very High Resolution Remote Sensing Images Using CNN-Based Classification. ISPRS Int. J. Geo-Inf. 2019, 8, 189. https://doi.org/10.3390/ijgi8040189
