Using Eye Tracking to Explore the Guidance and Constancy of Visual Variables in 3D Visualization

Abstract

1. Introduction

2. Background and Related Work

3. Methods and Materials

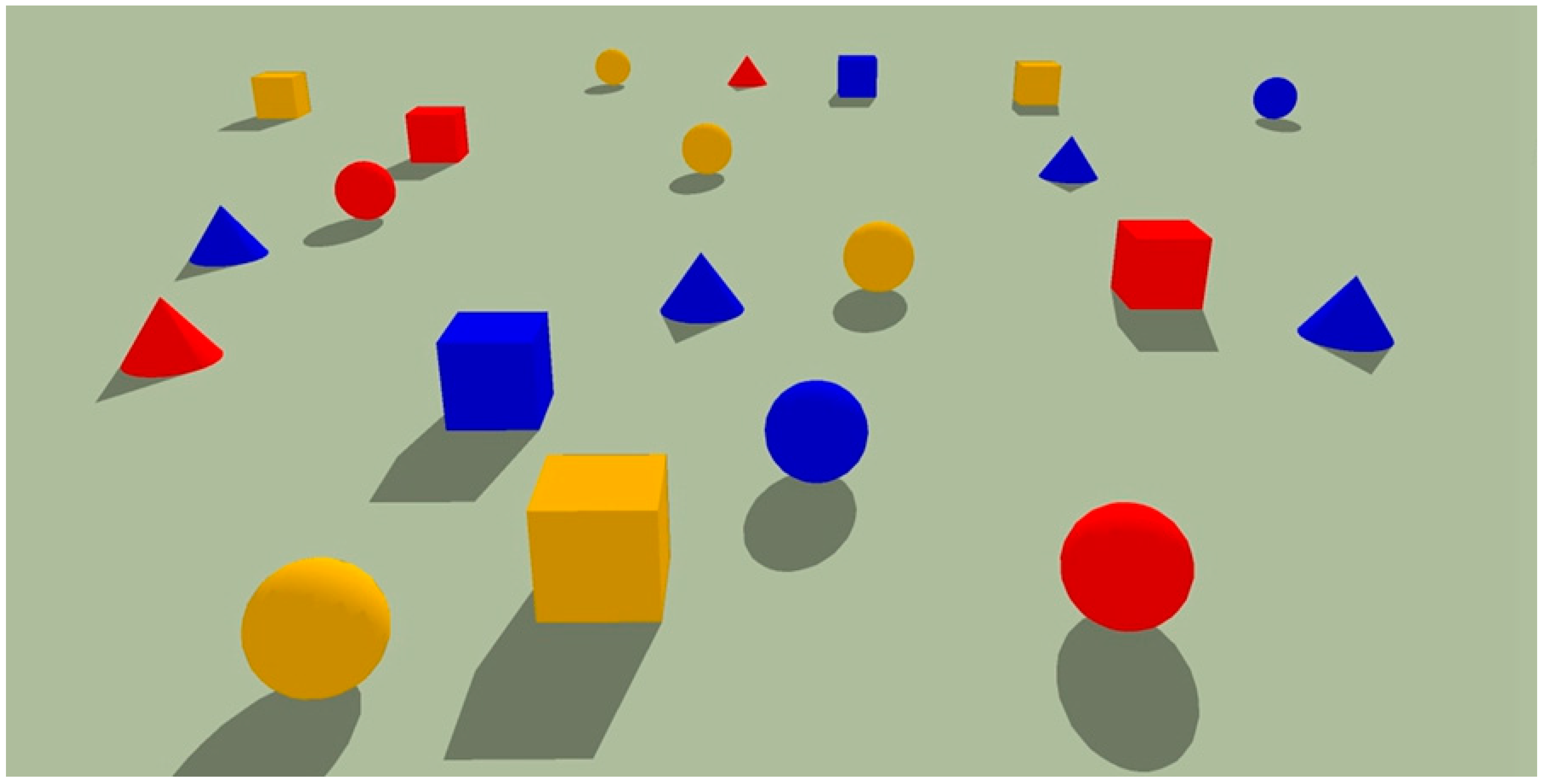

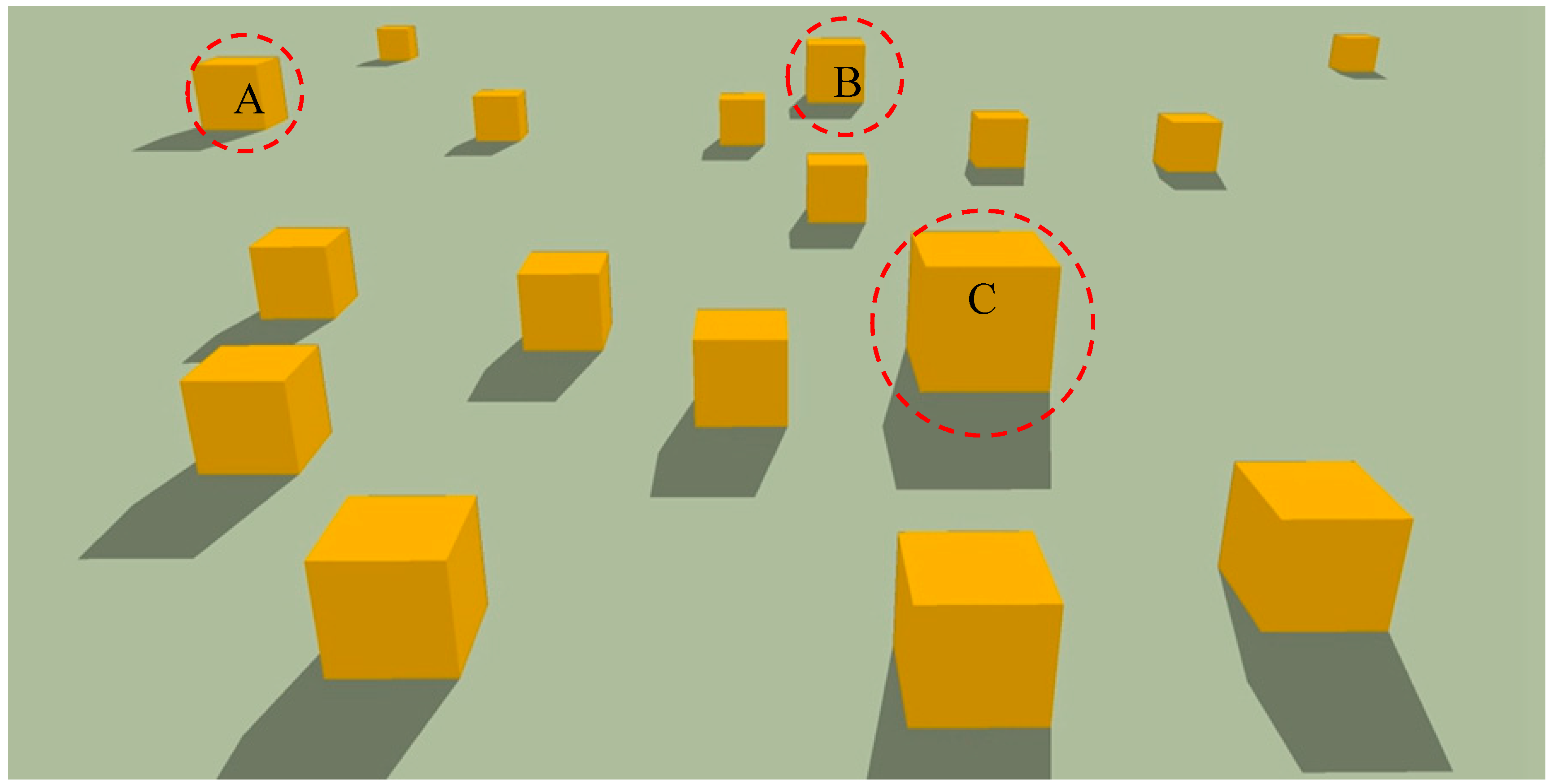

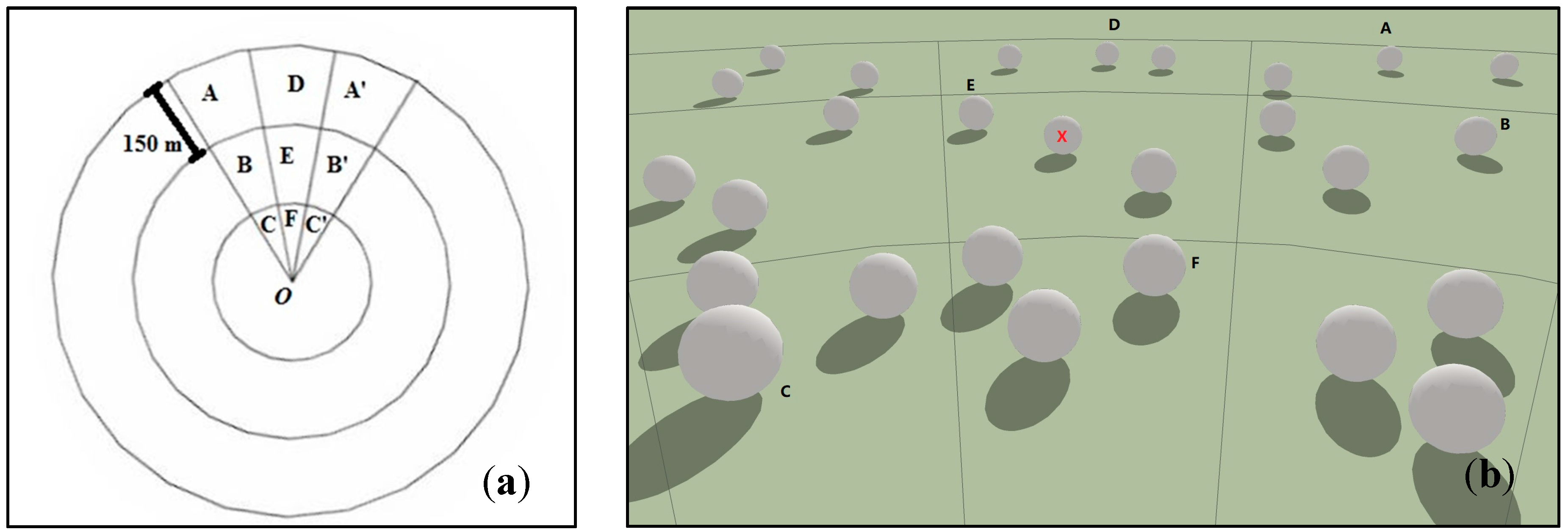

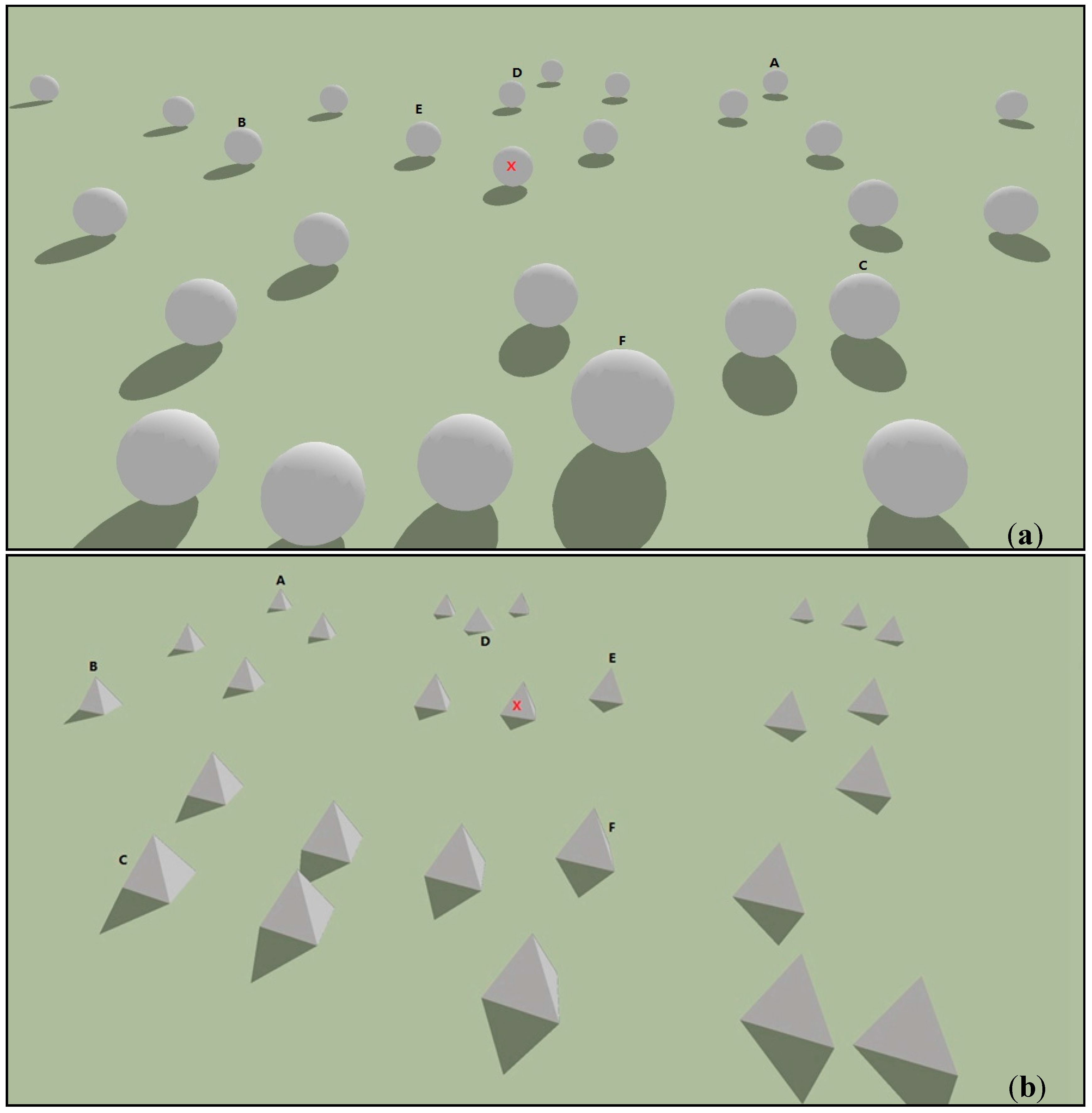

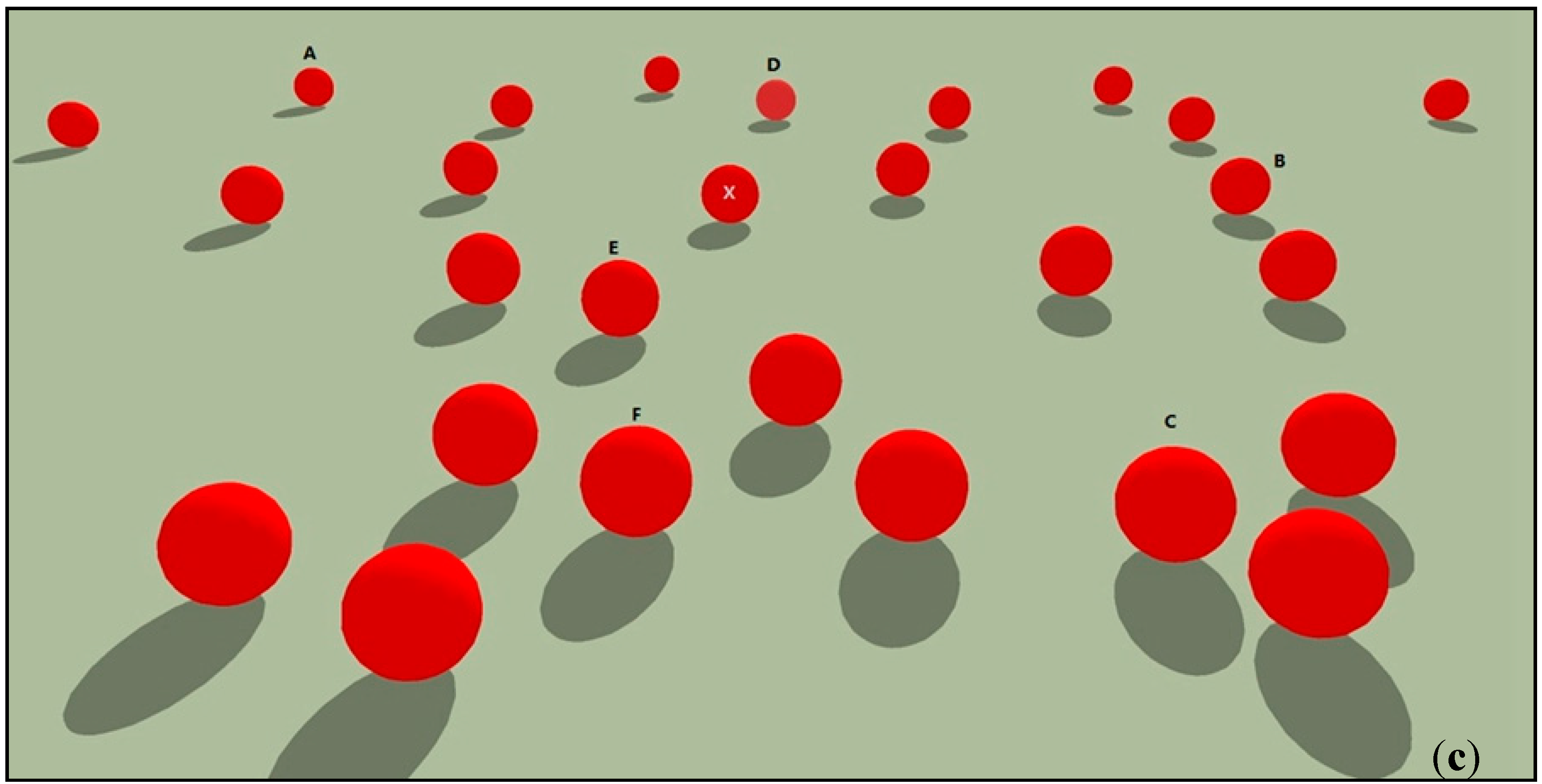

3.1. Experimental Design

3.2. Participants

3.3. Apparatus

3.4. Materials

3.5. Procedures

3.6. Analysis Framework

3.6.1. Guidance

3.6.2. Constancy

4. Results

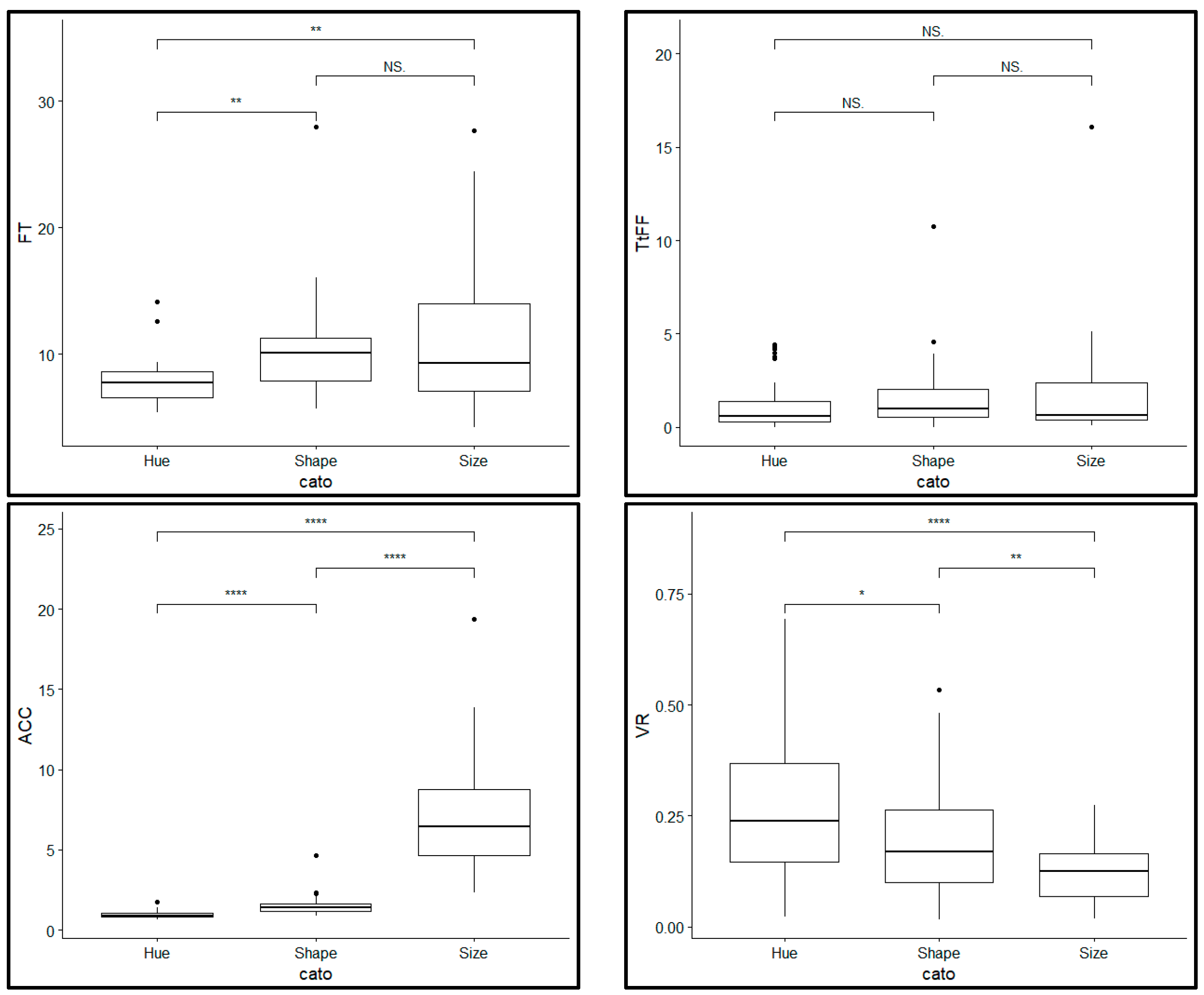

4.1. Guidance Task

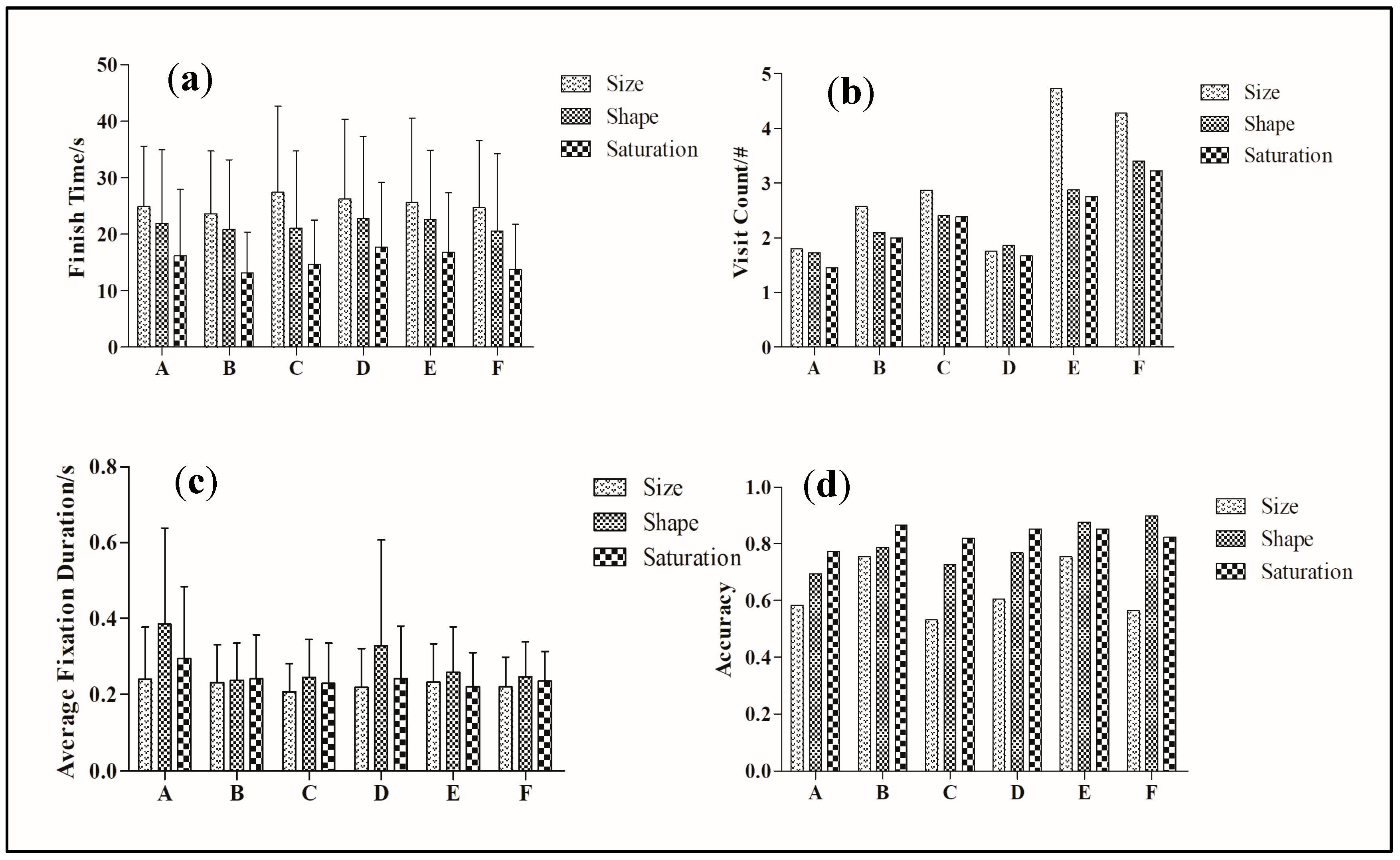

4.2. Constancy Task

4.2.1. Finish Time

4.2.2. Average Fixation Duration

4.2.3. Visit Count

4.2.4. Accuracy

5. Discussion

5.1. Guidance for Hue, Size and Shape

5.2. Constancy of Saturation, Size and Shape

6. Summary and Future Work

Supplementary Materials

Acknowledgments

Author Contributions

Conflicts of Interest

| Experiment | Attribute | Task | Number of Repetitions |
|---|---|---|---|
| Exp. 1.1 | Shape Guidance | Find and click on all the spheres (G1–G2)/cones (G3–G4)/cubes (G5–G6). | 1 |
| Exp. 1.2 | Color Guidance | Find and click on all the red (G1–G2)/blue (G3–G4)/yellow (G5–G6) objects. | 1 |
| Exp. 1.3 | Size Guidance | Find and click on all the cubes (1 or many) that are larger than the others. | 1 |
| Exp. 2.1 | Size Constancy | Compare the sizes of A–F with that of X and tell the experimenter whether they are the same size as X. | 6 |
| Exp. 2.2 | Shape Constancy | Compare the shapes of A–F with that of X and tell the experimenter whether they are the same shape as X. | 6 |
| Exp. 2.3 | Color Constancy | Compare the colors of A–F with that of X and tell the experimenter whether they are the same color as X. | 6 |

| Experiment | Index Abbr. (Full Name, Unit) | Interpretation |
|---|---|---|
| Exp. 1 (Guidance) | FT (Finish time, s) | Time needed to complete the task |
| Exp. 1 (Guidance) | TtFF (Time to first fixation, s) | Time from the start of the task to when the subject fixated on the AOI group for the first time |
| Exp. 1 (Guidance) | ACC (Average time needed for a correct click, s) | FT/the number of correctly judged objects |
| Exp. 1 (Guidance) | VR (Visit ratio) | Visit duration for the AOI group/total visit duration |
| Exp. 1 (Guidance) | AC (Accuracy) | Number of correct judgments/number of clicks |
| Exp. 2 (Constancy) | FT (Finish time, s) | Time to complete the task |
| Exp. 2 (Constancy) | AFD (Average fixation duration, s) | Average of all durations of fixation on one AOI |
| Exp. 2 (Constancy) | VC (Visit count, count) | Number of visits within an AOI |
| Exp. 2 (Constancy) | AC (Accuracy) | Ratio of the number of correct judgments to the total number of judgments (with respect to positions) among all subjects |
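The ratio-based indices in the table above can be sketched in a few lines of Python. This is only an illustration of the stated definitions: the `GuidanceTrial` record, its field names, and the helper functions are hypothetical and do not come from the study's own tooling or from any eye-tracking software export format.

```python
from dataclasses import dataclass
from typing import Dict, Sequence


@dataclass
class GuidanceTrial:
    """One participant's record for a guidance task (hypothetical layout)."""
    finish_time_s: float           # FT: time needed to complete the task
    first_aoi_fixation_s: float    # TtFF: task start to first fixation on the AOI group
    aoi_visit_duration_s: float    # summed visit duration on the AOI group
    total_visit_duration_s: float  # summed visit duration overall
    correct_clicks: int            # number of correctly judged objects
    total_clicks: int              # number of clicks


def guidance_indices(trial: GuidanceTrial) -> Dict[str, float]:
    """Compute the Exp. 1 indices directly from their table definitions."""
    if (trial.correct_clicks == 0 or trial.total_clicks == 0
            or trial.total_visit_duration_s == 0):
        raise ValueError("an index is undefined when its denominator is zero")
    return {
        "FT": trial.finish_time_s,
        "TtFF": trial.first_aoi_fixation_s,
        # ACC: FT / number of correctly judged objects
        "ACC": trial.finish_time_s / trial.correct_clicks,
        # VR: visit duration for the AOI group / total visit duration
        "VR": trial.aoi_visit_duration_s / trial.total_visit_duration_s,
        # AC: number of correct judgments / number of clicks
        "AC": trial.correct_clicks / trial.total_clicks,
    }


def average_fixation_duration(fixation_durations_s: Sequence[float]) -> float:
    """AFD (Exp. 2): mean of all fixation durations recorded on one AOI."""
    if not fixation_durations_s:
        raise ValueError("no fixations recorded on this AOI")
    return sum(fixation_durations_s) / len(fixation_durations_s)
```

For instance, a trial finished in 12 s with 4 correct clicks out of 5 yields an ACC of 3 s per correct click and an AC of 0.8 under these definitions.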

| Visual Variable | Position | A (Z(p)) | B (Z(p)) | C (Z(p)) | D (Z(p)) | E (Z(p)) |
|---|---|---|---|---|---|---|
| Size | B | −0.150 (0.880) | | | | |
| Size | C | −1.240 (0.215) | −1.903 (0.057) | | | |
| Size | D | −0.627 (0.531) | −1.074 (0.283) | −0.339 (0.735) | | |
| Size | E | −0.461 (0.645) | −0.442 (0.658) | −2.727 (0.006 *) | −1.452 (0.147) | |
| Size | F | −0.245 (0.806) | −0.706 (0.480) | −1.446 (0.148) | −0.686 (0.493) | −1.352 (0.176) |
| Shape | B | −4.614 (0.000 **) | | | | |
| Shape | C | −4.330 (0.000 **) | −0.729 (0.466) | | | |
| Shape | D | −1.952 (0.051) | −2.221 (0.026 *) | −1.887 (0.059) | | |
| Shape | E | −3.859 (0.000 **) | −1.260 (0.208) | −0.671 (0.502) | −1.292 (0.196) | |
| Shape | F | −4.242 (0.000 **) | −1.089 (0.276) | −0.352 (0.725) | −1.684 (0.092) | −0.382 (0.702) |
| Color | B | −1.719 (0.086) | | | | |
| Color | C | −2.207 (0.027 *) | −0.522 (0.602) | | | |
| Color | D | −1.688 (0.091) | −0.513 (0.608) | −0.059 (0.953) | | |
| Color | E | −2.518 (0.012 *) | −0.972 (0.331) | −0.445 (0.657) | −0.223 (0.824) | |
| Color | F | −1.487 (0.137) | −0.919 (0.358) | −1.527 (0.127) | −1.038 (0.299) | −2.116 (0.034 *) |
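The pairwise Z(p) entries above can be related to each other with a short sketch. The excerpt does not name the test behind the table, so the Wilcoxon signed-rank test used here is an assumption (chosen because the same positions are compared within subjects), as are the function names. The sketch uses the normal approximation with a tie correction and no continuity correction; what can be checked against the table itself is that the reported p values are consistent with two-sided normal p-values of the reported Z.

```python
import math
from typing import Sequence, Tuple


def two_sided_p(z: float) -> float:
    """Two-sided p-value of a standard normal statistic z."""
    return math.erfc(abs(z) / math.sqrt(2.0))


def wilcoxon_signed_rank_z(x: Sequence[float],
                           y: Sequence[float]) -> Tuple[float, float]:
    """Return (Z, p) for paired samples via the normal approximation.

    Assumed test, not confirmed by the source. Zero differences are dropped,
    tied |differences| share their average rank, and Z is based on the
    smaller rank sum, so it is never positive.
    """
    if len(x) != len(y):
        raise ValueError("paired samples must have equal length")
    diffs = [a - b for a, b in zip(x, y) if a != b]
    n = len(diffs)
    if n == 0:
        raise ValueError("all paired differences are zero")

    # Rank |d| in ascending order, giving tied values their average rank.
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    tie_term = 0.0
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        t = j - i + 1
        tie_term += t ** 3 - t
        i = j + 1

    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    mean = n * (n + 1) / 4.0
    var = n * (n + 1) * (2 * n + 1) / 24.0 - tie_term / 48.0
    z = (min(w_plus, w_minus) - mean) / math.sqrt(var)
    return z, two_sided_p(z)
```

As a consistency check, `two_sided_p(-2.727)` evaluates to approximately 0.006, which agrees with the size entry for positions C versus E in the table.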

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Liu, B.; Dong, W.; Meng, L. Using Eye Tracking to Explore the Guidance and Constancy of Visual Variables in 3D Visualization. ISPRS Int. J. Geo-Inf. 2017, 6, 274. https://doi.org/10.3390/ijgi6090274
