Attitudes towards Social Robots in Education: Enthusiast, Practical, Troubled, Sceptic, and Mindfully Positive

Abstract

1. Introduction

2. Theoretical Background

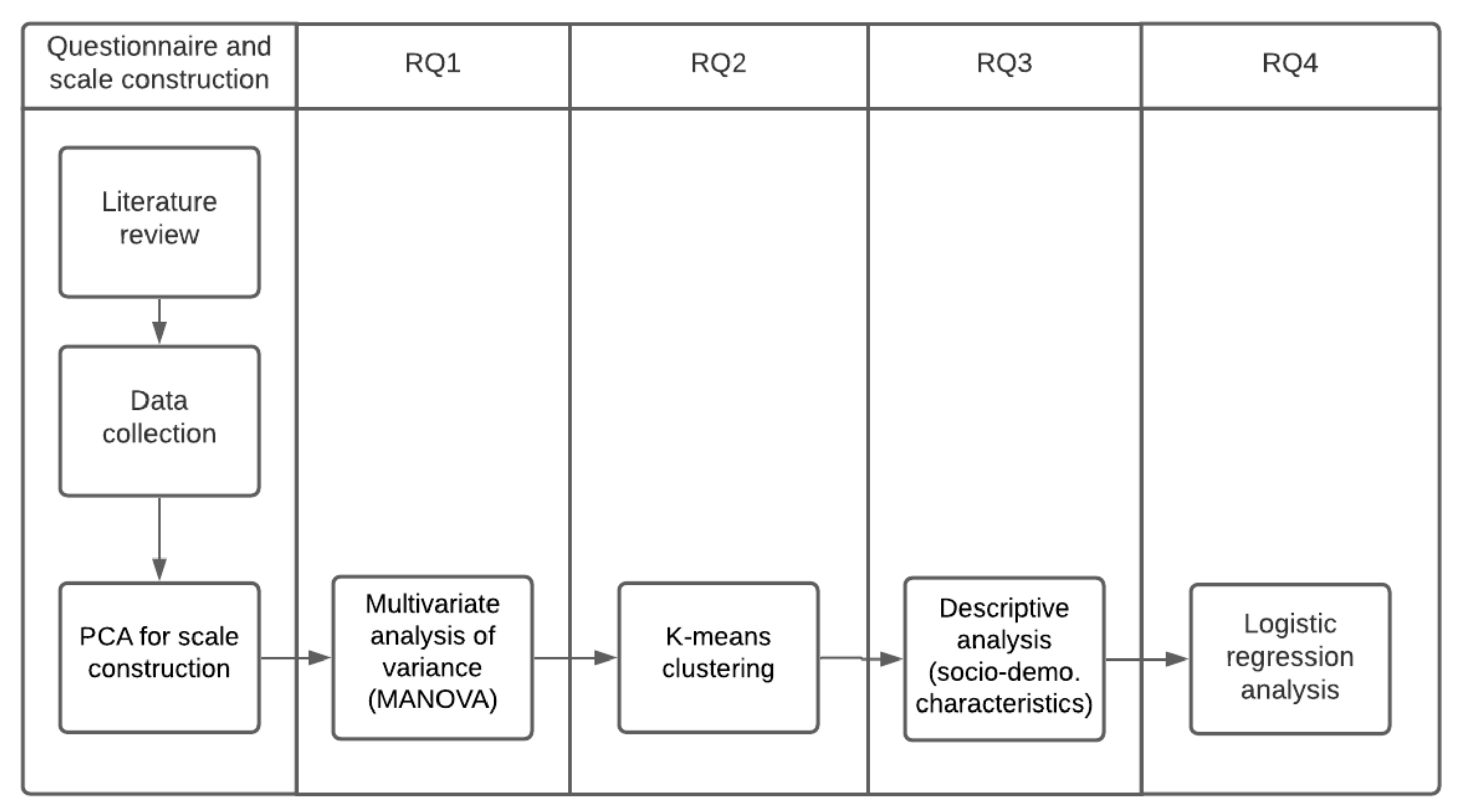

3. Method

3.1. Participants and Design

3.2. Materials and Methods

Construction of the Questionnaire

3.3. Psychometric Analyses of the Scales

4. Results

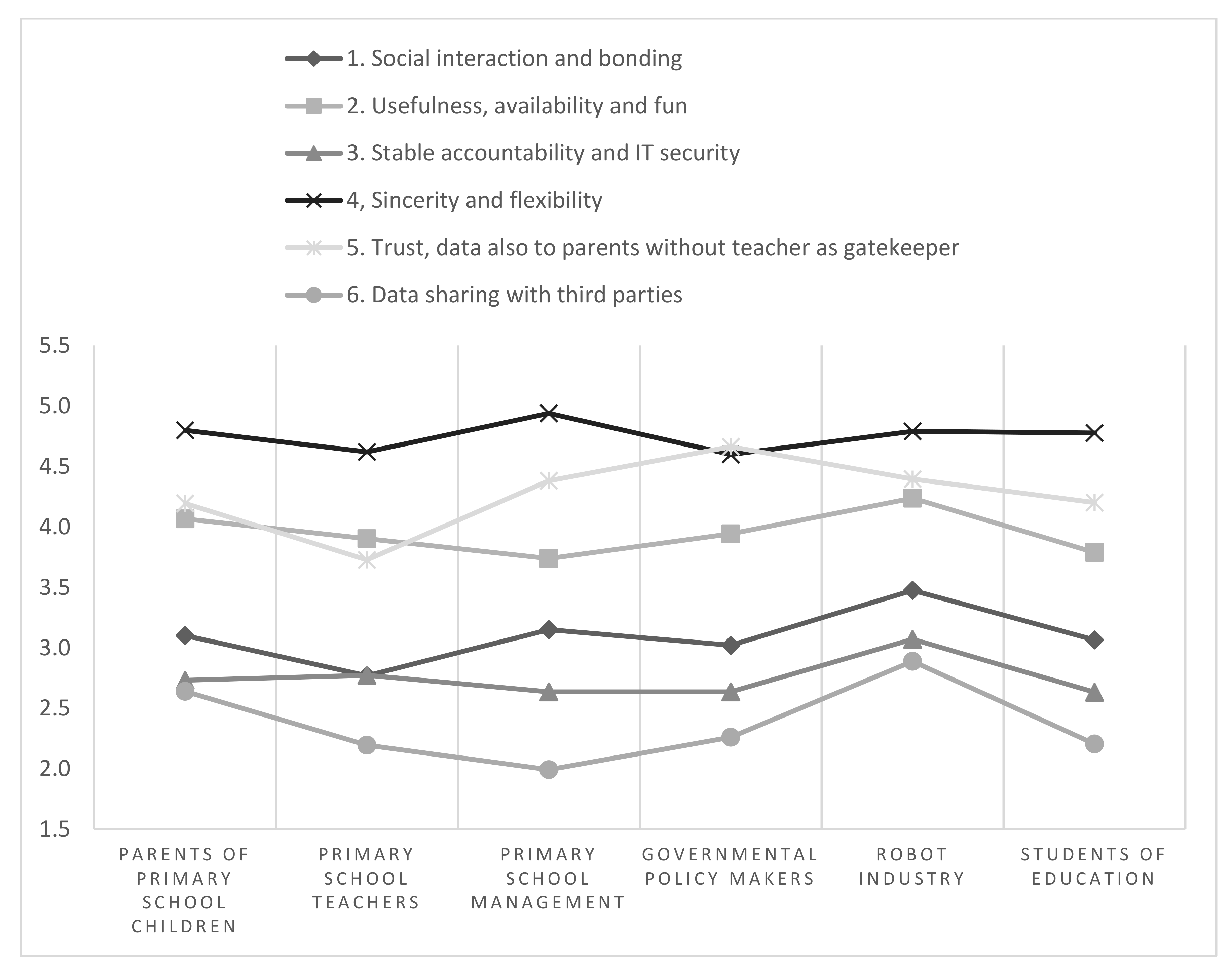

4.1. Stakeholder Perspectives

- For scale 1 (Social interaction and bonding), the employees of the robotics industry score significantly higher than teachers and government policymakers.

- For scale 2 (Usefulness, availability and fun), the employees of the robotics industry score significantly higher than primary school directors/management and students of education.

- For scale 3 (Stable accountability and IT security), the employees of the robotics industry score significantly higher than government policymakers and students of education.

- For scale 4 (Sincerity and flexibility), there are no significant differences by group.

- For scale 5 (Trust, data also to parents without a teacher as the gatekeeper), teachers score significantly lower than all other groups. Additionally, government policymakers score significantly higher than parents with children in primary education and students of education.

- For scale 6 (Data sharing with third parties), the employees of the robotics industry show a significantly higher mean than primary school teachers, primary school directors/management, and students of education. Additionally, parents with children in primary education have a significantly higher mean than primary school directors/management.

4.2. Cluster Analysis

4.2.1. Enthusiast (Cluster 1)

4.2.2. Practical (Cluster 2)

4.2.3. Troubled (Cluster 3)

4.2.4. Sceptic (Cluster 4)

4.2.5. Mindfully Positive (Cluster 5)

4.3. Descriptive Analysis of the Socio-Demographic Characteristics of Each Cluster

4.4. Logistic Regression Analysis

4.4.1. Cluster 1, Enthusiast

4.4.2. Cluster 2, Practical

4.4.3. Cluster 3, Troubled

4.4.4. Cluster 4, Sceptic

4.4.5. Cluster 5, Mindfully Positive

5. Discussion and Conclusions

5.1. RQ1, Stakeholder Attitudes

5.2. RQ2, Five Types of Moral Attitudes towards Social Robots in Education

5.3. RQ3, Which Socio-Demographic Characteristics Influence the Attitudes of Stakeholders

5.4. Implications for the Design and Implementation of Social Robots in (Primary) Education

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Socio-Demographic Characteristics | Category | % |
|---|---|---|
| Age | 18–25 years | 19% |
| | 26–35 years | 20% |
| | 36–45 years | 23% |
| | 46–55 years | 19% |
| | >55 years | 18% |
| Experience with robots | No | 77% |
| | Yes | 23% |
| Gender | Male | 42% |
| | Female | 58% |
| Gross Income | Low (<€2.816 p/m) | 21% |
| | Middle (€2.816–€5.632 p/m) | 51% |
| | High (>€5.632 p/m) | 15% |
| | No answer | 12% |
| Highest finished education level | Secondary school | 11% |
| | Vocational education (MBO) | 11% |
| | University of Applied Sciences (HBO) | 45% |
| | University of Science (WO) | 33% |
| Stakeholder group | Parents with primary school children | 18% |
| | Primary school teachers | 12% |
| | Primary school directors/management | 12% |
| | Government educational policymakers/advisors | 17% |
| | Employees of the robotics industry | 10% |
| | Students of education | 17% |
| | Other | 12% |

| Values/Constructs | Explanation/Example Issue |
|---|---|
| Accountability | This value/construct is related to the effect robots have on who is accountable for the actions of robots and their effects. Someone accountable is obliged to accept the consequences of something. |
| Applicability | This value/construct is related to how useful and versatile a robot is in education. |
| Attachment | This value/construct is related to the possibility that the child will get attached to the robot, and whether this is permitted/desirable. |
| Autonomy | This value/construct is related to the effect that the robot has on a teacher’s autonomy. Autonomy refers to the freedom of a teacher to make independent decisions. |
| Deception/Sincerity | This value/construct is related to the robot’s ability to make children believe something that is not true, such as pretending that the robot cares about a child or withholding information from children. |
| Flexibility | This value/construct is related to how easy it is to move and transport the robot. |
| Freedom from bias | This value/construct is related to the possible bias of the robot, such as gender or racial biases. |
| Friendship | This value/construct is related to the friendship that can develop between a child and a robot, and whether this is permitted/desirable. |
| Happiness | This value/construct is related to the extent to which a robot provides pleasure/fun. |
| Human contact | This value/construct is related to the effects of a robot on human contact. |
| Privacy | This value/construct is related to the effect of the robot’s ability to collect personal data on children, and whether these data may be shared with others. |
| Psychological welfare | This value/construct is related to the influence of the robot on psychological/social aspects; for example, a robot may act as a person of trust or may comfort a child. |
| Responsibility | This value/construct is related to the effect on teachers’ responsibility for the robot. Someone responsible is obliged to take care of something. |
| Safety | This value/construct is related to the physical safety of children when interacting with robots. |
| Security | This value/construct is related to the IT security of the data that the robot collects. |
| Trust | This value/construct is related to the trust that a child has in a robot, and whether this can be violated. |
| Usability | This value/construct is related to the availability of the robot. Availability indicates the extent to which a robot is accessible to users. |

| Scale | Label | Description | Items |
|---|---|---|---|
| 1 | Social interaction and bonding | Social robots may socially interact and form social bonds with children, such as friendship bonds. They may be used to aid the psychological wellbeing of children, and for learning social skills. | 16 |
| 2 | Usefulness, availability and fun | Social robots are useful and fun for children and parents and improve the job satisfaction of teachers. They should be made widely available for schools. | 11 |
| 3 | Stable accountability and IT security | Social robots do not jeopardize the accountability structure in schools and can be used without an IT security certificate. | 6 |
| 4 | Sincerity and flexibility | A robot must be honest to children and keep promises made to children. Additionally, the robots need to be flexible (movable). | 5 |
| 5 | Trust, data also to parents without a teacher as the gatekeeper | A robot must keep secrets told to it by a child, and not share them with parents or teachers. Teachers are not gatekeepers of data; parents should have access to data. It is safe to let children interact with robots without supervision. | 6 |
| 6 | Data sharing with third parties | Data collected by the robot may be shared with third parties, such as government and robot companies, to improve policies and products. | 2 |

| Dependent Variable | Type III Sum of Squares | df | Mean Square | F | p |
|---|---|---|---|---|---|
| Social interaction and bonding | 18.670 | 6 | 3.112 | 4.417 | <0.001 |
| Usefulness, availability and fun | 11.632 | 6 | 1.939 | 3.482 | 0.002 |
| Stable accountability and IT security | 8.653 | 6 | 1.442 | 2.180 | 0.044 |
| Sincerity and flexibility | 5.845 | 6 | 0.974 | 1.641 | 0.134 |
| Trust, data also to parents without a teacher as the gatekeeper | 36.956 | 6 | 6.159 | 9.806 | <0.001 |
| Data sharing with third parties | 37.811 | 6 | 6.302 | 4.032 | 0.001 |
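
The columns of the ANOVA table are linked by simple arithmetic: each mean square is the Type III sum of squares divided by its degrees of freedom, and F is the ratio of that mean square to the error mean square. The Python sketch below recomputes these relations from the reported values. The function and variable names are hypothetical, and the error mean square is only implied by the table (MS / F), not reported in it.

```python
# Values copied from the ANOVA table: (sum of squares, df, mean square, F).
anova_rows = {
    "Social interaction and bonding": (18.670, 6, 3.112, 4.417),
    "Usefulness, availability and fun": (11.632, 6, 1.939, 3.482),
    "Stable accountability and IT security": (8.653, 6, 1.442, 2.180),
    "Sincerity and flexibility": (5.845, 6, 0.974, 1.641),
    "Trust, data also to parents": (36.956, 6, 6.159, 9.806),
    "Data sharing with third parties": (37.811, 6, 6.302, 4.032),
}


def mean_square(sum_of_squares, df):
    """Between-groups mean square: sum of squares divided by its df."""
    return sum_of_squares / df


def implied_error_mean_square(ms_between, f_value):
    """Error mean square implied by F = MS_between / MS_error (an inference)."""
    return ms_between / f_value


for label, (ss, df, ms, f) in anova_rows.items():
    recomputed = mean_square(ss, df)
    error_ms = implied_error_mean_square(ms, f)
    print(f"{label}: MS = {recomputed:.3f} (reported {ms}), "
          f"implied error MS = {error_ms:.3f}")
```

Agreement between the recomputed and reported mean squares, up to rounding, indicates that the table's columns are internally consistent.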

| Scales | Mean Cl 1 Enthusiast (n = 135) | Mean Cl 2 Practical (n = 87) | Mean Cl 3 Troubled (n = 143) | Mean Cl 4 Sceptic (n = 33) | Mean Cl 5 Mindfully Positive (n = 117) | Overall Mean (N = 515) |
|---|---|---|---|---|---|---|
| 1 Social interaction and bonding | 3.70 (SD 0.66) | 2.60 (SD 0.67) | 2.71 (SD 0.54) | 1.73 (SD 0.52) | 3.69 (SD 0.56) | 3.11 (SD 0.86) |
| 2 Usefulness, availability and fun | 4.37 (SD 0.52) | 3.86 (SD 0.54) | 3.69 (SD 0.56) | 2.31 (SD 0.70) | 4.31 (SD 0.54) | 3.95 (SD 0.76) |
| 3 Stable accountability and IT security | 2.97 (SD 0.72) | 2.85 (SD 0.67) | 2.26 (SD 0.54) | 2.39 (SD 1.54) | 3.02 (SD 0.73) | 2.73 (SD 0.82) |
| 4 Sincerity and flexibility | 4.89 (SD 0.55) | 4.43 (SD 0.69) | 5.07 (SD 0.44) | 3.43 (SD 1.42) | 4.82 (SD 0.65) | 4.75 (SD 0.77) |
| 5 Trust, data also to parents without a teacher as the gatekeeper | 4.33 (SD 0.67) | 3.43 (SD 0.65) | 4.34 (SD 0.78) | 5.11 (SD 0.89) | 4.60 (SD 0.65) | 4.29 (SD 0.83) |
| 6 Data sharing with third parties | 3.93 (SD 0.70) | 3.13 (SD 0.74) | 1.41 (SD 0.55) | 1.11 (SD 0.30) | 1.57 (SD 0.57) | 2.38 (SD 1.27) |
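
The overall mean of each scale should equal the average of the five cluster means weighted by cluster size, since the clusters partition the full sample (N = 515). The Python sketch below performs that check on the reported values. The names are hypothetical, and because the inputs are rounded to two decimals, agreement is only expected up to rounding.

```python
# Cluster sizes in table order: Enthusiast, Practical, Troubled,
# Sceptic, Mindfully Positive.
cluster_sizes = [135, 87, 143, 33, 117]

# Per scale: (list of cluster means, reported overall mean).
scale_means = {
    "1 Social interaction and bonding": ([3.70, 2.60, 2.71, 1.73, 3.69], 3.11),
    "2 Usefulness, availability and fun": ([4.37, 3.86, 3.69, 2.31, 4.31], 3.95),
    "3 Stable accountability and IT security": ([2.97, 2.85, 2.26, 2.39, 3.02], 2.73),
    "4 Sincerity and flexibility": ([4.89, 4.43, 5.07, 3.43, 4.82], 4.75),
    "5 Trust, data also to parents": ([4.33, 3.43, 4.34, 5.11, 4.60], 4.29),
    "6 Data sharing with third parties": ([3.93, 3.13, 1.41, 1.11, 1.57], 2.38),
}


def weighted_mean(means, sizes):
    """Average of the cluster means, weighted by cluster size."""
    return sum(m * n for m, n in zip(means, sizes)) / sum(sizes)


for label, (means, reported) in scale_means.items():
    recomputed = weighted_mean(means, cluster_sizes)
    print(f"{label}: recomputed {recomputed:.2f}, reported {reported:.2f}")
```

The same weighting also shows why the small Sceptic cluster (n = 33) moves the overall mean very little, even where its scores are extreme.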

| Socio-Demographic Characteristics | Category | Cl 1 Enthusiast | Cl 2 Practical | Cl 3 Troubled | Cl 4 Sceptic | Cl 5 Mindfully Positive |
|---|---|---|---|---|---|---|
| Stakeholder group | Parents with primary school children | 22% | 20% | 11% | 18% | 20% |
| | Primary school teachers | 7% | 28% | 14% | 9% | 7% |
| | School directors/management | 10% | 3% | 18% | 15% | 14% |
| | Government policymakers/advisors | 15% | 14% | 17% | 30% | 20% |
| | Employees of robotics industry | 20% | 6% | 5% | 6% | 12% |
| | Students of education | 14% | 18% | 24% | 18% | 12% |
| | Other | 12% | 11% | 10% | 3% | 16% |
| Experience with robots | No | 67% | 92% | 82% | 88% | 69% |
| | Yes | 33% | 8% | 18% | 13% | 31% |
| Gender | Male | 45% | 36% | 34% | 48% | 51% |
| | Female | 55% | 63% | 66% | 52% | 49% |
| Income | Low (<€2.816 p/m) | 20% | 31% | 24% | 35% | 22% |
| | Middle (€2.816–€5.632 p/m) | 59% | 55% | 66% | 57% | 52% |
| | High (>€5.632 gross p/m) | 18% | 14% | 10% | 9% | 26% |
| Highest finished education level | Secondary school | 9% | 17% | 12% | 13% | 9% |
| | Vocational education (MBO) | 11% | 18% | 8% | 13% | 9% |
| | University of Applied Sciences (HBO) | 47% | 43% | 47% | 53% | 39% |
| | University of Science (WO) | 32% | 23% | 32% | 22% | 44% |

| Socio-Demographic Characteristics | Cl 1 Enthusiast (OR) | Cl 2 Practical (OR) | Cl 3 Troubled (OR) | Cl 4 Sceptic (OR) | Cl 5 Mindfully Positive (OR) |
|---|---|---|---|---|---|
| Stakeholder: Parents with children in primary school | 1.023 | 1.478 | 0.456 | 4.562 | 1.228 |
| Stakeholder: Primary school teacher | 0.305 ** | 6.525 *** | 1.076 | 1.416 | 0.497 |
| Stakeholder: Primary school director/management | 0.581 | 0.423 | 1.865 | 3.092 | 1.210 |
| Stakeholder: Government policymakers | 0.714 | 1.116 | 1.151 | 7.627 * | 0.878 |
| Stakeholder: Robot industry | 1.850 | 0.937 | 0.482 | 4.523 | 0.634 |
| Stakeholder: Student of education | 0.846 | 1.182 | 2.720 ** | 3.013 | 0.322 ** |
| Age: 18–25 years | 0.928 | 1.560 | 0.535 | 0.079 ** | 3.353 * |
| Age: 26–35 years | 0.980 | 1.627 | 0.899 | 0.107 ** | 1.467 |
| Age: 36–45 years | 0.988 | 1.331 | 0.963 | 0.502 | 1.001 |
| Age: 46–55 years | 1.639 | 1.070 | 0.748 | 0.570 | 0.810 |
| Experience with robots: Yes | 1.742 ** | 0.241 *** | 1.040 | 0.682 | 1.253 |
| Gender: Male | 0.770 | 1.076 | 1.026 | 1.431 | 1.155 |
| Highest finished education: Secondary school | 0.953 | 3.667 ** | 0.878 | 2.552 | 0.433 |
| Highest finished education: Vocational education (MBO) | 1.166 | 2.792 ** | 1.024 | 1.947 | 0.343 ** |
| Highest finished education: University of Applied Sciences (HBO) | 1.103 | 1.393 | 1.057 | 2.877 * | 0.569 ** |
| Income: low | 0.853 | 0.538 | 1.627 | 27.864 *** | 0.512 |
| Income: medium | 0.959 | 0.528 | 2.624 ** | 4.174 * | 0.475 ** |
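
An odds ratio (OR) above 1 means higher odds of belonging to a cluster than in the omitted reference category, and an OR below 1 means lower odds. The Python sketch below converts a few of the tabulated ORs into logistic regression coefficients (the natural log of the OR) and into percentage changes in the odds. The helper names are hypothetical, and the reference categories are not listed in the table, so the wording "relative to the reference category" is deliberately generic.

```python
import math

# A few odds ratios copied from the table (row -> cluster).
odds_ratios = {
    "Primary school teacher -> Practical": 6.525,
    "Primary school teacher -> Enthusiast": 0.305,
    "Experience with robots: Yes -> Practical": 0.241,
    "Experience with robots: Yes -> Enthusiast": 1.742,
}


def log_odds_coefficient(odds_ratio):
    """Logistic regression coefficient (B) corresponding to an odds ratio."""
    return math.log(odds_ratio)


def percent_change_in_odds(odds_ratio):
    """Percentage change in the odds relative to the reference category."""
    return (odds_ratio - 1.0) * 100.0


for label, value in odds_ratios.items():
    b = log_odds_coefficient(value)
    pct = percent_change_in_odds(value)
    direction = "higher" if value > 1 else "lower"
    print(f"{label}: OR = {value}, B = {b:.3f}, "
          f"odds {abs(pct):.1f}% {direction}")
```

Note that an OR describes a change in odds, not in probability: an OR of 6.525 does not mean that teachers are 6.5 times as likely to fall into the Practical cluster.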

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Smakman, M.H.J.; Konijn, E.A.; Vogt, P.; Pankowska, P. Attitudes towards Social Robots in Education: Enthusiast, Practical, Troubled, Sceptic, and Mindfully Positive. Robotics 2021, 10, 24. https://doi.org/10.3390/robotics10010024
