Design of a Low-Cost Configurable Acoustic Sensor for the Rapid Development of Sound Recognition Applications

Abstract

1. Introduction

2. Background and Related Work

2.1. Urban Noise Monitoring

2.1.1. Urban Noise Monitoring with Noise Meters

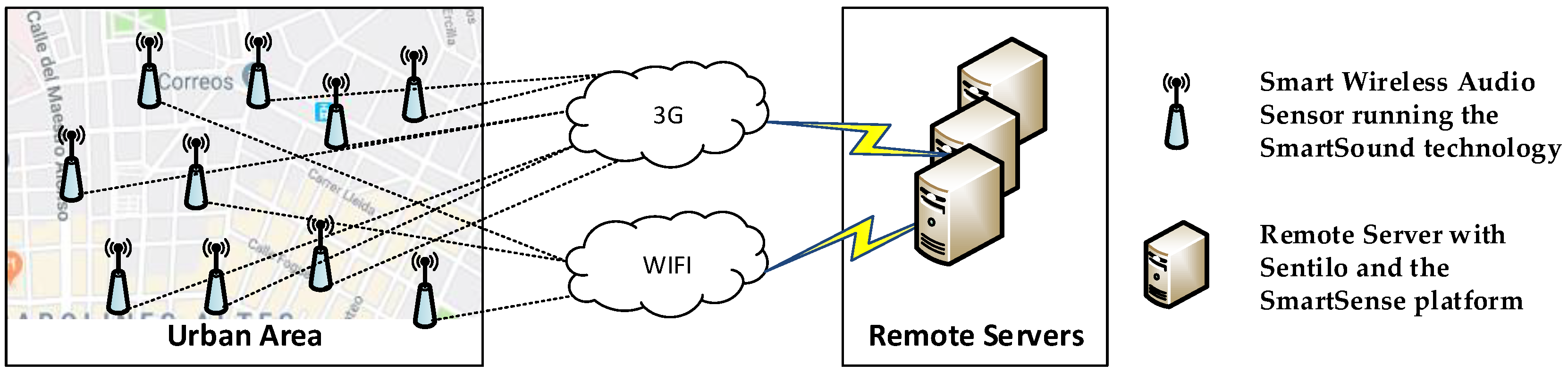

2.1.2. WASNs for Urban Noise Monitoring

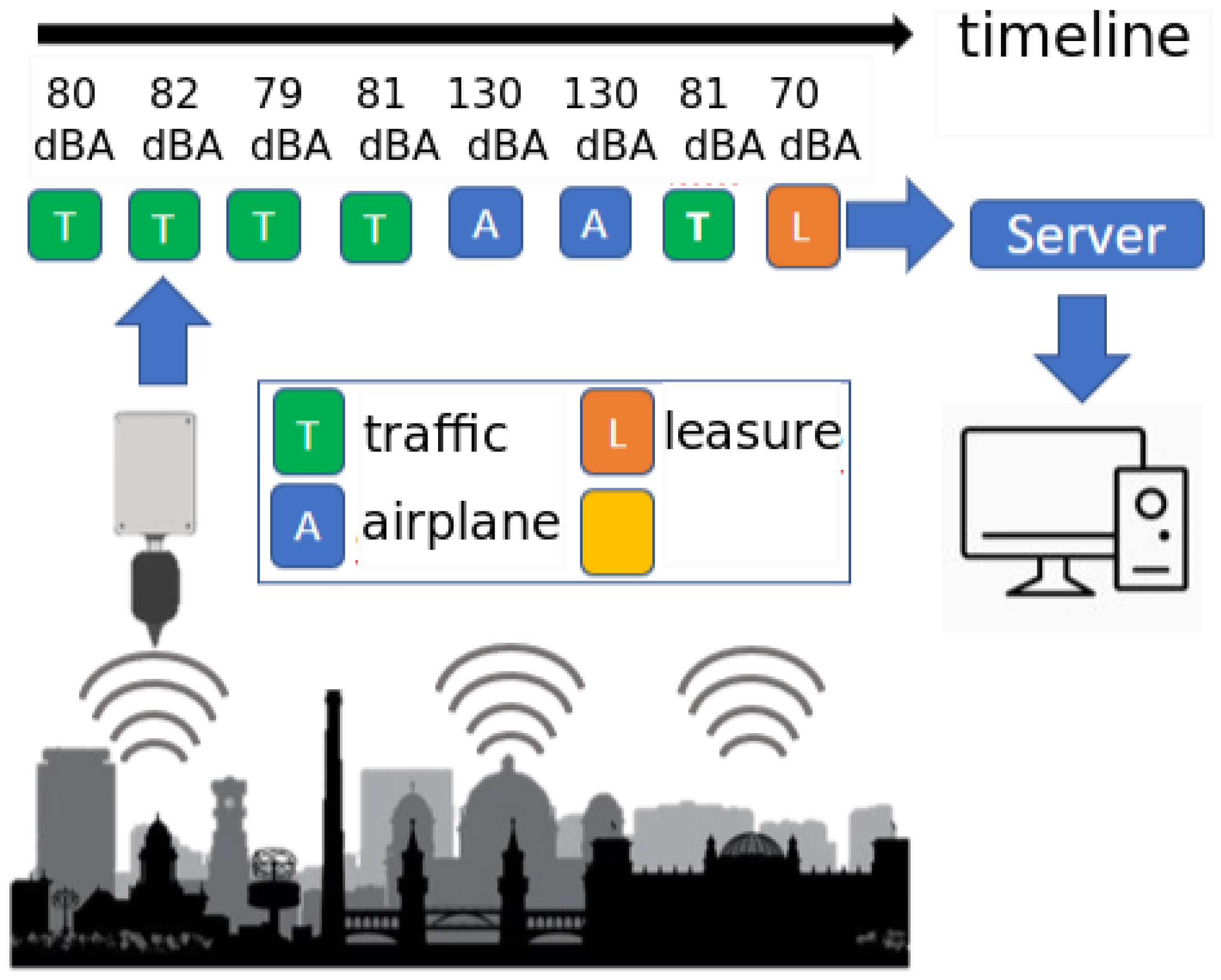

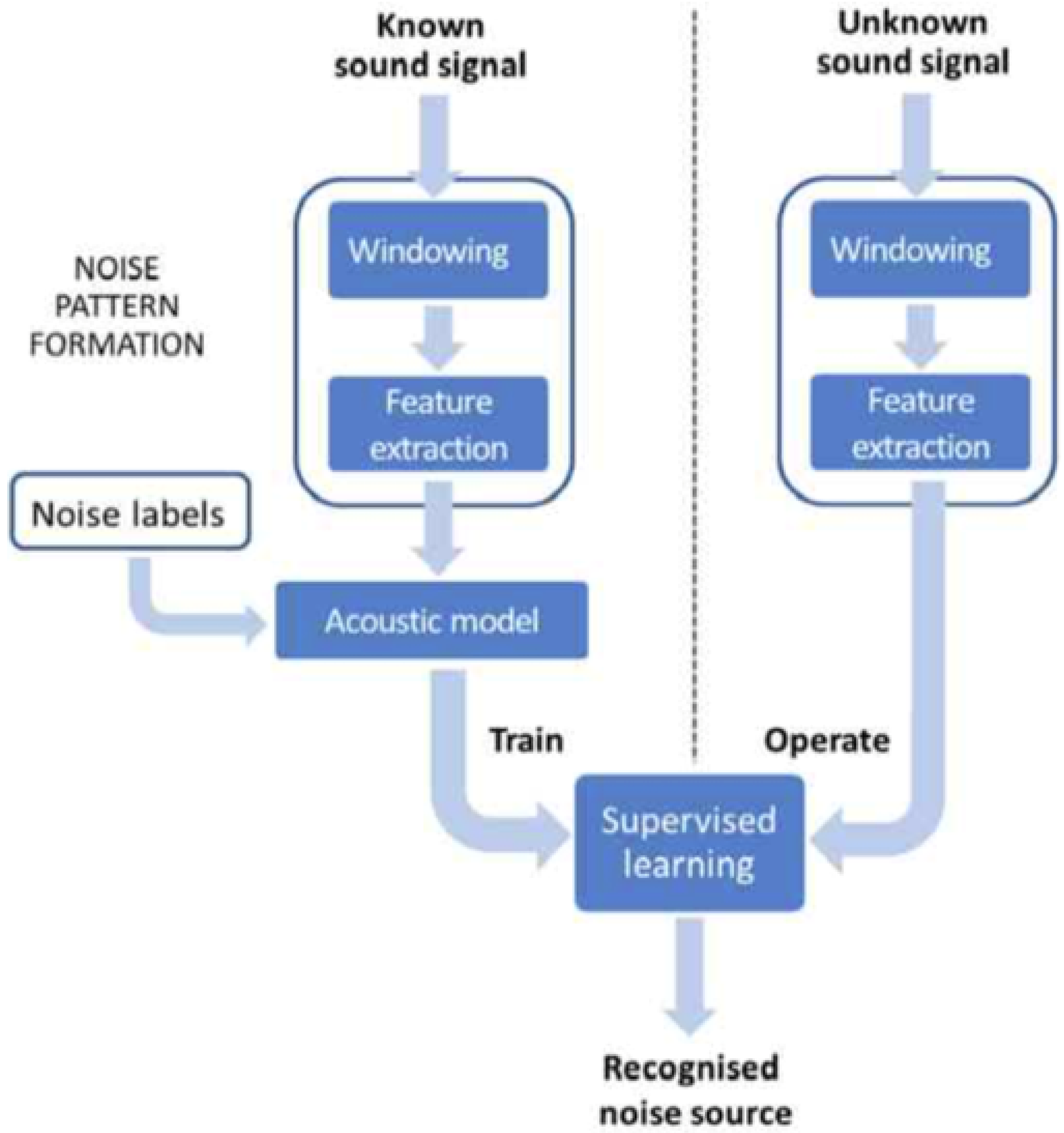

2.2. WASNs with Sound Source Detection

3. System Description

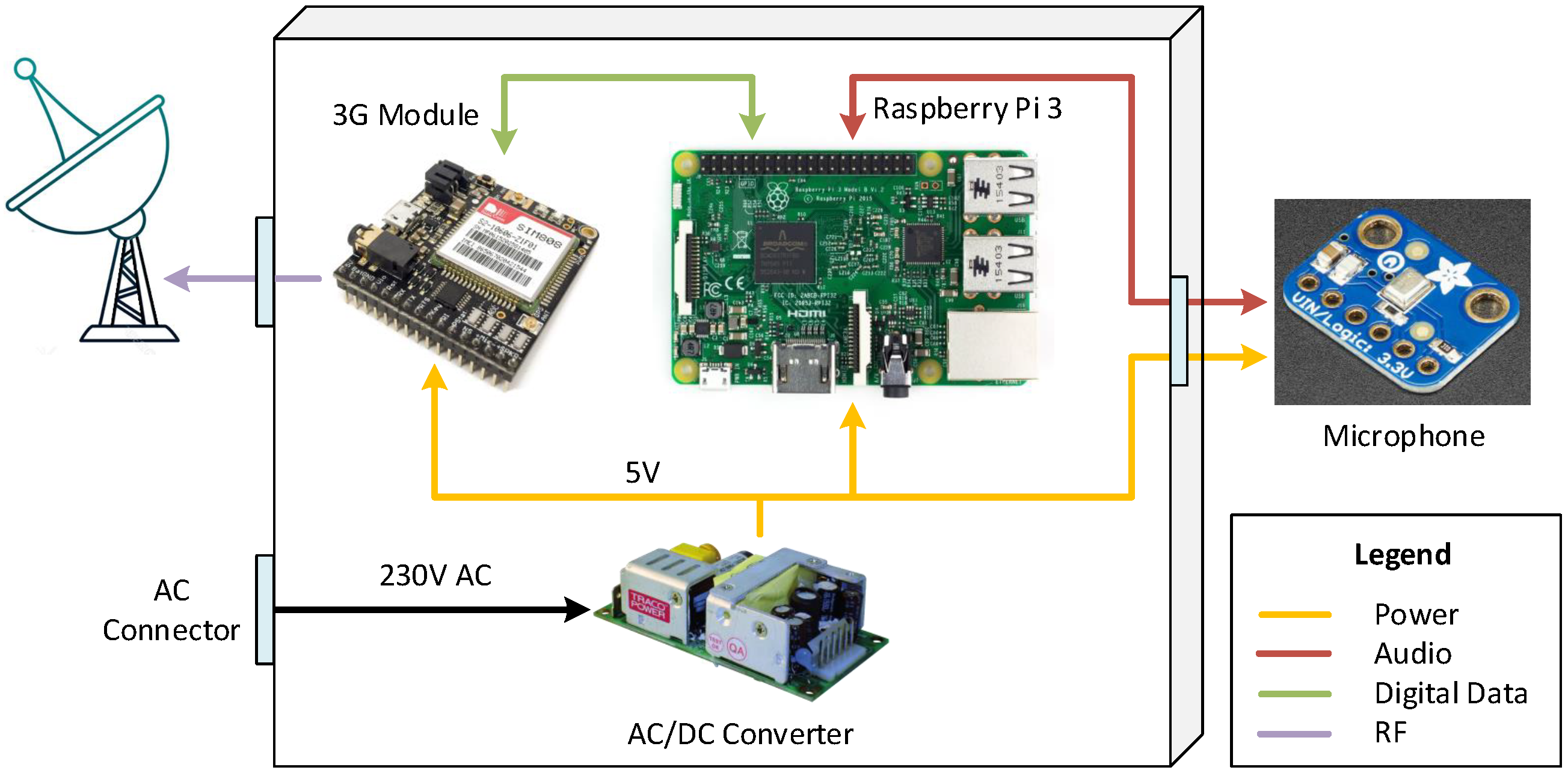

3.1. Description of the WASN Architecture

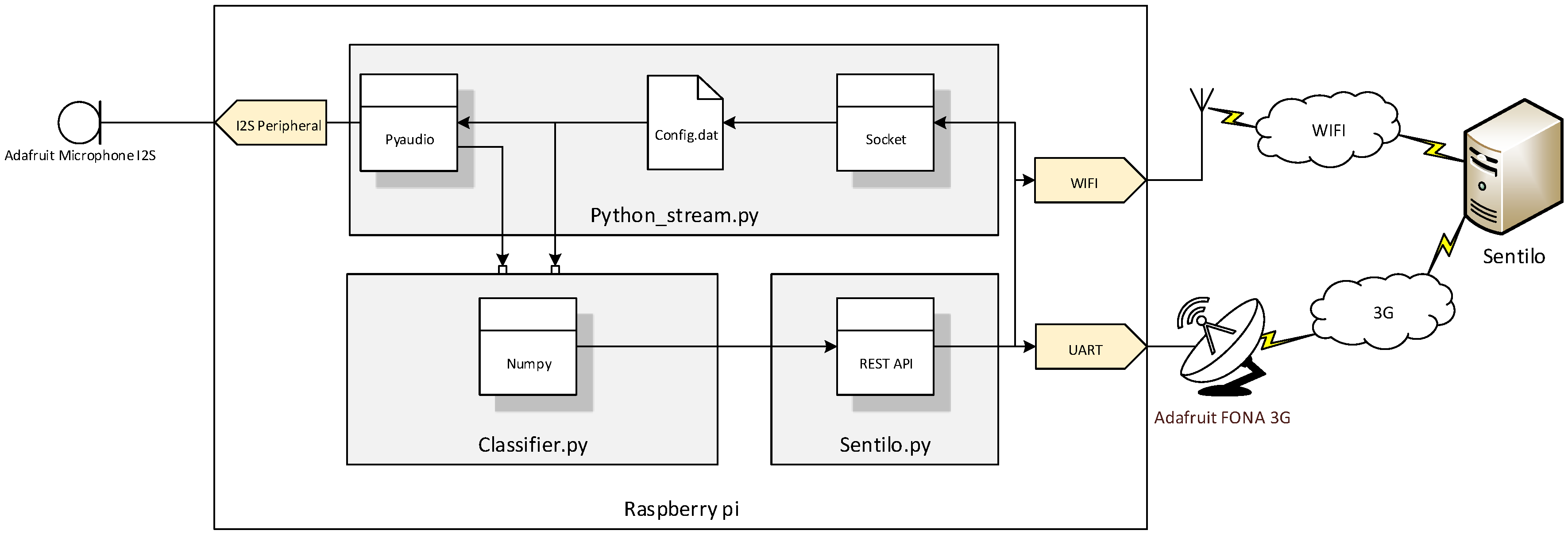

3.2. The SmartSound Technology

3.3. The SmartSense Platform

3.4. Sensor Requirements

- Price: Accurate acoustic sensors based on noise meters, as commonly found in WASNs, have long cost thousands of euros (see the class/type 1 and 2 microphones in Section 4.2). Such high costs limit the number of nodes and, consequently, the coverage of the network that city administrators can deploy. We envision smart WASNs deployed permanently across the entire city, as well as temporarily at points of interest, by both the public administration and private businesses. Therefore, low cost is an essential requirement of our sensor.

- Processing capacity: The sensor should be able to run the SmartSound technology to identify the source of noise events by processing an audio stream in real time.

- Storage capacity: The storage requirement is negligible because, for privacy reasons, the audio is deleted as soon as it has been processed and its label obtained.

- Microphone: The sensor should be able to analyse the raw audio signal and identify the sources of noise that are audible to the human ear. Therefore, it should ideally support an operating frequency range of 20 Hz to 20 kHz.

- Power supply: We assume that the services our research group will develop on top of WASNs are not critical and that sensors will be installed in strategic places with an alternating current (AC) supply from the city, such as lamp posts and building facades. Therefore, this first version of the sensor does not require a battery as a backup power system.

- Wireless communications: The sensor should be able to send the processing results to a server and to an IoT platform for visualization, and should allow on-the-fly configuration of parameters and replacement of processing algorithms. To this end, both Wi-Fi and 3G should be supported.

- Outdoor exposure: The device has to operate outdoors for long periods of time, during which it will be exposed to wind and rain. Therefore, it requires effective protection against adverse atmospheric conditions.

- Re-configurable: Researchers should be able to configure the sampling frequency (up to 48 kHz) and the data precision of the sensor (16 or 32 bits per sample), replace processing algorithms on-the-fly, and request audio samples of varied sizes when ANEs of interest are detected. These settings will be adjusted while developing and testing the proof-of-concept applications.
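The re-configurability requirement can be illustrated with a small configuration object. The following is only a minimal sketch: the class name `SensorConfig`, its fields, the default clip length and the single-channel (mono) assumption are hypothetical and are not taken from the sensor software. Only the 48 kHz upper bound and the 16/32-bit precisions come from the requirement above.

```python
from dataclasses import dataclass

MAX_SAMPLE_RATE_HZ = 48_000    # upper bound stated in the requirements
ALLOWED_BIT_DEPTHS = (16, 32)  # precisions stated in the requirements


@dataclass(frozen=True)
class SensorConfig:
    """Hypothetical run-time configuration of one sensor node (mono audio assumed)."""
    sample_rate_hz: int = 48_000
    bits_per_sample: int = 16
    clip_seconds: float = 4.0  # assumed length of a clip requested when an ANE is detected

    def __post_init__(self):
        # Reject settings outside the ranges given in the requirements list.
        if not 0 < self.sample_rate_hz <= MAX_SAMPLE_RATE_HZ:
            raise ValueError("sample rate must be in (0, 48000] Hz")
        if self.bits_per_sample not in ALLOWED_BIT_DEPTHS:
            raise ValueError("precision must be 16 or 32 bits per sample")
        if self.clip_seconds <= 0:
            raise ValueError("clip length must be positive")

    def raw_bitrate_bps(self) -> int:
        """Bits per second of the uncompressed mono stream."""
        return self.sample_rate_hz * self.bits_per_sample

    def clip_bytes(self) -> int:
        """Size in bytes of one uncompressed mono clip."""
        samples = int(self.sample_rate_hz * self.clip_seconds)
        return samples * self.bits_per_sample // 8
```

Under these assumptions, the default configuration (48 kHz, 16 bits) produces a raw stream of 768 kbit/s, which gives a rough idea of the bandwidth needed whenever raw clips, rather than labels, are sent over Wi-Fi or 3G.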

4. Smart Acoustic Sensor Design

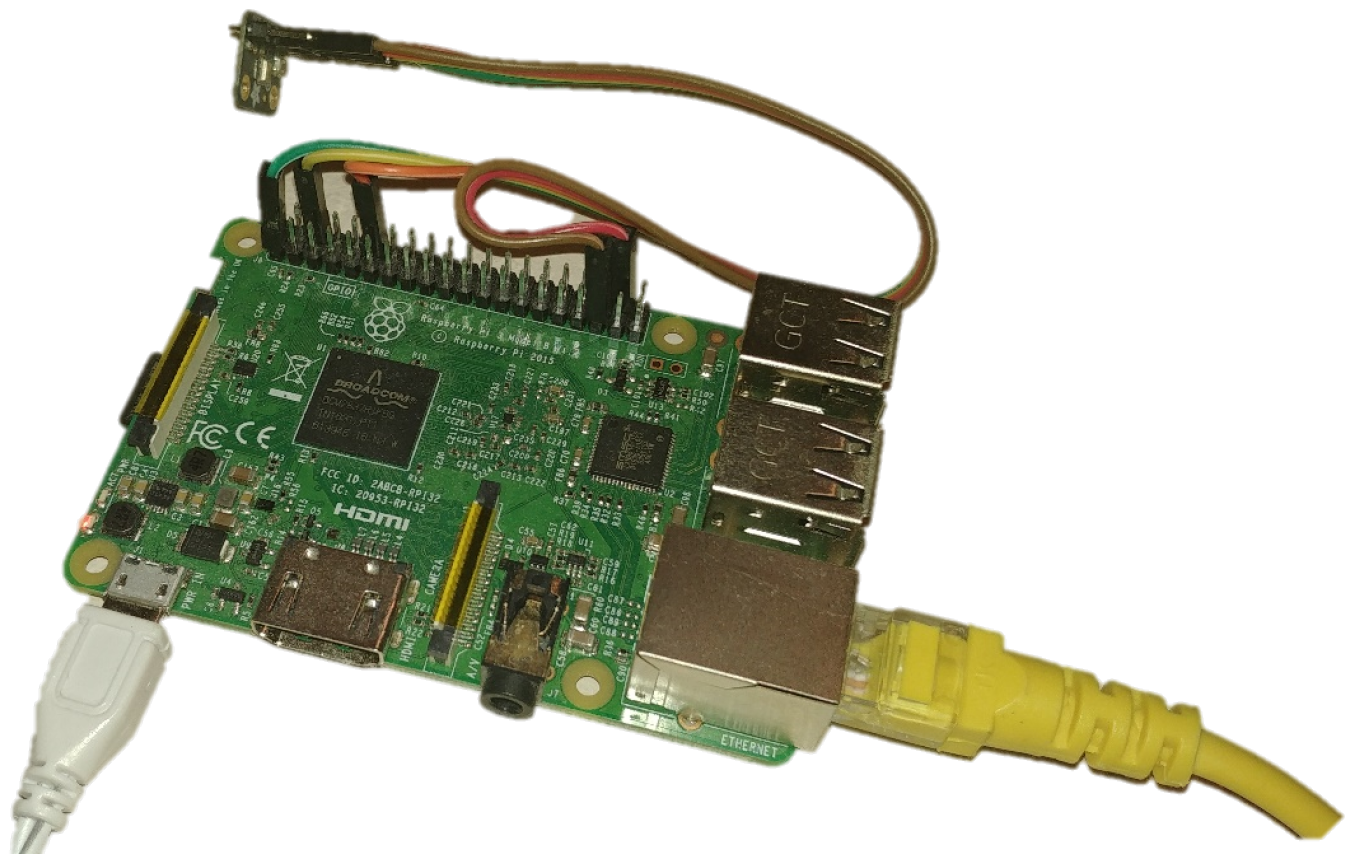

4.1. Computing Platform

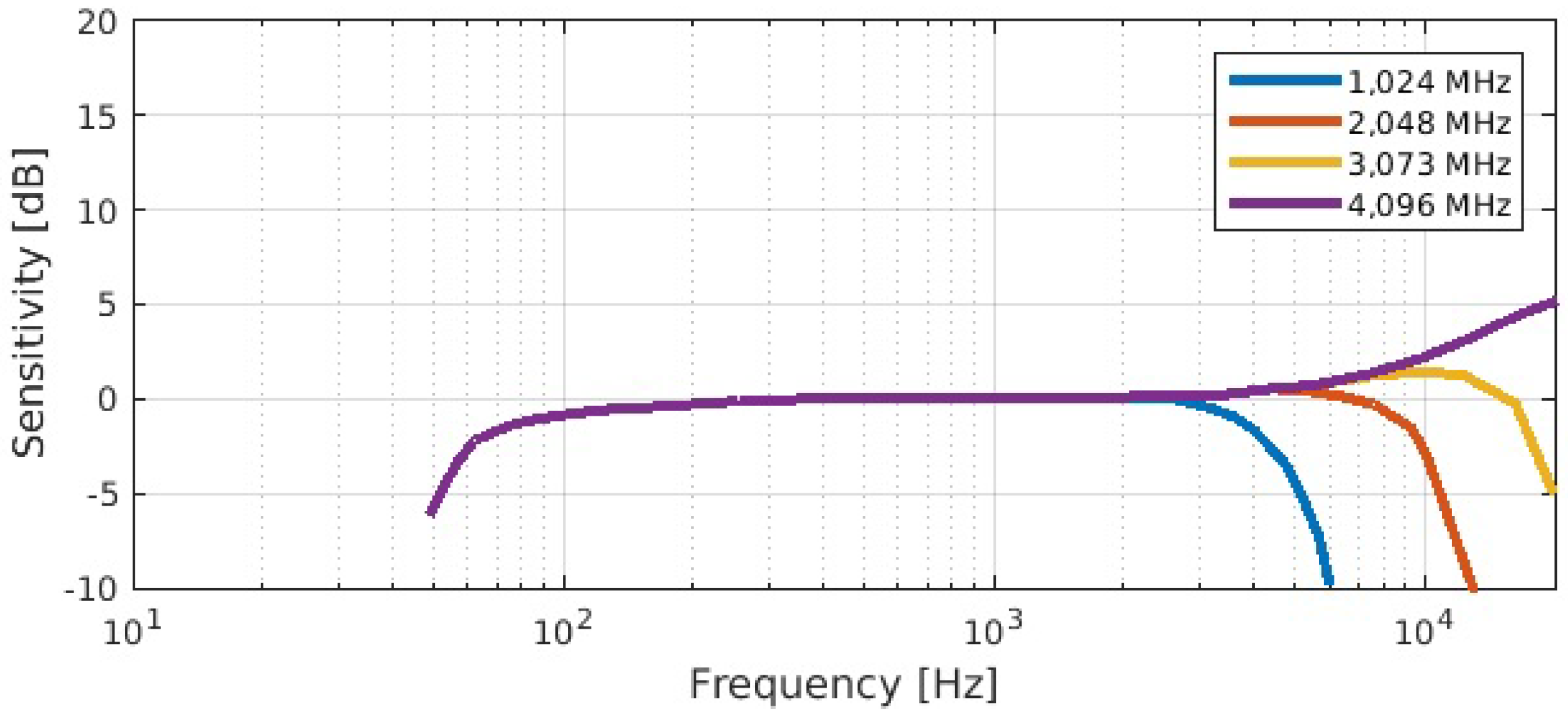

4.2. Microphone

4.3. Power Supply

4.4. Wireless Communications

4.5. Boxing

4.6. Sensor Proposal

4.7. Software Implementation

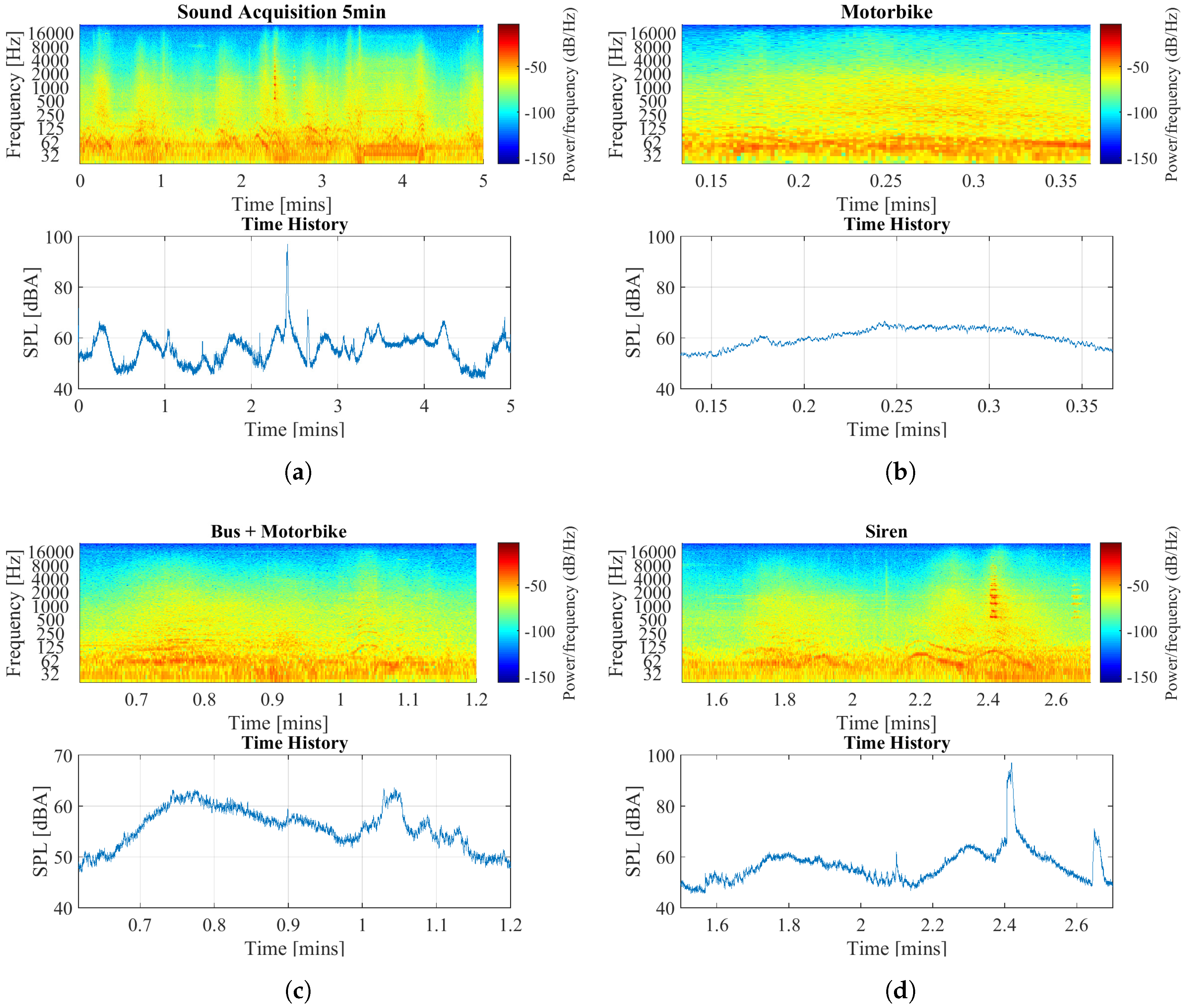

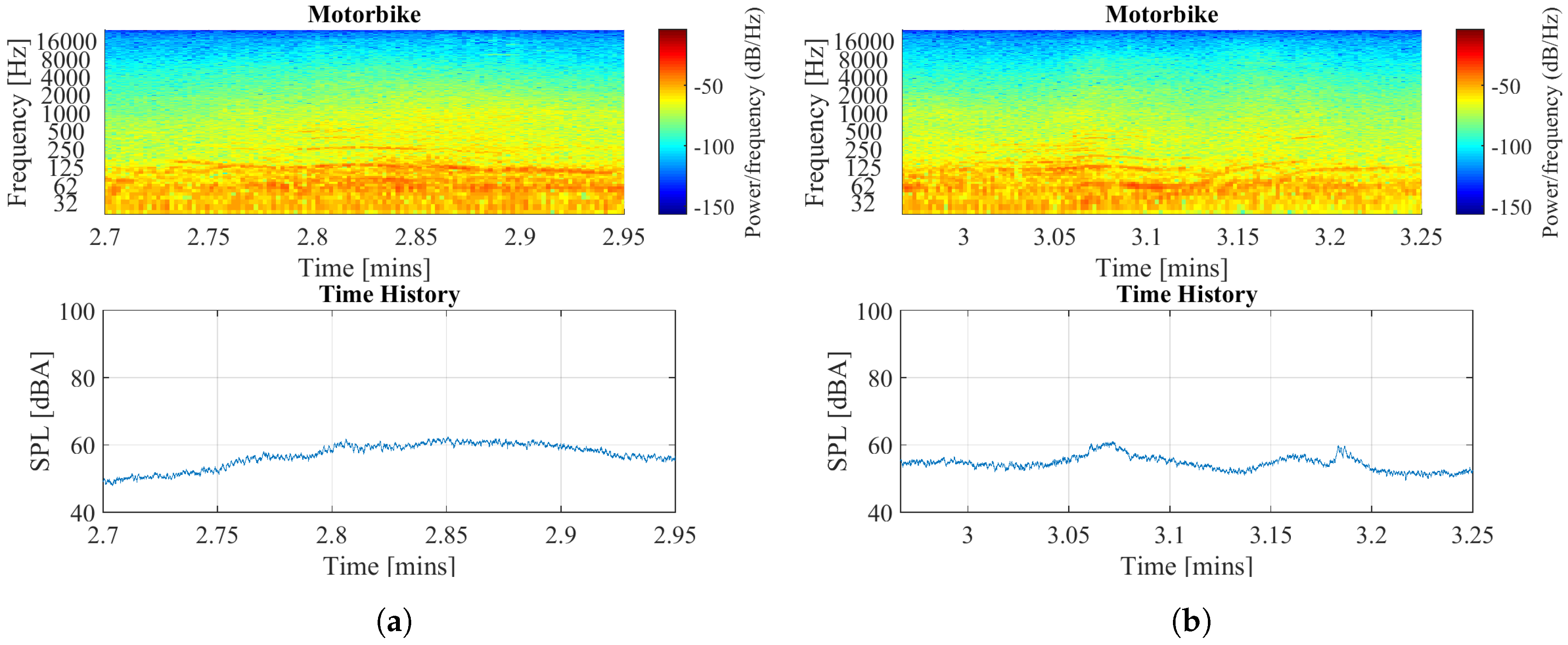

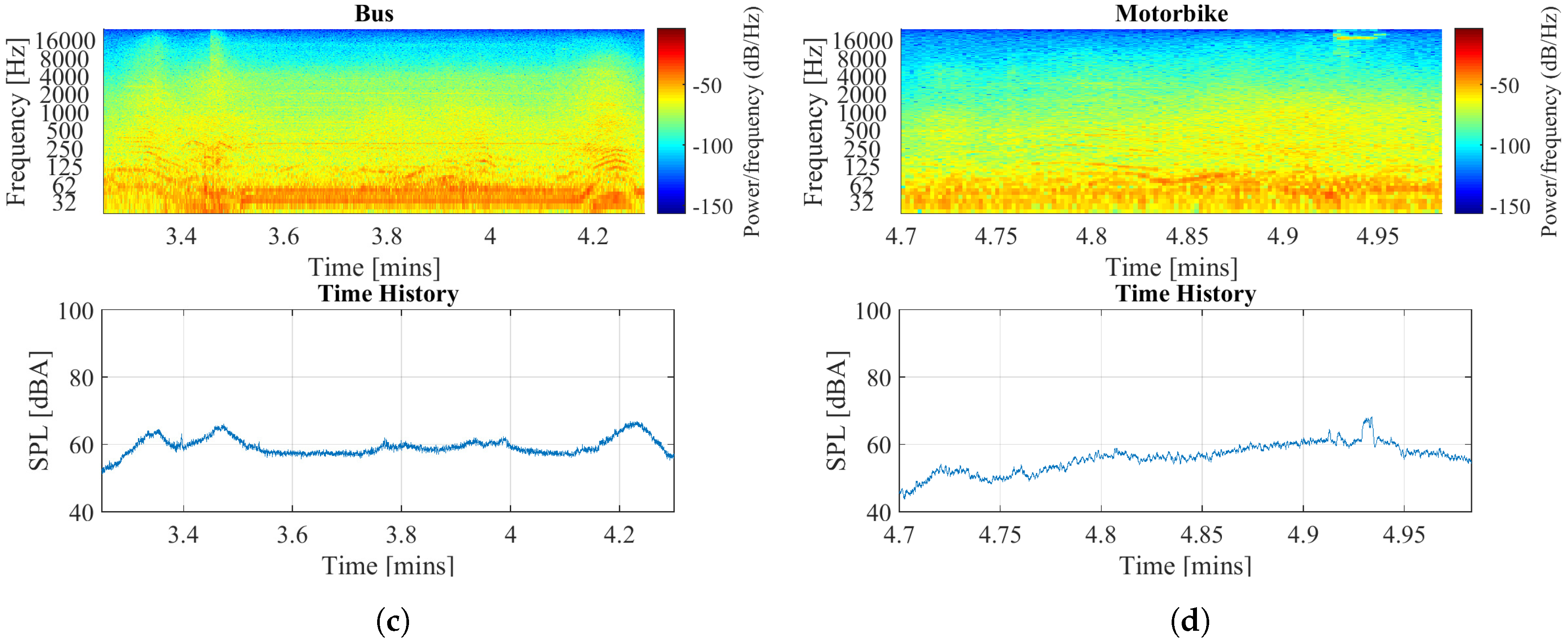

4.8. Preliminary Results of Data Acquisition

- Processing capacity: The sensor was able to sample, store and transmit data in this test. However, more intensive tests are needed to analyse the performance of the whole recognition system; more concretely, the sound recognition algorithms must be run over a long period of time to evaluate the results in real operation.

- Microphone: The audio was sampled at 48 ksps with 18 bits per sample. Moreover, the sensor provides a flat frequency response in the bands commonly used with A-weighted filtering, between 31.5 Hz and 16,000 Hz, which cover most of the audible sounds of interest to us. Finally, the sensor has a high SNR of 65 dB(A) [51] and a quantization dynamic range of about 108 dB.

- Wireless communications: The data were sent to the remote server over the Wi-Fi connection.

- Re-configurable: The tests were conducted at 32 and 48 ksps with a precision of 16 bits per sample.
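The 108 dB figure reported for the 18-bit samples is consistent with the usual rule of thumb of roughly 6.02 dB per bit, if it is read as the ideal dynamic range of a uniform quantizer. That reading is an assumption on our part, as are the function names below; the sketch only reproduces the textbook formulas.

```python
import math


def ideal_dynamic_range_db(bits: int) -> float:
    """Ratio, in dB, between full scale and one quantization step: 20*log10(2**bits)."""
    return 20.0 * math.log10(2.0 ** bits)


def ideal_sine_sqnr_db(bits: int) -> float:
    """Textbook SQNR of a full-scale sine wave under uniform quantization.

    Adds 10*log10(1.5), about 1.76 dB, to the ideal dynamic range.
    """
    return ideal_dynamic_range_db(bits) + 10.0 * math.log10(1.5)


if __name__ == "__main__":
    for bits in (16, 18, 32):
        print(bits, round(ideal_dynamic_range_db(bits), 2), round(ideal_sine_sqnr_db(bits), 2))
```

These are upper bounds for an ideal converter. The 65 dB(A) SNR of the microphone is far lower, so in practice the microphone noise, not the quantization, limits the usable range.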

5. Conclusions

- Taking our university campus as a SmartCampus living lab, we first plan to install a small WASN just outside the student residence, which is next to the university restaurant, a basketball court and a football field. The aim is to test the sensors and their integration with the SmartSense platform in a nearby, yet real, environment by classifying the sources of noise around the student residence.

- In a second stage, we plan to install the sensor in the center of Barcelona, in a neighborhood that has both heavy traffic and restaurants/bars. The goal is to analyse the noise at different times of the day and over a longer period to determine how much of it is caused by traffic and how much by leisure activities. According to our contacts at the Barcelona city council, complaints from neighbours in such areas are common, with the noise originating from people and music in bars, people accessing leisure zones/venues, and traffic. However, it is difficult for the council to create effective plans without understanding the distribution of these noise sources.

Author Contributions

Funding

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AC | Alternating Current |
| AC-DC | Alternating Current to Direct Current |
| ANE | Anomalous Noise Event |
| ASIC | Application-Specific Integrated Circuits |
| BLE | Bluetooth Low Energy |
| CNOSSOS-EU | Common Noise Assessment Methods in Europe |
| DCS | Digital Cellular System |
| DSP | Digital Signal Processor |
| EC | European Commission |
| END | Environmental Noise Directive |
| EU | European Union |
| EGSM | Extended GSM |
| FPGA | Field Programmable Gate Array |
| GPRS | General Packet Radio Service |
| GSM | Global System for Mobile Communications |
| GPS | Global Positioning System |
| GTM | Research Group on Media Technology |
| HDD | Hard Drive Disk |
| HPC | High Performance Computing |
| IEC | International Electrotechnical Commission |
| IDEA | Intelligent Distributed Environmental Assessment |
| IoT | Internet of Things |
| ISO | International Organization for Standardization |
| IP | International Protection |
| MEMS | Microelectromechanical systems |
| MPU | MicroProcessor Unit |
| μC | Microcontroller |
| OS | Operating System |
| PCB | Printed Circuit Board |
| PCS | Personal Communications Service |
| PSU | Power Supply Unit |
| RISC | Reduced Instruction Set Computer |
| RTN | Road Traffic Noise |
| SIM | Subscriber Identity Module |
| SONYC | Sounds of New York Project |
| UN | United Nations |
| WASN | Wireless Acoustic Sensor Networks |
| WHO | World Health Organization |

References

1. Mundi, I. World Demographics Profile. Available online: http://www.indexmundi.com/world/demographics_profile.html (accessed on 20 June 2017).
2. United Nations, Department of Economic and Social Affairs, Population Division. World Population 2015. Available online: https://esa.un.org/unpd/wpp/Publications/Files/World_Population_2015_Wallchart.pdf (accessed on 20 February 2016).
3. Morandi, C.; Rolando, A.; Di Vita, S. From Smart City to Smart Region: Digital Services for an Internet of Places; Springer: Berlin/Heidelberg, Germany, 2016.
4. Fira de Barcelona. Smart City Expo World Congress, Report 2015. Available online: http://media.firabcn.es/content/S078016/docs/Report_SCWC2015.pdf (accessed on 20 February 2016).
5. Bouskela, M.; Casseb, M.; Bassi, S.; De Luca, C.; Facchina, M. La ruta hacia las Smart Cities: Migrando de una gestión tradicional a la ciudad inteligente. In Monografía del BID (Sector de Cambio Climático y Desarrollo Sostenible. División de Viviendas y Desarrollo Urbano); IDB-MG-454; Banco Interamericano de Desarrollo: Washington, DC, USA, 2016.
6. Ripoll, A.; Bäckman, A. State of the Art of Noise Mapping in Europe; Internal Report; European Topic Centre: Barcelona, Spain, 2005.
7. European Environment Agency. The European Environment, State and Outlook 2010 (Synthesis). 2010. Available online: http://www.eea.europa.eu/soer/synthesis/synthesis (accessed on 20 February 2016).
8. Cik, M.; Lienhart, M.; Lercher, P. Analysis of Psychoacoustic and Vibration-Related Parameters to Track the Reasons for Health Complaints after the Introduction of New Tramways. Appl. Sci. 2016, 6, 398.
9. Cox, P.; Palou, J. Directive 2002/49/EC of the European Parliament and of the Council of 25 June 2002 Relating to the Assessment and Management of Environmental Noise-Declaration by the Commission in the Conciliation Committee on the Directive Relating to the Assessment and Management of Environmental Noise (END). Off. J. Eur. Communities 2002, 189, 2002.
10. European Commission, Joint Research Centre, Institute for Health and Consumer Protection. Common Noise Assessment Methods in Europe (CNOSSOS-EU) for Strategic Noise Mapping Following Environmental Noise Directive 2002/49/EC; European Commission: Brussels, Belgium, 2012.
11. World Health Organization. Environmental Noise Guidelines for the European Region; Technical Report; World Health Organization: Geneva, Switzerland, 2018.
12. Cueto, J.L.; Petrovici, A.M.; Hernández, R.; Fernández, F. Analysis of the impact of bus signal priority on urban noise. Acta Acust. United Acust. 2017, 103, 561–573.
13. Morley, D.; De Hoogh, K.; Fecht, D.; Fabbri, F.; Bell, M.; Goodman, P.; Elliott, P.; Hodgson, S.; Hansell, A.; Gulliver, J. International scale implementation of the CNOSSOS-EU road traffic noise prediction model for epidemiological studies. Environ. Pollut. 2015, 206, 332–341.
14. Ruiz-Padillo, A.; Ruiz, D.P.; Torija, A.J.; Ramos-Ridao, Á. Selection of suitable alternatives to reduce the environmental impact of road traffic noise using a fuzzy multi-criteria decision model. Environ. Impact Assess. Rev. 2016, 61, 8–18.
15. Licitra, G.; Fredianelli, L.; Petri, D.; Vigotti, M.A. Annoyance evaluation due to overall railway noise and vibration in Pisa urban areas. Sci. Total Environ. 2016, 568, 1315–1325.
16. Bunn, F.; Zannin, P.H.T. Assessment of railway noise in an urban setting. Appl. Acoust. 2016, 104, 16–23.
17. Iglesias-Merchan, C.; Diaz-Balteiro, L.; Soliño, M. Transportation planning and quiet natural areas preservation: Aircraft overflights noise assessment in a National Park. Transp. Res. Part D Transp. Environ. 2015, 41, 1–12.
18. Gagliardi, P.; Fredianelli, L.; Simonetti, D.; Licitra, G. ADS-B system as a useful tool for testing and redrawing noise management strategies at Pisa Airport. Acta Acust. United Acust. 2017, 103, 543–551.
19. Progetto SENSEable PISA. Sensing The City. Description of the Project. Available online: http://senseable.it/ (accessed on 20 February 2016).
20. Nencini, L.; De Rosa, P.; Ascari, E.; Vinci, B.; Alexeeva, N. SENSEable Pisa: A wireless sensor network for real-time noise mapping. In Proceedings of the EURONOISE, Prague, Czech Republic, 10–13 June 2012; pp. 10–13.
21. Sevillano, X.; Socoró, J.C.; Alías, F.; Bellucci, P.; Peruzzi, L.; Radaelli, S.; Coppi, P.; Nencini, L.; Cerniglia, A.; Bisceglie, A.; et al. DYNAMAP – Development of low cost sensors networks for real time noise mapping. Noise Mapp. 2016, 3, 172–189.
22. Alías, F.; Alsina-Pagès, R.M.; Orga, F.; Socoró, J.C. Detection of Anomalous Noise Events for Real-Time Road-Traffic Noise Mapping: The Dynamap’s project case study. Noise Mapp. 2018, 5, 71–85.
23. Bello, J.P.; Silva, C.; Nov, O.; Dubois, R.L.; Arora, A.; Salamon, J.; Mydlarz, C.; Doraiswamy, H. SONYC: A System for Monitoring, Analyzing, and Mitigating Urban Noise Pollution. Commun. ACM 2019, 62, 68–77.
24. Zambon, G.; Benocci, R.; Bisceglie, A.; Roman, H.E.; Bellucci, P. The LIFE DYNAMAP project: Towards a procedure for dynamic noise mapping in urban areas. Appl. Acoust. 2016, 124, 52–60.
25. Bellucci, P.; Peruzzi, L.; Zambon, G. LIFE DYNAMAP project: The case study of Rome. Appl. Acoust. 2017, 117, 193–206.
26. Socoró, J.C.; Alías, F.; Alsina-Pagès, R.M. An Anomalous Noise Events Detector for Dynamic Road Traffic Noise Mapping in Real-Life Urban and Suburban Environments. Sensors 2017, 17, 2323.
27. Alsina-Pagès, R.M.; Navarro, J.; Alías, F.; Hervás, M. homesound: Real-time audio event detection based on high performance computing for behaviour and surveillance remote monitoring. Sensors 2017, 17, 854.
28. Basten, T.; Wessels, P. An overview of sensor networks for environmental noise monitoring. In Proceedings of the 21st International Congress on Sound and Vibration, ICSV 21, Beijing, China, 13–17 July 2014.
29. EU. Directive 2002/49/EC of the European Parliament and the Council of 25 June 2002 relating to the assessment and management of environmental noise. Off. J. Eur. Communities 2002, L189, 12–25.
30. Wang, C.; Chen, G.; Dong, R.; Wang, H. Traffic noise monitoring and simulation research in Xiamen City based on the Environmental Internet of Things. Int. J. Sustain. Dev. World Ecol. 2013, 20, 248–253.
31. Dekoninck, L.; Botteldooren, D.; Int Panis, L. Sound sensor network based assessment of traffic, noise, and air pollution. In Proceedings of the 10th European Congress and Exposition on Noise Control Engineering (Euronoise 2015), Maastricht, The Netherlands, 31 May–3 June 2015; pp. 2321–2326.
32. Dekoninck, L.; Botteldooren, D.; Panis, L.I.; Hankey, S.; Jain, G.; Karthik, S.; Marshall, J. Applicability of a noise-based model to estimate in-traffic exposure to black carbon and particle number concentrations in different cultures. Environ. Int. 2015, 74, 89–98.
33. Fišer, M.; Pokorny, F.B.; Graf, F. Acoustic Geo-sensing Using Sequential Monte Carlo Filtering. In Proceedings of the 6th Congress of the Alps Adria Acoustics Association, Graz, Austria, 16–17 October 2014.
34. Filipponi, L.; Santini, S.; Vitaletti, A. Data collection in wireless sensor networks for noise pollution monitoring. In Proceedings of the International Conference on Distributed Computing in Sensor Systems, Santorini Island, Greece, 11–14 June 2008; pp. 492–497.
35. Moteiv Corporation. tmote Sky: Low Power Wireless Sensor Module. Available online: http://www.crew-project.eu/sites/default/files/tmote-sky-datasheet.pdf (accessed on 19 May 2020).
36. Botteldooren, D.; De Coensel, B.; Oldoni, D.; Van Renterghem, T.; Dauwe, S. Sound monitoring networks new style. In Proceedings of the Acoustics 2011: Breaking New Ground: Annual Conference of the Australian Acoustical Society, Gold Coast, Australia, 2–4 November 2011; pp. 1–5.
37. Domínguez, F.; Cuong, N.T.; Reinoso, F.; Touhafi, A.; Steenhaut, K. Active self-testing noise measurement sensors for large-scale environmental sensor networks. Sensors 2013, 13, 17241–17264.
38. Bell, M.C.; Galatioto, F. Novel wireless pervasive sensor network to improve the understanding of noise in street canyons. Appl. Acoust. 2013, 74, 169–180.
39. Paulo, J.; Fazenda, P.; Oliveira, T.; Carvalho, C.; Felix, M. Framework to monitor sound events in the city supported by the FIWARE platform. In Proceedings of the 46º Congreso Español de Acústica, Valencia, Spain, 21–23 October 2015; pp. 21–23.
40. Intel® Edison Compute Module: Hardware Guide. Available online: https://www.intel.com/content/dam/support/us/en/documents/edison/sb/edison-module_HG_331189.pdf (accessed on 19 May 2020).
41. Paulo, J.; Fazenda, P.; Oliveira, T.; Casaleiro, J. Continuos sound analysis in urban environments supported by FIWARE platform. In Proceedings of the EuroRegio2016/TecniAcústica, Porto, Portugal, 13–15 June 2016; Volume 16, pp. 1–10.
42. Project, S. SONYC: Sounds of New York City. Available online: https://wp.nyu.edu/sonyc/ (accessed on 19 May 2020).
43. Mydlarz, C.; Salamon, J.; Bello, J.P. The implementation of low-cost urban acoustic monitoring devices. Appl. Acoust. 2017, 117, 207–218.
44. Salamon, J.; Jacoby, C.; Bello, J.P. A Dataset and Taxonomy for Urban Sound Research. In Proceedings of the 22nd ACM International Conference on Multimedia, Orlando, FL, USA, 3–7 November 2014; ACM: New York, NY, USA, 2014; pp. 1041–1044.
45. Nencini, L. DYNAMAP monitoring network hardware development. In Proceedings of the 22nd International Congress on Sound and Vibration, Florence, Italy, 12–16 July 2015; pp. 12–16.
46. Malinowski, A.; Yu, H. Comparison of embedded system design for industrial applications. IEEE Trans. Ind. Inform. 2011, 7, 244–254.
47. Simon, G.; Maróti, M.; Lédeczi, Á.; Balogh, G.; Kusy, B.; Nádas, A.; Pap, G.; Sallai, J.; Frampton, K. Sensor network-based countersniper system. In Proceedings of the 2nd International Conference on Embedded Networked Sensor Systems, Baltimore, MD, USA, 3–5 November 2004; pp. 1–12.
48. Segura-Garcia, J.; Felici-Castell, S.; Perez-Solano, J.J.; Cobos, M.; Navarro, J.M. Low-cost alternatives for urban noise nuisance monitoring using wireless sensor networks. IEEE Sens. J. 2015, 15, 836–844.
49. Hughes, J.; Yan, J.; Soga, K. Development of wireless sensor network using bluetooth low energy (BLE) for construction noise monitoring. Int. J. Smart Sens. Intell. Syst. 2015, 8, 1379–1405.
50. Can, A.; Dekoninck, L.; Botteldooren, D. Measurement network for urban noise assessment: Comparison of mobile measurements and spatial interpolation approaches. Appl. Acoust. 2014, 83, 32–39.
51. knowles.com. SPH0645LM4H-B (Datasheet). Available online: https://www.knowles.com/docs/default-source/model-downloads/sph0645lm4h-b-datasheet-rev-c.pdf?Status=Master&sfvrsn=c1bf77b1_4 (accessed on 3 June 2020).
52. Mietlicki, F.; Gaudibert, P.; Vincent, B. HARMONICA project (HARMOnised Noise Information for Citizens and Authorities). In Proceedings of the INTER-NOISE and NOISE-CON Congress and Conference Proceedings, New York, NY, USA, 19–22 August 2012; Volume 2012, pp. 7238–7249.
53. Ajuntament.Barcelona.Cat. Wifi Barcelona. Available online: https://ajuntament.barcelona.cat/barcelonawifi/es/welcome.html (accessed on 15 June 2020).
54. ANSI. Degrees of Protection Provided by Enclosures (IP Code) (Identical National Adoption); ANSI: New York, NY, USA, 2004.
55. Alsina-Pagès, R.M.; Alías, F.; Socoró, J.C.; Orga, F.; Benocci, R.; Zambon, G. Anomalous events removal for automated traffic noise maps generation. Appl. Acoust. 2019, 151, 183–192.
56. Alías, F.; Socoró, J.C. Description of anomalous noise events for reliable dynamic traffic noise mapping in real-life urban and suburban soundscapes. Appl. Sci. 2017, 7, 146.

| Platform | Architecture | Manufacturer (Core) | Model | Frequency (MHz) | Features | Price (€) |
|---|---|---|---|---|---|---|
| Tmote Sky [34] | 16-bit RISC | TI | MSP430F1611 | 48 | TinyOS 2.x & ContikiOS | 77 |
| Waspmote | 8-bit RISC | Atmel | ATmega1281 | 14.74 | 16xWireless tech., SD Card | 228 |
| Mica2 mote [47] | 8-bit RISC | Atmel | Atmega128L | 8–16 | ISM Bands | - |
| MicaZ [48] | 8-bit RISC | Atmel | Atmega128L | 8–16 | IEEE 802.15.4 | - |
| PC Engines ALIX 3d3 [37] | x86 | AMD | Geode LX800 | 500 | Linux, Windows XP | 83 |
| Raspberry Pi [48] | ARM11 (Cortex-A) | Broadcom | BCM2835 | 700 | Ethernet, SD Card, Linux | 24 |
| RFD22301 [49] | ARM Cortex-M0 | RFDigital | R25 | 16 | BLE Module | 17 |
| BMD-200 (Rigado) [49] | ARM Cortex-M0 | Nordic Semi. | nRF51822 | 16 | BLE Module | 16 |
| Spark Core [49] | ARM Cortex-M3 | ST | STM32F103x8 | 72 | WiFi | 34 |

| Frequency Band | 16 Hz | 20 Hz | 1000 Hz | 10,000 Hz | 16,000 Hz |
|---|---|---|---|---|---|
| Class 1 | +2.5, −4.5 | ±2.5 | ±1.1 | +2.6, −3.6 | +3.5, −17 |
| Class 2 | +5.5, −∞ | ±3.5 | ±1.4 | +5.6, −∞ | +6, −∞ |
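A tolerance table of this kind can be used to check a measured frequency response against a class. The sketch below assumes that the table lists the permitted deviation, in dB, from the reference response for class 1 and class 2 instruments (presumably the sound level meter tolerance limits); the dictionary layout and the function name `within_tolerance` are hypothetical.

```python
import math

# (upper, lower) tolerance limits in dB, copied from the table above.
# A lower limit of -inf means that no lower bound is specified.
TOLERANCES_DB = {
    "class1": {16: (2.5, -4.5), 20: (2.5, -2.5), 1000: (1.1, -1.1),
               10_000: (2.6, -3.6), 16_000: (3.5, -17.0)},
    "class2": {16: (5.5, -math.inf), 20: (3.5, -3.5), 1000: (1.4, -1.4),
               10_000: (5.6, -math.inf), 16_000: (6.0, -math.inf)},
}


def within_tolerance(sensor_class: str, freq_hz: int, deviation_db: float) -> bool:
    """Return True if the measured deviation lies inside the class limits."""
    upper, lower = TOLERANCES_DB[sensor_class][freq_hz]
    return lower <= deviation_db <= upper


def classify(deviations_db: dict) -> str:
    """Return the strictest class met at every listed band, or 'none'."""
    for sensor_class in ("class1", "class2"):
        if all(within_tolerance(sensor_class, f, d) for f, d in deviations_db.items()):
            return sensor_class
    return "none"
```

For instance, a hypothetical microphone that deviates by −1.2 dB at 1000 Hz would miss the class 1 limit (±1.1 dB) but still satisfy class 2 (±1.4 dB) at that band.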

| ID | Type | Microphone Sensitivity (dB re 1 V/Pa) | Frequency Range | Price (€) |
|---|---|---|---|---|
| Adafruit I2S MEMS Microphone (SPH0645LM4H) | MEMS | −42 dB | 50 Hz to 15 kHz | 6.95 |
| KEEG1542PBL-A [37] | Electret | −42 dB | 180 Hz to 20 kHz | 2.39 |
| FG-23329-P07 [37] | Electret | −37 dB | 100 Hz to 10 kHz | 24.19 |
| CMA-4544PF-W | Electret | −44 dB | 20 Hz to 20 kHz | 0.68 |
| BL-1994 | Piezoelectric | −49 dB | 20 Hz to 10 kHz | 106 |
| BL-3497 | Piezoelectric | −49 dB | 20 Hz to 10 kHz | 98 |
| Svantek 959 (GRAS 40AE 1/2″) [50] | | | | |
| GRAS 40AE 1/2″ | Electret | −26 dB | 3.15 Hz to 20 kHz | 953 |
| CESVA SC-310 | | | | |
| CESVA C-130 | Electret | −35 dB | 10 Hz to 20 kHz | - |
| CESVA C-250 | Electret | −27 dB | 10 Hz to 20 kHz | - |
| Behringer ECM8000 | Electret | −37 dB | 215 Hz to 20 kHz | 62 |
| G.R.A.S. 41AC-2 Outdoor Microphone | Electret | −26 dB | 3.15 Hz to 20 kHz | 2800 |
| Class 2: PCE-428 | Electret | −28 dB | 20 Hz to 12.5 kHz | 1960 |
| Class 1: PCE-430 | Electret | −28 dB | 3 Hz to 20 kHz | 1070 |
| Class 1: PCE-432 | Electret | −28 dB | 3 Hz to 20 kHz | 2260 |
| Outdoor Sound Level Meter Kit PCE-428-EKIT | Electret | −26 dB | 3.15 Hz to 20 kHz | 4380 |
| Outdoor Sound Level Meter Kit PCE-430-EKIT | Electret | −28 dB | 3 Hz to 20 kHz | 5230 |
| Outdoor Sound Level Meter Kit PCE-432-EKIT | Electret | −28 dB | 3 Hz to 20 kHz | 5640 |
| WPK1710-Outdoor kit-Type 1-NoiseMeters | Electret | - | - | 5980 |
| WPK1720-Outdoor kit-Type 2-NoiseMeters | Electret | - | - | 5140 |
| M2230-WP-Outdoor kit + Basic Case-NTi audio | Electret | - | 5 Hz to 20 kHz | - |

| Outdoor Kit | Manufacturer | Price (€) |
|---|---|---|
| Outdoor Sound Monitor Kit PCE-4xx-EKIT | PCE | 3500 |
| WPK-OPT: Outdoor Noise Kit for Optimus Green Sound Level Meter | NoiseMeters | 2280 |
| WK2: The heavy-duty option for longer-term noise monitoring | Pulsar Instruments | 2570 |

| Device | Voltage | Max. Current |
|---|---|---|
| Raspberry Pi 3 | 5 V | 2.5 A |
| 3G Module | 5 V | 400 mA |
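The maximum currents above can be checked against the selected power supply with a simple budget. This is a rough sketch under stated assumptions: it takes the 5 V at 3 A (15 W) rating of the SGS-15-5 supply from the component table, assumes that both loads can draw their maximum current at the same time, and ignores the microphone, whose consumption is not listed. The function name is hypothetical.

```python
SUPPLY_VOLTAGE_V = 5.0
PSU_MAX_CURRENT_A = 3.0  # SGS-15-5 rating, 5 V at 3 A (15 W)

# Maximum currents in amperes, from the table above.
LOADS_A = {"Raspberry Pi 3": 2.5, "3G Module": 0.4}


def power_budget(loads_a: dict, supply_v: float, psu_max_a: float) -> dict:
    """Worst-case budget, assuming all loads peak simultaneously."""
    total_a = sum(loads_a.values())
    return {
        "total_current_a": total_a,
        "total_power_w": total_a * supply_v,
        "headroom_a": psu_max_a - total_a,
        "ok": total_a <= psu_max_a,
    }


if __name__ == "__main__":
    print(power_budget(LOADS_A, SUPPLY_VOLTAGE_V, PSU_MAX_CURRENT_A))
```

Under these assumptions the worst case is about 2.9 A (14.5 W), which fits within the supply rating with roughly 0.1 A of headroom.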

| Function | Component | Specifications | Cost (€) |
|---|---|---|---|
| MPU | Raspberry Pi 3 | 4x ARM Cortex-A53, 1.2 GHz | 35 |
| PSU | TT Electronics-IoT Solutions: SGS-15-5 | 5 V @ 3 A, 15 W | 7.02 |
| Microphone | Adafruit I2S MEMS Microphone Breakout-SPH0645LM4H | Measuring microphone | 6.95 |
| 3G Module | Adafruit FONA 3G Cellular | UMTS/HSDPA, WCDMA + HSDPA, AT commands | 80 |
| Box | Bud industries PNR-2601-C | IP65, polycarbonate | 10.31 |
| Total | | | 139.28 |
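The total in the component table can be reproduced, and the weight of each component in the final cost inspected, with a few lines of arithmetic. The prices are those listed above; the data layout and function names are only illustrative, and `Decimal` is used to avoid floating-point rounding on currency values.

```python
from decimal import Decimal

# (function, component, cost in euros) as listed in the component table.
BOM = [
    ("MPU", "Raspberry Pi 3", Decimal("35")),
    ("PSU", "SGS-15-5", Decimal("7.02")),
    ("Microphone", "SPH0645LM4H breakout", Decimal("6.95")),
    ("3G Module", "Adafruit FONA 3G Cellular", Decimal("80")),
    ("Box", "PNR-2601-C", Decimal("10.31")),
]


def total_cost(bom) -> Decimal:
    """Sum of all component costs."""
    return sum((cost for _, _, cost in bom), Decimal("0"))


def cost_shares(bom) -> dict:
    """Percentage of the total cost per function, rounded to one decimal."""
    total = total_cost(bom)
    return {
        function: (cost / total * 100).quantize(Decimal("0.1"))
        for function, _, cost in bom
    }


if __name__ == "__main__":
    print(total_cost(BOM))
    print(cost_shares(BOM))
```

The breakdown makes it easy to see which components dominate the price of a node; with these figures the 3G module alone accounts for more than half of the total.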

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Alsina-Pagès, R.M.; Hervás, M.; Duboc, L.; Carbassa, J. Design of a Low-Cost Configurable Acoustic Sensor for the Rapid Development of Sound Recognition Applications. Electronics 2020, 9, 1155. https://doi.org/10.3390/electronics9071155
