A Synchronized Multi-Unit Wireless Platform for Long-Term Activity Monitoring

Abstract

1. Introduction

2. Related Work

2.1. Gait Analysis through sEMG

2.2. Multi-Board Acquisition Systems

2.3. Time Synchronization Protocols

- Termination: every node eventually makes a decision;

- Agreement: every pair of nodes agrees on the same decision;

- Validity: every decided value is a proposed one;

- Integrity: every node makes a decision at most once.
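
The properties listed above can be checked mechanically on a recorded run. The following is a minimal sketch, assuming a hypothetical data layout in which each node has one proposed value and a list of the values it decided. The function name `check_consensus`, the node names, and the numeric values are illustrative assumptions and are not taken from the platform. Integrity is checked here in its usual sense, that no node decides more than once.

```python
from typing import Dict, Hashable, List


def check_consensus(proposed: Dict[str, Hashable],
                    decided: Dict[str, List[Hashable]]) -> Dict[str, bool]:
    """Check the four consensus properties on one finished run.

    proposed maps node id -> value that node proposed.
    decided maps node id -> list of values that node decided, in order.
    Both layouts are assumptions made for this sketch.
    """
    all_decisions = [v for values in decided.values() for v in values]
    proposed_values = set(proposed.values())
    return {
        # Termination: every node has decided by the end of the run.
        "termination": all(len(decided.get(n, [])) >= 1 for n in proposed),
        # Agreement: no two decisions differ.
        "agreement": len(set(all_decisions)) <= 1,
        # Validity: every decided value was proposed by some node.
        "validity": all(v in proposed_values for v in all_decisions),
        # Integrity: no node decides more than once.
        "integrity": all(len(values) <= 1 for values in decided.values()),
    }


# Hypothetical run: three nodes propose clock offsets (arbitrary units).
proposed = {"master": 100, "slave1": 103, "slave2": 98}
good_run = {"master": [100], "slave1": [100], "slave2": [100]}
bad_run = {"master": [100], "slave1": [103, 100], "slave2": []}

print(check_consensus(proposed, good_run))
print(check_consensus(proposed, bad_run))
```

In the second run, one node never decides and another decides twice with different values, so only validity holds.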

3. Problem Formulation

3.1. Hardware Implementation

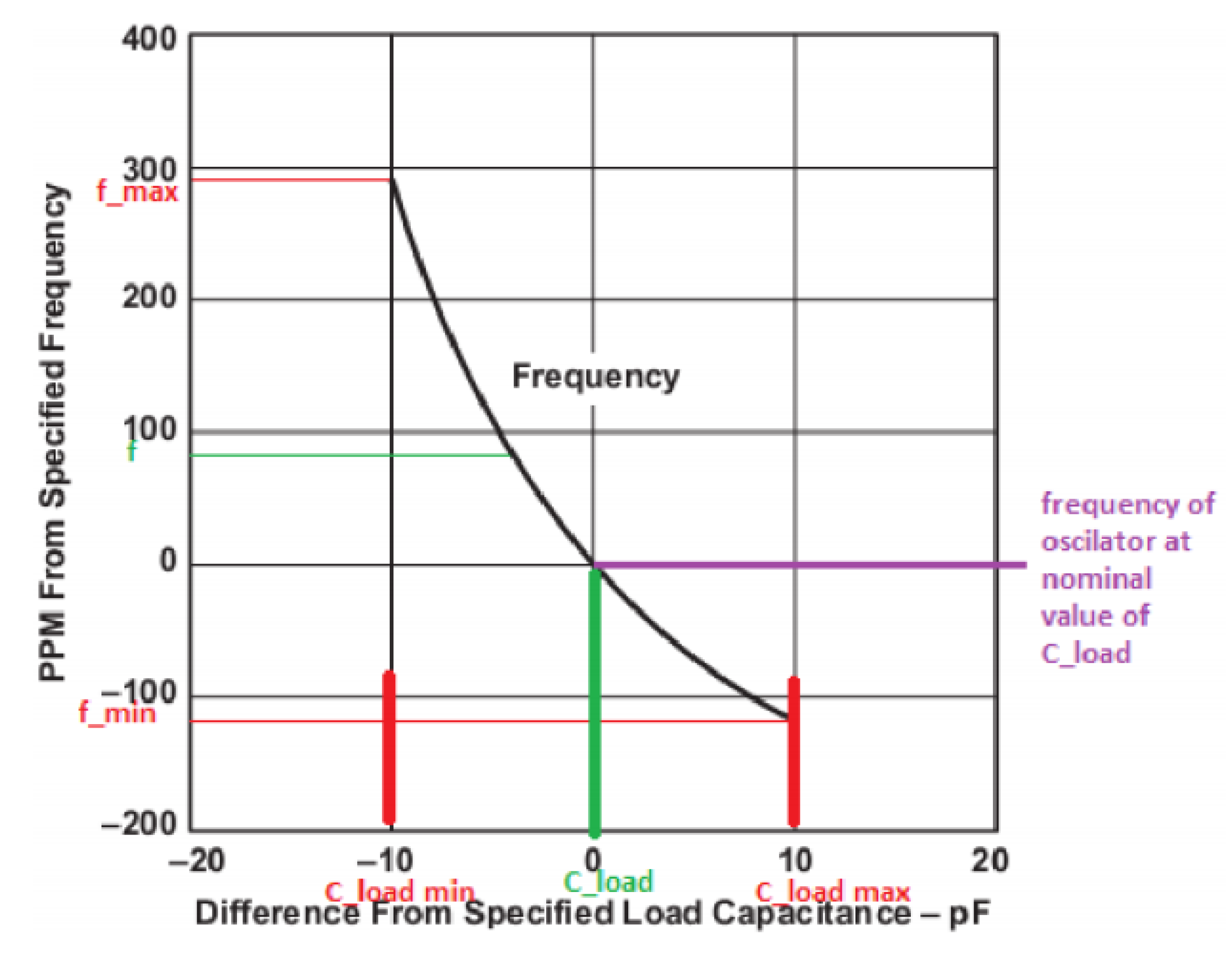

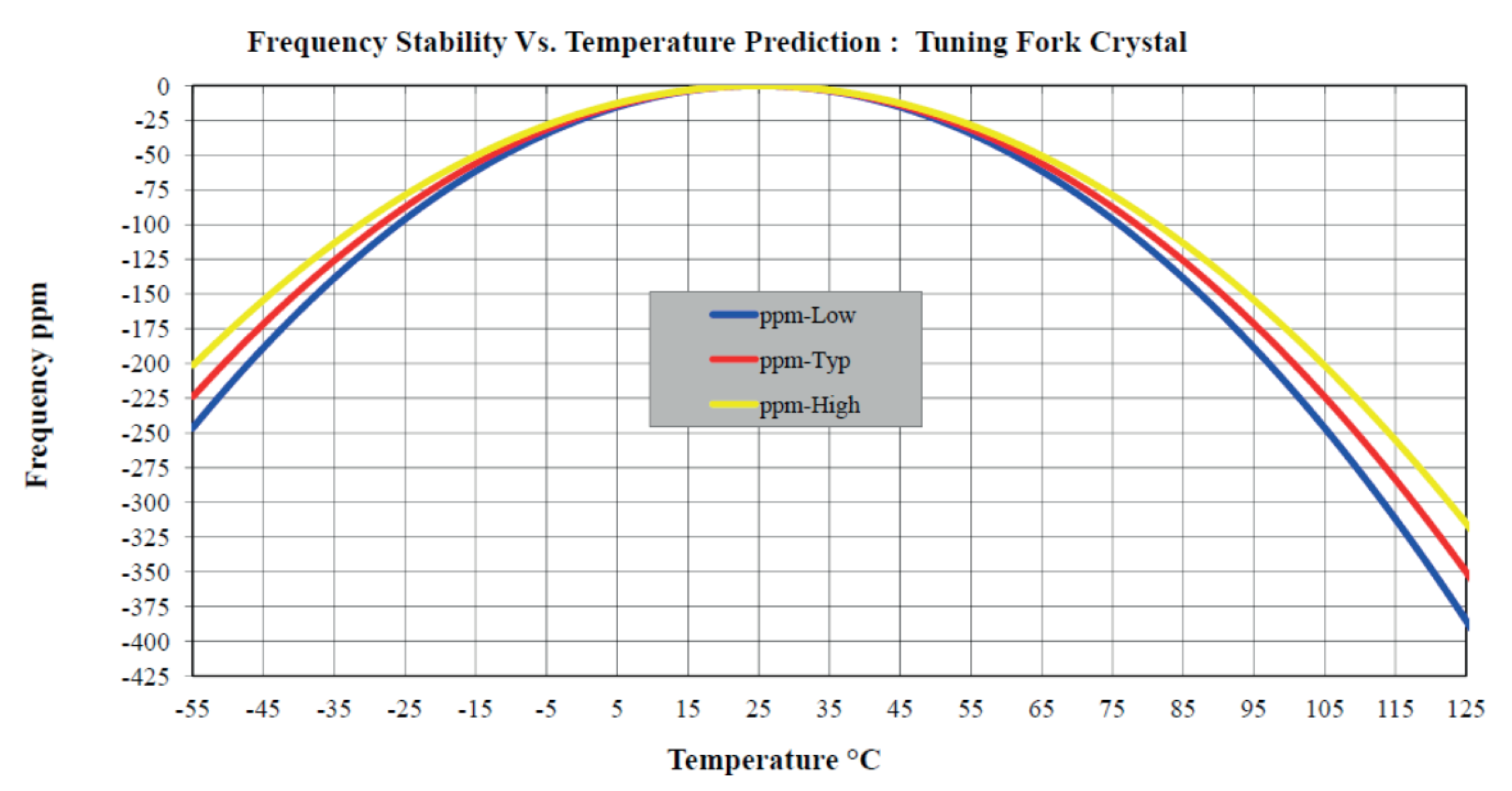

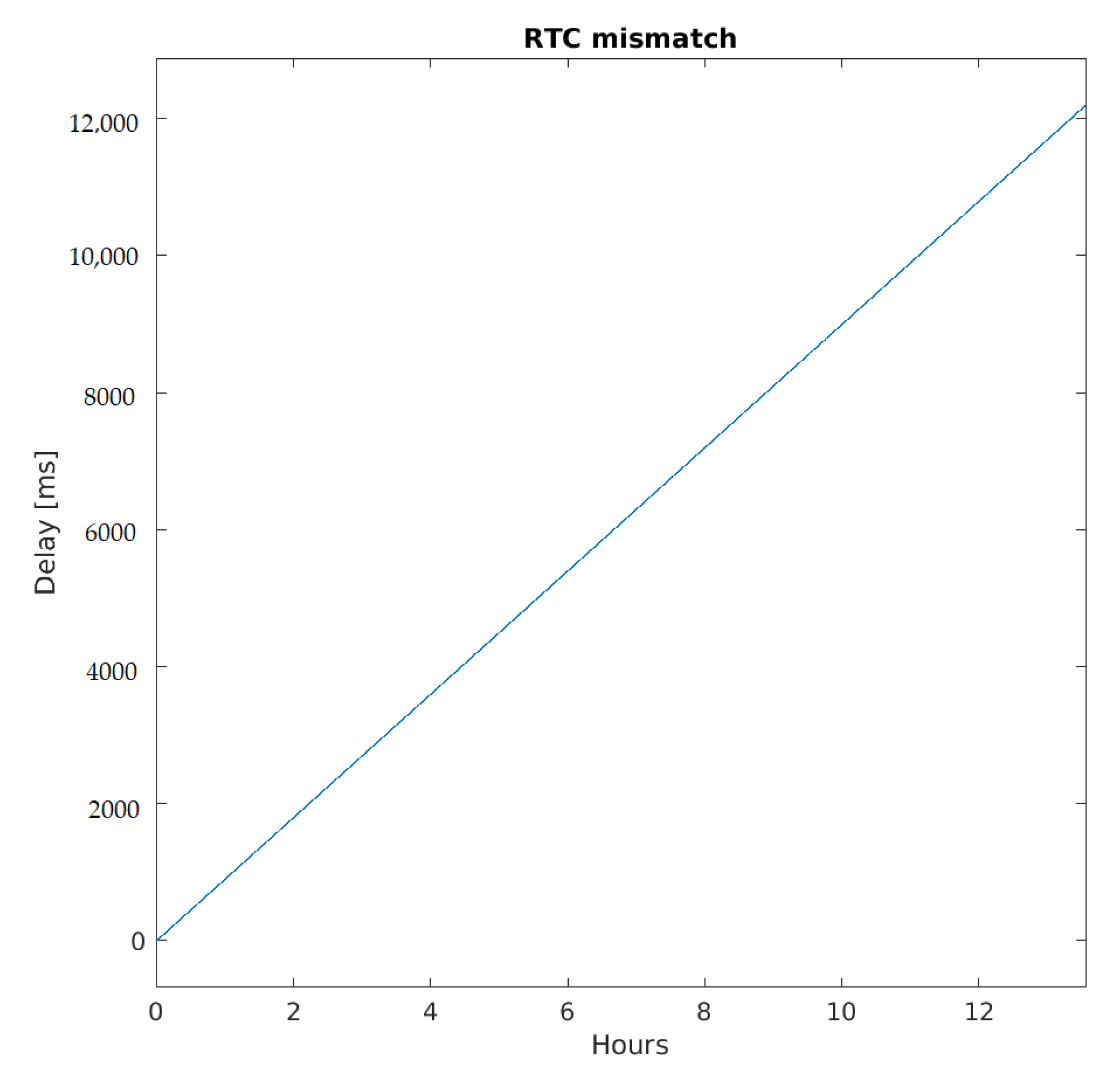

3.1.1. RTC Mismatch

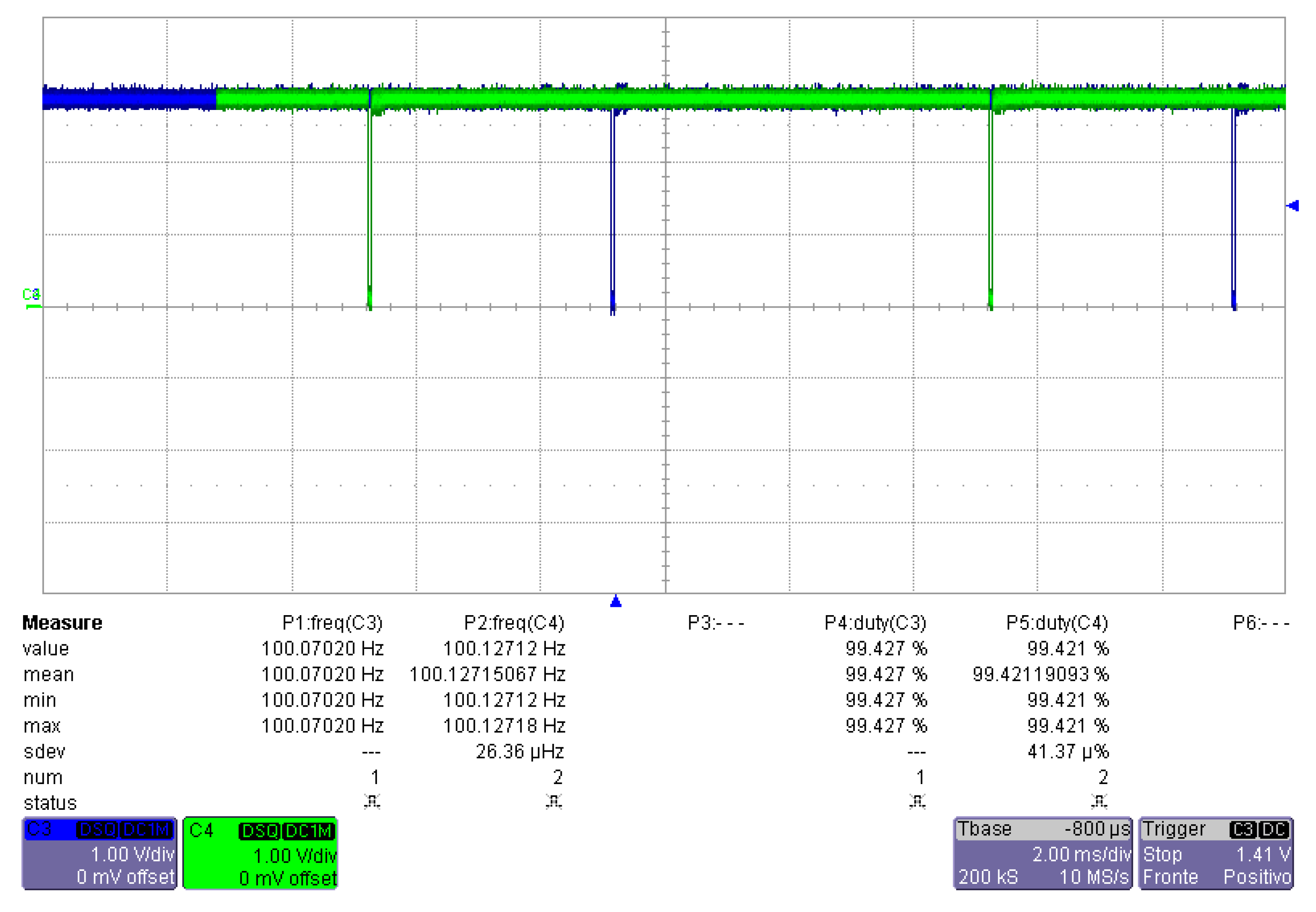

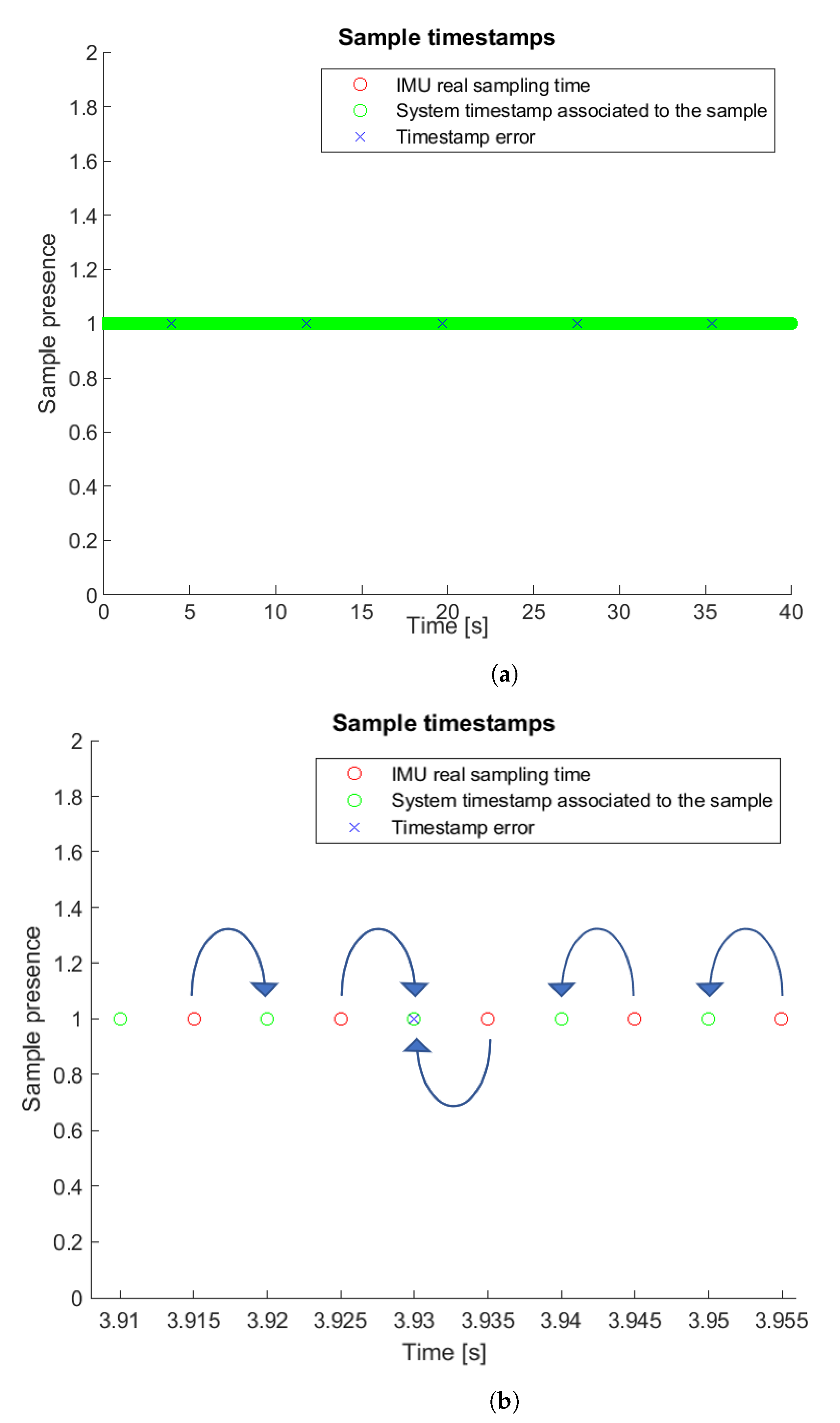

3.1.2. IMUs Sampling Frequency Differences

3.2. Non-Hardware Implementation

3.2.1. Micro SD Card Speed Classes

3.2.2. Network Topology

- Send time: the time from the instant the message is composed to the instant it arrives at the network interface;

- Access time: the waiting time for the shared communication channel to become free;

- Transmission time: the time needed for the sender to put the message on the communication channel;

- Propagation time: the traveling time of the electromagnetic signal from the sender to the receiver;

- Reception time: the dual time of
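
The decomposition above can be sketched as a simple sum of the listed components. The following is a minimal illustration, assuming the one-way delay is additive in the five terms. The class and function names, the 1 Mbit/s bit rate, the 2 m distance, and every numeric value are hypothetical and are not measurements from the platform.

```python
from dataclasses import dataclass

SPEED_OF_LIGHT_M_S = 299_792_458.0


@dataclass
class MessageDelay:
    """One-way message delay split into five components, all in seconds."""
    send: float
    access: float
    transmission: float
    propagation: float
    reception: float

    def total(self) -> float:
        # Assumption of this sketch: the components simply add up.
        return (self.send + self.access + self.transmission
                + self.propagation + self.reception)


def propagation_time(distance_m: float) -> float:
    """Free-space travel time of the radio signal over distance_m metres."""
    return distance_m / SPEED_OF_LIGHT_M_S


def transmission_time(payload_bits: int, bit_rate_bps: float) -> float:
    """Time needed to clock payload_bits onto the channel at bit_rate_bps."""
    return payload_bits / bit_rate_bps


# Hypothetical example: a 256-bit message over a 1 Mbit/s link, nodes 2 m apart.
delay = MessageDelay(
    send=100e-6,                      # assumed value
    access=500e-6,                    # assumed value
    transmission=transmission_time(256, 1e6),
    propagation=propagation_time(2.0),
    reception=100e-6,                 # assumed value
)
print(f"propagation: {delay.propagation * 1e9:.2f} ns")
print(f"total:       {delay.total() * 1e6:.2f} us")
```

At body-area distances the propagation term is a few nanoseconds, several orders of magnitude below the other assumed terms, which is why it is often neglected in such budgets.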

4. Platform Presentation

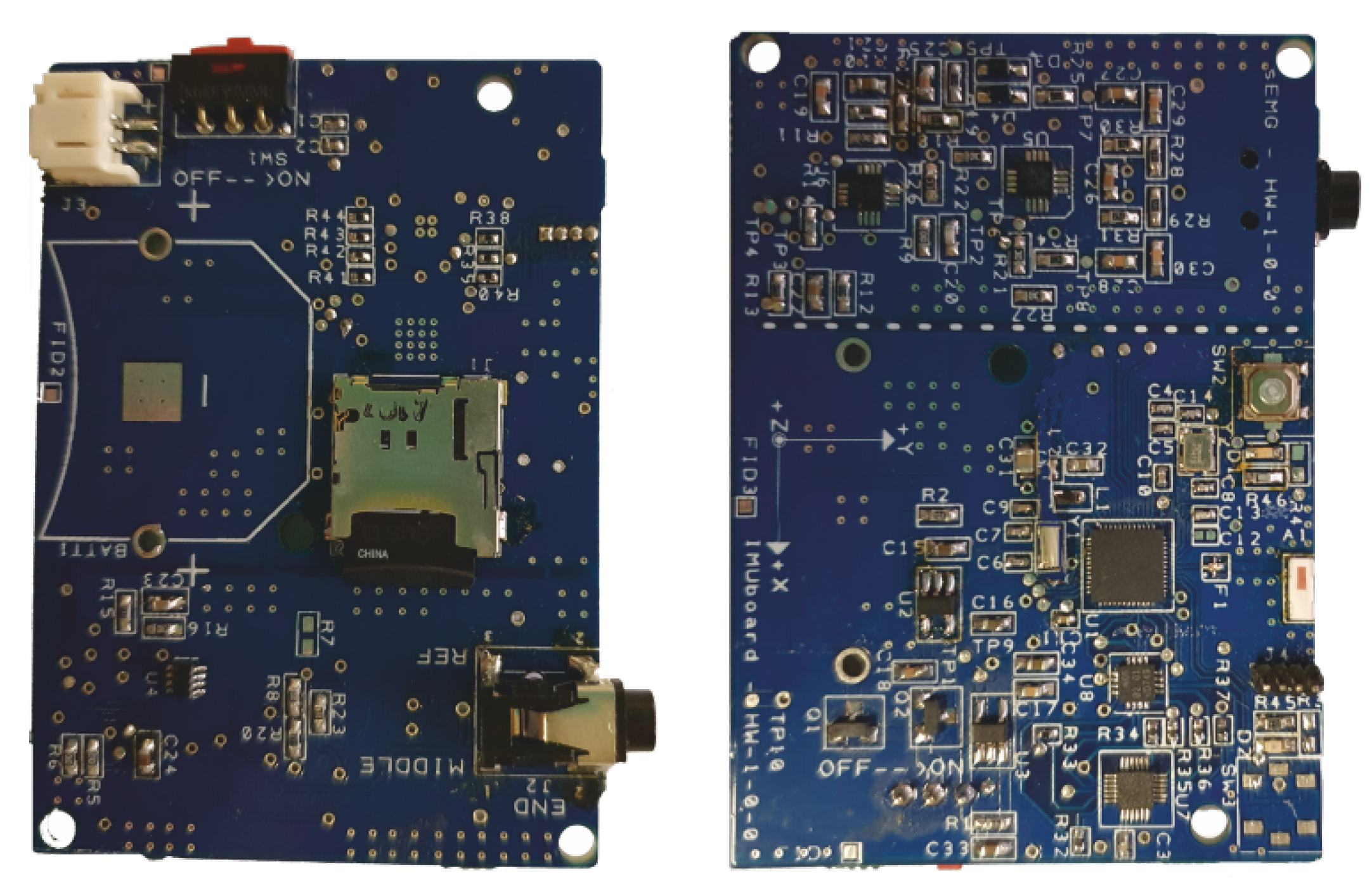

4.1. Hardware of IMU Board

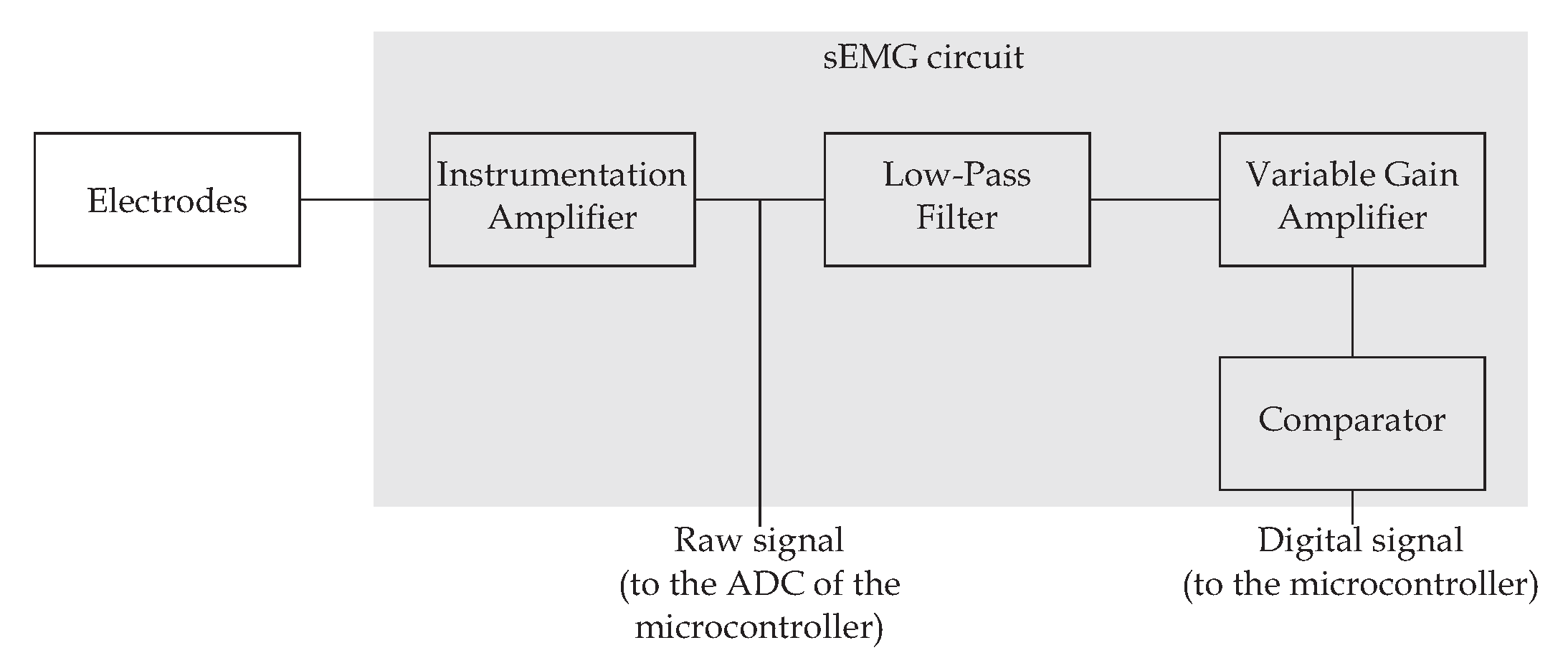

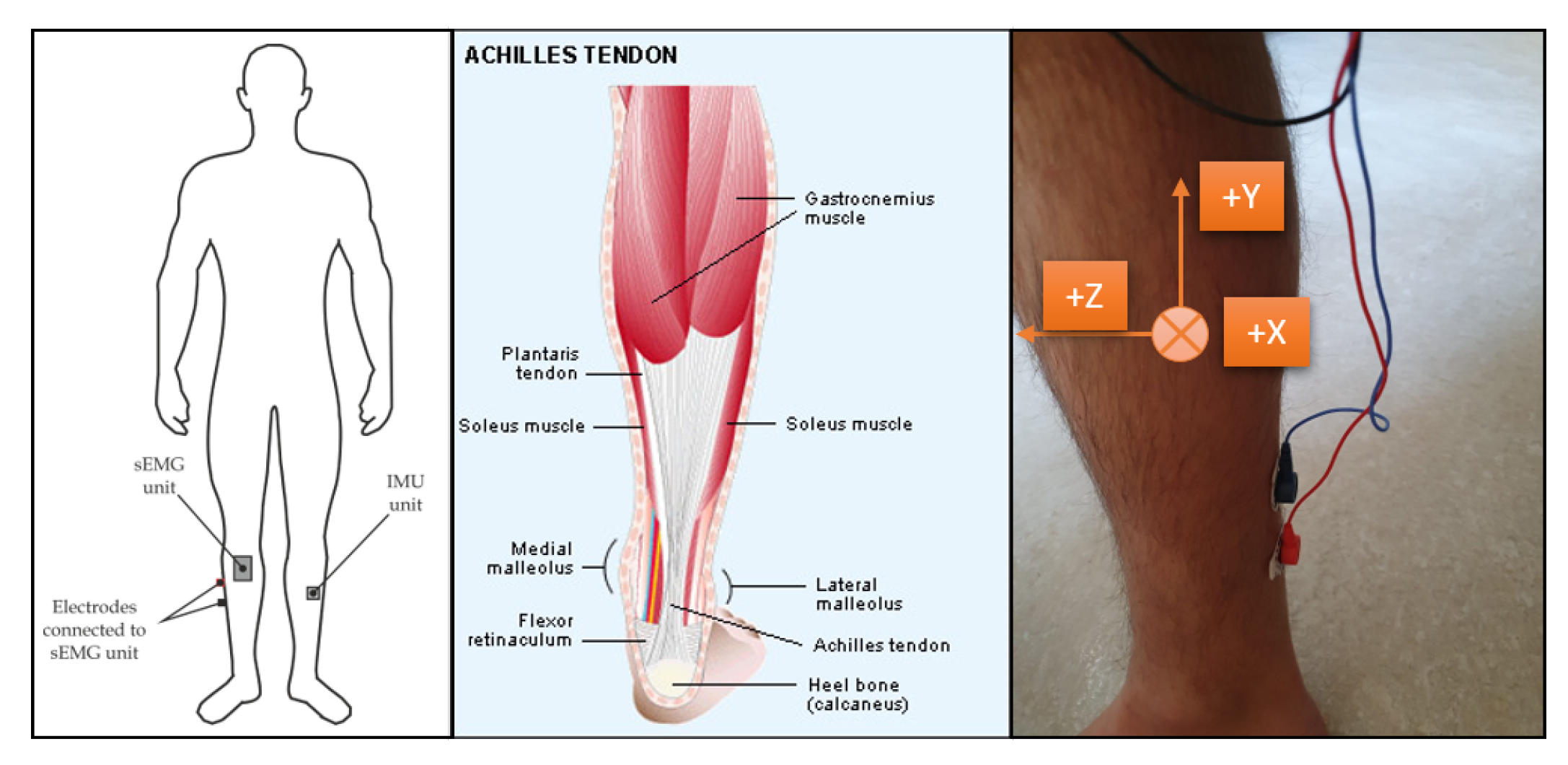

4.2. Hardware of sEMG Board

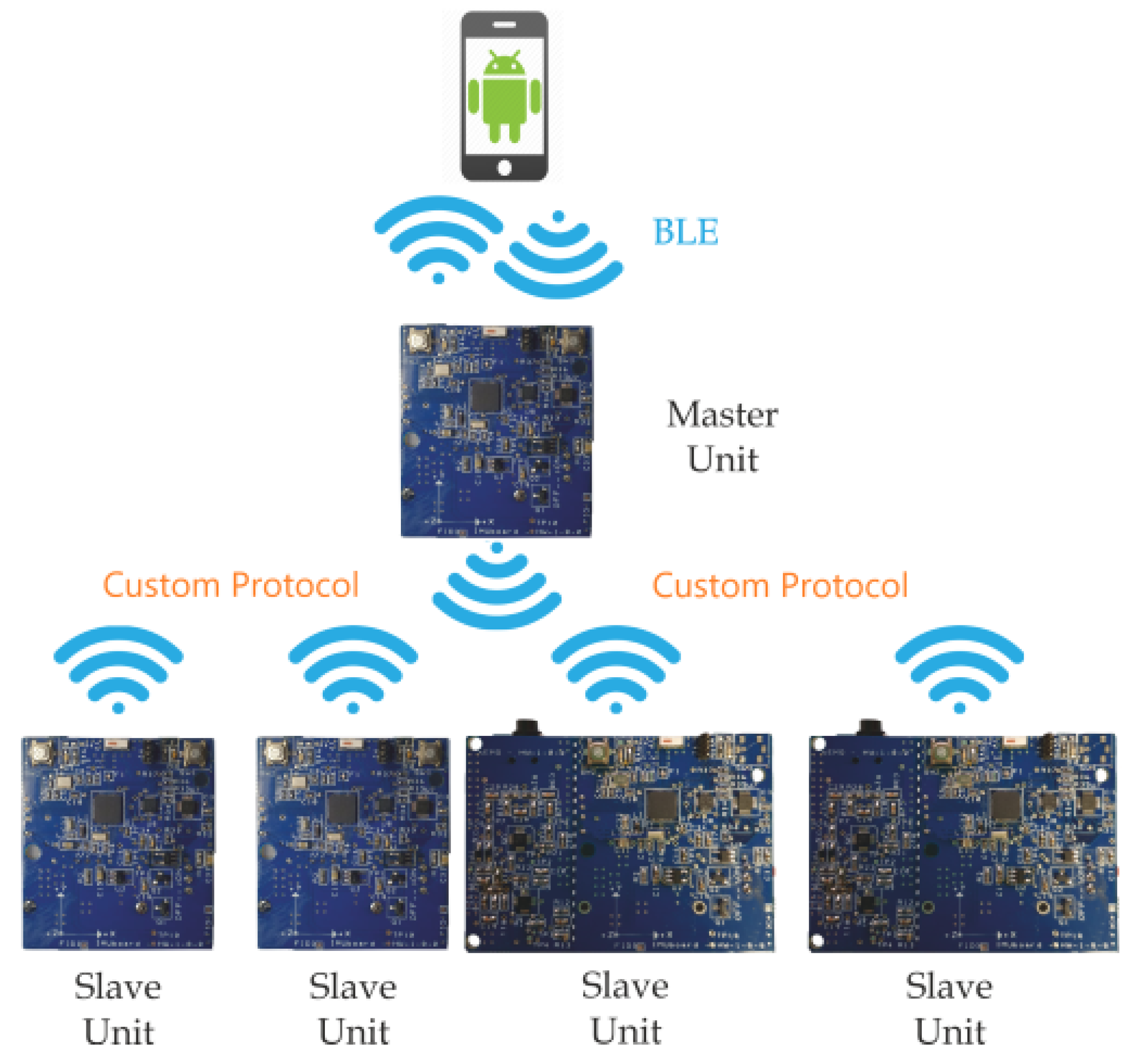

4.3. Master–Slave Architecture

4.4. Android App

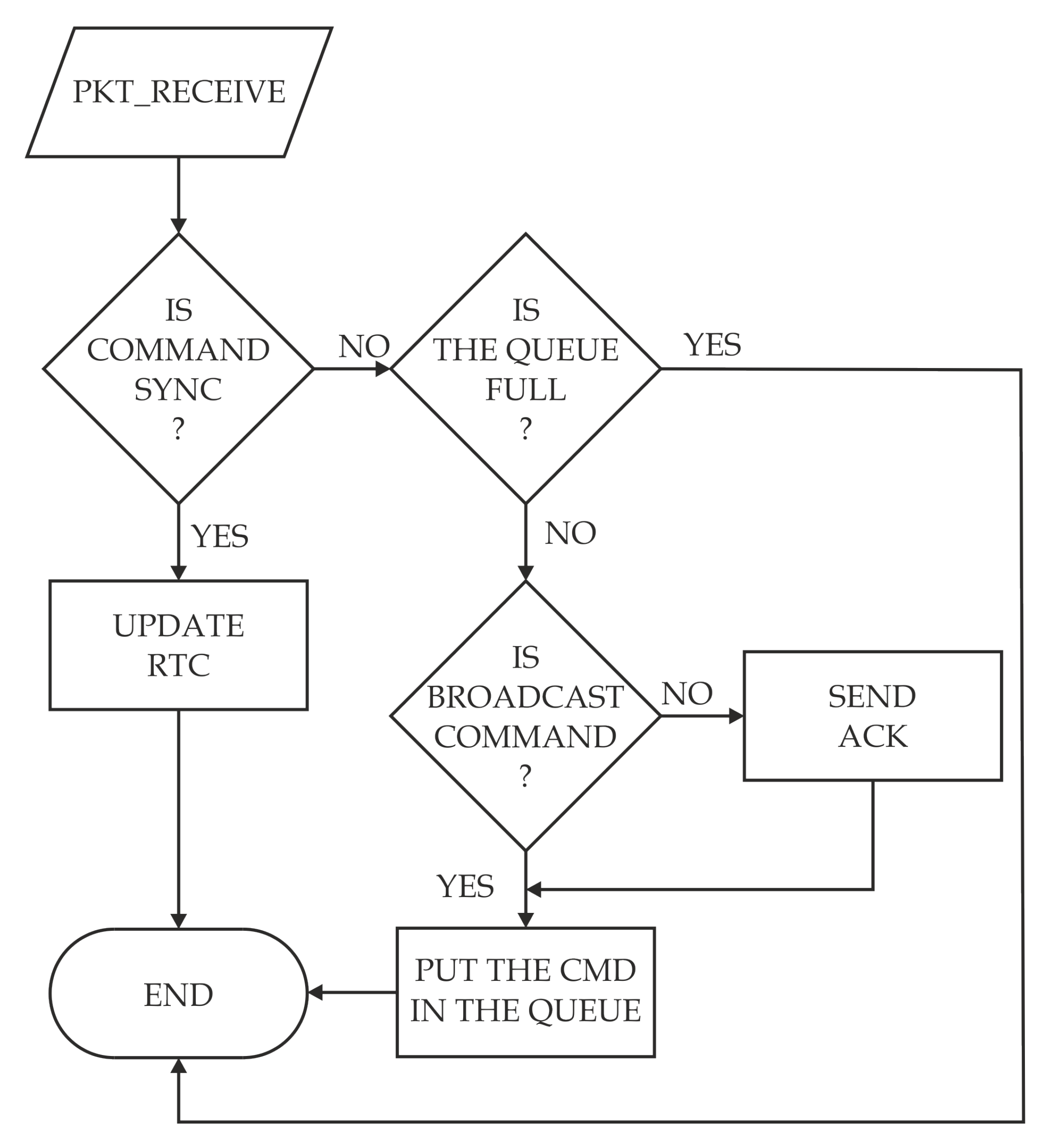

4.5. Firmware Considerations

5. Experimental Results

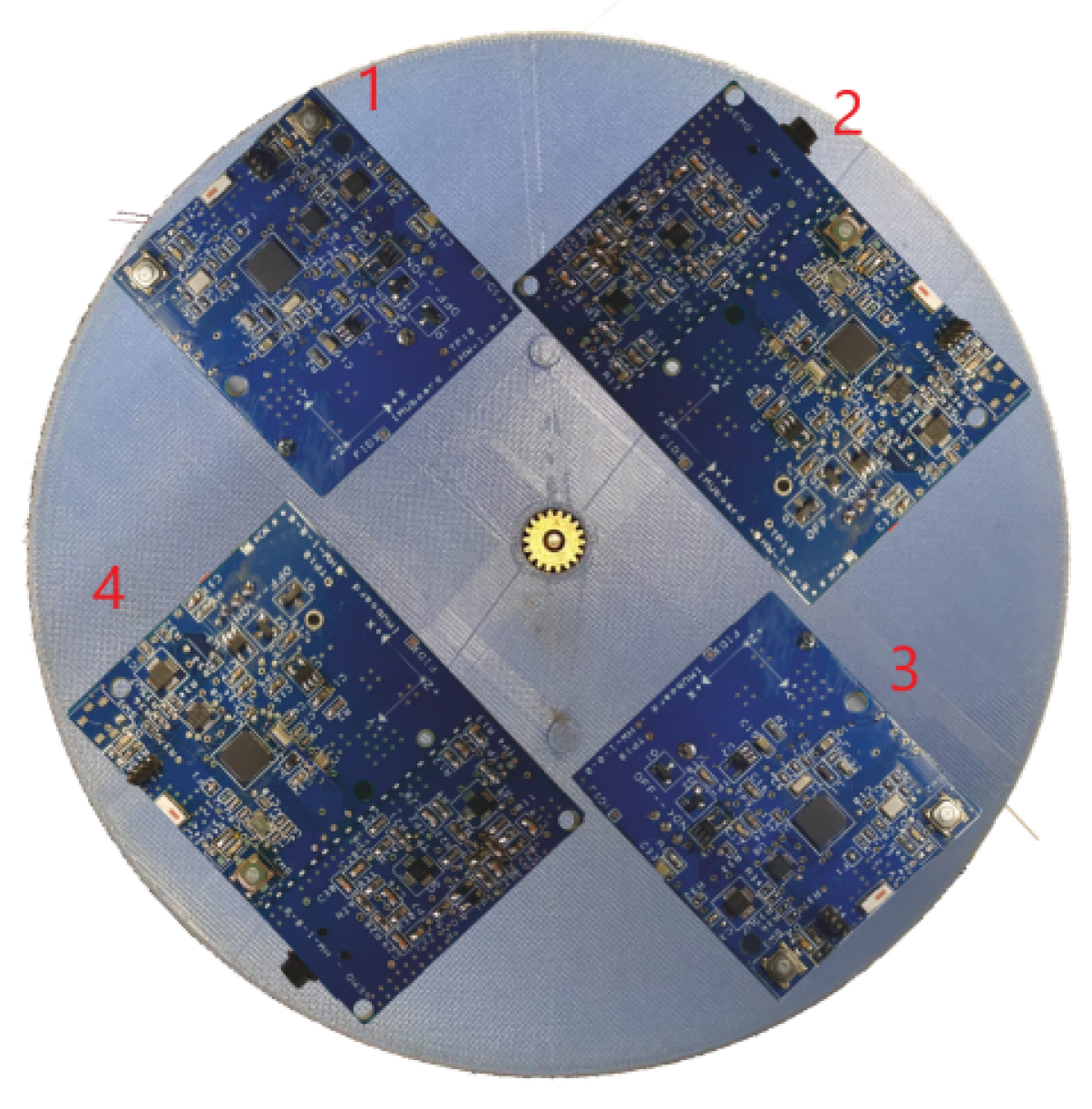

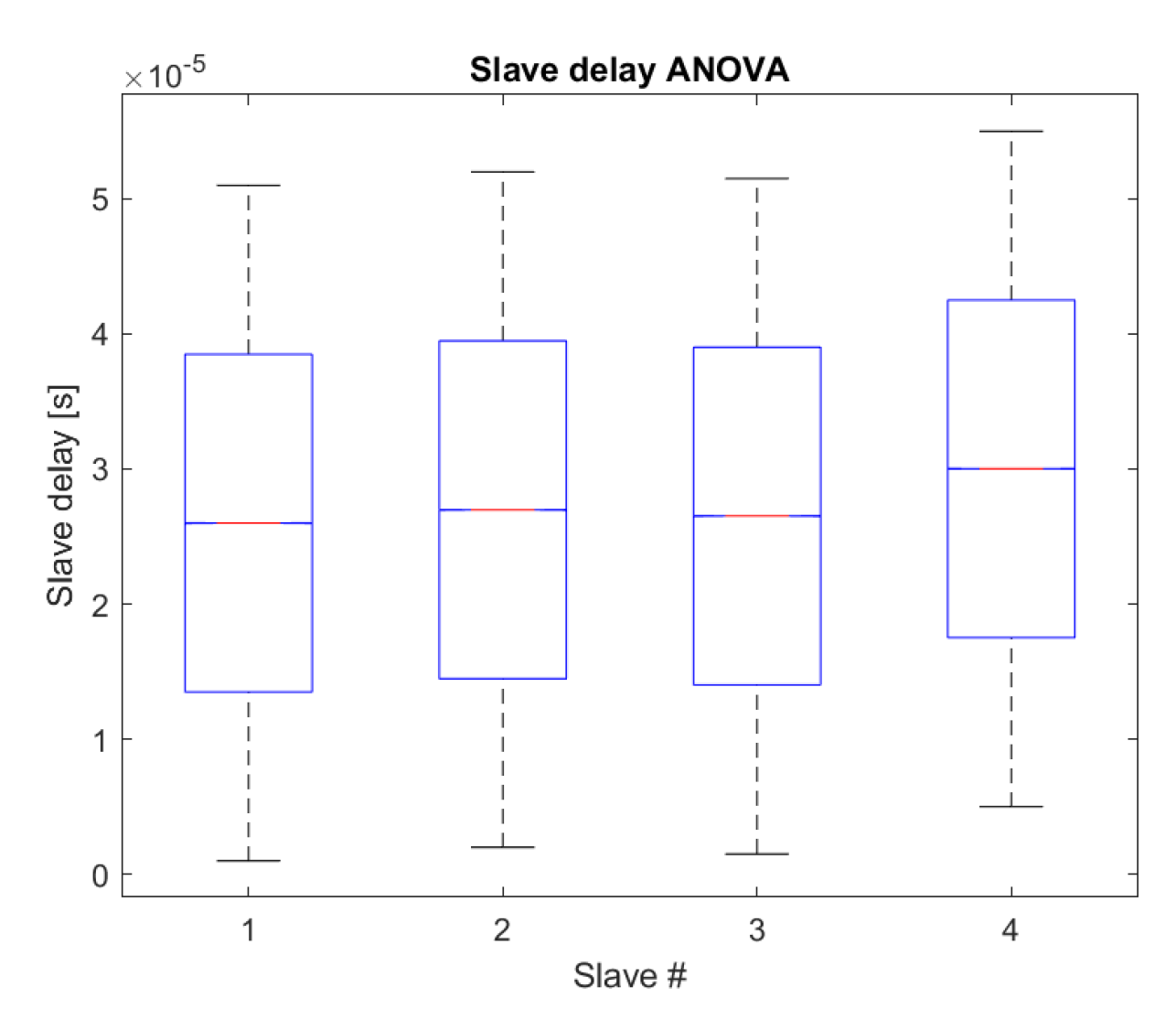

5.1. Rotating Platform

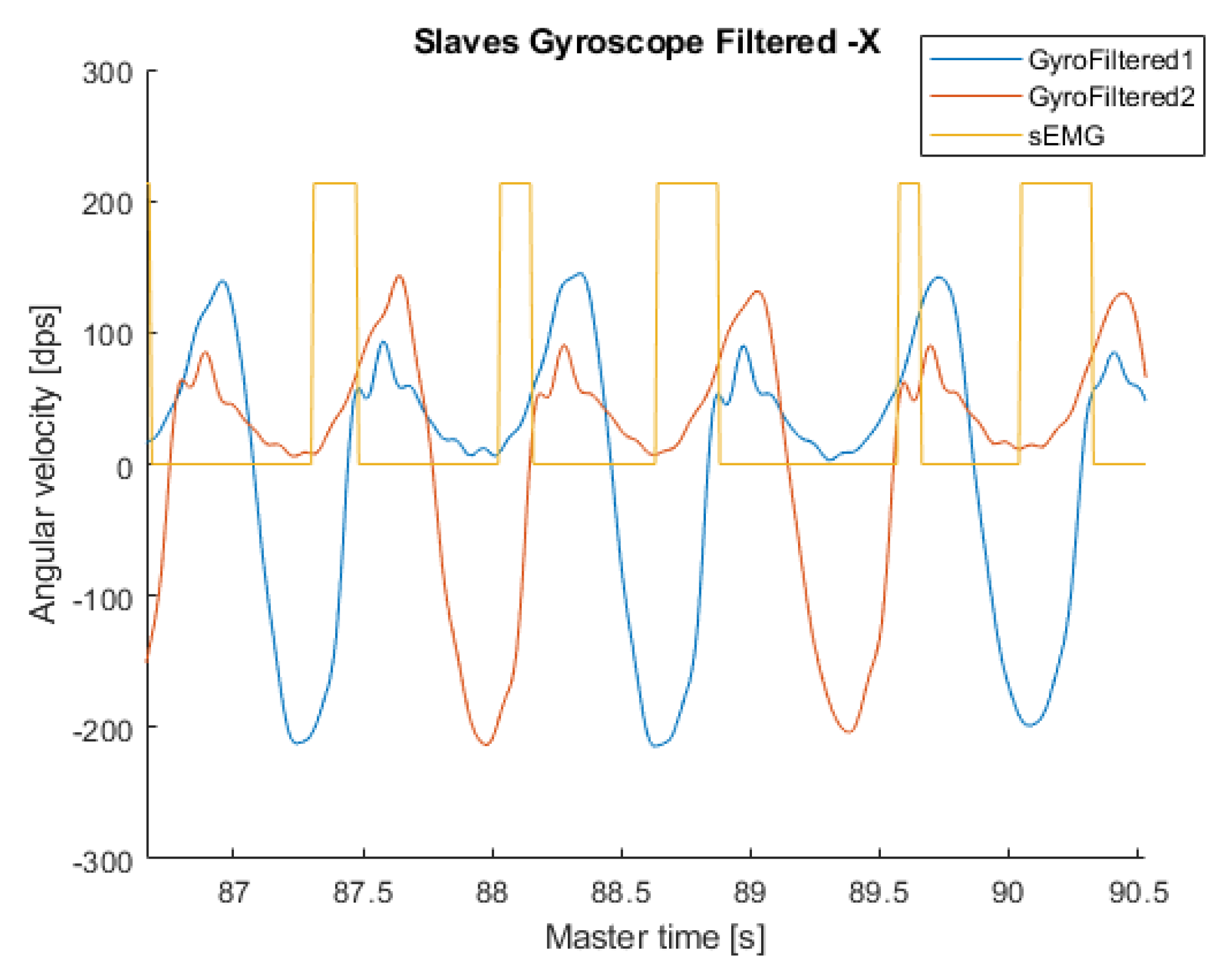

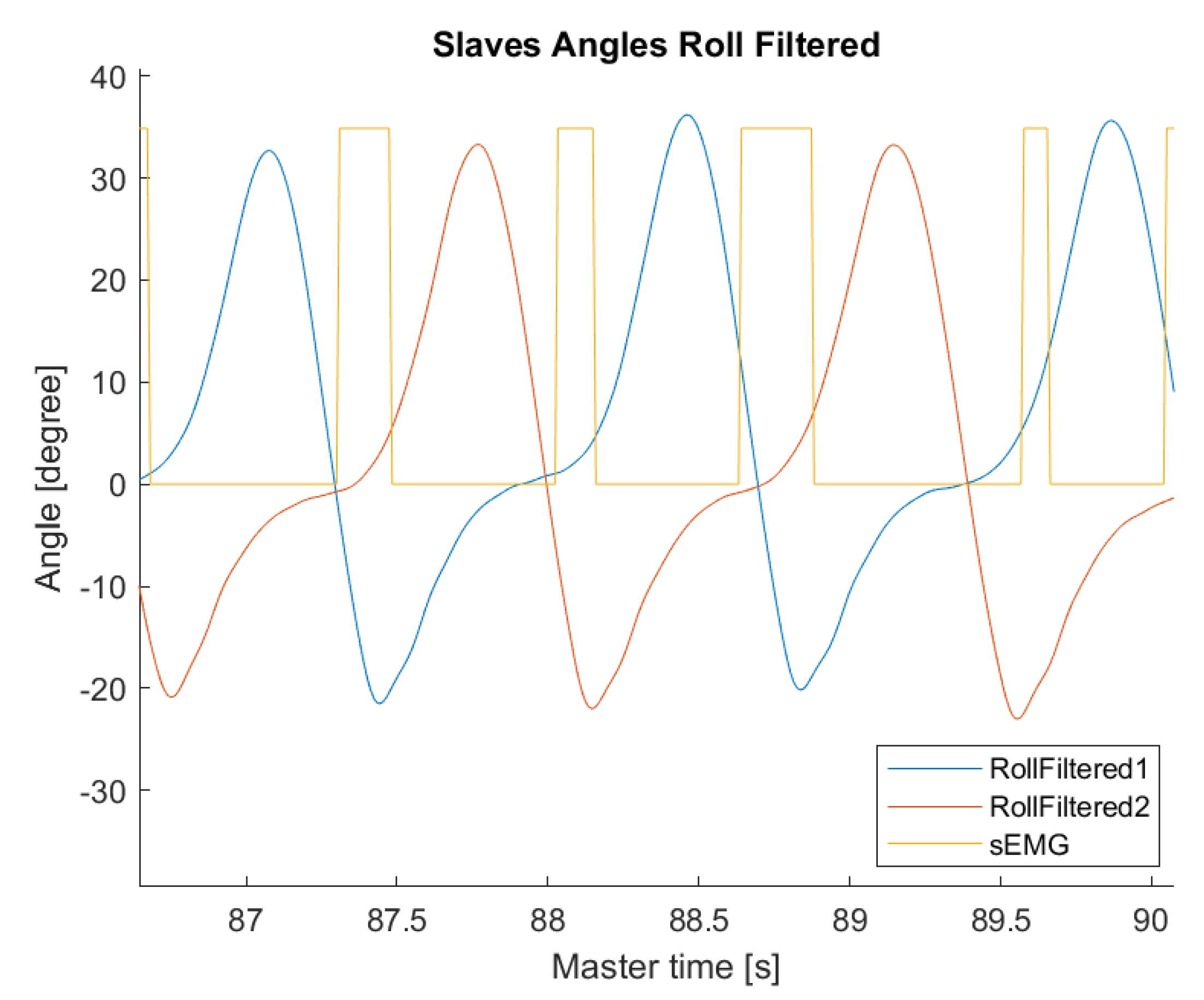

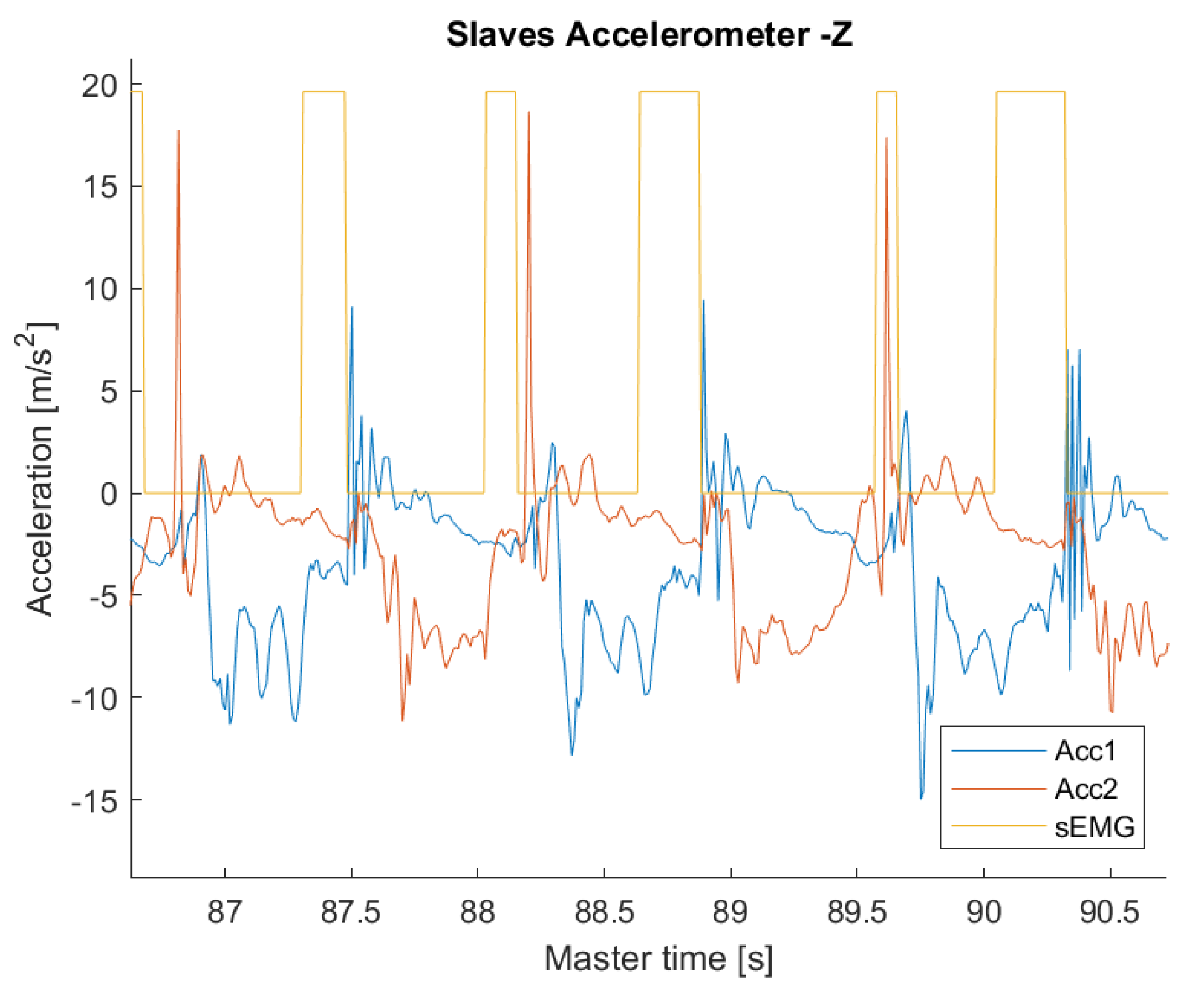

5.2. Walking

6. Conclusions

Author Contributions

Funding

Conflicts of Interest


© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Coviello, G.; Avitabile, G.; Florio, A. A Synchronized Multi-Unit Wireless Platform for Long-Term Activity Monitoring. Electronics 2020, 9, 1118. https://doi.org/10.3390/electronics9071118
