1. Introduction

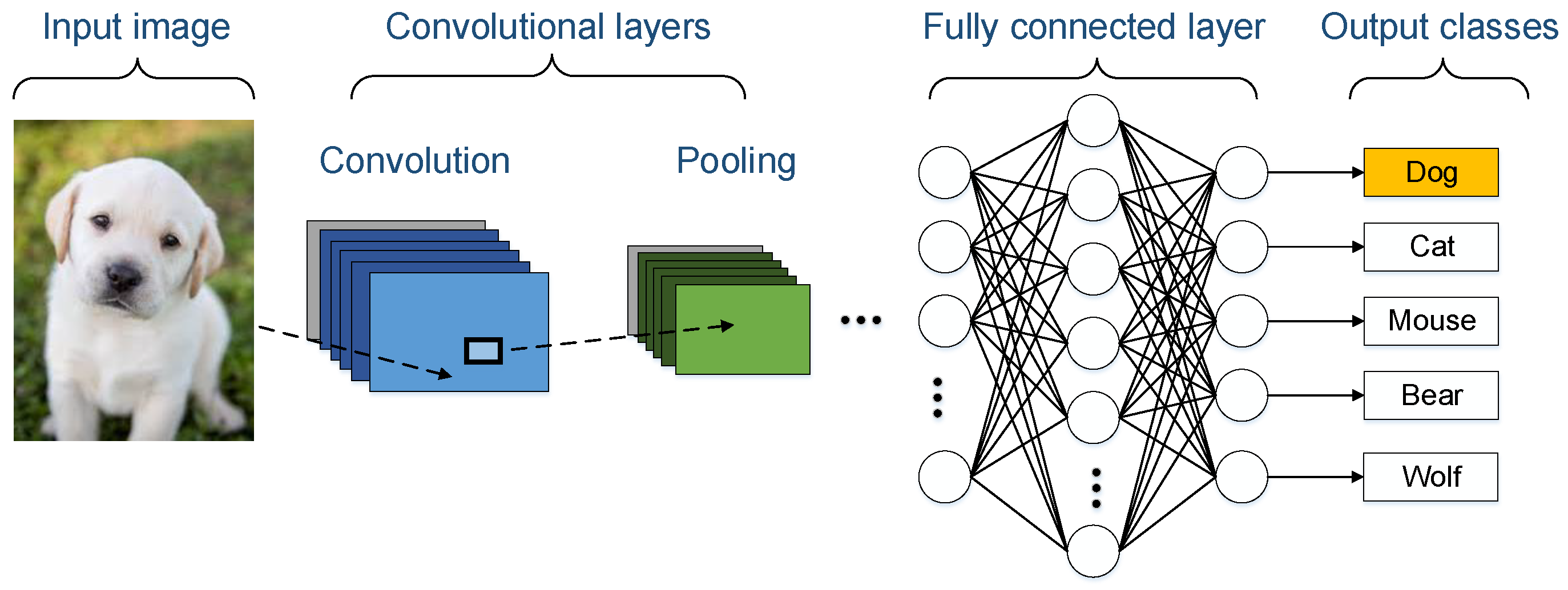

The impact of machine learning and deep learning on our society is growing rapidly, owing to their diverse technological advantages. Convolutional neural networks (CNNs) are among the most notable architectures and provide a very powerful tool for many applications, such as video and image analysis, speech recognition, and recommender systems [1]. On the other hand, CNNs require considerable computing power. To satisfy such requirements, it is possible to use high-performance processors, like graphics processing units (GPUs) [2]. However, GPUs have some shortcomings that limit their usability and suitability in day-to-day mission-critical and real-time scenarios. The first downside of using GPUs is their high power consumption. This makes GPUs hard to use in robotics, drones, self-driving cars, and Internet of Things (IoT) devices, even though these fields can benefit greatly from deep learning algorithms. The second downside is the lack of external inputs/outputs (I/Os). GPUs are typically accessible through a PCI Express bus on their host computer. The absence of direct I/Os makes it hard to use them in mission-critical scenarios that need prompt control actions.

In particular, most deep learning algorithms use the floating-point number representation of CPUs and GPUs. However, it is possible to benefit from custom fixed-point numbers (quantized numbers) in order to reduce the power consumption and circuit footprint and to increase the number of compute engines [3]. It has been shown that many convolutional neural networks can work with eight-bit quantized numbers or less [4]. Hence, GPUs waste significant computing resources and electrical power when performing inference in most deep learning algorithms. A better balance can be restored by designing application-specific computing units that use quantized arithmetic. Quantized numbers provide other benefits, such as improved memory access performance, as they occupy less space in the external or internal memory of compute devices. Memory access performance and memory footprint are particularly important for hardware designers in many low-power devices. Most portable hardware devices, such as cell phones, drones, and Internet of Things (IoT) sensors, require memory optimization due to the limited resources available on these devices.
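To make the idea of quantized numbers concrete, the following minimal sketch maps floating-point weights to signed eight-bit fixed-point codes and back. The choice of five fractional bits, the saturation behavior, and the sample weights are illustrative assumptions; they are not the quantization scheme of any particular tool.

```python
def quantize(values, total_bits=8, frac_bits=5):
    """Map floats to signed fixed-point integer codes with saturation."""
    scale = 1 << frac_bits                      # 2**frac_bits steps per unit
    q_min = -(1 << (total_bits - 1))            # -128 for eight bits
    q_max = (1 << (total_bits - 1)) - 1         # +127 for eight bits
    return [max(q_min, min(q_max, round(v * scale))) for v in values]


def dequantize(codes, frac_bits=5):
    """Recover the real values represented by the integer codes."""
    scale = 1 << frac_bits
    return [c / scale for c in codes]


# Hypothetical weights, chosen only to show rounding and saturation.
weights = [0.5, -0.25, 1.3, -4.2, 0.03]
codes = quantize(weights)
restored = dequantize(codes)

# One byte per weight instead of four bytes for 32-bit floating point.
bytes_fp32 = 4 * len(weights)
bytes_q8 = 1 * len(weights)

print(codes)     # [16, -8, 42, -128, 1]
print(restored)  # [0.5, -0.25, 1.3125, -4.0, 0.03125]
```

The value -4.2 falls outside the representable range of this format and saturates at -4.0, which illustrates why the number of fractional bits has to be chosen with the value range of each layer in mind.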

Field Programmable Gate Arrays (FPGAs) can be used in these scenarios to tackle the problems caused by the limitations of GPUs without compromising the accuracy of the algorithm. Using quantized deep learning algorithms can solve the power consumption and memory efficiency issues on FPGAs, as the size of the implemented circuits shrinks with quantization. In [5], the authors reported that the theoretical peak performance of six-bit integer matrix multiplication (GEMM) in the Titan X GPU is almost 180 GOP/s/Watt, while it can be as high as 380 GOP/s/Watt for Stratix 10 and 200 GOP/s/Watt for Arria 10 FPGAs. This means that high-end FPGAs are able to provide comparable or even better performance per Watt than modern GPUs. FPGAs are scalable and configurable. Thus, a deep convolutional neural network can be configured and scaled to be used in a much smaller FPGA in order to reduce the power consumption for mobile devices. The present paper investigates various design-space exploration methods that can fit a desired CNN to a selected FPGA with limited resources. Having massive connectivity through I/Os is natural in FPGAs. Furthermore, FPGAs can be flexibly optimized and tailored to perform various applications in industrial and real-time mobile workloads [6]. FPGA vendors, such as Intel, offer a range of commercialized System-on-Chip (SoC) devices that integrate both a processor and FPGA fabric into a single chip (see, for example, [7]). These SoCs are widely used in mobile devices, and they make the use of application-specific processors obsolete in many cases.

Several research contributions address the architecture, synthesis, and optimization of deep learning algorithms on FPGAs [8,9,10,11,12]. Nonetheless, little work has been done on integrating the results into a single tool. An in-depth literature review in Section 2 shows that tools such as [13,14] provide neither integrated design-space exploration nor a generic CNN model analyzer. In [11,12,15], the authors specifically discuss the hardware design and not the automation of the synthesis. In [9], fitting the design automatically to different FPGA targets is not discussed. This presents a major challenge, as there are many degrees of freedom in designing a CNN. There is no fixed architecture for a CNN, as a designer can choose as many convolution, pooling, and fully connected layers as needed to reach the desired accuracy. In addition, there is a need for design-space exploration methods that optimize resource utilization on FPGAs.

A number of development environments exist for the description and architectural synthesis of digital systems [16,17]. However, they are designed for general-purpose applications. This means that there is a great opportunity to build a library that uses these hardware design environments to implement deep learning algorithms. In addition, there are many development frameworks and libraries for deep learning in the Python language. Some notable libraries for deep learning are PyTorch, Keras, TensorFlow, and Caffe. Another challenge is designing a synthesis tool for FPGAs that can accept models produced by all of the aforementioned Python libraries and the many others available to machine learning developers.

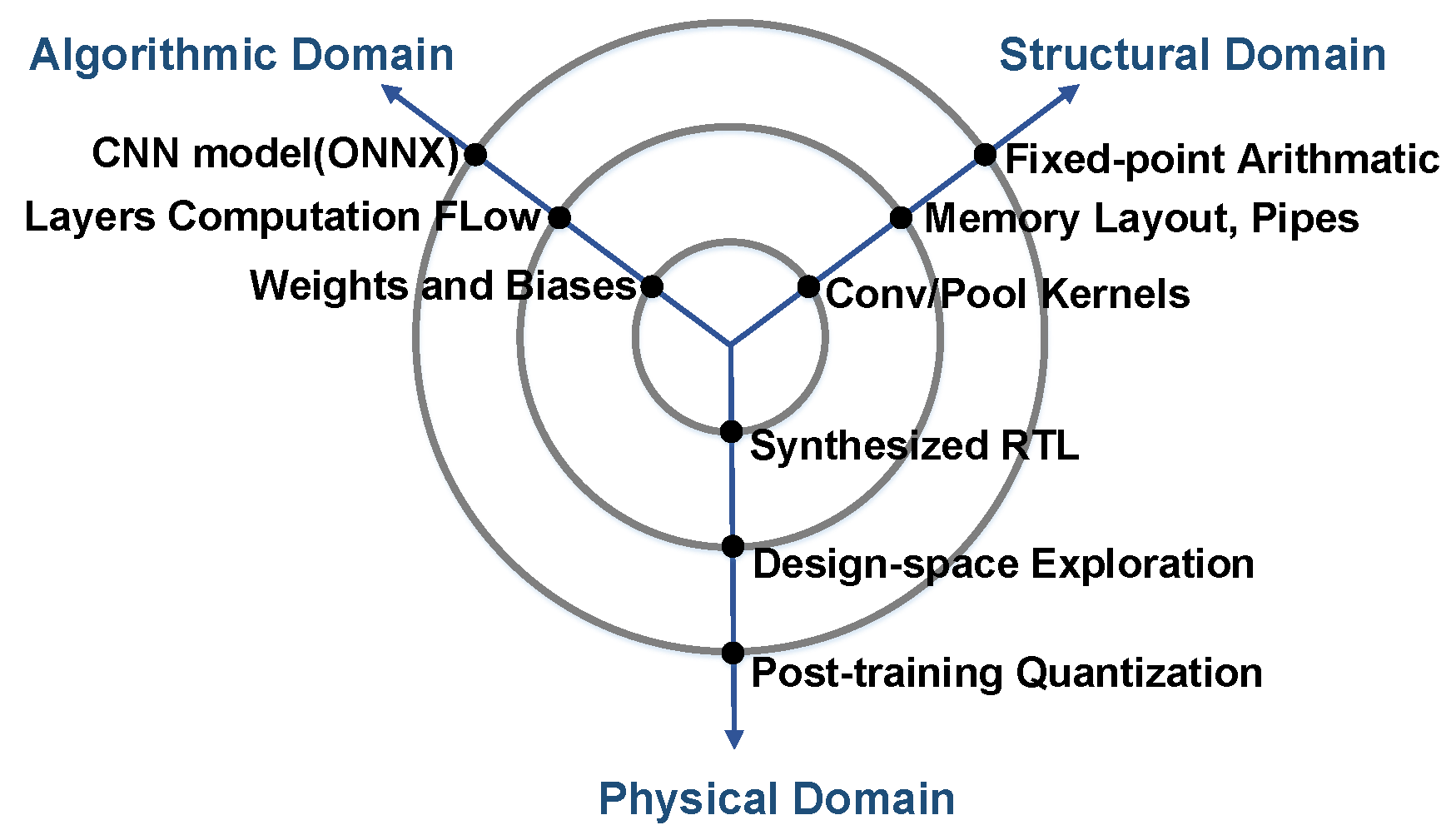

The present paper proposes methods for tackling research challenges that are related to the integration of high-level synthesis tools for convolutional neural networks on FPGAs. The contributions of the present paper are:

Generalized model analysis

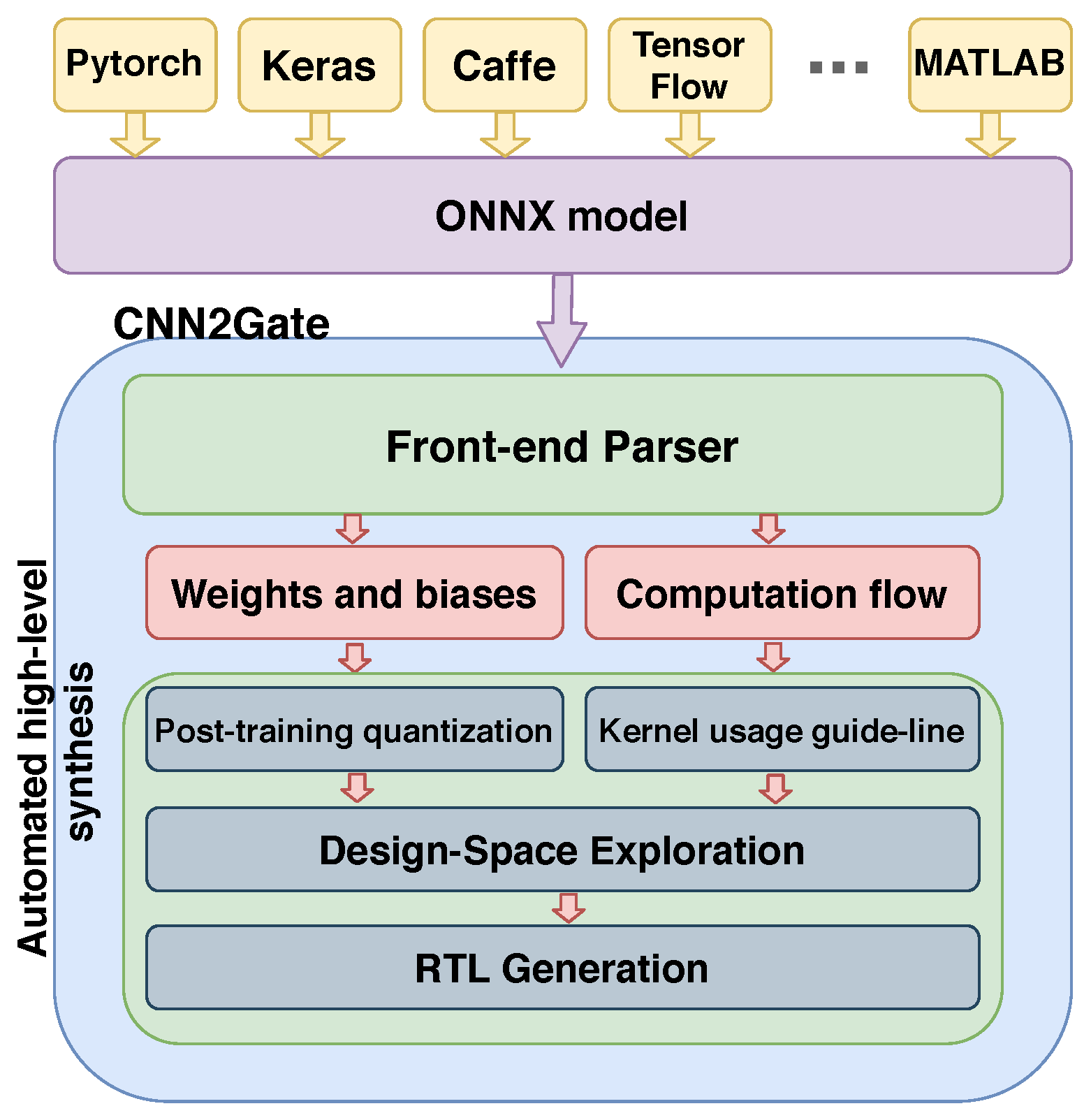

Most previous implementations of CNNs on FPGAs fall short in supporting as many machine learning libraries as possible. CNN2Gate benefits from a generalized model transfer format called ONNX (Open Neural Network eXchange format) [18]. ONNX helps hardware synthesis tools to be decoupled from the framework in which a specific CNN was designed. Using ONNX in CNN2Gate lets us focus on hardware synthesis without being concerned with the details of specific machine learning tools. CNN2Gate integrates an ONNX parser that extracts the computation data-flow, as well as the weights and biases, from the ONNX representation of a CNN model. It then writes these data in a format that is more usable with hardware synthesis workflows.

Automated high-level synthesis tool

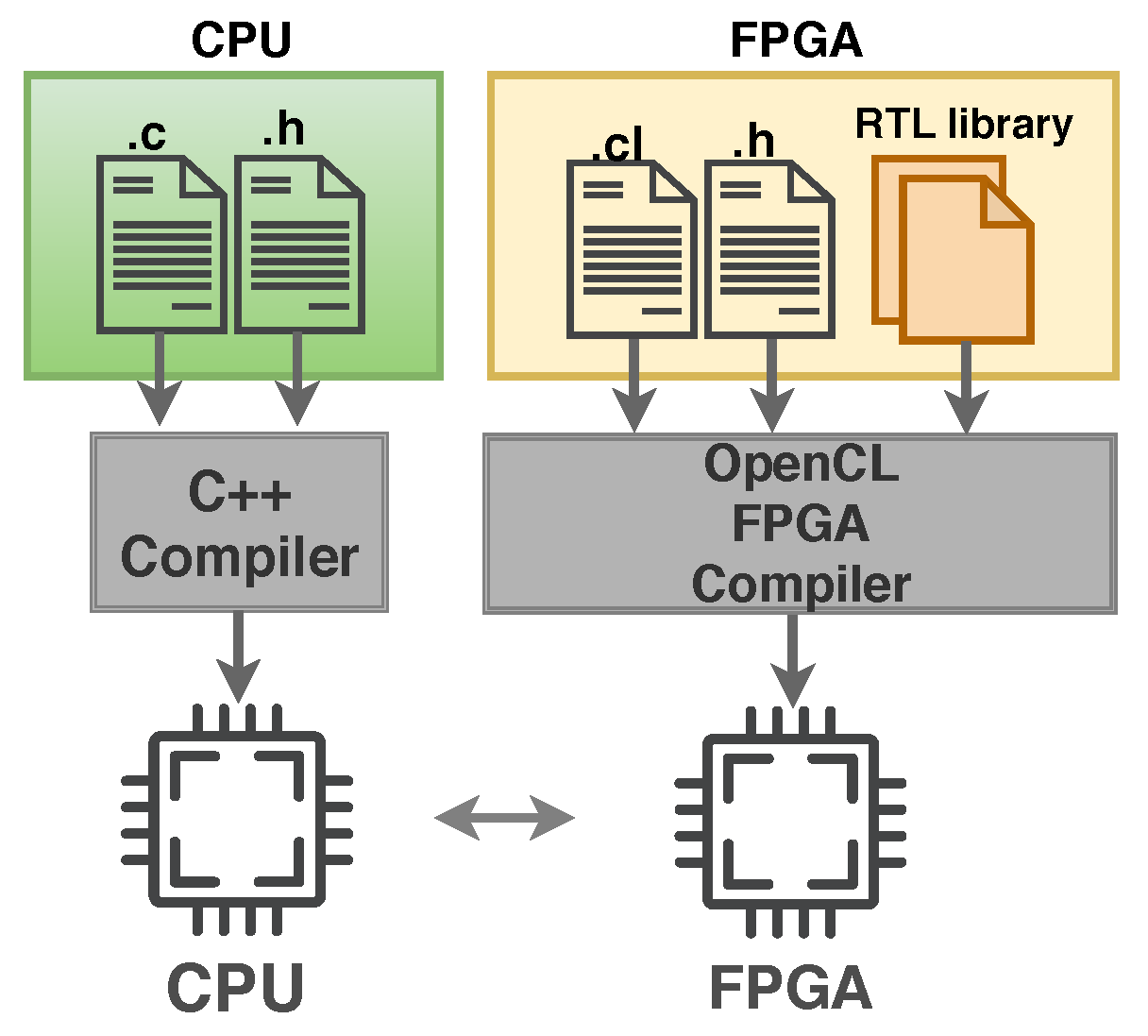

Several hardware implementation aspects of machine learning algorithms are not part of the skill set of many machine learning engineers and computer scientists. High-level synthesis is one of the solutions that aims at improving hardware design productivity. CNN2Gate offers a high-level synthesis workflow that can be used to implement CNN models on FPGAs. CNN2Gate is a Python library that automatically parses a CNN model, has the design synthesized by the vendor’s OpenCL tools, and runs the model. Its embedded procedures aim to achieve the best throughput performance. Furthermore, CNN2Gate eliminates the need for FPGA experts to manually implement the CNN model targeting FPGA hardware during the early stages of the design. Being a Python library, CNN2Gate can be directly exploited by machine learning developers in order to implement a CNN model on FPGAs. CNN2Gate is built around an available open-source tool, notably PipeCNN [8], which exploits the capabilities of existing design tools to use OpenCL kernels from which high-level synthesis can be performed.

Hardware-aware design-space exploration

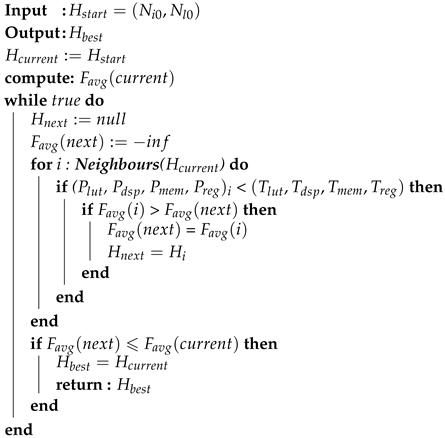

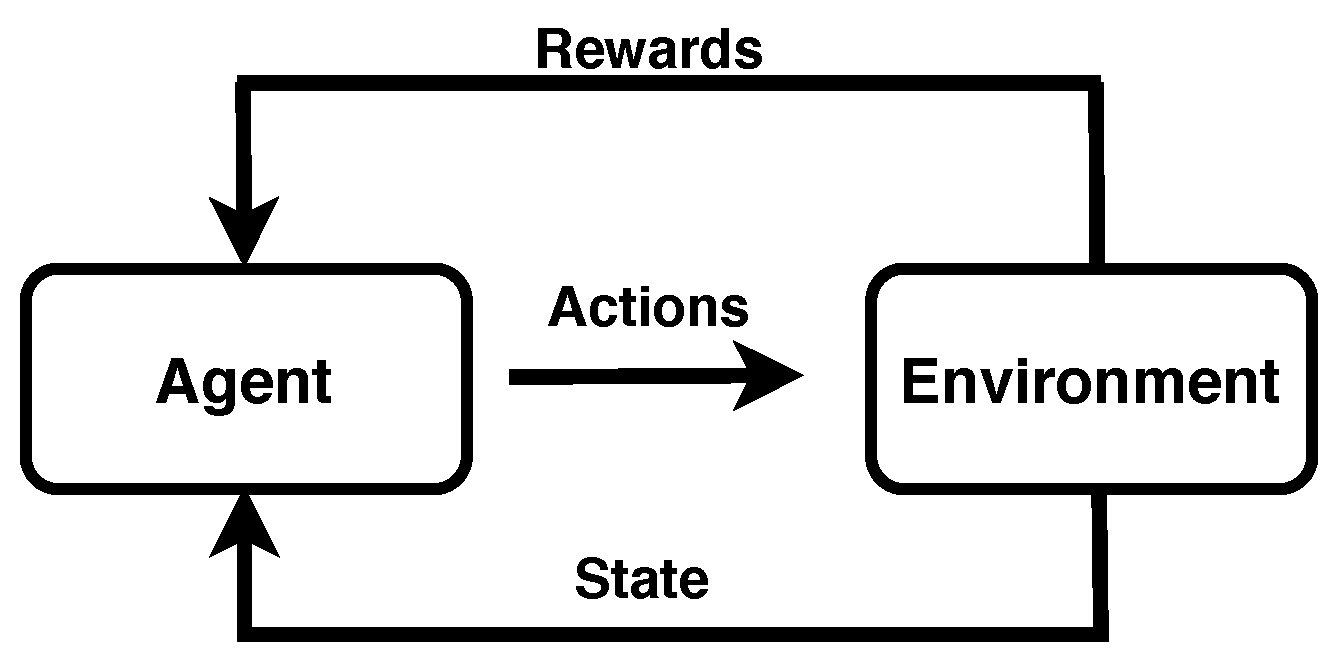

An important aspect of design-space exploration is choosing design parameters that achieve some desired performance prior to the generation of the physical design. CNN2Gate provides a design-space exploration tool that is able to adjust the level of parallelism of the algorithm to fit the design on various FPGA platforms. The design-space exploration methods proposed here use estimations of hardware resource requirements (e.g., DSPs, lookup tables, registers, and on-chip memory) in order to fit the design. These estimates are obtained by invoking the first stage of the synthesis tool provided by the FPGA vendor, which returns the estimated hardware resource utilization. In the next step, the tool tunes the design parameters according to the resource utilization feedback and iterates again to obtain the new hardware resource utilization. We have implemented three algorithms to undertake design-space exploration. The first algorithm is a brute-force search that checks all possible parameter values. The second algorithm is based on a reinforcement learning (RL) agent that explores the design space with a set of defined policies and actions. Finally, the third algorithm uses the hill-climbing method in order to tackle the problem of design-space exploration in large convex design-spaces. The advantages and disadvantages of these three exploration algorithms will be discussed in the corresponding sections.
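The estimate-and-iterate loop described above can be sketched as follows for the brute-force case. This is only an illustration under stated assumptions: the parameter names `vec_size` and `lane_num`, the linear cost model in `estimate_resources`, and the device budget are all hypothetical stand-ins for what the vendor's first synthesis stage would report.

```python
from itertools import product

# Hypothetical resource budget of a target device (not from any datasheet).
BUDGET = {"dsp": 1500, "logic": 400_000, "ram_blocks": 1500}


def estimate_resources(vec_size, lane_num):
    """Stand-in for the synthesizer's first-stage estimate.

    A made-up linear cost model; a real flow would invoke the vendor tool
    and parse its resource report instead.
    """
    macs = vec_size * lane_num  # multiply-accumulate units instantiated
    return {
        "dsp": 20 + 3 * macs,
        "logic": 60_000 + 700 * macs,
        "ram_blocks": 300 + 40 * lane_num + 10 * vec_size,
    }


def fits(usage, budget):
    """True when every estimated resource stays within the budget."""
    return all(usage[name] <= budget[name] for name in budget)


def brute_force_dse(vec_options, lane_options, budget):
    """Try every option pair and keep the most parallel one that fits."""
    best = None
    for vec_size, lane_num in product(vec_options, lane_options):
        usage = estimate_resources(vec_size, lane_num)
        if not fits(usage, budget):
            continue  # over budget: discard this candidate
        parallelism = vec_size * lane_num
        if best is None or parallelism > best[0]:
            best = (parallelism, vec_size, lane_num)
    return best


best = brute_force_dse([4, 8, 16], [2, 4, 8, 16, 32], BUDGET)
print(best)  # (parallelism, vec_size, lane_num) -> (256, 16, 16)
```

With this toy model, the most parallel candidate (16, 32) exceeds the DSP and logic budgets, so the search settles on (16, 16). Because only estimates are queried, each candidate is cheap to evaluate compared with a full synthesis run.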

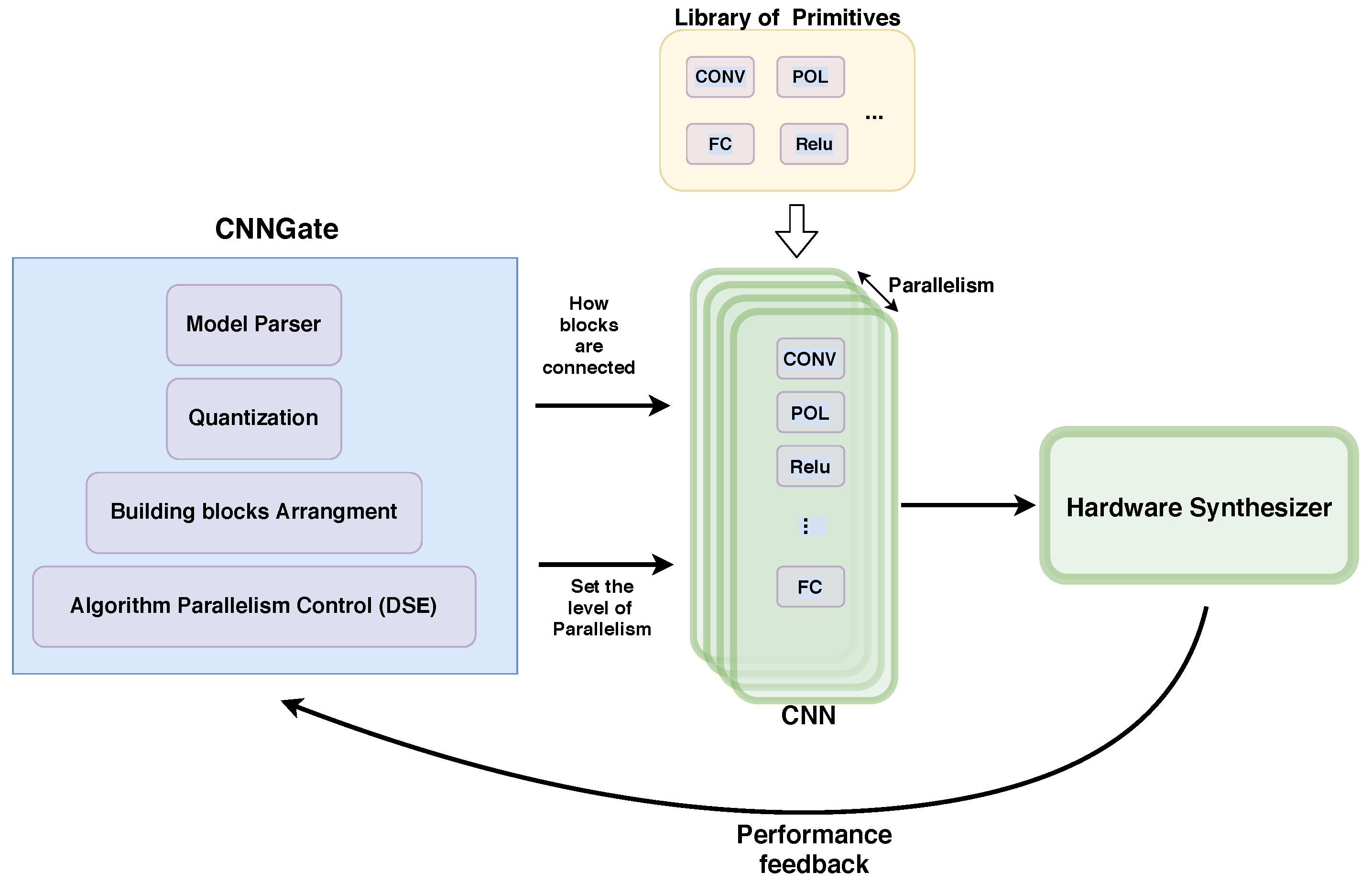

Note that CNN2Gate emphasizes software/hardware co-design methods. The goal of this paper is not to compare the performance of FPGAs and GPUs, but to explore the possibility of designing end-to-end generic frameworks that can leverage high-level model descriptions in order to implement FPGA-based CNN accelerators without human intervention. As shown in Figure 1, CNN2Gate is a Python library for performing inference of CNNs on FPGAs that is capable of:

parsing CNN models;

extracting the computation flow of each layer;

extracting weights and biases of each kernel;

applying post-training quantization values to the extracted weights and biases;

writing this information in the proper format for hardware synthesis tools;

performing design space exploration for various FPGA devices using a hardware-aware smart algorithm; and,

synthesizing and running the project on FPGA using the FPGA vendor’s synthesis tool.
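As an illustration of the computation-flow extraction step listed above, the sketch below propagates feature-map shapes through a short layer list. The list of dictionaries is a hypothetical stand-in for what a model parser might yield; the operator names follow ONNX conventions (`Conv`, `MaxPool`), and the layer dimensions are those commonly quoted for the first layers of AlexNet with a 227 × 227 input, which is an assumption rather than something stated in this paper.

```python
def output_size(size, kernel, stride, pad):
    """Spatial output size of a convolution or pooling window."""
    return (size + 2 * pad - kernel) // stride + 1


def trace_flow(input_shape, layers):
    """Return (op, output_shape) per layer, with shapes as (C, H, W)."""
    channels, height, width = input_shape
    flow = []
    for layer in layers:
        if layer["op"] == "Conv":
            channels = layer["filters"]  # pooling keeps the channel count
        pad = layer.get("pad", 0)
        height = output_size(height, layer["kernel"], layer["stride"], pad)
        width = output_size(width, layer["kernel"], layer["stride"], pad)
        flow.append((layer["op"], (channels, height, width)))
    return flow


# Hypothetical parser output for the first four layers of an AlexNet-like model.
layers = [
    {"op": "Conv", "filters": 96, "kernel": 11, "stride": 4},
    {"op": "MaxPool", "kernel": 3, "stride": 2},
    {"op": "Conv", "filters": 256, "kernel": 5, "stride": 1, "pad": 2},
    {"op": "MaxPool", "kernel": 3, "stride": 2},
]

flow = trace_flow((3, 227, 227), layers)
for op, shape in flow:
    print(op, shape)
```

A table of this kind (operator, output shape) is the sort of information a downstream step would need in order to size buffers and configure kernels for each layer.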

The rest of the paper is organized as follows. Section 2 reviews the related works. Section 3 reviews the most relevant background knowledge on convolutional neural networks. Section 4 elaborates on how CNN2Gate extracts the computation flow, configures the kernels, and executes design-space exploration. Subsequently, Section 5 reports some results and compares them to other existing implementations.

2. Related Works

A great deal of research has been conducted on implementing deep neural networks on FPGAs. Among these works, hls4ml [14], fpgaConvNet [9], and Caffeine [15] are the most similar to the present paper. hls4ml is a companion compiler package for machine learning inference on FPGAs. It translates open-source machine learning models into high-level synthesizable (HLS) descriptions. hls4ml was specifically developed for an application in particle physics, with the purpose of reducing the development time on FPGAs. However, as stated in the status page of the project [19], the package only supports Keras and TensorFlow for CNNs, and support for PyTorch is in development. In addition, to the best of our knowledge, hls4ml does not offer design-space exploration. fpgaConvNet is also an end-to-end framework for the optimized mapping of CNNs on FPGAs. fpgaConvNet uses a symmetric multi-objective algorithm in order to optimize the generated design for either throughput, latency, or multi-objective criteria (e.g., throughput and latency). The front-end parser of fpgaConvNet can analyze models that are expressed in the Torch and Caffe machine-learning libraries. Caffeine is also a software-hardware co-design library that directly synthesizes Caffe models comprising convolutional layers and fully connected layers for FPGAs. CNN2Gate differs from the other cited works in three key features. First, as explained in Section 4.1, CNN2Gate leverages a model transfer layer (ONNX), which automatically brings support for most machine-learning Python libraries without binding the user to a specific machine-learning library. Second, CNN2Gate is based on OpenCL, unlike hls4ml and fpgaConvNet, which are based on C++. Third, CNN2Gate proposes an FPGA fitter algorithm that is based on reinforcement learning.

Using existing high-level synthesis technologies, it is possible to synthesize OpenCL Single Instruction Multiple Thread (SIMT) algorithms to RTL. It is worth mentioning some notable research efforts in that direction. In [20], the authors provided an OpenCL-based deep learning accelerator targeting Intel’s FPGA devices. This architecture was capable of maximizing data reuse and minimizing memory accesses. The authors of [21] presented a systematic design-space exploration methodology in order to maximize the throughput of an OpenCL-based FPGA accelerator for a given CNN model. They used synthesis results to empirically model the FPGA resource utilization. Similarly, in [22], the authors analyzed the throughput and memory bandwidth quantitatively in order to tackle the problem of design-space exploration of a CNN design targeting FPGAs. They also applied various optimization methods, such as loop tiling, to reach the best performance. A CNN RTL compiler is proposed in [23]. This compiler automatically generates sets of scalable computing primitives to form an end-to-end CNN accelerator.

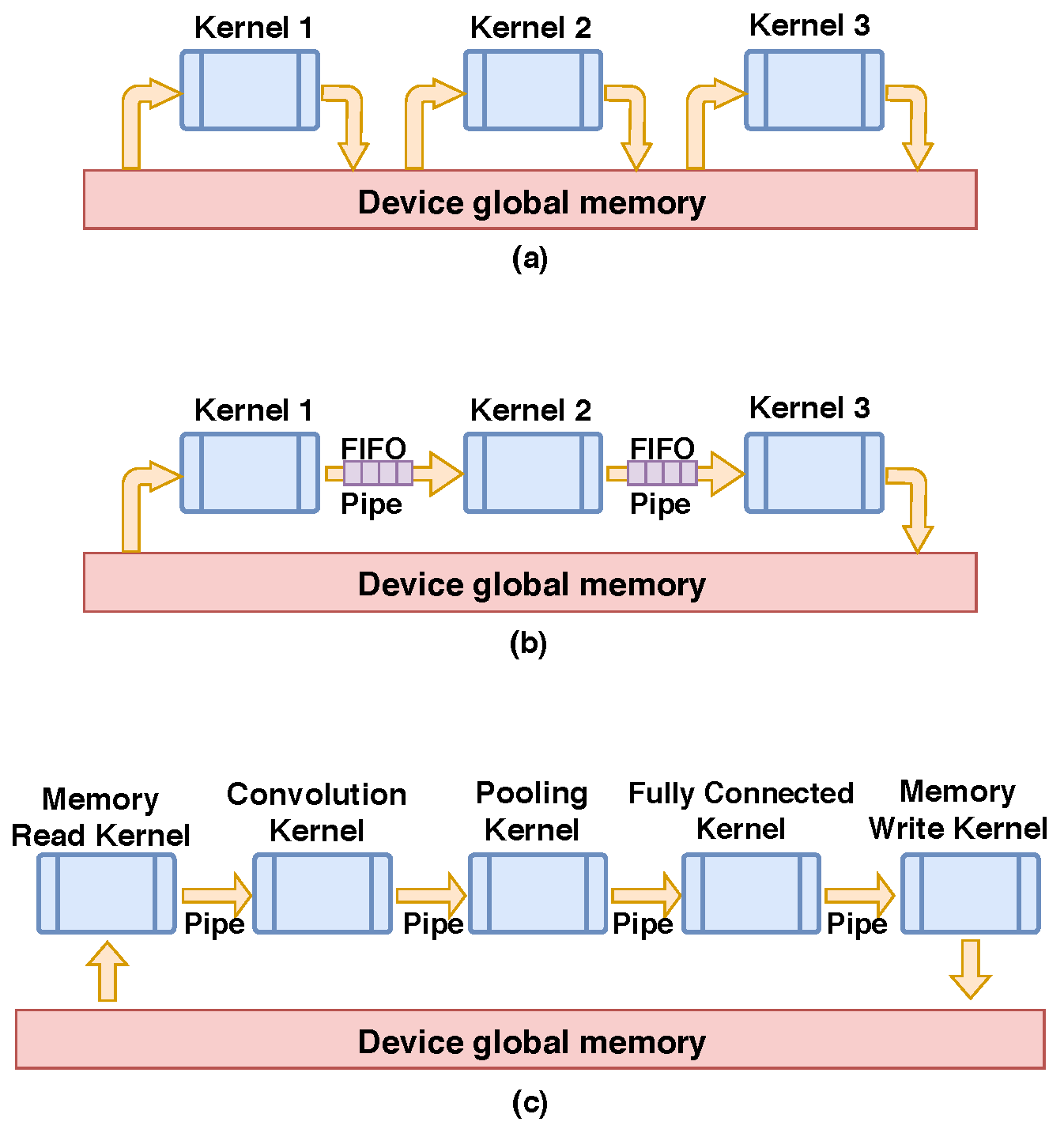

Another remarkable OpenCL-based implementation of CNNs is PipeCNN [8]. PipeCNN relies mainly on the capability of currently available design tools to use OpenCL kernels in high-level synthesis. PipeCNN consists of a set of configurable OpenCL kernels that accelerate CNNs and optimize memory bandwidth utilization. Data reuse and task mapping techniques have also been used in that design. Recently, in [24], the authors added a sparse convolution scheme to PipeCNN in order to further improve its throughput. Our work (CNN2Gate) follows the spirit of PipeCNN. CNN2Gate is built on top of a modified version of PipeCNN. CNN2Gate is capable of exploiting a library of primitive kernels needed to perform inference of a CNN. In addition, CNN2Gate includes means to perform automated design-space exploration and can automatically translate CNN models that are provided by a wide variety of machine learning libraries. It should be noted that our research goal is to introduce a methodology for designing an end-to-end framework that implements CNN models on FPGA targets. This means, without loss of generality, that CNN2Gate can be modified to support other hardware implementations or technologies, ranging from RTL to high-level synthesis, under two conditions. First, CNN layers can be expressed as pre-defined templates. Second, the amount of parallelism in the hardware templates can be controlled.
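The two conditions above (pre-defined templates and controllable parallelism) can be pictured with a small sketch that renders a hardware-options header from a template. The macro names `VEC_SIZE` and `LANE_NUM` and the header layout are hypothetical choices made for illustration; they are not claimed to match the files used by any specific framework.

```python
from string import Template

# Hypothetical header template: the kernel source would include this file
# and size its data paths from the two macros.
HEADER_TEMPLATE = Template(
    "// Auto-generated hardware options (illustrative)\n"
    "#define VEC_SIZE $vec_size\n"
    "#define LANE_NUM $lane_num\n"
)


def render_hw_options(vec_size, lane_num):
    """Fill the template with the chosen degree of parallelism."""
    if vec_size < 1 or lane_num < 1:
        raise ValueError("parallelism parameters must be positive")
    return HEADER_TEMPLATE.substitute(vec_size=vec_size, lane_num=lane_num)


header = render_hw_options(vec_size=8, lane_num=16)
print(header)
```

Because the kernel templates stay fixed and only the generated options change, an exploration algorithm can move through the design space by regenerating this small file and asking the synthesis tool for a new resource estimate.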

There are several reasons why we chose PipeCNN as the foundation of CNN2Gate. First, with the OpenCL model, it is possible to fine-tune the amount of parallelism in the algorithm, as explained in Section 4.3. Second, it supports deeply pipelined designs, as explained in Section 3.2.2. Third, the library of primitive kernels can be easily adapted and re-configured based on the information extracted from a CNN model (Section 4.1).

Lately, reinforcement learning has been used to perform automated quantization for neural networks. HAQ [25] and ReLeQ [26] are examples of research efforts exploiting this technique. ReLeQ proposes a systematic approach for solving the problem of deep quantization automatically. This provides a general solution for the quantization of a large variety of neural networks. Likewise, HAQ suggests a hardware-aware automated quantization method for deep neural networks using an actor-critic reinforcement learning method [27]. Inspired by these two papers, we used a reinforcement learning algorithm to control the level of parallelism in the CNN2Gate OpenCL kernels.

Finally, it is worth mentioning the challenges of implementing digital vision systems in hardware. In [28], the authors proposed a hardware implementation of a haze removal method exploiting adaptive filtering. Additionally, in [29], the authors provided an FPGA implementation of a novel method to recover clear images from degraded ones.

5. Results

Table 1 shows the execution times of AlexNet [

38] and VGG-16 [

39] for three platforms while using CNN2Gate. The user can verify the CNN model on CPU using the CNN2Gate emulation mode in order to confirm the resulting numerical accuracy, as mentioned before. Even if the execution time is rather large, this is a very useful feature to let the developer verify the validity of the CNN design on the target hardware before going forward for synthesis, which is a very time-consuming process. Note that the emulation’s execution time cannot be a reference for the throughput performance of a core-i7 processor. The emulation mode only serves the purpose of verifying the OpenCL kernels operations. In [

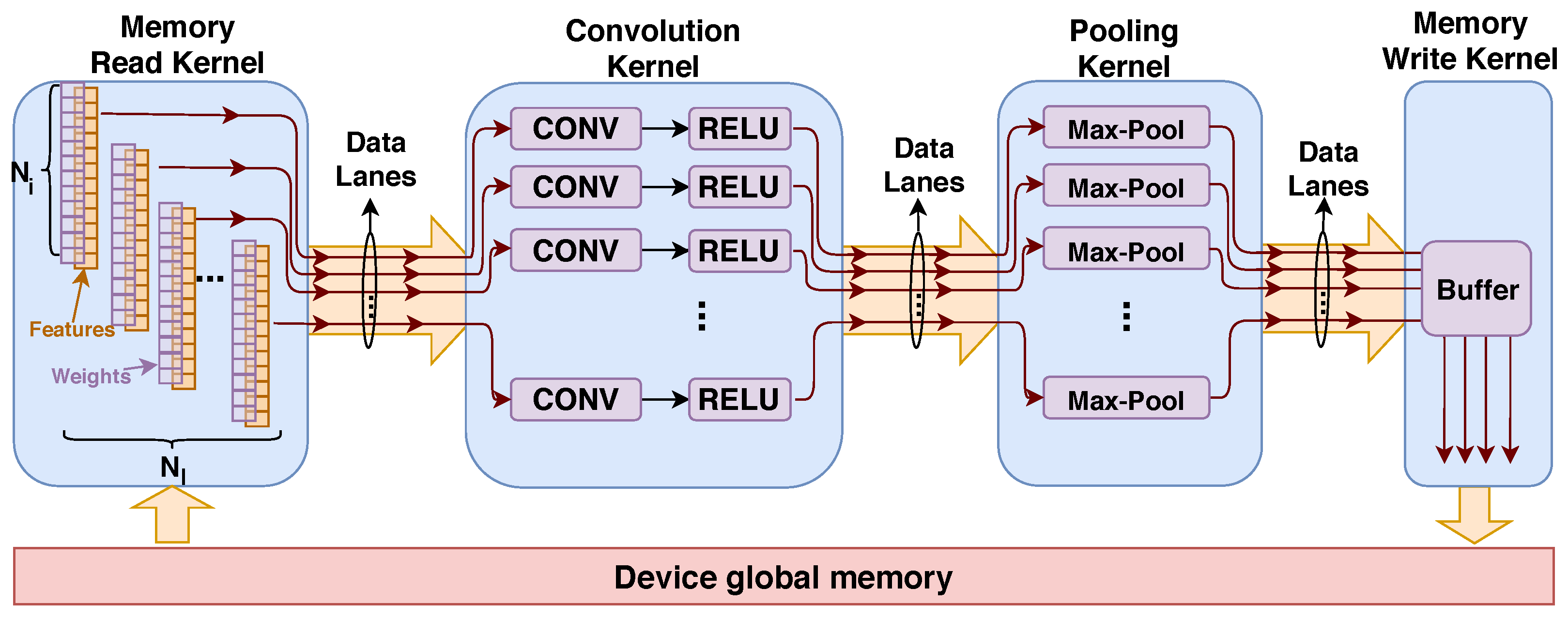

40], the authors described the execution of AlexNet on desktop CPUs and reported an execution time as low as 2.15 s. The reported results also show the scalability of this design Indeed, the results are reported for both the low cost Cyclone V SoC and the much more expensive Arria 10 FPGA that has much more resources. The exploited level of parallelism was automatically extended by the design-space exploration algorithm in order to obtain better results for execution times that are commensurate with the capacity of the hardware platform.

In order to maintain the scalability of the algorithm, it is not always possible to use arbitrary choices for the hardware parameters. These parameters must be chosen in such a way that the kernels can be used as the building blocks of all the layers. This leaves limited options for increasing the level of parallelism that can be exploited with a given network algorithm. Relaxing this limitation (i.e., manually designing kernels based on each layer’s computation flow) could lead to better resource consumption and higher throughput at the expense of losing the scalability that is needed for automation of this process. The maximum operating frequency varies for different FPGAs. Indeed, it depends on the underlying technology and FPGA family. The Intel OpenCL compiler (synthesizer) automatically adjusts the PLLs on the board to use the maximum frequency for the kernels. It is of interest that the operating frequency of the kernels supporting AlexNet and VGG-16 was the same, as they had essentially the same critical path, even though VGG-16 is a lot more complex. The larger complexity of VGG was handled by synthesizing a core (i.e., Figure 6) that executes a greater number of cycles if the number of layers in the network increases.

Table 2 gives more details regarding the design-space exploration algorithms, which are coupled with the synthesis tool. All three algorithms use the resource utilization estimation of the synthesizer to fit the design on the FPGA. This is important, as the time consumed for design-space exploration is normally under 5 min, while the synthesis time for larger FPGAs, such as the Arria 10, can be close to 10 h.

Experimenting with various DSE algorithms confirms that smart algorithms (such as reinforcement learning and hill-climbing) can provide advantages for design-space exploration when compared to brute-force enumeration. The first goal of suggesting the RL-DSE and HC-DSE methods is to demonstrate that it is possible to further decrease the exploration time by using better search methods. Analyzing the execution times shows that the reinforcement learning algorithm is almost 25 percent faster, and the hill-climbing algorithm 50 percent faster, than the brute-force algorithm when optimizing the design for the Arria 10 FPGA. Note that these exploration times are significantly less than the synthesis time, since we use the estimation of resource usage provided by the synthesizer. On the other hand, performing full synthesis as part of the exploration process is not advisable, because the exploration would take weeks. Because HC-DSE follows the gradient of resource usage, it is always faster than BF-DSE. This method is the best when the design-space is convex. However, if the search space is not convex (e.g., having several local maxima), HC-DSE might choose a wrong solution. In contrast, it is less probable for RL-DSE to be trapped in a local maximum due to the random nature of the reinforcement learning algorithm. Moreover, the RL-DSE algorithm would be more valuable if it could be exploited in conjunction with reinforcement learning quantization algorithms, such as ReLeQ [26].
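The trade-off between brute force and hill-climbing discussed above can be reproduced on a toy one-dimensional design space. In this sketch, each list index is a hypothetical parallelism setting and each value is a made-up figure of merit; the functions illustrate the general techniques and are not the BF-DSE or HC-DSE implementations themselves.

```python
def brute_force(scores):
    """Evaluate every setting; always finds the global maximum."""
    best = max(range(len(scores)), key=lambda i: scores[i])
    return best, len(scores)  # (best index, number of evaluations)


def hill_climb(scores, start=0):
    """Move to the better neighbor until no neighbor improves.

    Assumes at least two settings. Returns the final index and the number
    of distinct settings that had to be evaluated.
    """
    seen = {start}
    current = start
    while True:
        neighbors = [i for i in (current - 1, current + 1)
                     if 0 <= i < len(scores)]
        seen.update(neighbors)
        best_neighbor = max(neighbors, key=lambda i: scores[i])
        if scores[best_neighbor] <= scores[current]:
            return current, len(seen)  # local maximum reached
        current = best_neighbor


# Made-up figures of merit for eight candidate settings.
single_peak = [1, 3, 6, 9, 7, 4, 2, 1]   # one maximum (index 3)
two_peaks = [1, 4, 2, 3, 5, 9, 6, 2]     # local maximum at 1, global at 5

print(brute_force(single_peak), hill_climb(single_peak))  # (3, 8) (3, 5)
print(brute_force(two_peaks), hill_climb(two_peaks))      # (5, 8) (1, 3)
```

On the single-peak space, hill-climbing reaches the same answer with fewer evaluations. On the two-peak space, it stops at the first local maximum, which is the failure mode that motivates a search with some randomness.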

The goal of considering various DSE algorithms in our work is to provide a versatile tool that serves the user under different conditions and is not limited to a specific case. We included the brute-force algorithm in CNN2Gate in order to guarantee a successful exploration for small design-spaces. However, presuming that the design space is small is not always a correct assumption. For large convex design-spaces, we added the hill-climbing algorithm, which finds the best solution significantly faster than brute force. For non-convex and large design-spaces, reinforcement learning was found to work well [41]. In Table 2, the design-space is small. This is why the difference in execution time among the various exploration algorithms is small. However, it was shown that HC-DSE can be twice as fast as brute force when optimizing the design for the Arria 10 FPGA.

There are other model-based design-space exploration algorithms that are dedicated to a specific implementation of a library of primitives. For instance, in [24], the authors proposed a performance model for their design that predicts the performance of the hardware implementation based on the resource requirements. The advantage of our proposed DSE algorithms is that they are model agnostic. This means that the CNN2Gate framework tunes the parallelism parameters of the design and directly queries the performance feedback from the synthesizer.

We tried CNN2Gate on three platforms. The first one is a very small Cyclone V device with 15K adaptive logic modules (ALMs) and 83 DSPs. The fitter could not fit either AlexNet or VGG on this device. Clearly, the minimum space required to fit this design on an FPGA is fairly large due to the complexity of the control logic. CNN2Gate did not experience any difficulty fitting the design on bigger FPGAs, as demonstrated in Table 2. Resource utilization and hardware options are also provided, which correspond to the execution times that are shown in Table 1.

CNN2Gate resource consumption is very similar for AlexNet and VGG-16. With identical hardware options, CNN2Gate’s synthesized core is nearly identical for all CNN architectures, as shown in Figure 6. The only difference is the size of the internal buffers that hold the data in the computation flow. More quantitatively, in our implementation, VGG-16 uses 8% more of the Arria 10 FPGA block RAMs in comparison to what is shown in Table 2 for AlexNet.

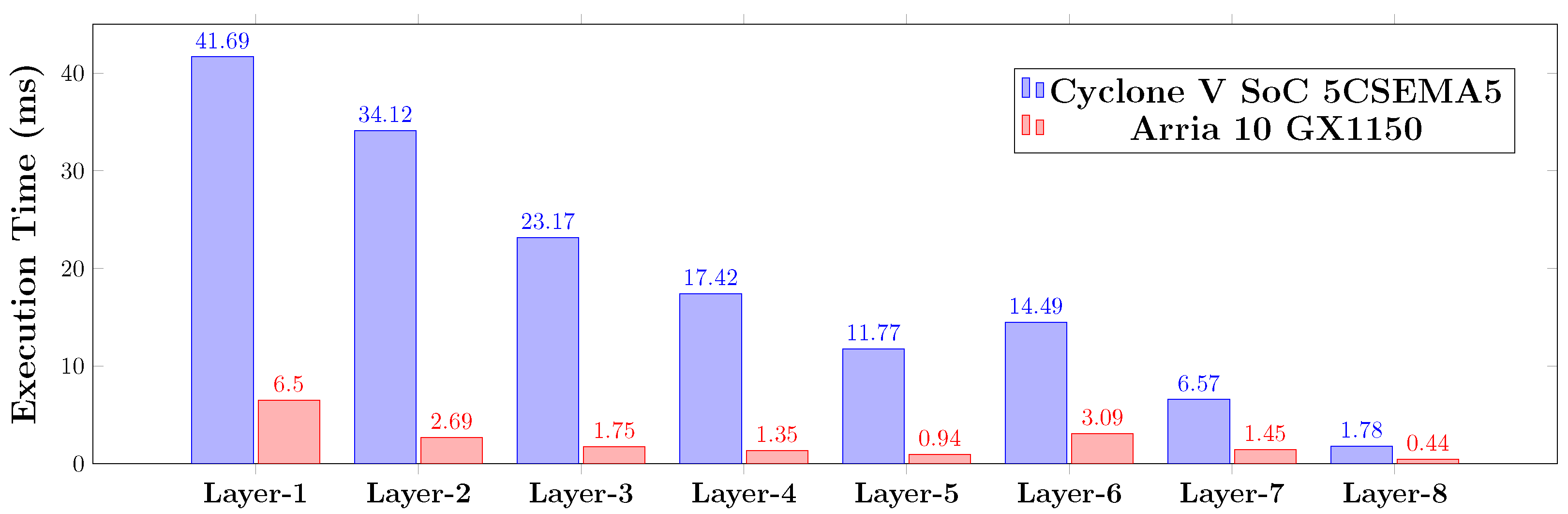

Revisiting Figure 6, pipelined kernels are capable of reading data from global memory and processing the convolution and pooling kernels at the same time. In addition, for fully connected layers in CNNs, the convolution kernel acts as the main data processing unit and the pooling kernel is configured as a pass-through. With this hardware configuration, we can merge convolution and pooling layers into one layer. In the case of AlexNet, this leads to five fused convolution/pooling layers and three fully connected layers. Figure 8 reports the detailed execution time of these layers, including the memory read and write kernels for each layer. As the algorithm proceeds through the layers, the dimensions of the data (features) are reduced and the execution time decreases. Note that, in this figure, Layer 1 to Layer 5 are fused layers, each comprising a convolution, a pooling, and a ReLU layer. Additionally, note that, although convolutional layers have fewer parameters than fully connected layers, their memory consumption is far greater. Therefore, the performance of the first convolutional layers can be affected in a system accessing external memory (RAM).
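One plausible way to read the remark on parameters versus memory consumption is to compare weight counts with feature-map sizes. The sketch below does this for one convolutional and one fully connected layer, assuming the dimensions commonly quoted for AlexNet (first convolution: 96 filters of 11 × 11 × 3 producing a 55 × 55 output; first fully connected layer: 9216 inputs and 4096 outputs). These dimensions are assumptions, not values taken from this paper, and biases are ignored.

```python
def conv_layer_stats(in_channels, out_channels, kernel, out_h, out_w):
    """Return (weight count, output activation count) for a conv layer."""
    weights = out_channels * in_channels * kernel * kernel
    activations = out_channels * out_h * out_w
    return weights, activations


def fc_layer_stats(in_features, out_features):
    """Return (weight count, output activation count) for an FC layer."""
    return in_features * out_features, out_features


# Assumed AlexNet-style dimensions (see the lead-in above).
conv1_weights, conv1_acts = conv_layer_stats(3, 96, 11, 55, 55)
fc6_weights, fc6_acts = fc_layer_stats(9216, 4096)

print("conv1:", conv1_weights, "weights,", conv1_acts, "output values")
print("fc6:  ", fc6_weights, "weights,", fc6_acts, "output values")
```

Under these assumptions, the convolutional layer has roughly a thousand times fewer weights than the fully connected layer, yet produces about seventy times more output values per image, which is consistent with the early layers being sensitive to external memory traffic.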

Table 3 shows a detailed comparison of CNN2Gate to other existing works for AlexNet. CNN2Gate is faster than [21,22] in terms of latency and throughput. However, CNN2Gate uses more FPGA resources than [21]. To make a fair comparison, we can measure relative performance density per DSP or ALM. In this case, the CNN2Gate performance density (GOp/s/DSP) is higher (0.266) when compared to 0.234 for [21]. There are other designs, such as [9,23], which are faster than our design in terms of pure performance. Nevertheless, the CNN2Gate method that is outlined above significantly improves on existing methods, as it is more scalable and automatable than the methods presented in [9,23], which are limited in these regards, as they require human intervention in order to reach high levels of performance.
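The performance density used in this comparison is simply throughput divided by the number of DSP blocks consumed. A minimal sketch follows; the throughput and DSP figures are hypothetical placeholders chosen for round numbers and are not the values reported in Table 3.

```python
def performance_density(throughput_gops, dsp_count):
    """Throughput per DSP block, in GOp/s/DSP."""
    if dsp_count <= 0:
        raise ValueError("dsp_count must be positive")
    return throughput_gops / dsp_count


# Hypothetical designs: B is faster in absolute terms but uses more DSPs.
design_a = performance_density(throughput_gops=80.0, dsp_count=320)
design_b = performance_density(throughput_gops=120.0, dsp_count=600)

print(design_a, design_b)  # 0.25 0.2
```

The example shows why the metric is useful: a design with lower raw throughput can still make better use of each DSP block.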

Second, the design entries of [9,23] are C and RTL, respectively, while our designs were automatically synthesized from ONNX using OpenCL. Thus, not surprisingly, our work does not achieve the maximum reported performance. This is partly due to the use of ONNX as a starting point and to keeping the algorithm scalable for both large and small FPGAs. This imposes some limitations, such as the maximum number of utilized CONV units per layer. There are also other latency reports in the literature, such as [8]. However, those latencies were measured with a favorable batch size (e.g., 16). Increasing the batch size can make more parallelism available to the algorithm, which can lead to higher throughput. Thus, for clarity, we limited the comparisons in Table 3 and Table 4 to batch size = 1.

Table 4 shows a detailed comparison of CNN2Gate to other existing works for VGG-16. CNN2Gate performs better for larger neural networks, such as VGG. CNN2Gate achieves 18% lower latency than [9], despite the fact that CNN2Gate uses fewer DSPs, whereas, for AlexNet, [9] was more than 50% faster than CNN2Gate. Finally, for VGG-16, we did find some hand-tailored custom RTL designs, such as [11], which are faster than CNN2Gate.