Comparing VR- and AR-Based Try-On Systems Using Personalized Avatars

Abstract

1. Introduction

2. Background and Related Work

2.1. AR- and VR-Based Try-On

2.1.1. AR-Based Try-On

- 2D Overlay AR-Based Try-On: Earlier work on 2D overlay virtual try-on (VTO) was mostly conducted in computer graphics [19,20,21]. A 2D overlay VTO superimposes a projected 2D image of a product onto an image of the user and the surrounding real environment. Hilsmann et al. re-textured garment overlays for real-time visualization of garments in a virtual mirror environment [22]. Yamada et al. proposed a method for reshaping the garment image based on human body shape to make the fit more realistic [23]. Zheng et al. aligned the target clothing item on a given person’s body and presented a try-on look of the person in different static poses [24]. However, 2D overlay virtual try-on does not adapt well to dynamic poses when users perform certain activities. In addition, like many other re-texturing approaches, these methods operate only in 2D and use no 3D information, which prevents users from viewing their virtual self from arbitrary viewpoints.

- 3D AR-Based Try-On: Compared to 2D images, 3D garment models simulate garments more precisely. Previous research focused on matching the 3D virtual garment to the user’s body shape or virtual avatar. Pons-Moll et al. introduced a system that uses a multi-part 3D model of clothed bodies to extract clothing and re-target it to new body shapes [25]. Zhou et al. generated 3D models of garments from a single image [26]. Yang et al. generated garment models by fitting 3D templates on users’ bodies [27]. Duan et al. proposed a multi-part 3D garment model reconstruction method to generate virtual garments for virtual fitting on virtual avatars [25].

2.1.2. VR-Based Try-On

2.2. Personalized Virtual Avatar on Concerns with Virtual Try-On

- Personalized Virtual Avatar: Compared to standardized avatars, customizing a virtual avatar with the user’s own features may give users a sense of a virtual self. The creation of realistic self-avatars is important for VR- or AR-based VTO applications that aim to improve the acceptance of personalized avatars and thus provide users with a more realistic and accurate try-on experience. Recent studies have investigated the personalized VTO experience with a customized virtual avatar created from the user’s own face and body figures. Personalized VTO provides a more realistic user experience when users try clothes on their virtual self [10]. Yuan et al. customized a partially visible avatar based on the user’s body size and skin color, and used it for proper clothes fitting. They found that using a personalized avatar can increase customers’ confidence in their purchase choice [34]. Magnenat-Thalmann et al. proposed a VTO system that allows users to virtually fit physically simulated garments on their generic body model [35]. Yang and Xiong found that a VTO experience with a personalized avatar significantly increases customer satisfaction and decreases the rate of product return [36]. Moreover, females, who are the main target customers of online shopping, usually have a high body esteem regarding their virtual body. Thaler et al. investigated gender differences in the use of visual cues (shape, texture) of a self-avatar for estimating body weight and evaluating avatar appearance. In terms of the ideal body weight, females but not males desired a thinner body [37].

- Virtual Try-On Levels of Personalization: Depending on the avatar’s level of personalization, the avatar representing the user may or may not provide a real sense of self [38]. According to the avatar’s similarity to the user, virtual try-on systems can be divided into four levels, as proposed by Merle et al. [10]:

- (1) Mix-and-match: Same as traditional online shopping, where users select products using only online images.

- (2)

- (3) Personalized VTO: Virtual avatar models are customized with personal features (face color, height, weight, bust size, and body shape).

- (4) Highly personalized VTO: Virtual avatar models are customized with personal features, including the face model.

- (1) Personalization: Existing VTO systems personalize only the appearance of the user’s real body (body shape and face image). Our proposed AR- and VR-based try-on system raises the level of avatar personalization. We provide a new way for users to view the virtual garment through augmented personalized motion.

- (2) Interactivity: Most existing VTO systems only overlay 2D images of the garment onto the user’s real body, without using 3D information or allowing users to check the garment from different viewpoints. Our proposed AR- and VR-based try-on system enables users to view a life-size personalized avatar that wears the garment models and poses or walks, augmented in the real world. Users can view the virtual garment interactively and immersively in 360 degrees.

- (3) Realism of garment model: Existing VTO systems usually use pre-defined garment models. Our proposed AR- and VR-based try-on system generates 3D garment models based on information from existing websites.

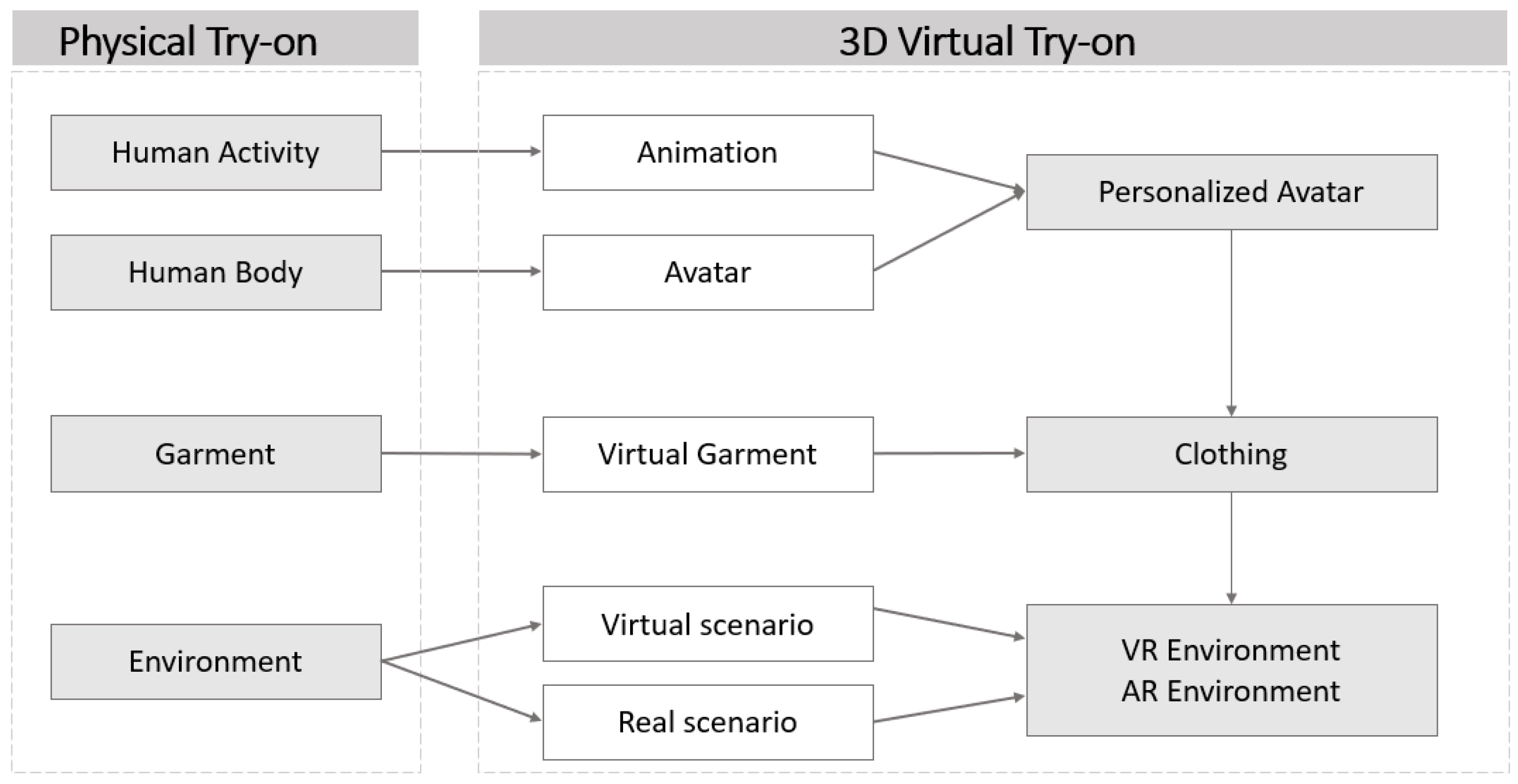

3. System Design

- Human Activity: Daily life activities and motions. When trying on clothes physically, consumers often like to move their body in ways that mimic their daily life poses or actions in order to observe the dynamic details of the clothes. We prepared several natural daily life motions to simulate the consumers’ activities in the real world, such as walking, sitting, and waving.

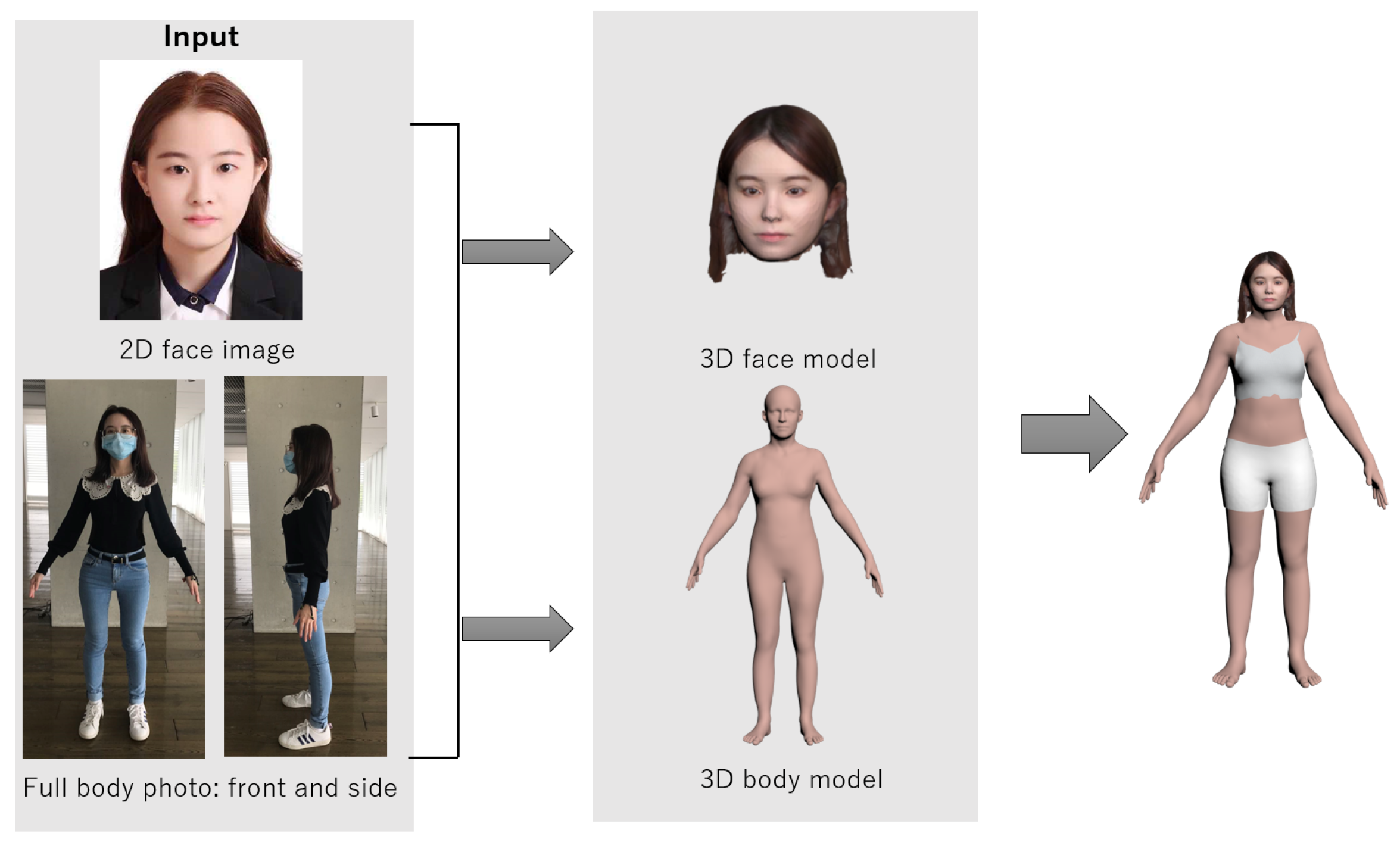

- Human Body: The basic human body property information such as sex, face, height, and body shape. During physical fitting, consumers have the opportunity to actually try the garments on and choose clothes that suit their own body. With 3D virtual try-on, we created a personalized virtual avatar based on the user’s body figures and face photos, as well as the user’s actual personalized posture or movement.

- Garment: Clothing style, clothing fit, and garment type. For 3D virtual try-on, we generated a 3D garment model library for users based on the garment image information from existing online shopping websites.

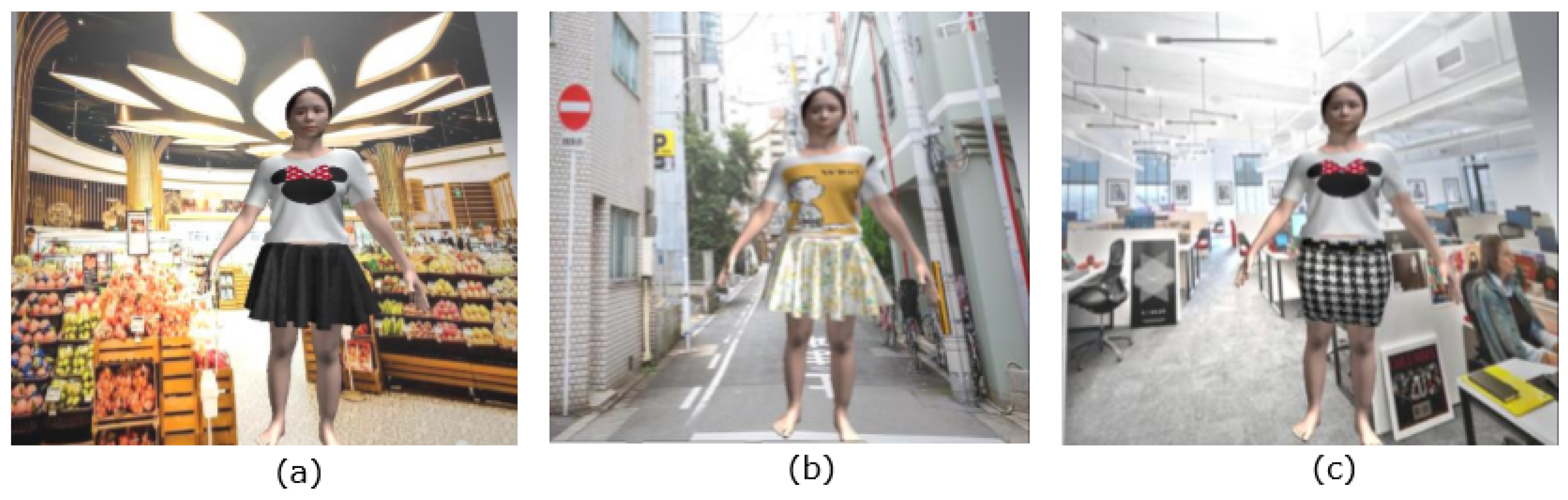

- Environment: Environmental conditions of the try-on experience. During physical fitting, consumers can actually try on clothes in the real world or wear the clothes under different conditions, such as walking in the street or working in the office. Using VR technology, the 3D virtual try-on incorporates several virtual scenarios that simulate different physical scenes, which can help users make decisions according to different wearing conditions.
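As a rough illustration of how the four design factors above (human activity, human body, garment, and environment) could be grouped into one try-on configuration, here is a minimal Python sketch. All class names, field names, and example values are hypothetical and are not taken from the system described in this paper.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class Motion(Enum):
    """Daily life motions named in the text (illustrative subset)."""
    WALKING = "walking"
    SITTING = "sitting"
    WAVING = "waving"


@dataclass
class BodyProfile:
    """Human body factor: basic properties used to personalize the avatar."""
    sex: str
    height_cm: float
    body_shape: str
    face_photo_path: str  # hypothetical path to a frontal face photo


@dataclass
class TryOnSession:
    """One try-on configuration combining the four factors."""
    body: BodyProfile                                     # human body
    garment_id: str                                       # garment (hypothetical library key)
    environment: str                                      # e.g. "street" or "office"
    motions: List[Motion] = field(default_factory=list)   # human activity


# Example values are made up for illustration only.
session = TryOnSession(
    body=BodyProfile(sex="female", height_cm=160.0, body_shape="average",
                     face_photo_path="face.jpg"),
    garment_id="tshirt-001",
    environment="street",
    motions=[Motion.WALKING, Motion.WAVING],
)
print(session.environment, [m.value for m in session.motions])
```

Keeping the factors in separate fields makes it possible to vary one of them (for example, the environment) while holding the others fixed.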

3.1. Personalized Avatar

3.1.1. Human Model Generation

- Face model generation: 3D Avatars SDK [50] combines complex computer vision, deep learning, and computer graphics techniques to turn 2D photos into a realistic virtual avatar. Using 3D Avatars SDK, we created a 3D face model from a single frontal facial image, as well as a fixed-topology head that included the user’s hair and neck.

- Body model generation

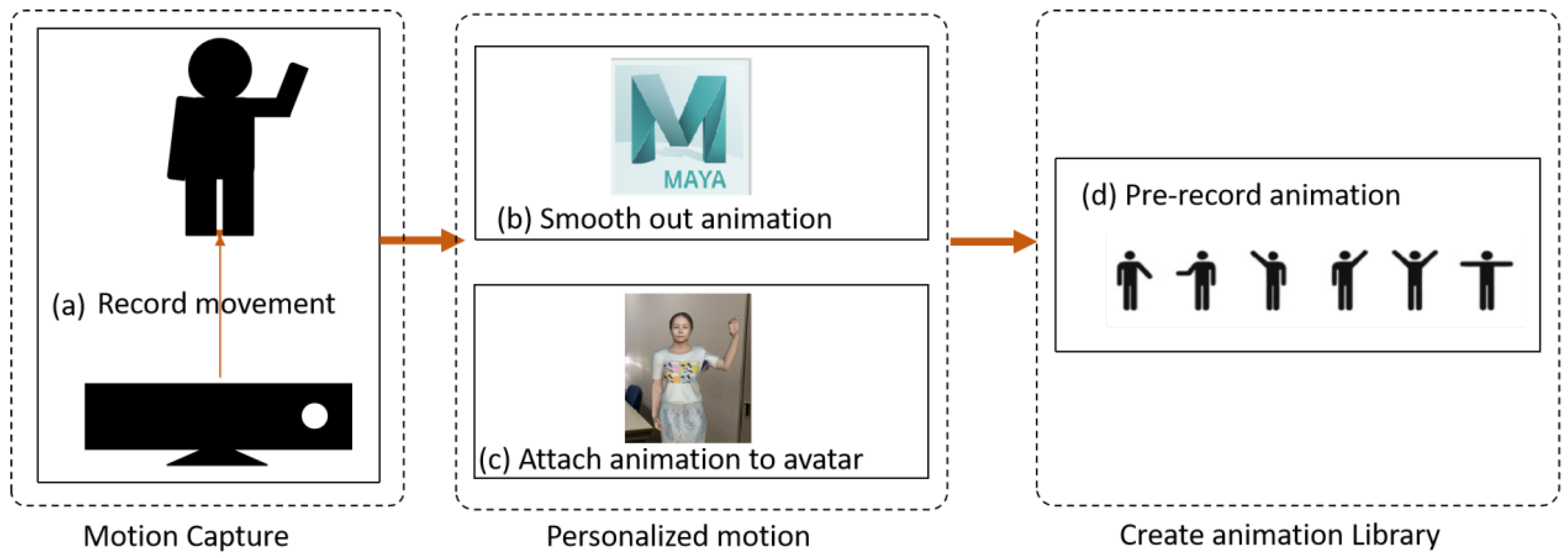

3.1.2. Personalize Motion of the Avatar

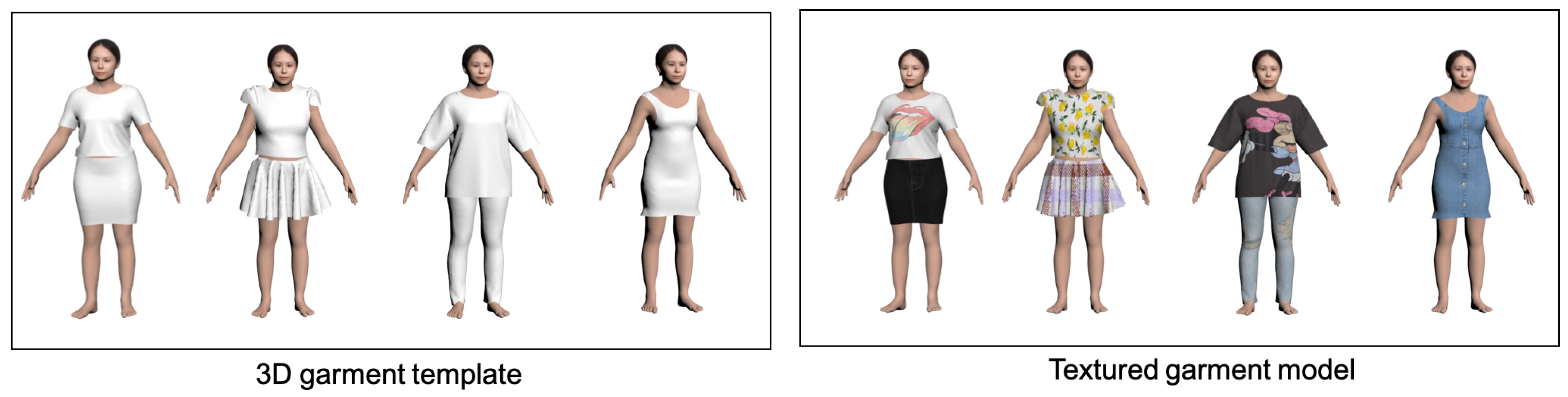

3.2. Garment Model Generation

3.2.1. 3D Garment Model Templates

3.2.2. Texture Mapping

3.3. 3D Virtual Try-On

3.3.1. VR-Based Try-On

3.3.2. AR-Based Try-On

4. Implementation

4.1. Hardware Overview

4.2. Software Overview

- Development Tools: We developed our software using the Unity game engine (Unity Technologies, San Francisco, CA, USA) [59] version 2019.1.14f1 with Vuforia SDK and Cinema Mocap for Unity. Vuforia SDK was used to recognize the ground plane and create an AR experience. Cinema Mocap [60] was used to convert body tracking data to animation clips.

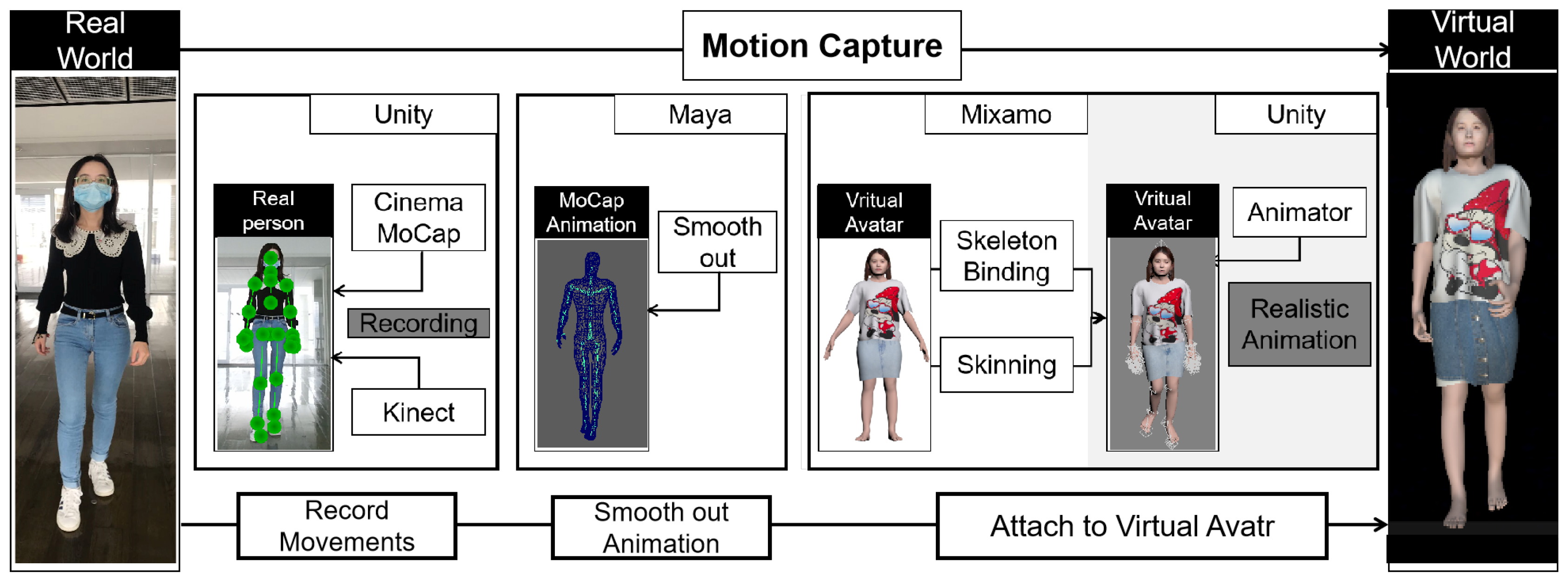

- Personalized Motion: To convert the captured mo-cap data into animation, we used a Unity plugin, Cinema Mocap, which is a marker-less motion capture solution for Unity that creates customized animations for users. As the movement captured by the Kinect V2 depth sensor is quite jittery, we edited and smoothed the animation frame by frame in Maya [54], a 3D computer animation, modeling, simulation, and rendering software. The animations were then imported into Unity and attached to the virtual avatars.

  Our framework for motion capture using Kinect is shown in Figure 7. The movement of the users in the real world is converted into the animation of the avatar in the virtual world in three steps:

- (1) Record the movements of users: We recorded users’ movements using a Kinect V2 depth sensor and Cinema Mocap in Unity. The Kinect V2 sensor was used as a skeleton camera to track users’ body motion; Cinema Mocap in Unity was used to convert the motion capture data to avatar animation.

- (2) Smooth out animation: The animation captured by the Kinect V2 sensor contains trembling movements, which we smoothed out in Maya.

- (3) Attach animation to virtual avatar: Before attaching the animation to the avatar, we needed to rig the skeleton and the skin to the virtual avatar using Mixamo [61]. Then, we used an animation controller in Unity to control the virtual avatar to perform humanoid and realistic animation.

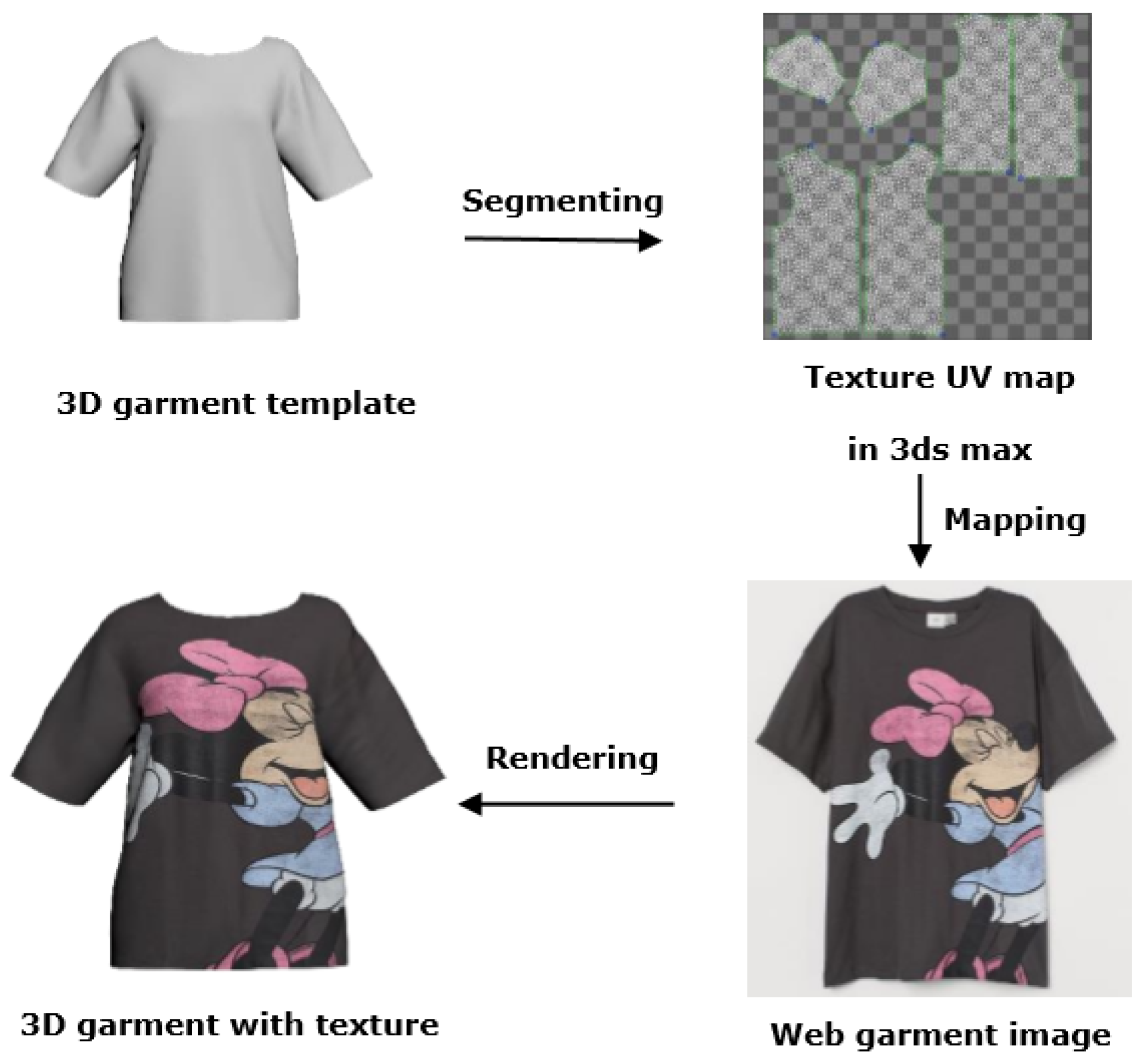
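Step (2) was done by hand in Maya in this work. For readers who want an automatic first pass before manual editing, the following Python sketch shows one common alternative, a centered moving average over a joint’s per-frame positions. This is a swapped-in technique and not the procedure used by the authors; the function name, the window size, and the sample data are assumptions made for illustration.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def smooth_joint_track(track: Sequence[Vec3], window: int = 5) -> List[Vec3]:
    """Smooth one joint's positions with a centered moving average.

    track  : (x, y, z) position of a single joint, one entry per frame.
    window : odd number of frames to average; near the ends of the clip the
             window is clipped to the frames that exist.
    """
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd integer")
    half = window // 2
    smoothed: List[Vec3] = []
    for i in range(len(track)):
        lo = max(0, i - half)
        hi = min(len(track), i + half + 1)
        frames = track[lo:hi]
        n = len(frames)
        # Average each coordinate independently over the window.
        smoothed.append((
            sum(p[0] for p in frames) / n,
            sum(p[1] for p in frames) / n,
            sum(p[2] for p in frames) / n,
        ))
    return smoothed


# Made-up jittery x-coordinate for one joint; y and z are held constant.
jittery = [(0.0, 1.0, 2.0), (1.0, 1.0, 2.0), (0.0, 1.0, 2.0),
           (1.0, 1.0, 2.0), (0.0, 1.0, 2.0)]
print(smooth_joint_track(jittery, window=3))
```

A larger window removes more jitter but also flattens fast, intentional movements, so the window size would need tuning per motion.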

Following these three steps, users can view the virtual avatar with their personalized motion inside the VR or AR environment.

- Garment Model Generation: Previous research on garment modeling started from 2D design patterns or 2D sketches, while other methods explored garment resizing and transfer from 3D template garments [27]. Compared to 2D design patterns, 3D garment models simulate the garment more precisely. Therefore, our method extends these methods to map the 2D image to the 3D garment models. The texture-mapping method increases the realism of the garment.

  We generated 3D garment models from images on online shopping websites, such as H&M and ZARA. We used Marvelous Designer [56] to create a 3D garment model. The texture mapping method is shown in Figure 8. The 3D mesh of a generated garment template can be flattened into a 2D texture UV map in 3ds Max. The 2D UV map contains several parts. For example, a T-shirt can be segmented into three parts: sleeves, front, and side. To map the web garment image to a 3D virtual garment template, we mapped the segmented parts of the garment image to their corresponding parts on the garment template. In this way, we can generate the 3D textured garment model.
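The part-to-part mapping described above can be pictured as a lookup from a point in a UV region of the template to a pixel in the matching region of the web garment image. The Python sketch below assumes, purely for illustration, that each part is an axis-aligned rectangle in both spaces and that both share the same vertical orientation; the rectangle values, the `PART_LAYOUT` table, and the function name are hypothetical and do not describe the authors’ tooling.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class Rect:
    """Axis-aligned rectangle: top-left corner (x, y) plus width and height."""
    x: float
    y: float
    w: float
    h: float


# Hypothetical layout: for each garment part, its region in the template's
# UV map (unit square) and its region in the source image (pixels).
PART_LAYOUT: Dict[str, Tuple[Rect, Rect]] = {
    "front":   (Rect(0.0, 0.0, 0.5, 1.0), Rect(100.0, 50.0, 200.0, 300.0)),
    "sleeves": (Rect(0.5, 0.0, 0.25, 1.0), Rect(0.0, 50.0, 100.0, 150.0)),
    "side":    (Rect(0.75, 0.0, 0.25, 1.0), Rect(300.0, 50.0, 60.0, 300.0)),
}


def uv_to_image_pixel(part: str, u: float, v: float) -> Tuple[float, float]:
    """Map a UV coordinate inside a part's UV region to image pixel coordinates."""
    uv_region, image_region = PART_LAYOUT[part]
    # Normalize the point to [0, 1] within the part's UV rectangle.
    s = (u - uv_region.x) / uv_region.w
    t = (v - uv_region.y) / uv_region.h
    if not (0.0 <= s <= 1.0 and 0.0 <= t <= 1.0):
        raise ValueError("UV coordinate lies outside the region of this part")
    # Rescale into the corresponding rectangle of the garment image.
    return (image_region.x + s * image_region.w,
            image_region.y + t * image_region.h)


print(uv_to_image_pixel("front", 0.25, 0.5))
```

Real garment parts are rarely rectangular, so a production pipeline would warp each segmented part to its UV island rather than rescale a bounding box.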

5. Evaluation

5.1. Goal

5.2. Participants

5.3. User Study 1: Exploration of VR- and AR-Based Try-On

5.3.1. Experimental Design

- (1) Image-only (IO): Participants completed a simulation of an online shopping experience using garment pictures only. They could only imagine what they would look like wearing the clothes.

- (2) VR-based try-on (VR-TO): Participants completed a simulation of an online shopping experience with Virtual Reality. They could try virtual clothes on their static virtual avatar.

- (3) AR-based try-on (AR-TO): Participants completed a simulation of an online shopping experience with Augmented Reality. They could try virtual clothes on their static virtual avatar and view the virtual avatar in a real-life scene.

5.3.2. Measures

5.3.3. Results

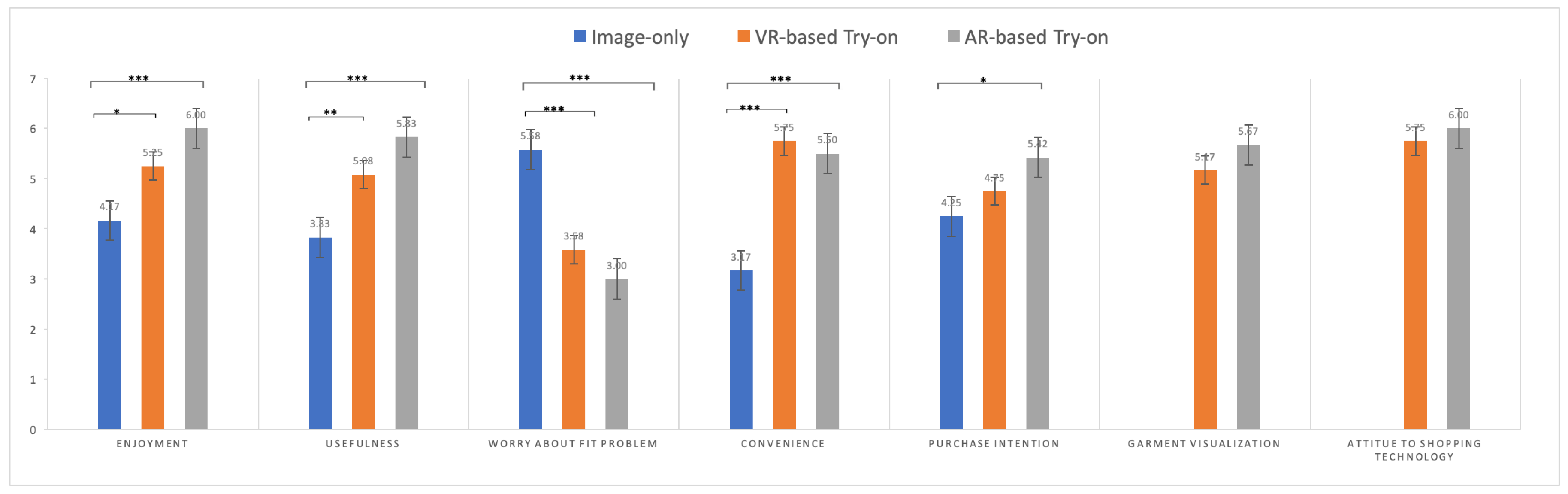

- Differences in Ratings: For statistical analysis of differences, we used one-way repeated measures ANOVA. Our study meets almost all the assumptions required for one-way repeated measures ANOVA. The normality assumption does not need to be met strictly, as long as the data are approximately normally distributed. One-way ANOVA was performed using SPSS [69] to assess whether there were any statistically significant differences among the means of the three independent conditions. To establish which conditions differed, post hoc tests were run using the Bonferroni method. The mean and standard deviation of the measured variables for every experimental condition are presented in Table 2. Figure 9 shows the differences among the three conditions for various items.

  The ANOVA results show significant differences in ‘Enjoyment’ (p < 0.001), ‘Convenience’ (p < 0.001), ‘Usefulness’ (p < 0.001), ‘Worry about fit problem’ (p < 0.001), and ‘Purchase intention’ (p < 0.05), while no significant difference was found in ‘Garment visualization’ (p = 0.08) or ‘Attitude to shopping technology’ (p = 0.45). In enjoyment, post hoc tests showed significant differences between the image-only condition and the AR-based try-on (M = 4.17 vs. M = 6.00, p < 0.001), as well as the VR-based try-on (M = 4.17 vs. M = 5.25, p < 0.05). In usefulness, we found significant differences between the image-only condition and the AR-based try-on (M = 3.83 vs. M = 5.92, p < 0.001), as well as the VR-based try-on (M = 3.83 vs. M = 5.08, p < 0.01). We also analyzed whether users worried about the fit problem after using this kind of shopping technology. The image-only condition differed from the AR-based try-on (M = 5.58 vs. M = 3.00, p < 0.001) and the VR-based try-on (M = 5.58 vs. M = 3.58, p < 0.001) in terms of worry about the fit problem. The image-only condition also differed from the AR-based try-on (M = 3.17 vs. M = 6.00, p < 0.001) and the VR-based try-on (M = 3.17 vs. M = 5.75, p < 0.001) in convenience. In addition, we found a significant difference between the image-only condition and the AR-based try-on in purchase intention (M = 4.25 vs. M = 5.42, p < 0.001).

- Qualitative Differences: We also asked about participants’ preferences for each condition at the end of the study. The questionnaire administered to participants at the end of the experiment contained a set of open-ended questions. We found that all participants preferred the AR-based condition. We conducted a thematic analysis of the participants’ responses for AR- and VR-based try-on.

  For AR-based try-on, we collected the comments from the participants and summarized the keywords of concern. Table 3 shows the recurring themes and the number of participants who mentioned them. Participants liked the AR-based try-on because it provided a real interactive environment and placed the virtual avatar in a real-life scene. Some participants found that the AR view was helpful for observing the garment model and that manipulating the garment model was easier. AR-based try-on allows users to manipulate their virtual avatars in the real world, which improves the interactivity between the user and the virtual avatar, thus enhancing user enjoyment in the shopping process.

  For VR-based try-on, we also collected comments from the participants and summarized the related keywords (Table 4). Participants disliked VR-based try-on for several reasons. They complained about difficulties in observing the garment model within the VR view. This is a limitation of the fixed camera perspective, which cannot provide a good overview or a detailed view. They also complained that the virtual avatar did not look like themselves in the VR view because of the virtual environment and lighting problems, which affected their fitting experience.
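The ratings analysis in this section was run in SPSS. To make the underlying computation concrete, the following Python sketch derives the F statistic of a one-way repeated-measures ANOVA from a participants-by-conditions table using only the standard library. It is an illustration of the textbook formula rather than the authors’ analysis script: the function name and the example ratings are made up, and the p-value (which requires the F distribution) and sphericity checks are deliberately left out.

```python
from typing import Sequence, Tuple


def repeated_measures_anova(
    ratings: Sequence[Sequence[float]],
) -> Tuple[float, int, int]:
    """Return (F, df_condition, df_error) for a one-way repeated-measures design.

    ratings[i][j] is the rating given by participant i under condition j.
    Raises ZeroDivisionError if the residual variation is exactly zero.
    """
    n = len(ratings)         # number of participants
    k = len(ratings[0])      # number of conditions
    if n < 2 or k < 2 or any(len(row) != k for row in ratings):
        raise ValueError("need a complete n x k table with n >= 2 and k >= 2")

    grand_mean = sum(sum(row) for row in ratings) / (n * k)
    cond_means = [sum(row[j] for row in ratings) / n for j in range(k)]
    subj_means = [sum(row) / k for row in ratings]

    # Partition the total variation into condition, participant, and residual parts.
    ss_total = sum((x - grand_mean) ** 2 for row in ratings for x in row)
    ss_cond = n * sum((m - grand_mean) ** 2 for m in cond_means)
    ss_subj = k * sum((m - grand_mean) ** 2 for m in subj_means)
    ss_error = ss_total - ss_cond - ss_subj

    df_cond = k - 1
    df_error = (k - 1) * (n - 1)
    f_stat = (ss_cond / df_cond) / (ss_error / df_error)
    return f_stat, df_cond, df_error


# Made-up 7-point ratings: rows are participants, columns are IO, VR-TO, AR-TO.
example = [
    [1, 3, 5],
    [2, 3, 7],
    [3, 6, 6],
]
print(repeated_measures_anova(example))
```

Removing the participant term from the error is what distinguishes this design from a between-subjects one-way ANOVA: stable differences between participants no longer inflate the denominator of F.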

5.3.4. Testing of H1 and H2

5.4. User Study 2: Exploration of the Personalized Motion on Concern About VTO

5.4.1. Experimental Design

- (1) AR-based try-on with no motion (NM): Participants can try virtual clothes using a static virtual avatar.

- (2) AR-based try-on with pre-defined motion (PDM): Participants can try virtual clothes using a virtual avatar with pre-defined animation.

- (3) AR-based try-on with personalized motion (PM): Participants can try virtual clothes using a virtual avatar with pre-recorded animation. The movements of each participant are captured by Kinect.

5.4.2. Measures

5.4.3. Results

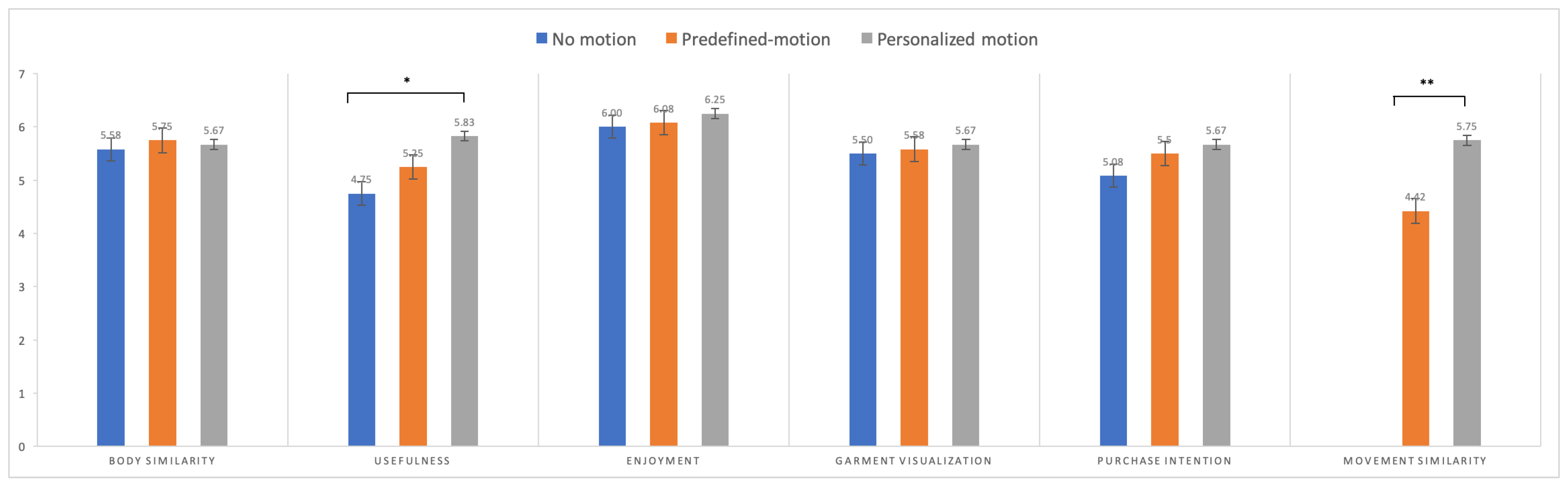

- Differences in ratings: One-way ANOVA was performed using SPSS (IBM, Illinois, USA) [69] to assess whether there were statistically significant differences among the means of the three independent conditions. To establish which conditions differed, post hoc tests were run using the Bonferroni method. The mean and standard deviation of the measured variables for each experimental condition are presented in Table 6. Figure 10 shows the differences among the three conditions in various items.

  The ANOVA results show significant differences in ‘Usefulness’ (p < 0.05) and ‘Movement similarity’ (p < 0.001), while no significant difference was found in ‘Body similarity’ (p = 0.89), ‘Enjoyment’ (p = 0.76), ‘Garment visualization’ (p = 0.87), or ‘Purchase intention’ (p = 0.07). Post hoc tests showed that the personalized-motion VTO was rated more useful than the no-motion VTO (NM: M = 4.75 vs. PM: M = 5.83, p < 0.05). Regarding movement similarity to real motion, the personalized-motion VTO scored higher than the predefined-motion VTO (PDM: M = 4.36 vs. PM: M = 5.67, p < 0.01). We did not find significant differences among the three conditions in garment visualization, enjoyment, body similarity, or purchase intention.

- Qualitative differences: The qualitative comments from each of the participants are listed in Table 7. The participants evaluated the three systems from four aspects: garment quality, virtual body similarity, enjoyment, and personalized motion. They thought that the interactivity with the garment should be improved and that the material of the garments was not realistic. The personalized virtual avatar provided by our system was similar to the users; in particular, the personalized motion gave users a better sense of fitting on the “real me”, which made the virtual avatar more realistic. As for enjoyment, some participants mentioned that the personalized motion made them more interested in changing motions while fitting.

  We also asked for participants’ preferences for each condition at the end of the study. Among the 12 female participants, 10 preferred the personalized motion condition, since it offered users a better sense of the “real me” and made the virtual avatar’s movement more realistic. In addition, personalized motion can help users gain a sense of wearing the clothes on their own body with different motions in their daily life. Two participants preferred the predefined motion condition; they thought that the personalized motion was not as smooth as the predefined motion, especially for the walking animation. They stated that if the personalized motion were smoother and more natural, they would choose the personalized-motion VTO as their preferred condition.
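The Bonferroni method used for the post hoc tests in this section can be summarized in a few lines: each raw pairwise p-value is multiplied by the number of comparisons and capped at 1. The Python sketch below illustrates that adjustment for the three pairwise comparisons among NM, PDM, and PM. The function name is hypothetical and the raw p-values are invented for illustration; they are not results from this study.

```python
from typing import Dict


def bonferroni_adjust(p_values: Dict[str, float]) -> Dict[str, float]:
    """Return Bonferroni-adjusted p-values for a family of comparisons.

    Each raw p-value is multiplied by the number of comparisons in the family
    and capped at 1.0.
    """
    m = len(p_values)
    return {name: min(1.0, p * m) for name, p in p_values.items()}


# Invented raw p-values for the three pairwise comparisons (not study data).
raw = {"NM vs PDM": 0.20, "NM vs PM": 0.01, "PDM vs PM": 0.50}
adjusted = bonferroni_adjust(raw)
for pair, p in adjusted.items():
    print(pair, round(p, 3), "significant" if p < 0.05 else "not significant")
```

With three conditions there are three pairwise comparisons, so a raw p-value must fall below roughly 0.0167 to remain significant at the 0.05 level after adjustment.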

5.5. Testing of H3

6. Discussion

6.1. Theoretical Implications

6.2. Practical Implications

6.3. Limitations and Future Research

Author Contributions

Funding

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| VTO | Virtual Try-On |
| VR | Virtual Reality |
| AR | Augmented Reality |
| MoCap | Motion Capture |
| NM | No Motion VTO Condition |
| PM | Personalized Motion VTO Condition |
| PDM | Pre-defined Motion VTO Condition |
| IO | Image-Only |
| VR-TO | VR-based try-on |
| AR-TO | AR-based try-on |

References

- Martin, C.G.; Oruklu, E. Human friendly interface design for virtual fitting room applications on android based mobile devices. J. Signal Inf. Process. 2012, 3, 481.

- Beck, M.; Crié, D. I virtually try it...I want it! Virtual Fitting Room: A tool to increase on-line and off-line exploratory behavior, patronage and purchase intentions. J. Retail. Consum. Serv. 2018, 40, 279–286.

- Shin, S.J.H.; Chang, H.J.J. An examination of body size discrepancy for female college students wanting to be fashion models. Int. J. Fash. Des. Technol. Educ. 2018, 11, 53–62.

- Cases, A.S. Perceived risk and risk-reduction strategies in Internet shopping. Int. Rev. Retail Distrib. Consum. Res. 2002, 375–394.

- Rosa, J.A.; Garbarino, E.C.; Malter, A.J. Keeping the body in mind: The influence of body esteem and body boundary aberration on consumer beliefs and purchase intentions. J. Consum. Psychol. 2006, 16, 79–91.

- Blázquez, M. Fashion shopping in multichannel retail: The role of technology in enhancing the customer experience. Int. J. Electron. Commer. 2014, 18, 97–116.

- Gao, Y.; Petersson Brooks, E.; Brooks, A.L. The Performance of Self in the Context of Shopping in a Virtual Dressing Room System. In HCI in Business; Nah, F.F.H., Ed.; Springer International Publishing: Cham, Switzerland, 2014; pp. 307–315.

- Kim, D.E.; LaBat, K. Consumer experience in using 3D virtual garment simulation technology. J. Text. Inst. 2013, 104, 819–829.

- Lau, K.W.; Lee, P.Y. The role of stereoscopic 3D virtual reality in fashion advertising and consumer learning. In Advances in Advertising Research (Vol. VI); Springer: Berlin/Heidelberg, Germany, 2016; pp. 75–83.

- Merle, A.; Senecal, S.; St-Onge, A. Whether and how virtual try-on influences consumer responses to an apparel web site. Int. J. Electron. Commer. 2012, 16, 41–64.

- Bitter, G.; Corral, A. The pedagogical potential of augmented reality apps. Int. J. Eng. Sci. Invent. 2014, 3, 13–17.

- Liu, F.; Shu, P.; Jin, H.; Ding, L.; Yu, J.; Niu, D.; Li, B. Gearing resource-poor mobile devices with powerful clouds: Architectures, challenges, and applications. IEEE Wirel. Commun. 2013, 20, 14–22.

- Wexelblat, A. Virtual Reality: Applications and Explorations; Academic Press: Cambridge, MA, USA, 2014.

- Yaoyuneyong, G.; Foster, J.; Johnson, E.; Johnson, D. Augmented reality marketing: Consumer preferences and attitudes toward hypermedia print ads. J. Interact. Advert. 2016, 16, 16–30.

- Parekh, P.; Patel, S.; Patel, N.; Shah, M. Systematic review and meta-analysis of augmented reality in medicine, retail, and games. Vis. Comput. Ind. Biomed. Art 2020, 3, 1–20.

- Javornik, A.; Rogers, Y.; Moutinho, A.M.; Freeman, R. Revealing the shopper experience of using a “magic mirror” augmented reality make-up application. In Proceedings of the 2016 ACM Conference on Designing Interactive Systems, Brisbane, Australia, 4–8 June 2016; Association For Computing Machinery (ACM): New York, NY, USA, 2016; Volume 2016, pp. 871–882.

- Lee, H.; Leonas, K. Consumer experiences, the key to survive in an omni-channel environment: Use of virtual technology. J. Text. Appar. Technol. Manag. 2018, 10, 1–23.

- De França, A.C.P.; Soares, M.M. Review of Virtual Reality Technology: An Ergonomic Approach and Current Challenges. In Advances in Ergonomics in Design; Rebelo, F., Soares, M., Eds.; Springer International Publishing: Cham, Switzerland, 2018; pp. 52–61.

- Han, X.; Wu, Z.; Wu, Z.; Yu, R.; Davis, L.S. Viton: An image-based virtual try-on network. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018; pp. 7543–7552.

- Sekine, M.; Sugita, K.; Perbet, F.; Stenger, B.; Nishiyama, M. Virtual fitting by single-shot body shape estimation. In Proceedings of the International Conference on 3D Body Scanning Technologies, Lugano, Switzerland, 21–22 October 2014; pp. 406–413.

- Decaudin, P.; Julius, D.; Wither, J.; Boissieux, L.; Sheffer, A.; Cani, M.P. Virtual garments: A fully geometric approach for clothing design. In Computer Graphics Forum; Wiley Online Library: Hoboken, NJ, USA, 2006; Volume 25, pp. 625–634.

- Hilsmann, A.; Eisert, P. Tracking and retexturing cloth for real-time virtual clothing applications. In Proceedings of the International Conference on Computer Vision/Computer Graphics Collaboration Techniques and Applications, Rocquencourt, France, 4–6 May 2009; Springer: Berlin/Heidelberg, Germany, 2009; pp. 94–105.

- Yamada, H.; Hirose, M.; Kanamori, Y.; Mitani, J.; Fukui, Y. Image-based virtual fitting system with garment image reshaping. In Proceedings of the 2014 International Conference on Cyberworlds, Santander, Spain, 6–8 October 2014; pp. 47–54.

- Chen, X.; Zhou, B.; Lu, F.X.; Wang, L.; Bi, L.; Tan, P. Garment modeling with a depth camera. ACM Trans. Graph. 2015, 34, 1–12.

- Duan, L.; Yueqi, Z.; Ge, W.; Pengpeng, H. Automatic three-dimensional-scanned garment fitting based on virtual tailoring and geometric sewing. J. Eng. Fibers Fabr. 2019, 14.

- Zhou, B.; Chen, X.; Fu, Q.; Guo, K.; Tan, P. Garment modeling from a single image. In Computer Graphics Forum; Wiley Online Library: Hoboken, NJ, USA, 2013; Volume 32, pp. 85–91.

- Yang, S.; Ambert, T.; Pan, Z.; Wang, K.; Yu, L.; Berg, T.; Lin, M.C. Detailed garment recovery from a single-view image. arXiv 2016, arXiv:1608.01250.

- Cheng, H.; Sanda, S. Application of Virtual Reality Technology in Garment Industry. DEStech Transactions on Social Science, Education and Human Science. In Proceedings of the 2017 3rd International Conference on Social Science and Management (ICSSM 2017), Xi’an, China, 8–9 April 2017.

- Li, R.; Zou, K.; Xu, X.; Li, Y.; Li, Z. Research of interactive 3D virtual fitting room on web environment. In Proceedings of the 2011 Fourth International Symposium on Computational Intelligence and Design, Hangzhou, China, 28–30 October 2011; Volume 1, pp. 32–35.

- Liu, X.H.; Wu, Y.W. A 3D display system for cloth online virtual fitting room. In Proceedings of the 2009 WRI World Congress on Computer Science and Information Engineering, Los Angeles, CA, USA, 31 March–2 April 2009; Volume 7, pp. 14–18.

- Richins, M.L. Social comparison and the idealized images of advertising. J. Consum. Res. 1991, 18, 71–83.

- Fiore, A.M.; Jin, H.J. Influence of image interactivity on approach responses towards an online retailer. Internet Res. 2003, 38–48.

- Fiore, A.M.; Jin, H.J.; Kim, J. For fun and profit: Hedonic value from image interactivity and responses toward an online store. Psychol. Mark. 2005, 22, 669–694.

- Yuan, M.; Khan, I.R.; Farbiz, F.; Yao, S.; Niswar, A.; Foo, M.H. A mixed reality virtual clothes try-on system. IEEE Trans. Multimed. 2013, 15, 1958–1968.

- Magnenat-Thalmann, N.; Kevelham, B.; Volino, P.; Kasap, M.; Lyard, E. 3D web-based virtual try on of physically simulated clothes. Comput. Aided Des. Appl. 2011, 8, 163–174.

- Yang, S.; Xiong, G. Try It On! Contingency Effects of Virtual Fitting Rooms. J. Manag. Inf. Syst. 2019, 36, 789–822.

- Thaler, A.; Piryankova, I.; Stefanucci, J.K.; Pujades, S.; de La Rosa, S.; Streuber, S.; Romero, J.; Black, M.J.; Mohler, B.J. Visual perception and evaluation of photo-realistic self-avatars from 3D body scans in males and females. Front. ICT 2018, 5, 18.

- Kim, J.; Forsythe, S. Adoption of virtual try-on technology for online apparel shopping. J. Interact. Mark. 2008, 22, 45–59.

- Meng, Y.; Mok, P.; Jin, X. Interactive virtual try-on clothing design systems. Comput. Aided Des. 2010, 42, 310–321.

- Warehouse. Available online: https://www.warehouselondon.com/row/homepage (accessed on 21 November 2019).

- Cai, S.; Xu, Y. Designing not just for pleasure: Effects of web site aesthetics on consumer shopping value. Int. J. Electron. Commer. 2011, 15, 159–188.

- Häubl, G.; Trifts, V. Consumer decision making in online shopping environments: The effects of interactive decision aids. Mark. Sci. 2000, 19, 4–21.

- Senecal, S.; Nantel, J. The influence of online product recommendations on consumers’ online choices. J. Retail. 2004, 80, 159–169.

- Tam, K.Y.; Ho, S.Y. Understanding the impact of web personalization on user information processing and decision outcomes. MIS Q. 2006, 30, 865–890.

- Lee, C.K.C.; Fernandez, N.; Martin, B.A. Using self-referencing to explain the effectiveness of ethnic minority models in advertising. Int. J. Advert. 2002, 21, 367–379.

- D’Alessandro, S.; Chitty, B. Real or relevant beauty? Body shape and endorser effects on brand attitude and body image. Psychol. Mark. 2011, 28, 843–878.

- Boonbrahm, P.; Kaewrat, C.; Boonbrahm, S. Realistic simulation in virtual fitting room using physical properties of fabrics. Procedia Comput. Sci. 2015, 75, 12–16.

- Javornik, A. ‘It’s an illusion, but it looks real!’ Consumer affective, cognitive and behavioural responses to augmented reality applications. J. Mark. Manag. 2016, 32, 987–1011.

- Body Data Platform, 3D LOOK. Available online: https://3dlook.me/ (accessed on 3 May 2020).

- Realistic 3D Avatars for Game, AR and VR, Avatar SDK. Available online: https://avatarsdk.com/ (accessed on 15 April 2020).

- Gültepe, U.; Güdükbay, U. Real-time virtual fitting with body measurement and motion smoothing. Comput. Graph. 2014, 43, 31–43.

- Adikari, S.B.; Ganegoda, N.C.; Meegama, R.G.; Wanniarachchi, I.L. Applicability of a Single Depth Sensor in Real-Time 3D Clothes Simulation: Augmented Reality Virtual Dressing Room Using Kinect Sensor. Adv. Hum. Comput. Interact. 2020, 2020.

- KINECT. Available online: https://en.wikipedia.org/wiki/Kinect (accessed on 14 April 2020).

- Maya. Available online: https://www.autodesk.co.jp/products/maya/overview (accessed on 10 May 2020).

- 3ds MAX. Available online: https://www.autodesk.co.jp/products/3ds-max/overview?plc=3DSMAX&term=1-YEAR&support=ADVANCED&quantity=1 (accessed on 20 April 2020).

- Marvelous Designer. Available online: https://www.marvelousdesigner.com/ (accessed on 13 March 2020).

- H&M. Available online: https://www2.hm.com/ja_jp/index.html (accessed on 13 October 2019).

- ZARA. Available online: https://www.zara.com/jp/ja/woman-skirts-l1299.html?v1=1445719 (accessed on 15 October 2019).

- Unity Game Engine. Available online: https://unity.com/ja (accessed on 1 September 2019).

- CinemaMocap. Available online: https://assetstore.unity.com/packages/tools/animation/cinema-mocap-2-markerless-motion-capture-56576 (accessed on 18 April 2020).

- Mixamo. Available online: https://www.mixamo.com/#/ (accessed on 25 November 2019).

- Suh, K.S.; Kim, H.; Suh, E.K. What if your avatar looks like you? Dual-congruity perspectives for avatar use. MIS Q. 2011, 35, 711–729.

- Rohm, A.J.; Kashyap, V.; Brashear, T.G.; Milne, G.R. The use of online marketplaces for competitive advantage: A Latin American perspective. J. Bus. Ind. Mark. 2004, 19, 372–385.

- Mäenpää, K.; Kale, S.H.; Kuusela, H.; Mesiranta, N. Consumer perceptions of Internet banking in Finland: The moderating role of familiarity. J. Retail. Consum. Serv. 2008, 15, 266–276.

- Childers, T.L.; Carr, C.L.; Peck, J.; Carson, S. Hedonic and utilitarian motivations for online retail shopping behavior. J. Retail. 2001, 77, 511–535.

- Chandran, S.; Morwitz, V.G. Effects of participative pricing on consumers’ cognitions and actions: A goal theoretic perspective. J. Consum. Res. 2005, 32, 249–259.

- Chen, L.-d.; Gillenson, M.L.; Sherrell, D.L. Enticing online consumers: An extended technology acceptance perspective. Inf. Manag. 2002, 39, 705–719.

- Davis, F.D. Perceived Usefulness, Perceived Ease of Use, and User Acceptance of Information Technology. MIS Q. 1989, 13, 319–340.

- SPSS. Available online: https://www.ibm.com/analytics/spss-statistics-software (accessed on 3 July 2020).

- Banakou, D.; Slater, M. Body ownership causes illusory self-attribution of speaking and influences subsequent real speaking. Proc. Natl. Acad. Sci. USA 2014, 111, 17678–17683.

- Bonetti, F.; Warnaby, G.; Quinn, L. Augmented reality and virtual reality in physical and online retailing: A review, synthesis and research agenda. In Augmented Reality and Virtual Reality; Springer: Berlin/Heidelberg, Germany, 2018; pp. 119–132.

- Zhang, J. A Systematic Review of the Use of Augmented Reality (AR) and Virtual Reality (VR) in Online Retailing. Ph.D. Thesis, Auckland University of Technology, Auckland, New Zealand, 2020.

- Chevalier, C.; Lichtlé, M.C. The Influence of the Perceived Age of the Model Shown in an Ad on the Effectiveness of Advertising. Rech. Appl. Mark. Engl. Ed. 2012, 27, 3–19.

- Keh, H.T.; Park, I.H.; Kelly, S.; Du, X. The effects of model size and race on Chinese consumers’ reactions: A social comparison perspective. Psychol. Mark. 2016, 33, 177–194.

- Plotkina, D.; Saurel, H. Me or just like me? The role of virtual try-on and physical appearance in apparel M-retailing. J. Retail. Consum. Serv. 2019, 51, 362–377.

- Shim, S.I.; Lee, Y. Consumer’s perceived risk reduction by 3D virtual model. Int. J. Retail Distrib. Manag. 2011, 39, 945–959.

- Akiyama, G.; Hsieh, R. Karte Garden. In Proceedings of the Virtual Reality International Conference-Laval Virtual, Laval, France, 4–6 April 2018; pp. 1–3.

| Measurement Items | Questions |
|---|---|
| Enjoyment | Shopping with this system was enjoyable for me. |
| Convenience in examining the product | I gain a sense of how the outfit might look for various occasions. |
| Garment visualization | Having a model in a virtual environment/real environment helps me understand more about the appearance of the garments. |
| Worry about fit problem | I feel worried that the clothes I choose may be unsuitable for me. |
| Usefulness | This shopping system would enhance the effectiveness of the shopping experience. |
| Purchase intention | It is very likely that I would purchase this product. |
| Attitude toward the shopping technology | I want to use this system when I buy clothes online in the future. |

| Dependent Variable | Condition (A) | Mean (A) | Condition (B) | Mean (B) | Significance of the Mean Difference (A vs. B) |
|---|---|---|---|---|---|
| Perceived Enjoyment | Image-only | 4.17 (1.115) | VR-based try-on | 5.25 (1.138) | p < 0.050 |
| Perceived Enjoyment | Image-only | 4.17 (1.115) | AR-based try-on | 6.00 (0.853) | p < 0.001 |
| Perceived Enjoyment | VR-based try-on | 5.25 (1.138) | AR-based try-on | 6.00 (0.853) | ns |
| Usefulness | Image-only | 3.83 (1.267) | VR-based try-on | 5.08 (0.669) | p < 0.010 |
| Usefulness | Image-only | 3.83 (1.267) | AR-based try-on | 5.83 (0.577) | p < 0.001 |
| Usefulness | VR-based try-on | 5.08 (0.669) | AR-based try-on | 5.83 (0.577) | ns |
| Worry about fit problem | Image-only | 5.58 (0.996) | VR-based try-on | 3.58 (1.165) | p < 0.001 |
| Worry about fit problem | Image-only | 5.58 (0.996) | AR-based try-on | 3.00 (0.953) | p < 0.001 |
| Worry about fit problem | VR-based try-on | 3.58 (1.165) | AR-based try-on | 3.00 (0.953) | ns |
| Purchase intention | Image-only | 4.25 (1.422) | VR-based try-on | 4.75 (0.866) | ns |
| Purchase intention | Image-only | 4.25 (1.422) | AR-based try-on | 5.42 (0.793) | p < 0.050 |
| Purchase intention | VR-based try-on | 4.75 (0.866) | AR-based try-on | 5.42 (0.793) | ns |
| Convenience | Image-only | 3.17 (1.267) | VR-based try-on | 5.75 (0.866) | p < 0.001 |
| Convenience | Image-only | 3.17 (1.267) | AR-based try-on | 5.50 (0.798) | p < 0.001 |
| Convenience | VR-based try-on | 5.75 (0.866) | AR-based try-on | 5.50 (0.798) | ns |

| Categories | Conclusions | Free Comments |
|---|---|---|
| AR-based try-on | Highly realistic | “Light and environment in AR-based try-on are more realistic than VR-based try-on.” “AR-based real environment is closer to real-life scene, making the fitting effect more real.” |
| AR-based try-on | High accuracy of human avatar | “The virtual human model in VR-based try-on does not look like me.” “I can pay more attention to the sense of whole body in AR-based try-on.” |
| AR-based try-on | Better 3D garment visualization | “I can see the effect close up or from a distance in AR-based try-on. It is very convenient for checking the details of the garments.” “I can obtain a view by looking thoroughly and examining the garments in details from different angles.” |
| AR-based try-on | High interactivity | “AR-based try-on superimposed on the real world. I can interact with my model in the real world background, right next to me.” “It’s more interesting to interact with my own virtual avatars in a real-life scene.” “It is a game-like experience, I can put my virtual body into real life and interact with it. It is very interesting for me.” |

| Categories | Conclusions | Free Comments |
|---|---|---|
| VR-based try-on | Higher attractiveness | “The different wearing conditions give me some inspiration of how to design an outfit. It is very interesting.” “AR-based try-on looks better, I also like the changing environment function in VR-based try-on. So, if they can be combined, it will be better.” |
| VR-based try-on | Low accuracy of human avatar | “I think the virtual human model in VR-based try-on does not look like me, so when I am fitting clothes in VR, it is not realistic.” |
| VR-based try-on | Single perspective and lower interactivity | “I can view virtual human models and clothes models in 360 degrees in AR-based try-on. The perspective of VR-based try-on is too simple. I cannot view the models from the view points that I wanted.” |

| Measurement Items of User Study 2 | Questions |
|---|---|
| Body similarity | I feel that the virtual body I saw was my own body. |
| Movement similarity | I feel that the movement of the virtual avatar was similar to my own movements. |
| Usefulness | I can imagine what it looks like when I am wearing clothes by performing some activities in real life. |
| Garment visualization | Model walking in a real environment helps me know more about the appearance of the clothes. |
| Enjoyment | Seeing my own model in the real world makes me feel interested. |
| Purchase intention | The probability that I would buy the product is very high. |

| Dependent Variable | Condition (A) | Mean (A) | Condition (B) | Mean (B) | Significance of the Mean Difference (A vs. B) |
|---|---|---|---|---|---|
| Body similarity | no motion | 5.58 (0.793) | predefined motion | 5.75 (0.965) | ns |
| Body similarity | no motion | 5.58 (0.793) | personalized motion | 5.67 (0.778) | ns |
| Body similarity | predefined motion | 5.25 (1.138) | personalized motion | 6.00 (0.853) | ns |
| Usefulness | no motion | 4.75 (1.138) | predefined motion | 5.25 (0.622) | ns |
| Usefulness | no motion | 4.75 (1.138) | personalized motion | 5.83 (0.835) | p < 0.05 |
| Usefulness | predefined motion | 5.25 (0.622) | personalized motion | 5.83 (0.835) | ns |
| Garment visualization | no motion | 5.50 (0.905) | predefined motion | 5.58 (0.669) | ns |
| Garment visualization | no motion | 5.50 (0.905) | personalized motion | 5.67 (0.778) | ns |
| Garment visualization | predefined motion | 5.58 (0.669) | personalized motion | 5.67 (0.778) | ns |
| Enjoyment | no motion | 6.00 (1.128) | predefined motion | 6.08 (0.669) | ns |
| Enjoyment | no motion | 6.00 (1.128) | personalized motion | 6.25 (0.622) | ns |
| Enjoyment | predefined motion | 6.08 (0.669) | personalized motion | 6.25 (0.622) | ns |
| Purchase intention | no motion | 5.08 (0.793) | predefined motion | 5.50 (0.522) | ns |
| Purchase intention | no motion | 5.08 (0.793) | personalized motion | 5.67 (0.492) | ns |
| Purchase intention | predefined motion | 5.50 (0.522) | personalized motion | 5.67 (0.492) | ns |

| Categories | Conclusions | Free Comments |
|---|---|---|
| Garment quality | The material of clothes is not realistic. | “The garment looks unrealistic.” “The material of the clothes does not look real.” |
| Body similarity | Virtual avatar with personalized motion makes the virtual avatar more similar to users. | “The virtual avatar looks like me.” “The face of the virtual avatar is very similar to me.” “The virtual avatar is just another me.” |
| Enjoyment | AR-based VTO with personalized motion is enjoyable for users. | “The personalized motion condition makes me more interested in changing my avatar’s motion.” “Personalized motion is realistic, making me feel engaged.” |
| Personalized motion | Although the personalized motion is not as smooth as predefined motion, it is more similar to users’ own movements. | “The personalized motion looks more natural and realistic.” “Compared with predefined motion, personalized motion offers a sense of the real me, which makes the virtual avatar’s movement more realistic.” “The predefined motion is standardized, which does not look similar to me.” “The personalized motion is not smooth, I could gain a better understanding with a smoother motion.” “With the personalized motion, I can also check the shape of clothes when the model is moving.” “The AR-based VTO with personalized motion is closest to me. I feel like I am looking into a mirror.” “It would be nice if the virtual avatar can have the user’s facial expression as well.” |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Liu, Y.; Liu, Y.; Xu, S.; Cheng, K.; Masuko, S.; Tanaka, J. Comparing VR- and AR-Based Try-On Systems Using Personalized Avatars. Electronics 2020, 9, 1814. https://doi.org/10.3390/electronics9111814