1. Introduction

Because of the technological advances in the field of computational biology, the huge amount of gene expression data collected represents a great opportunity to learn about the complex biological mechanisms of living organisms [1]. In this context, machine learning (ML) techniques have been successfully applied to gene expression data in order to discover significant, non-trivial, and useful knowledge about how genes interact in a specific biological process [2,3]. Among the different ML techniques, Biclustering stands out as one of the most important approaches in this field, being applied to the discovery of functional annotations, co-regulation of metabolic pathways and gene networks, modularity analysis, biological networks elucidation, or disease sub-type identification of gene-drug association, among others [4,5,6,7,8,9].

The main objective of Biclustering is to extract local patterns from input datasets, which is known to be an NP-hard problem. In particular, Biclustering techniques find groups of genes that share a common behaviour under a certain group of experimental conditions (biclusters) [10]. However, they currently present two main issues: the computational performance when processing large input gene expression datasets and the need to validate the huge number of generated biclusters [11].

In recent years, Biclustering-based studies have focused on processing large datasets in a reasonable time and in a scalable way [12,13]. This situation may well be considered a Big Data context. To address it, High Performance Computing (HPC) technologies have been used in the literature [14]. HPC provides hardware and software resources to create parallel and distributed computing strategies, such as Apache Hadoop, Apache Spark and Graphics Processing Units (GPUs), among others. Apache Hadoop [15] uses its distributed file system (HDFS) and the MapReduce [16] paradigm to process huge amounts of data. For example, Liao et al. [17] developed a Hadoop version of the Non-Negative Factorization Biclustering algorithm, able to process matrices with a size of up to millions of rows by a million columns. Regarding Apache Spark [18], the difference between it and Hadoop is that the former does not distribute data; it only operates on distributed data collections. Apache Spark is used in the work of Sarazin et al. [19], in which a cluster of 10 machines is used to obtain biclusters from four synthetic datasets with a dimension of up to two million rows and columns. Finally, GPUs and the CUDA [20] environment are also used by recent Biclustering works to accelerate their computational performance [21,22,23,24]. As an example, in the work of Liu et al. [25], the Geometric Biclustering Algorithm (GBC) is adapted to GPU technology. During experimentation, GBC was able to process five large gene expression datasets, generating a maximum of 8,280,536 biclusters.

All of the aforementioned efforts to process large gene expression datasets have a direct consequence: the number of biclusters generated becomes huge [25,26]. Additionally, applying a Biclustering technique to this kind of dataset does not imply that all of its results are biologically relevant, since their quality is subject to a subsequent validation process. Biclusters are usually validated by statistical methods or by biological knowledge stored in public databases [27]. For example, one of the most used statistical measures to evaluate biclusters is the Mean Squared Residue (MSR) [28], while gene enrichment analysis based on the knowledge stored in the Gene Ontology (GO) database [29] is the most used biological validation method. In recent years, Biclustering evaluation measures and tools have focused on improving the accuracy of the results and on developing intuitive and accessible web user interfaces. Thus, they did not consider evaluating many biclusters at once. Tools like gProfiler [30], Enrichr [31], UBiT2 [32], or WebGestalt [33] are some examples. The only exception is BiGO [34], which is able to analyze and compare more than one gene list at a time, although it has not been designed to scale to a very high number of results. The lack of validation methods able to process a large number of biclusters forces some of the latest Biclustering approaches to limit their results. For example, in the work of Orzechowski et al. [35], the number of biclusters generated by EBIC, an evolutionary multi-GPU Biclustering algorithm, was limited to 100. Thus, the authors were able to use the R package GOStats [36], which works with a single gene list, to validate the biclusters biologically.

This work presents gMSR, a multi-GPU version of the MSR bicluster validation measure. This version makes it possible to validate a huge number of biclusters in a reasonable time and, as a result, to return a short, ordered list of the best validated biclusters. The high degree of parallelism in the calculation of the MSR measure and the use of GPU devices in current Biclustering techniques are the main reasons why gMSR also uses this technology as its acceleration method. As far as we know, gMSR is the first method that exploits the computational capabilities of GPU devices to accelerate the validation of a large number of biclusters. Only the work of Gomez-Pulido et al. [37] had previously computed the MSR value in parallel. The main difference is that those authors used a hardware implementation based on Field Programmable Gate Array (FPGA) technology. Additionally, gMSR is able to distribute the processing tasks equally among multiple GPU devices (multi-GPU), improving its performance and scalability. The results show that gMSR performs the validation task efficiently as the number of biclusters and their size increase. The gMSR source code is publicly available at https://github.com/aureliolfdez/gmsr.

In summary, this work provides some novel features, such as the ability to validate a huge number of biclusters very quickly and without memory issues, due to the use of all available GPU resources. Additionally, gMSR has been designed to use more than one GPU device, thus increasing its processing capacity. Besides, gMSR is independent of the Biclustering algorithm that generated the results. Because of this, it can easily be used as an evaluation module in Biclustering algorithms that are implemented in CUDA C/C++.

The rest of this article is organized as follows. Section 2 details the methodology of our multi-GPU method and the datasets used in the experimentation. gMSR runtime performance is evaluated and compared in Section 3. Finally, Section 4 summarizes the main conclusions derived from this work.

2. Materials and Methods

The main objective of gMSR implementation is to take advantage of the computational resources of the GPU devices to accelerate the evaluation of a large number of biclusters. In this section, the MSR measure is analyzed from the parallelization point of view. Subsequently, the implementation of the novel gMSR based on the different levels of the MSR parallelization is detailed. Finally, the datasets and the experimental design used in the present work are described.

2.1. Parallelization of the MSR Measure

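In the MSR measure [28], the residue, $R$, is used to evaluate the coherence of an element of a bicluster with respect to the rest of the elements of the bicluster. The residue $R$ of an element $b_{ij}$ of the bicluster $B = (I, J)$, composed of a set of rows $I$ and a set of columns $J$, is:

$$R(b_{ij}) = b_{ij} - b_{iJ} - b_{Ij} + b_{IJ} \tag{1}$$

where $b_{ij}$ is the expression level of the gene $i$ under the experimental condition $j$, $b_{iJ}$ the mean of the $i$-th row of a bicluster, $b_{Ij}$ the mean of the $j$-th column, and $b_{IJ}$ is the mean of all elements in the bicluster. The means are calculated by:

$$b_{iJ} = \frac{1}{|J|}\sum_{j \in J} b_{ij}, \qquad b_{Ij} = \frac{1}{|I|}\sum_{i \in I} b_{ij}, \qquad b_{IJ} = \frac{1}{|I|\,|J|}\sum_{i \in I,\, j \in J} b_{ij} \tag{2}$$

Finally, the MSR value of a bicluster $B = (I, J)$ is the sum of the squared residues of all the elements that the bicluster contains, divided by the number of elements:

$$MSR(B) = \frac{1}{|I|\,|J|}\sum_{i \in I,\, j \in J} R(b_{ij})^{2} \tag{3}$$

A bicluster is a $\delta$-bicluster if $MSR(B) \le \delta$ for some given threshold $\delta \ge 0$. The coherence of a $\delta$-bicluster improves as its MSR value gets closer to 0. Equation (3) can also be used to measure cluster error in general systems [38].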

The mean squared residue (MSR) can be parallelized at several levels:

High level: the calculation of the MSR value, $MSR(B)$, of every bicluster $B = (I, J)$ is an independent task (see Equation (3)).

Medium level: for each bicluster, $B$, the residue, $R(b_{ij})$, of every element $b_{ij}$ can be processed independently (see Equation (1)).

Low level: for each residue $R(b_{ij})$, every mean (see Equation (2)) can be calculated in an independent way.

As can be observed, the degree of parallelization of the MSR measure makes it very suitable to be implemented using GPU technology. In the next section, the way these independent tasks are distributed among the GPU resources in an efficient way will be explained.
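As a point of reference for the three levels above, the following is a minimal sequential sketch in Python/NumPy, not the CUDA implementation described in this work. It assumes the standard definition of the measure (residue = element minus row mean minus column mean plus bicluster mean, MSR = mean of the squared residues); the function name and the toy matrix are hypothetical.

```python
import numpy as np

def mean_squared_residue(data, rows, cols):
    """Sequential reference for the MSR of the bicluster (rows, cols) of `data`."""
    sub = data[np.ix_(rows, cols)].astype(float)   # bicluster sub-matrix
    row_means = sub.mean(axis=1, keepdims=True)    # low level: one mean per row
    col_means = sub.mean(axis=0, keepdims=True)    # low level: one mean per column
    total_mean = sub.mean()                        # low level: mean of all elements
    residues = sub - row_means - col_means + total_mean   # medium level: one residue per element
    return float(np.mean(residues ** 2))           # high level: one MSR per bicluster

# Toy matrix (hypothetical values): on columns 0-2, rows 0-2 differ only by a constant shift
data = np.array([[1.0, 2.0, 3.0, 9.0],
                 [2.0, 3.0, 4.0, 1.0],
                 [5.0, 6.0, 7.0, 4.0],
                 [0.0, 8.0, 1.0, 6.0]])
coherent = mean_squared_residue(data, [0, 1, 2], [0, 1, 2])   # numerically zero
noisy = mean_squared_residue(data, [0, 1, 3], [0, 1, 3])      # clearly positive
```

Each call is independent of the others, each residue depends only on the three kinds of means, and each mean depends only on the sub-matrix, which is what makes the three levels separable.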

2.2. The gMSR Algorithm

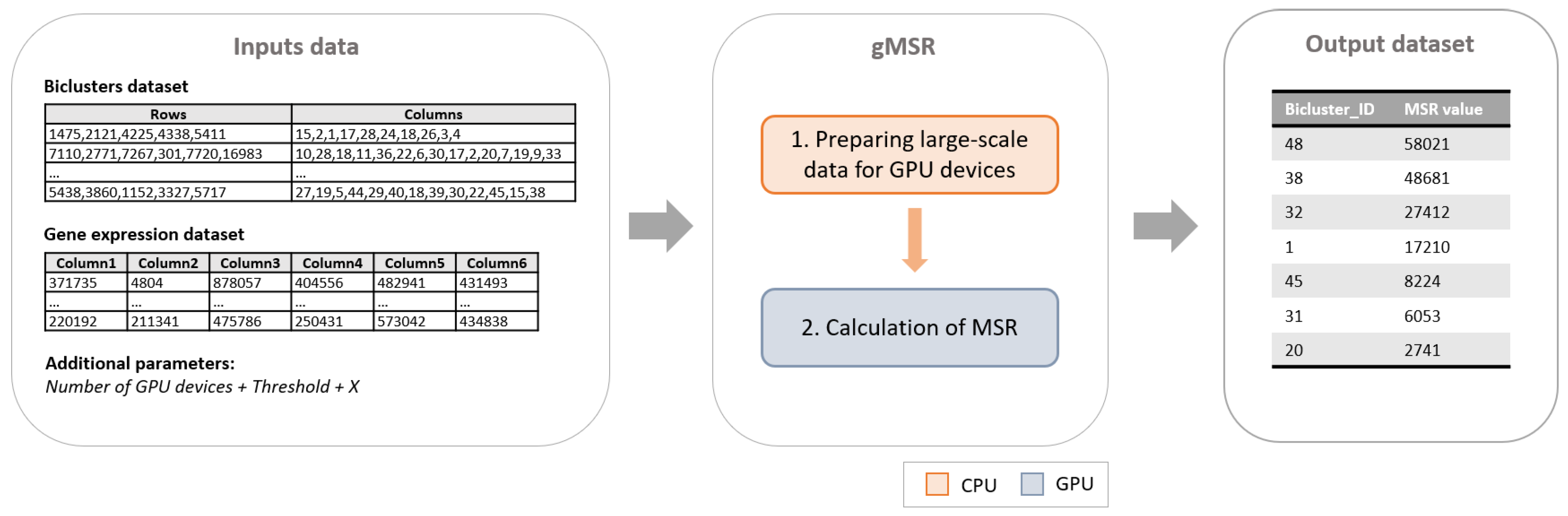

gMSR is a CUDA implementation of the MSR measure organized in two main phases (see Figure 1). The first phase, which is executed on the CPU, is responsible for the input data distribution. The second phase calculates the MSR value of each bicluster on the GPU.

The input of this implementation is composed of a gene expression dataset, a set of biclusters extracted from it, the number of best biclusters to be returned by gMSR, the number of GPU devices to use, and an MSR value threshold. As output, gMSR generates an ordered list with the best biclusters according to their MSR value. The following subsections address the characteristics of each phase of gMSR.

2.2.1. Phase 1: Preparing Large-Scale Data for GPU Devices

Implementing an algorithm on the CUDA architecture poses important challenges. One of them is the proper use of the GPU’s memory. In a GPU device, there are two types of memory: shared memory (lower latency and lower storage capacity) and global memory (higher latency and higher storage capacity). To process large input datasets without memory issues, it is necessary to select the appropriate type of memory in each case. In this work, the GPU global memory stores the input data, while the GPU shared memory is used to store partial results.

Because the input data are initially stored in CPU memory, this first phase handles the transfer of data from CPU to GPU. For this reason, this is the only phase executed on the CPU. Thus, the input data are distributed, as follows:

This input data distribution allows gMSR to process large input datasets without any memory overflow in the GPU devices, while minimizing the number of data transfers with the CPU memory.
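The exact distribution scheme is not reproduced here. As a rough illustration only, an even split of the input biclusters among the available devices could look like the Python sketch below, which assumes contiguous chunks per device (an assumption, not necessarily what gMSR does); the function name is hypothetical.

```python
def partition_biclusters(num_biclusters, num_devices):
    """Split bicluster indices into contiguous, near-equal chunks, one per device."""
    base, extra = divmod(num_biclusters, num_devices)
    chunks, start = [], 0
    for device in range(num_devices):
        size = base + (1 if device < extra else 0)   # the first `extra` devices take one more
        chunks.append(range(start, start + size))
        start += size
    return chunks

chunks = partition_biclusters(10, 4)
sizes = [len(c) for c in chunks]   # [3, 3, 2, 2]
```

With this kind of split, no device receives more than one bicluster more than any other, so the work per device stays balanced when all biclusters have a similar size.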

2.2.2. Phase 2: Calculation of MSR on GPU Devices

As discussed in Section 2.1, the MSR measure can be parallelized at several levels. A GPU device runs a large number of threads that are grouped into CUDA blocks. Every CUDA block is responsible for processing the MSR of a single bicluster.

Figure 3 shows how the execution threads of a CUDA block process three independent tasks during the MSR calculation:

Calculation of the means of a bicluster.

Calculation of the residues of each element of a bicluster.

Calculation of the MSR value of a bicluster.

The first task corresponds to the low level of parallelization of the MSR (see Section 2.1). Thus, a CUDA block is responsible for calculating all of the means associated with a bicluster. Each thread of a CUDA block calculates the average of a row and a column of a bicluster. However, a bicluster can have more rows and/or columns than threads (generally 1024 threads per block). In these cases, gMSR distributes groups of rows and columns among the threads of a CUDA block in a balanced way. The number of rows and columns and the results of the means calculation are stored in the GPU shared memory. Thus, these values are available to all of the CUDA threads in a block during the whole MSR calculation process.
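One common way to balance rows over a fixed number of threads is a block-stride assignment, in which thread t handles rows t, t + T, t + 2T, and so on, for T threads per block. The Python sketch below simulates that assignment; it is an assumption used for illustration, since the text only states that the groups are balanced, and the function name is hypothetical.

```python
def rows_for_thread(thread_id, num_rows, threads_per_block=1024):
    """Rows handled by one thread under a block-stride assignment."""
    return list(range(thread_id, num_rows, threads_per_block))

# A bicluster with 2500 rows processed by a block of 1024 threads
loads = [len(rows_for_thread(t, 2500)) for t in range(1024)]
first = rows_for_thread(0, 2500)   # [0, 1024, 2048]
```

Under this scheme every row is assigned exactly once and the number of rows per thread differs by at most one, which matches the balanced distribution described above.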

The medium level of parallelization of the MSR (see Section 2.1) corresponds to the second task, that is, the calculation of the residue of each element in a bicluster. The elements are distributed equally among the threads of the block, and each thread calculates the residue of one element at a time. The sum of all the residues of a bicluster is stored in the shared memory, since this value is used by the next task.

Finally, the last task corresponds to the highest level of parallelization of the MSR value (see Section 2.1). The final MSR value of a bicluster is calculated in a CUDA block simultaneously with the processing of the residues. Once this process is finished, one of the threads of the CUDA block stores the MSR value of the bicluster in the GPU global memory. When all of the biclusters have been processed on the GPU devices, the MSR values are stored in CPU memory in the form of an ordered list. Biclusters with an MSR value greater than the threshold provided as an input parameter are removed from this list.
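The final filtering and ordering step can be sketched on the CPU side as follows. This is a minimal Python illustration under the assumption that lower MSR values rank first; the function name and the sample values are hypothetical.

```python
def rank_biclusters(msr_values, threshold, top_n):
    """Return (bicluster index, MSR) pairs with MSR <= threshold, best first."""
    kept = [(i, v) for i, v in enumerate(msr_values) if v <= threshold]
    kept.sort(key=lambda pair: pair[1])   # lower MSR means a more coherent bicluster
    return kept[:top_n]

ranking = rank_biclusters([0.8, 0.1, 2.5, 0.4], threshold=1.0, top_n=2)
# ranking == [(1, 0.1), (3, 0.4)]
```

Bicluster 2 is discarded because its value exceeds the threshold, and bicluster 0 passes the threshold but falls outside the two best.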

In summary, this implementation uses both the global and the shared memory of the GPU devices in all tasks. The global memory stores the input datasets and other data structures, such as arrays. The shared memory stores the partial results of each CUDA block, which improves the computational performance. Finally, gMSR makes the most of the resources of the GPU devices, since in every task the processing is distributed equally among all of the threads.

2.3. Datasets Description

Two different types of bicluster datasets are used in the experimental part of this work: those composed of synthetic biclusters and those composed of real biclusters. The synthetic biclusters are generated by randomly combining rows and columns from a gene expression matrix. The real ones are obtained by applying a Biclustering algorithm to a gene expression matrix. In this work, the BiBit Biclustering algorithm [39] has been used to generate real biclusters. This algorithm has been selected because it can generate a great number of results in a short time.

For each experiment, gMSR needs a gene expression dataset to calculate the MSR value of the biclusters generated from it. Hence, for the tests carried out with synthetic biclusters, the small cell lung cancer (SCLC) gene expression dataset is used. This dataset, provided by Sato et al. [40], is available in the NCBI database (accession number GDS4794). It comprises 65 samples and 54,675 genes, including 23 clinical SCLC samples and 42 normal tissue samples. Additionally, the human GTEx gene expression dataset obtained from [41] has been used in the tests based on real biclusters. This dataset, a floating-point matrix of 8555 × 5177, contains RNA sequencing data from 1641 postmortem samples across 43 tissues from 175 individuals.

The BiBit algorithm has been designed to process binary data, so the binarization of the input gene expression dataset is a mandatory step to generate the real bicluster datasets. Binary values 1 and 0 mean that a gene r is expressed or not expressed under an experimental condition c, respectively [24]. Although there are many strategies to create a binary version of a gene expression dataset [42], in this work one of the simplest statistical methods has been used: the mean of all expression values of every gene r is taken as its threshold. If an expression value of gene r is below the threshold, it is set to 0; otherwise, it is set to 1.
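The binarization rule just described can be written in a few lines. The following Python/NumPy sketch assumes genes are stored as rows of the matrix; the function name and the sample values are hypothetical.

```python
import numpy as np

def binarize_by_gene_mean(expression):
    """1 where a value is at or above its gene (row) mean, 0 where it is below."""
    thresholds = expression.mean(axis=1, keepdims=True)   # one threshold per gene
    return (expression >= thresholds).astype(np.uint8)

expr = np.array([[1.0, 5.0, 3.0],    # gene mean 3.0
                 [2.0, 2.0, 8.0]])   # gene mean 4.0
binary = binarize_by_gene_mean(expr)
# binary == [[0, 1, 1],
#            [0, 0, 1]]
```

A value exactly equal to the gene mean is mapped to 1, following the rule that only values under the threshold become 0.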

Once the gene expression matrix has been binarized, some input parameters are required to apply the BiBit algorithm. The input parameters mnr (minimum number of rows allowed in final biclusters) and mnc (minimum number of columns allowed in final biclusters) are set to 2, in order to generate the maximum number of biclusters and test the implementations under the least favorable conditions.

3. Results and Discussion

In this section, the computational performance and the scalability of the gMSR implementation are studied. A sequential version of the original MSR algorithm has been used to complete this study with a performance comparison. Only the calculation of the MSR for all biclusters has been taken into account when measuring the execution times, so the time required to store the results in external files is not considered. The GPU and the sequential versions of the MSR algorithm receive the same gene expression and bicluster datasets, and the output of both versions is the same for all experiments in this work.

Two different types of bicluster datasets have been analyzed: synthetic and real. The synthetic biclusters have been randomly generated from a gene expression dataset in order to control the number of biclusters and their size. The real bicluster datasets are obtained by applying gBiBit [43], a multi-GPU implementation of the BiBit [39] biclustering algorithm. The objective of both analyses is to compare the performance and the scalability of gMSR in its single-GPU and multi-GPU versions.

All of the experiments have been carried out on an Intel Xeon E5-2686 v4 (18 cores at 2.30 GHz) with 32 GB RAM and 8 NVIDIA K80 12 GB graphics cards, with 2496 CUDA cores each. gMSR has been compiled with CUDA 11 for compute capability (CC) 3.7. These versions depend on the type of NVIDIA graphics card installed in the system. The two implementations of the MSR under study are written in C/C++. Finally, the single-GPU and multi-GPU versions of gMSR have been applied to all the datasets.

3.1. Synthetic Biclusters Analysis

In this experiment, synthetic biclusters randomly generated from the small cell lung cancer (SCLC) gene expression dataset are used. The objective is to analyze how the performance and scalability of gMSR are affected by the number of biclusters processed and by their size. To do so, 27 synthetic bicluster datasets have been created. In twenty of them, the size of the biclusters is fixed (30 × 30), while the number of biclusters ranges from 50,000 to 1,000,000, with an increase of 50,000 for each dataset. In the other 7 datasets, the number of biclusters is fixed at 500,000, while the size of every bicluster varies from 5 × 5 to 50 × 50, with an increase of 5 × 5 for each dataset.

Next, the results of the two evaluations are presented: a performance comparison between gMSR and its sequential version, and a scalability study for multi-GPU environments.

3.1.1. Performance Evaluation

Figure 4 shows the results of the comparison between the sequential version of MSR and the single-GPU mode of gMSR. The graph on the left shows the behavior of both versions when the number of biclusters increases. As can be seen, the execution time of the sequential version grows linearly, while that of gMSR stays nearly constant at this scale. As can be observed in the execution times of Table A1 (see Appendix A), for the datasets with 50,000 and 1,000,000 biclusters, the sequential version takes 93.34 s and 3869.25 s, respectively, to evaluate the biclusters. For gMSR, the times registered for the same datasets are 0.75 s and 8.94 s.

In Figure 4, the graph on the right shows the execution times when the size of the biclusters increases. In this case, the sequential version shows an exponential behavior, reaching 40,395.9 s to process 500,000 biclusters of size 65 × 65. The gMSR trend is again nearly constant, as in the first test, and the same dataset is processed in 6.85 s. The execution times can be consulted in Table A2 in Appendix A.

It can be concluded that the execution time of the sequential version of MSR increases with the two variables tested: the number of biclusters and their size. In particular, its performance degrades most when the size of each bicluster increases. In contrast, gMSR is barely affected by the increase of either variable. Thus, gMSR is 493.89 times faster, on average, than the sequential version of MSR when the number of biclusters varies, with a standard deviation of 69.67. Additionally, gMSR is 1538.03 times faster, on average, when the size of the biclusters varies, with a standard deviation of 2007.78.

3.1.2. Scalability Evaluation

gMSR scalability has been analyzed using 1, 2, 4, and 8 GPU devices for each synthetic bicluster dataset. All of the execution times of gMSR are presented in Table A1 and Table A2 in Appendix A. Figure 5 depicts the scalability of gMSR by comparing the execution times of its multi-GPU mode.

In Figure 5, the graph on the left shows how scalability evolves when the number of biclusters increases. It can be observed that, with a low number of biclusters, the best execution times are registered when using a single GPU device. For a dataset with 50,000 biclusters, the results were obtained in 0.75 s in single-GPU mode, while the execution with 8 GPUs (multi-GPU mode) took 1.53 s. As the number of biclusters grows, the execution time of the single-GPU version increases, while the execution time with multiple GPUs tends to stabilize. For example, for 500,000 biclusters, gMSR takes 3.60 s with a single GPU and 2.87 s with 2 GPU devices. It is also worth mentioning that, when the number of biclusters is high, there is a greater difference in execution times between the multi-GPU and single-GPU modes. For example, for 1,000,000 biclusters, gMSR took 8.94 s in its single-GPU mode and 7.20 s with 4 GPU devices. Therefore, the scalability study shows that the benefit of using multiple GPU devices grows with the number of biclusters contained in a dataset.

The scalability behavior when the size of each bicluster increases is shown in the graph on the right of Figure 5. In this case, gMSR behaves similarly to the graph on the left. That is, if a dataset contains small biclusters, a single GPU performs better. However, as the size of the biclusters increases, using more than one GPU device becomes worthwhile. For example, when processing a dataset with 500,000 biclusters of size 65 × 65, gMSR needs 6.85 s in its single-GPU mode and 3.77 s with 4 GPU devices. That is, with several GPU devices the execution time is about 55% of the single-GPU time, a reduction of about 45%.
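The relation between the two timings quoted above can be checked with a short calculation. In the Python sketch below, the 55% figure is the multi-GPU time expressed as a fraction of the single-GPU time; the function name is hypothetical.

```python
def relative_time(single_gpu_s, multi_gpu_s):
    """Multi-GPU execution time as a fraction of the single-GPU time."""
    return multi_gpu_s / single_gpu_s

ratio = relative_time(6.85, 3.77)   # about 0.55 of the single-GPU time
reduction = 1.0 - ratio             # about 0.45, the share of time saved
```

The same calculation applies to any pair of single-GPU and multi-GPU timings reported for the same dataset.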

The tests carried out using synthetic bicluster datasets have verified that the computational performance and the scalability of gMSR behave very well when the number of biclusters or their size increases. Regarding the performance evaluation, it has been shown that gMSR is not negatively affected by these two variables thanks to the suitable use of GPU device resources, obtaining the validation results in a reasonable time. In the scalability evaluation, the tests show that, when the amount of data is not big enough, the data partition and the transfer of data between multiple GPU devices penalise the overall performance. However, as the volume of the datasets increases, the use of multiple GPU devices significantly improves the computational performance.

3.2. Real Biclusters Analysis

In this case, the objective is to test the usefulness of gMSR in a real case. To do so, 25 real bicluster datasets, generated from the GTEx gene expression dataset by the gBiBit biclustering algorithm, are used. For the first 24 datasets, the number of biclusters varies from 50,000 to 1,000,000, with an increase of 50,000 for each dataset. The last dataset contains a total of 17,331,328 biclusters, which are all the biclusters that the gBiBit algorithm is able to generate. In this experiment, the size of the biclusters generated by gBiBit cannot be controlled, due to the algorithm’s own methodology.

In the previous experiment (see Section 3.1), the maximum size of a bicluster was 65 × 65. However, in this case, the mean numbers of rows and columns of the biclusters are 1712 and 1726, respectively. This significant increase in the number of rows and columns caused the execution times of the sequential version of MSR to exceed four days, with continuous memory overflow problems. For this reason, the sequential version of MSR has not been included in this experiment.

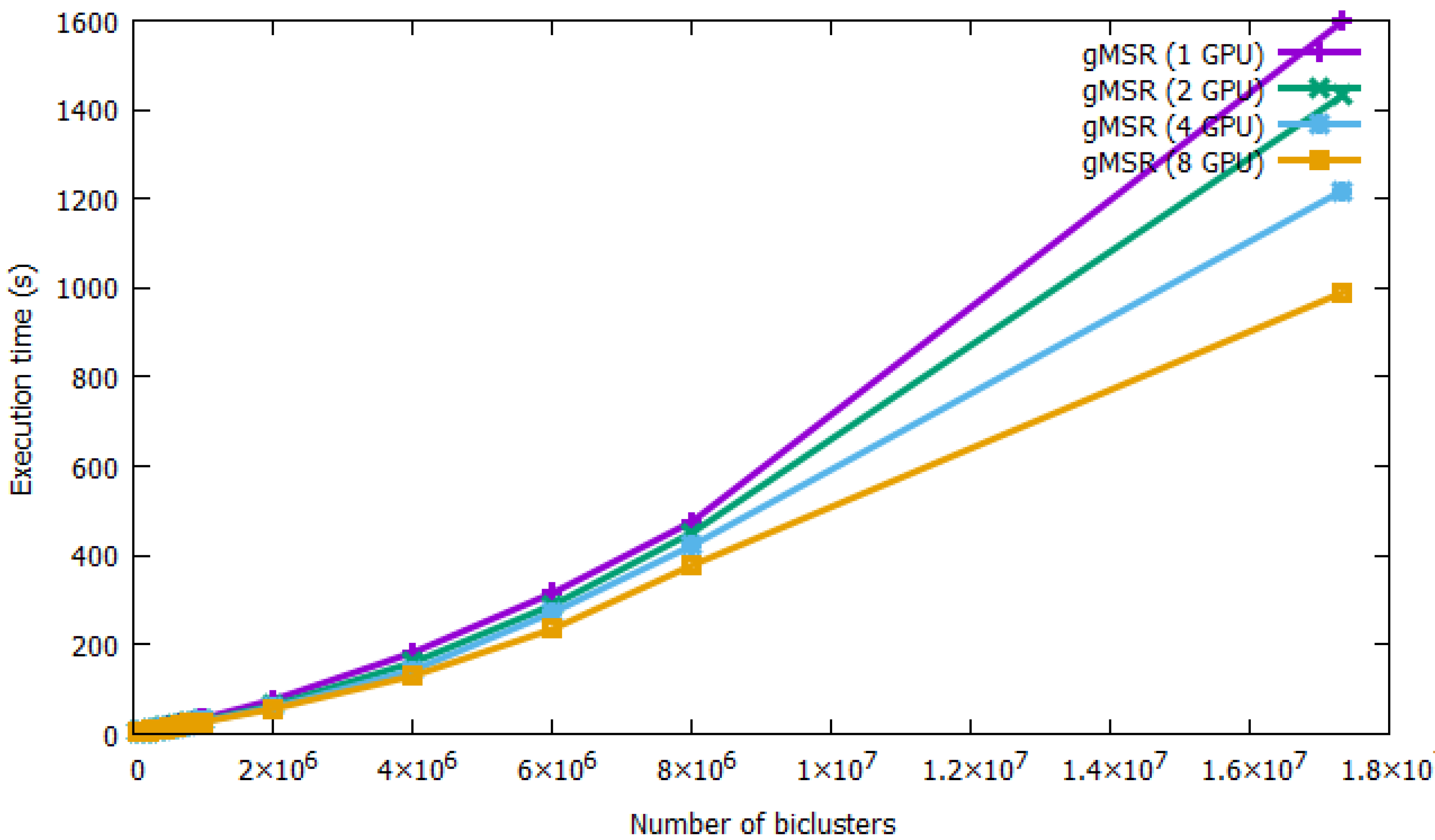

The performance and scalability of the single-GPU and multi-GPU modes are presented in Figure 6. All of the execution times of gMSR are collected in Table A3 in Appendix A. As can be observed, gMSR is able to process all datasets regardless of the number of biclusters. For the smallest dataset, 50,000 biclusters, gMSR took 6.55 s in its single-GPU version and 2.45 s with 2 GPU devices. That is, the multi-GPU execution needs about 37% of the single-GPU time. For the maximum number of biclusters generated, 17,331,328, gMSR took 1597.68 s in its single-GPU version, while the use of 8 GPU devices reduced the execution time to 989.87 s, that is, to about 62% of the single-GPU time. This implies that the multi-GPU version of gMSR is a useful tool for validating a large number of biclusters in a real experiment.

Besides, in order to help researchers focus on the best biclusters, gMSR can generate a final ranking of the best N biclusters according to their MSR value, as can be seen in Table 1.

In conclusion, there are two characteristics that negatively affect, to a greater or lesser extent, the performance of the MSR measure: the size and the number of biclusters in a dataset. Despite changes in these two variables, gMSR is able to validate the biclusters in a reasonable time in all cases. To do this, it incorporates an architecture that evenly distributes the input biclusters among the GPU devices in order to improve performance and scalability and to avoid memory overhead problems. In addition, the proper use of shared and global memory on GPU devices has helped to further minimize the time spent on data transfers.

4. Conclusions

Bicluster validation is currently facing new challenges due to the generation of large amounts of biological data. As gene expression databases get bigger, Biclustering algorithms generate a huge number of biclusters, and validating this massive number of biclusters is a great challenge. GPU technology and the CUDA architecture are among the most used options for obtaining results in a reasonable time for any parallelizable algorithm. In the case of bicluster validation, as far as we know, there is no other implementation of a bicluster validation measure that uses the computational resources of GPU devices to face the aforementioned challenge.

In this work, a new implementation of the MSR measure, called gMSR, is presented. gMSR is implemented in C/C++ in order to run in an environment with only one GPU device or in a multi-GPU system. gMSR is able to process a huge number of biclusters in a very short time and without memory overflow problems. To do this, it incorporates a methodology that takes full advantage of all the computational resources offered by GPU devices, allowing the distribution of processing tasks equally among multiple devices when it is executed in a multi-GPU system.

Two experiments have been carried out to test the performance of gMSR. The first experiment, in which synthetic bicluster datasets are used, showed that the increase in the number of biclusters and their size does not negatively affect the computational performance of gMSR. Besides, in a multi-GPU environment, gMSR also improves computational performance as the number of biclusters increases, showing very good scalability. The second experiment shows that gMSR is suitable for use in a real Biclustering-based study, being able to validate millions of biclusters very quickly and without running out of resources.

Finally, future work will aim to develop, using GPU technology, new methodologies to validate biclusters with biological information stored in public databases. To do so, all of the available resources of the Cloud will be used to access these databases and validate the biclusters in parallel.