Ransomware Detection System for Android Applications

Abstract

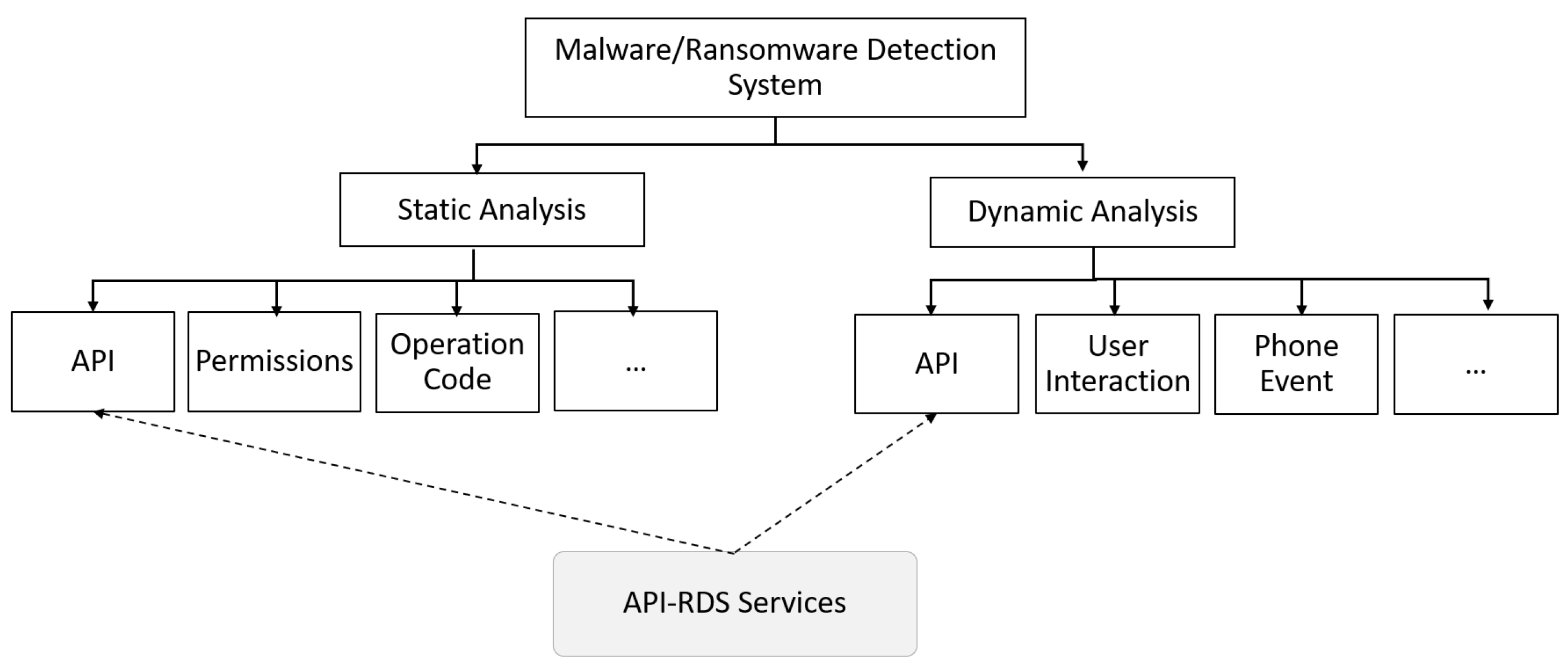

1. Introduction

- Propose an API-based ransomware detection system (API-RDS) that distinguishes ransomware apps from benign apps before the app runs, preventing the malicious executable from damaging the device.

- Provide high ransomware detection accuracy.

- Provide an efficient approach: it relies on static analysis, which needs no emulator or sandbox, and therefore reduces the cost of the deployment environment.

- Identify the API packages that most strongly influence the detection of Android ransomware.

- Create unique, labeled ransomware and benign datasets without repetitive samples.

- Reduce the complexity of the machine learning model in comparison with other state-of-the-art research work.

2. Background

- AndroidManifest.xml file: defines the capabilities of the application and informs the operating system about the application’s other components. All permissions, such as access to contacts and Bluetooth, are declared in this file.

- Dalvik executable or classes.dex file: all Java classes and methods in the application code are packed into a single file (classes.dex).

- Several .xml files: define the user interface of the application.

- Resources: include all external resources associated with the application (e.g., images).
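Because an APK is a ZIP archive, the components listed above can be inspected without running the app. The following is a minimal sketch, not part of the original system: the function names and the demo archive contents are hypothetical, and the grouping rule (file name and extension only) is a simplifying assumption.

```python
import io
import zipfile


def classify_apk_entries(apk_file):
    """Group the entries of an APK (a ZIP archive) by component type."""
    groups = {"manifest": [], "dex": [], "xml": [], "resources": []}
    with zipfile.ZipFile(apk_file) as apk:
        for name in apk.namelist():
            if name == "AndroidManifest.xml":
                groups["manifest"].append(name)
            elif name.endswith(".dex"):
                groups["dex"].append(name)
            elif name.endswith(".xml"):
                groups["xml"].append(name)
            else:
                # Everything else (images, assets, certificates) is treated as a resource.
                groups["resources"].append(name)
    return groups


def build_demo_apk():
    """Build a tiny in-memory archive that mimics an APK layout (hypothetical contents)."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as apk:
        apk.writestr("AndroidManifest.xml", "<manifest/>")
        apk.writestr("classes.dex", b"dex\n035\x00")
        apk.writestr("res/layout/main.xml", "<LinearLayout/>")
        apk.writestr("res/drawable/icon.png", b"\x89PNG")
    buffer.seek(0)
    return buffer


if __name__ == "__main__":
    for component, names in classify_apk_entries(build_demo_apk()).items():
        print(component, names)
```

Note that inside a real APK the manifest and XML files are stored in a binary form, so reading their contents still requires a decoding tool.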

2.1. API Calls

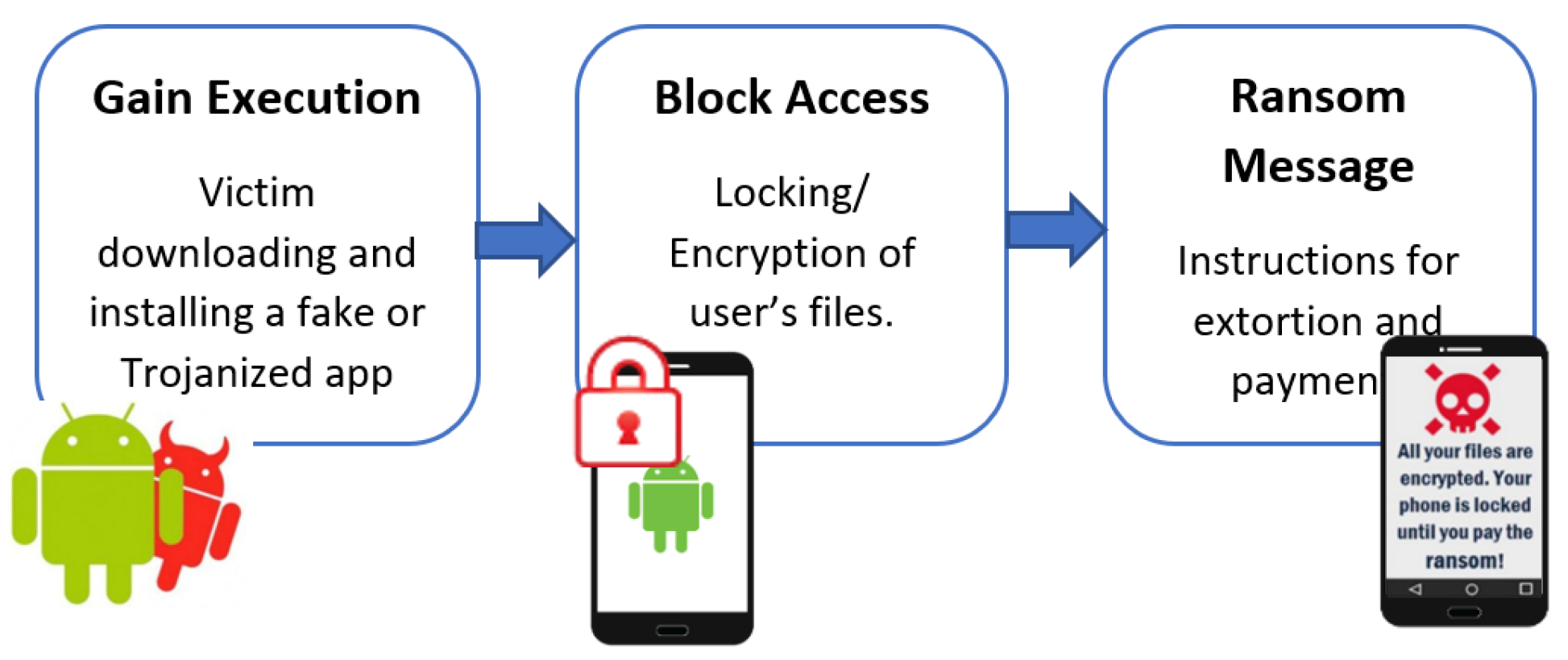

2.2. Ransomware

- Acquire admin privileges

- Detect running anti-viruses and block them

- Encrypt user’s files on the device

- Steal contacts

- Initiate the camera of the device and take photos

- Lock or unlock the device

- Mute the ringtone and notification sounds

- Display threatening text messages

2.3. Malicious Application Analysis

2.4. Machine Learning Algorithms

- is the total sample size

- the total sample that have and

- e is total number of sample where

- is priori estimator for

3. Related Work

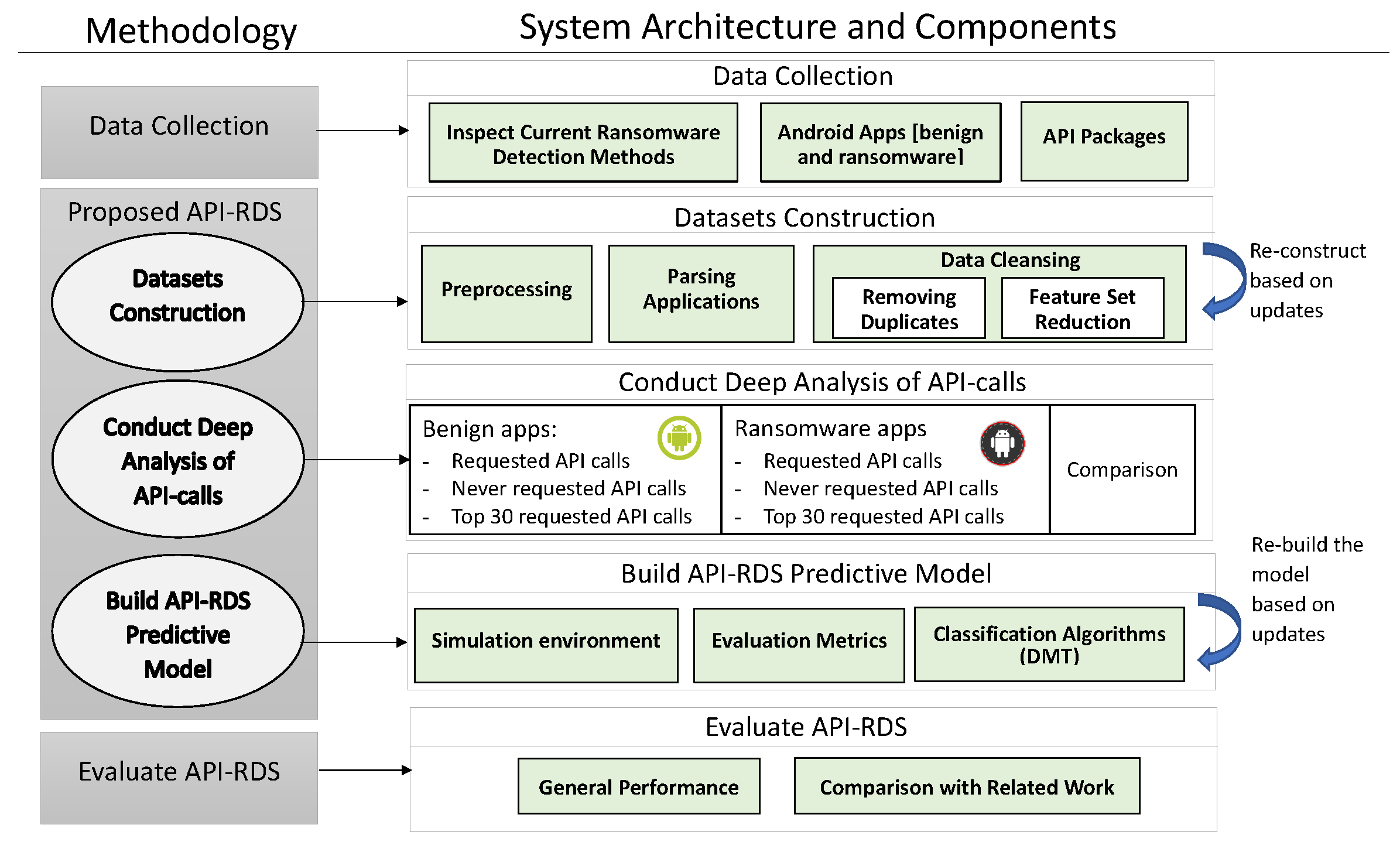

4. Methodology

- Inspect current ransomware detection methods

- Collect new Android apps (both benign and ransomware)

- Check the latest API calls released by the Android community

- Scan all API calls in the Android apps and calculate their frequencies

- Filter the collected API call values by removing duplicates and reducing the feature set

- Analyse the API calls as never requested, requested, or among the top 30 requested

- Analyse the used and the highly used API calls in both benign and ransomware apps from a security perspective

- Decide on the API calls that should be listed as features in the newly established datasets

- Construct both benign and ransomware datasets from the approved features and their values in all apps under study

- Examine the approved API calls by building predictive models with data mining techniques, then check the impact of the API calls on detecting ransomware apps and the efficiency of the models in terms of complexity and detection accuracy

- Approve the best model with the best performance to be the predictive model in the API-RDS

- Evaluate the API-RDS and compare its performance with recent related work

- Offer the services of API-RDS including ransomware detection system and the constructed datasets to users, researchers and developers
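The step that sorts API calls into never requested, requested and top 30 requested can be sketched as follows. This is an illustrative sketch only: it assumes the per-package totals over all apps are already available as a dictionary, and the function name and tie-breaking rule are hypothetical choices.

```python
def categorize_packages(totals, top_n=30):
    """Split API packages into never requested, requested, and top-N requested.

    `totals` maps an API package name to its total count over all apps.
    """
    never = sorted(pkg for pkg, count in totals.items() if count == 0)
    requested = sorted(pkg for pkg, count in totals.items() if count > 0)
    # Rank by total count, highest first; ties are broken alphabetically.
    ranked = sorted(requested, key=lambda pkg: (-totals[pkg], pkg))
    return {"never": never, "requested": requested, "top": ranked[:top_n]}


if __name__ == "__main__":
    demo_totals = {"android.app": 120, "android.os": 300, "android.nfc": 0}
    print(categorize_packages(demo_totals, top_n=2))
```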

5. Data Collection

5.1. Inspect Current Ransomware Detection Methods

5.2. Android Applications

- Dataset-R: includes 345 ransomware samples from HelDroid, 2258 from the RansomProper project, 694 from VirusTotal and 40 from Koodous, for a total of 3337 samples.

- Dataset-B: includes 519 samples from the Google Play Store.

5.3. API-Packages Calls

6. Proposed API-Based Ransomware Detection System (API-RDS)

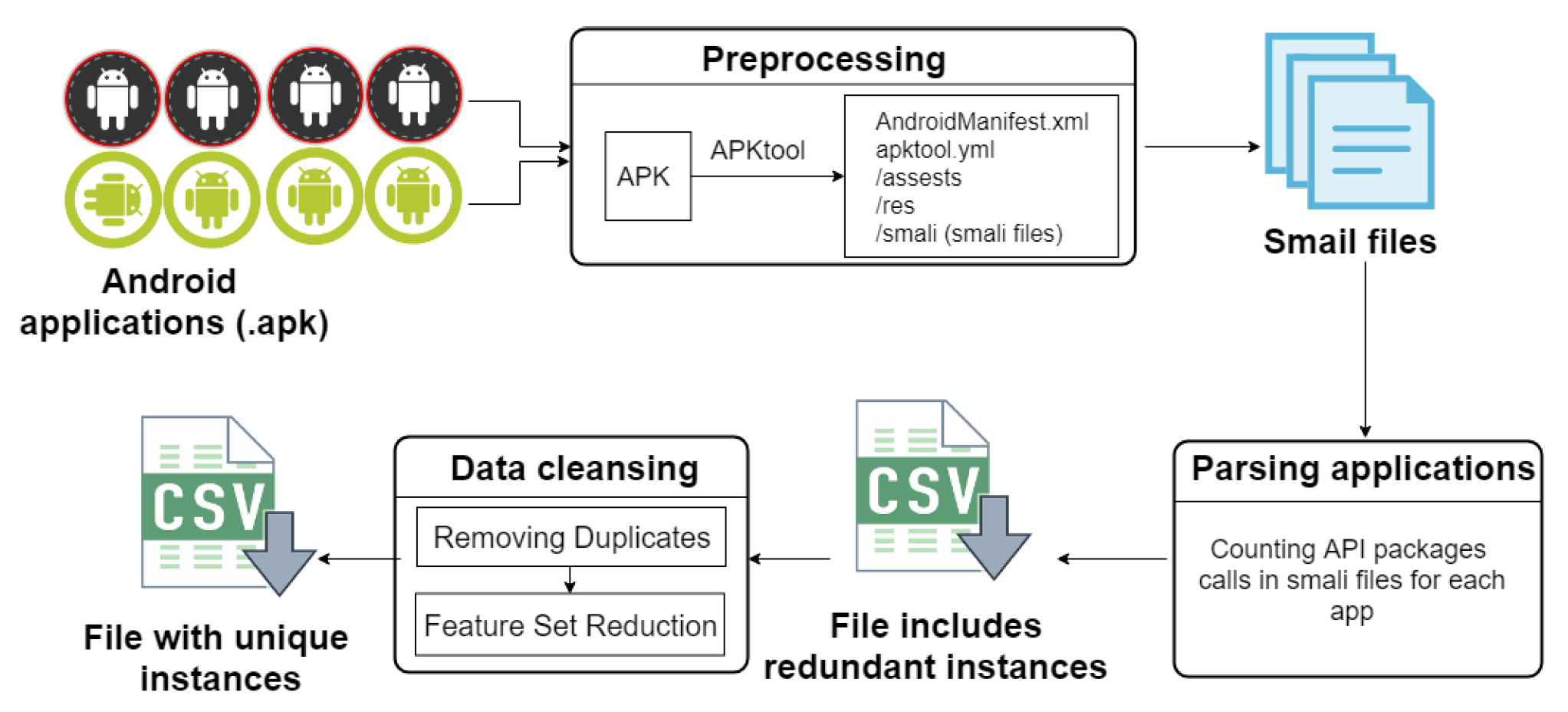

6.1. Datasets Construction

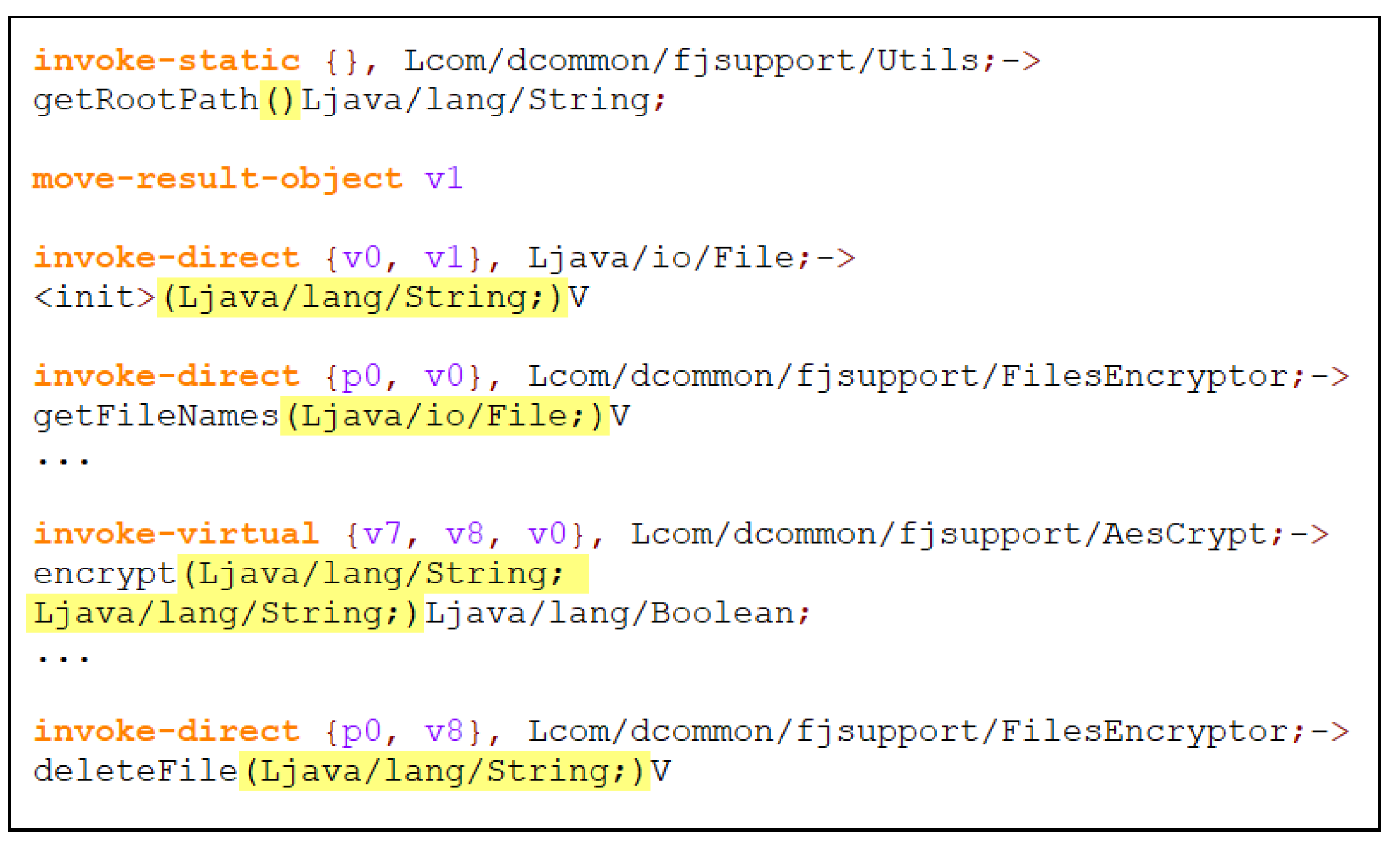

- Preprocessing/reverse engineering: All apps were downloaded as .apk files. An APK is a single zipped file that holds all of the application’s code (.dex files), resources, assets, certificates and manifest file. The APK therefore has to be decompiled to discover the behavior of an Android application and reveal its components, structure and methods. Apktool was used to decompile all .apk files. This step is called reverse engineering, and the output of Apktool is a collection of smali code files. Smali code is a human-readable, assembly-like representation of the app’s Dalvik bytecode, which we read using Notepad++. Apktool was obtained from its project website (https://ibotpeaches.github.io/Apktool/). In this paper, the terms reverse engineering and decompilation are used interchangeably. Many errors occurred while decompiling app samples with Apktool, especially for ransomware samples. In Dataset-R, as shown in Table 4, 2959 of the 3337 ransomware samples (the total from all sources) were decompiled successfully. Benign apps produced far fewer errors: 19 apps in Dataset-B failed to decompile. All corrupted apps were removed to avoid faulty output during the parsing phase that could affect the final results.
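The decompilation step can be scripted so that samples that fail to decode are set aside automatically. The sketch below is an assumption-laden illustration rather than the procedure actually used: it assumes the `apktool` command is on the PATH, uses its `d` (decode) command with the `-f` and `-o` options, and assumes a failed decode is signalled by a non-zero exit code. The function names and folder layout are hypothetical.

```python
import subprocess
from pathlib import Path


def apktool_command(apk_path, out_root):
    """Build the Apktool decode command for one APK; output goes to out_root/<apk name>."""
    apk_path = Path(apk_path)
    out_dir = Path(out_root) / apk_path.stem
    # "d" decodes the APK, "-f" overwrites an existing output folder, "-o" sets the output folder.
    return ["apktool", "d", "-f", str(apk_path), "-o", str(out_dir)]


def decompile_all(apk_paths, out_root):
    """Run Apktool on each APK and separate successful decodes from failed ones."""
    decoded, corrupted = [], []
    for apk in apk_paths:
        try:
            subprocess.run(apktool_command(apk, out_root), check=True, capture_output=True)
            decoded.append(apk)
        except subprocess.CalledProcessError:
            # Assumption: a non-zero exit code means the sample could not be decompiled.
            corrupted.append(apk)
    return decoded, corrupted
```

Keeping the list of corrupted samples makes it easy to report how many apps were dropped from each dataset before parsing.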

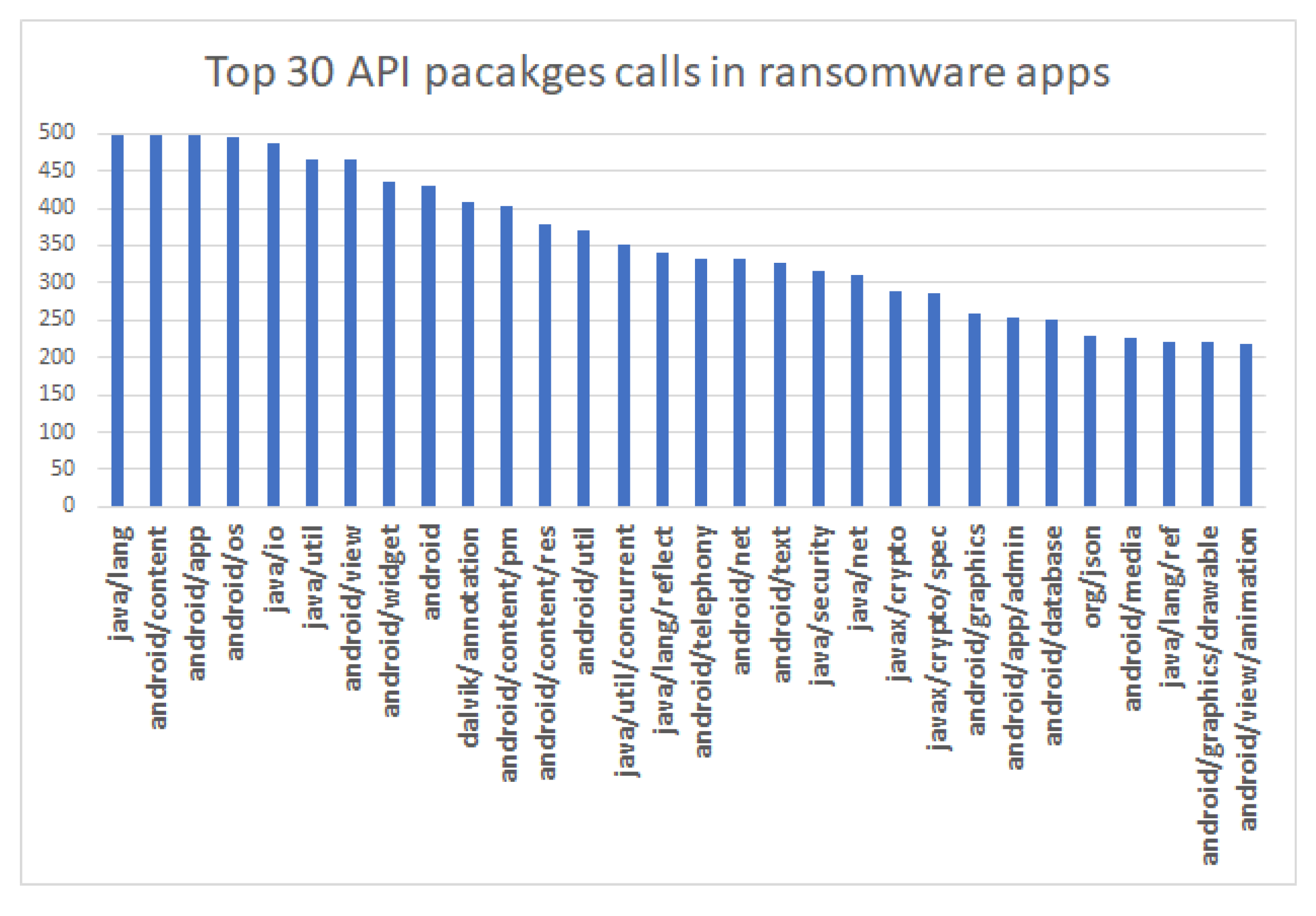

- Parsing applications/Feature extraction: Parsing consists of searching the smali files produced by Apktool for API package calls. The API packages searched for were identified beforehand in the data collection stage. We looked for exact matches of the API package name strings in each “.smali” file of each application and counted their occurrences. Algorithm 1 presents the pseudocode of the parsing phase. A crawling Python script was developed based on this algorithm to scan all applications’ smali files, count the occurrences of the set of API packages, and save the values in a “.csv” file. We followed the frequency feature extraction method, which counts the occurrences of a string term in a sequence (a smali file in our case) [57]. We counted the occurrences of the 199 API packages belonging to API 27, so initially there were 199 features in the dataset. Mathematically, we can define a finite set $S = \{x_1, x_2, \ldots, x_n\}$ to represent the API package frequencies of each application, where $x_i$ is the frequency of the $i$-th API package in that application [58].

| Algorithm 1 Count occurrences of API packages of one application |
| --- |
| Input: appFolder (decompiled APK application folder) |
| Output: Feature set (occurrences of each API call in smali files) |
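A minimal Python sketch of the parsing phase is given below. It is not the authors' crawling script: the function names are hypothetical, and it assumes that a package such as `android.app` appears in smali as a type descriptor of the form `Landroid/app/ClassName;`, so that classes in sub-packages (for example `android.app.admin`) are counted only under their own package.

```python
import csv
import re
from pathlib import Path


def package_pattern(package):
    """Regex for a smali type descriptor of a class directly inside `package`.

    Assumption: 'android.app' appears in smali as 'Landroid/app/ClassName;'.
    """
    prefix = "L" + package.replace(".", "/") + "/"
    return re.compile(re.escape(prefix) + r"[^/;\s]+;")


def count_api_packages(app_folder, packages):
    """Count occurrences of each API package over all .smali files of one app."""
    patterns = {pkg: package_pattern(pkg) for pkg in packages}
    counts = dict.fromkeys(packages, 0)
    for smali_file in Path(app_folder).rglob("*.smali"):
        text = smali_file.read_text(encoding="utf-8", errors="ignore")
        for pkg, pattern in patterns.items():
            counts[pkg] += len(pattern.findall(text))
    return counts


def write_feature_row(csv_path, app_id, counts, label):
    """Append one application's feature vector (id, counts, class label) to a CSV file."""
    with open(csv_path, "a", newline="") as handle:
        csv.writer(handle).writerow([app_id, *counts.values(), label])
```

Calling `count_api_packages` once per decompiled app folder and `write_feature_row` once per result yields one row per application, with one column per API package.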

- Data Cleansing: The data cleansing phase has two stages. The first produces a high-quality dataset with unique instances; the second reduces the feature set to speed up training and obtain more accurate results.

- Removing duplicates: A high-quality dataset is essential, and data duplication is one significant factor that degrades data quality and distorts data mining results. After scanning and counting the occurrences of each API package for feature extraction, we discovered that many samples had exactly the same API package call counts. This may indicate that they are the same app with different hashes; that is, multiple instances in the dataset refer to the same Android app (or to apps with the same functionality). This could result from malware polymorphism, a technique in which attackers make small changes to an application to obtain a different hash and so evade detection by signature-based anti-viruses [59]. Such apps eventually have the same API call feature set and therefore need to be removed from the dataset to avoid duplication [60]. One of the main contributions of this work is a clean ransomware dataset without duplicate apps. Removing duplicated data is necessary for accurate and consistent data, since redundant and irrelevant data have a negative impact on machine learning [61,62]. Therefore, we eliminated the repeated samples from the dataset. There were no identical instances among the benign apps; in contrast, many ransomware samples had duplicates. As a result, the number of instances in the ransomware dataset was significantly reduced. Specifically, Dataset-R contained 2959 ransomware samples after the preprocessing phase, but only 500 unique ransomware samples remained after removing duplicated instances, as shown in Table 4. In other words, about 83% of the ransomware samples were removed as duplicates.
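Duplicate removal by feature vector can be sketched in a few lines. This is a hedged illustration with hypothetical function names; it assumes each sample is an `(app_id, feature_vector)` pair and keeps the first sample seen for every distinct vector, which may differ from the rule the authors applied.

```python
def remove_duplicates(rows):
    """Keep the first sample for each distinct feature vector.

    `rows` is a list of (app_id, feature_vector) pairs; two samples are treated
    as duplicates when their API-package counts are identical.
    """
    seen = set()
    unique = []
    for app_id, features in rows:
        key = tuple(features)
        if key not in seen:
            seen.add(key)
            unique.append((app_id, features))
    return unique


def removed_fraction(before, after):
    """Fraction of samples dropped by deduplication."""
    return (before - after) / before


if __name__ == "__main__":
    # Counts reported for Dataset-R: 2959 decompiled samples, 500 unique ones.
    print(f"{removed_fraction(2959, 500):.1%}")
```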

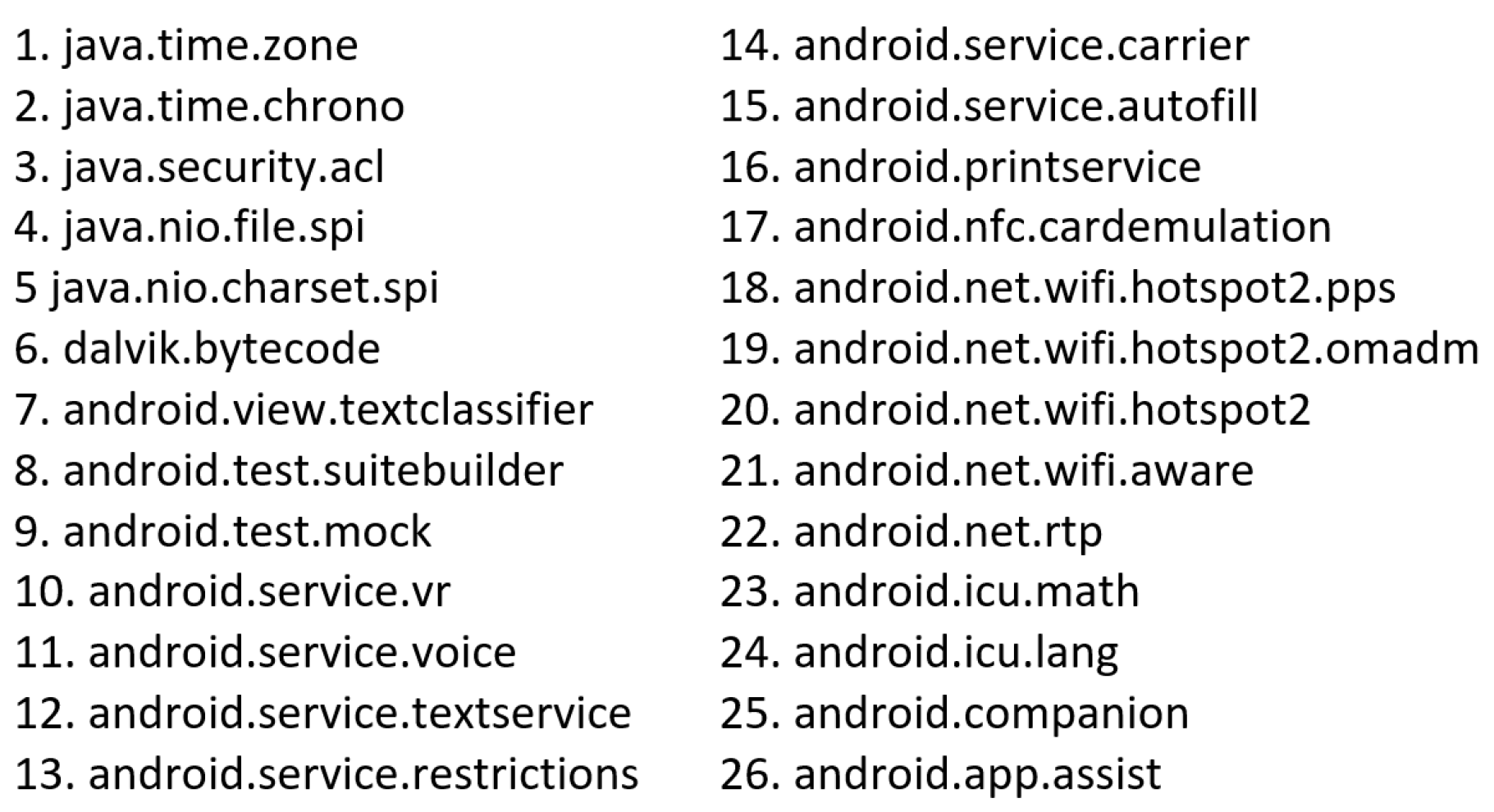

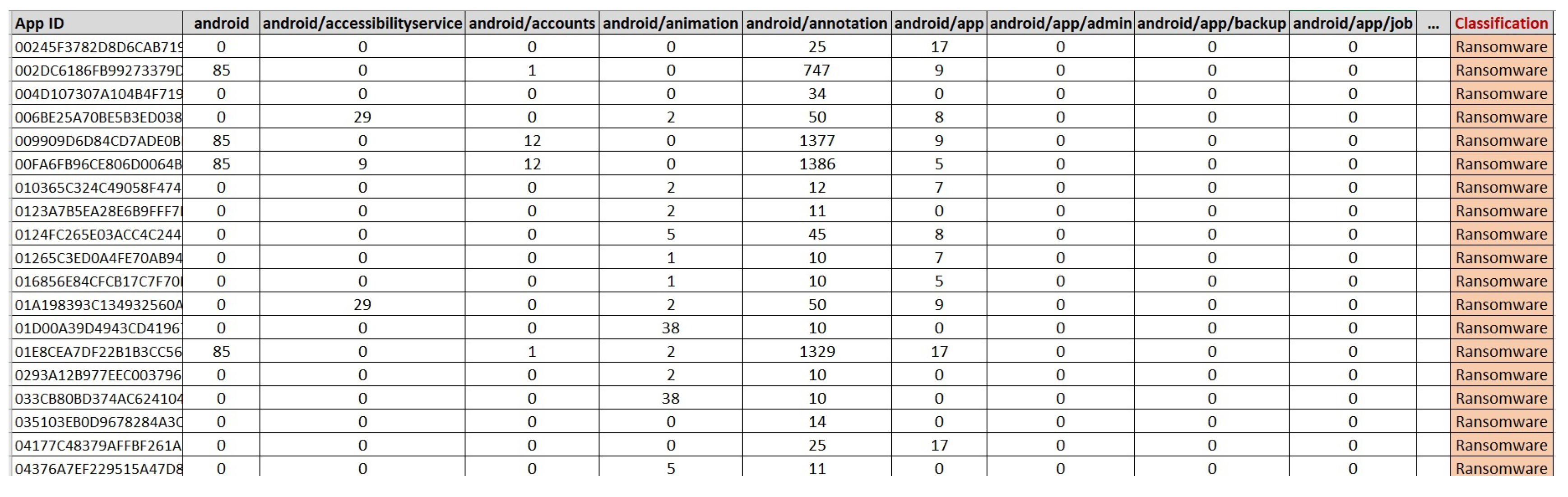

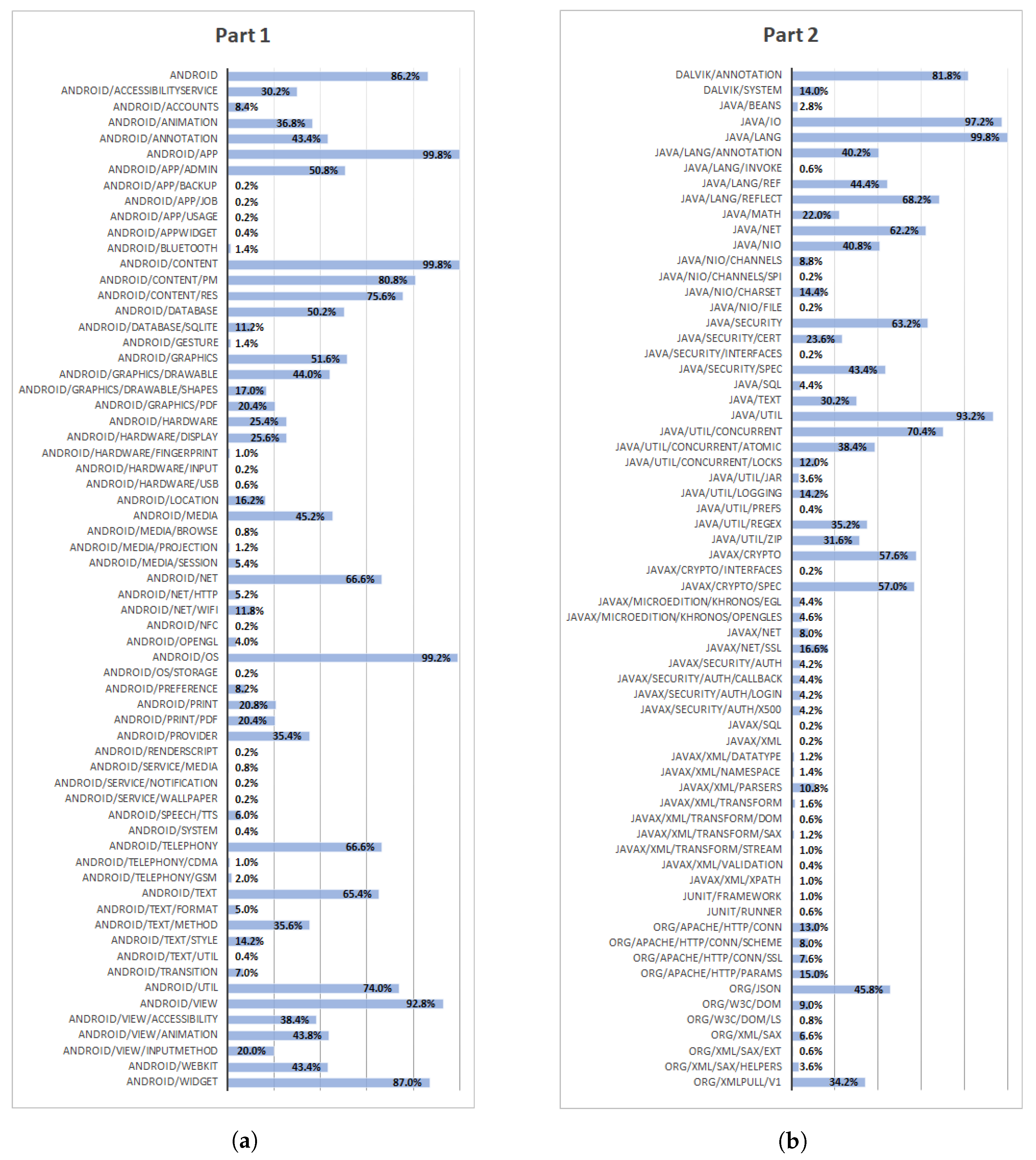

- Feature set reduction: Feature set reduction is concerned with irrelevant and redundant features. Features are generally classified as strongly relevant, weakly relevant or irrelevant [63]. Having fewer features reduces training time, whereas a large number of features may lead to a higher computational cost [64]. Reducing the feature vectors also improves prediction accuracy and the performance of the machine learning algorithm [65]. While counting the occurrences of API package calls in each Android application, we found that some API packages have zero occurrences in many applications, meaning that those apps did not request them. If the number of occurrences of an API package call is zero in all samples, that API package is deleted from the feature set. In our dataset, among the 199 API packages in the API 27 Android release, we found 26 that were never called in either benign or ransomware apps. Such packages are considered irrelevant features and can be removed [66], so we removed them from the feature set. Figure 5 shows the eliminated packages. After removing the irrelevant packages, 173 API packages remain. Figure 6 shows a snapshot of Dataset-R with its 173 features belonging to API 27, alongside the app’s identification and classification features.
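The rule for dropping API packages that are never called can be expressed directly. The sketch below is illustrative only; the function name and the list-of-lists data layout are assumptions.

```python
def drop_unused_features(feature_names, rows):
    """Remove features whose count is zero in every sample.

    `rows` holds one list of counts per app, in the same order as `feature_names`.
    """
    keep = [i for i in range(len(feature_names)) if any(row[i] != 0 for row in rows)]
    kept_names = [feature_names[i] for i in keep]
    kept_rows = [[row[i] for i in keep] for row in rows]
    return kept_names, kept_rows


if __name__ == "__main__":
    names = ["android.app", "android.nfc", "android.os"]
    samples = [[3, 0, 1], [0, 0, 2]]
    print(drop_unused_features(names, samples))
```

Applied to the counts described above, this rule would remove the 26 packages with no calls and leave 173 of the 199 features.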

- Android benign dataset: This dataset includes samples of more than 500 benign applications downloaded from different categories in the Google Play Store.

- Android ransomware datasets: One dataset contains the hashes of all 2959 tested ransomware applications. A second dataset contains the hashes of the 500 unique ransomware applications.

- API-packages calls dataset: This dataset provides the occurrences of API package calls (belonging to Android API 27) in 500 benign and 500 ransomware apps.

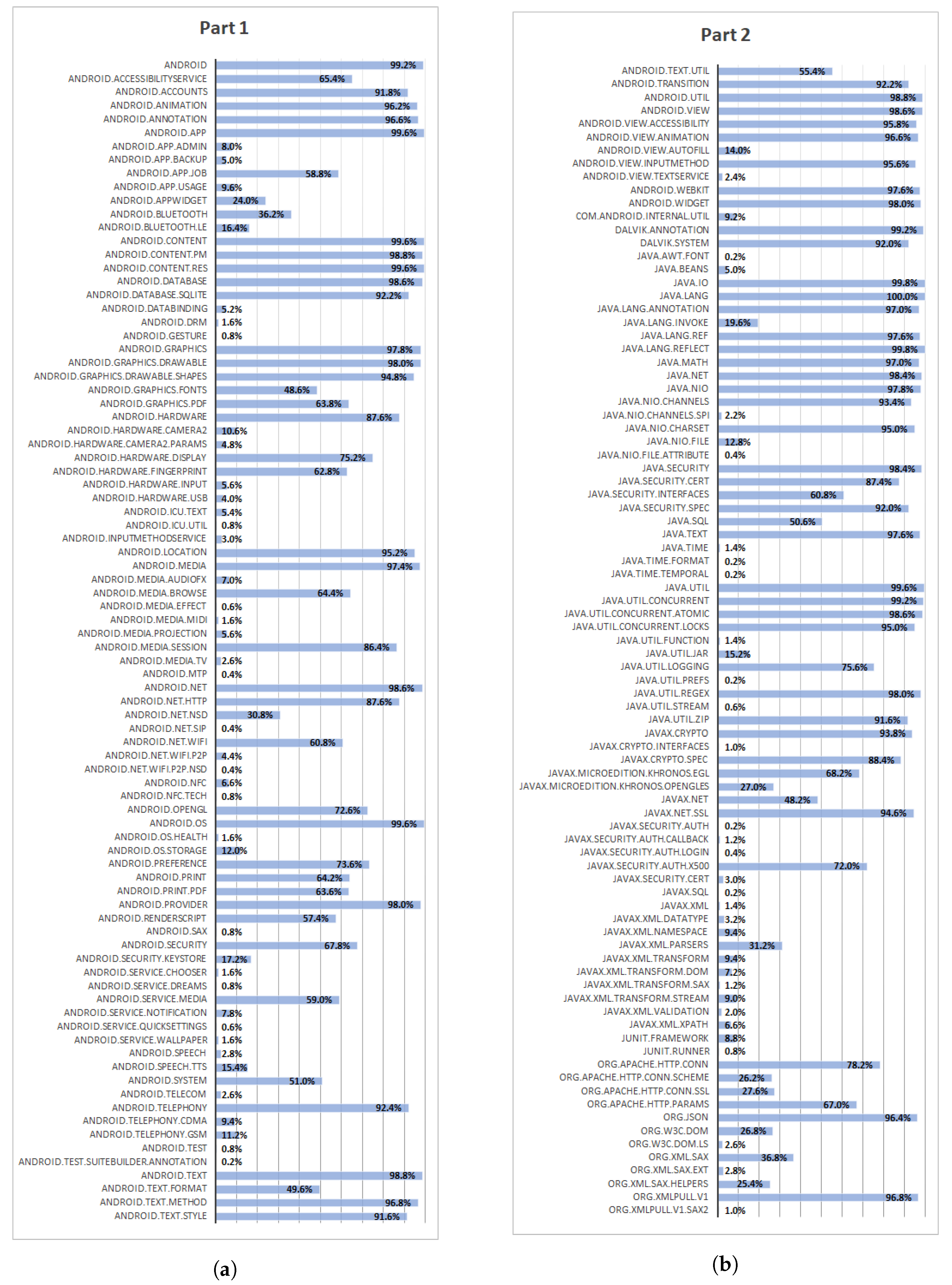

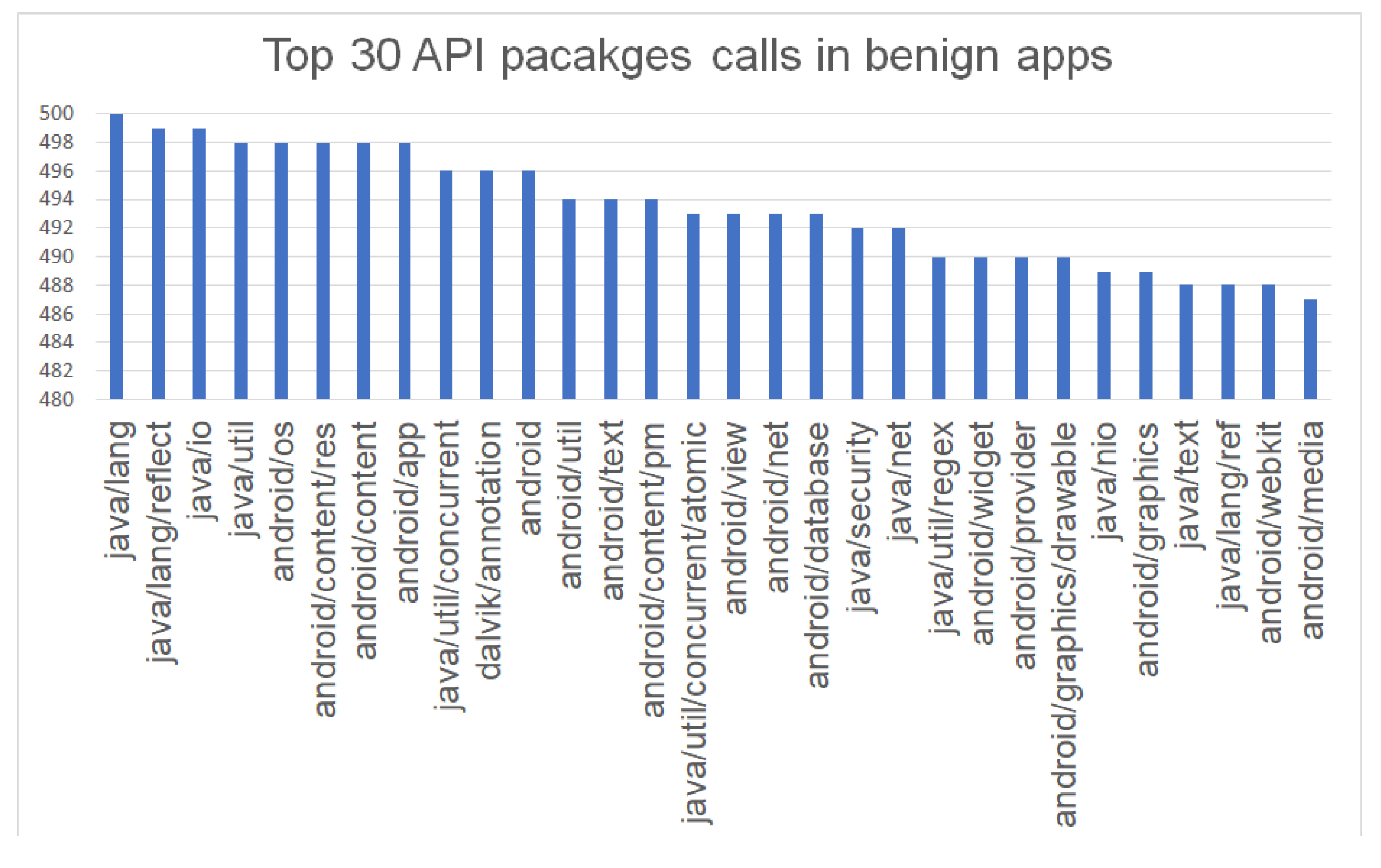

6.2. Conduct Deep Analysis of API Calls

6.2.1. Benign Applications

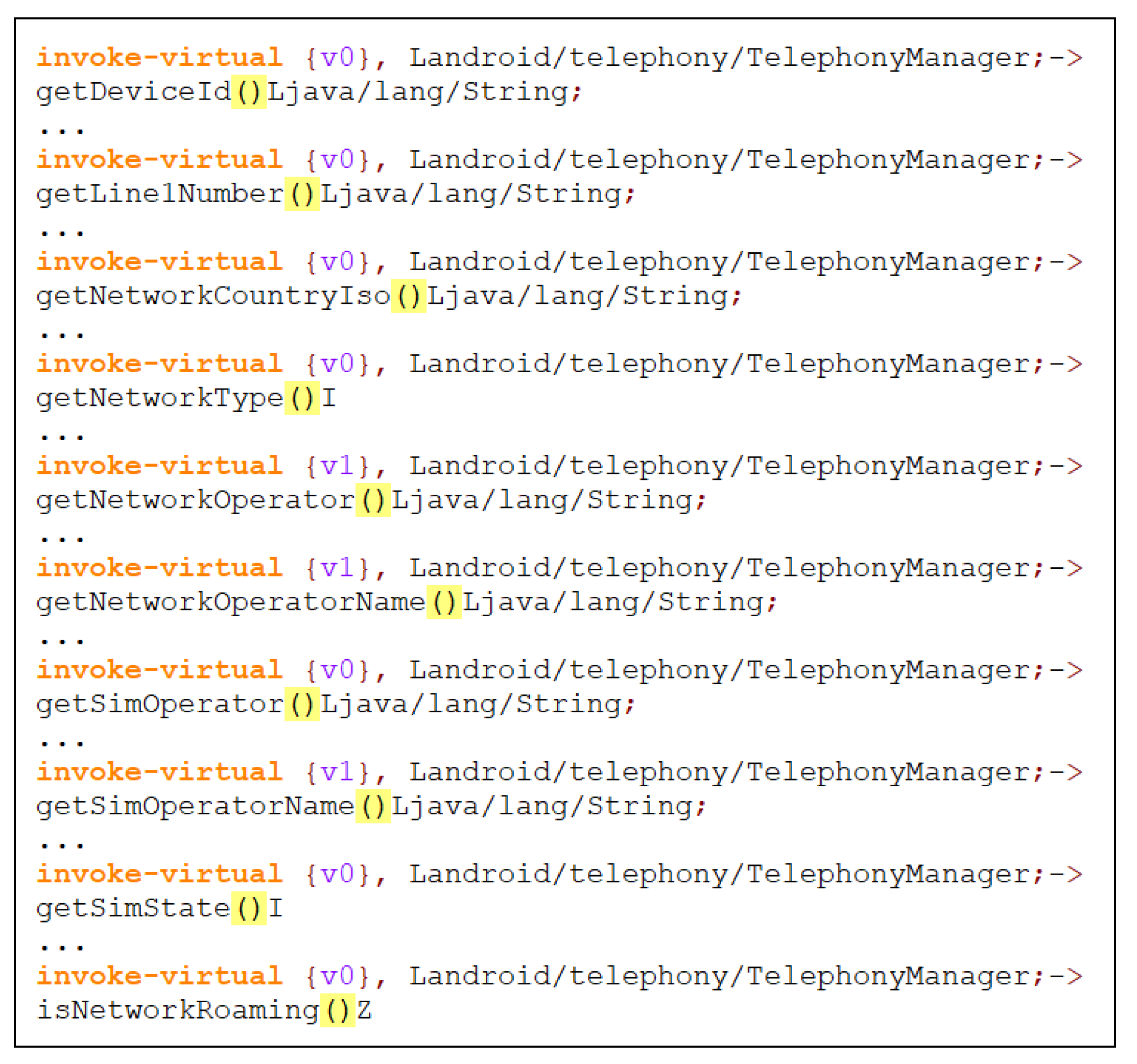

6.2.2. Ransomware Applications

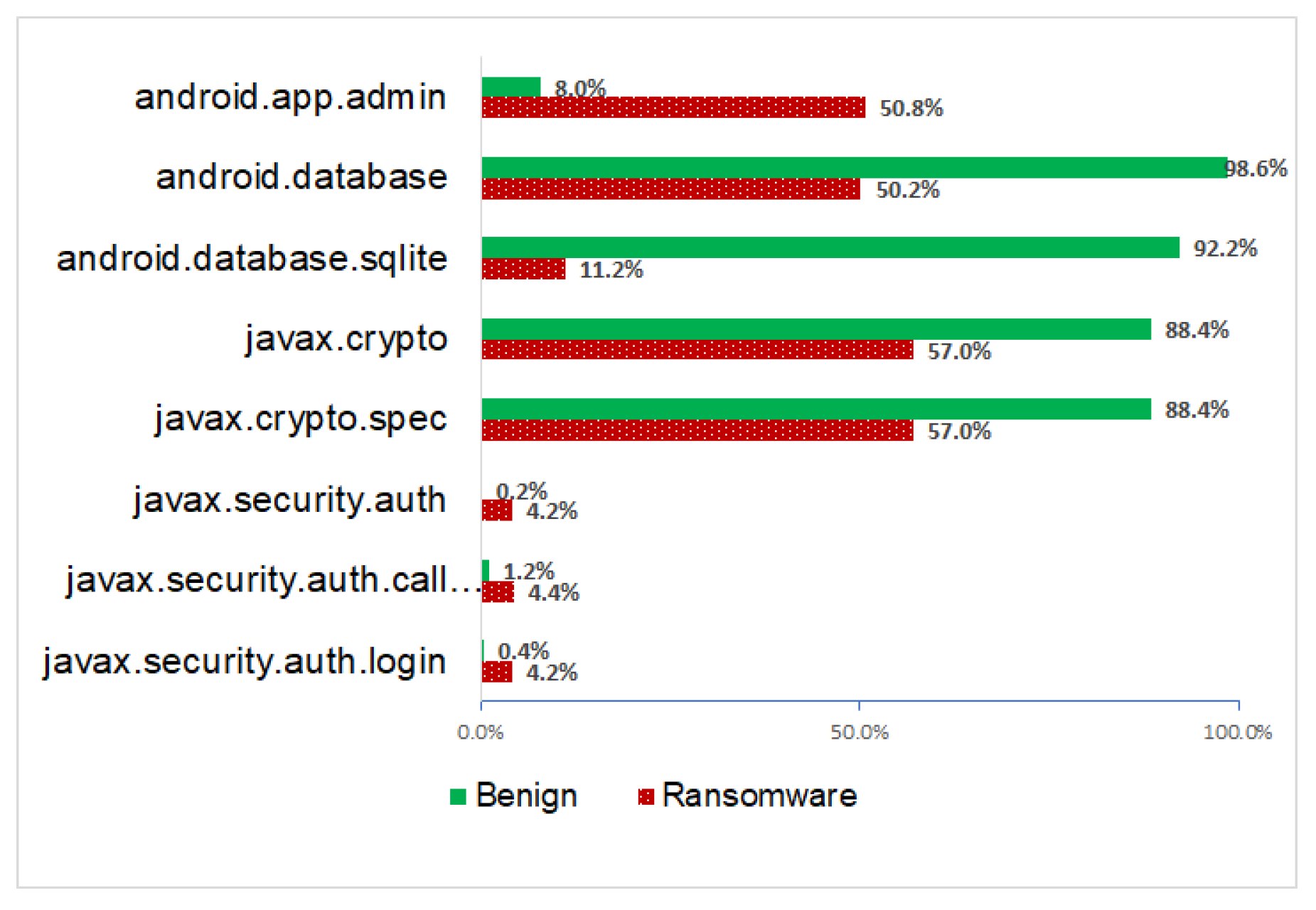

6.2.3. Comparison

- The DevicePolicyManager class provides lockNow(), a public method that locks the device immediately, as if the lock timeout had expired. It can therefore be used as a remote lock strategy. To call lockNow(), the app must declare the tag <force-lock/> in the <uses-policies> section of its meta-data. Moreover, an application with the <force-lock/> policy may exploit the built-in PIN screen to lock the device screen [75]. The force-lock tag was found in 28.8% of ransomware samples but in only two of the 500 benign samples (0.4%).

- The DevicePolicyManager class also provides wipeData(), a public method that clears all the data on the device. For this method to work, the app must have requested the wipe-data policy in its meta-data. This tag appeared in 24.2% of ransomware apps but in only one benign app (0.2%). That benign app, Phone Guard, is an app locker (app protector) against user threats. In Phone Guard, the policy tags <force-lock/> and <wipe-data/> could be used to protect users’ data when the device is lost. We may therefore assume that, in common cases, legitimate apps do not call wipeData() or declare the wipe-data tag in their meta-data.
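Checking a decoded app for these two policy tags is a small XML parsing task. The sketch below is an illustration under assumptions: it expects the text of a device-admin XML resource (after decoding, such a file is typically found among the app's XML resources), and the function names and sample XML are hypothetical.

```python
import xml.etree.ElementTree as ET

# Policy tags discussed above as indicators of lock or wipe capability.
SUSPICIOUS_POLICIES = {"force-lock", "wipe-data"}


def requested_policies(device_admin_xml):
    """Return the policy tags declared under <uses-policies> in a device-admin XML resource."""
    root = ET.fromstring(device_admin_xml)
    policies = set()
    for uses_policies in root.iter("uses-policies"):
        policies.update(child.tag for child in uses_policies)
    return policies


def suspicious_policies(device_admin_xml):
    """Return only the declared policies that allow locking or wiping the device."""
    return requested_policies(device_admin_xml) & SUSPICIOUS_POLICIES


if __name__ == "__main__":
    sample = """
    <device-admin xmlns:android="http://schemas.android.com/apk/res/android">
      <uses-policies>
        <force-lock />
        <wipe-data />
        <limit-password />
      </uses-policies>
    </device-admin>
    """
    print(sorted(suspicious_policies(sample)))
```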

6.3. Build API-RDS Predictive Model

- Simulation environment: Weka version 3.8 (https://www.cs.waikato.ac.nz/~ml/weka/) was used to evaluate the proposed API-RDS. Weka provides capabilities to train and evaluate classification models on a given feature set [76].

- Evaluation metrics: We used True Positive (TP), True Negative (TN), False Positive (FP) and False Negative (FN) counts to measure the performance of the machine learning model. True positives and true negatives are the ransomware and benign applications that are classified correctly. The true positive rate is calculated in Equation (8), where FN is the number of instances of a given class that are incorrectly classified as the other class. The FN rate is calculated in Equation (9).

$$TPR = \frac{TP}{TP + FN} \quad (8)$$

$$FNR = \frac{FN}{TP + FN} \quad (9)$$

False positives, on the other hand, are instances falsely classified as a given class. The false positive rate is measured in Equation (10), where TN is the number of instances correctly classified as not belonging to the given class. The TN rate is calculated in Equation (11).

$$FPR = \frac{FP}{FP + TN} \quad (10)$$

$$TNR = \frac{TN}{TN + FP} \quad (11)$$

Accuracy is the main measure used to evaluate the performance of API-RDS. It is the fraction of correctly predicted labels over all predicted labels, defined as follows:

$$Accuracy = \frac{TP + TN}{TP + TN + FP + FN} \quad (12)$$

A cost-sensitive analysis metric must also be included to evaluate classifier performance. The ROC (receiver operating characteristic) curve is a visualization tool that shows whether a binary classifier is appropriate by plotting the true positive rate against the false positive rate for every classification threshold. We measured the AUC (area under the ROC curve); a perfect classifier has an AUC of 1, so the closer the AUC is to 1, the higher the model’s predictive power.

Another metric considered is the Kappa statistic, which measures the agreement of the predictions with the actual class values. Kappa is calculated as seen in Equation (13),

$$\kappa = \frac{p_o - p_e}{1 - p_e} \quad (13)$$

where $p_o$ is the observed agreement between predicted and actual classes and $p_e$ is the agreement expected by chance. In particular, Kappa measures the reliability of classification decisions. The best case is denoted by 1, when the classification values (ransomware or benign) are in complete agreement, while 0, treated here as the worst case, corresponds to agreement no better than chance.

Model complexity was also one of the metrics used to evaluate the proposed API-RDS. Model complexity refers to the number of features included in the predictive model, which affects the size and the performance of the predictive model [77].
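The metrics described above can be computed from the four confusion-matrix counts. The following sketch uses the standard definitions of the rates, accuracy and Cohen's Kappa; the function name is hypothetical, and the example counts are the ones reported for Experiment 1 in Section 7.1 (490 ransomware and 480 benign apps classified correctly, 10 and 20 misclassified).

```python
def binary_metrics(tp, fn, fp, tn):
    """Compute rate metrics, accuracy and Cohen's Kappa from confusion-matrix counts."""
    total = tp + fn + fp + tn
    accuracy = (tp + tn) / total
    # Chance agreement: product of actual and predicted marginals, summed over both classes.
    p_e = ((tp + fn) * (tp + fp) + (fp + tn) * (fn + tn)) / total**2
    return {
        "tpr": tp / (tp + fn),
        "fnr": fn / (tp + fn),
        "fpr": fp / (fp + tn),
        "tnr": tn / (fp + tn),
        "accuracy": accuracy,
        "kappa": (accuracy - p_e) / (1 - p_e),
    }


if __name__ == "__main__":
    # Counts reported for Experiment 1, with ransomware as the positive class.
    for name, value in binary_metrics(tp=490, fn=10, fp=20, tn=480).items():
        print(f"{name}: {value:.3f}")
```

With these counts the sketch gives an accuracy of 0.97 and a Kappa of 0.94, in line with the values reported for Experiment 1.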

- Classification algorithms (data mining techniques): Random forest (RF) was chosen as the supervised learning algorithm for ransomware detection. RF is a popular classification algorithm that usually yields good prediction accuracy compared with other classification algorithms [78]. It has been used in many malware and ransomware detection studies [19,24,27,51,79,80]. Other classification algorithms, namely support vector machine (SVM), decision trees (J48) and Naïve Bayes (NB), were also used to measure the accuracy of the proposed approach. SVM has been extensively applied in the detection of malware [21,44,79,80] and ransomware [27,51]. In this work, we used the SVM implementation available in Weka, which is based on the sequential minimal optimization (SMO) algorithm. Decision trees (J48) have been successfully applied to predict malicious behavior in Android apps [19,27,49]. Naïve Bayes (NB) is also used for classification problems because of its low computational complexity [81], and it has been used in many malware [34,82] and ransomware [49,51] detection approaches.

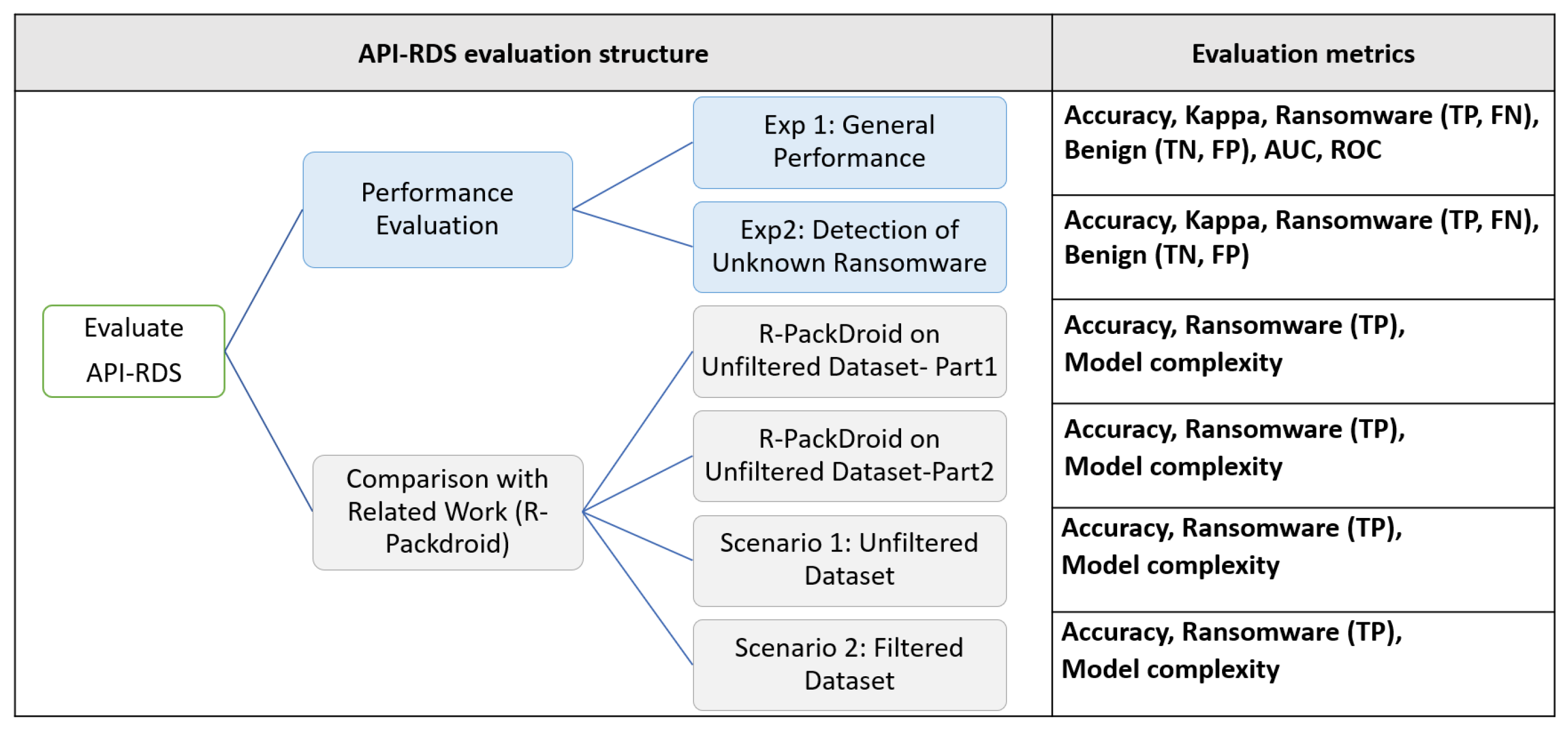

7. Evaluate API-RDS

7.1. Performance Evaluation

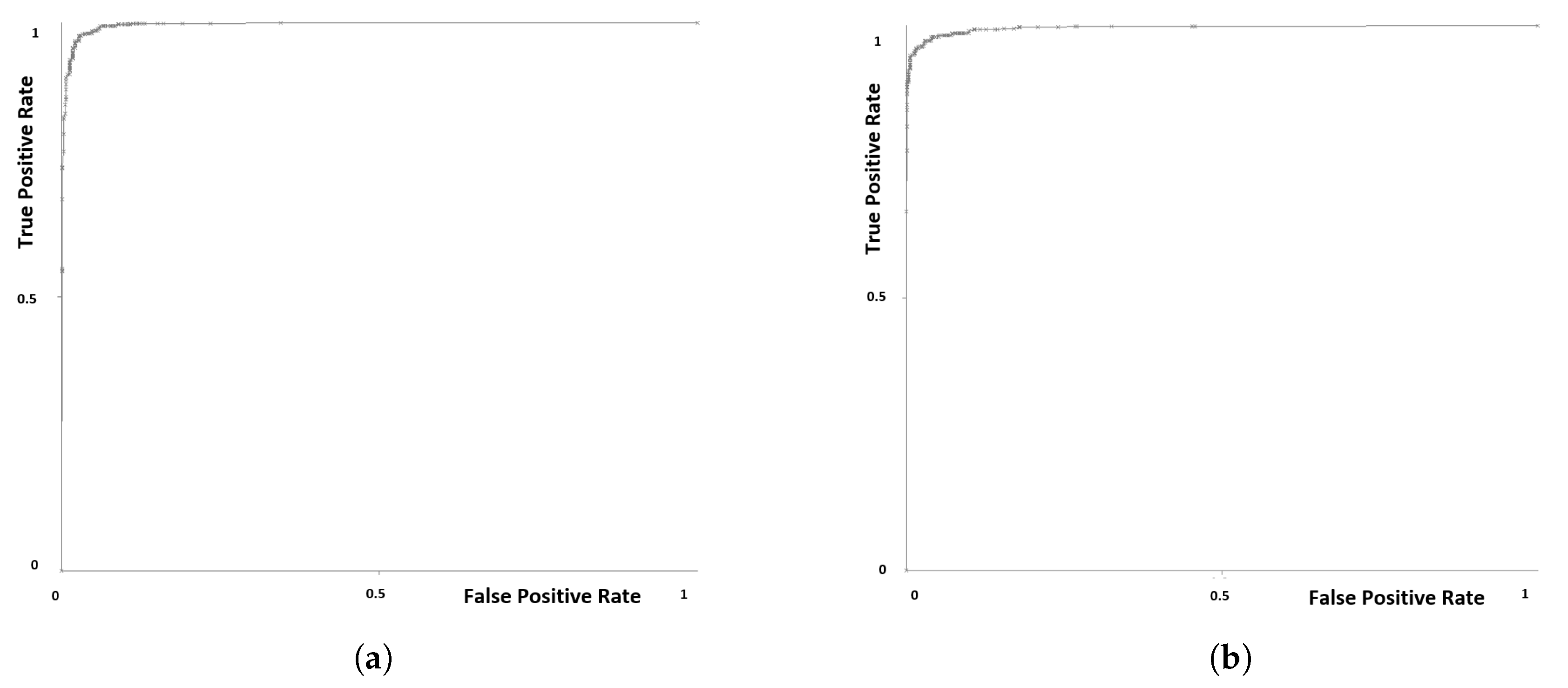

- Experiment 1: General performance. In this experiment, we used both Dataset-R and Dataset-B as the training dataset, with a total of 1000 apps (500 ransomware apps and 500 benign apps). The algorithm used was the Random Forest classifier with 10-fold cross-validation as the testing mode. The resulting metrics, shown in Table 6, indicate strong predictive performance from the suggested model.

The identification accuracy over the 1000 ransomware and benign samples was 97%. The measured Kappa for the cross-validation result was 0.94, which gives a good indication of the performance of the Random Forest classifier used in the experiment.

Overall, 2% of ransomware apps and 4% of benign apps were misclassified. In particular, 20 of the 500 benign samples (4%) were mistakenly identified as ransomware, and 96% of benign apps were correctly labeled. Meanwhile, 98% of the 500 ransomware apps were identified, and only 10 ransomware apps were incorrectly labeled as legitimate apps. The number of ransomware apps correctly classified (TP) is 490 and the number of benign apps correctly classified (TN) is 480. Table 6 shows these values.

We also applied other widely used machine learning classifiers with 10-fold cross-validation: sequential minimal optimization (SMO), decision trees (J48) and Naïve Bayes.

Figure 18 shows that all classifiers provided high detection accuracy. Random Forest has the highest accuracy for the proposed model, followed by decision trees (J48) with a 96.6% detection rate. The SMO classifier achieved a close result with 96.2%. The Naïve Bayes classifier exhibited the lowest result, with 93.5% accuracy. Hence, we employed Random Forest to train our model. It is worth noting that the larger counts of many API calls in benign samples (due to size difference) may improve the prediction.

In addition, AUC was used alongside the false positive ratio to assess the accuracy of the predictive models. Table 7 shows the AUC and the average false positive ratio for each classifier. Generally, the classifiers achieved a high AUC, above 0.9, meaning a low number of false positives. The Naïve Bayes model did not perform well on the dataset and was more prone to false positives than the other classifiers. Decision trees (J48) gave a slightly better false positive ratio than SMO but with a lower AUC value. The Random Forest classifier exhibited the best AUC and the lowest false positive ratio among all classifiers. The ROC plots in Figure 19 show the excellent AUC performance of the best-case model (0.995 from Random Forest) for both benign and ransomware applications.

The high accuracy, Kappa and AUC results are very encouraging, especially considering that the training dataset is not homogeneous (e.g., ransomware applications from diverse sources and time frames).

- Experiment 2: Detection of unknown ransomware. This experiment was conducted to measure the accuracy of the model in detecting new and unseen Android apps based on API package calls. In this experiment, the dataset was separated into training and testing datasets:

- Training dataset: includes 800 samples combining ransomware and benign Android apps, namely 400 ransomware samples from Dataset-R and 400 benign samples from Dataset-B.

- Testing dataset: includes the remaining samples from Dataset-R and Dataset-B, with 100 unseen samples from each dataset. The ransomware samples in the testing dataset were compared with the samples in the training dataset to ensure that they are unique and that no duplicate versions exist. The benign apps were also new and different from the samples in the training dataset. The same feature set was prepared: API packages with zero occurrences that had been deleted from the training dataset were deleted from this dataset as well.
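A hold-out split of this kind, together with the check that no test sample duplicates a training sample, can be sketched as follows. This is an illustration only: the random selection with a fixed seed is an assumption (the text does not say how the 100 test samples per class were chosen), and the function names are hypothetical.

```python
import random


def holdout_split(ransomware, benign, test_per_class=100, seed=0):
    """Hold out `test_per_class` samples from each class; the rest form the training set.

    Each sample is an (app_id, feature_vector) pair; the result pairs each sample with its label.
    """
    rng = random.Random(seed)
    train, test = [], []
    for label, samples in (("ransomware", ransomware), ("benign", benign)):
        shuffled = list(samples)
        rng.shuffle(shuffled)
        test += [(sample, label) for sample in shuffled[:test_per_class]]
        train += [(sample, label) for sample in shuffled[test_per_class:]]
    return train, test


def leaked_samples(train, test):
    """Return ids of test samples whose feature vector also occurs in the training set."""
    seen = {tuple(features) for (app_id, features), label in train}
    return [app_id for (app_id, features), label in test if tuple(features) in seen]
```

With 500 samples per class and `test_per_class=100`, the split yields the 800 training and 200 testing samples described above; an empty result from `leaked_samples` confirms that the test set contains no duplicates of training samples.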

The main goal of this experiment is to measure the ability of the proposed API-RDS to detect zero-day and new/unseen ransomware. We applied the Random Forest classifier to predict the maliciousness of the provided samples. Although the testing dataset was new to the model, the accuracy was 96.5%, which shows the high capability of the proposed model to detect ransomware at an early stage, before any damage happens. The accuracy metrics in Table 8 indicate that the classifier was able to discriminate between ransomware and benign apps using the API call features extracted from the source code. In particular, the API-RDS successfully identified 96% of ransomware samples and 97% of benign apps.
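As a quick consistency check, and assuming the per-class rates are computed over the 100 test samples of each class, the reported overall accuracy follows directly from the per-class results:

```latex
\[
\text{Accuracy} = \frac{96 + 97}{100 + 100} = \frac{193}{200} = 0.965
\]
```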

7.2. Comparison with Related Work

8. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Gazet, A. Comparative analysis of various ransomware virii. J. Comput. Virol. 2010, 6, 77–90.

- Kharraz, A.; Robertson, W.; Balzarotti, D.; Bilge, L.; Kirda, E. Cutting the gordian knot: A look under the hood of ransomware attacks. In Proceedings of the International Conference on Detection of Intrusions and Malware, and Vulnerability Assessment, Milan, Italy, 9–10 July 2015; pp. 3–24.

- Rajput, T.S. Evolving Threat Agents: Ransomware and Their Variants. Int. J. Comput. Appl. 2017, 164, 28–34.

- Telegraph, T. WannaCry Ransomware ’from North Korea’ Say UK and US. 2017. Available online: http://www.telegraph.co.uk/news/2017/06/15/wannacry-ransomware-north-korea-say-uk-us/ (accessed on 15 November 2017).

- Guardian, T. University College London Hit by Ransomware Attack. 2017. Available online: https://www.theguardian.com/technology/2017/jun/15/university-college-london-hit-by-ransomware-attack-hospitals-email-phishing (accessed on 15 November 2017).

- News, C. WannaCry Ransomware Attack Losses could Reach $4 Billion. 2017. Available online: https://www.cbsnews.com/news/wannacry-ransomware-attacks-wannacry-virus-losses/ (accessed on 15 November 2017).

- Verizon. Data Breach Investigations Report. 2017. Available online: http://www.verizonenterprise.com/verizon-insights-lab/dbir/2017/ (accessed on 15 November 2017).

- Labs, S. No Platform Immune from Ransomware, According to SophosLabs 2018 Malware Forecast. 2017. Available online: https://www.sophos.com/en-us/press-office/press-releases/2017/11/sophoslabs-2018-malware-forecast.aspx (accessed on 16 November 2017).

- IDC. Smartphone OS Market Share, 2017 Q1. 2017. Available online: https://www.idc.com/promo/smartphone-market-share/os (accessed on 30 November 2017).

- Telegraph, T. Android Roars Back in Strongest Growth in Two Years, as iOS Shrinks. 2017. Available online: http://www.telegraph.co.uk/technology/2016/05/17/android-roars-back-in-strongest-growth-in-two-years-as-apple-shr/ (accessed on 17 November 2017).

- Stats, S.G. Mobile Operating System Market Share Worldwide. 2018. Available online: http://gs.statcounter.com/os-market-share/mobile/worldwide/2018 (accessed on 11 October 2018).

- Symantec. Internet Security Threat Report. 2017. Available online: https://www.symantec.com/content/dam/symantec/docs/reports/istr-22-2017-en.pdf (accessed on 2 November 2017).

- Tam, K.; Khan, S.J.; Fattori, A.; Cavallaro, L. CopperDroid: Automatic Reconstruction of Android Malware Behaviors. NDSS. 2015. Available online: https://core.ac.uk/download/pdf/77298524.pdf (accessed on 1 August 2019).

- Check Point Software Technologies Ltd. Charger Malware Calls and Raises the Risk on Google Play. 2017. Available online: https://blog.checkpoint.com/2017/01/24/charger-malware/ (accessed on 1 October 2018).

- Tee, M.Y.; Zhang, M. Hidden App Malware Found on Google Play. 2018. Available online: https://www.symantec.com/blogs/threat-intelligence/hidden-app-malware-Google-play (accessed on 4 September 2018).

- Tee, M.Y.; Zhang, M. More Fraudulent Apps Containing Aggressive Adware Found on Google Play. 2018. Available online: https://www.symantec.com/blogs/threat-intelligence/apps-containing-aggressive-adware-found-Google-play (accessed on 4 September 2018).

- Global, T. Telstra Cyber Security Report 2017. 2017. Available online: https://www.telstraglobal.com/images/assets/insights/resources/Telstra_Cyber_Security_Report_2017_-_Whitepaper.pdf (accessed on 20 November 2017).

- Guardian, T. Don’t Pay WannaCry Demands, Cybersecurity Experts Say. 2017. Available online: https://www.theguardian.com/technology/2017/may/15/dont-pay-ransomware-demands-cybersecurity-experts-say-wannacry (accessed on 30 November 2017).

- Bhatia, T.; Kaushal, R. Malware detection in android based on dynamic analysis. In Proceedings of the 2017 International Conference on Cyber Security And Protection Of Digital Services (Cyber Security), London, UK, 19–20 June 2017; pp. 1–6.

- Wong, M.Y.; Lie, D. IntelliDroid: A Targeted Input Generator for the Dynamic Analysis of Android Malware. NDSS, 2016. Volume 16, pp. 21–24. Available online: https://www.ndss-symposium.org/wp-content/uploads/2017/09/intellidroid-targeted-input-generator-dynamic-analysis-android-malware.pdf (accessed on 1 August 2019).

- Arp, D.; Spreitzenbarth, M.; Hubner, M.; Gascon, H.; Rieck, K.; Siemens, C. DREBIN: Effective and Explainable Detection of Android Malware in Your Pocket. NDSS. 2014. Available online: https://www.researchgate.net/profile/Hugo_Gascon/publication/264785935_DREBIN_Effective_and_Explainable_Detection_of_Android_Malware_in_Your_Pocket/links/53efd0020cf26b9b7dcdf395.pdf (accessed on 1 August 2019).

- Yang, T.; Yang, Y.; Qian, K.; Lo, D.C.T.; Qian, Y.; Tao, L. Automated detection and analysis for android ransomware. In Proceedings of the 2015 IEEE 17th International Conference on High Performance Computing and Communications, 2015 IEEE 7th International Symposium on Cyberspace Safety and Security, and 2015 IEEE 12th International Conference on Embedded Software and Systems, New York, NY, USA, 24–26 August 2015; pp. 1338–1343.

- Andronio, N.; Zanero, S.; Maggi, F. HelDroid: Dissecting and Detecting Mobile Ransomware. In Research in Attacks, Intrusions, and Defenses, Proceedings of the 18th International Symposium, RAID 2015, Kyoto, Japan, 2–4 November 2015; Bos, H., Monrose, F., Blanc, G., Eds.; Springer International Publishing: Cham, Switzerland, 2015; pp. 382–404.

- Maiorca, D.; Mercaldo, F.; Giacinto, G.; Visaggio, C.A.; Martinelli, F. R-PackDroid: API package-based characterization and detection of mobile ransomware. In Proceedings of the Symposium on Applied Computing, Marrakech, Morocco, 3–7 April 2017; pp. 1718–1723.

- Mercaldo, F.; Nardone, V.; Santone, A.; Visaggio, C.A. Ransomware steals your phone. formal methods rescue it. In Proceedings of the International Conference on Formal Techniques for Distributed Objects, Components, and Systems, Marrakech, Morocco, 3–7 April 2016; pp. 212–221.

- Chen, J.; Wang, C.; Zhao, Z.; Chen, K.; Du, R.; Ahn, G.J. Uncovering the face of android ransomware: Characterization and real-time detection. IEEE Trans. Inf. Forensics Secur. 2018, 13, 1286–1300.

- Zheng, C.; Dellarocca, N.; Andronio, N.; Zanero, S.; Maggi, F. GreatEatlon: Fast, static detection of mobile ransomware. In Proceedings of the International Conference on Security and Privacy in Communication Systems, Guangzhou, China, 10–12 October 2016; pp. 617–636.

- Wang, W.; Zhao, M.; Gao, Z.; Xu, G.; Xian, H.; Li, Y.; Zhang, X. Constructing Features for Detecting Android Malicious Applications: Issues, Taxonomy and Directions. IEEE Access 2019.

- Ma, Z.; Ge, H.; Liu, Y.; Zhao, M.; Ma, J. A Combination Method for Android Malware Detection Based on Control Flow Graphs and Machine Learning Algorithms. IEEE Access 2019, 7, 21235–21245.

- Almomani, I.; Khayer, A. Android applications scanning: The guide. In Proceedings of the 2019 International Conference on Computer and Information Sciences (ICCIS), Aljouf, Saudi Arabia, 3–4 April 2019; pp. 1–5.

- Almomani, I.; Alenezi, M. Android Application Security Scanning Process. In Android; IntechOpen: London, UK, 2019.

- Mercaldo, F.; Nardone, V.; Santone, A. Ransomware Inside Out. In Proceedings of the 2016 11th International Conference on Availability, Reliability and Security (ARES), Salzburg, Austria, 31 August–2 September 2016; pp. 628–637.

- Hoffmann, J.; Rytilahti, T.; Maiorca, D.; Winandy, M.; Giacinto, G.; Holz, T. Evaluating analysis tools for android apps: Status quo and robustness against obfuscation. In Proceedings of the Sixth ACM Conference on Data and Application Security and Privacy, New Orleans, LA, USA, 9–11 March 2016; pp. 139–141.

- Akhuseyinoglu, N.B.; Akhuseyinoglu, K. AntiWare: An automated Android malware detection tool based on machine learning approach and official market metadata. In Proceedings of the 2016 IEEE 7th Annual Ubiquitous Computing, Electronics Mobile Communication Conference (UEMCON), New York, NY, USA, 20–22 October 2016; pp. 1–7.

- Quan, D.; Zhai, L.; Yang, F.; Wang, P. Detection of android malicious apps based on the sensitive behaviors. In Proceedings of the 2014 IEEE 13th International Conference on Trust, Security and Privacy in Computing and Communications, Beijing, China, 24–26 September 2014; pp. 877–883.

- Li, Q.; Li, X. Android malware detection based on static analysis of characteristic tree. In Proceedings of the 2015 International Conference on Cyber-Enabled Distributed Computing and Knowledge Discovery, Xi’an, China, 17–19 September 2015; pp. 84–91.

- Moser, A.; Kruegel, C.; Kirda, E. Limits of static analysis for malware detection. In Proceedings of the Twenty-Third Annual Computer Security Applications Conference (ACSAC 2007), Miami Beach, FL, USA, 10–14 December 2007; pp. 421–430.

- Zhang, Y.; Lv, D.; Guo, R.; Dietterich, T.; Zhou, Z.; Zhang, C.; Ma, Y.; Kuncheva, L.; Rokach, L.; Zhou, Z.; et al. Data Mining: Practical Machine Learning Tools and Techniques. J. Softw. Eng. 1997, 11, 97–136.

- Almomani, I.; Alenezi, M. Efficient Denial of Service Attacks Detection in Wireless Sensor Networks. J. Inf. Sci. Eng. 2018, 34, 977–1000.

- Enck, W.; Gilbert, P.; Han, S.; Tendulkar, V.; Chun, B.G.; Cox, L.P.; Jung, J.; McDaniel, P.; Sheth, A.N. TaintDroid: An information-flow tracking system for realtime privacy monitoring on smartphones. ACM Trans. Comput. Syst. 2014, 32, 5.

- Zhou, Y.; Wang, Z.; Zhou, W.; Jiang, X. Hey, you, Get off of My Market: Detecting Malicious Apps in Official and Alternative Android Markets. NDSS. 2012, Volume 25, pp. 50–52. Available online: https://www.csd.uoc.gr/~hy558/papers/mal_apps.pdf (accessed on 1 August 2019).

- Grace, M.; Zhou, Y.; Zhang, Q.; Zou, S.; Jiang, X. Riskranker: Scalable and accurate zero-day android malware detection. In Proceedings of the 10th International Conference on Mobile Systems, Applications, and Services, Low Wood Bay, Lake District, UK, 25–29 June 2012; pp. 281–294.

- Affairs, S. DREBIN Android App Detects 94 Percent of Mobile Malware. Hoboken, NJ, USA, 2014. Available online: http://securityaffairs.co/wordpress/29020/malware/drebin-android-av.html (accessed on 1 December 2017).

- Yang, W.; Xiao, X.; Andow, B.; Li, S.; Xie, T.; Enck, W. Appcontext: Differentiating malicious and benign mobile app behaviors using context. In Proceedings of the 37th International Conference on Software Engineering (ICSE), Florence, Italy, 16–24 May 2015; Volume 1, pp. 303–313.

- Yang, W.; Kong, D.; Xie, T.; Gunter, C.A. Malware detection in adversarial settings: Exploiting feature evolutions and confusions in android apps. In Proceedings of the 33rd Annual Computer Security Applications Conference (ACSAC), Orlando, FL, USA, 4–8 December 2017.

- Wang, X.; Zhu, S.; Zhou, D.; Yang, Y. Droid-AntiRM: Taming Control Flow Anti-analysis to Support Automated Dynamic Analysis of Android Malware. In Proceedings of the 33rd Annual Conference on Computer Security Applications (ACSAC’17), Orlando, FL, USA, 4–8 December 2017.

- Rastogi, V.; Chen, Y.; Jiang, X. Droidchameleon: Evaluating android anti-malware against transformation attacks. In Proceedings of the 8th ACM SIGSAC Symposium on Information, Computer and Communications Security, Hangzhou, China, 8–10 May 2013; pp. 329–334.

- Song, S.; Kim, B.; Lee, S. The effective ransomware prevention technique using process monitoring on android platform. Mob. Inf. Syst. 2016, 2016, 2946735.

- Ferrante, A.; Malek, M.; Martinelli, F.; Mercaldo, F.; Milosevic, J. Extinguishing Ransomware-a Hybrid Approach to Android Ransomware Detection. In Proceedings of the International Symposium on Foundations and Practice of Security, Nancy, France, 23–25 October 2017; pp. 242–258.

- Milosevic, N.; Dehghantanha, A.; Choo, K.K.R. Machine learning aided android malware classification. Comput. Electr. Eng. 2017, 61, 266–274.

- Gharib, A.; Ghorbani, A. DNA-Droid: A Real-Time Android Ransomware Detection Framework. In Proceedings of the International Conference on Network and System Security, Helsinki, Finland, 21–23 August 2017; pp. 184–198.

- Cimitile, A.; Mercaldo, F.; Nardone, V.; Santone, A.; Visaggio, C.A. Talos: No more ransomware victims with formal methods. Int. J. Inf. Secur. 2018, 17, 719–738.

- Kharraz, A.; Arshad, S.; Mulliner, C.; Robertson, W.K.; Kirda, E. UNVEIL: A Large-Scale, Automated Approach to Detecting Ransomware. In Proceedings of the USENIX Security Symposium, Austin, TX, USA, 10–12 August 2016; pp. 757–772.

- Scaife, N.; Carter, H.; Traynor, P.; Butler, K.R. Cryptolock (and drop it): Stopping ransomware attacks on user data. In Proceedings of the 2016 IEEE 36th International Conference on Distributed Computing Systems (ICDCS), Nara, Japan, 27–30 June 2016; pp. 303–312.

- Lab, K. Koler—The ’Police’ Ransomware for Android. 2014. Available online: https://media.kasperskycontenthub.com/wp-content/uploads/sites/43/2018/03/08081243/201407_Koler.pdf (accessed on 11 October 2017).

- koodous. Koodous Documentation. Available online: https://docs.koodous.com/ (accessed on 10 September 2018).

- Imran, M.; Afzal, M.T.; Qadir, M.A. A comparison of feature extraction techniques for malware analysis. Turk. J. Electr. Eng. Comput. Sci. 2017, 25, 1173–1183.

- Santos, I.; Brezo, F.; Ugarte-Pedrero, X.; Bringas, P.G. Opcode sequences as representation of executables for data-mining-based unknown malware detection. Inf. Sci. 2013, 231, 64–82.

- Martinelli, F.; Mercaldo, F.; Nardone, V.; Santone, A.; Visaggio, C.A. Identifying Mobile Repackaged Applications through Formal Methods. In Proceedings of the ICISSP, Porto, Portugal, 19–21 February 2017; pp. 673–682.

- Chen, Q.; Zobel, J.; Verspoor, K. Duplicates, redundancies and inconsistencies in the primary nucleotide databases: A descriptive study. Database 2017, 2017.

- Rahm, E.; Do, H.H. Data cleaning: Problems and current approaches. IEEE Data Eng. Bull. 2000, 23, 3–13.

- Raman, K. Selecting features to classify malware. In Proceedings of the InfoSec Southwest, Austin, TX, USA, 30 March–1 April 2012.

- Yu, L.; Liu, H. Efficient feature selection via analysis of relevance and redundancy. J. Mach. Learn. Res. 2004, 5, 1205–1224.

- Korn, F.; Pagel, B.U.; Faloutsos, C. On the “dimensionality curse” and the “self-similarity blessing”. IEEE Trans. Knowl. Data Eng. 2001, 13, 96–111.

- Sheena, K.K.; Kumar, G. Analysis of Feature selection Techniques: A Data Mining Approach. Int. J. Comput. Appl. ICAET 2016, 975, 8887.

- Jović, A.; Brkić, K.; Bogunović, N. A review of feature selection methods with applications. In Proceedings of the 2015 38th International Convention on Information and Communication Technology, Electronics and Microelectronics (MIPRO), Opatija, Croatia, 25–29 May 2015; pp. 1200–1205.

- Suarez-Tangil, G.; Stringhini, G. Eight Years of Rider Measurement in the Android Malware Ecosystem: Evolution and Lessons Learned. arXiv 2018, arXiv:1801.08115.

- Darcey, S.C.L. Learn Java for Android Development: Reflection Basics. 2010. Available online: https://code.tutsplus.com/tutorials/learn-java-for-android-development-reflection-basics–mobile-3203 (accessed on 7 August 2018).

- Android. Package Index | Android Developers. 2018. Available online: https://developer.android.com/reference/packages (accessed on 4 August 2018).

- Sharma, A.; Dash, S.K. Mining api calls and permissions for android malware detection. In Proceedings of the International Conference on Cryptology and Network Security, Heraklion, Crete, Greece, 22–24 October 2014; pp. 191–205.

- Bel, S. How To Dissect Android Simplelocker Ransomware. 2014. Available online: http://securehoney.net/blog/how-to-dissect-android-simplelocker-ransomware.html (accessed on 4 October 2018).

- Mughal, J. Find/Get Imei Number in Android Programmatically. 2016. Available online: https://www.android-examples.com/get-imei-number-in-android-programmatically/ (accessed on 11 October 2017).

- Rosmansyah, Y.; Dabarsyah, B. Malware detection on android smartphones using api class and machine learning. In Proceedings of the 2015 International Conference on Electrical Engineering and Informatics (ICEEI), Denpasar, Indonesia, 10–11 August 2015; pp. 294–297.

- Kelkar, S.P. Detecting Information Leakage in Android Malware Using Static Taint Analysis. Ph.D. Thesis, Wright State University, Dayton, OH, USA, 2017.

- Saurel, S. Creating a Lock Screen Device App for Android. 2018. Available online: https://medium.com/@ssaurel/creating-a-lock-screen-device-app-for-android-4ec6576b92e0 (accessed on 15 August 2018).

- Amos, B.; Turner, H.; White, J. Applying machine learning classifiers to dynamic android malware detection at scale. In Proceedings of the 2013 9th International Wireless Communications and Mobile Computing Conference (IWCMC), Sardinia, Italy, 1–5 July 2013; pp. 1666–1671.

- Martín, I.; Hernández, J.A.; Muñoz, A.; Guzmán, A. Android malware characterization using metadata and machine learning techniques. Secur. Commun. Netw. 2018, 2018, 5749481.

- Touw, W.G.; Bayjanov, J.R.; Overmars, L.; Backus, L.; Boekhorst, J.; Wels, M.; van Hijum, S.A. Data mining in the Life Sciences with Random Forest: A walk in the park or lost in the jungle? Brief. Bioinform. 2012, 14, 315–326.

- Suarez-Tangil, G.; Dash, S.K.; Ahmadi, M.; Kinder, J.; Giacinto, G.; Cavallaro, L. DroidSieve: Fast and accurate classification of obfuscated android malware. In Proceedings of the Seventh ACM on Conference on Data and Application Security and Privacy, Scottsdale, AZ, USA, 22–24 March 2017; pp. 309–320.

- Smutz, C.; Stavrou, A. Malicious PDF detection using metadata and structural features. In Proceedings of the 28th Annual Computer Security Applications Conference, Orlando, FL, USA, 3–7 December 2012; pp. 239–248.

- Bose, A.; Hu, X.; Shin, K.G.; Park, T. Behavioral detection of malware on mobile handsets. In Proceedings of the 6th International Conference on Mobile Systems, Applications, and Services, Breckenridge, CO, USA, 17–20 June 2008; pp. 225–238.

- Yerima, S.Y.; Sezer, S.; McWilliams, G.; Muttik, I. A new android malware detection approach using bayesian classification. In Proceedings of the 2013 IEEE 27th International Conference on Advanced Information Networking and Applications (AINA), Barcelona, Spain, 25–28 March 2013; pp. 121–128.

- Roy, S.; DeLoach, J.; Li, Y.; Herndon, N.; Caragea, D.; Ou, X.; Ranganath, V.P.; Li, H.; Guevara, N. Experimental study with real-world data for android app security analysis using machine learning. In Proceedings of the 31st Annual Computer Security Applications Conference, Los Angeles, CA, USA, 7–11 December 2015; pp. 81–90.

| Operating System | 2016 | 2017 | 2018 |
|---|---|---|---|
| Android | 71.97% | 73.54% | 76.61% |
| iOS | 18.89% | 19.91% | 20.66% |
| Other | 9.14% | 6.55% | 2.73% |

| Work | Static or Dynamic | Approach | Feature Set | Machine Learning | Ransomware Dataset | Publicly Accessible | Year |
|---|---|---|---|---|---|---|---|
| Andronio et al. [23] | Static | Look for threatening messages in text; analyze dynamically allocated strings; examine the ability of the app to lock and encrypt the device | Threatening text, BIND_DEVICE_ADMIN permission, API methods (lockNow(), onKeyUp() and onKeyDown()), FLAG_SHOW_WHEN_LOCKED and traces of the encryption process | Natural language processing (NLP) | Own collected ransomware samples (HelDroid) | Yes | 2015 |
| Yang et al. [22] | Static and dynamic | Suggest some malware and ransomware indicators | Permissions, API method invocation flow, access to critical paths, malicious domain access and charges through SMS and calls | Not stated | Not stated | No | 2015 |
| Zheng et al. [27] | Static | Look for threatening messages in images; forward and backward analyses to observe any malicious reflection; inspect abuses of the device administration API to detect uses of cryptographic APIs | Threatening messages in images, metadata policies, package name, URLs, file types and their count, number of permissions, activities and services, use of obfuscation, reachability (operations on SMS) and API methods (Invoke(), onEnable(), onDisable()) | Decision trees (J48), random forests, support vector machine (SVM), stochastic gradient descent (SGD), decision tables (DT) and rule learners (JRip, FURIA, LAC, RIDOR) | Contagio Mobile dataset and VirusTotal | Yes | 2016 |
| Song et al. [48] | Dynamic | Monitor processes and specific file directories | File input/output events, processor status info (processor share, memory usage, I/O count and storage I/O count for each specific process) | Not stated | One self-developed ransomware sample | No | 2016 |
| Mercaldo et al. [25] | Static | Apply formal methods for checking the ransomware behavior | Ransomware behavior (not specified) | Not stated | HelDroid and Contagio Mobile dataset | No | 2016 |
| Maiorca et al. [24] | Static | Look for API calls invoked in the executable code | 234 API calls in their dataset | Random forest | HelDroid and VirusTotal | Yes | 2017 |
| Ferrante et al. [49] | Static and dynamic | Detect opcode frequencies of some characteristics from the execution logs of an application | Opcode occurrences, CPU, memory and network usage and system calls | Decision trees (J48), Naïve Bayes and logistic regression | HelDroid | No | 2017 |
| Gharib et al. [51] | Static and dynamic | Look for threatening messages in text; look for specific images/logos; detect permissions and API calls statically; detect API calls dynamically and compare them with already defined behavior (DNA) in a database | Threatening text, number of nude images and specific logos and API calls | Random forests, support vector machine (SVM), Naïve Bayes, AdaBoost (AB) and deep neural networks (DNN) | HelDroid, Contagio Mobile dataset, VirusTotal and Koodous | Yes | 2017 |
| Cimitile et al. [52] | Static | Apply formal methods for checking the ransomware behavior | Obtaining admin privileges and encryption process flow | Not stated | HelDroid and Contagio Mobile dataset | No | 2017 |
| Chen et al. [26] | Dynamic | Identify user interface differences between benign and ransomware apps with coordinates of the user’s finger movements | User interface and information entropy of files before and after encryption | Not stated | HelDroid and own collected samples | No | 2018 |

| Sample | MD5 | SHA256 |
|---|---|---|
| Sample-1 | 67bde6039310b4bb9ccd9fcf2a721a45 | 4d3de2103f740345aa2041691fde0878d7d32e9e4985adf6b030d2e679560118 |
| Sample-2 | fb14553de1f41e3fcdc8f68fd9eed831 | 2e1ca3a9f46748e0e4aebdea1afe84f1015e3e7ce667a91e4cfabd0db8557cbf |

| Source of Hash | Source of APK File | Samples Collected | Samples after Decompiling | Total Samples | Total after Removing Duplicates |
|---|---|---|---|---|---|
| HelDroid project | Koodous | 345 | 304 | 2959 | 500 (17% of total) |
| RansomProper project | RansomProper project | 2258 | 2025 | | |
| VirusTotal | VirusTotal | 694 | 590 | | |
| Koodous | Koodous | 40 | 40 | | |

| Source of APK File | Samples Collected | Samples after Decompiling | Total Samples | Total after Removing Duplicates |
|---|---|---|---|---|
| Google Play Store | 519 | 500 | 500 | 500 (100% of total) |

| API-RDS Accuracy | Kappa | Ransomware (TP, FN) | Benign (TN, FP) |
|---|---|---|---|
| 97% | 0.94 | 490, 10 | 480, 20 |
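
The accuracy and kappa values in the table above can be reproduced from the four confusion-matrix counts. The following is a minimal sketch, assuming that "Kappa" denotes Cohen's kappa for a two-class problem; the function name `accuracy_and_kappa` is illustrative and does not come from the paper.

```python
def accuracy_and_kappa(tp: int, fn: int, tn: int, fp: int) -> tuple[float, float]:
    """Return (accuracy, Cohen's kappa) for a binary confusion matrix."""
    total = tp + fn + tn + fp
    # Observed agreement: fraction of samples classified correctly.
    observed = (tp + tn) / total
    # Chance agreement: for each class, (actual count * predicted count),
    # summed over both classes and normalized by total squared.
    expected = ((tp + fn) * (tp + fp) + (tn + fp) * (tn + fn)) / total**2
    kappa = (observed - expected) / (1 - expected)
    return observed, kappa


# Counts taken from the table: ransomware (TP=490, FN=10), benign (TN=480, FP=20).
acc, kappa = accuracy_and_kappa(tp=490, fn=10, tn=480, fp=20)
print(f"accuracy={acc:.3f}, kappa={kappa:.2f}")
```

With balanced classes the chance agreement is 0.5, so kappa reduces to (0.97 - 0.5) / 0.5 = 0.94, which agrees with the reported value.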

| Classifier | False Positive Ratio | AUC |
|---|---|---|
| Random Forest | 3.0% | 0.995 |
| Decision trees (J48) | 3.4% | 0.965 |
| SMO | 3.8% | 0.961 |
| Naïve Bayes | 6.5% | 0.961 |

| API-RDS Accuracy | Kappa | Ransomware (TP, FN) | Benign (TN, FP) |
|---|---|---|---|
| 96.5% | 0.93 | 96, 4 | 97, 3 |

| Work | Raw Dataset | Filtered Dataset |
|---|---|---|
| R-PackDroid Part-1 | 4098 benign, 5560 malware and 672 ransomware | NA |
| R-PackDroid Part-2 | 4098 benign, 5560 malware and 2022 ransomware | NA |
| API-RDS | 500 benign and 2959 ransomware | 500 benign and 500 ransomware |

| Work | Testing Dataset | Classified as Malicious | API Level | Number of Features (Complexity) | Detection Accuracy |
|---|---|---|---|---|---|
| R-PackDroid Part-1 | 440 (unfiltered) | 415 | API24 | 234 | 94.3% |
| R-PackDroid Part-2 | 958 (unfiltered) | 876 | API24 | 234 | 91.4% |
| API-RDS Scenario-1 | 700 (unfiltered) | 696 | API27 | 173 | 99.4% |
| API-RDS Scenario-2 | 100 (filtered) | 96 | API27 | 173 | 96% |
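
The detection accuracy column in the comparison table appears to be the share of testing samples classified as malicious. The sketch below checks that reading against the four rows; it assumes every testing sample is ransomware, and the helper name `detection_rate` is hypothetical.

```python
def detection_rate(flagged: int, total: int) -> float:
    """Percentage of test samples classified as malicious, to one decimal place."""
    return round(100 * flagged / total, 1)


# (classified as malicious, testing dataset size) copied from the table.
rows = {
    "R-PackDroid Part-1": (415, 440),
    "R-PackDroid Part-2": (876, 958),
    "API-RDS Scenario-1": (696, 700),
    "API-RDS Scenario-2": (96, 100),
}

rates = {name: detection_rate(flagged, total) for name, (flagged, total) in rows.items()}
for name, rate in rates.items():
    print(f"{name}: {rate}%")
```

Under that assumption the computed rates are 94.3%, 91.4%, 99.4% and 96.0%, matching the reported figures.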

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Alsoghyer, S.; Almomani, I. Ransomware Detection System for Android Applications. Electronics 2019, 8, 868. https://doi.org/10.3390/electronics8080868
