Biologically-Inspired Computational Neural Mechanism for Human Action/Activity Recognition: A Review

Abstract

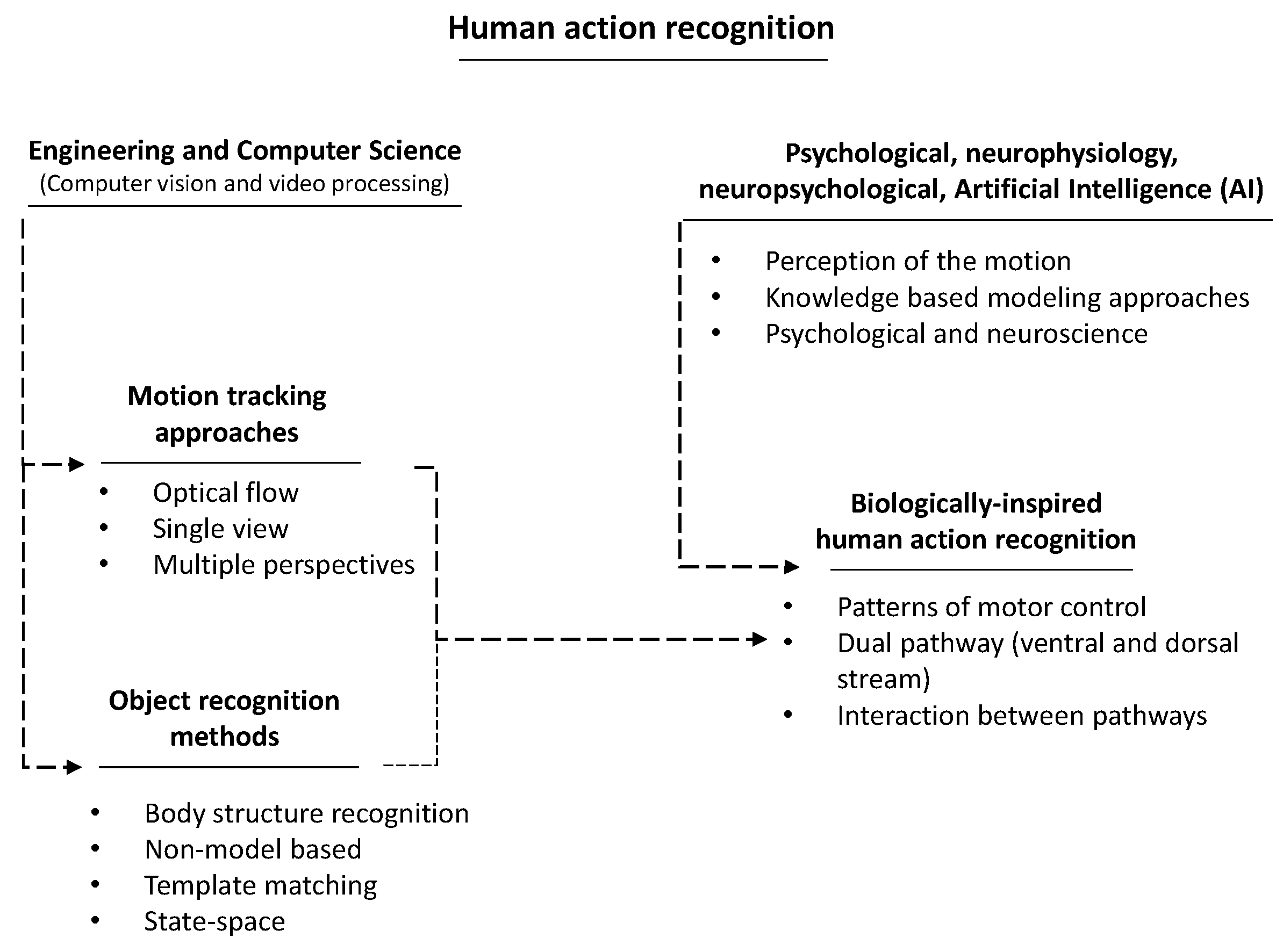

1. Introduction

- (1) symbolic methods, which follow classic approaches, are similar to expert systems, and are termed connectionism approaches;

- (2) scruffy methods, which concentrate on the evolution of intelligence or follow artificial neural networks.

2. Motivation

- Perception of the motion

- Knowledge based modeling of the human action

- Psychology and neurophysiology of the motion

3. Analysis of Biological Movement

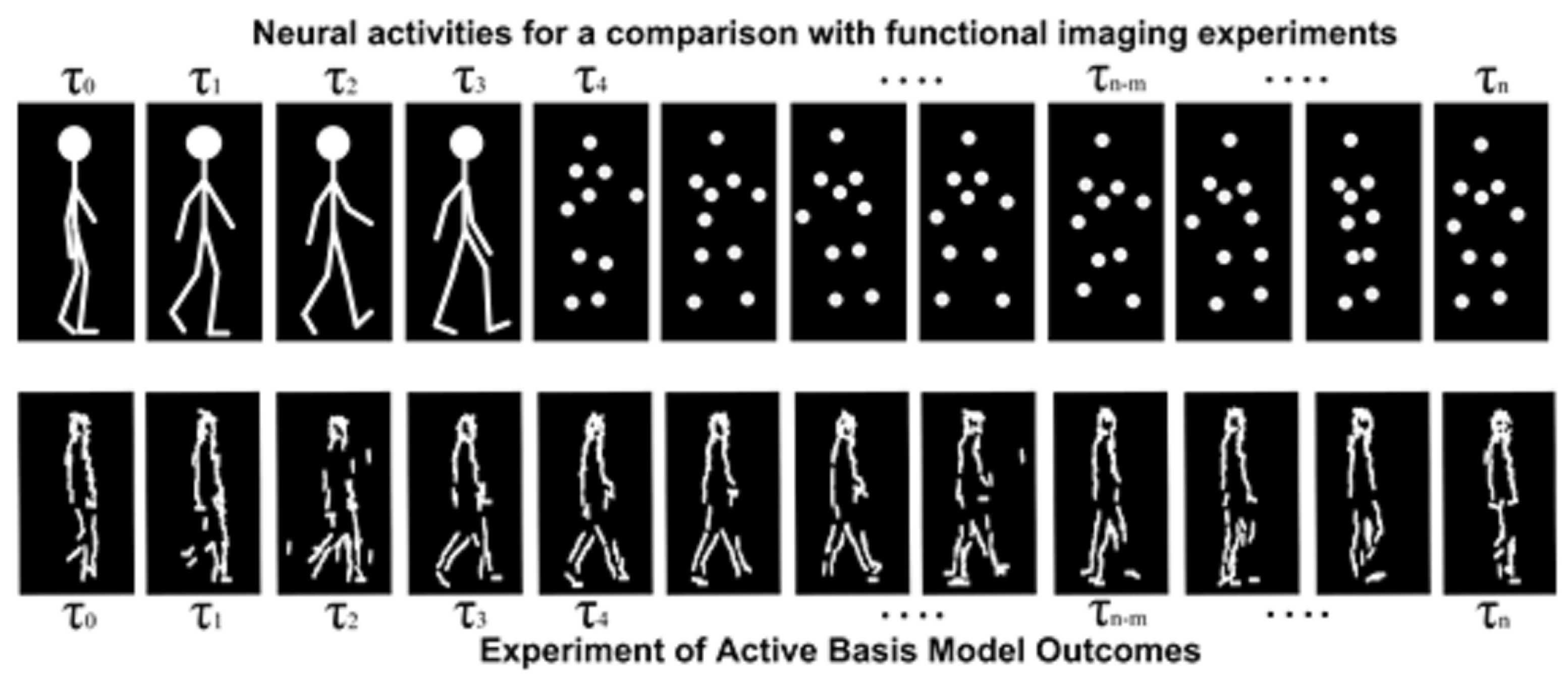

3.1. Motion Patterns

- The first technique extracts global features from the video stream and assigns a single label to the whole video. It requires the observer to remain unchanged throughout the video, and the environment in which the action occurs must be taken into account [7].

- The second technique extracts local features in each frame and assigns each frame a label for a distinct action. A global label for the sequence can then be obtained by simple voting over the frame labels. The temporal analysis, that is, the per-frame feature extraction and classification, is based on the observations within a temporal window.
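The voting step of the second technique can be sketched as follows. This is a minimal illustration only, assuming that per-frame labels have already been produced by some classifier; the function names `label_video_by_voting` and `smooth_labels`, the example labels, and the window size are hypothetical and are not taken from the reviewed works.

```python
from collections import Counter


def label_video_by_voting(frame_labels):
    """Return the most frequent per-frame label as the global sequence label."""
    if not frame_labels:
        raise ValueError("frame_labels must not be empty")
    counts = Counter(frame_labels)
    # most_common(1) returns [(label, count)]; on a tie, the label
    # encountered first in the sequence is returned.
    return counts.most_common(1)[0][0]


def smooth_labels(frame_labels, window=3):
    """Relabel each frame by majority vote inside a temporal window around it."""
    half = window // 2
    smoothed = []
    for i in range(len(frame_labels)):
        lo = max(0, i - half)                      # clip window at sequence start
        hi = min(len(frame_labels), i + half + 1)  # clip window at sequence end
        smoothed.append(label_video_by_voting(frame_labels[lo:hi]))
    return smoothed


# Hypothetical per-frame predictions for a six-frame clip.
frame_labels = ["walk", "walk", "run", "walk", "run", "walk"]
video_label = label_video_by_voting(frame_labels)
print(video_label)
```

The window-based smoothing is one possible reading of "observation in a temporal window"; other schemes, such as weighting votes by classifier confidence, would fit the same description.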

3.2. Kinetic–Geometric Model

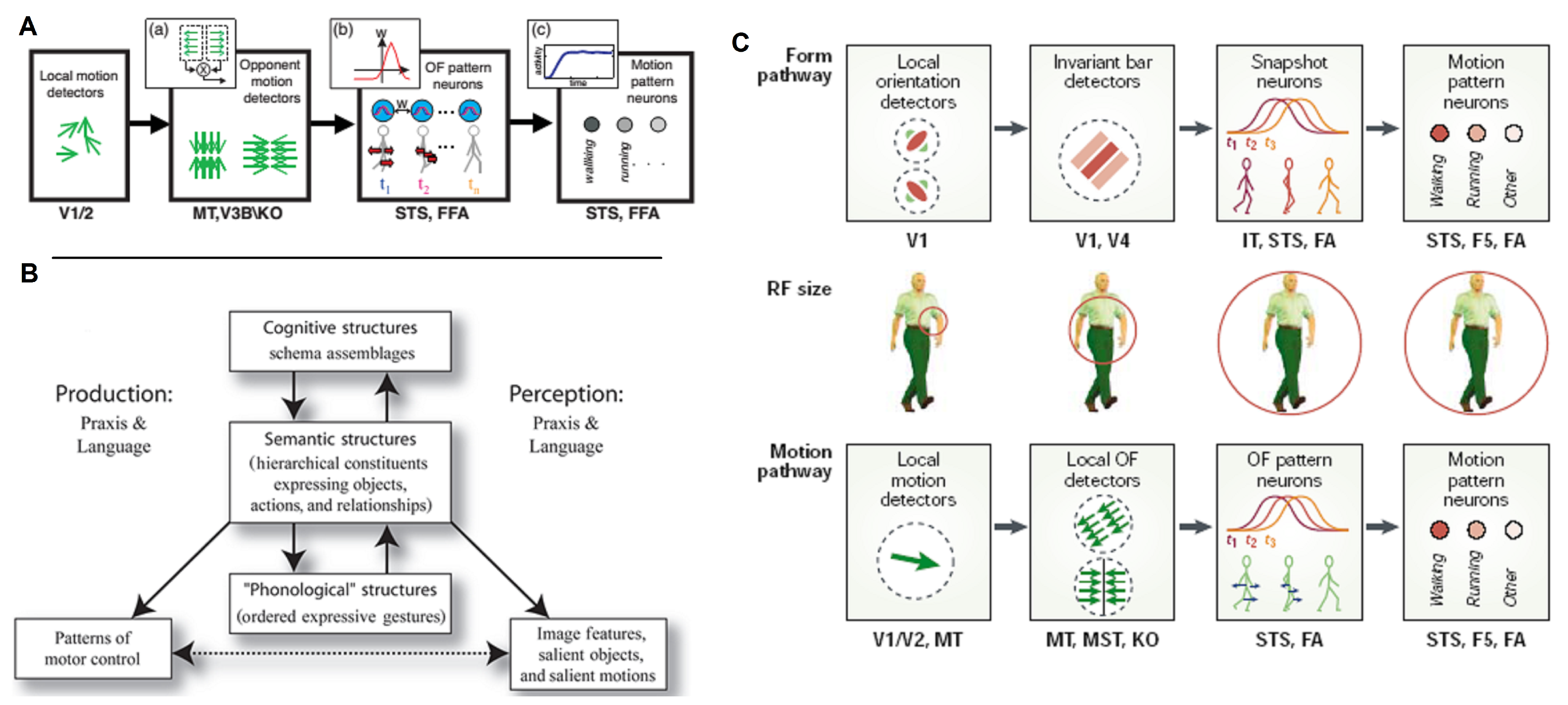

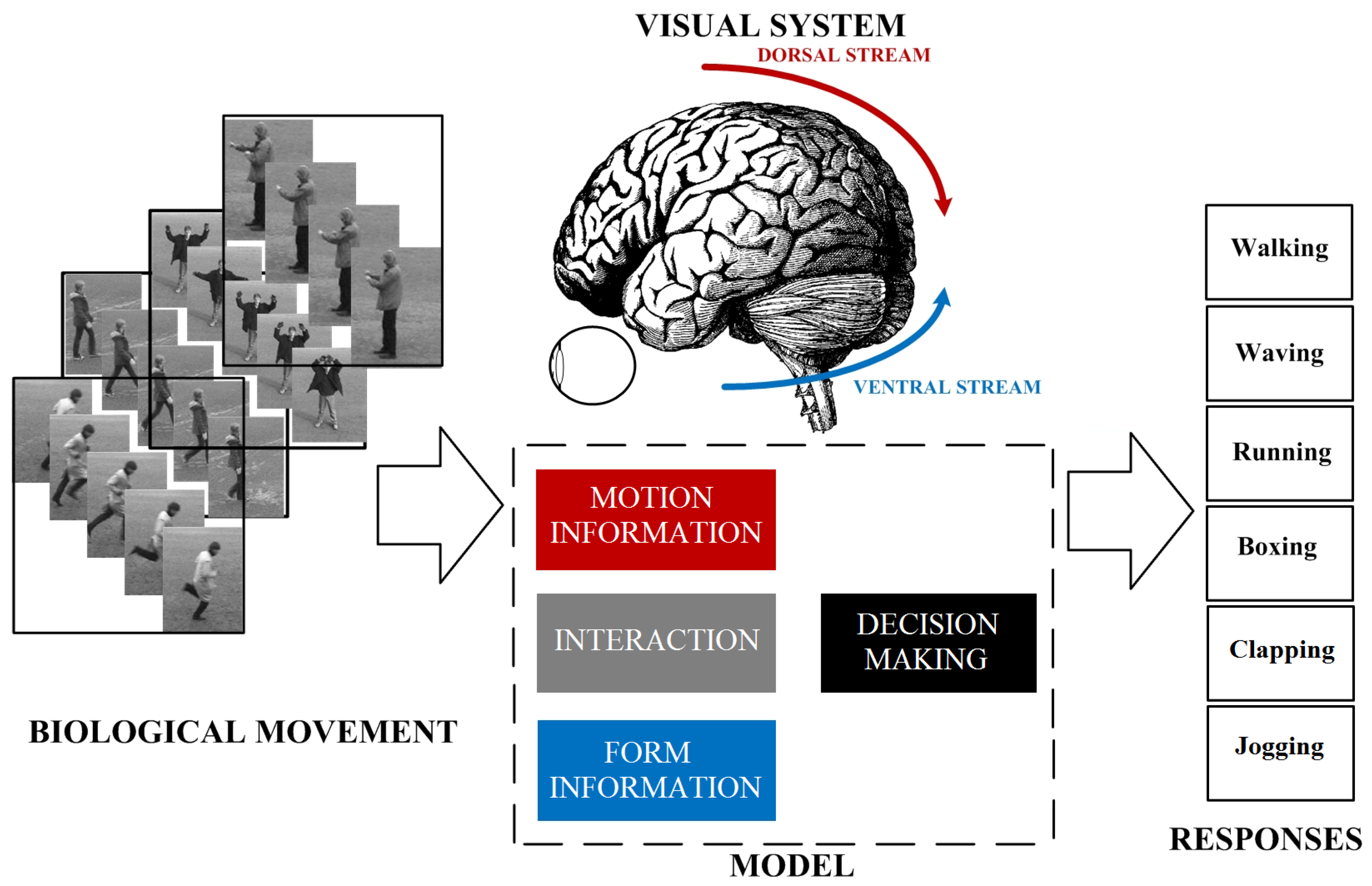

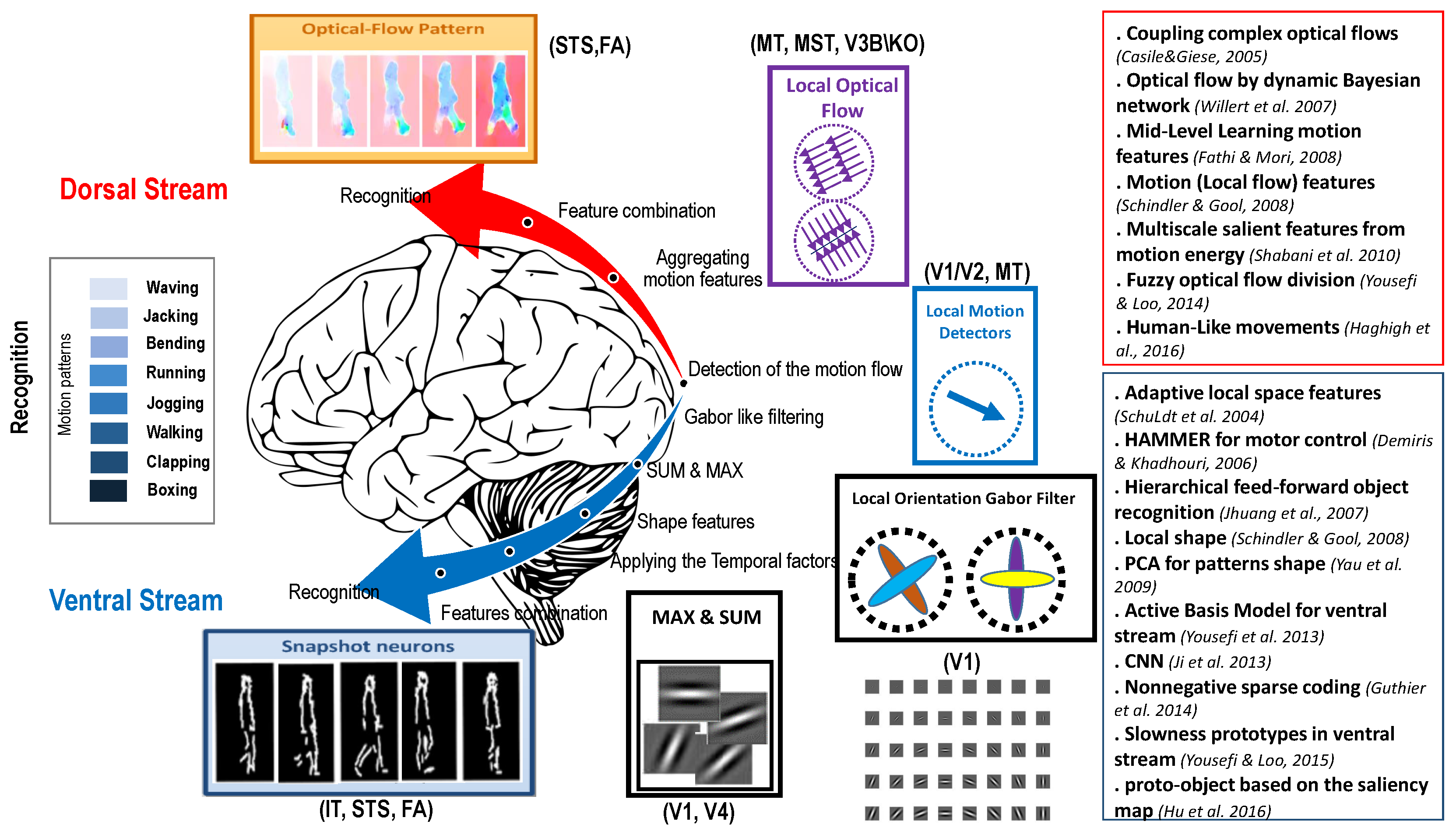

3.3. What and Where Pathways

4. Perception of the Motion

4.1. Perception and Actions

4.2. Motion Patterns for Perception of Action

4.2.1. Spatiotemporal Filter

4.2.2. 3D Structural Method

4.2.3. Motion Capture

4.3. Computational Models

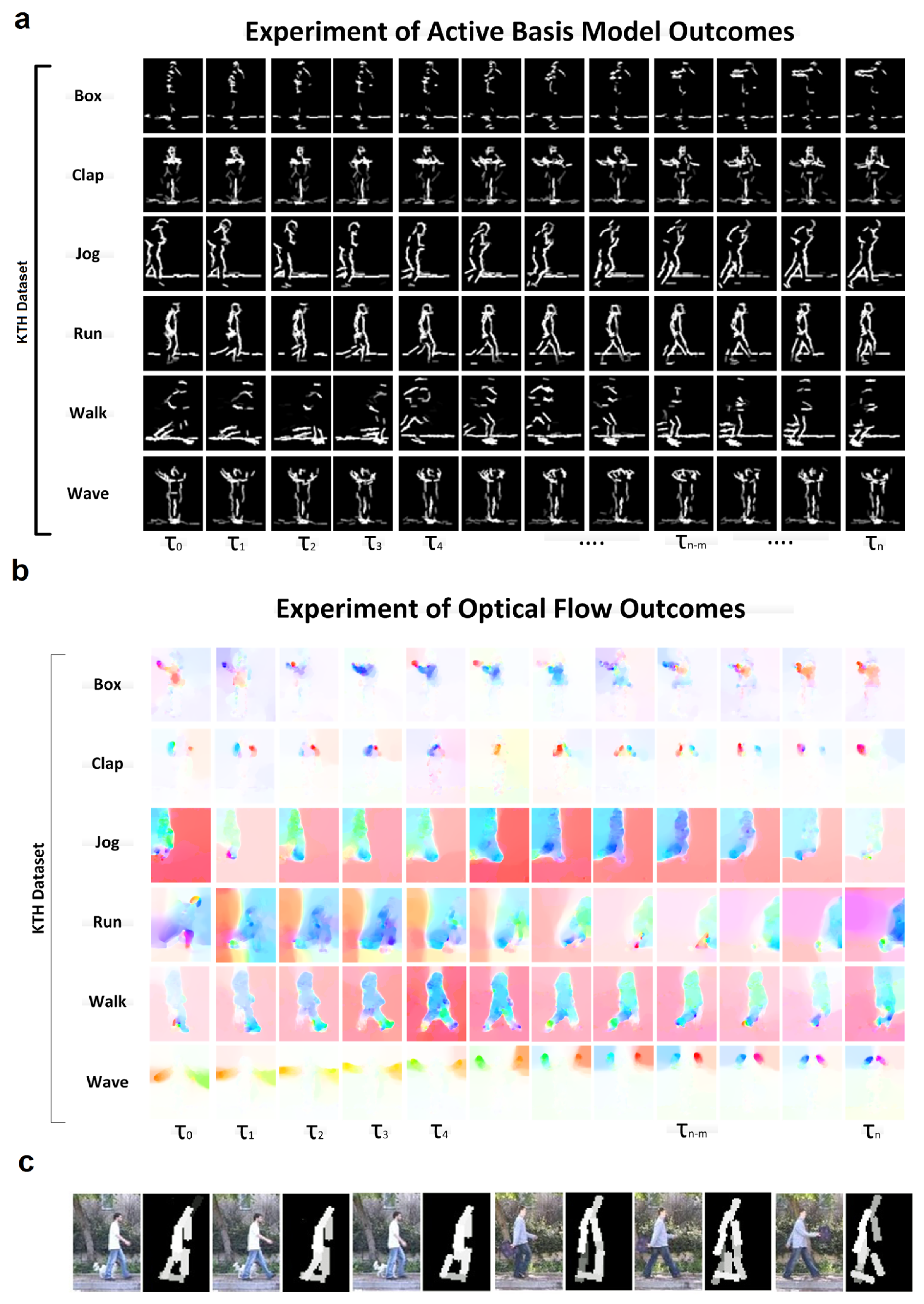

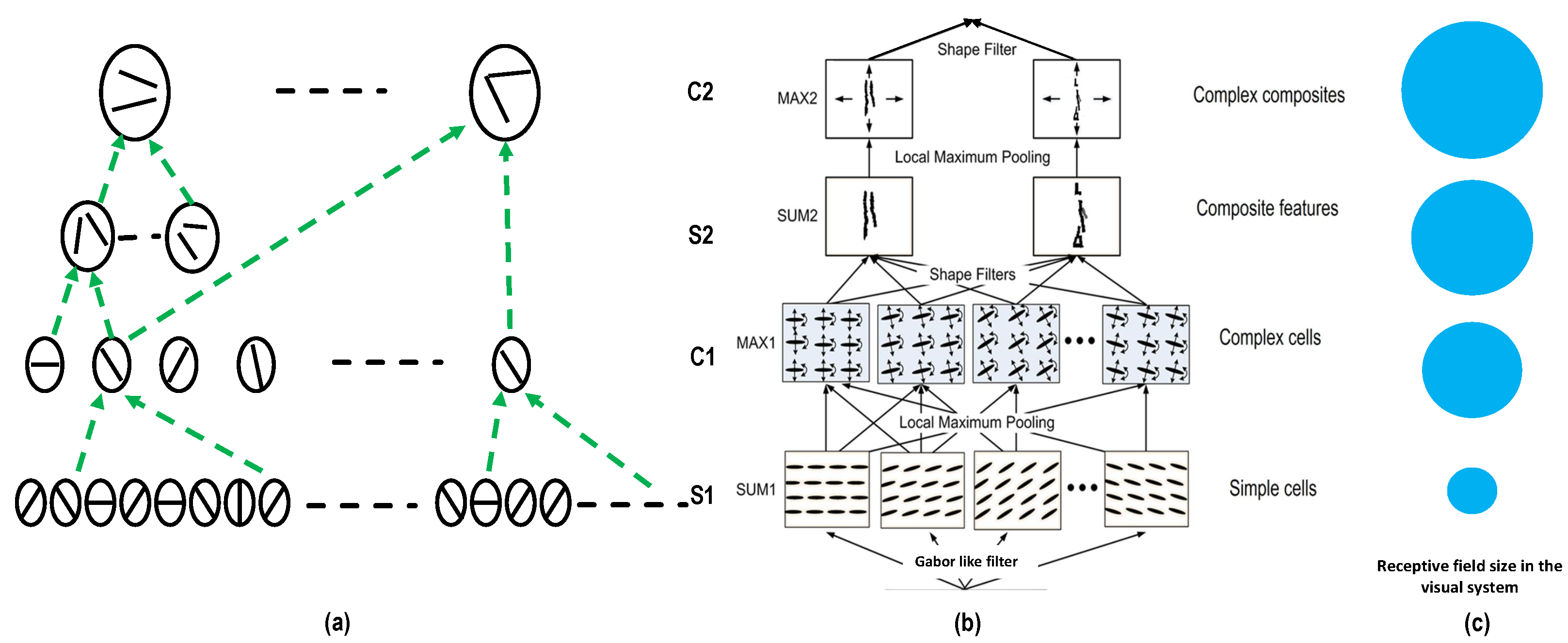

4.3.1. Form Pathway

4.3.2. Motion Pathway

4.4. Summary

5. Knowledge Based Modeling Approaches

5.1. Gabor Filter in Form Pathway

5.2. Deep Learning

5.3. Sparse Representation

5.4. Dynamic Representation of Action

- Semantics is based on pragmatics;

- Pragmatics is anchored on semantics; and

- Pragmatics is a part and parcel of semantics (taken from [143]).

5.5. Interaction Between Pathways

5.6. Summary

6. Psychological and Neuroscience Point of View

6.1. Biological Evidence Using fMRI

6.2. Biological Model and Imitation

6.3. Visual System Impairment and Pathways

6.4. Summary

7. Future Directions

8. Conclusions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
| --- | --- |
| AI | artificial intelligence |
| MLD | moving light display |
| PGA | Parliamentarians for Global Action |
| HMM | hidden Markov model |
| 2D | two-dimensional |
| 3D | three-dimensional |
| STS | superior temporal sulcus |
| ANN | artificial neural network |
| KO | kinetic occipital area |
| MT | middle temporal |
| V5 | fifth portion of visual extrastriate areas |
| LOC | lateral occipital complex |
| EBA | extrastriate body area |
| BOLD | blood oxygenation level-dependent |
| fMRI | functional magnetic resonance imaging |
| STSp | posterior superior temporal sulcus |
| ITS | inferior temporal sulcus |
| FFA | fusiform face area |
| FBA | fusiform body area |
| AC | auditory cortex |
| DSRF | Dual Square-Root Function |
| HAMMER | hierarchical attentive multiple models for execution and recognition |
| PPA | parahippocampal place area |
| TOS | transverse occipital sulcus |
| RSC | retrosplenial complex |
| V1 | primary visual cortex |
| CNNs | convolutional neural networks |
| LSTM | long short-term memory |
| LGMD | lobula giant movement detectors |
| STIP | spatio-temporal interest points |
| MBP | Motion Binary Pattern |
| VLBP | Volume Local Binary Pattern |
| OPE | optical flow field |
| BOW | bag of visual words |
| MST | medial superior temporal |
| V3A | third area of visual extrastriate areas-accessory |
| CSv | cingulate sulcus visual area |
| IPSmot | intra-parietal sulcus motion |
| ABM | active basis model |
| DRAMA | Dynamical Recurrent Associative Memory Architecture |
| ASCs | autism spectrum conditions |
| LGNd | lateral geniculate nucleus in the thalamus |
| V1+ | early visual areas |
| EEG | electroencephalography |

References

1. Aggarwal, J.K.; Cai, Q. Human motion analysis: A review. In Proceedings of the Nonrigid and Articulated Motion Workshop, San Juan, PR, USA, 16 June 1997; pp. 90–102.

2. Turaga, P.; Chellappa, R.; Subrahmanian, V.S.; Udrea, O. Machine recognition of human activities: A survey. IEEE Trans. Circuits Syst. Video Technol. 2008, 18, 1473–1488.

3. Rubin, E. Visuell wahrgenommene wirkliche Bewegungen. Z. Psychol. 1927, 103, 384–392.

4. Duncker, K. Über induzierte Bewegung. Psychol. Forsch. 1929, 12, 180–259.

5. Johansson, G. Visual perception of biological motion and a model for its analysis. Percept. Psychophys. 1973, 14, 201–211.

6. Leek, E.C.; Cristino, F.; Conlan, L.I.; Patterson, C.; Rodriguez, E.; Johnston, S.J. Eye movement patterns during the recognition of three-dimensional objects: Preferential fixation of concave surface curvature minima. J. Vis. 2012, 12, 7.

7. Santofimia, M.J.; Martinez-del Rincon, J.; Nebel, J.C. Episodic reasoning for vision-based human action recognition. Sci. World J. 2014, 2014, 270171.

8. Hogg, T.; Rees, D.; Talhami, H. Three-dimensional pose from two-dimensional images: A novel approach using synergetic networks. In Proceedings of the ICNN’95-International Conference on Neural Networks, Perth, Australia, 27 November–1 December 1995; pp. 1140–1144.

9. Schindler, K.; Van Gool, L. Action snippets: How many frames does human action recognition require? In Proceedings of the 2008 IEEE Conference on Computer Vision and Pattern Recognition, Anchorage, AK, USA, 23–28 June 2008; pp. 1–8.

10. Schindler, K.; Van Gool, L. Combining densely sampled form and motion for human action recognition. In Pattern Recognition; Springer: Berlin/Heidelberg, Germany, 2008; pp. 122–131.

11. Efros, A.A.; Berg, A.C.; Mori, G.; Malik, J. Recognizing action at a distance. In Proceedings of the Ninth IEEE International Conference on Computer Vision, Nice, France, 13–16 October 2003; p. 726.

12. Daugman, J.G. Two-dimensional spectral analysis of cortical receptive field profiles. Vis. Res. 1980, 20, 847–856.

13. Olshausen, B.A.; Field, D.J. Emergence of simple-cell receptive field properties by learning a sparse code for natural images. Nature 1996, 381, 607.

14. Riesenhuber, M.; Poggio, T. Neural mechanisms of object recognition. Curr. Opin. Neurobiol. 2002, 12, 162–168.

15. Wu, Y.N.; Si, Z.; Gong, H.; Zhu, S.C. Learning active basis model for object detection and recognition. Int. J. Comput. Vis. 2010, 90, 198–235.

16. Yousefi, B.; Loo, C.K. A dual fast and slow feature interaction in biologically inspired visual recognition of human action. Appl. Soft Comput. 2018, 62, 57–72.

17. Johansson, G. Visual motion perception. Sci. Am. 1975, 232, 76–89.

18. Kozlowski, L.T.; Cutting, J.E. Recognizing the sex of a walker from a dynamic point-light display. Percept. Psychophys. 1977, 21, 575–580.

19. Perrett, D.; Smith, P.; Mistlin, A.; Chitty, A.; Head, A.; Potter, D.; Broennimann, R.; Milner, A.; Jeeves, M. Visual analysis of body movements by neurones in the temporal cortex of the macaque monkey: A preliminary report. Behav. Brain Res. 1985, 16, 153–170.

20. Perrett, D.I.; Harries, M.H.; Bevan, R.; Thomas, S.; Benson, P.; Mistlin, A.J.; Chitty, A.J.; Hietanen, J.K.; Ortega, J. Frameworks of analysis for the neural representation of animate objects and actions. J. Exp. Biol. 1989, 146, 87–113.

21. Goddard, N.H. The interpretation of visual motion: Recognizing moving light displays. In Proceedings of the Workshop on Visual Motion, Irvine, CA, USA, 20–22 March 1989; pp. 212–220.

22. Jamshidnezhad, A.; Nordin, M.J. Bee royalty offspring algorithm for improvement of facial expressions classification model. Int. J. Bio-Inspired Comput. 2013, 5, 175–191.

23. Babaeian, A.; Babaee, M.; Bayestehtashk, A.; Bandarabadi, M. Nonlinear subspace clustering using curvature constrained distances. Pattern Recognit. Lett. 2015, 68, 118–125.

24. Casile, A.; Giese, M.A. Critical features for the recognition of biological motion. J. Vis. 2005, 5, 6.

25. Arbib, M.A. From monkey-like action recognition to human language: An evolutionary framework for neurolinguistics. Behav. Brain Sci. 2005, 28, 105–124.

26. Giese, M.A.; Poggio, T. Neural mechanisms for the recognition of biological movements. Nat. Rev. Neurosci. 2003, 4, 179–192.

27. Goddard, N.H. The Perception of Articulated Motion: Recognizing Moving Light Displays; Technical Report; DTIC: Fort Belvoir, VA, USA, 1992.

28. Giese, M.; Poggio, T. Synthesis and recognition of biological motion patterns based on linear superposition of prototypical motion sequences. In Proceedings of the Multi-View Modeling and Analysis of Visual Scenes, Fort Collins, CO, USA, 26 June 1999; pp. 73–80.

29. Goodale, M.A.; Milner, A.D. Separate visual pathways for perception and action. Trends Neurosci. 1992, 15, 20–25.

30. Cedras, C.; Shah, M. Motion-based recognition: A survey. Image Vis. Comput. 1995, 13, 129–155.

31. Perkins, D. Outsmarting IQ: The Emerging Science of Learnable Intelligence; Simon and Schuster: New York, NY, USA, 1995.

32. Tsai, P.S.; Shah, M.; Keiter, K.; Kasparis, T. Cyclic Motion Detection; Computer Science Technical Report; University of Central Florida: Orlando, FL, USA, 1993.

33. Riesenhuber, M.; Poggio, T. Hierarchical models of object recognition in cortex. Nat. Neurosci. 1999, 2, 1019–1025.

34. Hubel, D.H.; Wiesel, T.N. Receptive fields and functional architecture of monkey striate cortex. J. Physiol. 1968, 195, 215–243.

35. Gallese, V.; Fadiga, L.; Fogassi, L.; Rizzolatti, G. Action recognition in the premotor cortex. Brain 1996, 119, 593–609.

36. Tarr, M.J.; Bülthoff, H.H. Image-based object recognition in man, monkey and machine. Cognition 1998, 67, 1–20.

37. Billard, A.; Matarić, M.J. Learning human arm movements by imitation: Evaluation of a biologically inspired connectionist architecture. Robot. Auton. Syst. 2001, 37, 145–160.

38. Yousefi, B.; Loo, C.K.; Memariani, A. Biological inspired human action recognition. In Proceedings of the 2013 IEEE Workshop on Robotic Intelligence In Informationally Structured Space (RiiSS), Singapore, 16–19 April 2013; pp. 58–65.

39. Fielding, K.H.; Ruck, D.W. Recognition of moving light displays using hidden Markov models. Pattern Recognit. 1995, 28, 1415–1421.

40. Hill, H.; Pollick, F.E. Exaggerating temporal differences enhances recognition of individuals from point light displays. Psychol. Sci. 2000, 11, 223–228.

41. Weinland, D.; Ronfard, R.; Boyer, E. A survey of vision-based methods for action representation, segmentation and recognition. Comput. Vis. Image Underst. 2011, 115, 224–241.

42. Giese, M.; Lappe, M. Measurement of generalization fields for the recognition of biological motion. Vis. Res. 2002, 42, 1847–1858.

43. Ryoo, M.S.; Aggarwal, J.K. Spatio-temporal relationship match: Video structure comparison for recognition of complex human activities. In Proceedings of the 2009 IEEE 12th International Conference on Computer Vision, Kyoto, Japan, 29 September–2 October 2009; pp. 1593–1600.

44. Cai, B.; Xu, X.; Qing, C. Bio-inspired model with dual visual pathways for human action recognition. In Proceedings of the 2014 9th International Symposium on Communication Systems, Networks & Digital Signal Processing (CSNDSP), Manchester, UK, 23–25 July 2014; pp. 271–276.

45. Rangarajan, K.; Allen, W.; Shah, M. Recognition using motion and shape. In Proceedings of the 11th IAPR International Conference on Pattern Recognition, The Hague, The Netherlands, 30 August–3 September 1992; pp. 255–258.

46. Neri, P.; Morrone, M.C.; Burr, D.C. Seeing biological motion. Nature 1998, 395, 894–896.

47. Gavrila, D.M. The visual analysis of human movement: A survey. Comput. Vis. Image Underst. 1999, 73, 82–98.

48. Wachter, S.; Nagel, H.H. Tracking of persons in monocular image sequences. In Proceedings of the Nonrigid and Articulated Motion Workshop, San Juan, PR, USA, 16 June 1997; pp. 2–9.

49. Blais, C.; Arguin, M.; Marleau, I. Orientation invariance in visual shape perception. J. Vis. 2009, 9, 14.

50. Wang, Y.; Zhang, K. Decomposing the spatiotemporal signature in dynamic 3D object recognition. J. Vis. 2010, 10, 23.

51. Decety, J.; Grèzes, J. Neural mechanisms subserving the perception of human actions. Trends Cogn. Sci. 1999, 3, 172–178.

52. Hu, B.; Kane-Jackson, R.; Niebur, E. A proto-object based saliency model in three-dimensional space. Vis. Res. 2016, 119, 42–49.

53. Silaghi, M.C.; Plänkers, R.; Boulic, R.; Fua, P.; Thalmann, D. Local and global skeleton fitting techniques for optical motion capture. In Proceedings of the International Workshop on Capture Techniques for Virtual Environments, Geneva, Switzerland, 26–27 November 1998; pp. 26–40.

54. Kurihara, K.; Hoshino, S.; Yamane, K.; Nakamura, Y. Optical motion capture system with pan-tilt camera tracking and real time data processing. In Proceedings of the 2002 IEEE International Conference on Robotics and Automation, Washington, DC, USA, 11–15 May 2002; pp. 1241–1248.

55. Zordan, V.B.; Van Der Horst, N.C. Mapping optical motion capture data to skeletal motion using a physical model. In Proceedings of the 2003 ACM SIGGRAPH/Eurographics symposium on Computer animation, San Diego, CA, USA, 26–27 July 2003; pp. 245–250.

56. Kirk, A.G.; O’Brien, J.F.; Forsyth, D.A. Skeletal parameter estimation from optical motion capture data. In Proceedings of the 2005 IEEE Computer Society Conference on Computer Vision and Pattern Recognition, San Diego, CA, USA, 20–25 June 2005; pp. 782–788.

57. Ghorbani, S.; Etemad, A.; Troje, N.F. Auto-labelling of Markers in Optical Motion Capture by Permutation Learning. In Proceedings of the Computer Graphics International Conference, Calgary, AB, Canada, 17–20 June 2019; pp. 167–178.

58. Vlasic, D.; Adelsberger, R.; Vannucci, G.; Barnwell, J.; Gross, M.; Matusik, W.; Popović, J. Practical motion capture in everyday surroundings. ACM Trans. Graph. 2007, 26, 35.

59. Fernandez-Baena, A.; Susín Sánchez, A.; Lligadas, X. Biomechanical validation of upper-body and lower-body joint movements of Kinect motion capture data for rehabilitation treatments. In Proceedings of the 2012 Fourth International Conference on Intelligent Networking and Collaborative Systems, Bucharest, Romania, 19–21 September 2012; pp. 656–661.

60. Mahmood, N.; Ghorbani, N.; Troje, N.F.; Pons-Moll, G.; Black, M.J. AMASS: Archive of motion capture as surface shapes. arXiv 2019, arXiv:1904.03278.

61. Corazza, S.; Muendermann, L.; Chaudhari, A.; Demattio, T.; Cobelli, C.; Andriacchi, T.P. A markerless motion capture system to study musculoskeletal biomechanics: Visual hull and simulated annealing approach. Ann. Biomed. Eng. 2006, 34, 1019–1029.

62. Mündermann, L.; Corazza, S.; Andriacchi, T.P. The evolution of methods for the capture of human movement leading to markerless motion capture for biomechanical applications. J. Neuroeng. Rehabil. 2006, 3, 6.

63. De Aguiar, E.; Theobalt, C.; Stoll, C.; Seidel, H.P. Marker-less deformable mesh tracking for human shape and motion capture. In Proceedings of the 2007 IEEE Conference on Computer Vision and Pattern Recognition, Minneapolis, MN, USA, 17–22 June 2007; pp. 1–8.

64. Schmitz, A.; Ye, M.; Shapiro, R.; Yang, R.; Noehren, B. Accuracy and repeatability of joint angles measured using a single camera markerless motion capture system. J. Biomech. 2014, 47, 587–591.

65. Giese, M.A.; Poggio, T. Morphable models for the analysis and synthesis of complex motion patterns. Int. J. Comput. Vis. 2000, 38, 59–73.

66. Moeslund, T.B.; Granum, E. A survey of computer vision-based human motion capture. Comput. Vis. Image Underst. 2001, 81, 231–268.

67. Grezes, J.; Fonlupt, P.; Bertenthal, B.; Delon-Martin, C.; Segebarth, C.; Decety, J. Does perception of biological motion rely on specific brain regions? Neuroimage 2001, 13, 775–785.

68. Pollick, F.E.; Paterson, H.M.; Bruderlin, A.; Sanford, A.J. Perceiving affect from arm movement. Cognition 2001, 82, B51–B61.

69. Ballan, L.; Cortelazzo, G.M. Marker-less motion capture of skinned models in a four camera set-up using optical flow and silhouettes. In Proceedings of the 3DPVT, Atlanta, GA, USA, 18–20 June 2008.

70. Rodrigues, T.B.; Catháin, C.Ó.; Devine, D.; Moran, K.; O’Connor, N.E.; Murray, N. An evaluation of a 3D multimodal marker-less motion analysis system. In Proceedings of the 10th ACM Multimedia Systems Conference, Amherst, MA, USA, 18–21 June 2019; pp. 213–221.

71. Song, Y.; Goncalves, L.; Di Bernardo, E.; Perona, P. Monocular perception of biological motion in Johansson displays. Comput. Vis. Image Underst. 2001, 81, 303–327.

72. Wiley, D.J.; Hahn, J.K. Interpolation synthesis of articulated figure motion. IEEE Comput. Graph. Appl. 1997, 17, 39–45.

73. Grossman, E.D.; Blake, R. Brain areas active during visual perception of biological motion. Neuron 2002, 35, 1167–1175.

74. Giese, M.A.; Jastorff, J.; Kourtzi, Z. Learning of the discrimination of artificial complex biological motion. Perception 2012, 31, 133–138.

75. Yi, Y.; Zheng, Z.; Lin, M. Realistic action recognition with salient foreground trajectories. Expert Syst. Appl. 2017, 75, 44–55.

76. Blake, R.; Shiffrar, M. Perception of human motion. Annu. Rev. Psychol. 2007, 58, 47–73.

77. Beintema, J.; Lappe, M. Perception of biological motion without local image motion. Proc. Natl. Acad. Sci. USA 2002, 99, 5661–5663.

78. Kilner, J.M.; Paulignan, Y.; Blakemore, S.J. An interference effect of observed biological movement on action. Curr. Biol. 2003, 13, 522–525.

79. Cohen, E.H.; Singh, M. Perceived orientation of complex shape reflects graded part decomposition. J. Vis. 2006, 6, 4.

80. Lange, J.; Georg, K.; Lappe, M. Visual perception of biological motion by form: A template-matching analysis. J. Vis. 2006, 6, 6.

81. Gölcü, D.; Gilbert, C.D. Perceptual learning of object shape. J. Neurosci. 2009, 29, 13621–13629.

82. McLeod, P. Preserved and Impaired Detection of Structure from Motion by “Motion-blind” Patient. Vis. Cogn. 1996, 3, 363–392.

83. Daems, A.; Verfaillie, K. Viewpoint-dependent priming effects in the perception of human actions and body postures. Vis. Cogn. 1999, 6, 665–693.

84. Troje, N.F.; Westhoff, C. The inversion effect in biological motion perception: Evidence for a “life detector”? Curr. Biol. 2006, 16, 821–824.

85. Strasburger, H.; Rentschler, I.; Jüttner, M. Peripheral vision and pattern recognition: A review. J. Vis. 2011, 11, 13.

86. Servos, P.; Osu, R.; Santi, A.; Kawato, M. The neural substrates of biological motion perception: An fMRI study. Cereb. Cortex 2002, 12, 772–782.

87. Grossman, E. fMR-adaptation reveals invariant coding of biological motion on human STS. Front. Hum. Neurosci. 2010, 4, 15.

88. Puce, A.; Perrett, D. Electrophysiology and brain imaging of biological motion. Philos. Trans. R. Soc. Lond. B 2003, 358, 435–445.

89. Pyles, J.A.; Garcia, J.O.; Hoffman, D.D.; Grossman, E.D. Visual perception and neural correlates of novel ‘biological motion’. Vis. Res. 2007, 47, 2786–2797.

90. Giese, M.A. Biological and body motion perception. In Oxford Handbook of Perceptual Organization; Oxford University Press: Oxford, UK, 2014.

91. Tlapale, É.; Dosher, B.A.; Lu, Z.L. Construction and evaluation of an integrated dynamical model of visual motion perception. Neural Netw. 2015, 67, 110–120.

92. Jung, C.; Sun, T.; Gu, A. Content adaptive video denoising based on human visual perception. J. Vis. Commun. Image Represent. 2015, 31, 14–25.

93. Meso, A.I.; Masson, G.S. Dynamic resolution of ambiguity during tri-stable motion perception. Vis. Res. 2015, 107, 113–123.

94. Nigmatullina, Y.; Arshad, Q.; Wu, K.; Seemungal, B.; Bronstein, A.; Soto, D. How imagery changes self-motion perception. Neuroscience 2015, 291, 46–52.

95. Tadin, D. Suppressive mechanisms in visual motion processing: From perception to intelligence. Vis. Res. 2015, 115, 58–70.

96. Matsumoto, Y.; Takahashi, H.; Murai, T.; Takahashi, H. Visual processing and social cognition in schizophrenia: Relationships among eye movements, biological motion perception, and empathy. Neurosci. Res. 2015, 90, 95–100.

97. Ahveninen, J.; Huang, S.; Ahlfors, S.P.; Hämäläinen, M.; Rossi, S.; Sams, M.; Jääskeläinen, I.P. Interacting parallel pathways associate sounds with visual identity in auditory cortices. NeuroImage 2016, 124, 858–868.

98. Fu, Q.; Ma, S.; Liu, L.; Liu, J. Human Action Recognition Based on Sparse LSTM Auto-encoder and Improved 3D CNN. In Proceedings of the 2018 14th International Conference on Natural Computation, Fuzzy Systems and Knowledge Discovery (ICNC-FSKD), Huangshan, China, 28–30 July 2018; pp. 197–201.

99. Ji, S.; Xu, W.; Yang, M.; Yu, K. 3D convolutional neural networks for human action recognition. IEEE Trans. Pattern Anal. Mach. Intell. 2012, 35, 221–231.

100. Yousefi, B.; Loo, C.K. Development of biological movement recognition by interaction between active basis model and fuzzy optical flow division. Sci. World J. 2014, 2014, 238234.

101. Yousefi, B.; Loo, C.K. Comparative study on interaction of form and motion processing streams by applying two different classifiers in mechanism for recognition of biological movement. Sci. World J. 2014, 2014, 723213.

102. Yousefi, B.; Loo, C.K. Bio-Inspired Human Action Recognition using Hybrid Max-Product Neuro-Fuzzy Classifier and Quantum-Behaved PSO. arXiv 2015, arXiv:1509.03789.

103. Yousefi, B.; Loo, C.K. Slow feature action prototypes effect assessment in mechanism for recognition of biological movement ventral stream. Int. J. Bio-Inspired Comput. 2016, 8, 410–424.

104. He, D.; Zhou, Z.; Gan, C.; Li, F.; Liu, X.; Li, Y.; Wang, L.; Wen, S. StNet: Local and global spatial-temporal modeling for action recognition. In Proceedings of the AAAI Conference on Artificial Intelligence, Honolulu, HI, USA, 27 January–1 February 2019; pp. 8401–8408.

105. Imtiaz, H.; Mahbub, U.; Schaefer, G.; Zhu, S.Y.; Ahad, M.A.R. Human Action Recognition based on Spectral Domain Features. Procedia Comput. Sci. 2015, 60, 430–437.

106. Jhuang, H.; Serre, T.; Wolf, L.; Poggio, T. A biologically inspired system for action recognition. In Proceedings of the IEEE 11th International Conference on Computer Vision, Rio de Janeiro, Brazil, 14–21 October 2007; pp. 1–8.

107. Yamato, J.; Ohya, J.; Ishii, K. Recognizing human action in time-sequential images using hidden Markov model. In Proceedings of the 1992 IEEE Computer Society Conference on Computer Vision and Pattern Recognition, Champaign, IL, USA, 15–18 June 1992; pp. 379–385.

108. Li, Z.; Gavrilyuk, K.; Gavves, E.; Jain, M.; Snoek, C.G. VideoLSTM convolves, attends and flows for action recognition. Comput. Vis. Image Underst. 2018, 166, 41–50.

109. Wang, Y.; Mori, G. Human action recognition by semilatent topic models. IEEE Trans. Pattern Anal. Mach. Intell. 2009, 31, 1762–1774.

110. Alkurdi, L.; Busch, C.; Peer, A. Dynamic contextualization and comparison as the basis of biologically inspired action understanding. Paladyn J. Behav. Robot. 2018, 9, 19–59.

111. Guo, Y.; Li, Y.; Shao, Z. DSRF: A flexible trajectory descriptor for articulated human action recognition. Pattern Recognit. 2018, 76, 137–148.

112. Poppe, R. A survey on vision-based human action recognition. Image Vis. Comput. 2010, 28, 976–990.

113. Fernández-Caballero, A.; Castillo, J.C.; Rodríguez-Sánchez, J.M. Human activity monitoring by local and global finite state machines. Expert Syst. Appl. 2012, 39, 6982–6993.

114. Webb, B.S.; Roach, N.W.; Peirce, J.W. Masking exposes multiple global form mechanisms. J. Vis. 2008, 8, 16.

115. Shu, N.; Tang, Q.; Liu, H. A bio-inspired approach modeling spiking neural networks of visual cortex for human action recognition. In Proceedings of the 2014 International Joint Conference on Neural Networks (IJCNN), Beijing, China, 6–11 July 2014; pp. 3450–3457.

116. Nweke, H.F.; Teh, Y.W.; Al-garadi, M.A.; Alo, U.R. Deep learning algorithms for human activity recognition using mobile and wearable sensor networks: State of the art and research challenges. Expert Syst. Appl. 2018, 105, 233–261.

117. Oniga, S.; Suto, J. Activity recognition in adaptive assistive systems using artificial neural networks. Elektron. Elektrotechnika 2016, 22, 68–72.

118. Nguyen, T.V.; Mirza, B. Dual-layer kernel extreme learning machine for action recognition. Neurocomputing 2017, 260, 123–130.

119. Layher, G.; Brosch, T.; Neumann, H. Real-time biologically inspired action recognition from key poses using a neuromorphic architecture. Front. Neurorobotics 2017, 11, 13.

120. Wang, L.; Ge, L.; Li, R.; Fang, Y. Three-stream CNNs for action recognition. Pattern Recognit. Lett. 2017, 92, 33–40.

121. Tu, Z.; Xie, W.; Qin, Q.; Poppe, R.; Veltkamp, R.C.; Li, B.; Yuan, J. Multi-stream CNN: Learning representations based on human-related regions for action recognition. Pattern Recognit. 2018, 79, 32–43.

122. Ma, M.; Marturi, N.; Li, Y.; Leonardis, A.; Stolkin, R. Region-sequence based six-stream CNN features for general and fine-grained human action recognition in videos. Pattern Recognit. 2018, 76, 506–521.

123. Lu, X.; Yao, H.; Zhao, S.; Sun, X.; Zhang, S. Action recognition with multi-scale trajectory-pooled 3D convolutional descriptors. Multimed. Tools Appl. 2019, 78, 507–523.

124. Kleinlein, R.; García-Faura, Á.; Luna Jiménez, C.; Montero, J.M.; Díaz-de María, F.; Fernández-Martínez, F. Predicting Image Aesthetics for Intelligent Tourism Information Systems. Electronics 2019, 8, 671.

125. Wu, J.; Li, Z.; Qu, W.; Zhou, Y. One Shot Crowd Counting with Deep Scale Adaptive Neural Network. Electronics 2019, 8, 701.

126. Shi, Y.; Tian, Y.; Wang, Y.; Zeng, W.; Huang, T. Learning long-term dependencies for action recognition with a biologically-inspired deep network. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 716–725.

127. Liu, C.; Freeman, W.T.; Adelson, E.H.; Weiss, Y. Human-assisted motion annotation. In Proceedings of the 2008 IEEE Conference on Computer Vision and Pattern Recognition, Anchorage, AK, USA, 23–28 June 2008; pp. 1–8.

128. Lehky, S.R.; Kiani, R.; Esteky, H.; Tanaka, K. Statistics of visual responses in primate inferotemporal cortex to object stimuli. J. Neurophysiol. 2011, 106, 1097–1117.

129. Yue, S.; Rind, F.C. Redundant neural vision systems—Competing for collision recognition roles. IEEE Trans. Auton. Ment. Dev. 2013, 5, 173–186.

130. Mathe, S.; Sminchisescu, C. Actions in the eye: Dynamic gaze datasets and learnt saliency models for visual recognition. IEEE Trans. Pattern Anal. Mach. Intell. 2015, 37, 1408–1424.

131. Moayedi, F.; Azimifar, Z.; Boostani, R. Structured sparse representation for human action recognition. Neurocomputing 2015, 161, 38–46.

132. Guha, T.; Ward, R.K. Learning sparse representations for human action recognition. IEEE Trans. Pattern Anal. Mach. Intell. 2012, 34, 1576–1588.

133. Guthier, T.; Willert, V.; Schnall, A.; Kreuter, K.; Eggert, J. Non-negative sparse coding for motion extraction. In Proceedings of the 2013 International Joint Conference on Neural Networks (IJCNN), Dallas, TX, USA, 4–9 August 2013; pp. 1–8.

134. Nayak, N.M.; Roy-Chowdhury, A.K. Learning a sparse dictionary of video structure for activity modeling. In Proceedings of the 2014 IEEE International Conference on Image Processing (ICIP), Paris, France, 27–30 October 2014; pp. 4892–4896.

135. Dean, T.; Washington, R.; Corrado, G. Recursive sparse, spatiotemporal coding. In Proceedings of the 2009 11th IEEE International Symposium on Multimedia, San Diego, CA, USA, 14–16 December 2009; pp. 645–650.

136. Ikizler, N.; Duygulu, P. Histogram of oriented rectangles: A new pose descriptor for human action recognition. Image Vis. Comput. 2009, 27, 1515–1526.

137. Guo, W.; Chen, G. Human action recognition via multi-task learning base on spatial-temporal feature. Inf. Sci. 2015, 320, 418–428.

138. Shabani, A.H.; Zelek, J.S.; Clausi, D.A. Human action recognition using salient opponent-based motion features. In Proceedings of the 2010 Canadian Conference Computer and Robot Vision, Ottawa, ON, Canada, 31 May–2 June 2010; pp. 362–369.

139. Cadieu, C.F.; Olshausen, B.A. Learning intermediate-level representations of form and motion from natural movies. Neural Comput. 2012, 24, 827–866.

140. Tian, Y.; Ruan, Q.; An, G.; Xu, W. Context and locality constrained linear coding for human action recognition. Neurocomputing 2015, 167, 359–370.

141. Pitzalis, S.; Sdoia, S.; Bultrini, A.; Committeri, G.; Di Russo, F.; Fattori, P.; Galletti, C.; Galati, G. Selectivity to translational egomotion in human brain motion areas. PLoS ONE 2013, 8, e60241.

142. Willert, V.; Toussaint, M.; Eggert, J.; Körner, E. Uncertainty optimization for robust dynamic optical flow estimation. In Proceedings of the Sixth International Conference on Machine Learning and Applications (ICMLA 2007), Cincinnati, OH, USA, 13–15 December 2007; pp. 450–457.

143. Prinz, W. Action representation: Crosstalk between semantics and pragmatics. Neuropsychologia 2014, 55, 51–56.

144. Schüldt, C.; Laptev, I.; Caputo, B. Recognizing human actions: A local SVM approach. In Proceedings of the 17th International Conference on Pattern Recognition, Cambridge, UK, 26 August 2004; pp. 32–36.

145. Lange, J.; Lappe, M. A model of biological motion perception from configural form cues. J. Neurosci. 2006, 26, 2894–2906.

146. Willert, V.; Eggert, J. A stochastic dynamical system for optical flow estimation. In Proceedings of the 2009 IEEE 12th International Conference on Computer Vision Workshops, Kyoto, Japan, 27 September–4 October 2009; pp. 711–718.

147. Yau, J.M.; Pasupathy, A.; Fitzgerald, P.J.; Hsiao, S.S.; Connor, C.E. Analogous intermediate shape coding in vision and touch. Proc. Natl. Acad. Sci. USA 2009, 106, 16457–16462.

148. Escobar, M.J.; Masson, G.S.; Vieville, T.; Kornprobst, P. Action recognition using a bio-inspired feedforward spiking network. Int. J. Comput. Vis. 2009, 82, 284–301.

149. Guthier, T.; Willert, V.; Eggert, J. Topological sparse learning of dynamic form patterns. Neural Comput. 2014, 1, 42–73.

150. Baumann, F.; Ehlers, A.; Rosenhahn, B.; Liao, J. Recognizing human actions using novel space-time volume binary patterns. Neurocomputing 2016, 173, 54–63.

151. Haghighi, H.; Abdollahi, F.; Gharibzadeh, S. Brain-inspired self-organizing modular structure to control human-like movements based on primitive motion identification. Neurocomputing 2016, 173, 1436–1442.

- Esser, S.; Merolla, P.; Arthur, J.; Cassidy, A.; Appuswamy, R.; Andreopoulos, A.; Berg, D.; McKinstry, J.; Melano, T.; Barch, D.; et al. Convolutional networks for fast, energy-efficient neuromorphic computing. arXiv 2016, arXiv:1603.08270. [Google Scholar] [CrossRef] [PubMed]

- Ward, E.J.; MacEvoy, S.P.; Epstein, R.A. Eye-centered encoding of visual space in scene-selective regions. J. Vis. 2010, 10, 6. [Google Scholar] [CrossRef] [PubMed]

- Escobar, M.J.; Kornprobst, P. Action recognition via bio-inspired features: The richness of center–surround interaction. Comput. Vis. Image Underst. 2012, 116, 593–605. [Google Scholar] [CrossRef]

- Goodale, M.A.; Westwood, D.A. An evolving view of duplex vision: Separate but interacting cortical pathways for perception and action. Curr. Opin. Neurobiol. 2004, 14, 203–211. [Google Scholar] [CrossRef]

- Yousefi, B.; Yousefi, P. ABM and CNN application in ventral stream of visual system. In Proceedings of the 2015 IEEE Student Symposium in Biomedical Engineering & Sciences (ISSBES), Shah Alam, Malaysia, 4 November 2015; pp. 87–92. [Google Scholar]

- Jellema, T.; Baker, C.; Wicker, B.; Perrett, D. Neural representation for the perception of the intentionality of actions. Brain Cogn. 2000, 44, 280–302. [Google Scholar] [CrossRef]

- Billard, A.; Matarić, M.J. A biologically inspired robotic model for learning by imitation. In Proceedings of the fourth international conference on Autonomous agents, Barcelona, Spain, 3–7 June 2000; pp. 373–380. [Google Scholar]

- Vaina, L.M.; Solomon, J.; Chowdhury, S.; Sinha, P.; Belliveau, J.W. Functional neuroanatomy of biological motion perception in humans. Proc. Natl. Acad. Sci. USA 2001, 98, 11656–11661. [Google Scholar] [CrossRef]

- Fleischer, F.; Caggiano, V.; Thier, P.; Giese, M.A. Physiologically inspired model for the visual recognition of transitive hand actions. J. Neurosci. 2013, 33, 6563–6580. [Google Scholar] [CrossRef]

- Syrris, V.; Petridis, V. A lattice-based neuro-computing methodology for real-time human action recognition. Inf. Sci. 2011, 181, 1874–1887. [Google Scholar] [CrossRef]

- Vander Wyk, B.C.; Voos, A.; Pelphrey, K.A. Action representation in the superior temporal sulcus in children and adults: An fMRI study. Dev. Cogn. Neurosci. 2012, 2, 409–416. [Google Scholar] [CrossRef][Green Version]

- Troje, N.F. Decomposing biological motion: A framework for analysis and synthesis of human gait patterns. J. Vis. 2002, 2, 371–387. [Google Scholar] [CrossRef] [PubMed]

- Banquet, J.P.; Gaussier, P.; Quoy, M.; Revel, A.; Burnod, Y. A hierarchy of associations in hippocampo-cortical systems: Cognitive maps and navigation strategies. Neural Comput. 2005, 17, 1339–1384. [Google Scholar] [CrossRef] [PubMed]

- Yamamoto, K.; Miura, K. Effect of motion coherence on time perception relates to perceived speed. Vis. Res. 2015, 123, 56–62. [Google Scholar] [CrossRef] [PubMed]

- Schindler, A.; Bartels, A. Motion parallax links visual motion areas and scene regions. NeuroImage 2016, 125, 803–812. [Google Scholar] [CrossRef] [PubMed]

- Venezia, J.H.; Fillmore, P.; Matchin, W.; Isenberg, A.L.; Hickok, G.; Fridriksson, J. Perception drives production across sensory modalities: A network for sensorimotor integration of visual speech. NeuroImage 2016, 126, 196–207. [Google Scholar] [CrossRef] [PubMed]

- Harvey, B.M.; Dumoulin, S.O. Visual motion transforms visual space representations similarly throughout the human visual hierarchy. NeuroImage 2016, 127, 173–185. [Google Scholar] [CrossRef] [PubMed][Green Version]

- Rizzolatti, G.; Fogassi, L.; Gallese, V. Neurophysiological mechanisms underlying the understanding and imitation of action. Nat. Rev. Neurosci. 2001, 2, 661–670. [Google Scholar] [CrossRef]

- Breazeal, C.; Scassellati, B. Robots that imitate humans. Trends Cogn. Sci. 2002, 6, 481–487. [Google Scholar] [CrossRef]

- Schaal, S.; Ijspeert, A.; Billard, A. Computational approaches to motor learning by imitation. Philos. Trans. R. Soc. Lond. B 2003, 358, 537–547. [Google Scholar] [CrossRef]

- Demiris, Y.; Johnson, M. Distributed, predictive perception of actions: A biologically inspired robotics architecture for imitation and learning. Connect. Sci. 2003, 15, 231–243. [Google Scholar] [CrossRef]

- Johnson, M.; Demiris, Y. Hierarchies of coupled inverse and forward models for abstraction in robot action planning, recognition and imitation. In Proceedings of the AISB 2005 Symposium on Imitation in Animals and Artifacts, Hatfield, UK, 12–15 April 2005; pp. 69–76. [Google Scholar]

- Cook, J.; Saygin, A.P.; Swain, R.; Blakemore, S.J. Reduced sensitivity to minimum-jerk biological motion in autism spectrum conditions. Neuropsychologia 2009, 47, 3275–3278. [Google Scholar] [CrossRef]

- Milner, A.D.; Goodale, M.A. Two visual systems re-viewed. Neuropsychologia 2008, 46, 774–785. [Google Scholar] [CrossRef]

- Hesse, C.; Schenk, T. Delayed action does not always require the ventral stream: A study on a patient with visual form agnosia. Cortex 2014, 54, 77–91. [Google Scholar] [CrossRef][Green Version]

- Schenk, T. No dissociation between perception and action in patient DF when haptic feedback is withdrawn. J. Neurosci. 2012, 32, 2013–2017. [Google Scholar] [CrossRef]

- Schenk, T. Response to Milner et al.: Grasping uses vision and haptic feedback. Trends Cogn. Sci. 2012, 16, 258. [Google Scholar] [CrossRef]

- Whitwell, R.L.; Milner, A.D.; Cavina-Pratesi, C.; Byrne, C.M.; Goodale, M.A. DF’s visual brain in action: The role of tactile cues. Neuropsychologia 2014, 55, 41–50. [Google Scholar] [CrossRef]

- Whitwell, R.L.; Milner, A.D.; Cavina-Pratesi, C.; Barat, M.; Goodale, M.A. Patient DF’s visual brain in action: Visual feedforward control in visual form agnosia. Vis. Res. 2015, 110, 265–276. [Google Scholar] [CrossRef]

- Krigolson, O.E.; Cheng, D.; Binsted, G. The role of visual processing in motor learning and control: Insights from electroencephalography. Vis. Res. 2015, 110, 277–285. [Google Scholar] [CrossRef]

- Cavina-Pratesi, C.; Large, M.E.; Milner, A.D. Reprint of: Visual processing of words in a patient with visual form agnosia: A behavioural and fMRI study. Cortex 2015, 72, 97–114. [Google Scholar] [CrossRef]

- Libet, B.; Wright, E.W.; Gleason, C.A. Preparation-or intention-to-act, in relation to pre-event potentials recorded at the vertex. Electroencephalogr. Clin. Neurophysiol. 1983, 56, 367–372. [Google Scholar] [CrossRef]

- Chao, Y.W. Visual Recognition and Synthesis of Human-Object Interactions. Ph.D. Thesis, University of Michigan, Ann Arbor, MI, USA, 2019. [Google Scholar]

- Hoshide, R.; Jandial, R. Plasticity in Motion. Neurosurgery 2019, 84, 19–20. [Google Scholar] [CrossRef]

- Bicanski, A.; Burgess, N. A computational model of visual recognition memory via grid cells. Curr. Biol. 2019, 29, 979–990. [Google Scholar] [CrossRef]

- Calabro, F.J.; Beardsley, S.A.; Vaina, L.M. Differential cortical activation during the perception of moving objects along different trajectories. Exp. Brain Res. 2019, 2019, 1–9. [Google Scholar] [CrossRef]

- Grossberg, S. The resonant brain: How attentive conscious seeing regulates action sequences that interact with attentive cognitive learning, recognition, and prediction. Atten. Percept. Psychophys. 2019, 2019, 1–28. [Google Scholar] [CrossRef]

- Wagner, D.D.; Chavez, R.S.; Broom, T.W. Decoding the neural representation of self and person knowledge with multivariate pattern analysis and data-driven approaches. Wiley Interdiscip. Rev. Cogn. Sci. 2019, 10, e1482. [Google Scholar] [CrossRef]

- Isik, L.; Tacchetti, A.; Poggio, T. A fast, invariant representation for human action in the visual system. J. Neurophysiol. 2017, 119, 631–640. [Google Scholar] [CrossRef]

| Approaches in Perception | Topic of Each Approach | Connection to Other Research |
|---|---|---|
| E. J. Marey and E. Muybridge (1850s) | moving photographs presenting locomotion | |
| Rubin (1927) | visual perception of real movement | |
| Duncker (1929) | visual perception of real movement | |
| Johansson et al. (1973) | motion patterns for humans & animals as biological motion (MLD) | |
| Turaga et al. (2008) | locomotion analysis | |
| Johansson (1975) | perception of human motion in neuroscience analysis | |
| Kozlowski & Cutting (1977) | with females and upper body MLD, gender recognition | |
| Marr et al. (1978) | computational process in the human visual system, 3D shapes | perception |
| Perrett et al. (1985) | analysis of the macaque monkey temporal cortex; found two cells in the brain sensitive to rotation and view of body movements | |
| Perrett et al. (1989) | view-centered, view-independent responses among the brain cells | |
| Goddard (1989) | spatial and temporal feature incorporation through diffuses MLD data | perception-computer |
| Goddard (1992) | synergistic manner of the process of “what” and “where” in visual system | neuroscience |
| Goodale & Milner (1992) | projection of perceptual information from striate and inferotemporal cortex | neuroscience-object identification |
| Cédras and Shah (1995) | motion-based recognition into motion models | modelling |
| Perkins (1995) | animated real-time, texture of motion, avoiding computational | modelling |
| Tsai et al. (1993) | detection of cyclic motion, applying Fourier transform | highly related to computational modelling |
| Fielding & Ruck (1995) | Hidden Markov Model (HMM) technique for classification | highly related to computational modelling |
| Gallese et al. (1996) | analysis of the electrical activity in the macaque monkey’s brain | neuroscience |
| Aggarwal et al. (1999) | human motion analysis review and computer vision approaches | computer vision |
| Aggarwal & Cai (1997) | interpreting human motion, tracking, recognizing human activities per frame | perception |
| Decety & Grèzes (1999) | process of action and its perception, functional segregation MLD | |
| Rangarajan et al. (1992) | matching the biological motion trajectories (object recognition) | computer vision |
| McLeod (1996) | motion blind patient, the homologue of V5/MT concerning the moving stimuli | |
| Wiley & Hahn (1997) | virtual reality approach regarding the computer-generated characters | |
| Neri et al. (1998) | visual system ability to integrate the motion information of walkers | |
| Hill & Pollick (2000) | temporal differences in MLD, recognition of the exaggerated motions | |
| Giese & Poggio (2000) | linear combination of prototypical views, 3D stationary object recognition | computer vision |
| Moeslund & Granum (2001) | a comprehensive survey on the motion capture | computer vision |
| Grèzes et al. (2001) | neural network specifications and its verifications through fMRI | computer vision-neuroscience |
| Pollick et al. (2001) | visual perception effects used point-light display (MLD) | computer vision |
| Song et al. (2001) | computer-human interface using joint probability density function (PDF) | computer vision |
| Servos et al. (2002) | relationship between biological motion and control unpredicted stimuli | computer |
| Grossman & Blake (2002) | neural mechanisms, anatomical, and functionality into two pathways | neuroscience |
| Jastorff et al. (2002) | investigation of the recognition process in the neural mechanism | neuroscience |
| Giese & Lappe (2002) | spatio-temporal generalization of the biological movement perception | computer vision |
| Beintema & Lappe (2002) | analysis of the perception of form pattern of human action by MLD | computer vision |
| Puce & Perrett (2003) | analysis of single cells, neuroimaging data and records of field potential | |
| Kilner et al. (2003) | analysis of the action in motor programs | neuroscience |
| Cohen & Singh (2006) | perceiving the complex shape orientation and local geometric attributes | neuroscience |
| Lange et al. (2006) | moving human figure using MLD | neuroscience |
| Troje & Westhoff (2006) | data retrieval of direction from scrambled MLD in humans and animals | |
| Blake & Shiffrar (2007) | review in perception | |
| Pyles et al. (2007) | comparative research on human MLD | |
| Gölcü & Gilbert (2009) | perception of object recognition analyzing the human features | neuroscience |
| Daems & Verfaillie (2010) | analysis of body postures in different viewpoints and human identification | |
| Grossman (2010) | analyzing STSp region and its functionality underlying the BOLD response | modelling |
| Strasburger et al. (2011) | peripheral vision and pattern recognition for theory of form perception | neuroscience |
| Giese (2014) | complex pattern recognition mechanism and motion perception | modelling |
| Tlapale (2015) | integrated dynamic motion model (IDM) to handle diverse moving stimuli | |
| Jung & Gu (2015) | perception and modeling in the visual motion | modelling |
| Meso & Masson (2015) | characterized the patterns and perception duration | |
| Nigmatullina (2015) | link between the imagery and perception | |
| Tadin (2015) | spatially suppress the surrounding by perception information | |
| Matsumoto et al. (2015) | analyzed the biological motion perception in schizophrenia patients | |
| Ahveninen et al. (2016) | combination of spatial and non-spatial information in auditory cortex (AC) | neuroscience |

| Psychological and Neuroscience Approaches | Topic of the Approach | Connection to Other Research |
|---|---|---|
| Jellema et al. (2000) | analysis of the cellular population located in the temporal lobe of the macaque monkey | Psychology |
| Billard et al. (2000, June) | action imitation considered the actions high-level abstractions | |
| Vaina et al. (2001) | investigation regarding the neural network, fMRI in MLD | |
| Goodale & Westwood (2004) | evaluating the labour division at visual pathways | |
| Banquet et al. (2005) | associative learning for object location level, in CA3-CA1 region | |
| Milner & Goodale (2006) | involvement of dorsal stream in movement to target following ventral stream | |
| Cook et al. (2009) | ASCs for comparing detection of non-biological and biological motion | |
| Vander Wyk et al. (2012) | action representation at STS | Psychology |
| Schenk (2012a) | analysis of patient DF's ability to grasp the object | |
| Schenk (2012b) | functionality of patient DF using fMRI | |
| Fleischer et al. (2013) | visual recognition from motion | |
| Hesse & Schenk (2014) | visuomotor performance of patient D.F. tested on a letter-posting task | |
| Theusner et al. (2014) | motion energy based on the luminance of objects motion detectors | |
| Whitwell et al. (2014) | test by different width of the objects and DF, distinguish the shape perceptually | perception |
| Whitwell et al. (2015) | grip scaling ability may rely on online visual or haptic feedback (for patient DF) | |
| Krigolson et al. (2015) | review of the behavior using EEG | Psychology |
| Cavina-Pratesi et al. (2015) | brain circulation using fMRI regarding the word recognition ability | |
| Ganos et al. (2015) | voluntary movement considering GTS area | |
| Yamamoto & Miura (2016) | effect of visual object motion on time perception | |
| Schindler & Bartels (2016) | analysis of a 3-dimensional visual cue involving motion parallax | |
| Venezia et al. (2016) | the sensorimotor integration of visual speech through the perception | perception |
| Harvey & Dumoulin (2016) | visual motion effects on neural receptive field and fMRI response | |

| Knowledge Based Modeling Approaches | Topic of the Approach | Connection to Other Research |
|---|---|---|
| Yamato (1992, June) | HMM and feature based bottom up approaches in time sequence images | modelling |
| Giese & Poggio (1999) | linear combination of motion sequence prototypical views, 3D object recognition | |
| Gavrila (1999) | survey article in visual analysis regarding the human movement | |
| Wachter & Nagel (1997, June) | the quantitative description of the geometry of the human object | |
| Giese & Poggio (2003) | dual processing pathways in the visual system | |
| Schüldt et al. (2004) | adaptive local space-time features | Computer vision |
| Casile & Giese (2005) | multilevel generalization using simple mid-level optic flow features | perception |
| Arbib (2005) | analysis of neural grounding and its functionality for language skills | perception, neuroscience |
| Valstar & Pantic (2006) | comparison of logical and biological inspired methods for facial expression | computer vision |
| Demiris & Khadhouri (2006) | computational architecture and HAMMER for motor control systems | perception |
| Lange & Lappe (2006) | neural plausibility assumptions for interaction of the form and motion signals | |
| Willert et al. (2007) | estimating the motion using optical flow by dynamic Bayesian network | |
| Jhuang et al. (2007) | hierarchical feed forward architecture on the object recognition | |
| Milner & Goodale (2007) | analysis of two cortical systems regarding the vision in action | perception |
| Fathi & Mori (2008) | mid-level learning for the motion features | modelling |
| Schindler & Gool (2008, June) | recognition of simple actions instantaneously by short sequences (snippets) of 1–10 frames | computer vision |
| Schindler & Gool (2008) | recognition of form (local shape) and motion (local flow) features | computer vision |
| Webb et al. (2008) | intermediate levels of visual processing, detection of circular and radial form | |
| Willert & Eggert (2009) | estimation of motion to analyze the small number of temporal consecutive frames | |
| Yau et al. (2009) | interaction of vision and touch, PCA for patterns shape features identification | |
| Escobar et al. (2009) | bio-inspired feed-forward of spiking network model | neuroscience |
| Ikizler & Duygulu (2009) | analyzing the dynamic representation of action recognition using HOR | |
| Wang & Mori (2009) | visual features as visual word and semi-latent topic models | modelling |
| Ryoo & Aggarwal (2009) | spatiotemporal relation for recognition of human activity | |
| Dean et al. (2009, December) | learning sparse spatiotemporal codes from the basis vectors | |
| Shabani et al. (2010) | multiscale salient features from motion energy | modelling |
| Poppe (2010) | visual based human action recognition | computer vision |
| Sun et al. (2010) | median filtering of the intermediate flow fields | |
| Ward et al. (2010) | reference frames applied for visual information using fMRI | |
| Weinland et al. (2011) | review paper for human action/activity recognition | |
| Lehky et al. (2011) | characteristic of selection of sparseness in anterior inferotemporal cortex | neuroscience |
| Willert & Eggert (2011) | representation of visual motion processing | |
| Mathe & Sminchisescu (2012) | BOW in maxima of sparse interest operators | |
| Escobar & Kornprobst (2012) | analysis of motion in the models of cortical areas V1 and MT | neuroscience-perception |
| Cadieu & Olshausen (2012) | intermediate-level visual representation | |
| Guha & Ward (2012) | human action in the sparse representation in overcomplete basis (dictionary) set | |
| Guthier et al. (2012) | non-negative sparse coding on biological motion | |
| Yousefi et al. (2013) | introducing Active Basis Model for ventral stream | Computer vision |
| Ji et al. (2013) | fully automatic system for human action recognition by CNN | modelling |
| Pitzalis et al. (2013) | motion analysis approach considers the movements in all directions | perception |
| Yue & Rind (2013) | detection of collisions, analysis of two types of neurons: LGMD and DSNs | neuroscience |
| Cai et al. (2014) | spatiotemporal feature in the bio-inspired model, BIM-STIP | |
| Guthier et al. (2014) | survey, modelling using nonnegative sparse coding, VNMF | |
| Shu et al. (2014) | bio-inspired modeling human action recognition, spiking neural network | |
| Nayak & Roy-Chowdhury (2014) | spatiotemporal features, unsupervised way into a dictionary learning | |
| Yousefi & Loo (2014) | fuzzy optical flow division in dorsal stream | Computer vision |
| Esmaili et al. (2014) | robust recognition of face using C2 features in HMAX | |
| Yousefi & Loo (2014) | interaction between dorsal and ventral streams | Computer vision |
| Prinz (2014) | analysis of action semantics and pragmatics | perception |
| Yousefi & Loo (2015) | slowness principle in modeling | Computer vision |
| Moayedi (2015) | basic shape extraction of action group sparse coding employed BOW | |
| Yousefi & Loo (2015) | Hybrid Max-Product Neuro-Fuzzy Classifier and Quantum-Behaved PSO in the model | Computer vision |
| Tian (2015) | BOW method, VQ, CLLC, GSRC in the human action recognition | |
| Yousefi & Loo (2015) | slowness prototypes in the ventral stream | Computer vision |
| Hu et al. (2016) | proto-object based on the saliency map | computer vision |
| Haghighi et al. (2016) | human-like movements | |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Yousefi, B.; Loo, C.K. Biologically-Inspired Computational Neural Mechanism for Human Action/activity Recognition: A Review. Electronics 2019, 8, 1169. https://doi.org/10.3390/electronics8101169
