Implementation and Assessment of an Intelligent Motor Tele-Rehabilitation Platform

Abstract

1. Introduction

2. Related Work

3. Functionalities and Architecture of the Platform

3.1. Core

3.2. Real Time Evaluation

3.2.1. Functionalities

3.2.2. Implementation

4. Movement Assessment: DTW Approach

4.1. Dynamic Time Warping

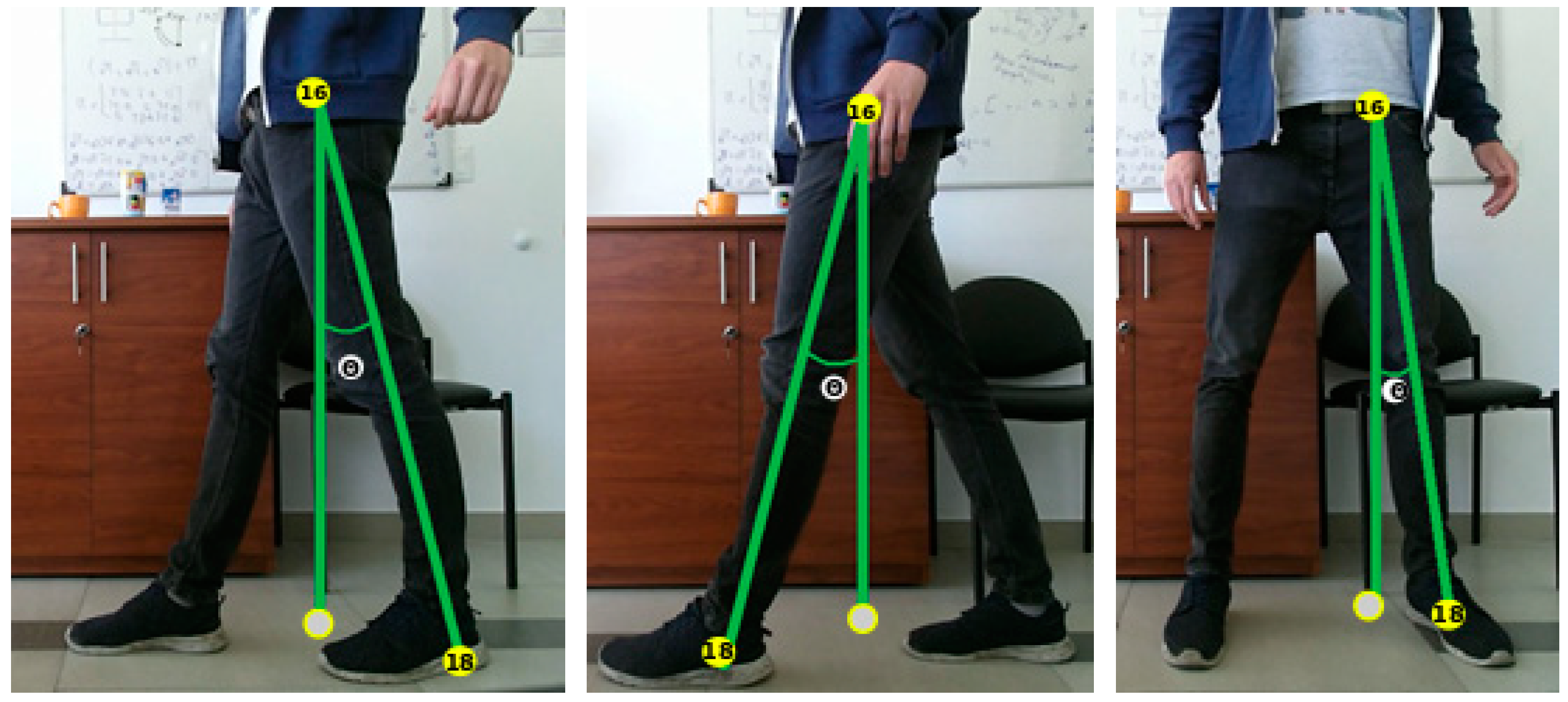
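Dynamic time warping compares two time series that may differ in speed by finding the cheapest monotonic alignment between them. The sketch below is a minimal, generic version of the technique. It is not the platform's implementation. The absolute-difference local cost and the example joint-angle sequences are assumptions chosen for illustration.

```python
import math
from typing import Sequence


def dtw_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Classic O(len(a) * len(b)) dynamic time warping distance.

    Local cost: |a[i] - b[j]| (an assumption; other costs are possible).
    Recursion:  D[i][j] = cost + min(D[i-1][j], D[i][j-1], D[i-1][j-1]).
    """
    n, m = len(a), len(b)
    # One extra row and column of +inf so the recursion needs no boundary cases.
    D = [[math.inf] * (m + 1) for _ in range(n + 1)]
    D[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = abs(a[i - 1] - b[j - 1])
            D[i][j] = cost + min(
                D[i - 1][j],      # advance in a only: b[j-1] is matched again
                D[i][j - 1],      # advance in b only: a[i-1] is matched again
                D[i - 1][j - 1],  # diagonal step: one-to-one match
            )
    return D[n][m]


if __name__ == "__main__":
    # Hypothetical joint-angle trajectories in degrees.
    reference = [0, 10, 30, 60, 30, 10, 0]
    slower = [0, 0, 10, 30, 60, 60, 30, 10, 0]  # same shape, performed more slowly
    smaller = [0, 10, 25, 45, 25, 10, 0]        # reduced range of motion
    print(dtw_distance(reference, slower))   # 0.0: timing differences are absorbed
    print(dtw_distance(reference, smaller))  # > 0: amplitude differences are not
```

Because the warping path can repeat samples, a slower but otherwise identical execution costs nothing, while a reduced amplitude still increases the distance.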

4.2. Trigonometric Parametrization

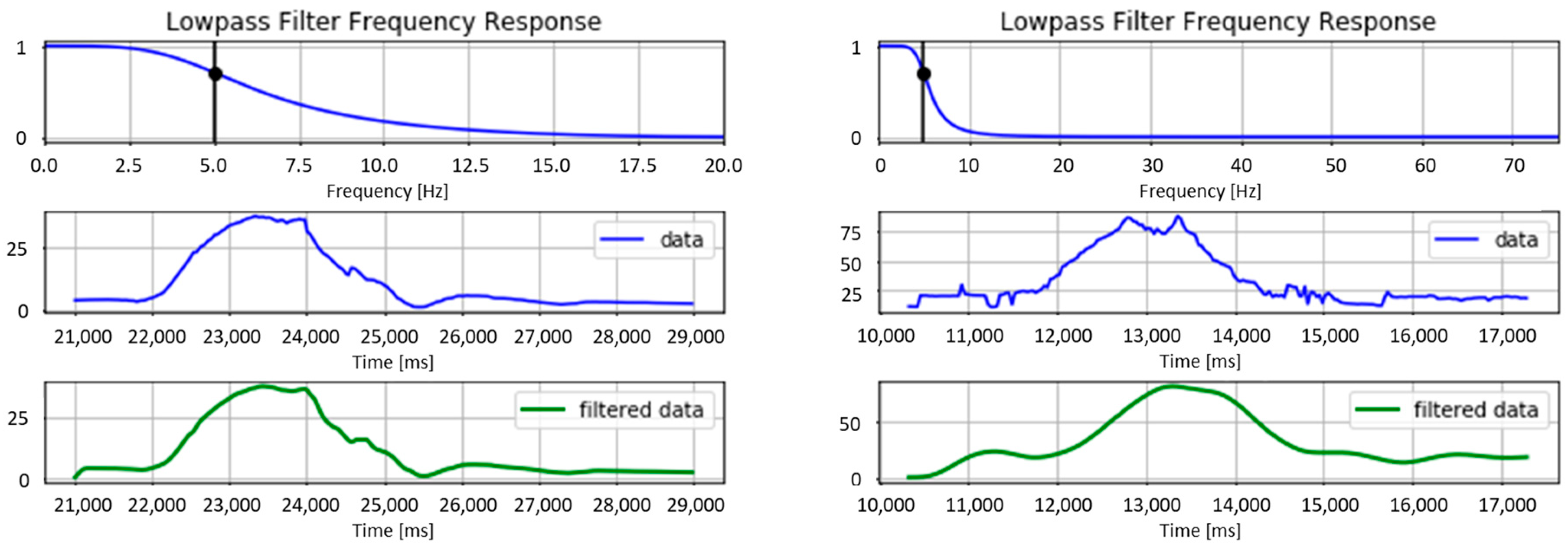
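One common way to parametrize a movement trigonometrically is to reduce skeleton joint positions to joint angles. The sketch below assumes that each angle is taken at a joint between two body segments and is computed from three 3-D positions through the arccosine of the normalized dot product. The choice of joints (hip, knee and ankle) and the coordinates are hypothetical. A plane-specific angle, such as a frontal or sagittal plane rotation, would first require projecting the segment vectors onto that plane.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def joint_angle(proximal: Vec3, joint: Vec3, distal: Vec3) -> float:
    """Angle (degrees) at `joint` between the segments joint->proximal and joint->distal.

    Uses cos(theta) = (u . v) / (|u| |v|), with u and v the two segment vectors.
    """
    u = [p - j for p, j in zip(proximal, joint)]
    v = [d - j for d, j in zip(distal, joint)]
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(x * x for x in v))
    if norm == 0.0:
        raise ValueError("two of the joint positions coincide")
    # Clamp so floating-point rounding cannot push the value outside acos's domain.
    cos_theta = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_theta))


if __name__ == "__main__":
    # Hypothetical hip, knee and ankle positions (metres, camera coordinates).
    hip, knee = (0.0, 0.9, 2.0), (0.0, 0.5, 2.0)
    print(joint_angle(hip, knee, (0.0, 0.1, 2.0)))  # straight leg, close to 180
    print(joint_angle(hip, knee, (0.0, 0.5, 1.6)))  # knee bent at 90 degrees
```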

4.3. Filtering and Implementation

- filter order = 2; sample rate (Hz) = 40; cutoff frequency (Hz) = 5

- filter order = 4; sample rate (Hz) = 150; cutoff frequency (Hz) = 5
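The two parameter sets above specify a filter order, a sampling rate and a cutoff frequency, but not the filter family. The sketch below assumes a Butterworth low-pass filter applied forward and backward (zero phase) with SciPy's `scipy.signal.butter` and `scipy.signal.filtfilt`. The synthetic test signal (a 0.5 Hz movement plus 15 Hz jitter) is purely illustrative.

```python
import numpy as np
from scipy.signal import butter, filtfilt


def lowpass(signal: np.ndarray, order: int, fs: float, cutoff: float) -> np.ndarray:
    """Zero-phase Butterworth low-pass filter (the Butterworth family is an assumption)."""
    # Passing fs lets the cutoff be given in Hz rather than as a fraction of Nyquist.
    b, a = butter(order, cutoff, btype="low", fs=fs)
    # filtfilt filters forward then backward, so the output has no phase lag.
    return filtfilt(b, a, signal)


# The two parameter sets listed above.
PARAMETER_SETS = [
    {"order": 2, "fs": 40.0, "cutoff": 5.0},
    {"order": 4, "fs": 150.0, "cutoff": 5.0},
]

if __name__ == "__main__":
    for p in PARAMETER_SETS:
        t = np.arange(0.0, 4.0, 1.0 / p["fs"])
        movement = np.sin(2 * np.pi * 0.5 * t)       # slow 0.5 Hz component
        jitter = 0.3 * np.sin(2 * np.pi * 15.0 * t)  # synthetic 15 Hz tracking noise
        smoothed = lowpass(movement + jitter, p["order"], p["fs"], p["cutoff"])
        rms = float(np.sqrt(np.mean((smoothed - movement) ** 2)))
        print(p, "residual RMS:", round(rms, 4))
```

Because `filtfilt` runs the filter twice, the attenuation at any frequency is squared and the effective order is doubled. If the original pipeline applied each filter in a single causal pass, `scipy.signal.lfilter` would be the closer match.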

4.4. Validation

4.4.1. Experimental Protocol

4.4.2. Results

5. Movement Assessment: HMM Approach

5.1. Hidden Markov Model
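A hidden Markov model scores a gesture by the probability that the model generated the observed sequence. As a minimal sketch, assuming discrete observation symbols (for instance, quantized joint angles) and hand-specified model parameters, the scaled forward algorithm below returns log P(observations | model). The parameter names, the discrete-emission assumption and the two-state example model are illustrative and are not taken from the platform.

```python
import math
from typing import List, Sequence


def forward_log_likelihood(
    obs: Sequence[int],
    start: List[float],
    trans: List[List[float]],
    emit: List[List[float]],
) -> float:
    """Return log P(obs | model) using the scaled forward algorithm.

    start[i]    = P(first state is i)
    trans[i][j] = P(next state is j | current state is i)
    emit[i][k]  = P(observing symbol k | state i)
    """
    n = len(start)
    log_lik = 0.0
    alpha: List[float] = []
    for t, symbol in enumerate(obs):
        if t == 0:
            # Initialisation: alpha_0(i) = start_i * emit_i(o_0)
            alpha = [start[i] * emit[i][symbol] for i in range(n)]
        else:
            # Induction: alpha_t(j) = sum_i alpha_{t-1}(i) * trans_ij * emit_j(o_t)
            alpha = [
                sum(alpha[i] * trans[i][j] for i in range(n)) * emit[j][symbol]
                for j in range(n)
            ]
        scale = sum(alpha)
        if scale == 0.0:
            return -math.inf  # the sequence is impossible under this model
        log_lik += math.log(scale)
        # Normalising at every step keeps alpha from underflowing on long sequences.
        alpha = [x / scale for x in alpha]
    return log_lik


if __name__ == "__main__":
    # Hypothetical two-state left-to-right model over symbols {0: "rest", 1: "raised"}.
    start = [1.0, 0.0]
    trans = [[0.7, 0.3], [0.0, 1.0]]
    emit = [[0.9, 0.1], [0.2, 0.8]]
    print(forward_log_likelihood([0, 0, 1, 1], start, trans, emit))
    print(forward_log_likelihood([1, 1, 0, 0], start, trans, emit))  # less likely order
```

In a recognition setting, one model is typically trained per exercise, and a new recording is assigned to the model with the highest log-likelihood. Training the parameters (for example with the Baum-Welch algorithm) is outside the scope of this sketch.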

5.2. Gesture Representation

5.3. Experiment

5.3.1. Protocol

5.3.2. Evaluation Method

5.3.3. Validation Method

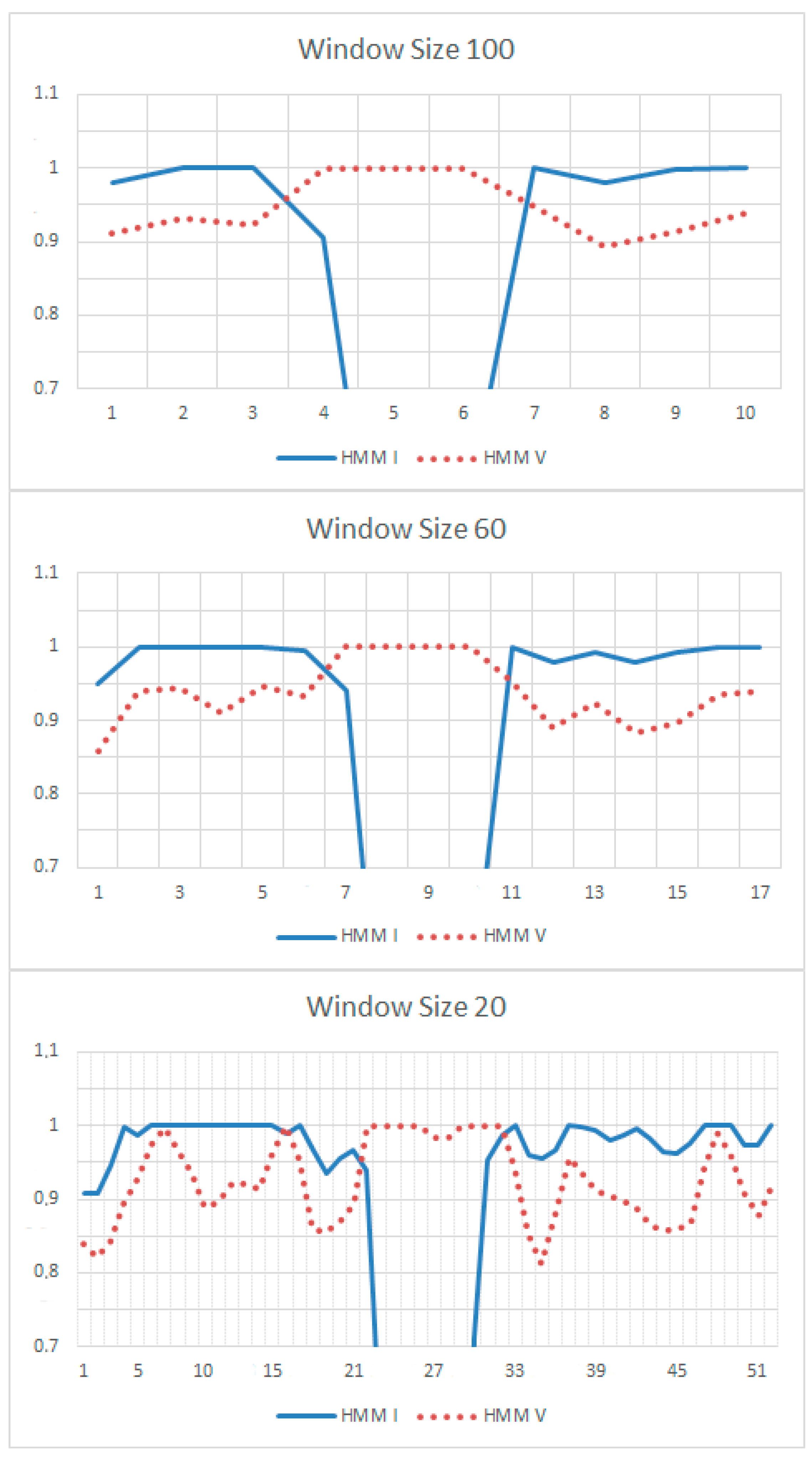

5.3.4. Results

6. Usability of the System

6.1. Participants and Procedure

6.2. Tasks

6.2.1. Task 1: Consult Therapeutic Instructions of Stage 1

6.2.2. Task 2: Perform Rehabilitation Exercises of Stage 1

6.2.3. Task 3: Consult Therapeutic Instructions of Stage 2

6.2.4. Task 4: Perform Rehabilitation Exercises of Stage 2

6.2.5. Task 5: Consult and Send a Message to the Therapist

6.3. Results

7. Discussion

Author Contributions

Funding

Conflicts of Interest


| Exercise | Sampling Error (%) | Occlusion (%) | Total (%) |
|---|---|---|---|
| SFHK | 0.01 | 14.35 | 14.36 |
| HA | 0.00 | 0.26 | 0.26 |
| HE | 0.01 | 1.17 | 1.18 |
| FSB | 0.00 | 7.61 | 7.61 |
| Mean | 0.005 | 5.85 | 5.855 |

| Right Hip | Left Hip | Spine Center | Knee |
|---|---|---|---|
| Frontal plane rotation (abduction) | Frontal plane rotation (abduction) | Frontal plane rotation (lateral left) | Right knee (flexion) |
| Frontal plane rotation (adduction) | Frontal plane rotation (adduction) | Frontal plane rotation (lateral right) | Left knee (flexion) |
| Sagittal plane rotation (flexion) | Sagittal plane rotation (flexion) | Sagittal plane rotation (flexion) | |
| Sagittal plane rotation (extension) | Sagittal plane rotation (extension) | Sagittal plane rotation (extension) | |

| I | II | III | IV | V | VI |
|---|---|---|---|---|---|
| 100% | 100% | 57% | 97% | 100% | 100% |

| Category | # | Question | Mean | SD |
|---|---|---|---|---|
| SYSUSE | 01 | Overall, I am satisfied with how easy it is to use this system. | 5.82 | 0.88 |
| | 02 | It was simple to use this system. | 5.92 | 0.74 |
| | 03 | I could effectively complete my work using this system. | 6.03 | 1.01 |
| | 04 | I was able to complete my work quickly using this system. | 5.74 | 0.97 |
| | 05 | I was able to efficiently complete my work using this system. | 5.79 | 1.10 |
| | 06 | I felt comfortable using this system. | 5.82 | 0.99 |
| | 07 | It was easy to learn to use this system. | 5.90 | 0.94 |
| | 08 | I believe I could become productive quickly using this system. | 5.72 | 0.92 |
| INFOQUAL | 09 | The system gave error messages that clearly tell me how to fix problems. | 4.77 | 1.51 |
| | 10 | Whenever I made a mistake using the system, I could recover easily and quickly. | 5.31 | 1.51 |
| | 11 | The information (such as online help, on-screen messages, and other documentation) provided with this system was clear. | 5.38 | 1.04 |
| | 12 | It was easy to find the information I needed. | 5.72 | 1.15 |
| | 13 | The information provided for the system was easy to understand. | 5.69 | 0.95 |
| | 14 | The information was effective in helping me complete the tasks and scenarios. | 5.64 | 1.01 |
| | 15 | The organization of information on the system screens was clear. | 5.92 | 0.84 |
| INTERQUAL | 16 | The interface of this system was pleasant. | 5.54 | 1.19 |
| | 17 | I liked using the interface of this system. | 5.38 | 1.07 |
| | 18 | This system has all the functions and capabilities I expect it to have. | 5.59 | 1.09 |
| OVERALL | 19 | Overall, I am satisfied with this system. | 5.74 | 0.88 |
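The questionnaire items are grouped into the SYSUSE, INFOQUAL, INTERQUAL and OVERALL categories. A quick per-category summary of the table is the average of its item means, sketched below with the values copied from the table. This rests on the assumption that every participant answered every item; with missing answers, the average of item means no longer equals the average of per-participant category scores.

```python
from statistics import mean

# Item means copied from the questionnaire table, grouped by category.
ITEM_MEANS = {
    "SYSUSE": [5.82, 5.92, 6.03, 5.74, 5.79, 5.82, 5.90, 5.72],  # items 01-08
    "INFOQUAL": [4.77, 5.31, 5.38, 5.72, 5.69, 5.64, 5.92],      # items 09-15
    "INTERQUAL": [5.54, 5.38, 5.59],                             # items 16-18
    "OVERALL": [5.74],                                           # item 19
}


def category_means(item_means: dict) -> dict:
    """Average the item means within each category.

    This equals the mean of per-participant category scores only when every
    participant answered every item (an assumption; missing answers break it).
    """
    return {category: mean(values) for category, values in item_means.items()}


if __name__ == "__main__":
    for category, value in category_means(ITEM_MEANS).items():
        print(f"{category}: {value:.2f}")
```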

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Rybarczyk, Y.; Pérez Medina, J.L.; Leconte, L.; Jimenes, K.; González, M.; Esparza, D. Implementation and Assessment of an Intelligent Motor Tele-Rehabilitation Platform. Electronics 2019, 8, 58. https://doi.org/10.3390/electronics8010058