An Interpretable Agent-Assisted Pipeline for Statistical Anomaly Detection in IoT Temperature Time Series

Abstract

1. Introduction

2. State of the Art and Related Works

2.1. State of the Art

2.2. Related Works

3. Data Generation and Processing Methods

3.1. Overall Data Processing Pipeline

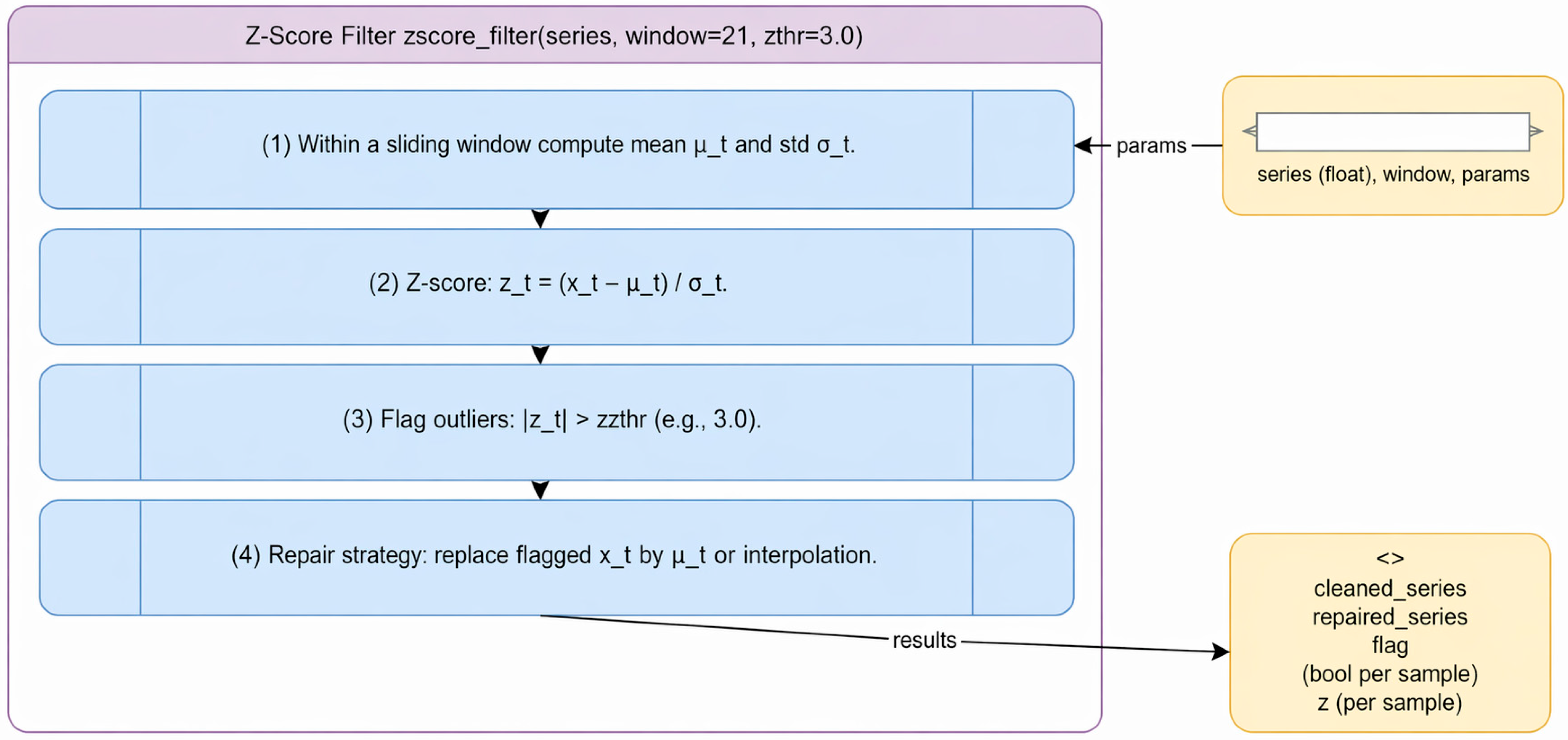

3.2. Statistical Filtering Models

3.3. Machine Learning-Based Baseline Methods

- $s(x, n)$ is the anomaly score of sample $x$ given a dataset of size $n$;

- $E(h(x))$ is the expected path length of $x$ across the ensemble of isolation trees;

- $c(n)$ is a normalization factor corresponding to the average path length of unsuccessful searches in a binary search tree, defined as $c(n) = 2H(n-1) - \frac{2(n-1)}{n}$ [25];

- $H(i)$ being the harmonic number, approximated by $\ln(i) + \gamma$;

- $\gamma \approx 0.5772$ (Euler–Mascheroni constant).
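The Isolation Forest score described by the definitions above can be illustrated with a short sketch. It follows the standard formulation of Liu et al. [25], $s(x, n) = 2^{-E(h(x))/c(n)}$; the function names are hypothetical, and the special case $c(2) = 1$ follows common implementations rather than anything stated in this article.

```python
import math

EULER_GAMMA = 0.5772156649  # Euler-Mascheroni constant


def harmonic_approx(i: float) -> float:
    """Approximate the harmonic number H(i) by ln(i) + gamma."""
    return math.log(i) + EULER_GAMMA


def c(n: int) -> float:
    """Average path length of an unsuccessful search in a binary search tree of n points."""
    if n <= 1:
        return 0.0
    if n == 2:
        return 1.0  # special case used by common implementations (assumption)
    return 2.0 * harmonic_approx(n - 1) - 2.0 * (n - 1) / n


def anomaly_score(expected_path_length: float, n: int) -> float:
    """Isolation Forest score s(x, n) = 2 ** (-E[h(x)] / c(n))."""
    if n < 2:
        raise ValueError("n must be at least 2 for the score to be defined")
    return 2.0 ** (-expected_path_length / c(n))
```

A sample whose expected path length equals $c(n)$ scores exactly 0.5; shorter paths push the score toward 1 (likely anomaly), longer paths toward 0.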

- $x_i$ represents the input samples;

- $\phi(\cdot)$ is a mapping to a higher-dimensional feature space;

- $w$ is the normal vector defining the decision boundary;

- $\rho$ is the offset (threshold) of the decision function;

- $\xi_i$ are slack variables allowing soft margin violations;

- $\nu \in (0, 1]$ is a hyperparameter controlling the trade-off between the fraction of outliers and the model complexity;

- $n$ is the number of training samples.
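For reference, the quantities listed above appear in the standard one-class SVM primal problem of Schölkopf et al. The form below is the textbook formulation, written with the conventional symbols ($x_i$, $\phi$, $w$, $\rho$, $\xi_i$, $\nu$, $n$); it is assumed, not confirmed, that the article uses exactly this notation.

```latex
\begin{aligned}
\min_{w,\,\xi,\,\rho}\quad & \frac{1}{2}\lVert w \rVert^{2}
    + \frac{1}{\nu n}\sum_{i=1}^{n} \xi_i - \rho \\
\text{s.t.}\quad & \langle w, \phi(x_i) \rangle \ge \rho - \xi_i,
    \qquad \xi_i \ge 0, \qquad i = 1, \dots, n, \\[4pt]
f(x) &= \operatorname{sgn}\bigl(\langle w, \phi(x) \rangle - \rho\bigr).
\end{aligned}
```

Samples with $f(x) = -1$ fall outside the estimated support and are treated as anomalies; $\nu$ upper-bounds the fraction of training samples that may do so.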

- Higher computational cost (especially IF);

- Increased memory requirements;

- Reduced interpretability compared to statistical filters.

3.4. Agent Cost Function and Filter Evaluation Strategy

- Effective suppression of anomalous measurements;

- Preservation of the underlying signal dynamics;

- Adequate smoothing of noise without distorting the signal structure.

- represents anomaly suppression effectiveness;

- represents signal smoothness;

- represents deviation from the original signal;

- are weighting coefficients controlling the relative importance of each component.

- $\mathrm{RMSE}_n$ is the normalized reconstruction error;

- $f_{out}$ is the fraction of detected outliers;

- $\mathrm{Var}_n$ is the normalized variance of the reconstructed signal;

- $\Delta m$ represents the difference between the slopes of the original and filtered signals;

- $r_{spikes}$ denotes the residual spike rate;

- $w_1, \ldots, w_5$ are weighting coefficients controlling the contribution of each metric.

- is the number of detected spikes after filtering;

- is the total number of samples in the time series.
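A minimal sketch of how an agent could score candidate filters with these metrics is given below. It assumes the cost is a plain weighted sum of the five metrics, with the weights 0.4, 0.3, 0.15, 0.1, and 0.05 listed in the parameter table of this article; the class and function names are hypothetical, and the metric values in the example are illustrative, not results from the study.

```python
from dataclasses import dataclass

# Weights w1..w5 as listed in the article's parameter table.
WEIGHTS = (0.4, 0.3, 0.15, 0.1, 0.05)


@dataclass(frozen=True)
class FilterMetrics:
    rmse_n: float    # normalized reconstruction error
    f_out: float     # fraction of detected outliers
    var_n: float     # normalized variance of the reconstructed signal
    delta_m: float   # slope difference between original and filtered signals
    r_spikes: float  # residual spike rate


def cost(m: FilterMetrics, weights=WEIGHTS) -> float:
    """Weighted sum of the five metrics (assumed form of the cost function)."""
    terms = (m.rmse_n, m.f_out, m.var_n, m.delta_m, m.r_spikes)
    return sum(w * t for w, t in zip(weights, terms))


def select_filter(candidates: dict[str, FilterMetrics]) -> str:
    """Return the name of the candidate filter with the lowest cost."""
    return min(candidates, key=lambda name: cost(candidates[name]))


# Illustrative values only, not taken from the article.
candidates = {
    "hampel": FilterMetrics(0.10, 0.02, 0.30, 0.01, 0.00),
    "zscore": FilterMetrics(0.20, 0.01, 0.40, 0.02, 0.05),
}
best = select_filter(candidates)
```

Because each term is visible in the sum, the selected filter can be explained by inspecting which metric contributed most to the cost of the rejected candidates.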

3.5. Scenario-Based Data Generation and Reproducibility

4. Dataset Characterization and Experimental Design

4.1. Dataset Overview and Scenario Design

- represents the baseline environmental temperature signal;

- represents measurement noise produced by the sensor;

- represents injected anomalies simulating sensor faults or disturbances.

- is the initial temperature level;

- represents a small drift coefficient;

- is low-amplitude Gaussian noise representing natural environmental fluctuations.
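The additive signal model described above (baseline plus sensor noise plus injected anomalies) can be sketched as follows. The linear drift term, the parameter names, and the default values are assumptions chosen to sit inside the ranges given in the article's parameter table; only isolated spikes are injected here, so the sketch is closest to scenario S1.

```python
import numpy as np


def generate_signal(n=10_000, t0=21.0, drift=0.001, env_sigma=0.02,
                    sensor_sigma=0.1, spike_prob=0.01,
                    spike_mag=(2.0, 5.0), seed=0):
    """Return (signal, labels) for a synthetic temperature series.

    Assumed model: baseline = t0 + drift * t + environmental noise,
    signal = baseline + sensor noise + injected spikes.
    """
    rng = np.random.default_rng(seed)  # fixed seed for reproducibility
    t = np.arange(n)

    # Baseline: initial level, small linear drift, low-amplitude fluctuations.
    baseline = t0 + drift * t + rng.normal(0.0, env_sigma, n)

    # Measurement noise produced by the sensor.
    noise = rng.normal(0.0, sensor_sigma, n)

    # Injected anomalies: isolated spikes with random sign and magnitude.
    labels = rng.random(n) < spike_prob
    magnitudes = rng.uniform(spike_mag[0], spike_mag[1], n)
    signs = rng.choice([-1.0, 1.0], n)
    anomalies = np.where(labels, magnitudes * signs, 0.0)

    return baseline + noise + anomalies, labels
```

Returning the boolean label array alongside the signal keeps the ground truth available for the detection metrics, and the fixed seed makes each scenario reproducible.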

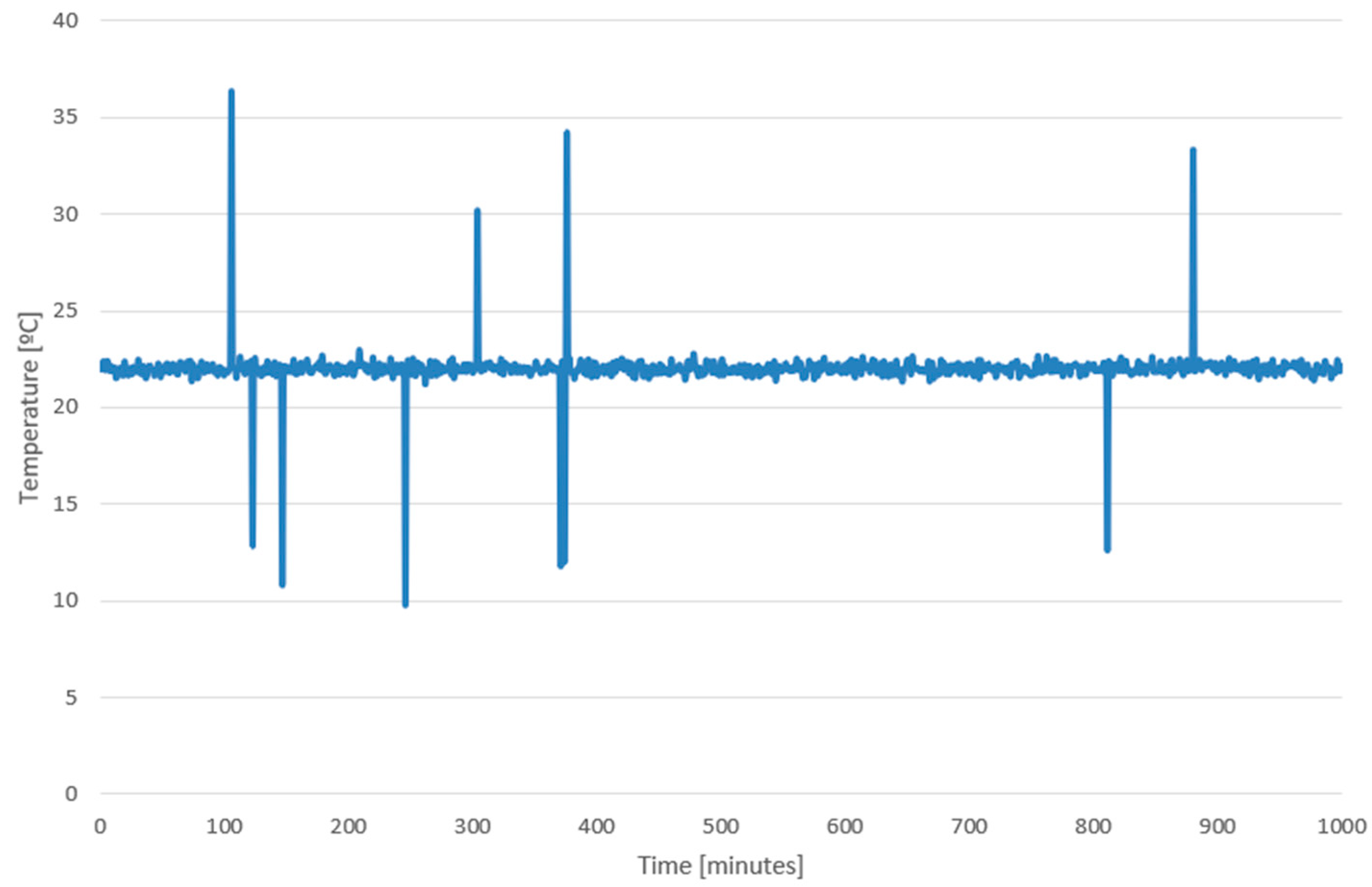

4.2. Scenario 1 (S1): Stable Signal with Sparse Spikes

4.3. Scenario 2 (S2): Dense Impulsive Noise

4.4. Scenario 3 (S3): Gradual Drift

4.5. Scenario 4 (S4): Corrupted Flat Segments

4.6. Scenario 5 (S5): Mixed Anomalies with Non-Stationary Behavior

4.7. Scenario 6 (S6): Cyclic Variations with Embedded Anomalies

4.8. Scenario 7 (S7): Heavy-Tailed Noise and Extreme Events

4.9. Field-like Scenario (SF)

- Non-stationary temperature behavior;

- Real measurement noise;

- Irregular anomaly patterns;

- Sensor drift and environmental variability.

- Detection consistency across methods;

- Reconstruction stability;

- Generalization capability of the agent-based selection.

5. Results

- A controlled synthetic dataset with seven anomaly scenarios;

- A real-world IoT dataset with weak or missing labels.

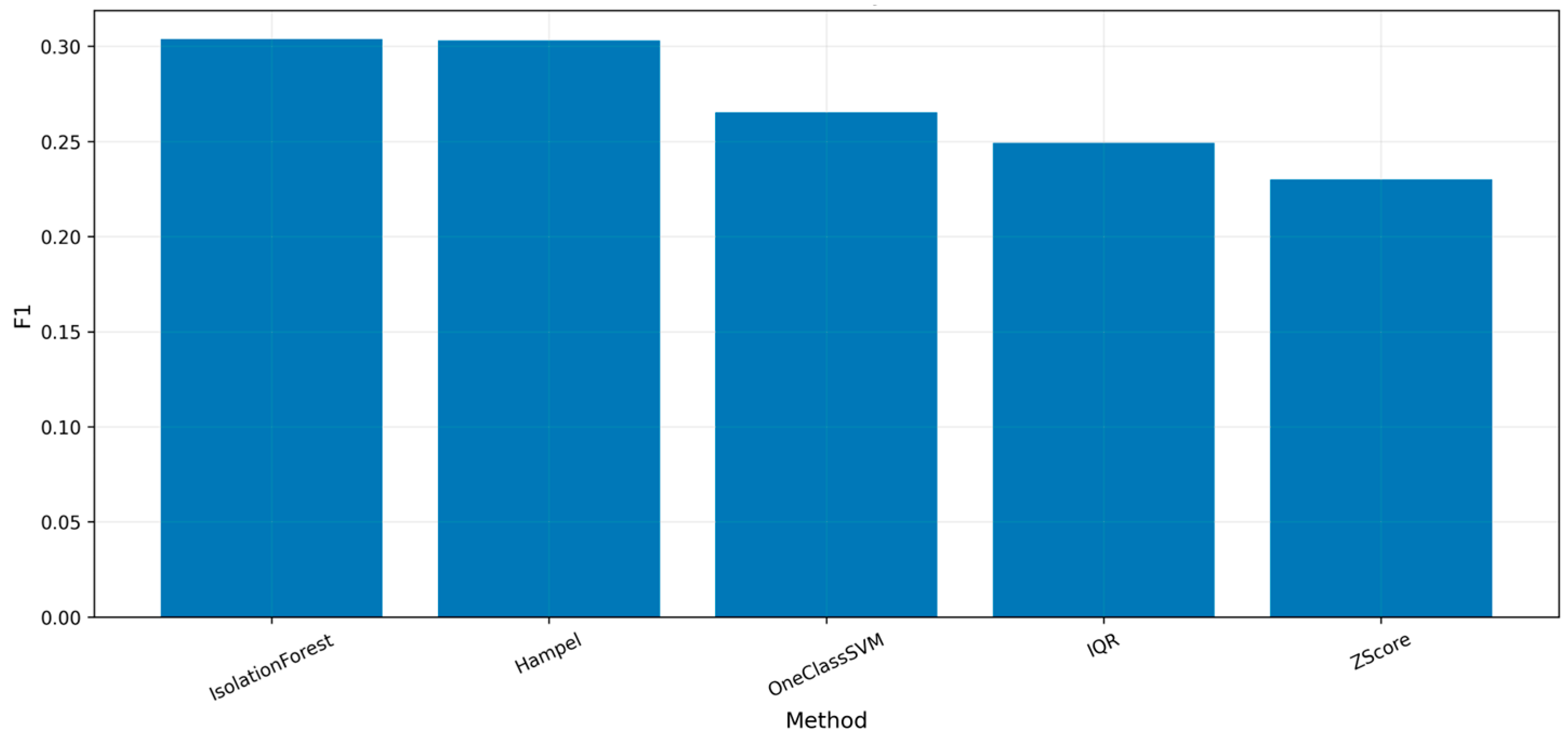

5.1. Aggregated Detection Performance

- IF achieves the highest overall F1-score (0.304), only marginally ahead of Hampel, which indicates that it copes with several types of abnormal behavior.

- Hampel filtering is competitive, with an F1-score of 0.303 at a runtime of about 3.5 ms, which makes it an efficient lightweight solution.

- IQR (F1-score of 0.249) and OC-SVM (F1-score of 0.265) achieve moderate results; OC-SVM improves only slightly on IQR and Z-Score and stays below Hampel.

- Z-Score achieves the highest precision (0.734) and the lowest false positive rate (0.000), but at the expense of recall (0.207), resulting in the lowest F1-score (0.230).

- The statistical methods run in roughly 3 to 3.5 ms in these tests, which makes them suitable for edge IoT deployment;

- IF needs roughly 1000 ms and about 821 kB of peak memory, against 78 to 95 kB for the other methods, so its small F1-score advantage comes at a considerable operational cost.
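How runtime and peak memory were measured is not detailed in this section. One common way to obtain comparable figures in Python is sketched below, using `time.perf_counter` and `tracemalloc` from the standard library; the helper name `profile_call` is hypothetical, and this is not necessarily the procedure used in the study.

```python
import time
import tracemalloc


def profile_call(fn, *args, **kwargs):
    """Run fn once and return (result, runtime in ms, peak traced memory in kB)."""
    tracemalloc.start()
    start = time.perf_counter()
    try:
        result = fn(*args, **kwargs)
        elapsed_ms = (time.perf_counter() - start) * 1000.0
        _, peak_bytes = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()  # always stop tracing, even if fn raises
    return result, elapsed_ms, peak_bytes / 1024.0


# Example: profile a cheap built-in operation.
result, runtime_ms, peak_kb = profile_call(sorted, [3, 1, 2])
```

Tracing allocations slows execution, so runtime and memory are often measured in separate runs and averaged over several repetitions.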

5.2. Scenario-Based Detection Analysis

- Z-Score achieves its best result in S1 (stable signal with sparse spikes), with an F1-score of 0.933, because isolated spikes stand out clearly from a stable baseline.

- In S2 (dense impulsive noise), the statistical methods fall behind IF and OC-SVM (both 0.651, against 0.551 for Hampel), which are better able to model non-linear data distributions.

- All methods perform poorly in S3 (gradual drift), where the best F1-score is 0.106, because slow-moving anomalies are difficult to detect.

- S4 (flat corrupted segments) yields the lowest F1-scores (at most 0.040) because the methods detect only a small fraction of the anomalies.

- In the mixed and cyclic conditions of S5 and S6, the machine learning methods are more robust: OC-SVM leads in S5 (0.120) and IF in S6 (0.362).

- IQR delivers the best result in S7 (heavy-tailed noise and extreme events), with an F1-score of 0.909.

- Hampel performs best in SF (field-like scenario), with an F1-score of 0.230.

- No method consistently dominates across all scenarios;

- Optimal performance is inherently scenario-dependent;

- Static filter selection is suboptimal in heterogeneous environments.
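The scenario dependence summarized above can be checked directly from the per-scenario F1-scores reported in this article. The sketch below picks the best method per scenario; ties are resolved in column order, which is an assumption that happens to reproduce the winners listed in the best-method table.

```python
from collections import Counter

# Per-scenario F1-scores as reported in the article.
F1 = {
    "S1": {"Hampel": 0.326, "IQR": 0.609, "Z-Score": 0.933, "IF": 0.378, "OC-SVM": 0.368},
    "S2": {"Hampel": 0.551, "IQR": 0.254, "Z-Score": 0.000, "IF": 0.651, "OC-SVM": 0.651},
    "S3": {"Hampel": 0.062, "IQR": 0.024, "Z-Score": 0.018, "IF": 0.106, "OC-SVM": 0.106},
    "S4": {"Hampel": 0.034, "IQR": 0.037, "Z-Score": 0.040, "IF": 0.034, "OC-SVM": 0.034},
    "S5": {"Hampel": 0.058, "IQR": 0.036, "Z-Score": 0.031, "IF": 0.108, "OC-SVM": 0.120},
    "S6": {"Hampel": 0.236, "IQR": 0.024, "Z-Score": 0.000, "IF": 0.362, "OC-SVM": 0.210},
    "S7": {"Hampel": 0.867, "IQR": 0.909, "Z-Score": 0.712, "IF": 0.500, "OC-SVM": 0.353},
    "SF": {"Hampel": 0.230, "IQR": 0.038, "Z-Score": 0.040, "IF": 0.142, "OC-SVM": 0.142},
}


def best_method(scores: dict[str, float]) -> str:
    """Return the method with the highest F1-score (first one wins on ties)."""
    return max(scores, key=scores.get)


winners = {scenario: best_method(scores) for scenario, scores in F1.items()}
win_counts = Counter(winners.values())
```

Every one of the five methods wins at least one scenario, and no method wins more than three of the eight, which supports the case for adaptive rather than static filter selection.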

5.3. Reconstruction Quality Evaluation

5.4. Computational Performance

5.5. Discussion and Practical Insights

5.6. Synthesis of Results

6. Dataset Insights, Limitations, and Potential Extensions

6.1. Insights from Comparative Filter Behavior

6.2. Interpretability and Metric-Driven Decision Making

6.3. Limitations

6.4. Extensions

6.5. Evaluation of Field-like Scenario (SF)

7. Conclusions and Future Work

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| IF | Isolation Forest |
| IoT | Internet of Things |
| IQR | Interquartile Range |
| LSTM | Long Short-Term Memory |
| MAD | Median Absolute Deviation |
| OC-SVM | One-Class SVM |
| RMSE | Root Mean Square Error |
| SVM | Support Vector Machine |
| TSB-UAD | Time-Series Benchmark for Univariate Anomaly Detection |
| UML | Unified Modeling Language |

References

1. Shi, W.; Cao, J.; Zhang, Q.; Li, Y.; Xu, L. Edge computing: Vision and challenges. IEEE Internet Things J. 2016, 3, 637–646.

2. Zhou, Z.; Chen, X.; Li, E.; Zeng, L.; Luo, K.; Zhang, J. Edge intelligence: Paving the last mile of artificial intelligence with edge computing. Proc. IEEE 2019, 107, 1738–1762.

3. Premsankar, G.; Di Francesco, M.; Taleb, T. Edge computing for the Internet of Things: A case study. IEEE Internet Things J. 2018, 5, 1275–1284.

4. Yousefpour, A.; Ishigaki, G.; Jue, J.P. Fog computing: Towards minimizing delay in the Internet of Things. J. Syst. Archit. 2019, 91, 1–16.

5. Zhang, Y.; Chen, X.; Jin, L.; Wang, X.; Guo, X. Network anomaly detection based on deep learning: A survey. IEEE Commun. Surv. Tutor. 2019, 21, 2019–2021.

6. Chalapathy, R.; Chawla, S. Deep learning for anomaly detection: A survey. ACM Comput. Surv. 2019, 51, 1–36.

7. Hundman, K.; Constantinou, V.; Laporte, C.; Colwell, I.; Soderstrom, T. Detecting spacecraft anomalies using LSTMs and nonparametric dynamic thresholding. In Proceedings of the ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, London, UK, 19–23 August 2018; ACM: New York, NY, USA, 2018.

8. Ahmed, M.; Mahmood, A.N.; Hu, J. A survey of network anomaly detection techniques. J. Netw. Comput. Appl. 2016, 60, 19–31.

9. Blázquez-García, A.; Conde, A.; Mori, U.; Lozano, J.A. A review on outlier/anomaly detection in time series data. ACM Comput. Surv. 2021, 54, 1–33.

10. Pires, L.M. IoT Anomaly Detection Repository (Hampel, IQR, Z-Score). GitHub. 2025. Available online: https://github.com/prof-luispires/iot-anomaly-detection.git (accessed on 8 April 2026).

11. Python, version 3.11; Python Software Foundation: Beaverton, OR, USA, 2022. Available online: https://www.python.org (accessed on 8 April 2026).

12. Maronna, R.A.; Martin, R.D.; Yohai, V.J.; Salibián-Barrera, M. Robust Statistics: Theory and Methods, 2nd ed.; Wiley: Hoboken, NJ, USA, 2019.

13. Pearson, R.K.; Neuvo, Y.; Astola, J.; Gabbouj, M. The Hampel filter: An efficient robust outlier detection algorithm for real-time applications. Digit. Signal Process. 2016, 59, 1–18.

14. Castro, L.N. Exploratory Data Analysis: Descriptive Analysis, Visualization, and Dashboard Design; CRC Press: Boca Raton, FL, USA, 2025.

15. Yaro, A.S.; Maly, F.; Prazak, P. Outlier detection in time-series RSS using Z-score with Sn estimator. Appl. Sci. 2023, 13, 3900.

16. United Nations. Transforming Our World: The 2030 Agenda for Sustainable Development; United Nations: New York, NY, USA, 2015. Available online: https://sdgs.un.org/2030agenda (accessed on 8 April 2026).

17. European Parliament and Council. Regulation (EU) 2024/1781 on Ecodesign Requirements for Sustainable Products; European Parliament and Council: Brussels, Belgium, 2024. Available online: https://eur-lex.europa.eu (accessed on 8 April 2026).

18. European Commission. Digital Product Passport: Transparency and Sustainability; European Commission: Brussels, Belgium, 2024. Available online: https://data.europa.eu (accessed on 8 April 2026).

19. Hampel, F.R. The influence curve and its role in robust estimation. J. Am. Stat. Assoc. 1974, 69, 383–393.

20. Tukey, J.W. Exploratory Data Analysis; Addison-Wesley: Reading, MA, USA, 1977.

21. Iglewicz, B.; Hoaglin, D.C. How to Detect and Handle Outliers; ASQC: Milwaukee, WI, USA, 1993.

22. Rousseeuw, P.J.; Hubert, M. Robust statistics for outlier detection. WIREs Data Min. Knowl. Discov. 2011, 1, 73–79.

23. Roos-Hoefgeest Toribio, M.; Garnung Menéndez, A.; Roos-Hoefgeest Toribio, S.; Álvarez García, I. Speed-up Hampel filter for outlier detection. Sensors 2025, 25, 3319.

24. Montgomery, D.C.; Runger, G.C. Applied Statistics and Probability for Engineers, 7th ed.; Wiley: Hoboken, NJ, USA, 2020.

25. Liu, F.T.; Ting, K.M.; Zhou, Z.-H. Isolation forest. In Proceedings of the IEEE International Conference on Data Mining (ICDM), Pisa, Italy, 15–19 December 2008; IEEE: New York, NY, USA, 2008; pp. 413–422.

26. Schölkopf, B.; Platt, J.C.; Shawe-Taylor, J.C.; Smola, A.J.; Williamson, R.C. Estimating the support of a high-dimensional distribution. Neural Comput. 2001, 13, 1443–1471.

27. Shen, L.; Li, Z.; Kwok, J.T. Time-series anomaly detection using temporal hierarchical one-class network. In Proceedings of the Neural Information Processing Systems (NeurIPS), Vancouver, BC, Canada, 6–12 December 2020; NeurIPS: San Diego, CA, USA, 2020.

28. Paparrizos, J.; Kang, Y.; Boniol, P.; Tsay, R.S.; Palpanas, T.; Franklin, M.J. TSB-UAD: Benchmark suite for time-series anomaly detection. Proc. VLDB Endow. 2022, 15, 1697–1711.

29. de Medeiros, K.; da Costa, K.A.; Papa, J.P.; Lisboa, C.O.; Munoz, R. A survey on anomaly detection in Internet of Things data using machine learning. Sensors 2023, 23, 3245.

30. Chatterjee, A.; Ahmed, B.S. IoT anomaly detection methods and applications: A survey. Internet Things 2022, 19, 100568.

31. DataVic. Sensor Readings with Temperature, Light, Humidity Every 5 Minutes at 8 Locations (2014–2015). Available online: https://discover.data.vic.gov.au/dataset/sensor-readings-with-temperature-light-humidity-every-5-minutes-at-8-locations-trial-2014-2015 (accessed on 8 April 2026).

32. Susto, G.A.; Schirru, A.; Pampuri, S.; McLoone, S.; Beghi, A. Machine learning for predictive maintenance: A multiple classifier approach. IEEE Trans. Ind. Inform. 2015, 11, 812–820.

| Method | Category | Interpretability | Computational Cost | Typical IoT Suitability |
|---|---|---|---|---|
| IF | Machine Learning | Low | Medium | Moderate |
| OC-SVM | Machine Learning | Low | High | Limited |
| LSTM Autoencoder | Deep Learning | Very Low | Very High | Limited |
| TSB-UAD Benchmark Methods | Mixed | Variable | Variable | Evaluation Framework |
| Proposed Framework | Statistical and Decision Agent | High | Low | High |

| Ref. | Focus | Method | Key Limitation | Contribution |
|---|---|---|---|---|
| [13] | Real-time outlier detection | Hampel filter | Single-method focus | Comparative multi-filter evaluation |
| [20] | Computational efficiency | Accelerated Hampel | No multi-scenario analysis | Integrated into full pipeline |
| [15] | Time-series outliers | Z-Score and Sn | Sensitive to non-stationarity | Baseline under diverse scenarios |
| [14] | Exploratory analysis | IQR bounds | Not time-series specific | Sliding-window IoT application |
| [12] | Robust statistics | Theoretical foundation | No IoT focus | Practical dataset implementation |
| [27] | Deep anomaly detection | LSTM/neural models | Higher computational complexity | Captures complex temporal patterns |
| [28] | Benchmark evaluation | Multiple anomaly detection algorithms | Not specific to IoT temperature monitoring | Provides standardized evaluation datasets |
| This work | IoT temperature | Hampel, IQR, Z-Score and agent selection | No real temperature sensors data | Adaptive, interpretable selection |

| Metric | Symbol | Description | Definition | Range |
|---|---|---|---|---|
| Normalized reconstruction error | $\mathrm{RMSE}_n$ | Deviation between original and filtered signals | | ≥0 |
| Fraction of detected outliers | $f_{out}$ | Proportion of samples identified as anomalies | | [0, 1] |
| Normalized variance | $\mathrm{Var}_n$ | Relative smoothness of filtered signal | | ≥0 |
| Trend preservation | $\Delta m$ | Difference between slopes of original and filtered signals | $\Delta m = \lvert m_{orig} - m_{clean} \rvert$ | ≥0 |
| Residual spike rate | $r_{spikes}$ | Frequency of abrupt residual variations | | [0, 1] |

| Parameter | Description | Typical Value |
|---|---|---|
| | Initial baseline temperature | 20–22 °C |
| | Sensor noise standard deviation | 0.05–0.2 °C |
| | Spike magnitude | 2–5 °C |
| | Spike occurrence probability | 0.5–2% |
| | Impulsive noise duration | 2–5 samples |
| | Drift coefficient | 0.001–0.01 °C/sample |
| | Length of flat corrupted segment | 20–50 samples |

| Scenario | Signal Characteristics | Anomaly Type | Typical Magnitude | Duration | Injection Frequency |
|---|---|---|---|---|---|
| Scenario 1 (S1): Stable Spikes | Stable baseline temperature with small natural noise | Isolated spikes | ±3–6 °C deviation | 1–2 samples | Rare (≈1–2% of samples) |
| Scenario 2 (S2): Impulsive Noise | Stable signal with frequent abrupt disturbances | Dense impulsive noise | ±2–5 °C deviation | 1 sample | Frequent (≈5–10%) |
| Scenario 3 (S3): Gradual Drift | Slowly increasing baseline | Drift anomaly | Gradual ±3–8 °C shift | Long segments (50–150 samples) | Continuous |
| Scenario 4 (S4): Flat Corrupted Segments | Artificially constant temperature | Sensor freeze/flat signal | Constant value | 40–120 samples | Occasional |
| Scenario 5 (S5): Mixed Anomalies | Non-stationary signal | Spikes, drift and flat segments | ±3–8 °C | Variable | Mixed |
| Scenario 6 (S6): Cyclic Variations | Periodic environmental pattern | Periodic anomalies and spikes | ±2–6 °C | Short bursts | Irregular |
| Scenario 7 (S7): Heavy-Tailed Noise | High variance signal | Extreme events | ±5–10 °C | 1–3 samples | Rare extreme |

| Parameter | Value | Justification | Reference |
|---|---|---|---|
| Hampel window size | 21 | Provides a balance between local sensitivity and noise robustness in slowly varying temperature signals | [13,19] |
| Hampel threshold | 3 | Standard choice for outlier detection using MAD, equivalent to ~3σ rule | [13,14] |
| IQR multiplier | 1.5 | Tukey’s rule for detecting moderate outliers in non-parametric distributions | [6] |
| Z-Score threshold | 3 | Common threshold for Gaussian-based anomaly detection | [12] |
| $w_1$ | 0.4 | Normalized reconstruction error | This work |
| $w_2$ | 0.3 | Outlier fraction | This work |
| $w_3$ | 0.15 | Signal variance preservation | This work |
| $w_4$ | 0.1 | Trend deviation | This work |
| $w_5$ | 0.05 | Residual spike penalty | This work |
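To make the filter parameters above concrete, the following sketch implements the three statistical detectors with the listed defaults (Hampel window 21 and threshold 3, IQR multiplier 1.5, Z-Score threshold 3). The function names are hypothetical. The constant 1.4826 is the usual factor that scales the MAD to a standard deviation under Gaussian data, and the IQR and Z-Score variants here are computed over the whole series, which is a simplifying assumption rather than the article's exact implementation.

```python
import numpy as np


def hampel_mask(x, window=21, threshold=3.0):
    """Flag samples deviating from the rolling median by more than threshold * scaled MAD."""
    x = np.asarray(x, dtype=float)
    half = window // 2
    padded = np.pad(x, half, mode="edge")  # repeat edge values so every sample has a full window
    windows = np.lib.stride_tricks.sliding_window_view(padded, window)
    med = np.median(windows, axis=1)
    mad = np.median(np.abs(windows - med[:, None]), axis=1)
    return np.abs(x - med) > threshold * 1.4826 * mad


def iqr_mask(x, k=1.5):
    """Flag samples outside [Q1 - k * IQR, Q3 + k * IQR] (Tukey's rule, global variant)."""
    x = np.asarray(x, dtype=float)
    q1, q3 = np.percentile(x, [25, 75])
    iqr = q3 - q1
    return (x < q1 - k * iqr) | (x > q3 + k * iqr)


def zscore_mask(x, threshold=3.0):
    """Flag samples more than threshold standard deviations from the mean (global variant)."""
    x = np.asarray(x, dtype=float)
    std = x.std()
    if std == 0.0:
        return np.zeros(x.shape, dtype=bool)  # constant series: nothing to flag
    return np.abs(x - x.mean()) > threshold * std
```

Each function returns a boolean mask with the same length as the input, so flagged samples can be replaced (for example by the rolling median) or compared against ground-truth labels.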

| Scenario | Type | Samples | Anomaly Rate (%) | Description |
|---|---|---|---|---|
| S1 | Synthetic | 10,000 | 1.0 | Stable signal with sparse spikes |
| S2 | Synthetic | 10,000 | 5.0 | Dense impulsive noise |
| S3 | Synthetic | 10,000 | 3.0 | Drift with embedded anomalies |
| S4 | Synthetic | 10,000 | 4.0 | Flat segments with spikes |
| S5 | Synthetic | 10,000 | 6.0 | Mixed anomalies and regime switching |
| S6 | Synthetic | 10,000 | 3.5 | Cyclic signal with anomalies |
| S7 | Synthetic | 10,000 | 7.0 | Heavy-tailed noise and extreme events |
| SF | Real (8 sensors) | ~200,000+ | ~0.8–1.0 | Real-world IoT dataset (8 locations) |

| Method | Precision | Recall | F1-Score | FPR | Accuracy | RMSE | MAE | Runtime (ms) | Peak Memory (kB) |
|---|---|---|---|---|---|---|---|---|---|
| Hampel | 0.413 | 0.362 | 0.303 | 0.020 | 0.903 | 0.424 | 0.218 | 3.481 | 92.260 |
| IQR | 0.390 | 0.277 | 0.249 | 0.009 | 0.906 | 0.446 | 0.225 | 3.110 | 95.410 |
| IF | 0.482 | 0.317 | 0.304 | 0.017 | 0.905 | 0.451 | 0.220 | 1007.105 | 821.393 |
| OC-SVM | 0.431 | 0.288 | 0.265 | 0.019 | 0.902 | 0.464 | 0.222 | 5.167 | 78.449 |
| Z-Score | 0.734 | 0.207 | 0.230 | 0.000 | 0.911 | 0.473 | 0.229 | 3.201 | 83.268 |
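The detection columns in the table above follow from the confusion counts of each run. A minimal sketch of these standard definitions is given below; the function name is hypothetical, and returning 0 when a denominator is empty is an assumed convention.

```python
import numpy as np


def detection_metrics(y_true, y_pred):
    """Compute precision, recall, F1, false positive rate, and accuracy from boolean labels."""
    y_true = np.asarray(y_true, dtype=bool)
    y_pred = np.asarray(y_pred, dtype=bool)

    tp = int(np.sum(y_true & y_pred))    # anomalies correctly flagged
    fp = int(np.sum(~y_true & y_pred))   # normal samples wrongly flagged
    fn = int(np.sum(y_true & ~y_pred))   # anomalies missed
    tn = int(np.sum(~y_true & ~y_pred))  # normal samples correctly kept

    def ratio(num, den):
        return num / den if den else 0.0  # assumed convention for empty denominators

    precision = ratio(tp, tp + fp)
    recall = ratio(tp, tp + fn)
    return {
        "precision": precision,
        "recall": recall,
        "f1": ratio(2 * precision * recall, precision + recall),
        "fpr": ratio(fp, fp + tn),
        "accuracy": ratio(tp + tn, tp + fp + fn + tn),
    }
```

If the aggregated values are averages over scenarios, the F1 column need not equal the harmonic mean of the averaged precision and recall. For Z-Score, for example, the harmonic mean of 0.734 and 0.207 is about 0.32, while the reported F1-score is 0.230. This is an observation about averaging, not a procedure stated in this section.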

| Scenario | Hampel | IQR | Z-Score | IF | OC-SVM |
|---|---|---|---|---|---|
| S1 | 0.326 | 0.609 | 0.933 | 0.378 | 0.368 |
| S2 | 0.551 | 0.254 | 0.000 | 0.651 | 0.651 |
| S3 | 0.062 | 0.024 | 0.018 | 0.106 | 0.106 |
| S4 | 0.034 | 0.037 | 0.040 | 0.034 | 0.034 |
| S5 | 0.058 | 0.036 | 0.031 | 0.108 | 0.120 |
| S6 | 0.236 | 0.024 | 0.000 | 0.362 | 0.210 |
| S7 | 0.867 | 0.909 | 0.712 | 0.500 | 0.353 |
| SF | 0.230 | 0.038 | 0.040 | 0.142 | 0.142 |

| Method | Main Strength | Main Limitation | Best-Suited Scenarios |
|---|---|---|---|
| Hampel | Strong balance between recall, robustness, and computational efficiency while remaining fully interpretable | Slightly lower precision compared to Z-Score in low-noise conditions | Sparse spikes, mixed anomaly scenarios, and field-like IoT signals |
| IQR | Simple and fast method with good performance for well-defined impulsive outliers | Less stable under non-stationary signals and gradual drift patterns | Impulsive noise and moderate anomaly conditions |
| Z-Score | Achieves the highest precision with very low false positive rate | Low recall due to conservative behavior, missing subtle or distributed anomalies | Scenarios where minimizing false alarms is critical |
| IF | Strong overall F1-score and good performance in complex or non-linear data distributions | High computational cost and memory usage compared to statistical methods | Complex scenarios with overlapping anomalies or dense noise |
| OC-SVM | Lightweight machine learning baseline with balanced performance | Limited interpretability and marginal performance gains compared to statistical approaches | Intermediate anomaly scenarios and benchmarking purposes |

| Scenario | Best Method | Precision | Recall | F1-Score | RMSE |
|---|---|---|---|---|---|
| S1 | Z-Score | 0.875 | 1.000 | 0.933 | 0.007 |
| S2 | IF | 0.900 | 0.509 | 0.651 | 0.466 |
| S3 | IF | 0.633 | 0.058 | 0.106 | 1.073 |
| S4 | Z-Score | 1.000 | 0.020 | 0.040 | 0.064 |
| S5 | OC-SVM | 0.724 | 0.065 | 0.120 | 0.824 |
| S6 | IF | 0.633 | 0.253 | 0.362 | 0.342 |
| S7 | IQR | 0.897 | 0.921 | 0.909 | 0.017 |
| SF | Hampel | 0.596 | 0.143 | 0.230 | 0.572 |

| Location | Samples | Duration (Days) | Weak Anomalies | Weak Anomaly Rate (%) | Mean Temp (°C) | Std Temp (°C) |
|---|---|---|---|---|---|---|
| 509 | 19,119 | 66.39 | 178 | 0.93 | 18.18 | 5.52 |
| 510 | 12,038 | 41.79 | 121 | 1.00 | 18.09 | 6.06 |
| 506 | 6626 | 23.01 | 64 | 0.97 | 16.88 | 5.38 |
| 511 | 4598 | 15.96 | 38 | 0.83 | 19.51 | 5.22 |
| 507 | 2918 | 10.13 | 25 | 0.86 | 19.73 | 4.84 |
| 505 | 2915 | 10.12 | 27 | 0.93 | 20.42 | 4.37 |
| 501 | 2903 | 10.07 | 26 | 0.90 | 19.86 | 5.26 |
| 508 | 2728 | 9.47 | 22 | 0.81 | 19.91 | 4.43 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Pires, L.M.; Vasconcelos, J.B.d. An Interpretable Agent-Assisted Pipeline for Statistical Anomaly Detection in IoT Temperature Time Series. Electronics 2026, 15, 1840. https://doi.org/10.3390/electronics15091840