ETMamba: An Effective Temporal Model for Video Action Recognition

Abstract

1. Introduction

2. Related Work

2.1. Traditional Action Recognition Methods

2.2. Mamba-Based Methods

3. Method

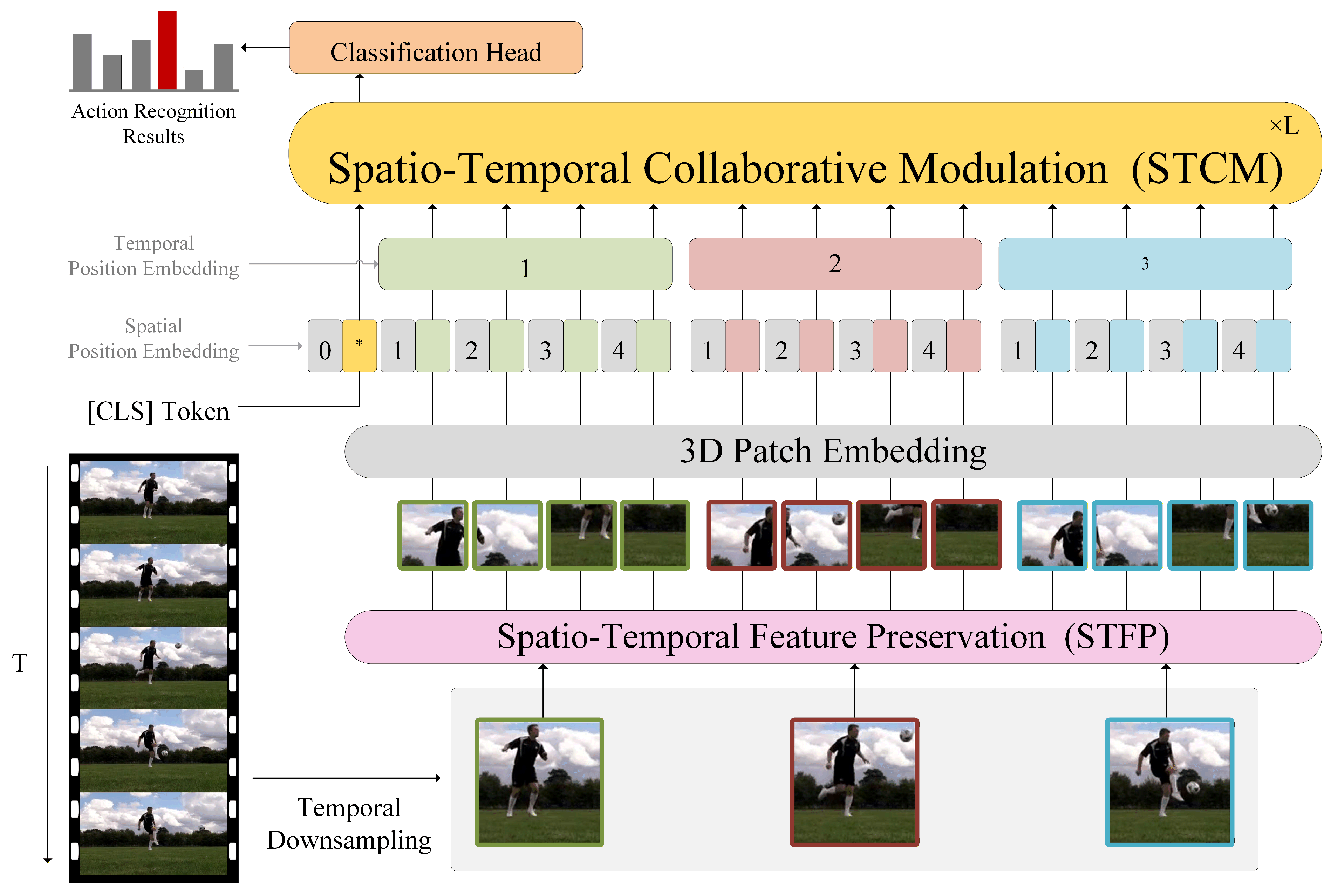

3.1. Overall Architecture

3.2. Spatiotemporal Feature Preservation (STFP)

3.3. Efficient Bidirectional Sharing (EBS)

3.4. Spatiotemporal Collaborative Modulation (STCM)

4. Results

4.1. Dataset

4.2. Model Configurations

4.3. Comparative Experiments

4.4. Ablation Experiments

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Ding, X.; Zhang, X.; Han, J.; Ding, G. Scaling up your kernels to 31x31: Revisiting large kernel design in CNNs. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 19–20 June 2022; pp. 11963–11975.

2. Zhu, L.; Liao, B.; Zhang, Q.; Wang, X.; Liu, W.; Wang, X. Vision mamba: Efficient visual representation learning with bidirectional state space model. arXiv 2024, arXiv:2401.09417.

3. Li, K.; Li, X.; Wang, Y.; He, Y.; Wang, Y.; Wang, L.; Qiao, Y. Videomamba: State space model for efficient video understanding. In European Conference on Computer Vision; Springer Nature: Cham, Switzerland, 2024; pp. 237–255.

4. Kay, W.; Carreira, J.; Simonyan, K.; Zhang, B.; Hillier, C.; Vijayanarasimhan, S.; Viola, F.; Green, T.; Back, T.; Natsev, P.; et al. The kinetics human action video dataset. arXiv 2017, arXiv:1705.06950.

5. Goyal, R.; Ebrahimi Kahou, S.; Michalski, V.; Materzynska, J.; Westphal, S.; Kim, H.; Haenel, V.; Fruend, I.; Yianilos, P.; Mueller-Freitag, M.; et al. The “something something” video database for learning and evaluating visual common sense. In Proceedings of the IEEE International Conference on Computer Vision; IEEE: Piscataway, NJ, USA, 2017; pp. 5842–5850.

6. Kuehne, H.; Jhuang, H.; Garrote, E.; Poggio, T.; Serre, T. HMDB: A large video database for human motion recognition. In 2011 International Conference on Computer Vision; IEEE: Piscataway, NJ, USA, 2011; pp. 2556–2563.

7. Kuehne, H.; Arslan, A.; Serre, T. The language of actions: Recovering the syntax and semantics of goal-directed human activities. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition; IEEE: Piscataway, NJ, USA, 2014; pp. 780–787.

8. Lin, J.; Gan, C.; Han, S. Tsm: Temporal shift module for efficient video understanding. In Proceedings of the IEEE/CVF International Conference on Computer Vision; IEEE: Piscataway, NJ, USA, 2019; pp. 7083–7093.

9. Zhang, Y.; Bai, Y.; Wang, H.; Xu, Y.; Fu, Y. Look more but care less in video recognition. Adv. Neural Inf. Process. Syst. 2022, 35, 30813–30825.

10. Feichtenhofer, C.; Fan, H.; Malik, J.; He, K. Slowfast networks for video recognition. In Proceedings of the IEEE/CVF International Conference on Computer Vision; IEEE: Piscataway, NJ, USA, 2019; pp. 6202–6211.

11. Wang, H.; Xia, T.; Li, H.; Gu, X.; Lv, W.; Wang, Y. A Channel-Wise Spatial-Temporal Aggregation Network for Action Recognition. Mathematics 2021, 9, 3226.

12. Xia, L.; Fu, W. Spatial-temporal multiscale feature optimization based two-stream convolutional neural network for action recognition. Clust. Comput. 2024, 27, 11611–11626.

13. Bertasius, G.; Wang, H.; Torresani, L. Is space-time attention all you need for video understanding? In Proceedings of the International Conference on Machine Learning (ICML), 2021; Volume 2, p. 4.

14. Zhang, Y.; Li, X.; Liu, C.; Shuai, B.; Zhu, Y.; Brattoli, B.; Chen, H.; Marsic, I.; Tighe, J. Vidtr: Video transformer without convolutions. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, QC, Canada, 10–17 October 2021; pp. 13577–13587.

15. Liu, Z.; Ning, J.; Cao, Y.; Wei, Y.; Zhang, Z.; Lin, S.; Hu, H. Video swin transformer. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: Piscataway, NJ, USA, 2022; pp. 3202–3211.

16. Li, Y.; Wu, C.Y.; Fan, H.; Mangalam, K.; Xiong, B.; Malik, J.; Feichtenhofer, C. Mvitv2: Improved multiscale vision transformers for classification and detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: Piscataway, NJ, USA, 2022; pp. 4804–4814.

17. Li, S.; Wang, Z.; Liu, Y.; Zhang, Y.; Zhu, J.; Cui, X.; Liu, J. FSformer: Fast-Slow Transformer for video action recognition. Image Vis. Comput. 2023, 137, 104740.

18. Li, K.; Wang, Y.; Zhang, J.; Gao, P.; Song, G.; Liu, Y.; Li, H.; Qiao, Y. Uniformer: Unifying convolution and self-attention for visual recognition. IEEE Trans. Pattern Anal. Mach. Intell. 2023, 45, 12581–12600.

19. Li, K.; Wang, Y.; He, Y.; Li, Y.; Wang, Y.; Wang, L.; Qiao, Y. Uniformerv2: Spatiotemporal learning by arming image vits with video uniformer. arXiv 2022, arXiv:2211.09552.

20. Lu, H.; Salah, A.A.; Poppe, R. Videomambapro: A leap forward for mamba in video understanding. arXiv 2024, arXiv:2406.19006.

21. Suleman, H.; Talal Wasim, S.; Naseer, M.; Gall, J. Distillation-free Scaling of Large SSMs for Images and Videos. arXiv 2024, arXiv:2409.11867.

22. Liu, Y.; Wu, P.; Liang, C.; Shen, J.; Wang, L.; Yi, L. VideoMAP: Toward Scalable Mamba-based Video Autoregressive Pretraining. arXiv 2025, arXiv:2503.12332.

23. Beedu, A.; Dong, Z.; Sheinkopf, J.; Essa, I. Mamba Fusion: Learning Actions Through Questioning. In ICASSP 2025—IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP); IEEE: Piscataway, NJ, USA, 2025; pp. 1–5.

24. Li, S.; Singh, H.; Grover, A. Mamba-nd: Selective state space modeling for multi-dimensional data. In European Conference on Computer Vision; Springer Nature: Cham, Switzerland, 2024; pp. 75–92.

25. Gao, X.; Kanu-Asiegbu, A.M.; Du, X. Mambast: A plug-and-play cross-spectral spatial-temporal fuser for efficient pedestrian detection. In 2024 IEEE 27th International Conference on Intelligent Transportation Systems (ITSC); IEEE: Piscataway, NJ, USA, 2024; pp. 2027–2034.

26. Sheng, J.; Zhou, J.; Wang, J.; Ye, P.; Fan, J. DualMamba: A lightweight spectral–spatial mamba-convolution network for hyperspectral image classification. IEEE Trans. Geosci. Remote Sens. 2024, 63, 5501415.

27. Russakovsky, O.; Deng, J.; Su, H.; Krause, J.; Satheesh, S.; Ma, S.; Huang, Z.; Karpathy, A.; Khosla, A.; Bernstein, M. Imagenet large scale visual recognition challenge. Int. J. Comput. Vis. 2015, 115, 211–252.

28. Wang, M.; Xing, J.; Liu, Y. Actionclip: A new paradigm for video action recognition. arXiv 2021, arXiv:2109.08472.

29. Yang, T.; Zhu, Y.; Xie, Y.; Zhang, A.; Chen, C.; Li, M. Aim: Adapting image models for efficient video action recognition. arXiv 2023, arXiv:2302.03024.

30. Park, J.; Lee, J.; Sohn, K. Dual-path adaptation from image to video transformers. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: Piscataway, NJ, USA, 2023; pp. 2203–2213.

31. Zhu, M.; Wang, Z.; Hu, M.; Dang, R.; Lin, X.; Zhou, X.; Liu, C.; Chen, Q. Mote: Reconciling generalization with specialization for visual-language to video knowledge transfer. Adv. Neural Inf. Process. Syst. 2024, 37, 55403–55424.

32. Zhang, W.; Wan, C.; Liu, T.; Tian, X.; Shen, X.; Ye, J. Enhanced motion-text alignment for image-to-video transfer learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: Piscataway, NJ, USA, 2024; pp. 18504–18515.

33. Yang, Y.; Xing, Z.; Yu, L.; Fu, H.; Huang, C.; Zhu, L. Vivim: A Video Vision Mamba for Ultrasound Video Segmentation. IEEE Trans. Circuits Syst. Video Technol. 2025, 35, 10293–10304.

34. Song, X.; Tian, W.; Zhu, Q.; Zhang, X. VideoMamba++: Integrating state space model with dual attention for enhanced video understanding. Image Vis. Comput. 2025, 161, 105609.

35. Hu, Y.; Zhao, J.; Qi, C.; Qiang, Y.; Zhao, J.; Pei, B. VC-Mamba: Causal Mamba representation consistency for video implicit understanding. Knowl.-Based Syst. 2025, 317, 113437.

36. Yang, Y.; Ma, C.; Mao, Z.; Yao, J.; Zhang, Y.; Wang, Y. MoMa: Modulating Mamba for Adapting Image Foundation Models to Video Recognition. arXiv 2025, arXiv:2506.23283.

37. Li, K.; Wang, Y.; Li, Y.; Wang, Y.; He, Y.; Wang, L.; Qiao, Y. Unmasked teacher: Towards training-efficient video foundation models. In Proceedings of the IEEE/CVF International Conference on Computer Vision; IEEE: Piscataway, NJ, USA, 2023; pp. 19948–19960.

38. Hao, Y.; Zhou, D.; Wang, Z.; Ngo, C.W.; Wang, M. Posmlp-video: Spatial and temporal relative position encoding for efficient video recognition. Int. J. Comput. Vis. 2024, 132, 5820–5840.

39. Maaten, L.V.D.; Hinton, G. Visualizing data using t-SNE. J. Mach. Learn. Res. 2008, 9, 2579–2605.

40. Tong, Z.; Song, Y.; Wang, J.; Wang, L. Videomae: Masked autoencoders are data-efficient learners for self-supervised video pre-training. Adv. Neural Inf. Process. Syst. 2022, 35, 10078–10093.

41. Wang, L.; Huang, B.; Zhao, Z.; Tong, Z.; He, Y.; Wang, Y.; Qiao, Y. Videomae v2: Scaling video masked autoencoders with dual masking. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: Piscataway, NJ, USA, 2023; pp. 14549–14560.

42. Lin, X.; Petroni, F.; Bertasius, G.; Rohrbach, M.; Chang, S.F.; Torresani, L. Learning to recognize procedural activities with distant supervision. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: Piscataway, NJ, USA, 2022; pp. 13853–13863.

43. Islam, M.M.; Bertasius, G. Long movie clip classification with state-space video models. In European Conference on Computer Vision; Springer Nature: Cham, Switzerland, 2022; pp. 87–104.

44. Han, T.; Xie, W.; Zisserman, A. Turbo training with token dropout. arXiv 2022, arXiv:2210.04889.

| Method | Pre-Training | Params | Fr. × Cr. × Cl. | FLOPs | Top-1 | Top-5 |
|---|---|---|---|---|---|---|
| ActionCLIP-B/16 [28] | CLIP-400M | 142M | 32 × 3 × 10 | 16.89T | 83.8% | 96.2% |
| UniFormerV2-B/16 [19] | CLIP-400M | 115M | 8 × 3 × 4 | 1.8T | 85.6% | 97.0% |
| AIM-L/14 [29] | CLIP-400M | 341M | 8 × 3 × 1 | 0.93T | 86.8% | 97.2% |
| DUALPATH-B/16 [30] | CLIP-400M | 96M | 32 × 3 × 1 | 2.1T | 85.4% | 97.1% |
| MoTE-L/14 [31] | CLIP-400M | 346.6M | 8 × 3 × 4 | 7.788T | 86.8% | 97.5% |
| MoTED-B/16 [32] | CLIP-400M | 116M | 32 × 3 × 1 | 2.04T | 86.2% | 97.5% |
| VideoMamba-M [3] | IN-1K | 74M | 16 × 3 × 4 | 2.424T | 81.9% | 95.4% |
| StableMamba-M [21] | IN-1K | 76M | 16 × 3 × 4 | 2.472T | 82.5% | - |
| VideoMambaPro-Ti [20] | IN-1K | 7M | 32 × 3 × 4 | 0.4T | 85.8% | 96.9% |
| ViViM-S [33] | IN-1K | 26M | 16 × 3 × 4 | 0.816T | 80.1% | 94.1% |
| VideoMamba++ [34] | IN-1K | 21M | 64 × 3 × 4 | 1.62T | 83.3% | 96.0% |
| VideoMAP-M [22] | IN-1K | 96M | 16 × 3 × 4 | - | 85.8% | 97.3% |
| VCMamba [35] | - | 79M | - × 3 × 4 | 0.261T | 87.3% | 97.8% |
| MoMa-L/14 [36] | CLIP-400M | 342M | 16 × 1 × 3 | 4.152T | 87.8% | 98.0% |
| ETMamba (Ours) | IN-1K | 97M | 16 × 3 × 4 | 1.704T | 88.3% | 98.5% |

| Method | Pre-Training | Params | Fr. × Cr. × Cl. | FLOPs | Top-1 | Top-5 |
|---|---|---|---|---|---|---|
| UniFormerV2-B/16 [19] | CLIP-400M | 163M | 16 × 3 × 1 | 0.6T | 69.5% | 92.3% |
| UMT-B800e [37] | CLIP-400M | 87M | 8 × 3 × 2 | 1.08T | 70.8% | 92.6% |
| PosMLP-Video-L [38] | - | 35.4M | 16 × 3 × 1 | 0.338T | 70.3% | 92.3% |
| MoTED-B [32] | CLIP-400M | 112M | 32 × 3 × 1 | 2.04T | 71.9% | 92.7% |
| VideoMamba-M [3] | IN-1K | 74M | 16 × 3 × 4 | 2.424T | 68.3% | 91.4% |
| VideoMamba++ [34] | IN-1K | 16M | 56 × 3 × 4 | 0.672T | 69.6% | 92.2% |
| MoMa-L/14 [36] | CLIP-400M | 342M | 16 × 1 × 3 | 8.304T | 73.8% | 93.6% |
| ETMamba (Ours) | IN-1K | 97M | 16 × 3 × 4 | 1.704T | 74.6% | 94.1% |

| Method | Pre-Training | Params | Top-1 |
|---|---|---|---|
| VideoMAE [40] | K400 | 87M | 73.3% |
| VideoMAE V2 [41] | - | 1050M | 88.1% |
| VideoMamba-M [3] | K400 | 74M | 68.6% |
| VideoMambaPro-M [20] | IN-1K | 72M | 63.2% |
| Mamba-ND [24] | IN-1K | 36M | 60.9% |
| ETMamba (Ours) | K400 | 97M | 75.7% |

| Method | End-to-End | Pre-Training | Frames | Top-1 |
|---|---|---|---|---|
| Distant Supervision [42] | × | IN-21K+HTM | - | 89.9% |
| ViS4mer [43] | × | IN-21K+K600 | - | 88.2% |
| Turbo [44] | × | K400+HTM-AA | 32 | 91.3% |
| VideoMamba-M [3] | ✓ | K400 | 64 | 95.8% |
| MoMa-L/14 [36] | ✓ | K400+CLIP-400M | 64 | 96.9% |
| VideoMAP-M [22] | ✓ | K400 | 24 | 97.9% |
| ETMamba (Ours) | ✓ | K400 | 64 | 98.1% |

| Setting | STFP | EBS | STCM | Top-1 (K400) | Top-1 (SSv2) |
|---|---|---|---|---|---|
| (a) | × | × | × | 81.9% | 68.3% |
| (b) | ✓ | × | × | 85.1% (+3.2) | 70.7% (+2.4) |
| (c) | ✓ | ✓ | × | 86.4% (+1.3) | 72.8% (+2.1) |
| (d) | ✓ | ✓ | ✓ | 88.3% (+1.9) | 74.6% (+1.8) |
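The parenthesised gains in the ablation table are each measured against the preceding row, not against baseline (a). The short Python check below recomputes them from the Top-1 values in the table and also derives the cumulative gain of the full model (d) over (a). It is only an arithmetic sketch: the names `ABLATION`, `stepwise_gains`, and `total_gain` are illustrative and do not come from the paper.

```python
# Top-1 accuracies (%) copied from the ablation table, rows (a) to (d).
ABLATION = {
    "K400": [81.9, 85.1, 86.4, 88.3],
    "SSv2": [68.3, 70.7, 72.8, 74.6],
}


def stepwise_gains(scores):
    """Gain of each row over the previous row, rounded to one decimal."""
    return [round(curr - prev, 1) for prev, curr in zip(scores, scores[1:])]


def total_gain(scores):
    """Gain of the last row over the first row, rounded to one decimal."""
    return round(scores[-1] - scores[0], 1)


if __name__ == "__main__":
    for dataset, scores in ABLATION.items():
        print(dataset, stepwise_gains(scores), total_gain(scores))
```

Under these numbers, the three modules together account for a gain of 6.4 points on K400 and 6.3 points on SSv2 relative to setting (a), and the recomputed step-wise gains agree with the values printed in the table.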

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Hong, R.; Wen, C.; Sun, P.; Zhang, L.; Niu, Z.; Shi, Y.; Li, C.; Li, M.; Su, H.; Chen, H. ETMamba: An Effective Temporal Model for Video Action Recognition. Electronics 2026, 15, 1338. https://doi.org/10.3390/electronics15061338
