1. Introduction

The integration of artificial intelligence (AI) into digital games has advanced rapidly in recent years, enabling not only competitive performance but also more human-like, creative, and socially interactive behaviors [1]. Beyond traditional rule-based game AI, recent research has explored learning-based approaches that allow AI agents to perceive, generate, and reason about complex player actions, such as speech, gestures, and visual expressions [2,3,4]. Among these modalities, drawing-based interaction presents a unique challenge, as it involves both temporal dynamics and semantic ambiguity inherent in human sketches.

Social deduction games, such as Werewolf, represent a particularly challenging domain for game AI [5,6]. These games require players to infer hidden roles and intentions through indirect and often ambiguous cues rather than explicit actions. When such games incorporate creative activities, such as collaborative drawing, the difficulty increases further, as the AI must interpret incomplete and evolving visual information while considering social context and deception. Despite the growing interest in AI for asymmetric and social games, research on AI agents capable of understanding and participating in drawing-based social deduction games remains limited.

To address this gap, we focus on Drawing Werewolf (also known as A Fake Artist Goes to New York), a multiplayer game in which players collaboratively construct a drawing by adding strokes one by one while attempting to identify a hidden “Werewolf” player who does not know the drawing theme. In this game, players must infer the theme from partial drawing sequences, often at very early stages, and use this information to support both drawing decisions and social reasoning. For an AI player to function effectively in this setting, it is essential to estimate the drawing theme from incomplete stroke sequences and to do so incrementally as the drawing progresses.

In our previous work, we developed the Drawing Werewolf game system and implemented a preliminary AI player using SketchRNN trained on the “Quick, Draw!” dataset, demonstrating the feasibility of integrating a generative drawing model into an asymmetric multiplayer game environment [7]. While this approach enabled AI-driven drawing generation, it revealed a critical limitation: the AI lacked the ability to infer the drawing theme from partial sketches, which is a fundamental requirement for strategic reasoning and role-dependent behavior in the game.

In this study, we address the problem of inferring drawing targets from partial sketch sequences toward building a more intelligent AI player for Drawing Werewolf. Specifically, we compare a unidirectional Long Short-Term Memory-based model (UniLSTM) and a Transformer-based model for classifying drawing themes from prefixes of stroke sequences. Using the animal categories of the “Quick, Draw!” dataset, we evaluate how accurately each model can estimate the drawing target at different stages of the drawing process, ranging from very early strokes to more complete sketches.

The contributions of this paper are as follows:

We formulate incremental sketch classification under strict causal constraints, reflecting real-time inference conditions in drawing-based social deduction games.

We provide a systematic comparison between unidirectional recurrent modeling and causal self-attention modeling across multiple prefix lengths, enabling detailed analysis of early-stage prediction behavior.

We demonstrate that the advantage of self-attention is particularly pronounced in highly incomplete sketch stages, revealing stage-dependent modeling characteristics that have not been systematically analyzed in prior sketch recognition studies.

By bridging sketch recognition, sequence modeling, and game AI, this work contributes to the broader understanding of how AI agents can participate meaningfully in creative, socially interactive games that rely on ambiguous and evolving visual information.

Beyond the context of drawing-based games, incremental inference from partially observed data is a broader challenge in computer vision and real-time perception systems. Many practical applications, such as traffic scene enhancement, defect monitoring, and inspection systems, require models to operate under incomplete or progressively revealed information. Recent studies have demonstrated the effectiveness of advanced normalization techniques and attention-based architectures for robust visual understanding in such settings [8,9]. Positioning incremental sketch inference within this broader computer vision perspective highlights the general relevance of our formulation beyond game-specific applications.

The remainder of this paper is organized as follows. Section 2 reviews related work on artificial intelligence for social deduction games, sketch-based communication, and learning-based sketch recognition. Section 3 introduces the Drawing Werewolf game and discusses its characteristics from the perspective of asymmetric information and partial observability. Section 4 outlines the key challenges involved in constructing an AI player for Drawing Werewolf. Section 5 describes the UniLSTM and Transformer-based models adopted in this study and explains the incremental sketch recognition framework. Section 6 presents the experimental setup, including the dataset, preprocessing, model configurations, and evaluation protocol. Section 7 reports experimental results and provides both quantitative and class-wise analyses. Section 8 discusses the implications of the results for sketch-based inference and AI players in drawing-based social deduction games. Finally, Section 9 concludes the paper and outlines directions for future work.

2. Related Works

Research related to this study can be broadly categorized into four areas:

Artificial intelligence for asymmetric and social deduction games.

Sketch-based communication and collaborative drawing.

Learning-based sketch representation and recognition.

Sequence modeling for partial and incremental sketch inference.

2.1. AI in Asymmetric and Social Deduction Games

Artificial intelligence has achieved remarkable success in a wide range of games, including board games, real-time strategy games, and multiplayer online games. In particular, reinforcement learning combined with deep neural networks has enabled AI agents to reach or surpass human-level performance in complex environments, as exemplified by AlphaGo and AlphaStar [10,11]. However, most of these successes have been demonstrated in symmetric games, where players share identical information, roles, and objectives.

In contrast, asymmetric and social deduction games such as Mafia or Werewolf pose fundamentally different challenges. Players are assigned hidden roles with conflicting goals, and successful play depends on reasoning about others’ intentions under incomplete and deceptive information [6]. Prior studies have explored asymmetric games using symbolic reasoning, constraint-based inference, and reinforcement learning frameworks. Representative examples include deduction-oriented systems for games such as Cluedo [12] and learning-based approaches that address role imbalance, partial observability, and strategic diversity in asymmetric environments [13,14].

More recently, research has begun to examine social deduction games from a multimodal and human-centered perspective. For example, Lai et al. [15] introduced a multimodal dataset collected from a multiplayer social deduction game, incorporating dialogue transcripts, video recordings, and annotations of persuasion strategies. Their analysis demonstrates that non-verbal cues, including facial expressions and gestures, play an important role in persuasion and outcome prediction. While such studies highlight the limitations of text-only modeling, the AI systems considered primarily analyze human behavior rather than actively participating in the game.

Complementary insights are provided by theoretical and human-centered studies of social deduction games. Tilton [16] analyzed games such as Are You a Werewolf? and The Resistance as pedagogical tools for studying small-group communication. This work shows that social deduction games naturally elicit phenomena such as leadership emergence, groupthink, and deception under conditions of hidden roles and incomplete information. Similarly, research on asymmetric role design in games has demonstrated that intentional imbalance in abilities or information can foster communication and interdependence among players [17].

Despite these advances, existing studies largely focus on dialogue, symbolic actions, or observable behavioral cues. The problem of enabling AI agents to infer hidden intentions or roles from incremental, user-generated, and inherently ambiguous content remains underexplored. In particular, few works consider AI agents that must operate within creative action spaces, where meaning emerges gradually through interaction.

Drawing Werewolf extends prior social deduction games by replacing verbal communication with collaborative drawing. This shift introduces both informational and representational asymmetry, as intentions are conveyed through incomplete and evolving sketches rather than explicit statements or predefined actions. As a result, AI agents in this setting must reason under uncertainty using visual, temporal, and creative signals, highlighting the need for models capable of partial and incremental inference.

2.2. Sketch-Based Communication and Collaborative Drawing

Sketches have long been recognized as an effective medium for communication, especially in collaborative and creative tasks. In artificial intelligence research, sketch-based communication has been explored through referential and cooperative games. CoDraw [18] and Iconary [19] demonstrated that drawings can function as grounded communication channels between agents, enabling goal-driven interaction and shared understanding through visual symbols.

Related work on emergent communication has further shown that graphical conventions can arise through repeated interaction. Studies on emergent graphical conventions in visual communication games [20] reveal that agents can gradually shift from iconic to symbolic representations depending on context and shared experience. Similarly, research on pragmatic inference and visual abstraction [21] highlights how contextual reasoning allows flexible interpretation of partial and abstract drawings.

Beyond AI-centered studies, research in design and engineering emphasizes the role of collaborative sketching as a boundary object that facilitates idea sharing and negotiation among participants with different expertise [22,23]. These findings underscore the inherent ambiguity and flexibility of sketches, properties that are particularly suitable for social deduction games where uncertainty and interpretation play central roles.

2.3. Learning-Based Sketch Representation and Recognition

Sketch recognition has long been studied as a distinct research area from image recognition, due to the sparse, abstract, and highly variable nature of hand-drawn sketches. Early work highlighted the importance of temporal stroke patterns for online sketch interpretation, demonstrating that stroke order provides valuable cues beyond static shape information [24].

Recent advances increasingly adopt deep learning methods. Transformer-based models such as Sketchformer [25] represent sketches as sequences of strokes and employ self-attention mechanisms to capture long-range dependencies, achieving strong performance in sketch classification and retrieval tasks. In addition, explainability-oriented approaches, such as SketchXAI [26], investigate how individual strokes contribute to model decisions, further emphasizing the semantic importance of stroke order and structure.

These studies collectively suggest that vector-based and sequence-aware representations are well suited for modeling sketches, especially when temporal information is essential.

2.4. Sequence Models for Partial Sketch Inference

Sequence models such as Long Short-Term Memory (LSTM) networks and Transformer-based architectures have shown strong performance in modeling temporal data across various domains [27,28]. In sketch recognition, these models can capture dependencies between strokes and extract semantic information from drawing trajectories [29].

Recent sketch recognition approaches, including Sketchformer [25] and Sketch-BERT [30], have demonstrated strong performance by leveraging bidirectional attention mechanisms and global context modeling. Graph-enhanced Transformer variants [29] further incorporate structural relationships among stroke segments to improve representation learning.

However, many existing studies focus on recognizing completed sketches, where the full stroke sequence is available at inference time. Bidirectional models are commonly employed, implicitly assuming access to future strokes [30]. While effective for offline recognition, such assumptions are unsuitable for real-time or interactive scenarios.

Transformer and graph-based architectures have also been applied in related sequential classification domains beyond sketch recognition, including defect monitoring and inspection tasks [31,32]. These studies demonstrate the applicability of attention-based and graph-structured models for analyzing temporally evolving visual patterns. However, their evaluation settings typically assume fully observed inputs rather than incremental prefix-based inference under causal constraints.

In social deduction games such as Drawing Werewolf, early-stage inference from partial stroke sequences is essential. Players often make judgments after observing only a few strokes, and AI agents must similarly estimate drawing targets without access to the complete sequence. Although prior work has highlighted the importance of temporal patterns in sketches [24], systematic evaluations of unidirectional sequence models under incremental prefix-based settings remain limited.

In contrast, the incremental causal setting considered in this study restricts models from attending to future strokes and requires predictions to be updated as new strokes are observed. This setting more closely reflects real-time gameplay conditions and provides a framework for analyzing how different sequence modeling architectures behave during the drawing process.

2.5. Positioning of This Study

While Transformer-based architectures such as Sketchformer and Sketch-BERT have demonstrated strong performance in sketch recognition, these studies primarily evaluate models under fully observed and offline settings. In contrast, our work explicitly constrains both recurrent and attention-based models to operate under causal, unidirectional conditions, reflecting real-time inference scenarios where future strokes are unavailable.

Furthermore, prior Transformer-based sketch studies focus on improving final recognition accuracy on completed sketches. The incremental behavior of these models under partial and progressively revealed input has not been systematically analyzed. Our framework evaluates prediction performance at multiple stroke-prefix stages, enabling fine-grained analysis of how model characteristics manifest across drawing progression.

To the best of our knowledge, a systematic comparison between unidirectional recurrent models and causally constrained Transformer models under incremental sketch observation has not been previously reported.

3. Drawing Werewolf Game

Drawing Werewolf is a social deduction game derived from the classic Werewolf (Mafia) game, in which players attempt to identify hidden roles through indirect information. Unlike the original Werewolf game, which relies primarily on verbal communication and discussion, Drawing Werewolf replaces spoken clues with collaborative drawing, fundamentally altering how information is expressed and interpreted.

3.1. Game Overview

In Drawing Werewolf, multiple players participate in a round-based game. At the beginning of the game, players are secretly assigned roles. In our implemented setting, the game is played by four players consisting of three Villagers and one Werewolf. A common category is provided to all players; additionally, the Villagers are given the specific target theme within that category, whereas the Werewolf receives only the category and does not know the theme. Players then take turns drawing on a shared canvas, incrementally adding strokes to a single shared sketch.

The Villagers aim to identify the Werewolf while preventing the theme from being revealed through the drawing process. In contrast, the Werewolf attempts to avoid being detected and/or to infer the hidden theme from the evolving sketch. This asymmetric information structure and conflicting objectives make Drawing Werewolf a social deduction game characterized by hidden roles and partially observable, temporally evolving visual evidence.

3.2. Drawing as an Information Medium

A key characteristic of Drawing Werewolf is that drawing serves as the sole medium of communication. Unlike language-based interaction, drawings are inherently ambiguous, abstract, and open to multiple interpretations. The meaning of a drawing is not explicitly encoded but emerges gradually through the accumulation of strokes.

Moreover, information in Drawing Werewolf is temporal. Early strokes often provide only vague hints, while later strokes refine or clarify the intended concept. Human players naturally form hypotheses during the early stages of drawing and revise them as new strokes are added. This incremental interpretation process plays a critical role in gameplay, as decisions and suspicions may arise before a drawing is completed.

In addition, the intent behind drawing differs fundamentally depending on the assigned role. Villagers are required to produce drawings that are consistent with the given theme, while simultaneously avoiding overly explicit representations that could reveal the theme to the Werewolf. In contrast, the Werewolf must infer the hidden theme from other players’ drawings while generating strokes that remain plausible within the shared category and do not raise suspicion. This role-dependent drawing behavior introduces an additional layer of complexity, making drawing generation and interpretation in Drawing Werewolf particularly challenging for both human players and AI agents.

3.3. Asymmetry and Partial Observability

From a game-theoretic perspective, Drawing Werewolf exhibits strong asymmetry. Players have unequal information due to hidden roles and the asymmetric disclosure of the theme: Villagers know the specific theme, whereas the Werewolf is given only the shared category and must infer the theme from the evolving drawing. As a result, players pursue opposing objectives under different information conditions.

In addition, the drawing process introduces partial observability over time: at any given moment, only a prefix of the full stroke sequence is available. This structure differs from many conventional game AI benchmarks, where agents act on well-defined states and receive explicit observations. In Drawing Werewolf, players must reason about intentions and consistency based on incomplete, noisy, and temporally evolving visual evidence. Such characteristics make the game particularly challenging for AI agents and highlight the need for models capable of incremental inference under uncertainty.

3.4. Relevance to AI Research

Drawing Werewolf provides a unique testbed for artificial intelligence research at the intersection of game AI, sketch understanding, and sequential inference. Unlike image classification tasks that operate on completed drawings, this game requires real-time interpretation of partial sketch sequences. Furthermore, unlike dialogue-based social deduction games, Drawing Werewolf demands reasoning from non-verbal and creative expressions.

These properties make Drawing Werewolf well suited for investigating how different sequence models process incremental visual information and how early-stage predictions evolve as additional strokes are observed. Consequently, the game offers a meaningful context for evaluating AI players that must infer hidden roles and themes from partial and ambiguous visual inputs.

3.5. Implemented Drawing Werewolf System

To evaluate AI players under realistic gameplay conditions, we implemented an original Drawing Werewolf game system for research purposes.

Figure 1 shows a screenshot of the implemented system during gameplay. The system allows multiple players to participate in a round-based game in which drawings are created collaboratively on a shared canvas.

All drawing actions are recorded as temporal stroke sequences, preserving both spatial and temporal information. Although the AI models evaluated in this study were trained and tested using the “Quick, Draw!” dataset, the implemented system is designed to collect drawing sequences in the same format, enabling future integration of in-game data for training and evaluation. The system enables controlled experiments by standardizing game rules, drawing order, and the number of strokes per player, while maintaining gameplay characteristics similar to those experienced by human players.

4. Issues for Creating an AI Player in Drawing Werewolf

Creating an AI player for Drawing Werewolf presents several challenges that go beyond those encountered in conventional game AI or sketch recognition tasks. These challenges arise from the combination of asymmetric information, temporal incompleteness, and role-dependent drawing behavior.

4.1. Partial and Incremental Observation

Unlike standard image recognition tasks that operate on completed drawings, an AI player in Drawing Werewolf must interpret sketches that are incomplete and continuously evolving. At any given time step, only a prefix of the full stroke sequence is observable, and critical semantic cues may not yet be present.

As a result, the AI must generate predictions based on limited visual evidence and update its internal representation as new strokes are added. This requirement necessitates models capable of incremental inference rather than post hoc recognition, making early-stage interpretation a key difficulty in the game.

4.2. Asymmetric Information and Hidden Intentions

Another major challenge lies in the asymmetric information structure of the game. Villagers and the Werewolf operate under fundamentally different knowledge conditions: Villagers know the target theme, whereas the Werewolf must infer it from other players’ drawings while avoiding suspicion.

For an AI player, this implies that drawing or recognition cannot be treated as a purely perceptual task. Instead, the AI must reason about hidden intentions behind each drawing action and consider how its own behavior may be interpreted by other players. Modeling such intent-aware behavior is particularly difficult when the observations are ambiguous and non-verbal.

4.3. Role-Dependent Objectives and Strategies

The objectives of an AI player vary significantly depending on its assigned role. As a Villager, the AI must produce drawings that are consistent with the theme while avoiding overly explicit representations that could reveal the theme to the Werewolf. Conversely, as a Werewolf, the AI must draw in a way that remains consistent with the shared category while gradually inferring the hidden theme.

These conflicting requirements lead to role-dependent strategies that cannot be captured by a single static policy. Instead, the AI must adapt its behavior based on both its role and the evolving game state, further increasing the complexity of the problem.

4.4. Data Representation and Temporal Modeling

Finally, the representation of drawing data itself poses a challenge. Stroke-based drawing data is naturally sequential and varies in length, order, and spatial structure. Simple image-based representations discard temporal information that is crucial for early inference.

Therefore, effective AI players must leverage sequence modeling techniques that preserve temporal dependencies and can handle variable-length inputs. This requirement motivates the use of models such as Long Short-Term Memory networks and Transformer-based architectures, which are capable of modeling temporal dynamics in drawing sequences.

4.5. Scope of This Study

Among the challenges discussed above, this study primarily focuses on the problem of partial and incremental observation in Drawing Werewolf and its implications for temporal modeling of sketch sequences. Specifically, we investigate how different sequence models process incomplete stroke sequences and how recognition performance evolves as additional strokes are observed.

While the game inherently involves asymmetric information and role-dependent objectives, this paper does not attempt to model full strategic behavior or intent-aware drawing generation. Instead, these aspects are simplified by evaluating AI models on sketch recognition tasks using the “Quick, Draw!” dataset, which provides controlled drawing sequences with known labels.

By narrowing the scope in this manner, the present study aims to clarify the capabilities and limitations of sequence models in handling partial sketch information, thereby establishing a foundation for future work on more complex AI players that fully integrate gameplay dynamics.

5. Long Short-Term Memory and Transformer

The core contribution of this study lies not in introducing a novel neural architecture, but in formulating and systematically evaluating incremental sketch classification under strict causal constraints. Unlike conventional sketch recognition settings that assume access to complete drawings, the proposed framework explicitly models real-time inference conditions by generating stroke-prefix inputs and evaluating prediction behavior at each drawing stage. This enables fine-grained analysis of stage-dependent inference characteristics that have not been systematically investigated in prior work. UniLSTM and Transformer models are adopted as representative sequence modeling approaches within this framework.

Figure 2 illustrates the online incremental inference loop in the context of Drawing Werewolf. At each time step $t$, a newly drawn stroke is incorporated into the observed prefix, and theme probabilities are updated under strict causal constraints without access to future strokes. This online loop reflects the real-time gameplay setting, where AI players must continuously revise their belief about the drawing theme as the sketch evolves.
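The online loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implemented system: `online_inference`, `toy_classify`, and the theme names are hypothetical, and the toy classifier is a placeholder that stands in for a trained model.

```python
def online_inference(stroke_stream, classify, themes):
    """Yield (t, theme probabilities) after each newly observed stroke.

    `classify` is any callable mapping the observed prefix to one
    probability per theme; it never receives future strokes.
    """
    observed = []  # prefix of strokes seen so far
    for stroke in stroke_stream:
        observed.append(stroke)
        probs = classify(list(observed))  # pass a copy of the prefix only
        yield len(observed), dict(zip(themes, probs))


def toy_classify(prefix):
    # Placeholder, NOT a trained model: confidence in the first theme
    # simply grows with the number of observed strokes.
    p = min(0.9, 0.5 + 0.1 * len(prefix))
    return [p, 1.0 - p]


if __name__ == "__main__":
    strokes = ["stroke_1", "stroke_2", "stroke_3"]  # stand-ins for stroke data
    for t, belief in online_inference(strokes, toy_classify, ["cat", "dog"]):
        print(t, belief)
```

Writing the loop as a generator keeps the causal constraint explicit: the belief at step $t$ is computed before stroke $t+1$ is read from the stream.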

This study focuses on sequence modeling approaches that can handle partial and incremental observations of sketch data. In particular, we investigate a unidirectional Long Short-Term Memory (UniLSTM) model as a recurrent baseline and a Transformer-based model as an attention-based alternative.

Figure 3 and Figure 4 illustrate the internal architectures of the two models, while Figure 5 summarizes the conceptual comparison between conventional offline sketch recognition and the proposed incremental recognition framework.

5.1. Unidirectional Long Short-Term Memory

Long Short-Term Memory (LSTM) networks are a class of recurrent neural networks designed to model long-range dependencies in sequential data through gated mechanisms. In sketch recognition tasks, LSTMs process stroke sequences in a time-step-wise manner, updating a hidden state as each stroke is observed.

Figure 3 shows the UniLSTM-based sketch recognition model used in this study. Temporal stroke sequences are first embedded using a linear layer and then processed sequentially by a unidirectional LSTM. At each time step, the current hidden state represents the accumulated information from the observed prefix of the sketch. Classification is performed based on the hidden state at the current time step, enabling both early-stage and final predictions.
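To make the role of the hidden state concrete, the following pure-Python sketch implements a single LSTM layer and checks that the hidden state after $t$ steps depends only on the first $t$ inputs. It is an illustration under assumptions: the linear embedding and the classifier of Figure 3 are omitted, the weights are random rather than trained, and the names `make_lstm`, `lstm_step`, and `run_unilstm` are hypothetical.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def make_lstm(input_size, hidden_size, seed=0):
    """Random (untrained) weights for the gates i, f, o and the candidate g."""
    rng = random.Random(seed)

    def mat(rows, cols):
        return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

    return {name: (mat(hidden_size, input_size + hidden_size), [0.0] * hidden_size)
            for name in "ifog"}


def lstm_step(params, x, h, c):
    """One LSTM update from the current input x and the previous state (h, c)."""
    z = list(x) + list(h)  # concatenate input and previous hidden state

    def gate(name, act):
        w, b = params[name]
        return [act(sum(wi * zi for wi, zi in zip(row, z)) + bi)
                for row, bi in zip(w, b)]

    i, f, o = gate("i", sigmoid), gate("f", sigmoid), gate("o", sigmoid)
    g = gate("g", math.tanh)
    c_new = [fj * cj + ij * gj for fj, cj, ij, gj in zip(f, c, i, g)]
    h_new = [oj * math.tanh(cj) for oj, cj in zip(o, c_new)]
    return h_new, c_new


def run_unilstm(params, seq, hidden_size):
    """Return the hidden state after every time step (one per observed point)."""
    h, c = [0.0] * hidden_size, [0.0] * hidden_size
    states = []
    for x in seq:
        h, c = lstm_step(params, x, h, c)
        states.append(h)
    return states


if __name__ == "__main__":
    params = make_lstm(input_size=5, hidden_size=4)
    seq = [[0.1 * k, -0.2, 1.0, 0.0, 0.0] for k in range(6)]
    full = run_unilstm(params, seq, 4)
    prefix = run_unilstm(params, seq[:3], 4)
    # The state after 3 steps is identical whether or not later inputs exist.
    print(prefix[-1] == full[2])
```

Because each state is computed from past inputs only, a classifier attached to the state at step $t$ yields a valid early-stage prediction without re-running the model on the prefix.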

Although bidirectional LSTMs (BiLSTMs) are commonly used in offline sketch recognition tasks [33,34], this study adopts a unidirectional architecture. BiLSTMs require access to both past and future strokes, which is incompatible with the incremental inference setting considered here, where only partial sketches are available during early stages of drawing. The UniLSTM architecture therefore better reflects the real-time constraints of Drawing Werewolf, in which AI players must make predictions without observing future strokes.

5.2. Transformer-Based Model

Transformer-based models provide an alternative approach to sequence modeling by replacing recurrent computation with self-attention mechanisms. Self-attention allows the model to capture global dependencies among all observed strokes, potentially improving robustness when early visual cues are sparse or ambiguous.

Figure 4 illustrates the Transformer-based sketch recognition model employed in this study. Temporal stroke sequences are embedded using a linear layer and combined with temporal positional encoding to preserve stroke order information. The resulting representations are processed by a Transformer encoder composed of multi-head self-attention and position-wise feed-forward networks.

To ensure compatibility with incremental inference, the Transformer encoder is configured to operate under a causal attention setting, such that each stroke can attend only to previously observed strokes. After encoding, the representation of the final token (i.e., the most recently observed stroke) is used as the sequence representation and passed to a classifier for category prediction. This design enables the model to produce predictions from both completed sketches and partial sketch prefixes, since the representation of the final token summarizes all previously observed strokes.
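The effect of the causal attention setting can be illustrated with a small pure-Python sketch. It is a simplification under stated assumptions: a single attention head with identity query, key, and value projections, no positional encoding, and no feed-forward layer; the function name `causal_self_attention` is hypothetical and does not correspond to the model configuration used in the experiments.

```python
import math


def causal_self_attention(x):
    """Single-head scaled dot-product self-attention with a causal mask.

    x is a list of equal-length vectors (one per time step). Position i
    attends only to positions 0..i, so no future step is ever used.
    """
    d = len(x[0])
    out = []
    for i in range(len(x)):
        scores = [sum(a * b for a, b in zip(x[i], x[j])) / math.sqrt(d)
                  for j in range(i + 1)]  # the mask: j never exceeds i
        m = max(scores)  # subtract the maximum for a stable softmax
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        out.append([sum(w / total * x[j][k] for j, w in enumerate(weights))
                    for k in range(d)])
    return out


if __name__ == "__main__":
    x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    full = causal_self_attention(x)
    prefix = causal_self_attention(x[:2])
    # The final-token representation of the prefix matches position 2 of
    # the full sequence, because later tokens are masked out.
    print(prefix[-1] == full[1])
```

This property is what allows the final-token representation to serve as the summary of any observed prefix: under causal masking, appending later strokes does not change the representations of earlier positions.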

5.3. Conventional and Incremental Recognition Frameworks

Figure 5 contrasts the conventional sketch recognition framework with the incremental recognition framework considered in this study. In conventional approaches, classification is performed only after a sketch is fully completed, and intermediate predictions are not evaluated.

In contrast, the proposed framework evaluates model predictions at multiple stages of sketch completion. Partial stroke sequences are extracted at different time steps and independently fed into the recognition models, enabling analysis of how prediction confidence and accuracy evolve as additional strokes are observed. This framework is particularly suitable for Drawing Werewolf, where AI players must infer semantic information from incomplete drawings during the drawing process rather than after completion.
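A stage-wise evaluation of this kind can be sketched as a simple aggregation over per-prefix predictions. The record layout `(num_strokes_observed, true_label, predicted_label)` and the function name `stagewise_accuracy` are assumptions made for illustration, and the labels in the usage example are invented, not experimental results.

```python
from collections import defaultdict


def stagewise_accuracy(records):
    """Accuracy per prefix length.

    records: iterable of (num_strokes_observed, true_label, predicted_label).
    Returns a dict mapping each prefix length to its accuracy.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for k, y_true, y_pred in records:
        total[k] += 1
        correct[k] += int(y_true == y_pred)
    return {k: correct[k] / total[k] for k in sorted(total)}


if __name__ == "__main__":
    # Illustrative records only: two sketches evaluated at 1 and 2 strokes.
    records = [
        (1, "cat", "dog"), (1, "owl", "owl"),
        (2, "cat", "cat"), (2, "owl", "owl"),
    ]
    print(stagewise_accuracy(records))
```

Grouping by the number of observed strokes, rather than by sketch, makes it possible to compare models at the same stage of drawing even though sketches differ in total length.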

By comparing UniLSTM and Transformer-based models within this incremental recognition framework, this study aims not only to compare final recognition accuracy, but to analyze stage-wise prediction behavior under strictly causal constraints. Unlike conventional sketch recognition studies that evaluate models on fully observed sketches, our framework enables fine-grained examination of how architectural differences manifest across drawing progression.

In particular, this setting allows us to identify (1) at which stroke stages performance differences become significant, (2) how early incomplete cues are utilized by different sequence modeling strategies, and (3) whether global self-attention provides systematic advantages when semantic structure is still highly ambiguous. Such stage-dependent analysis has not been sufficiently explored in prior Transformer-based sketch recognition research, where evaluation is typically performed after full sequence completion.

Therefore, the incremental recognition framework serves not merely as an application setting for game AI, but as a methodological tool for analyzing temporal information utilization under partial observation.

6. Experimental Setup

This section describes the dataset, preprocessing procedures, model configurations, and evaluation protocol used in the experiments. All experiments were conducted using a fixed random seed to ensure reproducibility.

6.1. Dataset

Experiments were conducted using the “Quick, Draw!” dataset, focusing on 44 object classes belonging to the “animal” category. For each class, 70,000 sketch sequences were used for training and 2500 sequences for testing. In total, the models were trained on 3.08 million sequences ($44 \times 70{,}000$) and evaluated on 110,000 sequences ($44 \times 2500$).

All sketches are represented as temporal stroke sequences. During evaluation, each test sequence was classified incrementally using partial stroke prefixes corresponding to one stroke, the first two strokes, the first three strokes, and so on, up to the complete sequence.

The “Quick, Draw!” dataset was selected for three primary reasons. First, it provides large-scale stroke-level temporal annotations, which are essential for simulating incremental observation at stroke boundaries. Second, its standardized vector format ensures controlled and reproducible comparison between different sequence models. Third, the dataset allows systematic extraction of stroke-prefix segments across a wide range of object categories, enabling fine-grained analysis of stage-wise prediction behavior.

While sketches in “Quick, Draw!” are individually created and do not reflect collaborative drawing dynamics as in Drawing Werewolf, the dataset provides a controlled benchmark for isolating the effects of sequence modeling under partial observation. Future work will extend the evaluation to in-game player-generated drawings.

6.2. Preprocessing

Preprocessing was performed following the standard representation used in SketchRNN. Each sketch is originally provided as a sequence of absolute pen coordinates along with pen state information. For model input, sketches are converted into a five-dimensional vector representation $(\Delta x, \Delta y, p_1, p_2, p_3)$, where $\Delta x$ and $\Delta y$ denote coordinate differences between consecutive points, $p_1$ indicates pen-down, $p_2$ indicates pen-up, and $p_3$ represents the end-of-sequence token.
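As a rough illustration of this conversion, the following Python sketch turns absolute coordinates into five-dimensional rows. The input layout (a list of strokes, each a list of (x, y) points), the function name `to_stroke5`, and the use of the origin as the reference for the first offset are assumptions made for the example, not details reported in this paper.

```python
def to_stroke5(strokes):
    """Convert strokes given as lists of absolute (x, y) points into
    (dx, dy, p1, p2, p3) rows in the SketchRNN-style five-dimensional format."""
    rows = []
    prev_x, prev_y = 0.0, 0.0  # assumption: the first offset is taken from the origin
    for stroke in strokes:
        last = len(stroke) - 1
        for i, (x, y) in enumerate(stroke):
            pen_up = 1 if i == last else 0  # the pen lifts after the last point of a stroke
            rows.append((x - prev_x, y - prev_y, 1 - pen_up, pen_up, 0))
            prev_x, prev_y = x, y
    rows.append((0.0, 0.0, 0, 0, 1))  # end-of-sequence token
    return rows
```

Each row carries exactly one active pen-state flag, so the last three entries form a one-hot vector.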

The coordinate differences $\Delta x$ and $\Delta y$ are normalized to have zero mean and unit variance, computed based on the training data. This normalization reduces variations caused by drawing scale and stroke speed across different sketches.
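A minimal sketch of this normalization is given below. It assumes that statistics are computed separately for the two offset dimensions over all rows of the training sequences; whether end-of-sequence rows are included and whether the two dimensions share statistics are not specified above, and the function names are illustrative.

```python
import statistics

def fit_offset_stats(train_sequences):
    """Return (mean_dx, std_dx, mean_dy, std_dy) over all training rows."""
    dxs = [row[0] for seq in train_sequences for row in seq]
    dys = [row[1] for seq in train_sequences for row in seq]
    # Fall back to 1.0 if a dimension is constant, to avoid division by zero.
    return (statistics.fmean(dxs), statistics.pstdev(dxs) or 1.0,
            statistics.fmean(dys), statistics.pstdev(dys) or 1.0)

def normalize(seq, stats):
    """Standardize the offsets of one sequence; pen-state flags are left untouched."""
    mean_dx, std_dx, mean_dy, std_dy = stats
    return [((dx - mean_dx) / std_dx, (dy - mean_dy) / std_dy, p1, p2, p3)
            for dx, dy, p1, p2, p3 in seq]
```

The same training-set statistics would be reused unchanged for test sequences and for every stroke prefix.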

To enable batch processing, stroke sequences are padded to a fixed maximum length. Padding masks are applied so that only valid sequence elements contribute to model computation. For the UniLSTM model, packed sequences are used to ignore padded elements, while for the Transformer model, attention masks are employed.
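The padding step can be sketched as follows. The all-zero padding row, the truncation of over-long sequences, and the convention that `True` marks a valid position are assumptions of this example.

```python
PAD_ROW = (0.0, 0.0, 0, 0, 0)  # assumption: padded positions are all-zero rows

def pad_batch(sequences, max_len):
    """Pad (or truncate) each sequence to max_len and return validity masks.

    A mask entry is True for a real sequence element and False for padding.
    """
    padded, masks = [], []
    for seq in sequences:
        seq = list(seq[:max_len])
        n = len(seq)
        padded.append(seq + [PAD_ROW] * (max_len - n))
        masks.append([True] * n + [False] * (max_len - n))
    return padded, masks
```

If a framework such as PyTorch is used, the true lengths would typically be passed to `torch.nn.utils.rnn.pack_padded_sequence` for the LSTM, while the Transformer would receive the mask through `src_key_padding_mask`, which marks padded positions with `True`, that is, the inverse of the mask returned here.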

In addition, partial stroke sequences are generated by truncating each sketch at the end of a given stroke. These truncated sequences serve as inputs for incremental recognition and reflect the conditions under which predictions must be made before a drawing is completed.

In the “Quick, Draw!” dataset, sketches are represented as sequences of points with associated pen states. A single stroke is defined as a continuous sequence of points between a pen-down and the subsequent pen-up event. Stroke boundaries are determined by the $p_2$ (pen-up) signal in the five-dimensional representation.

For incremental evaluation, prefixes are generated strictly at stroke boundaries. Specifically, a prefix corresponding to k strokes includes all points belonging to the first k complete strokes. No truncation is performed within a stroke.
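The prefix rule above can be sketched in a few lines of Python. The generator name `stroke_prefixes` is illustrative, and the example assumes rows in the (dx, dy, p1, p2, p3) layout; whether an end-of-sequence row is appended to each prefix before inference is not specified here, so the sketch leaves prefixes unterminated.

```python
def stroke_prefixes(seq):
    """Yield (k, prefix) for every prefix made of the first k complete strokes."""
    k = 0
    for i, (_, _, _, p2, p3) in enumerate(seq):
        if p3 == 1:  # end-of-sequence token: no further strokes
            break
        if p2 == 1:  # pen-up marks the last point of a stroke
            k += 1
            yield k, seq[: i + 1]
```

Because a prefix is emitted only at a pen-up row, no prefix ever ends in the middle of a stroke.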

6.3. Model Configuration

Both the UniLSTM and Transformer-based models are designed to support incremental inference, allowing predictions to be updated as new strokes are observed.

The UniLSTM model consists of stacked LSTM layers that process the input stroke sequence and produce a hidden representation at each time step. The hidden state is then passed to a fully connected classification layer to predict the drawing category.

The Transformer-based model is implemented as a causal Transformer encoder. Each encoder layer contains a multi-head self-attention module followed by a position-wise feed-forward network (FFN). To ensure compatibility with incremental recognition, causal masking is applied so that each token attends only to itself and to previously observed tokens. We adopt a pre-layer normalization (Pre-Norm) configuration to improve training stability.
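To make the effect of causal masking concrete, the following sketch computes single-head attention weights from a matrix of raw attention scores while hiding future positions. It is a simplified illustration in plain Python, not the multi-head implementation used in the experiments; the function names are hypothetical.

```python
import math

def causal_mask(n):
    """mask[i][j] is True when position i may attend to position j (j <= i)."""
    return [[j <= i for j in range(n)] for i in range(n)]

def causal_attention_weights(scores):
    """Row-wise softmax over the visible (non-future) positions only."""
    n = len(scores)
    weights = []
    for i in range(n):
        visible = scores[i][: i + 1]
        m = max(visible)  # subtract the maximum for numerical stability
        exps = [math.exp(s - m) for s in visible]
        z = sum(exps)
        weights.append([e / z for e in exps] + [0.0] * (n - i - 1))
    return weights
```

Since row i never depends on columns beyond i, the representation of an already observed token is unchanged when later strokes are appended, which is the property incremental recognition relies on.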

The input representation consists of five-dimensional stroke features (the coordinate differences $\Delta x$ and $\Delta y$ and a one-hot pen state), which are projected into the model embedding space before being processed by the network.

The detailed architectural and training hyperparameters used in our experiments are summarized in Table 1. Both models shared identical training settings in order to ensure a fair comparison.

Training was performed using the AdamW optimizer with cosine learning rate decay. The batch size was set to 64 and the models were trained for 20 epochs. Weight decay and gradient clipping were applied to stabilize optimization. No early stopping was used. All experiments were conducted using a single NVIDIA GPU. The approximate training time was 3 h for UniLSTM and 16 h for the Transformer.
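For reference, a cosine learning rate decay schedule can be sketched as below. The absence of warm-up, the minimum learning rate of zero, and per-step (rather than per-epoch) updates are assumptions of this example; the base learning rate is a parameter here because its value is not stated in this passage.

```python
import math

def cosine_lr(step, total_steps, base_lr, min_lr=0.0):
    """Cosine decay from base_lr at step 0 to min_lr at total_steps."""
    progress = min(max(step / total_steps, 0.0), 1.0)
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * progress))

# With 3.08 million training sequences and a batch size of 64, one epoch
# corresponds to 48,125 updates if every sequence is used once per epoch.
steps_per_epoch = 3_080_000 // 64
total_steps = 20 * steps_per_epoch
```

Under these assumptions, 20 epochs amount to 962,500 optimizer updates.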

6.4. Evaluation Protocol

Recognition performance is evaluated using classification accuracy at different stages of sketch completion. Accuracy is computed for each prefix length and compared against the performance obtained using complete sketches.
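The stage-wise accuracy computation can be sketched as follows, assuming that each evaluation record is a tuple of the prefix length k, the true class, and the predicted class. In this sketch a sketch with fewer than k strokes simply contributes no record at stage k; how such cases are handled in the reported results is not specified here.

```python
from collections import defaultdict

def accuracy_by_stroke_count(records):
    """Map each prefix length k to the fraction of correct predictions at that stage."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for k, y_true, y_pred in records:
        total[k] += 1
        correct[k] += int(y_true == y_pred)
    return {k: correct[k] / total[k] for k in sorted(total)}
```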

By analyzing early-stage and late-stage prediction accuracy, we assess the ability of each model to perform recognition under partial observation. This evaluation protocol is particularly relevant to Drawing Werewolf, where timely inference from incomplete sketches is essential for effective gameplay.

7. Experimental Results

7.1. Overall Classification Accuracy

Figure 6 shows the average classification accuracy of the Transformer-based model and the UniLSTM-based model as a function of the number of observed strokes. Each point represents the mean accuracy over 44 animal classes, evaluated on 2500 test sequences per class.

As shown in Figure 6, both models exhibit a monotonic increase in accuracy as more strokes are observed, reflecting the progressive disambiguation of sketches. However, the Transformer consistently outperforms UniLSTM across all stroke counts. The performance gap is particularly pronounced in the early stages of drawing, indicating that the Transformer is more effective at exploiting partial and incomplete stroke information.

Even when only a small number of strokes are available, the Transformer achieves a substantially higher accuracy, whereas UniLSTM requires more strokes to reach a comparable performance level. This trend suggests that self-attention enables the Transformer to capture global structural cues from sparse stroke sequences more efficiently than recurrent processing.

7.2. Quantitative Comparison Across Stroke Counts

Table 2 summarizes the quantitative comparison between Transformer and UniLSTM. For each stroke count, k, we report the average accuracy over all classes, as well as the maximum and minimum accuracies observed among the 44 classes. We also report the average accuracy difference between the two models.

To further assess the statistical reliability of the observed performance gap, we computed summary statistics across the 44 animal classes. Table 3 reports the mean, standard deviation, maximum, and minimum accuracies of the class-wise average results.

The Transformer achieves a higher mean accuracy (0.711) than UniLSTM (0.586), while also exhibiting a slightly smaller standard deviation (0.115 vs. 0.133), indicating more stable performance across classes.

We additionally conducted a paired t-test using the class-wise mean accuracies of the two models. The result indicates a highly significant difference (), confirming that the Transformer consistently outperforms UniLSTM across object categories.
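For readers who wish to reproduce this kind of test, the paired t statistic over class-wise mean accuracies can be computed as sketched below. The helper name is illustrative and the sketch returns only the statistic and the degrees of freedom; a p-value would additionally require the Student t distribution (for example via `scipy.stats.ttest_rel`).

```python
import math
import statistics

def paired_t_statistic(acc_a, acc_b):
    """Return (t, degrees_of_freedom) for paired per-class accuracies."""
    diffs = [a - b for a, b in zip(acc_a, acc_b)]
    n = len(diffs)
    mean_d = statistics.fmean(diffs)
    sd_d = statistics.stdev(diffs)  # sample standard deviation of the differences
    return mean_d / (sd_d / math.sqrt(n)), n - 1
```

With 44 classes, the test has 43 degrees of freedom.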

7.3. Class-Wise Performance Analysis

Although the Transformer shows higher average accuracy, the performance varies across object categories. To analyze this variability, we examined the class-wise accuracy distributions for each stroke count.

Across most classes, the Transformer achieves higher accuracy than UniLSTM, resulting in a positive average accuracy difference. However, the magnitude of this improvement depends on the object category and the drawing stage. The Transformer's advantage tends to be larger for classes with distinctive global shapes, especially in the early stages.

In contrast, classes characterized by fine-grained local details or gradual shape refinement show smaller performance gaps, indicating that recurrent models can partially compensate once sufficient strokes are observed.

7.4. Representative Class-Wise Accuracy Curves

To further analyze the behavior of the two models beyond averaged performance, we present class-wise accuracy curves for four representative animal categories in Figure 7. These classes were selected to illustrate distinct performance characteristics observed across the 44 classes.

The butterfly class represents an easy category for both models, where high classification accuracy is achieved within the early stages of drawing. Notably, both Transformer and UniLSTM reach their peak accuracy around 2–4 strokes, and the performance gap between the two models remains minimal throughout this range.

In contrast, the tiger class exemplifies a difficult category, for which both models consistently exhibit low accuracy across all stroke counts. Although the Transformer outperforms UniLSTM, the overall accuracy remains limited, suggesting that the difficulty arises from the inherent ambiguity of early sketches rather than the modeling approach alone.

The frog class highlights a case where the performance gap between the two models is most pronounced in the early stage. With only one or two strokes, the Transformer achieves substantially higher accuracy than UniLSTM, indicating its superior ability to exploit partial and incomplete stroke information.

Finally, the dolphin class illustrates a scenario in which the performance difference becomes more evident in later stages of drawing. While both models benefit from additional strokes, the Transformer maintains a consistently higher accuracy from mid to late stages, demonstrating its effectiveness in integrating longer-range temporal dependencies.

These representative examples confirm that the advantage of the Transformer is not limited to a specific drawing stage or class type, but manifests differently depending on the visual and temporal characteristics of each category.

Due to space limitations, detailed accuracy curves for the remaining 40 animal categories are provided in Appendix A.

7.5. Stage-Wise Prediction Visualization

To better understand the behavior of the models during incremental recognition, we visualize how class predictions evolve as additional strokes are observed.

Figure 8 presents representative examples comparing UniLSTM and Transformer predictions at different stroke stages for a frog sketch and a dolphin sketch. For each stage, the top five predicted classes and their probabilities are shown.

These examples illustrate how the predicted class probabilities change as the sketch becomes more complete. In many cases, the Transformer assigns higher probability to the correct class earlier in the drawing process and converges more rapidly as additional strokes are added.

For example, in the dolphin sketch, the Transformer already ranks “dolphin” as the top prediction at a stage where UniLSTM still assigns higher probability to several unrelated classes. Similarly, in the frog example, the Transformer places the correct class among the top predictions earlier than UniLSTM.

These observations support the quantitative results reported in the previous sections and highlight the advantage of the Transformer model in capturing global contextual cues from partial sketches.
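A stage-wise top-five listing of this kind can be derived from the classifier outputs as sketched below, assuming the model produces one logit per class for each prefix. The function name and the example class names in the test are illustrative only.

```python
import math

def top_k_predictions(logits, class_names, k=5):
    """Return the k most probable (class_name, probability) pairs, best first."""
    m = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    ranked = sorted(zip(class_names, probs), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]
```

Applying this function to the logits obtained after each stroke yields the evolving ranking of candidate themes.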

7.6. Aggregation Strategy Analysis

To examine the effect of different aggregation strategies in the Transformer encoder, we compare four approaches: mean pooling, final-token representation, attention pooling, and a CLS-style token.

Figure 9 presents the average classification accuracy as a function of the number of observed strokes. The final-token representation consistently achieves the highest accuracy, while attention pooling produces comparable performance across most stroke counts.

In contrast, mean pooling significantly degrades recognition accuracy. This result suggests that averaging token representations can dilute discriminative cues that often emerge in later strokes of the drawing process.

The CLS-style aggregation performs poorly under the causal attention setting. Because the CLS token cannot attend to future tokens in the sequence, it fails to effectively aggregate information from subsequent strokes in incremental recognition scenarios.

Overall, these results confirm that the final-token representation provides a stable and effective aggregation strategy for Transformer-based incremental sketch recognition.
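The following sketch contrasts three of the aggregation strategies on a sequence of token representations (a list of equal-length vectors covering only the valid, non-padded tokens). The attention pooling shown here uses a single query vector with dot-product scores, which is one common formulation and an assumption of this example; the CLS-style variant is omitted because it depends on where the extra token is placed.

```python
import math

def mean_pool(h):
    """Average all token representations dimension by dimension."""
    n = len(h)
    return [sum(col) / n for col in zip(*h)]

def final_token(h):
    """Use the representation of the most recently observed token."""
    return list(h[-1])

def attention_pool(h, query):
    """Softmax-weighted sum of tokens, scored by dot product with a query vector."""
    scores = [sum(q * x for q, x in zip(query, tok)) for tok in h]
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    weights = [e / z for e in exps]
    return [sum(w * tok[d] for w, tok in zip(weights, h)) for d in range(len(h[0]))]
```

Under causal masking, the final token is the only position whose representation has access to every observed token, whereas mean pooling gives equal weight to early tokens that have seen only a short context.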

7.7. Summary of Experimental Findings

The experimental results demonstrate that the Transformer-based model consistently outperforms UniLSTM in average accuracy across all stroke counts and achieves higher accuracy in most object categories. This advantage is particularly evident in early-stage prediction, where only a small number of strokes are available and the observed sketch information is highly incomplete.

Class-wise analyses further reveal that the performance gap manifests differently depending on the visual characteristics of each category. While some classes achieve high accuracy for both models, others exhibit substantial differences in either the early or the later stages of drawing. The additional aggregation study confirms that the final-token representation provides the most stable and accurate performance among the tested strategies.

These findings indicate that self-attention mechanisms are well suited for modeling partial sketch sequences, enabling effective utilization of global context beyond strictly sequential dependencies. Overall, the results support the suitability of Transformer architectures for sketch-based inference tasks in asymmetric and incremental drawing scenarios.

8. Discussion

The experimental results highlight clear differences between Transformer-based and UniLSTM-based models in sketch classification under incremental observation. In this section, we discuss the underlying reasons for these differences from the perspectives of temporal modeling, early-stage inference, and class-dependent visual characteristics.

8.1. Effectiveness of Self-Attention for Early-Stage Sketch Inference

One of the most notable findings is the superior performance of the Transformer in early-stage prediction, where only one or two strokes are available. At this stage, sketches are highly incomplete and often lack explicit semantic structure. The UniLSTM model processes stroke sequences strictly in a step-by-step manner, relying heavily on the most recent hidden state to represent the accumulated information. As a result, early predictions tend to be unstable and sensitive to local stroke order.

In contrast, the Transformer leverages self-attention to directly model relationships among all observed stroke points, regardless of their temporal distance. This mechanism enables the model to capture global spatial patterns and contextual cues even from a small number of strokes. The large performance gap observed in early stages for classes such as frog demonstrates the advantage of this global reasoning capability when information is sparse and ambiguous.

Beyond empirical accuracy improvements, the structural properties of self-attention provide a plausible explanation for the observed performance differences. In recurrent models such as UniLSTM, past information is compressed into a single hidden state vector, which may limit the model’s ability to maintain multiple competing semantic hypotheses during early drawing stages. In contrast, self-attention allows pairwise interactions among all observed strokes, enabling more flexible integration of sparse and ambiguous cues.

This architectural difference is particularly relevant in incremental settings, where early strokes may only weakly indicate the drawing theme. The ability to dynamically reweight stroke importance according to contextual relevance likely contributes to the Transformer’s robustness under highly incomplete conditions.

8.2. Sequential Constraints of UniLSTM

The use of a unidirectional LSTM in this study reflects realistic gameplay conditions, where future strokes are not observable during inference. While bidirectional LSTM architectures are commonly adopted in sketch recognition tasks, they assume access to the entire sequence and are therefore unsuitable for online or incremental prediction scenarios.

This unidirectional constraint limits the ability of UniLSTM to revise earlier interpretations once new strokes are added. Consequently, the model may fail to fully exploit long-range dependencies across strokes, particularly when critical visual cues appear later in the drawing process. This limitation is evident in classes such as dolphin, where the Transformer continues to improve in later stages while UniLSTM shows comparatively slower gains.

8.3. Class-Dependent Performance Characteristics

The class-wise analysis reveals that the performance gap between models is not uniform across classes. For visually distinctive classes such as butterfly, both models achieve high accuracy with minimal performance difference, suggesting that early strokes already convey sufficient discriminative information. Conversely, classes such as tiger remain challenging for both models, indicating that the ambiguity arises from the intrinsic complexity of the drawing rather than the choice of sequence model.

These observations suggest that the effectiveness of temporal modeling depends not only on the model architecture but also on the visual structure and drawing strategies associated with each class. Transformer-based models appear particularly advantageous for classes where meaningful global patterns emerge gradually or require integration across distant strokes.

8.4. Implications for AI Players in Drawing-Based Games

From the perspective of AI players in Drawing Werewolf, early and reliable interpretation of partial sketches is essential for decision making under uncertainty. The demonstrated ability of the Transformer to produce more accurate predictions with limited stroke information implies that such models are better suited for real-time inference during gameplay.

Moreover, the robustness of the Transformer across different drawing stages suggests its potential applicability beyond sketch classification, including role inference and strategic reasoning in asymmetric social deduction games. These properties align with the requirements of AI agents that must operate under partial observability and continuously update their beliefs as new information becomes available.

From a system-level perspective, incremental sketch recognition serves as the perception module of an AI player. The predicted class probabilities at each stroke stage can be interpreted as belief updates regarding the hidden drawing theme. These belief estimates can subsequently inform higher-level reasoning modules, such as role inference, deception modeling, and action selection.

Thus, the proposed incremental recognition framework constitutes the perception-to-decision interface within a drawing-based social deduction AI architecture.

8.5. Limitations and Future Directions

Although recent Transformer variants and graph-based models have achieved strong results in offline sketch recognition, their performance under strict incremental causal constraints has not been systematically evaluated. Many of these models rely on bidirectional attention or full-sequence availability, which differs from the real-time setting considered in this study. The framework proposed in this work provides a controlled and reproducible setting for future comparative studies under such causal, prefix-based conditions.

Another limitation concerns the dataset used for evaluation. Although the study is motivated by the Drawing Werewolf game, all experiments were conducted using the “Quick, Draw!” dataset for controlled benchmarking. “Quick, Draw!” sketches are collected from individual human participants and preserve temporally ordered stroke information, making them suitable for evaluating incremental and causal recognition behavior under standardized conditions. However, real in-game drawings in Drawing Werewolf are created collaboratively by multiple players, which may introduce greater stylistic variability, intentional ambiguity, and strategic stroke ordering. Such socially influenced drawing behaviors may differ from the single-author sketches contained in “Quick, Draw!”.

The current experimental design isolates the perception component of the AI system in order to analyze incremental recognition performance under controlled conditions. Future work will extend the evaluation to in-game drawing data collected from the implemented Drawing Werewolf system, where drawing styles and strategies may vary more significantly.

In addition, integrating sketch recognition with higher-level reasoning modules remains an important direction for future work. For example, predicted theme probabilities could be incorporated into role estimation, belief updating, or decision-making policies for AI players. Exploring hybrid architectures that combine Transformer-based perception with game-theoretic reasoning or reinforcement learning frameworks may further enable more adaptive and strategic AI behavior in drawing-based social deduction games.

9. Conclusions

This paper investigated sketch-based inference for AI players in the Drawing Werewolf game, focusing on the problem of incremental classification from partial stroke sequences. To address the challenges posed by incomplete and ambiguous drawings, we compared a Transformer-based model with a unidirectional LSTM baseline under realistic online inference settings.

Experimental results on 44 animal classes from the “Quick, Draw!” dataset demonstrated that the Transformer consistently outperforms UniLSTM across all stroke counts. The advantage is particularly pronounced in early-stage prediction, where only a small number of strokes are available. Class-wise analyses further revealed that the performance gap depends on the visual and temporal characteristics of each category, with the Transformer showing robust behavior in both early and later stages of drawing.

These findings indicate that self-attention mechanisms are well suited for modeling partial sketch sequences, enabling effective utilization of global context beyond strictly sequential dependencies. From the perspective of game AI, such capabilities are essential for decision making under partial observability in asymmetric and social deduction games.

As future work, we plan to extend the evaluation to player-generated drawings collected from the implemented Drawing Werewolf system and to integrate sketch recognition with higher-level reasoning modules, such as role estimation and strategic decision making. These extensions will contribute to the development of AI players capable of participating more naturally and effectively in drawing-based social deduction games.

Overall, this study establishes a controlled experimental framework for evaluating incremental sketch recognition under causal constraints and provides empirical insights into the suitability of different sequence models for real-time gameplay scenarios.

Author Contributions

Conceptualization, N.O. and S.N. (Shun Nishide); methodology, N.O.; software, N.O., S.N. (Sota Nishiguchi) and A.T.; validation, N.O., S.N. (Sota Nishiguchi) and A.T.; formal analysis, N.O.; investigation, N.O.; resources, S.N. (Shun Nishide); data curation, S.N. (Sota Nishiguchi); writing—original draft preparation, N.O.; writing—review and editing, N.O. and S.N. (Shun Nishide); visualization, N.O.; supervision, S.N. (Shun Nishide); project administration, S.N. (Shun Nishide); funding acquisition, S.N. (Shun Nishide). All authors have read and agreed to the published version of the manuscript.

Funding

This research was supported by JSPS KAKENHI Grant Number JP23K11277.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

Acknowledgments

The authors would like to thank the members of the Nishide Laboratory at Kyoto Tachibana University for their support in the development of the game system and the preparation of the experimental environment. During the preparation of this manuscript, the authors used ChatGPT-5.3 (OpenAI) (https://chatgpt.com/) to assist with idea verification and language refinement. All authors reviewed and edited the content and take full responsibility for the final manuscript.

Conflicts of Interest

The authors declare no conflict of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
| --- | --- |
| AI | Artificial Intelligence |
| LSTM | Long Short-Term Memory |
| UniLSTM | Unidirectional Long Short-Term Memory |
| BiLSTM | Bidirectional Long Short-Term Memory |
| CNN | Convolutional Neural Network |
| RNN | Recurrent Neural Network |

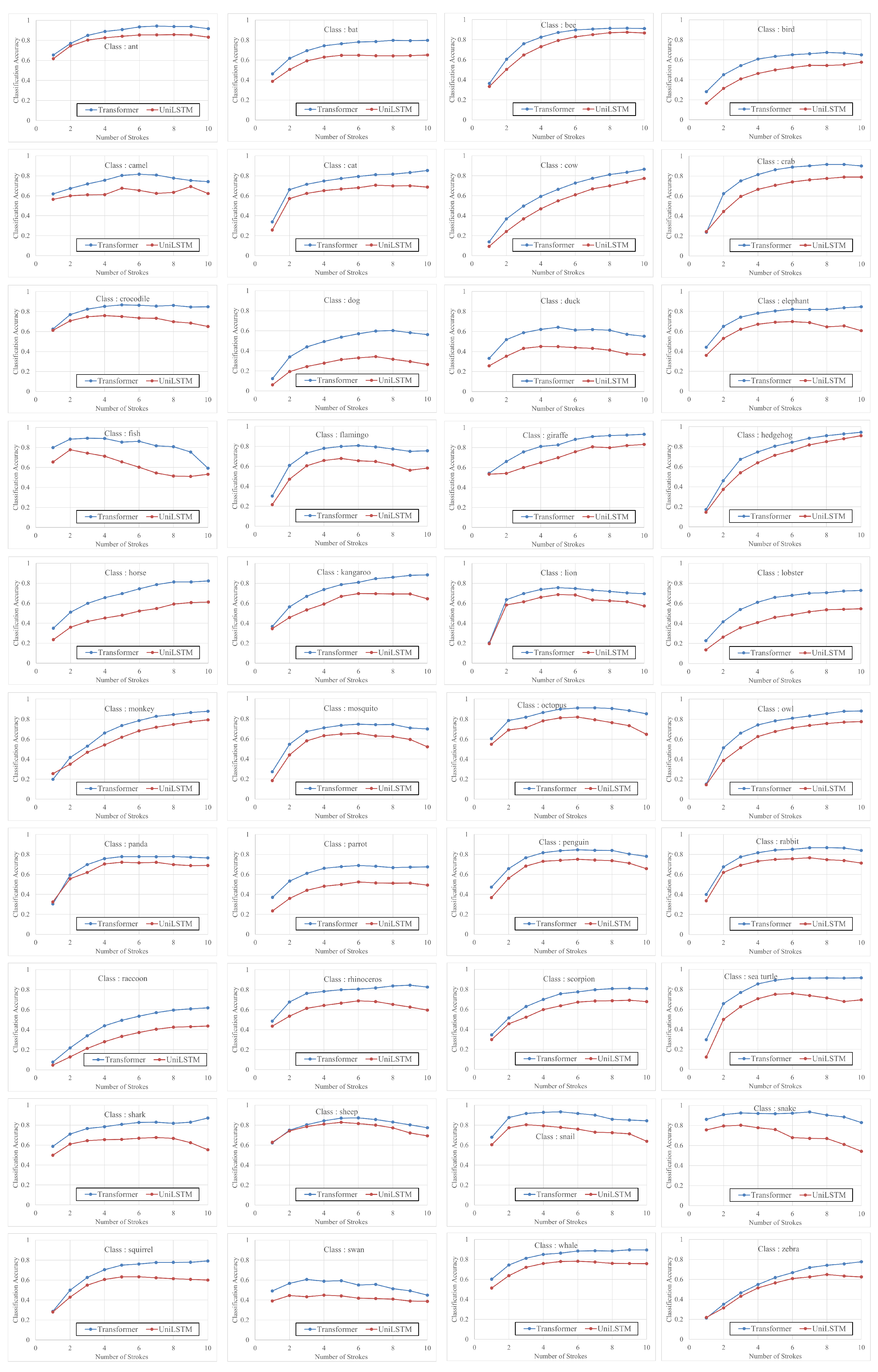

Appendix A. Additional Class-Wise Results

This appendix presents the classification accuracy curves for the remaining 40 animal categories that are not included in the main text. While Section 7 focuses on representative classes to highlight characteristic performance trends, the results shown here provide a comprehensive view of model behavior across all evaluated categories.

Figure A1 illustrates class-wise accuracy curves for both the Transformer-based model and the UniLSTM model as a function of the number of observed strokes. Each subplot corresponds to one object class, with the class name indicated within the plot. The blue and red curves represent the Transformer and UniLSTM models, respectively.

Overall, the appendix results confirm the trends discussed in the main paper: the Transformer model consistently achieves higher accuracy than UniLSTM across most classes and stroke counts, with particularly notable advantages in early-stage prediction. At the same time, variations across classes highlight differences in sketch complexity and the degree to which early strokes convey discriminative information.

Figure A1. Class-wise classification accuracy curves for the remaining 40 animal categories. Each subplot shows accuracy as a function of the number of strokes. The class name is displayed within each plot. Blue curves denote the Transformer model, and red curves denote the UniLSTM model.


References

- Hu, C.; Zhao, Y.; Wang, Z.; Du, H.; Liu, J. Games for Artificial Intelligence Research: A Review and Perspectives. IEEE Trans. Artif. Intell. 2024, 5, 5949–5968.
- Bakhtin, A.; Brown, N.; Dinan, E.; Farina, G.; Flaherty, C.; Fried, D.; Goff, A.; Gray, J.; Hu, H.; Jacob, A.P.; et al. Human-level play in the game of Diplomacy by combining language models with strategic reasoning. Science 2022, 378, 1067–1074.
- Nasri, N.; Orts-Escolano, S.; Cazorla, M. An sEMG-Controlled 3D Game for Rehabilitation Therapies: Real-Time Time Hand Gesture Recognition Using Deep Learning Techniques. Sensors 2020, 20, 6451.
- Westera, W.; Prada, R.; Mascarenhas, S.; Santos, P.A.; Dias, J.; Guimarães, M.; Georgiadis, K.; Nyamsuren, E.; Bahreini, K.; Yumak, Z.; et al. Artificial intelligence moving serious gaming: Presenting reusable game AI components. Educ. Inf. Technol. 2020, 25, 351–380.
- Zheng, Z.; Lan, Y.; Chen, Y.; Wang, L.; Wang, X.; Wang, H. DVM: Towards Controllable LLM Agents in Social Deduction Games. In Proceedings of the ICASSP 2025 - 2025 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Hyderabad, India, 6–11 April 2025; IEEE: Piscataway, NJ, USA, 2025.
- Yoo, B.; Kim, K.-J. Finding Deceivers in Social Context with Large Language Models and How to Find Them: The Case of the Mafia Game. Sci. Rep. 2024, 14, 30946.
- Okamoto, N.; Nishiguchi, S.; Nishide, S. Creating a Drawing Werewolf Game and Building an Artificial Intelligence Player Using SketchRNN. Eng. Proc. 2025, 108, 3.
- Zhang, S.; Zhang, X.; Shen, L.; Wan, S.; Ren, W. Wavelet-based physically guided normalization network for real-time traffic dehazing. Pattern Recognit. 2026, 172, 112451.
- Liu, Y.; Li, T.; Tan, C.; Ren, W.; Ancuti, C.; Lin, W. IHDCP: Single Image Dehazing Using Inverted Haze Density Correction Prior. IEEE Trans. Image Process. 2026, 35, 1448–1461.
- Silver, D.; Huang, A.; Maddison, C.J.; Guez, A.; Sifre, L.; van den Driessche, G.; Schrittwieser, J.; Antonoglou, I.; Panneershelvam, V.; Lanctot, M.; et al. Mastering the game of Go with deep neural networks and tree search. Nature 2016, 529, 484–489.
- Vinyals, O.; Babuschkin, I.; Czarnecki, W.M.; Mathieu, M.; Dudzik, A.; Chung, J.; Choi, D.H.; Powell, R.; Ewalds, T.; Georgiev, P.; et al. Grandmaster level in StarCraft II using multi-agent reinforcement learning. Nature 2019, 575, 350–354.
- Meng, F.; Lucas, S. Cluedo AI: Applying Constraint-Solving Methods to Play the Multi-Player Deduction Game Cluedo. In FDG’25: Proceedings of the 20th International Conference on the Foundations of Digital Games; Association for Computing Machinery: New York, NY, USA, 2025.
- Yin, Q.; Yu, T.; Feng, X.; Yang, J.; Huang, K. An Asymmetric Game Theoretic Learning Model. In Pattern Recognition and Computer Vision (PRCV 2024); Lecture Notes in Computer Science; Springer: Singapore, 2025; Volume 15033, pp. 130–143.
- Dasgupta, P.; Kliem, J. Improved Reinforcement Learning in Asymmetric Real-time Strategy Games via Strategy Diversity. Int. J. Serious Games 2023, 10, 19–38.
- Lai, B.; Zhang, H.; Liu, M.; Pariani, A.; Ryan, F.; Jia, W.; Hayati, S.A.; Rehg, J.M.; Yang, D. Werewolf Among Us: Multimodal Resources for Modeling Persuasion Behaviors in Social Deduction Games. In Findings of the Association for Computational Linguistics: ACL 2023; Association for Computational Linguistics: Stroudsburg, PA, USA, 2023; pp. 6570–6588.
- Tilton, S. Winning Through Deception: A Pedagogical Case Study on Using Social Deception Games to Teach Small Group Communication Theory. SAGE Open 2019, 9, 21582440198.
- Gonçalves, D.; Rodrigues, A.; Richardson, M.L.; de Sousa, A.A.; Proulx, M.J.; Guerreiro, T. Exploring Asymmetric Roles in Mixed-Ability Gaming. In CHI’21: Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems; Association for Computing Machinery: New York, NY, USA, 2021; p. 114.
- Kim, J.-H.; Kitaev, N.; Chen, X.; Rohrbach, M.; Zhang, B.-T.; Tian, Y.; Batra, D.; Parikh, D. CoDraw: Collaborative Drawing as a Testbed for Grounded Goal-Driven Communication. In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics; Association for Computational Linguistics: Stroudsburg, PA, USA, 2019; pp. 6495–6513.
- Clark, C.; Salvador, J.; Schwenk, D.; Bonafilia, D.; Yatskar, M.; Kolve, E.; Herrasti, A.; Choi, J.; Mehta, S.; Skjonsberg, S.; et al. Iconary: A Pictionary-Based Game for Testing Multimodal Communication with Drawings and Text. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing; Association for Computational Linguistics: Stroudsburg, PA, USA, 2021; pp. 1864–1886.
- Qiu, S.; Xie, S.; Fan, L.; Gao, T.; Joo, J.; Zhu, S.-C.; Zhu, Y. Emergent Graphical Conventions in a Visual Communication Game. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), New Orleans, LA, USA, 28 November–9 December 2022; pp. 13119–13131.
- Fan, J.E.; Hawkins, R.D.; Wu, M.; Goodman, N.D. Pragmatic Inference and Visual Abstraction Enable Contextual Flexibility During Visual Communication. Comput. Brain Behav. 2020, 3, 86–101.
- Shah, J.J.; Vargas-Hernandez, N.; Summers, J.D.; Kulkarni, S. Collaborative Sketching (C-Sketch) - An Idea Generation Technique for Engineering Design. J. Creat. Behav. 2001, 35, 168–198.
- Henderson, K. Flexible Sketches and Inflexible Databases: Visual Communication, Conscription Devices, and Boundary Objects in Design Engineering. Sci. Technol. Hum. Values 1991, 16, 448–473.
- Sezgin, T.M.; Davis, R. Sketch Interpretation Using Multiscale Models of Temporal Patterns. IEEE Comput. Graph. Appl. 2007, 27, 28–37.
- Ribeiro, L.S.F.; Bui, T.; Collomosse, J.; Ponti, M. Sketchformer: Transformer-Based Representation for Sketched Structure. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 14153–14162.
- Qu, Z.; Gryaditskaya, Y.; Li, K.; Pang, K.; Xiang, T.; Song, Y.-Z. SketchXAI: A First Look at Explainability for Human Sketches. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 20–25 June 2021; IEEE: Piscataway, NJ, USA, 2021; pp. 23327–23337.
- Xu, J.; Wang, Z.; Li, X.; Li, Z.; Li, Z. Prediction of Daily Climate Using Long Short-Term Memory (LSTM) Model. Int. J. Innov. Sci. Res. Technol. 2024, 9, 83–90.
- Zhu, S.; Zheng, J.; Ma, Q. MR-Transformer: Multiresolution Transformer for Multivariate Time Series Prediction. IEEE Trans. Neural Netw. Learn. Syst. 2025, 36, 1171–1183.

- Xu, P.; Joshi, C.K.; Bresson, X. Multigraph Transformer for Free-Hand Sketch Recognition. IEEE Trans. Neural Netw. Learn. Syst. 2022, 33, 5150–5161. [Google Scholar] [CrossRef]

- Lin, H.; Fu, Y.; Jiang, Y.-G.; Xue, X. Sketch-BERT: Learning Sketch Bidirectional Encoder Representation from Transformers by Self-Supervised Learning of Sketch Gestalt. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 6758–6767. [Google Scholar]

- Wang, S. Development of an automated transformer-based text analysis framework for monitoring fire door defects in buildings. Sci. Rep. 2025, 15, 43910. [Google Scholar] [CrossRef]

- Wang, S. Graph neural network–driven text classification for fire-door defect inspection in pre-completion construction. Sci. Rep. 2025, 15, 44382. [Google Scholar] [CrossRef] [PubMed]

- Pan, C.; Huang, J.; Gong, J.; Chen, C. Teach machine to learn: Hand-drawn multi-symbol sketch recognition in one-shot. Appl. Intell. 2020, 50, 2239–2251. [Google Scholar] [CrossRef]

- Dahikar, Y.; Chikmurge, D.; Kharat, S. Sketch Captioning Using LSTM and BiLSTM. In Proceedings of the 2023 International Conference on Network, Multimedia and Information Technology (NMITCON), Bengaluru, India, 1–2 September 2023; IEEE: Piscataway, NJ, USA, 2023. [Google Scholar]

Figure 1.

Screenshot of the implemented Drawing Werewolf game during gameplay. Players collaboratively add strokes to a shared canvas while inferring hidden roles. The drawing is still incomplete at this stage, illustrating partial sketch observation.

Figure 2.

Online incremental sketch recognition process in Drawing Werewolf. At each time step t, a newly drawn stroke is appended to the observed prefix, and the causal sequence model produces an updated probability distribution over themes. The prediction is updated each time a new stroke is observed, reflecting real-time inference under partial observation.
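
The loop described in this caption can be sketched as follows. This is a minimal illustration under stated assumptions, not the implementation used in the paper: `incremental_predict`, `toy_model`, the (x, y) point format, and the two-class output are all hypothetical, and the toy classifier only stands in for a causal sequence model such as the UniLSTM or the Transformer.

```python
import math
from typing import Callable, List, Sequence, Tuple

Stroke = List[Tuple[float, float]]  # one stroke as a list of (x, y) points (assumed format)
Model = Callable[[Sequence[Stroke]], List[float]]  # maps a stroke prefix to class probabilities


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def incremental_predict(strokes: Sequence[Stroke], model: Model) -> List[List[float]]:
    """Return one class distribution per time step t, using only the first t strokes."""
    prefix: List[Stroke] = []
    history: List[List[float]] = []
    for stroke in strokes:
        prefix.append(stroke)          # append the newly drawn stroke to the observed prefix
        history.append(model(prefix))  # re-run the model on the prefix only (no future strokes)
    return history


def toy_model(prefix: Sequence[Stroke]) -> List[float]:
    """Hypothetical stand-in classifier: scores two classes from the total point count."""
    n_points = sum(len(s) for s in prefix)
    return softmax([0.5 * n_points, 2.0])


if __name__ == "__main__":
    sketch = [[(0, 0), (1, 1)], [(1, 1), (2, 0)], [(2, 0), (3, 1), (4, 0)]]
    for t, probs in enumerate(incremental_predict(sketch, toy_model), start=1):
        print(t, [round(p, 3) for p in probs])
```

Re-running the model on the full prefix at every step is the simplest formulation. A recurrent model could instead carry its hidden state forward, and a causally masked Transformer could cache earlier keys and values, but whether the system does either is not stated here.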

Figure 3.

Uni-directional LSTM-based sketch recognition model.

Figure 4.

Transformer-based sketch recognition model with incremental inference.

Figure 5.

Conceptual comparison between conventional offline sketch recognition and the proposed incremental recognition framework. In conventional settings, classification is performed only after full sketch completion. In contrast, the proposed framework evaluates predictions at multiple stroke stages under causal constraints, enabling stage-wise analysis of temporal information utilization.

Figure 6.

Average classification accuracy over 44 animal classes as a function of the number of observed strokes. The Transformer (blue) consistently outperforms UniLSTM (red), with a larger margin in the early drawing stages.

Figure 7.

Class-wise classification accuracy curves for representative animal categories. Butterfly and tiger represent classes with consistently high and low accuracy, respectively, for both models. Frog highlights a case where the performance gap is largest in the early stage, while dolphin illustrates a class where the gap becomes more prominent in later stages of drawing.

Figure 8.

Stage-wise prediction examples comparing the UniLSTM and Transformer models. Each row shows the prediction results after observing k strokes. The left and right columns correspond to two example categories (“frog” and “dolphin”). For each stage, the Top-5 predicted classes and their probabilities are displayed for both models. The results illustrate that the Transformer tends to assign higher probability to the correct class earlier in the drawing process and maintains more stable predictions as additional strokes are observed. The red box highlights the correct target class in the Top-5 predictions. Different colors represent strokes drawn at different stages of the sketch.

Figure 9.

Comparison of aggregation strategies for the Transformer model. The final-token representation achieves the best performance, while attention pooling shows comparable results. Mean pooling significantly reduces accuracy, and CLS-style aggregation performs poorly under causal attention constraints.
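
To make the aggregation strategies concrete, the sketch below applies three of them to a toy sequence of hidden states. It uses plain Python lists rather than a deep learning framework; the function names and the query vector passed to `attention_pool` are assumptions for illustration, and the pooling layers used in the paper may differ.

```python
import math
from typing import List, Sequence

Vector = List[float]


def final_token(hidden: Sequence[Vector]) -> Vector:
    """Use the last position, which under a causal mask can attend to the whole prefix."""
    return list(hidden[-1])


def mean_pool(hidden: Sequence[Vector]) -> Vector:
    """Average all positions with equal weight."""
    n = len(hidden)
    return [sum(h[d] for h in hidden) / n for d in range(len(hidden[0]))]


def attention_pool(hidden: Sequence[Vector], query: Vector) -> Vector:
    """Weighted average with softmax(query . h_t) weights; the query would be learned in practice."""
    scores = [sum(q * x for q, x in zip(query, h)) for h in hidden]
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    return [sum(w * h[d] for w, h in zip(weights, hidden)) for d in range(len(hidden[0]))]


if __name__ == "__main__":
    states = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]  # toy hidden states for three time steps
    print(final_token(states))
    print(mean_pool(states))
    print(attention_pool(states, query=[1.0, 1.0]))
```

One possible reading of the weak CLS-style result, offered as an assumption: if the CLS token sits at the start of the sequence, a causal mask prevents it from attending to any later stroke, so its representation cannot summarize the observed prefix.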

Table 1.

Model architecture and training hyperparameters.

| Parameter | UniLSTM | Transformer |
|---|---|---|
| Input dimension | 5 | 5 |
| Hidden dimension | 256 | 256 |
| Number of layers | 2 | 6 |
| Attention heads | – | 8 |
| FFN dimension | – | 1024 |
| Dropout rate | 0.1 | 0.1 |
| Number of classes | 44 | 44 |
| Normalization | – | Pre-LayerNorm |
| Attention masking | – | Causal mask |
| Optimizer | AdamW | AdamW |
| Initial learning rate | | |
| Batch size | 64 | 64 |
| Training epochs | 20 | 20 |
| Weight decay | 0.01 | 0.01 |
| Gradient clipping | 1.0 | 1.0 |
| Learning rate schedule | Cosine decay | Cosine decay |
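
Table 1 lists a cosine decay schedule over 20 training epochs, but the initial learning rate is not preserved in the table. As a hedged illustration of the standard cosine decay formula, the sketch below keeps the initial rate `lr0` as an explicit argument. Decaying to zero without warm-up and stepping once per epoch are assumptions, and the value of `lr0` in the usage example is a placeholder, not a figure from the paper.

```python
import math


def cosine_decay(epoch: int, total_epochs: int, lr0: float, lr_min: float = 0.0) -> float:
    """Learning rate at a given epoch under cosine decay from lr0 down to lr_min."""
    if not 0 <= epoch <= total_epochs:
        raise ValueError("epoch must lie in [0, total_epochs]")
    progress = epoch / total_epochs
    return lr_min + 0.5 * (lr0 - lr_min) * (1.0 + math.cos(math.pi * progress))


if __name__ == "__main__":
    lr0 = 1e-3  # placeholder value only; not taken from the paper
    for epoch in (0, 5, 10, 15, 20):
        print(epoch, cosine_decay(epoch, total_epochs=20, lr0=lr0))
```

In PyTorch, a comparable schedule is available as `torch.optim.lr_scheduler.CosineAnnealingLR`; whether the authors used it is not stated.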

Table 2.

Classification accuracy comparison between Transformer and UniLSTM as a function of the number of observed strokes k. Average, maximum, and minimum accuracies were computed over the 44 animal classes.

| k | Transformer Avg. | Transformer Max | Transformer Min | UniLSTM Avg. | UniLSTM Max | UniLSTM Min | Avg. Diff. (Transformer − UniLSTM) |
|---|---|---|---|---|---|---|---|
| 1 | 0.40 | 0.86 | 0.076 | 0.34 | 0.76 | 0.047 | +0.06 |
| 2 | 0.60 | 0.91 | 0.22 | 0.49 | 0.86 | 0.13 | +0.11 |
| 3 | 0.68 | 0.93 | 0.28 | 0.57 | 0.91 | 0.19 | +0.11 |
| 4 | 0.73 | 0.94 | 0.37 | 0.61 | 0.92 | 0.25 | +0.12 |
| 5 | 0.77 | 0.94 | 0.41 | 0.64 | 0.91 | 0.28 | +0.13 |
| 6 | 0.78 | 0.93 | 0.46 | 0.65 | 0.88 | 0.33 | +0.13 |
| 7 | 0.79 | 0.94 | 0.51 | 0.65 | 0.85 | 0.34 | +0.14 |
| 8 | 0.79 | 0.94 | 0.51 | 0.65 | 0.87 | 0.32 | +0.14 |
| 9 | 0.79 | 0.94 | 0.49 | 0.64 | 0.88 | 0.29 | +0.15 |
| 10 | 0.78 | 0.94 | 0.45 | 0.62 | 0.91 | 0.26 | +0.16 |

Table 3.

Classification accuracy comparison between Transformer and UniLSTM. Statistics were computed from class-wise average accuracies over 44 animal classes.

| Model | Avg. | Std. | Max | Min |
|---|---|---|---|---|
| Transformer | 0.711 | 0.115 | 0.900 | 0.415 |
| UniLSTM | 0.586 | 0.133 | 0.820 | 0.264 |
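
The averages reported in Tables 2 and 3 can be cross-checked with a few lines of arithmetic. The sketch below recomputes the average difference column of Table 2 and the mean of the per-k averages for each model; the variable names are illustrative, and the inputs are the rounded values printed in Table 2, so the check is only as precise as that rounding.

```python
# Average accuracies from Table 2, indexed by number of observed strokes k = 1..10.
transformer_avg = [0.40, 0.60, 0.68, 0.73, 0.77, 0.78, 0.79, 0.79, 0.79, 0.78]
unilstm_avg = [0.34, 0.49, 0.57, 0.61, 0.64, 0.65, 0.65, 0.65, 0.64, 0.62]
reported_diff = [0.06, 0.11, 0.11, 0.12, 0.13, 0.13, 0.14, 0.14, 0.15, 0.16]


def mean(values):
    """Arithmetic mean of a non-empty list of numbers."""
    return sum(values) / len(values)


# Recompute the average difference (Transformer minus UniLSTM), rounded as in the table.
recomputed_diff = [round(t - u, 2) for t, u in zip(transformer_avg, unilstm_avg)]

if __name__ == "__main__":
    print(recomputed_diff == reported_diff)
    print(round(mean(transformer_avg), 3), round(mean(unilstm_avg), 3))
```

With these inputs the recomputed differences match the reported column, and the means over k come out to 0.711 and 0.586, which is consistent with the Avg. column of Table 3.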

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.