MIC-SSO: A Two-Stage Hybrid Feature Selection Approach for Tabular Data

Abstract

1. Introduction

- Stage 1—MIC Filtering: Features are ranked based on the MIC, a non-parametric measure capable of capturing linear, nonlinear, and complex dependencies with the target variable. Candidate subsets are generated by progressively retaining the top-ranked features.

- Stage 2—SSO: The SSO algorithm performs wrapper-based refinement, selecting a compact subset that balances predictive accuracy and feature reduction.

- A two-stage hybrid feature selection framework that combines MIC-based filtering with SSO-based wrapper optimization, enabling efficient and scalable feature selection for high-dimensional tabular data.

- A balanced fitness function that jointly optimizes classification performance and feature compactness using MCC and feature reduction ratio.

- The integration of TabPFN as a fixed, pretrained evaluator, providing reliable and efficient performance estimation during feature selection.

- Extensive experimental validation on public benchmark datasets, demonstrating that MIC-SSO achieves competitive accuracy with significantly reduced feature subsets compared to existing hybrid methods.
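The balanced fitness named in the contributions can be illustrated with a short sketch. It assumes the form F(Cf) = α · MCC(Cf) + (1 − α) · (1 − |Cf| / |Ccandidate|) given in the method's pseudocode and the reported trade-off α = 0.7. The function names `multiclass_mcc` and `fitness` are hypothetical, and the multiclass MCC shown is the standard confusion-matrix generalization, not necessarily the authors' implementation.

```python
import math
from collections import Counter


def multiclass_mcc(y_true, y_pred):
    """Matthews correlation coefficient for two or more classes."""
    s = len(y_true)                                   # total number of samples
    c = sum(t == p for t, p in zip(y_true, y_pred))   # correctly classified samples
    t_k = Counter(y_true)                             # true count per class
    p_k = Counter(y_pred)                             # predicted count per class
    numerator = c * s - sum(t_k[k] * p_k[k] for k in t_k)
    cov_true = s * s - sum(v * v for v in t_k.values())
    cov_pred = s * s - sum(v * v for v in p_k.values())
    denominator = math.sqrt(cov_true * cov_pred)
    # By convention, return 0 when one side has no variation.
    return numerator / denominator if denominator else 0.0


def fitness(mcc, n_selected, n_candidate, alpha=0.7):
    """Weighted sum of MCC and the feature reduction ratio (alpha assumed 0.7)."""
    reduction = 1.0 - n_selected / n_candidate
    return alpha * mcc + (1.0 - alpha) * reduction


# Illustrative numbers only: 50 of 200 candidate features kept, MCC of 0.9.
example = fitness(0.9, n_selected=50, n_candidate=200)
print(round(example, 4))
```

With these illustrative inputs the reduction ratio is 0.75, so the score is 0.7 × 0.9 + 0.3 × 0.75. A larger α shifts the balance toward predictive performance, a smaller one toward compactness.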

2. Literature Review

2.1. Filter, Wrapper, and Embedded Methods

2.2. Emergence of Hybrid Feature Selection

- A filter or embedded step is used to rapidly eliminate irrelevant features and reduce the search space.

- A wrapper or optimization step then refines the reduced feature set to maximize predictive performance.

2.3. Hybrid Metaheuristic Approaches

- Genetic Algorithm (GA) hybrids, where initial feature rankings provided by filters seed the population for GA-based search [15]. These approaches benefit from GA’s global search capabilities and can escape local optima that greedy wrappers may encounter.

- Particle Swarm Optimization (PSO) and variants, which have been hybridized with filters to balance exploration and exploitation in the search space [16].
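One simple way a filter ranking can seed a population-based search is to turn each feature's filter score into an inclusion probability. The sketch below is a generic illustration under that assumption, not the procedure of any cited method; `seed_population`, `p_min`, and `p_max` are hypothetical names.

```python
import random


def seed_population(scores, pop_size, p_min=0.1, p_max=0.9, seed=0):
    """Build binary feature masks whose bits favor highly ranked features."""
    rng = random.Random(seed)
    lo, hi = min(scores), max(scores)
    span = (hi - lo) or 1.0                # avoid division by zero for constant scores
    # Map each score linearly onto an inclusion probability in [p_min, p_max].
    probs = [p_min + (p_max - p_min) * (s - lo) / span for s in scores]
    return [[1 if rng.random() < p else 0 for p in probs] for _ in range(pop_size)]


filter_scores = [0.82, 0.10, 0.55, 0.03, 0.47]   # illustrative filter scores
population = seed_population(filter_scores, pop_size=6)
for mask in population:
    print(mask)
```

Keeping `p_min` above zero leaves weakly ranked features a chance of entering the search, which preserves some exploration.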

2.4. Maximal Information Coefficient (MIC)

2.5. Simplified Swarm Optimization (SSO)

2.6. Positioning the Proposed MIC-SSO Framework

1. Limited capability of conventional filter methods to capture complex feature dependencies

2. High computational cost of wrapper-based metaheuristics in high-dimensional spaces

3. Insufficient attention to balanced optimization objectives in hybrid FS

4. Lack of unified hybrid frameworks designed for robust performance across diverse datasets

- Capturing complex feature-target relationships (MIC): MIC is a nonparametric statistic capable of detecting linear, nonlinear, and periodic associations between features and the target variable. By using MIC to rank and retain the most informative features, the framework ensures that relevant features—including those involved in complex, high-order dependencies—are preserved for further optimization.

- Efficient combinatorial search (SSO): While MIC identifies individually relevant features, it does not account for interactions among features that collectively enhance predictive performance. SSO, a lightweight population-based metaheuristic, explores the reduced feature space efficiently, balancing global exploration and local refinement. Its simple update mechanism reduces computational overhead compared with conventional metaheuristics, making it scalable for high-dimensional datasets.

- Scientific synergy of MIC and SSO: The combination of MIC and SSO is conceptually synergistic. MIC ensures the candidate pool contains highly informative features, while SSO optimizes the joint selection of feature subsets that maximize classification performance. This integration addresses two fundamental FS challenges: identifying robustly relevant features and efficiently searching combinatorial feature spaces. As a result, MIC-SSO maintains a balance between predictive accuracy and feature compactness, ensuring robust and scalable performance across diverse tabular datasets.
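The claim that a grid-based information measure can detect dependencies that a linear correlation misses can be illustrated with a deliberately simplified score. The sketch below is not the MIC algorithm of Reshef et al.: it only tries equal-frequency grids and takes the best normalized mutual information, which is an assumption made to keep the example dependency-free. The names `mic_like`, `equal_freq_bins`, and `grid_mutual_info` are hypothetical, and the inputs are assumed to be continuous values without heavy ties.

```python
import math
import random


def equal_freq_bins(values, n_bins):
    """Assign each value a bin index so that bins hold roughly equal counts."""
    order = sorted(range(len(values)), key=values.__getitem__)
    bins = [0] * len(values)
    for rank, idx in enumerate(order):
        bins[idx] = rank * n_bins // len(values)
    return bins


def grid_mutual_info(bx, by):
    """Mutual information (in nats) of two discretized variables."""
    n = len(bx)
    joint, px, py = {}, {}, {}
    for a, b in zip(bx, by):
        joint[(a, b)] = joint.get((a, b), 0) + 1
        px[a] = px.get(a, 0) + 1
        py[b] = py.get(b, 0) + 1
    return sum((c / n) * math.log(c * n / (px[a] * py[b]))
               for (a, b), c in joint.items())


def mic_like(x, y, exponent=0.6):
    """Best normalized MI over equal-frequency grids with nx * ny <= n**exponent."""
    budget = len(x) ** exponent
    best = 0.0
    for nx in range(2, int(budget // 2) + 1):
        for ny in range(2, int(budget // nx) + 1):
            mi = grid_mutual_info(equal_freq_bins(x, nx), equal_freq_bins(y, ny))
            best = max(best, mi / math.log(min(nx, ny)))
    return best


def pearson(x, y):
    """Pearson correlation coefficient, for comparison."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


rng = random.Random(0)
x = [rng.uniform(-1, 1) for _ in range(400)]
parabola = [v * v for v in x]                      # purely nonlinear dependence
noise = [rng.uniform(-1, 1) for _ in range(400)]   # no dependence on x
print("pearson(x, x^2) :", round(pearson(x, parabola), 3))
print("mic_like(x, x^2):", round(mic_like(x, parabola), 3))
print("mic_like(x, noise):", round(mic_like(x, noise), 3))
```

On the symmetric parabola the linear correlation is close to zero while the grid-based score is high, which is the behavior that motivates an MIC-style filter. A real analysis would use a dedicated MIC implementation rather than this stand-in.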

2.7. TabPFN Classifier

3. Proposed Method

- (1) Encoding Scheme

- (2) Fitness Function

- (3) Update Mechanism

1. Compute MIC scores for all features in F

2. Rank features in descending MIC order

3. For each retention ratio r ∈ {10%, 20%, ..., 100%}:

   - Select top-r features → subset Cr

   - Evaluate Cr using TabPFN → MCCr

4. Select Ccandidate = subset with highest MCCr

5. Initialize population P with binary vectors from Ccandidate

6. Evaluate the fitness of each individual using F(Cf) = α · MCC(Cf) + (1 − α) · (1 − |Cf| / |Ccandidate|)

7. For generation = 1 to MaxGen:

   - Update each individual according to SSO rules (global best, personal best, random exploration)

   - Retrain TabPFN for each individual → compute MCC → update fitness

   - Update global best solution if improvement occurs

8. Copt = global best solution at convergence
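The wrapper stage in the steps above can be sketched as follows. The sketch makes several assumptions: it uses the commonly cited SSO step-function update, treating Cg, Cp, and Cw as cumulative thresholds (copy the global best, copy the personal best, keep the current bit, or draw a random bit); it initializes the population randomly; and it replaces TabPFN with a hypothetical `toy_mcc` evaluator so that the example runs on its own. The population size of 20 and the names `sso_select` and `fitness` are illustrative; the coefficients, α, and the 30 generations follow the values reported in the experiments.

```python
import random

INFORMATIVE = (0, 1, 2, 3)   # toy assumption: only these features carry signal


def toy_mcc(mask):
    """Stand-in for a classifier-based MCC: fraction of informative features kept."""
    return sum(mask[j] for j in INFORMATIVE) / len(INFORMATIVE)


def fitness(mask, evaluate_mcc, alpha=0.7):
    """alpha * MCC + (1 - alpha) * feature reduction ratio."""
    n_selected = sum(mask)
    if n_selected == 0:
        return 0.0               # an empty subset cannot be evaluated
    return alpha * evaluate_mcc(mask) + (1 - alpha) * (1 - n_selected / len(mask))


def sso_select(n_features, evaluate_mcc, pop_size=20, max_gen=30,
               cg=0.4, cp=0.6, cw=0.9, alpha=0.7, seed=0):
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(n_features)] for _ in range(pop_size)]
    fit = [fitness(ind, evaluate_mcc, alpha) for ind in pop]
    pbest, pbest_fit = [ind[:] for ind in pop], fit[:]
    g = max(range(pop_size), key=fit.__getitem__)
    gbest, gbest_fit = pop[g][:], fit[g]
    for _ in range(max_gen):
        for i in range(pop_size):
            for j in range(n_features):
                rho = rng.random()
                if rho < cg:                 # copy the bit from the global best
                    pop[i][j] = gbest[j]
                elif rho < cp:               # copy the bit from the personal best
                    pop[i][j] = pbest[i][j]
                elif rho >= cw:              # random exploration
                    pop[i][j] = rng.randint(0, 1)
                # cp <= rho < cw: keep the current bit unchanged
            f = fitness(pop[i], evaluate_mcc, alpha)
            if f > pbest_fit[i]:
                pbest[i], pbest_fit[i] = pop[i][:], f
                if f > gbest_fit:
                    gbest, gbest_fit = pop[i][:], f
    return gbest, gbest_fit


best_mask, best_fit = sso_select(12, toy_mcc)
print(best_mask, round(best_fit, 4))
```

In an actual run, `evaluate_mcc` would fit the classifier on the columns selected by the mask and return the MCC on held-out data. Everything else in the loop stays the same.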

4. Experimental Result

4.1. Datasets

4.2. Parameter Settings

4.3. Experimental Results

- MI-SSO, which replaces the MIC filter with Mutual Information (MI);

- MIC-GA and MIC-PSO, which replace the SSO optimizer with Genetic Algorithm (GA) and Particle Swarm Optimization (PSO), respectively.

- XGBoost-MOGA, which combines XGBoost 3.2.0 feature ranking with multi-objective genetic optimization;

- JASAL, a two-stage hybrid method integrating ReliefF filtering and a metaheuristic wrapper combining Sine–Cosine Algorithm (SCA) and JAYA.

- Choice of MIC:

- Theoretical Justification: MIC is a non-parametric measure capable of capturing linear, nonlinear, and complex associations between features and the target variable. It makes no assumption about the underlying distribution or functional form and, unlike raw mutual information (MI) estimates (on which mRMR also relies), it is normalized to [0, 1], so scores are directly comparable across features. This makes it particularly suitable for high-dimensional tabular datasets with heterogeneous feature types.

- Empirical Justification: Preliminary experiments comparing MIC against MI and mRMR on representative datasets showed that MIC consistently produced feature subsets that achieved higher classification performance and better stability across multiple runs. These results are summarized in Table 3 (MI-SSO variant).

- Choice of SSO:

- Theoretical Justification: SSO is a population-based evolutionary algorithm that balances exploration and exploitation using a straightforward update mechanism. Compared to GA and PSO, SSO requires fewer hyperparameters and tends to converge faster in our experiments. Its random-exploration step also reduces, although it does not eliminate, the risk of being trapped in local optima while searching the space of candidate feature subsets.

- Empirical Justification: Ablation studies (MIC-GA, MIC-PSO variants in Table 3) indicate that replacing SSO with GA or PSO generally results in slower convergence or slightly lower fitness values, confirming the complementary role of SSO in refining MIC-selected feature subsets.

- Justification of Parameter Settings:

- The SSO hyperparameters (Cg = 0.4, Cp = 0.6, Cw = 0.9) and fitness trade-off α = 0.7 were chosen based on a combination of prior literature on SSO and preliminary sensitivity experiments. These settings provide a balanced search behavior, enabling efficient convergence toward feature subsets that achieve high classification performance while maintaining compactness.

- Sensitivity analysis for feature count in the MIC stage (Figure 3) further supports our design choices, showing that an intermediate-sized subset (≈20–40% of features) consistently produces the best trade-off between performance and dimensionality reduction.

- Feature subsets from 10% → 100% (MIC ranking);

- Peak performance at an intermediate range (≈20–40%);

- Plateau or slight degradation when more features are added;

- The MIC stage performs an implicit sensitivity analysis;

- An intermediate subset is optimal;

- Passing this subset to SSO is empirically justified.

- Complementary Role of MIC and SSO:

- The MIC-based filtering stage prioritizes features that are highly relevant to the target variable, effectively removing noisy or redundant features early.

- The SSO-based wrapper stage then fine-tunes the selection of features by exploring feature combinations to optimize predictive performance and compactness simultaneously.

- This complementary design ensures that MIC-SSO balances accuracy and dimensionality reduction, improving the overall fitness across datasets.

- Adaptive Feature Subset Selection:

- Sensitivity analysis of feature counts (Figure 3) shows that peak MCC is typically achieved using an intermediate subset of features.

- By selecting this optimal subset prior to SSO, the algorithm reduces the search space while retaining the most informative features, enabling better convergence and improved performance.

- Robustness Across Diverse Datasets:

- Across the five benchmark datasets, which differ in dimensionality, sample size, and number of classes, MIC-SSO maintains strong performance: the initial MIC filtering stage adapts to dataset-specific feature distributions, while SSO’s heuristic search efficiently handles the remaining combinatorial complexity.

- Interpretability Considerations:

- While MIC-SSO is primarily designed for high predictive performance, the selected feature subsets can be analyzed using feature importance metrics derived from TabPFN or other post hoc interpretability algorithms (e.g., SHAP, Permutation Importance).

- Such analyses allow identification of features that contribute most to classification decisions, providing practical interpretability alongside high accuracy.

- Parameter Sensitivity Analysis:

- The key hyperparameters of the SSO algorithm (population size P, number of generations T, and update coefficients Cg, Cp, Cw), as well as the trade-off parameter α in the fitness function, were varied systematically within reasonable ranges.

- Results show that the performance, measured by MCC, remains stable across small-to-moderate variations in these parameters, indicating that MIC-SSO is not highly sensitive to specific hyperparameter settings. For example, varying α between 0.6 and 0.8 led to <2% change in MCC across all datasets.

- Ablation Studies:

- We tested three variants of MIC-SSO:

- MI-SSO, replacing MIC with Mutual Information;

- MIC-GA, replacing SSO with Genetic Algorithm;

- MIC-PSO, replacing SSO with Particle Swarm Optimization.

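- As shown in Table 3, replacing either stage generally reduces fitness, highlighting the complementary effect of MIC filtering and SSO and indicating that both stages contribute to robust feature selection. MCC alone is less decisive: a replaced-stage variant attains a marginally higher MCC on DNA (MIC-PSO) and on Semeion (MI-SSO and MIC-GA), but with larger feature subsets.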

- Effect of Candidate Feature Subset Size:

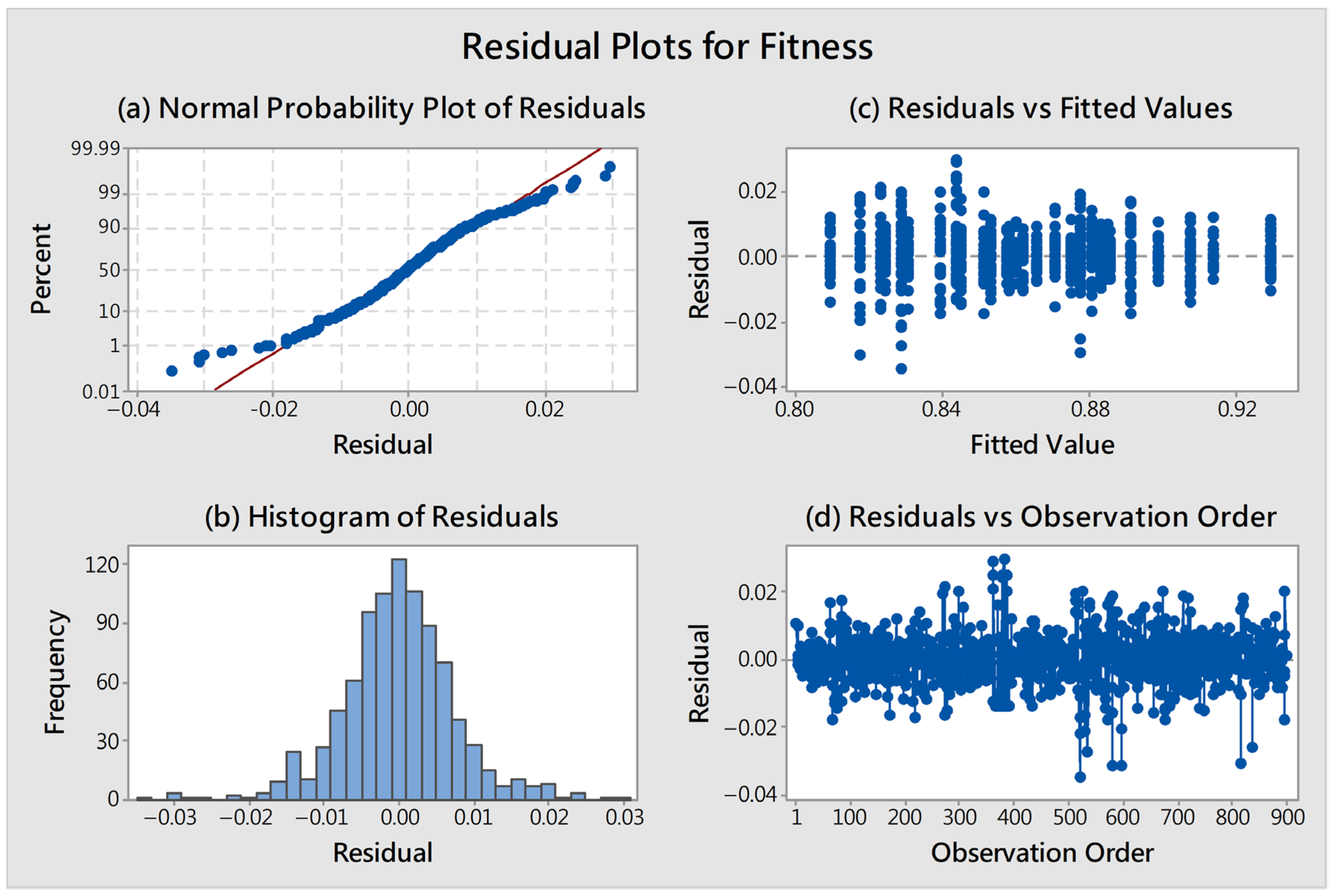
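The sweep over MIC retention ratios behind this sensitivity analysis can be sketched as follows. The evaluator `toy_mcc` is a hypothetical stand-in for the TabPFN-based MCC, built so that performance peaks at an intermediate subset and degrades slightly as uninformative features are added; `best_retention_subset` is likewise an illustrative name.

```python
def best_retention_subset(scores, evaluate_mcc, ratios=None):
    """Return the top-r subset (by filter score) with the highest MCC."""
    ratios = ratios or [r / 10 for r in range(1, 11)]      # 10%, 20%, ..., 100%
    ranked = sorted(range(len(scores)), key=lambda j: scores[j], reverse=True)
    best_subset, best_mcc = None, float("-inf")
    for r in ratios:
        k = max(1, round(r * len(ranked)))                 # number of features kept
        subset = ranked[:k]
        mcc = evaluate_mcc(subset)
        if mcc > best_mcc:          # strict ">" keeps the smaller subset on ties
            best_subset, best_mcc = subset, mcc
    return best_subset, best_mcc


def toy_mcc(subset):
    """Toy evaluator: features 0..5 carry signal, every other feature adds noise."""
    informative = sum(1 for j in subset if j < 6)
    noise = len(subset) - informative
    return informative / 6 - 0.01 * noise


toy_scores = [1.0 / (j + 1) for j in range(20)]   # feature 0 ranks highest
candidate, candidate_mcc = best_retention_subset(toy_scores, toy_mcc)
print(len(candidate), candidate_mcc)
```

In this toy setting the 30% subset wins because it contains every informative feature and no noise. The selected subset would then define the search space handed to the wrapper stage.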

4.4. Statistical Significance Analysis

4.5. Computational Complexity and Scalability Analysis

4.5.1. Complexity of the MIC Filtering Stage

4.5.2. Complexity of the SSO-Based Wrapper Optimization

4.5.3. Overall Complexity and Scalability Discussion

- Across multiple runs (30 independent trials per dataset), SSO consistently converges to stable solutions within the predefined number of generations (30 generations in our experiments).

- Sensitivity analysis indicates that initializing the SSO population with the full candidate subset from MIC accelerates convergence and improves stability, reducing the risk of premature convergence or over-exploration.

- For representative datasets, the combination of MIC filtering and SSO reduces the number of candidate features by over 60%, directly reducing evaluation cost per generation.

- Compared to baseline wrapper methods (GA, PSO), MIC-SSO achieves similar or higher fitness scores while requiring fewer function evaluations due to the reduced feature space, demonstrating both computational efficiency and effective convergence.
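The effect of the reduced feature space on wrapper cost can be made concrete with some back-of-the-envelope arithmetic. The sketch assumes one classifier evaluation per individual at initialization and per generation. The population size of 20 and the 40% retention ratio are illustrative values, not figures from the experiments, while the 500 features and 30 generations match the Madelon dataset and the reported setting.

```python
def wrapper_evaluations(pop_size, max_gen):
    """Classifier evaluations: initial population plus one per individual per generation."""
    return pop_size * (max_gen + 1)


def subset_count(n_features):
    """Number of non-empty feature subsets for n binary inclusion decisions."""
    return 2 ** n_features - 1


n_all = 500                      # e.g., Madelon
keep_ratio = 0.4                 # illustrative MIC retention ratio
n_kept = round(n_all * keep_ratio)

print("evaluations per run:", wrapper_evaluations(pop_size=20, max_gen=30))
print("features searched by the wrapper:", n_kept)
# The number of candidate subsets shrinks by roughly a factor of 2**(n_all - n_kept).
print("search space shrinks by about 2 **", n_all - n_kept)
```

The number of evaluations is fixed by the population size and generation count, so filtering mainly pays off through a smaller search space and cheaper individual evaluations on fewer columns.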

5. Conclusions

- Conceptual insights: MIC filtering prioritizes highly relevant features; SSO refines subsets to optimize the balance between predictive performance and compactness.

- Empirical results: Across five datasets, MIC-SSO achieves the highest overall fitness, outperforming both component-level variants and existing hybrid methods.

- Robustness: Sensitivity analyses indicate that performance is stable under moderate parameter variations and that an intermediate MIC-selected feature subset is a reliable starting point for SSO.

- Stage 1 does not explicitly eliminate redundant features; collinear features may increase SSO burden.

- Current evaluation is limited to medium-sized tabular datasets; extremely high-dimensional, small-sample, or multi-class scenarios require further study.

- Dependency on TabPFN may limit adaptability to other classifiers.

- Direct comparisons with modern metaheuristics (GWO, WOA) remain future work.

- Redundancy-aware filtering: Integrate MIC matrix or symmetric uncertainty to improve Stage 1 selection.

- High-dimensional, small-sample datasets: Evaluate MIC-SSO performance in extreme scenarios.

- Multi-class fairness analysis: Ensure feature representation is balanced across classes.

- Metaheuristic benchmarking: Compare SSO to modern optimizers such as GWO and WOA.

- Runtime and scalability: Conduct empirical runtime benchmarking and explore parallel or adaptive SSO implementations.

- Explainable AI integration: Use SHAP or LIME to improve the interpretability of selected feature subsets.

- Adaptive parameter tuning: Explore data-driven or AutoML approaches for hyperparameter selection to enhance robustness and flexibility.

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A

| Source | DF | Adj SS | Adj MS | F-Value | p-Value |
|---|---|---|---|---|---|
| Method | 5 | 0.11760 | 0.023521 | 391.19 | <0.001 |
| Datasets | 4 | 0.48137 | 0.120342 | 2001.47 | <0.001 |
| Method * Datasets | 20 | 0.16433 | 0.008216 | 136.65 | <0.001 |
| Error | 870 | 0.05231 | 0.000060 | | |
| Total | 899 | 0.81561 | | | |
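As a reading aid, the F-values in the ANOVA table follow from the printed degrees of freedom and adjusted sums of squares: each mean square is SS / DF, and each F-value is that mean square divided by the error mean square. The short check below uses only the numbers in the table; the variable and function names are illustrative, and small differences from the printed F-values come from rounding of the printed sums of squares.

```python
# (DF, Adj SS) for each effect, copied from the ANOVA table.
effects = {
    "Method": (5, 0.11760),
    "Datasets": (4, 0.48137),
    "Method * Datasets": (20, 0.16433),
}
error_df, error_ss = 870, 0.05231


def f_values(effects, error_df, error_ss):
    """F = (SS_effect / DF_effect) / (SS_error / DF_error)."""
    ms_error = error_ss / error_df
    return {name: (ss / df) / ms_error for name, (df, ss) in effects.items()}


for name, f in f_values(effects, error_df, error_ss).items():
    print(f"{name}: F = {f:.2f}")
```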

| Method | N | Mean | Grouping |
|---|---|---|---|
| MIC-SSO | 150 | 0.884913 | A |
| MIC-PSO | 150 | 0.867955 | B |
| MIC-GA | 150 | 0.861592 | C |
| JASAL | 150 | 0.860459 | C |
| MI-SSO | 150 | 0.854865 | D |
| XGBoost-MOGA | 150 | 0.848692 | E |

References

- Umesh, C.; Mahendra, M.; Bej, S.; Wolkenhauer, O.; Wolfien, M. Challenges and applications in generative AI for clinical tabular data in physiology. Pflug. Arch. Eur. J. Physiol. 2025, 477, 531–542.

- Adiputra, I.N.M.; Wanchai, P. CTGAN-ENN: A tabular GAN-based hybrid sampling method for imbalanced and overlapped data in customer churn prediction. J. Big Data 2024, 11, 121.

- Chui, M.; Manyika, J.; Miremadi, M.; Henke, N.; Chung, R.; Nel, P.; Malhotra, S. Notes from the AI frontier: Insights from hundreds of use cases. McKinsey Glob. Inst. 2018, 2, 267.

- Kapila, R.; Saleti, S. Federated learning-based disease prediction: A fusion approach with feature selection and extraction. Biomed. Signal Process. Control 2025, 100, 106961.

- Venkatesh, B.; Anuradha, J. A Review of Feature Selection and Its Methods. Cybern. Inf. Technol. 2019, 19, 3–26.

- Yeh, W.C.; Chu, C.L. Feature selection for data classification in the semiconductor industry by a hybrid of simplified swarm optimization. Electronics 2024, 13, 2242.

- Chen, W.; Cai, Y.; Li, A.; Su, Y.; Jiang, K. EEG feature selection method based on maximum information coefficient and quantum particle swarm. Sci. Rep. 2023, 13, 14515.

- Qin, Y.; Song, K.; Zhang, N.; Wang, M.; Zhang, M.; Peng, B. Robust NIR quantitative model using MIC-SPA variable selection and GA-ELM. Infrared Phys. Technol. 2023, 128, 104534.

- Piri, J.; Mohapatra, P.; Dey, R.; Acharya, B.; Gerogiannis, V.C.; Kanavos, A. Literature review on hybrid evolutionary approaches for feature selection. Algorithms 2023, 16, 167.

- Liyew, C.M.; Ferraris, S.; Di Nardo, E.; Meo, R. A review of feature selection methods for actual evapotranspiration prediction. Artif. Intell. Rev. 2025, 58, 292.

- Kundu, R.; Mallipeddi, R. HFMOEA: Hybrid filter-multiobjective evolutionary algorithm for feature selection. J. Comput. Des. Eng. 2025, 9, 949–965.

- Yavuz, G.; Moghanjoughi, M.K.; Dumlu, H.; Çakir, H.İ. A Feature Selection Method Combining Filter and Wrapper Approaches for Medical Dataset Classification. Vietnam. J. Comput. Sci. 2025, 12, 375–393.

- Yin, Y.; Jang-Jaccard, J.; Xu, W.; Singh, A.; Zhu, J.; Sabrina, F.; Kwak, J. IGRF-RFE: A hybrid feature selection method for MLP-based network intrusion detection on UNSW-NB15 dataset. J. Big Data 2023, 10, 15.

- Deng, X.; Li, M.; Deng, S.; Wang, L. Hybrid gene selection approach using XGBoost and multi-objective genetic algorithm for cancer classification. Med. Biol. Eng. Comput. 2022, 60, 663–681.

- Patil, S.; Bansode, R. A Hybrid Feature Selection Approach Incorporating Mutual Information and Genetics Algorithm for Web Server Attack Detection. Indian J. Sci. Technol. 2024, 17, 325–332.

- Adamu, A.; Abdullahi, M.; Junaidu, S.B.; Hassan, I.H. An hybrid particle swarm optimization with crow search algorithm for feature selection. Mach. Learn. Appl. 2021, 6, 100108.

- Demiröz, A.; Aydın Atasoy, N. Explainable Model of Hybrid Ensemble Learning for Prostate Cancer RNA-Seq Classification via Targeted Feature Selection. Electronics 2025, 14, 4050.

- Reshef, D.N.; Reshef, Y.A.; Finucane, H.K.; Grossman, S.R.; McVean, G.; Turnbaugh, P.J.; Lander, E.S.; Mitzenmacher, M.; Sabeti, P.C. Detecting novel associations in large data sets. Science 2011, 334, 1518–1524.

- Kinney, J.B.; Atwal, G.S. Equitability, mutual information, and the maximal information coefficient. Proc. Natl. Acad. Sci. USA 2014, 111, 3354–3359.

- Yeh, W.C. A two-stage discrete particle swarm optimization for the problem of multiple multi-level redundancy allocation in series systems. Expert Syst. Appl. 2009, 36, 9192–9200.

- Yeh, W.C.; Yang, Y.T.; Lai, C.M. A hybrid simplified swarm optimization method for imbalanced data feature selection. Aust. Acad. Bus. Econ. Rev. 2017, 2, 263–275.

- Yeh, W.C.; Tan, S.Y. Simplified swarm optimization for the heterogeneous fleet vehicle routing problem with time-varying continuous speed function. Electronics 2021, 10, 1775.

- Zhong, Q.; Shang, J.; Ren, Q.; Li, F.; Jiao, C.N.; Liu, J.X. FSCME: A Feature Selection Method Combining Copula Correlation and Maximal Information Coefficient by Entropy Weights. IEEE J. Biomed. Health Inform. 2024, 28, 5638–5648.

- Hollmann, N.; Müller, S.; Purucker, L.; Krishnakumar, A.; Körfer, M.; Bin Hoo, S.; Schirrmeister, R.T.; Hutter, F. Accurate predictions on small data with a tabular foundation model. Nature 2025, 637, 319–326.

- Hollmann, N.; Müller, S.; Eggensperger, K.; Hutter, F. TabPFN: A transformer that solves small tabular classification problems in a second. arXiv 2022, arXiv:2207.01848.

- Abdo, A.; Mostafa, R.; Abdel-Hamid, L. An Optimized Hybrid Approach for Feature Selection Based on Chi-Square and Particle Swarm Optimization Algorithms. Data 2024, 9, 20.

- Yu, K.; Li, W.; Xie, W.; Wang, L. A Hybrid Feature-Selection Method Based on mRMR and Binary Differential Evolution for Gene Selection. Processes 2024, 12, 313.

- Chen, K.; Wang, W.; Zhang, F.; Liang, J.; Yu, K. Correlation-guided particle swarm optimization approach for feature selection in fault diagnosis. IEEE/CAA J. Autom. Sin. 2025, 12, 2329–2341.

- Huang, Y.; Lu, M.; Hu, Y.; Wen, Q.; Cai, B.; Li, X. Enhanced particle swarm optimization based on network structure for feature selection. Complex Intell. Syst. 2025, 11, 473.

- Guyon, I. Madelon, UCI Machine Learning Repository. Available online: https://archive.ics.uci.edu/dataset/171/madelon (accessed on 1 July 2025).

- Vanschoren, J.; van Rijn, J.N.; Bischl, B.; Torgo, L. OpenML: Networked science in machine learning. ACM SIGKDD Explor. Newsl. 2014, 15, 49–60.

- Anguita, D.; Ghio, A.; Oneto, L.; Parra, X.; Reyes-Ortiz, J.L. A public domain dataset for human activity recognition using smartphones. In Proceedings of the ESANN 2013 European Symposium on Artificial Neural Networks, Computational Intelligence and Machine Learning, Bruges, Belgium, 24–26 April 2013; Volume 3, pp. 3–4.

- Duin, R. Mfeat-Factors. Available online: https://www.openml.org/d/12 (accessed on 1 July 2025).

- UC Irvine Machine Learning Repository. Semeion Handwritten Digit. Available online: https://archive.ics.uci.edu/dataset/178/semeion+handwritten+digit (accessed on 1 July 2025).

| Hybrid Method | Filter/Ranking Stage | Wrapper/Optimization Stage | Objective | Main Contribution | Reference(s) | Open Challenges |
|---|---|---|---|---|---|---|
| Chi-Square + PSO/GWO | Chi-Square filter | PSO/Gray Wolf Optimization (GWO) | Maximize classification accuracy | Combines statistical relevance with swarm search for higher accuracy | [26] | Basic filter may miss nonlinear dependencies; wrapper cost still non-trivial |
| mRMR + Binary Differential Evolution (BDE) | mRMR | Binary Differential Evolution | Maximize classification accuracy | Improves search efficiency via adaptive DE search after mRMR | [27] | Initial relevance metric still limited; evolutionary wrapper cost scales |
| Correlation-guided PSO | Correlation ranking | Correlation-guided PSO | Maximize classification accuracy | Enhances PSO search with domain/feature correlation guidance | [28] | Sensitivity to correlation measure; scaling with dimensionality |
| Additional PSO-based FS work | Basic feature ranking | PSO enhancements | Maximize accuracy | Orthogonal initialization + crossover help search | [29] | Still swarm-based; sensitivity to parameters |
| MIC-SSO (Proposed) | Maximal Information Coefficient (MIC) | SSO | Maximize classification accuracy | Captures linear & nonlinear dependencies + lightweight swarm search | This paper | Validation on very large scales beyond current datasets |

| Dataset | Features | Instances (Train/Test) | Classes | Source |
|---|---|---|---|---|
| Madelon | 500 | 2600 (2000/600) | 2 | [30] |
| DNA | 180 | 3186 (2548/638) | 3 | [31] |
| Human Activity Recognition Using Smartphones (HARUS) | 561 | 10,299 (7352/2947) | 6 | [32] |
| Mfeat-Factors | 216 | 2000 (1600/400) | 10 | [33] |
| Semeion Handwritten Digit (Semeion) | 256 | 1593 (1274/319) | 10 | [34] |

| Dataset | Metric (average) | MI-SSO | MIC-GA | MIC-PSO | MIC-SSO (Proposed) |
|---|---|---|---|---|---|
| Madelon | MCC | 0.8281 | 0.8269 | 0.8291 | 0.8342 |
| | F | 133.27 | 181.03 | 160.70 | 136.63 |
| | Fitness | 0.8599 | 0.8308 | 0.8438 | 0.8600 |
| DNA | MCC | 0.9340 | 0.9373 | 0.9395 | 0.9390 |
| | F | 88.70 | 58.53 | 81.00 | 52.33 |
| | Fitness | 0.8291 | 0.8805 | 0.8439 | 0.8914 |
| HARUS | MCC | 0.9249 | 0.9264 | 0.9252 | 0.9269 |
| | F | 216.93 | 185.90 | 166.00 | 166.20 |
| | Fitness | 0.8577 | 0.8748 | 0.8850 | 0.8855 |
| Mfeat-Factors | MCC | 0.9646 | 0.9657 | 0.9620 | 0.9660 |
| | F | 57.70 | 64.33 | 52.40 | 42.47 |
| | Fitness | 0.9075 | 0.8987 | 0.9139 | 0.9292 |
| Semeion | MCC | 0.9312 | 0.9316 | 0.9217 | 0.9281 |
| | F | 134.83 | 130.47 | 101.90 | 99.47 |
| | Fitness | 0.8179 | 0.8232 | 0.8532 | 0.8584 |

| Dataset | Metric (average) | XGBoost-MOGA | JASAL | MIC-SSO (Proposed) |
|---|---|---|---|---|
| Madelon | MCC | 0.8185 | 0.8258 | 0.8342 |
| | F | 211.63 | 191.37 | 136.63 |
| | Fitness | 0.8095 | 0.8242 | 0.8600 |
| DNA | MCC | 0.8957 | 0.9062 | 0.9390 |
| | F | 74.23 | 53.57 | 52.33 |
| | Fitness | 0.8398 | 0.8779 | 0.8914 |
| HARUS | MCC | 0.9316 | 0.9344 | 0.9269 |
| | F | 196.43 | 208.73 | 166.20 |
| | Fitness | 0.8710 | 0.8654 | 0.8855 |
| Mfeat-Factors | MCC | 0.9683 | 0.9668 | 0.9660 |
| | F | 80.00 | 75.70 | 42.47 |
| | Fitness | 0.8778 | 0.8833 | 0.9292 |
| Semeion | MCC | 0.9158 | 0.9197 | 0.9281 |
| | F | 106.83 | 102.73 | 99.47 |
| | Fitness | 0.8453 | 0.8515 | 0.8584 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Yeh, W.-C.; Jiang, Y.; Hsu, H.-J.; Huang, C.-L. MIC-SSO: A Two-Stage Hybrid Feature Selection Approach for Tabular Data. Electronics 2026, 15, 856. https://doi.org/10.3390/electronics15040856
