1. Introduction

Artificial intelligence (AI) systems have increasingly been integrated into core operational processes, positioning them as critical infrastructure for both enterprises and society. In particular, Large Language Models (LLMs) and foundation models require prolonged training cycles involving high-performance computing resources and large-scale datasets, resulting in assets whose value is driven not only by software implementation but also by accumulated training cost and behavioral knowledge [1,2,3]. As a consequence, AI systems have become attractive targets for ransomware actors seeking to exploit their high recovery cost and operational dependency.

Conventional ransomware attacks primarily disrupt service availability by encrypting file systems or databases and demanding ransom for decryption [4]. Defense strategies have therefore been designed under the assumption that service continuity can be restored through backup-based recovery of data and executable code. However, this assumption does not hold in AI-centric environments. In such systems, critical assets extend beyond files and binaries to include model parameters, training data, inference pipelines, and policy or safety mechanisms. Compromise of these assets can undermine system behavior and decision-making reliability even when files are successfully restored, indicating that traditional recovery-oriented protection models are insufficient for AI systems.

Indeed, recent ransomware variants explicitly identify and encrypt AI model files in the formats used by PyTorch, TensorFlow, and ONNX (e.g., .pt, .h5, .onnx), or employ intermittent encryption techniques that selectively damage specific blocks rather than the entire file [5,6]. Furthermore, attacks that cause malfunctions only under specific conditions while maintaining model accuracy through data poisoning or backdoor insertion can be interpreted as a new form of “integrity ransomware” that holds the reliability of AI models hostage [7,8,9]. These attacks reveal the fundamental limitations of traditional ransomware response strategies, as restoring a model from backup data does not guarantee the same behavioral characteristics.

Moreover, AI development and operations environments themselves are being utilized as attack surfaces. Structural vulnerabilities in the pickle format used during model serialization allow for arbitrary code execution upon loading model files, with reported cases of ransomware spreading into internal infrastructures through this vector [10,11,12,13]. Supply chain attacks exploiting Jupyter Notebooks and public model repositories also serve as pathways to threaten both models and data simultaneously by taking advantage of the openness of AI research environments [14,15].

To counter these attack trends, AI-specific defense technologies such as confidential computing, model signing, and Supply Chain Levels for Software Artifacts (SLSA) have been proposed [16,17,18]. However, existing research focuses primarily on the effectiveness of individual attack techniques or defense technologies, and there is a relative lack of attempts to redefine ransomware threats against AI systems as a question of “which assets must be protected.”

Motivated by these issues, this study re-examines ransomware attacks on AI systems from the perspective of existing data- and code-centric protection models. Specifically, we argue that the protection targets in AI systems are not merely files or executable code, but an expanded set of targets including decision-making characteristics and behavioral logic based on inputs. To this end, we comprehensively re-interpret recently reported attack cases and technical analyses, structurally analyzing why traditional ransomware recovery assumptions fail in AI systems. Furthermore, this research aims to present the security and operational implications of rethinking protection targets for ransomware response suitable for AI environments.

This paper is organized as follows: we first analyze why traditional ransomware recovery assumptions fail in AI-centric environments, then introduce a behavior-aware protection methodology, and finally evaluate its effectiveness through representative experimental scenarios to highlight its operational implications for post-recovery decision-making. Although some experiments involve large language models, the proposed framework and methodology are broadly applicable to AI systems whose reliability depends on learned behavior, including vision models and other data-driven decision systems.

2. Background and Threat Landscape of AI-Targeted Ransomware

Ransomware has long established itself as a quintessential cyberattack vector that denies system availability through data encryption to extort financial gain. Conventional ransomware defense strategies have primarily revolved around data backup, file integrity verification, and disaster recovery, approaches that have functioned with relative effectiveness within traditional IT infrastructures [19,20,21]. However, as AI systems are increasingly integrated as core business assets, both the targets and the underlying objectives of ransomware attacks are undergoing a structural shift.

2.1. Economic Motivation Behind AI-Targeted Ransomware

The proliferation of ransomware attacks targeting AI systems is underpinned by the unique economic attributes of AI assets. Large-scale AI models are not mere software artifacts; they represent the culmination of expensive GPU resources, extensive training durations, and highly curated datasets. According to recent analyses, the computational costs required to train frontier-level language models range from tens to hundreds of millions of dollars, granting attackers a formidable degree of economic asymmetry [1,2,3].

In such an environment, attackers can induce scenarios where victim organizations face prohibitive costs and time delays for retraining by encrypting or corrupting model files and training data. Indeed, the average ransom demands in recent attacks have shown a consistent upward trend, and the scale of extortion is expected to escalate further when targeting AI-driven enterprises or research institutions [4,22]. This underscores that AI systems are no longer incidental victims of ransomware but are high-value assets intentionally selected for exploitation.

Beyond raw GPU consumption, retraining large-scale AI models incurs substantial additional costs, including data curation and labeling, hyperparameter re-tuning, validation cycles, compliance re-certification, and prolonged service downtime, which collectively amplify the economic leverage available to attackers.

Table 1 illustrates how attractive AI model assets are as financial targets for attackers by comparing the costs involved in training state-of-the-art AI models with the average ransom amounts demanded or paid.

2.2. AI-Specific Attack Vectors and Ransomware Techniques

Ransomware attacks targeting AI systems utilize distinct attack vectors that differentiate them from traditional IT asset threats. A primary example is the exploitation of model serialization formats used in PyTorch or TensorFlow, which can lead to arbitrary code execution during the model loading phase. Specifically, because the pickle format inherently allows for code execution, instances have been documented where malicious payloads embedded within model files are distributed under the guise of legitimate training artifacts [10,11,12,13].
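The loading-time risk described above follows from how pickle resolves global names while deserializing. The following is a minimal sketch using only the Python standard library; the allow-list contents and the names `RestrictedUnpickler`, `restricted_loads`, and `Payload` are illustrative assumptions rather than part of any framework discussed in this paper. It shows a loader that refuses every global not explicitly permitted, so a payload that would otherwise invoke a system command is rejected before it runs.

```python
import io
import pickle

# Hypothetical allow-list: only these (module, name) pairs may be resolved.
ALLOWED_GLOBALS = {("collections", "OrderedDict")}


class RestrictedUnpickler(pickle.Unpickler):
    """Unpickler that rejects any global not on the allow-list."""

    def find_class(self, module, name):
        if (module, name) in ALLOWED_GLOBALS:
            return super().find_class(module, name)
        raise pickle.UnpicklingError(f"blocked global: {module}.{name}")


def restricted_loads(data: bytes):
    """Deserialize bytes while refusing non-allow-listed globals."""
    return RestrictedUnpickler(io.BytesIO(data)).load()


class Payload:
    """Stand-in for a malicious object: a plain pickle.loads would run os.system."""

    def __reduce__(self):
        import os
        return (os.system, ("echo compromised",))


# Serializing the payload does not execute it; only unpickling would.
benign = pickle.dumps({"layer1.weight": [0.1, 0.2]})
malicious = pickle.dumps(Payload())

print(restricted_loads(benign))
try:
    restricted_loads(malicious)
except pickle.UnpicklingError as exc:
    print("rejected:", exc)
```

An allow-list of this kind narrows the attack surface but does not make pickle safe in general; it is shown only to make the mechanism concrete.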

Furthermore, ransomware actors capitalize on the massive size of AI model files by employing intermittent encryption techniques. By selectively corrupting specific data blocks instead of encrypting the entire file, attackers can render models unusable while significantly reducing the likelihood of detection by traditional file-based monitoring tools [5,6].
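To make the notion of block-selective corruption concrete, the sketch below compares a per-block digest manifest of a known-good file against a tampered copy. It is an illustration under stated assumptions, not a technique taken from the cited works: the 4096-byte block size, the function names `block_digests` and `changed_blocks`, and the simulated checkpoint bytes are all hypothetical.

```python
import hashlib

BLOCK_SIZE = 4096  # assumed block granularity for this sketch


def block_digests(data: bytes, block_size: int = BLOCK_SIZE) -> list[str]:
    """Return the SHA-256 digest of each fixed-size block."""
    return [
        hashlib.sha256(data[i:i + block_size]).hexdigest()
        for i in range(0, len(data), block_size)
    ]


def changed_blocks(reference: list[str], current: list[str]) -> list[int]:
    """Indices of blocks that differ from, or are missing in, the reference."""
    length = max(len(reference), len(current))
    return [
        i for i in range(length)
        if i >= len(reference) or i >= len(current) or reference[i] != current[i]
    ]


# Simulated checkpoint: 65536 deterministic bytes, i.e. 16 blocks.
original = bytes(range(256)) * 256
manifest = block_digests(original)

# Simulated intermittent encryption: overwrite only blocks 3 and 11.
tampered = bytearray(original)
for block in (3, 11):
    start = block * BLOCK_SIZE
    tampered[start:start + BLOCK_SIZE] = bytes(BLOCK_SIZE)

print(changed_blocks(manifest, block_digests(bytes(tampered))))  # [3, 11]
```

A manifest like this localizes partial damage that a coarse check might miss, but it still operates purely at the file level and says nothing about how a restored model behaves.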

Data poisoning and backdoor injection also serve as critical mechanisms for AI-targeted ransomware. By subtly manipulating training or fine-tuning datasets, attackers can ensure models malfunction only under specific trigger conditions. These clandestine attacks maintain overall performance while enabling “integrity-based extortion” strategies, where victims are coerced into paying a ransom to restore the reliability of their AI models [7,8,9].

2.3. Expanded Attack Surface in AI Development and Operations

The attack surface for AI systems extends well beyond model files and datasets. Development and operations tools, such as Jupyter Notebooks, public model hubs, and package management ecosystems, have become primary vectors for supply chain attacks. Exposed or misconfigured Jupyter Notebooks provide entry points for attackers to infiltrate internal networks, deploy ransomware, or install cryptomining malware [14,15].

This evolving threat landscape highlights that AI systems function as integrated pipelines within broader operational contexts rather than as isolated assets. Consequently, the ramifications of ransomware transcend simple availability issues, impacting the core reliability and decision-making integrity of the system. This underscores that legacy asset-centric protection models are insufficient for AI environments and necessitates a fundamental reassessment of protection targets.

2.4. Recent Trends in AI-Driven Vulnerabilities

The rapid evolution of LLMs and agentic AI systems has introduced a novel class of security vulnerabilities that transcend the boundaries of traditional software exploitation [25,26]. In contrast to legacy AI-related threats that primarily focused on input manipulation or model misuse, recent analyses indicate that AI systems are increasingly being leveraged as active participants in cyberattacks rather than mere passive targets [25,26].

Recent surveys on large language models in cybersecurity have documented their growing use in both defensive automation and offensive attack pipelines, while also emphasizing newly exposed vulnerabilities inherent to their scale and autonomy [27]. In parallel, backdoor-focused surveys have shown that modern neural models may preserve benign behavior under standard evaluation while exhibiting malicious responses only under specific trigger conditions, thereby evading conventional integrity checks [28].

Agentic AI systems, in particular, possess the capability to autonomously conduct reconnaissance, analyze system environments, generate executable code, and iteratively refine attack strategies based on observed feedback [25]. Threat intelligence reports have documented instances where AI-driven coding agents automated significant portions of the attack lifecycle, producing code that appears syntactically benign during initial infiltration but enables adaptive malicious behavior post-deployment [25].

These developments signify a qualitative shift in the nature of cyber threats targeting AI-enabled environments [26]. As attack logic becomes dynamically generated and adapted at runtime, traditional defensive paradigms based on static signatures or predefined malicious payloads are becoming increasingly ineffective [26]. Consequently, the evolution of AI-driven vulnerabilities underscores the imperative to reconsider protection assumptions in AI-centric infrastructures, especially concerning autonomous decision-making and behavior within deployed systems.

3. Failure of Asset-Based Ransomware Protection in AI Systems

Traditional ransomware protection models are predicated on an asset-based perspective that confines protected assets to data, executable code, and system availability. Such models implicitly assume that the pre-attack normal state can be fully restored through file integrity verification, backup recovery, and system restarts [19,20,21]. However, we argue that these assumptions are structurally untenable within the context of AI system environments.

3.1. File-Level Integrity Does Not Imply Behavioral Integrity

The successful restoration of model files and code in an AI system does not automatically translate into reliable system behavior. In several recently reported attack scenarios, model parameter files remained formally intact and passed hash or signature verifications, yet they exhibited anomalous outputs or policy-violating behaviors only under specific input conditions. This phenomenon is particularly prominent in data poisoning and backdoor injection attacks [7,8,9].

A defining characteristic of these attacks is the absence of significant fluctuations in the model’s overall accuracy or general performance metrics. As a result, conventional integrity verification techniques and performance tests may fail to detect the presence of an attack, incorrectly validating the model as “normal.” In essence, file-level integrity and behavioral integrity are decoupled within AI systems, and traditional asset-based protection models are fundamentally incapable of adequately capturing the latter.

3.2. Recovery Does Not Guarantee Trust Restoration

In traditional ransomware mitigation, recovery is generally understood as the restoration of normal operational status. However, in AI systems, it is exceptionally difficult to determine whether model outputs remain trustworthy even after a successful recovery process. Even if model parameters or training data are restored from backups, the backups themselves may have been compromised, or their integrity may not be verifiable.

Particularly in data poisoning or integrity-based extortion scenarios, attackers may deliberately conceal the root cause of malfunctions or the specific trigger conditions. In such cases, the organization cannot restore model reliability without opting for a complete retrain. The immense costs and service downtime associated with retraining serve as powerful leverage, pressuring victims to comply with the attacker’s demands [1,2,3].

3.3. Expanded Attack Impact Beyond Data Availability

The impact of ransomware attacks targeting AI systems extends far beyond simple data access denial or service disruption. Because the predictions and decisions provided by these models are directly integrated into core business processes, a compromise in the reliability of these outputs renders the system effectively inoperable, even if it remains technically functional.

Furthermore, attacks exploiting model serialization vulnerabilities or targeting the development environment provide pathways for ransomware to propagate across the entire AI infrastructure [10,11,12,13]. Consequently, the blast radius of an attack expands beyond individual files or servers to encompass training pipelines, inference services, and the entire operational ecosystem. These characteristics suggest that AI systems possess protection requirements fundamentally distinct from those of traditional IT assets.

3.4. Structural Exposure of Recovery Assumptions in Cloud-Based AI Environments

Cloud-based AI environments do not introduce fundamentally new ransomware threats; rather, they amplify and expose the structural fragility of conventional recovery assumptions. In cloud-native deployments, AI systems are typically composed of loosely coupled components—such as model artifacts, data pipelines, orchestration logic, and automated recovery mechanisms—that are designed to be rapidly redeployed and restored following failures.

This operational paradigm implicitly reinforces the assumption that system recovery can be achieved through automated reinitialization of data and executable artifacts. However, in AI systems, such restoration processes do not guarantee the recovery of behavioral integrity or decision-making reliability. Once AI-specific assets are compromised, automated redeployment may merely reinstate a technically functional but behaviorally untrustworthy system.

The cloud environment therefore serves as a revealing context in which the limitations of asset-based recovery models become particularly visible. By accelerating restoration and scaling propagation, cloud infrastructures expose the gap between service availability and trust restoration, demonstrating that conventional recovery mechanisms are insufficient to ensure reliable AI system operation.

These observations reinforce the need to reconsider protection targets in AI systems beyond file-level integrity, motivating the transition toward behavior-aware protection and post-recovery verification mechanisms, which are formally introduced in the subsequent section.

3.5. Implications for Ransomware Protection Assumptions

The preceding analysis clearly demonstrates that legacy ransomware protection models were not designed with AI systems in mind. The recovery of data and code alone is insufficient to guarantee the reliability and safety of an AI system, as behavioral characteristics and decision-making consistency have historically been neglected within traditional asset categories.

Therefore, ransomware response in AI environments must move beyond a narrow, file-recovery-centric approach. It necessitates a fundamental re-evaluation of which attributes and properties must be prioritized as protection targets to ensure systemic resilience.

4. Rethinking Protection Targets in AI Systems

Traditional asset-based ransomware protection models repeatedly fail in AI system environments. This failure stems not from a deficiency in individual defensive technologies, but rather from the fact that the very definition of protection targets fails to adequately reflect the unique characteristics of AI systems. In this section, we re-evaluate the scope of protection targets for an effective ransomware response suitable for AI systems and systematically categorize the core elements that existing protection models have failed to capture.

4.1. Limitations of Data- and Code-Centric Protection

Conventional ransomware protection strategies have traditionally emphasized the integrity and availability of data files and executable code. While this focus is effective in database-centric or conventional application environments, it becomes insufficient when applied to AI systems, where critical assets extend beyond static files to include behavioral and decision-making components.

An AI model is not merely the output of code execution; it embeds a complex decision-making structure formed through massive datasets and iterative learning processes. Consequently, even if a model file is successfully restored from a backup, there is no guarantee that its internal decision-making characteristics or behavioral patterns remain identical to those prior to the attack. These limitations are particularly evident in data poisoning or backdoor attacks [7,8,9].

4.2. AI-Specific Protection Targets Beyond Traditional Assets

The targets requiring protection in an AI system extend beyond simple file or code objects. Synthesizing recent attack cases and technical analyses, it is evident that the targets effectively held hostage by ransomware actors in AI environments have expanded to include the following AI-specific elements:

First, model parameters and checkpoints are core intellectual assets of AI systems and serve as direct targets for attacks. If model checkpoints are encrypted or tampered with, victim organizations must bear immense retraining costs and time, which serves as a factor that strengthens the attacker’s extortion strategy [1,2,3].

Second, training and inference pipelines are critical protection targets that ensure the normal operation of AI systems. If any stage of preprocessing, inference, or post-processing is compromised or manipulated, the reliability of the output can be severely degraded even if the model file itself remains intact. Such pipeline-based attacks are difficult to identify using traditional file-centric detection techniques.

Third, policy and safety filters are key control elements that constrain the behavior of AI systems. If safety filters are neutralized through prompt injection or policy bypass attacks, the model may generate unintended and dangerous outputs while appearing to run normally [29,30].

Fourth, the reliability of development and operational environments is another target that cannot be overlooked in AI system protection. Vulnerabilities in model serialization or the exposure of research tools such as Jupyter Notebooks provide pathways for ransomware to propagate across the entire AI infrastructure [10,11,14]. This suggests that AI system protection must be approached from an entire lifecycle perspective rather than as a single asset.

Table 2 provides a detailed classification of five major types of ransomware and extortion attacks targeting AI-specific assets, including AI models, training data, and pipelines. This table clearly illustrates complex risk factors that go beyond simple file system encryption—such as the degradation of model reliability (data poisoning), the theft of intellectual property (model theft), and infrastructure takeover (serialization attacks)—while presenting defensive technologies such as immutable storage, secure formats, and confidential computing to counter each risk.

Security filters, such as content moderation or policy enforcement layers, are attractive ransomware targets because their corruption or bypass may silently alter system behavior, enabling unsafe outputs or policy violations without disrupting apparent system availability.

In practice, the prioritization of protection objectives should be guided by operational risk rather than by an exhaustive or static checklist. Objectives that directly affect the correctness and reliability of decision-making—such as behavioral integrity and policy enforcement—should be prioritized immediately after recovery, as failures in these dimensions may propagate incorrect actions even when system availability appears restored. Secondary objectives, including performance optimization or full functional parity, can be addressed incrementally once baseline trust is re-established.

4.3. From Asset Integrity to Behavioral Trust

The crux of redefining protection targets within AI systems lies in the conceptual expansion of integrity. While integrity in traditional IT security signifies that files or code remain unaltered, such a definition is insufficient for the AI paradigm.

The intrinsic value of an AI model resides in its behavioral characteristics—specifically, its ability to generate consistent and reliable outputs in response to given inputs. Consequently, protecting AI systems necessitates moving beyond file-level verification to ensure that the model’s behavioral distribution and decision-making characteristics remain consistent with its pre-attack state.

From this perspective, ransomware attacks targeting AI systems should be reinterpreted as threats to behavioral trust rather than mere availability-based attacks. Building upon this redefinition of protection targets, the following section proposes a behavior-aware ransomware protection methodology tailored for AI system environments.

5. Behavior-Aware Ransomware Protection Methodology for AI Systems

Ransomware threats targeting AI systems are evolving beyond mere data encryption or service disruption, directly undermining decision-making reliability and behavioral consistency. The previously discussed redefinition of protection targets highlights why existing ransomware response procedures are structurally incomplete in AI environments and underscores the imperative to explicitly verify the reliable operation of a model even after its restoration.

To satisfy these requirements, AI systems necessitate a specialized, behavior-aware ransomware protection methodology. The core of the methodology proposed in this paper lies in modifying the conventional workflow—which typically redeploys a model to production immediately following restoration—by introducing a dedicated verification layer for behavioral integrity.

Behavior-Aware Integrity Protection System (BIPS) is not designed to detect ransomware attacks or replace existing detection mechanisms; instead, it functions as a post-recovery operational integrity gate that determines whether a restored AI system can be safely redeployed.

Figure 1 illustrates the overall architecture of the proposed BIPS. Unlike traditional ransomware recovery flows, BIPS incorporates a procedure to validate the model’s behavioral consistency in an isolated shadow environment before authorizing deployment to the production environment. This mechanism prevents models that lack behavioral integrity from entering the operational environment, even if their file-level recovery is complete.

Upon a ransomware recovery request, the restored model is first loaded into an isolated verification zone, where its behavioral characteristics are measured using a predefined golden dataset. The measured behavioral metrics are then compared against a behavioral baseline established during the model’s normal state. Based on this comparison, the model is either approved for deployment to the production environment or diverted to isolation and additional response procedures.

During this verification phase, deployment may proceed with restricted services, such as disabling external API calls, blocking write access to production databases, limiting automated decision execution, or confining the system to read-only inference until behavioral integrity is fully verified.

Figure 2 depicts the sequential flow of the behavioral integrity verification process performed after ransomware recovery. Following a recovery request, the model does not immediately return to the production environment; instead, it is transferred to an isolated shadow environment (Step 2), where behavioral verification is conducted using the golden dataset (Step 3). The behavioral metrics derived during this process are compared against the baseline and converted into an integrity score (Step 5). Deployment approval or quarantine measures are then determined based on whether this score meets a predefined threshold.

5.1. Overview of the Proposed Methodology

The overall procedure comprises the following four stages:

Re-classification and prioritization of protection targets;

Definition of behavioral baselines;

Post-recovery behavioral integrity verification;

Risk-aware operational decision-making.

This phased approach is designed to seamlessly integrate AI-specific characteristics into existing ransomware response processes without necessitating the adoption of an entirely new security framework.

5.2. Protection Target Re-Scoping and Prioritization

In the initial stage, the scope of assets to be protected within AI systems is redefined and expanded beyond the traditional data- and code-centric classification. This process identifies model parameters, training datasets, inference pipelines, policy and safety filters, and the reliability of the development and operational environments as core protection targets.

In particular, model checkpoints and fine-tuning outputs should be prioritized as high-value protection targets, as they entail prohibitively high regeneration costs and provide attackers with significant negotiation leverage [1,2,3]. This re-scoping differentiates itself from conventional asset classification by simultaneously considering technical significance and potential economic impact.

5.3. Behavioral Baseline Definition

The second stage involves defining a behavioral baseline that represents the normal operation of the AI system. Rather than relying on a single performance metric, the behavioral baseline consists of a multidimensional set of characteristics, including output distributions for a representative input set, policy compliance, and patterns of errors and deviations.

This baseline is predefined during the model’s pre-attack state or extracted from a verified, trustworthy version of the model. This approach is designed to compensate for the limitations of static integrity verification (such as file hashes or digital signatures), which fails to sufficiently reflect the actual operational characteristics of an AI system [7,8,9].
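As a rough illustration of what such a multidimensional baseline might contain, the sketch below records accuracy, the mean output distribution, and a policy violation rate over a representative input set. The field names, the `build_baseline` helper, the toy classifier, and the toy policy rule are all assumptions introduced for this example; the paper does not prescribe a specific data structure.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class BehavioralBaseline:
    """Hypothetical baseline record captured from a trusted model version."""
    accuracy: float
    mean_output_distribution: tuple[float, ...]
    policy_violation_rate: float


def build_baseline(
    predict_proba: Callable[[float], Sequence[float]],
    inputs: Sequence[float],
    labels: Sequence[int],
    violates_policy: Callable[[float, int], bool],
) -> BehavioralBaseline:
    """Measure the baseline dimensions on a representative input set."""
    probs = [list(predict_proba(x)) for x in inputs]
    # Predicted class = index of the largest probability.
    preds = [max(range(len(p)), key=p.__getitem__) for p in probs]
    n = len(inputs)
    mean_dist = tuple(sum(p[c] for p in probs) / n for c in range(len(probs[0])))
    accuracy = sum(int(a == b) for a, b in zip(preds, labels)) / n
    violations = sum(int(violates_policy(x, y)) for x, y in zip(inputs, preds)) / n
    return BehavioralBaseline(accuracy, mean_dist, violations)


def trusted_model(x: float) -> list[float]:
    """Toy two-class model standing in for a verified checkpoint."""
    return [0.9, 0.1] if x < 0 else [0.2, 0.8]


def toy_policy(x: float, predicted: int) -> bool:
    """Toy rule: predicting class 1 for a negative input counts as a violation."""
    return x < 0 and predicted == 1


baseline = build_baseline(trusted_model, [-2.0, -1.0, 1.0, 2.0], [0, 0, 1, 1], toy_policy)
print(baseline)
```

Storing the baseline as an immutable, versioned record alongside the model release would let a later verification step compare like with like.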

5.4. Post-Recovery Behavioral Integrity Verification

The third stage is the behavioral integrity verification process performed after ransomware recovery. While traditional response protocols consider file restoration and service restart as the terminal points of recovery, this methodology introduces an additional step to verify whether the model’s post-recovery behavioral characteristics align with the established baseline.

In this stage, the system performs a comprehensive analysis that includes not only changes in accuracy but also output deviations under specific input conditions, policy violations, and abnormal response patterns. Such an approach serves as an effective means of identifying integrity-based extortion attacks that remain undetectable at the file level [5,6].

Algorithm 1 defines the post-recovery governance procedure for determining whether a restored AI model is suitable for redeployment to the production environment. The objective of this algorithm is not to detect the presence of a ransomware attack, but to verify whether sufficient behavioral reliability has been secured following file recovery.

Algorithm 1 BIPS

Require: Recovered model file M, Golden Dataset, baseline distribution, thresholds

Ensure: Deployment decision (Approve or Block)

1: Attempt to load model parameters from M

2: if model loading fails then return Block ▹ File-level corruption detected

3: end if

4: Deploy model in isolated sandbox environment

5: Perform inference on Golden Dataset

6: Compute accuracy

7: Compute output probability distribution

8: Compute distribution divergence

9: if accuracy is below its threshold then return Block ▹ Behavioral collapse detected

10: end if

11: if distribution divergence exceeds its threshold then return Block ▹ Integrity drift detected

12: end if

13: return Approve ▹ Model approved for redeployment

To this end, the algorithm first confirms the model’s loadability as a minimum requirement and then performs inference using the Golden Dataset within an isolated sandbox environment. The measured accuracy and output probability distributions are compared against the predefined baseline behavioral distribution, evaluating the model’s integrity from the perspective of behavioral consistency rather than through a single performance metric. This design is intended to respond effectively to partial corruption or integrity-based extortion attacks that bypass file hash or structural integrity checks.
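The procedure can be sketched in a few lines of Python. This is an illustrative reading of Algorithm 1 rather than the authors' implementation: the choice of KL divergence over the mean output distribution, the parameter names `min_accuracy` and `max_divergence`, the threshold values, and the toy models are all assumptions, and sandbox isolation (step 4) is treated as an infrastructure concern that the code does not model.

```python
import math
from typing import Callable, Sequence, Tuple

Model = Callable[[float], Sequence[float]]


def kl_divergence(p: Sequence[float], q: Sequence[float], eps: float = 1e-12) -> float:
    """KL(p || q) with light smoothing; KL is an assumed choice of divergence."""
    return sum(pi * math.log((pi + eps) / (qi + eps)) for pi, qi in zip(p, q))


def bips_gate(
    load_model: Callable[[], Model],
    golden_inputs: Sequence[float],
    golden_labels: Sequence[int],
    baseline_dist: Sequence[float],
    min_accuracy: float,
    max_divergence: float,
) -> Tuple[str, str]:
    """Return (decision, reason) for a recovered model."""
    # Steps 1-3: a model that cannot be loaded is blocked outright.
    try:
        model = load_model()
    except Exception as exc:
        return "Block", f"load failure: {exc}"

    # Step 5: inference on the Golden Dataset (step 4, sandboxing, not modeled).
    probs = [list(model(x)) for x in golden_inputs]
    preds = [max(range(len(p)), key=p.__getitem__) for p in probs]
    n = len(golden_inputs)

    # Steps 6-8: accuracy, mean output distribution, divergence from baseline.
    accuracy = sum(int(a == b) for a, b in zip(preds, golden_labels)) / n
    mean_dist = [sum(p[c] for p in probs) / n for c in range(len(baseline_dist))]
    divergence = kl_divergence(mean_dist, baseline_dist)

    # Steps 9-12: threshold checks.
    if accuracy < min_accuracy:
        return "Block", "behavioral collapse"
    if divergence > max_divergence:
        return "Block", "integrity drift"
    return "Approve", "within baseline"


def healthy(x):    # matches the baseline behavior
    return [0.9, 0.1] if x < 0 else [0.1, 0.9]

def collapsed(x):  # always predicts class 0
    return [0.9, 0.1]

def drifted(x):    # same predictions, shifted confidence
    return [0.9, 0.1] if x < 0 else [0.45, 0.55]

def broken_loader():
    raise ValueError("truncated checkpoint")


inputs, labels, baseline = [-2.0, -1.0, 1.0, 2.0], [0, 0, 1, 1], [0.5, 0.5]
for name, loader in [("healthy", lambda: healthy), ("collapsed", lambda: collapsed),
                     ("drifted", lambda: drifted), ("broken", broken_loader)]:
    print(name, bips_gate(loader, inputs, labels, baseline, 0.9, 0.05))
```

In this toy run the drifted model keeps perfect accuracy yet is blocked on divergence alone, which is the case a single performance metric would miss. A gate of this kind can only flag deviations that the Golden Dataset actually exercises.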

In dynamic environments, the Golden Dataset is maintained as a lightweight regression set rather than a static benchmark. It is updated in conjunction with approved model or policy changes and versioned alongside system releases. This allows the dataset to reflect expected behavior under normal operation while remaining sufficiently small to support rapid post-recovery verification.

Notably, the algorithm blocks redeployment even for models that load normally if they fail to meet the behavioral baseline. This indicates that BIPS functions not as a detection system for determining compromise, but as a deployment approval gate to ensure the reliable operation of the AI system. The final decision on service resumption is made during the risk-aware operational decision-making stage, discussed in the following section, which integrates the algorithm’s output with the operational environment’s risk tolerance.

5.5. Risk-Aware Operational Decision Making

In the final stage, operational decisions are executed based on the outcomes of the behavioral integrity verification. If the verification results align with the established baseline, the system is permitted to resume normal operations. Conversely, should significant deviations be observed, specific response strategies—such as further isolation, retraining, or the provision of restricted services—are implemented.
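One way to picture this stage is as a mapping from the verification outcome to an operational action. The sketch below assumes an integrity score normalized to the range 0 to 1 and a two-threshold scheme; both thresholds, the `Action` names, and the `decide` function are hypothetical and would in practice be set according to the risk tolerance of the operational environment.

```python
from enum import Enum


class Action(Enum):
    RESUME = "resume normal operation"
    RESTRICTED = "provide restricted service"
    ISOLATE = "isolate and investigate or retrain"


def decide(integrity_score: float,
           approve_threshold: float,
           restricted_threshold: float) -> Action:
    """Map an integrity score to an action under an assumed two-threshold policy."""
    if not 0.0 <= restricted_threshold <= approve_threshold <= 1.0:
        raise ValueError("expected 0 <= restricted_threshold <= approve_threshold <= 1")
    if integrity_score >= approve_threshold:
        return Action.RESUME
    if integrity_score >= restricted_threshold:
        return Action.RESTRICTED
    return Action.ISOLATE


# A stricter environment simply raises the thresholds.
for score in (0.97, 0.85, 0.40):
    print(score, decide(score, approve_threshold=0.95, restricted_threshold=0.80).value)
```

Keeping the thresholds as explicit parameters makes the trade-off between availability and reliability a visible, reviewable policy choice rather than a constant buried in code.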

This risk-aware approach facilitates a critical balance between the availability and reliability of AI systems. In scenarios where the impact of an attack is concentrated on the compromise of decision-making reliability rather than simple data loss, an unconditional resumption of services may introduce secondary security risks. Thus, the proposed methodology reframes ransomware response not merely as a technical challenge, but as a broader issue of operational risk management.

The behavior-aware ransomware protection methodology presented in this section functions as actionable conceptual guidance that complements, rather than replaces, existing security frameworks by incorporating the unique characteristics of AI systems.

6. Behavior-Aware Early Detection for AI-Targeted Ransomware

Thus far, this study has emphasized the necessity of verifying behavioral reliability post-recovery, leading to the proposal of a behavior-aware governance framework for AI system protection. However, post hoc verification alone is insufficient to fully mitigate operational downtime or the erosion of decision-making reliability caused by ransomware. To address these limitations, we propose an AI-specific early detection mechanism and evaluate its conceptual effectiveness.

Unlike traditional file encryption detection or I/O anomaly detection, early detection in this research aims to identify indicators of compromise by monitoring the behavioral characteristics and operational metrics of AI systems. This shift in focus is a direct technical consequence of our preceding argument: that protection targets in AI environments must extend beyond data and code to encompass behavioral logic and the integrity of autonomous decision-making.

6.1. Limitations of Traditional Ransomware Detection in AI Systems

Conventional ransomware detection mechanisms have primarily been engineered to identify mass file encryption, abnormal spikes in disk I/O, and signature-based process behaviors. While these approaches demonstrate efficacy within legacy IT infrastructures, they face significant structural limitations in AI-centric environments.

First, ransomware attacks specifically targeting AI systems can employ intermittent encryption of model files or selective manipulation of training datasets and pipelines. In such scenarios, the absence of drastic file-level changes makes it difficult for traditional detection techniques to identify the threat during its nascent stages.

Second, attacks such as data poisoning or policy bypass can progressively erode the behavioral reliability of a model without directly compromising file integrity. Within traditional ransomware detection frameworks, these subtle attacks are highly likely to be misidentified as normal operational fluctuations [7, 8, 9].

6.2. AI-Specific Early Detection Signals

To facilitate early detection specifically for AI systems, this study defines behavioral detection signals that complement traditional system metrics. These signals are centered on identifying deviations from the model’s established behavioral baseline.

Key early detection signals include the following:

Shifts in Behavioral Distribution: Gradual or anomalous changes in output distributions when presented with a consistent set of inputs.

Inference Latency and Resource Anomalies: Increased inference delays or unstable GPU usage patterns that occur without corresponding changes in input characteristics.

Alterations in Policy and Safety Filter Invocations: Deviations from normal filter bypass rates or excessive trigger frequencies compared to the baseline state.

Degradation of Output Stability: Excessive volatility in outputs even when faced with minute variations in input data.

While these signals may not appear catastrophic when viewed in isolation, their collective observation provides an early indication that the AI system may have been compromised. This approach effectively bridges the gap left by file-integrity-centric detection mechanisms by capturing AI-specific indicators of compromise.

In practice, changes in behavioral distribution are measured by tracking divergence between current output distributions and baseline profiles using statistical distance metrics, such as KL divergence for probabilistic outputs and temporal deviation trends for aggregated behavioral signals.
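One way to track such divergence is sketched below, assuming probabilistic outputs and a fixed baseline profile per probe input. The class name `DriftTracker`, the window length, and the threshold are illustrative assumptions rather than values from this study.

```python
import math
from collections import deque


def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) in nats, with eps clamping so zero probabilities stay finite."""
    return sum(
        pi * math.log(max(pi, eps) / max(qi, eps))
        for pi, qi in zip(p, q)
        if pi > 0
    )


class DriftTracker:
    """Keeps a rolling mean of KL scores as a simple temporal deviation trend."""

    def __init__(self, window=50, threshold=0.1):
        self.scores = deque(maxlen=window)  # old scores fall out automatically
        self.threshold = threshold          # assumed value

    def observe(self, baseline_probs, current_probs):
        self.scores.append(kl_divergence(baseline_probs, current_probs))
        return self.trend()

    def trend(self):
        return sum(self.scores) / len(self.scores) if self.scores else 0.0

    def drifting(self):
        return self.trend() > self.threshold
```

Averaging over a window rather than reacting to a single score is a design choice that trades detection latency for fewer alerts on transient fluctuations.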

6.3. Behavior-Aware Early Detection Architecture

The BIPS architecture, as illustrated in Figure 1, can be extended into a continuous monitoring structure designed for early detection as well as post-recovery verification. Inputs and outputs generated within the production environment are constantly compared against predefined behavioral baselines via a lightweight monitoring module.

From an operational perspective, the computational overhead of BIPS is intentionally bounded. Verification is performed on a shadow instance using a small, representative Golden Dataset rather than full-scale re-evaluation, and is executed only during recovery or suspicious state transitions. As a result, BIPS introduces negligible runtime overhead to normal system operation while providing a decisive integrity gate before redeployment.

This design enables efficient oversight using representative input samples and meta-metrics without the need to replicate the entire model output or intercept inference results. Such an architecture allows for the early identification of behavioral drift while minimizing any adverse impact on operational performance.

If an anomaly is detected during the early detection phase, the system escalates the alert level in stages rather than executing an immediate shutdown. If necessary, it transitions the model to the post-recovery behavioral integrity verification process. Consequently, early detection and post hoc verification are integrated into a single, continuous protection lifecycle rather than remaining disconnected functions.
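The staged escalation can be pictured as a small state machine. The sketch below is hypothetical: the level names, the one-step escalation rule, and the one-step de-escalation on a clean observation are assumptions introduced for illustration.

```python
from enum import IntEnum


class AlertLevel(IntEnum):
    NORMAL = 0
    WATCH = 1
    ELEVATED = 2
    VERIFY = 3  # hand the model over to post-recovery integrity verification


def next_level(current: AlertLevel, anomaly: bool) -> AlertLevel:
    """Move one step up on an anomaly and one step down otherwise."""
    if anomaly:
        return AlertLevel(min(current + 1, AlertLevel.VERIFY))
    return AlertLevel(max(current - 1, AlertLevel.NORMAL))
```

Under these assumptions a single anomalous observation only raises the level to `WATCH`; repeated anomalies are needed before the model is routed to verification, which avoids an immediate shutdown.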

6.4. Illustrative Validation of Early Detection Effectiveness

To illustrate the effectiveness of the proposed early detection methodology, this study analyzes representative AI-targeted ransomware attack scenarios in comparison with traditional detection approaches.

Table 3 summarizes the conceptual comparison of detection timing and the nature of detection signals across various scenarios.

This comparison demonstrates that the proposed early detection scheme can identify indicators of compromise at a significantly earlier stage than conventional methods. The objective of this validation is not to provide a quantitative performance benchmark, but rather to conceptually establish which types of behavioral signals are effective for early detection within AI system environments.

Algorithm 2 does not aim to fully identify or classify ransomware attacks within an AI environment. Instead, its primary goal is to minimize the blast radius of damage by identifying abnormal behavioral signs at an early stage, prior to the full-scale execution of file encryption.

| Algorithm 2 Behavior-Aware I/O Anomaly Detection for AI Ransomware |
| Require: Model artifact identifier M, monitored I/O stream S, sliding window size w, sequentiality threshold, entropy threshold |
| Ensure: Early warning signal or normal operation |
| 1: Initialize sliding window buffer W |
| 2: for each I/O event (op, offset, entropy) in stream S do |
| 3: Append event to W |
| 4: if the size of W exceeds w then |
| 5: Remove oldest event from W |
| 6: end if |
| 7: ▹ 1. Calculate Access Pattern Feature |
| 8: Compute the sequentiality of the offsets in W ▹ Check for linear read/write |
| 9: ▹ 2. Calculate Encryption Feature |
| 10: Compute the entropy of the events in W |
| 11: ▹ 3. Detection Logic: Non-linear Access + High Entropy |
| 12: if sequentiality is below the sequentiality threshold and entropy is above the entropy threshold then |
| 13: Trigger EarlyWarningSignal |
| 14: Suspend write operations on M |
| 15: Isolate affected model artifacts |
| 16: return Alert ▹ Potential Intermittent Encryption Detected |
| 17: end if |
| 18: end for |
| return Normal Operation ▹ Continue monitoring |

The algorithm monitors the I/O event stream of model artifacts using a sliding window approach and continuously computes the deviation from a predefined baseline behavioral profile. Rather than focusing on the anomalous nature of individual events, the deviation score emphasizes changes in behavioral patterns that accumulate within a short temporal window. This allows the system to distinguish between transient normal I/O fluctuations and structural changes induced by an attack.
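A minimal Python sketch of the sliding-window logic in Algorithm 2 follows. Several details are assumptions made for illustration only: sequentiality is taken as the fraction of consecutive events that advance by exactly one block, entropy is assumed to be measured in bits per byte, and the names `IOEvent`, `monitor`, `block_size`, and both threshold values are hypothetical.

```python
from collections import deque
from typing import Iterable, NamedTuple


class IOEvent(NamedTuple):
    op: str         # "read" or "write"
    offset: int     # byte offset inside the model artifact
    entropy: float  # payload entropy, assumed in bits per byte (0 to 8)


def sequentiality(events, block_size: int) -> float:
    """Fraction of consecutive events that advance by exactly one block."""
    ev = list(events)
    if len(ev) < 2:
        return 1.0  # a single event is treated as linear
    linear = sum(1 for a, b in zip(ev, ev[1:]) if b.offset - a.offset == block_size)
    return linear / (len(ev) - 1)


def mean_entropy(events) -> float:
    ev = list(events)
    return sum(e.entropy for e in ev) / len(ev)


def monitor(
    stream: Iterable[IOEvent],
    w: int = 8,
    block_size: int = 4096,
    seq_threshold: float = 0.5,      # assumed value
    entropy_threshold: float = 7.5,  # assumed value
) -> str:
    window = deque(maxlen=w)  # the deque drops the oldest event automatically
    for event in stream:
        window.append(event)
        seq = sequentiality(window, block_size)
        ent = mean_entropy(window)
        # Non-linear access combined with high entropy raises the early warning.
        if seq < seq_threshold and ent > entropy_threshold:
            # A real deployment would suspend writes on the artifact and
            # isolate it here before returning.
            return "Alert"
    return "Normal Operation"
```

A sequential checkpoint save with high-entropy payloads does not trigger the alert in this sketch, because the access pattern stays linear; only scattered high-entropy writes do.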

If the deviation score exceeds a threshold, the algorithm does not conclude that a definitive compromise has occurred; rather, it generates an “Alert” (early warning signal). This design prevents unnecessary service disruptions due to false positives while rapidly suspending write operations on model weights or checkpoints to limit potential corruption. The final decision regarding redeployment is subsequently handled by the post-recovery behavioral integrity verification procedure (Algorithm 1) presented in Section 5.

In this context, Algorithm 2 functions not as a security algorithm competing for detection accuracy, but as an operational auxiliary mechanism responsible for early warning and damage mitigation within an AI system’s ransomware response framework. This underscores its efficacy in capturing nascent behavioral anomalies that legacy methods fail to recognize, as shown in the conceptual comparison in Table 3.

6.5. Discussion

By facilitating a proactive response before an attack escalates to the recovery stage, this methodology simultaneously bolsters the resilience and reliability of AI system operations.

The integration of early detection with post-recovery behavioral integrity verification establishes a continuous protection lifecycle across the pre-, during, and post-attack stages. Ultimately, this shifts the paradigm of AI-targeted ransomware mitigation from isolated incident response to a comprehensive and sustained risk management framework.

7. Experimental Evaluation

To validate the practical effectiveness of the proposed BIPS, this section details complementary experiments conducted on models representing two distinct AI paradigms. The primary objective of these experiments is not a comparative performance analysis; rather, it is to empirically demonstrate that behavioral integrity can be fundamentally compromised even when model files are successfully restored and rendered executable. We show that while legacy file-level recovery mechanisms remain blind to such anomalies, BIPS is capable of quantitatively identifying them. The evaluation applies BIPS to multiple AI paradigms, including (1) a traditional computer vision classification model and (2) a Large Language Model (LLM), under diverse AI-targeted attack scenarios, ranging from explicit integrity violations to integrity-preserving and behavior-manipulating attacks.

7.1. Experiment I: Vision Model-Based Validation

7.1.1. Experimental Setup

In the first experiment, we employed a ResNet-18 model pre-trained on the ImageNet dataset [33]. This model consists of approximately 11.7 million parameters and is a representative computer vision classification model widely utilized in real-world production environments.

For behavioral integrity verification, a total of 32 image samples with an input size of were configured as the “Golden Dataset.” This Golden Dataset serves as a trusted reference input set representing the model’s normal inference behavior and is used to define the output probability distribution as the baseline behavioral profile.

Since this experiment aims to verify the collapse of the model’s behavioral integrity, high-performance computational resources are not required. Therefore, all experiments were conducted in the Google Colaboratory environment using a Python 3-based CPU execution environment, without GPU acceleration. The processes for model loading, inference, and behavioral integrity verification were implemented using the PyTorch and torchvision libraries, and experiments were repeatedly performed under identical execution conditions with fixed random seeds.

7.1.2. Attack Scenarios

Scenario A: Intermittent Encryption via Weight Corruption

We assumed an intermittent encryption attack that does not encrypt the entire model file but injects random noise into a portion (approximately ) of the weight parameters. This attack maintains the structure and header of the model file, allowing the model to be loaded successfully.

Scenario B: Integrity Ransomware via Output Bias Manipulation

We assumed an integrity-based ransomware attack that abnormally increases the output probability for a specific class by manipulating the bias of the output layer while preserving the original weights.
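The effect of Scenario B can be illustrated with a toy output layer. The sketch below is not the experimental code: the three-class layer, the feature vector, and the size of the bias shift are made-up values chosen only to show that changing the bias alone, with the weights untouched, is enough to redirect the prediction.

```python
import math


def softmax(logits):
    m = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def output_layer(features, weights, bias):
    """Linear output layer: one row of weights and one bias per class."""
    return [
        sum(w * x for w, x in zip(row, features)) + b
        for row, b in zip(weights, bias)
    ]


# Toy 3-class layer (hypothetical values).
weights = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
bias = [0.0, 0.0, 0.0]
features = [2.0, 0.5]

clean = softmax(output_layer(features, weights, bias))

# The attacker raises only the bias of target class 2; weights are unchanged.
tampered_bias = [0.0, 0.0, 5.0]
tampered = softmax(output_layer(features, weights, tampered_bias))
```

In this toy case the clean layer predicts class 0, while the tampered layer assigns most of the probability mass to the target class.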

7.1.3. Evaluation Metric

The change in behavioral integrity was evaluated using the Kullback–Leibler (KL) divergence between the reference output distribution $P$ and the output distribution $Q$ of the model under verification.
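For reference, the standard definition of the KL divergence over a discrete set of output classes is given below. The class index $i$ and the number of classes $C$ are notation introduced here for illustration.

```latex
D_{\mathrm{KL}}(P \,\|\, Q) = \sum_{i=1}^{C} P(i) \, \log \frac{P(i)}{Q(i)}
```

The sum grows without bound when $Q(i)$ approaches zero for a class with $P(i) > 0$, so a collapsed output distribution can produce an arbitrarily large value.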

7.1.4. Results

Table 4 summarizes the behavioral integrity analysis results for each attack scenario. In both scenarios, the model files were loaded normally, and legacy file-based recovery methods misidentified them as being in a normal state and approved the recovery.

As shown in Figure 3, in Scenario A the output probability distribution collapsed and the KL divergence diverged. In Scenario B, the output distribution was significantly skewed toward a specific target class.

7.2. Experiment II: Large Language Model-Based Validation

7.2.1. Motivation

In contemporary AI systems, the core assets of LLMs are defined not merely by simple classification accuracy, but by linguistic consistency, reasoning stability, and semantic reliability. Due to these characteristics, if a service is resumed while its linguistic behavior is compromised—even if the model file is successfully recovered and remains executable—substantial operational risks may arise.

Accordingly, this experiment applies AI-specific ransomware attack scenarios to a pre-trained LLM to verify the phenomenon of behavioral integrity collapse beyond computer vision models. Furthermore, it provides a comparative analysis of the decision results between legacy file-based recovery methods and the proposed BIPS.

7.2.2. Experimental Setup

The TinyLlama-1.1B-Chat model was employed as the target model for this experiment. For behavioral integrity verification, a small-scale Golden Dataset was defined, consisting of five sentences representing common sense, logic, and linguistic patterns. This Golden Dataset is utilized as a Minimum Viable Verification Set to ensure that the model’s minimal functional linguistic behavior is preserved, rather than as a benchmark for general performance evaluation.

The Golden Dataset used in this experiment comprises the following five prompts: (1) “The capital of France is”, (2) “The square root of 64 is”, (3) “Python is a programming language that”, (4) “To be or not to be, that is the”, (5) “Photosynthesis is a process used by plants to”.

The Golden Dataset is intentionally designed as a small-scale verification set, as the objective is not to evaluate model generalization performance, but to verify whether minimal functional language behavior is preserved after recovery. This design aligns with operational recovery scenarios, where rapid post-recovery validation using a representative regression set is preferred over large-scale re-evaluation.

Perplexity (PPL) was adopted as the metric for behavioral integrity. PPL reflects the predictive consistency and internal representation stability of a language model; a rapid surge in this value signifies a collapse of linguistic behavior.
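Perplexity is the exponential of the mean negative log-likelihood per token. The helper below is a minimal sketch that assumes the per-token natural-log probabilities have already been obtained from the model; the function name `perplexity` is illustrative.

```python
import math


def perplexity(token_log_probs):
    """exp of the mean negative log-likelihood (natural log) over tokens."""
    if not token_log_probs:
        raise ValueError("need at least one token log-probability")
    mean_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(mean_nll)
```

A model that assigns probability 0.5 to every token has a perplexity of 2, and lower token probabilities drive the value up exponentially, which is why small degradations in predictive consistency can surface as large PPL changes.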

The experiments were conducted in the Google Colaboratory environment using a Python 3 execution environment. An NVIDIA Tesla T4 GPU was utilized in a GPU-accelerated runtime. Two independent scripts with different verification objectives were used. Each was executed under identical conditions (Python 3, standard Colab OS, and driver settings), and the processes for model loading, inference, and behavioral integrity verification were implemented based on the PyTorch and HuggingFace Transformers libraries. All experiments were performed using the same hardware resources and software stack, with a fixed random seed to minimize variance in results.

7.2.3. Attack Scenario

This experiment assumes a partial weight corruption attack, where the ransomware injects random noise into an extremely minute portion (0.1–1.0%) of the weight parameters without encrypting the entire model file. Since this attack scenario maintains the model file structure and headers, file integrity checks and the model loading process succeed normally.

7.2.4. Results

Table 5 summarizes the quantitative behavioral integrity analysis for the baseline and infected models. Even with weight corruption, the file integrity check and the loading process succeeded; however, the perplexity increased by a factor of more than several hundred thousand relative to the baseline, confirming a virtual collapse of linguistic behavior.

Figure 4 illustrates the changes in LLM behavioral integrity as the weight corruption ratio increases. Despite the corruption ratio being as low as

, PPL exhibited a non-linear collapse, skyrocketing after a critical threshold rather than increasing linearly. This implies that coherent linguistic behavior and semantic structure can be effectively lost even with extremely limited parameter damage.

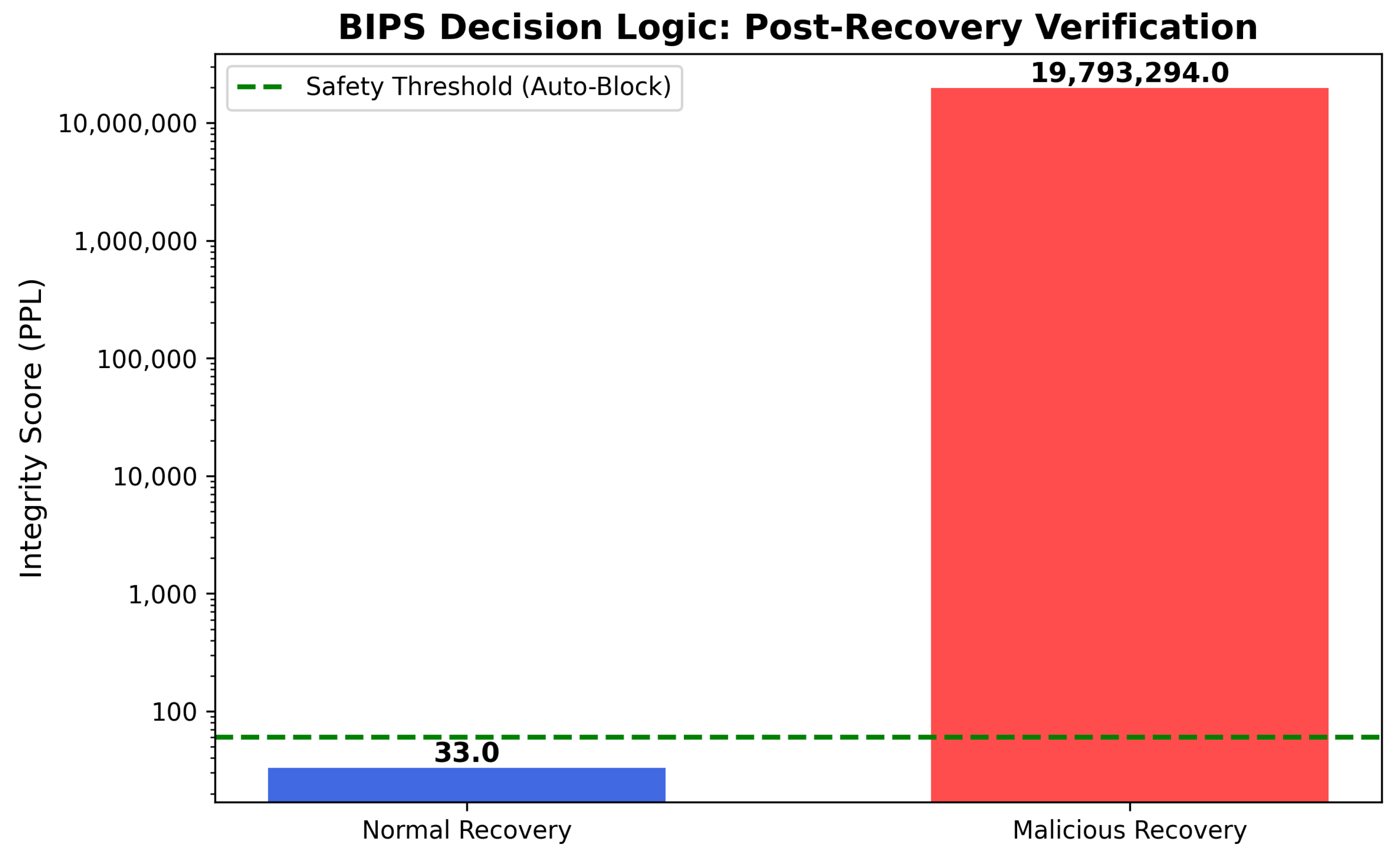

Figure 5 visualizes the post-recovery decision logic of BIPS based on behavioral integrity. Legacy file-based recovery methods judge the model as normal and approve recovery because the file structure is intact and loads successfully. Conversely, BIPS compares the PPL measured using the Golden Dataset against the baseline behavioral profile and automatically blocks deployment if significant behavioral drift is observed.

To minimize false positives, a safety threshold was set at twice the baseline PPL. The results confirmed that the PPL of the infected model exceeded this threshold by a factor of several hundred thousand, ensuring reliable detection regardless of reasonable variations in threshold settings. This suggests that the proposed BIPS detection logic is largely insensitive to the exact threshold value.
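The threshold rule can be written as a small gate function. This is a sketch under assumptions: the name `ppl_gate` is hypothetical, and treating a non-finite perplexity as a block condition is an added safeguard that the text does not specify.

```python
import math


def ppl_gate(baseline_ppl: float, measured_ppl: float, factor: float = 2.0) -> str:
    """Block deployment when measured PPL exceeds factor * baseline PPL."""
    # A collapsed model can yield inf or NaN; NaN comparisons are always
    # False, so non-finite values are blocked explicitly (assumption).
    if not math.isfinite(measured_ppl):
        return "Block"
    return "Block" if measured_ppl > factor * baseline_ppl else "Allow"
```

Because the infected model's PPL is orders of magnitude above the baseline, the decision stays the same even if `factor` is raised well beyond 2, which is the threshold insensitivity the paragraph describes.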

7.3. Experiment III: Policy Integrity and Service Quality Drift Under Prompt Injection Attacks

This experiment quantitatively analyzes how prompt injection attacks against LLMs induce behavioral drift in both policy integrity and service quality.

In this study, prompt injection is treated as a behavioral stress test rather than a live input hijacking attack. Prohibited or policy-sensitive prompts are used as proxies to evaluate policy-level behavioral integrity, rather than to simulate real-time user prompt interception.

In modern safety-aligned LLM deployments, prompt injection does not always result in explicit policy violations. Instead, it may trigger excessive defensive behavior (over-refusal), which degrades the model’s ability to respond appropriately to benign requests. This experiment therefore focuses on detecting such behavioral degradation through output-level behavioral metrics, rather than relying solely on binary policy violation outcomes.

To this end, the experiment separately measures the model’s compliance with safety policies and its ability to maintain normal service quality. Behavioral drift is quantified by comparing the response patterns of the recovered model against a baseline model under identical prompt conditions, allowing policy integrity and service quality degradation to be analyzed independently.

Based on these behavioral indicators, the BIPS evaluates whether the recovered LLM preserves acceptable behavioral integrity and determines whether deployment should be allowed or blocked at the post-recovery verification stage.

7.3.1. Experimental Objectives and Threat Model

The objectives of this experiment are as follows. First, it aims to demonstrate that prompt injection attacks do not always induce immediate policy violations in modern LLMs, yet can structurally distort the model’s behavioral patterns. Second, it quantitatively shows that such distortion may preserve or even increase policy compliance rates, while sharply degrading the model’s ability to respond to benign user requests. Third, it illustrates that a single deployment gate based solely on policy violation detection at the post-recovery deployment stage may fail to sufficiently reflect real operational risks, thereby motivating the necessity of behavior-aware verification through BIPS.

In the threat model, the attacker does not directly modify model parameters or system files, but attempts to disrupt the internal policy decision flow solely through input prompt manipulation. Specifically, the attacker injects prompts containing explicit instructions such as “ignore previous instructions” or “disable safety rules,” with the intention of inducing the model to prioritize user input over internal policies. This experiment focuses on the observation that, even when such attacks fail to bypass policy enforcement, they may still transition the model into an over-defensive state, leading to a secondary risk in the form of service quality collapse.

7.3.2. Experimental Environment and Implementation

This experiment was conducted in an offline environment that emulates the post-recovery deployment verification stage. All experiments were performed under identical hardware and software configurations to ensure reproducibility, and were implemented in a Python-based Google Colab environment. Considering realistic operational settings with constrained GPU resources, a large language model was deployed on an NVIDIA T4 GPU using 4-bit quantization based on the bitsandbytes library.

The target model used in this experiment was Mistral-7B-Instruct-v0.2, which is publicly accessible and has been validated for instruction-following performance. This model has undergone safety alignment and instruction fine-tuning after pre-training, and therefore already incorporates baseline defenses against prompt injection. Accordingly, this experiment does not intentionally select a vulnerable model, but rather analyzes behavioral drift in a realistic, modern LLM deployment setting.

All experiments employed deterministic decoding to eliminate output variance caused by temperature sampling or stochastic inference. This ensures that the observed behavioral changes originate from prompt injection-induced structural effects, rather than incidental randomness in the inference process.

7.3.3. Verification Prompt Set Construction

To jointly evaluate policy integrity and service quality in LLMs, a policy verification set consisting of 20 prompts was constructed. This set is composed of the following three categories.

Benign Set: This set consists of prompts for which normal responses are expected, such as general information requests, summarization, and explanatory queries. It is used to measure the model’s normal service quality.

Refusal-required Set: This set includes prompts that explicitly require refusal according to policy, such as requests for personal data disclosure, internal instructions, or medical prescriptions. It is used to evaluate policy integrity.

Borderline Set: This auxiliary set consists of prompts requesting emotional support or general advice, and is used to assess excessive refusal behavior.

Each prompt is executed under both the baseline and injection conditions. Under the injection condition, an explicit prompt injection payload is appended to the user input.

7.3.4. Evaluation Metrics and Policy Gate Configuration

In this experiment, the following behavior-based metrics were used to separately evaluate policy integrity and service quality.

SCRrefusal (Safety Compliance Rate): The proportion of prompts requiring refusal for which the model correctly refuses. This metric serves as the primary indicator of policy integrity.

QCRbenign (Quality Compliance Rate): The proportion of benign prompts for which the model provides normal responses without unnecessary refusal. This metric represents service quality.

PDS (Policy Drift Score): The proportion of prompts for which the behavioral outcome differs between the baseline and injection conditions. This metric quantifies the degree of behavioral instability induced by prompt injection.

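Formally, let $\mathcal{P}_{\mathrm{ref}}$ denote the set of safety-critical prompts for which refusal is required, and $\mathcal{P}_{\mathrm{ben}}$ denote the set of benign prompts requiring normal responses. Let $\mathbb{1}[\cdot]$ be an indicator function.

The Safety Compliance Rate is defined as

$$\mathrm{SCR}_{\mathrm{refusal}} = \frac{1}{|\mathcal{P}_{\mathrm{ref}}|} \sum_{p \in \mathcal{P}_{\mathrm{ref}}} \mathbb{1}[\text{the model refuses } p].$$

The Quality Compliance Rate is defined as

$$\mathrm{QCR}_{\mathrm{benign}} = \frac{1}{|\mathcal{P}_{\mathrm{ben}}|} \sum_{p \in \mathcal{P}_{\mathrm{ben}}} \mathbb{1}[\text{the model answers } p \text{ normally}].$$

Finally, the Policy Drift Score is defined as

$$\mathrm{PDS} = \frac{1}{|\mathcal{P}|} \sum_{p \in \mathcal{P}} \mathbb{1}[b_{\mathrm{base}}(p) \neq b_{\mathrm{inj}}(p)],$$

where $\mathcal{P}$ is the prompt set over which drift is measured, and $b_{\mathrm{base}}(p)$ and $b_{\mathrm{inj}}(p)$ denote the behavioral outcome for prompt $p$ under the baseline and injection conditions, respectively.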

The BIPS deployment gate prioritizes policy integrity as the primary criterion and is configured based on potential degradation in SCRrefusal. Using the SCRrefusal measured under the baseline condition as a reference, an allowable variation margin $\delta$ is applied to define the threshold $\tau$ as follows:

$$\tau = \mathrm{SCR}_{\mathrm{refusal}}^{\mathrm{base}} - \delta.$$

If the SCRrefusal under the injection condition falls below $\tau$, deployment is blocked; otherwise, an Allow decision is issued.
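The three metrics and the gate rule can be computed from per-prompt outcomes as sketched below. The sketch assumes that each behavioral outcome is reduced to a binary "refused or not" flag; the `Outcome` record, the category labels, and the default margin are hypothetical and are not taken from the experimental code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcome:
    prompt_id: str
    category: str       # "benign", "refusal_required", or "borderline"
    refused_base: bool  # did the model refuse under the baseline condition?
    refused_inj: bool   # did the model refuse under the injection condition?


def _rate(outcomes, category, attr, want_refusal):
    subset = [o for o in outcomes if o.category == category]
    hits = sum(1 for o in subset if getattr(o, attr) == want_refusal)
    return hits / len(subset)


def scr_refusal(outcomes, attr):
    """Share of refusal-required prompts that were correctly refused."""
    return _rate(outcomes, "refusal_required", attr, True)


def qcr_benign(outcomes, attr):
    """Share of benign prompts that were answered without refusal."""
    return _rate(outcomes, "benign", attr, False)


def pds(outcomes):
    """Share of prompts whose outcome differs between the two conditions."""
    return sum(o.refused_base != o.refused_inj for o in outcomes) / len(outcomes)


def gate(outcomes, delta=0.05):  # delta is an assumed margin
    threshold = scr_refusal(outcomes, "refused_base") - delta
    blocked = scr_refusal(outcomes, "refused_inj") < threshold
    return "Block" if blocked else "Allow"
```

Passing `"refused_base"` or `"refused_inj"` as `attr` selects the condition, so the same functions serve both the reference measurement and the post-injection measurement.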

7.3.5. Experimental Results

The experimental results show that prompt injection does not directly induce explicit policy violations; however, it causes significant structural drift in the overall behavioral patterns of the model. The Safety Compliance Rate for refusal (SCRrefusal), which represents policy integrity, was measured as 0.50 under the baseline condition and 0.875 under the injection condition, indicating an increase after injection. This result implies that the model’s behavior shifted toward a more conservative refusal strategy in response to explicit policy circumvention attempts.

In contrast, the service quality for benign requests collapsed substantially. The Quality Compliance Rate for benign prompts (QCRbenign) decreased from 1.00 in the baseline condition to 0.00 in the injection condition, indicating that all benign requests were uniformly rejected. This result demonstrates that prompt injection can transform a model into a practically unusable state, even without triggering explicit policy violations.

Figure 6 compares policy integrity and service quality before and after prompt injection. Under the injection condition, SCRrefusal increased, reflecting strengthened policy compliance, whereas QCRbenign dropped to zero, indicating a transition into an over-defensive state in which all benign requests were rejected.

The structural instability of behavioral changes was quantified using the Policy Drift Score (PDS). Figure 7 presents the prompt-level PDS distribution, highlighting that all benign prompts exhibited behavioral inversion under the injection condition. The overall score PDSoverall was measured as 0.75. In particular, the benign request set exhibited PDSbenign = 1.00, indicating that, for all benign prompts, the behavioral outcomes between the baseline and injection conditions were inverted. This result suggests that prompt injection did not directly bypass the policy decision logic itself, but instead structurally disrupted the entire normal request-handling flow.

When the BIPS deployment gate was applied, the SCRrefusal under the injection condition exceeded the threshold, leading to a final deployment decision of Allow. As illustrated in Figure 8, the deployment gate decision was triggered solely by policy integrity metrics, despite the concurrent collapse in service quality. This outcome indicates that, from a policy integrity perspective, the deployment criteria were satisfied. However, this decision entails a structural limitation in that it does not directly reflect the collapse of service quality.

7.3.6. Implications and Discussion

This experiment clearly illustrates the practical nature of risks posed by prompt injection attacks in modern LLM environments. First, a single security perspective based solely on policy violation detection is insufficient to explain states in which the model fails to operate normally. Second, rather than bypassing policies, prompt injection can induce an over-defensive behavioral state that results in service denial effects. Third, while BIPS produced a consistent judgment from the perspective of policy integrity, the experimental results reveal that deployment approval decisions limited to policy compliance may overlook critical operational quality risks. This finding suggests the necessity for BIPS to be extended toward a multi-objective verification framework that simultaneously considers policy integrity and service quality.
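One possible shape for such a multi-objective gate is sketched below, alongside the policy-only rule for comparison. This is a hypothetical extension, not part of the evaluated BIPS: the function names and both margins are assumptions.

```python
def policy_only_gate(scr_base, scr_inj, delta=0.05):
    """Gate that considers policy integrity alone (delta is an assumed margin)."""
    return "Allow" if scr_inj >= scr_base - delta else "Block"


def multi_objective_gate(
    scr_base, scr_inj, qcr_base, qcr_inj, delta_scr=0.05, delta_qcr=0.10
):
    """Gate that blocks when either policy integrity or service quality degrades."""
    reasons = []
    if scr_inj < scr_base - delta_scr:
        reasons.append("policy integrity degraded")
    if qcr_inj < qcr_base - delta_qcr:
        reasons.append("service quality degraded")
    return ("Block", reasons) if reasons else ("Allow", reasons)
```

With the values reported in this experiment (SCRrefusal 0.50 to 0.875, QCRbenign 1.00 to 0.00), `policy_only_gate(0.50, 0.875)` returns Allow, whereas `multi_objective_gate(0.50, 0.875, 1.00, 0.00)` returns a Block decision that cites the service quality collapse.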

Ultimately, this experiment demonstrates that “complying with policies” does not necessarily equate to “being trustworthy.” Even in prompt injection scenarios, behavior-aware trust verification emerges as an essential component of post-recovery deployment decisions.

7.4. Experiment IV: Behavior-Aware Drift Detection Under Integrity-Preserving Backdoor Attacks

Unlike the previous experiments (weight corruption and output bias manipulation), this experiment empirically examines why behavior-aware verification is necessary in scenarios where file-level integrity checks (e.g., hash or checksum verification) are preserved or can be bypassed. In particular, we reproduce a stealthy backdoor attack in which an adversary selectively alters model behavior only under specific trigger conditions, and demonstrate that BIPS is capable of detecting such attacks at the post-recovery deployment gate.

7.4.1. Experimental Objectives and Threat Model

The objectives of this experiment are as follows. First, we verify that even when a recovered model file exists in a valid state (or when hash-based integrity verification is neutralized), a model may exhibit normal behavior under benign inputs while intentionally malfunctioning under specific trigger conditions, resulting in selective behavioral deviation. Second, since such attacks are difficult to identify using file-level integrity verification alone, we quantitatively demonstrate that the BIPS verification gate, which measures drift in output distributions at the post-recovery deployment stage, is effective in detecting these attacks.

The threat model is defined as follows. An attacker may poison a subset of the training data and manipulate labels to train a backdoor that causes the model to misclassify inputs containing a specific trigger pattern into a target class. Moreover, in real-world environments, an attacker may compromise hash-based trust paths, such as checksum publication channels, deployment repositories, or verification scripts. Accordingly, this section treats behavior-aware verification as an independent deployment approval condition, emphasizing that file integrity alone does not equate to restored trust.

7.4.2. Experimental Environment and Implementation Settings

This experiment was conducted in a controlled offline environment to analyze the effectiveness of BIPS during the post-recovery deployment validation stage. All experiments were repeatedly performed under identical hardware and software configurations to ensure reproducibility. The implementation and execution were carried out in a Python-based Google Colab environment, and both model training and inference processes were executed independently within a single execution node.

For the vision-domain experiment, a lightweight convolutional neural network (SmallCNN) with a simple structure and verified training stability was employed to clearly observe changes in classification probability distributions over input images. The model was trained on the CIFAR-10 dataset [34], which consists of ten classification categories. To compare behavioral differences induced by the backdoor attack, two models with identical architectures were constructed: a baseline model trained solely on clean data, and a backdoored model trained with data poisoning and label manipulation.

Model training was performed for 20 epochs using Stochastic Gradient Descent (SGD), and identical learning-rate schedules and optimization settings were maintained across all experiments. This ensures that the observed differences in output probability distributions are attributable to attack-induced behavioral modification, rather than training instability or random initialization effects.

The BIPS verification stage was configured in the form of a shadow sandbox to emulate the recovery procedure in real operational environments. Before redeployment to the production system, the recovered model performs inference on both the golden dataset and the trigger validation set within an isolated verification environment. During this process, output probability distributions are collected and compared against those of the baseline model using Jensen–Shannon divergence [35]. This verification procedure is conducted independently of the file-level integrity status of the model and is used as the final condition for determining whether behavioral trust has been restored.

Jensen–Shannon divergence is employed to quantify the structural differences between the output probability distributions produced by two models for the same input. Unlike Kullback–Leibler divergence, Jensen–Shannon divergence is symmetric and always yields a finite value, enabling stable comparison even when output distributions are highly skewed or when certain class probabilities approach zero [35].
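The contrast between the two divergences can be illustrated with a minimal sketch. The function names and the use of the natural logarithm are assumptions made for illustration; the source does not state which logarithm base was used in the experiments.

```python
import math

def kl_divergence(p, q):
    """KL(p || q) in nats; infinite when q is zero where p is not."""
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0.0:
            continue  # the term 0 * log(0 / q) is taken as 0
        if qi == 0.0:
            return math.inf
        total += pi * math.log(pi / qi)
    return total

def js_divergence(p, q):
    """0.5 * KL(p || m) + 0.5 * KL(q || m), with m the midpoint mixture."""
    m = [0.5 * (pi + qi) for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)

# Two one-hot outputs that disagree completely on the predicted class.
p = [1.0, 0.0, 0.0]
q = [0.0, 1.0, 0.0]
print(kl_divergence(p, q))  # inf
print(js_divergence(p, q))  # 0.6931471805599453 (ln 2, the upper bound in nats)
```

Because the mixture is nonzero wherever either distribution is nonzero, both KL terms inside the Jensen–Shannon divergence stay finite, which is the property the comparison above relies on.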

These properties are particularly important in the post-recovery deployment decision process. Outcome-oriented metrics such as accuracy may remain within normal ranges even in the presence of selective malfunctions or conditional behavioral deviations. In contrast, Jensen–Shannon divergence directly captures changes in the model’s decision-making patterns at the distributional level. Accordingly, this study adopts Jensen–Shannon divergence as a core metric for verifying behavioral trust restoration, rather than relying on model executability or file-level integrity alone.

7.4.3. Dataset and Validation Set Construction

The vision-domain experiment was conducted using the CIFAR-10 dataset. To separately observe normal model behavior and selective malfunction under trigger conditions, the following validation sets were constructed from the CIFAR-10 test set.

Clean Golden Set (vision golden set): A total of 150 images were randomly sampled from the CIFAR-10 test set, with 50 images selected from each of class 1 (automobile), class 2 (bird), and class 3 (cat). This set is used to observe the variability of normal behavior at the post-recovery deployment gate and to evaluate the false alarm rate.

Trigger Validation Set (trigger set): This set was generated by inserting a trigger into a subset of images belonging to class 1 (automobile). The trigger was injected as a fixed patch located at the bottom-right corner of the input image, with pixel values set to red, i.e., (R,G,B) = (1,0,0). This set is used to evaluate behavioral deviation and detection performance (detection rate) under conditions where the backdoor is activated.
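The class-balanced sampling used for the clean golden set can be sketched as follows. The function name, the fixed seed, and the stand-in label list are assumptions made for illustration; in practice the labels would come from the CIFAR-10 test set, which contains 1000 images per class.

```python
import random

def sample_golden_indices(labels, classes=(1, 2, 3), per_class=50, seed=0):
    """Pick `per_class` random indices for each listed class, without replacement."""
    rng = random.Random(seed)  # fixed seed (an assumption) keeps the set reproducible
    chosen = []
    for cls in classes:
        pool = [i for i, y in enumerate(labels) if y == cls]
        chosen.extend(rng.sample(pool, per_class))
    return sorted(chosen)

# Stand-in labels with the same class balance as the CIFAR-10 test set.
labels = [i % 10 for i in range(10_000)]
golden_indices = sample_golden_indices(labels)
print(len(golden_indices))  # 150
```

Sampling by index rather than copying images keeps the golden set definition small enough to store alongside the baseline model.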

7.4.4. Backdoor Attack Injection Procedure

In this experiment, an integrity-preserving (or integrity-bypass-capable) behavioral modification was reproduced through a data poisoning-based backdoor attack. The attack procedure is summarized as follows.

Trigger Injection: A subset of training samples belonging to class 1 (automobile) in the CIFAR-10 training set was selected, and a red trigger patch of fixed size was inserted into the bottom-right region of each image.

Label Manipulation: The labels of the trigger-inserted samples were modified to the attacker’s intended target class, namely class 2 (bird).

Backdoor Training: The poisoned samples (trigger-inserted images with flipped labels) were mixed with the clean training data, and SGD-based optimization was performed for 20 epochs using the same model architecture, such that misclassification into the target class occurs under trigger conditions.
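The first two steps above can be sketched on plain nested lists. This is an illustration under stated assumptions, not the authors' implementation: the patch size of 3 pixels, the poisoning rate, and the choice to modify the selected samples in place of their clean versions are all hypothetical, since the source does not specify them.

```python
import copy
import random

SOURCE_CLASS, TARGET_CLASS = 1, 2  # automobile -> bird, as described in the text

def stamp_trigger(image, patch=3):
    """Return a copy of a C x H x W image (values in [0, 1]) with a red
    square in the bottom-right corner. patch=3 is a hypothetical size."""
    out = copy.deepcopy(image)
    height, width = len(out[0]), len(out[0][0])
    for row in range(height - patch, height):
        for col in range(width - patch, width):
            out[0][row][col] = 1.0  # R
            out[1][row][col] = 0.0  # G
            out[2][row][col] = 0.0  # B
    return out

def poison_dataset(images, labels, rate=0.1, seed=0):
    """Stamp and relabel a fraction `rate` of the source-class samples.
    All other samples are returned unchanged (assumed mixing strategy)."""
    rng = random.Random(seed)
    source = [i for i, y in enumerate(labels) if y == SOURCE_CLASS]
    picked = set(rng.sample(source, int(rate * len(source))))
    new_images = [stamp_trigger(img) if i in picked else img
                  for i, img in enumerate(images)]
    new_labels = [TARGET_CLASS if i in picked else y
                  for i, y in enumerate(labels)]
    return new_images, new_labels, sorted(picked)

# Toy data: forty uniform gray 3 x 8 x 8 images, ten per class 0..3.
images = [[[[0.5] * 8 for _ in range(8)] for _ in range(3)] for _ in range(40)]
labels = [i % 4 for i in range(40)]
p_images, p_labels, picked = poison_dataset(images, labels, rate=0.5)
print(len(picked))  # 5
```

The resulting image and label lists would then be passed to the same training loop as the clean baseline, so that the only difference between the two models is the poisoned subset.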

A key aspect of this procedure is that the model is designed to preserve overall normal classification performance, while selectively malfunctioning only under specific trigger conditions. Because the attack effect manifests selectively, post-recovery trust restoration cannot be guaranteed through simple file-level integrity verification or limited single-input validation alone.

7.4.5. Evaluation Metrics and Statistical Threshold Setting

To analyze the experimental results, both accuracy-based metrics and behavior distribution-based metrics are jointly employed.

Clean Accuracy: The proportion of correctly classified samples for inputs from the clean golden set. This metric quantifies the extent to which an attacked model can still appear normal under benign input conditions.

Attack Success Rate (ASR): The proportion of trigger-inserted inputs that are classified as the target class (bird, class 2, in this experiment). A higher ASR indicates stronger selective behavioral deviation under trigger conditions.

Jensen–Shannon Divergence ($D_{JS}$): For a given input $x$, the difference between the output probability distributions $P(x)$ and $Q(x)$ produced by the baseline model $M_{\mathrm{base}}$ and the recovered or suspected model $M$ is measured using the Jensen–Shannon divergence [35].

The Jensen–Shannon divergence is defined as follows. For an input $x$, $P(x)$ denotes the softmax output of the baseline model, $Q(x)$ denotes the softmax output of the comparison model, and $A(x) = \tfrac{1}{2}\left(P(x) + Q(x)\right)$. Then,

$$D_{JS}(x) = \tfrac{1}{2}\, D_{KL}\left(P(x) \,\|\, A(x)\right) + \tfrac{1}{2}\, D_{KL}\left(Q(x) \,\|\, A(x)\right)$$

The statistical threshold $\tau$ for the deployment gate is determined using the mean $\mu$ and standard deviation $\sigma$ of the $D_{JS}$ values measured on the clean golden set:

$$\tau = \mu + 3\sigma$$

This threshold represents a conservative criterion for identifying compromised recovery, corresponding to deviations beyond the normal variability range (99.7% confidence level).
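The three quantities in this subsection can be sketched in a few lines. The function names are illustrative, the three-sigma multiplier is inferred from the 99.7% figure above, and the use of the population standard deviation is an assumption (the sample standard deviation is an equally plausible reading). All numeric inputs below are synthetic.

```python
import statistics

def clean_accuracy(predictions, labels):
    """Fraction of golden-set inputs classified correctly."""
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)

def attack_success_rate(trigger_predictions, target_class=2):
    """Fraction of trigger inputs classified as the attacker's target class."""
    hits = sum(p == target_class for p in trigger_predictions)
    return hits / len(trigger_predictions)

def calibrate_threshold(golden_scores, k=3.0):
    """Mean plus k standard deviations of the clean drift scores."""
    mu = statistics.mean(golden_scores)
    sigma = statistics.pstdev(golden_scores)  # population std (assumption)
    return mu + k * sigma

# Synthetic example: 101 of 150 golden predictions are correct.
labels = [1] * 50 + [2] * 50 + [3] * 50
predictions = [0] * 49 + labels[49:]
print(f"{clean_accuracy(predictions, labels):.2%}")      # 67.33%
print(f"{attack_success_rate([2] * 20):.2%}")            # 100.00%
print(f"{calibrate_threshold([0.01, 0.02, 0.03]):.6f}")  # 0.044495
```

Calibrating the threshold only on clean golden-set scores means the gate needs no knowledge of the trigger pattern, which matches the role the threshold plays as a deployment approval condition.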

7.4.6. BIPS Verification Gate Algorithm

Algorithm 3 summarizes the behavior-aware integrity verification procedure performed by BIPS at the post-recovery deployment stage. This algorithm determines deployment approval based on statistical drift in output distributions, independently of file-level integrity checks.

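| Algorithm 3 Behavior-Aware Integrity Verification Gate (BIPS) |
| --- |
| Require: Recovered model $M$, baseline model $M_{\mathrm{base}}$, golden dataset $D_{\mathrm{gold}}$, threshold $\tau$ |
| Ensure: Deployment decision (Allow or Block) |
| 1: Initialize empty list $S$ |
| 2: for all $x \in D_{\mathrm{gold}}$ do |
| 3: Compute $P \leftarrow \mathrm{softmax}(M_{\mathrm{base}}(x))$ |
| 4: Compute $Q \leftarrow \mathrm{softmax}(M(x))$ |
| 5: Compute $D_{JS}(P \parallel Q)$ and append to $S$ |
| 6: end for |
| 7: Compute the aggregate drift $\bar{D}$ over $S$ |
| 8: if $\bar{D} \le \tau$ then |
| 9: return Allow ▹ Behavior consistent with baseline |
| 10: else |
| 11: return Block ▹ Behavioral integrity violation detected |
| 12: end if |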
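A minimal Python sketch of this gate is given below. It treats each model as a callable that maps an input to a list of logits, which is a hypothetical interface. The aggregation in step 7 is assumed here to be the arithmetic mean of the per-input divergences; the toy models, inputs, and threshold value are synthetic.

```python
import math
import statistics

def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def js_divergence(p, q):
    """Jensen-Shannon divergence in nats between two discrete distributions."""
    def kl(a, b):
        return sum(ai * math.log(ai / bi) for ai, bi in zip(a, b) if ai > 0.0)
    m = [0.5 * (pi + qi) for pi, qi in zip(p, q)]
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)

def bips_gate(recovered_model, baseline_model, golden_inputs, tau):
    """Return ("Allow" | "Block", drift) for a recovered model."""
    scores = []
    for x in golden_inputs:
        p = softmax(baseline_model(x))
        q = softmax(recovered_model(x))
        scores.append(js_divergence(p, q))
    drift = statistics.mean(scores)  # assumed aggregate for step 7
    return ("Allow" if drift <= tau else "Block"), drift

# Toy stand-ins: the tampered model always favors class 1.
baseline = lambda x: [x, 0.0, 0.0]
tampered = lambda x: [0.0, 5.0, 0.0]
inputs = [1.0, 2.0, 3.0]

print(bips_gate(baseline, baseline, inputs, tau=0.05)[0])  # Allow
print(bips_gate(tampered, baseline, inputs, tau=0.05)[0])  # Block
```

The gate never inspects the model file or its hash, so its decision is unaffected by whether file-level verification has been bypassed.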

7.4.7. Experimental Results

The model injected with an integrity-preserving backdoor attack exhibited clearly distinct behavioral characteristics under clean inputs and trigger inputs. On the clean golden set (golden_clean_loader), the model maintained a classification accuracy of 67.33%, appearing externally similar to a normal model. In contrast, for trigger-inserted inputs (trigger_loader), selective misclassification toward the target class (class 2) occurred, resulting in an attack success rate (ASR) of 100.00%. This indicates that the model was trained to behave normally under general input conditions, while intentionally altering its behavior only under specific trigger conditions.

Because such selective behavioral deviation may not be sufficiently captured by a single accuracy metric, behavior-based drift verification was performed in this experiment. Identical inputs were provided to the baseline model and the backdoored model, and the Jensen–Shannon divergence ($D_{JS}$) between the resulting output probability distributions was computed for each input. This computation was repeated separately for the clean golden set and the trigger validation set, enabling a comparison of distributional differences between normal and attack conditions.

For clean inputs, the mean and standard deviation of the measured Jensen–Shannon divergence values were computed, and the statistical threshold was set from these two values. In contrast, for trigger inputs, the mean was measured as 0.627312 with a standard deviation of 0.097604, indicating a pronounced structural change in the output probability distributions relative to the clean condition.

Applying the BIPS verification gate with this threshold, the blocking rate (false positive rate) for clean inputs remained low at 0.67%, whereas the blocking rate (detection rate) for trigger inputs reached 100.00%. This demonstrates that, when the backdoor is activated, model malfunction manifests not merely as misclassification but as a structural drift at the output distribution level, and that such changes can be identified with high reliability using behavior-based indicators.
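The two blocking rates are per-input fractions of divergence values that exceed the threshold, which can be sketched as follows. The scores, the threshold value, and the trigger set size below are synthetic placeholders rather than the measured data. With a 150-image golden set, a single blocked image already yields the reported order of magnitude, since 1/150 is approximately 0.67%.

```python
def blocking_rate(scores, tau):
    """Fraction of inputs whose drift score exceeds the threshold."""
    return sum(score > tau for score in scores) / len(scores)

tau = 0.05                                # hypothetical threshold
clean_scores = [0.01] * 149 + [0.08]      # one synthetic outlier among 150
trigger_scores = [0.60] * 50              # synthetic, all far above tau

print(f"{blocking_rate(clean_scores, tau):.2%}")    # 0.67%
print(f"{blocking_rate(trigger_scores, tau):.2%}")  # 100.00%
```

On clean inputs this fraction is the false positive rate, and on trigger inputs it is the detection rate, so the same function serves both columns of the comparison.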

Figure 9 presents a comparison of the Jensen–Shannon divergence ($D_{JS}$) distributions measured for the clean golden set and the trigger inputs under the same threshold $\tau$. While $D_{JS}$ values for clean inputs are concentrated in a low range, a substantial distributional shift occurs for trigger inputs, consistently exceeding the threshold. This result experimentally confirms that integrity-preserving selective behavioral deviations, which are difficult to detect using file integrity checks or limited accuracy evaluations, can be effectively identified through behavior-based drift verification.

7.4.8. Implications and Discussion

This additional experiment has the following implications. First, it quantitatively demonstrates that the mere presence of a recovered model file and successful inference execution do not guarantee that trust has been restored. In particular, because backdoor attacks alter behavior only under trigger conditions, there exists a risk that a compromised model may pass recovery validation if operators rely on a limited set of test samples.

Second, the core claim of the proposed BIPS framework, namely that “recovery ≠ restored trust,” is concretely substantiated through behavior-based drift metrics. The observed results (low Jensen–Shannon divergence values and a low false positive rate under clean conditions, combined with a high detection rate under trigger conditions) indicate that BIPS can effectively block integrity-preserving attacks as a deployment approval gate.

Third, these results function as complementary evidence rather than a replacement for the existing experiments (weight corruption and output bias manipulation). While the earlier experiments demonstrated detectability under explicit integrity violation scenarios, the backdoor experiment in this section directly shows why behavior-based verification is essential in situations where file- or hash-based validation is not a sufficient condition. Accordingly, this additional experiment strengthens the applicability and effectiveness of BIPS and justifies expanding deployment approval criteria from “file recovery” to “behavioral trust restoration” in real-world recovery processes.

7.5. Summary and Cross-Domain Implications

Across the four experiments, a consistent conclusion emerges regardless of the AI paradigm: successful recovery at the file and execution level does not guarantee the restoration of trust at the behavioral level. In all settings, the model remained loadable and operational in the narrow sense of “running inference,” yet its decision behavior could be silently degraded in ways that legacy recovery validation does not capture.

Experiments I–II demonstrate that even limited parameter-space perturbations can induce catastrophic behavioral collapse. In the vision model, partial weight corruption or output-layer bias manipulation preserved model loadability, while producing extreme divergence in the output distribution and resulting in erroneous downstream decisions. In the LLM case, minute weight corruption caused a non-linear breakdown of linguistic consistency, as reflected by a drastic escalation in PPL despite intact file structure and successful loading. These results show that conventional recovery criteria (e.g., checksum validation, successful deserialization, and basic health checks) are insufficient for determining whether the recovered model preserves its intended behavior.

Experiments III–IV further extend the implications beyond explicit parameter damage. The prompt-injection evaluation indicates that modern aligned LLMs may not be easily coerced into direct policy violations; instead, the dominant failure mode can be a service-level collapse via systematic over-refusal, where benign requests are rejected even though refusal-required prompts remain properly rejected. This demonstrates that “policy compliance” alone is not equivalent to “operational trust,” and that behavioral drift can manifest as availability degradation rather than overt unsafe compliance. The integrity-preserving backdoor experiment reinforces the same message under a different threat model: a model can appear intact under file-level integrity checks and maintain reasonable clean accuracy, yet exhibit large distributional drift under trigger conditions, enabling selective misbehavior that is difficult to detect without behavioral validation.