A Classifier with Unknown Pattern Recognition for Domain Name System Tunneling Detection in Dynamic Networks

Abstract

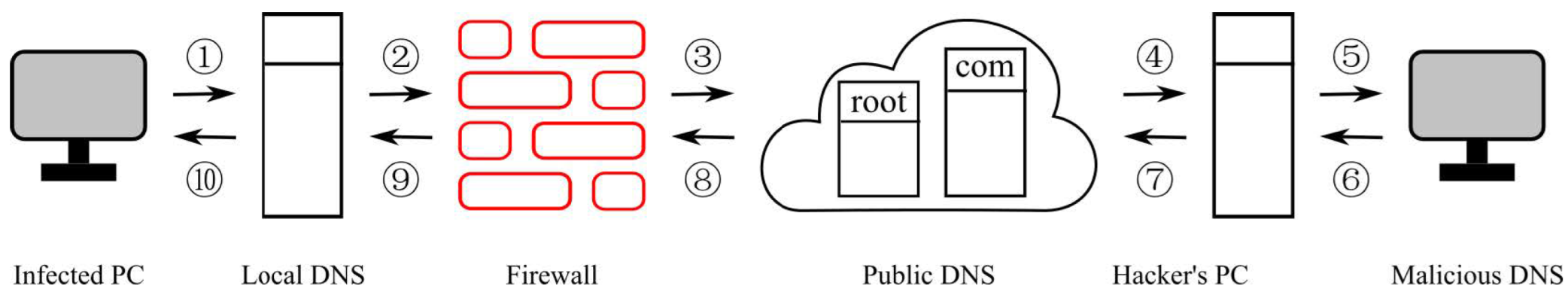

1. Introduction

- Diversity: For malicious samples in the training dataset, the types of data transmitted and the encryption methods used are highly diverse, so no unified pattern exists. Legitimate DNS traffic in the training dataset also varies significantly; for example, traffic generated by accessing video websites differs substantially from that generated by instant messaging applications.

- Novel patterns: During the testing phase, DNS queries with patterns not observed during training (i.e., unknown patterns) will inevitably emerge. Conventional classifiers cannot accurately predict the categories of these samples, so such unknown queries must be handled separately.

- Class imbalance: In datasets collected from live networks, the vast majority of samples are legitimate DNS requests generated by normal user activities, whereas malicious DNS requests generated by DNS tunneling account for only a small proportion.

- Feature engineering complexity: A large amount of information can be extracted from each DNS query, including the source IP address, the destination IP address, the domain name, and the timestamp. However, this raw data cannot be directly input into machine learning models, making feature engineering a critical and challenging step.

1.1. Problem Formulation

- Known Pattern (KP) Samples: DNS query samples whose patterns have been observed in the training dataset, including both legitimate and known malicious patterns.

- Unknown Pattern (UP) Samples: DNS query samples whose patterns have not been observed in the training dataset, primarily consisting of newly emerging malicious DNS tunneling patterns.

1.2. Motivation and Contributions

- How can the DNS tunneling detection task involving unknown pattern recognition be formalized so that it accurately characterizes the emergence of new DNS query patterns in real-world dynamic networks?

- How can an efficient classifier be designed that not only handles multi-pattern classes in DNS tunneling detection but also effectively identifies unknown pattern samples?

- Can the proposed model outperform state-of-the-art baselines in both known pattern classification accuracy and unknown pattern detection rate, especially in handling class imbalance, when tested on real DNS traffic across diverse network scenarios?

2. Related Work

3. Our Model

3.1. Tree Construction (MNTree)

- Initialize a root node containing all training samples X.

- If the number of samples in the current node is less than a predefined threshold (minimum internal node size), the node becomes a leaf node, and construction of that branch terminates.

- Otherwise, use k-sums-x clustering to split the samples in the current node into two clusters (k = 2). We set k = 2 in k-sums-x for node splitting to ensure balanced tree growth and reduce computational complexity.

- Recursively construct the left and right child nodes from the two clusters, respectively.

- Return the current node, which stores pointers to the left and right child nodes.
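The construction steps above can be sketched as a short recursive routine. This is only an illustration under stated assumptions: k-sums-x is not reproduced here, so a plain 2-means (Lloyd) split is swapped in as a stand-in, and unlike k-sums-x it does not enforce balanced clusters. The names `Node`, `two_means_split`, and `build_tree` are hypothetical.

```python
import random
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, ...]


@dataclass
class Node:
    samples: List[Point]
    left: Optional["Node"] = None
    right: Optional["Node"] = None

    @property
    def is_leaf(self) -> bool:
        return self.left is None and self.right is None


def _sq_dist(a: Point, b: Point) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def _mean(points: Sequence[Point]) -> Point:
    n = len(points)
    return tuple(sum(col) / n for col in zip(*points))


def two_means_split(points: Sequence[Point], rng: random.Random, iters: int = 20):
    """Stand-in for k-sums-x with k = 2 (assumption): plain Lloyd iterations."""
    centers = rng.sample(list(points), 2)
    left: List[Point] = []
    right: List[Point] = []
    for _ in range(iters):
        left, right = [], []
        for p in points:
            closer_to_first = _sq_dist(p, centers[0]) <= _sq_dist(p, centers[1])
            (left if closer_to_first else right).append(p)
        if not left or not right:
            break
        centers = [_mean(left), _mean(right)]
    return left, right


def build_tree(points: Sequence[Point], min_size: int, rng: random.Random) -> Node:
    node = Node(samples=list(points))
    # Fewer samples than the minimum internal node size: this node is a leaf.
    if len(points) < min_size:
        return node
    left, right = two_means_split(points, rng)
    # Degenerate split (e.g. all points identical): stop and keep a leaf.
    if not left or not right:
        return node
    node.left = build_tree(left, min_size, rng)
    node.right = build_tree(right, min_size, rng)
    return node
```

A forest would then be obtained by building several such trees, for example on different random subsamples; that step is not shown because the source text does not describe it here.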

| Algorithm 1 MNTree |
|---|
| Require: dataset X, threshold (minimum internal node size). Ensure: node A of the tree. |

3.2. Prediction Mechanism

3.2.1. Medium Neighbor

3.2.2. Probability Calculation of UP Samples

3.2.3. Class Probability Calculation for KP Samples

3.2.4. Integrated Prediction Result

3.3. Prediction and Unknown Pattern Identification

| Algorithm 2 Predict |
|---|
| Require: model MNForest, two thresholds, test data. Ensure: updated MNForest, predicted labels. |

3.4. Time and Space Complexity

4. Experiments on Public Datasets

4.1. Datasets

- USPS: A handwritten digit dataset containing 9298 samples of digits 0–9. It is employed and divided into three experimental cases, as detailed below:

  - The first case focuses on the SENC scenario. Specifically, the known classes are composed of samples from patterns 1, 3, 4, 5, 6, 8, while the unknown classes are composed of samples from patterns 0, 2, 7, 9.

  - The second case focuses on the balanced EPC scenario. In this case, the dataset is categorized into two classes: the first class is formed by samples from patterns 1, 3, 4, and the second class by samples from patterns 5, 6, 8. The unknown classes still contain samples from patterns 0, 2, 7, 9. Notably, the number of samples contributed by each pattern is approximately equal.

  - The third case focuses on the imbalanced EPC scenario. It uses the same two-class division and the same patterns per class as the second case. The key difference lies in the sample size distribution: the number of samples from patterns 1, 5 is about 10 times that of samples from patterns 3, 4, 6, 8.

- Digits: A handwritten digit dataset containing 1797 samples of digits 0–9. Consistent with the USPS dataset, it is divided into three experimental cases to simulate SENC and EPC scenarios, as follows:

  - The first case focuses on the SENC scenario. Specifically, the known classes are composed of samples from patterns 2, 4, 6, 8, 0, while the unknown classes are composed of samples from patterns 1, 3, 5, 7, 9.

  - The second case focuses on the balanced EPC scenario. The dataset is categorized into two classes here: the first class consists of samples from patterns 1, 2, 3, 4, and the second class of samples from patterns 5, 6, 7, 8. The unknown classes are samples from patterns 0, 9, with the number of samples contributed by each pattern being approximately equal.

  - The third case focuses on the imbalanced EPC scenario. It has the same two-class division and pattern composition as the second case, but differs in sample size distribution: the number of samples from patterns 1, 5 is about 10 times that of samples from patterns 2, 3, 4, 6, 7, 8.

- JAFFE: A face emotion dataset containing 213 images of 10 Japanese female subjects, with 7 emotion categories (happy, sad, angry, fear, surprise, disgust, neutral), which are regarded as patterns. Three experimental cases are designed for this dataset, detailed as follows:

  - The first case focuses on the SENC scenario. Specifically, the known classes are composed of samples from the patterns happy, sad, angry, surprise, neutral, while the unknown classes are composed of samples from the patterns fear, disgust.

  - The second case focuses on the balanced EPC scenario. The dataset is divided into two classes: the first class consists of samples from the patterns happy, sad, angry, and the second class of samples from the patterns surprise, neutral. The unknown classes are samples from the patterns fear, disgust, with each pattern contributing approximately the same number of samples.

  - The third case focuses on the imbalanced EPC scenario. It adopts the same two-class division and pattern composition as the second case, but with an imbalanced sample size distribution: the number of samples from the patterns happy, surprise is about 10 times that of samples from the patterns sad, angry, neutral.

- Pix10P: A face recognition dataset containing 1000 images of 10 subjects (100 images per subject), where each subject corresponds to a unique pattern. Three experimental cases are designed for this dataset, as detailed below:

  - The first case focuses on the SENC scenario. Specifically, the known classes are composed of samples from patterns Subject 1 to Subject 8, while the unknown classes are composed of samples from patterns Subject 9, Subject 10.

  - The second case focuses on the balanced EPC scenario. The dataset is categorized into two classes: the first class consists of samples from patterns Subject 1, Subject 2, Subject 3, Subject 4, and the second class of samples from patterns Subject 5, Subject 6, Subject 7, Subject 8. The unknown classes are samples from patterns Subject 9, Subject 10, with each pattern contributing roughly the same number of samples.

  - The third case focuses on the imbalanced EPC scenario. It shares the same two-class division and pattern composition as the second case, but with an imbalanced sample size distribution: the number of samples from patterns Subject 1, Subject 5 is about 10 times that of samples from patterns Subject 2, Subject 3, Subject 4, Subject 6, Subject 7, Subject 8.
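The three experimental cases follow one recipe: map patterns to classes, hold out some patterns as unknown, and optionally thin the minority patterns. The sketch below illustrates that recipe. The function name `build_case` and the way the 10:1 imbalance is produced (random subsampling of the non-majority patterns) are assumptions made for illustration, not the authors' procedure.

```python
import random
from collections import defaultdict


def build_case(samples, class_patterns, unknown_patterns,
               majority=(), ratio=10, seed=0):
    """Split (features, pattern) pairs into known and unknown sets.

    class_patterns maps a class id to the patterns it contains.
    If `majority` is given, every other known pattern is subsampled
    to roughly 1/ratio of its size (assumed way to create imbalance).
    Returns (known, unknown): known holds (features, class_id, pattern),
    unknown holds (features, pattern).
    """
    rng = random.Random(seed)
    pattern_to_class = {p: c for c, pats in class_patterns.items() for p in pats}

    by_pattern = defaultdict(list)
    for features, pattern in samples:
        by_pattern[pattern].append(features)

    known, unknown = [], []
    for pattern, items in by_pattern.items():
        if pattern in unknown_patterns:
            unknown.extend((x, pattern) for x in items)
        elif pattern in pattern_to_class:
            if majority and pattern not in majority:
                items = rng.sample(items, max(1, len(items) // ratio))
            known.extend((x, pattern_to_class[pattern], pattern) for x in items)
    return known, unknown


# USPS-style settings taken from the case descriptions above.
SENC_CLASSES = {p: [p] for p in (1, 3, 4, 5, 6, 8)}   # one pattern per class
EPC_CLASSES = {0: [1, 3, 4], 1: [5, 6, 8]}            # several patterns per class
UNKNOWN_PATTERNS = {0, 2, 7, 9}
```

Calling `build_case(samples, EPC_CLASSES, UNKNOWN_PATTERNS)` gives the balanced EPC case; adding `majority=(1, 5)` gives the imbalanced one.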

4.2. Baselines

- SEEN [26]: A semi-supervised streaming learning model that can handle emerging new labels. The trade-off parameter is set to 0.1.

- EVM [27]: An extreme value machine-based open-set classification model that models the distribution of known classes using extreme value theory. The number of samples used to construct extrema is 50, the number of extreme vectors is 4, and the margin scaling multiplier is 0.5.

- SENCForest [28]: A forest-based model designed for classification under streaming emerging new classes. The number of trees T is 100, and the minimum internal node size is 20. (This setting is a hyperparameter choice; according to experimental results, the algorithm usually performs well with it.) SENCForest shares these parameters with our proposed algorithm, and for fairness both use the same values.

- ASG [29]: An open-category classification model that generates adversarial samples to expand the decision boundary of known classes. The number of generated adversarial samples is 10, and the number of original samples used for generating adversarial samples is 50.

- KNNENS [10]: A K-nearest neighbor ensemble model that can identify emerging classes without ground-truth labels. The number of nearest neighbors k is 10, and the number of ensemble members is 50.

- MNForest (Ours): The number of trees T is 100, the minimum internal node size is 20, and the threshold for unknown sample identification is 0.5. Only T (involved in SENCForest and MNForest) and one MNForest-specific parameter were set empirically; the remaining hyperparameters, including most of those of the baseline methods, were tuned via grid search so that each model performed at its best.

4.3. Metrics

- Accuracy (ACC): Accuracy of classifying KP samples into correct known classes, reflecting the known pattern classification capability.

- UP-Detection Rate (UDR): Ratio of correctly identified UP samples to total UP samples, reflecting the unknown pattern identification capability.

- Micro-F1 (MIF): Harmonic mean of overall precision and recall, emphasizing performance on majority classes.

- Macro-F1 (MAF): Average F1-score of each class (including unknown class), emphasizing performance on minority classes.
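The four metrics can be computed directly from true and predicted labels. The sketch below is a minimal pure-Python illustration; the function name `evaluate` and the use of the string `"unknown"` as the label for UP samples are assumptions for this example.

```python
UNKNOWN = "unknown"  # assumed label for unknown-pattern (UP) samples


def evaluate(y_true, y_pred):
    """Return ACC, UDR, micro-F1 and macro-F1 for single-label predictions."""
    pairs = list(zip(y_true, y_pred))
    known = [(t, p) for t, p in pairs if t != UNKNOWN]
    unk = [(t, p) for t, p in pairs if t == UNKNOWN]

    # ACC: share of KP samples assigned to their correct known class.
    acc = sum(t == p for t, p in known) / len(known) if known else 0.0
    # UDR: share of UP samples that were flagged as unknown.
    udr = sum(p == UNKNOWN for _, p in unk) / len(unk) if unk else 0.0

    tp_sum = fp_sum = fn_sum = 0
    per_class_f1 = []
    for c in set(y_true) | set(y_pred):  # the unknown class counts as a class
        tp = sum(t == c and p == c for t, p in pairs)
        fp = sum(t != c and p == c for t, p in pairs)
        fn = sum(t == c and p != c for t, p in pairs)
        tp_sum, fp_sum, fn_sum = tp_sum + tp, fp_sum + fp, fn_sum + fn
        denom = 2 * tp + fp + fn
        per_class_f1.append(2 * tp / denom if denom else 0.0)

    micro_denom = 2 * tp_sum + fp_sum + fn_sum
    micro_f1 = 2 * tp_sum / micro_denom if micro_denom else 0.0
    macro_f1 = sum(per_class_f1) / len(per_class_f1) if per_class_f1 else 0.0
    return {"ACC": acc, "UDR": udr, "MIF": micro_f1, "MAF": macro_f1}
```

Because micro-F1 pools all decisions, large classes dominate it, whereas macro-F1 weights every class equally, so a poorly detected rare class lowers it noticeably.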

4.4. Experimental Results

- MNForest outperforms baselines in most cases: In the EPC scenario, MNForest achieves the highest micro-F1 and macro-F1 scores on most dataset configurations. For example, on the Pix10P dataset (case 3), MNForest achieves a micro-F1 score of 0.917, which is 6.7 percentage points higher than the second-best model (SENCForest, 0.850). On the Digits dataset (case 2), MNForest achieves a micro-F1 score of 0.978, which is 9.3 percentage points higher than the second-best model (SENCForest, 0.885). Similar advantages are observed on the JAFFE and USPS datasets.

- Forest-based models perform better than other types of models: MNForest and SENCForest (both forest-based models) generally outperform EVM, ASG and SEEN models. This is because forest-based models can better capture the multi-pattern characteristics within classes by leveraging ensemble learning, which is consistent with the characteristics of the EPC problem.

- MNForest has better unknown pattern identification capability: Compared with SENCForest, MNForest achieves higher scores on datasets with more complex pattern distributions. This is because MNForest uses the medium neighbor mechanism to accurately identify unknown patterns, while SENCForest relies on predefined distance thresholds, which are less adaptive to complex pattern distributions.

- Performance on imbalanced datasets: On datasets with significant pattern imbalance, MNForest still maintains high macro-F1 scores, indicating its strong ability to handle class-imbalanced scenarios, which is critical for DNS tunneling detection (where malicious samples are rare).

5. DNS Tunneling Detection Experiments

5.1. Dataset Collection and Preprocessing

- Enterprise Network: DNS traffic from the internal network of a medium-sized enterprise (500+ employees), including legitimate traffic (e.g., web browsing, email, enterprise application access) and simulated DNS tunneling traffic (generated by tools such as DNScat2, iodine, and DNSExfiltrator). The total number of DNS queries in the dataset is 18,000, of which 17,460 are legitimate samples, accounting for 97% of the total dataset, and 540 are malicious DNS tunneling samples, accounting for 3%.

- Campus Network: DNS traffic from a university campus network (10,000+ users), including traffic from student dormitories, teaching buildings, and research labs. The dataset includes legitimate traffic (e.g., video streaming, online learning, social media) and real malicious DNS tunneling traffic captured by the campus network security system. The total number of DNS queries is 105,000, with 101,325 legitimate samples (96.5% of the total) and 3675 malicious DNS tunneling samples (3.5%).

- Public Wi-Fi Network: DNS traffic from a public Wi-Fi network in a shopping mall (5000+ daily users), including legitimate traffic (e.g., mobile app usage, web browsing) and DNS tunneling traffic used to bypass captive portals. The dataset contains 63,000 DNS queries, with 61,236 legitimate samples (97.2% of the total) and 1764 malicious DNS tunneling samples (2.8%).

- Data Center Network: DNS traffic from a cloud data center (hosting 200+ enterprise applications), including legitimate traffic (e.g., application service discovery, database access) and advanced persistent threat (APT) DNS tunneling traffic (simulated using custom encoding methods). The total number of DNS queries is 12,000, with 11,760 legitimate samples (98% of the total) and 240 malicious APT DNS tunneling samples (2%).

- Data Cleaning: Remove duplicate DNS queries, invalid queries (e.g., empty domain names), and queries with incomplete information (e.g., missing timestamp or IP address).

- Group: Queries with the same primary domain name, h-time, and sip are assigned to the same group, where the primary domain name is obtained by tldextract (https://github.com/john-kurkowski/tldextract (accessed on 25 September 2025)), h-time is the hour extracted from the timestamp, and sip is the source IP address. Port 53 is mainly used for domain name resolution.

- Feature: For each group of n queries, 41 customised statistics are extracted. These features are described in Table 6.
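The grouping and feature steps above can be sketched as follows. This is an illustration under several assumptions: the primary domain is approximated by the last two labels of the name instead of calling tldextract, h-time is taken as the hour bucket `timestamp // 3600`, the dictionary keys (`domain`, `start_time`, `sip`, `request_length`) are hypothetical field names, and only a handful of the 41 statistics are shown, with minimum, maximum, and mean chosen as examples.

```python
from collections import defaultdict
from statistics import mean


def primary_domain(name: str) -> str:
    """Naive stand-in for tldextract (assumption): keep the last two labels."""
    labels = name.rstrip(".").lower().split(".")
    return ".".join(labels[-2:])


def group_queries(queries):
    """Group queries by (primary domain, hour bucket, source IP)."""
    groups = defaultdict(list)
    for q in queries:
        key = (primary_domain(q["domain"]), q["start_time"] // 3600, q["sip"])
        groups[key].append(q)
    return groups


def group_features(group):
    """Compute a few example per-group statistics."""
    lengths = [q["request_length"] for q in group]
    return {
        "n_queries": len(group),
        "n_distinct_subdomains": len({q["domain"].lower() for q in group}),
        "req_len_min": min(lengths),
        "req_len_max": max(lengths),
        "req_len_mean": mean(lengths),
    }
```

The naive `primary_domain` mishandles multi-part public suffixes such as `co.uk`, which is the reason a public-suffix-aware tool like tldextract is preferable in practice.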

5.2. Scenario-Specific Performance Analysis

- Enterprise Network: The enterprise network has strict access control policies, so the number of unknown-pattern legitimate queries is relatively small, which helps improve detection accuracy. Specifically, the Precision (0.954) and Recall (0.937) of the proposed algorithm are both the highest among all models. MNForest's F1-score (0.945) is 3 percentage points higher than that of the second-best baseline (SENCForest, 0.915), indicating that MNForest has a strong detection capability for DNS tunneling traffic in enterprise networks.

- Campus Network: The campus network has a large number of users and diverse traffic types, resulting in more unknown-pattern legitimate queries. MNForest continues to maintain excellent performance in the binary classification task, with the highest Recall (0.932) and F1-score (0.928). Specifically, its Recall is 6.0 percentage points higher than that of the second-best baseline (SENCForest, 0.872), and its F1-score is 4.4 percentage points higher than that of SENCForest (0.884).

- Public Wi-Fi Network: DNS tunneling traffic in this scenario is mainly used to bypass captive portals and has unique encoding patterns. MNForest can effectively identify these patterns in the binary classification task, achieving the highest Precision (0.957) and Recall (0.924) among all models. The high precision indicates that MNForest identifies portal-bypassing DNS tunneling traffic reliably and rarely misclassifies legitimate Wi-Fi access traffic.

- Data Center Network: MNForest’s medium neighbor mechanism can effectively capture the subtle differences between APT traffic and legitimate traffic in the binary classification task, achieving the highest Recall (0.918) and F1-score (0.915). This shows that MNForest has significant advantages in detecting low-volume, custom-encoded APT DNS tunneling traffic in binary classification, which is difficult for traditional classifiers to capture.
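As a worked check, and assuming the reported F1-score is the harmonic mean of the reported precision and recall, the Enterprise figures for MNForest are consistent with each other:

```latex
F_1 = \frac{2 \cdot \mathrm{PRE} \cdot \mathrm{REC}}{\mathrm{PRE} + \mathrm{REC}}
    = \frac{2 \times 0.954 \times 0.937}{0.954 + 0.937}
    = \frac{1.787796}{1.891}
    \approx 0.945
```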

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Correction Statement

References

1. Kuang, S.; Wang, W.; Ye, Y.; Peng, L. HHBT: DNS Tunneling Detection via Hybrid Hierarchical Bidirectional Transformer. Comput. Netw. 2025, 275, 111919.

2. Wang, Z.; Xu, Q.; Yang, Z.; He, Y.; Cao, X.; Huang, Q. OpenAUC: Towards AUC-oriented open-set recognition. Adv. Neural Inf. Process. Syst. 2022, 35, 25033–25045.

3. Cevikalp, H.; Uzun, B.; Salk, Y.; Saribas, H.; Köpüklü, O. From anomaly detection to open set recognition: Bridging the gap. Pattern Recognit. 2023, 138, 109385.

4. Geng, C.; Huang, S.; Chen, S. Recent Advances in Open Set Recognition: A Survey. IEEE Trans. Pattern Anal. Mach. Intell. 2021, 43, 3614–3631.

5. Zhou, Z.H. Open-environment machine learning. Natl. Sci. Rev. 2022, 9, nwac123.

6. Júnior, J.D.C.; Faria, E.R.; Silva, J.A.; Gama, J.; Cerri, R. Novelty detection for multi-label stream classification under extreme verification latency. Appl. Soft Comput. 2023, 141, 110265.

7. Kanagaraj, K.Y.S.S.; Nallappan, M. Methods for Predicting the Rise of the New Labels from a High-Dimensional Data Stream. Int. J. Intell. Eng. Syst. 2023, 16, 339–349.

8. Yu, X.R.; Wang, D.B.; Zhang, M.L. Partial label learning with emerging new labels. Mach. Learn. 2024, 113, 1549–1565.

9. Din, S.U.; Yang, Q.; Shao, J.; Mawuli, C.B.; Ullah, A.; Ali, W. Synchronization-based semi-supervised data streams classification with label evolution and extreme verification delay. Inf. Sci. 2024, 678, 120933.

10. Zhang, J.; Wang, T.; Ng, W.W.; Pedrycz, W. KNNENS: A k-nearest neighbor ensemble-based method for incremental learning under data stream with emerging new classes. IEEE Trans. Neural Netw. Learn. Syst. 2022, 34, 9520–9527.

11. Mu, X.; Zeng, G.; Huang, Z. Federated Learning with Emerging New Class: A Solution Using Isolation-Based Specification. In Proceedings of the International Conference on Database Systems for Advanced Applications, Tianjin, China, 17–20 April 2023; Springer: Cham, Switzerland, 2023; pp. 719–734.

12. Wang, Y.; Vinogradov, A. Multi-classification generative adversarial network for streaming data with emerging new classes: Method and its application to condition monitoring. TechRxiv 2022.

13. Wang, Y.; Wang, Q.; Vinogradov, A. Ensembled Multi-classification Generative Adversarial Network for Condition Monitoring in Streaming Data with Emerging New Classes. In Olympiad in Engineering Science; Springer: Berlin/Heidelberg, Germany, 2023; pp. 45–57.

14. Wang, Y.; Wang, Q.; Vinogradov, A. Semi-supervised deep architecture for classification in streaming data with emerging new classes: Application in condition monitoring. TechRxiv 2023.

15. Cao, Z.; Zhang, S.; Lin, C.T. Online Ensemble of Ensemble OVA Framework for Class Evolution with Dominant Emerging Classes. In Proceedings of the 2023 IEEE International Conference on Data Mining (ICDM), Shanghai, China, 1–4 December 2023; IEEE: New York, NY, USA, 2023; pp. 968–973.

16. Henrydoss, J.; Cruz, S.; Li, C.; Günther, M.; Boult, T.E. Enhancing Open-Set Recognition Using Clustering-Based Extreme Value Machine (C-EVM). In Proceedings of the International Conference on Big Data (BigData), Honolulu, HI, USA, 18–20 September 2020; IEEE: New York, NY, USA, 2020.

17. Fontanel, D.; Cermelli, F.; Mancini, M.; Buló, S.R.; Ricci, E.; Caputo, B. Boosting Deep Open World Recognition by Clustering. IEEE Robot. Autom. Lett. 2020, 5, 5985–5992.

18. Pei, S.; Sun, Y.; Nie, F.; Jiang, X.; Zheng, Z. Adaptive Graph K-Means. Pattern Recognit. 2025, 161, 111226.

19. Pei, S.; Sun, Y.; Lin, Z.; Nie, F.; Lu, J.; Jiang, X.; Zhang, C.; Zheng, Z. Concave Cut: Analyzing the Role of Concave Functions in Clustering. Pattern Recognit. 2025, 174, 112950.

20. Pei, S.; Nie, F.; Wang, R.; Li, X. A rank-constrained clustering algorithm with adaptive embedding. In Proceedings of the ICASSP 2021 - 2021 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Virtual, 6–11 June 2021; IEEE: New York, NY, USA, 2021; pp. 2845–2849.

21. Pei, S.; Chen, H.; Nie, F.; Wang, R.; Li, X. Centerless clustering. IEEE Trans. Pattern Anal. Mach. Intell. 2023, 45, 167–181.

22. Pei, S.; Nie, F.; Wang, R.; Li, X. Efficient clustering based on a unified view of k-means and ratio-cut. Adv. Neural Inf. Process. Syst. 2020, 33, 14855–14866.

23. Wang, H.; Du, F.; Pei, S.; Zheng, Z. Semi-supervised learning with centerless clustering. In Proceedings of the 2025 IEEE Cyber Science and Technology Congress (CyberSciTech), Hakodate, Japan, 21–24 October 2025; pp. 85–90.

24. Pei, S.; Zhang, Y.; Wang, R.; Nie, F. A portable clustering algorithm based on compact neighbors for face tagging. Neural Netw. 2022, 154, 508–520.

25. Pei, S.; Nie, F.; Wang, R.; Li, X. An efficient density-based clustering algorithm for face groping. Neurocomputing 2021, 462, 331–343.

26. Zhu, Y.N.; Li, Y.F. Semi-Supervised Streaming Learning with Emerging New Labels. Proc. AAAI Conf. Artif. Intell. 2020, 34, 7015–7022.

27. Rudd, E.M.; Jain, L.P.; Scheirer, W.J.; Boult, T.E. The Extreme Value Machine. IEEE Trans. Pattern Anal. Mach. Intell. 2018, 40, 762–768.

28. Mu, X.; Ting, K.M.; Zhou, Z.H. Classification Under Streaming Emerging New Classes: A Solution Using Completely-Random Trees. IEEE Trans. Knowl. Data Eng. 2017, 29, 1605–1618.

29. Yu, Y.; Qu, W.Y.; Li, N.; Guo, Z. Open-Category Classification by Adversarial Sample Generation. In Proceedings of the 26th International Joint Conference on Artificial Intelligence, IJCAI'17, Melbourne, Australia, 19–25 August 2017; AAAI Press: Washington, DC, USA, 2017; pp. 3357–3363.

| Cases | Metric | SEEN | EVM | SENCForest | ASG | KNNENS | MNForest |
|---|---|---|---|---|---|---|---|
| USPS Case 1 SENC | ACC | 0.862 | 0.891 | 0.913 | 0.902 | 0.895 | 0.928 |
| | UDR | 0.783 | 0.816 | 0.839 | 0.828 | 0.822 | 0.857 |
| | MIF | 0.856 | 0.882 | 0.901 | 0.893 | 0.887 | 0.915 |
| | MAF | 0.829 | 0.855 | 0.874 | 0.866 | 0.860 | 0.888 |
| USPS Case 2 EPC | ACC | 0.825 | 0.860 | 0.892 | 0.878 | 0.866 | 0.924 |
| | UDR | 0.771 | 0.805 | 0.832 | 0.821 | 0.815 | 0.853 |
| | MIF | 0.823 | 0.857 | 0.889 | 0.875 | 0.863 | 0.921 |
| | MAF | 0.795 | 0.831 | 0.862 | 0.848 | 0.835 | 0.903 |
| USPS Case 3 EPC | ACC | 0.789 | 0.815 | 0.848 | 0.840 | 0.832 | 0.880 |
| | UDR | 0.721 | 0.756 | 0.789 | 0.778 | 0.769 | 0.825 |
| | MIF | 0.786 | 0.812 | 0.845 | 0.837 | 0.829 | 0.876 |
| | MAF | 0.652 | 0.698 | 0.734 | 0.716 | 0.701 | 0.793 |

| Cases | Metric | SEEN | EVM | SENCForest | ASG | KNNENS | MNForest |
|---|---|---|---|---|---|---|---|
| Digits Case 1 SENC | ACC | 0.845 | 0.878 | 0.905 | 0.896 | 0.889 | 0.923 |
| | UDR | 0.768 | 0.802 | 0.827 | 0.819 | 0.811 | 0.849 |
| | MIF | 0.839 | 0.871 | 0.898 | 0.889 | 0.882 | 0.917 |
| | MAF | 0.812 | 0.846 | 0.869 | 0.861 | 0.853 | 0.885 |
| Digits Case 2 EPC | ACC | 0.813 | 0.856 | 0.888 | 0.874 | 0.862 | 0.926 |
| | UDR | 0.756 | 0.798 | 0.823 | 0.815 | 0.807 | 0.847 |
| | MIF | 0.810 | 0.852 | 0.885 | 0.871 | 0.859 | 0.978 |
| | MAF | 0.783 | 0.825 | 0.857 | 0.843 | 0.831 | 0.901 |
| Digits Case 3 EPC | ACC | 0.775 | 0.808 | 0.841 | 0.833 | 0.824 | 0.876 |
| | UDR | 0.712 | 0.748 | 0.782 | 0.771 | 0.763 | 0.819 |
| | MIF | 0.772 | 0.805 | 0.838 | 0.830 | 0.821 | 0.873 |
| | MAF | 0.645 | 0.691 | 0.728 | 0.710 | 0.695 | 0.789 |

| Cases | Metric | SEEN | EVM | SENCForest | ASG | KNNENS | MNForest |
|---|---|---|---|---|---|---|---|
| JAFFE Case 1 SENC | ACC | 0.832 | 0.865 | 0.893 | 0.884 | 0.876 | 0.915 |
| | UDR | 0.753 | 0.789 | 0.816 | 0.808 | 0.800 | 0.838 |
| | MIF | 0.826 | 0.859 | 0.887 | 0.878 | 0.870 | 0.909 |
| | MAF | 0.798 | 0.832 | 0.856 | 0.848 | 0.840 | 0.876 |
| JAFFE Case 2 EPC | ACC | 0.801 | 0.842 | 0.875 | 0.861 | 0.850 | 0.912 |
| | UDR | 0.741 | 0.783 | 0.811 | 0.803 | 0.794 | 0.835 |
| | MIF | 0.798 | 0.838 | 0.872 | 0.858 | 0.847 | 0.913 |
| | MAF | 0.770 | 0.812 | 0.844 | 0.830 | 0.819 | 0.895 |
| JAFFE Case 3 EPC | ACC | 0.762 | 0.796 | 0.830 | 0.821 | 0.812 | 0.868 |
| | UDR | 0.703 | 0.739 | 0.773 | 0.762 | 0.754 | 0.807 |
| | MIF | 0.759 | 0.793 | 0.827 | 0.818 | 0.809 | 0.865 |
| | MAF | 0.638 | 0.684 | 0.721 | 0.703 | 0.688 | 0.776 |

| Cases | Metric | SEEN | EVM | SENCForest | ASG | KNNENS | MNForest |
|---|---|---|---|---|---|---|---|
| Pix10P Case 1 SENC | ACC | 0.856 | 0.889 | 0.916 | 0.907 | 0.899 | 0.932 |
| | UDR | 0.775 | 0.811 | 0.838 | 0.829 | 0.821 | 0.856 |
| | MIF | 0.850 | 0.883 | 0.910 | 0.901 | 0.893 | 0.924 |
| | MAF | 0.823 | 0.857 | 0.879 | 0.871 | 0.863 | 0.892 |
| Pix10P Case 2 EPC | ACC | 0.824 | 0.867 | 0.899 | 0.885 | 0.873 | 0.928 |
| | UDR | 0.762 | 0.804 | 0.831 | 0.822 | 0.814 | 0.852 |
| | MIF | 0.821 | 0.863 | 0.896 | 0.882 | 0.870 | 0.922 |
| | MAF | 0.796 | 0.838 | 0.868 | 0.854 | 0.842 | 0.904 |
| Pix10P Case 3 EPC | ACC | 0.786 | 0.820 | 0.853 | 0.845 | 0.836 | 0.885 |
| | UDR | 0.725 | 0.761 | 0.794 | 0.783 | 0.775 | 0.828 |
| | MIF | 0.783 | 0.817 | 0.850 | 0.842 | 0.833 | 0.917 |
| | MAF | 0.658 | 0.704 | 0.741 | 0.723 | 0.708 | 0.798 |

| No. | Name | Example |
|---|---|---|
| 2 | source ip | 162.105.129.122 |
| 3 | destination ip | 205.251.198.179 |
| 4 | source port | 20018 |
| 11 | start time | 1501605396 |
| 54 | domain | pixel.redditmedia.com |
| 55 | rr type | 0001;0000;0000;0000;0000 |
| 58 | DNS TTL | 300 |
| 59 | reply ipv4 | 151.101.73.140 |
| 87 | request length | 37 |
| 89 | reply length | 88 |

| No. | Description |
|---|---|
| 1 | The number of queries |
| 2 | The number of distinct subdomain names |
| 3–7 | Five statistics of the request length of each query in the group |
| 8–12 | Five statistics of the reply length of each query in the group |
| 13–17 | Five statistics of the DNS TTL of each query in the group |
| 18–22 | Five statistics of the number of tags of each query in the group |
| 23–27 | Five statistics of the length of each query in the group |
| 28 | Frequency of rr types containing 0001 |
| 29 | Frequency of valid reply IPv4 |
| 30 | Frequency of distinct valid reply IPv4 |
| 31–35 | Five statistics of the number of digits in the domain name of each query |
| 36–40 | Five statistics of the number of characters that are neither digits nor letters in each query |
| 41 | A statistic computed from the reply length and request length of each query |

| Scenario | Metric | SEEN | EVM | SENCForest | ASG | KNNENS | MNForest |
|---|---|---|---|---|---|---|---|
| Enterprise | PRE | 0.892 | 0.913 | 0.928 | 0.919 | 0.915 | 0.954 |
| | REC | 0.867 | 0.885 | 0.903 | 0.895 | 0.890 | 0.937 |
| | F1 | 0.879 | 0.898 | 0.915 | 0.907 | 0.902 | 0.945 |
| Campus | PRE | 0.851 | 0.876 | 0.897 | 0.886 | 0.879 | 0.926 |
| | REC | 0.822 | 0.848 | 0.872 | 0.861 | 0.854 | 0.932 |
| | F1 | 0.836 | 0.861 | 0.884 | 0.873 | 0.866 | 0.928 |
| Public Wi-Fi | PRE | 0.898 | 0.921 | 0.935 | 0.927 | 0.922 | 0.957 |
| | REC | 0.873 | 0.895 | 0.910 | 0.902 | 0.896 | 0.924 |
| | F1 | 0.885 | 0.907 | 0.922 | 0.914 | 0.909 | 0.949 |
| Data Center | PRE | 0.836 | 0.864 | 0.886 | 0.875 | 0.868 | 0.913 |
| | REC | 0.801 | 0.827 | 0.852 | 0.840 | 0.833 | 0.918 |
| | F1 | 0.818 | 0.845 | 0.868 | 0.857 | 0.850 | 0.915 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Dong, H.; Zheng, Z.; Pei, S. A Classifier with Unknown Pattern Recognition for Domain Name System Tunneling Detection in Dynamic Networks. Electronics 2026, 15, 709. https://doi.org/10.3390/electronics15030709
