1. Introduction

With the rapid development of digital media and image acquisition technologies, content-based image retrieval (CBIR) has become an essential tool in various application domains [1,2,3]. A typical CBIR system consists of two core modules: feature extraction and similarity measurement [4]. The feature extraction module converts image content into discriminative feature vectors, while the similarity measurement module computes feature distances between a query image and images in a database to retrieve the most relevant results. However, features extracted by conventional CBIR methods are mainly low-level visual descriptors, and similarity measurements based on such features often deviate significantly from human subjective perception [5]. In texture-related scenarios, humans tend to describe and distinguish textures using perceptual attributes such as roughness, directionality, and contrast. Classic perceptual studies have shown that texture perception can be characterized along a set of perceptually meaningful dimensions [6]. Textures that are perceptually similar usually share consistent high-level perceptual characteristics, even when low-level image statistics differ. Therefore, modeling texture similarity directly from perceptual features derived from human visual experience is regarded as a promising direction for bridging the gap between computational retrieval results and subjective perception.

From the perspective of system design, a texture retrieval framework generally involves two key components: texture feature extraction and retrieval method formulation. Feature extraction aims to encode texture appearance into quantifiable representations that serve as the basis for retrieval accuracy, while retrieval methods determine how efficiently and effectively target textures are matched within a database. Early texture retrieval approaches relied primarily on traditional image analysis techniques and handcrafted feature descriptors [7]. With the advancement of deep learning, neural network–based methods have been increasingly applied to texture retrieval, enabling the extraction of high-order texture representations through deep architectures and improving retrieval robustness and accuracy [8]. Deep convolutional architectures have shown strong representation capacity across a range of image modeling tasks, which further motivates deep feature learning for texture-related analysis [9]. Nevertheless, due to the intrinsic limitations of conventional image-driven features in representing natural texture perception, researchers have gradually explored texture retrieval frameworks inspired by human visual perception [10]. By constructing perceptual texture spaces that better conform to human similarity judgments [11], these approaches aim to enhance perceptual consistency in retrieval results. For instance, psychophysics-based studies have analyzed perceptual similarity among natural textures and constructed texture similarity matrices to support perceptually aligned retrieval [12]. Despite these efforts, most existing texture retrieval frameworks remain fundamentally image-driven and assume the availability of reference images, which restricts their applicability in perception-driven scenarios where retrieval intent is expressed only through subjective descriptions.

From the viewpoint of feature representation, texture features can be broadly categorized into handcrafted features and deep learning–based features. Handcrafted features are designed according to prior knowledge of visual mechanisms and encode low-level texture characteristics into explicit descriptors. Such features are commonly divided into statistical and structural categories [13]. Statistical features, such as gray-level co-occurrence matrices (GLCM), model texture by capturing gray-level relationships and have been widely applied in medical image analysis, although they are sensitive to scale and orientation variations and may incur high computational costs in high-dimensional settings [14]. Local binary patterns (LBP) describe local intensity differences and are effective for capturing fine texture details but are sensitive to noise and lack global structural information [15]. Other handcrafted descriptors, including Histogram of Oriented Gradients (HOG) [16] and Gabor filters [17], characterize texture through gradient orientation distributions and frequency-domain analysis, respectively. More recently, advanced local pattern descriptors combined with dimensionality reduction techniques have been proposed for texture-based image retrieval. For example, a PCA-based advanced local octa-directional pattern descriptor was introduced to encode local intensity variations along multiple directions while reducing feature dimensionality [18], achieving competitive retrieval performance on large-scale image datasets. While handcrafted features offer clear physical interpretability and low computational complexity, they essentially capture pixel-level statistics and structures and cannot directly correspond to high-level perceptual attributes such as roughness or granularity. Beyond conventional pixel-level descriptors, alternative structural modeling approaches have also been explored for texture analysis. Graph-based representations, such as natural and horizontal visibility graphs, have been employed to capture texture structures using graph-theoretic features and conventional machine learning classifiers, demonstrating that non-CNN structural modeling can achieve competitive texture classification performance [19].

With the rapid development of deep learning, deep features have become the mainstream choice for complex texture analysis due to their hierarchical and data-driven representation learning capability. Recent studies have further explored deep learning–based image retrieval frameworks by integrating multiple feature modalities. For instance, a retrieval model for lunar complex craters combined deep representations extracted by transformer-based architectures with handcrafted texture and shape features, and optimized similarity learning through metric learning strategies, achieving improved retrieval accuracy under complex visual conditions [20]. Early convolutional neural network (CNN)–based approaches extracted texture features from intermediate or fully connected layers of pre-trained models such as VGG-16 and ResNet, enabling abstraction from low-level edges to high-level semantic patterns [21]. Specialized architectures, such as ArtFusionNet, further enhanced texture representation by incorporating dilated convolutions and multi-scale feature fusion, demonstrating strong performance in artistic texture recognition tasks [22]. More recently, Transformer-based models, including Vision Transformer (ViT) and Swin Transformer, have introduced self-attention mechanisms to overcome the local receptive field limitations of CNNs, effectively capturing global dependencies in non-periodic textures and improving discrimination in complex scenes [23]. Despite their strong performance, deep features generally suffer from limited interpretability, as their learned representations lack explicit correspondence with fine-grained human perceptual attributes [24]. Moreover, these image-driven methods generally assume the availability of a reference image or embedding as the query, which limits their applicability in reference-free retrieval scenarios based on subjective perceptual descriptions.

In terms of retrieval strategy design, existing image retrieval methods can be broadly divided into distance-based and classification-based paradigms. Distance-based retrieval methods formulate retrieval as a similarity measurement problem between feature vectors and rank results according to predefined distance metrics. Commonly used measures include Euclidean distance [25], which is intuitive and suitable for low- to medium-dimensional continuous features; Manhattan distance [26], which offers lower computational complexity for high-dimensional data; and cosine similarity [27], which evaluates directional consistency and is effective for sparse representations. However, the efficiency of distance-based retrieval is highly dependent on feature dimensionality and distance computation cost. Classification-based retrieval methods, in contrast, map images into predefined categories using trained classifiers and perform retrieval within localized category subsets. Traditional classifiers such as support vector machines (SVM) [28] and random forests [29] rely on handcrafted features and incur relatively low training costs, but their performance is constrained by feature representation capability. Deep learning–based classification approaches significantly improve classification accuracy by leveraging large-scale annotated datasets and can reduce retrieval search space in large databases [30]. Nevertheless, classification-based paradigms depend heavily on predefined category systems and annotated data, making them unsuitable for scenarios in which retrieval intent is expressed through fuzzy or subjective perceptual descriptions.
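The three measures named above can be compared on a small example. The following is a minimal sketch in plain Python; the function names and the toy vectors are illustrative assumptions and do not come from any of the cited works.

```python
import math


def euclidean(u, v):
    """Straight-line (L2) distance between two equal-length vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def manhattan(u, v):
    """City-block (L1) distance: sum of absolute coordinate differences."""
    return sum(abs(a - b) for a, b in zip(u, v))


def cosine_similarity(u, v):
    """Cosine of the angle between u and v; 1.0 means identical direction."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)


# Toy feature vectors (hypothetical values): candidate is query scaled by 2.
query = [1.0, 2.0, 2.0]
candidate = [2.0, 4.0, 4.0]

d_euclid = euclidean(query, candidate)
d_manhattan = manhattan(query, candidate)
s_cosine = cosine_similarity(query, candidate)
```

In this toy case the two vectors point in the same direction, so the cosine similarity is maximal even though both distances are non-zero, which illustrates why the choice of measure affects the ranking.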

Therefore, when users are unable to provide reference images and retrieval intent is primarily driven by subjective perceptual attributes, conventional distance-based and classification-based retrieval paradigms exhibit inherent limitations. This observation motivates the development of reference-free texture retrieval frameworks that operate directly within a perceptual feature space derived from human visual perception, enabling retrieval results that are more consistent with human subjective judgments.

The main contributions of this work are summarized as follows:

A human-centered perceptual feature space for texture representation is constructed through systematic psychophysical experiments, where texture attributes are explicitly defined and quantified based on human subjective evaluations, enabling perceptually meaningful texture modeling.

A perception-aligned, reference-free texture image retrieval framework is proposed, which formulates texture retrieval directly in the constructed perceptual space and allows users to retrieve textures using subjective perceptual descriptions rather than reference images.

A user-adaptive retrieval mechanism is introduced by constructing dual perceptual feature libraries for different user groups, accounting for perceptual sensitivity differences between art-major and non-art-major observers and improving the robustness and interpretability of retrieval results.

2. Materials and Methods

This section describes the engineering pipeline of the proposed perception-aligned texture retrieval framework, whose objective is to construct a computable perceptual representation for reference-free retrieval based on subjective descriptions. Controlled psychophysical experiments are employed as an engineering tool to collect and aggregate human ratings of predefined perceptual attributes, forming a low-dimensional perceptual feature space for texture representation, rather than as an independent study of visual perception mechanisms. In this work, the term “user-adaptive” refers to the selection of a corresponding perceptual feature library according to the user group, rather than an online learning or iterative optimization process. All perceptual feature construction and model training are performed offline, and retrieval is conducted on fixed representations without parameter updating.

The overall framework of the proposed perception-aligned texture retrieval system is illustrated in Figure 1. The framework consists of two main stages: perceptual feature construction and perception-aligned retrieval. Texture images are first evaluated through psychophysical experiments to obtain aggregated perceptual feature vectors, and separate perceptual feature libraries are constructed for art-major and non-art-major observer groups. Given a user-defined perceptual description as the query, retrieval is performed directly in the perceptual feature space by measuring perceptual distances, and the Top-K most perceptually similar textures are returned.

2.1. Dataset and Texture Selection

The experiments in this study are conducted using texture images selected from the Describable Textures Dataset (DTD), which contains a diverse collection of real-world texture images covering a wide range of material categories and visual appearances. A total of 300 texture images were selected from the DTD for perceptual evaluation, covering multiple material categories. The selection aimed to ensure perceptual diversity and experimental reliability across attributes such as structural regularity, granularity, contrast, and directionality, rather than category balance. All selected texture images are resized to a unified resolution and converted to grayscale when necessary to reduce potential color-related interference in perceptual evaluation. This design choice was made to isolate texture-related perceptual attributes and avoid confounding effects introduced by color variation, rather than to model color perception. Representative examples of the preprocessed grayscale texture images used for perceptual evaluation are shown in Figure 2.

2.2. Perceptual Evaluation Protocol

To construct perceptual feature representations for engineering-oriented texture retrieval, a controlled perceptual evaluation protocol was designed to collect structured subjective ratings under standardized conditions. The protocol emphasizes measurement consistency and reproducibility, ensuring that perceptual ratings can be reliably aggregated and utilized for subsequent computational modeling, rather than aiming to investigate psychological mechanisms or cognitive processes of human vision. Specifically, the experimental design focuses on minimizing external visual interference and inter-subject ambiguity through unified display settings, predefined perceptual attributes, and consistent rating criteria. By treating perceptual evaluation as a data acquisition procedure for feature construction, the protocol provides a stable perceptual basis for engineering-oriented texture retrieval.

Figure 3 illustrates the overall procedure of the psychophysical experiment designed for perceptual attribute evaluation. Texture images are presented to observers under controlled laboratory conditions, where participants from art-major and non-art-major groups perform attribute-wise evaluation using a predefined set of perceptual attributes. The collected subjective ratings are aggregated across observers to form perceptual feature representations, which serve as the basis for constructing the perceptual feature library.

Texture samples used in the experiment are selected to cover a wide range of visual appearances, including variations in granularity, regularity, contrast, and directional patterns. Prior to the formal evaluation, all texture images are preprocessed to ensure consistency in resolution and display conditions. Images are presented on a calibrated display under controlled ambient lighting to minimize external visual interference.

2.2.1. Participants

A total of 62 participants were recruited for the perceptual evaluation. All participants were university students aged between 18 and 26 years, including 29 males and 33 females, forming a controlled observer group under standardized laboratory conditions. Their majors encompassed fields such as Computer Science, Law, and Arts. Participants were divided into two groups according to their educational background: an art-major group and a non-art-major group. This grouping strategy is motivated by previous findings that visual training and artistic experience may influence sensitivity to texture-related perceptual attributes. All participants reported normal or corrected-to-normal vision and had no known visual or neurological disorders.

Before the experiment, participants receive standardized instructions explaining the evaluation task, perceptual attributes, and rating criteria. A brief familiarization session is conducted to ensure that participants understand the semantic meaning of each perceptual attribute and the usage of the rating scale, thereby reducing inter-subject ambiguity.

2.2.2. Texture Attributes and Rating Scale

Based on a review of relevant literature and expert consultation, a set of perceptual attributes is defined to characterize texture appearance using commonly adopted descriptors in texture perception studies. These attributes are selected to reflect both local and global perceptual properties of textures, including but not limited to contrast, repetitiveness, granularity, and feature density. Each attribute is treated as an independent perceptual dimension to facilitate explicit perceptual representation.

To facilitate a consistent understanding of the perceptual attributes and their semantic ranges, representative visual examples are provided for each attribute.

Figure 4 illustrates the perceptual definitions of four representative attributes by showing texture samples with gradually increasing perceptual strength from low to high.

Together with the numerical scale definitions summarized in Table 1, these visual examples were presented to participants during the instruction phase to reduce semantic ambiguity and improve inter-observer consistency in perceptual evaluation. By combining textual definitions with representative visual references, participants were provided with a concrete and unified understanding of each perceptual attribute prior to the formal evaluation task.

Texture perception is quantified using a 9-point Likert scale, where higher values indicate a stronger presence of the corresponding perceptual attribute. The use of a discrete ordinal scale allows participants to express fine-grained perceptual differences while maintaining evaluation consistency across samples and reducing subjective rating instability. In addition, the bounded numerical range facilitates reliable aggregation of ratings across observers and supports subsequent normalization and distance-based similarity computation in the perceptual feature space. The complete list of perceptual attributes and the perceptual meanings of the scale endpoints are summarized in Table 1, which provides a structured, standardized, and reproducible definition of the 12 perceptual dimensions employed in the experiment.

To ensure a shared understanding of the perceptual attributes and rating criteria across observers with different perceptual backgrounds, detailed attribute definitions and representative visual examples were provided during the instruction phase prior to the formal experiment. This preparation step is intended not only to reduce semantic ambiguity, but also to establish consistent internal reference standards for perceptual judgment, thereby improving inter-observer consistency in perceptual evaluation.

2.2.3. Experimental Procedure

The psychophysical experiments were conducted following a standardized and controlled protocol to ensure consistency and reliability of perceptual evaluations across observers. All experimental sessions were carried out in the same university computer laboratory to avoid potential variations in perceptual judgment caused by environmental changes. At the beginning of each session, the experimenter operated the instructor’s master control computer and used the Red Spider Multimedia Network Classroom Software (developed by Guangzhou Chuangxun Software Co., Ltd., China; official website: http://www.3000soft.net/index.php, accessed on 3 February 2026) to simultaneously distribute texture images to all participant workstations. All computers in the laboratory were equipped with identical hardware configurations, including processor models and display specifications, ensuring device-level uniformity across observers. Each texture image was assigned a unique sample identifier and displayed at a fixed resolution on all student computers. Together with the fixed seating arrangement and uniform ambient lighting conditions in the laboratory, this setup ensured that all observers viewed texture samples from consistent perspectives, under identical illumination directions, and with the same display quality, thereby minimizing potential interference from device-related or environmental factors on perceptual judgments.

Given the relatively large number of texture samples involved in the experiment, specific measures were adopted to reduce visual fatigue and maintain evaluation reliability. All texture images were divided into groups of 20 samples, and each observer randomly selected three groups for perceptual evaluation. This grouping strategy limited the duration of continuous visual exposure while preserving sufficient perceptual data coverage across the dataset. Random group selection further helped mitigate potential ordering effects and learning biases during the evaluation process. Because the groups were selected at random, each texture image was rated by several observers, and the final perceptual value was taken as the average of these ratings. In this study, the minimum, median, and maximum numbers of ratings per image were 4, 5, and 6, respectively, with comparable rating coverage across the art-major and non-art-major groups.

To assess the reliability of the collected subjective ratings, inter-observer agreement was evaluated using Kendall’s coefficient of concordance (W) on representative perceptual attributes. The results indicate a moderate to high level of agreement among observers across both art-major and non-art-major groups, suggesting that aggregating ratings from multiple participants provides a stable perceptual feature representation suitable for subsequent modeling and retrieval.
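For readers unfamiliar with the statistic, the following plain-Python sketch shows one common way to compute Kendall's W with average ranks and a tie correction, which matters for Likert ratings because tied scores are frequent. It assumes a complete ratings matrix (every observer in the block rated every sample for one attribute); the function names and toy ratings are hypothetical, and this is not the authors' analysis code.

```python
def average_ranks(scores):
    """Rank scores in ascending order (1 = lowest); ties share the mean rank."""
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    ranks = [0.0] * len(scores)
    start = 0
    while start < len(order):
        end = start
        # Extend the run while the next score equals the current one.
        while end + 1 < len(order) and scores[order[end + 1]] == scores[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1  # sorted positions are 0-based
        for pos in range(start, end + 1):
            ranks[order[pos]] = mean_rank
        start = end + 1
    return ranks


def tie_term(scores):
    """Sum of (t^3 - t) over groups of tied scores for one observer."""
    counts = {}
    for s in scores:
        counts[s] = counts.get(s, 0) + 1
    return sum(t ** 3 - t for t in counts.values())


def kendalls_w(ratings):
    """ratings[j][i] is the score given by observer j to sample i."""
    m = len(ratings)      # number of observers
    n = len(ratings[0])   # number of samples
    rank_rows = [average_ranks(row) for row in ratings]
    rank_sums = [sum(row[i] for row in rank_rows) for i in range(n)]
    mean_sum = m * (n + 1) / 2
    s = sum((r - mean_sum) ** 2 for r in rank_sums)
    denom = m ** 2 * (n ** 3 - n) - m * sum(tie_term(row) for row in ratings)
    if denom == 0:
        raise ValueError("W is undefined when every observer ties all samples")
    return 12 * s / denom


# Toy ratings (hypothetical): three observers who order four samples identically.
w_agree = kendalls_w([[1, 3, 5, 7], [2, 4, 6, 8], [1, 2, 3, 9]])
# Two observers with exactly opposite orderings.
w_oppose = kendalls_w([[1, 2, 3], [3, 2, 1]])
# Perfect agreement that includes tied scores.
w_ties = kendalls_w([[1, 1, 2], [1, 1, 2]])
```

W ranges from 0 (no agreement) to 1 (identical rankings), so the three toy cases bracket the "moderate to high" range reported above.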

To ensure consistency in the assessment of individual perceptual attributes, observers were instructed to evaluate one perceptual attribute at a time across all texture samples within a selected group. For each attribute, observers completed the rating task for all samples before proceeding to the next attribute. Short breaks were arranged between consecutive attribute evaluations to alleviate visual and cognitive fatigue and to maintain stable internal evaluation criteria for each perceptual dimension. During the experiment, observers were required to view each texture sample in its entirety and provide ratings for all 12 predefined perceptual attributes according to the experimental instructions. All perceptual rating data were recorded electronically and used for subsequent perceptual feature construction and retrieval modeling.

2.3. Construction of Perceptual Feature Space

Based on the psychophysical experiments described in Section 2.2, a perceptual feature space is constructed to represent texture images using aggregated human perceptual evaluations. The resulting perceptual feature space is designed for computational retrieval and similarity modeling, rather than for psychological interpretation of human perception. Let the perceptual attribute set be defined as

$$A = \{a_1, a_2, \ldots, a_N\}$$

where $N$ denotes the number of perceptual attributes considered in this study.

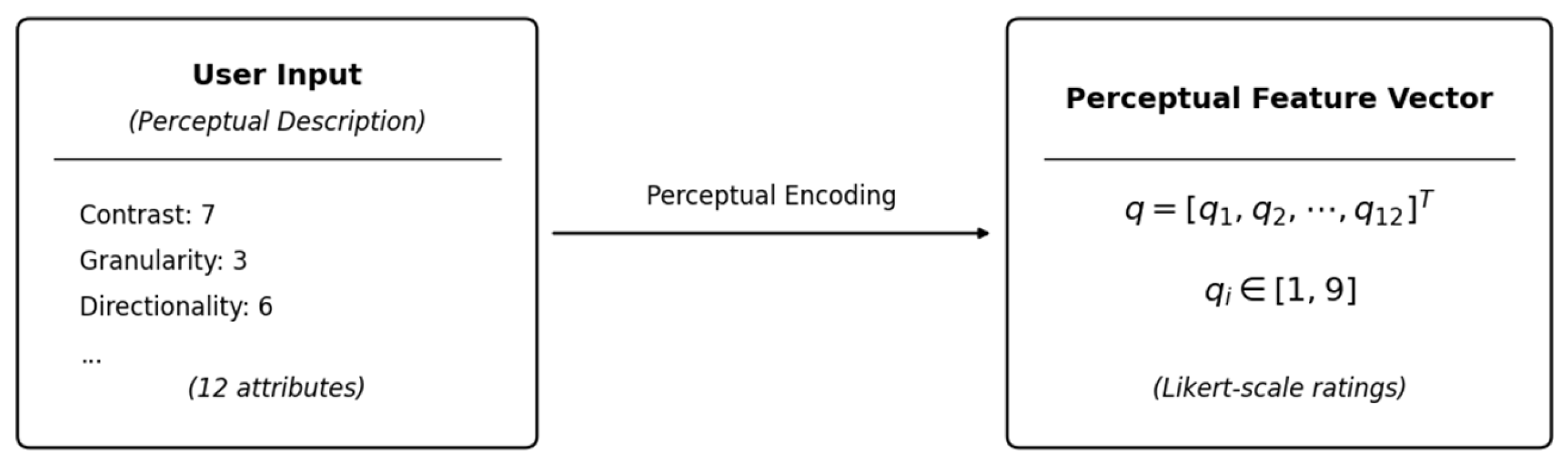

For a given perceptual query specified by the user, the desired perceptual description is represented as an $N$-dimensional perceptual query vector

$$\mathbf{q} = [q_1, q_2, \ldots, q_N]$$

where each element $q_k$ corresponds to the target strength of the $k$-th perceptual attribute. This perceptual query encoding process is illustrated in Figure 5.

Similarly, each texture image in the database is represented by a perceptual feature vector derived from human ratings. Suppose that $M$ observers provide rating scores for the $k$-th perceptual attribute of a texture image. The aggregated perceptual value for this attribute is computed as the mean of the corresponding ratings:

$$p_k = \frac{1}{M} \sum_{j=1}^{M} s_{j,k}$$

where $s_{j,k}$ denotes the rating score given by the $j$-th observer for the $k$-th perceptual attribute.

By aggregating the perceptual values of all $N$ attributes, the perceptual feature vector of a texture image is defined as

$$\mathbf{p} = [p_1, p_2, \ldots, p_N]$$

To ensure balanced contributions of different perceptual attributes during similarity computation, min–max normalization is applied independently to each perceptual dimension:

$$\hat{p}_k = \frac{p_k - p_k^{\min}}{p_k^{\max} - p_k^{\min}}$$

where $\hat{p}_k$ denotes the normalized perceptual feature value of the $k$-th attribute. After normalization, all texture images are embedded into a unified perceptual feature space with comparable attribute scales.

Here, the minimum and maximum values $p_k^{\min}$ and $p_k^{\max}$ are computed across all texture images in the dataset for each perceptual attribute independently.
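The aggregation and normalization steps can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions: the function names, the texture identifiers, and the toy ratings (three attributes instead of 12) are hypothetical, and mapping a constant attribute to 0.0 is a choice made here to avoid division by zero, not something specified in the text.

```python
def aggregate_ratings(ratings):
    """Average per-observer rating lists into one value per attribute.

    ratings is a list with one entry per observer; each entry holds that
    observer's scores for all attributes of a single texture image.
    """
    n_obs = len(ratings)
    n_attr = len(ratings[0])
    return [sum(r[k] for r in ratings) / n_obs for k in range(n_attr)]


def min_max_normalize(vectors):
    """Rescale each attribute to [0, 1] using its min and max over all images."""
    n_attr = len(vectors[0])
    lows = [min(v[k] for v in vectors) for k in range(n_attr)]
    highs = [max(v[k] for v in vectors) for k in range(n_attr)]
    normalized = []
    for v in vectors:
        row = []
        for k in range(n_attr):
            span = highs[k] - lows[k]
            # Assumption: an attribute with no spread is mapped to 0.0.
            row.append((v[k] - lows[k]) / span if span > 0 else 0.0)
        normalized.append(row)
    return normalized


# Hypothetical 9-point ratings: two observers, three attributes per texture.
raw = {
    "tex_a": [[2, 8, 5], [4, 6, 5]],
    "tex_b": [[6, 3, 5], [8, 1, 5]],
    "tex_c": [[9, 9, 5], [9, 9, 5]],
}
ids = list(raw)
means = [aggregate_ratings(raw[t]) for t in ids]
library = dict(zip(ids, min_max_normalize(means)))
```

Because the extremes are taken over the whole dataset, adding or removing images can change every normalized vector, so the library would need to be rebuilt offline whenever the image set changes.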

Figure 6 provides an intuitive visualization of the distribution of texture images in the constructed perceptual feature space after perceptual feature normalization.

2.4. Perception-Aligned Texture Retrieval Method

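Given a normalized perceptual query vector $\hat{\mathbf{q}} = [\hat{q}_1, \hat{q}_2, \ldots, \hat{q}_N]$ and a database of normalized perceptual feature vectors $\{\hat{\mathbf{p}}_i\}_{i=1}^{T}$ for $T$ texture images, perception-aligned texture retrieval is formulated as a similarity measurement problem in the perceptual feature space. The perceptual distance between the query and each texture image is computed using the Euclidean distance metric:

$$d(\hat{\mathbf{q}}, \hat{\mathbf{p}}_i) = \sqrt{\sum_{k=1}^{N} \left(\hat{q}_k - \hat{p}_{i,k}\right)^2}$$

where $\hat{p}_{i,k}$ denotes the normalized perceptual value of the $k$-th attribute for the $i$-th texture image.

For clarity, the squared Euclidean distance can be equivalently expressed as

$$d^2(\hat{\mathbf{q}}, \hat{\mathbf{p}}_i) = \lVert \hat{\mathbf{q}} - \hat{\mathbf{p}}_i \rVert_2^2 = \sum_{k=1}^{N} \left(\hat{q}_k - \hat{p}_{i,k}\right)^2$$

Based on the computed perceptual distances, all texture images in the database are ranked in ascending order of distance. The Top-$K$ textures with the smallest perceptual distances are selected as the retrieval result set:

$$R_K = \{t_{(1)}, t_{(2)}, \ldots, t_{(K)}\}$$

where $t_{(r)}$ denotes the texture ranked at the $r$-th position according to ascending perceptual distance.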
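The ranking step, together with the selection of a group-specific feature library, can be sketched as follows. This is an illustrative sketch rather than the authors' implementation: the function names, the group keys, the texture identifiers, and the three-attribute vectors are all hypothetical, and breaking distance ties by texture identifier is an assumption made here for determinism.

```python
import math


def perceptual_distance(query, features):
    """Euclidean distance between two equal-length perceptual vectors."""
    if len(query) != len(features):
        raise ValueError("query and feature vector must have the same length")
    return math.sqrt(sum((q - f) ** 2 for q, f in zip(query, features)))


def retrieve_top_k(query, library, k):
    """Return up to k (texture_id, distance) pairs, closest first.

    library maps a texture id to its normalized perceptual vector.
    Equal distances are ordered by texture id (an assumption of this sketch).
    """
    scored = sorted(
        (perceptual_distance(query, vec), tex_id) for tex_id, vec in library.items()
    )
    return [(tex_id, dist) for dist, tex_id in scored[:k]]


# Hypothetical libraries, one per observer group, with 3 attributes for brevity.
libraries = {
    "art_major": {
        "tex_a": [0.0, 0.7, 0.2],
        "tex_b": [0.6, 0.1, 0.9],
        "tex_c": [1.0, 1.0, 0.5],
        "tex_d": [0.1, 0.8, 0.3],
    },
    "non_art_major": {
        "tex_a": [0.1, 0.6, 0.2],
        "tex_b": [0.5, 0.2, 0.8],
        "tex_c": [0.9, 1.0, 0.6],
        "tex_d": [0.3, 0.9, 0.1],
    },
}

# A normalized query: low on attribute 1, high on attribute 2, low on attribute 3.
query = [0.0, 0.8, 0.2]
top_art = retrieve_top_k(query, libraries["art_major"], k=2)
top_non_art = retrieve_top_k(query, libraries["non_art_major"], k=2)
```

Selecting the library is a dictionary lookup, which matches the offline, non-learning sense of "user-adaptive" used in this work; the same query can return different distances, and potentially different rankings, for the two groups.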

To quantitatively evaluate the perceptual consistency between the retrieval results and the user-specified perceptual description, the Perception-Aligned Precision at $K$ (PAP@$K$) metric is employed. PAP@$K$ is defined as

$$\mathrm{PAP@}K = \frac{1}{K} \sum_{r=1}^{K} \delta_r$$

where $\delta_r = 1$ if the $r$-th retrieved texture is judged to satisfy the perceptual requirements of the query by multiple human observers following the same perceptual attribute definitions and rating criteria as those used in the psychophysical experiments, and $\delta_r = 0$ otherwise. The evaluation focuses on whether retrieved textures fall within the perceptually acceptable range defined by the query attributes, rather than requiring strict numerical matching of attribute values.
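As a worked illustration of the metric, the sketch below computes the fraction of the first K retrieved textures that were judged acceptable. The text does not state how the judgments of multiple observers are combined into a single 0/1 decision, so the strict majority vote used here is purely an assumption, as are the function names and the toy votes.

```python
def majority_vote(votes):
    """Combine binary observer votes into one 0/1 judgment.

    Assumption of this sketch: a texture counts as acceptable only when
    strictly more than half of the observers accept it.
    """
    return 1 if 2 * sum(votes) > len(votes) else 0


def pap_at_k(judgments, k):
    """Fraction of the first k ranked results judged perceptually acceptable."""
    if k <= 0 or len(judgments) < k:
        raise ValueError("k must be positive and no larger than the result list")
    return sum(judgments[:k]) / k


# Hypothetical votes from three observers for five ranked results.
votes_per_result = [
    [1, 1, 0],
    [1, 1, 1],
    [0, 0, 1],
    [1, 0, 1],
    [1, 1, 1],
]
judgments = [majority_vote(v) for v in votes_per_result]
pap5 = pap_at_k(judgments, k=5)
```

With these toy votes, four of the five retrieved textures pass the majority rule, so the value is 4/5.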

From a computational perspective, the proposed retrieval framework is lightweight and scalable. Perceptual feature construction is performed offline, and each texture image is represented by a low-dimensional perceptual vector (12 dimensions in this study). During retrieval, similarity computation involves simple Euclidean distance calculation, resulting in a time complexity linear to the database size. This design enables efficient retrieval even for large-scale texture databases and is suitable for interactive retrieval scenarios.

2.5. Baseline Methods and Implementation Details

To provide a comprehensive comparison with conventional image-driven texture retrieval methods, several representative handcrafted and deep learning–based feature representations were implemented as baselines under a unified experimental setting.

For handcrafted feature baselines, texture images were represented using Gray-Level Co-occurrence Matrix (GLCM), Local Binary Pattern (LBP), and Histogram of Oriented Gradients (HOG) descriptors. For all handcrafted features, feature extraction followed standard implementations with default parameter settings, and retrieval was performed by computing Euclidean distances between feature vectors.
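To make the handcrafted baselines more concrete, the sketch below computes a basic 3×3 LBP code histogram in plain Python. The exact LBP variant and parameters used for the baseline are not specified beyond "standard implementations with default parameter settings", so this basic 8-neighbor version, the function name, and the toy images are assumptions for illustration only.

```python
def lbp_histogram(image):
    """Normalized 256-bin histogram of basic 3x3 LBP codes.

    image is a 2D list of gray levels. Each interior pixel is compared with
    its 8 neighbors; a neighbor that is >= the center sets one bit of the code.
    Border pixels are skipped because they lack a full neighborhood.
    """
    # Neighbor offsets in clockwise order starting at the top-left pixel.
    offsets = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]
    rows, cols = len(image), len(image[0])
    hist = [0] * 256
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            center = image[r][c]
            code = 0
            for bit, (dr, dc) in enumerate(offsets):
                if image[r + dr][c + dc] >= center:
                    code |= 1 << bit
            hist[code] += 1
    total = sum(hist)
    if total == 0:
        raise ValueError("image must be at least 3x3 pixels")
    return [h / total for h in hist]


# Toy images (hypothetical): a flat patch and a single bright center pixel.
flat = [[5] * 4 for _ in range(4)]
spot = [[0, 0, 0], [0, 9, 0], [0, 0, 0]]
hist_flat = lbp_histogram(flat)
hist_spot = lbp_histogram(spot)
```

Two such histograms can then be compared with the Euclidean distance, as described for the baselines; note that the resulting 256-dimensional vector describes local intensity patterns rather than any named perceptual attribute.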

For deep learning baselines, VGG16 and ResNet50 networks pre-trained on ImageNet were adopted and used as fixed feature extractors without fine-tuning. Specifically, for ResNet50, features were extracted from the Global Average Pooling layer, resulting in 2048-dimensional feature vectors. For VGG16, features were extracted from the first fully connected layer (FC1), producing 4096-dimensional representations.

Perceptual similarity for the deep learning baselines was computed using Euclidean distance in the corresponding high-dimensional feature spaces. No dimensionality reduction or task-specific feature adaptation was applied, in order to reflect the standard usage of deep features in conventional content-based image retrieval methods. It should be noted that the objective of this study is not to pursue state-of-the-art image retrieval performance through increasingly complex feature representations, but to investigate the effectiveness of an explicit, perception-aligned retrieval formulation. Therefore, representative handcrafted descriptors and widely adopted deep feature extractors (VGG16 and ResNet50) are selected as baselines to reflect conventional image-driven retrieval paradigms. More recent architectures typically require large-scale training data, task-specific adaptation, or high-dimensional embeddings, which are not directly comparable to the proposed low-dimensional perceptual feature space and reference-free query setting. Accordingly, such methods are beyond the scope of the current study and are left for future exploration.

4. Discussion

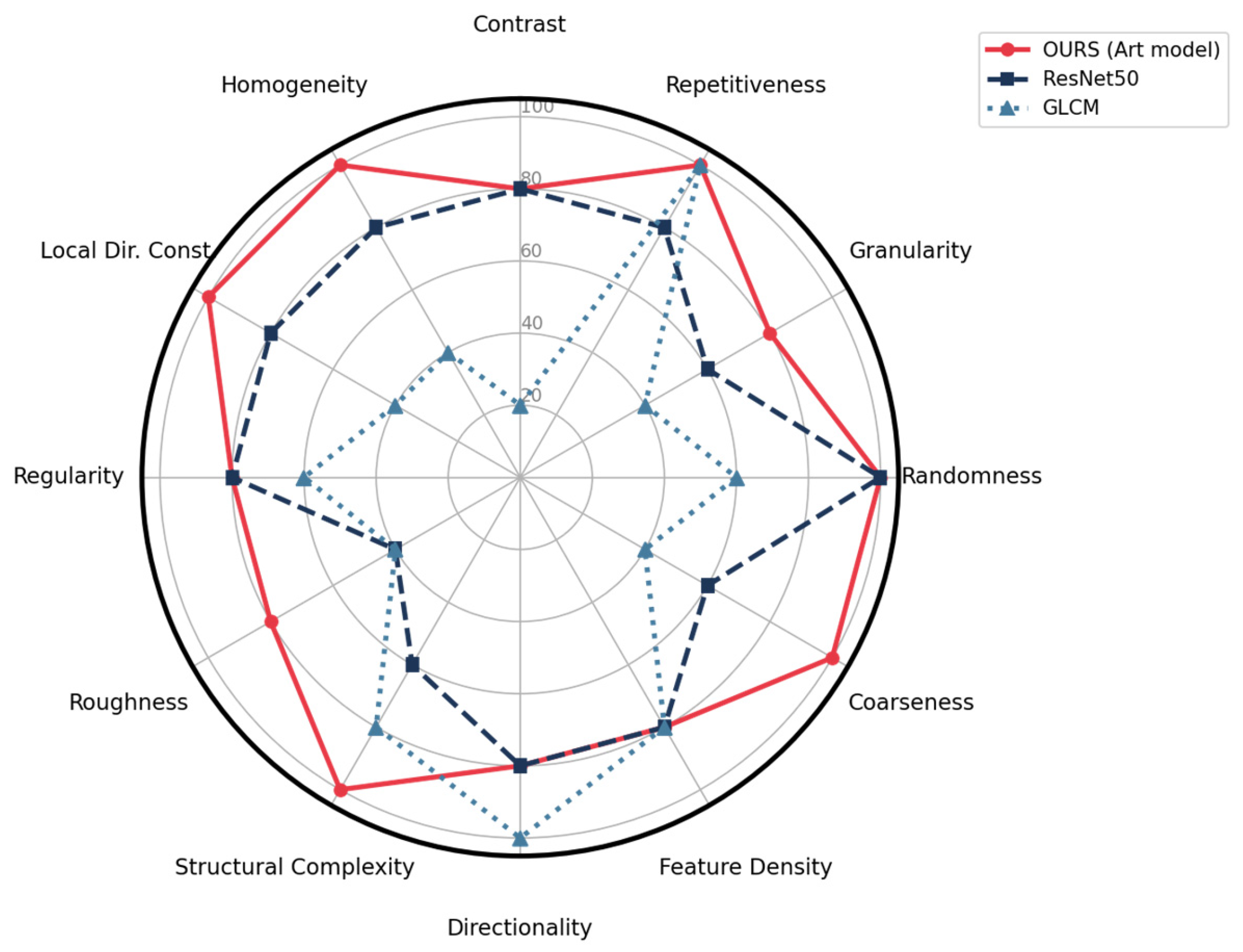

Table 3 summarizes the PAP@5 performance of different texture representation methods across individual perceptual attributes, providing a detailed quantitative basis for analyzing attribute-level retrieval behavior.

Overall, the results reveal clear and systematic differences in how various feature representations respond to distinct perceptual dimensions, highlighting both the strengths and inherent limitations of image-driven features when confronted with explicit perceptual attributes.

To facilitate a holistic interpretation of these attribute-wise results, Figure 9 presents a radar-chart-based visualization that summarizes perceptual behavior across representative methods.

As illustrated in Figure 9, conventional handcrafted and deep feature representations exhibit uneven performance across perceptual attributes, with notable fluctuations depending on the attribute type. In contrast, the proposed perceptual feature representations maintain relatively balanced performance across most perceptual dimensions. These results suggest that explicitly modeling perceptual attributes contributes to more consistent attribute-level retrieval behavior, particularly in scenarios where perceptual consistency is more critical than category discrimination.

Beyond method-level differences, perceptual behavior may also vary across user groups with different perceptual backgrounds. To further examine the influence of perceptual sensitivity, Figure 10 provides a focused comparison between the art-major and non-art-major perceptual feature libraries. Overall, the two perceptual feature libraries exhibit highly consistent patterns across most perceptual attributes, suggesting the existence of a shared perceptual baseline in texture perception. This consistency implies that the constructed perceptual feature space captures dominant perceptual tendencies that remain robust across user backgrounds.

At the same time, modest yet systematic differences can be observed for certain perceptual attributes, reflecting variations in perceptual sensitivity associated with user background. These differences do not lead to drastic changes in retrieval behavior but instead manifest as subtle shifts in attribute-level performance. Importantly, such variations remain bounded and do not undermine the overall stability of the proposed retrieval framework.

From an attribute-level perspective, the results further indicate that perceptual attributes differ in their inherent difficulty for perception-driven texture retrieval. Attributes associated with global and visually salient texture properties, such as repetitiveness, randomness, and regularity, consistently achieve high PAP@5 values across perceptual feature libraries. These attributes correspond to prominent global patterns that can be more reliably perceived by observers.

Attributes describing structural organization and spatial arrangement, including structural complexity, coarseness, and feature density, also demonstrate relatively stable retrieval behavior. As shown in Table 3, retrieval accuracy under these attributes remains competitive across different methods, while the proposed perceptual representations preserve balanced performance. This suggests that explicit perceptual modeling is effective in capturing mid-level texture characteristics that are not fully determined by local pixel statistics.

In contrast, perceptual attributes related to finer local variations, such as granularity and roughness, exhibit greater variability across feature representation methods. While the proposed perceptual feature libraries maintain relatively stable accuracy under these attributes, traditional handcrafted features show more pronounced fluctuations. This observation highlights the inherent difficulty of representing subtle local texture cues using purely image-driven descriptors and underscores the advantage of incorporating subjective perceptual evaluations into texture representation.

Taken together, the results demonstrate that the proposed perception-aligned retrieval framework achieves balanced performance across diverse perceptual attributes while accommodating user-group-specific perceptual tendencies. By jointly modeling attribute-level behavior and user-group effects within a unified perceptual feature space, the framework avoids over-specialization and preserves system simplicity. This design is particularly suitable for reference-free texture retrieval scenarios, where user intent is expressed through subjective perceptual descriptions and perceptual background may influence interpretation.

From the perspective of controlled perceptual evaluation, the observed retrieval behavior is grounded in perceptual feature representations derived from aggregated subjective ratings. Standardized experimental conditions, predefined perceptual attributes, and consistent rating scales contribute to the stability of the constructed perceptual feature space. By aggregating perceptual ratings across multiple observers, individual variability is reduced while dominant perceptual tendencies are retained. The consistency observed across perceptual attributes and user groups therefore reflects both the effectiveness of the perception-aligned retrieval formulation and the reliability of the underlying psychophysical data. Within the scope of the current experimental setting, these results support the feasibility and practical effectiveness of using human-rated perceptual attributes as explicit feature dimensions for perception-driven texture retrieval.
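The aggregation step described above can be illustrated with a minimal sketch. It assumes that ratings are stored as an observers × textures × attributes array and that aggregation is a simple arithmetic mean over observers; the function name `build_feature_library`, the boolean group mask, and the 1-to-7 toy rating scale are hypothetical choices made for illustration and are not taken from the experimental protocol.

```python
import numpy as np


def build_feature_library(ratings, observer_mask=None):
    """Average ratings over observers (assumed aggregation rule).

    ratings: array of shape (n_observers, n_textures, n_attributes).
    observer_mask: optional boolean array selecting a user group.
    Returns an (n_textures, n_attributes) perceptual feature library.
    """
    ratings = np.asarray(ratings, dtype=float)
    if observer_mask is not None:
        ratings = ratings[np.asarray(observer_mask, dtype=bool)]
    # Averaging damps individual variability and keeps the dominant tendency.
    return ratings.mean(axis=0)


# Toy data (hypothetical): 4 observers, 3 textures, 2 attributes, scale 1..7.
rng = np.random.default_rng(0)
toy_ratings = rng.integers(1, 8, size=(4, 3, 2))
is_art_major = np.array([True, True, False, False])

art_library = build_feature_library(toy_ratings, is_art_major)
non_art_library = build_feature_library(toy_ratings, ~is_art_major)
shared_library = build_feature_library(toy_ratings)
```

Under this assumption, the group-specific libraries and the shared library differ only in which observers enter the mean, so both groups can be served by the same downstream retrieval step.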

Despite the promising results, this study has certain limitations that should be addressed in future work. First, the perceptual feature libraries were constructed based on data from 62 participants. While this controlled participant group enabled stable perceptual evaluation under standardized conditions, a larger and more culturally diverse participant pool could improve the generality of the perceptual baseline. In addition, formal statistical significance testing was not the primary focus of this study, as the objective was to construct a stable perceptual feature space for retrieval rather than to compare competing hypotheses. The observed consistency across perceptual attributes and user groups should therefore be read as practical evidence of perceptual alignment under the proposed framework, not as a statistically tested result. Second, the current framework relies on 12 predefined attributes. Although these cover major perceptual dimensions, they may not capture all subtle semantic nuances of complex artistic textures. Future research could explore expanding the attribute set or incorporating open-vocabulary descriptions to cover a broader range of visual semantics. Finally, the experiments were conducted on grayscale textures to control for color interference; extending the framework to account for interactions between color and texture perception remains an important direction for practical applications.

5. Conclusions

This paper presents a perception-aligned texture image retrieval framework that operates directly in a human-centered perceptual feature space constructed from psychophysical experiments. By explicitly modeling texture appearance using subjective visual attributes, the proposed approach enables reference-free texture retrieval driven by perceptual descriptions rather than example images, addressing a key limitation of conventional image-driven retrieval methods.

Through controlled psychophysical experiments, perceptual ratings were collected under standardized conditions and aggregated to construct stable and interpretable perceptual feature representations. Based on this perceptual feature space, a unified retrieval formulation was developed, in which similarity is measured directly in the perceptual domain and retrieval results are obtained through distance-based ranking. Quantitative and qualitative evaluations demonstrate that the proposed method achieves consistent perception-aligned retrieval performance across different perceptual attributes and user groups.
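As an illustration of distance-based ranking in the perceptual domain, the following minimal sketch ranks textures by their distance to a query expressed as a vector of target attribute ratings. The Euclidean metric, the function name `retrieve`, and the three-attribute toy library are assumptions made for this example; the metric used in the reported experiments may differ.

```python
import numpy as np


def retrieve(library, query, k=5):
    """Return indices and distances of the k textures closest to the query.

    library: (n_textures, n_attributes) perceptual feature library.
    query: (n_attributes,) target ratings describing the desired texture.
    """
    library = np.asarray(library, dtype=float)
    query = np.asarray(query, dtype=float)
    # Euclidean distance in the perceptual feature space (assumed metric).
    distances = np.linalg.norm(library - query, axis=1)
    order = np.argsort(distances, kind="stable")[:k]
    return order, distances[order]


# Toy library (hypothetical): 4 textures described by 3 attribute ratings.
library = np.array([
    [1.0, 6.0, 3.0],
    [5.0, 2.0, 4.0],
    [1.5, 5.5, 3.0],
    [6.0, 1.0, 5.0],
])
query = np.array([1.0, 6.0, 3.0])

top_idx, top_dist = retrieve(library, query, k=2)
```

Because the query is a rating vector and not an image, the same function serves the reference-free setting: a user states the desired attribute levels and no example texture is required.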

In addition, the introduction of group-specific perceptual feature libraries for art-major and non-art-major participants provides a practical mechanism for accommodating perceptual sensitivity differences while preserving a unified retrieval pipeline. The discussion of attribute-level and user-group effects further illustrates the robustness and interpretability of the proposed perception-driven retrieval framework.

Overall, this work highlights the feasibility of integrating psychophysical perceptual data into texture retrieval system design. By bridging human subjective perception and computational retrieval models, the proposed framework offers an interpretable and flexible solution for perception-driven texture retrieval scenarios. From a system design perspective, such a perception-aligned retrieval framework could be integrated into interactive material search systems and human–computer interfaces, where users specify retrieval intent through perceptual descriptors rather than example images. It thereby provides a practical foundation for future research on perceptual modeling and user-adaptive visual retrieval.