Quality Assessment of Artificial Intelligence Systems: A Metric-Based Approach

Abstract

1. Introduction

1.1. Motivation

1.2. Background

- assessment of data quality;

- assessment of the quality of algorithms and models;

- assessment of the quality of AI-based systems as a whole.

- quality models for AI systems;

- quality models for AI systems for specific application domains;

- studies devoted to particular (sub)characteristics of AI system quality.

1.3. Aim, Objectives, and Structure

- To analyse existing international standards regulating quality models and procedures for measuring quality (sub)characteristics of AI systems, with a focus on identifying inconsistencies between the new and previous versions of the ISO/IEC 25000 series standards.

- To develop an updated quality model for AI systems from two perspectives, product quality and quality in use, aligned with the updated versions of the relevant international standards.

- To develop a justified set of metrics for the evaluation of quality characteristics and subcharacteristics of AI systems in accordance with the current versions of international standards in the field of AI systems and existing best practices in AI system quality assessment.

2. Development of a Standardised Technology-Oriented Quality Model for Artificial Intelligence Systems

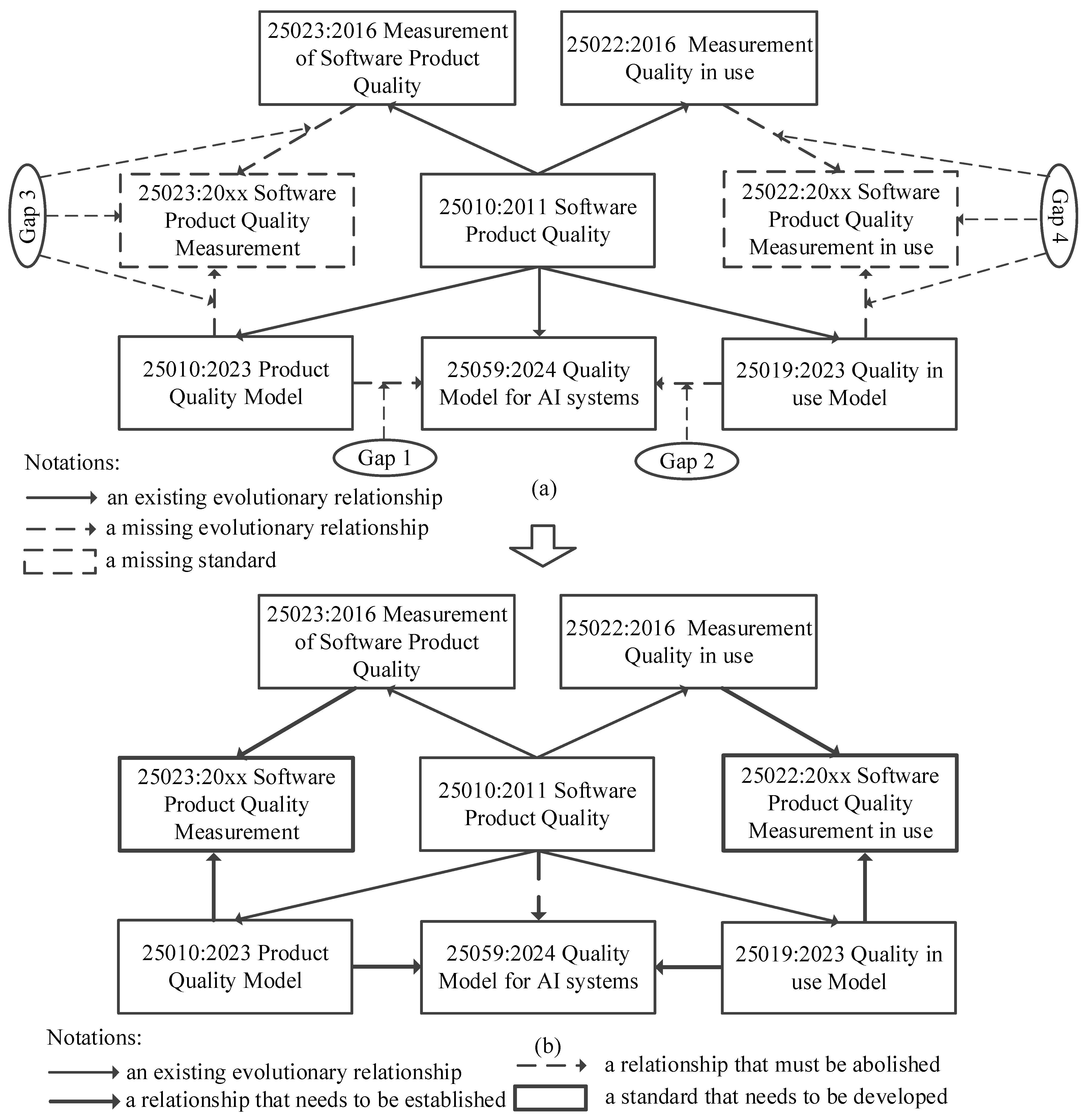

2.1. ISO/IEC Standards Overview

- All characteristics and subcharacteristics that were modified in ISO/IEC 25010:2023 and are not included in that form in ISO/IEC 25059:2023 must be included in the updated AI system quality model;

- All new characteristics and subcharacteristics of the ISO/IEC 25059:2023 quality model that reflect the specific features of AI systems must be included in the updated AI system quality model.
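The two inclusion rules can be read as a set operation over the (sub)characteristics of the two standards. The Python sketch below illustrates that reading under stated assumptions: each model is represented as a dictionary mapping a subcharacteristic to its parent characteristic, "included in that form" is interpreted as "same name under the same parent", and on a name clash the ISO/IEC 25059 placement is kept. The function name `build_updated_model` is hypothetical, and the example entries are a small illustrative subset, not the complete models defined in the standards.

```python
from typing import Dict, Set

# Maps a (sub)characteristic name to its parent characteristic.
Model = Dict[str, str]


def build_updated_model(modified_25010: Model, model_25059: Model,
                        ai_specific_25059: Set[str]) -> Model:
    """Return the (sub)characteristics that the two inclusion rules require."""
    updated: Model = {}
    # Rule 1: items modified in ISO/IEC 25010:2023 that do not appear in the
    # same form (same name under the same parent) in ISO/IEC 25059:2023.
    for name, parent in modified_25010.items():
        if model_25059.get(name) != parent:
            updated[name] = parent
    # Rule 2: all AI-specific items introduced in ISO/IEC 25059:2023.
    # Assumption: on a name clash with rule 1, the 25059 placement wins.
    for name in ai_specific_25059:
        updated[name] = model_25059[name]
    return updated


# Illustrative subset only; the standards define the complete models.
MODIFIED_25010_2023: Model = {
    "scalability": "flexibility",
    "resistance": "security",
    "operational constraint": "safety",
    "inclusivity": "interaction capability",
}
MODEL_25059_2023: Model = {
    "functional adaptability": "functional suitability",
    "robustness": "reliability",
    "intervenability": "security",
    "user controllability": "usability",
    "transparency": "usability",
}
AI_SPECIFIC_25059: Set[str] = set(MODEL_25059_2023)

if __name__ == "__main__":
    merged = build_updated_model(MODIFIED_25010_2023, MODEL_25059_2023, AI_SPECIFIC_25059)
    for name, parent in sorted(merged.items()):
        print(f"{parent}: {name}")
```

Other interpretations of "in that form" (for example, also comparing definitions rather than only names and parents) would change only the comparison inside rule 1.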

2.2. Updated Product Quality Model Development

2.3. Updated Quality in Use Model Development

3. AI System Product Quality Model Measures

- For quality (sub)characteristics carried over unchanged from the ISO/IEC 25010 software product quality model into the ISO/IEC 25059 AI system quality model, the metrics defined in ISO/IEC 25023 may be used, as mentioned in the guidelines for the evaluation of AI systems [50]. The ISO/IEC 25023 standard is currently under revision and contains a set of quality metrics for each (sub)characteristic, as well as recommendations for their application to software products and systems. The applicability of these metrics to AI systems is not discussed in this paper.

- For the new AI-specific characteristics and subcharacteristics introduced for the first time in the ISO/IEC 25059 product quality model, the guidance provided in ISO/IEC TS 25058 [50] may be used. This document contains recommendations for evaluating AI system quality but does not define any metrics; the authors therefore propose their own metrics, developed in accordance with its recommendations.

- For characteristics and subcharacteristics that are transferred into the AI model from the updated ISO/IEC 25010 but are not covered by current standards, the authors propose metrics based on an analysis of existing studies, current practice, and other ISO/IEC standards.
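The three rules for choosing a metric source amount to a decision on the origin of each (sub)characteristic. The Python sketch below encodes that decision; the `Origin` enumeration, the `metric_source` function and the assignments in `EXAMPLES` are hypothetical illustrations and are not part of the cited standards.

```python
from enum import Enum
from typing import Dict


class Origin(Enum):
    """Origin of a (sub)characteristic in the updated AI system quality model."""
    UNCHANGED_FROM_25010 = "carried over unchanged from ISO/IEC 25010 into ISO/IEC 25059"
    NEW_IN_25059 = "AI-specific, introduced in ISO/IEC 25059"
    FROM_UPDATED_25010 = "taken from the updated ISO/IEC 25010, not covered by current standards"


def metric_source(origin: Origin) -> str:
    """Return the metric source that the three rules assign to a given origin."""
    if origin is Origin.UNCHANGED_FROM_25010:
        return "ISO/IEC 25023 metrics"
    if origin is Origin.NEW_IN_25059:
        return "author-proposed metrics following ISO/IEC TS 25058 guidance"
    if origin is Origin.FROM_UPDATED_25010:
        return "author-proposed metrics based on existing studies, practice and other ISO/IEC standards"
    raise ValueError(f"unsupported origin: {origin!r}")


# Illustrative assignments only; the full classification is part of the quality model.
EXAMPLES: Dict[str, Origin] = {
    "fault tolerance": Origin.UNCHANGED_FROM_25010,
    "intervenability": Origin.NEW_IN_25059,
    "scalability": Origin.FROM_UPDATED_25010,
}

if __name__ == "__main__":
    for name, origin in EXAMPLES.items():
        print(f"{name}: {metric_source(origin)}")
```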

- Response time for intervention: the delay between the system's receipt of a user intervention instruction and its subsequent action.

- Intervention success rate: the percentage of user-issued intervention instructions that the AI system correctly identifies and executes, thereby achieving the desired outcomes in specific tests or usage scenarios.

- User intervention survey: the level of user satisfaction when intervening in the operation of AI systems or software.
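The three intervention metrics can be computed from a log of intervention attempts and a set of survey responses. The Python sketch below is a minimal illustration under several assumptions that do not come from the text: a hypothetical log record (`InterventionRecord`), response times averaged over all instructions the system acted upon, and survey ratings collected on a 1 to 5 Likert scale and normalised to [0, 1].

```python
from dataclasses import dataclass
from statistics import mean
from typing import List, Optional


@dataclass
class InterventionRecord:
    """One logged user intervention (hypothetical log schema)."""
    received_at: float          # time the system received the instruction, in seconds
    acted_at: Optional[float]   # time the system acted on it, or None if it never acted
    correctly_executed: bool    # instruction identified and executed with the desired outcome


def mean_response_time(records: List[InterventionRecord]) -> float:
    """Mean delay between receipt of an intervention instruction and the system's action."""
    delays = [r.acted_at - r.received_at for r in records if r.acted_at is not None]
    if not delays:
        raise ValueError("no intervention instruction was acted upon")
    return mean(delays)


def intervention_success_rate(records: List[InterventionRecord]) -> float:
    """Percentage of issued intervention instructions that were correctly identified and executed."""
    if not records:
        raise ValueError("no intervention instructions were issued")
    return 100.0 * sum(r.correctly_executed for r in records) / len(records)


def survey_score(ratings: List[int], scale_max: int = 5) -> float:
    """Mean satisfaction rating normalised to [0, 1] (assumes a 1..scale_max Likert scale)."""
    if not ratings:
        raise ValueError("no survey responses")
    if any(not 1 <= x <= scale_max for x in ratings):
        raise ValueError("rating outside the scale")
    return (mean(ratings) - 1) / (scale_max - 1)


if __name__ == "__main__":
    log = [
        InterventionRecord(0.0, 0.25, True),
        InterventionRecord(10.0, 10.75, True),
        InterventionRecord(20.0, 20.5, True),
        InterventionRecord(30.0, None, False),
    ]
    print(mean_response_time(log))         # 0.5
    print(intervention_success_rate(log))  # 75.0
    print(survey_score([4, 5, 3]))         # 0.75
```

Reporting a mean response time is only one option; a percentile (for example, the 95th) may be more informative when occasional slow responses matter.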

- AI Safety focuses on preventing internal risks associated with AI behaviour and decision-making, in order to reduce the likelihood of adverse impacts on business or society.

- AI Security, in contrast, addresses protection against external threats and malicious interference.

- Malicious use (e.g., the spread of fake content, manipulation of public opinion, cybercrime, biological or chemical threats).

- Risks from malfunctions (e.g., reliability issues, algorithmic bias, loss of control).

- Systemic risks (e.g., labour market disruption, global inequalities in AI R&D, market concentration and single points of failure, threats to the environment, privacy, and intellectual property).

- Additionally, the use of open-weight general-purpose AI models may exacerbate many of these risks.

4. AI System Quality in Use Model Measures

5. Discussion

- An updated technology-oriented quality model for AI systems is proposed that takes into account the specific features of AI systems (ISO/IEC 25059:2023) and the latest updates to the traditional quality models (ISO/IEC 25010:2023 and ISO/IEC 25019:2023). The quality model is considered from two perspectives: product quality and quality in use.

- A metric-based approach to the assessment of AI system quality is proposed on the basis of the quality model. This approach envisages the use of standardised metrics for (sub)characteristics of AI systems transferred from the traditional product quality and quality in use models, and also proposes metrics for new (sub)characteristics included in the updated technology-oriented quality model for AI systems.
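The text does not specify whether or how individual metric values are combined into scores for (sub)characteristics. If an aggregated view is wanted, one common option, assumed here and not taken from the paper, is a weighted mean of metric values normalised to [0, 1]. The `aggregate` function, the weights and the example values below are hypothetical.

```python
from typing import Dict, List, Tuple

# (subcharacteristic, metric value normalised to [0, 1], positive weight)
MetricResult = Tuple[str, float, float]


def aggregate(results: List[MetricResult]) -> Dict[str, float]:
    """Weighted mean of normalised metric values for each (sub)characteristic."""
    totals: Dict[str, List[float]] = {}
    for name, value, weight in results:
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name}: value {value} is not normalised to [0, 1]")
        if weight <= 0:
            raise ValueError(f"{name}: weight must be positive")
        acc = totals.setdefault(name, [0.0, 0.0])  # [weighted sum, sum of weights]
        acc[0] += weight * value
        acc[1] += weight
    return {name: s / w for name, (s, w) in totals.items()}


if __name__ == "__main__":
    print(aggregate([
        ("intervenability", 0.75, 1.0),
        ("intervenability", 0.5, 1.0),
        ("transparency", 0.8, 2.0),
    ]))  # {'intervenability': 0.625, 'transparency': 0.8}
```

A weighted mean keeps the result on the same [0, 1] scale as its inputs; a minimum-based rule may be preferable where a single poor metric, for example a safety-related one, should dominate the score.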

5.1. Harmonisation and the Timely Updating and Development of International Standards in the Field of AI

5.2. Limitations and Challenges in Applying Standardised Metrics to AI Systems Quality Assessment

5.3. Quality Assessment of AI Systems vs. AI Models

5.4. Context of the AI System

5.5. Development of Assessment Tools and Testing Methods for AI Systems

6. Conclusions and Future Work

Supplementary Materials

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Turing, A. On Computable Numbers; Internet Archive: San Francisco, CA, USA, 1936; Available online: http://archive.org/details/Turing1936OnCumputableNumbers (accessed on 1 September 2025).

- Turing, A.M. Computing Machinery and Intelligence. Mind 1950, 59, 433–460. [Google Scholar] [CrossRef]

- McCarthy, J.; Minsky, M.L.; Rochester, N.; Shannon, C.E. A Proposal for the Dartmouth Summer Research Project on Artificial Intelligence: August 31, 1955. AI Mag. 2006, 27, 12–14. [Google Scholar] [CrossRef]

- Wiener, N. Cybernetics or Control and Communication in the Animal and the Machine, 2nd ed.; MIT Press: Cambridge, MA, USA, 1961. [Google Scholar]

- Tu, X.; He, Z.; Huang, Y.; Zhang, Z.-H.; Yang, M.; Zhao, J. An overview of large AI models and their applications. Vis. Intell. 2024, 2, 34. [Google Scholar] [CrossRef]

- Musa, J.D. Software Reliability Engineering: More Reliable Software Faster and Cheaper, 2nd ed.; AuthorHouse: Bloomington, IN, USA, 2004. [Google Scholar]

- Pressman, R.S.; Maxim, B.R. Software Engineering: A Practitioner’s Approach; McGraw Hill: New York, NY, USA, 2015. [Google Scholar]

- Sommerville, I. Software Engineering; Pearson: Boston, MA, USA; Munich, Germany, 2016. [Google Scholar]

- Smith, H. Algorithmic bias: Should students pay the price? AI Soc. 2020, 35, 1077–1078. [Google Scholar] [CrossRef]

- Heaton, D.; Nichele, E.; Clos, J.; Fischer, J.E. “The algorithm will screw you”: Blame, social actors and the 2020 A Level results algorithm on Twitter. PLoS ONE 2023, 18, e0288662. [Google Scholar] [CrossRef]

- Engel, C.; Linhardt, L.; Schubert, M. Code is law: How COMPAS affects the way the judiciary handles the risk of recidivism. Artif. Intell. Law 2025, 33, 383–404. [Google Scholar] [CrossRef]

- Strickland, E. How IBM Watson Overpromised and Underdelivered on AI Health Care—IEEE Spectrum; Spectrum IEEE: New York, NY, USA, 2019; Available online: https://spectrum.ieee.org/how-ibm-watson-overpromised-and-underdelivered-on-ai-health-care (accessed on 1 September 2025).

- Troncoso, I.; Fu, R.; Malik, N.; Proserpio, D. Algorithm Failures and Consumers’ Response: Evidence from Zillow; Working Paper; Harvard Business School: Boston, MA, USA, 2023; Available online: https://www.hbs.edu/faculty/Pages/item.aspx?num=64507 (accessed on 1 September 2025).

- Alvi, M.; Zisserman, A.; Nellaker, C. Turning a Blind Eye: Explicit Removal of Biases and Variation from Deep Neural Network Embeddings. arXiv 2018, arXiv:1809.02169. [Google Scholar] [CrossRef]

- Mills, K.G. Gender Bias Complaints Against Apple Card Signal a Dark Side to Fintech. Working Knowledge; Harvard Business School: Boston, MA, USA, 2019; Available online: https://www.library.hbs.edu/working-knowledge/gender-bias-complaints-against-apple-card-signal-a-dark-side-to-fintech (accessed on 1 September 2025).

- Brand, J.L.M. Air Canada’s chatbot illustrates persistent agency and responsibility gap problems for AI. AI Soc. 2025, 40, 3361–3363. [Google Scholar] [CrossRef]

- Ryan, W.A.; Garrett, A.; Sears, B. Practical Lessons from the Attorney AI Missteps in Mata v. Avianca. Available online: https://www.acc.com/resource-library/practical-lessons-attorney-ai-missteps-mata-v-avianca (accessed on 22 September 2025).

- Harris, M. NTSB Investigation into Deadly Uber Self-Driving Car Crash Reveals Lax Attitude Toward Safety; IEEE Spectrum: New York, NY, USA, 2019; Available online: https://spectrum.ieee.org/ntsb-investigation-into-deadly-uber-selfdriving-car-crash-reveals-lax-attitude-toward-safety (accessed on 1 September 2025).

- Buolamwini, J.; Gebru, T. Gender Shades: Intersectional Accuracy Disparities in Commercial Gender Classification. In Proceedings of the 1st Conference on Fairness, Accountability and Transparency, PMLR, New York, NY, USA, 23–24 February 2018; pp. 77–91. Available online: https://proceedings.mlr.press/v81/buolamwini18a.html (accessed on 2 September 2025).

- AI Incident Database. Available online: https://incidentdatabase.ai/ (accessed on 1 September 2025).

- ISO/IEC 25059:2023; Software Engineering—Systems and Software Quality Requirements and Evaluation (SQuaRE)—Quality model for AI Systems. International Organization for Standardization: Geneva, Switzerland, 2023. Available online: https://www.iso.org/standard/80655.html (accessed on 2 September 2025).

- Ali, M.A.; Yap, N.K.; Ghani, A.A.A.; Zulzalil, H.; Admodisastro, N.I.; Najafabadi, A.A. A Systematic Mapping of Quality Models for AI Systems, Software and Components. Appl. Sci. 2022, 12, 8700. [Google Scholar] [CrossRef]

- Gezici, B.; Tarhan, A.K. Systematic literature review on software quality for AI-based software. Empir. Softw. Eng. 2022, 27, 66. [Google Scholar] [CrossRef]

- ISO/IEC 25010:2023; Systems and Software Engineering—Systems and Software Quality Requirements and Evaluation (SQuaRE)—Product Quality Model. International Organization for Standardization: Geneva, Switzerland, 2023. Available online: https://www.iso.org/standard/78176.html (accessed on 2 September 2025).

- ISO/IEC 25010:2011; Systems and Software Engineering—Systems and Software Quality Requirements and Evaluation (SQuaRE)—System and Software Quality Models. International Organization for Standardization: Geneva, Switzerland, 2011.

- Heck, P. A Quality Model for Trustworthy AI Systems; Fontys: Eindhoven, The Netherlands, 2022; Available online: https://fontysblogt.nl/a-quality-model-for-trustworthy-ai-systems/ (accessed on 7 September 2025).

- Kuwajima, H.; Ishikawa, F. Adapting SQuaRE for Quality Assessment of Artificial Intelligence Systems. In Proceedings of the 2019 IEEE International Symposium on Software Reliability Engineering Workshops (ISSREW), Berlin, Germany, 27–30 October 2019. [Google Scholar] [CrossRef]

- Ramos, T.; Dean, A.; McGregor, D. AI-Augmented Software Engineering: Revolutionizing or Challenging Software Quality and Testing? J. Softw. Evol. Process 2025, 37, e2741. [Google Scholar] [CrossRef]

- Smith, A.L.; Clifford, R. Quality Characteristics of Artificially Intelligent Systems. In Proceedings of the IWESQ APSEC, Singapore, 1–4 December 2020; pp. 1–6. [Google Scholar]

- Indykov, V.; Strüber, D.; Wohlrab, R. Architectural tactics to achieve quality attributes of machine-learning-enabled systems: A systematic literature review. J. Syst. Softw. 2025, 223, 112373. [Google Scholar] [CrossRef]

- Siebert, J.; Joeckel, L.; Heidrich, J.; Trendowicz, A.; Nakamichi, K.; Ohashi, K.; Namba, I.; Yamamoto, R.; Aoyama, M. Construction of a quality model for machine learning systems. Softw. Qual. J. 2022, 30, 307–335. [Google Scholar] [CrossRef]

- Nakamichi, K.; Ohashi, K.; Namba, I.; Yamamoto, R.; Aoyama, M.; Joeckel, L.; Siebert, J.; Heidrich, J. Requirements-Driven Method to Determine Quality Characteristics and Measurements for Machine Learning Software and Its Evaluation. In Proceedings of the 2020 IEEE 28th International Requirements Engineering Conference (RE), Zurich, Switzerland, 31 August–4 September 2020; pp. 260–270. [Google Scholar] [CrossRef]

- Kelly, J.; Zafar, S.A.; Heidemann, L.; Zacchi, J.-V.; Espinoza, D.; Mata, N. Navigating the EU AI Act: A Methodological Approach to Compliance for Safety-critical Products. In Proceedings of the Conference on Artificial Intelligence 2024, Singapore, 25–27 June 2024. [Google Scholar] [CrossRef]

- ISO/PAS 8800:2024; Road Vehicles—Safety and Artificial Intelligence. International Organization for Standardization: Geneva, Switzerland, 2024. Available online: https://www.iso.org/standard/83303.html (accessed on 22 September 2025).

- Martínez-Fernández, S.; Bogner, J.; Franch, X.; Oriol, M.; Siebert, J.; Trendowicz, A.; Vollmer, A.M.; Wagner, S. Software Engineering for AI-Based Systems: A Survey. ACM Trans. Softw. Eng. Methodol. 2022, 31, 1–59. [Google Scholar] [CrossRef]

- Yu, L.; Alégroth, E.; Chatzipetrou, P.; Gorschek, T. Measuring the quality of generative AI systems: Mapping metrics to quality characteristics—Snowballing literature review. Inf. Softw. Technol. 2025, 186, 107802. [Google Scholar] [CrossRef]

- Habibullah, K.M.; Gay, G.; Horkoff, J. Non-functional requirements for machine learning: Understanding current use and challenges among practitioners. Requir. Eng. 2023, 28, 283–316. [Google Scholar] [CrossRef]

- Oviedo, J.; Rodriguez, M.; Trenta, A.; Cannas, D.; Natale, D.; Piattini, M. ISO/IEC quality standards for AI engineering. Comput. Sci. Rev. 2024, 54, 100681. [Google Scholar] [CrossRef]

- Kharchenko, V.; Fesenko, H.; Illiashenko, O. Quality Models for Artificial Intelligence Systems: Characteristic-Based Approach, Development and Application. Sensors 2022, 22, 4865. [Google Scholar] [CrossRef]

- Gu, K.; Liu, H.; Liu, Y.; Qiao, J.; Zhai, G.; Zhang, W. Perceptual Information Fidelity for Quality Estimation of Industrial Images. IEEE Trans. Circuits Syst. Video Technol. 2025, 35, 477–491. [Google Scholar] [CrossRef]

- Oviedo, J.; Rodríguez, M.; Piattini, M. An Environment for the Assessment of the Functional Suitability of AI Systems. In Quality of Information and Communications Technology; Bertolino, A., Pascoal Faria, J., Lago, P., Semini, L., Eds.; Springer Nature: Cham, Switzerland, 2024; pp. 21–34. [Google Scholar] [CrossRef]

- Zhang, H.; Xu, Y.; Luo, R.; Mao, Y. Fast GNSS acquisition algorithm based on SFFT with high noise immunity. China Commun. 2023, 20, 70–83. [Google Scholar] [CrossRef]

- Nastoska, A.; Jancheska, B.; Rizinski, M.; Trajanov, D. Evaluating Trustworthiness in AI: Risks, Metrics, and Applications Across Industries. Electronics 2025, 14, 2717. [Google Scholar] [CrossRef]

- Kemmerzell, N.; Schreiner, A.; Khalid, H.; Schalk, M.; Bordoli, L. Towards a Better Understanding of Evaluating Trustworthiness in AI Systems. ACM Comput. Surv. 2025, 57, 1–38. [Google Scholar] [CrossRef]

- Díaz-Rodríguez, N.; Del Ser, J.; Coeckelbergh, M.; López de Prado, M.; Herrera-Viedma, E.; Herrera, F. Connecting the dots in trustworthy Artificial Intelligence: From AI principles, ethics, and key requirements to responsible AI systems and regulation. Inf. Fusion 2023, 99, 101896. [Google Scholar] [CrossRef]

- Qi, P.; Liu, B.; Di, S.; Liu, J.; Pei, J.; Yi, J.; Zhou, B. Trustworthy AI: From Principles to Practices. ACM Comput. Surv. 2023, 55, 1–46. [Google Scholar] [CrossRef]

- Guo, M.; Guo, D.; Li, M. Metrics and Testing Methods for Artificial Intelligence Software Quality Models and Their Application Examples. In Proceedings of the 2024 11th International Conference on Dependable Systems and Their Applications (DSA), Suzhou, China, 2–3 November 2024; pp. 35–42. [Google Scholar] [CrossRef]

- ISO/IEC 23053:2022; Framework for Artificial Intelligence (AI) Systems Using Machine Learning (ML). International Organization for Standardization: Geneva, Switzerland, 2022. Available online: https://www.iso.org/standard/74438.html (accessed on 2 September 2025).

- ISO/IEC 25019:2023; Systems and Software Engineering—Systems and Software Quality Requirements and Evaluation (SQuaRE)—Quality-in-Use Model. International Organization for Standardization: Geneva, Switzerland, 2023. Available online: https://www.iso.org/standard/78177.html (accessed on 2 September 2025).

- ISO/IEC TS 25058:2024; Systems and Software Engineering—Systems and Software Quality Requirements and Evaluation (SQuaRE)—Guidance for Quality Evaluation of Artificial Intelligence (AI) Systems. International Organization for Standardization: Geneva, Switzerland, 2024. Available online: https://www.iso.org/standard/82570.html (accessed on 2 September 2025).

- ISO/IEC 25023:2016; Systems and Software Engineering—Systems and Software Quality Requirements and Evaluation (SQuaRE)—Measurement of System and Software Product Quality. International Organization for Standardization: Geneva, Switzerland, 2016. Available online: https://www.iso.org/standard/35747.html (accessed on 2 September 2025).

- ISO/IEC 25022:2016; Systems and Software Engineering—Systems and Software Quality Requirements and Evaluation (SQuaRE)—Measurement of Quality in Use. International Organization for Standardization: Geneva, Switzerland, 2016. Available online: https://www.iso.org/standard/35746.html (accessed on 2 September 2025).

- Lopez-Paz, D.; Ranzato, M. Gradient Episodic Memory for Continual Learning. arXiv 2022, arXiv:1706.08840. [Google Scholar] [CrossRef]

- ISO 9241-110:2020; Ergonomics of Human-System Interaction Part 110: Interaction Principles. International Organization for Standardization: Geneva, Switzerland, 2020. Available online: https://www.iso.org/standard/75258.html (accessed on 2 September 2025).

- Lavazza, L.; Morasca, S. Understanding and Modeling AI-Intensive System Development. In Proceedings of the 2021 IEEE/ACM 1st Workshop on AI Engineering—Software Engineering for AI (WAIN), Madrid, Spain, 30–31 May 2021; pp. 55–61. [Google Scholar] [CrossRef]

- ISO/IEC TS 4213:2022; Information Technology—Artificial Intelligence—Assessment of Machine Learning Classification Performance. International Organization for Standardization: Geneva, Switzerland, 2022. Available online: https://www.iso.org/standard/79799.html (accessed on 2 September 2025).

- IEEE 2937-2022; IEEE Standard for Performance Benchmarking for Artificial Intelligence Server Systems. IEEE Standards Association: Piscataway, NJ, USA, 2022. Available online: https://standards.ieee.org/ieee/2937/10376/ (accessed on 2 September 2025).

- Brand, L.; Humm, B.G.; Krajewski, A.; Zender, A. Towards Improved User Experience for Artificial Intelligence Systems. In Engineering Applications of Neural Networks; Iliadis, L., Maglogiannis, I., Alonso, S., Jayne, C., Pimenidis, E., Eds.; Springer Nature: Cham, Switzerland, 2023; pp. 33–44. [Google Scholar] [CrossRef]

- Zender, A.; Humm, B.G.; Holzheuser, A. Enhancing User Experience in Artificial Intelligence Systems: A Practical Approach. In Software, System, and Service Engineering; Kardas, G., Luković, I., Milašinović, B., Popović, A., Radliński, Ł., Staroń, M., Swacha, J., Przybyłek, A., Eds.; Springer Nature: Cham, Switzerland, 2025; pp. 113–131. [Google Scholar] [CrossRef]

- Vinayagasundaram, B.; Srivatsa, S.K. Software Quality in Artificial Intelligence System. Inf. Technol. J. 2007, 6, 835–842. [Google Scholar] [CrossRef]

- ISO/IEC TS 8200:2024; Information Technology—Artificial Intelligence—Controllability of Automated Artificial Intelligence Systems. International Organization for Standardization: Geneva, Switzerland, 2024. Available online: https://www.iso.org/standard/83012.html (accessed on 2 September 2025).

- OpenAI; Achiam, J.; Adler, S.; Agarwal, S.; Ahmad, L.; Akkaya, I.; Aleman, F.L.; Almeida, D.; Altenschmidt, J.; Altman, S.; et al. GPT-4 Technical Report. arXiv 2024, arXiv:2303.08774. [Google Scholar] [CrossRef]

- Fehr, J.; Citro, B.; Malpani, R.; Lippert, C.; Madai, V.I. A trustworthy AI reality-check: The lack of transparency of artificial intelligence products in healthcare. Front. Digit. Health 2024, 6, 1267290. [Google Scholar] [CrossRef]

- European Commission. Assessment List for Trustworthy Artificial Intelligence (ALTAI) for Self-Assessment. 2020. Available online: https://digital-strategy.ec.europa.eu/en/library/assessment-list-trustworthy-artificial-intelligence-altai-self-assessment (accessed on 2 September 2025).

- Nicolae, I.E.; Danciu, G.; Nanou, C.; Koulierakis, N.; Danilatou, V. Transparency Metrics for Artificial Intelligence-Driven Applications in Healthcare. In Proceedings of the 13th Hellenic Conference on Artificial Intelligence (SETN ’24), 11–13 September 2024; Association for Computing Machinery: New York, NY, USA, 2024; pp. 1–8. [Google Scholar] [CrossRef]

- Wan, A.; Klyman, K.; Kapoor, S.; Maslej, N.; Longpre, S.; Xiong, B.; Liang, P.; Bommasani, R. The Foundation Model Transparency Index. arXiv 2023, arXiv:2310.12941. [Google Scholar] [CrossRef]

- Barmer, H.; Dzombak, R.; Gaston, M.; Heim, E.; Palat, J.; Redner, F.; Smith, T.; VanHoudnos, N.M. Robust and Secure AI; Software Engineering Institute: Pittsburgh, PA, USA, 2021; Available online: https://www.sei.cmu.edu/library/robust-and-secure-ai/ (accessed on 2 September 2025).

- ISO/IEC TR 24029-1:2021; Artificial Intelligence (AI)—Assessment of the Robustness of Neural Networks Part 1: Overview. International Organization for Standardization: Geneva, Switzerland, 2021. Available online: https://www.iso.org/standard/77609.html (accessed on 2 September 2025).

- ISO/IEC 24029-2:2023; Artificial Intelligence (AI)—Assessment of the Robustness of Neural Networks Part 2: Methodology for the Use of Formal Methods. International Organization for Standardization: Geneva, Switzerland, 2023. Available online: https://www.iso.org/standard/79804.html (accessed on 2 September 2025).

- Powell, R.; Stockwell, S.; Sharadjaya, N. Towards Secure AI: How Far Can International Standards Take Us? Alan Turing Institute: London, UK, 2024; Available online: https://cetas.turing.ac.uk/publications/towards-secure-ai (accessed on 2 September 2025).

- ISO/IEC DIS 27090; Cybersecurity—Artificial Intelligence—Guidance for Addressing Security Threats and Compromises to Artificial Intelligence Systems. International Organization for Standardization: Geneva, Switzerland, 2025. Available online: https://www.iso.org/standard/56581.html (accessed on 2 September 2025).

- Vassilev, A.; Oprea, A.; Fordyce, A.; Anderson, H. Adversarial Machine Learning: A Taxonomy and Terminology of Attacks and Mitigations; NIST AI 100-2e2023; National Institute of Standards and Technology (U.S.): Gaithersburg, MD, USA, 2024. Available online: https://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.100-2e2023.pdf (accessed on 2 September 2025).

- Hu, Y.; Kuang, W.; Qin, Z.; Li, K.; Zhang, J.; Gao, Y.; Li, W.; Li, K. Artificial Intelligence Security: Threats and Countermeasures. ACM Comput. Surv. 2021, 55, 1–36. [Google Scholar] [CrossRef]

- Saeed, M.M.; Alsharidah, M. Security, privacy, and robustness for trustworthy AI systems: A review. Comput. Electr. Eng. 2024, 119, 109643. [Google Scholar] [CrossRef]

- Liu, Q.; Li, P.; Zhao, W.; Cai, W.; Yu, S.; Leung, V.C.M. A Survey on Security Threats and Defensive Techniques of Machine Learning: A Data Driven View. IEEE Access 2018, 6, 12103–12117. [Google Scholar] [CrossRef]

- Grosse, K.; Alahi, A. A qualitative AI security risk assessment of autonomous vehicles. Transp. Res. Part C Emerg. Technol. 2024, 169, 104797. [Google Scholar] [CrossRef]

- Musser, M.; Lohn, A.; Dempsey, J.X.; Spring, J.; Kumar, R.S.S.; Leong, B.; Liaghati, C.; Martinez, C.; Grant, C.D.; Rohrer, D.; et al. Adversarial Machine Learning and Cybersecurity; Center for Security and Emerging Technology: Washington, DC, USA, 2023; Available online: https://cset.georgetown.edu/publication/adversarial-machine-learning-and-cybersecurity/ (accessed on 2 September 2025).

- MITRE. Navigate Threats to AI Systems Through Real-World Insights. Available online: https://atlas.mitre.org/ (accessed on 2 September 2025).

- OWASP. OWASP Top 10 for LLM Applications 2025. 2024. Available online: https://genai.owasp.org/llm-top-10/ (accessed on 2 September 2025).

- Bezombes, P.; Brunessaux, S.; Cadzow, S. Cybersecurity of AI and Standardisation; ENISA, 2023. Available online: https://www.enisa.europa.eu/publications/cybersecurity-of-ai-and-standardisation (accessed on 2 September 2025).

- Barmer, H.; Dzombak, R.; Gaston, M.; Palat, V.; Redner, F.; Smith, T.; Wohlbier, J. Scalable AI; Carnegie Mellon University: Pittsburgh, PA, USA, 2021; Available online: https://kilthub.cmu.edu/articles/report/Scalable_AI/16560273/1?file=30632712 (accessed on 2 September 2025).

- Mishra, A. Scalable AI and Design Patterns: Design, Develop, and Deploy Scalable AI Solutions; Apress: Berkeley, CA, USA, 2024. [Google Scholar] [CrossRef]

- Scepanski, E.; Zillner, S. AI Systems and their Scalability—A Systematic Literature Review. In Proceedings of the ACIS 2024, Canberra, Australia, 4–6 December 2024; Available online: https://aisel.aisnet.org/acis2024/95 (accessed on 2 September 2025).

- ISO/IEC TR 29119-11:2020; Software and Systems Engineering. Software Testing. Guidelines on the Testing of AI-Based Systems. International Organization for Standardization: Geneva, Switzerland, 2020. Available online: https://www.iso.org/standard/79016.html (accessed on 2 September 2025).

- Bengio, Y.; Mindermann, S.; Privitera, D.; Besiroglu, T. International AI Safety Report 2025. 2025. Available online: https://www.gov.uk/government/publications/international-ai-safety-report-2025 (accessed on 2 September 2025).

- Center for AI Safety. 2023 Impact Report; Center for AI Safety: San Francisco, CA, USA, 2023; Available online: https://safe.ai/work/impact-report/2023 (accessed on 2 September 2025).

- Klüver, C.; Greisbach, A.; Kindermann, M.; Püttmann, B. A requirements model for AI algorithms in functional safety-critical systems with an explainable self-enforcing network from a developer perspective. Secur. Saf. 2024, 3, 2024020. [Google Scholar] [CrossRef]

- ISO/IEC TR 5469:2024; Artificial Intelligence—Functional Safety and AI Systems. International Organization for Standardization: Geneva, Switzerland, 2024. Available online: https://www.iso.org/standard/81283.html (accessed on 2 September 2025).

- IEC 61508-1:2010; Functional Safety of Electrical/Electronic/Programmable Electronic Safety-related Systems. International Electrotechnical Commission: Geneva, Switzerland, 2010. Available online: https://landingpage.bsigroup.com/LandingPage/Series?UPI=BS%20EN%2061508 (accessed on 2 September 2025).

- ISO 26262-1:2018; Road Vehicles – Functional Safety. International Organization for Standardization: Geneva, Switzerland, 2018. Available online: https://blog.ansi.org/ansi/iso-26262-2018-road-vehicle-functional-safety/ (accessed on 2 September 2025).

- ISO 21448:2022; Road Vehicles—Safety of the Intended Functionality. International Organization for Standardization: Geneva, Switzerland, 2022. Available online: https://www.iso.org/standard/77490.html (accessed on 2 September 2025).

- Ji, J.; Venkatram, V.; Batalis, S. AI Safety Evaluations: An Explainer; CSET: Washington, DC, USA, 2025; Available online: https://cset.georgetown.edu/article/ai-safety-evaluations-an-explainer/ (accessed on 2 September 2025).

- Challen, R.; Denny, J.; Pitt, M.; Gompels, L.; Edwards, T.; Tsaneva-Atanasova, K. Artificial intelligence, bias and clinical safety. BMJ Qual. Saf. 2019, 28, 231–237. [Google Scholar] [PubMed]

- Varshney, K.R. Engineering safety in machine learning. In Proceedings of the 2016 Information Theory and Applications Workshop (ITA), La Jolla, CA, USA, 31 January–5 February 2016; pp. 1–5. [Google Scholar] [CrossRef]

- Morales-Forero, A.; Bassetto, S.; Coatanea, E. Toward safe AI. AI Soc. 2023, 38, 685–696. [Google Scholar] [CrossRef]

- Gordieiev, O.; Kharchenko, V. Profile-Oriented Assessment of Software Requirements Quality: Models, Metrics, Case Study. Int. J. Comput. 2020, 19, 656–665. [Google Scholar] [CrossRef]

- Gordieiev, O.; Kharchenko, V.; Fusani, M. Software Quality Standards and Models Evolution: Greenness and Reliability Issues. In Information and Communication Technologies in Education, Research, and Industrial Applications; Yakovyna, V., Mayr, H.C., Nikitchenko, M., Zholtkevych, G., Spivakovsky, A., Batsakis, S., Eds.; Springer International Publishing: Cham, Switzerland, 2016; pp. 38–55. [Google Scholar] [CrossRef]

- Gordieiev, O.; Rainer, A.; Kharchenko, V.; Pishchukhina, O.; Gordieieva, D. A Unified Approach to the Development of Technology-Based Software Quality Models on the Example of Blockchain Systems. IEEE Access 2024, 12, 118875–118889. [Google Scholar] [CrossRef]

- Gordieiev, O.; Kharchenko, V.; Fominykh, N.; Sklyar, V. Evolution of Software Quality Models in Context of the Standard ISO 25010. In Proceedings of the Ninth International Conference on Dependability and Complex Systems DepCoS-RELCOMEX, Brunów, Poland, 30 June–4 July 2014; Springer: Cham, Switzerland, 2014; pp. 223–232. [Google Scholar] [CrossRef]

- Gordieiev, O.; Kharchenko, V.; Vereshchak, K. Usable Security Versus Secure Usability: An Assessment of Attributes Interaction. In Proceedings of the International Conference on Information and Communication Technologies in Education, Research, and Industrial Applications, Kyiv, Ukraine, 15–18 May 2017; pp. 727–740. [Google Scholar]

- Hughes, A. AI Models vs. AI Systems: Understanding Units of Performance Assessment; Microsoft Research: Redmond, WA, USA, 2022; Available online: https://www.microsoft.com/en-us/research/blog/ai-models-vs-ai-systems-understanding-units-of-performance-assessment/ (accessed on 19 September 2025).

- Sculley, D.; Holt, G.; Golovin, D.; Davydov, E.; Phillips, T.; Ebner, D.; Chaudhary, V.; Young, M.; Crespo, J.-F.; Dennison, D. Hidden Technical Debt in Machine Learning Systems. In Advances in Neural Information Processing Systems; Curran Associates, Inc.: Red Hook, NY, USA, 2015; Available online: https://proceedings.neurips.cc/paper_files/paper/2015/hash/86df7dcfd896fcaf2674f757a2463eba-Abstract.html (accessed on 19 September 2025).

- Felderer, M.; Ramler, R. Quality Assurance for AI-Based Systems: Overview and Challenges (Introduction to Interactive Session). In Software Quality: Future Perspectives on Software Engineering Quality; Winkler, D., Biffl, S., Mendez, D., Wimmer, M., Bergsmann, J., Eds.; Springer International Publishing: Cham, Switzerland, 2021; pp. 33–42. [Google Scholar] [CrossRef]

- Karnouskos, S.; Sinha, R.; Leitão, P.; Ribeiro, L.; Strasser, T.I. The Applicability of ISO/IEC 25023 Measures to the Integration of Agents and Automation Systems. In Proceedings of the IECON 2018—44th Annual Conference of the IEEE Industrial Electronics Society, Washington, DC, USA, 21–23 October 2018; pp. 2927–2934. [Google Scholar] [CrossRef]

| # | Name (Country, Year) | Description | Participants | Consequences |
|---|---|---|---|---|
| 1 | Inaccurate algorithm for grading school examinations (A-levels/GCSE) (UK, 2020) [9,10] | A grade moderation algorithm was used to standardise results after the traditional A-level and GCSE examinations were cancelled because of the COVID-19 pandemic. It downgraded the grades of many pupils and exacerbated inequality. The results were later replaced with teacher-assessed grades | Ofqual (the examinations regulator in England), the Department for Education, schools, and university applicants | Approximately 40% of grades were downgraded relative to teacher assessments. This led to protests, the withdrawal of the algorithm, and the publication of analytical reports and academic studies |
| 2 | Biased assessment of recidivism risk by the COMPAS algorithm (USA, 2016) [11] | COMPAS (Correctional Offender Management Profiling for Alternative Sanctions) is a proprietary recidivism risk assessment algorithm widely used by courts in the United States. It exhibited racial disparities in its estimates of the likelihood of reoffending | Equivant (formerly Northpointe), US courts, and researchers | The algorithm influenced decisions on parole, bail, and sentence length, and African American defendants were more often rated as higher risk. Broad academic debate, a reconsideration of approaches to fairness metrics, and growing caution among courts and regulators towards AI “black boxes” followed |
| 3 | IBM Watson for Oncology, an AI solution for health care (USA, 2013–2020) [12] | Low accuracy of recommendations in clinics, limited data, and problems with clinical integration | IBM, MD Anderson Cancer Center, and other clinics | IBM closed its Watson Health division; the case provided industry lessons on the clinical validation of AI |
| 4 | Inaccurate housing price forecasting by Zillow’s “Zillow Offers” service (USA, 2021) [13] | The algorithms overestimated housing prices, particularly during the sharp market volatility of 2020–2021 | Zillow Group, home sellers and buyers | Closure of the business and financial losses: the company lost about $500 million and laid off around 2000 employees; the incident became a case study |
| 5 | Google Photos “gorilla” labelling scandal and the subsequent blocking of the category (USA, 2015) [14] | The image-labelling algorithm tagged a photo of two Black people as “gorillas”, a racist misclassification | Google Inc. | Reputational damage, the emergence of the “AI bias incident” as a distinct category of problems, and growth in initiatives on AI fairness and incident databases |
| 6 | Allegations of gender bias in Apple Card credit scoring (USA, 2019) [15] | Goldman Sachs issued the Apple Card. Women received significantly lower credit limits than their husbands or partners, even when they had a better credit history or a joint account | Apple, Goldman Sachs, and New York State regulators | Regulatory investigations and debate on the transparency of credit models |
| 7 | Inaccurate responses by Air Canada’s chatbot (Canada, 2024) [16] | In 2022, a passenger consulted the chatbot on Air Canada’s website about the airline’s bereavement fare rules after the death of a close relative. The chatbot indicated that the reduced fare could be claimed retroactively, but Air Canada then refused the compensation, claiming that the chatbot had “made a mistake” and was “not an official source” | Air Canada, the passenger, and the British Columbia Civil Resolution Tribunal | Tribunal ruling: Air Canada bears full legal responsibility for the responses of its own chatbot, and the customer was awarded compensation. The case set a precedent: companies cannot treat an “AI error” as an external problem, because everything their AI system states is equated with official information. Legal experts widely discuss the case as a sign that the era of the “irresponsible chatbot” has come to an end |
| 8 | Fake case references produced by generative AI for a lawyer (USA, 2023) [17] | A lawyer filed a court submission citing non-existent precedents generated by AI | R. Mata, Avianca, Inc., and the U.S. District Court for the Southern District of New York (SDNY) | Fines and requirements to verify AI-generated material in legal practice |
| 9 | Fatal collision involving an Uber self-driving car with a system developed by the Advanced Technologies Group (ATG) (USA, 2018) [18] | The system misclassified the pedestrian, the emergency braking had been disabled, and the safety driver was distracted by a phone | Uber Advanced Technologies Group (ATG), the National Transportation Safety Board (NTSB), and the victim, Elaine Herzberg | Death of a pedestrian, an NTSB report, and tighter safety requirements for autonomous vehicle testing |
| 10 | Systematic errors in facial recognition (2018) [19] | The study revealed large differences in gender classification accuracy among commercial facial recognition systems from IBM, Microsoft, and Face++. Error rates were lowest for lighter-skinned men (at most 0.8%) but rose sharply for darker-skinned women, to between 20% and 35% | Joy Buolamwini and Timnit Gebru, who evaluated the systems from Microsoft, IBM, and Face++ | Sparked a global debate on algorithmic fairness, led to improvements in commercial facial recognition systems, and influenced policy discussions and regulatory efforts |
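
Incident 10, like the fairness debate around COMPAS in incident 2, rests on comparing error rates across demographic subgroups. The following is a minimal sketch of that comparison, not the procedure used in [19]: it computes the error rate of each group and the largest gap between groups. The function names and the toy records are hypothetical.

```python
from collections import defaultdict

def error_rates_by_group(records):
    """Map each group to its error rate, given (group, true_label, predicted_label) triples."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, y_true, y_pred in records:
        totals[group] += 1
        if y_true != y_pred:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}

def max_error_gap(rates):
    """Largest difference in error rate between the best- and worst-served groups."""
    return max(rates.values()) - min(rates.values())

# Toy data (hypothetical): group label, true class, predicted class.
records = [
    ("A", "m", "m"), ("A", "f", "f"), ("A", "m", "m"), ("A", "f", "m"),
    ("B", "f", "m"), ("B", "f", "f"), ("B", "m", "f"), ("B", "f", "f"),
]
rates = error_rates_by_group(records)
print(rates, max_error_gap(rates))
```

In this toy data the overall accuracy is 62.5%, a single figure that hides the fact that group B is misclassified twice as often as group A.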

| # | Name of Quality (Sub)characteristic | ISO/IEC 25010:2011 [25] | ISO/IEC 25010:2023 [24] | ISO/IEC 25059:2023 [21] |
|---|---|---|---|---|
| 1. | Functional suitability | + | + | + |
| 1.1 | Functional completeness | + | + | + |
| 1.2 | Functional correctness | + | + | + (M) |
| 1.3 | Functional appropriateness | + | + | + |
| 1.4 | Functional adaptability | | | + (NS) |
| 2. | Performance efficiency | + | + | + |
| 2.1 | Time behaviour | + | + | + |
| 2.2 | Resource utilisation | + | + | + |
| 2.3 | Capacity | + | + | + |
| 3. | Compatibility | + | + | + |
| 3.1 | Co-existence | + | + | + |
| 3.2 | Interoperability | + | + | + |
| 4. | Interaction capability | + (R, Usability) | + | + (R, Usability) |
| 4.1 | Appropriateness recognisability | + | + | + |
| 4.2 | Learnability | + | + | + |
| 4.3 | Operability | + | + | + |
| 4.4 | User error protection | + | + | + |
| 4.5 | User engagement | + (R, User interface aesthetics) | + | + (R, User interface aesthetics) |
| 4.6 | Inclusivity | + (S&R, Accessibility) | + | + (S&R, Accessibility) |
| 4.7 | User assistance | | + | |
| 4.8 | Self-descriptiveness | | + (NS) | |
| 4.9 | User controllability | | | + (NS) |
| 4.10 | Transparency | | | + (NS) |
| 5. | Reliability | + | + | + |
| 5.1 | Faultlessness | + (R, Maturity) | + | + (R, Maturity) |
| 5.2 | Availability | + | + | + |
| 5.3 | Fault tolerance | + | + | + |
| 5.4 | Recoverability | + | + | + |
| 5.5 | Robustness | | | + (NS) |
| 6. | Security | + | + | + |
| 6.1 | Confidentiality | + | + | + |
| 6.2 | Integrity | + | + | + |
| 6.3 | Nonrepudiation | + | + | + |
| 6.4 | Accountability | + | + | + |
| 6.5 | Authenticity | + | + | + |
| 6.6 | Resistance | | + (NS) | |
| 6.7 | Intervenability | | | + (NS) |
| 7. | Maintainability | + | + | + |
| 7.1 | Modularity | + | + | + |
| 7.2 | Reusability | + | + | + |
| 7.3 | Analysability | + | + | + |
| 7.4 | Modifiability | + | + | + |
| 7.5 | Testability | + | + | + |
| 8. | Flexibility | + (R, Portability) | + | + (R, Portability) |
| 8.1 | Adaptability | + | + | + |
| 8.2 | Scalability | | + (NS) | |
| 8.3 | Installability | + | + | + |
| 8.4 | Replaceability | + | + | + |
| 9. | Safety | | + (NC) | |
| 9.1 | Operational constraint | | + (NS) | |
| 9.2 | Risk identification | | + (NS) | |
| 9.3 | Fail safe | | + (NS) | |
| 9.4 | Hazard warning | | + (NS) | |
| 9.5 | Safe integration | | + (NS) | |
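
The two inclusion rules of Section 2.1 amount to taking ISO/IEC 25010:2023 as the base and adding the AI-specific subcharacteristics of ISO/IEC 25059 after mapping renamed characteristics onto their new names. A minimal sketch, assuming an abridged excerpt of the comparison table above (four characteristics only) and the hypothetical names `ISO_25010_2023`, `ISO_25059_NEW`, `RENAMED`, and `merge_models`. It tracks names only, so modified definitions such as functional correctness (M) are not represented.

```python
# Abridged excerpt of ISO/IEC 25010:2023: characteristic -> subcharacteristics.
ISO_25010_2023 = {
    "Functional suitability": ["Functional completeness", "Functional correctness",
                               "Functional appropriateness"],
    "Interaction capability": ["Appropriateness recognisability", "Learnability",
                               "Operability", "User error protection", "User engagement",
                               "Inclusivity", "User assistance", "Self-descriptiveness"],
    "Reliability": ["Faultlessness", "Availability", "Fault tolerance", "Recoverability"],
    "Security": ["Confidentiality", "Integrity", "Nonrepudiation", "Accountability",
                 "Authenticity", "Resistance"],
}

# New (NS) subcharacteristics of ISO/IEC 25059, keyed by its 25010:2011-era names.
ISO_25059_NEW = {
    "Functional suitability": ["Functional adaptability"],
    "Usability": ["User controllability", "Transparency"],
    "Reliability": ["Robustness"],
    "Security": ["Intervenability"],
}

# Characteristics renamed in ISO/IEC 25010:2023 (old name -> new name).
RENAMED = {"Usability": "Interaction capability", "Portability": "Flexibility"}

def merge_models(base, additions, renamed):
    """Union of two quality models; additions under old names are mapped to new names."""
    merged = {char: list(subs) for char, subs in base.items()}
    for old_char, subs in additions.items():
        char = renamed.get(old_char, old_char)
        target = merged.setdefault(char, [])
        for sub in subs:
            if sub not in target:  # avoid duplicates if both standards list it
                target.append(sub)
    return merged

model = merge_models(ISO_25010_2023, ISO_25059_NEW, RENAMED)
for char, subs in model.items():
    print(f"{char}: {len(subs)} subcharacteristics")
```

Keeping the rename map separate from the data means the same function can merge the quality-in-use models, where characteristics were also renamed, merged, or split.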

| # | Name of Quality-in-Use (Sub)characteristic | ISO/IEC 25010:2011 [25] | ISO/IEC 25019:2023 [49] | ISO/IEC 25059:2024 [21] |
|---|---|---|---|---|
| 1 | Beneficialness | | + | |
| 1.1 | Usability | + (M&R, Effectiveness, Efficiency, Satisfaction) | + | |
| 1.1.1 | Effectiveness | + | + | |
| 1.1.2 | Efficiency | + | + | |
| 1.1.3 | Usefulness | + | + | |
| 1.1.4 | Trust | + | + | |
| 1.1.5 | Pleasure | + | + | |
| 1.1.6 | Comfort | + | + | |
| 1.1.7 | Transparency | | | + |
| 1.2 | Accessibility | | + | |
| 1.3 | Suitability | | + | |
| 2 | Freedom from risk | + | + | + |
| 2.1 | Freedom from economic risk | + (R, Economic risk mitigation) | + | + (R, Economic risk mitigation) |
| 2.2 | Freedom from health risk | + (S&R, Health and safety risk mitigation) | + | + (S&R, Health and safety risk mitigation) |
| 2.3 | Freedom from human life risk | | + | |
| 2.4 | Freedom from environmental and societal risk | + (M&R, Environment risk mitigation, Societal and ethical risk mitigation) | + | + (M&R, Environment risk mitigation, Societal and ethical risk mitigation) |
| 3 | Acceptability | | + | |
| 3.1 | Experience | | + | |
| 3.2 | Trustworthiness | | + | |
| 3.3 | Compliance | | + | |
| 4 | Context coverage | + | + | |
| 4.1 | Context completeness | + | + | |
| 4.2 | Flexibility | + | + | |

| No. | Quality Characteristic | Quality Subcharacteristic | Quality Measure Name | ID | Source |
|---|---|---|---|---|---|
| 1 | Functional suitability | Functional completeness | Functional coverage | FCp-1-G | [51] |
| 2 | | Functional correctness | Functional correctness | FCr-1-G | [51] |
| 3 | | Functional appropriateness | Functional appropriateness of usage objective | FAp-1-G | [51] |
| | | | Functional appropriateness of system | FAp-2-G | [51] |
| 4 | | Functional adaptability | Average Accuracy | FAd-1-S | [53] |
| | | | Backward Transfer | FAd-2-S | |
| | | | Forward Transfer | FAd-3-S | |
| 5 | Performance efficiency | Time behaviour | Mean response time | PTb-1-G | [51] |
| | | | Response time adequacy | PTb-2-G | |
| | | | Mean turnaround time | PTb-3-G | |
| | | | Turnaround time adequacy | PTb-4-G | |
| | | | Mean throughput | PTb-5-G | |
| 6 | | Resource utilisation | Mean processor utilization | PRu-1-G | [51] |
| | | | Mean memory utilization | PRu-2-G | |
| | | | Mean I/O devices utilization | PRu-3-G | |
| | | | Bandwidth utilization | PRu-4-S | |
| 7 | | Capacity | Transaction processing capacity | PCa-1-G | [51] |
| | | | User access capacity | PCa-2-G | |
| | | | User access increase adequacy | PCa-3-S | |
| 8 | Compatibility | Co-existence | Co-existence with other products | CCo-1-G | [51] |
| 9 | | Interoperability | Data formats exchangeability | CIn-1-G | [51] |
| | | | Data exchange protocol sufficiency | CIn-2-G | |
| | | | External interface adequacy | CIn-3-S | |
| 10 | Interaction capability | Appropriateness recognisability | Description completeness | UAp-1-G | [51] |
| | | | Demonstration coverage | UAp-2-S | |
| | | | Entry point self-descriptiveness | UAp-3-S | |
| 11 | | Learnability | User guidance completeness | ULe-1-G | [51] |
| | | | Entry fields defaults | ULe-2-S | |
| | | | Error message understandability | ULe-3-S | |
| | | | Self-explanatory user interface | ULe-4-S | |
| 12 | | Operability | Operational consistency | UOp-1-G | [51] |
| | | | Message clarity | UOp-2-G | |
| | | | Functional customizability | UOp-3-S | |
| | | | User interface customizability | UOp-4-S | |
| | | | Monitoring capability | UOp-5-S | |
| | | | Undo capability | UOp-6-S | |
| | | | Understandable categorization of information | UOp-7-S | |
| | | | Appearance consistency | UOp-8-S | |
| | | | Input device support | UOp-9-S | |
| 13 | | User error protection | Avoidance of user operation error | UEp-1-G | [51] |
| | | | User entry error correction | UEp-2-S | |
| | | | User error recoverability | UEp-3-S | |
| 14 | | User engagement | Appearance aesthetics of user interfaces | UIn-1-S | [51] |
| 15 | | Inclusivity | Accessibility for users with disabilities | UAc-1-G | [51] |
| 16 | | User assistance | Supported languages adequacy | UAc-2-S | [51] |
| 17 | | Self-descriptiveness | Task Success Without Guidance | UDe-1-S | Proposed by the authors based on [54] |
| | | | First-time Task Completion Rate | UDe-2-S | |
| | | | Contextual Feedback Ratio | UDe-3-S | |
| | | | Action Clarifiability Rate | UDe-4-S | |
| 18 | | User controllability | Control execution correctness | UCo-1-G | Proposed by the authors based on [50] |
| | | | Average control execution time | UCo-2-S | |
| | | | Control reliability | UCo-3-S | |
| | | | Control process complexity | UCo-4-S | |
| 19 | | Transparency | Technical Transparency Rate | UTr-1-S | Proposed by the authors |
| | | | Interaction Transparency Rate | UTr-2-S | |
| | | | Social Transparency Disclosure | UTr-3-S | |
| 20 | Reliability | Faultlessness | Fault correction | RMa-1-G | [51] |
| | | | Mean time between failures (MTBF) | RMa-2-G | |
| | | | Failure rate | RMa-3-S | |
| | | | Test coverage | RMa-4-S | |
| 21 | | Availability | System availability | RAv-1-G | [51] |
| | | | Mean down time | RAv-2-G | |
| 22 | | Fault tolerance | Failure avoidance | RFt-1-G | [51] |
| | | | Redundancy of components | RFt-2-S | |
| | | | Mean fault notification time | RFt-3-S | |
| 23 | | Recoverability | Mean recovery time | RRe-1-G | [51] |
| | | | Backup data completeness | RRe-2-S | |
| 24 | | Robustness | Error Tolerance Rate | RRb-1-S | Proposed by the authors |
| | | | Adversarial Rate | RRb-2-S | |
| | | | Dataset Shift Tolerance Rate | RRb-3-S | |
| 25 | Security | Confidentiality | Access controllability | SCo-1-G | [51] |
| | | | Data encryption correctness | SCo-2-G | |
| | | | Strength of cryptographic algorithms | SCo-3-S | |
| 26 | | Integrity | Data integrity | SIn-1-G | [51] |
| | | | Internal data corruption prevention | SIn-2-G | |
| | | | Buffer overflow prevention | SIn-3-S | |
| 27 | | Nonrepudiation | Digital signature usage | SNo-1-G | [51] |
| 28 | | Accountability | User audit trail completeness | SAc-1-G | [51] |
| | | | System log retention | SAc-2-S | |
| 29 | | Authenticity | Authentication mechanisms sufficiency | SAu-1-G | [51] |
| | | | Authentication rules conformity | SAu-2-S | |
| 30 | | Resistance | Attack Resistance Rate | RRb-1-S | Proposed by the authors |
| | | | Model Confidentiality Protection Rate | RRb-2-S | |
| | | | Security Testing Coverage | RRb-3-S | |
| | | | Retraining Resistance Sufficiency | RRb-1-S | |
| 31 | | Intervenability | Intervention success rate | RRb-2-S | [47] |
| | | | Average intervention delay time | RRb-3-S | |
| 32 | Maintainability | Modularity | Coupling of components | MMo-1-G | [51] |
| | | | Cyclomatic complexity adequacy | MMo-2-S | |
| 33 | | Reusability | Reusability of assets | MRe-1-G | [51] |
| | | | Coding rules conformity | MRe-2-S | |
| 34 | | Analysability | System log completeness | MAn-1-G | [51] |
| | | | Diagnosis function effectiveness | MAn-2-S | |
| | | | Diagnosis function sufficiency | MAn-3-S | |
| 35 | | Modifiability | Modification efficiency | MMd-1-G | [51] |
| | | | Modification correctness | MMd-2-G | |
| | | | Modification capability | MMd-3-S | |
| 36 | | Testability | Test function completeness | MTe-1-G | [51] |
| | | | Autonomous testability | MTe-2-S | |
| | | | Test restartability | MTe-3-S | |
| 37 | Flexibility | Adaptability | Hardware environmental adaptability | PAd-1-G | [51] |
| | | | System software environmental adaptability | PAd-2-G | |
| | | | Operational environment adaptability | PAd-3-S | |
| 38 | | Scalability | Size Rate | PSc-1-G | Proposed by the authors |
| | | | Speed Rate | PSc-2-G | |
| | | | Complexity Rate | PSc-3-S | |
| 39 | | Installability | Installation time efficiency | PIn-1-G | [51] |
| | | | Ease of installation | PIn-2-G | |
| 40 | | Replaceability | Usage similarity | PRe-1-G | [51] |
| | | | Product quality equivalence | PRe-2-S | |
| | | | Functional inclusiveness | PRe-3-S | |
| | | | Data reusability/import capability | PRe-4-S | |
| 41 | Safety | Operational constraint | Operational Constraint Effectiveness | SaO-1-G | Proposed by the authors |
| | | | False Constraint Trigger Rate | SaO-2-S | |
| | | | Constraint Activation Time | SaO-3-S | |
| 42 | | Risk identification | Risk identification rate | SaR-1-G | Proposed by the authors |
| | | | Coverage of Risk Scenarios | SaR-2-S | |
| 43 | | Fail safe | Fail-Safe Rate | SaF-1-G | Proposed by the authors |
| | | | Safe Mode Controllability Rate | SaF-2-S | |
| | | | Unintended Outcome Avoidance | SaF-3-S | |
| 44 | | Hazard warning | Warning Accuracy Rate | SaW-1-G | Proposed by the authors |
| | | | Timeliness of Warning | SaW-2-S | |
| | | | Warning Coverage | SaW-3-S | |
| 45 | | Safe integration | Integration Safety Rate | SaI-1-G | Proposed by the authors |
| | | | Conflict Detection Rate | SaI-2-S | |
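
The functional adaptability measures FAd-1-S to FAd-3-S carry the names of three standard continual-learning metrics. Assuming they follow the common definitions over an accuracy matrix `R`, where `R[i][j]` is the accuracy on task `j` after training on tasks `0..i`, and a baseline vector `b` of untrained accuracies (the exact formulas used in [53] may differ), a minimal sketch is given here. The matrix values are illustrative only.

```python
from statistics import mean

# R[i][j]: accuracy on task j measured after training on tasks 0..i (T x T matrix).
# b[j]: accuracy on task j of the model before any training (baseline).

def average_accuracy(R):
    """ACC: mean accuracy over all tasks after training on the final task."""
    T = len(R)
    return mean(R[T - 1][j] for j in range(T))

def backward_transfer(R):
    """BWT: mean change on earlier tasks caused by later training (negative means forgetting)."""
    T = len(R)
    if T < 2:
        raise ValueError("BWT needs at least two tasks")
    return mean(R[T - 1][j] - R[j][j] for j in range(T - 1))

def forward_transfer(R, b):
    """FWT: mean accuracy on each task just before training on it, relative to the baseline."""
    T = len(R)
    if T < 2:
        raise ValueError("FWT needs at least two tasks")
    return mean(R[j - 1][j] - b[j] for j in range(1, T))

# Illustrative values for three tasks.
R = [
    [0.90, 0.20, 0.10],
    [0.80, 0.85, 0.30],
    [0.70, 0.80, 0.90],
]
b = [0.10, 0.10, 0.10]
print(average_accuracy(R), backward_transfer(R), forward_transfer(R, b))
```

A negative backward transfer, as in this example, indicates that adapting to new tasks degraded performance on earlier ones, which is the forgetting behaviour that functional adaptability is meant to expose.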

| No. | Quality Characteristic | Quality Subcharacteristic | Quality Measure Name | ID | Source |
|---|---|---|---|---|---|
| 1 | Beneficialness | Usability: | | | |
| | | | Tasks completed | Ef-1-G | [52] |
| | | | Objectives achieved | Ef-2-S | |
| | | | Errors in a task | Ef-3-G | |
| | | | Tasks with errors | Ef-4-G | |
| | | | Task error intensity | Ef-5-G | |
| | | | Task time | Ey-1-G | [52] |
| | | | Time efficiency | Ey-2-S | |
| | | | Cost-effectiveness | Ey-3-S | |
| | | | Productive time ratio | Ey-4-S | |
| | | | Unnecessary actions | Ey-5-S | |
| | | | Consequences of fatigue | Ey-6-S | |
| | | | Satisfaction with features | SUs-2-G | [52] |
| | | | Discretionary usage | SUs-3-G | |
| | | | Feature utilisation | SUs-4-G | |
| | | | Proportion of users complaining | SUs-5-G | |
| | | | Proportion of user complaints about a particular feature | SUs-6-G | |
| | | | User trust | STr-1-G | [52] |
| | | | User pleasure | SPL-1-G | [52] |
| | | | Physical comfort | SCo-1-G | [52] |
| | | | Technical Transparency Rate | UTr-1-S | Proposed by the authors |
| | | | Interaction Transparency Rate | UTr-2-S | |
| | | | Social Transparency Disclosure | UTr-3-S | |
| 2 | | Accessibility | Inclusive Functionality Rate | BAc-1-G | Proposed by the authors |
| | | | Assistive Tech Compatibility Score | BAc-2-S | |
| | | | User Diversity Success Rate | BAc-3-S | |
| | | | Cross-language Accessibility Index | BAc-4-S | |
| | | | Cognitive Load Differential | BAc-5-S | |
| 3 | | Suitability | Requirement Satisfaction Rate | BSu-1-G | Proposed by the authors |
| | | | Goal Match Rate | BSu-2-S | |
| | | | Acceptability Rating | BSu-3-S | |
| | | | Contextual Misfit Rate | BSu-4-S | |
| | | | Override Frequency | BSu-5-S | |
| 4 | Freedom from risk | Freedom from economic risk | Return on investment (ROI) | REc-1-G | [52] |
| | | | Time to achieve return on investment | REc-2-G | |
| | | | Business performance | REc-3-G | |
| | | | Benefits of IT Investment | REc-4-G | |
| | | | Service to customers | REc-5-S | |
| | | | Website visitors converted to customers | REc-6-S | |
| | | | Revenue from each customer | REc-7-S | |
| | | | Errors with economic consequences | REc-8-G | |
| 5 | | Freedom from health risk | User health reporting frequency | RHe-1-G | [52] |
| | | | User health and safety impact | RHe-2-G | |
| 6 | | Freedom from human life risk | Safety of people affected by use of the system | RHe-3-G | [52] |
| 7 | | Freedom from environmental and societal risk | Environmental impact | REn-1-G | [52] |
| | | | Societal: Societal Risk Identification Coverage | Rs-1-G | Proposed by the authors |
| | | | Societal: Mitigation Effectiveness Rate | Rs-2-S | |
| | | | Ethical: Ethical Review Integration Index | REt-1-G | |
| | | | Ethical: Residual Risk Acceptability Rate | REt-2-S | |
| | | | Ethical: Stakeholder Ethics Involvement Ratio | REt-3-S | |
| 8 | Acceptability | Experience | Skill Gain Rate | AEe-1-G | Proposed by the authors |
| | | | Pattern Recognition Assistance Score | AEe-2-S | |
| | | | Knowledge Retention Support Index | AEe-3-S | |
| | | | Experience Continuity Rate | AEe-4-S | |
| 9 | | Trustworthiness | Expectation Confidence Index | ATw-1-G | Proposed by the authors |
| | | | Transparency Support Rate | ATw-2-S | |
| | | | Security Incident Avoidance Rate | ATw-3-S | |
| | | | Accountability Traceability Index | ATw-4-S | |
| 10 | | Compliance | Regulatory Compliance Coverage | ACo-1-G | Proposed by the authors |
| | | | Compliance Audit Success Rate | ACo-2-S | |
| | | | Policy Traceability Index | ACo-3-S | |
| | | | User Confidence in Legal Compliance | ACo-4-S | |
| 11 | Context coverage | Context completeness | Context completeness | CCm-1-G | [52] |
| 12 | | Flexibility | Flexible context of use | CFl-1-S | [52] |
| | | | Product flexibility | CFl-2-S | |
| | | | Proficiency independence | CFl-3-S | |
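
Several of the [52] measures in this table are simple ratios over a usage log. The sketch assumes ratio forms of the X = A/B type used for the ISO/IEC 25022 effectiveness and efficiency measures; the log schema `TaskAttempt` and all function names are hypothetical, and the exact definitions in [52] should be checked before use (for instance, whether tasks are counted per attempt or per unique task).

```python
from dataclasses import dataclass
from statistics import mean

@dataclass
class TaskAttempt:
    """One logged attempt by a user at a task (hypothetical log schema)."""
    user: str
    task: str
    completed: bool
    errors: int      # number of errors made during the attempt
    seconds: float   # time spent on the attempt

def tasks_completed(attempts):
    """Ef-1-G style ratio: completed attempts / all attempts."""
    return sum(a.completed for a in attempts) / len(attempts)

def tasks_with_errors(attempts):
    """Ef-4-G style ratio: attempts with at least one error / all attempts."""
    return sum(a.errors > 0 for a in attempts) / len(attempts)

def task_error_intensity(attempts):
    """Ef-5-G style ratio: users who made at least one error / all users."""
    users = {a.user for a in attempts}
    users_with_errors = {a.user for a in attempts if a.errors > 0}
    return len(users_with_errors) / len(users)

def mean_task_time(attempts):
    """Ey-1-G style value: mean duration of completed attempts, in seconds."""
    return mean(a.seconds for a in attempts if a.completed)

log = [
    TaskAttempt("u1", "search", True, 0, 30.0),
    TaskAttempt("u1", "export", True, 1, 50.0),
    TaskAttempt("u2", "search", False, 2, 90.0),
    TaskAttempt("u2", "export", True, 0, 40.0),
    TaskAttempt("u3", "search", True, 0, 20.0),
]
print(tasks_completed(log), tasks_with_errors(log),
      task_error_intensity(log), mean_task_time(log))
```

Deriving all four values from one event log keeps the measures consistent with each other; a real evaluation would also need to fix the task set and user sample in advance.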

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Gordieiev, O.; Gordieieva, D.; Rainer, A.; Gorbenko, A.; Tarasyuk, O. Quality Assessment of Artificial Intelligence Systems: A Metric-Based Approach. Electronics 2026, 15, 691. https://doi.org/10.3390/electronics15030691
