1. Introduction

In recent years, with continuous advancements in automotive technology, per capita vehicle ownership has steadily increased. Yet, human factors account for 80% to 90% of traffic accidents [1,2], with a significant proportion attributed to driver fatigue. Notably, the likelihood of accidents caused by fatigued driving is four to six times higher than that under normal driving conditions [3]. Consequently, the development of efficient and reliable Driver Fatigue Monitoring Systems (DFMSs) has become an urgent necessity [4].

Driver state monitoring systems leverage advanced technologies to assess drivers’ physiological conditions, attention levels, and emotional states in real time, thereby enhancing driving safety and comfort [5,6]. Earlier systems relied on seat pressure or steering torque sensors, but their limited functionality could not meet the complexity of modern driving environments [7,8]. With advancements in automotive electronics, monitoring technologies have shifted toward vision-based approaches, using in-cabin cameras and infrared sensors combined with deep learning algorithms to analyze facial and posture information. These systems extract key features such as the percentage of eyelid closure over time (PERCLOS), blink frequency, eye aspect ratio (EAR), mouth aspect ratio (MAR), head posture, and risky behaviors [9,10,11,12,13,14,15,16]. Machine learning models then process these features to identify and predict driver fatigue states in real time, enabling timely warnings that prompt drivers to rest. Meanwhile, data privacy has become a critical consideration, requiring strict encryption and anonymization measures during data transmission and storage to mitigate potential risks [17,18,19,20].
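To make the eye- and mouth-based features above concrete, the following minimal Python sketch computes them from facial landmarks. It assumes six 2-D landmarks per eye ordered p1 to p6 as in the widely used EAR formulation (corners p1 and p4, upper lid p2 and p3, lower lid p6 and p5), a hypothetical four-point mouth layout for MAR, and approximates PERCLOS as the fraction of frames in a window whose EAR falls below an assumed closure threshold of 0.2 (classical PERCLOS instead measures the time the eyelids are at least 80% closed). The function names and thresholds are illustrative, not the extractors used in the cited works or in the DFMS described later.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def _dist(a: Point, b: Point) -> float:
    """Euclidean distance between two 2-D landmarks."""
    return math.hypot(a[0] - b[0], a[1] - b[1])


def eye_aspect_ratio(eye: Sequence[Point]) -> float:
    """EAR = (|p2 - p6| + |p3 - p5|) / (2 |p1 - p4|) for landmarks p1..p6."""
    p1, p2, p3, p4, p5, p6 = eye
    return (_dist(p2, p6) + _dist(p3, p5)) / (2.0 * _dist(p1, p4))


def mouth_aspect_ratio(left: Point, right: Point, top: Point, bottom: Point) -> float:
    """Hypothetical 4-point MAR: vertical mouth opening divided by mouth width."""
    return _dist(top, bottom) / _dist(left, right)


def perclos(ear_series: Sequence[float], closed_threshold: float = 0.2) -> float:
    """Fraction of frames in the window whose EAR is below the (assumed) closure threshold."""
    if not ear_series:
        return 0.0
    closed = sum(1 for ear in ear_series if ear < closed_threshold)
    return closed / len(ear_series)


open_eye = [(0, 0), (1, 1), (2, 1), (3, 0), (2, -1), (1, -1)]
print(round(eye_aspect_ratio(open_eye), 3))      # 0.667
print(perclos([0.30, 0.31, 0.12, 0.10, 0.29]))   # 0.4
```

A falling EAR over consecutive frames signals eyelid closure, and PERCLOS aggregates that signal over a time window, which is why the two are usually reported together.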

Driver Monitoring Systems (DMSs) are increasingly being incorporated into automotive safety standards worldwide [21]. In China, installation of DMSs has been mandated in commercial vehicles since 2018, with full implementation across new vehicles expected by 2027. In 2020, the U.S. National Transportation Safety Board recommended DMSs as an effective safety measure for Level 2 advanced driver assistance systems [22]. In 2023, the European New Car Assessment Programme (Euro NCAP) designated active safety systems with DMSs as a key criterion for achieving a five-star rating [23], and in 2024, the European Union’s General Safety Regulation (GSR) required all new vehicles sold in the EU to be equipped with driver fatigue monitoring systems [24]. Collectively, these developments indicate that DMSs will become a mandatory component of active safety systems worldwide in the near future.

In addition to regulatory progress, recent academic studies highlight a global trend toward integrating multimodal monitoring approaches. For example, Guo et al. (2023) demonstrated that combining multi-source data streams significantly enhances vigilance estimation accuracy [5]; Qu et al. (2024) provided a comprehensive review of vision-based driver monitoring techniques and emphasized the importance of deep learning for fatigue recognition [20]; and Yang et al. (2024) analyzed the effect of DMS design on drivers’ mental stress and attention in car-sharing scenarios, offering insights into user acceptance and human factors [21]. This study aligns with these ongoing international efforts but diverges from them by proposing a novel testing framework that emphasizes hierarchical decoupling and automated scenario generation, thereby addressing current gaps in standardization and test efficiency.

Despite these advances, both academia and industry still face significant challenges in the testing and evaluation of Driver Monitoring Systems (DMSs). Most existing studies primarily focus on improving fatigue recognition algorithms or reporting performance on specific datasets, while systematic testing frameworks for DMSs remain relatively underexplored. In practice, evaluation is often conducted through end-to-end vehicle tests or fixed benchmark datasets, where algorithmic perception accuracy, system integration logic, and human–machine interaction effects are tightly coupled. This coupling makes it difficult to isolate the root causes of performance degradation or to conduct fair and reproducible comparisons across different systems. Moreover, standardized testing methodologies that explicitly account for scenario diversity—such as lighting conditions, driver behaviors, and demographic variability—are still lacking, resulting in limited comparability between suppliers and vehicle models.

In this work, the term embodied is used from an engineering systems perspective. Specifically, the DFMS is regarded as an in-vehicle system in which visual perception, vehicle context, decision logic, and human–machine interaction are physically coupled and evaluated as a closed perception–decision loop. This study does not aim to formulate a cognitive agent or learning-based policy, but rather focuses on testing and validating how such embodied perception and decision components interact under real deployment constraints.

To address these challenges, this study proposes a testing framework based on hierarchical decoupling and scenario elements, which differs fundamentally from prior fatigue recognition and evaluation studies. Rather than introducing a new detection algorithm, this framework explicitly separates algorithm-level visual perception evaluation from application-level functional validation, enabling targeted and interpretable testing at different system layers. Scenario elements are formalized to abstract driving behaviors, environmental conditions, and driver characteristics into controllable variables for automated test case generation. Combined with multidimensional validation methods—including virtual simulation, functional testing, subjective evaluation, and benchmarking—the proposed framework provides a structured, scalable, and reusable approach for DFMS evaluation, supporting both standard development and iterative product optimization.

In summary, the main contributions of this study are as follows:

- (1) Proposal of a hierarchical decoupling testing framework for DFMSs that explicitly separates algorithm-level perception evaluation from application-level functional validation, addressing an underexplored gap in existing DFMS testing methodologies;

- (2) Introduction of the scenario elements method, which drives the automation of test case generation and improves test coverage;

- (3) Use of multidimensional validation methods, which demonstrate superior performance in key behavior detection and false positive rate control compared with competing products;

- (4) Provision of practical guidance for the standardized development of DFMSs, together with feasibility studies for system optimization across different vehicle types and operational environments.

The remainder of the paper is structured as follows:

Section 2 describes the architecture and functionality of the DFMS under test, including its hardware terminal, functional design, and algorithmic software architecture;

Section 3 provides a detailed explanation of the proposed testing framework and its core components;

Section 4 presents experimental design, results, and analysis;

Section 5 discusses the limitations of the research and concludes the paper.

2. Driver Fatigue Monitoring System

Building on the challenges highlighted in the introduction, this section details the architecture and functionality of the proposed Driver Fatigue Monitoring System (DFMS) to address driver fatigue effectively.

Driver monitoring technology has evolved rapidly, transitioning from indirect methods, such as seat pressure sensors and steering wheel torque sensors, to direct monitoring approaches based on visual imaging technology. Because visual perception captures the driver’s facial and posture information directly inside the cabin, it enables more intuitive and efficient identification of driver fatigue and abnormal behaviors, and it has become the mainstream technical approach in driver monitoring today [6,25].

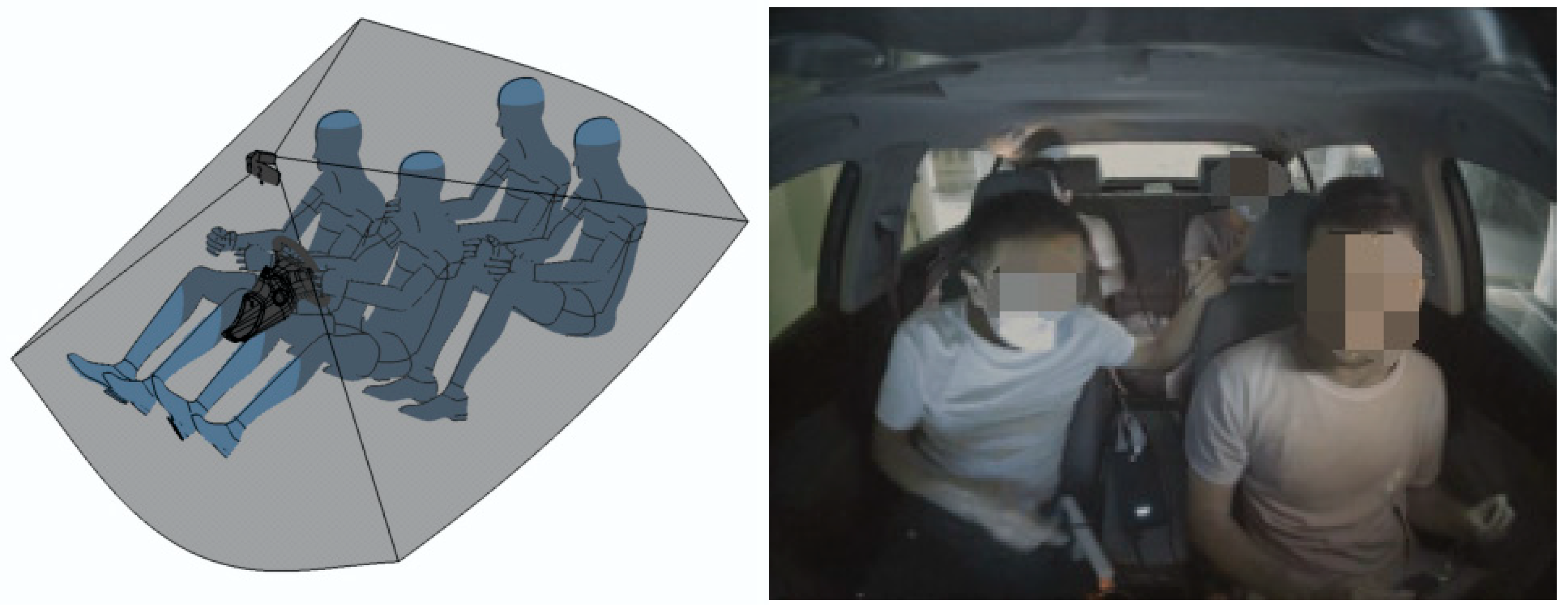

Figure 1 illustrates the in-cabin visual image monitoring interface.

2.1. Hardware Product Terminal

The hardware terminal of the Driver Fatigue Monitoring System (DFMS) consists of three main components: the main control board, the camera module, and the infrared supplementary light. The main control board employs a highly integrated, computationally powerful AI System-on-Chip (SoC), with the AX620A chip at its core. The camera module is equipped with an image sensor based on the OV05B1S chip, which captures driver images in real time and transmits them to the main control board for processing. The infrared supplementary light (IR LED) ensures stable acquisition of high-quality driver facial images under various lighting conditions. A schematic diagram of the hardware terminal is shown in Figure 2.

The proposed DFMS is implemented on an embedded AI System-on-Chip (SoC) platform commonly used for in-cabin driver monitoring applications, integrating image signal processing and neural network inference within a single control unit. This deployment configuration is representative of current production-grade DMS or cockpit domain controllers used in commercial vehicles. As the system does not rely on discrete GPUs or external computing units, but instead operates entirely on an embedded SoC together with a standard in-cabin camera and infrared illumination, the hardware resource requirements are compatible with those of existing commercial vehicle platforms that already support driver monitoring or occupant sensing functions.

2.2. Functional Design

The DFMS continuously monitors the driver’s eye and mouth states during driving through in-cabin cameras. The collected eye and mouth state data are combined with vehicle behavior states to determine the driver’s fatigue level. Fatigue alarms are graded into four levels (none, low, medium, and high), with the level determined from eye closure detection, yawn detection, and vehicle behavior state analysis [26].
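The four-level grading can be sketched as a small rule table. In the minimal Python example below, the speed gate (no alarms below an assumed 10 km/h), the PERCLOS and yawn-count thresholds, and the rule of taking the more severe of the eye-based and yawn-based evidence are all hypothetical placeholders; the source does not specify how eye closure, yawning, and vehicle behavior are actually combined.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Sequence


class FatigueLevel(IntEnum):
    NONE = 0
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class WindowFeatures:
    perclos: float            # fraction of closed-eye frames in the window
    yawn_count: int           # yawns detected in the window
    vehicle_speed_kph: float  # vehicle behavior state (speed only, for brevity)


# Hypothetical thresholds, for illustration only.
MIN_SPEED_KPH = 10.0
PERCLOS_STEPS = (0.15, 0.30, 0.45)  # thresholds for LOW, MEDIUM, HIGH
YAWN_STEPS = (1, 2, 3)


def _steps_reached(value: float, steps: Sequence[float]) -> int:
    """Number of thresholds the value reaches (0..3)."""
    return sum(1 for s in steps if value >= s)


def fatigue_level(f: WindowFeatures) -> FatigueLevel:
    # Vehicle behavior gate: no fatigue alarms while (nearly) stationary.
    if f.vehicle_speed_kph < MIN_SPEED_KPH:
        return FatigueLevel.NONE
    # Take the more severe of the eye-based and yawn-based evidence.
    level = max(_steps_reached(f.perclos, PERCLOS_STEPS),
                _steps_reached(f.yawn_count, YAWN_STEPS))
    return FatigueLevel(level)


print(fatigue_level(WindowFeatures(perclos=0.20, yawn_count=2, vehicle_speed_kph=80.0)).name)  # MEDIUM
```

Keeping the thresholds in named constants makes them easy to sweep during application-layer testing without touching the perception models.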

The overall functional workflow of the DFMS is illustrated in Figure 3. Upon vehicle startup and power-on, the system synchronously activates the camera (Camera Activate), the main control module (Control Activate), and the in-vehicle system (Car Bot). Once all three components are successfully activated, the system notifies the driver via the interface with the message, “The Cockpit Care system has been activated successfully,” and enters continuous monitoring mode.

In the active state, the system prompts the driver to perform eye closure or yawning actions to verify real-time monitoring functionality. When behaviors indicative of fatigue, such as eye closure or yawning, are detected, the system immediately conducts relevant data analysis. Upon identifying eye closure actions (eye opening and closing test) or yawning actions (yawn detection), the system triggers an alarm mechanism, displaying a fatigue warning image on the interface accompanied by an auditory alert to ensure the driver takes appropriate action promptly.

Additionally, the system includes an option for the driver to manually disable the fatigue monitoring function. When the driver selects this option via the “Cockpit Care” interface, the system issues a reminder: “The fatigue monitoring function is turned off, please drive safely”.

If any component, such as the camera or control module, fails to activate properly during the startup process, the system issues a warning to the driver, displaying the message, “The Cockpit Care system has failed to activate,” and advises the driver to check the system status and ensure safe driving.
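The activation and deactivation behavior described above reduces to a small decision function. In the sketch below, the function names and the status dictionary are hypothetical; only the three component names and the HMI message strings are taken from the text. Monitoring starts only when every component reports successful activation, and the failed components are returned so the interface can point the driver at them.

```python
from typing import Dict, List, Optional, Tuple

# HMI messages quoted from the functional description.
MSG_OK = "The Cockpit Care system has been activated successfully"
MSG_FAIL = "The Cockpit Care system has failed to activate"
MSG_OFF = "The fatigue monitoring function is turned off, please drive safely"


def startup_check(status: Dict[str, bool]) -> Tuple[str, List[str]]:
    """Return the startup HMI message and the names of components that failed.

    `status` maps a component name (e.g. "Camera", "Control", "Car Bot") to
    whether it activated; monitoring starts only if all of them succeeded.
    """
    failed = [name for name, ok in status.items() if not ok]
    return (MSG_FAIL if failed else MSG_OK), failed


def toggle_message(monitoring_enabled: bool) -> Optional[str]:
    """Reminder shown when the driver switches fatigue monitoring off."""
    return None if monitoring_enabled else MSG_OFF


msg, failed = startup_check({"Camera": True, "Control": False, "Car Bot": True})
print(msg, failed)  # The Cockpit Care system has failed to activate ['Control']
```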

This functional design fully embodies the human–machine interaction characteristics of the fatigue monitoring system, balancing the driver’s active intervention with automated system monitoring. Through clear and real-time alarm strategies, it effectively enhances driving safety and the reliability of the user experience.

2.3. Algorithmic Software Architecture

The algorithmic software architecture of the DFMS adopts a modular and hierarchical design, including image acquisition, image preprocessing, facial keypoint detection, posture feature analysis, and fatigue state determination, as shown in Figure 4. The processing steps are logically organized, starting with the initialization of the system and image acquisition, followed by face frame evaluation, head pose analysis, occlusion and fatigue detection modules, and culminating in the calculation of attention and fatigue levels. This structured representation helps clarify the modular nature of the system and how different algorithmic components interact to generate comprehensive driver state assessments.

To facilitate the readability of the complex workflow illustrated in Figure 4, the DFMS algorithmic software architecture can be interpreted as a sequence of four functional stages. First, image acquisition and preprocessing modules initialize the system, configure parameters, and generate normalized facial data from raw RGB and infrared inputs. Second, face frame validation and posture-related computations (e.g., face frame judgment, head angle calculation, and yaw estimation) determine whether subsequent feature extraction is activated. Third, a set of core perception submodules, highlighted in the dashed block in Figure 4 and including Face-PB, OCC-EyeOC, MouthOC, RC, and Gaze, extract facial posture, eye state, mouth state, risky behaviors, and gaze information. Finally, these intermediate outputs are fused at the application layer to compute attention- and fatigue-related scores and warning logic, resulting in the overall fatigue level assessment and corresponding user alerts.

Although the DFMS is implemented as an integrated in-vehicle system, the architecture in Figure 4 represents a composition of algorithmic perception modules and data-processing stages. Each module operates on well-defined inputs (e.g., RGB/IR images, facial keypoints, vehicle signals) and produces intermediate algorithmic outputs (e.g., eye state probabilities, mouth opening scores, head pose angles, gaze coordinates), which are subsequently fused through rule-based logic for higher-level driver state inference. This abstraction allows individual algorithms and data-processing steps to be independently evaluated and analyzed, consistent with standard practices in computer vision–based driver monitoring research.

During system operation, the DFMS camera first captures infrared (IR) and RGB images containing the driver’s face. Upon receiving a DFMS request, the system performs software initialization and parameter configuration, followed by preprocessing to output facial image data.

After preprocessing, the system employs a Face Detection three-Pack Algorithm to output the face frame, face orientation, and face keypoints. If no face is detected in the image, the system proceeds to process the next frame; if a face frame is successfully detected, the system conducts a Driver Face Frame Judgment. Upon confirming the driver’s face frame, it performs posture calculations (Face Frame Position Calculation) and retrieves real-time vehicle information (e.g., vehicle speed, door lock status) through Vehicle Status Judgment to enhance fatigue monitoring accuracy with dynamic vehicle data.

Subsequently, the system uses vehicle state information, such as steering wheel angle and turn signal status, to execute Face-based Frame Cropping for precise extraction of the driver’s facial region. The system then activates multiple submodules for feature analysis, including:

Face-PB: Head posture and image blur detection algorithm (Pose and Blur), outputting face angle data and image clarity scores.

OCC-EyeOC: Eye occlusion and closure state detection module, identifying eye closure levels (e.g., left eye open, right eye open, or closed).

MouthOC: Mouth state detection module, calculating mouth opening scores.

Gaze: Gaze detection module, outputting driver gaze coordinate data.

The system integrates these detection results for advanced feature fusion and state evaluation, including head angle calculation, head corner area calculation, right-left status calculation, and head-down status calculation. It further calculates the driver’s field of vision from the gaze coordinates to compute the attention level (Attention Level Calculation). Based on comprehensive features such as eye closure states, yawning actions, mouth opening conditions, head posture, and risky behavior scores, the system performs Next Frame Fatigue State Calculation to determine the real-time driver fatigue level and conducts Risky Behavior Calculation based on risk scores.
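The per-frame control flow described in this subsection (skip frames without a face, confirm that the face belongs to the driver, run the perception submodules, then hand the results and vehicle context to fusion) can be summarized as a skeleton. In this Python sketch the detector, the driver-face check, and the submodules are stubbed as plain callables with hypothetical names and return values; only the ordering and the early-exit branch mirror the text, and the fusion rules themselves are not reproduced.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional

# Hypothetical type aliases: a detector returns a face dict (box, orientation,
# keypoints) or None; each submodule maps (image, face) to its output.
Detector = Callable[[object], Optional[dict]]
Submodule = Callable[[object, dict], object]


@dataclass
class Frame:
    image: object                 # placeholder for the RGB/IR image
    vehicle: Dict[str, float]     # e.g. {"speed_kph": 60.0}


@dataclass
class FrameResult:
    face_found: bool
    features: Dict[str, object] = field(default_factory=dict)


def process_frame(frame: Frame, detect_face: Detector,
                  is_driver_face: Callable[[dict], bool],
                  submodules: Dict[str, Submodule]) -> FrameResult:
    face = detect_face(frame.image)
    if face is None or not is_driver_face(face):
        # No (driver) face: skip this frame and wait for the next one.
        return FrameResult(face_found=False)
    # Run the perception submodules (e.g. Face-PB, OCC-EyeOC, MouthOC, Gaze).
    features: Dict[str, object] = {name: fn(frame.image, face)
                                   for name, fn in submodules.items()}
    # Attach vehicle context so the fusion stage can combine both sources.
    features["vehicle"] = frame.vehicle
    return FrameResult(face_found=True, features=features)


# Usage with stub callables standing in for the real models.
result = process_frame(
    Frame(image="ir_frame_0001", vehicle={"speed_kph": 72.0}),
    detect_face=lambda img: {"box": (120, 80, 200, 200)},
    is_driver_face=lambda face: True,
    submodules={"MouthOC": lambda img, face: 0.12, "Gaze": lambda img, face: (0.1, -0.05)},
)
print(result.face_found, result.features["MouthOC"])  # True 0.12
```

Passing the models in as callables is what makes each stage replaceable by a stub, which is also the property the hierarchical decoupling tests in Section 3 rely on.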

This software architecture, through modular design, precise algorithmic processing, and multi-source feature fusion, ensures the real-time performance and high reliability of driver state monitoring, providing a solid technical foundation for subsequent fatigue warnings and safety interventions.

3. Testing Methods and Test Case Extraction

Having described the design of the Driver Fatigue Monitoring System (DFMS) in the previous section, this section introduces the testing methods and case extraction strategies used to rigorously evaluate its performance. From a methodological perspective, this section formalizes DFMS testing as a data-driven evaluation problem, where algorithmic perception accuracy and application-level decision logic are assessed through structured scenario decomposition and controlled data processing pipelines.

3.1. Hierarchical Decoupling Testing

To address the complex hardware–software interaction characteristics of the Driver Fatigue Monitoring System (DFMS), this study adopts a hierarchical decoupling testing approach to verify and evaluate system functionality with greater clarity and efficiency. In contrast to existing DFMS testing approaches, which often rely on integrated end-to-end vehicle testing that conflates both algorithmic performance and application outcomes, our approach separates these layers, offering more targeted and efficient testing. The hierarchical decoupling method enables precise localization of issues, which is often challenging in integrated testing approaches. Additionally, our approach incorporates a scenario elements method that allows for automated, comprehensive test case generation, reducing the need for manual test creation and ensuring broader scenario coverage. These innovations significantly enhance both the efficiency and accuracy of DFMS validation compared to existing methods, which often lack scalability and scenario diversity.

Hierarchical design and decoupling are widely used in software engineering, sharing common principles but emphasizing different aspects [27]. Hierarchical design decomposes complex systems into modular layers with clearly defined functions, improving modularity, reducing complexity, and enhancing maintainability and scalability [28]. Decoupling minimizes dependencies between modules, increasing independence and flexibility. This improves maintainability, facilitates targeted testing, and enables independent updates or replacements, thereby enhancing overall system reliability [29,30,31].

The DFMS, built on an embedded system, relies on efficient collaboration between hardware modules and software algorithms [32]. The hierarchical decoupling testing method allows for targeted independent testing of each component and precise evaluation of their interaction, ensuring overall reliability and stability. To illustrate this process more intuitively, Figure 5 provides an overview of the end-to-end testing framework, showing the sequential data flow between the Sensor, Algorithm, Engineering, and Testing Teams. The Sensor Team captures and transmits raw video data, the Algorithm Team processes it using detection and recognition models, the Engineering Team integrates the processed outputs into system functions, and the Testing Team evaluates the complete system through functional performance and user experience assessments. This structured flow reflects the collaborative yet modular nature of the approach, ensuring each team focuses on its specific role while maintaining overall system coherence.

Furthermore, the system development involves multiple technical teams, each with distinct responsibilities and testing tasks. To ensure the final user experience of the system, this study proposes a hierarchical decoupling testing strategy tailored for end-to-end testing scenarios:

- ① Clear Definition of Team Responsibilities for Collaborative Hierarchical Testing

The Sensor Team validates video data quality; the Algorithm Team verifies model accuracy, real-time performance, and processing pipelines; the Engineering Team tests functional logic and interfaces; and the Testing Team conducts comprehensive end-to-end evaluations to ensure design objectives and user requirements are met.

- ② Decoupling of Algorithmic and Application Layers for Targeted Precision Testing

Algorithm and application layers are tested separately. The Algorithm Team assesses models for face detection, head posture, eye state, and behavior recognition, while the Testing Team verifies application-layer functions such as driver state judgment, occlusion recognition, and fatigue/attention/risk alert logic.

- ③ End-to-End Black-Box Testing to Ensure Overall User Experience

After the independent layer-level tests, black-box testing evaluates overall functionality, responsiveness, and user experience in realistic scenarios.

Through hierarchical decoupling, this approach reduces module coupling, improves fault localization, and enhances process efficiency, strongly supporting DFMS development and optimization.
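One way to realize the algorithm/application decoupling in practice is to test the application-layer warning logic against scripted perception outputs instead of live model inference, so that a failing test points at the rules rather than at the detector. The Python sketch below uses the standard `unittest` module; `attention_warning` is a hypothetical rule (warn when the gaze stays off the road for longer than a threshold) standing in for the real application-layer logic, and the 10 frames/s rate and 2 s threshold are assumptions.

```python
import unittest
from typing import Sequence

FPS = 10                  # assumed frame rate of the perception outputs
LOOK_AWAY_LIMIT_S = 2.0   # hypothetical attention-warning threshold


def attention_warning(gaze_on_road: Sequence[bool], fps: int = FPS,
                      limit_s: float = LOOK_AWAY_LIMIT_S) -> bool:
    """Application-layer rule: warn if gaze is off-road for longer than limit_s."""
    run = 0
    for on_road in gaze_on_road:
        run = 0 if on_road else run + 1
        if run / fps > limit_s:
            return True
    return False


class AttentionLogicTest(unittest.TestCase):
    """Feeds scripted (mocked) perception outputs; no camera or model involved."""

    def test_short_glance_does_not_warn(self):
        # 1.5 s off-road, below the 2 s limit.
        self.assertFalse(attention_warning([True] * 5 + [False] * 15 + [True] * 5))

    def test_long_look_away_warns(self):
        # 2.5 s off-road, above the 2 s limit.
        self.assertTrue(attention_warning([False] * 25))


if __name__ == "__main__":
    unittest.main(argv=["attention_logic_test"], exit=False)
```

The same test cases can later be replayed against recorded outputs from the Algorithm Team's models, so a discrepancy between the two runs isolates a perception problem from a logic problem.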

3.2. Scenario-Based Test Cases

In product-level testing of the DFMS, black-box testing is extensively utilized to validate system functionality and performance. Particularly in handling complex or specialized application scenarios, black-box testing enables the testing team to comprehensively assess the system’s user experience under diverse environments and conditions [33]. Traditionally, test scenario cases are manually crafted based on prior experience and subjective judgment, which is often inefficient and prone to incomplete scenario coverage. To overcome these limitations, this study proposes a structured automated script-based method for generating test scenarios. By leveraging standardized scenario decomposition and Python programming, this approach ensures comprehensive scenario coverage, significantly improving the efficiency and accuracy of test case generation.

In this method, test scenario cases are organized through systematic structural decomposition along three dimensions: testing pipeline (testing behaviors and duration), testing elements (environment, accessories, occlusions, etc.), and testing subjects (gender, ethnicity, age, etc.). Each dimension includes various variables and factors, which are combined to generate specific test cases. For instance, testing actions and durations form the core of testing behaviors, covering combinations such as left gaze, right gaze, and different durations. Environmental factors account for variations in lighting conditions and dynamic versus static scenes, including representative challenging conditions such as rapid illumination transitions, strong backlighting, and motion-induced image perturbations, which are commonly encountered in real-world driving environments. Accessories and occlusions include factors such as glasses and hats, while testing subjects cover differences in driver gender, ethnicity, and age (as shown in Figure 6).
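The three-dimensional decomposition can be captured in a nested data structure (dimension, then factor, then levels). In this Python sketch the factor names and levels are illustrative examples drawn from the categories mentioned in the text, not the study's actual element set; the helpers flatten the hierarchy into a factor list and count how many cases an exhaustive enumeration would produce.

```python
from math import prod
from typing import Dict, List, Tuple

# Dimension -> factor -> levels (illustrative values only).
SCENARIO_ELEMENTS: Dict[str, Dict[str, List[str]]] = {
    "testing_pipeline": {
        "behavior": ["left_gaze", "right_gaze", "eye_closure", "yawn"],
        "duration_s": ["1", "3", "5"],
    },
    "testing_elements": {
        "lighting": ["daylight", "night_ir", "backlight", "rapid_transition"],
        "scene": ["static", "dynamic"],
        "accessory": ["none", "glasses", "hat"],
    },
    "testing_subjects": {
        "gender": ["female", "male"],
        "age_group": ["18-30", "31-50", "51+"],
    },
}


def flatten(elements: Dict[str, Dict[str, List[str]]]) -> List[Tuple[str, List[str]]]:
    """Return ("dimension.factor", levels) pairs in a stable order."""
    return [(f"{dim}.{factor}", levels)
            for dim, factors in elements.items()
            for factor, levels in factors.items()]


def exhaustive_count(elements: Dict[str, Dict[str, List[str]]]) -> int:
    """Number of test cases if every level combination were enumerated."""
    return prod(len(levels) for _, levels in flatten(elements))


print(exhaustive_count(SCENARIO_ELEMENTS))  # 1728
```

Even this small, hypothetical element set yields 4 × 3 × 4 × 2 × 3 × 2 × 3 = 1728 combinations, which motivates the reduction algorithms in Section 3.3.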

The creation of test scenario cases is based on multiple scenario elements, each containing various variables. The different combinations of these variables form diverse test cases, ensuring the breadth and completeness of testing. This approach not only enhances the comprehensiveness of test coverage but also effectively reduces biases that may arise from manual test case creation, thereby improving the reliability of test results. Using this structured, Python script-based automated test scenario-generation method, testers can efficiently generate test cases covering diverse usage scenarios in a short time, which supports verifying that system performance meets design expectations across varied environments.

3.3. Scenario Extraction Algorithms

Product-level system test cases are typically constructed and derived from scenario elements. Traditional test case creation relies heavily on tester experience, which is often inefficient and prone to incomplete coverage. To address these challenges, this study investigates test scenario extraction using three commonly used combinatorial test-design algorithms: the Cartesian product algorithm, the orthogonal analysis algorithm, and the pairwise analysis algorithm [34,35,36].

3.3.1. Cartesian Product Algorithm

The Cartesian product algorithm is a widely adopted method in product-level system testing. It generates test scenario cases by enumerating every combination of the input test elements, enabling the identification of defects and issues in the system under diverse operating conditions [34,37,38]. Each test scenario case covers a distinct combination of input test elements, thereby verifying the correctness and stability of the system across various conditions [39].

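Given several sets $A_1, A_2, \ldots, A_n$, the result of the Cartesian product is all possible ordered combinations of their elements. The mathematical expression is as follows:

$$A_1 \times A_2 \times \cdots \times A_n = \{(a_1, a_2, \ldots, a_n) \mid a_i \in A_i,\ i = 1, 2, \ldots, n\}$$

Suppose there are two sets $A$ and $B$; then the Cartesian product is as follows:

$$A \times B = \{(a, b) \mid a \in A,\ b \in B\}$$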

For the driver fatigue monitoring system, the Cartesian product generates diverse scenarios, including lighting conditions, driving behaviors, and driver characteristics.
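A minimal Python sketch of Cartesian-product case generation can rely on `itertools.product`, which yields every ordered combination of the given level lists. The factor names and levels below are hypothetical; each generated case is returned as a dictionary so that it can be written directly into a test plan.

```python
from itertools import product
from typing import Dict, Iterator, List


def cartesian_cases(factors: Dict[str, List[str]]) -> Iterator[Dict[str, str]]:
    """Yield one test case per element of the Cartesian product of all factor levels."""
    names = list(factors)
    for combo in product(*(factors[name] for name in names)):
        yield dict(zip(names, combo))


factors = {
    "lighting": ["day", "night", "backlight"],
    "behavior": ["eye_closure", "yawn"],
    "accessory": ["none", "glasses"],
}
cases = list(cartesian_cases(factors))
print(len(cases))  # 12
print(cases[0])    # {'lighting': 'day', 'behavior': 'eye_closure', 'accessory': 'none'}
```

Because the function is a generator, cases can be streamed to disk without holding the whole product in memory, although the total number of cases still grows multiplicatively with every added factor.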

Although the Cartesian product algorithm is suitable for the virtual simulation test stage, it generates all possible combinations of elements, resulting in a very large number of test scenarios that may cause inefficiency and excessive resource consumption in actual testing. Therefore, in real-vehicle testing, the number of test scenarios should be reduced as far as possible while maintaining adequate coverage, in order to reduce test time and cost. To this end, orthogonal analysis and pairwise analysis offer more economical alternatives.

3.3.2. Orthogonal Analysis Algorithm

The orthogonal analysis algorithm is a testing approach based on mathematical statistics that selects a reduced set of test scenarios covering the combinations of system-level elements in a balanced way. It enables comprehensive testing of the product system with fewer test scenario cases, helping to uncover potential problems and guide optimization [40,41]. Orthogonality means that, across the test scenario cases, the level combinations of any two test elements appear evenly and in balance, achieving the highest possible coverage for the given number of cases [42].

Orthogonal analysis is based on the design concept of the orthogonal array, a mathematical structure that arranges the levels of each factor in a balanced way so that, for any two factors, every combination of their levels appears equally often across the test cases. An orthogonal array is commonly denoted $L_n(m^k)$, and its structure is as follows:

$$L_n(m^k) = \left[a_{ij}\right]_{n \times k}, \quad a_{ij} \in \{1, 2, \ldots, m\}$$

where $n$ is the number of test cases (rows), $k$ is the number of factors (columns), and $m$ represents the number of levels of each factor, ensuring that the combinations of factor levels are evenly distributed across the rows (i.e., the test cases).

The orthogonal analysis algorithm generates balanced combinations of driving behaviors, environments, and driver characteristics, ensuring even distribution of factor levels.

This method can significantly reduce the number of test cases while ensuring that every combination of levels of any two factors is covered. It is especially suitable for situations with many test dimensions and complex combinations.
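When every factor has the same prime number of levels $p$, a strength-2 orthogonal array with $p^2$ rows and up to $p + 1$ columns can be built from linear combinations modulo $p$. The Python sketch below builds the 9-run array for four 3-level factors (usually written $L_9(3^4)$) this way, checks the balance property, and maps the integer levels onto hypothetical scenario factors. This construction is one standard option chosen for illustration; the study's own scripts may instead use tabulated arrays.

```python
from collections import Counter
from itertools import combinations
from typing import Dict, List


def orthogonal_array(p: int, k: int) -> List[List[int]]:
    """Strength-2 orthogonal array with p*p rows and k columns (p prime, k <= p + 1).

    Row (a, b) uses the columns b, a, a+b, a+2b, ..., a+(p-1)b (mod p). Any two
    of these linear forms are independent over GF(p), so each column pair
    contains every ordered level pair exactly once.
    """
    if p < 2 or any(p % d == 0 for d in range(2, int(p ** 0.5) + 1)):
        raise ValueError("p must be prime for this construction")
    if k > p + 1:
        raise ValueError("this construction supports at most p + 1 factors")
    rows = []
    for a in range(p):
        for b in range(p):
            cols = [b] + [(a + c * b) % p for c in range(p)]
            rows.append(cols[:k])
    return rows


def is_strength_2(rows: List[List[int]], p: int) -> bool:
    """Check that every column pair shows all p*p level pairs equally often."""
    for i, j in combinations(range(len(rows[0])), 2):
        counts = Counter((r[i], r[j]) for r in rows)
        if len(counts) != p * p or len(set(counts.values())) != 1:
            return False
    return True


# Hypothetical 3-level scenario factors mapped onto the array columns.
factors: Dict[str, List[str]] = {
    "lighting": ["day", "night", "backlight"],
    "behavior": ["eye_closure", "yawn", "left_gaze"],
    "accessory": ["none", "glasses", "hat"],
    "age_group": ["18-30", "31-50", "51+"],
}
oa = orthogonal_array(3, len(factors))
cases = [{name: levels[row[col]] for col, (name, levels) in enumerate(factors.items())}
         for row in oa]
print(len(cases), is_strength_2(oa, 3))  # 9 True (versus 3**4 = 81 exhaustive)
```

Nine cases thus replace 81 while still exercising every level pair of every two factors exactly once.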

3.3.3. Pairwise Analysis Algorithm

The pairwise analysis algorithm selects test cases so that every combination of levels of any two factors appears in at least one test case. It is usually treated as a combinatorial optimization problem: covering all such level pairs with as few non-repeating combinations as possible [36]. The pairwise algorithm is based on the following two assumptions:

- ① The dimensions are orthogonal, i.e., mutually independent with no overlap between them;

- ② According to statistical analyses of defect data, 73% of defects (35% single-factor and 38% two-factor) are caused by a single factor or by the interaction of two factors, and 17% of defects are caused by the interaction of three factors.

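The goal of pairwise analysis is to select combinations of key factors in the test cases that maximize the chance of defect exposure while reducing the number of test cases. The mathematical expression is as follows:

$$\min_{T} |T| \quad \text{s.t.} \quad \forall\, 1 \le i < j \le k,\ \forall\, (u, v) \in V_i \times V_j,\ \exists\, t \in T:\ t_i = u,\ t_j = v$$

where $T$ is the set of test cases, $k$ is the number of factors, $V_i$ is the set of levels of factor $i$, and $t_i$ is the level of factor $i$ in test case $t$.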

Pairwise analysis generates a small, often near-minimal set of test cases by combining test factors, making it efficient for system testing.

To clarify the trade-offs among the three scenario-generation approaches, Table 1 compares their time/space complexity and notes practical limitations. Let $k$ denote the number of factors and $m_i$ the number of levels for factor $i$ (with $i = 1, 2, \ldots, k$). The total number of exhaustive combinations is $N = \prod_{i=1}^{k} m_i$.

In practice, the exhaustive Cartesian product guarantees full coverage but scales exponentially with $k$. Orthogonal designs reduce the test set to $O(m_{\max}^{t})$ scale, where $m_{\max} = \max_i m_i$ and $t$ is the strength of the array, while preserving $t$-wise balance when a suitable array exists. Pairwise covering arrays typically yield the smallest sets and near-linear generation time, making them preferable for resource-constrained, on-road validation; higher-order risks can be mitigated by augmenting them with a small number of targeted cases. The full Python scripts for the above three algorithms are provided in the Supplementary Material (S1, ZIP).
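As a worked illustration of these scaling differences, consider $k = 10$ factors with $m_i = 5$ levels each, the configuration used in the timing discussion of this section. The exhaustive count follows from the product of the level counts; for a strength-2 orthogonal array, the Rao bound $n \ge 1 + \sum_i (m_i - 1)$ applies, and with equal levels the run count must also be a multiple of $m^2 = 25$; any pairwise covering set must contain every level pair of the two largest factors, whose level counts are denoted $m_{(1)}$ and $m_{(2)}$:

$$
\begin{aligned}
N_{\text{exhaustive}} &= \prod_{i=1}^{10} m_i = 5^{10} = 9\,765\,625,\\
n_{\text{OA}} &\ge 1 + \sum_{i=1}^{10} (m_i - 1) = 1 + 10 \times 4 = 41 \;\Rightarrow\; n_{\text{OA}} \ge 50,\\
|T_{\text{pairwise}}| &\ge m_{(1)}\, m_{(2)} = 5 \times 5 = 25.
\end{aligned}
$$

These are lower bounds derived from the definitions, not the set sizes produced by the study's scripts; actual sizes depend on the construction used, but they show why the reduced designs are orders of magnitude smaller than exhaustive enumeration.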

The computational complexity of the automated test case generation process is a key consideration when dealing with large datasets and numerous test variables. Our Python scripts implement the Cartesian product, orthogonal analysis, and pairwise analysis algorithms to generate test cases, and their computational requirements vary significantly with the size of the input datasets, the number of variables, and the complexity of the test scenarios. The Cartesian product algorithm, while comprehensive, produces a large number of combinations, which increases processing time. For instance, generating a test case set with 10 variables and 5 levels per variable using the Cartesian product method required approximately 4 h on a typical desktop setup (Intel i7, 16 GB RAM). In contrast, the orthogonal analysis and pairwise analysis algorithms reduce the number of test cases, lowering the average processing time for similar configurations to 1.5 h. The computational load also increases with the number of test variables and when high-resolution images are used for scenario simulations; in our current setup, high-resolution image processing (e.g., 1920 × 1080 pixel RGB images) adds approximately 2 h to the total test case generation time. To improve efficiency in real-time applications, we aim to optimize the scripts through parallel processing and algorithmic enhancements in future work. More powerful hardware (e.g., multi-core processors or GPUs) could also significantly reduce the time required for these processes.

Beyond computational aspects, the choice of these algorithms was guided by their complementary strengths: Cartesian product provides exhaustive coverage for simulation datasets, orthogonal analysis achieves balanced coverage with fewer cases, and pairwise analysis minimizes test size for on-road validation. Moreover, scenario design incorporated demographic and environmental diversity (e.g., gender, age, lighting, accessories) to enhance representativeness. Sample sizes were determined to balance statistical coverage with feasibility, ensuring rigorous yet practical validation.

4. Testing and Validation Analysis

Building upon the testing methods outlined in Section 3, this section presents empirical validation through various testing environments and analyses, demonstrating the effectiveness of the system.

4.1. Test Validation Environment Design

Product testing and validation generally rely on quantifiable metrics and on the design of corresponding interfaces and signals to statistically evaluate the accuracy of test results [43]. This section constructs a testing environment based on the system architecture shown in Figure 7, comprising three components: the Vehicle Signal Input and Acquisition Module (Vehicle), the DFMS Core Processing Unit, and the human–machine interaction (HMI) and Measurement Interface Module.

In the Vehicle Module, the “Power On” condition serves as the test initiation trigger, simulating a real ignition scenario. A unified signal interface collects multidimensional raw signals, including functional status bits, vehicle power mode, vehicle speed, gear position, steering status, turn signal status, door lock status, seatbelt status, and system physical time, which are input into the DFMS. The DFMS Core Processing Unit first determines the system’s operational phase using a functional state machine. Upon meeting switch-state transition and readiness conditions, the system sequentially enters standby, warm-up, and activation phases. Subsequently, it employs four application-layer perception–processing–logic (PPL) modules, namely Fatigue Level Warning, Attention Warning, Dangerous Behavior Warning, and Leave Warning. Here, PPL refers to a rule-based application-layer processing stage that integrates perception outputs with vehicle state information to generate driver state judgments and warning decisions.
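The phase sequence described above can be sketched as a small state machine. The paper does not list the exact transition conditions, so the signal names, the 3 s warm-up duration, and the 10 km/h activation speed below are hypothetical placeholders; the sketch only shows how standby, warm-up, and activation can be gated on switch-state and readiness signals, with the PPL warning modules enabled only in the active phase.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Phase(Enum):
    OFF = auto()
    STANDBY = auto()
    WARM_UP = auto()
    ACTIVE = auto()


@dataclass
class VehicleSignals:
    power_on: bool         # vehicle power mode ("Power On" trigger)
    dfms_switch_on: bool   # function switch state (hypothetical signal name)
    camera_ready: bool     # readiness flag (hypothetical signal name)
    speed_kph: float       # vehicle speed
    time_s: float          # system physical time


class DfmsStateMachine:
    WARM_UP_S = 3.0        # hypothetical warm-up duration
    MIN_SPEED_KPH = 10.0   # hypothetical activation speed

    def __init__(self):
        self.phase = Phase.OFF
        self._warm_up_start = None

    def step(self, s: VehicleSignals) -> Phase:
        """Advance at most one phase per call, in the order described in the text."""
        ready = s.dfms_switch_on and s.camera_ready
        if not s.power_on:
            self.phase, self._warm_up_start = Phase.OFF, None
        elif self.phase is Phase.OFF:
            self.phase = Phase.STANDBY
        elif self.phase is Phase.STANDBY and ready:
            self.phase, self._warm_up_start = Phase.WARM_UP, s.time_s
        elif self.phase in (Phase.WARM_UP, Phase.ACTIVE) and not ready:
            self.phase, self._warm_up_start = Phase.STANDBY, None
        elif (self.phase is Phase.WARM_UP
              and s.time_s - self._warm_up_start >= self.WARM_UP_S
              and s.speed_kph >= self.MIN_SPEED_KPH):
            self.phase = Phase.ACTIVE
        return self.phase

    @property
    def ppl_enabled(self) -> bool:
        """The four PPL warning modules run only in the ACTIVE phase."""
        return self.phase is Phase.ACTIVE
```

Keeping the phase logic separate from the PPL modules mirrors the decoupled structure of the test bench: the state machine can be verified from vehicle signals alone before any warning logic is exercised.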

In the HMI and Measurement Interface Module, the system uses six icons—“Fault Alarm,” “Activation Status,” “Fatigue Warning,” “Attention Warning,” “Dangerous Behavior,” and “Leave Warning”—to visually represent various alert states. It also synchronously records input-output signals and alert timing, providing quantitative support for subsequent virtual simulation testing, basic function testing, and subjective evaluation testing.

This modular design not only replicates multi-source data inputs under real-world conditions, but also enables precise validation of the system’s functional performance, laying a solid foundation for product optimization and methodological research.

4.2. Virtual Simulation Testing

Virtual simulation testing plays a crucial role in validating the Driver Fatigue Monitoring System (DFMS). Its objective is to evaluate the system’s performance and stability under diverse operating conditions by reproducing real-world driving scenarios. Test datasets are constructed from scenario videos collected in real vehicles, which then undergo data preprocessing, annotation, and analysis to generate test cases. This approach provides broad scenario coverage, helping to identify potential issues across application contexts and to guide the optimization of system functionality.

The implementation steps for virtual simulation testing are illustrated in Figure 8 and consist of the following four stages:

Data Preprocessing: Scenario videos capturing driver behaviors are collected in real-vehicle environments. These videos undergo segmentation, sampling, and cleaning to remove noisy data, ensuring data quality meets testing requirements.

Data Annotation: Preprocessed video data are manually or semi-automatically annotated to generate corresponding images and JSON files. Annotations include driver facial features, behavioral states (e.g., eye closure, yawning, phone use), and environmental factors (e.g., lighting variations, occlusions).

Result Analysis: Using the annotated dataset, test cases are executed on a virtual simulation platform, and key metrics such as recognition accuracy, false positive rate, and false negative rate are statistically analyzed across different scenarios.

Data Feedback: Test results are fed back into the system development process to optimize algorithm models and functional design, enhancing the system’s robustness in complex scenarios.
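The Result Analysis stage can be sketched as a per-scenario aggregation over annotated frames. The JSON fields below (`frame`, `scenario`, `label`, `pred`) are a hypothetical schema, not the study's annotation format: `label` is the annotated ground truth for one target behavior and `pred` is the system's binary output for that frame.

```python
import json
from collections import defaultdict


def per_scenario_metrics(records):
    """Accuracy, false positive rate, and false negative rate per scenario.

    Rates whose denominator is zero (no negatives or no positives) are None.
    """
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for r in records:
        c = counts[r["scenario"]]
        if r["label"] and r["pred"]:
            c["tp"] += 1
        elif r["pred"]:
            c["fp"] += 1
        elif r["label"]:
            c["fn"] += 1
        else:
            c["tn"] += 1
    report = {}
    for scenario, c in counts.items():
        negatives = c["fp"] + c["tn"]
        positives = c["tp"] + c["fn"]
        report[scenario] = {
            "accuracy": (c["tp"] + c["tn"]) / sum(c.values()),
            "fpr": c["fp"] / negatives if negatives else None,
            "fnr": c["fn"] / positives if positives else None,
        }
    return report


# Made-up annotations for illustration only
annotations = json.loads("""[
  {"frame": "0001.jpg", "scenario": "tunnel_exit", "label": 1, "pred": 1},
  {"frame": "0002.jpg", "scenario": "tunnel_exit", "label": 0, "pred": 1},
  {"frame": "0003.jpg", "scenario": "night", "label": 0, "pred": 0},
  {"frame": "0004.jpg", "scenario": "night", "label": 1, "pred": 0}
]""")
print(per_scenario_metrics(annotations))
```

Breaking the rates down by scenario, rather than pooling all frames, is what lets the Data Feedback stage point developers at specific conditions such as tunnel exits or low light.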

The core of virtual simulation testing lies in the construction of the test dataset. This study leverages the hierarchical decoupling and scenario elements approach proposed in Section 3 to design a virtual simulation test case library. The test case library employs the Cartesian product algorithm to generate a comprehensive set of test scenarios, covering various test elements (e.g., lighting conditions, driver gender, ethnicity, age, behavior types) and test subjects, ensuring that the test scenarios simulate a wide range of real-world driving conditions. During the testing process, the system uses in-cabin cameras to capture driver images, analyzing facial features and behavioral states through deep learning algorithms to output corresponding fatigue or attention alert signals.
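A Cartesian-product case library of this kind can be sketched with `itertools.product`. The element names and levels below are a hypothetical subset chosen for illustration (the study's actual element lists are defined in Section 3), and the `VS-` case ID format is likewise invented here.

```python
import itertools
import json

# Hypothetical subset of scenario elements and levels
SCENARIO_ELEMENTS = {
    "lighting": ["daylight", "backlight", "night_ir"],
    "gender": ["female", "male"],
    "age_group": ["young", "middle_aged", "senior"],
    "behavior": ["eye_closure", "yawning", "phone_use", "smoking"],
}


def build_case_library(elements):
    """Enumerate every combination of element levels as one test case record."""
    names = list(elements)
    cases = []
    level_lists = (elements[n] for n in names)
    for idx, combo in enumerate(itertools.product(*level_lists), start=1):
        cases.append({"case_id": f"VS-{idx:04d}", **dict(zip(names, combo))})
    return cases


library = build_case_library(SCENARIO_ELEMENTS)
print(len(library))               # 3 * 2 * 3 * 4 = 72
print(json.dumps(library[0]))     # first case, serializable for the test platform
```

Each additional element multiplies the library size by its number of levels, which is why the exhaustive approach is reserved for simulation, where cases are cheap to replay.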

4.3. Basic Function Testing

Basic function testing seeks to verify whether the Driver Fatigue Monitoring System (DFMS) can achieve its core functionalities as designed, encompassing both application-level and system-level testing. The tests are primarily conducted in a bench testing environment, utilizing a series of designed test cases to rapidly evaluate the system’s functional performance and stability under typical usage scenarios. The test cases cover various driver behavioral states and system configuration functions to ensure accurate recognition and response across diverse conditions.

Table 2 provides a detailed description of the basic function test cases designed for the DFMS and their corresponding test results. For clarity, test icons are consistently labeled using standard terminology (e.g., “Fatigue Warning” instead of mixed terms), and units such as time (s) are explicitly indicated.

Through the execution of the aforementioned test cases, the system accurately triggered corresponding interface prompts and alert icons when detecting driver behaviors such as eye closure, yawning, phone use, smoking, drinking, mask wearing, and sunglasses wearing. This indicates that the application function modules effectively recognize typical driver fatigue and distraction behaviors. Additionally, the switch settings test for system functions validated the system’s correct response to configuration changes, with interface transition logic aligning with the expected design.

The results of the basic function testing demonstrate that the Driver Fatigue Monitoring System (DFMS) operates stably in the bench testing environment, with functionality meeting design requirements. No significant functional defects were identified during the testing process, providing a solid foundation for subsequent virtual simulation and subjective evaluation tests. Still, it should be noted that the idealized conditions of the bench testing environment may not fully reflect the complexity of real-world driving scenarios. Therefore, further testing in real-world conditions is necessary to validate the system’s robustness.

To further demonstrate the utility of the proposed testing framework, we compared two distinct Driver Fatigue Monitoring Systems (DFMSs) using the same set of generated test scenarios, which included variations in driving behaviors, environmental conditions, and vehicle configurations. The comparison revealed key differences between the two systems in detection accuracy and false positive rates. It highlights the framework’s ability to generate test scenarios consistently and to evaluate multiple systems under standardized conditions, enabling consistent and reliable performance assessments.

4.4. Subjective Evaluation Testing

Subjective evaluation testing aims to assess the user experience and practical effectiveness of the Driver Fatigue Monitoring System (DFMS) by reproducing typical real-world driving scenarios. This testing approach employs structured storylines and scenario scripts to identify potential issues under various driving conditions and to provide a basis for design optimization. The system uses an in-cabin camera module to capture the driver’s facial and body images and analyzes fatigue- and distraction-related behaviors (e.g., eye closure, smoking, phone use) through deep learning algorithms to maintain monitoring accuracy in complex environments.

The testing specifically focuses on the impact of lighting variations on the performance of the camera module. Daytime scenarios emphasize challenging lighting conditions, such as bridge underpasses, tunnel entries and exits, underground parking garages, and direct sunlight at sunrise or sunset, which introduce abrupt illumination changes and high-dynamic-range visual disturbances. Nighttime scenarios prioritize performance under varying brightness levels (particularly low-light conditions) and specific situations (e.g., brake lights from the vehicle ahead or headlights from the vehicle behind). To this end, four subjective evaluation test routes were designed, covering a diverse range of driving scenarios. The key performance indicators (KPIs) for these routes, including false positive rates (FPRs), response times (RTs), and driver satisfaction scores (DSSs), are summarized in Table 3. The results demonstrate stable performance across diverse conditions, with Route 4 achieving the highest satisfaction owing to robust recognition performance and minimal false alerts.

In this study, the response time (RT) reported in Table 3 represents the end-to-end on-board processing delay in real vehicle deployment. Specifically, RT is defined as the elapsed time between the onset of a target driver behavior (identified via frame-level annotation or manual trigger) and the corresponding warning output on the human–machine interface (HMI), including visual icon activation and/or audible alert. Across the four real-world routes, the observed average RT ranges from 1.09 s to 1.34 s, indicating that the proposed DFMS pipeline is generally consistent with the real-time requirements of practical in-vehicle use, where warning delays within approximately 1–2 s are typically considered acceptable for driver assistance and monitoring systems.
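Following this definition, RT can be computed by pairing each annotated behavior onset with the first HMI warning of the same type at or after it. The sketch below is a simplified illustration: the `(timestamp, behavior)` log format and the 5 s matching window are assumptions, a single warning may be matched to more than one onset, and the timestamps in the usage example are made up.

```python
from bisect import bisect_left
from statistics import mean


def response_times(onsets, warnings, max_window_s=5.0):
    """Pair each behavior onset with the first HMI warning of the same type.

    onsets, warnings: lists of (timestamp_s, behavior) tuples.
    Returns (list of RTs in seconds, number of onsets with no warning
    within max_window_s, which are counted as misses).
    """
    by_type = {}
    for t, kind in sorted(warnings):
        by_type.setdefault(kind, []).append(t)
    rts, misses = [], 0
    for t0, kind in onsets:
        times = by_type.get(kind, [])
        i = bisect_left(times, t0)  # first warning at or after the onset
        if i < len(times) and times[i] - t0 <= max_window_s:
            rts.append(times[i] - t0)
        else:
            misses += 1
    return rts, misses


onsets = [(10.00, "phone_use"), (42.50, "eye_closure"), (80.00, "smoking")]
warnings = [(11.20, "phone_use"), (43.60, "eye_closure")]
rts, misses = response_times(onsets, warnings)
print(f"mean RT = {mean(rts):.2f} s, missed onsets = {misses}")  # mean RT ≈ 1.15 s, 1 missed onset
```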

During the testing process, participants drove along predefined routes while the system continuously monitored and recorded fatigue warnings, distraction warnings, and related behavior recognition results. Subjective evaluation was conducted through driver feedback and observational records, assessing the system’s response timeliness, alert accuracy, and user acceptance across different scenarios. Preliminary results indicate that the system effectively detects fatigue behaviors in both daytime and nighttime scenarios. Nonetheless, occasional false positives or false negatives were observed under extreme lighting conditions, such as tunnel entrances and exits or strong direct sunlight, indicating that the algorithm parameters require further optimization. The subjective evaluation testing provides critical data for optimizing the system’s user experience in real-world applications. Future work can increase test sample sizes and expand scenario complexity to further enhance the system’s adaptability and reliability.

4.5. Algorithm Model Testing

The algorithm model testing was conducted as an algorithm-level performance evaluation and engineering validation of the deployed DFMS perception models. The test data were collected from two Asian adult male subjects in real-vehicle environments, covering representative driver behaviors such as phone use, drinking, smoking, eye opening/closure, and mask wearing. A total of approximately 20,000 in-vehicle images were collected for this purpose. It should be noted that this dataset was not intended to support population-level generalization or fairness evaluation, but rather to verify the correctness, stability, and real-time performance of the algorithm models under controlled deployment conditions. The results were obtained using the virtual simulation test method described in Section 4.2.

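Table 4 shows the test results of each model on the dataset. Accuracy, precision, recall, and F1-score were used for evaluation [44], calculated as follows:

$$\mathrm{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$$

$$\mathrm{Precision} = \frac{TP}{TP + FP}, \qquad \mathrm{Recall} = \frac{TP}{TP + FN}$$

$$F1 = \frac{2 \times \mathrm{Precision} \times \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}}$$

where TP, TN, FP, and FN denote the numbers of true positives, true negatives, false positives, and false negatives, respectively.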

One of the critical models for driver fatigue assessment detects yawning. It distinguishes three mouth states (normal, i.e., closed; half-open; and fully open), and only a fully open mouth is classified as a yawn. A total of 103,000 paired IR/RGB images were collected, comprising 79,000 closed-mouth, 12,000 half-open mouth, and 12,000 fully open mouth images.

Table 5 presents the test results for the mouth algorithm model.

For the mouth (yawn) detection experiments, the dataset was partitioned into training, validation, and test subsets following a subject-independent split strategy, such that images from the same recording session and subject were not shared across different subsets. This design was adopted to mitigate potential data leakage caused by frame-level temporal correlations.
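A subject-independent split of this kind can be sketched by assigning whole subjects, and therefore all of their sessions and frames, to exactly one subset. The record fields, the 70/15/15 ratios, and the fixed seed are assumptions for illustration; the paper does not report its split proportions. Ratios here apply to the number of subjects, so frame-level proportions may differ.

```python
import random
from collections import defaultdict


def subject_independent_split(samples, ratios=(0.7, 0.15, 0.15), seed=0):
    """Assign whole subjects (and hence all their sessions and frames) to one subset."""
    by_subject = defaultdict(list)
    for sample in samples:
        by_subject[sample["subject"]].append(sample)
    subjects = sorted(by_subject)
    random.Random(seed).shuffle(subjects)  # reproducible subject order
    n_train = round(ratios[0] * len(subjects))
    n_val = round(ratios[1] * len(subjects))
    assignment = {
        "train": subjects[:n_train],
        "val": subjects[n_train:n_train + n_val],
        "test": subjects[n_train + n_val:],
    }
    return {name: [s for subj in subj_list for s in by_subject[subj]]
            for name, subj_list in assignment.items()}


# Hypothetical frame index: 10 subjects x 2 recording sessions x 3 frames each
frames = [{"subject": f"S{s:02d}", "session": f"R{r}", "frame": i}
          for s in range(10) for r in range(2) for i in range(3)]
splits = subject_independent_split(frames)
print({name: len(part) for name, part in splits.items()})
```

Splitting at frame level instead would place near-identical consecutive frames in both training and test sets, inflating the reported TPR; grouping by subject removes that leakage path.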

Model performance was evaluated on the held-out test set using a per-frame evaluation protocol, and true positive rates (TPRs) were reported at fixed false positive rate (FPR) operating points of 1%, 0.5%, and 0.1%, as summarized in Table 5. The reported metrics therefore reflect frame-level classification performance under controlled conditions rather than per-subject or population-level behavioral statistics.
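Reporting TPR at a fixed FPR amounts to choosing the decision threshold from the negative frames and then measuring recall on the positive frames. The sketch below is one common way to do this, assuming per-frame yawn scores where higher means more likely a yawn and a frame counts as positive when its score exceeds the threshold; the helper name and the synthetic scores are hypothetical and unrelated to the Table 5 results.

```python
def tpr_at_fpr(scores, labels, target_fpr):
    """TPR at a threshold chosen so that FPR <= target_fpr.

    scores: per-frame scores (higher = more likely positive);
    labels: 1 = positive (yawn), 0 = negative.
    At most floor(target_fpr * N_neg) negatives score above the threshold.
    """
    neg = sorted((s for s, y in zip(scores, labels) if y == 0), reverse=True)
    pos = [s for s, y in zip(scores, labels) if y == 1]
    if not neg or not pos:
        raise ValueError("need both positive and negative frames")
    allowed_fp = int(target_fpr * len(neg))  # false positives permitted
    # Threshold at the (allowed_fp + 1)-th highest negative score.
    threshold = neg[allowed_fp] if allowed_fp < len(neg) else float("-inf")
    tpr = sum(s > threshold for s in pos) / len(pos)
    return tpr, threshold


# Synthetic scores: 1000 negative frames and 4 positive frames
scores = [i / 1000 for i in range(1000)] + [0.5, 0.995, 0.9995, 1.5]
labels = [0] * 1000 + [1] * 4
for target in (0.01, 0.005, 0.001):
    tpr, thr = tpr_at_fpr(scores, labels, target)
    print(f"FPR <= {target:.3f}: threshold = {thr:.3f}, TPR = {tpr:.2f}")
```

Because the threshold is fixed by the negatives, stricter operating points (0.1% FPR) require larger negative sets to be statistically meaningful, which fits the large share of closed-mouth images in the dataset.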

Another important model for driver fatigue judgment determines whether the driver’s eyes are open or closed. A total of 32K pairs of IR images were collected for this model.

Table 6 shows the test results of the eye algorithm model.

To facilitate reproducibility, key implementation details are provided here. All deep learning models were trained using Python 3.8 with TensorFlow 2.6 on an NVIDIA RTX 3090 GPU (24 GB VRAM) with an Intel i7 CPU and 64 GB RAM. Training was conducted for 50 epochs with a batch size of 32, using the Adam optimizer (learning rate = 0.001, β1 = 0.9, β2 = 0.999) and categorical cross-entropy as the loss function. Data augmentation (random rotation of ±15°, horizontal flip, and brightness adjustment) was applied to increase robustness. Input images were resized to 224 × 224 pixels and normalized before training. These settings ensured stable convergence and reproducible performance across experiments.
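For re-implementation, the reported hyperparameters can be collected in a single configuration object. The plain-Python sketch below does this and shows how per-image augmentation parameters might be sampled. The flip probability and brightness range are assumptions, since the text does not state them, and the block does not reproduce the TensorFlow training code itself.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainingConfig:
    # Values reported in the text
    epochs: int = 50
    batch_size: int = 32
    learning_rate: float = 1e-3   # Adam
    beta_1: float = 0.9
    beta_2: float = 0.999
    input_size: tuple = (224, 224)
    max_rotation_deg: float = 15.0
    # Assumptions: not specified in the text
    flip_prob: float = 0.5
    max_brightness_delta: float = 0.2


def sample_augmentation(cfg, rng):
    """Draw one set of augmentation parameters for a single training image."""
    return {
        "rotation_deg": rng.uniform(-cfg.max_rotation_deg, cfg.max_rotation_deg),
        "horizontal_flip": rng.random() < cfg.flip_prob,
        "brightness_delta": rng.uniform(-cfg.max_brightness_delta,
                                        cfg.max_brightness_delta),
    }


def steps_per_epoch(num_images, cfg):
    """Optimizer steps per epoch, counting a final partial batch."""
    return -(-num_images // cfg.batch_size)  # ceiling division


cfg = TrainingConfig()
rng = random.Random(0)  # fixed seed so augmentation draws are repeatable
print(sample_augmentation(cfg, rng))
print(steps_per_epoch(20000, cfg))  # 625
```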

4.6. Similar Product Testing

To further demonstrate the applicability of the proposed testing framework in practical engineering scenarios, a comparative evaluation was conducted between the proposed Driver Fatigue Monitoring System (DFMS) and a representative commercial driver behavior analysis product. This experiment is intended as an illustrative engineering benchmark, rather than an exhaustive algorithm-level performance comparison.

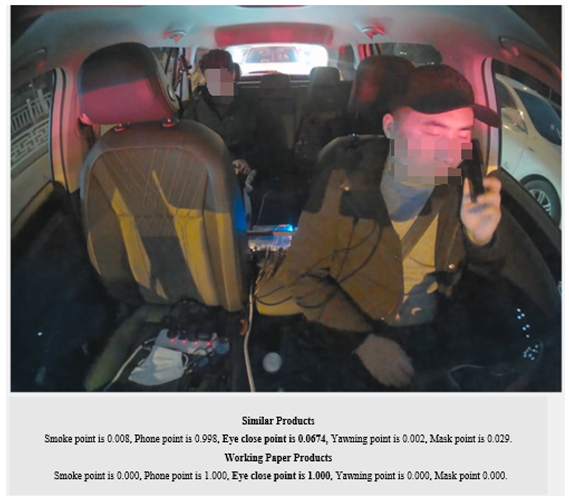

The selected commercial product is provided by an Internet company in the form of a publicly accessible application programming interface (API). Users can upload driver behavior images and obtain corresponding recognition results and JSON-format outputs, as shown in Figure 9. The supported behavior categories include eye closure, smoking, phone use, mask wearing, yawning, head-down behavior, seat belt usage, and hands-off steering wheel.

The evaluation dataset consisted of images collected from young Asian male and female subjects. Identical driver behavior images were uploaded to both the commercial platform and the proposed DFMS, and the resulting recognition outputs were analyzed under the same testing conditions. The comparison results are summarized in Table 7.

It should be emphasized that the purpose of this comparison is not to establish a comprehensive ranking among multiple driver monitoring algorithms, but to demonstrate how the proposed hierarchical decoupling and scenario-based testing framework can be applied to consistently evaluate different DFMS products under identical input conditions. Due to the black-box nature and limited accessibility of most commercial DFMS solutions, a single widely used external product was selected as a reference baseline, which is sufficient for illustrating the discriminative capability and practical value of the testing methodology.

From an engineering validation perspective, the results indicate that the proposed DFMS produces more stable and conservative outputs in several representative scenarios, particularly by reducing false alarms in phone-use detection. For example, in the non-phone-call scenario shown in Table 7, the commercial product produced a high-confidence misrecognition score (0.805), whereas the proposed system maintained a low response value (0.016), avoiding a false positive. Such behavior is critical in real-world deployment, where excessive false alarms may erode driver trust and system acceptance.
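Under identical inputs, a reproducible benchmark converts each system's confidence output into a decision with one shared threshold before scoring it against ground truth. The sketch below assumes a simple JSON shape and a 0.5 alarm threshold, both hypothetical (the actual API response format in Figure 9 is not reproduced here), and uses the two phone-call scores reported for the non-phone-call image.

```python
import json

DECISION_THRESHOLD = 0.5  # hypothetical alarm threshold, not specified in the text


def classify_outcome(score, ground_truth, threshold=DECISION_THRESHOLD):
    """Label one output as TP, FP, FN, or TN under a shared threshold."""
    predicted = score >= threshold
    if predicted and ground_truth:
        return "TP"
    if predicted:
        return "FP"
    if ground_truth:
        return "FN"
    return "TN"


# Hypothetical output format; ground truth for this image: no phone call
outputs = {
    "commercial_api": json.loads('{"behavior": "phone_call", "score": 0.805}'),
    "proposed_dfms": json.loads('{"behavior": "phone_call", "score": 0.016}'),
}
for system, out in outputs.items():
    print(system, classify_outcome(out["score"], ground_truth=False))
# commercial_api FP
# proposed_dfms TN
```

Applying one threshold to both systems keeps the comparison fair; a sweep over thresholds would give a fuller picture when more labeled images are available.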

Overall, this comparative experiment demonstrates that the proposed testing framework can effectively expose functional differences between DFMS products and highlight potential reliability issues under standardized test conditions. Extending this benchmarking to a broader range of commercial and open-source systems will be an important direction for future work, as access to additional comparable products becomes available.

5. Conclusions

This study introduces a testing framework for the Driver Fatigue Monitoring System (DFMS) based on hierarchical decoupling and scenario element methods, which systematically improves testing efficiency, accuracy, and reliability. It provides significant theoretical and practical foundations for improving road safety and system optimization, leading to the following conclusions:

By decomposing DFMS functionalities into algorithmic and application layers and implementing hierarchical decoupling testing, this study effectively addresses the limitations of traditional end-to-end testing, particularly the difficulty in pinpointing issues, thereby significantly improving the specificity and precision of the testing process.

Through structured decomposition based on scenario elements, combined with Cartesian product, orthogonal analysis, and pairwise analysis algorithms, comprehensive test cases were generated using Python automation scripts. Compared to traditional manual methods, this approach improved test case generation efficiency by approximately 50% while ensuring comprehensive coverage of multidimensional scenarios (e.g., lighting conditions, driving behaviors, and driver characteristics).

The testing validation was completed through virtual simulation, basic function tests, subjective evaluations, and benchmarking tests. Compared with the benchmarked commercial product, the results demonstrate the proposed system’s superior performance in key behavior detection, such as eye closure detection (confidence score of 1.000 vs. 0.674), smoking detection (confidence score of 1.000 vs. 0.011), and false positive control (phone-call misrecognition score of 0.016 vs. 0.805 in a non-phone-call scenario). Based on simulated data, the improved detection capability may help reduce the risk of fatigue-related accidents by enabling more timely interventions. However, real-time processing demands substantial computational resources, including high-performance hardware such as GPUs or AI chips, and limited system resources may affect scalability and performance across different vehicle platforms. These limitations should be addressed in future work to optimize real-world applications.

Although this study establishes a robust foundation for standardized DFMS testing and performance optimization, the comprehensiveness of the test scenarios is limited by the diversity of the real-vehicle data collection samples. Most test subjects came from relatively homogeneous demographic groups, which may restrict the generalizability of the results; the reported algorithm model testing results should therefore be interpreted primarily as engineering validation outcomes rather than as evidence of demographic fairness or population-level robustness. Another limitation is the potential difficulty of adapting the system to different vehicle types because of hardware variability and software integration requirements. Future research could expand system robustness in order of priority. First, integrating multimodal sensors, such as heart rate monitors and steering wheel torque sensors, will improve the system’s ability to detect fatigue-related behaviors in diverse conditions. Second, addressing extreme weather conditions, such as heavy rain, snow, and fog, will enhance the system’s reliability under adverse environmental factors. Third, broader demographic data collection and systematic validation across multiple vehicle categories and operational domains will help address the limitations above, ensuring stronger adaptability and wider applicability. Collaboration with OEMs (Original Equipment Manufacturers) and regulatory bodies will be crucial in establishing standardized guidelines, testing protocols, and performance benchmarks, and joint working groups with industry stakeholders can accelerate standardization and promote interoperability across markets. Furthermore, aligning with evolving automotive safety standards beyond 2027, such as updates to C-NCAP, E-NCAP, and UNECE regulations, will support long-term compliance and industry adoption.

In summary, the proposed testing framework and validation methods provide reusable theoretical foundations and practical guidance for advancing intelligent driving safety technologies. The modular design and automated testing strategies are not only applicable to the development and optimization of DFMS, but also provide a reference for testing other intelligent driving assistance systems, holding significant academic value and broad application prospects. Moreover, the proposed framework provides practical guidance for industry adoption and regulatory compliance by aligning DFMS development with evolving international safety standards.