Investigation of Exponent-Free LSTM Cells for Virtual Sensing Applications

Abstract

1. Introduction

- We present a comprehensive evaluation of exponent-free activation functions for wind turbine virtual sensing and show that they can match or exceed the accuracy of standard activations.

- We provide a feature selection analysis that reduces the input space from 57 to 9 key variables using Mutual Information, balancing information content against model size.

- We benchmark the proposed architectures against a mainstream lightweight model (TinyLSTM) and show that optimized activation functions offer a path to efficiency that complements architectural compression.
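
The Mutual Information ranking mentioned in the second contribution can be illustrated with a minimal sketch. It is not the authors' pipeline: the equal-width histogram estimator, the bin count `n_bins=16`, the helper names, and the synthetic data are all assumptions made for illustration. The idea is to score each candidate input by its estimated mutual information with the target and keep the top `k` (9 of 57 in the paper).

```python
import math
import random
from collections import Counter


def _bin_indices(values, n_bins):
    """Map each value to an equal-width bin index in [0, n_bins - 1]."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / n_bins or 1.0  # constant column: everything lands in bin 0
    return [min(int((v - lo) / width), n_bins - 1) for v in values]


def mutual_information(x, y, n_bins=16):
    """Histogram estimate of I(X; Y) in nats."""
    bx, by = _bin_indices(x, n_bins), _bin_indices(y, n_bins)
    n = len(x)
    pxy, px, py = Counter(zip(bx, by)), Counter(bx), Counter(by)
    # sum over occupied cells of p(i, j) * log(p(i, j) / (p(i) * p(j)))
    return sum(
        (c / n) * math.log(c * n / (px[i] * py[j]))
        for (i, j), c in pxy.items()
    )


def select_top_k(features, target, k):
    """Return the names of the k features with the highest MI to the target."""
    scores = {name: mutual_information(col, target) for name, col in features.items()}
    return sorted(scores, key=scores.get, reverse=True)[:k]


# Synthetic stand-in data (hypothetical, not SCADA measurements).
random.seed(0)
target = [random.gauss(0.0, 1.0) for _ in range(2000)]
features = {
    "informative": [t + random.gauss(0.0, 0.1) for t in target],
    "unrelated": [random.gauss(0.0, 1.0) for _ in target],
}
selected = select_top_k(features, target, k=1)
print(selected)
```

A histogram estimator is biased upward for small samples, so in practice the scores are best used for ranking rather than read as absolute values.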

2. State of the Art

3. Materials and Methods

3.1. LSTM Variants and Bidirectional LSTM

3.2. Alternative Activation Functions

3.3. Experimental Setup

- LSTM with forget gate.

- Peephole LSTM.

- Bidirectional LSTM.
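
As a rough illustration of the Peephole variant listed above, the following sketch implements one time step of a peephole LSTM cell in plain Python, following the standard formulation in which the input and forget gates see the previous cell state and the output gate sees the updated one. The parameter layout (`params`), the helper names, and the choice to make only the candidate and output activation swappable (`act`) are assumptions for illustration. This excerpt does not state which activations the authors replace, so LeakyReLU is used here only because it needs no exponential.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0.0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def leaky_relu(z, slope=0.01):
    """Exponent-free activation: identity for z >= 0, small slope otherwise."""
    return z if z >= 0.0 else slope * z


def dot(row, vec):
    return sum(w * v for w, v in zip(row, vec))


def peephole_lstm_step(x, h_prev, c_prev, params, act=math.tanh):
    """One step of a peephole LSTM cell.

    params[gate] holds W (hidden x input), U (hidden x hidden), b (hidden),
    and, for gates "i", "f", "o", a peephole vector p (hidden).
    """
    def pre(gate, k):
        g = params[gate]
        return dot(g["W"][k], x) + dot(g["U"][k], h_prev) + g["b"][k]

    h, c = [], []
    for k in range(len(h_prev)):
        i = sigmoid(pre("i", k) + params["i"]["p"][k] * c_prev[k])  # input gate
        f = sigmoid(pre("f", k) + params["f"]["p"][k] * c_prev[k])  # forget gate
        g = act(pre("g", k))                                        # candidate
        c_k = f * c_prev[k] + i * g                                 # new cell state
        o = sigmoid(pre("o", k) + params["o"]["p"][k] * c_k)        # output gate
        c.append(c_k)
        h.append(o * act(c_k))
    return h, c
```

Calling `peephole_lstm_step(x, h, c, params, act=leaky_relu)` swaps the activation without touching the gate logic. A bidirectional layer would run one such cell forward and a second one backward over the sequence and concatenate their hidden states.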

4. Results and Discussion

4.1. Horizontal Comparison with Lightweight Baseline

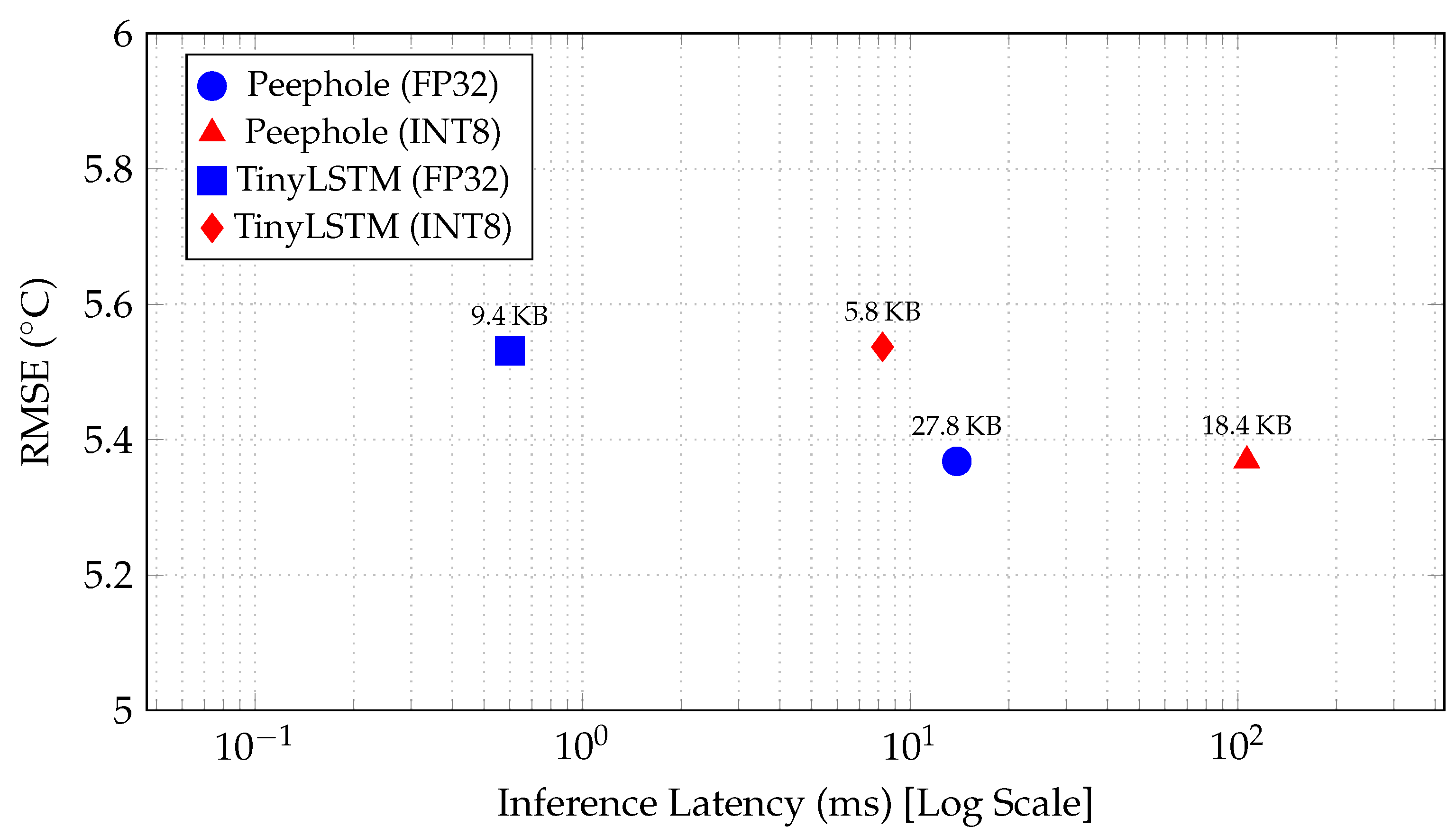

4.2. Deployment-Oriented Quantization (INT8) Benchmark

4.3. Performance Comparison of LSTM Variants

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| LSTM | Long Short-Term Memory |
| LSTM-FG | Long Short-Term Memory with Forget Gate |
| BiLSTM | Bidirectional Long Short-Term Memory |
| SCADA | Supervisory Control and Data Acquisition |
| FPGA | Field-Programmable Gate Array |
| GMDH | Group Method of Data Handling |
| CNN-LSTM | Convolutional Neural Network–Long Short-Term Memory |
| ECG | Electrocardiography |
| EMG | Electromyography |
| GELU | Gaussian Error Linear Unit |
| MSE | Mean Squared Error |
| RMSE | Root Mean Squared Error |
| MAE | Mean Absolute Error |
| MAPE | Mean Absolute Percentage Error |


| Attribute | Description |
|---|---|
| Turbine Model | Vestas V90 (2 MW, Onshore) |
| Number of Turbines | 5 (T01, T06, T07, T09, T11) |
| Time Period | 2014–2015 (2 Years) |
| Sampling Rate | 10 min SCADA averages |
| Total Features Available | 57 |
| Features Selected | 9 (via Mutual Information & Correlation) |
| Target Variable | Generator Bearing Temperature (Gen_Bear_Temp_Avg) |
| Training Samples | Max 62,208 per turbine |
| Split Strategy | Chronological Hold-out |
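
The chronological hold-out strategy listed above can be sketched as follows. The record layout, the function name, and the `train_fraction=0.8` default are hypothetical, since this excerpt does not give the actual cut point. The sketch only shows the principle: order the 10 min samples by time and cut once, so that no test sample precedes a training sample.

```python
from datetime import datetime, timedelta


def chronological_split(records, train_fraction=0.8):
    """Sort by timestamp, then cut once: earlier rows train, later rows test."""
    ordered = sorted(records, key=lambda r: r["timestamp"])
    cut = int(len(ordered) * train_fraction)
    return ordered[:cut], ordered[cut:]


# Hypothetical 10 min series standing in for one turbine's SCADA log.
start = datetime(2014, 1, 1)
records = [
    {"timestamp": start + timedelta(minutes=10 * i), "value": float(i)}
    for i in range(1000)
]
train, test = chronological_split(records)
print(len(train), len(test))
```

Unlike a random split, this keeps temporally adjacent (and therefore highly correlated) samples from landing on both sides of the boundary.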

| Model Architecture | Hidden Size | Params | Size (KB) | FLOPs/Sample | RMSE | $R^2$ | CPU Latency (ms) |
|---|---|---|---|---|---|---|---|
| Proposed Peephole Baseline | 32 | 5665 | 22.13 | ≈645 K | 0.0821 | 0.8415 | 11.70 |
| Mainstream TinyLSTM | 16 | 1745 | 6.82 | ≈200 K | 0.0553 | 0.9265 | 0.45 |

| Model | Format | Size (KB) | Accuracy ($R^2$) | Latency (ms) * |
|---|---|---|---|---|
| Peephole (Logish) | FP32 | 27.82 | 0.8271 | 13.89 |
| Peephole (Logish) | INT8 | 18.43 | 0.8270 | 106.67 |
| TinyLSTM | FP32 | 9.38 | 0.8164 | 0.60 |
| TinyLSTM | INT8 | 5.81 | 0.8160 | 8.24 |
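
To make the FP32-to-INT8 conversion behind this benchmark more concrete, here is a minimal sketch of symmetric per-tensor weight quantization. It is a generic textbook scheme, not the toolchain used by the authors (which this excerpt does not name). The function names and the example weights are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the integer codes."""
    return [v * scale for v in q]


weights = [0.5, -1.27, 0.0, 0.333]  # hypothetical FP32 weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, scale, max_error)
```

Each weight is stored in one byte instead of four, and the rounding error per weight is bounded by half the scale. The file sizes in the table shrink by less than a factor of four (27.82 KB to 18.43 KB for the Peephole model), so not all of the stored bytes are quantized weights.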

| Turbine | Model | MSE | MAE | RMSE | $R^2$ | MAPE (%) |
|---|---|---|---|---|---|---|
| T09 | LSTM-FG | 0.007845 | 0.049178 | 0.088571 | 0.815423 | 17.33 |
| T09 | Peephole | 0.006681 | 0.042991 | 0.081737 | 0.842807 | 14.81 |
| T09 | BiLSTM | 0.006621 | 0.044040 | 0.081369 | 0.844219 | 15.40 |
| T07 | LSTM-FG | 0.006509 | 0.044625 | 0.080676 | 0.846860 | 15.72 |
| T07 | Peephole | 0.006375 | 0.045438 | 0.079842 | 0.850010 | 15.25 |
| T07 | BiLSTM | 0.007049 | 0.044685 | 0.083956 | 0.834157 | 15.72 |
| T11 | LSTM-FG | 0.007420 | 0.046163 | 0.086141 | 0.825410 | 16.33 |
| T11 | Peephole | 0.007507 | 0.047833 | 0.086640 | 0.823381 | 16.30 |
| T11 | BiLSTM | 0.006753 | 0.045652 | 0.082178 | 0.841106 | 16.12 |
| T06 | LSTM-FG | 0.007449 | 0.046807 | 0.086307 | 0.824737 | 16.18 |
| T06 | Peephole | 0.006583 | 0.043307 | 0.081139 | 0.845100 | 14.98 |
| T06 | BiLSTM | 0.007591 | 0.046228 | 0.087124 | 0.821403 | 16.19 |
| T01 | LSTM-FG | 0.006721 | 0.044640 | 0.081981 | 0.841867 | 15.48 |
| T01 | Peephole | 0.006399 | 0.046954 | 0.079994 | 0.849438 | 16.03 |
| T01 | BiLSTM | 0.006783 | 0.044977 | 0.082360 | 0.840400 | 15.77 |
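
For reference, the error metrics listed in the Abbreviations (MSE, RMSE, MAE, MAPE) can be computed as in the sketch below. The coefficient of determination is included on the assumption that the unlabeled column with values near 0.82 to 0.85 reports it. The function name and the toy values are hypothetical.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return MSE, RMSE, MAE, MAPE (%) and R^2 for paired observations."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    sse = sum(e * e for e in errors)
    mse = sse / n
    mae = sum(abs(e) for e in errors) / n
    # MAPE is undefined when a true value is zero; targets must be nonzero.
    mape = 100.0 / n * sum(abs(e / t) for e, t in zip(errors, y_true))
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {
        "MSE": mse,
        "RMSE": math.sqrt(mse),
        "MAE": mae,
        "MAPE": mape,
        "R2": 1.0 - sse / ss_tot,
    }


metrics = regression_metrics([1.0, 2.0, 3.0, 4.0], [1.1, 1.9, 3.2, 3.8])
print(metrics)
```

As a consistency check on the table, RMSE should equal the square root of MSE: for T09 with LSTM-FG, the square root of 0.007845 is approximately 0.08857, which matches the reported 0.088571.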

| Model/Activation | RMSE | MSE | MAE | $R^2$ | MAPE (%) |
|---|---|---|---|---|---|
| LSTM with Forget Gate | 0.08668 | 0.00745 | 0.04681 | 0.82474 | 16.18 |
| Baseline Peephole LSTM | 0.08208 | 0.00674 | 0.04569 | 0.84151 | 16.12 |
| Baseline BiLSTM | 0.08058 | 0.00649 | 0.04463 | 0.84686 | 15.72 |
| Peephole LSTM (TANH) | 0.08200 | 0.00673 | 0.04560 | 0.84166 | 15.94 |
| Peephole LSTM (ReLU) | 0.08146 | 0.00663 | 0.04490 | 0.84408 | 15.82 |
| Peephole LSTM (LeakyReLU) | 0.08063 | 0.00651 | 0.04447 | 0.84660 | 15.59 |
| Peephole LSTM (ELU) | 0.08478 | 0.00719 | 0.04709 | 0.83143 | 16.65 |
| Peephole LSTM (Softplus) | 0.08194 | 0.00671 | 0.04558 | 0.84193 | 15.97 |
| Peephole LSTM (GELU) | 0.08369 | 0.00701 | 0.04648 | 0.83518 | 16.32 |
| Peephole LSTM (Swish) | 0.08576 | 0.00736 | 0.04737 | 0.82769 | 16.79 |
| Peephole LSTM (Mish) | 0.09009 | 0.00811 | 0.04948 | 0.80904 | 17.39 |
| Peephole LSTM (SiLU) | 0.08269 | 0.00684 | 0.04609 | 0.83929 | 16.08 |
| Peephole LSTM (Snake) | 0.08071 | 0.00652 | 0.04451 | 0.84633 | 15.61 |
| Peephole LSTM (Smish) | 0.08319 | 0.00692 | 0.04622 | 0.83718 | 16.15 |
| Peephole LSTM (Logish) | 0.07990 | 0.00638 | 0.04417 | 0.84976 | 15.43 |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Jankauskas, M.; Katkevičius, A.; Serackis, A. Investigation of Exponent-Free LSTM Cells for Virtual Sensing Applications. Electronics 2026, 15, 576. https://doi.org/10.3390/electronics15030576