Explainable Prediction of Crowdfunding Success Using Hierarchical Attention Network

Abstract

1. Introduction

- In this study, we collected publicly available crowdfunding campaign data from one of the most popular crowdfunding sites, Kickstarter.com. We trained and evaluated success prediction models on two major categories of crowdfunding campaigns, Technology and Art, using text content only.

- We propose adopting HAN [8] to predict crowdfunding success by effectively modeling the multi-level structure (word and sentence) of campaign texts. Our model achieves state-of-the-art level accuracies of 86.38% and 87.29% using only textual data from the Updates and Comments sections, respectively.

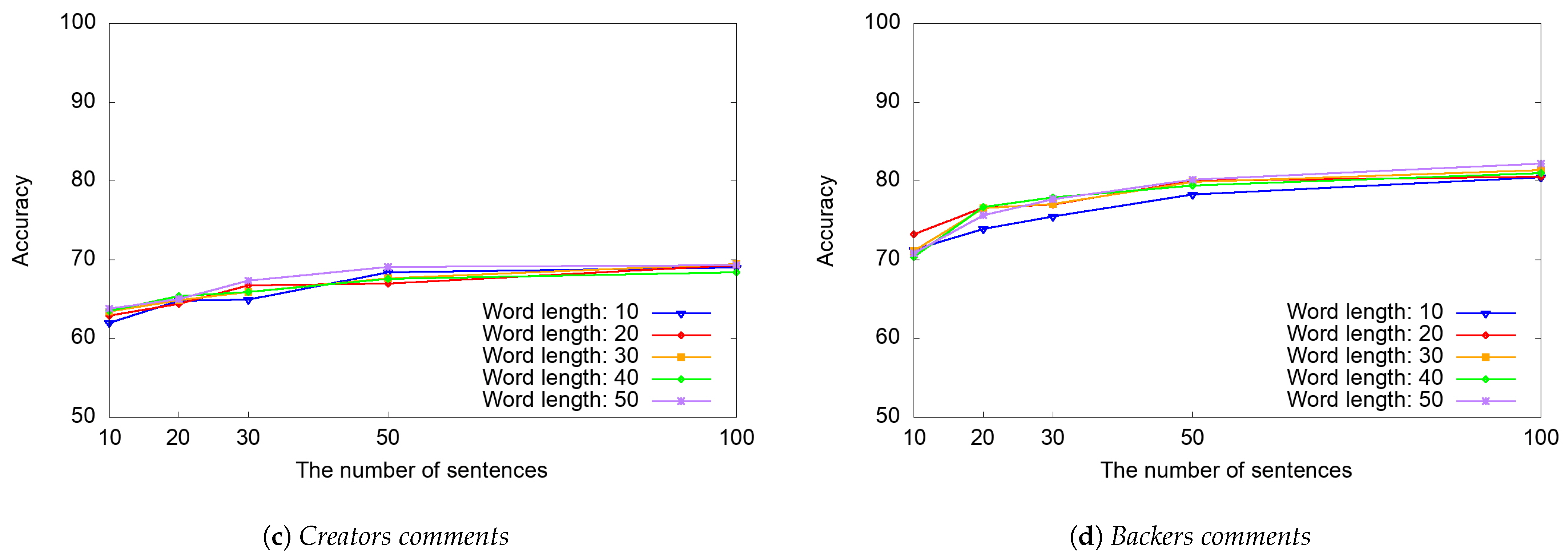

- We also explore the feasibility of early prediction of campaign success using text content only, showing that our model achieves a prediction accuracy of 59.55% on the very first day of campaign launch. As more content arrives in the Updates and Comments sections, our model achieves 70.96% to 80.99% (with Updates section text only) and 65.99% to 74.49% (with backers’ comments in the Comments section only) within one to two months.

- This paper presents an empirical analysis of the attention weight scores that HAN generates at both the word and sentence levels, to examine which words and sentences in the Updates and Comments sections most affected the prediction results (i.e., the success of the campaigns). For instance, we found that in the Updates section of successful projects, sentences in which the creator explicitly asks backers to share project information via social media platforms such as Facebook and Twitter are prominent. In the Comments section of failed projects, sentences expressing dissatisfaction with the creator’s lack of response to investors’ messages stand out. We believe that our findings provide valuable insights for both researchers and practitioners, such as project creators and backers, helping them make informed decisions and increase the success rate of their campaigns and investments.

2. Related Work

3. Methodology

3.1. Data Set

3.2. Hierarchical Attention Network

3.2.1. Gated Recurrent Unit (GRU)

3.2.2. Word Encoder

3.2.3. Word Attention

3.2.4. Sentence Encoder

3.2.5. Sentence Attention
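As an illustration of the attention pooling step that HAN [8] applies at both the word and sentence levels, the following is a minimal sketch in plain Python. It follows the general formulation in [8] (a one-layer projection with tanh, a softmax over similarity to a context vector, and a weighted sum) and is not the authors' implementation. The function name `attention_pool`, the dimensions, and the randomly initialized parameters are assumptions made only for this example; in a trained model the hidden states would come from the Bi-GRU encoders and the parameters would be learned.

```python
import math
import random


def attention_pool(hidden, W, b, context):
    """Attention pooling over a sequence of hidden states.

    hidden:  list of T vectors, each of length d (e.g., Bi-GRU outputs)
    W, b:    projection parameters (W has a rows of length d, b has length a)
    context: context vector of length a
    Returns (alpha, pooled): T attention weights and a length-d summary vector.
    """
    scores = []
    for h in hidden:
        # u_t = tanh(W h_t + b)
        u = [
            math.tanh(sum(w * x for w, x in zip(row, h)) + b_i)
            for row, b_i in zip(W, b)
        ]
        # Similarity between u_t and the context vector
        scores.append(sum(u_i * c_i for u_i, c_i in zip(u, context)))

    # Numerically stable softmax over the T scores
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    alpha = [e / total for e in exps]

    # Weighted sum of the hidden states
    d = len(hidden[0])
    pooled = [sum(a * h[j] for a, h in zip(alpha, hidden)) for j in range(d)]
    return alpha, pooled


# Toy example: 5 time steps, hidden size 8, attention size 4 (all hypothetical).
random.seed(0)
T, d, a = 5, 8, 4
hidden = [[random.gauss(0, 1) for _ in range(d)] for _ in range(T)]
W = [[random.gauss(0, 1) for _ in range(d)] for _ in range(a)]
b = [0.0] * a
context = [random.gauss(0, 1) for _ in range(a)]

alpha, pooled = attention_pool(hidden, W, b, context)
print([round(x, 3) for x in alpha])  # one weight per time step, summing to 1
```

The weights in `alpha` are the kind of per-word or per-sentence scores that an explainability analysis can inspect: larger values mark the positions that contribute most to the pooled representation.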

3.3. Performance Metrics

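- (Overall) accuracy: the ratio of projects correctly classified as successful or failed to the total number of projects in our data set. We use this measure to assess the classifier across the entire data set, as shown in Equation (18):

  $$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN} \quad (18)$$

  Here, TP and TN are the correctly classified successful and failed projects, and FP and FN are the failed and successful projects that were misclassified, respectively.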

- AUC: AUC is the “Area Under the Receiver Operating Characteristic (ROC) curve”. ROC is a probability curve that plots the True Positive Rate (TPR) against the False Positive Rate (FPR) at various classification thresholds. AUC measures the overall performance of the classifier by calculating the area under the ROC curve, with a value ranging from 0 to 1. An AUC score of 1 indicates a perfect classifier, meaning it correctly distinguishes all positive and negative instances across all thresholds.

- Precision: the ratio of True Positives to the sum of True Positives and False Positives, indicating the proportion of predicted successful campaigns that were actually successful. True Positives (TP) are correctly classified successful campaigns, and False Positives (FP) are failed projects falsely labeled as successful. This calculation is expressed in Equation (19):

  $$\text{Precision} = \frac{TP}{TP + FP} \quad (19)$$

- Recall: the ratio of True Positives to the sum of True Positives and False Negatives, indicating the proportion of actual successful campaigns that were correctly identified. False Negatives (FN) are successful campaigns that were incorrectly classified as failed, i.e., missed positive cases. Recall is computed as shown in Equation (20):

  $$\text{Recall} = \frac{TP}{TP + FN} \quad (20)$$
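The four metrics above can be sketched in plain Python as follows. This is an illustrative example rather than the evaluation code used in the paper: the function names, the toy labels and scores, and the 0.5 decision threshold are all assumptions. AUC is computed here through its pairwise-ranking interpretation (the probability that a randomly chosen successful campaign receives a higher score than a randomly chosen failed one, with ties counted as one half), which equals the area under the ROC curve.

```python
def confusion_counts(y_true, y_pred):
    """Return (TP, TN, FP, FN) for binary labels, where 1 = successful, 0 = failed."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, tn, fp, fn


def accuracy(tp, tn, fp, fn):
    return (tp + tn) / (tp + tn + fp + fn)


def precision(tp, fp):
    # Defined as 0.0 when nothing was predicted positive (a convention, not from the paper).
    return tp / (tp + fp) if (tp + fp) > 0 else 0.0


def recall(tp, fn):
    # Defined as 0.0 when there are no actual positives (a convention, not from the paper).
    return tp / (tp + fn) if (tp + fn) > 0 else 0.0


def auc(y_true, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly; ties count 0.5."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy data (hypothetical): true outcomes and predicted success probabilities.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
scores = [0.9, 0.8, 0.4, 0.3, 0.6, 0.2, 0.7, 0.1]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # assumed threshold of 0.5

tp, tn, fp, fn = confusion_counts(y_true, y_pred)
print(accuracy(tp, tn, fp, fn))  # 0.75
print(precision(tp, fp))         # 0.75
print(recall(tp, fn))            # 0.75
print(auc(y_true, scores))       # 0.9375
```

Note that accuracy, precision, and recall depend on the chosen threshold, whereas AUC is computed from the raw scores and summarizes performance across all thresholds.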

4. Results

4.1. Experimental Settings

4.2. Classification Performance Evaluation

4.3. Explainability Analysis

4.3.1. Updates Section

“Our team is well-versed in knowing how to mitigate privacy and security issues. Read and share the article about MirroCool in KnowTechie We are pretty excited about this article! Check it and share it with your friends and family on Facebook and Twitter. Here is the link of the news article <url>”

“I’m excited to start delivering your rewards in the new repair truck soon! Best, Pete Major milestone reached”

“The de-limer works by electrolysis. This is where a small DC current is passed though a liquid.The de-limer consists of two anodes and one cathode. The middle part of the device is the cathode and the top and bottom parts are the anodes. The anodes must be in contact with the metal of the device to be cleaned. If there is a layer of limescale between the de-limer and your kettle then it will not work.”

“Thank you for all the support! It’s been 1 week now since we’ve launched our Kickstarter and it’s been a very exciting ride. We wanted to say thank you so much for your pledges, they really mean a lot to Court and the team. We are going to continue pushing forward and doing all we can to spread the message of recovery to those who need it. If you have any questions for us you can ask them in the FAQ section and we’ll answer them as quickly as we can. Thanks again for your support and the support you give to all those who are struggling today with addiction.”

4.3.2. Backers’ Comments in Comments Section

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| HAN | Hierarchical Attention Network |
| MLP | Multi-Layer Perceptron |
| seq2seq | sequence-to-sequence |
| RNNs | Recurrent Neural Networks |
| Bi-GRU | Bidirectional Gated Recurrent Unit |
| GRU | Gated Recurrent Unit |
| ROC | Receiver Operating Characteristic |
| TPR | True Positive Rate |
| FPR | False Positive Rate |
| NLTK | Natural Language Toolkit |
| NLP | Natural Language Processing |

Appendix A. Performance Comparison According to the Number of Sentences and the Number of Words in a Sentence in Each Section by Category

Appendix A.1. Technology + Art

Appendix A.2. Technology

Appendix A.3. Art

References

1. Crowdfunding Market Size & Share [2026–2035]. Available online: https://www.businessresearchinsights.com/market-reports/crowdfunding-market-100103 (accessed on 19 January 2026).

2. Mollick, E. The dynamics of crowdfunding: An exploratory study. J. Bus. Ventur. 2014, 29, 1–16.

3. Pebble Time—Awesome Smartwatch, No Compromises by Pebble Technology—Kickstarter. Available online: https://www.kickstarter.com/projects/getpebble/pebble-time-awesome-smartwatch-no-compromises (accessed on 19 December 2025).

4. Yuan, H.; Qiao, D.; Wei, Q. A Persuasion-Based Framework for Predicting Crowdfunding Success. 2023. Available online: https://ssrn.com/abstract=4658668 (accessed on 21 January 2026).

5. Etter, V.; Grossglauser, M.; Thiran, P. Launch Hard or Go Home!: Predicting the Success of Kickstarter Campaigns. In Proceedings of the ACM Conference on Online Social Networks (COSN), Boston, MA, USA, 7–8 October 2013; pp. 177–182.

6. Greenberg, M.D.; Pardo, B.; Hariharan, K.; Gerber, E. Crowdfunding support tools: Predicting success & failure. In Proceedings of the Conference on Human Factors in Computing Systems-Extended Abstracts (CHI-EA), Paris, France, 27 April–2 May 2013; pp. 1815–1820.

7. Lee, S.; Lee, K.; Kim, H.-C. Content-based Success Prediction of Crowdfunding Campaigns: A Deep Learning Approach. In Proceedings of the ACM Conference on Computer Supported Cooperative Work and Social Computing (CSCW), Jersey City, NJ, USA, 3–7 November 2018; pp. 3–7.

8. Yang, Z.; Yang, D.; Dyer, C.; He, X.; Smola, A.; Hovy, E. Hierarchical attention networks for document classification. In Proceedings of the 2016 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL HLT), San Diego, CA, USA, 12–17 June 2016; pp. 1480–1489.

9. Greenberg, J.; Mollick, E. Sole Survivors: Solo Ventures Versus Founding Teams. 2018. Available online: https://ssrn.com/abstract=3107898 (accessed on 18 December 2025).

10. Zhang, Y.; Tan, C.D.; Sun, J.; Yang, Z. Why do people patronize donation-based crowdfunding platforms? An activity perspective of critical success factors. Comput. Hum. Behav. 2020, 112, 106470.

11. Aleksina, A.; Akulenka, S.; Lubloy, A. Success factors of crowdfunding campaigns in medical research: Perceptions and reality. Drug Discov. Today 2019, 24, 1413–1420.

12. Agrawal, A.K.; Catalini, C.; Goldfarb, A. The Geography of Crowdfunding; Technical Report; National Bureau of Economic Research: Cambridge, MA, USA, 2011.

13. Ma, Z.; Palacios, S. Image-mining: Exploring the impact of video content on the success of crowdfunding. J. Mark. Anal. 2021, 9, 265–285.

14. Li, Y.; Xiao, N.; Wu, S. The devil is in the details: The effect of nonverbal cues on crowdfunding success. Inf. Manag. 2021, 58, 103528.

15. Xu, A.; Yang, X.; Rao, H.; Fu, W.-T.; Huang, S.-W.; Bailey, B.P. Show Me the Money!: An Analysis of Project Updates during Crowdfunding Campaigns. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems (CHI), Toronto, ON, Canada, 26 April–1 May 2014; pp. 591–600.

16. Zvilichovsky, D.; Inbar, Y.; Barzilay, O. Playing Both Sides of the Market: Success and Reciprocity on Crowdfunding Platforms. 2015. Available online: https://ssrn.com/abstract=2304101 (accessed on 18 December 2025).

17. Rakesh, V.; Choo, J.; Reddy, C.K. Project recommendation using heterogeneous traits in crowdfunding. In Proceedings of the 9th International AAAI Conference on Web and Social Media (ICWSM), Oxford, UK, 26–29 May 2015; pp. 26–29.

18. Thies, F.; Wessel, M.; Rudolph, J.; Benlian, A. Personality matters: How signaling personality traits can influence the adoption and diffusion of crowdfunding campaigns. In Proceedings of the 24th European Conference on Information Systems (ECIS), Istanbul, Turkey, 12–15 June 2016.

19. Moreno-Moreno, A.; Sanchis-Pedregosa, C.; Berenguer, E. Success factors in peer-to-business (P2B) crowdlending: A predictive approach. IEEE Access 2019, 7, 148586–148593.

20. Geiger, M.; Moore, K. Attracting the crowd in online fundraising: A meta-analysis connecting campaign characteristics to funding outcomes. Comput. Hum. Behav. 2022, 128, 107061.

21. Zhang, Z.; Lau, R.Y. Exploiting multimodal features and deep learning for predicting crowdfunding successes. In Proceedings of the 2024 IEEE International Conference on Omni-layer Intelligent Systems (COINS), London, UK, 29–31 July 2024; pp. 1–6.

22. An, J.; Quercia, D.; Crowcroft, J. Recommending Investors for Crowdfunding Projects. In Proceedings of the 23rd International Conference on World Wide Web (WWW), Seoul, Republic of Korea, 7–11 April 2014; pp. 261–270.

23. Gerber, E.M.; Hui, J.S.; Kuo, P.Y. Crowdfunding: Why people are motivated to post and fund projects on crowdfunding platforms. In Proceedings of the ACM SIGCHI Conference on Computer-Supported Cooperative Work & Social Computing (CSCW), Seattle, WA, USA, 11–15 February 2012; p. 10.

24. Siering, M.; Koch, J.-A.; Deokar, A.V. Detecting fraudulent behavior on crowdfunding platforms: The role of linguistic and content-based cues in static and dynamic contexts. J. Manag. Inf. Syst. 2016, 33, 421–455.

25. Cumming, D.; Hornuf, L.; Korami, M.; Schweizer, D. Disentangling Crowdfunding from Fraudfunding. J. Bus. Ethics 2021, 182, 1103–1128.

26. Lee, S.; Shafqat, W.; Kim, H.-C. Backers Beware: Characteristics and Detection of Fraudulent Crowdfunding Campaigns. Sensors 2022, 22, 7677.

27. Cheng, C.; Tan, F.; Hou, X.; Wei, Z. Success prediction on crowdfunding with multimodal deep learning. In Proceedings of the 28th International Joint Conference on Artificial Intelligence (IJCAI), Macao, China, 10–16 August 2019; pp. 10–16.

28. Yu, P.F.; Huang, F.M.; Yang, C.; Liu, Y.H.; Li, Z.Y.; Tsai, C.H. Prediction of Crowdfunding Project Success with Deep Learning. In Proceedings of the IEEE 15th International Conference on e-Business Engineering (ICEBE), Xi’an, China, 12–14 October 2018; pp. 12–14.

29. Shi, J.; Yang, K.; Xu, W.; Wang, M. Leveraging deep learning with audio analytics to predict the success of crowdfunding projects. J. Supercomput. 2021, 77, 7833–7853.

30. Mollick, E. Delivery Rates on Kickstarter. 2015. Available online: https://ssrn.com/abstract=2699251 (accessed on 18 December 2025).

31. Dey, S.; Duff, B.; Karahalios, K.; Fu, W.T. The art and science of persuasion: Not all crowdfunding campaign videos are the same. In Proceedings of the ACM Conference on Computer Supported Cooperative Work Companion (CSCW), Portland, OR, USA, 25 February–1 March 2017; pp. 755–769.

32. Schuster, M.; Paliwal, K.K. Bidirectional recurrent neural networks. IEEE Trans. Signal Process. 1997, 45, 2673–2681.

33. Cho, K.; Merrienboer, B.v.; Bahdanau, D.; Bengio, Y. On the properties of neural machine translation: Encoder-decoder approaches. arXiv 2014, arXiv:1409.1259.

34. Bojanowski, P.; Grave, E.; Joulin, A.; Mikolov, T. Enriching Word Vectors with Subword Information. Trans. Assoc. Comput. Linguist. 2017, 5, 135–146.

35. NLTK Natural Language Toolkit. Available online: https://www.nltk.org/ (accessed on 19 December 2025).

36. ReduceLROnPlateau. Available online: https://keras.io/api/callbacks/reduce_lr_on_plateau/ (accessed on 19 December 2025).

37. ModelCheckpoint. Available online: https://keras.io/api/callbacks/model_checkpoint/ (accessed on 19 December 2025).

38. Chung, J.; Lee, K. A long-term study of a crowdfunding platform: Predicting project success and fundraising amount. In Proceedings of the 26th ACM Conference on Hypertext & Social Media, Guzelyurt, Cyprus, 1–4 September 2015; pp. 1–4.

39. Lai, C.-Y.; Lo, P.-C.; Hwang, S.-Y. Incorporating comment text into success prediction of crowdfunding campaigns. In Proceedings of the 21st Pacific-Asia Conference on Information Systems (PACIS), Langkawi, Malaysia, 16–20 July 2017.

40. Kaminski, J.C.; Hopp, C. Predicting outcomes in crowdfunding campaigns with textual, visual, and linguistic signals. Small Bus. Econ. 2020, 55, 627–649.

41. Cumming, D.J.; Leboeuf, G.; Schwienbacher, A. Crowdfunding Models: Keep-It-All vs. All-or-Nothing. 2019. Available online: https://ssrn.com/abstract=2447567 (accessed on 18 December 2025).

42. Horisch, J. Crowdfunding for environmental ventures: An empirical analysis of the influence of environmental orientation on the success of crowdfunding initiatives. J. Clean. Prod. 2015, 107, 636–645.

43. Devlin, J.; Chang, M.-W.; Lee, K.; Toutanova, K. BERT: Pre-training of deep bidirectional transformers for language understanding. arXiv 2018, arXiv:1810.04805.

44. OpenAI. GPT-4 Technical Report. arXiv 2023, arXiv:2303.08774.

45. Google. PaLM 2 Technical Report. arXiv 2023, arXiv:2305.10403.

46. Brown, T.B.; Mann, B.; Ryder, N.; et al. Language models are few-shot learners. arXiv 2020, arXiv:2005.14165.

47. Li, Y.; Rakesh, V.; Reddy, C.K. Project Success Prediction in Crowdfunding Environments. In Proceedings of the Ninth ACM International Conference on Web Search and Data Mining (WSDM), San Francisco, CA, USA, 22–25 February 2016; pp. 247–256.

| Category | Successful (%) | Failed (%) | Canceled (%) | Suspended (%) | Total # of Campaigns |
|---|---|---|---|---|---|
| Film & Video | 23,298 (40.0) | 30,374 (52.2) | 4445 (7.6) | 93 (0.2) | 58,210 |
| Publishing | 12,976 (34.1) | 22,342 (58.7) | 2687 (7.1) | 55 (0.1) | 38,060 |
| Music | 17,757 (46.2) | 17,996 (46.9) | 2562 (6.7) | 91 (0.2) | 38,406 |
| Games | 14,556 (39.7) | 16,187 (44.2) | 5730 (15.6) | 183 (0.5) | 36,656 |
| Technology | 6836 (22.2) | 19,670 (63.8) | 3931 (12.8) | 370 (1.2) | 30,807 |
| Design | 11,804 (40.4) | 14,658 (50.2) | 2541 (8.7) | 182 (0.6) | 29,185 |
| Art | 11,441 (42.9) | 13,383 (50.2) | 1782 (6.7) | 69 (0.3) | 26,675 |
| Fashion | 6330 (32.9) | 10,860 (56.5) | 1911 (9.9) | 111 (0.6) | 19,212 |
| Food | 4817 (27.0) | 11,526 (64.6) | 1399 (7.8) | 105 (0.6) | 17,847 |
| Comics | 6349 (55.4) | 4367 (38.1) | 718 (6.3) | 23 (0.2) | 11,457 |
| Photography | 3549 (34.6) | 5894 (57.5) | 755 (7.4) | 46 (0.4) | 10,244 |
| Theater | 5873 (57.6) | 3854 (37.8) | 445 (4.4) | 17 (0.2) | 10,189 |
| Crafts | 2358 (27.6) | 5549 (64.9) | 581 (6.8) | 60 (0.7) | 8548 |
| Journalism | 1114 (21.9) | 3352 (66.0) | 558 (11.0) | 57 (1.1) | 5081 |
| Dance | 2463 (62.1) | 1314 (33.1) | 175 (4.4) | 15 (0.4) | 3967 |
| Total | 131,521 (38.2) | 181,326 (52.6) | 30,220 (8.8) | 1477 (0.4) | 344,544 |

| Category | Content | Precision (%) | Recall (%) | Accuracy (%) | AUC |
|---|---|---|---|---|---|
| Technology + Art | Campaign | 60.23 | 59.41 | 59.55 | 0.640 |
| | Updates | 84.31 | 89.47 | 86.38 | 0.925 |
| | Creators’ comments in Comments | 66.90 | 79.85 | 69.47 | 0.762 |
| | Backers’ comments in Comments | 84.03 | 82.08 | 83.09 | 0.908 |
| Technology | Campaign | 65.70 | 58.14 | 61.63 | 0.688 |
| | Updates | 86.68 | 84.14 | 85.43 | 0.920 |
| | Creators’ comments in Comments | 78.58 | 79.38 | 78.59 | 0.854 |
| | Backers’ comments in Comments | 91.15 | 82.66 | 87.29 | 0.941 |
| Art | Campaign | 61.69 | 69.13 | 62.38 | 0.679 |
| | Updates | 82.36 | 80.26 | 80.85 | 0.898 |
| | Creators’ comments in Comments | 56.79 | 79.54 | 59.56 | 0.643 |
| | Backers’ comments in Comments | 76.91 | 78.36 | 77.26 | 0.836 |

| Paper | Method | Features | Accuracy | F1 |
|---|---|---|---|---|
| Chung and Lee (2015) [38] | AdaboostM1 | Project & Creator info. + Twitter | 84.2% | – |
| Shi et al. (2021) [29] | DNN | Project & Creator info. + Audio from Campaign | – | 0.838 |
| Cheng et al. (2019) [27] | Multimodal (CNN, BoW) | Project info. + Text & Image from Campaign | – | 0.753 |
| Lai, Lo and Hwang (2017) [39] | XGBoost | Project info. + Text from Updates and Comments | 92.4% | – |
| Kaminski and Hopp (2020) [40] | Logistic Regression | Video & Speech & Text from Campaign | 72% | 0.720 |
| Yu et al. (2018) [28] | MLP | Project & Creator info. | 93.2% | – |
| Yuan et al. (2023) [4] | PSM-PEM | Project info. + Text from Campaign | 86.6% | 0.799 |
| Zhang and Lau (2024) [21] | CNN, RNN, BERT | Project & Creator info. + Video & Audio & Text from Campaign | 82.2% | – |
| Ours | HAN | Raw text from Campaign OR Updates OR Comments | 87.29% | 0.867 |
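The F1 column in the comparison above is not defined in the metrics list of Section 3.3. Assuming it is the standard F1 score, i.e., the harmonic mean of precision and recall, the short sketch below shows how such a value follows from the two quantities. The function name `f1_score` is hypothetical, and pairing the reported F1 of 0.867 with the Technology category's backers' comments row (precision 91.15%, recall 82.66%, accuracy 87.29%) is an inference from the reported numbers rather than something the text states.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (standard F1 definition)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Precision and recall for backers' comments in the Technology category,
# converted from percentages to fractions.
f1 = f1_score(0.9115, 0.8266)
print(round(f1, 3))  # 0.867
```

Under this assumption, the reported F1 is consistent with the precision and recall in the classification results table.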

| “after battery replacement worked two weeks well” |

| “beautiful artwork and craftmanship” |

| “let’s get this thing funded.” |

| “have they all been shipped now” |

| “a cool 20 physical reward like maybe a print would have been great” |

| "Mar 27 2018 or 8 weeks "Add ons Today, we FINISHED shipping all the add ons (apart from maker kits and additional batteries)" |

| “I am sure that this project has a great potential” |

| “I’ve sent a few messages which have gone unanswered.” |

| “he have tried to use the trust of the backers in order for him to obtain an external investors funding” |

| “I have backed almost 500 campaigns, most have not made me angry. You need to learn some customer service. I will be withdrawing my support for your campaign—even though it is most likely the pledges will be refunded anyway when you do not meet your goal.” |

| “I haven’t received my order” |

| “good luck with the campaign” |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Lee, S.; Khan, M.A.; Kim, H.-c. Explainable Prediction of Crowdfunding Success Using Hierarchical Attention Network. Electronics 2026, 15, 570. https://doi.org/10.3390/electronics15030570
