AptEVS: Adaptive Edge-and-Vehicle Scheduling for Hierarchical Federated Learning over Vehicular Networks

Abstract

1. Introduction

- (1)

- Comprehensive Cost Metric: We formulate a holistic training cost metric for VHFL systems, integrating accuracy deviation cost (ADC), time delay cost (TDC), and energy consumption cost (ECC) to quantitatively evaluate performance against task-specific requirements.

- (2)

- Dynamic Configuration Analysis: Through systematic experiments, we analyze optimal EVS configurations. Our findings reveal that the optimal configuration is a dynamic variable, exhibiting high sensitivity not only to intrinsic task attributes and environmental dynamics, but crucially, to the evolution of the training phase. This establishes the necessity for a phase-aware, learning-based approach.

- (3)

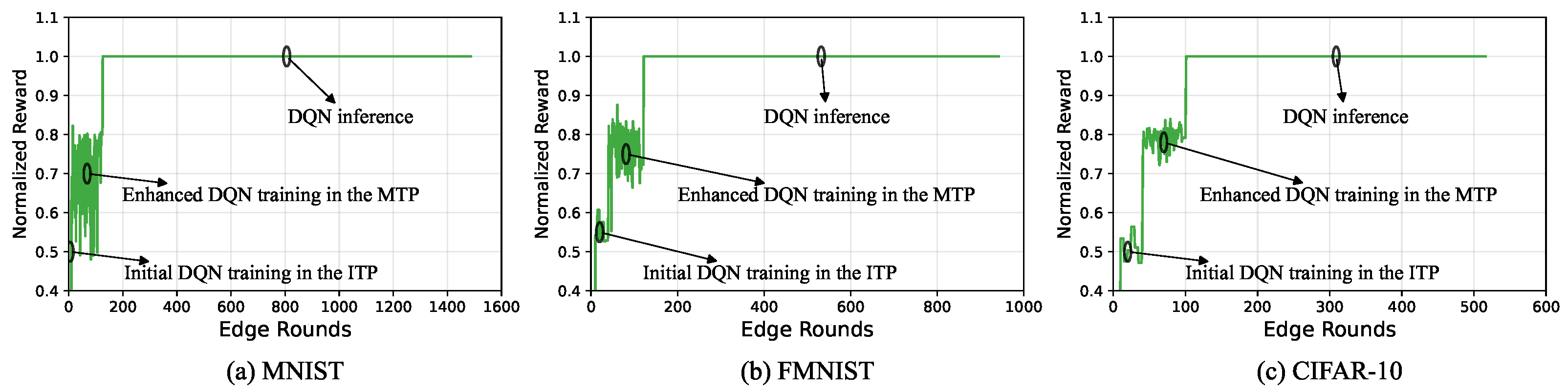

- Phase-aware DRL Framework (AptEVS): We propose AptEVS, a novel phase-aware DRL framework for online EVS adaptation. Its core employs a lightweight mechanism to detect the current training phase and dynamically switches between two specialized algorithms: Structured Exploration for the Initial Training Phase (ITP) and Priority-Enhanced DQN Training for the Medium Training Phase (MTP).

- (4)

- Experimental Validation: Extensive simulations demonstrate that AptEVS consistently outperforms baseline methods, achieving significant reductions in long-term training cost. These results prove the feasibility and effectiveness of online, phase-aware scheduling in complex vehicular environments.

2. Related Work

3. System Model

3.1. Training Procedure of VHFL

3.2. Mobility Model

3.3. Performance Metrics

3.4. Problem Formulation

4. Motivation

4.1. Experimental Setup

4.1.1. VHFL System Simulation Setup

4.1.2. Controlled Simulation Settings

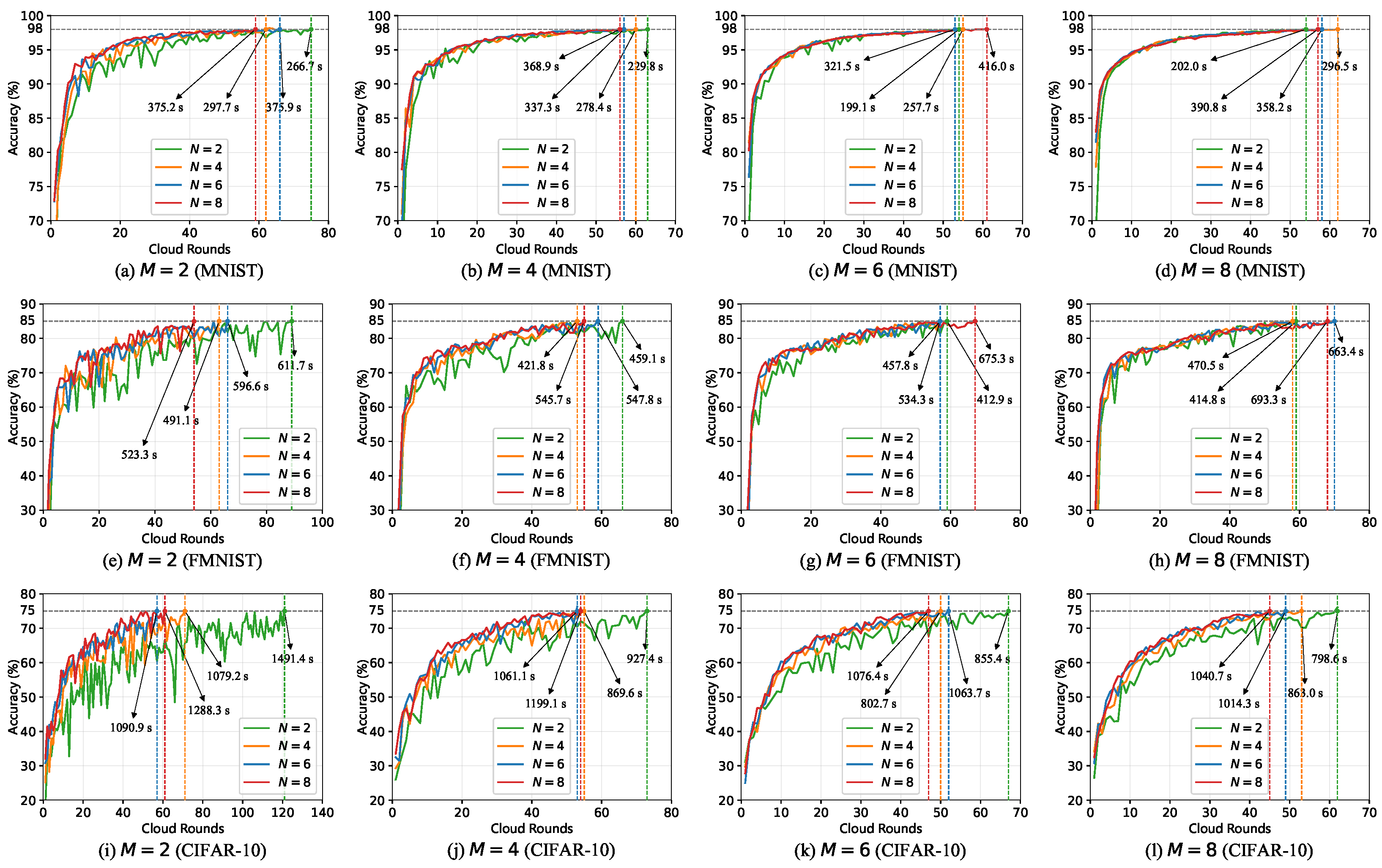

4.2. Impact of Intrinsic Task Attributes

- Initial training phase (ITP): Accuracy increases rapidly as the model is far from optimal and gradients are large. This results in significant performance gains and a convex learning curve: .

- Medium training phase (MTP): Accuracy improvement slows and begins to fluctuate. The model enters a local convergence region, where training shifts from coarse adjustment to fine-tuning and becomes more sensitive to data-induced variations: changes sign frequently.

- Final training phase (FTP): The model approaches convergence and accuracy saturates. Gradients diminish, performance gains become negligible, and variations are mainly due to noise: becomes stable or slightly increases.

4.3. Impact of Environmental Dynamics

- Vehicle arrival rate: The arrival rate has a non-monotonic impact on the optimal EVS. At low rates, the system is data-starved, and the optimal EVS is small because few diverse candidates are available. At very high rates, the system becomes data-congested: high vehicle density creates severe contention for limited communication bandwidth, which increases the TDC and again forces the scheduler to select a smaller EVS. A moderate arrival rate offers the best balance, providing a rich data supply without prohibitive resource contention.

- Vehicle speed characteristics: The speed characteristics illustrate the duality of mobility. The mean speed dictates the primary trade-off: at low EVS, the bottleneck is stagnant data diversity, hindering ADC reduction; at high EVS, the dominant issue is system instability, increasing TDC. The standard deviation further acts as a data diversity amplifier; greater speed variation enhances vehicle mixing, allowing the system to achieve its learning goals with a potentially smaller EVS.

4.4. Theoretical Analysis

5. AptEVS: Adaptive Edge-and-Vehicle Scheduling

5.1. Framework Design Overview

5.2. Design of DRL Environment

- is the set of the static task characteristics in intrinsic task attributes, where C is computational complexity and Z is communication load. While model accuracies are available, they are not included in the state but used by a separate phase detection module.

- is the dynamic of the external environment.

  - is the current distance between neighboring ENs.

  - is the real-time statistical characteristics of the distributed data, where and denote the mean and standard deviation of the local data sizes across vehicles, and and represent the corresponding statistics of local label skew. These statistics are also reported by vehicles in a privacy-preserving form. Specifically, the label skew for client i is defined as [58].

  - is the vehicle mobility pattern in the current candidate training segment. indicates the current vehicle arrival rate, and and are the mean and variance of vehicle speeds.
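The state components listed above can be flattened into one feature vector before being passed to a DQN. The Python sketch below shows one possible layout. All class and field names are hypothetical, the use of the population standard deviation is an assumption, and label-skew values are taken as given inputs because their definition follows [58] and is not reproduced here. The speed and skew numbers in the example are placeholders, not values from the paper.

```python
from dataclasses import dataclass, astuple
from statistics import fmean, pstdev
from typing import List, Sequence, Tuple


@dataclass(frozen=True)
class TaskAttributes:
    comp_complexity: float  # C: computational complexity (cycles)
    comm_load: float        # Z: communication load (bits)


@dataclass(frozen=True)
class DataStats:
    size_mean: float  # mean of local data sizes across vehicles
    size_std: float   # standard deviation of local data sizes
    skew_mean: float  # mean of local label skew
    skew_std: float   # standard deviation of local label skew


@dataclass(frozen=True)
class Mobility:
    arrival_rate: float  # vehicle arrival rate (veh/s)
    speed_mean: float    # mean vehicle speed (m/s)
    speed_var: float     # variance of vehicle speeds


def mean_std(values: Sequence[float]) -> Tuple[float, float]:
    """Mean and population standard deviation (assumed estimator)."""
    return fmean(values), pstdev(values)


def build_state(task: TaskAttributes, edge_spacing: float,
                data: DataStats, mobility: Mobility) -> List[float]:
    """Concatenate task, edge-spacing, data, and mobility features."""
    return [*astuple(task), edge_spacing, *astuple(data), *astuple(mobility)]


# Example with the MNIST task attributes and 300 m edge spacing;
# the skew and speed values are hypothetical placeholders.
data = DataStats(*mean_std([200, 200, 200]), *mean_std([0.5, 0.7, 0.9]))
state = build_state(TaskAttributes(102_412, 13_492_544), 300.0,
                    data, Mobility(1.0, 15.0, 4.0))
print(len(state))
```

In practice the features span very different scales (bits versus m/s), so some normalization before training would likely be needed; that step is left out of this sketch.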

5.3. Phase-Aware Scheduling Algorithms and Workflow

5.3.1. Adaptive Phase Detection Mechanism

Algorithm 1 AptEVS: phase-aware DRL scheduling

| Line | Step | Comment |
|---|---|---|
| 1: | Initialize DQN online and target networks , replay buffer . | |
| 2: | Initialize counters , , best action , counter threshold and . | |
| 3: | for each cloud round do | |
| 4: | Set the current time step t to r. | |
| 5: | Observe current system state . | |
| 6: | // Adaptive phase detection | |
| 7: | Apply Algorithm 2, obtain . | |
| 8: | // Phase-specific action selection | |
| 9: | if is ITP then | ▹ DQN Training |
| 10: | Apply Algorithm 3, obtain and execute . | |
| 11: | Calculate normalized reward . | |
| 12: | Store experience in . | |
| 13: | Train DQN networks using MER from . | |
| 14: | else if is MTP then | ▹ DQN Training |
| 15: | Apply Algorithm 4, obtain and execute . | |
| 16: | Calculate normalized reward . | |
| 17: | Store experience in . | |
| 18: | Train DQN networks using MER from . | |
| 19: | if is a new best action then | |
| 20: | . | |
| 21: | end if | |
| 22: | else | |
| 23: | // Phase switches to the FTP | |
| 24: | | ▹ DQN Inference |
| 25: | Execute . | |
| 26: | end if | |
| 27: | end for | |
| 28: | return | |

Algorithm 2 Adaptive phase detection mechanism

| Line | Step | Comment |
|---|---|---|
| 1: | Initialize , , . | |
| 2: | if is ITP then | |
| 3: | // Check for ITP to MTP transition | |
| 4: | if then | |
| 5: | . | |
| 6: | end if | |
| 7: | if ( or ) and then | |
| 8: | | ▹ Transition to MTP. |
| 9: | Construct the new action space . | |
| 10: | end if | |
| 11: | else if is MTP then | |
| 12: | // Check for MTP to FTP transition | |
| 13: | if then | |
| 14: | . | |
| 15: | else | |
| 16: | | ▹ Reset counter. |
| 17: | end if | |
| 18: | if then | |
| 19: | . | ▹ Transition to Inference Phase |
| 20: | end if | |
| 21: | end if | |
| 22: | return , . | |
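The control flow of Algorithm 2 can be illustrated with a small state machine. The following Python sketch is a hedged reading of the listing, not the authors' implementation: the exact test conditions did not survive in the listing, so the ones used here are assumptions. Specifically, it assumes that the ITP counter counts rounds whose accuracy gain falls below the ITP improvement threshold (5%), that ITP ends when this counter reaches its threshold (3) or accuracy reaches the ITP termination accuracy (60%), and that the MTP counter counts consecutive rounds in which the best action did not change (threshold 5). The additional conjunct in the ITP-to-MTP test is omitted because it cannot be recovered. All names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Phase(Enum):
    ITP = "ITP"  # initial training phase
    MTP = "MTP"  # medium training phase
    FTP = "FTP"  # final training phase (inference only)


@dataclass
class PhaseDetector:
    improve_threshold: float = 0.05    # accuracy improvement threshold for ITP
    itp_counter_threshold: int = 3     # counter threshold for ITP
    mtp_counter_threshold: int = 5     # counter threshold for DQN training phase
    itp_termination_acc: float = 0.60  # termination accuracy for ITP
    phase: Phase = Phase.ITP
    itp_counter: int = 0
    mtp_counter: int = 0

    def update(self, prev_acc: float, acc: float,
               best_action_changed: bool) -> Phase:
        """Advance the phase after one cloud round (assumed conditions)."""
        if self.phase is Phase.ITP:
            # ASSUMPTION: a round with a small accuracy gain counts as stagnation.
            if acc - prev_acc < self.improve_threshold:
                self.itp_counter += 1
            # ASSUMPTION: leave ITP on repeated stagnation or sufficient accuracy.
            if (self.itp_counter >= self.itp_counter_threshold
                    or acc >= self.itp_termination_acc):
                self.phase = Phase.MTP
        elif self.phase is Phase.MTP:
            # ASSUMPTION: the counter tracks how long the best action is stable.
            if not best_action_changed:
                self.mtp_counter += 1
            else:
                self.mtp_counter = 0  # reset counter
            if self.mtp_counter >= self.mtp_counter_threshold:
                self.phase = Phase.FTP
        return self.phase


detector = PhaseDetector()
print(detector.update(0.10, 0.30, best_action_changed=False))
print(detector.update(0.30, 0.62, best_action_changed=False))
```

As in the listing, the ITP counter is never reset, while the MTP counter is reset whenever its condition fails.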

Algorithm 3 Structured exploration for ITP

| Line | Step | Comment |
|---|---|---|
| 1: | Input: Accuracy history , last action . | |
| 2: | Output: Action . | |
| 3: | if then | |
| 4: | . | ▹ Ensure a robust initial performance |
| 5: | else if then | |
| 6: | . | ▹ Probe the baseline |
| 7: | else | |
| 8: | . | ▹ Retain previous configuration |
| 9: | if then | ▹ If accuracy stagnates |
| 10: | if then | |
| 11: | | ▹ Preferentially increase ENs. |
| 12: | else if then | |
| 13: | . | |
| 14: | end if | |
| 15: | end if | |
| 16: | end if | |
| 17: | return . | |
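The branching structure of Algorithm 3 can be sketched in Python as follows. This is an illustration under stated assumptions rather than the published procedure. An action is taken to be a pair (number of scheduled ENs, vehicles scheduled per EN). The first two branches are assumed to trigger in the first and second rounds. The concrete initial and probe actions are not recoverable from the listing, so they are passed in as the hypothetical parameters `initial_action` and `probe_action`. The stagnation test and the increment `step` are also assumptions.

```python
from typing import Optional, Sequence, Tuple

Action = Tuple[int, int]  # (scheduled ENs, scheduled vehicles per EN)


def structured_exploration(acc_history: Sequence[float],
                           last_action: Optional[Action],
                           initial_action: Action,
                           probe_action: Action,
                           max_en: int,
                           max_veh: int,
                           stagnation_threshold: float = 0.05,
                           step: int = 1) -> Action:
    """Pick the next ITP action (assumed reading of Algorithm 3)."""
    if last_action is None or len(acc_history) == 0:
        # Ensure a robust initial performance.
        return initial_action
    if len(acc_history) == 1:
        # Probe the baseline.
        return probe_action
    # Retain the previous configuration by default.
    n_en, n_veh = last_action
    # ASSUMPTION: stagnation means the last gain is below the threshold.
    if acc_history[-1] - acc_history[-2] < stagnation_threshold:
        if n_en < max_en:
            # Preferentially increase ENs.
            n_en = min(max_en, n_en + step)
        elif n_veh < max_veh:
            n_veh = min(max_veh, n_veh + step)
    return (n_en, n_veh)


# Example with hypothetical bounds and starting actions.
print(structured_exploration([0.20, 0.21], (2, 2), (4, 4), (2, 2), 8, 6))
```

Growing the EN count before the per-EN vehicle count mirrors the "preferentially increase ENs" comment in the listing; vehicles are only added once the EN count has reached its maximum.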

Algorithm 4 Priority-enhanced DQN training for MTP

| Line | Step | Comment |
|---|---|---|
| 1: | Input: State , epsilon . | |
| 2: | Output: Action . | |
| 3: | if then | ▹ Exploration |
| 4: | Calculate the prioritization probability (29) for each action . | |
| 5: | Obtain action randomly from according to . | |
| 6: | else | ▹ Exploitation |
| 7: | . | |
| 8: | end if | |
| 9: | Update with decay coefficient . | |
| 10: | return . | |
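Algorithm 4 follows an epsilon-greedy pattern in which exploration samples from a prioritization distribution instead of a uniform one. The Python sketch below illustrates that pattern only. The prioritization probability of Eq. (29) is not reproduced in this text, so the sketch takes the per-action priorities as an input (`priority`, a hypothetical name). The multiplicative epsilon decay with a lower bound is an assumption based on the decay coefficient and minimum epsilon listed among the DQN parameters, and Q-values are supplied as a plain mapping rather than a network output.

```python
import random
from typing import Mapping, Tuple

Action = Tuple[int, int]  # (scheduled ENs, scheduled vehicles per EN)


def select_action(q_values: Mapping[Action, float],
                  priority: Mapping[Action, float],
                  epsilon: float,
                  rng: random.Random) -> Action:
    """Priority-weighted epsilon-greedy action selection."""
    actions = list(q_values)
    if rng.random() < epsilon:
        # Exploration: sample in proportion to the supplied priorities,
        # which stand in for the prioritization probability of Eq. (29).
        weights = [priority[a] for a in actions]
        return rng.choices(actions, weights=weights, k=1)[0]
    # Exploitation: pick the action with the largest Q-value.
    return max(actions, key=lambda a: q_values[a])


def decay_epsilon(epsilon: float, decay: float, eps_min: float) -> float:
    """ASSUMPTION: multiplicative decay, floored at the minimum epsilon."""
    return max(eps_min, epsilon * decay)


# Example with hypothetical Q-values and priorities.
rng = random.Random(0)
q = {(2, 2): 0.1, (4, 4): 0.9, (8, 2): 0.4}
p = {(2, 2): 0.2, (4, 4): 0.5, (8, 2): 0.3}
print(select_action(q, p, epsilon=0.0, rng=rng))  # greedy choice: (4, 4)
```

Passing a seeded `random.Random` keeps runs reproducible, which helps when comparing exploration strategies.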

5.3.2. Phase-Aware DRL Algorithms

6. Performance Evaluation

6.1. Experimental Setup

6.2. Overall Performance Comparison

- Fixed proportional Edge-and-Vehicle Scheduling (FpEVS): A static baseline used to reflect uniform and task-independent scheduling commonly adopted in earlier studies. It schedules all ENs and 30% of vehicles in every training round, following the empirical setup in [11].

- Phase-aware Edge-and-Vehicle Scheduling (PahEVS): A dynamic baseline that adjusts the vehicle participation ratio across different training phases, 20% in ITP, 30% in MTP, and 50% in FTP, while keeping all ENs scheduled. This design is based on the core insight from [33] that scheduling fewer clients in early stages and more in later stages can reduce training cost.
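The two baselines can be summarized with a short Python sketch. It assumes that the stated percentages apply to the candidate vehicles of each EN separately and that fractional counts are rounded down. Neither detail is specified in the descriptions above, and the function and constant names are hypothetical.

```python
from typing import Dict

FPEVS_PERCENT = 30                                   # fixed ratio in every round
PAHEVS_PERCENT = {"ITP": 20, "MTP": 30, "FTP": 50}   # ratio per training phase


def vehicles_to_schedule(num_candidates: int, percent: int) -> int:
    """Number of vehicles to schedule; rounding down is an assumption."""
    return num_candidates * percent // 100


def fpevs(candidates_per_en: Dict[str, int]) -> Dict[str, int]:
    """FpEVS: schedule all ENs and 30% of the vehicles under each EN."""
    return {en: vehicles_to_schedule(n, FPEVS_PERCENT)
            for en, n in candidates_per_en.items()}


def pahevs(candidates_per_en: Dict[str, int], phase: str) -> Dict[str, int]:
    """PahEVS: schedule all ENs with a phase-dependent vehicle ratio."""
    percent = PAHEVS_PERCENT[phase]
    return {en: vehicles_to_schedule(n, percent)
            for en, n in candidates_per_en.items()}


# Example with hypothetical candidate counts per EN.
candidates = {"EN1": 10, "EN2": 20}
print(fpevs(candidates))           # {'EN1': 3, 'EN2': 6}
print(pahevs(candidates, "FTP"))   # {'EN1': 5, 'EN2': 10}
```

Integer percentages are used so that the counts do not depend on floating-point rounding.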

6.3. Ablation Study: Efficacy of the Reward Function Design

- AptEVS-A: Employs a reward based on the direct model accuracy , as in [59]. The reward is defined as .

- AptEVS-EA: Uses an exponential form of the accuracy as the primary reward component, following [44]. The reward is defined as .

- AptEVS-ADC: Utilizes the ADC as the reward signal, a method used in [45]. The reward is defined as .

- AptEVS-ACAR: A variant based on [57], which uses a weighted sum of the current accuracy and the change in accuracy from the previous round. Let and be weights such that , and set . The reward function is given by

6.4. Ablation Study: Efficacy of the Phase-Aware Mechanism

6.5. Performance Robustness Across Diverse Environments

6.6. Overhead Analysis

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Yan, H.; Li, Y. Generative AI for Intelligent Transportation Systems: Road Transportation Perspective. ACM Comput. Surv. 2025, 57, 315.

2. Talpur, A.; Gurusamy, M. Machine Learning for Security in Vehicular Networks: A Comprehensive Survey. IEEE Commun. Surv. Tutor. 2022, 24, 346–379.

3. Khan, M.W.; Obaidat, M.S.; Mahmood, K.; Sadoun, B.; Badar, H.M.S.; Gao, W. Real-Time Road Damage Detection Using an Optimized YOLOv9s-Fusion in IoT Infrastructure. IEEE Internet Things J. 2025, 12, 17649–17660.

4. Huang, Y.; Wang, F. D-TLDetector: Advancing Traffic Light Detection With a Lightweight Deep Learning Model. IEEE Trans. Intell. Transp. Syst. 2025, 26, 3917–3933.

5. McMahan, B.; Moore, E.; Ramage, D.; Hampson, S.; Arcas, B.A.Y. Communication-efficient learning of deep networks from decentralized data. In Proceedings of the 20th International Conference on Artificial Intelligence and Statistics, Fort Lauderdale, FL, USA, 20–22 April 2017; pp. 1273–1282.

6. Liu, L.; Zhang, J.; Song, S.; Letaief, K.B. Client-edge-cloud hierarchical federated learning. In Proceedings of the ICC 2020–2020 IEEE International Conference on Communications (ICC), Virtual, 7–11 June 2020; pp. 1–6.

7. Deng, Y.; Lyu, F.; Ren, J.; Wu, H.; Zhou, Y.; Zhang, Y.; Shen, X. AUCTION: Automated and quality-aware client selection framework for efficient federated learning. IEEE Trans. Parallel Distrib. Syst. 2021, 33, 1996–2009.

8. Tao, M.; Zhou, Y.; Shi, Y.; Lu, J.; Cui, S.; Lu, J.; Letaief, K.B. Federated Edge Learning for 6G: Foundations, Methodologies, and Applications. Proc. IEEE, 2024; early access.

9. Banafaa, M.; Shayea, I.; Din, J.; Azmi, M.H.; Alashbi, A.; Daradkeh, Y.I.; Alhammadi, A. 6G mobile communication technology: Requirements, targets, applications, challenges, advantages, and opportunities. Alex. Eng. J. 2023, 64, 245–274.

10. Chen, T.; Yan, J.; Sun, Y.; Zhou, S.; Gündüz, D.; Niu, Z. Mobility accelerates learning: Convergence analysis on hierarchical federated learning in vehicular networks. IEEE Trans. Veh. Technol. 2025, 74, 1657–1673.

11. Zhang, T.; Lam, K.Y.; Zhao, J. Device Scheduling and Assignment in Hierarchical Federated Learning for Internet of Things. IEEE Internet Things J. 2024, 11, 18449–18462.

12. Zhao, J.; Chang, X.; Feng, Y.; Liu, C.H.; Liu, N. Participant selection for federated learning with heterogeneous data in intelligent transport system. IEEE Trans. Intell. Transp. Syst. 2022, 24, 1106–1115.

13. Taik, A.; Mlika, Z.; Cherkaoui, S. Clustered vehicular federated learning: Process and optimization. IEEE Trans. Intell. Transp. Syst. 2022, 23, 25371–25383.

14. Wu, Q.; Wang, S.; Fan, P.; Fan, Q. Deep reinforcement learning based vehicle selection for asynchronous federated learning enabled vehicular edge computing. In Proceedings of the International Congress on Communications, Networking, and Information Systems, Guilin, China, 25–27 March 2023; pp. 3–26.

15. Tang, X.; Zhang, J.; Fu, Y.; Li, C.; Cheng, N.; Yuan, X. A Fair and Efficient Federated Learning Algorithm for Autonomous Driving. In Proceedings of the 2023 IEEE 98th Vehicular Technology Conference (VTC2023-Fall), Hong Kong, China, 10–13 October 2023; pp. 1–5.

16. Sangdeh, P.K.; Li, C.; Pirayesh, H.; Zhang, S.; Zeng, H.; Hou, Y.T. CF4FL: A communication framework for federated learning in transportation systems. IEEE Trans. Wirel. Commun. 2022, 22, 3821–3836.

17. Saputra, Y.M.; Hoang, D.T.; Nguyen, D.N.; Tran, L.N.; Gong, S.; Dutkiewicz, E. Dynamic federated learning-based economic framework for internet-of-vehicles. IEEE Trans. Mob. Comput. 2021, 22, 2100–2115.

18. Li, Z.; Wu, H.; Lu, Y. Coalition based utility and efficiency optimization for multi-task federated learning in Internet of Vehicles. Future Gener. Comput. Syst. 2023, 140, 196–208.

19. Pervej, M.F.; Jin, R.; Dai, H. Resource constrained vehicular edge federated learning with highly mobile connected vehicles. IEEE J. Sel. Areas Commun. 2023, 41, 1825–1844.

20. Xiao, H.; Zhao, J.; Pei, Q.; Feng, J.; Liu, L.; Shi, W. Vehicle selection and resource optimization for federated learning in vehicular edge computing. IEEE Trans. Intell. Transp. Syst. 2021, 23, 11073–11087.

21. Wang, G.; Xu, F.; Zhang, H.; Zhao, C. Joint resource management for mobility supported federated learning in Internet of Vehicles. Future Gener. Comput. Syst. 2022, 129, 199–211.

22. Lin, F.P.C.; Hosseinalipour, S.; Michelusi, N.; Brinton, C.G. Delay-aware hierarchical federated learning. IEEE Trans. Cogn. Commun. Netw. 2023, 10, 674–688.

23. Luo, S.; Chen, X.; Wu, Q.; Zhou, Z.; Yu, S. HFEL: Joint edge association and resource allocation for cost-efficient hierarchical federated edge learning. IEEE Trans. Wirel. Commun. 2020, 19, 6535–6548.

24. Wang, Z.; Xu, H.; Liu, J.; Huang, H.; Qiao, C.; Zhao, Y. Resource-efficient federated learning with hierarchical aggregation in edge computing. In Proceedings of the IEEE INFOCOM 2021-IEEE Conference on Computer Communications, Vancouver, BC, Canada, 10–13 May 2021; pp. 1–10.

25. Qi, T.; Zhan, Y.; Li, P.; Guo, J.; Xia, Y. Hwamei: A learning-based synchronization scheme for hierarchical federated learning. In Proceedings of the 2023 IEEE 43rd International Conference on Distributed Computing Systems (ICDCS), Hong Kong, China, 18–21 July 2023; pp. 534–544.

26. Wei, X.; Liu, J.; Shi, X.; Wang, Y. Participant selection for hierarchical federated learning in edge clouds. In Proceedings of the 2022 IEEE International Conference on Networking, Architecture and Storage (NAS), Philadelphia, PA, USA, 3–4 October 2022; pp. 1–8.

27. Lim, W.Y.B.; Ng, J.S.; Xiong, Z.; Niyato, D.; Miao, C.; Kim, D.I. Dynamic edge association and resource allocation in self-organizing hierarchical federated learning networks. IEEE J. Sel. Areas Commun. 2021, 39, 3640–3653.

28. Su, L.; Zhou, R.; Wang, N.; Chen, J.; Li, Z. Low-latency hierarchical federated learning in wireless edge networks. IEEE Internet Things J. 2023, 11, 6943–6960.

29. Nguyen, T.D.; Tong, N.A.; Nguyen, B.P.; Nguyen, Q.V.H.; Le Nguyen, P.; Huynh, T.T. Hierarchical Federated Learning in MEC Networks with Knowledge Distillation. In Proceedings of the 2024 International Joint Conference on Neural Networks (IJCNN), Yokohama, Japan, 30 June–5 July 2024; pp. 1–8.

30. Kou, W.B.; Wang, S.; Zhu, G.; Luo, B.; Chen, Y.; Ng, D.W.K.; Wu, Y.C. Communication resources constrained hierarchical federated learning for end-to-end autonomous driving. In Proceedings of the 2023 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Detroit, MI, USA, 1–5 October 2023; pp. 9383–9390.

31. Zhou, X.; Liang, W.; She, J.; Yan, Z.; Kevin, I.; Wang, K. Two-layer federated learning with heterogeneous model aggregation for 6g supported internet of vehicles. IEEE Trans. Veh. Technol. 2021, 70, 5308–5317.

32. Feng, C.; Yang, H.H.; Hu, D.; Zhao, Z.; Quek, T.Q.; Min, G. Mobility-aware cluster federated learning in hierarchical wireless networks. IEEE Trans. Wirel. Commun. 2022, 21, 8441–8458.

33. Lai, F.; Zhu, X.; Madhyastha, H.V.; Chowdhury, M. Oort: Efficient federated learning via guided participant selection. In Proceedings of the 15th USENIX Symposium on Operating Systems Design and Implementation (OSDI’21), Virtual, 14–16 July 2021; pp. 19–35.

34. Swenson, B.; Murray, R.; Poor, H.V.; Kar, S. Distributed stochastic gradient descent: Nonconvexity, nonsmoothness, and convergence to local minima. J. Mach. Learn. Res. 2022, 23, 1–62.

35. Zhao, Y.; Li, M.; Lai, L.; Suda, N.; Civin, D.; Chandra, V. Federated learning with non-iid data. arXiv 2018, arXiv:1806.00582.

36. Xie, B.; Sun, Y.; Zhou, S.; Niu, Z.; Xu, Y.; Chen, J.; Gunduz, D. MOB-FL: Mobility-aware federated learning for intelligent connected vehicles. In Proceedings of the ICC 2023-IEEE International Conference on Communications, Rome, Italy, 28 May–1 June 2023; pp. 3951–3957.

37. Cui, J.; Chen, Y.; Zhong, H.; He, D.; Wei, L.; Bolodurina, I.; Liu, L. Lightweight encryption and authentication for controller area network of autonomous vehicles. IEEE Trans. Veh. Technol. 2023, 72, 14756–14770.

38. Ma, Z.; Zhang, T.; Liu, X.; Li, X.; Ren, K. Real-time privacy-preserving data release over vehicle trajectory. IEEE Trans. Veh. Technol. 2019, 68, 8091–8102.

39. Fu, Y.; Li, C.; Yu, F.R.; Luan, T.H.; Zhao, P. An incentive mechanism of incorporating supervision game for federated learning in autonomous driving. IEEE Trans. Intell. Transp. Syst. 2023, 24, 14800–14812.

40. Dai, P.; Hu, K.; Wu, X.; Xing, H.; Teng, F.; Yu, Z. A probabilistic approach for cooperative computation offloading in MEC-assisted vehicular networks. IEEE Trans. Intell. Transp. Syst. 2020, 23, 899–911.

41. Zhang, X.; Chang, Z.; Hu, T.; Chen, W.; Zhang, X.; Min, G. Vehicle selection and resource allocation for federated learning-assisted vehicular network. IEEE Trans. Mob. Comput. 2023, 23, 3817–3829.

42. Yu, Z.; Hu, J.; Min, G.; Zhao, Z.; Miao, W.; Hossain, M.S. Mobility-aware proactive edge caching for connected vehicles using federated learning. IEEE Trans. Intell. Transp. Syst. 2020, 22, 5341–5351.

43. Zhang, C.; Zhang, W.; Wu, Q.; Fan, P.; Fan, Q.; Wang, J.; Letaief, K.B. Distributed deep reinforcement learning based gradient quantization for federated learning enabled vehicle edge computing. IEEE Internet Things J. 2024, 12, 4899–4913.

44. Mao, W.; Lu, X.; Jiang, Y.; Zheng, H. Joint client selection and bandwidth allocation of wireless federated learning by deep reinforcement learning. IEEE Trans. Serv. Comput. 2024, 17, 336–348.

45. Wang, H.; Kaplan, Z.; Niu, D.; Li, B. Optimizing federated learning on non-iid data with reinforcement learning. In Proceedings of the IEEE INFOCOM 2020-IEEE Conference on Computer Communications, Virtual, 6–9 July 2020; pp. 1698–1707.

46. Wu, C.; Ren, Y.; So, D.K. Adaptive User Scheduling and Resource Allocation in Wireless Federated Learning Networks: A Deep Reinforcement Learning Approach. In Proceedings of the ICC 2023-IEEE International Conference on Communications, Rome, Italy, 28 May–1 June 2023; pp. 1219–1225.

47. Peng, Y.; Tang, X.; Zhou, Y.; Hou, Y.; Li, J.; Qi, Y.; Liu, L.; Lin, H. How to tame mobility in federated learning over mobile networks? IEEE Trans. Wirel. Commun. 2023, 22, 9640–9657.

48. You, C.; Guo, K.; Yang, H.H.; Quek, T.Q. Hierarchical personalized federated learning over massive mobile edge computing networks. IEEE Trans. Wirel. Commun. 2023, 22, 8141–8157.

49. Liu, S.; Guan, P.; Yu, J.; Taherkordi, A. Fedssc: Joint client selection and resource management for communication-efficient federated vehicular networks. Comput. Netw. 2023, 237, 110100.

50. Study on Channel Model for Frequencies from 0.5 to 100 GHz (Release 18); Technical Report TR 38.901 V18.0.0, 3GPP; ETSI: Sophia Antipolis, France, 2024.

51. Zeng, T.; Semiari, O.; Chen, M.; Saad, W.; Bennis, M. Federated learning on the road autonomous controller design for connected and autonomous vehicles. IEEE Trans. Wirel. Commun. 2022, 21, 10407–10423.

52. LeCun, Y.; Bottou, L.; Bengio, Y.; Haffner, P. Gradient-based learning applied to document recognition. Proc. IEEE 1998, 86, 2278–2324.

53. Xiao, H.; Rasul, K.; Vollgraf, R. Fashion-mnist: A novel image dataset for benchmarking machine learning algorithms. arXiv 2017, arXiv:1708.07747.

54. Krizhevsky, A.; Hinton, G. Learning Multiple Layers of Features from Tiny Images; Computer Science University of Toronto: Toronto, ON, Canada, 2009.

55. Li, Q.; Diao, Y.; Chen, Q.; He, B. Federated learning on non-iid data silos: An experimental study. In Proceedings of the 2022 IEEE 38th International Conference on Data Engineering (ICDE), Kuala Lumpur, Malaysia, 9–12 May 2022; pp. 965–978.

56. Li, X.; Huang, K.; Yang, W.; Wang, S.; Zhang, Z. On the convergence of fedavg on non-iid data. arXiv 2019, arXiv:1907.02189.

57. Kim, Y.G.; Wu, C.J. Autofl: Enabling heterogeneity-aware energy efficient federated learning. In Proceedings of the MICRO-54: 54th Annual IEEE/ACM International Symposium on Microarchitecture, Virtual, 18–22 October 2021; pp. 183–198.

58. Tian, Y.; Wang, N.; Zhang, Z.; Zou, W.; Zou, G.; Tian, L.; Li, W. Joint Client Selection and Bandwidth Allocation Algorithm for Time-Sensitive Federated Learning over Wireless Networks. In Proceedings of the 2024 IEEE 99th Vehicular Technology Conference (VTC2024-Spring), Singapore, 24–27 June 2024; pp. 1–6.

59. Yu, X.; Gao, Z.; Xiong, Z.; Zhao, C.; Yang, Y. DDPG-AdaptConfig: A deep reinforcement learning framework for adaptive device selection and training configuration in heterogeneity federated learning. Future Gener. Comput. Syst. 2025, 163, 107528.

| Symbol | Definition | Symbol | Definition |

|---|---|---|---|

| maximum of vehicles in EN m | set of vehicles | ||

| maximum of ENs | set of ENs managed by the CS | ||

| m | index of an EN | i | index of a vehicle |

| local data size of vehicle i | local dataset of vehicle i | ||

| cloud interval | edge interval | ||

| time slice of edge round in cloud round r | R | total number of cloud rounds | |

| set of cloud rounds | set of ENs scheduled by the CS | ||

| edge scheduling number | vehicle scheduling number per EN | ||

| vehicles connected to EN m at time slice | vehicles scheduled by EN m at time slice | ||

| w | training model of vehicles | cloud model in the r-th cloud round | |

| edge model at time slice | local model of vehicle i after j-th local iteration | ||

| L | total number of local iterations | b | batch size |

| learning rate | edge spacing | ||

| vehicle arrival rate | probability density function of | ||

| speed of vehicle i | mean speed of vehicles | ||

| standard deviation of vehicle speeds | model accuracy after the r-th cloud round | ||

| target accuracy | actual computing frequency of vehicle i | ||

| computational cycles per sample for vehicle i | effective capacitance factor of the computing chipset | ||

| actual transmit power of vehicle i | Z | model size | |

| number of RBs allocated to vehicle i at | B | bandwidth of one RB |

| Parameter | Value |
|---|---|
| Computational frequency of vehicle for three HFL tasks, | /1/4 GHz |
| Transmit power of vehicle, | dBm |
| Bandwidth of one RB for three HFL tasks, B | 180/180/360 kHz |
| Number of RBs at each EN, | 20 |
| Carrier frequency, | GHz |
| Effective capacitance factor of the computing chipset on vehicle i, | |
| Noise power spectral density, | dBm/MHz |
| Edge spacing, L | 300 m |
| Vehicle arrival rate, | 1 veh/s |
| Speed characteristics of vehicles in the training area, | m/s |
| Uplink transmission rate of the ENs, | 50 Mbps |
| Cloud interval, | 5 |
| Edge interval, | 5 |
| Learning rate, | |
| Decay rate, | |
| Batch size, b | 20 |
| Convergence threshold for three HFL tasks, | |
| Data partitioning parameter, | 5 |
| Data size per vehicle, | 200 |
| Weighting factors for ADC/TDC/ECC, | 0.1/0.5/0.4 |
| Scaling factor of ADC, | 100 |

| Task Class | Computational Complexity (cycles) | Communication Load (bits) | Target Accuracy (%) |
|---|---|---|---|
| MNIST | 102,412 | 13,492,544 | 98 |
| FMNIST | 867,804 | 13,504,832 | 85 |
| CIFAR-10 | 6,291,652 | 83,333,632 | 75 |

| Variable | Value | Optimal EVS | Cloud Rounds | ADC | TDC (s) | ECC (J) | LTTC |

|---|---|---|---|---|---|---|---|

(m) | 200 | (2, 6) | 58 | 17.2 | 438.5 | 4732.7 | 14,575.2 |

| 300 | (4, 4) | 53 | 16.0 | 421.8 | 7663.9 | 15,213.7 | |

| 400 | (6,2) | 52 | 18.1 | 368.1 | 8094.2 | 14,257.4 | |

| 5 | (4, 4) | 53 | 16.0 | 421.8 | 7663.9 | 15,213.7 | |

| 2 | (8, 2) | 65 | 32.6 | 457.6 | 13,474.3 | 20,089.3 | |

| (6, 2) | 121 | 33.7 | 981.7 | 17,097.2 | 31,999.4 | ||

| (8, 2) | 135 | 37.1 | 1161.1 | 25,348.6 | 40,517.0 | ||

(veh/s) | 0.6 | (4, 2) | 65 | 22.1 | 452.0 | 6888.8 | 16,264.4 |

| 1.0 | (4, 4) | 53 | 16.0 | 421.8 | 7663.9 | 15,213.7 | |

| 1.4 | (4, 2) | 55 | 15.5 | 436.6 | 7937.2 | 15,636.3 | |

(m/s) | (2, 4) | 56 | 19.1 | 436.3 | 4036.5 | 14,438.7 | |

| (8, 2) | 52 | 15.9 | 365.6 | 10,784.7 | 15,046.4 | ||

| (4, 4) | 53 | 16.0 | 421.8 | 7663.9 | 15,213.7 | ||

| (2, 2) | 68 | 28.3 | 467.5 | 3520.0 | 15,926.8 | ||

| (2, 2) | 68 | 29.5 | 466.8 | 3562.4 | 16,046.3 |

| Parameter | Value |
|---|---|
| Learning rate of DQN, | |
| Discount factor of DQN, | |
| Initial epsilon of the epsilon-greedy strategy, | |
| Decay coefficient of the epsilon-greedy strategy, | |
| Minimum epsilon of the epsilon-greedy strategy, | |
| Experience replay buffer size, V | 32 |
| Mini-batch size of DQN, | 16 |
| Update frequency of target network, J | 4 |
| Minimum of time step, | 40 |
| ADC factor, | 100 |
| Update cycle for short-term target accuracy, | 4 |
| Accuracy improvement threshold for ITP, | 5% |
| Counter threshold for ITP, | 3 |
| Counter threshold for the DQN training phase, | 5 |
| Termination accuracy for ITP, | 60% |

| Variable | Value | Method | Edge Rounds | ADC | TDC (s) | ECC (J) | Long-Term Training Cost |

|---|---|---|---|---|---|---|---|

(m) | 200 | AptEVS (Ours) | |||||

| FpEVS | 10,918.3 | ||||||

| PahEVS | 17,423.3 | 10,686.0 | |||||

| 400 | AptEVS (Ours) | ||||||

| FpEVS | 20,026.9 | 13,481.3 | |||||

| PahEVS | 17,663.0 | 10,739.0 | |||||

(veh/s) | AptEVS (Ours) | ||||||

| FpEVS | 10,281.2 | ||||||

| PahEVS | 21,030.0 | 12,761.4 | |||||

| AptEVS (Ours) | |||||||

| FpEVS | 16,362.1 | 10,761.3 | |||||

| PahEVS | 15,710.2 | ||||||

(m/s) | AptEVS (Ours) | ||||||

| FpEVS | 33,054.9 | 22,237.3 | |||||

| PahEVS | 16,784.4 | 10,263.2 | |||||

| AptEVS (Ours) | |||||||

| FpEVS | 11,331.6 | ||||||

| PahEVS | 17,656.7 | 10,718.1 | |||||

| AptEVS (Ours) | |||||||

| FpEVS | 12,131.6 | ||||||

| PahEVS | 16,843.9 | 10,289.0 | |||||

| AptEVS (Ours) | |||||||

| FpEVS | 14,975.8 | ||||||

| PahEVS | 16,897.1 | 10,339.3 | |||||

| AptEVS (Ours) | |||||||

| FpEVS | |||||||

| PahEVS | 17,108.7 | 10,513.5 |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Tian, Y.; Wang, N.; Zhang, Z.; Zou, W.; Zhao, L.; Liu, S.; Tian, L. AptEVS: Adaptive Edge-and-Vehicle Scheduling for Hierarchical Federated Learning over Vehicular Networks. Electronics 2026, 15, 479. https://doi.org/10.3390/electronics15020479
