TBRNet: A Multi-Modal Network for Teacher Behavior Recognition with Cascaded Collaborative Attention and Dynamic Query-Driven

Abstract

1. Introduction

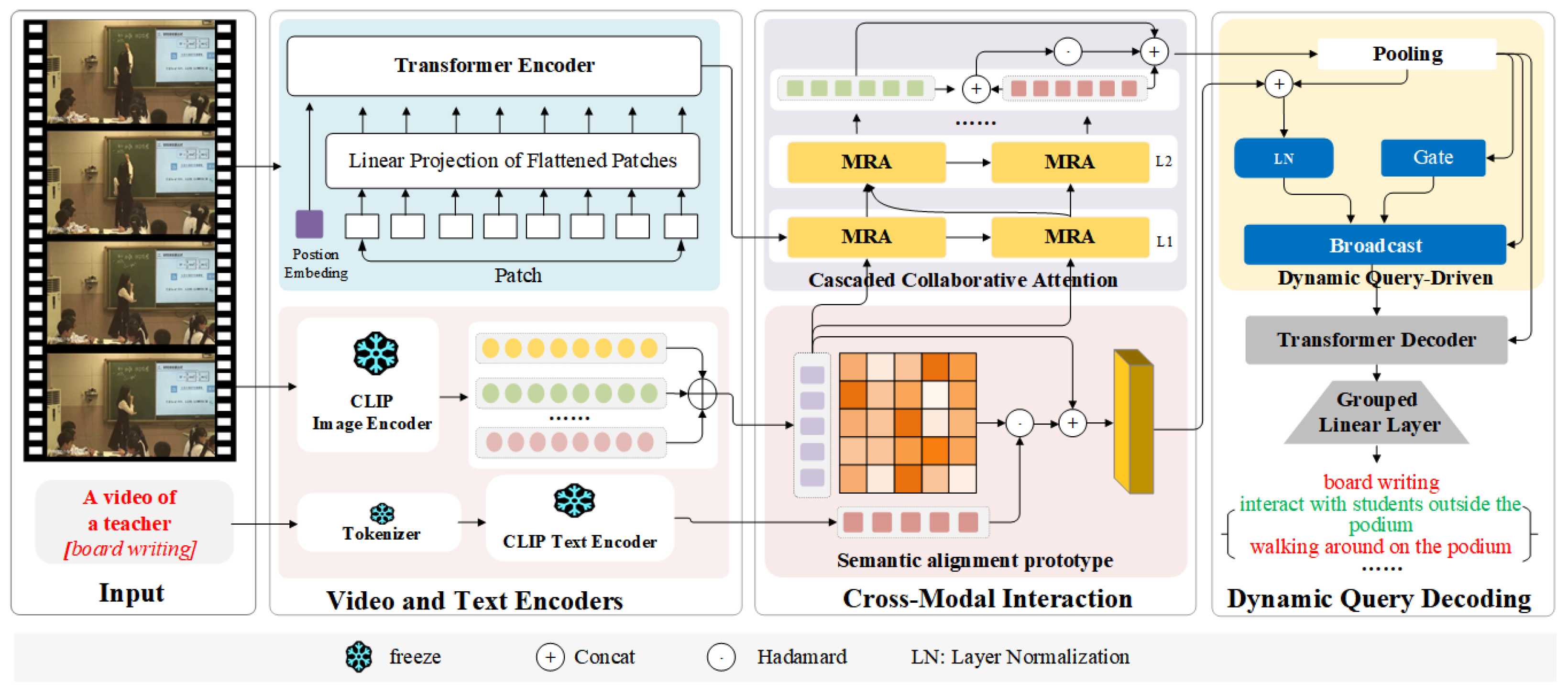

- We propose TBRNet, a novel multi-modal network for teacher behavior recognition that integrates temporal, visual, and textual semantic information. Using mechanisms for visual-semantic alignment, cross-modal fusion, and prototype guidance, TBRNet tackles the main challenges of recognizing teacher teaching behavior (TTB) in real classrooms: complex backgrounds, severe occlusions, and high similarity between behaviors.

- A Cascaded Collaborative Attention (CCA) module is designed. CCA employs a hierarchical multi-level cross-attention architecture to progressively achieve bidirectional fusion between spatiotemporal behavioral features and global visual semantics, thereby improving the temporal modeling of continuous instructional behaviors.

- A Dynamic Query-Driven (DQD) mechanism is introduced. Guided by semantic prototypes, DQD utilizes an adaptive gating module to filter discriminative behavioral features, thereby enhancing the perception and recognition of key teaching behaviors in complex scenarios.

- Extensive experiments on a dedicated teacher behavior dataset (TBU) and two public benchmarks (UCF101 and HMDB51) validate the effectiveness of TBRNet. On TBU, TBRNet reaches 86.4% top-1 accuracy, 1.8 points above the strongest baseline (STOP, 84.6%). On the public benchmarks it reaches 96.2% on UCF101 and 75.7% on HMDB51, which indicates good generalization and makes it a practical solution for real classroom behavior analysis.
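The two mechanisms named in the contributions can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the function names, the absence of learned projections, the residual update, the two cascade levels, and the sigmoid-of-cosine gate are all assumptions chosen to keep the example small. It only shows the general pattern of bidirectional cross-attention repeated over several levels, followed by prototype-guided gating of the fused features.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax."""
    shifted = x - x.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)


def cross_attention(queries, context):
    """Single-head scaled dot-product cross-attention (no learned projections)."""
    scale = np.sqrt(queries.shape[-1])
    weights = softmax(queries @ context.T / scale, axis=-1)  # rows sum to 1
    return weights @ context


def cascaded_bidirectional_fusion(video_tokens, visual_tokens, num_levels=2):
    """Hypothetical stand-in for CCA: at every level each stream attends to the other."""
    v, g = video_tokens, visual_tokens
    for _ in range(num_levels):
        v_next = v + cross_attention(v, g)  # video attends to global visual semantics
        g_next = g + cross_attention(g, v)  # visual semantics attend to video
        v, g = v_next, g_next
    return v, g


def prototype_gate(features, prototypes):
    """Hypothetical stand-in for DQD gating: scale each token by a sigmoid of its
    best cosine similarity to any semantic prototype."""
    f = features / np.linalg.norm(features, axis=-1, keepdims=True)
    p = prototypes / np.linalg.norm(prototypes, axis=-1, keepdims=True)
    best_sim = (f @ p.T).max(axis=-1, keepdims=True)  # shape (N, 1), values in [-1, 1]
    gate = 1.0 / (1.0 + np.exp(-best_sim))
    return gate * features, gate


# Toy inputs; all sizes are illustrative assumptions.
rng = np.random.default_rng(0)
video = rng.normal(size=(8, 16))        # 8 spatiotemporal tokens
visual = rng.normal(size=(4, 16))       # 4 global visual tokens
prototypes = rng.normal(size=(13, 16))  # one prototype per behavior category

fused_video, fused_visual = cascaded_bidirectional_fusion(video, visual)
gated, gate = prototype_gate(fused_video, prototypes)
print(fused_video.shape, fused_visual.shape, gated.shape, gate.shape)
```

In a trained model the queries, keys, and values would pass through learned projections and the gate would be a learned module; the sketch replaces these with fixed operations so that the data flow stays visible.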

2. Related Work

2.1. Single-Modal Teacher Teaching Behavior Recognition

2.2. Multi-Modal Teacher Teaching Behavior Recognition

2.3. CLIP-Based Action Recognition

3. Methodology

3.1. Overview of TBRNet

3.2. Video and Text Encoder

3.2.1. TimeSFormer-Based Video Encoder

3.2.2. CLIP-Based Visual Encoder

3.2.3. CLIP-Based Visual and Text Encoder

3.2.4. CLIP-Based Text Encoder

3.3. Cross-Modal Interaction

3.3.1. Cascaded Collaborative Attention

3.3.2. Semantic Alignment Prototype

3.4. Dynamic Query Decoding

3.4.1. Dynamic Query Generation

3.4.2. Decoding and Classification

3.5. The Loss

4. Results and Analysis

4.1. Experimental Protocols

4.1.1. Datasets and Evaluation

4.1.2. Comparison Models and Implementation Details

4.2. Comparison with Previous Works

4.2.1. Comparison of Different Models on TBU

4.2.2. Comparison of Different Models on UCF101 and HMDB51

4.2.3. Evaluation of Recognition Accuracy of Each Category

4.3. Ablation Study

4.3.1. Ablation Study of Different Modules

4.3.2. Ablation Study on Different Modal Inputs

5. Conclusions and Future Work

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| TTB | Teacher Teaching Behavior |
| CLIP | Contrastive Language-Image Pretraining |
| CCA | Cascaded Collaborative Attention |
| DQD | Dynamic Query-Driven |
| MCA | Multi-level Cross-Attention |
| STOP | Spatio-Temporal Prompts |
| BCE | Binary Cross-Entropy |
| TS | TimeSformer |

References

1. Huang, C.; Zhu, J.; Ji, Y.; Shi, W.; Yang, M.; Guo, H.; Ling, J.; De Meo, P.; Li, Z.; Chen, Z. A Multi-Modal Dataset for Teacher Behavior Analysis in Offline Classrooms. Sci. Data 2025, 12, 1115.
2. Lazarides, R.; Frenkel, J.; Petković, U.; Göllner, R.; Hellwich, O. ‘No words’—Machine-learning classified nonverbal immediacy and its role in connecting teacher self-efficacy with perceived teaching and student interest. Br. J. Educ. Psychol. 2025, 95, S15–S31.
3. Tammets, K.; Khulbe, M.; Sillat, L.H.; Ley, T. A digital learning ecosystem to scaffold teachers’ learning. IEEE Trans. Learn. Technol. 2022, 15, 620–633.
4. Zheng, L.; Li, J.; Zhu, Z.; Ji, W. LightNet: A lightweight head pose estimation model for online education and its application to engagement assessment. J. King Saud Univ. Comput. Inf. Sci. 2025, 37, 166.
5. Xiong, Y.; He, C.; Chen, L.; Cai, T. Spatio-temporal graph interaction networks for teacher behavior description in classroom scene. Eng. Appl. Artif. Intell. 2025, 159, 111668.
6. Pang, S.; Zhang, A.; Lai, S.; Zuo, Z. Automatic recognition of teachers’ nonverbal behavior based on dilated convolution. In Proceedings of the 2022 IEEE 5th International Conference on Information Systems and Computer Aided Education (ICISCAE), Dalian, China, 23–25 September 2022; pp. 162–167.
7. Pang, S.; Lai, S.; Zhang, A.; Yang, Y.; Sun, D. Graph convolutional network for automatic detection of teachers’ nonverbal behavior. Comput. Educ. Artif. Intell. 2023, 5, 100174.
8. Zhao, G.; Zhu, W.; Hu, B.; Chu, J.; He, H.; Xia, Q. A simple teacher behavior recognition method for massive teaching videos based on teacher set. Appl. Intell. 2021, 51, 8828–8849.
9. Yuvaraj, R.; Amalin, A.P.; Murugappan, M. An automated recognition of teacher and student activities in the classroom environment: A deep learning framework. IEEE Access 2024, 12, 192159–192171.
10. Peng, Y.; Lu, S.; Qiu, Z.; Wang, J. Teaching behaviors recognition by combining deep learning-based human body detection and pose estimation. In Proceedings of the 2024 International Symposium on Artificial Intelligence for Education, Xi’an, China, 6–8 September 2024; pp. 594–600.
11. Wu, D.; Chen, J.; Deng, W.; Wei, Y.; Luo, H.; Wei, Y. The recognition of teacher behavior based on multimodal information fusion. Math. Probl. Eng. 2020, 2020, 8269683.
12. Nguyen, H.; Tran, N.; Nguyen, M.; Nguyen, H.D. Empowering classroom behavior recognition through hybrid spatial-temporal feature fusion. Appl. Intell. 2025, 55, 863.
13. Wu, D.; Wang, J.; Zou, W.; Zou, S.; Zhou, J.; Gan, J. Classroom teacher action recognition based on spatio-temporal dual-branch feature fusion. Comput. Vis. Image Underst. 2024, 247, 104068.
14. Hou, P.; Yang, M.; Zhang, T.; Na, T. Analysis of English classroom teaching behavior and strategies under adaptive deep learning under cognitive psychology. Curr. Psychol. 2024, 43, 35974–35988.
15. Guerrero-Sosa, J.D.T.; Romero, F.P.; Menéndez-Domínguez, V.H.; Serrano-Guerrero, J.; Montoro-Montarroso, A.; Olivas, J.A. A Comprehensive Review of Multimodal Analysis in Education. Appl. Sci. 2025, 15, 5896.
16. Lee, G.; Shi, L.; Latif, E.; Gao, Y.; Bewersdorff, A.; Nyaaba, M.; Guo, S.; Liu, Z.; Mai, G.; Liu, T.; et al. Multimodality of AI for Education: Toward Artificial General Intelligence. IEEE Trans. Learn. Technol. 2025, 18, 666–683.
17. Bertasius, G.; Wang, H.; Torresani, L. Is space-time attention all you need for video understanding? In Proceedings of the International Conference on Machine Learning, Virtual, 18–24 July 2021; Volume 2, p. 4.
18. Xu, T.; Deng, W.; Zhang, S.; Wei, Y.; Liu, Q. Research on recognition and analysis of teacher–student behavior based on a blended synchronous classroom. Appl. Sci. 2023, 13, 3432.
19. Zheng, Q.; Chen, Z.; Wang, M.; Shi, Y.; Chen, S.; Liu, Z. Automated multimode teaching behavior analysis: A pipeline-based event segmentation and description. IEEE Trans. Learn. Technol. 2024, 17, 1677–1693.
20. Xu, F.; Wu, L.; Thai, K.P.; Hsu, C.; Wang, W.; Tong, R. MUTLA: A large-scale dataset for multimodal teaching and learning analytics. arXiv 2019, arXiv:1910.06078.
21. Sümer, Ö.; Goldberg, P.; D’Mello, S.; Gerjets, P.; Trautwein, U.; Kasneci, E. Multimodal engagement analysis from facial videos in the classroom. IEEE Trans. Affect. Comput. 2021, 14, 1012–1027.
22. Tang, W.; Wang, C.; Zhang, Y. Evaluation Method of Teaching Styles Based on Multi-modal Fusion. In Proceedings of the 7th International Conference on Communication and Information Processing, Beijing, China, 16–18 December 2021; pp. 9–15.
23. Ma, X.; Zhou, J.; Wu, D.; Luo, S. A teacher behavior recognition model based on multi-stream graph convolution network. In Proceedings of the 2023 5th International Conference on Computer Science and Technologies in Education (CSTE), Xi’an, China, 21–23 April 2023; pp. 320–325.
24. Chango, W.; Lara, J.A.; Cerezo, R.; Romero, C. A review on data fusion in multimodal learning analytics and educational data mining. Wiley Interdiscip. Rev. Data Min. Knowl. Discov. 2022, 12, e1458.
25. Liang, J.; Pan, W.; Zhang, Z.; Zhou, M. Multi-Modal Detection and Enhancement of Teachers’ and Students’ Behaviors Based on YOLO Object Detection and Voiceprint Technologies. In Proceedings of the 2025 6th International Conference on Computer Vision, Image and Deep Learning (CVIDL), Ningbo, China, 23–25 May 2025; pp. 100–106.
26. Ni, B.; Peng, H.; Chen, M.; Zhang, S.; Meng, G.; Fu, J.; Xiang, S.; Ling, H. Expanding language-image pretrained models for general video recognition. In Proceedings of the European Conference on Computer Vision, Tel Aviv, Israel, 23–27 October 2022; pp. 1–18.
27. Wu, W.; Wang, X.; Luo, H.; Wang, J.; Yang, Y.; Ouyang, W. Bidirectional cross-modal knowledge exploration for video recognition with pre-trained vision-language models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Vancouver, BC, Canada, 17–24 June 2023; pp. 6620–6630.
28. Wang, H.; Liu, F.; Jiao, L.; Wang, J.; Hao, Z.; Li, S.; Li, L.; Chen, P.; Liu, X. ViLT-CLIP: Video and Language Tuning CLIP with Multimodal Prompt Learning and Scenario-Guided Optimization. In Proceedings of the AAAI Conference on Artificial Intelligence, Vancouver, BC, Canada, 26–27 February 2024.
29. Wasim, S.T.; Naseer, M.; Khan, S.; Khan, F.S.; Shah, M. Vita-CLIP: Video and text adaptive CLIP via multimodal prompting. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Vancouver, BC, Canada, 17–24 June 2023; pp. 23034–23044.
30. Wang, Q.; Du, J.; Yan, K.; Ding, S. Seeing in flowing: Adapting CLIP for action recognition with motion prompts learning. In Proceedings of the 31st ACM International Conference on Multimedia, Ottawa, ON, Canada, 29 October–3 November 2023; pp. 5339–5347.
31. Zhang, B.; Zhang, Y.; Zhang, J.; Sun, Q.; Wang, R.; Zhang, Q. Visual-guided hierarchical iterative fusion for multi-modal video action recognition. Pattern Recognit. Lett. 2024, 186, 213–220.
32. Liu, Z.; Xu, K.; Su, B.; Zou, X.; Peng, Y.; Zhou, J. STOP: Integrated Spatial-Temporal Dynamic Prompting for Video Understanding. In Proceedings of the 2025 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 11–15 June 2025; pp. 13776–13786.
33. Khattak, M.U.; Naeem, M.F.; Naseer, M.; Van Gool, L.; Tombari, F. Learning to Prompt with Text Only Supervision for Vision-Language Models. In Proceedings of the AAAI Conference on Artificial Intelligence, Vancouver, BC, Canada, 26–27 February 2024.
34. Cai, T.; Xiong, Y.; He, C.; Wu, C.; Cai, L. Classroom teacher behavior analysis: The TBU dataset and performance evaluation. Comput. Vis. Image Underst. 2025, 257, 104376.
35. Soomro, K.; Zamir, A.; Shah, M. UCF101: A Dataset of 101 Human Actions Classes From Videos in The Wild. arXiv 2012, arXiv:1212.0402.
36. Carreira, J.; Zisserman, A. Quo Vadis, Action Recognition? A New Model and the Kinetics Dataset. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 4724–4733.
37. Tran, D.; Bourdev, L.D.; Fergus, R.; Torresani, L.; Paluri, M. Learning Spatiotemporal Features with 3D Convolutional Networks. In Proceedings of the 2015 IEEE International Conference on Computer Vision (ICCV), Santiago, Chile, 7–13 December 2015; pp. 4489–4497.
38. Wang, L.; Xiong, Y.; Wang, Z.; Qiao, Y.; Lin, D.; Tang, X.; Van Gool, L. Temporal Segment Networks: Towards Good Practices for Deep Action Recognition. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 11–14 October 2016.
39. Feichtenhofer, C.; Fan, H.; Malik, J.; He, K. SlowFast Networks for Video Recognition. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 6201–6210.
40. Zhang, H.; Liu, D.; Xiong, Z. Two-Stream Action Recognition-Oriented Video Super-Resolution. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 8798–8807.
41. Ranasinghe, K.; Naseer, M.; Khan, S.H.; Khan, F.S.; Ryoo, M.S. Self-supervised Video Transformer. In Proceedings of the 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 18–24 June 2022; pp. 2864–2874.
42. Zong, M.; Wang, R.; Chen, X.; Chen, Z.; Gong, Y. Motion saliency based multi-stream multiplier ResNets for action recognition. Image Vis. Comput. 2021, 107, 104108.

| Model | Acc/Top-1 (%) | Acc/Mean1 (%) | Acc/F1_Score (%) |
|---|---|---|---|
| C3D [37] | 81.3 | 66.8 | 71.1 |
| I3D [36] | 81.7 | 70.9 | 68.3 |
| SlowFast [39] | 82.2 | 66.9 | 68.1 |
| TSN [38] | 82.7 | 70.9 | 74.1 |
| TimeSformer [17] | 83.4 | 71.9 | 74.3 |
| BIKE [27] | 83.3 | 78.9 | 75.5 |
| STOP [32] | 84.6 | 80.5 | 77.2 |
| TBRNet | 86.4 | 82.1 | 81.8 |

| Model | Type | Backbone | UCF101 Acc/Top-1 (%) | HMDB51 Acc/Top-1 (%) |
|---|---|---|---|---|
| C3D+IDT [37] | 3D | ResNet-50 | 90.4 | - |
| I3D [36] | 3D | Inception-V1 | 95.1 | 74.3 |
| TSN [38] | 2D | ResNet-50 | 94.2 | 69.4 |
| SoSR+ToSR [40] | Two-stream | - | 92.1 | 68.3 |
| MSM-ResNets [42] | Two-stream | ResNet-50 | 93.5 | 69.2 |
| SVT [41] | Attention | ViT-B/14 | 93.7 | 67.2 |
| MCB [30] | Attention | ViT-B/14 | 96.3 | 72.9 |
| STOP [32] | Attention | ViT-B/32 | 95.3 | 72.0 |
| TBRNet | Attention | ViT-B/14 | 96.2 | 75.7 |

| Serial Number | Behavior Category | Recognition Accuracy |
|---|---|---|
| 0 | board writing | 0.925 |
| 1 | erasing the blackboard | 0.909 |
| 2 | operate multimedia | 0.658 |
| 3 | multimedia teaching | 0.845 |
| 4 | teacher bows | 0.800 |
| 5 | displaying teaching aids | 0.781 |
| 6 | lecture on the podium | 0.876 |
| 7 | walking around on the podium | 0.661 |
| 8 | interacting with students on the podium | 0.849 |
| 9 | lecture underneath the podium | 0.890 |
| 10 | walking around underneath the podium | 0.849 |
| 11 | interact with students outside the podium | 0.882 |
| 12 | point to the blackboard | 0.736 |
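The per-category accuracies above can be summarized with a few lines of Python. This is an illustrative sketch only: the 0.75 cut-off for "weak" categories is an arbitrary assumption, not a threshold used in the paper.

```python
# Per-category recognition accuracies copied from the table above.
per_class_acc = {
    "board writing": 0.925,
    "erasing the blackboard": 0.909,
    "operate multimedia": 0.658,
    "multimedia teaching": 0.845,
    "teacher bows": 0.800,
    "displaying teaching aids": 0.781,
    "lecture on the podium": 0.876,
    "walking around on the podium": 0.661,
    "interacting with students on the podium": 0.849,
    "lecture underneath the podium": 0.890,
    "walking around underneath the podium": 0.849,
    "interact with students outside the podium": 0.882,
    "point to the blackboard": 0.736,
}

# Unweighted (macro) mean over the 13 categories.
mean_acc = sum(per_class_acc.values()) / len(per_class_acc)

# Categories below an assumed, illustrative threshold of 0.75.
WEAK_THRESHOLD = 0.75
weak = sorted(
    (name for name, acc in per_class_acc.items() if acc < WEAK_THRESHOLD),
    key=per_class_acc.get,
)

print(f"macro mean accuracy: {mean_acc:.3f}")
print("weakest categories:", weak)
```

The macro mean comes out at about 0.820, close to the 82.1% Acc/Mean1 reported for TBRNet in the first results table, although the source text here does not state that the two are the same metric. The three categories under the assumed threshold are "operate multimedia", "walking around on the podium", and "point to the blackboard".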

| TS | CCA | DQD | Acc/Top-1 (%) |
|---|---|---|---|
| ✓ | × | × | 83.42 |
| ✓ | ✓ | × | 85.95 |
| ✓ | × | ✓ | 84.76 |
| ✓ | ✓ | ✓ | 86.40 |
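The contribution of each module can be read off the ablation table as a gain over the TimeSformer-only (TS) baseline. The short sketch below does that arithmetic; the dictionary layout and variable names are illustrative choices.

```python
# Top-1 accuracy (%) for each module combination, copied from the ablation table.
ablation = {
    ("TS",): 83.42,
    ("TS", "CCA"): 85.95,
    ("TS", "DQD"): 84.76,
    ("TS", "CCA", "DQD"): 86.40,
}

baseline = ablation[("TS",)]

# Gain of every configuration over the TS-only baseline, in percentage points.
gains = {
    modules: round(acc - baseline, 2)
    for modules, acc in ablation.items()
    if modules != ("TS",)
}

# If the two modules contributed independently, their gains would simply add up.
additive_estimate = round(gains[("TS", "CCA")] + gains[("TS", "DQD")], 2)

for modules, gain in gains.items():
    print("+".join(modules), f"+{gain:.2f}")
print("sum of individual gains:", additive_estimate)
```

CCA alone adds 2.53 points and DQD alone adds 1.34 points, while the full model adds 2.98 points. The combined gain is smaller than the 3.87 points obtained by summing the individual gains, which suggests the two modules partly overlap in what they improve.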

| ST-R | GV-S | TC-S | Acc/Top-1 (%) |
|---|---|---|---|
| ✓ | × | × | 83.42 |
| ✓ | ✓ | × | 84.69 |
| ✓ | × | ✓ | 85.78 |
| ✓ | ✓ | ✓ | 86.40 |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Cai, T.; Xiong, Y.; He, C.; Chen, L. TBRNet: A Multi-Modal Network for Teacher Behavior Recognition with Cascaded Collaborative Attention and Dynamic Query-Driven. Electronics 2026, 15, 460. https://doi.org/10.3390/electronics15020460
