A Unified FPGA/CGRA Acceleration Pipeline for Time-Critical Edge AI: Case Study on Autoencoder-Based Anomaly Detection in Smart Grids

Abstract

1. Introduction

1.1. Motivation

1.2. Background and State of the Art

1.3. Contributions

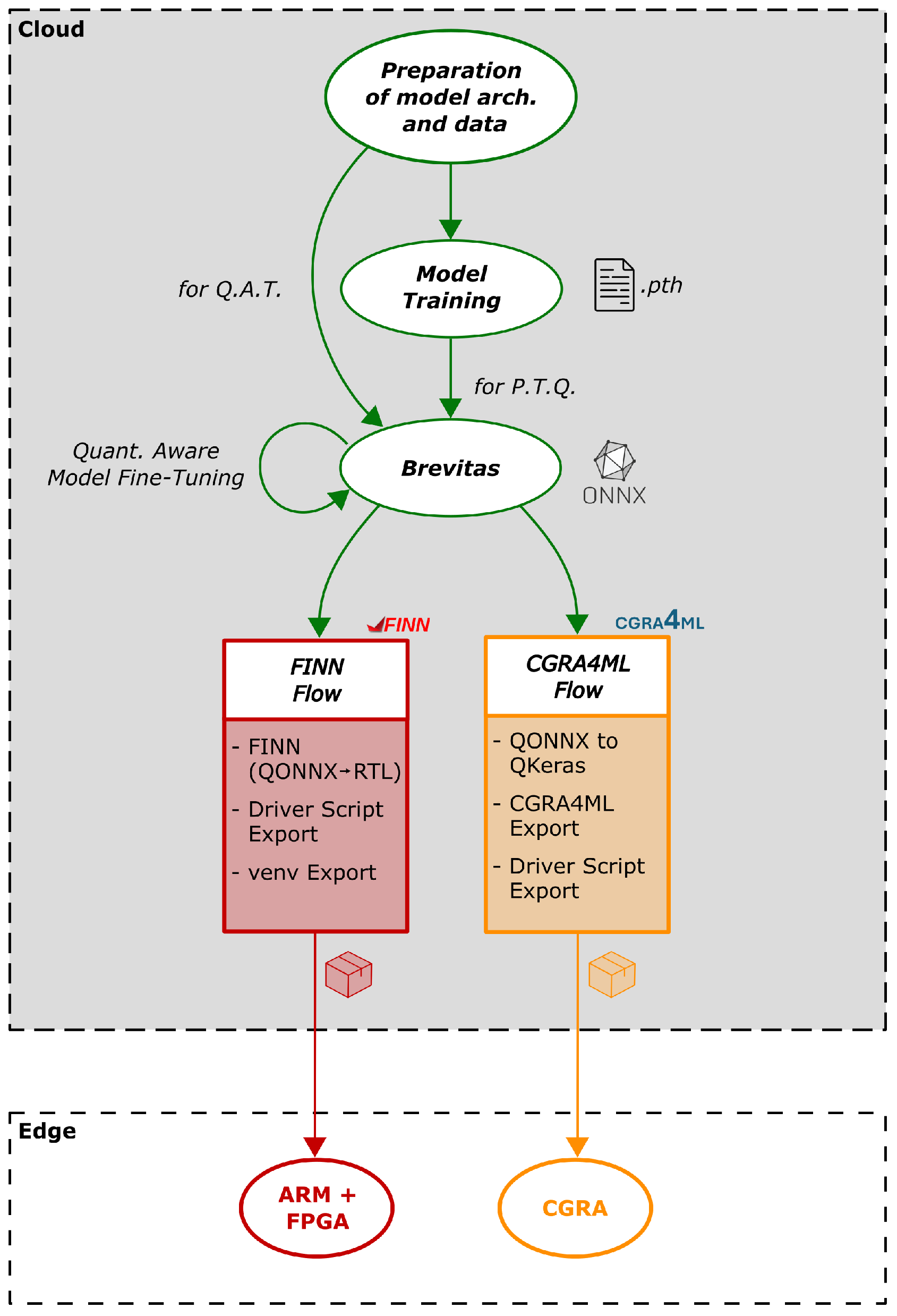

- A unified hardware-aware AI acceleration pipeline targeting FPGA devices with two distinct acceleration flows: one focused on custom high-performance, low-latency AI dataflow accelerator design via the FINN library, and the other centered on the state-of-the-art CGRA4ML framework. A model translation layer from QONNX 0.4.0 to QKeras 0.9.0 is also introduced to facilitate the process. To our knowledge, this is the first fully open-source unified ML pipeline for both FPGAs and CGRAs, with the powerful Brevitas quantization library as its frontend.

- Application of the proposed pipeline to a real-world, time-critical cyber–physical system scenario in the smart grid domain: the acceleration of a real-time anomaly detection solution for wind turbines.

- Validation of the proposed pipeline on the presented cyber–physical system scenario by deploying our anomaly detection solution on two hardware platforms: a Commercial Off-The-Shelf (COTS) Raspberry Pi (RPi) running ONNX Runtime, which serves as the reference baseline, and an AMD ZCU104 platform. The two platforms are compared in terms of accuracy, performance, latency, power, and energy efficiency, and the two acceleration flows are compared when given the same quantized model as input.

1.4. Paper Structure

2. Hardware-Aware AI Acceleration Pipeline

2.1. High-Level View of the Proposed Pipeline

1. The first step is for the AI engineer to prepare (or pre-process) the available dataset and select the model architecture best suited to the application of interest in a PyTorch environment. The outcome of this step is a set of Python 3.10 scripts: one with the model architecture description and one for the training procedure.

2. The second step is the model training phase, which can follow one of two paths:

   - (a) train the model in PyTorch in floating-point mode and then apply PTQ to it with Brevitas; or
   - (b) apply QAT with Brevitas, i.e., train the quantized model directly, provided that the model architecture description and training script from Step 1 are already written in a Brevitas QAT-ready format.

3. (Optional) The third step applies mainly to those who followed Step 2(a) and consists of quantization-aware fine-tuning. A model produced by Brevitas PTQ methods may not reach the required accuracy, in which case fine-tuning becomes necessary. This process is the same as training a standard PyTorch model, except that the model is the Brevitas PTQ-generated one, which features special “quantization hooks” that allow it to be trained like a standard floating-point model during fine-tuning. This further simplifies the overall quantization process.

4. In the fourth step, the quantized model is exported in the quantized Open Neural Network eXchange (ONNX) format, also known as QONNX [24], and given as input to the acceleration flow of choice:

   - (a) the FINN flow; or
   - (b) the CGRA4ML flow.
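The difference between Step 2(a) and Steps 2(b)/3 can be illustrated without Brevitas itself. The plain-Python sketch below is a generic textbook illustration, not Brevitas internals: it assumes a symmetric, per-tensor, signed uniform quantizer and a straight-through estimator (STE) for the rounding gradient, and the helper names (`fake_quant`, `ptq`, `qat_step`) are hypothetical. PTQ derives a scale once from the trained FP32 weights, whereas QAT or fine-tuning keeps an FP32 "shadow" weight that is updated while the forward pass only sees its quantized value.

```python
def int_bounds(bits: int) -> tuple[int, int]:
    """Signed integer range for a bit width, e.g. 4 bits -> (-8, 7)."""
    return -(2 ** (bits - 1)), 2 ** (bits - 1) - 1


def fake_quant(w: float, scale: float, bits: int) -> float:
    """Forward pass: round to the integer grid, clamp, and rescale (quantize-dequantize)."""
    qmin, qmax = int_bounds(bits)
    return min(max(round(w / scale), qmin), qmax) * scale


def fake_quant_grad(w: float, scale: float, bits: int, grad_out: float) -> float:
    """Backward pass with an STE: round() is treated as the identity, so the gradient
    passes through inside the clamping range and is zeroed where w was clipped."""
    qmin, qmax = int_bounds(bits)
    return grad_out if qmin <= w / scale <= qmax else 0.0


def ptq(weights: list[float], bits: int) -> tuple[float, list[float]]:
    """Step 2(a)-style PTQ (hypothetical helper): max-abs per-tensor scale, then quantize."""
    scale = max(abs(w) for w in weights) / int_bounds(bits)[1]
    return scale, [fake_quant(w, scale, bits) for w in weights]


def qat_step(w: float, x: float, y: float, scale: float, bits: int, lr: float) -> float:
    """One SGD step for the toy model y_hat = q(w) * x under squared error.
    The FP32 shadow weight w is updated; the forward pass uses q(w)."""
    wq = fake_quant(w, scale, bits)
    grad_wq = 2.0 * (wq * x - y) * x          # d/d(wq) of (wq * x - y) ** 2
    return w - lr * fake_quant_grad(w, scale, bits, grad_wq)


if __name__ == "__main__":
    scale, wq = ptq([0.5, -0.25, 0.1], bits=4)
    print("PTQ scale:", scale, "quantized weights:", wq)

    w = 0.26                                   # FP32 shadow weight
    for _ in range(20):
        w = qat_step(w, x=1.0, y=0.5, scale=0.1, bits=4, lr=0.1)
    print("shadow weight:", w, "quantized weight:", fake_quant(w, 0.1, 4))
```

In the toy QAT loop the shadow weight keeps moving in small FP32 steps until its quantized value lands on the grid point that fits the target; this gradual drift of an unquantized copy is what the STE makes possible.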

2.2. Brevitas Frontend

1. It implements many state-of-the-art quantization algorithms for almost all popular AI models, from basic DNNs up to core Large Language Model (LLM) building blocks.

2. It supports both QAT and PTQ while giving the developer full flexibility to choose custom integer resolutions below 8 bits, down to 1 bit for Binary Neural Network (BNN) support.

3. It exports quantized models to the quantized variant (QONNX) of the popular ONNX Intermediate Representation (IR) format, which is supported across all major AI workflows.

4. It is natively supported by FINN, so a quantized model can be exported and processed there with little effort.

5. It is a very active open-source project with a large community, extensive support, and many examples that let developers get started quickly.
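Items 2 and 3 can be made concrete through the arithmetic that a QONNX `Quant` node encodes. The sketch below follows our reading of the QONNX operator definition (scale, zero point, bit width, and the `signed`/`narrow` attributes, with round-half-to-even rounding); it is plain Python written for illustration, not the `qonnx` package's implementation. The 1-bit case is shown separately because QONNX represents bipolar binarization with a distinct `BipolarQuant` operator; mapping 0 to +1 there is an assumption of this sketch.

```python
def int_range(bits: int, signed: bool, narrow: bool) -> tuple[int, int]:
    """Integer range of a Quant node; narrow=True drops the most negative
    code (signed) or the top code (unsigned)."""
    if signed:
        lo = -(2 ** (bits - 1)) + (1 if narrow else 0)
        hi = 2 ** (bits - 1) - 1
    else:
        lo = 0
        hi = 2 ** bits - (2 if narrow else 1)
    return lo, hi


def quant(x: float, scale: float, zeropt: float, bits: int,
          signed: bool = True, narrow: bool = False) -> float:
    """Quantize-dequantize one value: scale, shift, round, clamp, then map back."""
    lo, hi = int_range(bits, signed, narrow)
    q = round(x / scale + zeropt)      # Python's round() rounds halves to even
    q = min(max(q, lo), hi)
    return (q - zeropt) * scale


def bipolar_quant(x: float, scale: float) -> float:
    """1-bit (BNN-style) quantization to {-scale, +scale}; 0 maps to +scale here."""
    return scale if x >= 0 else -scale


if __name__ == "__main__":
    for bits in (8, 4, 2):
        print(bits, "bit:", int_range(bits, signed=True, narrow=False),
              quant(0.37, 0.1, 0.0, bits))
    print("1 bit:", bipolar_quant(0.37, 0.5))
```

Lowering the bit width only shrinks the integer range; with a fixed scale, values outside that range are clamped, which is why sub-8-bit models typically need their scales chosen (or learned) with care.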

2.3. FINN Flow

2.4. CGRA4ML Flow

2.5. QONNX-QKeras Translation

1. Identification of Conv/ConvTranspose nodes, along with their associated activation nodes and quantizers.

2. Extraction of the basic parameters of each Conv/ConvTranspose node (kernel size, strides, dilations, filters).

3. Identification of the relevant weight and bias Quant nodes, and extraction of the corresponding raw tensors and quantization information.

4. Layer-by-layer construction of the model architecture (more precisely, bundle by bundle, following the CGRA4ML/QKeras logic) based on a QKeras bundle template.

5. Extraction of the input shape from the QONNX model and its conversion from NCHW (batch, channels, height, width) to NHWC (batch, height, width, channels) format.

6. Assignment of the weight and bias tensors to the respective bundles, with the weight tensors' layout transformed for QKeras compatibility.

7. Model export and continuation with the rest of the CGRA4ML flow.
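Steps 2, 5, and 6 amount to reading ONNX attributes and permuting tensor axes. The sketch below assumes the standard ONNX weight layouts (Conv: C_out × C_in/group × kH × kW; ConvTranspose: C_in × C_out/group × kH × kW) and the Keras/QKeras kernel layout (kH × kW × C_in × C_out). Attributes are passed as a plain dictionary keyed by ONNX attribute names rather than through the `onnx`/`qonnx` Python APIs, and the function names are hypothetical; the actual translation layer may be organized differently. ConvTranspose kernels additionally require the rotation and channel swap described under ConvTranspose Translation.

```python
def conv_params(op_type: str, attrs: dict, weight_shape: tuple) -> dict:
    """Step 2: kernel size, strides, dilations and filters of a Conv/ConvTranspose node."""
    spatial = len(weight_shape) - 2
    group = attrs.get("group", 1)
    if op_type == "Conv":
        filters = weight_shape[0]                  # (C_out, C_in/group, kH, kW)
    elif op_type == "ConvTranspose":
        filters = weight_shape[1] * group          # (C_in, C_out/group, kH, kW)
    else:
        raise ValueError(f"unsupported op_type: {op_type}")
    return {
        "kernel_size": tuple(attrs.get("kernel_shape", weight_shape[2:])),
        "strides": tuple(attrs.get("strides", [1] * spatial)),
        "dilations": tuple(attrs.get("dilations", [1] * spatial)),
        "filters": filters,
    }


def nchw_to_nhwc(shape: tuple) -> tuple:
    """Step 5: (batch, channels, height, width) -> (batch, height, width, channels)."""
    n, c, h, w = shape
    return (n, h, w, c)


def conv_weight_to_keras(w: list) -> list:
    """Step 6: ONNX Conv weights [C_out][C_in][kH][kW] -> Keras kernel [kH][kW][C_in][C_out]."""
    c_out, c_in, kh, kw = len(w), len(w[0]), len(w[0][0]), len(w[0][0][0])
    return [[[[w[o][c][i][j] for o in range(c_out)]
              for c in range(c_in)]
             for j in range(kw)]
            for i in range(kh)]


if __name__ == "__main__":
    print(conv_params("Conv", {"kernel_shape": [3, 3], "strides": [2, 2]}, (16, 8, 3, 3)))
    print(nchw_to_nhwc((1, 3, 32, 32)))
```

Nested lists keep the example dependency-free; with NumPy the same weight permutation would be a single transpose with axes (2, 3, 1, 0).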

Algorithm 1. QONNX-QKeras Translation Flow

Require: QONNX model

Ensure: QKeras IR model

ConvTranspose Translation

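1. Pixel Padding (Explicit Upsampling). A transposed convolution with weights $\mathbf{W} \in \mathbb{R}^{C_{in} \times C_{out} \times K_h \times K_w}$, stride $s$, dilation $d$, and padding $p$ is rewritten (assuming no output padding and a single group) as a standard stride-1 convolution applied to an upsampled input:

   $$\mathrm{ConvTranspose}(\mathbf{X}; \mathbf{W}, s, d, p) = \mathrm{Conv}\big(\tilde{\mathbf{X}}; \mathbf{W}', 1, d, p'\big), \qquad p' = d(K-1) - p,$$

   where $K \in \{K_h, K_w\}$ is taken per spatial axis. The first step is to upsample the input tensor by inserting $s-1$ zeros between adjacent samples according to the stride. For an input feature map $\mathbf{X} \in \mathbb{R}^{C_{in} \times H_{in} \times W_{in}}$, the pixel-padded tensor is defined as

   $$\tilde{\mathbf{X}}[c, i, j] = \begin{cases} \mathbf{X}[c, i/s, j/s], & i \bmod s = 0 \text{ and } j \bmod s = 0, \\ 0, & \text{otherwise,} \end{cases}$$

   with output dimensions

   $$\tilde{H} = (H_{in} - 1)\,s + 1, \qquad \tilde{W} = (W_{in} - 1)\,s + 1.$$

   This operation performs deterministic upsampling without introducing learnable parameters.

2. Kernel Rotation and Channel Reordering. The second step constructs a convolution kernel $\mathbf{W}'$ that reproduces the effect of the original transposed kernel. The required transformation consists of:

   - (a) rotating the spatial dimensions of $\mathbf{W}$ by 180°;
   - (b) swapping the input and output channel axes.

Formally, the rotated kernel is given by

$$\mathbf{W}'[o, c, u, v] = \mathbf{W}[c, o, K_h - 1 - u, K_w - 1 - v],$$

where $o \in \{0, \dots, C_{out}-1\}$, $c \in \{0, \dots, C_{in}-1\}$, $u \in \{0, \dots, K_h-1\}$, and $v \in \{0, \dots, K_w-1\}$. After rotation and channel transposition, the tensor $\mathbf{W}' \in \mathbb{R}^{C_{out} \times C_{in} \times K_h \times K_w}$ conforms to the format expected by a standard convolution.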
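The equivalence between a transposed convolution and pixel padding followed by a rotated, channel-swapped standard convolution can be checked numerically. The sketch below works in 1-D for brevity (the 2-D case applies the same steps along both spatial axes) and assumes a single group, no output padding, and $p \le d(K-1)$; the reference `conv_transpose1d` uses the scatter definition shared by ONNX and PyTorch ConvTranspose. All function names are hypothetical.

```python
Signal = list[list[float]]          # [channels][length]
Kernel = list[list[list[float]]]    # [axis0][axis1][taps]


def conv_transpose1d(x: Signal, w: Kernel, s: int, d: int, p: int) -> Signal:
    """Reference transposed convolution, defined by scattering each input sample.
    x: [C_in][L]; w: [C_in][C_out][K] (ONNX/PyTorch ConvTranspose layout)."""
    c_in, length = len(x), len(x[0])
    c_out, k = len(w[0]), len(w[0][0])
    out_len = (length - 1) * s - 2 * p + d * (k - 1) + 1
    y = [[0.0] * out_len for _ in range(c_out)]
    for c in range(c_in):
        for i in range(length):
            for o in range(c_out):
                for kk in range(k):
                    t = i * s + kk * d - p          # output position hit by this tap
                    if 0 <= t < out_len:
                        y[o][t] += x[c][i] * w[c][o][kk]
    return y


def pixel_pad(x: Signal, s: int) -> Signal:
    """Step 1: insert s - 1 zeros between adjacent samples -> length (L - 1) * s + 1."""
    length = len(x[0])
    up = [[0.0] * ((length - 1) * s + 1) for _ in x]
    for c, row in enumerate(x):
        for i, v in enumerate(row):
            up[c][i * s] = v
    return up


def rotate_and_swap(w: Kernel) -> Kernel:
    """Step 2: reverse the taps (1-D analogue of a 180-degree rotation) and swap the
    channel axes: w'[o][c][k] = w[c][o][K - 1 - k], Conv layout [C_out][C_in][K]."""
    c_in, c_out = len(w), len(w[0])
    return [[w[c][o][::-1] for c in range(c_in)] for o in range(c_out)]


def conv1d(x: Signal, w: Kernel, d: int, pad: int) -> Signal:
    """Stride-1 convolution (cross-correlation, as in ONNX Conv) with symmetric
    zero padding `pad` and dilation d. w: [C_out][C_in][K]."""
    c_in, c_out, k = len(x), len(w), len(w[0][0])
    xp = [[0.0] * pad + row + [0.0] * pad for row in x]
    out_len = len(xp[0]) - d * (k - 1)
    return [[sum(xp[c][t + kk * d] * w[o][c][kk] for c in range(c_in) for kk in range(k))
             for t in range(out_len)]
            for o in range(c_out)]


def conv_transpose_via_conv(x: Signal, w: Kernel, s: int, d: int, p: int) -> Signal:
    """Pixel padding + kernel rotation/channel swap + ordinary convolution."""
    k = len(w[0][0])
    pad = d * (k - 1) - p
    if pad < 0:
        raise ValueError("sketch assumes p <= d * (K - 1)")
    return conv1d(pixel_pad(x, s), rotate_and_swap(w), d, pad)


if __name__ == "__main__":
    x = [[1.0, 2.0, 3.0], [0.5, -1.0, 4.0]]
    w = [[[1.0, 2.0, -1.0], [0.0, 1.0, 3.0]], [[2.0, -2.0, 1.0], [1.0, 1.0, 1.0]]]
    print(conv_transpose1d(x, w, s=2, d=1, p=1))
    print(conv_transpose_via_conv(x, w, s=2, d=1, p=1))
```

Both calls should print the same output, which is the property that lets a ConvTranspose node be expressed with ordinary convolution bundles.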

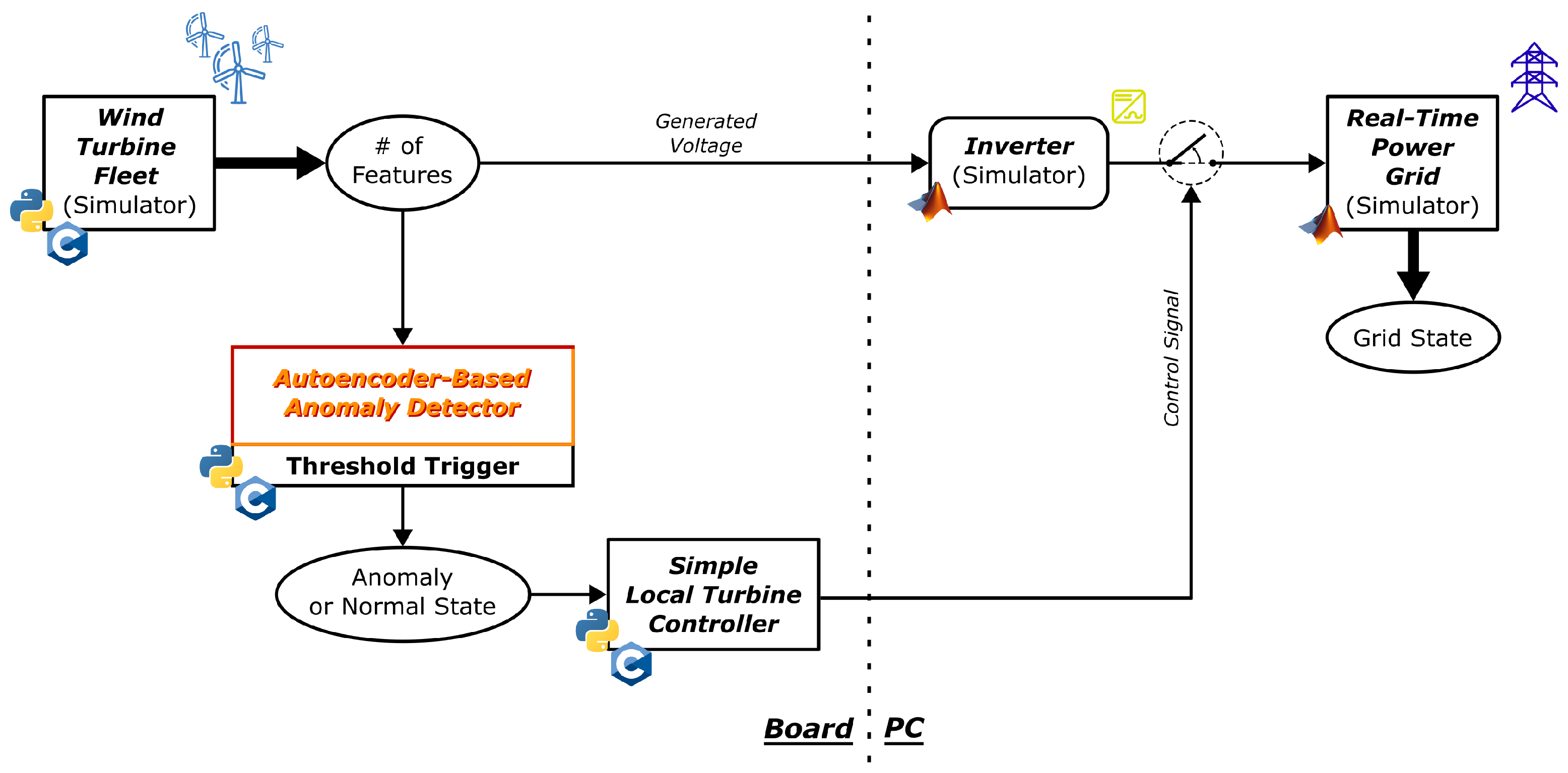

3. Real-Time Anomaly Detection in Wind Turbines

3.1. Concept Description

3.2. Autoencoder-Based Anomaly Detection Module

3.3. Threshold Trigger Module

4. Results

4.1. Experimentation Setup

4.2. Power Measurement Methodology

- Overall Mean Power per Inference (mW);

- Mean Absolute Deviation of Power per Inference (MAD) (mW);

- Standard Deviation of Mean Power per Inference (STDev) (mW);

- Mean Inference Duration (ms);

- Mean Energy per Inference (mJ);

- Performance (inferences per second);

- Efficiency (Performance per W).
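A minimal sketch of how the metrics listed above can be derived from per-inference measurements, assuming that each inference yields one mean-power sample (mW) and one duration (ms). The function name is hypothetical, and because the text does not state whether STDev is a sample or a population statistic, the sample form used here is an assumption.

```python
from statistics import mean, stdev


def inference_metrics(power_mw: list[float], duration_ms: list[float]) -> dict:
    """Aggregate per-inference measurements into the metrics listed above.

    power_mw[i]    -- mean power drawn during inference i (mW)
    duration_ms[i] -- duration of inference i (ms)
    """
    if len(power_mw) != len(duration_ms) or len(power_mw) < 2:
        raise ValueError("need at least two paired measurements")
    p_mean = mean(power_mw)
    t_mean = mean(duration_ms)
    # mW * ms = uJ, so divide by 1000 to obtain mJ per inference
    energy_mj = mean(p * t / 1000.0 for p, t in zip(power_mw, duration_ms))
    performance = 1000.0 / t_mean                     # inferences per second
    return {
        "mean_power_mw": p_mean,
        "mad_mw": mean(abs(p - p_mean) for p in power_mw),
        "stdev_mw": stdev(power_mw),                  # sample standard deviation (assumption)
        "mean_duration_ms": t_mean,
        "mean_energy_mj": energy_mj,
        "performance_inf_s": performance,
        "efficiency_inf_s_w": performance / (p_mean / 1000.0),
    }


if __name__ == "__main__":
    print(inference_metrics([371.3, 371.3], [259.3, 259.3]))
```

As a consistency check, the Mean Energy per Inference values in the results table match Overall Mean Power × Mean Inference Duration (e.g., 371.3 mW × 259.3 ms ≈ 96.3 mJ for the RPi), while the reported Performance values appear to be rounded down to whole inferences per second.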

4.3. Evaluation of the Proposed Pipeline

5. Discussion

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| PPA | Power–Performance–Area |

| AI | Artificial Intelligence |

| CNN | Convolutional Neural Network |

| ANN | Artificial Neural Network |

| ViT | Vision Transformer |

| ML | Machine Learning |

| COTS | Commercial Off-The-Shelf |

| RPi | Raspberry Pi |

| NPU | Neural Processing Unit |

| DPU | Deep Learning Processing Unit |

| CPU | Central Processing Unit |

| GPU | Graphics Processing Unit |

| CGRA | Coarse-Grained Reconfigurable Architecture |

| FPGA | Field-Programmable Gate Array |

| QAT | Quantization-Aware Training |

| PTQ | Post-Training Quantization |

| ONNX | Open Neural Network eXchange |

| QONNX | Quantized Open Neural Network eXchange |

| LLM | Large Language Model |

| BNN | Binary Neural Network |

| IR | Intermediate Representation |

| RTL | Register-Transfer Level |

| HLS | High-Level Synthesis |

| DNN | Deep Neural Network |

| PE | Processing Element |

| SIMD | Single-Instruction–Multiple-Data |

| FIFO | First-In First-Out |

| ASIC | Application-Specific Integrated Circuit |

| DMA | Direct Memory Access |

| TCL | Tool Command Language |

| PLC | Programmable Logic Controller |

| DWT | Discrete Wavelet Transform |

| IDWT | Inverse Discrete Wavelet Transform |

| FP32 | Single-Precision Floating-Point Format |

| DUT | Device Under Test |

| PGA | Programmable Gain Amplifier |

| ADC | Analog-to-Digital Converter |

| GPIO | General-Purpose Input/Output |

| MAD | Mean Absolute Deviation |

| STDev | Standard Deviation |

| MSE | Mean Squared Error |

| FPS | Frames Per Second |

| LUT | Look-Up Table |

| LUTRAM | Look-Up Table Random Access Memory |

| FF | Flip-Flop |

| BRAM | Block Random Access Memory |

| URAM | UltraRAM |

| DSP | Digital Signal Processing |

| RNN | Recurrent Neural Network |

| NAS | Neural Architecture Search |

References

- Radanliev, P.; De Roure, D.; Van Kleek, M.; Santos, O.; Ani, U. Artificial intelligence in cyber physical systems. AI Soc. 2021, 36, 783–796.

- Chen, J.; Wen, K.; Xia, J.; Huang, R.; Chen, Z.; Li, W. Knowledge Embedded Autoencoder Network for Harmonic Drive Fault Diagnosis Under Few-Shot Industrial Scenarios. IEEE Internet Things J. 2024, 11, 22915–22925.

- Choi, Y.; Joe, I. Motor Fault Diagnosis and Detection with Convolutional Autoencoder (CAE) Based on Analysis of Electrical Energy Data. Electronics 2024, 13, 3946.

- Ghazimoghadam, S.; Hosseinzadeh, S.A.A. A novel unsupervised deep learning approach for vibration-based damage diagnosis using a multi-head self-attention LSTM autoencoder. Elsevier Meas. 2024, 229, 114410.

- We Did the Math on AI’s Energy Footprint. Here’s the Story You Haven’t Heard, MIT Technology Review. Available online: https://www.technologyreview.com/2025/05/20/1116327/ai-energy-usage-climate-footprint-big-tech/ (accessed on 15 December 2025).

- Liu, L.; Zhu, J.; Li, Z.; Lu, Y.; Deng, Y.; Han, J.; Yin, S.; Wei, S. A Survey of Coarse-Grained Reconfigurable Architecture and Design: Taxonomy, Challenges, and Applications. ACM Comput. Surv. 2019, 53, 1–39.

- Podobas, A.; Sano, K.; Matsuoka, S. A Survey on Coarse-Grained Reconfigurable Architectures From a Performance Perspective. IEEE Access 2020, 8, 146719–146743.

- Mohaidat, T.; Khalil, K. A Survey on Neural Network Hardware Accelerators. IEEE Trans. Artif. Intell. 2024, 4, 3801–3822.

- Liu, S.; Fan, H.; Ferianc, M.; Niu, X.; Shi, H.; Luk, W. Toward Full-Stack Acceleration of Deep Convolutional Neural Networks on FPGAs. IEEE Trans. Neural Netw. Learn. Syst. 2022, 33, 3974–3987.

- Medus, L.D.; Iakymchuk, T.; Frances-Villora, J.V.; Bataller-Mompeán, M.; Rosado-Munoz, A. A Novel Systolic Parallel Hardware Architecture for the FPGA Acceleration of Feedforward Neural Networks. IEEE Access 2019, 7, 76084–76103.

- Zhao, Z.; Cao, R.; Un, K.F.; Yu, W.H.; Mak, P.I.; Martins, R.P. An FPGA-Based Transformer Accelerator Using Output Block Stationary Dataflow for Object Recognition Applications. IEEE Trans. Circuits Syst. II Express Briefs 2023, 70, 281–285.

- Blott, M.; Preußer, T.B.; Fraser, N.J.; Gambardella, G.; O’brien, K.; Umuroglu, Y.; Leeser, M.; Vissers, K. FINN-R: An end-to-end deep-learning framework for fast exploration of quantized neural networks. ACM Trans. Reconfigurable Technol. Syst. 2018, 11, 1–23.

- Umuroglu, Y.; Fraser, N.J.; Gambardella, G.; Blott, M.; Leong, P.; Jahre, M.; Vissers, K. FINN: A Framework for Fast, Scalable Binarized Neural Network Inference. In Proceedings of the 2017 ACM/SIGDA International Symposium on Field-Programmable Gate Arrays, Monterey, CA, USA, 22–24 February 2017.

- Schulte, J.F.; Ramhorst, B.; Sun, C.; Mitrevski, J.; Ghielmetti, N.; Lupi, E.; Danopoulos, D.; Loncar, V.; Duarte, J.; Burnette, D.; et al. hls4ml: A Flexible, Open-Source Platform for Deep Learning Acceleration on Reconfigurable Hardware. arXiv 2025, arXiv:2512.01463.

- Anderson, J.; Beidas, R.; Chacko, V.; Hsiao, H.; Ling, X.; Ragheb, O.; Wang, X.; Yu, T. CGRA-ME: An Open-Source Framework for CGRA Architecture and CAD Research: (Invited Paper). In Proceedings of the 2021 IEEE 32nd International Conference on Application-Specific Systems, Architectures and Processors (ASAP), Virtual, 7–9 July 2021.

- Vitis AI User Guide (UG1414). Available online: https://docs.amd.com/r/en-US/ug1414-vitis-ai/Vitis-AI-Overview (accessed on 15 December 2025).

- OpenVINO Website. Available online: https://docs.openvino.ai/2025/index.html (accessed on 15 December 2025).

- DNNDK User Guide. Available online: https://docs.amd.com/v/u/en-US/ug1327-dnndk-user-guide (accessed on 15 December 2025).

- Faure-Gignoux, A.; Delmas, K.; Gauffriau, A.; Pagetti, C. Open-source Stand-Alone Versatile Tensor Accelerator. arXiv 2025, arXiv:2509.19790.

- Xilinx/brevitas Zenodo Website. Available online: https://zenodo.org/records/16987789 (accessed on 15 December 2025).

- CGRA4ML’s GitHub Website. Available online: https://github.com/KastnerRG/cgra4ml.git (accessed on 13 January 2026).

- Abarajithan, G.; Ma, Z.; Li, Z.; Koparkar, S.; Munasinghe, R.; Restuccia, F.; Kastner, R. CGRA4ML: A Framework to Implement Modern Neural Networks for Scientific Edge Computing. arXiv 2024, arXiv:2408.15561.

- Khadem Hosseini, A.M.; Mirzakuchaki, S. Real-Time Semantic Segmentation on FPGA for Autonomous Vehicles Using LMIINet with the CGRA4ML Framework. arXiv 2025, arXiv:2510.22243.

- Pappalardo, A.; Umuroglu, Y.; Blott, M.; Mitrevski, J.; Hawks, B.; Tran, N.; Loncar, V.; Summers, S.; Borras, H.; Muhizi, J.; et al. QONNX: Representing Arbitrary-Precision Quantized Neural Networks. arXiv 2022, arXiv:2206.07527.

- What Is Transposed Convolutional Layer? Available online: https://towardsdatascience.com/what-is-transposed-convolutional-layer-40e5e6e31c11/ (accessed on 15 December 2025).

- Preventing Blackouts: Real-Time Data Processing for Millisecond-Level Fault Handling. Available online: https://www.ververica.com/blog/preventing-blackouts-real-time-data-processing-for-mission-critical-infrastructure (accessed on 15 December 2025).

- Singh, G.K. Power system harmonics research: A survey. Eur. Trans. Electr. Power 2009, 19, 151–172.

- Liang, X.; Andalib-Bin-Karim, C. Harmonics and Mitigation Techniques Through Advanced Control in Grid-Connected Renewable Energy Sources: A Review. IEEE Trans. Ind. Appl. 2018, 54, 3100–3111.

- Berahmand, K.; Daneshfar, F.; Salehi, E.S.; Li, Y.; Xu, Y. Autoencoders and their applications in machine learning: A survey. Artif. Intell. Rev. 2024, 57, 28.

- Zhu, X.; Yang, C.; Lin, T. Maximum Variance Regularization for Latent Variables Makes Autoencoder Become Better One-Class Classifier. In Proceedings of the China Automation Congress (CAC), Qingdao, China, 1–3 November 2024; Available online: https://ieeexplore.ieee.org/document/10865629 (accessed on 3 January 2026).

- Yong, B.X.; Brintrup, A. Do Autoencoders Need a Bottleneck for Anomaly Detection? IEEE Access 2022, 10, 78455–78471.

- Mercioni, M.A.; Holban, S. Developing Novel Activation Functions in Time Series Anomaly Detection with LSTM Autoencoder. In Proceedings of the 2021 IEEE 15th International Symposium on Applied Computational Intelligence and Informatics (SACI), Timisoara, Romania, 19–21 May 2021.

- PyWavelets—Wavelet Transforms in Python. Available online: https://pywavelets.readthedocs.io/en/latest/ (accessed on 15 December 2025).

- Mylonas, E.; Tzanis, N.; Birbas, M.; Birbas, A. An Automatic Design Framework for Real-Time Power System Simulators Supporting Smart Grid Applications. Electronics 2020, 9, 299.

- Stavropoulos, S.; Tzanis, N.; Mylonas, E.; Birbas, M.; Birbas, A.; Papalexopoulos, A. FPGA-enabled Real-Time Power Grid Simulation Using Grid Partitioning. In Proceedings of the 12th Mediterranean Conference on Power Generation, Transmission, Distribution and Energy Conversion (MEDPOWER 2020), Paphos, Cyprus, 9–12 November 2020.

- Tzanis, N.; Proiskos, G.; Birbas, M.; Birbas, A. FPGA-Assisted Distribution Grid Simulator. In Proceedings of the 14th International Symposium, ARC 2018, Santorini, Greece, 2–4 May 2018.

- ZCU104 Board User Guide (UG1267). Available online: https://docs.amd.com/v/u/en-US/ug1267-zcu104-eval-bd (accessed on 14 December 2025).

- MicroBlaze V Processor Embedded Design User Guide (UG1711). Available online: https://docs.amd.com/r/en-US/ug1711-microblaze-v-embedded-design/Introduction (accessed on 14 December 2025).

- Wind Turbine Fleet Dataset for Anomaly Detection. Available online: https://aws-ml-blog.s3.amazonaws.com/artifacts/monitor-manage-anomaly-detection-model-wind-turbine-fleet-sagemaker-neo/dataset_wind_turbine.csv.gz (accessed on 14 December 2025).

- INA219 Datasheet. Available online: https://www.ti.com/lit/ds/symlink/ina219.pdf?ts=1765634341896 (accessed on 14 December 2025).

- Circuit Python Driver for INA219 Current Sensor. Available online: https://github.com/adafruit/Adafruit_CircuitPython_INA219.git (accessed on 14 December 2025).

- INA226 Datasheet. Available online: https://www.ti.com/product/INA226 (accessed on 14 December 2025).

| Metric (Unit) | RPi (ONNX Runtime) | ZCU104 (FINN) | ZCU104 (CGRA4ML) |
|---|---|---|---|
| Overall Mean Power per Inference (mW) | 371.3 | 399.3 | 461.9 |
| MAD of Mean Power per Inference (mW) | 93.1 | 24.1 | 20.9 |
| STDev (mW) | 133.5 | 35.0 | 25.6 |
| Mean Inference Duration (ms) | 259.3 | 27.5 | 6.89 |
| Mean Energy per Inference (mJ) | 96.3 | 11.0 | 3.2 |
| Performance (inf/s) | 3 | 36 | 145 |
| Efficiency (Performance/W) | 8.079 | 90.156 | 313.941 |

| Metric (Unit) | RPi (ONNX Runtime) | ZCU104 (FINN) | ZCU104 (CGRA4ML) |
|---|---|---|---|
| Accuracy (MSE) | 0.0015 | 0.0026 | 0.0026 |

| Design Attribute | Utilization * | Available | Utilization % |
|---|---|---|---|
| LUT | 22,228 | 230,400 | 9.65 |
| LUTRAM | 9195 | 101,760 | 9.04 |
| FF | 21,088 | 460,800 | 4.58 |
| BRAM | 107 | 312 | 34.13 |
| URAM | 3 | 96 | 3.13 |
| DSP | 21 | 1728 | 1.22 |

| Design Attribute | Utilization * | Available | Utilization % |
|---|---|---|---|
| LUT | 57,600 | 230,400 | 25.00 |
| LUTRAM | 6106 | 101,760 | 6.00 |
| FF | 92,160 | 460,800 | 20.00 |
| BRAM | 47 | 312 | 15.06 |
| URAM | 1 | 96 | 1.04 |
| DSP | 18 | 1728 | 1.04 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Mylonas, E.; Filippou, C.; Kontraros, S.; Birbas, M.; Birbas, A. A Unified FPGA/CGRA Acceleration Pipeline for Time-Critical Edge AI: Case Study on Autoencoder-Based Anomaly Detection in Smart Grids. Electronics 2026, 15, 414. https://doi.org/10.3390/electronics15020414
