Surface Defect Detection of Magnetic Tiles Based on YOLOv8-AHF

Abstract

1. Introduction

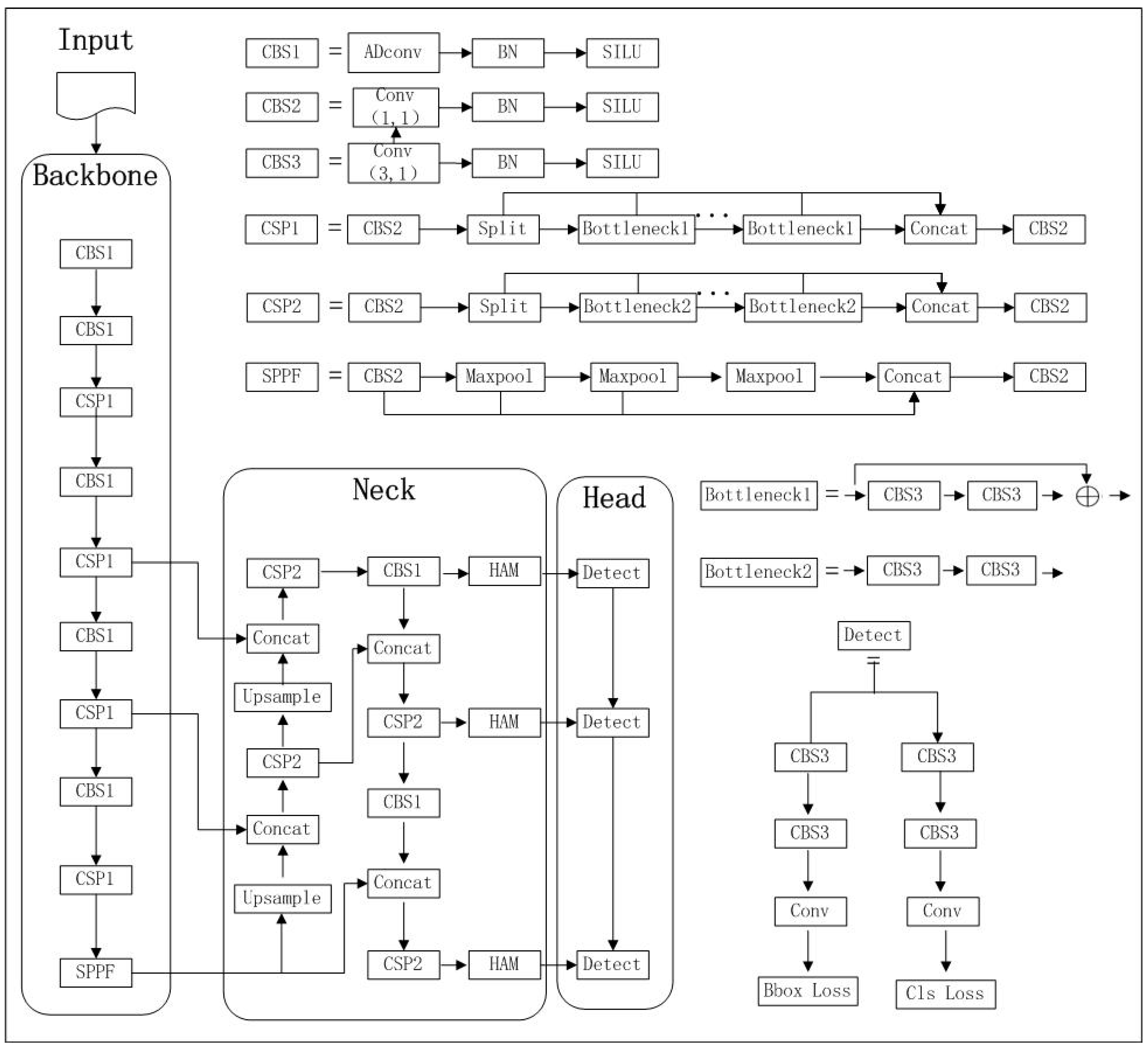

2. Basic Backbone Network

3. Algorithm Improvements

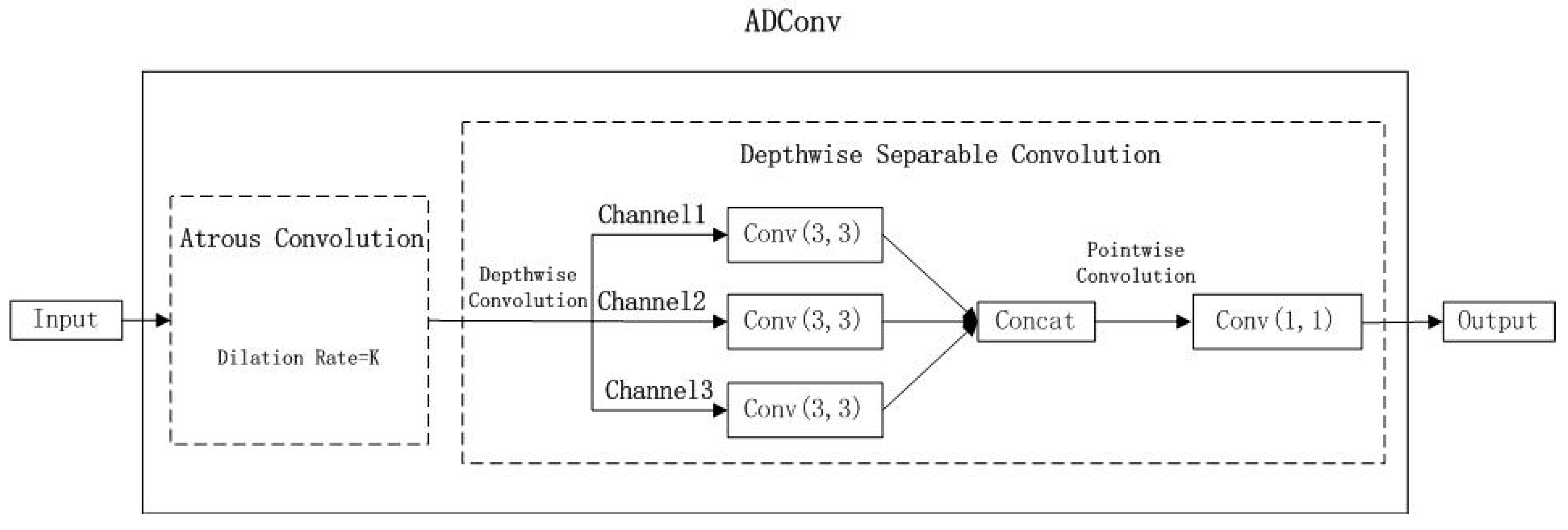

3.1. Mixed Convolution Module ADConv

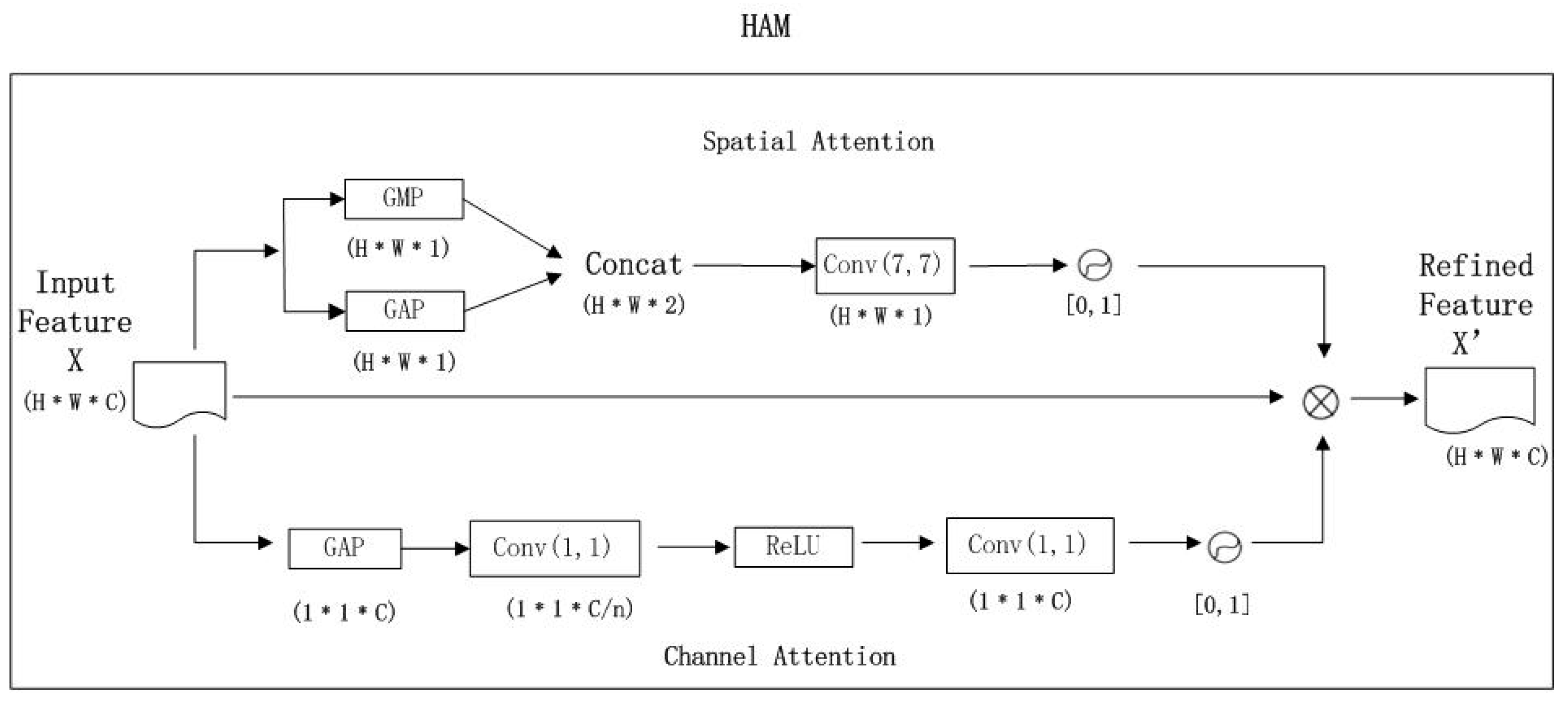

3.2. Hybrid Attention Module (HAM)

3.3. Loss Function Focal-EIoU

4. Experiments and Analysis

4.1. Experimental Environment

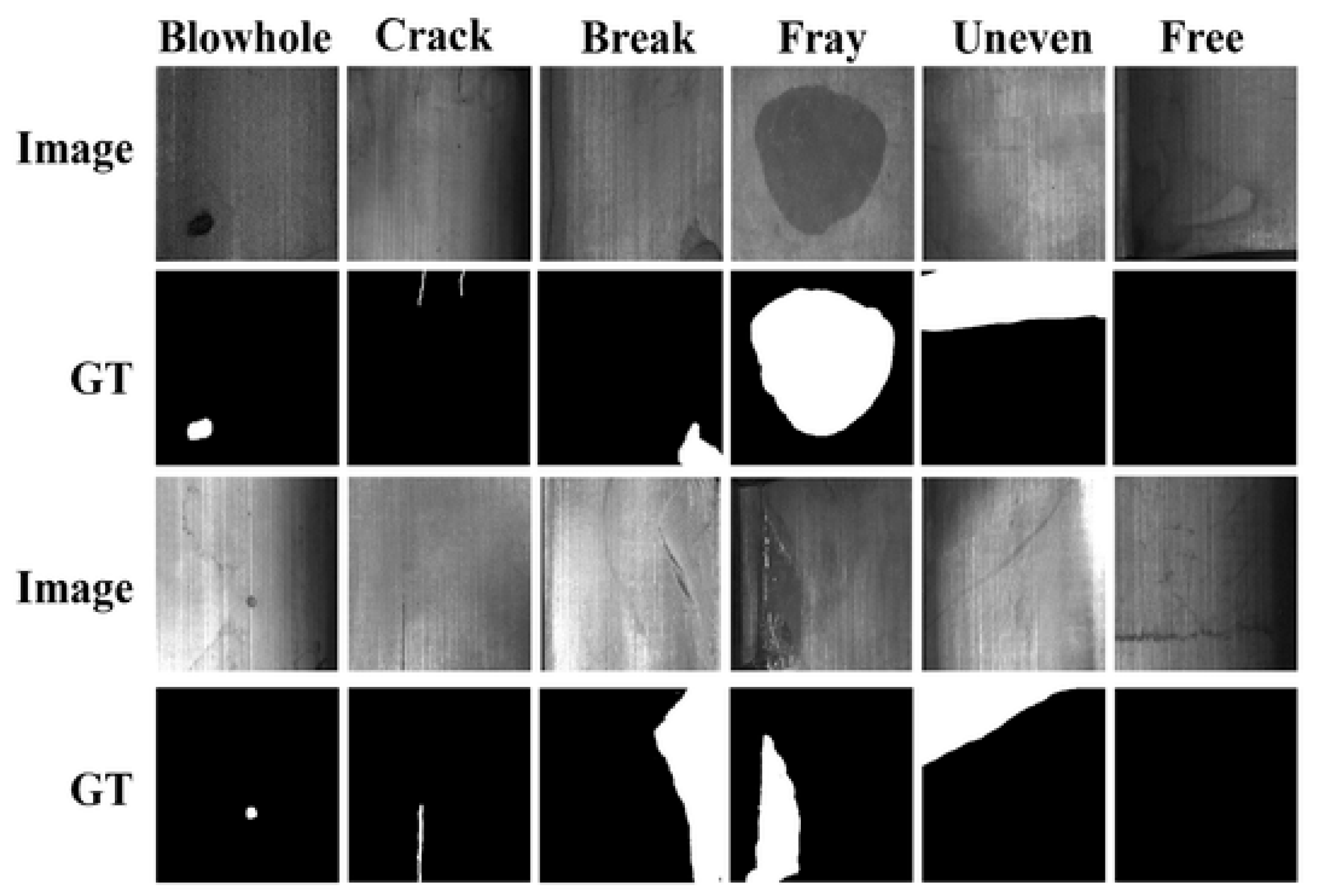

4.2. Experimental Data

4.3. Evaluation Metrics

4.4. Comparative Experiments

4.4.1. Algorithm Comparison Experiment

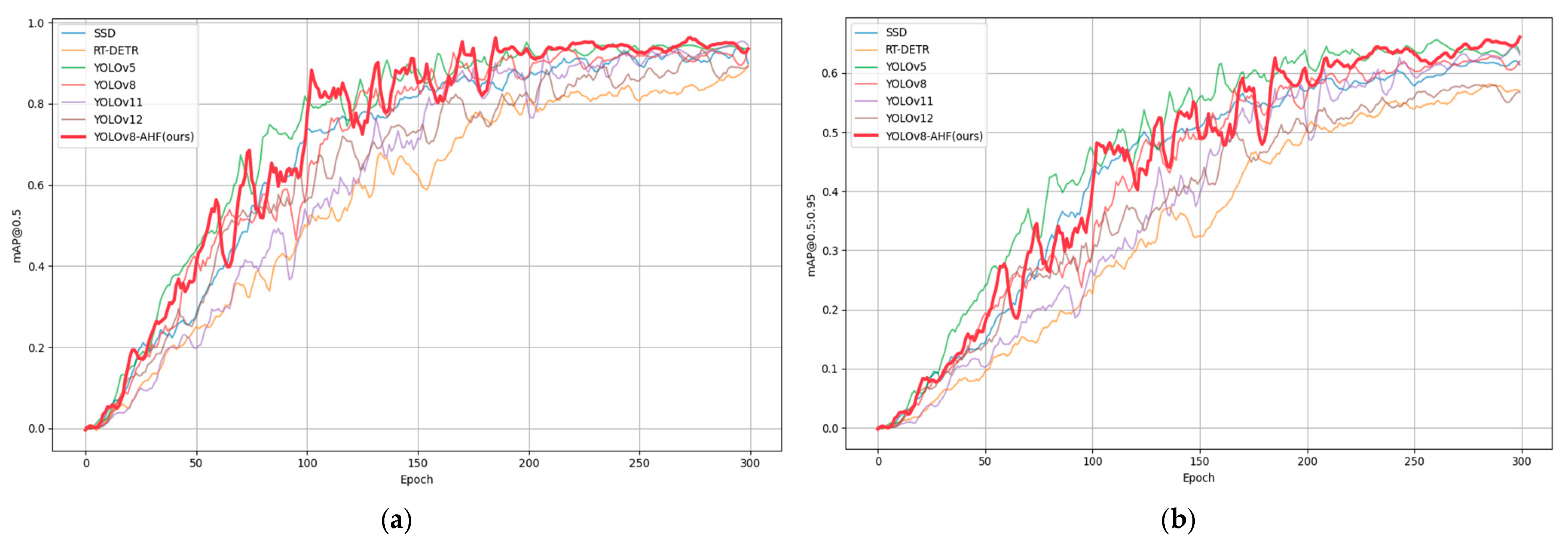

4.4.2. mAP Comparison Experiment

4.4.3. Precision–Recall Comparison Experiment

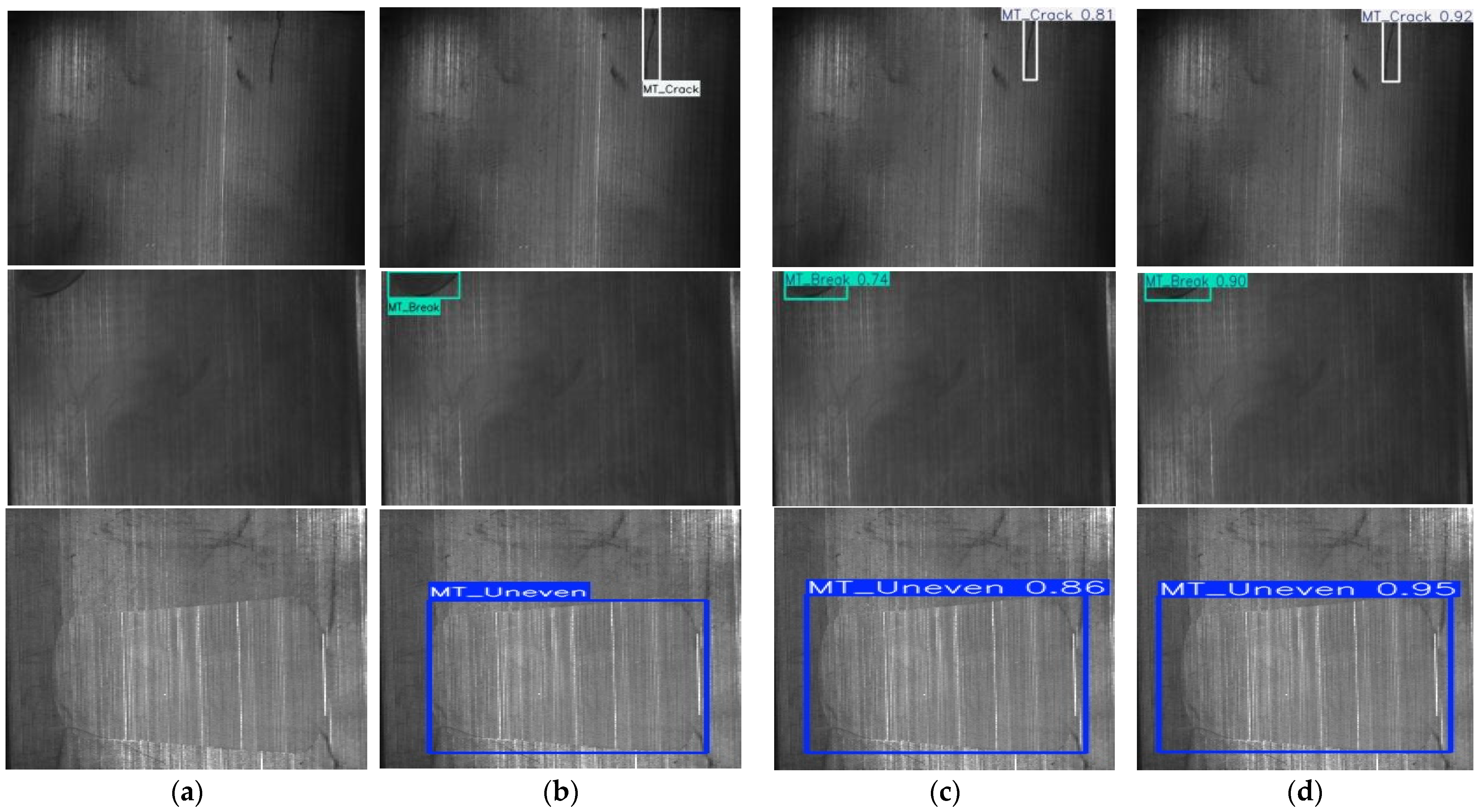

4.4.4. Detection Effect Comparison Experiment

4.5. Ablation Experiment

4.5.1. Convolutional Module Ablation Experiment

4.5.2. Attention Module Ablation Experiment

4.5.3. Loss Function Ablation Experiment

4.5.4. Overall Ablation Experiment

4.6. Generalization Experiment

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Correction Statement

Abbreviations

| Abbreviation | Full Name |
|---|---|
| ADConv | Combination of Atrous Convolution and Depthwise Separable Convolution |
| AlphaIoU | Alpha Intersection over Union |
| AP | Average Precision |
| BAM | Bottleneck Attention Module |
| BN | Batch Normalization |
| CA | Channel Attention |
| CBS | Conv-BN-SILU |
| CIoU | Complete Intersection over Union |
| CSP | Cross-Stage Partial |
| DIoU | Distance Intersection over Union |
| DyConv | Dynamic Convolution |
| EIoU | Efficient Intersection over Union |
| EMA | Efficient Multi-Scale Attention |
| FN | False Negative |
| Focal-EIoU | Focal-Enhanced Intersection over Union |
| FP | False Positive |
| FPS | Frames Per Second |
| GAM | Global Attention Mechanism |
| GFLOPS | Giga Floating Point Operations Per Second |
| GSConv | Group Spatial Convolution |
| HAM | Hybrid Attention Module |
| IoU | Intersection over Union |
| mAP | Mean Average Precision |
| NSCT | Non-Subsampled Contourlet Transform |
| ODConv | Omni-Dimensional Dynamic Convolution |
| PR | Precision-Recall |
| SILU | Sigmoid Linear Unit |
| SPDConv | Space-to-Depth Convolution |
| SPPF | Spatial Pyramid Pooling Fast |
| TP | True Positive |
| YOLO | You Only Look Once |


| Experimental Environment | Configuration |
|---|---|
| CPU | AMD EPYC 9554 CPU @ 3.00 GHz × 128 |
| GPU | NVIDIA RTX A6000 × 1 |
| Memory | 125 GiB |
| Operating System | Ubuntu 22.04.1 LTS × 64 (5.15.0-67-generic) |
| Deep Learning Computing Platform | CUDA 11.8 |
| Deep Learning Framework | PyTorch 2.1.2 |
| Programming Language | Python 3.10.8 |

| Algorithm | mAP@0.5 | mAP@0.5:0.95 | F1-Score | Precision | Recall | Parameters | GFLOPs | FPS |
|---|---|---|---|---|---|---|---|---|
| SSD | 0.887 | 0.596 | 0.88 | 0.96 | 0.803 | 26,375,621 | 31.6 | 52.51 |
| RT-DETR | 0.89 | 0.558 | 0.83 | 0.851 | 0.823 | 31,994,015 | 103.5 | 24.77 |
| YOLOv5 | 0.922 | 0.656 | 0.90 | 0.878 | 0.919 | 2,503,919 | 7.1 | 84.37 |
| YOLOv8 | 0.903 | 0.63 | 0.88 | 0.874 | 0.885 | 3,006,623 | 8.1 | 75.68 |
| YOLOv11 | 0.954 | 0.656 | 0.91 | 0.917 | 0.897 | 2,583,127 | 6.3 | 68.32 |
| YOLOv12 | 0.934 | 0.585 | 0.87 | 0.86 | 0.871 | 2,509,319 | 5.8 | 85.28 |
| YOLOv8-AHF (ours) | 0.962 | 0.682 | 0.93 | 0.924 | 0.943 | 3,051,829 | 8.5 | 65.55 |
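The trade-off between accuracy and cost in the comparison table can be made explicit with a little arithmetic. The following is a minimal sketch, not part of the original paper: the dictionary names and the `compare` helper are hypothetical, and the numbers are copied from the YOLOv8 and YOLOv8-AHF rows of the table.

```python
# Values copied from the YOLOv8 (baseline) and YOLOv8-AHF rows of the table.
# The variable names and the helper below are illustrative assumptions.
baseline = {
    "mAP@0.5": 0.903,
    "mAP@0.5:0.95": 0.630,
    "Parameters": 3_006_623,
    "GFLOPs": 8.1,
    "FPS": 75.68,
}
improved = {
    "mAP@0.5": 0.962,
    "mAP@0.5:0.95": 0.682,
    "Parameters": 3_051_829,
    "GFLOPs": 8.5,
    "FPS": 65.55,
}


def compare(base, new):
    """Return {metric: (absolute change, relative change in percent)}."""
    return {
        key: (new[key] - base[key], 100.0 * (new[key] - base[key]) / base[key])
        for key in base
    }


changes = compare(baseline, improved)
for metric, (delta, pct) in changes.items():
    # Positive values mean YOLOv8-AHF is higher than the YOLOv8 baseline.
    print(f"{metric:>13}: {delta:+,.3f} ({pct:+.1f}%)")
```

Read this way, the table shows an accuracy gain on both mAP metrics at the price of a small increase in parameters and GFLOPs and a lower frame rate.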

| Module | mAP@0.5 | mAP@0.5:0.95 | F1-Score | Precision | Recall | Parameters | GFLOPs | FPS |
|---|---|---|---|---|---|---|---|---|
| - | 0.903 | 0.630 | 0.88 | 0.874 | 0.885 | 3,006,623 | 8.1 | 75.68 |
| GSConv | 0.934 | 0.672 | 0.89 | 0.907 | 0.890 | 2,816,767 | 7.7 | 77.76 |
| SPDConv | 0.921 | 0.663 | 0.91 | 0.968 | 0.871 | 4,182,959 | 7.6 | 79.52 |
| DyConv | 0.930 | 0.655 | 0.91 | 0.939 | 0.879 | 4,190,431 | 7.2 | 81.60 |
| ODConv | 0.956 | 0.651 | 0.91 | 0.922 | 0.909 | 5,733,850 | 15.7 | 37.44 |
| ADConv (ours) | 0.955 | 0.698 | 0.94 | 0.956 | 0.917 | 3,097,887 | 8.2 | 68.31 |

| Module | mAP@0.5 | mAP@0.5:0.95 | F1-Score | Precision | Recall |
|---|---|---|---|---|---|
| - | 0.903 | 0.63 | 0.88 | 0.874 | 0.885 |
| CA | 0.911 | 0.651 | 0.86 | 0.918 | 0.808 |
| GAM | 0.917 | 0.677 | 0.91 | 0.965 | 0.855 |
| EMA | 0.916 | 0.642 | 0.89 | 0.947 | 0.851 |
| BAM | 0.916 | 0.665 | 0.91 | 0.928 | 0.888 |
| HAM (ours) | 0.931 | 0.658 | 0.93 | 0.912 | 0.894 |

| Loss Function | mAP@0.5 | mAP@0.5:0.95 | F1-Score | Precision | Recall |
|---|---|---|---|---|---|
| - | 0.903 | 0.63 | 0.88 | 0.874 | 0.885 |
| IoU | 0.926 | 0.634 | 0.87 | 0.883 | 0.864 |
| DIoU | 0.888 | 0.629 | 0.85 | 0.876 | 0.824 |
| EIoU | 0.929 | 0.64 | 0.89 | 0.884 | 0.897 |
| AlphaIoU | 0.871 | 0.495 | 0.76 | 0.777 | 0.757 |
| Focal-EIoU (ours) | 0.935 | 0.632 | 0.90 | 0.951 | 0.868 |

| Module | mAP@0.5 | mAP@0.5:0.95 | F1-Score | Precision | Recall |
|---|---|---|---|---|---|
| - | 0.903 | 0.63 | 0.88 | 0.874 | 0.885 |
| ADConv | 0.955 | 0.668 | 0.92 | 0.956 | 0.917 |
| HAM | 0.931 | 0.658 | 0.93 | 0.912 | 0.894 |
| Focal-EIoU | 0.935 | 0.632 | 0.87 | 0.883 | 0.864 |
| ADConv + Focal-EIoU | 0.95 | 0.671 | 0.91 | 0.917 | 0.906 |
| ADConv + HAM + Focal-EIoU (YOLOv8-AHF) | 0.962 | 0.682 | 0.93 | 0.924 | 0.943 |

| Dataset | Algorithm | mAP@0.5 | mAP@0.5:0.95 | F1-Score | Precision | Recall |
|---|---|---|---|---|---|---|
| Magnetic-Tile-Defect | YOLOv8 | 0.903 | 0.630 | 0.88 | 0.874 | 0.885 |
| Magnetic-Tile-Defect | YOLOv8-AHF (ours) | 0.962 | 0.682 | 0.93 | 0.924 | 0.943 |
| PKU-Market-PCB | YOLOv8 | 0.908 | 0.458 | 0.88 | 0.916 | 0.841 |
| PKU-Market-PCB | YOLOv8-AHF (ours) | 0.955 | 0.525 | 0.93 | 0.943 | 0.914 |
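As a reading aid for the F1-Score column, the sketch below recomputes F1 from the Precision and Recall columns of the generalization table. It assumes the standard definition of F1 as the harmonic mean of precision and recall, since the paper's own metric definitions are not reproduced here. The function name `f1_score` and the `rows` list are illustrative, and the numbers are copied from the four rows of the table.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (dataset, algorithm, precision, recall, reported F1), copied from the table.
rows = [
    ("Magnetic-Tile-Defect", "YOLOv8", 0.874, 0.885, 0.88),
    ("Magnetic-Tile-Defect", "YOLOv8-AHF", 0.924, 0.943, 0.93),
    ("PKU-Market-PCB", "YOLOv8", 0.916, 0.841, 0.88),
    ("PKU-Market-PCB", "YOLOv8-AHF", 0.943, 0.914, 0.93),
]

for dataset, algorithm, precision, recall, reported in rows:
    computed = f1_score(precision, recall)
    print(f"{dataset:<22}{algorithm:<12}"
          f"computed F1 = {computed:.3f}, reported F1 = {reported:.2f}")
```

For these four rows the recomputed value rounds to the reported two-decimal figure.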

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Ma, C.; Pan, Y.; Chen, J. Surface Defect Detection of Magnetic Tiles Based on YOLOv8-AHF. Electronics 2025, 14, 2857. https://doi.org/10.3390/electronics14142857
