A Transformer-Based Pavement Crack Segmentation Model with Local Perception and Auxiliary Convolution Layers

Abstract

1. Introduction

2. Related Work

2.1. Computer Vision Methods

2.2. Deep Learning Methods

3. Methodology

3.1. Model Architecture

3.2. Local Perception Module

3.3. Auxiliary Convolution Layer

4. Experimental Results

4.1. Dataset and Evaluation Metrics

4.2. Experimental Environment

4.3. Ablation on the Localization Module

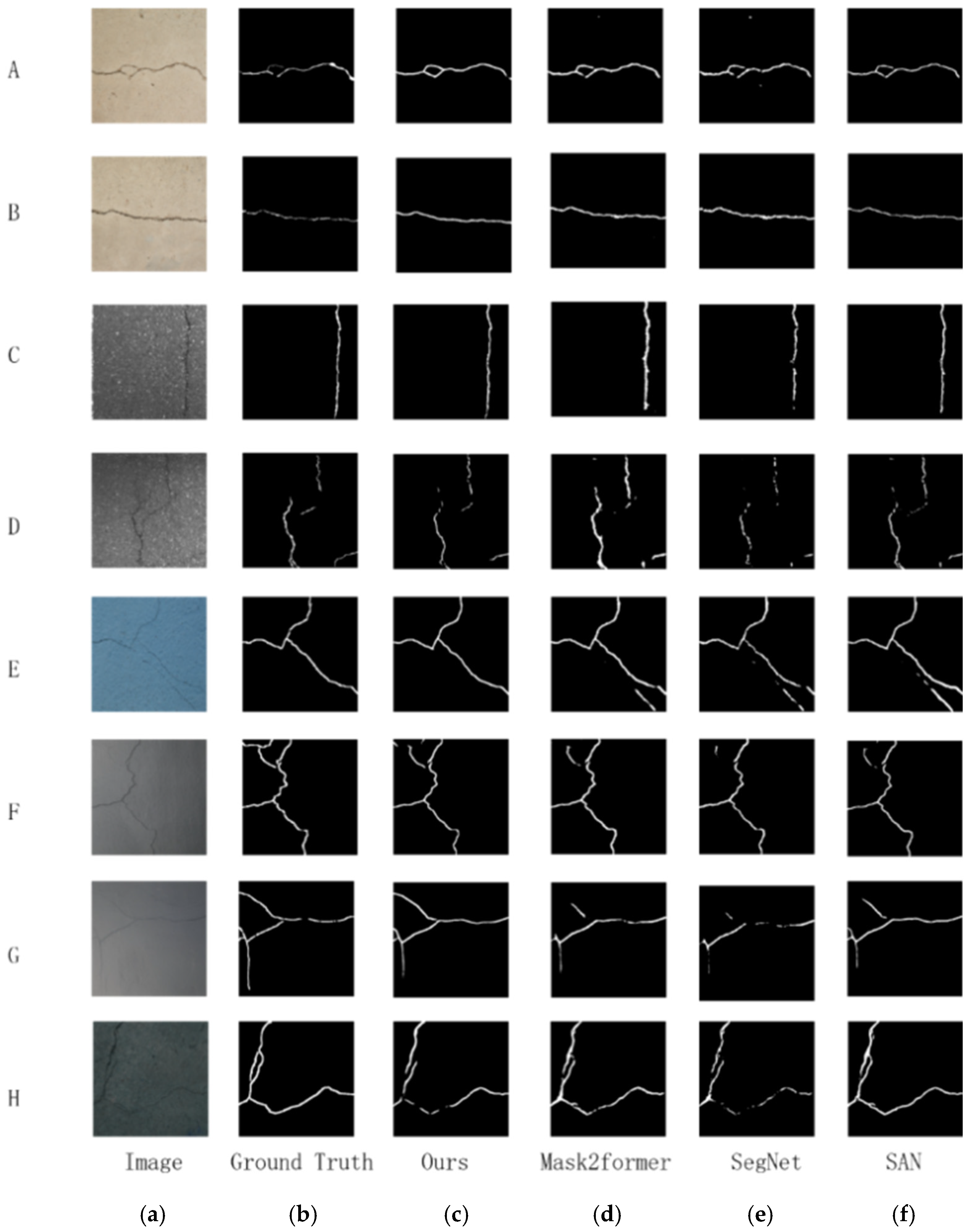

4.4. Comparison and Analysis

5. Discussion

5.1. Effectiveness of the Proposed Modules

5.2. Analysis of Model Performance

5.3. Limitations and Future Work

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Full Term | Description |

| mIoU | Mean Intersection over Union | Average overlap between predicted and ground truth segments across classes |

| F1 | F1 Score | Harmonic mean of precision and recall for crack pixels |

| Acc-Crack | Accuracy for Crack Pixels | Accuracy calculated only for crack pixels |

| mAcc | Mean Accuracy | Average per-class accuracy |

| GT | Ground Truth | Manually annotated segmentation labels |

| LPM | Local Perception Module | Proposed module to enhance local feature awareness |

| ACL | Auxiliary Convolutional Layer | Proposed module to preserve high-resolution spatial details |

| FPS | Frames Per Second | Model inference speed (higher is faster) |

| CE | Cross-Entropy Loss | Standard pixel-wise classification loss |

| FL | Focal Loss | Loss function to handle class imbalance |

| CRF | Conditional Random Field | Post-processing method to refine segmentation edges |

| CAM | Class Activation Map | Visualization technique to locate important regions for predictions |

References

- Li, L.; Zhou, T.; Wang, W.; Li, J.; Yang, Y. Deep hierarchical semantic segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 1246–1257. [Google Scholar]

- Chen, L.C.; Papandreou, G.; Kokkinos, I.; Murphy, K.; Yuille, A.L. DeepLab: Semantic Image Segmentation with Deep Convolutional Nets, Atrous Convolution, and Fully Connected CRFs. IEEE Trans. Pattern Anal. Mach. Intell. 2018, 40, 834–848. [Google Scholar] [CrossRef] [PubMed]

- Zhang, H.; Zhang, A.A.; Dong, Z.; He, A.; Liu, Y.; Zhan, Y.; Wang, K.C.P. Robust semantic segmentation for automatic crack detection within pavement images using multi-mixing of global context and local image features. IEEE Trans. Intell. Transp. Syst. 2024, 25, 11282–11303. [Google Scholar] [CrossRef]

- Majidifard, H.; Jin, P.; Adu-Gyamfi, Y.; Buttlar, W.G. Pavement image datasets: A new benchmark dataset to classify and densify pavement distresses. Transp. Res. Rec. 2020, 2674, 328–339. [Google Scholar] [CrossRef]

- Amhaz, R.; Chambon, S.; Idier, J.; Baltazart, V. Automatic Crack Detection on 2D Pavement Images: An Algorithm Based on Minimal Path Selection. IEEE Trans. Intell. Transp. Syst. 2016, 17, 2718–2729. [Google Scholar] [CrossRef]

- Peng, B.; Jiang, Y.-S.; Pu, Y. A review of automatic pavement crack image recognition algorithms. J. Highw. Transp. Technol. 2014, 31, 7. [Google Scholar] [CrossRef]

- Zhao, H.; Qin, G.; Wang, X. Improvement of Canny Algorithm Based on Pavement Edge Detection. In Proceedings of the 2010 3rd International Congress on Image and Signal Processing, Yantai, China, 16–18 October 2010; pp. 964–967. [Google Scholar]

- Zhang, Y.; Chen, B.; Wang, J.; Li, J.; Sun, X. APLCNet: Automatic Pixel-Level Crack Detection Network Based on Instance Segmentation. IEEE Access 2020, 8, 199159–199170. [Google Scholar] [CrossRef]

- Zou, Q.; Cao, Y.; Li, Q.; Mao, Q.; Wang, S. CrackTree: Automatic Crack Detection from Pavement Images. Pattern Recognit. Lett. 2012, 33, 227–238. [Google Scholar] [CrossRef]

- Ren, S.; He, K.; Girshick, R.; Sun, J. Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 1137–1149. [Google Scholar] [CrossRef]

- He, K.; Gkioxari, G.; Dollár, P.; Girshick, R. Mask R-CNN. In Proceedings of the 2017 IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2020; pp. 2980–2988. [Google Scholar]

- Fujita, H.; Itagaki, M.; Ichikawa, K.; Hooi, Y.K.; Kawahara, K.; Sarlan, A. Fine-tuned Surface Object Detection Applying Pre-trained Mask R-CNN Models. In Proceedings of the 2020 International Conference on Computational Intelligence, Las Vegas, NV, USA, 16–18 December 2020; pp. 17–22. [Google Scholar]

- Du, Y.; Zhong, S.; Fang, H.; Wang, N.; Liu, C.; Wu, D.; Sun, Y.; Xiang, M. Modeling Automatic Pavement Crack Object Detection and Pixel-level Segmentation. Autom. Constr. 2023, 150, 104840. [Google Scholar] [CrossRef]

- Wang, X.; Gao, H.; Jia, Z.; Li, Z. BL-YOLOv8: An Improved Road Defect Detection Model Based on YOLOv8. Sensors 2023, 23, 8361. [Google Scholar] [CrossRef]

- Zhang, Y.; Niu, P.; Guo, F.; Yan, W.; Liu, J.; Kou, L. Tunnel Lining Crack Intelligent Recognition Based on YOLOv11 Algorithm. In Proceedings of the 2024 International Conference on Smart Transportation Interdisciplinary Studies, Nanjing, China, 14–15 December 2024. [Google Scholar]

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention Is All You Need. Adv. Neural Inf. Process. Syst. 2017, 30, 1415–1423. [Google Scholar]

- Ji, A.; Xue, X.; Zhang, L.; Luo, X.; Man, Q. A transformer-based deep learning method for automatic pixel-level crack detection and feature quantification. Eng. Constr. Archit. Manag. 2025, 32, 2455–2486. [Google Scholar] [CrossRef]

- Guo, F.; Liu, J.; Lv, C.; Yu, H. A Novel Transformer-based Network with Attention Mechanism for Automatic Pavement Crack Detection. Constr. Build. Mater. 2023, 391, 131852. [Google Scholar] [CrossRef]

- Wang, Z.; Leng, Z.; Zhang, Z. A weakly-supervised transformer-based hybrid network with multi-attention for pavement crack detection. Constr. Build. Mater. 2024, 411, 134134. [Google Scholar] [CrossRef]

- Lin, C.; Tian, D.; Duan, X.; Zhou, J. TransCrack: Revisiting Fine-grained Road Crack Detection with A Transformer Design. Philos. Trans. R. Soc. A Math. Phys. Eng. Sci. 2023, 381, 20220172. [Google Scholar] [CrossRef]

- Shamsabadi, E.A.; Xu, C.; Dias-Da-Costa, D. Robust Crack Detection in Masonry Structures with Transformers. Measurement 2022, 200, 111590. [Google Scholar] [CrossRef]

- Ding, W.; Yang, H.; Yu, K.; Shu, J. Crack Detection and Quantification for Concrete Structures using UAV and Transformer. Autom. Constr. 2023, 152, 104929. [Google Scholar] [CrossRef]

- Cheng, B.; Choudhuri, A.; Misra, I.; Kirillov, A.; Girdhar, R.; Schwing, A.G. Mask2former for video instance segmentation. arXiv 2021, arXiv:2112.10764. [Google Scholar]

- Cheng, B.; Misra, I.; Schwing, A.G.; Kirillov, A.; Girdhar, R. Masked-attention Mask Transformer for Universal Image Segmentation. In Proceedings of the 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 1280–1289. [Google Scholar]

- Li, Y.; Zhang, K.; Cao, J.; Timofte, R.; Magno, M.; Benini, L.; Van Goo, L. LocalViT: Analyzing Locality in Vision Transformers. In Proceedings of the 2023 IEEE/RSJ International Conference on Intelligent Robots and Systems, Detroit, MI, USA, 1–5 October 2023; pp. 9598–9605. [Google Scholar]

- Shi, Y.; Cui, L.; Qi, Z.; Meng, F.; Chen, Z. Automatic road crack detection using random structured forests. IEEE Trans. Intell. Transp. Syst. 2016, 17, 3434–3445. [Google Scholar] [CrossRef]

- Zou, Q.; Zhang, Z.; Song, Y.; Wang, Q.; Han, Y. DeepCrack: A deep hierarchical feature learning architecture for crack segmentation. Neurocomputing 2019, 338, 139–153. [Google Scholar]

- Ronneberger, O.; Fischer, P.; Brox, T. U-Net: Convolutional Networks for Biomedical Image Segmentation. In Medical Image Computing and Computer-Assisted Intervention—MICCAI 2015; Springer: Cham, Switzerland, 2015; pp. 234–241. [Google Scholar]

- Chen, L.C.; Papandreou, G.; Kokkinos, I.; Murphy, K.; Yuille, A.L. Rethinking Atrous Convolution for Semantic Image Segmentation. arXiv 2017, arXiv:1706.05587. [Google Scholar]

- Zhao, H.; Shi, J.; Qi, X.; Wang, X.; Jia, J. Pyramid Scene Parsing Network. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 2881–2890. [Google Scholar]

- Badrinarayanan, V.; Kendall, A.; Cipolla, R. SegNet: A Deep Convolutional Encoder-Decoder Architecture for Image Segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 2481–2495. [Google Scholar] [CrossRef] [PubMed]

- Zhao, H.; Zhang, Y.; Liu, S.; Shi, J.; Loy, C.C.; Lin, D.; Jia, J. Exploring Self-Attention for Image Recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 10076–10085. [Google Scholar]

- Cheng, B.; Schwing, A.G.; Kirillov, A. Per-Pixel Classification is Not All You Need for Semantic Segmentation. Adv. Neural Inf. Process. Syst. 2021, 34, 17864–17875. [Google Scholar]

| Source | Amount | Size | Percentage of Cracks (%) |

|---|---|---|---|

| CFD | 118 | 480 × 320 | 1.61 |

| Deep Crack | 175 | 544 × 384 | 4.43 |

| Ours | 442 | 3106 × 4032 | 2.12 |

| Total | 735 | —— | 1.82 |

| Local Perception Module | Auxiliary Convolution Layer | mIoU |

|---|---|---|

| ✕ | ✕ | 80.24 |

| ✓ | ✕ | 81.67 |

| ✕ | ✓ | 81.43 |

| ✓ | ✓ | 82.54 |

| Methods | mIoU | F1 | Acc- Crack | Acc-Background | mAcc | Params (M) | FPS |

|---|---|---|---|---|---|---|---|

| Unet | 50.00 | 0.00 | 0.00 | 100.00 | 50.00 | 7.76 | 22.21 |

| SegNet | 77.42 | 0.73 | 74.19 | 98.21 | 86.20 | 14.70 | 12.42 |

| PSPNet | 81.32 | 0.77 | 76.33 | 98.53 | 87.43 | 21.80 | 14.15 |

| DeepLabV3 | 50.00 | 0.00 | 0.00 | 100.00 | 50.00 | 41.31 | 16.56 |

| SAN | 79.29 | 0.75 | 78.89 | 98.57 | 88.73 | 21.82 | 14.20 |

| MaskFormer | 78.21 | 0.74 | 78.16 | 99.12 | 88.64 | 45.01 | 12.66 |

| Mask2Former | 80.24 | 0.76 | 81.27 | 99.15 | 90.21 | 44.52 | 10.20 |

| Ours | 82.54 | 0.79 | 79.55 | 99.03 | 89.29 | 56.2 | 8.31 |

| Stage | Weight (Background:Crack) |

|---|---|

| 0–5000 | 1:500 |

| 5001–10,000 | 1:200 |

| Above 10,000 | 1:50 |

| Model | IoU-Crack | IoU-Background | Acc_Crack | Acc_Background | F1 | mIoU | mAcc |

|---|---|---|---|---|---|---|---|

| Unet | 50.11 | 98.83 | 64.08 | 95.38 | 66.09 | 74.47 | 79.73 |

| DeepLabv3 | 61.43 | 98.89 | 79.24 | 97.58 | 73.27 | 80.16 | 88.41 |

| Model | Dataset | mIoU | F1 | mAcc |

|---|---|---|---|---|

| DeepCrackNet | DeepCrack | 81.44 | 78.11 | 87.20 |

| Unet | DeepCrack | 73.82 | 69.20 | 85.63 |

| Ours | DeepCrack | 83.12 | 80.53 | 88.90 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Zhu, Y.; Cao, T.; Yang, Y. A Transformer-Based Pavement Crack Segmentation Model with Local Perception and Auxiliary Convolution Layers. Electronics 2025, 14, 2834. https://doi.org/10.3390/electronics14142834

Zhu Y, Cao T, Yang Y. A Transformer-Based Pavement Crack Segmentation Model with Local Perception and Auxiliary Convolution Layers. Electronics. 2025; 14(14):2834. https://doi.org/10.3390/electronics14142834

Chicago/Turabian StyleZhu, Yi, Ting Cao, and Yiqing Yang. 2025. "A Transformer-Based Pavement Crack Segmentation Model with Local Perception and Auxiliary Convolution Layers" Electronics 14, no. 14: 2834. https://doi.org/10.3390/electronics14142834

APA StyleZhu, Y., Cao, T., & Yang, Y. (2025). A Transformer-Based Pavement Crack Segmentation Model with Local Perception and Auxiliary Convolution Layers. Electronics, 14(14), 2834. https://doi.org/10.3390/electronics14142834