Lightweight Video Super-Resolution for Compressed Video

Abstract

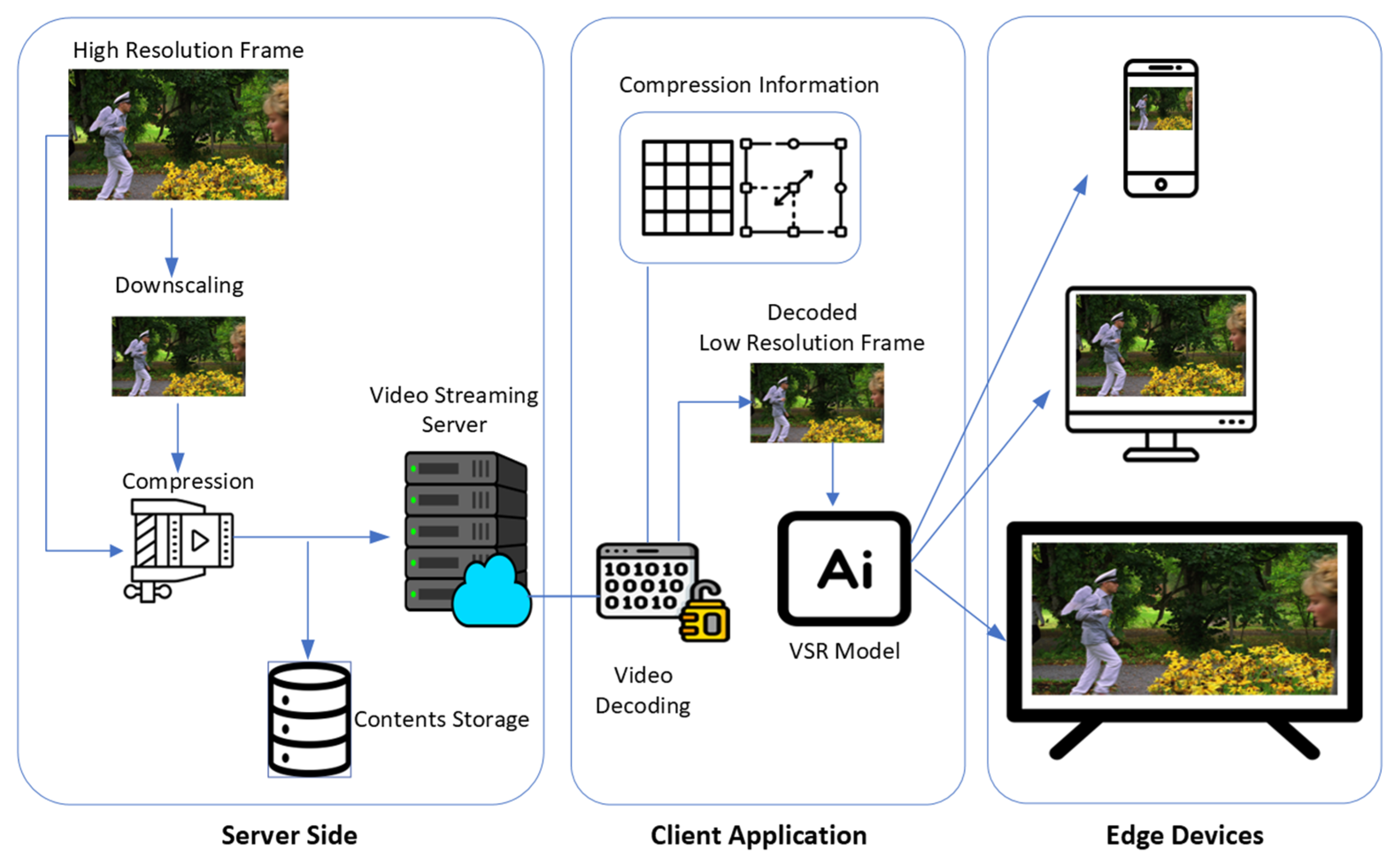

1. Introduction

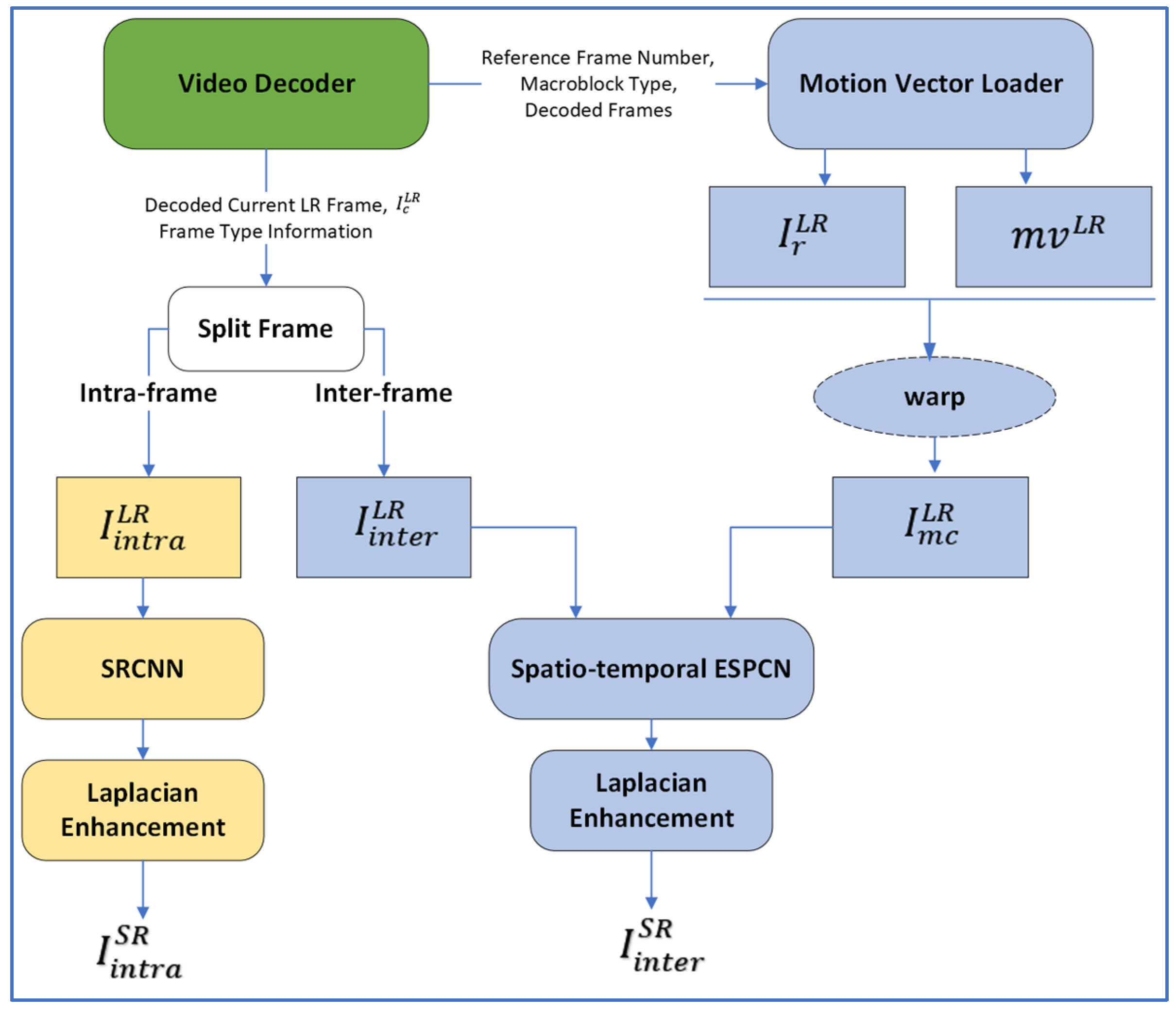

- The proposed VSR model needs little computation at inference time without significantly degrading video quality, because it uses fewer reference frames than other spatio-temporal VSR models;

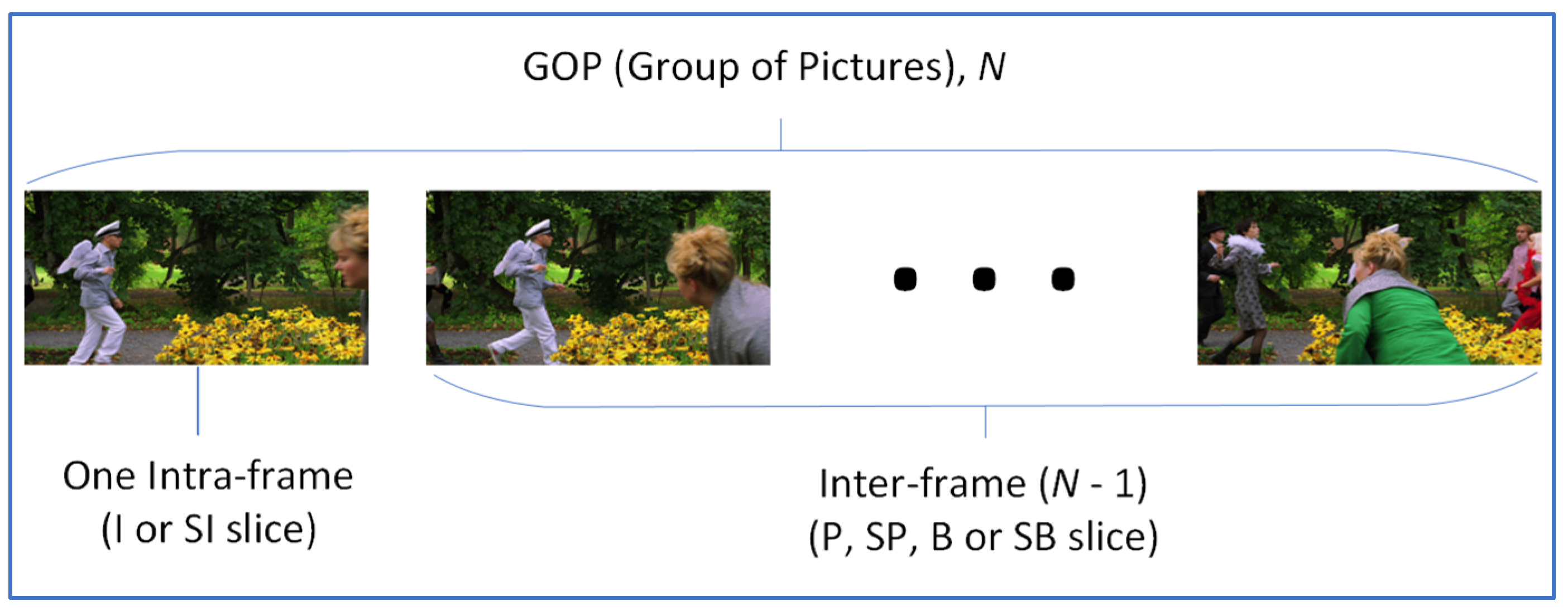

- To keep the VSR model usable under poor network conditions, the proposed model can be separated by frame type;

- The proposed VSR model suits real-time video streaming services because it exploits various information provided by the video decoder.

2. Related Works

2.1. Recurrent Frame-Based VSR Network

2.2. Spatio-Temporal VSR Network

2.3. GAN Based SR

2.4. Video Compression Informed VSR

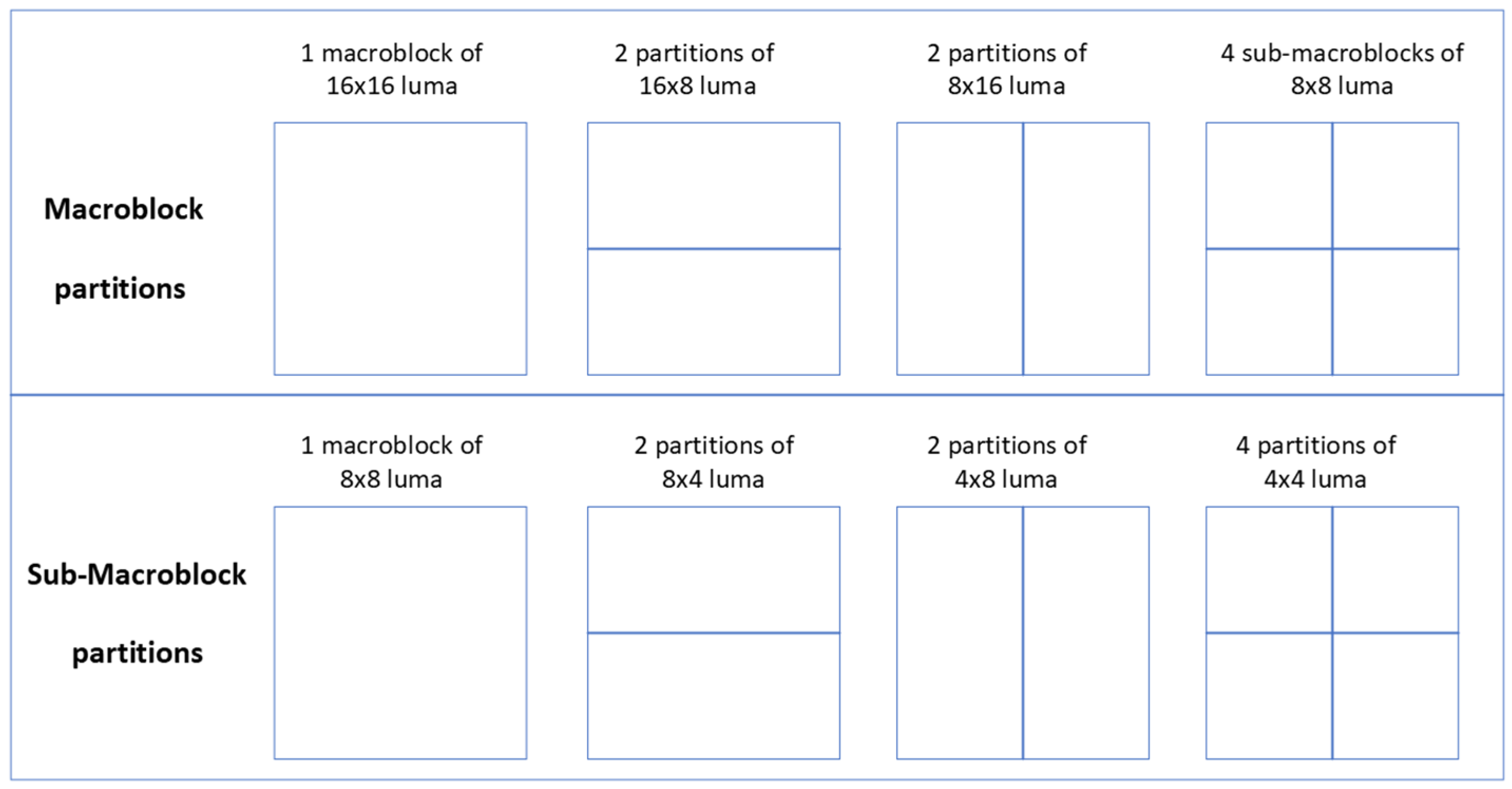

3. Methodology

4. Experiments

4.1. Training and Evaluation Dataset

4.2. Experiment Environment

4.3. Evaluation on the Vid4 Dataset

4.3.1. Quantitative Evaluation

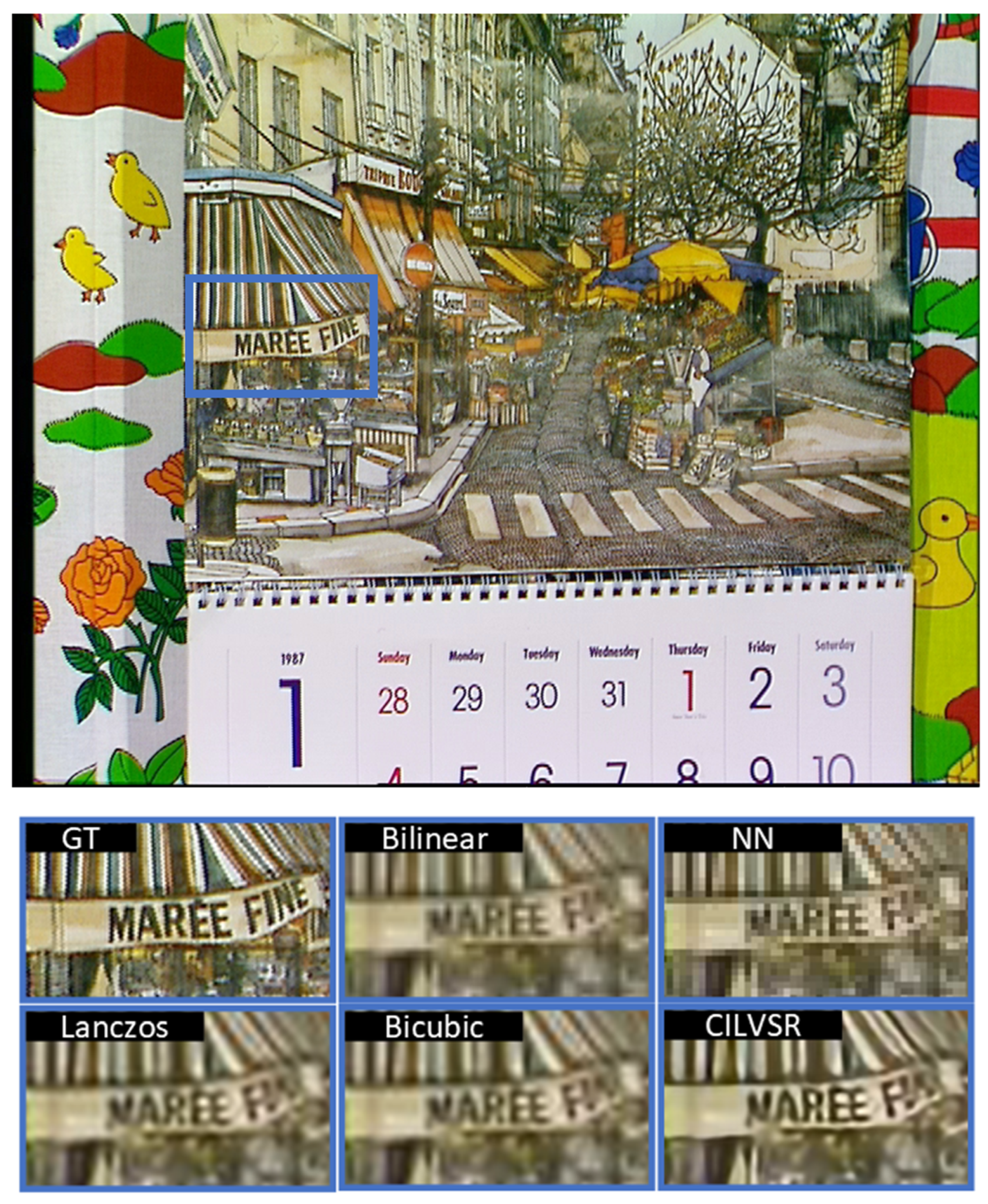

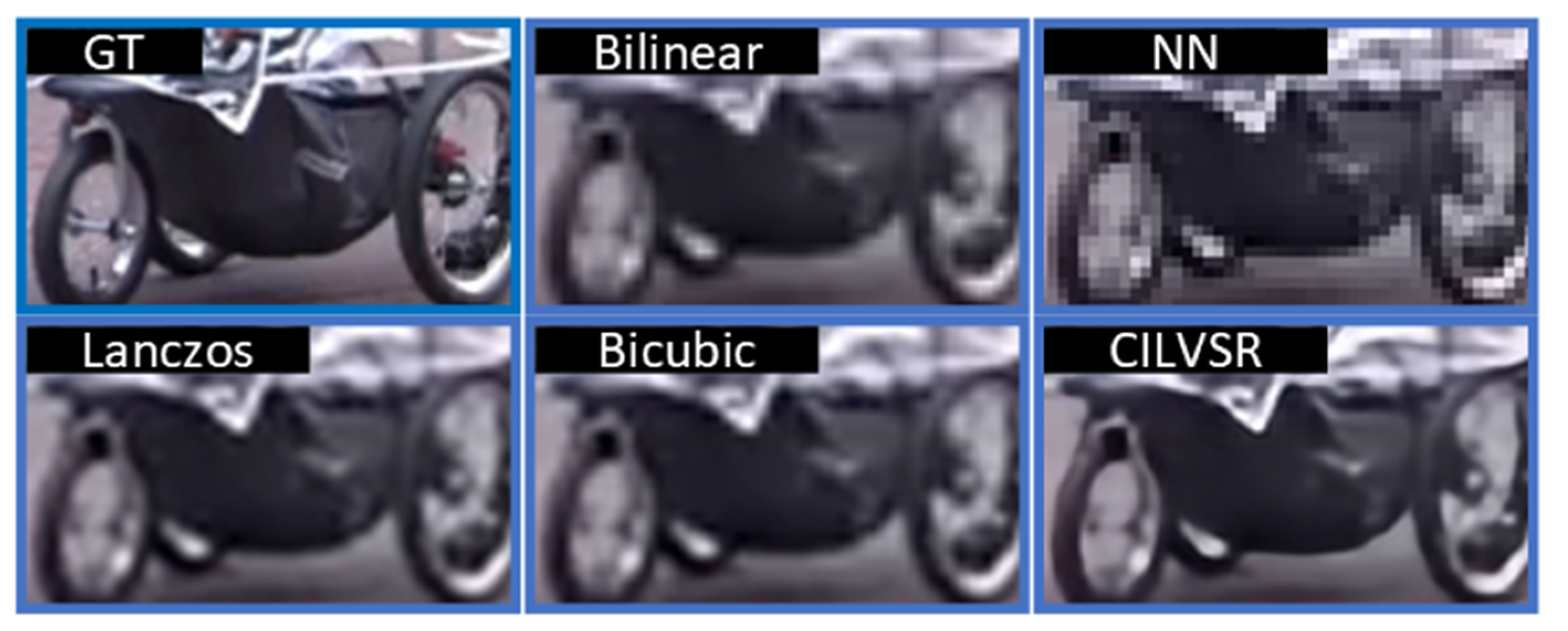

4.3.2. Qualitative Evaluation

4.4. Result Analysis

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Ant Media. Available online: https://antmedia.io/video-bitrate-vs-resolution-4-key-differences-and-their-role-in-video-streaming/ (accessed on 12 October 2022).

2. Liborio, J.D.; Melo, C.; Silva, M. Internet Video Delivery Improved by Super-Resolution with GAN. Future Internet 2022, 14, 364.

3. Dong, C.; Loy, C.C.; He, K.; Tang, X. Image Super-Resolution Using Deep Convolutional Networks. IEEE Trans. Pattern Anal. Mach. Intell. 2014, 38, 295–307.

4. Lai, W.; Huang, J.; Ahuja, N.; Yang, M. Deep Laplacian Pyramid Networks for Fast and Accurate Super-Resolution. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 5835–5843.

5. Kim, J.; Lee, J.K.; Lee, K.M. Accurate Image Super-Resolution Using Very Deep Convolutional Networks. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 1646–1654.

6. Sajjadi, M.S.; Vemulapalli, R.; Brown, M.A. Frame-Recurrent Video Super-Resolution. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 6626–6634.

7. Haris, M.; Shakhnarovich, G.; Ukita, N. Recurrent Back-Projection Network for Video Super-Resolution. In Proceedings of the 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 15–20 June 2019; pp. 3892–3901.

8. Isobe, T.; Zhu, F.; Jia, X.; Wang, S. Revisiting Temporal Modeling for Video Super-resolution. arXiv 2020, arXiv:2008.05765.

9. Wang, L.; Guo, Y.; Liu, L.; Lin, Z.; Deng, X.; An, W. Deep Video Super-Resolution Using HR Optical Flow Estimation. IEEE Trans. Image Process. 2020, 29, 4323–4336.

10. Xiang, X.; Tian, Y.; Zhang, Y.; Fu, Y.R.; Allebach, J.P.; Xu, C. Zooming Slow-Mo: Fast and Accurate One-Stage Space-Time Video Super-Resolution. In Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 3367–3376.

11. Tian, Y.; Zhang, Y.; Fu, Y.R.; Xu, C. TDAN: Temporally-Deformable Alignment Network for Video Super-Resolution. In Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 3357–3366.

12. Xue, T.; Chen, B.; Wu, J.; Wei, D.; Freeman, W.T. Video Enhancement with Task-Oriented Flow. Int. J. Comput. Vis. 2017, 127, 1106–1125.

13. Liu, D.; Wang, Z.; Fan, Y.; Liu, X.; Wang, Z.; Chang, S.; Wang, X.; Huang, T.S. Learning Temporal Dynamics for Video Super-Resolution: A Deep Learning Approach. IEEE Trans. Image Process. 2018, 27, 3432–3445.

14. Ledig, C.; Theis, L.; Huszár, F.; Caballero, J.; Aitken, A.P.; Tejani, A.; Totz, J.; Wang, Z.; Shi, W. Photo-Realistic Single Image Super-Resolution Using a Generative Adversarial Network. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 105–114.

15. Wang, X.; Yu, K.; Wu, S.; Gu, J.; Liu, Y.; Dong, C.; Loy, C.C.; Qiao, Y.; Tang, X. ESRGAN: Enhanced Super-Resolution Generative Adversarial Networks. ECCV Workshops. 2018. Available online: https://arxiv.org/abs/1809.00219 (accessed on 21 December 2022).

16. Zhang, W.; Liu, Y.; Dong, C.; Qiao, Y. RankSRGAN: Generative Adversarial Networks with Ranker for Image Super-Resolution. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 3096–3105.

17. Chadha, A. iSeeBetter: Spatio-temporal video super-resolution using recurrent generative back-projection networks. Comput. Vis. Media 2020, 6, 307–317.

18. Zhang, Z.; Sze, V. FAST: A Framework to Accelerate Super-Resolution Processing on Compressed Videos. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Honolulu, HI, USA, 21–26 July 2017; pp. 1015–1024.

19. Li, Y.; Jin, P.; Yang, F.; Liu, C.; Yang, M.; Milanfar, P. COMISR: Compression-Informed Video Super-Resolution. In Proceedings of the 2021 IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 11–17 October 2021; pp. 2523–2532.

20. Chen, P.; Yang, W.; Wang, M.; Sun, L.; Hu, K.; Wang, S. Compressed Domain Deep Video Super-Resolution. IEEE Trans. Image Process. 2021, 30, 7156–7169.

21. Zhang, H.; Zou, X.D.; Guo, J.; Yan, Y.; Xie, R.; Song, L. A Codec Information Assisted Framework for Efficient Compressed Video Super-Resolution. Eur. Conf. Comput. Vis. 2022, 1–16.

22. ISO/IEC 14496-10 Advanced Video Coding. Available online: https://www.iso.org/obp/ui/#iso:std:iso-iec:14496:-10:ed-9:v1:en (accessed on 21 December 2022).

23. Liu, H.; Ruan, Z.; Zhao, P.; Shang, F.; Yang, L.; Liu, Y. Video super-resolution based on deep learning: A comprehensive survey. Artif. Intell. Rev. 2020, 55, 5981–6035.

24. Huang, Y.; Wang, W.; Wang, L. Bidirectional Recurrent Convolutional Networks for Multi-Frame Super-Resolution. NIPS 2015.

25. Zhang, J.; Xu, T.; Li, J.; Jiang, S.; Zhang, Y. Single-Image Super Resolution of Remote Sensing Images with Real-World Degradation Modeling. Remote Sens. 2022, 14, 2895.

26. Jo, Y.; Oh, S.W.; Kang, J.; Kim, S.J. Deep Video Super-Resolution Network Using Dynamic Upsampling Filters Without Explicit Motion Compensation. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 3224–3232.

27. Haris, M.; Shakhnarovich, G.; Ukita, N. Deep Back-Projection Networks for Super-Resolution. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018; pp. 1664–1673.

28. Shi, W.; Caballero, J.; Huszár, F.; Totz, J.; Aitken, A.P.; Bishop, R.; Rueckert, D.; Wang, Z. Real-Time Single Image and Video Super-Resolution Using an Efficient Sub-Pixel Convolutional Neural Network. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 1874–1883.

29. Yi, P.; Wang, Z.; Jiang, K.; Jiang, J.; Lu, T.; Tian, X.; Ma, J. Omniscient Video Super-Resolution. In Proceedings of the 2021 IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10–17 October 2021; pp. 4409–4418.

30. Kim, J.; Lee, J.K.; Lee, K.M. Deeply-Recursive Convolutional Network for Image Super-Resolution. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 1637–1645.

31. Tao, X.; Gao, H.; Liao, R.; Wang, J.; Jia, J. Detail-Revealing Deep Video Super-Resolution. In Proceedings of the 2017 IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 4482–4490.

32. Liu, D.; Wang, Z.; Fan, Y.; Liu, X.; Wang, Z.; Chang, S.; Huang, T.S. Robust Video Super-Resolution with Learned Temporal Dynamics. In Proceedings of the 2017 IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 2526–2534.

33. Li, F.; Bai, H.; Zhao, Y. Learning a Deep Dual Attention Network for Video Super-Resolution. IEEE Trans. Image Process. 2020, 29, 4474–4488.

34. Fu, L.; Li, J.; Zhou, L.; Ma, Z.; Liu, S.; Lin, Z.; Prasad, M. Utilizing Information from Task-Independent Aspects via GAN-Assisted Knowledge Transfer. In Proceedings of the International Joint Conference on Neural Networks (IJCNN), Rio de Janeiro, Brazil, 8–13 July 2018.

35. Zhang, L.; Li, J.; Huang, T.; Ma, Z.; Lin, Z.; Prasad, M. GAN2C: Information Completion GAN with Dual Consistency Constraints. In Proceedings of the International Joint Conference on Neural Networks (IJCNN), Rio de Janeiro, Brazil, 8–13 July 2018.

36. Liu, R.; Wang, X.; Lu, H.; Wu, Z.; Fan, Q.; Li, S.; Jin, X. SCCGAN: Style and Characters Inpainting Based on CGAN. Mob. Netw. Appl. 2021, 26, 3–12.

37. Mittal, A.; Soundararajan, R.; Bovik, A.C. Making a “Completely Blind” Image Quality Analyzer. IEEE Signal Process. Lett. 2013, 20, 209–212.

38. Ma, C.; Yang, C.; Yang, X.; Yang, M. Learning a No-Reference Quality Metric for Single-Image Super-Resolution. Comput. Vis. Image Underst. 2016, 158, 1–16.

39. Blau, Y.; Michaeli, T. The Perception-Distortion Tradeoff. Computer Vision and Pattern Recognition. 2017. Available online: https://arxiv.org/abs/1711.06077 (accessed on 12 December 2022).

40. Qin, X.; Ban, Y.; Wu, P.; Yang, B.; Liu, S.; Yin, L.; Liu, M.; Zheng, W. Improved Image Fusion Method Based on Sparse Decomposition. Electronics 2022, 11, 2321.

41. Liu, H.; Liu, M.; Li, D.; Zheng, W.; Yin, L.; Wang, R. Recent Advances in Pulse-Coupled Neural Networks with Applications in Image Processing. Electronics 2022, 11, 3264.

42. Dong, C.; Li, Y.; Gong, H.; Chen, M.; Li, J.; Shen, Y.; Yang, M. A Survey of Natural Language Generation. ACM Comput. Surv. 2022, 55, 1–38.

43. Zabalza, M.C.; Bernardini, A. Super-Resolution of Sentinel-2 Images Using a Spectral Attention Mechanism. Remote Sens. 2022, 14, 2890.

44. Zhang, R.; Isola, P.; Efros, A.A.; Shechtman, E.; Wang, O. The Unreasonable Effectiveness of Deep Features as a Perceptual Metric. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 586–595.

45. Sajjadi, M.S.; Schölkopf, B.; Hirsch, M. EnhanceNet: Single Image Super-Resolution Through Automated Texture Synthesis. In Proceedings of the 2017 IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 4501–4510.

46. Johnson, J.; Alahi, A.; Fei-Fei, L. Perceptual Losses for Real-Time Style Transfer and Super-Resolution. arXiv 2016, arXiv:1603.08155.

47. Huang, J.; Singh, A.; Ahuja, N. Single Image Super-Resolution from Transformed Self-Exemplars. In Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; pp. 5197–5206.

48. Clark, A.; Donahue, J.; Simonyan, K. Efficient Video Generation on Complex Datasets. arXiv 2019, arXiv:1907.06571.

49. Dong, C.; Deng, Y.; Loy, C.C.; Tang, X. Compression Artifacts Reduction by a Deep Convolutional Network. In Proceedings of the 2015 IEEE International Conference on Computer Vision (ICCV), Santiago, Chile, 7–13 December 2015; pp. 576–584.

50. Kim, K.I.; Kwon, Y. Single-image super-resolution using sparse regression and natural image prior. IEEE Trans. Pattern Anal. Mach. Intell. 2010, 32, 1127–1133.

51. Timofte, R.; De Smet, V.; Van Gool, L. Anchored neighborhood regression for fast example-based super-resolution. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), 2013; pp. 1920–1927.

52. H.264 Reference Software. Available online: https://iphome.hhi.de/suehring/tml/download/ (accessed on 21 December 2022).

53. She, Q.; Hu, R.; Xu, J.; Liu, M.; Xu, K.; Huang, H. Learning High-DOF Reaching-and-Grasping via Dynamic Representation of Gripper-Object Interaction. ACM Trans. Graph. 2022, 41, 1–14.

54. Caballero, J.; Ledig, C.; Aitken, A.P.; Acosta, A.; Totz, J.; Wang, Z.; Shi, W. Real-Time Video Super-Resolution with Spatio-Temporal Networks and Motion Compensation. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 2848–2857.

55. ITS. Consumer Digital Video Library. Available online: https://www.cdvl.org/ (accessed on 22 December 2022).

56. Ma, D.; Afonso, M.; Zhang, F.; Bull, D.R. Perceptually-inspired super-resolution of compressed videos. Opt. Eng. + Appl. 2019, 11137, 1113717.

57. Keys, R. Cubic convolution interpolation for digital image processing. IEEE Trans. Acoust. Speech Signal Process. 1981, 29, 1153–1160.

58. Turkowski, K. Filters for Common Resampling Tasks. In Graphics Gems; Academic Press Professional, Inc.: Cambridge, MA, USA, 1990; pp. 147–165.

59. Mo, Y.; Chen, D.; Su, T. A lightweight hardware-efficient recurrent network for video super-resolution. Electron. Lett. 2022, 58, 699–701.

60. Shang, F.; Liu, H.; Ma, W.; Liu, Y.; Jiao, L.; Shang, F.; Wang, L.; Zhou, Z. Lightweight Super-Resolution with Self-Calibrated Convolution for Panoramic Videos. Sensors 2022, 23, 392.

| Type | Video Bitrate, Standard Frame Rate (24, 25, 30 fps) | Video Bitrate, High Frame Rate (48, 50, 60 fps) |
|---|---|---|
| 2160p (4K) | 35–45 Mbps | 53–68 Mbps |
| 1440p (2K) | 16 Mbps | 24 Mbps |
| 1080p | 8 Mbps | 12 Mbps |
| 720p | 5 Mbps | 7.5 Mbps |
| 480p | 2.5 Mbps | 4 Mbps |
| 360p | 1 Mbps | 1.5 Mbps |
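To make the bandwidth figures in the table concrete, they can be placed in a small lookup. This is an illustrative sketch only: the names `BITRATE_MBPS` and `bandwidth_saving` are hypothetical, the 2160p row is left out because it is given as a range rather than a single figure, and pairing a low-resolution stream with a ×4 or ×3 upscaled display resolution is an assumption about how the table might be used, not a result reported here.

```python
# Bitrates in Mbps copied from the table: (standard frame rate, high frame rate).
BITRATE_MBPS = {
    "1440p": (16.0, 24.0),
    "1080p": (8.0, 12.0),
    "720p": (5.0, 7.5),
    "480p": (2.5, 4.0),
    "360p": (1.0, 1.5),
}


def bandwidth_saving(sent: str, displayed: str, high_frame_rate: bool = False) -> float:
    """Fraction of bitrate saved by streaming `sent` instead of `displayed`."""
    col = 1 if high_frame_rate else 0
    return 1.0 - BITRATE_MBPS[sent][col] / BITRATE_MBPS[displayed][col]


# 360p -> 1440p corresponds to a x4 upscale, 360p -> 1080p to a x3 upscale.
print(f"x4 (360p sent, 1440p shown): {bandwidth_saving('360p', '1440p'):.1%}")
print(f"x3 (360p sent, 1080p shown): {bandwidth_saving('360p', '1080p'):.1%}")
```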

| slice_type | Name | slice_type | Name |
|---|---|---|---|
| 0 | P (P slice) | 5 | P (P slice) |
| 1 | B (B slice) | 6 | B (B slice) |
| 2 | I (I slice) | 7 | I (I slice) |
| 3 | SP (SP slice) | 8 | SP (SP slice) |
| 4 | SI (SI slice) | 9 | SI (SI slice) |
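The table pairs every value 0 to 4 with the same slice name as that value plus 5, so the name depends only on the value modulo 5. A minimal sketch of that lookup follows; the function name `slice_name` is hypothetical and the code is not taken from any decoder.

```python
SLICE_NAMES = ("P", "B", "I", "SP", "SI")  # order taken from the table


def slice_name(slice_type: int) -> str:
    """Map an H.264 slice_type value (0..9) to its slice name."""
    if not 0 <= slice_type <= 9:
        raise ValueError(f"slice_type out of range: {slice_type}")
    return SLICE_NAMES[slice_type % 5]


for value in (0, 2, 7, 9):
    print(value, slice_name(value))
```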

| mb_type | Name of mb_type |
|---|---|
| 0 | P_L0_16×16 |
| 1 | P_L0_L0_16×8 |
| 2 | P_L0_L0_8×16 |
| 3 | P_8×8 |
| 4 | P_8×8ref0 |
| inferred | P_Skip |
| 5 | I_4×4 |
| 6–29 | I_16×16 |
| 30 | I_PCM |
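The P-slice macroblock types in the table fall into a few contiguous ranges, which suggests a simple range lookup. The sketch below groups them coarsely; the names `classify_p_mb` and `is_intra` are hypothetical, and representing the inferred `P_Skip` case as `None` is an assumption made for illustration, since no value is coded for it.

```python
from typing import Optional


def classify_p_mb(mb_type: Optional[int]) -> str:
    """Group a P-slice mb_type into the coarse classes listed in the table."""
    if mb_type is None:        # nothing is coded; the type is inferred as P_Skip
        return "P_Skip"
    if 0 <= mb_type <= 4:      # inter-predicted partitions
        return "inter"
    if mb_type == 5:
        return "I_4x4"
    if 6 <= mb_type <= 29:
        return "I_16x16"
    if mb_type == 30:
        return "I_PCM"
    raise ValueError(f"mb_type out of range for a P slice: {mb_type}")


def is_intra(mb_type: Optional[int]) -> bool:
    """True when the macroblock is intra coded inside a P slice."""
    return classify_p_mb(mb_type).startswith("I_")


print(classify_p_mb(None), classify_p_mb(3), classify_p_mb(17), is_intra(30))
```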

| Layer | SRCNN |
|---|---|
| 1 | Conv2d (1, 64, kernel_size = 9, padding = 4)/ReLU |
| 2 | Conv2d (64, 32, kernel_size = 5, padding = 2)/ReLU |
| 3 | Conv2d (32, 1, kernel_size = 5, padding = 2)/ReLU |
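Each layer in the SRCNN table uses a padding of (kernel_size − 1)/2. Assuming a stride of 1, which the table does not list, the spatial size is then unchanged from input to output, so any change of resolution has to happen outside these three layers (the original SRCNN interpolates its input first; whether the same is done here is not stated). The sketch below checks this with the standard convolution output-size formula; `conv_out` and `srcnn_out` are hypothetical helper names.

```python
def conv_out(size: int, kernel: int, padding: int, stride: int = 1) -> int:
    """Output length of a convolution along one spatial axis."""
    return (size + 2 * padding - kernel) // stride + 1


# (kernel_size, padding) for the three layers in the table.
SRCNN_LAYERS = [(9, 4), (5, 2), (5, 2)]


def srcnn_out(size: int) -> int:
    """Spatial size after passing through all three layers."""
    for kernel, padding in SRCNN_LAYERS:
        size = conv_out(size, kernel, padding)
    return size


print(srcnn_out(720))
```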

| Layer | Spatio-Temporal ESPCN |
|---|---|
| 1 | Conv2d (Nc*Ns, 64, kernel_size = 3, padding = 1)/LeakyReLU |
| 2 | Conv2d (64, 64, kernel_size = 3, padding = 1)/LeakyReLU |
| 3 | Conv2d (64, 64, kernel_size = 3, padding = 1)/LeakyReLU |
| 4 | Conv2d (64, 32, kernel_size = 3, padding = 1)/LeakyReLU |
| 5 | Conv2d (32, 32, kernel_size = 3, padding = 1)/LeakyReLU |
| 6 | Conv2d (32, 32, kernel_size = 3, padding = 1)/LeakyReLU |
| 7 | Conv2d (32, 20, kernel_size = 3, padding = 1)/LeakyReLU |
| 8 | Conv2d (20, Nc*Scale², kernel_size = 3, padding = 1)/LeakyReLU/PixelShuffle(Scale) |
| 9 | Conv2d (Nc, Nc, kernel_size = 1, padding = 0)/LeakyReLU |
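Layer 8 ends in a pixel shuffle, which needs Nc·Scale² input channels and rearranges them into Nc channels at Scale times the spatial resolution. The following dependency-free sketch shows that rearrangement on nested lists, using the same channel ordering as PyTorch's `PixelShuffle`; the function name `pixel_shuffle` and the `Tensor3` alias are introduced here for illustration and are not the authors' implementation.

```python
from typing import List

Tensor3 = List[List[List[float]]]  # indexed as [channel][row][col]


def pixel_shuffle(x: Tensor3, scale: int) -> Tensor3:
    """Rearrange (C*scale^2, H, W) into (C, H*scale, W*scale)."""
    channels_in, height, width = len(x), len(x[0]), len(x[0][0])
    if channels_in % (scale * scale):
        raise ValueError("channel count must be a multiple of scale^2")
    channels_out = channels_in // (scale * scale)
    out = [[[0.0] * (width * scale) for _ in range(height * scale)]
           for _ in range(channels_out)]
    for c in range(channels_out):
        for h in range(height):
            for w in range(width):
                for i in range(scale):
                    for j in range(scale):
                        # Sub-pixel (i, j) of output channel c comes from this input channel.
                        src = c * scale * scale + i * scale + j
                        out[c][h * scale + i][w * scale + j] = x[src][h][w]
    return out


# One output channel (Nc = 1), scale 2: four 1x1 feature maps become one 2x2 image.
lr_features = [[[1.0]], [[2.0]], [[3.0]], [[4.0]]]
print(pixel_shuffle(lr_features, 2))
```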

| Scale | Vid4 Dataset | SOF-VSR [9] | VESPCN [54] | Bicubic [57] | CILVSR |
|---|---|---|---|---|---|
| ×4 | Calendar | 22.72 | - | 20.26 | 20.88 |
| ×4 | City | 26.73 | - | 25.52 | 24.85 |
| ×4 | Foliage | 25.52 | - | 23.00 | 23.46 |
| ×4 | Walk | 29.92 | - | 25.40 | 26.36 |
| ×4 | Average | 25.97 | 25.35 * | 23.54 | 23.89 |
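The values in this table lie in the range typical of PSNR in dB, although the table itself does not name the metric, so that reading is an assumption. Under it, a minimal pure-Python sketch of PSNR for 8-bit samples is given below, together with a check that the unweighted means of the Bicubic and CILVSR columns agree with the Average row to within rounding. The function names `psnr` and `mean` are hypothetical.

```python
import math
from typing import Sequence


def psnr(reference: Sequence[float], test: Sequence[float], peak: float = 255.0) -> float:
    """Peak signal-to-noise ratio in dB between two equally sized sample lists."""
    if len(reference) != len(test) or not reference:
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum((r - t) ** 2 for r, t in zip(reference, test)) / len(reference)
    return math.inf if mse == 0 else 10.0 * math.log10(peak * peak / mse)


def mean(values: Sequence[float]) -> float:
    return sum(values) / len(values)


# Per-sequence values copied from the table (Calendar, City, Foliage, Walk).
bicubic = [20.26, 25.52, 23.00, 25.40]
cilvsr = [20.88, 24.85, 23.46, 26.36]
print(f"Bicubic mean: {mean(bicubic):.3f}, CILVSR mean: {mean(cilvsr):.3f}")
```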

| Scale | Vid4 Dataset | SOF-VSR [9] | VESPCN [54] | Bicubic [57] | CILVSR |
|---|---|---|---|---|---|
| ×4 | Calendar | 0.74 | - | 0.59 | 0.60 |
| ×4 | City | 0.74 | - | 0.54 | 0.58 |
| ×4 | Foliage | 0.72 | - | 0.51 | 0.56 |
| ×4 | Walk | 0.88 | - | 0.76 | 0.79 |
| ×4 | Average | 0.77 | 0.76 * | 0.60 | 0.63 |
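The values in this table lie between 0 and 1, which is consistent with SSIM, although the metric is not named in the table and this reading is an assumption. As a rough illustration of what such a score measures, the sketch below evaluates the SSIM formula once over all samples. This is a simplification: standard SSIM averages the same expression over local windows, so the numbers it gives are not comparable with the table. The name `global_ssim` is hypothetical; the constants follow the usual choice of (0.01·L)² and (0.03·L)² for peak value L.

```python
from typing import Sequence


def global_ssim(x: Sequence[float], y: Sequence[float], peak: float = 255.0) -> float:
    """SSIM formula evaluated once over all samples (no sliding window)."""
    if len(x) != len(y) or not x:
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(x)
    mu_x, mu_y = sum(x) / n, sum(y) / n
    var_x = sum((a - mu_x) ** 2 for a in x) / n
    var_y = sum((b - mu_y) ** 2 for b in y) / n
    cov = sum((a - mu_x) * (b - mu_y) for a, b in zip(x, y)) / n
    c1, c2 = (0.01 * peak) ** 2, (0.03 * peak) ** 2  # stabilising constants
    numerator = (2 * mu_x * mu_y + c1) * (2 * cov + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator


ramp = [10.0, 50.0, 90.0, 130.0]
print(global_ssim(ramp, ramp), global_ssim(ramp, ramp[::-1]))
```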

| Scale | Metric | SOF-VSR [9] | VESPCN [54] | CILVSR |
|---|---|---|---|---|
| ×4 | Parameter number | 1M | - | 121K |
| ×4 | FLOPs | 112.5G | 14.0G * | 2.8G |
| ×4 | Average inference time per frame (ms) | 240 | - | 40 |
| ×3 | Parameter number | 1.1M | - | 119K |
| ×3 | FLOPs | 205.0G | 24.23G * | 5.0G |
| ×3 | Average inference time per frame (ms) | 337 | - | 50 |
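The parameter counts in this table can be cross-checked against the nine-layer Spatio-Temporal ESPCN layer table by summing weights and biases per convolution. The sketch below does so under assumptions that the tables do not state: one colour channel (Nc = 1), two input frames (Ns = 2), and a bias term on every layer. With those values the totals come to 120,710 for ×4 and 119,443 for ×3, close to the 121K and 119K listed, which is suggestive but does not confirm the settings. The helper names are hypothetical.

```python
def conv_params(c_in: int, c_out: int, kernel: int) -> int:
    """Weights plus biases of one 2-D convolution layer."""
    return c_in * c_out * kernel * kernel + c_out


def espcn_params(nc: int, ns: int, scale: int) -> int:
    """Parameter count of the nine-layer Spatio-Temporal ESPCN table."""
    # Channel widths at the boundaries of layers 1 to 8 (all 3x3 convolutions).
    channels = [nc * ns, 64, 64, 64, 32, 32, 32, 20, nc * scale * scale]
    total = sum(conv_params(a, b, 3) for a, b in zip(channels, channels[1:]))
    return total + conv_params(nc, nc, 1)  # layer 9: 1x1 convolution


# Assumed values, not stated in the tables: Nc = 1, Ns = 2.
for scale in (4, 3):
    print(f"x{scale}: {espcn_params(nc=1, ns=2, scale=scale):,} parameters")

# Ratios taken directly from the x4 rows of the table.
print(f"FLOPs, SOF-VSR / CILVSR: {112.5 / 2.8:.1f}x")
print(f"Frames per second at 40 ms per frame: {1000 / 40:.0f}")
```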


© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Kwon, I.; Li, J.; Prasad, M. Lightweight Video Super-Resolution for Compressed Video. Electronics 2023, 12, 660. https://doi.org/10.3390/electronics12030660
