1. Introduction

In contemporary computing, the majority of tasks necessitate some form of digital signal processing [1]. With the escalating computational capabilities of digital processing systems, there is a concurrent surge in power consumption. This is further exacerbated by the rapid advancements in the field of artificial intelligence. Neuromorphic sampling, or time encoding, is an alternative to traditional digital encoding that transforms an analog signal into a sequence of low-power time-based pulses, often referred to as a spike train. Neuromorphic sampling draws its inspiration from neuroscience and introduces a paradigm shift by significantly reducing power consumption during encoding and transmission [2,3]. Despite these advantages, as of now, there exist no equivalents of digital signal processing operations tailored to neuromorphic sampling. This unexplored territory holds the promise of groundbreaking developments in low-power and efficient signal processing.

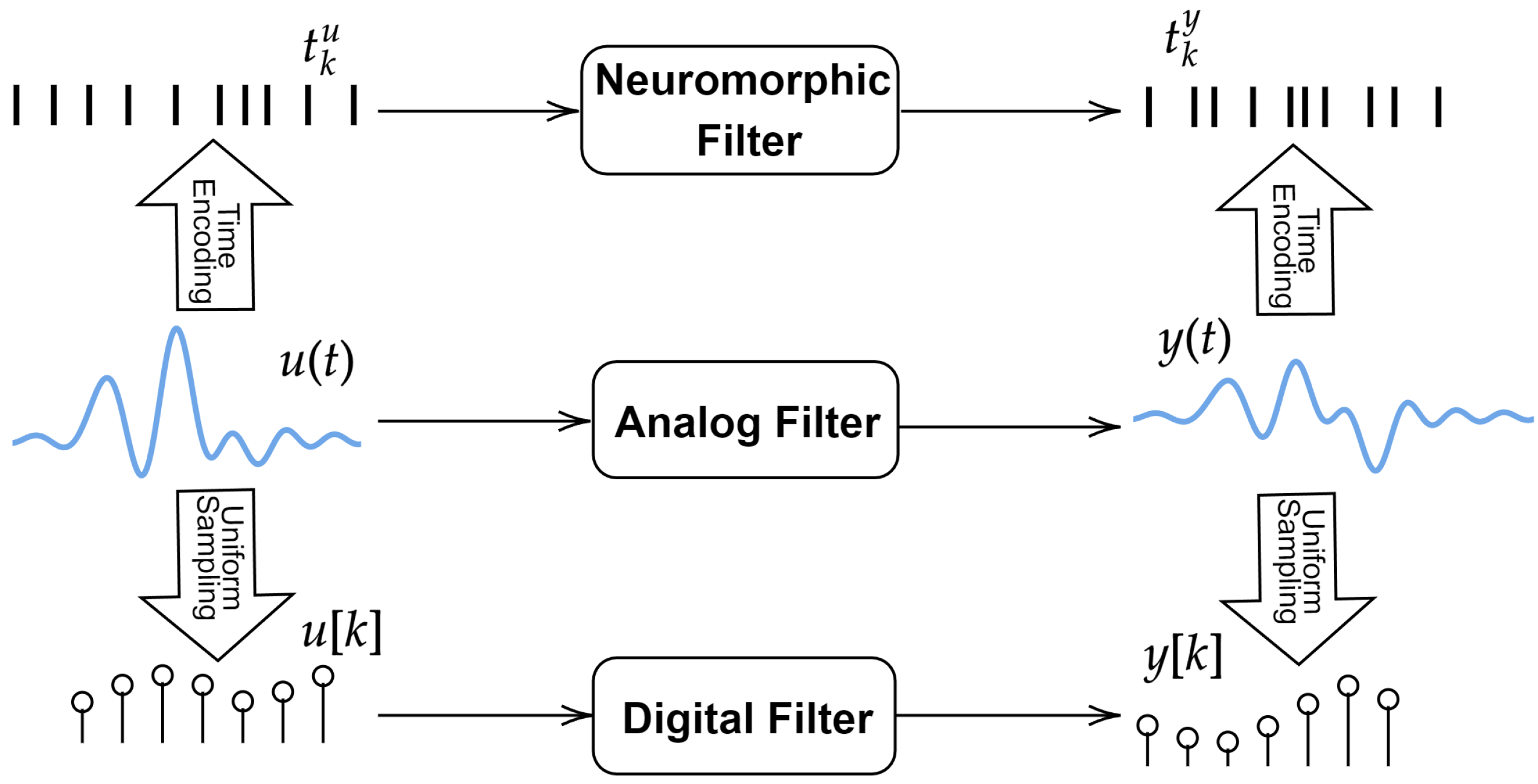

In this paper, we address the problem of filtering a signal using its neuromorphic measurements, thus extending the principle of digital signal filtering to the case of neuromorphic sampling. In posing this problem, we do not seek alternatives to spiking neural networks, but rather theoretically validated analytical approaches. Moreover, the aim is not to replace conventional digital signal processing; the problem is posed under the assumption that the communication protocol emits and receives spike trains [4,5]. In this case, the proposed problem is to perform the mathematical operation of filtering on the analog signals encoded in these spike trains.

In the literature, the concept of spike train filtering predominantly refers to convolving the filter function with a sequence of Diracs centered in the spike times [6]. This is not an operation on the analog signal that resulted in those neuromorphic samples. Moreover, in the context of neuromorphic measurements generated from multidimensional signals, such as those generated by event cameras [7], filtering also refers to performing multidimensional spatial convolution [8,9]. The case of filtering the analog signal via its neuromorphic measurements was proposed in [10], but the process also involves signal reconstruction. A filtering approach of neuromorphic signals without reconstruction was first studied in [11].

Therefore, conventionally, to process the signal underlying a sequence of spike measurements, the signal is recovered in the analog domain, followed by filtering and neuromorphic sampling. There are a number of drawbacks with this approach. First, this method does not exploit the power consumption advantage of neuromorphic measurements. Second, this approach is heavier computationally due to the complexity of signal reconstruction from neuromorphic samples [12,13]. Third, reconstruction is only possible if the input satisfies some restrictive smoothness or sparsity constraints. However, for conventional sampling, the process of digital filtering is independent of the characteristics of the signal that generated the measurements.

In this paper, we derive a direct mapping between the time encoded inputs and outputs of an analog filter. The proposed mapping forms the basis for a practical filtering algorithm of the underlying signal corresponding to some given neuromorphic measurements, without direct access to the signal. We introduce theoretical guarantees and error bounds for the measurements generated with the proposed algorithm. Through numerical simulations, we demonstrate the performance of our method in terms of speed, but also reduced restrictions on the input signal, in comparison with the existing conventional method.

This paper is structured as follows. Section 2 presents a brief review of the time encoding model used in this paper and associated input reconstruction methods. Section 3 introduces the proposed problem. Section 4 describes the proposed filtering method. Numerical results are presented in Section 5. Section 6 presents the concluding remarks.

2. Time Encoding

The time encoding machine (TEM) is a conceptualization of neuromorphic sampling that maps input

into sequence of samples

. The particular TEM considered here is the integrate-and-fire (IF) TEM, which is inspired by neuroscience. Consequently, sequence

is called a spike train, where the spike refers to the firing of an action potential, representing the information transmission method in the mammalian cortex. Previously, the IF model was used for system identification of biological neurons [14,15], to perform machine learning tasks [16,17], but also for input reconstruction [13,18,19,20,21]. The IF model adds input

with bias parameter

b, and subsequently integrates the output to generate a strictly increasing function

. When

crosses threshold

, the integrator is reset and the IF generates output spike time

. The IF TEM is described by the following equations:

Without reducing the generality, it is assumed that

. A common assumption is that the input is bounded by

, such that

. This bound enables derivation of the following density guarantees [2]:

Signal

is, in the general case, not recoverable from

. To ensure it can be reconstructed, we require imposing restrictive assumptions. A common assumption is that

belongs to

, the space of functions bandlimited to

that are also square integrable, i.e.,

. If this assumption is satisfied, then

can be recovered from

if

To offer an intuition on the recovery condition and its link to the Nyquist rate, we note that TEM Equation (1) can be rewritten as

where

denotes the inner product in Hilbert space

of square integrable functions and

is the characteristic function of

. We note that although

, it is not bandlimited, i.e.,

. However, due to the properties of the inner product [22],

where

denotes the projection of

in

. In the case of the Nyquist rate condition, the uniform samples can be described as

The Nyquist rate criterion [23] ensures that

uniquely identify

if

, which is guaranteed by the fact that functions

form a basis in

. The same is not true in the case of time encoding, which is a form of nonuniform sampling [24,25,26], where

do not form a basis. They can, however, form a frame, which is a more general concept [27] and guarantees that

is uniquely determined by

if sequence

is dense enough. Via (2), we can use

as a measure of density of sequence

, yielding Nyquist-like criterion (3).

Input

is then recovered from

as

where

are the midpoints of intervals

and

are the least-squares solution of the following system [22]:

Unlike uniform sampling, the input recovery for an IF TEM is much more complex computationally because, for each new input, functions

have to be computed and System (6) needs to be solved. This becomes very demanding computationally for long sequences

. Alternative recovery approaches are based on optimizing a smoothness-based criterion instead of aiming to uniquely recover the input [28,29]. Moreover, the problem of input recovery was shown to be equivalent to that of system identification of a filter in series with an IF TEM in the case of linear [30,31] and nonlinear filters [32].

The methods presented so far assume the input is bandlimited. Further generalizations were introduced for the case where

is a function in a shift-invariant space [13,19] or in a space with finite rate of innovation [20,21]. However, if

does not belong to one of the classes above, or if it is bandlimited and does not satisfy (3), then the conventional theory does not allow any processing of signal

via its samples

. The same is not true for conventional digital signals, which can be processed even when they are not sampled at the Nyquist rate. We show that some types of processing, such as filtering, remain feasible even when (3) does not hold.
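As an illustration of the IF encoding reviewed in this section, the following Python sketch simulates an ideal integrate-and-fire encoder numerically. It is a minimal sketch under stated assumptions, not the implementation used in this paper: the function name `if_encode`, the threshold name `delta`, the input bound `c`, the time step `dt`, and the sinusoidal test input are all hypothetical choices. The reset is modeled by detecting when the running integral of u(s) + b reaches successive multiples of the threshold, with the first integration starting at t = 0. The last lines check the inter-spike bounds delta/(b + c) <= t_{k+1} - t_k <= delta/(b - c), which follow directly from |u(t)| <= c < b.

```python
import numpy as np

def if_encode(u, b, delta, t_end, dt=1e-4):
    """Ideal integrate-and-fire encoding of a callable input u on [0, t_end).

    Returns the spike times t_1 < t_2 < ... (t_0 = 0 is implied).
    All parameter names are illustrative assumptions.
    """
    t = np.arange(0.0, t_end, dt)
    integrand = u(t) + b  # strictly positive when |u| <= c < b
    # Running trapezoidal integral of u(s) + b from 0 to each grid point.
    cum = np.concatenate(
        ([0.0], np.cumsum(0.5 * (integrand[1:] + integrand[:-1]) * dt))
    )
    # Resetting at each threshold crossing is equivalent to the running
    # integral reaching delta, 2*delta, 3*delta, ...
    n_spikes = int(cum[-1] // delta)
    levels = delta * np.arange(1, n_spikes + 1)
    # cum is strictly increasing, so linear interpolation inverts it.
    return np.interp(levels, cum, t)

b, delta, c = 1.0, 0.05, 0.5
spikes = if_encode(lambda t: c * np.sin(2 * np.pi * t), b, delta, t_end=2.0)
gaps = np.diff(np.concatenate(([0.0], spikes)))
print(gaps.min() >= delta / (b + c) - 1e-6, gaps.max() <= delta / (b - c) + 1e-6)
```

Detecting crossings of the running integral avoids simulating the reset explicitly, which keeps the sketch short; a sample-by-sample loop with an explicit reset would be an equally valid choice.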

3. Problem Statement

Here, we formulate the proposed signal filtering problem as follows. We assume the neuron input is continuous, i.e.,

. To satisfy the neuron encoding requirement in Section 2, we assume the input is bounded, such that

. Furthermore, we assume that the input is absolutely integrable

and square integrable

. Following the same idea as in digital filtering, we do not impose any general conditions on the bandwidth, smoothness, or sparsity of the analog signal in order to compute the filter output.

The filter is assumed to be linear, with impulse response

that is continuous,

, and absolutely integrable,

. The output of the filter then satisfies

where the last inequality assumes that

, which is introduced to ensure that

, which in turn allows

sampling by the same neuron. According to the properties of the convolution operator, we also have

, where

denotes the space of square integrable functions.
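The chain of bounds on the filter output can be traced in one line. The display below is a sketch in assumed notation: $g$ stands for the impulse response and $c$ for the input bound, and both symbol names, as well as the normalization $\|g\|_{L^1} \le 1$, are assumptions made for illustration. The last line is Young's convolution inequality, which gives the square integrability of $y$.

```latex
\begin{align*}
|y(t)| &= \left| \int_{\mathbb{R}} g(s)\, u(t-s)\, ds \right|
        \le \int_{\mathbb{R}} |g(s)|\, |u(t-s)|\, ds
        \le c\, \|g\|_{L^1}
        \le c, \qquad \text{assuming } \|g\|_{L^1} \le 1, \\
\|y\|_{L^2} &= \|g * u\|_{L^2} \le \|g\|_{L^1}\, \|u\|_{L^2} < \infty .
\end{align*}
```

Under this normalization the output obeys the same amplitude bound as the input, which is what allows both signals to be encoded by the same IF neuron.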

We let

and

be the neuromorphic samples of signals

u and

y, respectively, computed using an IF neuron with parameters

. The proposed problem is to compute

knowing

, sampling parameters

and filter

. This problem, illustrated in Figure 1, is inspired by digital signal processing, where a digital filter is applied directly to the samples of a signal. The conventional way to address this problem would be to recover

from

, apply filter

in the analog domain, and subsequently sample output

with the same IF model to obtain

. We refer to this as the indirect method for filtering. The first step of recovery, however, is not possible unless we impose some further restrictive conditions on

such as being bandlimited [22], shift-invariant [13,19], or having a finite rate of innovation [18,20]. Therefore, the proposed problem is not solvable in its full generality using conventional approaches.

However, if we replace neuromorphic sampling by conventional uniform sampling, this problem leads to the widely used operation of digital filtering. The operation itself does not require any special conditions on the analog signal that generated these samples. Therefore, an equivalent of this solution for the case of neuromorphic sampling is highly desirable.

4. The Proposed Neuromorphic Direct Filtering Method

In this section, we describe the proposed direct filtering method. To compute

from

we need to create an analytical link between the integrals of the underlying analog signals

and

due to the integral operators in (1) and (7). To this end, we define the following auxiliary functions:

We note that

U satisfies

. Using Young’s convolution inequality, we obtain

. Using these functions, we derive the equivalent of the t-transform Equations (1) as

Assuming we know

, the target spike train

satisfies

, where

The following result shows that can be uniquely computed using .

Lemma 1 (Exact Output Samples Computation).

Function has a unique fixed point . Furthermore, we let be computed recursively such that is arbitrary andThen, and .

Proof. We assume, by contradiction, that

such that

. It follows that

On the other hand, we know that

due to (7), and thus

is a strictly increasing function of

, which ensures that

has a unique solution. Using (9), we obtain

, which invalidates our initial assumption and proves uniqueness.

From (9), it follows that

The following holds:

and thus

We let

and

. It follows that

Similarly, by choosing

and

, we obtain

and the process continues recursively, which completes the proof via

. □
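The recursion in Lemma 1 can be illustrated numerically. The sketch below assumes that the fixed-point map has the form T_k(t) = (k*delta - Y(t)) / b, where Y(t) is the integral of the filter output y from 0 to t. This form follows from the IF relation when the first integration starts at t = 0, but the map itself, the names `output_spike_time`, `delta`, and `c`, and the test signal y(s) = c sin(s) are assumptions made for illustration and may differ from the exact definitions used in the lemma. Because |y| <= c < b, this map is a contraction with constant c/b, so the iteration converges from an arbitrary starting point.

```python
import numpy as np

def output_spike_time(Y, k, b, delta, t_init=0.0, n_iter=200, tol=1e-12):
    """Iterate t <- (k*delta - Y(t)) / b from an arbitrary starting point.

    Y is the integral of the filter output from 0 to t (assumed known here).
    Stops when two successive iterates differ by less than tol.
    """
    t = t_init
    for _ in range(n_iter):
        t_next = (k * delta - Y(t)) / b
        if abs(t_next - t) < tol:
            return t_next
        t = t_next
    return t

b, delta, c = 1.0, 0.05, 0.5
Y = lambda t: c * (1.0 - np.cos(t))  # integral of y(s) = c*sin(s) over [0, t]
taus = [output_spike_time(Y, k, b, delta) for k in range(1, 6)]

# Each returned time should satisfy Y(tau_k) + b*tau_k = k*delta.
residuals = [abs(Y(tau) + b * tau - k * delta) for k, tau in enumerate(taus, 1)]
print(max(residuals))
```

The starting point is deliberately left at an arbitrary value to mirror the statement of the lemma; in practice, starting from the previous spike time reduces the number of iterations.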

Therefore,

can be computed by solving the fixed-point equation

. This equation requires knowing

, which satisfies

where the last equality uses the variable change

. In reality, however,

is unknown, since it could only be precisely computed using

. Given that we only know

and do not impose any smoothness or sparsity conditions on

, we do not have access to

, but only to its samples

via (9). In the following, we show that

,

and subsequently

can be estimated using a piecewise constant approximation of

at points

. We let

be defined by

where

where

is the piecewise constant interpolant to

U at points

, such that

for

. The next proposition derives some properties of

.

Proposition 1. Function is continuous and satisfies where .

Proof. Using (15), we obtain

which proves (16). It follows that

is a linear combination of continuous functions; thus, it is itself continuous. □

Proposition 1 shows that, unlike and , function and, consequently, also , are fully known from the IF parameters and input samples . The remaining challenge is to show that the fixed point equation can be solved and to provide an error bound for estimating . This challenge is addressed rigorously in the next theorem. Moreover, the result allows computing recursively a sequence of estimations that converges to a vicinity of .

Theorem 1 (Estimating Output Samples from Input Samples). We let be a signal satisfying . Furthermore, we let be the impulse response of a filter satisfying , and let be the filter output in response to input . Signals and , sampled with an IF neuron with parameters , generate values and , respectively. Then, the following hold true:

- (a) such that where satisfies (14).
- (b) We let be a sequence defined recursively as , where . Then,
- (c) For defined above, such that .

Proof. Function

satisfies

where

From (12), the following holds:

Using

,

Unlike in the case of Lemma 1, in this case, applying

recursively does not guarantee the exact computation of

. However, we observe that by picking

, we obtain identical intervals for

t and

:

This observation is very useful as it enables applying Brouwer’s fixed-point theorem which states that for any continuous function

where

is a nonempty compact convex set, there is a point

such that

. Given that

is continuous due to Proposition 1, it follows that

is also continuous. By applying Brouwer’s fixed point theorem for

and

, it follows that

has a fixed point in

. We recall that

, which, using (2), leads to

It follows that , which yields the required result.

We approach this proof using mathematical induction. We select

in (21). Using (23), it follows that

We note that

is always true for

, which yields

This demonstrates that (18) is true for

. To finalize the induction, we assume (18) to be true, and show it is true for

as follows:

Finally, as before, we use the fact that

guarantees

. We also use the fact that

is bounded by (18), which, when substituted in (26), leads to the desired result via (23).

(c) Equation (18) can be expanded into

The required result follows from

via (23). □

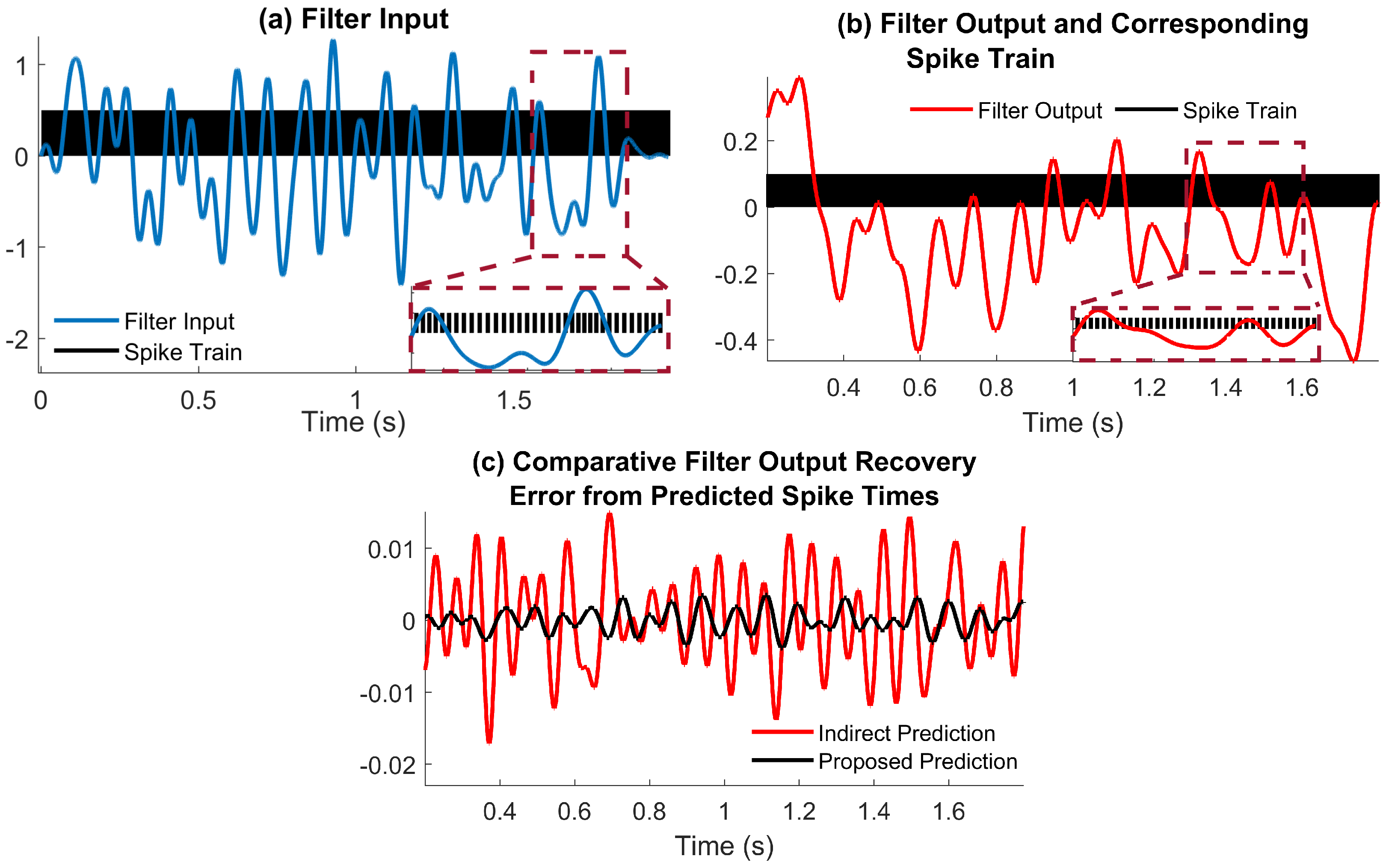

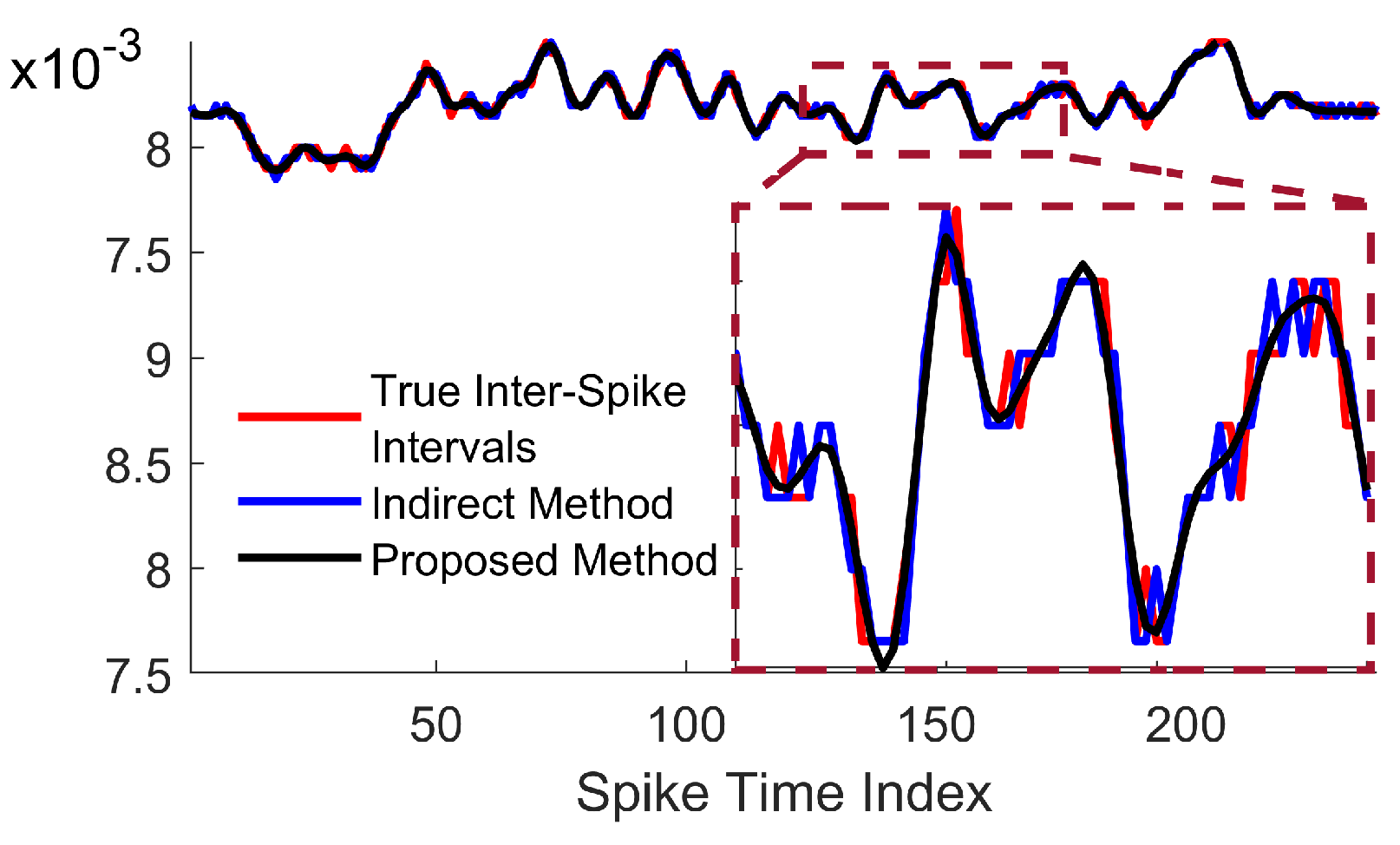

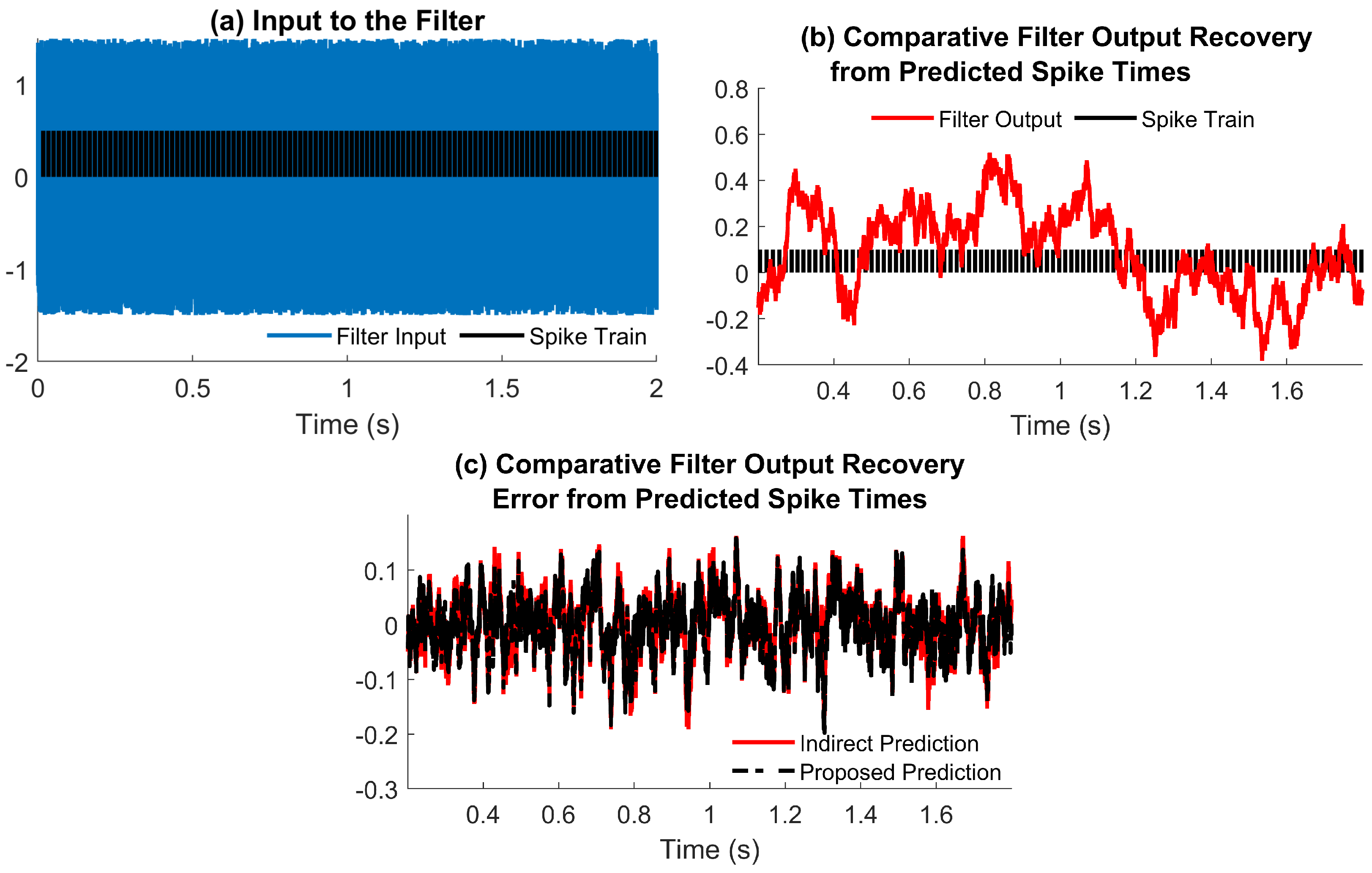

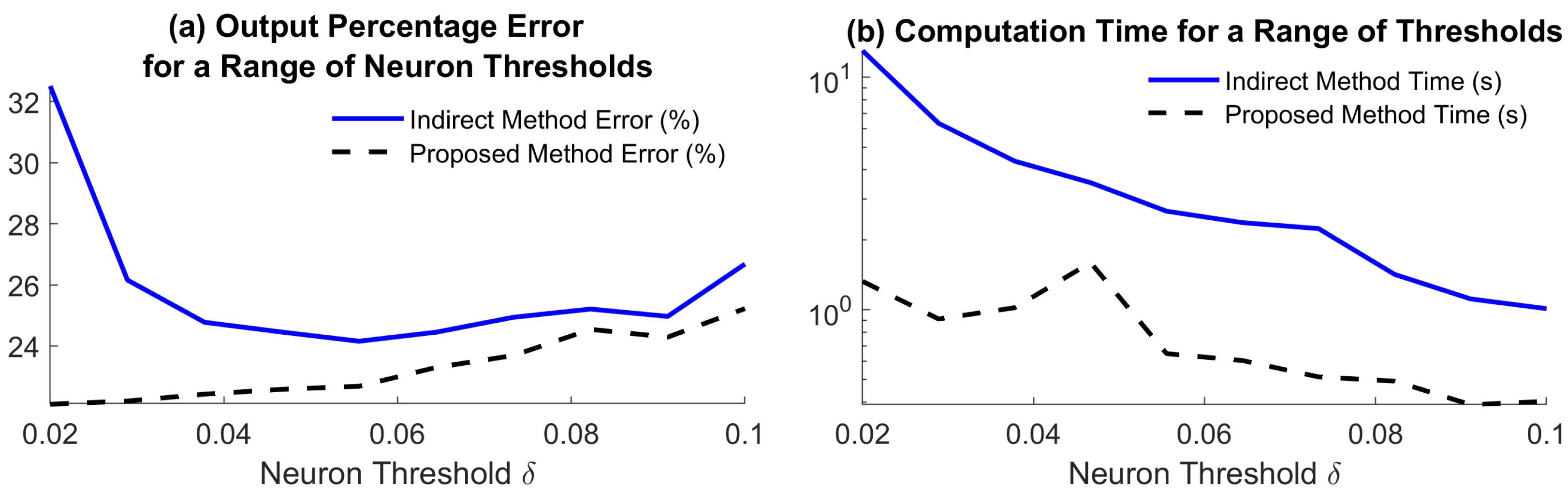

Theorem 1 shows that one can construct sequence that approximates with error . We note that this error is dependent only on neuron parameters, and thus can be made arbitrarily small by changing the IF model. In practice, we use a finite sequence of input measurements to approximate output samples that satisfy . The proposed direct filtering method is summarized in Algorithm 1.

We note that, in practice, convergence was achieved in Algorithm 1 for

iterations in all examples we evaluated. Moreover, we note that, in the proposed manuscript, computing

uses

as an initial condition. When new input data samples become available, Algorithm 1 incorporates them in computing new output data samples, but does not need to re-compute the output samples that are already known. In the next section, we numerically evaluate the proposed algorithm.

| Algorithm 1 Computing the neuromorphic output data samples via the proposed method. |

| Data: , , , b; |

| Result: ; |

| Step 1. Set and . While , |

| Step 1a. ; |

| Step 1b. Compute for , where ,

|

| and |

| Step 1d. ; |

| Step 1e. ; |

| Step 2. Set . |
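The overall idea of the direct method, computing output spike times from input spike times alone, can be sketched in Python as follows. This is a hedged illustration under several assumptions and is not the authors' exact Algorithm 1: the input is taken to be zero for t < 0, the filter is the hypothetical causal exponential g(s) = a*exp(-a*s) with unit L1 norm, the integral U of the input is replaced by its piecewise constant interpolant with values U(t_k) = k*delta - b*t_k, and each output time is obtained by iterating tau <- (k*delta - Y_hat(tau)) / b, warm-started from the previous output time. The names `direct_filter`, `G`, `delta`, and `a` are illustrative.

```python
import numpy as np

def G(x, a):
    """Integral of g(s) = a*exp(-a*s) over [0, x]; zero for x <= 0."""
    return np.where(x > 0, 1.0 - np.exp(-a * np.maximum(x, 0.0)), 0.0)

def direct_filter(t_in, b, delta, a, n_iter=50):
    """Estimate output spike times from input spike times t_in (t_0 = 0 implied).

    Sketch only: assumes a causal input, an exponential filter, and a
    piecewise constant approximation of the integrated input.
    """
    t_in = np.asarray(t_in, dtype=float)
    # Jumps U(t_k) - U(t_{k-1}) = delta - b * (t_k - t_{k-1}).
    jumps = delta - b * np.diff(np.concatenate(([0.0], t_in)))
    # Approximate integrated output: convolution of g with the step-wise U.
    Y_hat = lambda t: float(np.sum(jumps * G(t - t_in, a)))
    taus, tau, k = [], 0.0, 1
    while True:
        # Fixed-point iteration, warm-started from the previous output time.
        for _ in range(n_iter):
            tau = (k * delta - Y_hat(tau)) / b
        if tau > t_in[-1]:
            break  # no input information beyond the last input spike
        taus.append(tau)
        k += 1
    return np.array(taus)
```

A possible usage is `taus = direct_filter(t_in, b=1.0, delta=0.005, a=2.0)`, where `t_in` holds the input spike times. Already computed output times are never revisited, in line with the remark that new input samples only extend the output sequence. With this filter normalization, the residual of each estimated time in the exact IF relation is bounded by c*delta/(b - c), where `c` denotes the assumed input bound.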

6. Conclusions

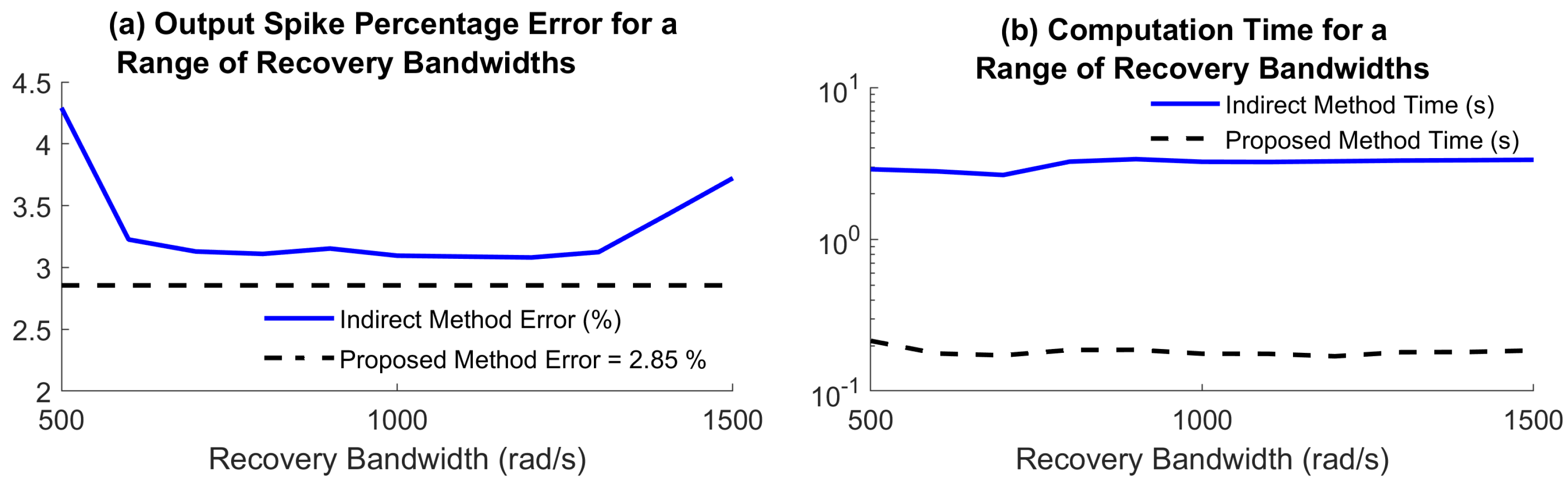

In this work, we introduced a new method to filter an analog signal via its neuromorphic measurements. Unlike existing approaches, the method does not require imposing smoothness-type assumptions, such as a limited bandwidth, on the analog input and filter output. We introduced recovery guarantees, showing that it is possible to approximate the output spike train with arbitrary accuracy for an appropriate choice of the sampling model. We compared the proposed method numerically against the conventional solution to this problem, which involves reconstruction of the analog signal. The results show that the accuracy of the proposed method is comparable to that of the conventional approach. However, the proposed method was faster in all examples, with computing times ranging from 2–3 times to more than one order of magnitude smaller.

Conceptually, the proposed method has the advantage of not depending on the characteristics of the analog signal, and therefore it is not restricted to satisfy any reconstruction guarantees. As demonstrated numerically, the method works well in the case of random inputs, as well as when the input and output of the filter are sampled below Nyquist. Moreover, given the fact that it bypasses input reconstruction, the proposed method is not affected by known artefacts of recovery methods such as boundary errors.

This work can be extended in several directions. First, the theoretical results can be extended to work with higher-order interpolation rather than a piecewise constant, which may lead to better error bounds. Second, the results can be extended for the more general scenarios of multichannel or nonlinear filters. Third, the proposed algorithm could be implemented in hardware and tested in practical communication scenarios. This work has the potential to lead to the development of neuromorphic filters that would facilitate a faster transition towards a power-efficient computing infrastructure.