1. Introduction

Drivers’ attention is a critical determinant of road safety, with inattention contributing significantly to crash risk [1]. The literature clearly establishes that secondary tasks, particularly those involving visual and cognitive distractions, can impair a driver’s ability to respond to dynamic driving environments [1,2,3,4]. Furthermore, the growing integration of in-vehicle infotainment systems, touch-based interfaces, and mobile devices has intensified the challenge of performing secondary tasks while driving [5,6]. Unlike tactile controls, touch-based in-vehicle control systems often require drivers to divert their gaze from the road, thereby redirecting attention away from primary driving tasks and increasing road safety risks. While these trends highlight the urgency of addressing driver distraction, the specific mechanisms by which attention is allocated across different areas of interest (AOIs) during multitasking remain underexplored.

To mitigate driver distraction and improve attention to primary driving tasks, vehicle manufacturers have developed various alert systems [7,8]. These systems have emerged as a promising countermeasure because they redirect attention back to the driving task. Among the available alert modalities, auditory and haptic alerts have been widely investigated for their ability to capture drivers’ attention [7,8]. Auditory alerts leverage sound cues to prompt immediate responses, while haptic alerts provide tactile feedback that can intuitively guide attention back to the driving task.

These alert systems rely on various mechanisms to detect driver inattention. Steering wheel-embedded sensors monitor driver engagement through hand placement and provide real-time attention metrics [9,10]. Another mechanism involves in-cabin cameras that monitor the driver’s head orientation and face direction to detect when visual attention is diverted away from the road [11,12]. Despite the increasing adoption of such technologies, questions remain regarding their effectiveness in realigning driver attention across different AOIs during multitasking scenarios. Furthermore, the comparative impact of different alert conditions on gaze behavior and the sequential patterns of gaze shifts across the road, dashboard, and secondary tasks requires additional examination. Addressing these gaps is essential to enhance road safety by informing the design of more resilient vehicle systems aligned with human factors principles.

Therefore, this study examines how different alert conditions with distinct detection mechanisms and behavioral control logic influence drivers’ sequential allocation of attention while driving among three AOIs (the road, dashboard, and in-vehicle infotainment system). Specifically, we investigate driver attention patterns when engaged in secondary tasks involving both visual and cognitive distractions, under varying alert conditions.

This paper makes three primary contributions. First, it investigates the impact of different alert modalities as system-level interventions on drivers’ sequential allocation of attention across three areas of interest under visual and cognitive distraction. Second, it provides a framework for utilizing sequence analysis to uncover gaze transition patterns and develop insights into how drivers dynamically shift their focus in response to alerts. Third, it quantifies the relative attention allocation to each AOI under varying alert conditions, offering a comprehensive view of how distraction mitigation strategies could influence driver behavior within a Safe Systems framework. In this study, areas of interest (AOIs) are conceptualized as discrete system states, enabling the examination of attention as a dynamic state-transition process within the driver–vehicle system.

The remainder of this paper is structured as follows. We first review eye-tracking research on driver attention and then describe the study design, sample, and experimental apparatus. We then detail our data preparation process, including gaze mapping techniques, definition of areas of interest, data visualization approaches, and sequence analysis methods. Finally, we present our results, followed by discussion and conclusions.

From a Safe Systems perspective, driver attention should be understood as an emergent property of a socio-technical system involving the driver, the vehicle, and the supporting alert mechanisms, rather than solely as an individual responsibility of the driver. Within this framework, alert modalities function as system-level interventions designed to anticipate and accommodate human error by influencing attention dynamics under distracted driving conditions.

2. Literature Review

Driver attention plays a critical role in road safety, with human error accounting for over 90 percent of traffic collisions [13]. As in-vehicle technologies continue to evolve, concerns regarding visual distractions have intensified, making it essential to understand how attention shifts in response to various stimuli. Eye-tracking research has become a valuable tool in this domain, offering insights into where drivers allocate their visual attention and how distractions impact driving performance [14,15,16,17,18]. By examining gaze behavior, researchers have identified patterns of inattention, cognitive load, and response to alerts, contributing to the design of safer vehicles.

Table 1 summarizes the methodologies used in key publications, highlighting the specific reasons for utilizing eye-tracking and the main findings derived from each approach. This structured comparison provides insight into how eye-tracking has been applied to different research objectives and helps identify trends in its use across studies.

2.1. Effects of In-Vehicle Distractions

Several studies have explored how in-vehicle distractions affect driver attention, revealing that secondary task complexity significantly impacts driving performance. Victor et al. [21] analyzed how drivers interact with in-vehicle systems of varying complexity and their effect on driving performance. Their findings showed that complex tasks increase visual distraction, which in turn degrades driving performance and raises safety risks. Liang et al. [28] extended this research by examining visual distractions caused by multifunctional in-car displays in a driving simulator. Their study demonstrated a nonlinear relationship between distraction duration and its effects, showing that while short-term distractions can often be mitigated, prolonged tasks significantly impair hazard perception. Similarly, Narayana et al. [25] found that both simple and complex secondary tasks induce cognitive load, as indicated by pupil dilation during these tasks. This suggests that secondary tasks disrupt attention allocation regardless of their complexity.

2.2. Alert Systems and Intervention Strategies

To address these safety concerns, researchers have investigated the effectiveness of alert modalities, either tactile or auditory, in reducing risks related to distractions. For instance, Ahlström et al. [15] evaluated the gaze-based distraction warning system (AttenD) using tactile alerts and found that it improved visual behavior following warnings by reducing glance durations away from the road. Lee and Hoffman [27] examined collision warning systems and demonstrated that graded warnings improved driver response times, with tactile alerts being more reliable than auditory ones.

Beyond traditional alert systems, recent research has also compared advanced detection and intervention mechanisms aimed at improving driver monitoring and risk mitigation. Nagy et al. [26] introduced the Binarized Area of Interest Tracking (BAIT) algorithm, which significantly enhanced gaze diversion detection, improving real-time driver surveillance. Similarly, Palazzi et al. [29] applied a deep learning model using gaze tracking and successfully modeled driver attention patterns, demonstrating that automated real-time risk estimation in advanced driver assistance systems (ADAS) is feasible. These studies indicate an increasing application of intelligent, real-time interventions that can proactively mitigate driver inattention and enhance road safety.

2.3. Research Gaps and Study Objectives

Despite the wealth of studies on driver attention, no research has comprehensively combined visual distractions and alerts within experimental conditions. Most studies focus either on visual distractions or the efficacy of alerts in isolation. For example, Narayana’s [14] work analyzed cognitive load from visual distractions, while Ahlström’s [15] study targeted alert efficiency, but neither integrated both elements in a unified experimental design. Additionally, while previous research has examined the effects of different alert modalities, such as tactile and auditory, fewer studies have directly compared their effectiveness in aiding attention recovery under constant distractions.

The present study addresses these gaps by simultaneously studying visual distractions and different alert modalities (tactile and auditory) through a driving simulator. By employing sequence analysis, this study investigates how drivers’ attention in different areas of interest transitions in response to distractions and alert modalities. The experiment conducted in this study includes control conditions without alerts, allowing for a comprehensive analysis of attentional shifts. Further, the use of sequence analysis to interpret gaze behavior offers a novel methodological approach compared to existing studies. Lastly, findings from this study will contribute to the design of more effective driver assistance systems that minimize visual demands while improving situational awareness.

3. Experimental Design

This section describes the overall experimental design, participant sample, and simulator-based apparatus used to examine driver attention under different alert conditions.

3.1. Experimental Design and Study Sample

This study employed a within-subject experimental design to examine the impact of different driver engagement warning systems on eye movement patterns under visual distraction. The eye-tracking dataset analyzed in this paper originates from a broader driving simulator experiment, previously reported in Shirani et al. [33]. In the broader study, the visual-distraction condition included 18 participants. However, due to calibration loss and gaze-tracking quality issues commonly encountered in wearable eye-tracking studies, reliable eye-tracking recordings across all alert conditions were obtained from 13 participants, who were therefore included in the present sequence analysis.

The demographic characteristics of the analyzed participants indicate that their ages ranged from 18 to 58 years (mean = 29.1), and the sample included 6 female and 7 male drivers. All participants possessed a valid driver’s license and had normal or corrected-to-normal vision, ensuring reliable eye-tracking measurement. Participants were also active drivers who reported driving regularly. This study was approved by the University of Connecticut Institutional Review Board (IRB Approval No. H19-085). All participants provided informed consent prior to participation and were informed that they could withdraw from the study at any time without penalty. Additional details regarding participant recruitment, study procedures, and the broader experimental design are described in Shirani et al. [33].

Although the number of participants included in the eye-tracking analysis is modest, the within-subject experimental design and high-frequency eye-tracking sampling (100 Hz) generate a large number of fixation events and gaze transitions for each participant. As a result, the sequence-based analyses rely on thousands of gaze observations, substantially increasing analytical sensitivity despite the limited number of participants. Similar sample sizes have been reported in previous studies investigating driver gaze behavior and distraction using driving simulators and eye-tracking methodologies (e.g., [31,34]).

3.2. Experimental Apparatus

Experiments for this study were conducted in a controlled driving simulator lab equipped to allow drivers to engage naturally in designated tasks. A full-scale fixed-base Realtime Technologies™ driving simulator (Realtime Technologies, Inc., Royal Oak, MI, USA) was utilized to collect data on driver behavior and reactions. The simulator features five high-resolution segmented screens, providing an extensive horizontal field of view that closely mimics real-world driving scenarios.

Additionally, a retractable rear projection screen has been integrated, facilitating the use of rear-view mirrors. This versatile system is fully programmable, enabling users to design vehicle dynamic models, including the integration of driving assistance technologies and a variety of scenarios spanning different roadway geometries, traffic control devices, environmental objects, weather conditions, and other road users.

Figure 1 illustrates the interactive environment experienced by the participants in the UConn driving simulator.

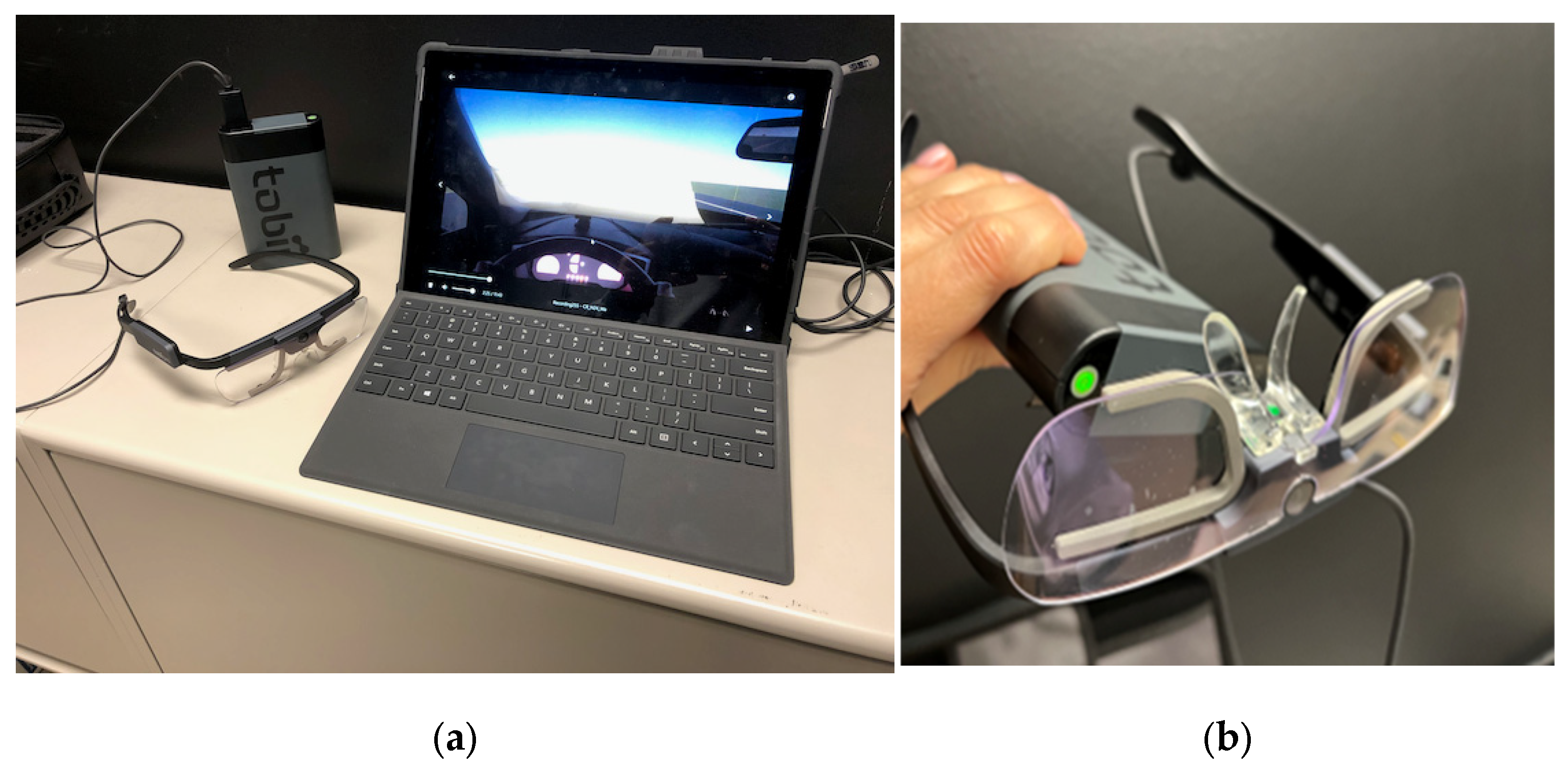

To capture eye-tracking data, we used Tobii Pro Glasses 2 (Tobii Pro AB, Stockholm, Sweden), a lightweight wearable eye tracker designed for real-world and simulated environments. The Tobii Pro Glasses 2 system provides high-accuracy gaze tracking with a sampling rate of 100 Hz, allowing for precise measurement of eye movements across different driving conditions. This device enabled the analysis of visual attention distribution by recording fixations and saccades within predefined Areas of Interest (AOIs). The collected data were processed using iMotions™ software version 9.0, which facilitated the extraction of eye movement metrics and behavioral patterns under different alert conditions.

Figure 2 provides an overview of the Tobii Pro Glasses 2 setup used in the experiment.

3.3. Study Design and Implementation

The steering wheel torque alert was implemented using sensors included in the driving simulator steering wheel. The face-tracking warning was implemented with a facial recognition camera (Figure 3). Both alert systems deliver an auditory warning signal (beep); however, they differ in their detection mechanisms and behavioral requirements for terminating the alert. The Steering-Wheel Alert is triggered when no steering torque input is detected from the steering wheel sensors for more than 5 s. The auditory warning stops once the driver applies steering torque to the wheel. The Face-Tracking Alert is triggered when the facial recognition camera detects that the driver’s face is oriented away from the road for more than 5 s. In this case, the auditory warning continues until the driver redirects their gaze toward the forward roadway.

Although both alerts use the same auditory stimulus, the behavioral requirements for terminating the alert differ. The steering-wheel alert can be deactivated through manual input without necessarily changing gaze direction, whereas the face-tracking alert requires drivers to visually re-engage with the road.
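The trigger and termination logic described above can be sketched as a small timer-based monitor. This is only an illustrative sketch: the class name `AlertMonitor`, the `run` helper, and the fixed sampling step are assumptions made for the example and do not describe the simulator's actual implementation. The 5 s threshold is the only value taken from the text.

```python
from dataclasses import dataclass

THRESHOLD_S = 5.0  # alert threshold stated in the text


@dataclass
class AlertMonitor:
    """Hypothetical monitor: tracks how long the watched behaviour is absent."""
    threshold_s: float = THRESHOLD_S
    absent_s: float = 0.0
    active: bool = False

    def update(self, engaged: bool, dt: float) -> bool:
        """Advance by dt seconds.

        `engaged` is True when the monitored behaviour is present: steering
        torque for the wheel alert, face toward the road for the face alert.
        """
        if engaged:
            # The alert stops as soon as its own condition is satisfied.
            self.absent_s = 0.0
            self.active = False
        else:
            self.absent_s += dt
            if self.absent_s > self.threshold_s:
                self.active = True
        return self.active


def run(samples, dt=0.1):
    """Return the alert state after each sample for a fresh monitor."""
    monitor = AlertMonitor()
    return [monitor.update(engaged, dt) for engaged in samples]
```

Feeding the same drive into two monitors, one with torque samples and one with face-orientation samples, reproduces the asymmetry noted above: a driver who keeps a hand on the wheel while looking at the tablet never triggers the wheel monitor but does trigger the face monitor.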

The 5 s threshold was chosen based on current practices observed in the market, where similar timeframes are utilized for alerts in ADAS-equipped vehicles. This duration ensures that alerts are provided in a timely manner without being overly intrusive or frequent, aligning with real-world scenarios where drivers may need a few moments to recognize and respond to changing road conditions, especially when distracted.

Participants completed three driving sessions, each incorporating different alert conditions: one with a steering wheel alert, one with a face tracking alert, and one with no alert. Throughout all driving scenarios, participants were subjected to visual distraction in the form of a card-matching game presented on a tablet. From the beginning of each scenario, the designated alert system was activated; however, drivers could temporarily remove their hands from the steering wheel (in the case of the steering wheel alert) or turn their face away (in the case of the face alert), depending on the assigned scenario.

Figure 3 also illustrates the setup of the face recognition camera mounted on the dashboard and the tablet positioned for the card matching game, which served as the visual distraction.

Eye-tracking data were collected throughout all driving sessions to examine how drivers’ visual attention distribution and gaze patterns varied under different alert conditions.

Figure 4 presents the eye-tracking data collection setup, showing a participant wearing Tobii Pro Glasses, their view of the simulated driving environment, and engagement with the visual distraction task in the driving simulator.

4. Data Preparation

This section details the procedures used to preprocess and analyze the eye-tracking data collected during the driving simulator study. The workflow included data cleaning, defining areas of interest (AOIs), extracting fixation metrics, and computing gaze transitions to facilitate statistical and sequence analysis.

4.1. Data Cleaning and Synchronization

Raw gaze data files were imported into iMotions software, where missing or noisy data points were filtered using interpolation techniques to improve data quality. The eye-tracking data were time-synchronized with the driving simulator’s event log to align fixation events with the corresponding alert conditions. Any gaze points recorded outside the display range or classified as artifacts due to blinks or head movements were excluded from the analysis to ensure accuracy in gaze tracking.

Minor variations in the length of recorded gaze sequences occurred due to temporary tracking loss, calibration drift, or brief signal interruptions during head movements. These issues are common in wearable eye-tracking studies conducted in dynamic environments such as driving simulators. All participants completed the same experimental protocol and driving tasks; therefore, differences in sequence length reflect data availability rather than differences in the experimental design or participant attrition.

4.2. Definition of Areas of Interest (AOIs)

To systematically analyze attention distribution, three Areas of Interest (AOIs) were defined: Road, Dashboard, and Tablet, as shown in Figure 5. The Road AOI corresponded to the driver’s forward view of the roadway and the surrounding traffic environment, including the rearview mirror.

The Dashboard AOI encompassed the driver’s instrument panel, including the speedometer and vehicle controls. The Tablet AOI represented the in-vehicle infotainment system displaying a logic-based card-matching game. These AOIs were manually annotated by overlaying boundaries on video frames captured from the eye-tracker’s scene camera. The software then mapped gaze points to the predefined AOIs, allowing for the computation of attention metrics.

4.3. Key Frame Enhancement

Gaze mapping was conducted using iMotions, followed by a post-processing stage to correct any inconsistencies where processing gaps were visible on the timeline. This was achieved by employing a key frame image to enhance alignment accuracy (Figure 6).

At this stage, frames for which processing had failed were aligned to the reference image. By linking corresponding objects in the two frames with reference markers, the system was provided with structured guidance to map gaze positions and refine the processing outcome accurately. This approach improved the overall precision of gaze tracking, ensuring continuity and reliability in the data analysis (Figure 7).

Processed data were exported in Excel and CSV formats for further statistical analysis. The dataset included fixation logs, which contained time-stamped records of fixations categorized by AOI. To prepare the data for sequence-based and statistical analyses, fixation events were converted into time-ordered sequences of AOIs. These sequences allowed us to examine transitions between areas of interest and calculate metrics such as dwell time, fixation count, and gaze transitions. Additionally, summary statistics and condition-specific subsets were generated to facilitate comparison across different alert scenarios.

During sequence construction, missing gaze samples resulting from temporary tracking loss were excluded from transition computations and were not treated as a separate state in the sequence analysis. Only valid gaze samples classified into the predefined Areas of Interest (AOIs)—road, dashboard, and tablet—were included in the transition matrices and subsequent Markov chain analysis. Transitions were therefore calculated based on consecutive valid AOI observations, ensuring that the computed gaze transitions represent genuine shifts in visual attention rather than artifacts introduced by missing data.
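A minimal sketch of this preparation step is given below. The record layout `(start_ms, duration_ms, aoi)` and the function names are assumptions made for illustration; they are not the iMotions export format. Samples whose AOI label is missing are dropped rather than kept as a separate state, as described above.

```python
from collections import defaultdict

AOIS = ("Road", "Dashboard", "Tablet")


def build_sequence(fixations):
    """Return the time-ordered AOI labels, dropping samples outside the AOIs.

    `fixations` is an iterable of (start_ms, duration_ms, aoi) tuples; `aoi`
    is None (or any label not in AOIS) when tracking was lost.
    """
    ordered = sorted(fixations, key=lambda f: f[0])
    return [aoi for _, _, aoi in ordered if aoi in AOIS]


def dwell_and_count(fixations):
    """Total dwell time (ms) and fixation count per AOI."""
    dwell = defaultdict(float)
    count = defaultdict(int)
    for _, duration_ms, aoi in fixations:
        if aoi in AOIS:
            dwell[aoi] += duration_ms
            count[aoi] += 1
    return dict(dwell), dict(count)
```

Because invalid samples are removed before the sequence is formed, two valid fixations separated by a tracking gap become consecutive observations, which matches the treatment of transitions described in the text.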

4.4. Sequence Construction and Length Consistency

The sequence-based analysis relies on transition probabilities calculated from the relative frequency of gaze shifts between AOIs rather than the absolute duration of individual sequences. Consequently, small differences in sequence length do not materially affect the resulting transition matrices or Markov chain estimates. Because all participants experienced the same experimental conditions and produced sufficiently long gaze sequences sampled at 100 Hz, the dataset generated thousands of gaze observations per participant. This high temporal resolution ensured robust estimation of transition probabilities despite minor variations in sequence length across sessions.

5. Methodology

This section summarizes the analytical methods used to quantify gaze behavior and model attention transitions across AOIs under each alert condition.

5.1. Sequence Analysis

Fixation metrics were extracted to quantify drivers’ visual attention patterns. Fixation duration, defined as the cumulative time spent fixating on each AOI per trial, was computed to determine the extent of visual focus on each area. Fixation count, indicating the number of discrete fixations per AOI, was also analyzed to understand how frequently participants directed their attention to specific AOIs. This study exclusively focused on fixation data rather than saccade data due to the nature of the research objectives. Fixations provide a direct measure of sustained attention on a particular AOI, which is crucial for understanding how drivers distribute their visual focus during distracted driving scenarios. In contrast, saccadic movements primarily capture rapid gaze shifts between AOIs, which may not necessarily indicate attention allocation. Since the study aims to assess the effectiveness of different alert conditions in redirecting and maintaining attention, fixation-based analysis offers a more relevant measure of visual engagement. As such, sequence analysis was conducted to identify common patterns in gaze transitions. Sequence analysis allows for the identification of frequent transition sequences between AOIs and their durations. The probability of a particular gaze sequence occurring is determined by:

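$$P(S) = \prod_{t=2}^{T} P(s_t \mid s_{t-1})$$

where $P(S)$ represents the probability of sequence $S = (s_1, \ldots, s_T)$ and $P(s_t \mid s_{t-1})$ represents the probability of transitioning from AOI $s_{t-1}$ to AOI $s_t$ at time $t$.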
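As an illustration of how such a sequence probability can be evaluated, the sketch below multiplies first-order transition probabilities along a sequence, working in log space to avoid numerical underflow on long sequences. The function name and the nested-dictionary layout of the transition probabilities are assumptions for this example, and the result is conditional on the first AOI in the sequence.

```python
import math


def sequence_log_probability(sequence, transition):
    """Sum of log transition probabilities along the sequence.

    `transition[a][b]` is the probability of moving from AOI a to AOI b.
    The result is conditional on the first AOI of the sequence.
    """
    log_p = 0.0
    for prev, nxt in zip(sequence, sequence[1:]):
        p = transition[prev][nxt]
        if p == 0.0:
            return float("-inf")  # the sequence contains an impossible step
        log_p += math.log(p)
    return log_p
```

Exponentiating the returned value recovers the probability itself; for sequences with thousands of fixations the log form is usually the more practical quantity to compare.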

5.2. Computation of Gaze Transition Matrices

Transition matrices were generated to examine how participants shifted their attention between AOIs under different alert conditions. The transition probability between AOIs was calculated using the formula:

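$$P_{ij} = \frac{N_{ij}}{\sum_{k} N_{ik}}$$

where $P_{ij}$ represents the probability of transitioning from AOI $i$ to AOI $j$, $N_{ij}$ represents the number of gaze transitions from AOI $i$ to AOI $j$, and $\sum_{k} N_{ik}$ is the total number of gaze transitions from AOI $i$ to all AOIs. These matrices provided insights into how often participants moved their gaze between different AOIs and revealed patterns of visual attention transitions under varying alert conditions. Hence, a full transition matrix $P$ can be defined as:

$$P = \begin{bmatrix} P_{11} & P_{12} & \cdots & P_{1n} \\ P_{21} & P_{22} & \cdots & P_{2n} \\ \vdots & \vdots & \ddots & \vdots \\ P_{n1} & P_{n2} & \cdots & P_{nn} \end{bmatrix}$$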
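The row-normalised estimate described in this subsection can be sketched as follows. The function name and the dictionary-of-dictionaries output are assumptions for illustration. Transitions are counted within each sequence only, so pooling several sessions does not create a spurious transition between the end of one session and the start of the next.

```python
from collections import Counter

AOIS = ("Road", "Dashboard", "Tablet")


def transition_matrix(sequences, states=AOIS):
    """Row-normalised transition probabilities pooled over sequences.

    `sequences` is an iterable of AOI-label lists, one per session.
    """
    counts = Counter()
    for seq in sequences:
        counts.update(zip(seq, seq[1:]))  # consecutive (from, to) pairs
    matrix = {}
    for i in states:
        row_total = sum(counts[(i, j)] for j in states)
        # A state with no outgoing counts gets a row of zeros.
        matrix[i] = {
            j: (counts[(i, j)] / row_total if row_total else 0.0)
            for j in states
        }
    return matrix
```

Self-transitions (for example Tablet to Tablet) are counted like any other pair, so each non-empty row sums to one.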

5.3. Markov Chain Model and Steady-State Probability

Markov chain models were employed to analyze the gaze transitions and calculate steady-state probabilities. Each observation in the transition sequence corresponds to a single fixation as detected by the eye tracker. The transition matrix was estimated directly from consecutive fixation-to-fixation transitions, including self-transitions where the tracker recorded successive fixations on the same AOI. This approach preserves the natural temporal structure of gaze behavior without requiring artificial time discretization. First-order Markov chains are a widely used framework for eye-tracking transition data [

35]. The memoryless assumption implies that the probability of transitioning to a given AOI depends only on the current AOI, not on previous gaze locations. While higher-order dependencies may exist, the first-order model provides a parsimonious summary of gaze switching dynamics and is consistent with prior driving eye-tracking studies. The transition matrices were used to compute steady-state probabilities, representing the long-term distribution of gaze behavior across different AOIs under each alert condition.

Steady-state probabilities were computed to understand the proportion of time drivers spent gazing at each AOI over an extended period, regardless of their initial gaze location. These probabilities were derived by solving the Markov chain’s equilibrium equations, ensuring that the distribution of gaze behavior remained stable over time. This analysis provides insights into the effectiveness of each alert type in maintaining drivers’ attention on the road. The steady-state probabilities were compared across the three alert conditions to assess which alert type best mitigates sustained distractions.
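One way to solve the equilibrium equations is power iteration, sketched below. This is an assumed approach for illustration, since the text does not state which solver was used; the function name and input layout are likewise hypothetical. The method repeatedly applies the transition matrix to a starting distribution until it stops changing, which converges when the chain is irreducible and aperiodic.

```python
def steady_state(matrix, tol=1e-12, max_iter=10_000):
    """Stationary distribution pi satisfying pi = pi P, by power iteration.

    `matrix[i][j]` is the probability of moving from state i to state j; each
    row must sum to 1.
    """
    states = list(matrix)
    pi = {s: 1.0 / len(states) for s in states}  # start from a uniform guess
    for _ in range(max_iter):
        new = {j: sum(pi[i] * matrix[i][j] for i in states) for j in states}
        if max(abs(new[s] - pi[s]) for s in states) < tol:
            return new
        pi = new
    raise RuntimeError("power iteration did not converge")
```

For a two-state chain with rows (0.9, 0.1) and (0.5, 0.5), the stationary distribution is (5/6, 1/6), which the routine recovers from any starting point.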

To quantify uncertainty around the steady-state estimates, a participant-level nonparametric bootstrap (B = 2000 replicates) was performed. In each replicate, the 13 participants were resampled with replacement, and the transition matrix and the resulting steady-state distribution were recomputed. The 95% bootstrap confidence intervals are reported for all steady-state estimates.
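The resampling scheme can be sketched generically as below. The function name, the simple percentile rule for the interval, and the fixed seed are assumptions for this example; the text does not say which interval construction was used. The key point is that whole participants are resampled, so all fixations from one driver stay together within a replicate.

```python
import random


def bootstrap_ci(per_participant, statistic, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap interval, resampling whole participants.

    `per_participant` holds one entry per participant (for example, that
    participant's AOI sequences); `statistic` maps such a list to a number.
    """
    rng = random.Random(seed)
    n = len(per_participant)
    estimates = []
    for _ in range(n_boot):
        # Draw n participants with replacement, keeping each one's data intact.
        sample = [per_participant[rng.randrange(n)] for _ in range(n)]
        estimates.append(statistic(sample))
    estimates.sort()
    lower = estimates[int((alpha / 2) * n_boot)]
    upper = estimates[min(n_boot - 1, int((1 - alpha / 2) * n_boot))]
    return lower, upper
```

In this setting, `statistic` would pool the resampled participants' sequences, estimate the transition matrix, and return one steady-state probability, such as the long-run share of fixations on the road.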

5.4. Entropy Analysis

To quantify the randomness in gaze transitions, Shannon entropy was calculated for each alert condition. Shannon entropy measures the uncertainty in gaze transitions, with higher values indicating more random and unpredictable gaze behavior, while lower values reflect more structured and predictable gaze patterns. The entropy values were computed using the formula:

$$H = -\sum_{i} p_i \log p_i$$

where $p_i$ represents the probability of transitioning to a given AOI $i$. Entropy values were then compared across alert conditions to determine whether certain alerts introduced more structured or more random gaze behaviors.
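A short sketch of the entropy calculation follows. The base-2 logarithm (entropy in bits) is an assumption, since the logarithm base is not stated here, and the function accepts any probability vector, for example one row of a transition matrix or a steady-state distribution.

```python
import math


def shannon_entropy(probabilities, base=2.0):
    """Shannon entropy of a discrete distribution; zero terms contribute 0."""
    return -sum(p * math.log(p, base) for p in probabilities if p > 0)
```

With three AOIs, the value ranges from 0 (gaze always moves to the same AOI) to log2(3), about 1.585 bits, when all three destinations are equally likely.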

5.5. Logistic Regression Analysis with Random Effects

To assess the influence of different alert conditions on drivers’ gaze transitions toward the road, we employed a mixed-effects logistic regression model. This approach accounts for the binary nature of the outcome variable, namely whether the next gaze fixation is on the road (coded as 1) or not (coded as 0), and accommodates the repeated-measures design by incorporating random effects for individual participants.

The objective of this analysis is to understand transitions between AOIs. Self-transitions were therefore aggregated, and the corresponding fixation duration was calculated as the sum of the individual fixation durations within each run of self-transitions. Transitions originating from the road (current AOI = Road) were excluded by design. Alert type, log-transformed fixation duration, and current AOI were used as fixed effects in the model. The mixed-effects logistic regression model was specified as follows:

$$\operatorname{logit}\big(P(Y_{ij} = 1)\big) = \beta_0 + \beta_1\,\text{WheelAlert}_{ij} + \beta_2\,\text{FaceAlert}_{ij} + \beta_3\,\text{CurrentAOI}_{ij} + \beta_4 \log(\text{FixDur}_{ij}) + u_j$$

where

$Y_{ij}$ is the binary outcome indicating whether the next fixation for participant $j$ at observation $i$ is on the road.

$\beta_0$ is the intercept representing the log-odds of transitioning to the road under the No Alert condition.

$\beta_1$, $\beta_2$, $\beta_3$, and $\beta_4$ are the fixed-effect coefficients for the Wheel Alert condition, the Face Alert condition, the current AOI, and the log of fixation duration in the current AOI, respectively.

$u_j$ represents the random effect for participant $j$, assumed to be normally distributed with mean zero and variance $\sigma_u^2$, capturing individual-specific deviations from the overall intercept.

The No Alert condition serves as the reference category, allowing for the comparison of each alert type’s effect relative to the absence of alerts. Model parameters were estimated using the maximum likelihood estimation method, appropriate for mixed-effects models with binary outcomes. This approach provides unbiased estimates of both fixed and random effects, accounting for the nested structure of the data.
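The data preparation behind this model can be sketched as below: runs of consecutive fixations on the same AOI are collapsed, their durations are summed, and one row is produced for each run that starts away from the road. The function name, the row fields, the millisecond unit, and the natural logarithm are assumptions for illustration. Fitting the mixed-effects model itself requires a dedicated statistics package and is not shown.

```python
import math
from itertools import groupby


def to_model_rows(fixations):
    """Collapse runs of same-AOI fixations and build one row per run.

    `fixations` is a time-ordered list of (aoi, duration_ms) pairs. Each row
    describes a run that starts off the road and is followed by another run.
    """
    runs = [
        (aoi, sum(d for _, d in group))
        for aoi, group in groupby(fixations, key=lambda f: f[0])
    ]
    rows = []
    for (aoi, total_ms), (next_aoi, _) in zip(runs, runs[1:]):
        if aoi == "Road":
            continue  # transitions originating from the road are excluded
        rows.append({
            "current_aoi": aoi,
            "log_duration": math.log(total_ms),
            "to_road": 1 if next_aoi == "Road" else 0,
        })
    return rows
```

Each row would then be joined with the alert condition and a participant identifier before model fitting; the final run in a session produces no row because it has no following transition.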

6. Results

Heatmaps were generated to provide a visual representation of gaze density across the driving scenario. Heatmaps used color gradients to depict fixation density, with warmer colors such as red and yellow indicating areas of high visual attention, while cooler colors such as blue and green represented areas with less frequent gaze activity. Heatmap analyses were conducted for each alert condition to assess how gaze allocation varied across AOIs and to illustrate differences in drivers’ visual behavior based on the alert type.

Figure 8 shows a sample fixation-duration heatmap illustrating driver gaze distribution during a distracted driving scenario in the driving simulator.

Thirteen participants completed driving experiments under three alert conditions (No Alert, Steering Wheel Alert, and Face Alert), resulting in 39 sequences (one per condition per participant). Due to differences in the duration of driving sessions across participants, not all drivers experienced the alerts for equal periods, resulting in missing values within some sequences. These missing observations reflect variations in overall driving time but do not affect the subsequent sequence analyses.

Figure 9 presents the state sequences for all participants across different alert conditions. The plot shows notable individual differences in driving behavior: for example, participant 9 rarely transitioned to the road, spending most of the time fixating on the tablet, whereas participant 10 appeared to be the least distracted by the presented visual distractions. Additionally, participant 8’s sequence contains a substantial number of missing values, indicating a considerably shorter driving session relative to other participants. Nonetheless, all available observations were retained for analysis, as the variable session lengths do not compromise the integrity of the sequence analysis methods employed in the subsequent sections.

Transition matrices are calculated separately for each alert type to understand the nature of the transition of states in the sequences (Table 2). The matrices capture the probability of transitioning from one AOI to another, providing initial insights into how alert types can affect gaze patterns. Across all combinations, the highest probabilities are observed for self-transitions, showing that once a driver fixates on an area of interest, they tend to remain in the same area of interest. For instance, self-transitions for the Road range from 77.8% to 78.7%, and those for the Tablet range from 90.2% to 92.8% under all alert conditions. Self-transitions for the Dashboard are lower but still substantial, ranging from 54.9% to 56.7%.

Focusing on the transitions to the road, subtle differences are observed across the alert types. Transitions to the road from the dashboard are relatively stable across conditions, at 29.3% under No Alert, 29.6% under Wheel Alert, and 29.8% under Face Alert. More notable differences emerged in transitions from the Tablet to the Road: Face Alert showed the highest probability at 8.2%, compared to 5.9% under No Alert and 4.7% under Wheel Alert. Thus, while the overall transition patterns are dominated by the tendency to remain in the same AOI, the data indicate that Face Alerts are associated with increased transitions toward the Road, particularly when the current fixation is on the Tablet. Conversely, Wheel Alerts are associated with a lower probability of transitioning from the Tablet to the Road relative to both No Alert and Face Alert conditions, suggesting that Wheel Alerts may be less effective at redirecting gaze away from the Tablet.

To assess the statistical significance of transitions to the road from other areas of interest, a logit model with random effects was developed to account for the repeated measures from participants across all experimental conditions (Table 3). The sequence data were transformed into a binary dependent variable, where 1 indicates that the next state in the sequence is the Road and 0 otherwise.
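The transformation described above might look like the following sketch. It assumes that only off-road states (Dashboard or Tablet) enter the model as the current state, and it adds a hypothetical `steps_off_road` counter as a stand-in for time spent away from the road. The function name, field names, and toy sequence are all illustrative.

```python
def to_logit_rows(sequence, participant, alert):
    """Turn one AOI sequence into rows with a binary `to_road` outcome.

    Assumption: rows are created only when the current state is off-road.
    `steps_off_road` counts consecutive off-road samples (hypothetical covariate).
    """
    rows = []
    steps_off_road = 0
    for current, nxt in zip(sequence[:-1], sequence[1:]):
        if current is None or current == "Road":
            steps_off_road = 0  # reset the off-road run
            continue
        steps_off_road += 1
        if nxt is None:
            continue  # outcome unknown, so no row is emitted
        rows.append({
            "participant": participant,
            "alert": alert,
            "source_aoi": current,
            "steps_off_road": steps_off_road,
            "to_road": int(nxt == "Road"),
        })
    return rows


# Hypothetical toy sequence, not study data.
rows = to_logit_rows(["Tablet", "Tablet", "Road", "Dashboard", "Road"],
                     participant=1, alert="Face")
for r in rows:
    print(r)
```

The resulting rows can then be passed to any mixed-effects logistic regression routine with a random intercept per participant.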

Both alert types significantly affected the likelihood of returning to the Road relative to the No Alert baseline. Face Alerts were associated with 72% higher odds of transitioning back to the Road (OR = 1.716, 95% CI: [1.36, 2.16]), while Wheel Alerts were associated with 37% lower odds (OR = 0.63, 95% CI: [0.51, 0.79]). Longer fixation durations away from the road were associated with modestly higher odds of returning to the Road (OR = 1.19, 95% CI: [1.10, 1.30]). The source AOI (Dash vs. Tablet) did not significantly affect the probability of returning to the Road (OR = 1.09, 95% CI: [0.88, 1.35]), suggesting that alert-driven gaze reallocation operates similarly regardless of which AOI currently holds the attention. The random intercept variance for participant was 1.514 (standard deviation = 1.23), indicating substantial between-driver variability in baseline gaze transition to the road.
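The percentages quoted above follow directly from the odds ratios, and the standard deviation follows from the random intercept variance. The short check below reproduces that arithmetic from the reported values. The helper name `percent_change_in_odds` is an assumption introduced for illustration, and the last line is an added interpretation on the odds scale rather than a result reported in the study.

```python
import math


def percent_change_in_odds(odds_ratio):
    """Percent change in odds implied by an odds ratio."""
    return (odds_ratio - 1.0) * 100.0


face_pct = percent_change_in_odds(1.716)   # Face Alert vs. No Alert
wheel_pct = percent_change_in_odds(0.63)   # Wheel Alert vs. No Alert
intercept_sd = math.sqrt(1.514)            # SD of the participant random intercept

# Illustrative reading: odds multiplier for a driver one SD above the average intercept.
one_sd_multiplier = math.exp(intercept_sd)

print(round(face_pct, 1), round(wheel_pct, 1), round(intercept_sd, 2))
```

On the odds scale, a one-standard-deviation shift in the random intercept is larger than either alert effect, which is one way to read the "substantial between-driver variability" noted above.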

Thus far, the transition matrices and the logit model have characterized short-term transitions. The next objective is to study the long-term behavior of drivers’ gaze patterns under different alert conditions. This behavior is examined by visualizing the state distributions and using Markov chain analysis for steady-state probabilities. The state distribution plots in Figure 10 show the tablet as the dominant area of interest for all participants across all alert conditions. Given that participants were solving a card-matching game on the tablet, this distribution is expected. However, a close comparison of the plots reveals some increase in the time spent looking at the road under the face alert compared to the no-alert and wheel-alert conditions for most participants. Additionally, differences are observed in gaze patterns at the individual level.

Next, to quantify the long-term gaze patterns, each alert-specific transition matrix is transformed into a Markov chain to compute the steady-state (stationary) probabilities. Steady-state probabilities were computed to characterize the relative distribution of gaze across AOIs implied by the observed transition dynamics, rather than as predictions of a true long-term behavioral equilibrium. This analysis provides a complementary perspective on attention allocation, revealing whether certain alerts might cause drivers to fixate more on the road (or other AOIs) in the long run.
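For a row-stochastic transition matrix P, the stationary distribution π satisfies πP = π with entries summing to one. The sketch below solves that system by least squares. The matrix `P_example` is hypothetical (chosen only to resemble the magnitude of the reported self-transitions) and is not taken from Table 2. The function name `steady_state` is likewise an assumption.

```python
import numpy as np


def steady_state(P):
    """Stationary distribution of a row-stochastic matrix P.

    Solves pi @ P = pi together with sum(pi) = 1 as one least-squares system.
    """
    n = P.shape[0]
    A = np.vstack([P.T - np.eye(n), np.ones(n)])
    b = np.zeros(n + 1)
    b[-1] = 1.0  # normalization constraint
    pi, *_ = np.linalg.lstsq(A, b, rcond=None)
    return pi


# Hypothetical matrix; rows and columns are ordered Road, Dashboard, Tablet.
P_example = np.array([
    [0.78, 0.07, 0.15],
    [0.29, 0.56, 0.15],
    [0.06, 0.02, 0.92],
])
pi = steady_state(P_example)
print(np.round(pi, 3))
```

Because the result depends only on the estimated transition probabilities, it summarizes the gaze shares implied by those dynamics, in line with the interpretation given above.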

Figure 11 shows the steady-state probabilities under the three alert conditions. Face Alert was associated with the highest share of time focused on the Road at 34.0% (95% CI: [22.9%, 45.9%]), followed by No Alert at 29.7% (CI: [18.3%, 42.5%]) and Wheel Alert at 26.7% (CI: [16.9%, 37.2%]). Conversely, the highest Tablet fixation time occurred under Wheel Alert (63.2%, CI: [51.4%, 73.6%]), with No Alert and Face Alert at slightly lower levels. The Dashboard consistently received the least attention across all conditions, with steady-state probabilities of 9.6% under No Alert, 8.4% under Face Alert, and 10.1% under Wheel Alert. Pairwise bootstrap comparisons revealed that individual steady-state differences are not statistically significant at the 95% level.
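The text does not describe how the bootstrap was carried out. One plausible scheme, sketched below purely as an assumption, resamples participants with replacement, pools their transition counts, and recomputes the steady state for each resample. The sequences are simulated from a hypothetical matrix, with states coded 0 = Road, 1 = Dashboard, 2 = Tablet. All function names are illustrative.

```python
import numpy as np


def counts_matrix(seq, n_states=3):
    """Transition counts for one integer-coded sequence."""
    C = np.zeros((n_states, n_states))
    for a, b in zip(seq[:-1], seq[1:]):
        C[a, b] += 1
    return C


def steady_state(P):
    n = P.shape[0]
    A = np.vstack([P.T - np.eye(n), np.ones(n)])
    b = np.zeros(n + 1)
    b[-1] = 1.0
    return np.linalg.lstsq(A, b, rcond=None)[0]


def bootstrap_ci(sequences, n_boot=500, level=0.95, seed=0):
    """Percentile CI for steady-state shares, resampling participants."""
    rng = np.random.default_rng(seed)
    per_participant = [counts_matrix(s) for s in sequences]
    draws = []
    for _ in range(n_boot):
        picks = rng.integers(0, len(per_participant), size=len(per_participant))
        # Tiny constant guards against empty rows in a resample.
        C = sum(per_participant[i] for i in picks) + 1e-9
        P = C / C.sum(axis=1, keepdims=True)
        draws.append(steady_state(P))
    alpha = (1 - level) / 2
    return np.quantile(np.array(draws), [alpha, 1 - alpha], axis=0)


def simulate(P, length, rng, start=2):
    """Simulate one sequence from a transition matrix (toy data only)."""
    seq = [start]
    for _ in range(length - 1):
        seq.append(int(rng.choice(P.shape[0], p=P[seq[-1]])))
    return seq


# Hypothetical matrix and simulated participants, not study data.
P_true = np.array([[0.78, 0.07, 0.15],
                   [0.29, 0.56, 0.15],
                   [0.06, 0.02, 0.92]])
sim_rng = np.random.default_rng(1)
toy_sequences = [simulate(P_true, 300, sim_rng) for _ in range(8)]
lower, upper = bootstrap_ci(toy_sequences)
print(np.round(lower, 3), np.round(upper, 3))
```

Resampling at the participant level keeps each driver's observations together, which tends to give wide intervals when the number of participants is small.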

Importantly, the directional patterns observed in the steady-state analysis are consistent with the binary logit model results (Table 3), where Face Alerts significantly increased the odds of returning gaze to the Road and Wheel Alerts significantly decreased them. The convergence of findings across the transition-level model and the steady-state analysis supports the robustness of the results.

The analysis presented thus far highlights the dynamic nature of state transitions in sequences and the long-term gaze behavior of drivers under various alert conditions. Now, the entropy analysis illustrates the predictability of gaze patterns across these different alert situations. A higher entropy value reflects more random gaze patterns, while lower values indicate more structured gaze patterns.

Figure 12 depicts the entropy distribution for individual sequences, showing a moderate entropy of approximately 0.7 for most sequences. This suggests that the gaze patterns are neither entirely random nor fully deterministic. The mean entropy values for No Alert, Face Alert, and Wheel Alert are 0.636, 0.681, and 0.684, respectively. The results indicate that drivers exhibit the most random gaze patterns during the Wheel Alert condition and the least random during No Alert. The transition probabilities presented earlier support these entropy values: drivers tend to fixate on the tablet for longer durations when there is No Alert, resulting in more uniform gaze patterns. The Face Alert, whose mean entropy is intermediate and close to that of the Wheel Alert, combines moderately variable transitions with a greater focus on the road.
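The text does not state which entropy definition was used. One common choice for state sequences, shown here as an assumption, is the Shannon entropy of the within-sequence state distribution, normalized by its maximum so that values lie between 0 and 1. The function name `normalized_entropy` and the toy sequence are illustrative.

```python
import math
from collections import Counter


def normalized_entropy(sequence, n_states=3):
    """Shannon entropy of the state shares in one sequence, scaled to [0, 1].

    0 means all time was spent in a single AOI; 1 means time was split
    evenly across all `n_states` AOIs. None entries (missing) are ignored.
    """
    observed = [s for s in sequence if s is not None]
    total = len(observed)
    if total == 0:
        return float("nan")  # entropy is undefined for an empty sequence
    shares = [c / total for c in Counter(observed).values()]
    h = -sum(p * math.log(p) for p in shares)
    return h / math.log(n_states)


# Hypothetical toy sequence, not study data.
toy_sequence = ["Tablet"] * 6 + ["Road"] * 3 + ["Dashboard"] * 1
print(round(normalized_entropy(toy_sequence), 3))
```

Under this definition, a sequence dominated by one AOI scores low, so longer uninterrupted tablet fixation would push the value down.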

7. Discussion

As shown in the transition matrices and sequence visualizations, drivers’ gaze tends to remain within the same area of interest for extended periods, resulting in a high prevalence of self-transitions. Nevertheless, subtle differences are observable across alert conditions. In particular, transitions from the dashboard and tablet toward the road occur slightly more frequently under the face alert condition. The mixed-effects logit model similarly indicates that face alerts are associated with an increased likelihood of transitioning to the road compared with the no-alert condition. However, the magnitude of these differences is relatively modest in absolute terms. Therefore, the results should be interpreted as indicating small directional shifts in gaze allocation patterns rather than large behavioral changes in driver attention. The steering-wheel alert appears less effective than the no-alert condition, which may initially seem counterintuitive. However, this outcome can be explained by the operational logic of the alert system. The steering-wheel alert is deactivated once torque is applied to the steering wheel, whereas the face-tracking alert continues until the driver redirects their gaze toward the roadway.

It is important to note that both alerts used the same auditory warning stimulus and differed primarily in their detection logic and behavioral requirements for deactivating the alert. Specifically, the face-tracking alert required drivers to redirect their gaze toward the roadway to silence the warning, whereas the steering-wheel alert could be deactivated simply by applying steering torque. This difference in behavioral requirements likely contributed to the slightly higher probability of transitions toward the road observed under the face-alert condition.

Under the distraction condition, where the driver is engaged with the tablet, they can temporarily keep their hands on the wheel to avoid the alert while maintaining their gaze on the tablet. Hence, it can be inferred that alerts requiring a specific corrective action from drivers are more effective at recapturing attention under distraction than passive alerts that can be silenced without looking back at the road. These findings are important for understanding how different alert modalities can modulate driver behavior. This result aligns with findings by Ahlström et al. [15], who reported that gaze-based warnings significantly reduced glance durations away from the road, effectively redirecting visual attention toward critical driving tasks. Similarly, Lee et al. [27] observed improved driver responses and reduced distraction when using auditory and tactile alerts designed to actively recapture drivers’ visual attention. These parallel findings reinforce the effectiveness of active, attention-compelling alert systems in mitigating visual distractions. Interpreted through a Safe Systems lens, these findings reinforce the notion that human error and distraction are expected characteristics of real-world driving rather than exceptional failures. The observed effectiveness of face-tracking alerts highlights the importance of system designs that actively compensate for predictable lapses in driver attention, rather than relying on drivers to self-correct under distraction. By embedding attention-support mechanisms directly into the driver–vehicle interaction loop, such systems shift part of the safety responsibility from the individual driver to the broader transportation system.

The long-term gaze analysis based on Markov chains describes how driver attention is allocated over extended periods. The largest share of the driver’s attention is directed toward the tablet under all conditions, which is expected because the experiment requires drivers to engage with the tablet while playing a card-matching game. Within this context, the face-alert condition shows a slightly higher proportion of time spent looking at the road (34.0%) compared with the no-alert (29.7%) and wheel-alert (26.7%) conditions. While this difference suggests that the face alert may encourage drivers to periodically redirect their gaze toward the roadway, the absolute magnitude of the effect is relatively small and should be interpreted as a modest shift in attention allocation rather than a large behavioral change. It is also important to note that the experiment was conducted in a driving simulator with a continuous secondary task that intentionally encouraged sustained tablet engagement. As a result, the observed gaze patterns reflect a controlled experimental context and may not fully represent the variability of attention allocation in naturalistic driving environments. As mentioned earlier, the face alert compels drivers to look back at the road to stop the alert, making it effective in redirecting drivers’ attention to the road, not only during short transitions but throughout the overall driving period. These results are consistent with previous research by Victor et al. [21], who demonstrated that auditory alerts effectively redirected drivers’ visual attention toward the road, improving situational awareness and reducing distraction-related errors.

The results of the entropy analysis show moderate entropy under all alert conditions, indicating that the gaze patterns are neither fully deterministic nor entirely random. The highest entropy value, observed for the wheel alert, suggests the greatest randomness. This indicates unpredictable driver behavior under this alert, as some drivers, or even the same driver in certain situations, may not direct their gaze to the road but simply react by placing their hands on the steering wheel. At other times, they may respond by shifting their gaze in addition to placing their hands on the steering wheel. Under no-alert conditions, drivers are aware that they are not being assisted by any alerts, which might lead to a more predictable gaze pattern. The moderate values of entropy for the face alert reflect a reduction in randomness and an improvement in the predictability of the gaze shift toward the road. The observed entropy patterns align with findings by Cvahte Ojsteršek and Topolšek [30], who noted that real-time alert interventions could effectively stabilize attention distribution, thereby balancing structured attention and flexibility in visual behavior under distracted driving scenarios.

Despite these insights, several limitations should be considered when interpreting the results. One limitation of this study is the relatively small number of participants included in the eye-tracking analysis. Although the dataset originates from a larger experimental study, only a subset of participants had reliable eye-tracking recordings due to calibration and gaze-tracking quality issues that are common in wearable eye-tracking experiments. While the within-subject experimental design and high-frequency gaze sampling produced a large number of fixation observations and gaze transitions per participant, future research involving larger participant samples would help further validate the observed gaze transition patterns and strengthen the generalizability of the findings.

In addition to sample-related limitations, the analytical framework also relies on several modeling assumptions. The Markov chain analysis assumes that gaze transitions follow a first-order process, meaning the probability of the next fixation location depends only on the current AOI. While this is a standard assumption in eye-tracking research and is consistent with prior driving studies, higher-order dependencies (e.g., the influence of two or more preceding fixations) may exist. Additionally, the stationarity assumption that transition probabilities remain constant throughout the driving task may not fully hold if driver behavior changes due to fatigue, habituation, or task structure. The bootstrap confidence intervals for pairwise steady-state comparisons were wide and did not reach statistical significance. However, the directional patterns were consistent with the transition-level binary logistic regression model, which had sufficient statistical power to detect significant effects of alert type. Future research with larger samples could explore higher-order Markov models or time-varying transition probabilities to further refine the characterization of gaze dynamics.

8. Conclusions

This study employed a comprehensive sequence analysis approach to investigate how different alert conditions influence drivers’ gaze transitions and attention allocation under conditions of visual distraction. Utilizing a driving simulator paired with eye-tracking technology, this research provided critical insights into the effectiveness of face-tracking auditory alerts compared to steering wheel torque alerts.

The results demonstrate that face-tracking alerts significantly enhance drivers’ attentional redirection to the road, resulting in increased gaze transitions toward road-related areas of interest. In contrast, steering wheel torque alerts showed limited effectiveness, sometimes performing worse than scenarios with no alerts at all. This counterintuitive outcome underscores the importance of alert design: active alerts that compel immediate gaze reorientation to the road prove considerably more effective than passive alerts that drivers can circumvent.

Markov chain modeling indicated that face alerts increase steady-state focus on the road by approximately 4.3 percentage points relative to no alert (34.0% versus 29.7%), although this difference did not reach statistical significance in pairwise bootstrap comparisons. This pattern points to their potential to sustain safer driving behaviors over prolonged periods of distraction. Additionally, entropy analyses indicated a balance between randomness and stability in attentional transitions under face alerts, suggesting that sustained visual distraction can be mitigated without inducing excessive unpredictability.

From a Safe Systems perspective, the results of this study underscore the value of shifting safety responsibility from individual drivers toward system-level design solutions that proactively support human limitations. Alert modalities that require explicit visual re-engagement with the roadway demonstrate greater potential to mitigate distraction-related risks than passive mechanisms that can be bypassed without meaningful attention recovery. These findings provide practical insights for the design of future vehicle safety technologies and contribute to the broader Safe Systems discourse by illustrating how attention-support interventions can be integrated into the driver–vehicle system to reduce crash risk under real-world distracted driving conditions.

Author Contributions

Conceptualization, N.S., Y.S., E.J. and K.W.; methodology, M.J., Y.S., N.S., E.O., A.B. and P.H.; software, E.O. and N.S.; validation, N.S. and E.O.; formal analysis, M.J., N.S. and E.O.; investigation, N.S., Y.S., P.H. and E.O.; resources E.J. and K.W.; data curation N.S. and E.O.; writing—original draft preparation, N.S., E.O., M.J. and P.H.; writing—review and editing, N.S., E.O., M.J., P.H., Y.S., A.B., K.W. and E.J.; visualization, N.S., E.O. and M.J.; supervision, N.S., K.W. and E.J.; project administration, E.J.; funding acquisition E.J. and K.W. All authors have read and agreed to the published version of the manuscript.

Funding

This study was funded by Consumer Reports, Inc. under the project titled UConn Driving Simulator Laboratory Pilot Project for Consumer Reports: Evaluating Driver Alert Systems Under Vehicle Automation, Project No. AG190725.

Institutional Review Board Statement

The study was conducted in accordance with the Declaration of Helsinki and was approved by the University of Connecticut Institutional Review Board (IRB), Protocol #H19-085, approved on 1 February 2020.

Informed Consent Statement

Informed consent for participation was obtained from all subjects involved in the study.

Data Availability Statement

The data supporting the findings of this study are not publicly available due to privacy and ethical restrictions and are not available for sharing.

Conflicts of Interest

The authors declare no conflicts of interest.

References

- Oviedo-Trespalacios, O.; Truelove, V.; Watson, B.; Hinton, J.A. The impact of road advertising signs on driver behaviour and implications for road safety: A critical systematic review. Transp. Res. Part A Policy Pract. 2019, 122, 85–98.

- Overton, T.L.; Rives, T.E.; Hecht, C.; Shafi, S.; Gandhi, R.R. Distracted driving: Prevalence, problems, and prevention. Int. J. Inj. Control Saf. Promot. 2015, 22, 187–192.

- Stavrinos, D.; Jones, J.L.; Garner, A.A.; Griffin, R.; Franklin, C.A.; Ball, D.; Welburn, S.C.; Ball, K.K.; Sisiopiku, V.P.; Fine, P.R. Impact of distracted driving on safety and traffic flow. Accid. Anal. Prev. 2013, 61, 63–70.

- Wilson, F.A.; Stimpson, J.P. Trends in fatalities from distracted driving in the United States, 1999 to 2008. Am. J. Public Health 2010, 100, 2213–2219.

- Wang, Y.; Reimer, B.; Dobres, J.; Mehler, B. The sensitivity of different methodologies for characterizing drivers’ gaze concentration under increased cognitive demand. Transp. Res. Part F Traffic Psychol. Behav. 2014, 26, 227–237.

- Abbasi, E.; Li, Y.; Liu, Y.; Zhao, R. Effect of human–machine interface infotainment systems and automated vehicles on driver distraction. Hum. Factors Ergon. Manuf. Serv. Ind. 2024, 34, 558–571.

- Faisal, I.; Farooq, O.; Malik, S.; Alam, M.T.; Mondal, S.; Anowar, T.I. A smart vehicle alert system with intelligent vehicle safety. In Proceedings of the International Conference on Signal Processing, Computation, Electronics, Power and Telecommunication (IConSCEPT), Karaikal, India, 4–5 July 2024; pp. 199–204.

- Zaki, N.I.M.; Husin, S.M.C.; Husain, M.K.A.; Ma’aram, A.; Marmin, S.N.A.; Adanan, A.F.; Ahmad, Y.; Abu Kassim, K.A. Auditory alert for in-vehicle safety technologies: A review. J. Soc. Automot. Eng. Malays. 2021, 5, 88–102.

- Borghi, G.; Frigieri, E.; Vezzani, R.; Cucchiara, R. Hands on the wheel: A dataset for driver hand detection and tracking. In Proceedings of the IEEE International Conference on Automatic Face and Gesture Recognition (FG 2018), Xi’an, China; IEEE: Piscataway, NJ, USA, 2018; pp. 564–570.

- Lu, J.; Zheng, X.; Tang, L.; Zhang, T.; Sheng, Q.Z.; Wang, C.; Jin, J.; Yu, S.; Zhou, W. Can steering wheel detect your driving fatigue? IEEE Trans. Veh. Technol. 2021, 70, 5537–5550.

- Perrett, T.; Mirmehdi, M.; Dias, E. Visual monitoring of driver and passenger control panel interactions. IEEE Trans. Intell. Transp. Syst. 2017, 18, 321–331.

- Palo, P.; Nayak, S.; Modhugu, D.N.R.K.; Gupta, K.; Uttarkabat, S. Holistic driver monitoring: A multi-task approach for in-cabin driver attention evaluation through multi-camera data. In Proceedings of the IEEE Intelligent Vehicles Symposium (IV); IEEE: Piscataway, NJ, USA, 2024; pp. 1361–1366.

- Singh, S. Critical Reasons for Crashes Investigated in the National Motor Vehicle Crash Causation Survey; Report No. DOT HS 812 115; National Highway Traffic Safety Administration (NHTSA): Washington, DC, USA, 2015. Available online: https://crashstats.nhtsa.dot.gov/Api/Public/ViewPublication/812115 (accessed on 17 March 2026).

- Narayana, P. Eye movements Behaviors in a Driving Simulator During Simple and Complex Distractions. Master’s Thesis, San Jose State University, San Jose, CA, USA, 2023.

- Ahlström, C.; Kircher, K.; Nyström, M.; Wolfe, B. Eye tracking in driver attention research—How gaze data interpretations influence what we learn. Front. Neuroergon. 2021, 2, 778043.

- Engström, J.; Markkula, G.; Victor, T.; Merat, N. Effects of cognitive load on driving performance: The cognitive control hypothesis. Hum. Factors 2017, 59, 734–764.

- Ronca, V.; Brambati, F.; Napoletano, L.; Marx, C.; Trösterer, S.; Vozzi, A.; Di Flumeri, G. A novel EEG-based assessment of distraction in simulated driving under different road and traffic conditions. Brain Sci. 2024, 14, 193.

- Giorgi, A.; Borghini, G.; Colaiuda, F.; Menicocci, S.; Ronca, V.; Vozzi, A.; Di Flumeri, G. Driving fatigue onset and visual attention: An electroencephalography-driven analysis of ocular behavior in a driving simulation task. Behav. Sci. 2024, 14, 1090.

- Chen, W.; Sawaragi, T.; Hiraoka, T. Comparing driver reaction and mental workload of visual and auditory take-over requests from the perspective of driver characteristics and eye-tracking metrics. Transp. Res. Part F Traffic Psychol. Behav. 2023, 97, 396–410.

- Underwood, G.; Chapman, P.; Brocklehurst, N.; Underwood, J.; Crundall, D. Visual attention while driving: Sequences of eye fixations made by experienced and novice drivers. Ergonomics 2003, 46, 629–646.

- Victor, T.W.; Harbluk, J.L.; Engström, J.A. Sensitivity of eye-movement measures to in-vehicle task difficulty. Transp. Res. Part F Traffic Psychol. Behav. 2005, 8, 167–190.

- Stutts, J.C.; Reinfurt, D.W.; Staplin, L.; Rodgman, E.A. The Role of Driver Distraction in Traffic Crashes; AAA Foundation for Traffic Safety: Washington, DC, USA, 2001.

- Khan, M.Q.; Lee, S. Gaze and eye tracking: Techniques and applications in ADAS. Sensors 2019, 19, 5540.

- Cvahte Ojsteršek, T.; Topolšek, D. Eye tracking use in researching driver distraction: A scientometric and qualitative literature review approach. J. Eye Mov. Res. 2019, 12, 5.

- Narayana, P.; Attar, N. Analyzing the impact of distractions on driver attention: Insights from eye movement behaviors in a driving simulator. In Proceedings of the IEEE International Conference on Robotic Computing (IRC); IEEE: Piscataway, NJ, USA, 2023.

- Nagy, V.; Földesi, P.; Istenes, G. Area of interest tracking techniques for driving scenarios focusing on visual distraction detection. Appl. Sci. 2024, 14, 3838.

- Lee, J.D.; Hoffman, J.D.; Hayes, E. Collision warning design to mitigate driver distraction. In Proceedings of the Conference on Human Factors in Computing Systems (CHI 2004), Vienna, Austria, 24–29 April 2004; pp. 24–29.

- Liang, Z.; Wang, Y.; Qian, C.; Wang, Y.; Zhao, C.; Du, H.; Deng, J.; Li, X.; He, Y. A driving simulator study to examine the impact of visual distraction duration from in-vehicle displays: Driving performance, detection response, and mental workload. Electronics 2024, 13, 2718.

- Palazzi, A.; Abati, D.; Calderara, S.; Solera, F.; Cucchiara, R. Predicting the driver’s focus of attention: The DR(eye)VE project. IEEE Trans. Pattern Anal. Mach. Intell. 2018, 40, 1101–1114.

- Cvahte Ojsteršek, T.; Topolšek, D. Influence of drivers’ visual and cognitive attention on their perception of changes in the traffic environment. Eur. Transp. Res. Rev. 2019, 11, 45.

- Le, A.S.; Suzuki, T.; Aoki, H. Evaluating driver cognitive distraction by eye tracking: From simulator to driving. Transp. Res. Interdiscip. Perspect. 2019, 3, 100087.

- Ahlstrom, C.; Kircher, K.; Kircher, A. A gaze-based driver distraction warning system and its effect on visual behavior. IEEE Trans. Intell. Transp. Syst. 2013, 14, 965–973.

- Shirani, N.; Song, Y.; Wang, K.; Jackson, E. Evaluation of driver reaction to disengagement of advanced driver assistance system with different warning systems while driving under various distractions. Transp. Res. Rec. 2024, 2678, 1614–1628.

- Alharasees, O.; Kale, U. Comparative analysis of drivers’ vital parameters across varied driving scenarios and experience levels: A pilot study. J. Transp. Health 2025, 44, 102088.

- Krejtz, K.; Duchowski, A.T.; Szmidt, T.; Krejtz, I.; González Perilli, F.; Pires, A.C.; Vilaró, A.; Villalobos, N. Gaze transition entropy. ACM Trans. Appl. Percept. 2015, 13, 4.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.