1. Introduction

In the field of rehabilitation, increasing effort is devoted every year to creating therapies that actively involve the subject in the process [1]. In addition, there is interest in standardizing these therapies based on biomarkers that indicate the subject's level of involvement [2] and the performance of the therapy. Non-invasive brain activity recording technology has proven to be effective in this field [3], providing a path between the subject's intention and motor action [4]. A system that connects cerebral activity to machine action is called a brain-machine interface (BMI). Depending on the potential that is used, a BMI can have a more rehabilitative or a more assistive role. However, the two purposes can be combined [5].

BMIs have been shown to decode brain motor patterns related to the upper and lower limbs with high accuracy in a large number of users, for both motor imagination (MI) [6] and intention [7]. BMIs can also decode up to four motor tasks with deep-learning techniques in which non-specific models are applied [8]. However, making the control depend on the motor task can be cognitively very demanding. Models of relaxation vs. motor imagination have shown promising results [9], but the performance still has room for improvement. Both paradigms have demonstrated their ability to operate in real time for upper and lower limbs: MI for the upper limb [10], MI for the lower limb [9], intention for the upper limb [11] and intention for the lower limb [12]. The intention and MI paradigms impose different mental workloads, which could be useful depending on the application. In rehabilitation, ensuring that the subject is actively engaged in the MI could help to enhance neuroplasticity mechanisms [9]. In an assistive environment, however, MI can have high cognitive demands, unlike intention, which reflects the way people make decisions (spontaneously) and seems a natural paradigm for device control.

For the voluntary intention paradigms, two potentials are related to the preparation of the task: the movement-related cortical potential (MRCP) [13] and event-related desynchronization and synchronization (ERD/ERS) [14]. The first potential is associated with low-frequency changes (0.05–3 Hz) and the second with changes of power in the alpha (8–12 Hz) and beta (13–30 Hz) bands. MRCP provides timing information about the stages of movement planning and execution, but it is very sensitive to noise and hard to detect in single-trial analysis [13]. ERD/ERS, on the other hand, does not provide precise timing information about the different stages of movement planning, preparation and execution, but it has been shown to be detectable from single-trial EEG [15] and is less affected by noise than MRCP [16].

Regarding the works in the literature, four types of analysis are usually carried out. In order of increasing difficulty, and therefore of decreasing abundance in the literature, these are: offline studies with specific windows, pseudo-online analysis with a window-by-window sweep of the entire signal, real-time analysis in open-loop control without feedback, and real-time closed-loop analysis with feedback.

For the intention paradigm, several aspects have to be taken into account:

Many studies present pseudo-online analyses with filtering steps that cannot be applied in real time or are unrealistic in practice.

The model can be created with instants both before and after the real movement, or only with instants before it. Regardless of how the model is created, the verification paradigm may be different. For example, in [15,17], a predictive verification of the intention is applied, but the model is created with instants before and after the movement.

The level of cognition can be totally spontaneous, planned, conscious or evoked (the subject is forced to turn when seeing a mark).

Among the offline studies with turn direction, the work carried out in [18] applied a large concatenated feature vector and trained the model with a k-nearest neighbors classifier. It obtained an offline accuracy of over 90.0% in most of the subjects. However, the methodology is not suitable for pseudo-online analysis.

Regarding the works that present pseudo-online analysis, the most abundant are those that propose start-walk and stop-walk paradigms. In [19], the model was built with signal from before and after the change, and the processing was based on the Stockwell transform. The evaluation was not predictive: the pseudo-online start and stop detection used a four-second window, with data from two seconds before the movement to two seconds after it. They achieved an average of 80.5 ± 14.6% true positives (TP) with 4.43 ± 3.67 false positives per minute (FP/min) in start–walk detection and 84.1 ± 14.6% TP with 4.7 ± 5.3 FP/min in stop–walk detection.

In [15], in contrast, a model was created with signal from before and after the movement, and the pseudo-online evaluation was predictive. Four data frames of each trial were taken as the baseline to calculate the ERD features. Nevertheless, the preprocessing methodology cannot be applied online, as artifact subspace reconstruction (ASR) and independent component analysis (ICA) were applied. The results were 78.3 ± 8.6% and 6.5 ± 0.8 FP/min for the start–walk paradigm, and 81.4 ± 7.2% and 9.2 ± 1.8 FP/min for the stop–walk paradigm. The baseline was reported as a gap for real-time operation [15].

The literature shows two open-loop turn models with an exoskeleton, using the MRCP paradigm and evoked potentials. In [20], an H∞ filter algorithm was applied to mitigate ocular artifacts, achieving an accuracy of 98.17% for the turns with an impaired patient. In [21], ASR was applied as a preprocessing technique to distinguish between four tasks, including turns to both sides. Two subjects participated in the study with different accuracies: able-bodied (right 84.5% and left 78.5%) and impaired (right 72.1% and left 66.5%).

Regarding speed paradigms, in [22], the model was trained with planned, conscious changes. The pseudo-online model performed better with a window that included data before and after the change than with a window of previous data only. In [12], a model with only previous data was used for both training and testing. The results were 42.0% TP and 9.0 FP/min for the pseudo-online analysis. In [17], a model was trained with data before and after the motion. However, only data after the motion were used in the evaluation. The preprocessing consisted of principal component analysis (PCA) and a Laplacian spatial filter, and the processing of a synchrosqueezed wavelet transform with baseline correction. Results for one subject reached 81.9 ± 7.4% with 7.7 ± 0.8 FP/min. In addition, a real-time test of 12 repetitions was completed with a 75% TP ratio and 1.5 FP/min. However, the EEG classifier was combined with an inertial sensor classifier working together with the predictive BMI.

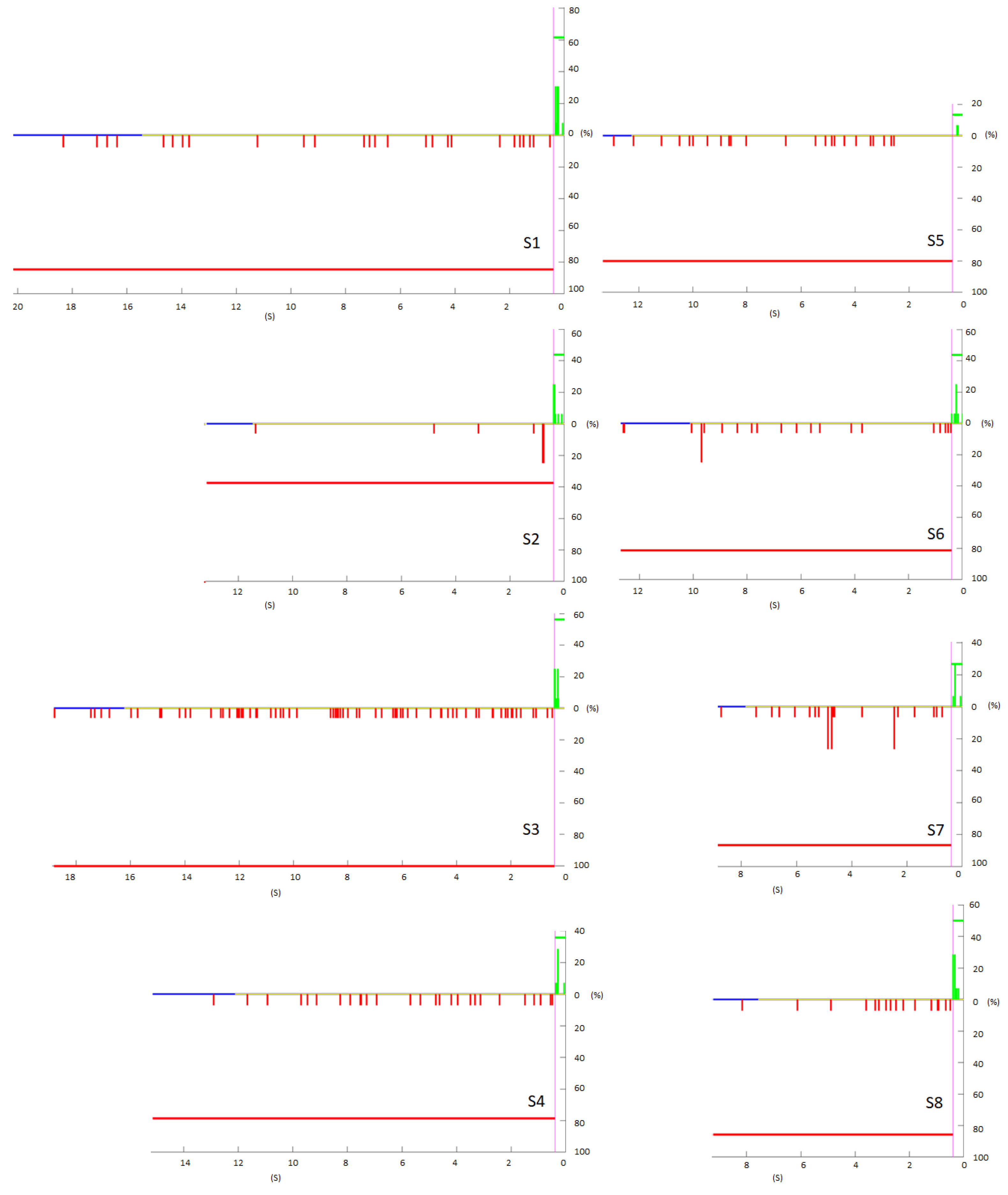

When a BMI works in real time, the most crucial metric is FP/min. A high FP/min means that the interface will act against the subject's will too many times. If this happens in a closed-loop scenario, the BMI will force a change of state. However, few studies have addressed why and how often these FPs happen throughout the trials. It is important to analyze whether the FPs are concentrated in a short period of time or dispersed among the trials. When the analysis is performed without feedback (open-loop control), the session can become monotonous. This can make the subjects lose their focus and lower the performance, simply because their degree of alertness and vigilance is not adequate [23]. Therefore, there could be trials with many FPs and others without any [24], which may indicate that the metrics could be more favorable in an online test. The analysis of the distribution of the FPs during the trials is one of the main points addressed in this work.

Several of the works mentioned above have approached the intention paradigm with different methodologies and purposes. However, few works in the literature address an asynchronous intention model built with pre-motion information and validated in real time with leading preprocessing tools (such as ASR [25] or H∞ [26]) and processing tools (such as the Riemannian manifold [27] or common spatial patterns (CSP) [9]). Therefore, the objective of this study was to use the EEG as a biomarker of the intention to turn. This indicator was used in real-time detection and may be used in the future to send commands to an assistive device. To achieve this objective, the present work is organized according to the following scheme:

Development of an algorithm for the proper detection of the moment of direction change through inertial measurement units (IMUs). This new algorithm provides accurate information of the turn, which allows an adequate segmentation of data for a proper intention model creation (only before information) and a correct metric evaluation of the intention to change direction.

Implementation of state-of-the-art preprocessing techniques to mitigate artifacts. ASR and H∞ are compared in the paper.

Development of robust methods for distinguishing between monotonous walk (several seconds before the change intention) and the intention to change direction (the seconds before the actual change of the walking direction).

Offline analysis of brain patterns using the covariance matrices at different frequencies with a Riemannian manifold approach. The validation of these offline markers is tested online for one of the subjects to verify their consistency.

2. Materials and Methods

2.1. Equipment

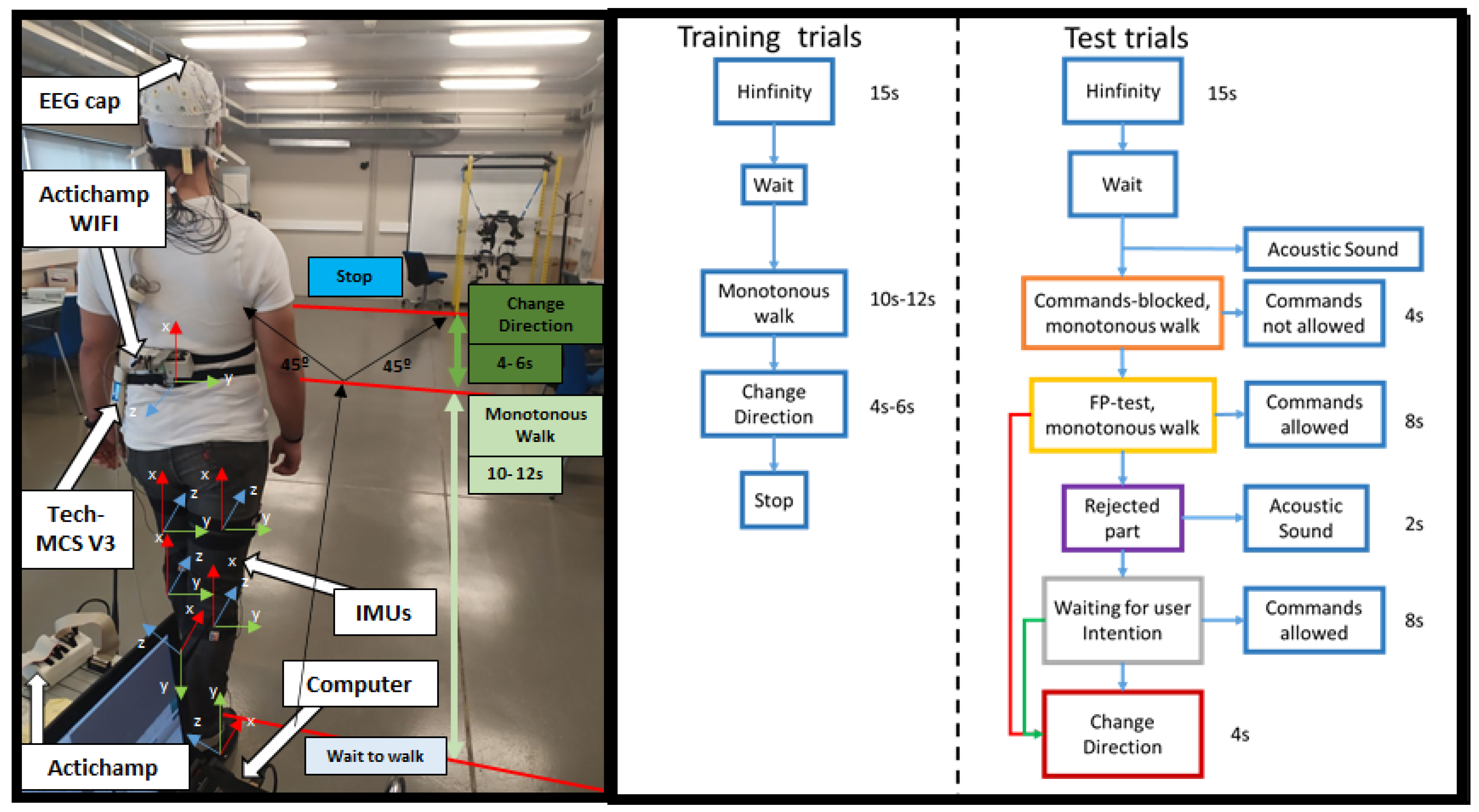

For the EEG data recording, a 32-electrode actiCHamp bundle (Brain Products GmbH, Germany) was used (Figure 1). Signals were transmitted wirelessly by the Move transmitter to the actiCHamp. EEG activity was registered through 27 non-invasive active electrodes following the international 10-10 system distribution (F3, FZ, FC1, FCZ, C1, CZ, CP1, CPZ, FC5, FC3, C5, C3, CP5, CP3, P3, PZ, F4, FC2, FC4, FC6, C2, C4, CP2, CP4, C6, CP6, and P4). One of the electrodes was placed on the right ear lobe for reference, and an additional electrode acted as ground on the left ear lobe. The other four electrodes were placed to record electrooculography (EOG) activity in bipolar configuration (HR, HL, VU, and VD). A high-pass filter at 0.1 Hz and a notch filter at 50 Hz, to remove the power-line noise, were applied by hardware. Signals were registered at 500 Hz. A medical rack was placed on the head to mitigate the oscillation of the electrodes and wires due to motion. The 32-electrode signals were visualized in real time by the technical assistant in the pycorder actiCHamp software during the trials.

For the detection of the direction change, seven IMUs (Tech MCS V3, Technaid, Spain) were placed to register the walking parameters at a 50 Hz sampling rate (Figure 1 left): one on the back and three on each leg, placed on the gluteus, shin and foot.

EEG and IMU signals were recorded at their respective sampling frequencies and synchronized in Matlab by custom software.

2.2. Register Experimental Procedure

For the experiment, the subject trained a model of brain activity. The experiment was designed to discriminate between two classes: monotonous walk and intention to turn. To characterize these mental states, the subject was instructed to keep their mind blank. The subject was to turn at will, trying not to anticipate the change too much, to ensure a spontaneous change of direction.

At the beginning of the session, 60 s of signal were recorded (for the ASR baseline) while the subject was standing and as relaxed as possible (basal state). Subsequently, the subject performed 13 trials of the designed protocol for the recording of spontaneous EEG related to direction change. In each of these trials, the subject started standing. During the initial 15 s, the activity was recorded in the basal state for the H∞ convergence. Once this period was over, the subject started walking until they decided to turn left or right, indifferently, at an angle of approximately 45 degrees. The subject was instructed to turn abruptly (with the whole body, instead of starting with one leg or with the head, to facilitate detection with the back IMU). The turn was asynchronous. This was repeated eight times within the trial without stopping the recording (Figure 1 right, training trials), so each trial consisted of eight turn event repetitions.

Nevertheless, a turn event repetition could be discarded for the creation of the model if an anomaly was observed. Anomalies could consist of forgetting to turn, making a strange gesture, coughing, biting or chewing, swallowing, or turning too soon and not allowing a long enough period of normal walking (details in Section 2.4.2).

The first two trials were discarded, as the subjects were getting used to the protocol. Subjects therefore performed around 12 trials, since 10 trials were employed for the analysis. The last trial (number 13) was carried out so that the number of repetitions was similar across subjects in case many repetitions had been discarded; it was only added if the number of discarded repetitions exceeded 10.

2.3. Subjects

Eight healthy subjects (four females and four males), with an average age of 25.4 ± 3.5 years, participated in the study. The subjects reported no diseases and participated voluntarily in the study, giving their informed consent according to the Helsinki declaration, as approved by the Ethics Committee of the Responsible Research Office of Miguel Hernandez University of Elche (Spain).

After analyzing the signal, one subject was selected to validate the proposed model online.

2.4. Offline Validation of the Turn with the IMUs

2.4.1. Algorithm to Detect the Turn

Of the seven sensors recorded, only the back IMU was used for the gyro analysis. A single IMU was chosen rather than a combination of IMUs because turn strategies are usually top–down, so there may be a time lag between sensors in the detection of the turn moment. In addition, depending on the position and the measurement (angle or angular velocity), noise can affect the signal differently. In [28], the back position (upper or lower) was reported as the most effective one, with the angle measurement being the most accurate way to detect the turn. Therefore, to standardize the turning methodology and ensure that the body trigger was the same for all the subjects in this research, they were asked to turn with the trunk.

From the matrix of direction cosines, the Xz and Yz values were used, and the angle in the zenith plane was calculated according to the following equation:

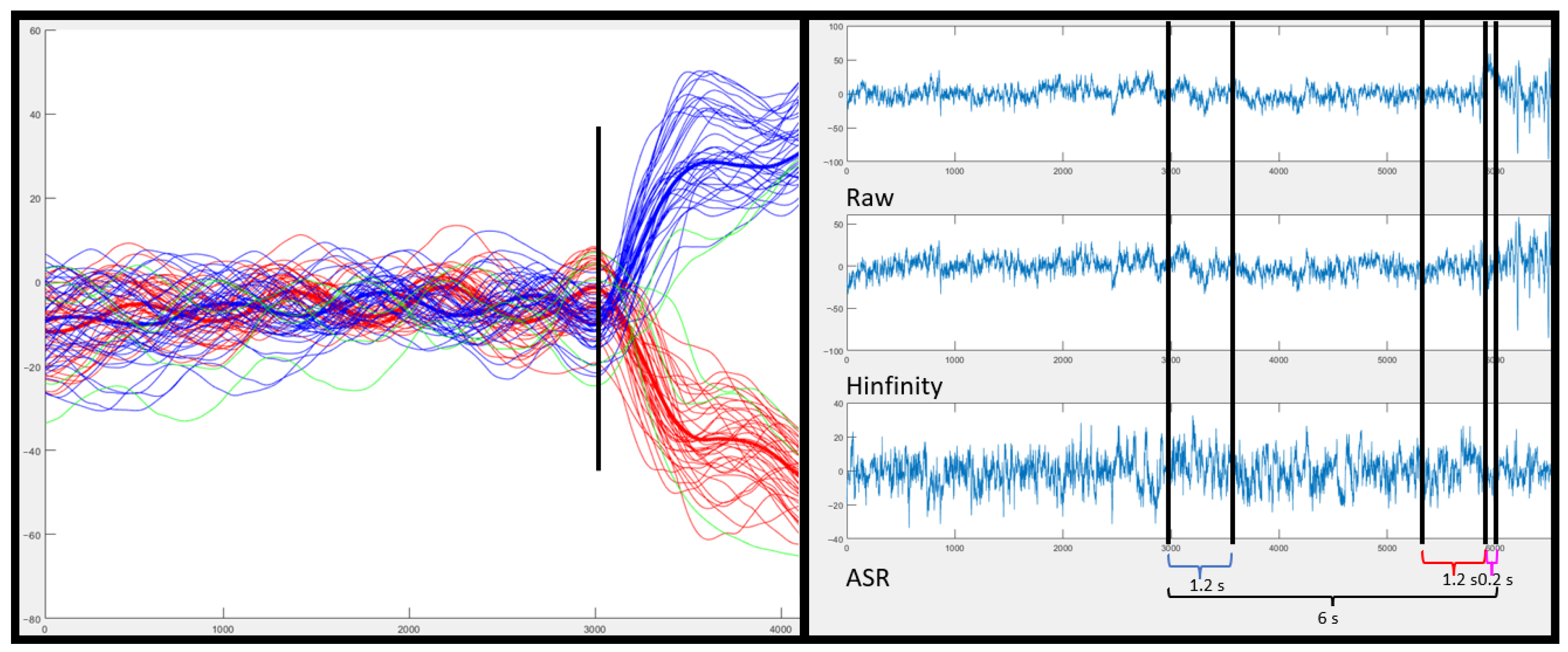

To make the algorithm accurate and locate the exact point where the major angle change occurred, the noise was reduced with the mslowess function in Matlab, with ‘Order’ 0, a ‘Gaussian’ ‘Kernel’ and a ‘Span’ (smoothing window) of 0.02.

The inflection points were calculated from the filtered signal. First, the second derivative of the signal was calculated, and a threshold was selected based on the maximum value produced by the inflection of the turn. To obtain the threshold, it was iteratively evaluated which inflection values exceeded the average of the inflection values by a given factor. This factor started at six; if no value fulfilled the condition, the factor was decreased by one, until it reached one. Of the values that fulfilled the condition, the first one in time was selected as the change point.
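The thresholding loop described above can be sketched in Python as follows. This is only an illustrative sketch, not the authors' Matlab implementation: a plain Gaussian kernel stands in for mslowess, and the function names, the `sigma_samples` value and the synthetic signal in the usage note are assumptions made for the example.

```python
import numpy as np

def gaussian_smooth(signal, sigma_samples):
    """Smooth with a normalized Gaussian kernel (assumed stand-in for mslowess)."""
    radius = int(3 * sigma_samples)
    t = np.arange(-radius, radius + 1)
    kernel = np.exp(-0.5 * (t / sigma_samples) ** 2)
    kernel /= kernel.sum()
    # Edge padding keeps the output the same length as the input.
    padded = np.pad(signal, radius, mode="edge")
    return np.convolve(padded, kernel, mode="valid")

def detect_change_point(angle, sigma_samples=5.0, start_factor=6):
    """Return (sample index, factor used) of the first strong inflection.

    The factor starts at `start_factor` and is lowered by one until some
    second-derivative value exceeds factor * mean, as described in the text.
    """
    smooth = gaussian_smooth(np.asarray(angle, dtype=float), sigma_samples)
    curvature = np.abs(np.diff(smooth, n=2))  # discrete second derivative
    mean_curvature = curvature.mean()
    for factor in range(start_factor, 0, -1):
        candidates = np.flatnonzero(curvature > factor * mean_curvature)
        if candidates.size:
            # np.diff(n=2) is centered one sample after its output index.
            return int(candidates[0]) + 1, factor
    return None, None

# Synthetic trunk angle: straight walk, then a 45 degree turn in 0.5 s at 50 Hz.
angle = np.concatenate([np.zeros(200), np.linspace(0, 45, 25), np.full(175, 45.0)])
change_idx, used_factor = detect_change_point(angle)
```

Because of the smoothing, the detected index falls a few samples before the sharp onset of the synthetic ramp, so the width of the kernel trades noise rejection against timing precision.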

Repetitions were labeled as left or right according to their direction. For this, the mean of the signal before the change and the mean of the signal after the change were calculated. If the value of the mean after the change was positive, the repetition was considered a right turn; otherwise, it was considered a left turn (see Figure 2).

EEG data labeling was based on the actual point at which the turn was detected by the IMU. The monotonous walk class was defined as a 1.2 s window starting six seconds before the turn, preceding the window of the change intention class (Figure 2). The temporal specification of these windows is detailed in Section 2.5.1.

2.4.2. Discards and Validation

Within the protocol-related discards, the IMUs were used to discard those repetitions in which the subject turned too soon: a repetition was discarded when the time between the third step (labeled as in [29]) and the turn was less than 6 s. In addition, a turn could be rejected if it was labeled incorrectly. A discard algorithm was designed to flag those turns that disagreed with a criterion based on the means of the left and right repetitions. Each repetition was correlated with the mean and considered erroneous (in green in Figure 2) if the correlation index was lower than 0.9, or if the angle increment differed by an angle lower than 25 degrees from the mean signal.
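As an illustration of the correlation part of this criterion, the following Python sketch flags repetitions whose Pearson correlation with the mean curve falls below 0.9. It is a simplified sketch under assumptions: all repetitions are assumed to be aligned, of equal length and of the same turn direction, the angle-increment criterion is not implemented, and the function names are hypothetical.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def flag_repetitions(repetitions, min_corr=0.9):
    """Return one boolean per repetition: True if it should be flagged."""
    n = len(repetitions)
    length = len(repetitions[0])
    # Sample-by-sample mean curve of all repetitions of one direction.
    mean_curve = [sum(rep[i] for rep in repetitions) / n for i in range(length)]
    return [pearson(rep, mean_curve) < min_corr for rep in repetitions]

# Three plausible turns to one side and one curve with the opposite shape.
turns = [
    [0, 0, 10, 30, 45],
    [0, 1, 12, 28, 44],
    [0, 0, 11, 31, 46],
    [45, 30, 10, 0, 0],
]
flags = flag_repetitions(turns)
```

Note that an erroneous repetition also contaminates the mean it is compared against, which is one reason such a rule can flag more repetitions than a visual inspection would.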

Because the discard algorithm can reject more repetitions than it should, only the repetitions that were visually identified as erroneous were discarded when creating the offline cross-validation model in the preliminary analysis of the EEG signal (before the online test). At that stage, the discard algorithm was used only as an aid to the visual inspection. After being validated (Section 3.1), it was used in the online test (Section 2.7), where time was more critical and the visual inspection could not be performed reliably in a hurry.

2.5. Offline and Pseudo-Online Validation of the EEG Intention

The aim of this work is to use the EEG as a biomarker of the subject's intention and to use it on its own, independently of the signals from the IMUs. The acceleration signal from the IMUs is used only to generate the predictive EEG model, as a marker of the actual turn execution.

2.5.1. Offline-Cross Validation

The turns were segmented according to whether they were leftward or rightward. However, intention patterns of monotonous walk vs. turn were classified for both left and right turns in the EEG analysis. To find out which configuration maximized the classification results, a leave-one-out cross-validation was computed. Different artifact filters, frequency bands and classifiers were tested.

Before segmenting the signal, two types of artifact filters, ASR and H∞, were applied separately to the entire recording.

To apply both algorithms, state-variable frequency filters (order 2) were employed to isolate different bands: 8–14 Hz, 15–22 Hz, 23–30 Hz, 31–40 Hz and 8–40 Hz. The bands were selected based on the basic characteristics of the EEG [30] and on previous works in which the intention was characterized [14,22].

To characterize the time windows of both the monotonous walk and turn intent classes, the previously calculated turning point was used as a reference point 0 s. The monotonous walk class was defined in a period of time between −6 s and −4.8 s before the actual event. The change intention class was defined within −1.4 s and −0.2 s before the actual turn. Later, the covariance matrix features of all electrodes were extracted according to the “scm” method for each artifact filter condition and band in all the segmented windows.
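The window definitions above can be made concrete with a short Python sketch. It assumes that “scm” denotes the ordinary sample covariance matrix, that the EEG is stored as a channels × samples array at 500 Hz, and that the turn sample index is already known; the function and constant names are illustrative only.

```python
import numpy as np

FS = 500                       # EEG sampling rate in Hz (from Section 2.1)
WALK_WINDOW = (-6.0, -4.8)     # monotonous walk class, seconds relative to the turn
INTENT_WINDOW = (-1.4, -0.2)   # change intention class, seconds relative to the turn

def cut_window(eeg, turn_sample, window, fs=FS):
    """Slice a (channels x samples) array using times relative to the turn."""
    start = turn_sample + int(round(window[0] * fs))
    stop = turn_sample + int(round(window[1] * fs))
    if start < 0 or stop > eeg.shape[1]:
        raise ValueError("window falls outside the recording")
    return eeg[:, start:stop]

def sample_covariance(epoch):
    """Sample covariance matrix across channels (assumed meaning of 'scm')."""
    centered = epoch - epoch.mean(axis=1, keepdims=True)
    return centered @ centered.T / (epoch.shape[1] - 1)

# Usage with random data standing in for 27 band-filtered EEG channels.
rng = np.random.default_rng(0)
eeg = rng.standard_normal((27, 5000))
walk_epoch = cut_window(eeg, turn_sample=4000, window=WALK_WINDOW)
intent_epoch = cut_window(eeg, turn_sample=4000, window=INTENT_WINDOW)
walk_cov = sample_covariance(walk_epoch)
```

Both windows are 1.2 s long, that is, 600 samples at 500 Hz, so each epoch yields one 27 × 27 covariance matrix per band and artifact-filter condition.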

From the 10 trials considered valid, the first eight trials were used in the validation (one for testing and seven for the model). The last two trials were left for the pseudo-online testing explained in Section 2.5.2.

The Riemannian paradigm was used for modeling. It was applied with four different classifier types: a support vector machine (SVM) classifier [31], a minimum distance to mean (MDM) classifier, an MDM classifier with filter and a linear discriminant analysis (LDA) classifier [27]. The evaluation metrics used for the classifiers were the accuracy (Acc) and the balance (b) between classes. Configurations whose Acc was below the randomness threshold [32] or whose b was above 10 were filtered out (this value was considered a good tolerance for the difference between classes in a balanced classification).
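To illustrate the idea behind the MDM classifier, the sketch below computes the affine-invariant Riemannian distance between symmetric positive definite matrices and assigns a covariance matrix to the class with the nearest mean. It is a minimal sketch under assumptions: the class means are taken as given (a full MDM estimates them as Riemannian means of the training covariances), and the function names and toy matrices are hypothetical.

```python
import numpy as np

def riemann_distance(a, b):
    """Affine-invariant distance between two SPD matrices a and b.

    It equals sqrt(sum(log(lambda_i)^2)), where lambda_i are the
    eigenvalues of inv(a) @ b, which are real and positive for SPD inputs.
    """
    eigvals = np.linalg.eigvals(np.linalg.solve(a, b)).real
    return float(np.sqrt(np.sum(np.log(eigvals) ** 2)))

def mdm_predict(cov, class_means):
    """Return the label whose mean covariance is closest to `cov`."""
    distances = {label: riemann_distance(mean, cov)
                 for label, mean in class_means.items()}
    return min(distances, key=distances.get)

# Toy example with 3 x 3 matrices standing in for channel covariances.
means = {"walk": np.eye(3), "turn": 4.0 * np.eye(3)}
label = mdm_predict(1.1 * np.eye(3), means)
dist = riemann_distance(np.eye(3), 4.0 * np.eye(3))
```

Working with this distance rather than the Euclidean one respects the geometry of covariance matrices, which is the motivation usually given for Riemannian classifiers in EEG decoding.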

The values that passed this cut-off were averaged depending on the classifier, according to the artifact filter type and band filter. From this analysis, a generic configuration valid for all subjects will be chosen and will also be compared with a personalized one, that is, the best band and classifier configuration for each subject. The relevance of these two configurations in real-time testing will be discussed later.

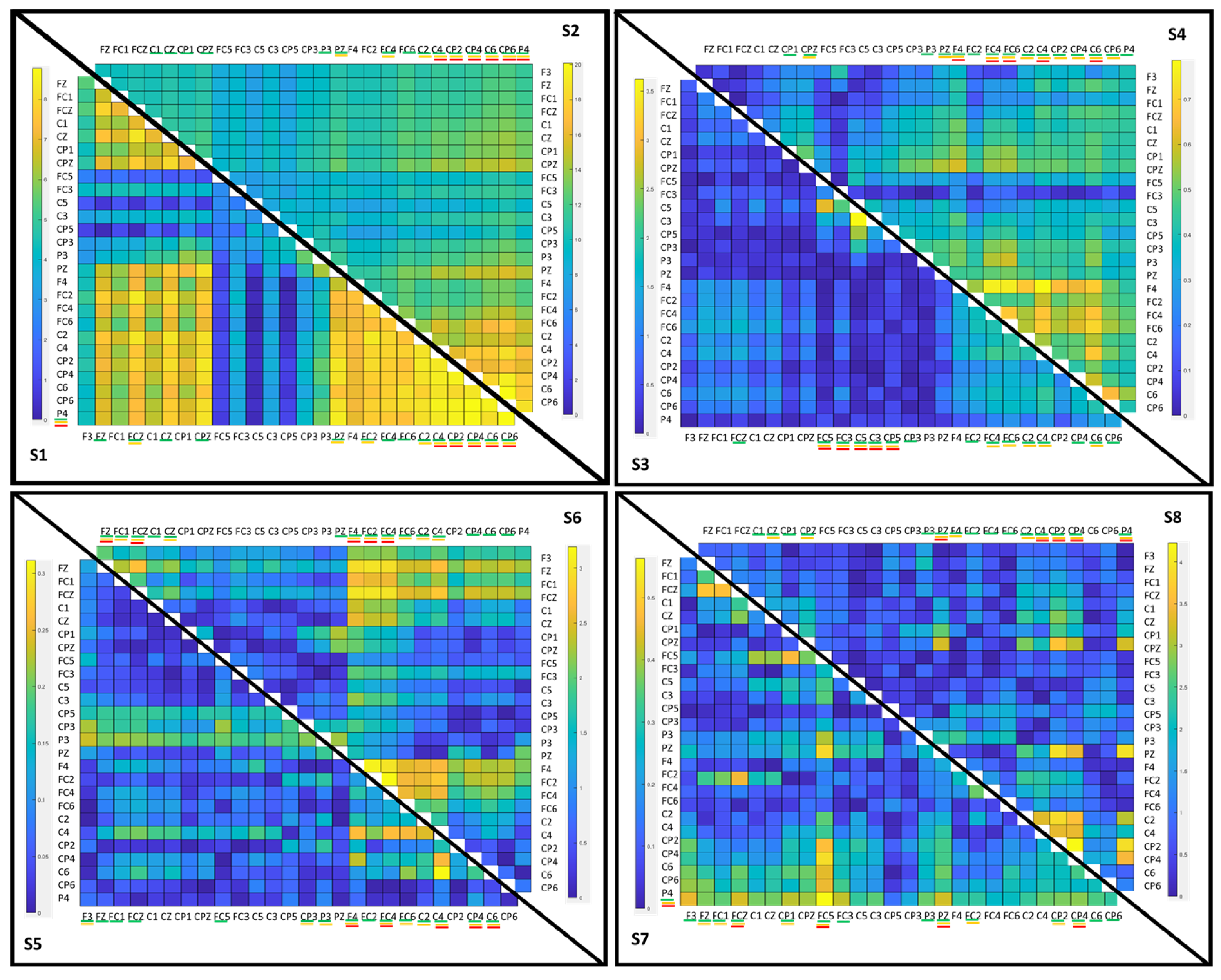

In addition, several alternatives were tested with the best of these two configurations to discuss their influence, for example, creating an intention prediction model only with right turns or only with left turns and checking whether there is any difference with the current model, which includes both right and left turns. The contribution of the different brain areas to the classification model was also analyzed.

Offline-Cross Validation Model Type

According to the criteria discussed in Section 2.4.1, the repetitions were grouped according to whether the turn occurred in the left or right direction, and a cross validation was carried out with fewer testing trials than in the left and right model.

Offline-Cross Validation Electrode Selection

For the personalized configuration, it was also studied from the covariance matrix which channels show the greatest difference between the monotonous walk and change intention classes. To find the electrodes that maximized the difference between the covariance matrices of both classes, the mean covariance matrix of each class was calculated. For the monotonous walk class MeanClassMonotonousWalk and for the change class MeanClassChange, the formula used was the following:

From the diagonal array, the highest pairs of electrodes were taken and added to the list. This list was ordered from highest to lowest, and the first 15, 10 and 5 highest were taken.

2.5.2. Pseudo-Online Analysis

After detecting the best combination of features and electrode configurations for the three types of classification models, the pseudo-online analysis was performed in order to check them in a simulated real-time scenario.

No cross validation was used in the pseudo-online analysis in order to simulate the real-time conditions. This way, the model was created using the first eight trials with the explained offline procedure and tested with the last two trials of each session with the following procedure.

Trials were analyzed epoch by epoch, with a size of 600 samples (1.2 s), shifting them every 100 samples (0.2 s), producing an effective 1.0 s overlap between epochs. The preprocessing was performed in the same way as in the offline model using the state variable filters. However, the whole signal was processed in the pseudo-online analysis extracting the features based on the generic and personalized selection.
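The epoch sweep can be sketched in a few lines of Python. The helper name is hypothetical, and the sketch only reproduces the windowing arithmetic, not the filtering or classification.

```python
def epoch_bounds(n_samples, size=600, step=100):
    """Return (start, stop) sample indices of every full sliding epoch."""
    return [(start, start + size)
            for start in range(0, n_samples - size + 1, step)]

# Two seconds of signal at 500 Hz contain five full 1.2 s epochs.
bounds = epoch_bounds(1000)
overlap_seconds = (600 - 100) / 500  # samples shared by consecutive epochs
```

With these values, consecutive epochs share 500 samples, which corresponds to the 1.0 s overlap mentioned above.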

Meanwhile, the classification of each window was only evaluated in the interval between the point marked as the beginning of walking (the third step) and the labeled change (see Figure 3). Regarding the command decision, a TP was computed if five consecutive epochs were identified as the turn change class and the detection occurred at most 0.4 s before the actual detection of the change by the IMUs; otherwise, it was considered an FP. Five consecutive 1.2 s epochs shifted by 0.2 s span 1.2 + 4 × 0.2 = 2.0 s, so, adding the 0.4 s margin, a valid TP could contain information from −2.40 s to 0 s with respect to the actual change.
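The command decision can be sketched as follows. This is a hedged illustration: the label strings, function names and the reading of the 0.4 s rule (the command must fall within the last 0.4 s before the IMU-detected turn) are assumptions based on the description above.

```python
def first_command(labels, n_consecutive=5):
    """Index of the epoch completing the first run of `n_consecutive`
    'turn' labels, or None if no such run exists."""
    run = 0
    for i, label in enumerate(labels):
        run = run + 1 if label == "turn" else 0
        if run == n_consecutive:
            return i
    return None

def is_true_positive(command_time, turn_time, tolerance=0.4):
    """True if the command falls within `tolerance` seconds before the turn."""
    return turn_time - tolerance <= command_time <= turn_time

# Epoch-by-epoch classifier output for one repetition (illustrative).
labels = ["walk", "turn", "turn", "walk",
          "turn", "turn", "turn", "turn", "turn", "turn"]
command_epoch = first_command(labels)
```

Requiring a run of consecutive detections trades latency for robustness: an isolated misclassified epoch, such as the short run at the start of the example, does not trigger a command.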

The number of successful changes, the TP rate of the test, could be defined as

If one of the epochs was detected within the change range, a true event was computed, and the signal processing was stopped until the start of the next repetition. As a realistic way to compute FP, once one was detected, the next one could not be computed until at least two seconds passed. This helps to simulate that in a real-time scenario, as the command is sent (to perform a feedback action), it is necessary to wait a while until the next command can be sent again. As the normal walking time period has a duration of 8–10 s, the FP evaluation can be expressed as

In addition, the FPRatio was calculated, which indicates the percentage of repetitions in which there was an FP. The TPnoFP indicates the percentage of repetitions in which there was a TP and no FP.
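The two-second waiting rule for counting FPs can be sketched as below. The function names are hypothetical, and the FP/min expression is simply the generic definition of a rate per minute over the evaluated walking time, stated here as an assumption rather than as the exact formula used in the study.

```python
def count_false_positives(detection_times, refractory=2.0):
    """Count FPs, ignoring detections closer than `refractory` seconds
    to the previously counted one."""
    count = 0
    last_counted = None
    for t in sorted(detection_times):
        if last_counted is None or t - last_counted >= refractory:
            count += 1
            last_counted = t
    return count

def fp_per_minute(n_fp, walking_seconds):
    """False positives per minute of evaluated walking time (assumed form)."""
    return 60.0 * n_fp / walking_seconds

# Detection times in seconds within the evaluated walking periods.
n_fp = count_false_positives([1.0, 1.2, 1.4, 3.5, 4.0, 6.0])
rate = fp_per_minute(n_fp, walking_seconds=90.0)
```

In this example only the detections at 1.0 s, 3.5 s and 6.0 s are counted, since the others fall inside the two-second waiting period of a previously counted FP.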

2.6. Online Experimental Procedure

With the offline recording protocol, the intention patterns were analyzed in eight subjects in order to finally propose an online evaluation (Figure 1 right, test trials). On the day of the evaluation, a model was first created again with that day's activity in the same way as explained above (Section 2.2). This model was created with fewer trials, since the subject was already trained in the task. The model was then generated with the selected features and filters; the EEG analysis methodologies are discussed in Section 2.5.1. Finally, the effectiveness of the real-time BMI prediction was evaluated:

In each online trial, the subject first waited and relaxed for 15 s for the H∞ to converge. After this period, the subject walked for four seconds (no FPs were allowed) plus eight seconds (FPs were allowed) without the intention to turn, in order to evaluate FPs. Subsequently, after the instructional cue, the subject decided when to turn within a time interval of three to five seconds. If the intention was detected before the turn was detected by the IMUs, it was counted as a TP. The details of the validation are discussed in Section 2.7.

2.7. Online Validation of the EEG Intention

An online test was performed with one of the subjects who already had experience with the protocol. The training was carried out in the same way as for the offline model. To create the model, it was necessary to choose the most reliable artifact filtering technique, the frequency band that provided the best separation and the classifier with the highest accuracy. After analyzing the offline results, this choice can follow one of two approaches. The first option is a general configuration, which would be valid for most of the subjects and does not require much time. The second option seeks to maximize success by personalizing the model for each subject. Since it must be done between training and testing, the second approach can take a long time. In this study, the explained configuration took about 15 min, so it was chosen as the valid option.

For signal analysis, the features were extracted from 1.2 s windows that moved every 0.2 s in real time. In the pseudo-online analysis, a mode of five windows was considered; this was adapted for the online test, in which a mode of four was used. For this adaptation, the first two repetitions were analyzed to determine which mode was the most suitable to maximize success in the 2nd phase.

The model was created following these steps: the IMU turn detection algorithm, the IMU discard algorithm and the offline cross-validation for selecting the best configuration. The protocol then consists of two phases. As explained above, the subject started walking after the 15 s of convergence of the H∞. In the 1st phase, no FP should be detected, and the repetition fails if one is detected. An FP is computed if the interface detects the intention-to-turn class instead of the monotonous walk class in the verification metric.

If no FP was computed in the 1st phase, the 2nd phase was considered a TP if the turn intention was detected before the IMU algorithm detected the change (see Section 2.4.1). An FP was considered in the 2nd phase if the interface detected a change after the IMU detection, or earlier than 0.6 s before the turn, within the period of monotonous walking. The window interval for the pseudo-online test was 0.4 s, but it was readjusted because of the change of mode (from five to four).

The evaluation was computed in real time, but the feedback to the subject was given by the technical assistant, who told the subject to turn (closed-loop) if the interface failed in the first phase. If the BMI detected a TP during the evaluation phase, the technical assistant was able to see it and gave feedback on the performance after the voluntary turn was done (open-loop), since the detection and the turn were so close in time that real-time feedback by voice command was not possible. Nevertheless, the TP was verified after the trial.

4. Discussion

The first objective of this work was to design a robust algorithm for the offline detection of the real turning moment by means of IMUs. In addition, to validate the performance of this algorithm, a discard algorithm that detects the correct repetitions and eliminates the invalid ones was designed. The IMU labels were also checked by visual inspection, and the decisions of the visual inspection and of the algorithm were compared. Although the algorithm flagged more repetitions as invalid than the visual inspection did, it corroborated the visual decisions and failed in only one case. Although in Section 2.5.1 it was only used as a reference, it was actively used in the online test for the direct creation of the model.

The second and the most important objective for this study is to detect the intention to turn in an online test from the EEG signal. Many configurations were tested to try to maximize the accuracy and performance of a BMI only commanded by the subject’s brain activity.

ASR and H∞ are two of the most popular algorithms in the literature, and this work evaluated how they affect the creation of a model. The first, ASR, uses a sliding PCA window on covariance matrices to remove potentially large-amplitude artifacts, while the second focuses on ocular artifacts and drift. For the extraction of features and the creation of the model, covariance matrices and several Riemannian manifold classifiers were applied. This approach and CSP with its variants are two of the most used in the state of the art. CSP was discarded because of the low number of repetitions of each class and because it did not obtain good results in earlier experiments. Spatial/temporal features were prioritized over frequency features, since, according to our analysis, frequency features gave very variable results [12].

In addition, in this work, different frequency bands were evaluated to examine where the correlates of intention differ to a greater degree from the correlates of the monotonous walk. The filters used were by state variables to later be able to apply them in real time. In these frequency bands, the covariance matrix for both classes was extracted in 1.2 s windows and the patterns of both classes were classified with different Riemannian classifiers.

Several aspects of the statistical analysis stand out. First, there are significant differences between subjects. Some bands are significantly better than others; 8–40 Hz and 23–30 Hz seem to be the most significant. Furthermore, some classifiers, MDMfilter and MDM, seem to perform better than the rest under all conditions.

From this analysis, it is also concluded that there are no significant differences between H∞ and ASR. Both algorithms remove artifacts that could influence the turn decision (although the subject was trained to avoid them). However, to select a configuration, one must be chosen based on some criterion.

From the analysis of

Table 3, it is concluded that, in general, the classifier and band with the highest percentage and the greatest number of valid configurations are MDMfilter in the 8–40 Hz band. Regarding the choice of filter, in

Table 4, the mean of

is higher both for the generic configuration and the lower balance, as well as for the personalized configuration.

The generic and personalized configurations were tested in pseudo-online mode. The former requires less time than the latter to set up an online test. However, the personalized configuration produces fewer FPs and a higher percentage of TPnoFP. Therefore, for the online test carried out in this study, this prior personalization analysis was performed.

The results of the pseudo-online analysis were promising for online testing in three of the subjects: S2 obtained a TP of 43.8%, an FP/min of 2.9 and a TPnoFP of 12.5%; S1 a TP of 61.5%, an FP/min of 8.0 and a TPnoFP of 7.7%; and S6 a TP of 43.8%, an FP/min of 7.5 and a TPnoFP of 12.5%. In addition, from

Figure 3, it can be seen that the FPs accumulated near the validation point.
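To make the three metrics above concrete, the sketch below computes them from per-trial detection records. The definitions are assumptions stated for illustration: TP is taken as the percentage of trials in which the turn was detected, FP/min as the number of false detections per minute of recorded walking, and TPnoFP as the percentage of trials with a detection and no false positive. The record format, field names and example values are hypothetical and are not data from this study.

```python
def online_metrics(trials):
    """Compute TP (%), FP/min and TPnoFP (%) from a list of trial records.

    Each trial is a dict with (hypothetical) keys:
      'detected'   : True if the turn intention was detected in the valid window
      'n_fp'       : number of false detections during the walking phase
      'duration_s' : length of the walking phase in seconds
    """
    n = len(trials)
    total_fp = sum(t["n_fp"] for t in trials)
    total_minutes = sum(t["duration_s"] for t in trials) / 60.0
    tp = 100.0 * sum(t["detected"] for t in trials) / n
    fp_per_min = total_fp / total_minutes
    tp_no_fp = 100.0 * sum(t["detected"] and t["n_fp"] == 0 for t in trials) / n
    return tp, fp_per_min, tp_no_fp

# Made-up example: four trials of 30 s each
example_trials = [
    {"detected": True, "n_fp": 0, "duration_s": 30.0},
    {"detected": True, "n_fp": 1, "duration_s": 30.0},
    {"detected": False, "n_fp": 2, "duration_s": 30.0},
    {"detected": False, "n_fp": 0, "duration_s": 30.0},
]
tp, fp_per_min, tp_no_fp = online_metrics(example_trials)
print(tp, fp_per_min, tp_no_fp)
```

Under these assumed definitions, TPnoFP can never exceed TP, which is consistent with the values reported above.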

For the personalized configuration, several variations were also tested:

First, it was tested whether it was easier to train a model with only right turns, only left turns or both. The average results were better with both. Although the right-only or left-only models improved the results for some subjects, these settings were generally worse across subjects.

Second, a selection of electrodes was proposed. Although the results did not improve with this selection, it allowed the areas that best differentiate the two classes to be analyzed. The right hemisphere is the one that best differentiates this pattern. The posterior parietal cortex is the most relevant area, which agrees with what has been reported for other intention paradigms [

34].

The ability of S2 to modulate their brain activity allows for robust EEG classification. The online test obtained very good results according to the FP/min metric used (once an FP was sent, the person turned and stopped). The designed experiment allows the conditions of the interface to be evaluated accurately and can be easily extrapolated to the closed loop, as has already been done in similar experiments [

12]. The FP/min obtained in this test, 2.5, is one of the lowest in the literature, especially considering the features of this study: only information prior to the change is used, and only EEG brain activity is used [

15,

17]. The subjects of this study show more variability than those of the cited studies, but this trend can be observed in many other works [

35]. Compared with other works that use an online open-loop test with a two-state stop–start design (no transition between two states with motion), this work used only information from before the actual movement. Compared with works that do include a transition between two states with movement and an online evaluation [

17], this work not only uses information from before the movement exclusively but also does not use IMUs to lower FPs.

Limitations and Future Work

Multiple factors may be conditioning our study and introducing undesired variability. Below are some of the most important factors to take into account for improvement and future proposals.

Regarding the recording equipment, the signal was acquired wirelessly rather than through a wired connection to offer the subject mobility and avoid tracking problems. However, although electronic devices external to the recording setup were avoided in the room, the wireless signal can still be affected by electrical sources and radio signals.

In this study, a wide variety of algorithms were tested to maximize the performance of the subjects, acting on the pre-processing, processing and classification methods. Regarding the filters used in pre-processing, many algorithms in the literature compete for artifact mitigation. Some of them use the EEG signal itself to discard noisy components, typically Laplacian [

12], CAR [

36] and MPCA [

37]. These methods mix the variance between channels and impair the distinction of covariance matrices, which makes them incompatible with Riemannian tangent space algorithms. Other methods use a baseline to remove noisy components, such as ASR, or even another kind of biosignal to remove interference, e.g.,

. These last two were tested in the paper.
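One concrete way in which a spatial filter can interfere with covariance-based Riemannian methods is sketched below for CAR only. Subtracting the common average makes the channels linearly dependent, so the covariance matrix loses one rank and stops being strictly positive definite, and the matrix logarithm and inverse used by Riemannian metrics are then undefined without regularization or channel removal. This is an illustration on synthetic noise with assumed dimensions (8 channels, 600 samples); it is not necessarily the exact mechanism the discussion above refers to, and Laplacian and MPCA are not covered.

```python
import numpy as np

def common_average_reference(X):
    """Subtract the instantaneous mean across channels.

    X has shape (n_channels, n_samples).
    """
    return X - X.mean(axis=0, keepdims=True)

rng = np.random.default_rng(0)
X = rng.standard_normal((8, 600))  # synthetic "EEG": 8 channels, 600 samples

cov_raw = np.cov(X)
cov_car = np.cov(common_average_reference(X))

# Smallest eigenvalue: clearly positive before CAR, numerically zero after
min_eig_raw = float(np.linalg.eigvalsh(cov_raw).min())
min_eig_car = float(np.linalg.eigvalsh(cov_car).min())
print(f"min eigenvalue, raw: {min_eig_raw:.3e}")
print(f"min eigenvalue, CAR: {min_eig_car:.3e}")
```

After CAR, the vector of ones lies in the null space of the covariance matrix, which is why its smallest eigenvalue collapses to numerical zero.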

Frequency filters were implemented as state-variable models. This choice was made to keep the BMI compatible with previous BMIs [

9] and to allow it to work in real time. The final objective is to merge paradigms to achieve full movement control through the BMI. Other methodologies, such as variational mode decomposition [

37,

38] and empirical wavelet transform [

15,

39], could be interesting techniques to analyze the rhythms in frequency–time and show highly accurate results [

6]. However, their implementation in the current architecture would require further code optimization and computing resources to ensure that each epoch iteration can be processed at a 0.2 s pace, which is the time needed for a proper average.
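The appeal of a state-variable filter for real-time use can be sketched as follows: because the filter keeps its internal state between calls, processing the signal in consecutive 0.2 s chunks gives the same output as filtering the whole recording at once. The example is a single second-order band-pass section with coefficients from the widely used audio-EQ cookbook formulas. The sampling rate (500 Hz), the filter order, the class name and the test signal are all assumptions; the actual filter design used in this work is not specified in this section.

```python
import math

class StateVariableBandpass:
    """Second-order band-pass kept in state-space form (two state variables)."""

    def __init__(self, f_low, f_high, fs):
        f0 = math.sqrt(f_low * f_high)   # geometric centre frequency
        q = f0 / (f_high - f_low)        # quality factor from the bandwidth
        w0 = 2.0 * math.pi * f0 / fs
        alpha = math.sin(w0) / (2.0 * q)
        a0 = 1.0 + alpha
        self.b0, self.b1, self.b2 = alpha / a0, 0.0, -alpha / a0
        self.a1, self.a2 = -2.0 * math.cos(w0) / a0, (1.0 - alpha) / a0
        self.s1 = 0.0  # state variables carried across chunks
        self.s2 = 0.0

    def process(self, chunk):
        """Filter one chunk of samples and update the internal state."""
        out = []
        for u in chunk:
            y = self.b0 * u + self.s1
            self.s1 = self.b1 * u - self.a1 * y + self.s2
            self.s2 = self.b2 * u - self.a2 * y
            out.append(y)
        return out

fs = 500                    # assumed sampling rate (Hz)
chunk_len = round(0.2 * fs) # samples per 0.2 s update

# Test signal: a 15 Hz oscillation on top of a constant offset (drift stand-in)
signal = [5.0 + math.sin(2.0 * math.pi * 15.0 * n / fs) for n in range(2 * fs)]

# Offline: whole signal in one call
offline = StateVariableBandpass(8.0, 40.0, fs).process(signal)

# Online: same signal delivered in consecutive 0.2 s chunks
online_filter = StateVariableBandpass(8.0, 40.0, fs)
online = []
for start in range(0, len(signal), chunk_len):
    online.extend(online_filter.process(signal[start:start + chunk_len]))

max_diff = max(abs(a - b) for a, b in zip(offline, online))
print(f"max difference offline vs chunked: {max_diff:.2e}")
```

A single second-order section has gentle roll-off; a practical band-pass would likely cascade several sections, each keeping its own pair of state variables in the same way.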

Regarding processing and classification, the classifiers tested were based on Riemannian geometry, which is a current topic of interest in the field [

40]. In previous phases of the study, other algorithms, such as neural networks, that require large amounts of data were discarded, as this paradigm has a limited amount of valid signal samples that can represent the neural state of intention to turn.

It is important to note that, even after testing many configurations, subject dependency remained high. This is a general problem in EEG analysis [

41] and is hard to overcome. Subjects who did not obtain good results had real difficulty improving. Thus, other alternatives should be explored to reduce the variability of the results and make them more consistent. Some of the aspects that should be considered are as follows.

Although in other paradigms, such as MI, it has been reported that improvement can occur over the course of a week owing to plasticity, whether intention paradigms lead to improvement is not clear. Nevertheless, Gangadhar et al. [

42] reported an improvement after multiple sessions.

Although the instructions on mental states were clear, some of the subjects performed the mental process in a different way. Protocols should be improved by placing special emphasis on the messages given to the subjects and guiding them more strictly through the explanation of the mental processes. FPs could be reduced by standardizing the mental states that can occur during the monotonous walk phase. Different cognitive states could be tested in addition to a totally spontaneous turn, with the subject being aware of making the decision. Using a continuous mental process of preparation for the turn would avoid relying on a spontaneous intention event. This could be a more robust and easier way to detect the turn, because the window in which this cognitive phenomenon occurs is larger than the one in which it is totally spontaneous [

43]. These factors could yield a greater improvement than more sophisticated processing or classification algorithms [

44].

The online validation chosen is conditioned slightly differently from the pseudo-online analysis. This may also explain why a smaller mode (four) fit the real-time experiment of S2 better than the value from the pseudo-online analysis (five). However, the online validation, as discussed above, allows a very simple validation that is easy to extrapolate to the use of assistive devices [

12].

The objective is to apply this biomarker to patients with some degree of disability, for whom completing enough training trials can be difficult. The logical step toward applying it to any type of patient is to try to make the EEG model independent of the subject. Nevertheless, transfer learning has limitations when applied to EEG [

45], especially for this paradigm, as there are many sources of variability (different turn intention patterns and label trends). Other alternatives, such as the generation of repetitions with GANs [

46], could be more appropriate to subsequently apply neural network methodologies. The control would be performed with an assistive device, and the EEG model would be retrained in real time. It is proposed to create a generic model and to set up a real-time adaptation prototype.

As this experiment has a dual application in both the rehabilitation and care domains, an attempt should be made to improve the robustness of the system. Because the EEG is modeled as two classes, other momentary mental states (or noise) that were not present in training may mislead the classifier. This can be seen in

Figure 4. Although the classes were very mixed for some subjects, for those who obtained better results (see the results in bold in

Table 7), the UMAP algorithm represented the classes separately, except for some samples in an intermediate state. Therefore, surrounding our data with several uncertainty states (that favor the non-activation of the device) may be an effective way to achieve greater robustness for real-time use outside of a controlled experimental setting.
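One simple way to realize such an uncertainty state is a reject option on top of a distance-based classifier: the device is activated only when the "turn" class is clearly closer than the "walk" class, and ambiguous samples fall into a third state that leaves the device inactive. The sketch below assumes that distances to two class centroids are already available (for example, from a minimum-distance-to-mean classifier); the function name, the labels and the 10% margin are hypothetical choices for illustration, not a method evaluated in this work.

```python
def classify_with_rejection(d_walk, d_turn, margin=0.1):
    """Three-state decision from the distances to two class centroids.

    Returns 'turn' or 'walk' only when one centroid is closer than the other
    by at least the relative `margin`; otherwise returns 'uncertain'.
    """
    if d_turn < (1.0 - margin) * d_walk:
        return "turn"
    if d_walk < (1.0 - margin) * d_turn:
        return "walk"
    return "uncertain"

def should_activate(decision):
    """Only a clear 'turn' decision triggers the device."""
    return decision == "turn"

# Made-up distances for three samples
for d_walk, d_turn in [(1.0, 0.5), (0.5, 1.0), (1.0, 0.95)]:
    decision = classify_with_rejection(d_walk, d_turn)
    print(d_walk, d_turn, decision, should_activate(decision))
```

Increasing the margin trades missed detections for fewer false activations, which matches the preference for non-activation expressed above.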