Monetary Compensation and Private Information Sharing in Augmented Reality Applications

Abstract

1. Introduction

2. Background

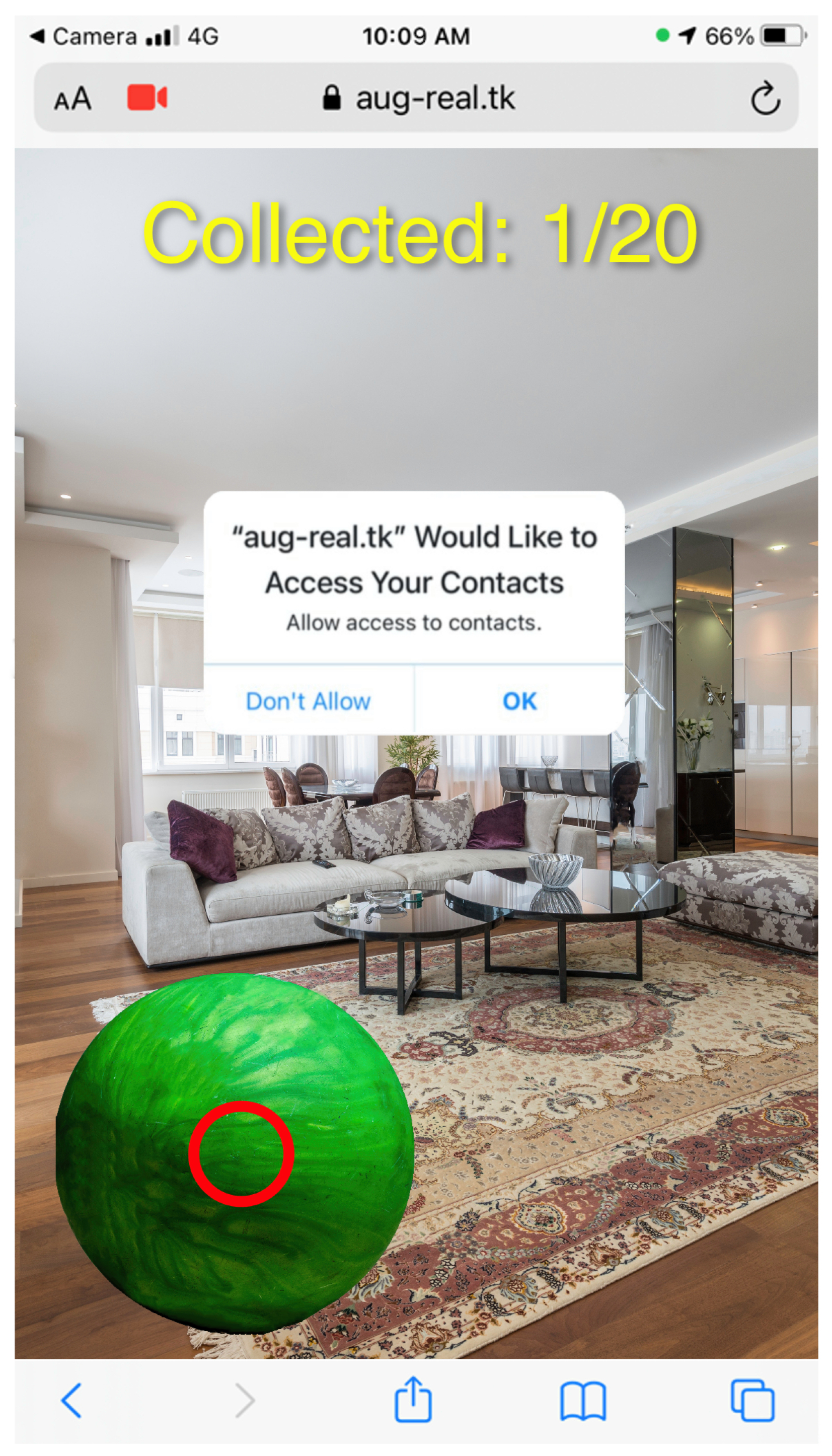

2.1. Augmented Reality

2.2. Personal Information Security Threats

2.3. Price of Information

2.4. Using Crowdsourcing Platforms to Elicit Human Behavior

3. Research Questions and Objectives

- Regarding access requests in AR apps, which types of information are people more/less likely to grant permission to?

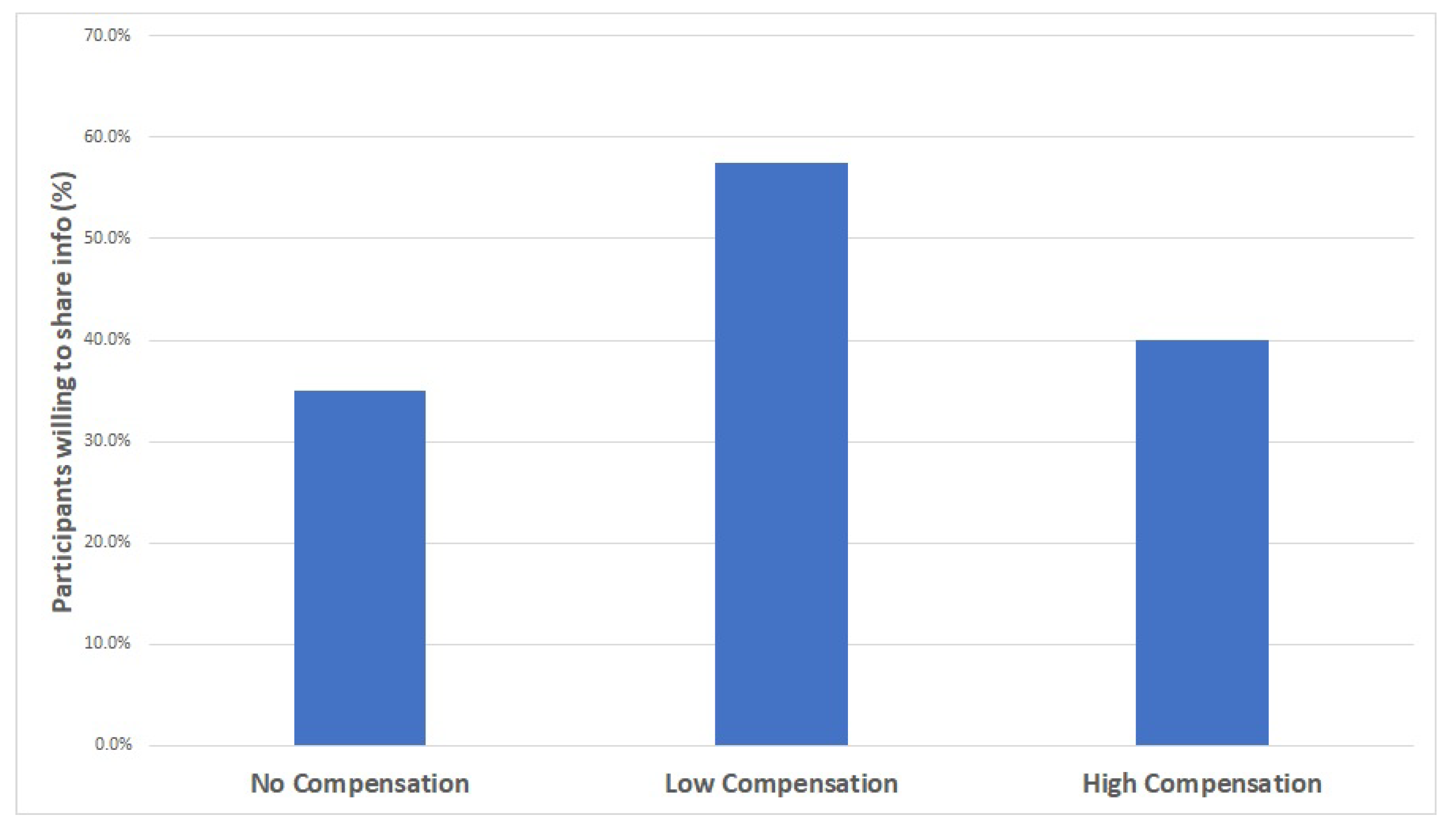

- When people are offered a financial reward for sharing their personal information while using AR apps, is there a linear relationship between the amount of money offered and the responses to the disclosure of personal information? Specifically, when participants are asked to share their personal information, is there a difference in their responses when they are offered no compensation for sharing their information compared to when they are offered high or low compensation for sharing their information?

- To assess people’s reactions to requests that seek their personal information while using AR apps;

- To identify which personal information requests people are reluctant to grant access to;

- To determine the effect of monetary incentives on personal information sharing while using AR apps.
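The comparison between compensation levels asked about above can be illustrated with a chi-square test of independence on a table of agree/disagree counts per condition. The following is a minimal sketch, not necessarily the analysis the authors performed: the function name `chi_square_statistic` and the counts in `example_counts` are hypothetical and serve only to show the arithmetic.

```python
from typing import List, Tuple


def chi_square_statistic(table: List[List[float]]) -> Tuple[float, int]:
    """Return (chi-square statistic, degrees of freedom) for an r x c table of counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)

    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count if response and condition were independent.
            expected = row_totals[i] * col_totals[j] / total
            stat += (observed - expected) ** 2 / expected

    dof = (len(table) - 1) * (len(table[0]) - 1)
    return stat, dof


# Hypothetical counts for illustration only (NOT the study's data):
# rows = agreed / disagreed, columns = no / low / high compensation.
example_counts = [
    [20, 25, 30],
    [20, 15, 10],
]

stat, dof = chi_square_statistic(example_counts)
print(f"chi-square = {stat:.2f}, degrees of freedom = {dof}")
```

The statistic is then compared against the chi-square distribution with the reported degrees of freedom. A significant result would indicate only that responses differ across conditions; whether the relationship is linear in the amount offered would need a separate trend analysis.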

4. Materials and Methods

5. Results

6. Discussion

7. Conclusions

8. Future Work

9. Limitations

Author Contributions

Funding

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AMT | Amazon Mechanical Turk |
| AR | Augmented reality |
| SI | Social information |
| PI | Personal information |
| OS | Operating system |

| | Microphone | Photos | Contacts | Messages | Calendar | Location | F/ |
|---|---|---|---|---|---|---|---|
| Age, M (SD) | 32.47 (10.82) | 30.17 (8.82) | 30.89 (8.19) | 31.79 (10.28) | 31.06 (8.58) | 32.17 (9.58) | 0.85 |
| Female (%) | 52% | 57% | 48% | 56% | 51% | 48% | 2.97 |
| U.S. Residence (%) | 65% | 53% | 56% | 59% | 51% | 57% | 4.93 |

| | Microphone | Location | Photos | Messages | Calendar | Contacts |
|---|---|---|---|---|---|---|
| Agreed | 75% | 68% | 67% | 53% | 44% | 35% |
| Disagreed | 25% | 32% | 33% | 47% | 56% | 65% |

| | No Compensation | Low Compensation | High Compensation | F/ |
|---|---|---|---|---|
| Age, M (SD) | 30.89 (8.19) | 32.23 (5.96) | 32.08 (10.16) | 0.85 |
| Female (%) | 48% | 40% | 65% | 2.97 |
| U.S. Residence (%) | 56% | 52.5% | 57.5% | 0.82 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Taub, G.; Elmalech, A.; Aharony, N.; Rosenfeld, A. Monetary Compensation and Private Information Sharing in Augmented Reality Applications. Information 2023, 14, 325. https://doi.org/10.3390/info14060325
