Corporate Responsibility in the Digital Era

Abstract

1. Introduction

2. Relevant Literature

3. Research Method

4. Case Study Findings

4.1. Walmart

4.2. Deutsche Telekom

5. Analysis and Discussion

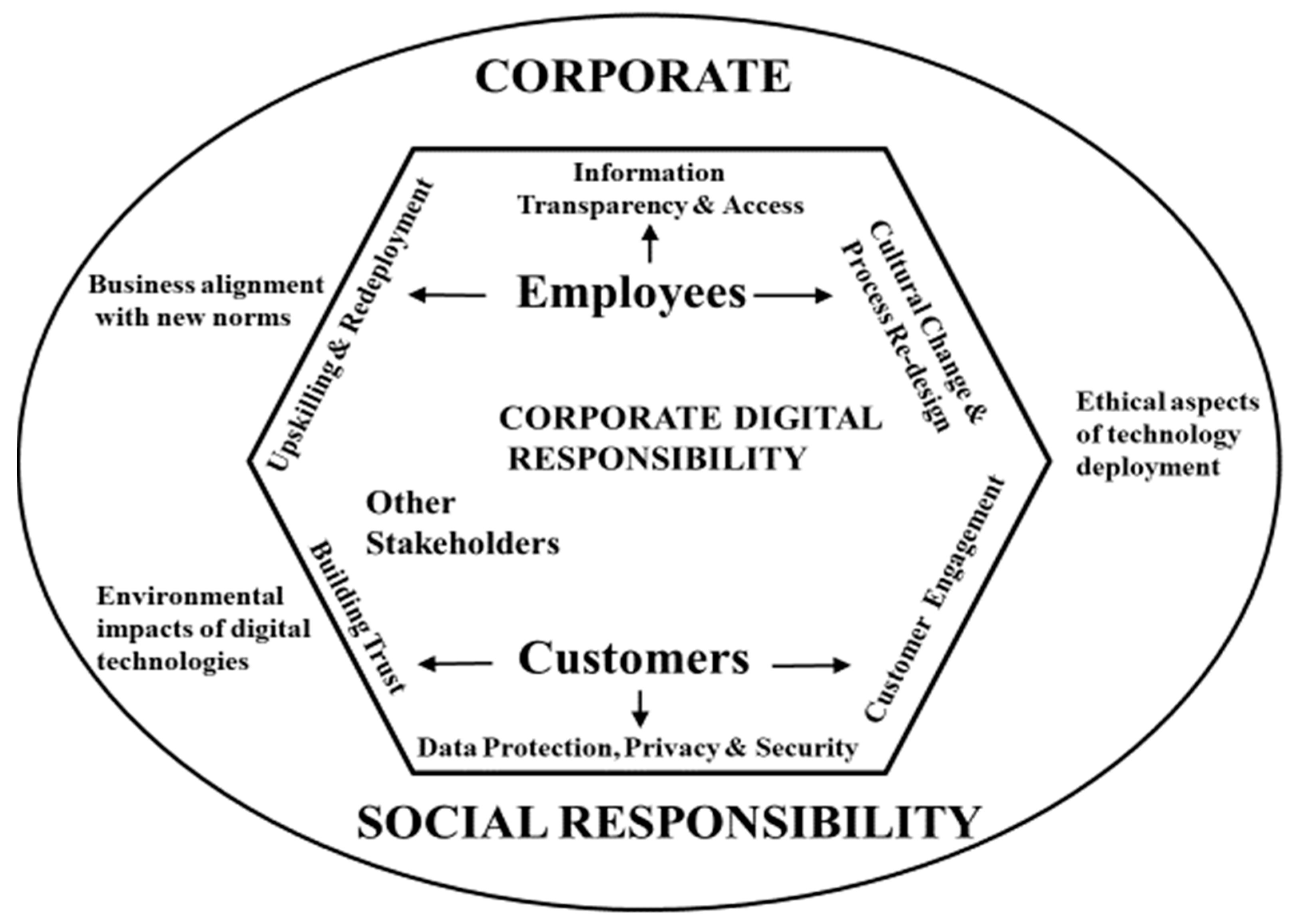

5.1. RQ1. What Are the Main Parameters of CDR That Are Evidenced in the Industry Case Studies?

5.2. RQ2. What Further Issues Emerge as Regards the Operation of CDR Policies and Practice in the Industry Case Studies?

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Wynn, M.; Jones, P. Corporate Responsibility in the Digital Era. Information 2023, 14, 324. https://doi.org/10.3390/info14060324
