Survey and Classification of Automotive Security Attacks

Abstract

1. Introduction


- A classification of 162 existing attacks based on our proposed taxonomy and an illustration of the value added by using it in the automotive security development process.


- A decomposition of incidents that consist of multiple attack steps into individual blocks, presented as multi-stage attack paths, which yields a total of 413 attacks.


- A rigorous discussion of the taxonomy and the challenges that can arise when applying it in practice.
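The decomposition contribution treats one incident as a chain of individual attacks. The Python sketch below illustrates that idea under stated assumptions. The class and field names (`AttackStep`, `Incident`, `requires`, `acquires`, `count_attacks`) are hypothetical and only loosely mirror the taxonomy's Requirement and Acquired Privileges categories. The two-step incident uses generic placeholder labels, not a real case from the survey. In this sketch, a path is consistent when the requirements of every step are covered by the attacker's initial access plus whatever earlier steps acquired.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AttackStep:
    """One individual attack (one block) within a multi-stage incident.

    Hypothetical structure: `requires` and `acquires` loosely mirror the
    taxonomy's Requirement and Acquired Privileges categories.
    """
    description: str
    requires: frozenset[str]  # access the attacker must already hold
    acquires: frozenset[str]  # access the attacker holds after this step


@dataclass
class Incident:
    """An incident decomposed into an ordered, multi-stage attack path."""
    name: str
    steps: list[AttackStep] = field(default_factory=list)

    def is_consistent(self, initial: frozenset[str]) -> bool:
        """Return True if each step's requirements are met by the initial
        access plus everything acquired by the preceding steps."""
        held = set(initial)
        for step in self.steps:
            if not step.requires <= held:
                return False
            held |= step.acquires
        return True


def count_attacks(incidents: list[Incident]) -> int:
    """Total number of individual attacks after decomposition."""
    return sum(len(incident.steps) for incident in incidents)


# Hypothetical two-stage incident with generic labels (not a surveyed case).
remote = Incident("hypothetical remote incident", [
    AttackStep("exploit a wireless interface",
               frozenset({"wireless range"}),
               frozenset({"head unit code execution"})),
    AttackStep("send crafted CAN frames",
               frozenset({"head unit code execution"}),
               frozenset({"CAN write access"})),
])

print(remote.is_consistent(frozenset({"wireless range"})))  # True
print(count_attacks([remote]))  # 2
```

A decomposition can yield more classified attacks than incidents because the sketch counts steps rather than incidents. The consistency check is one possible way to confirm that a decomposed path has no gaps. The survey does not describe this procedure.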

2. Fundamentals and Related Work

2.1. Automotive Security Attacks

2.2. Vulnerability Databases

2.3. Taxonomies

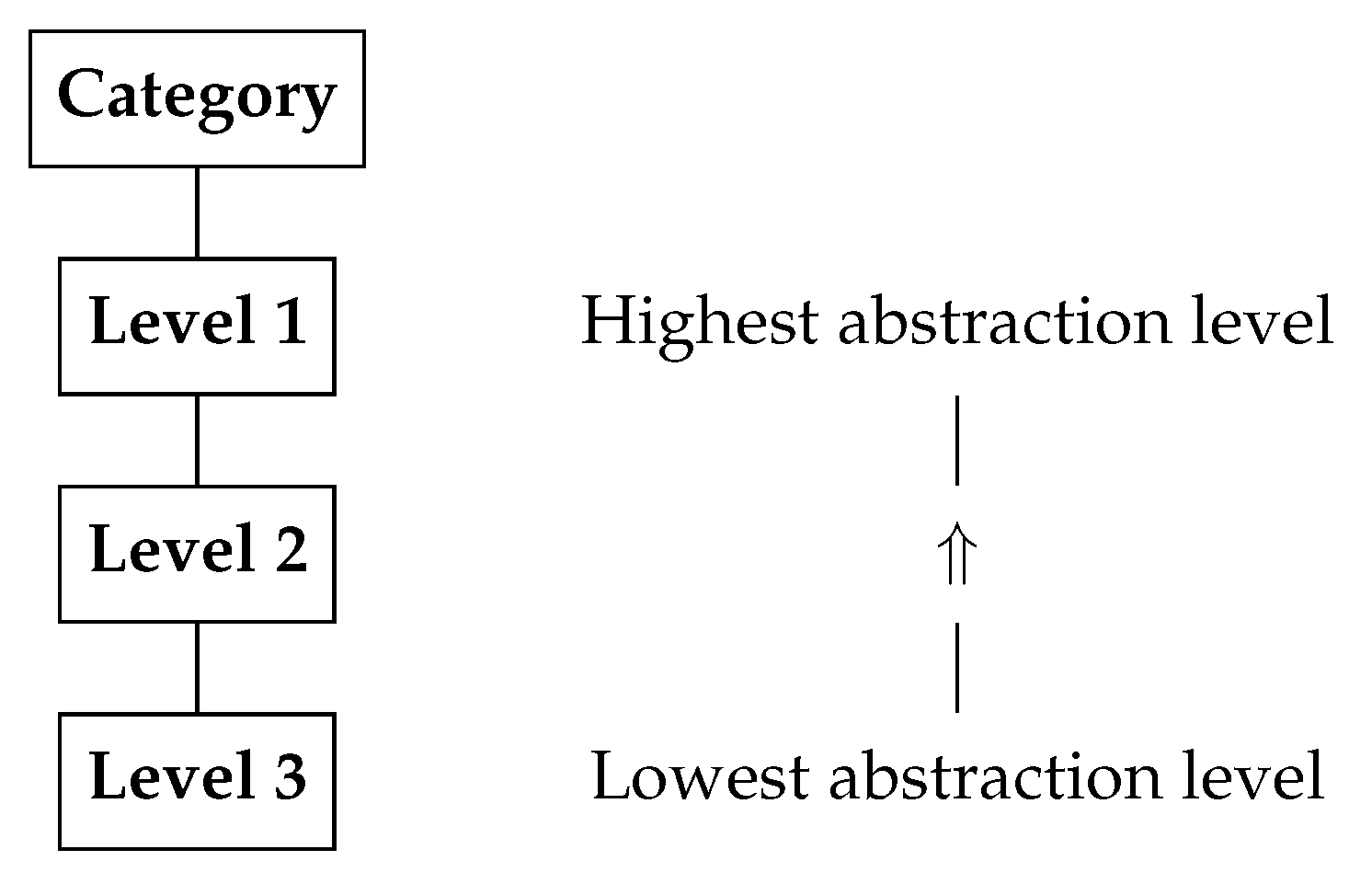

3. Automotive Security Taxonomy

- Incident management,

- Threat analysis and risk assessment (TARA),

- Security testing.

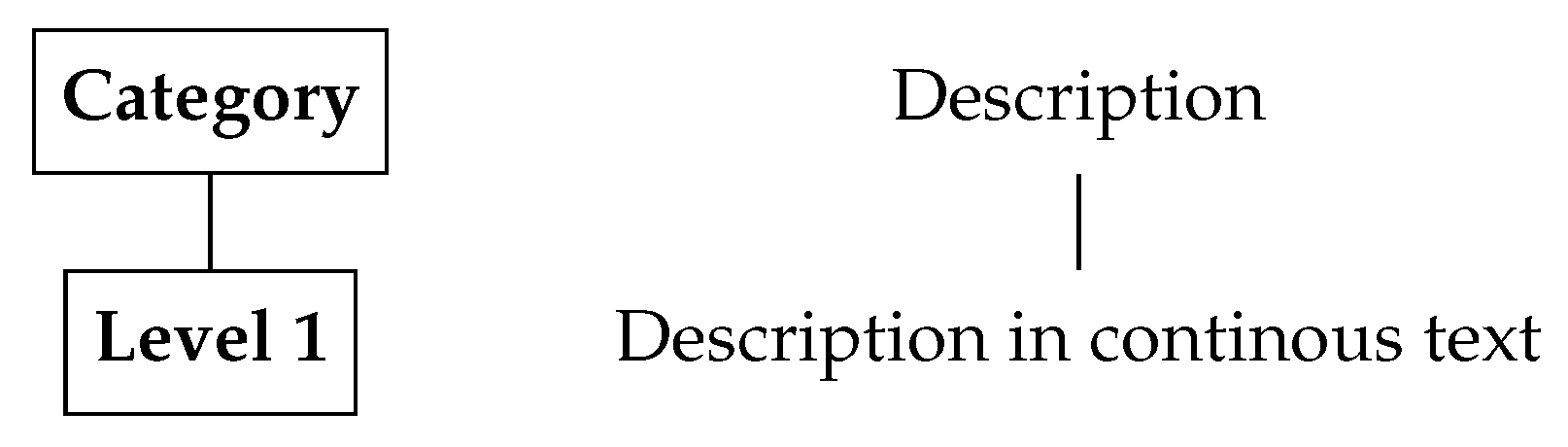

3.1. Description

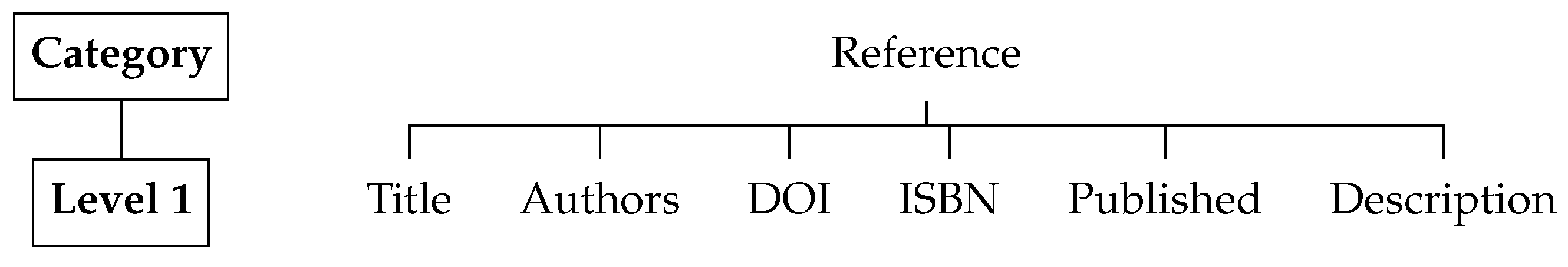

3.2. Reference

3.3. Year

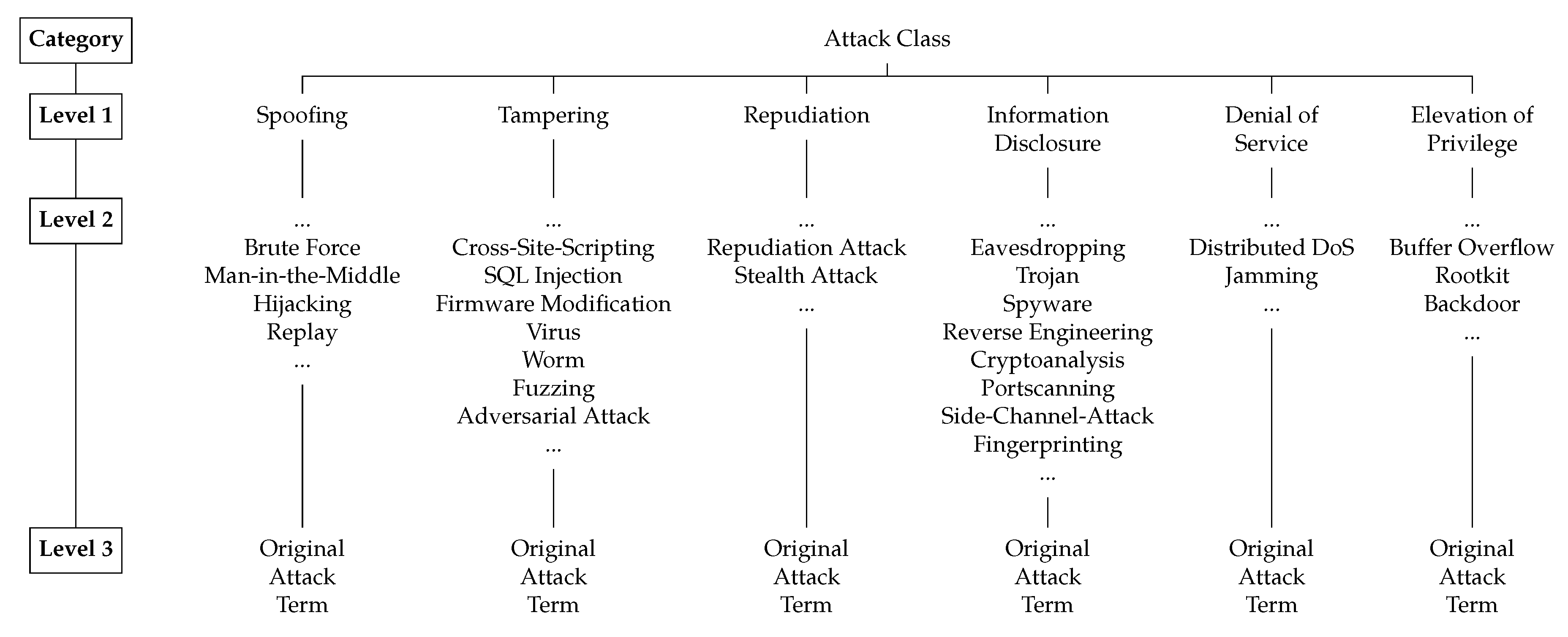

3.4. Attack Class

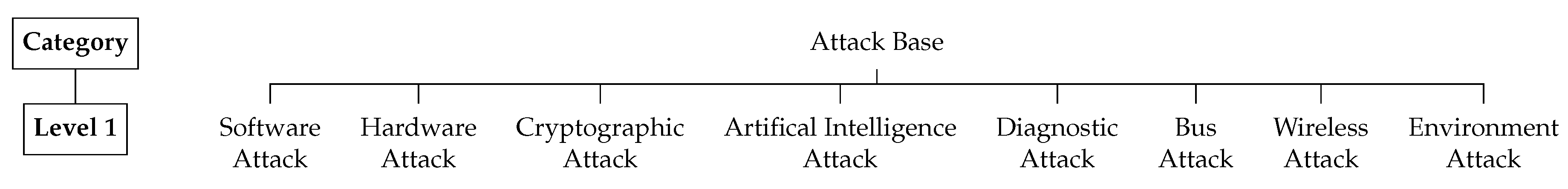

3.5. Attack Base

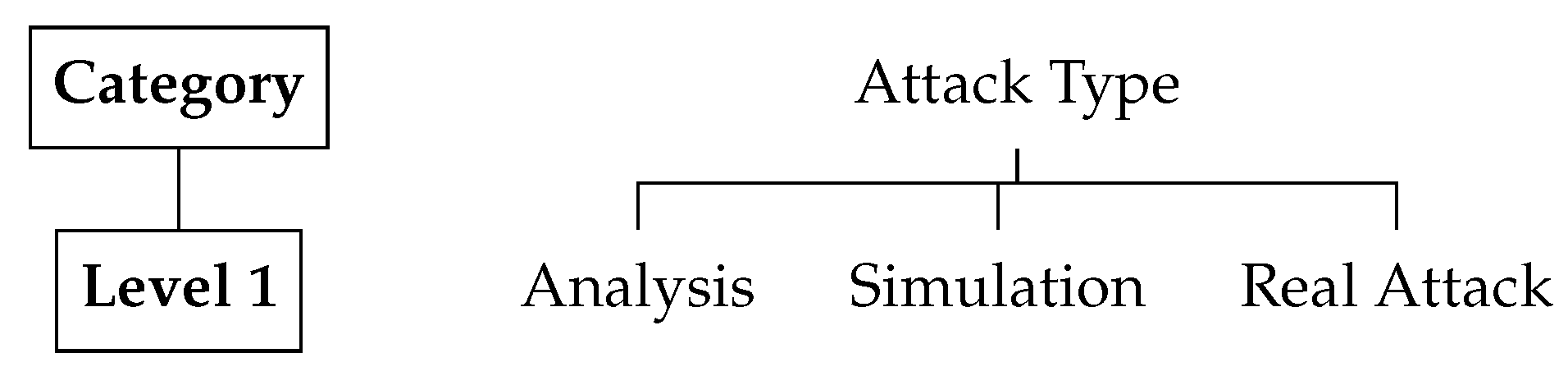

3.6. Attack Type

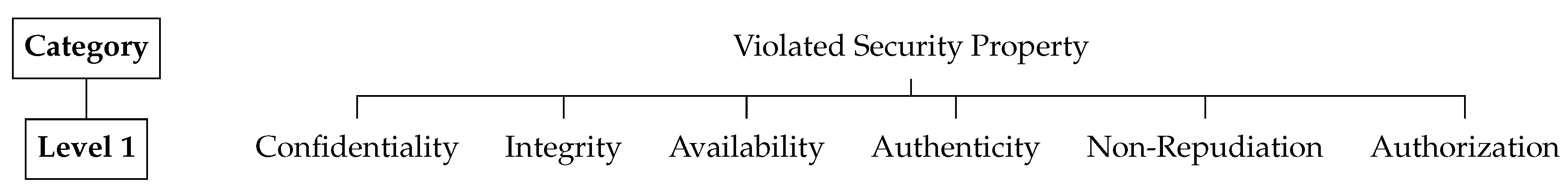

3.7. Violated Security Property

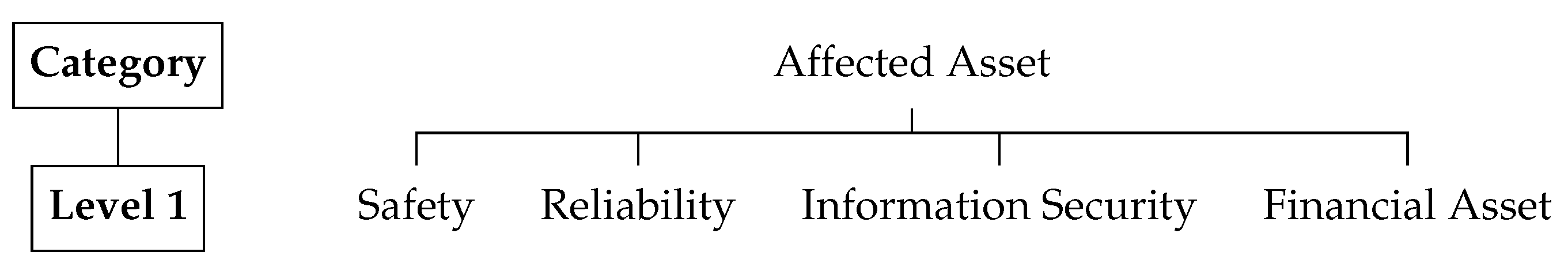

3.8. Affected Asset

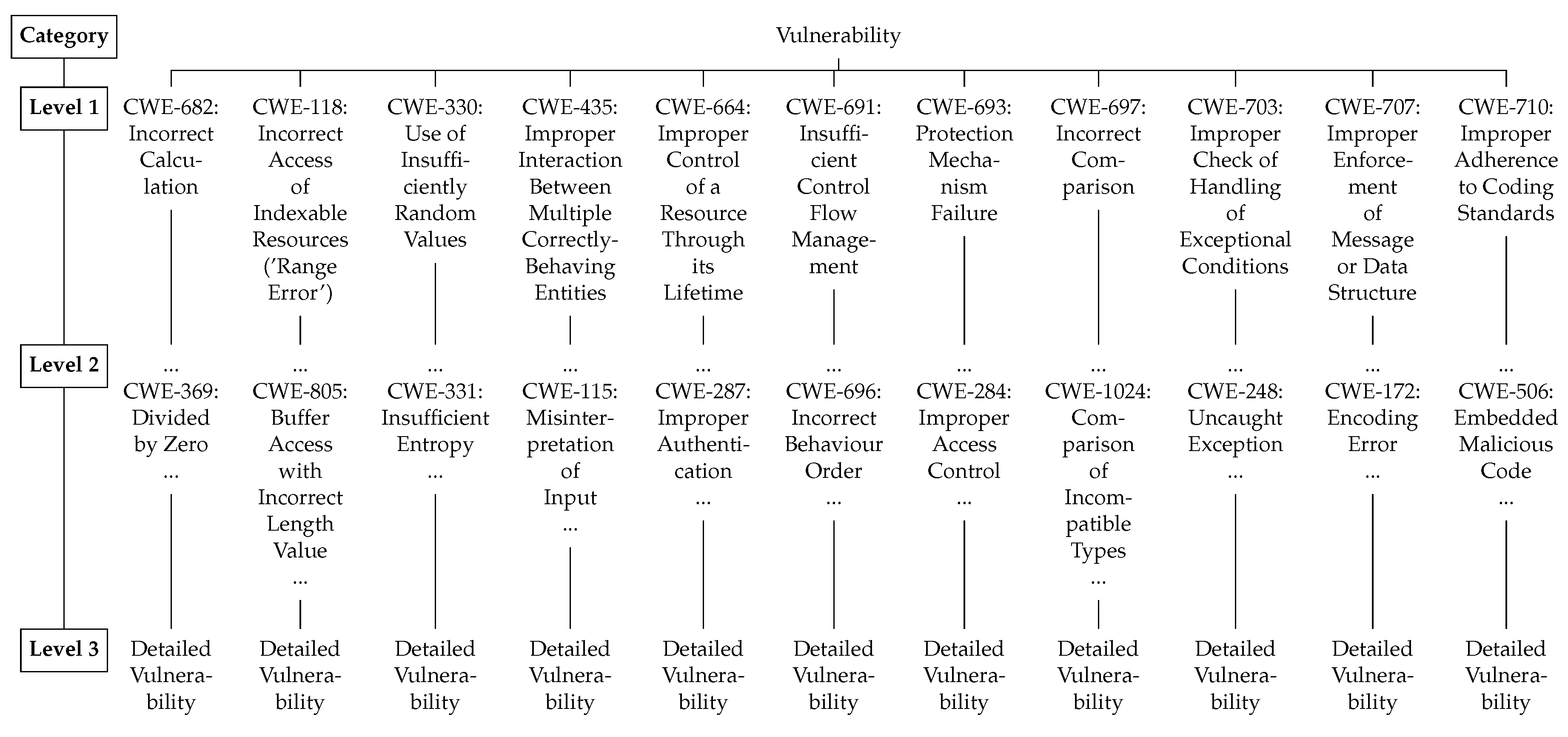

3.9. Vulnerability

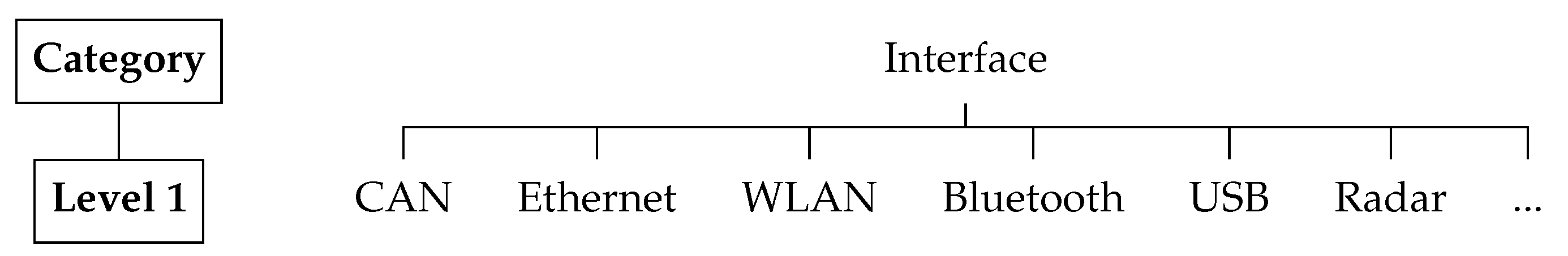

3.10. Interface

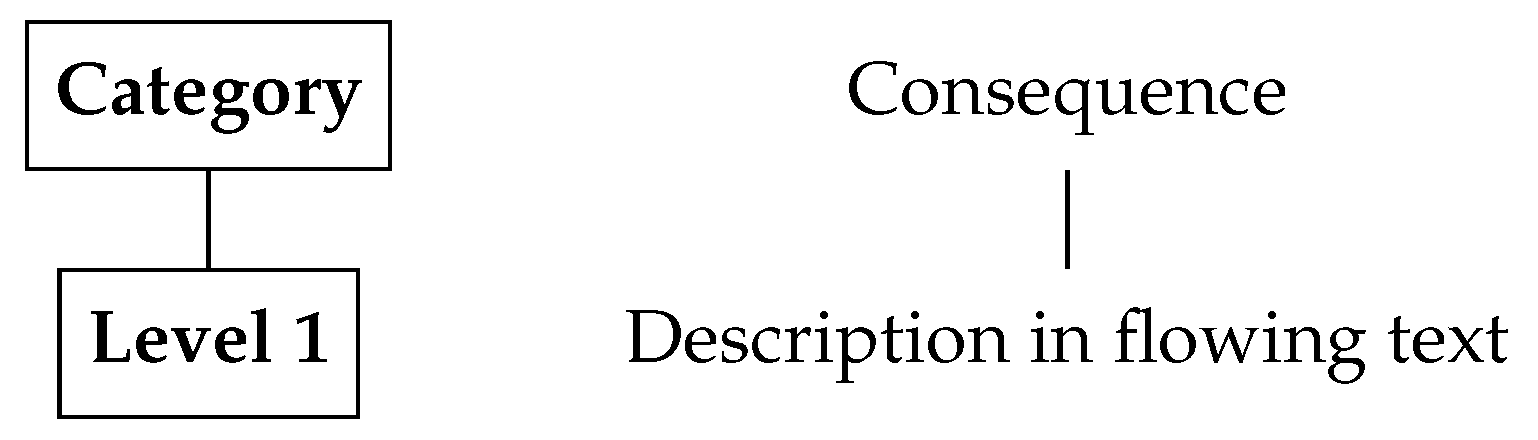

3.11. Consequence

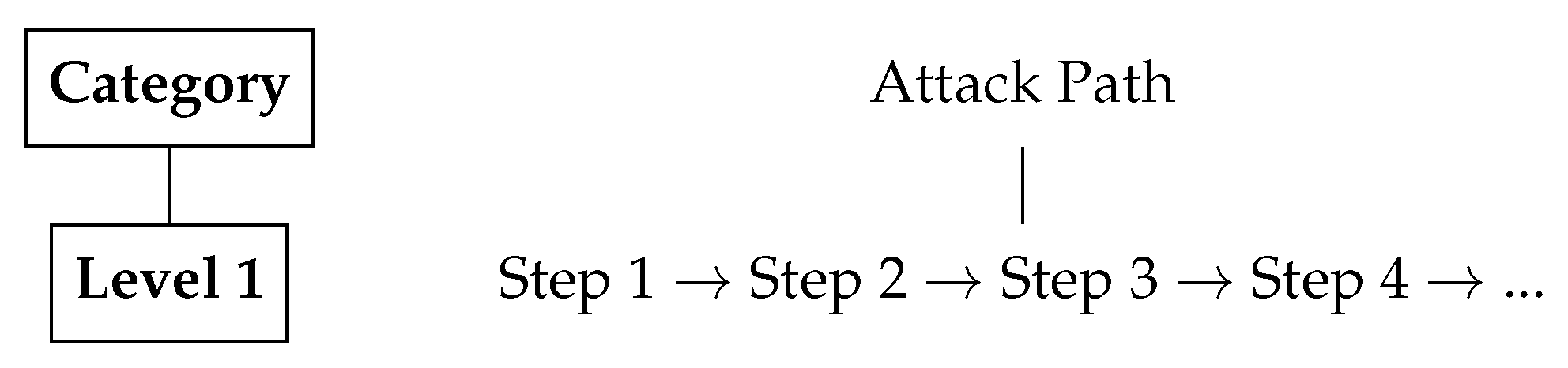

3.12. Attack Path

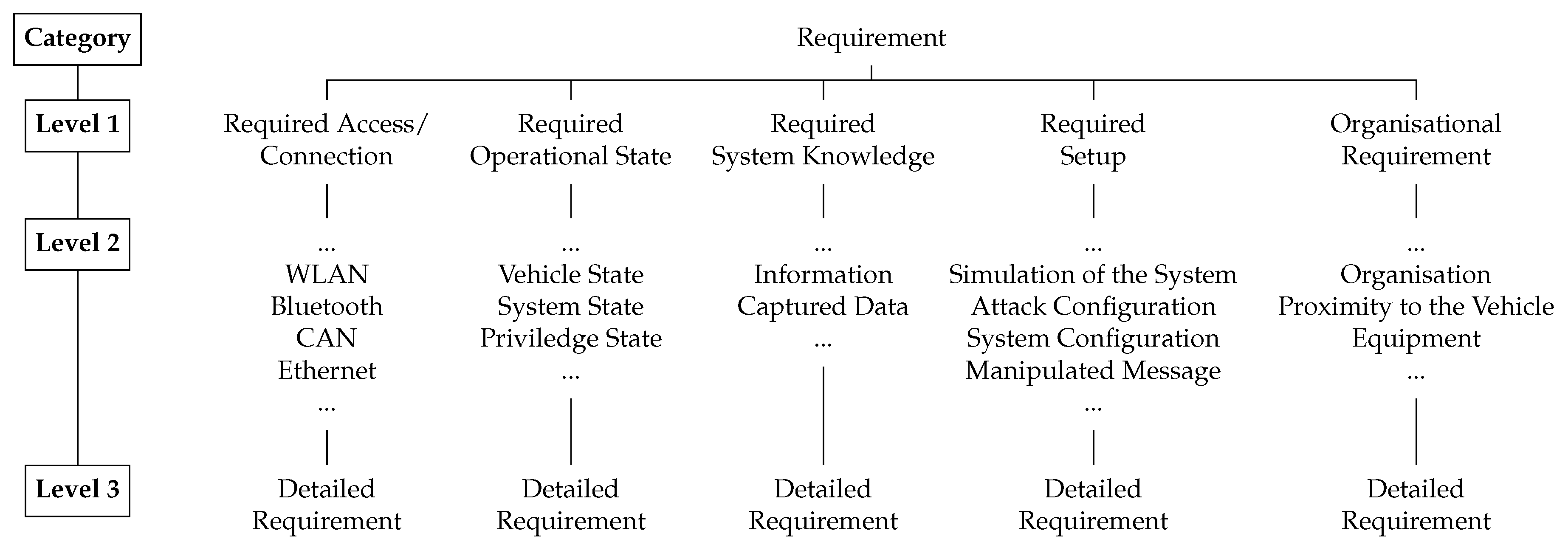

3.13. Requirement

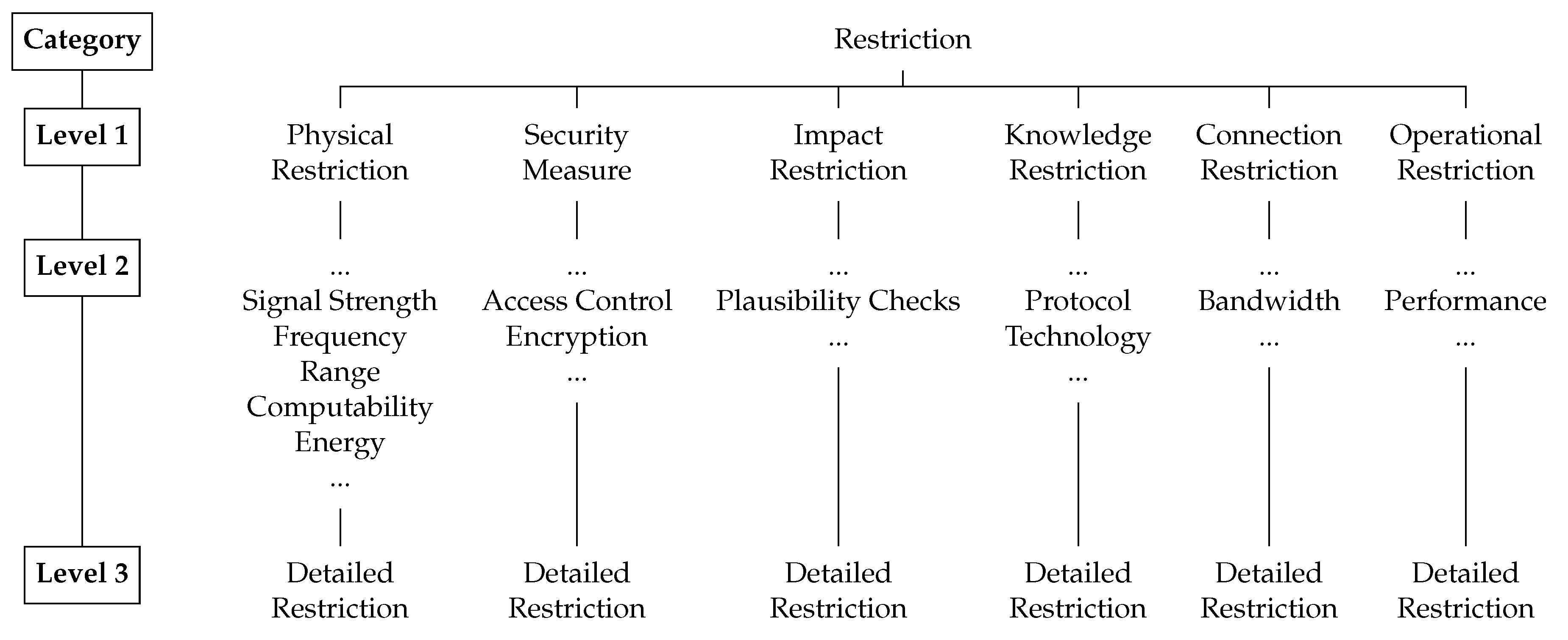

3.14. Restriction

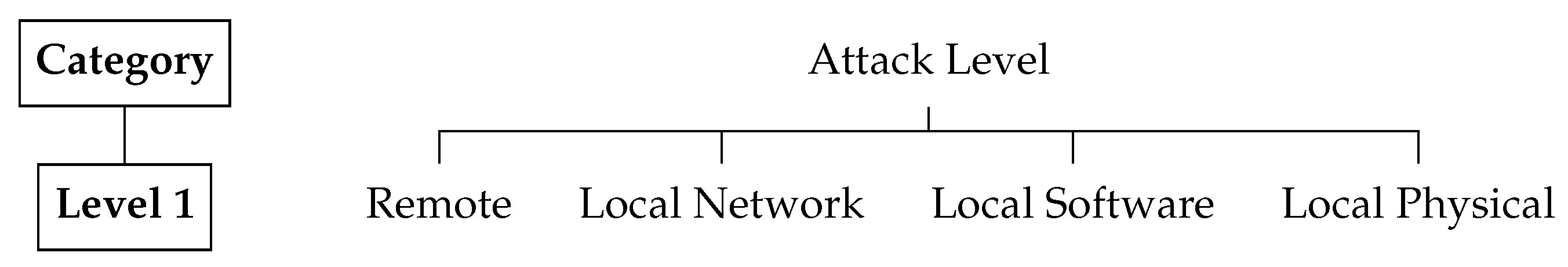

3.15. Attack Level

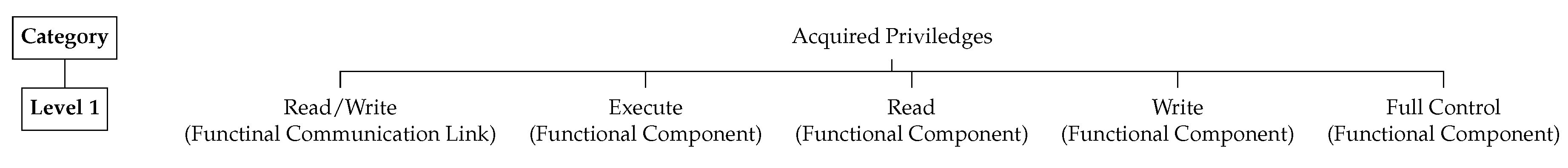

3.16. Acquired Privileges

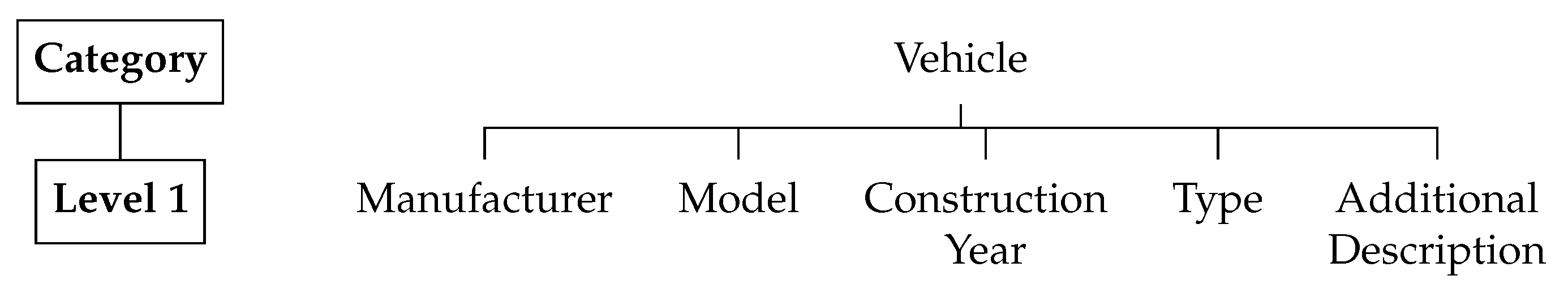

3.17. Vehicle

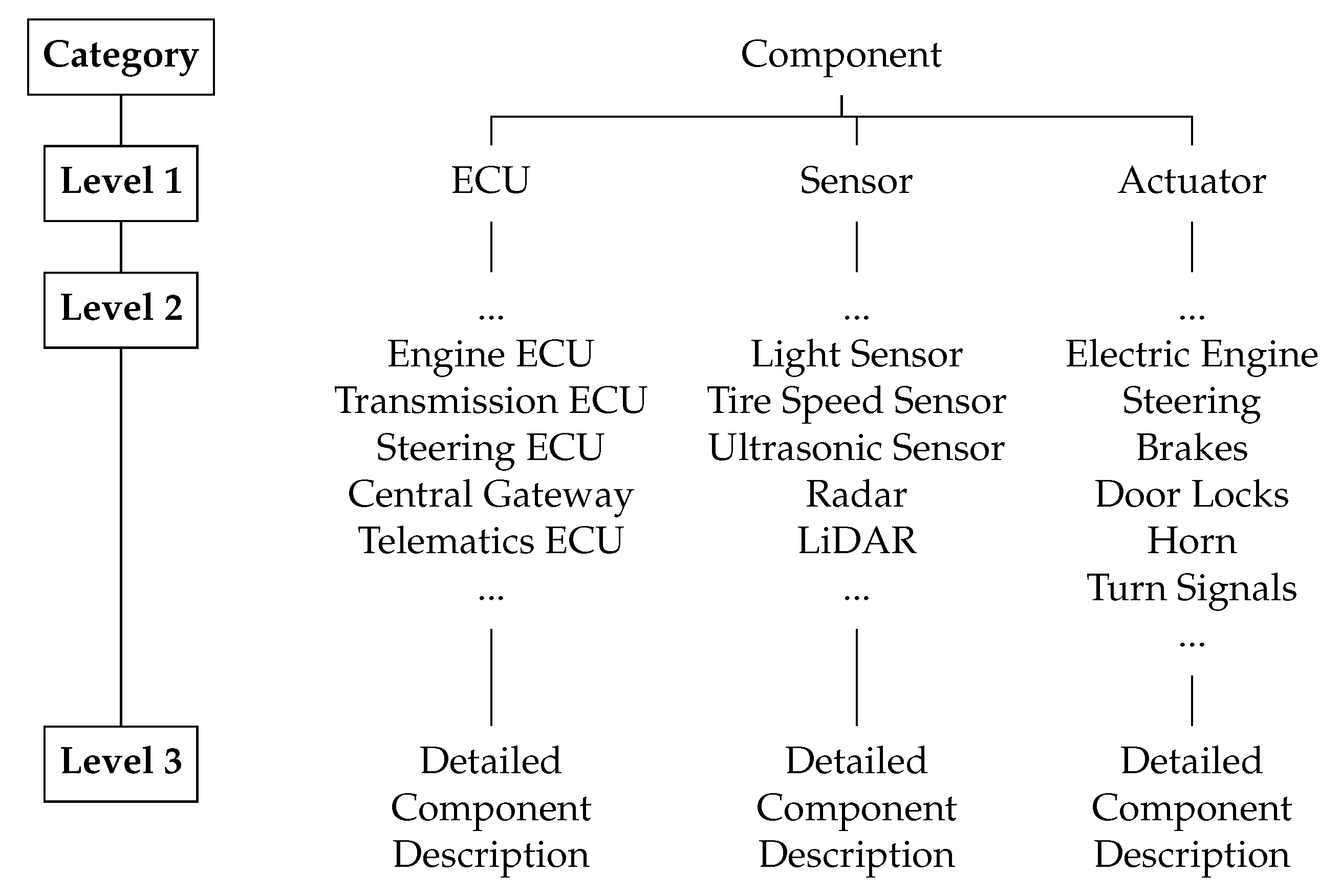

3.18. Component

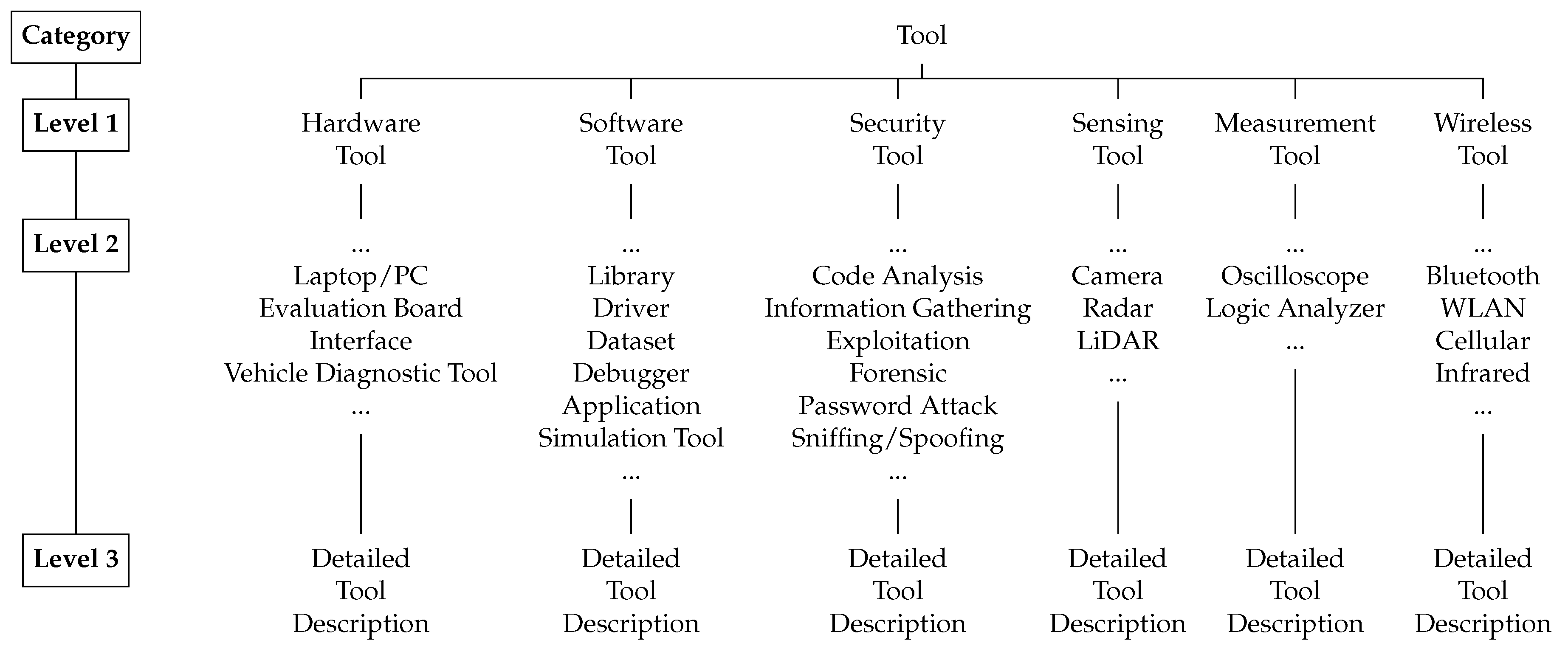

3.19. Tool

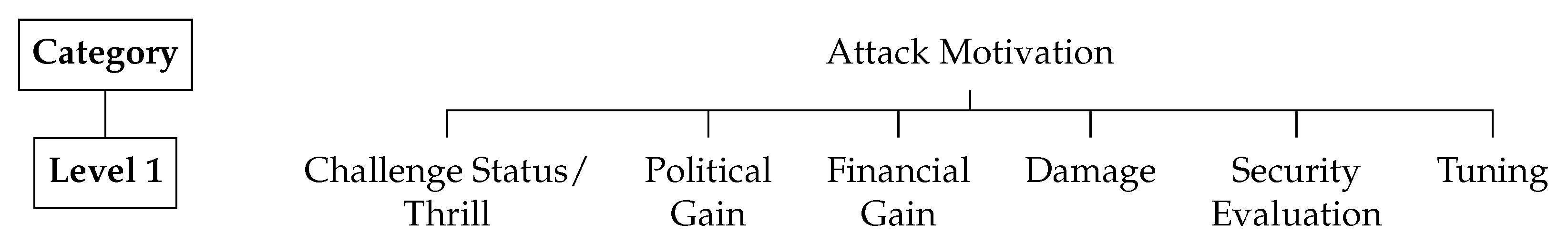

3.20. Attack Motivation

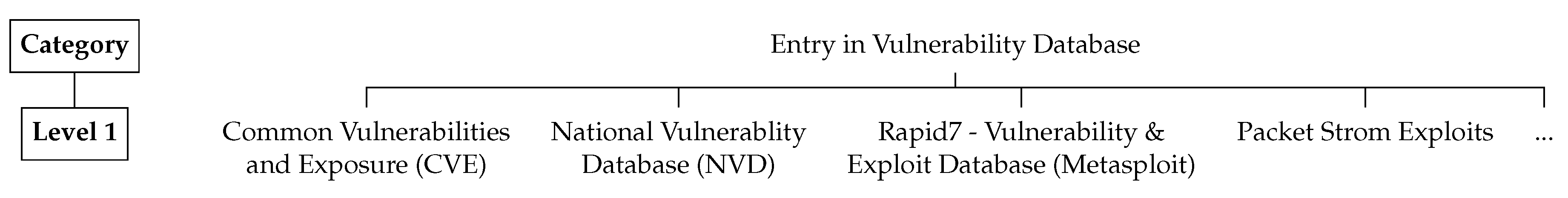

3.21. Entry in Vulnerability Database

3.22. Rating

3.23. Exploitability
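The Rating and Exploitability entries in the classified examples are CVSS v3.0 values. The Python function below sketches how such numbers arise by implementing the CVSS v3.0 base-score equations with the metric weights from the FIRST specification. The function name `base_and_exploitability` is hypothetical. The two vectors in the usage lines are assumptions: each is one choice of metrics that reproduces a published pair, (7.4, 2.84) and (6.8, 1.62). They are not necessarily the vectors the authors selected.

```python
import math

# CVSS v3.0 base metric weights (FIRST CVSS v3.0 specification).
AV = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2}
AC = {"L": 0.77, "H": 0.44}
PR_UNCHANGED = {"N": 0.85, "L": 0.62, "H": 0.27}
PR_CHANGED = {"N": 0.85, "L": 0.68, "H": 0.50}  # used when Scope is Changed
UI = {"N": 0.85, "R": 0.62}
CIA = {"H": 0.56, "L": 0.22, "N": 0.0}


def roundup(x: float) -> float:
    """CVSS v3.0 Roundup: smallest number with one decimal that is >= x.
    (CVSS v3.1 later redefined Roundup to avoid floating-point artifacts.)"""
    return math.ceil(x * 10) / 10


def parse(vector: str) -> dict[str, str]:
    """Parse 'CVSS:3.0/AV:A/AC:L/...' into {'AV': 'A', 'AC': 'L', ...}."""
    prefix, *metrics = vector.split("/")
    if prefix != "CVSS:3.0":
        raise ValueError("expected a CVSS:3.0 vector string")
    return dict(metric.split(":") for metric in metrics)


def base_and_exploitability(vector: str) -> tuple[float, float]:
    """Return (base score, exploitability sub-score rounded to 2 decimals)."""
    m = parse(vector)
    changed = m["S"] == "C"
    pr = (PR_CHANGED if changed else PR_UNCHANGED)[m["PR"]]
    exploitability = 8.22 * AV[m["AV"]] * AC[m["AC"]] * pr * UI[m["UI"]]

    # Impact sub-score base from the three CIA impact metrics.
    iss = 1 - (1 - CIA[m["C"]]) * (1 - CIA[m["I"]]) * (1 - CIA[m["A"]])
    if changed:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    else:
        impact = 6.42 * iss

    if impact <= 0:
        base = 0.0
    elif changed:
        base = roundup(min(1.08 * (impact + exploitability), 10))
    else:
        base = roundup(min(impact + exploitability, 10))
    return base, round(exploitability, 2)


# Assumed vectors that reproduce the two published rating/exploitability pairs.
print(base_and_exploitability("CVSS:3.0/AV:A/AC:L/PR:N/UI:N/S:C/C:N/I:N/A:H"))  # (7.4, 2.84)
print(base_and_exploitability("CVSS:3.0/AV:A/AC:H/PR:N/UI:N/S:U/C:N/I:H/A:H"))  # (6.8, 1.62)
```

The exploitability value depends only on the four attack-vector metrics. This is why 2.84 and 1.62 each pin down AV, AC, PR and UI fairly tightly. The impact metrics and Scope remain a modelling choice.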

4. Example

5. Discussion

6. Conclusions and Future Work

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Maurer, M.; Gerdes, J.C.; Lenz, B.; Winner, H. Autonomes Fahren: Technische, Rechtliche und Gesellschaftliche Aspekte; Springer-Verlag: Berlin, Germany, 2015.

- IEEE. IEEE Std 802.11ak-2018 (Amendment to IEEE Std 802.11(TM)-2016 as Amended by IEEE Std 802.11ai(TM)-2016, IEEE Std 802.11ah(TM)-2016, and IEEE Std 802.11aj(TM)-2018): IEEE Standard for Information Technology-Telecommunications and Information Exchange Betwee; IEEE: Piscataway, NJ, USA, 2018.

- Bluetooth Special Interest Group. Bluetooth Core Specification v5.0. 2018. Available online: https://www.bluetooth.com/specifications/bluetooth-core-specification (accessed on 28 March 2019).

- Dürrwang, J.; Braun, J.; Rumez, M.; Kriesten, R.; Pretschner, A. Enhancement of Automotive Penetration Testing with Threat Analyses Results. SAE Int. J. Transp. Cybersecur. Priv. 2018, 1, 91–112.

- Francillon, A.; Danev, B.; Capkun, S. Relay attacks on passive keyless entry and start systems in modern cars. In Proceedings of the Network and Distributed System Security Symposium (NDSS), San Diego, CA, USA, 6–9 February 2011.

- Keen Lab. Experimental Security Assessment of BMW Cars: A Summary Report. 2017. Available online: https://keenlab.tencent.com/en/whitepapers/Experimental_Security_Assessment_of_BMW_Cars_by_KeenLab.pdf (accessed on 17 April 2019).

- Nilsson, D.K.; Larson, U.E.; Picasso, F.; Jonsson, E. A First Simulation of Attacks in the Automotive Network Communications Protocol FlexRay. In Proceedings of the International Workshop on Computational Intelligence in Security for Information Systems CISIS’08; Corchado, E., Zunino, R., Gastaldo, P., Herrero, Á., Eds.; Springer: Berlin/Heidelberg, Germany, 2009; Volume 53, pp. 84–91.

- Petit, J.; Stottelaar, B.; Feiri, M.; Kargl, F. Remote attacks on automated vehicles sensors: Experiments on camera and lidar. Black Hat Europe 2015, 11, 2015.

- Ring, M.; Dürrwang, J.; Sommer, F.; Kriesten, R. Survey on vehicular attacks - building a vulnerability database. In Proceedings of the 2015 IEEE International Conference on Vehicular Electronics and Safety (ICVES), Yokohama, Japan, 5–7 November 2015; pp. 208–212.

- Spaar, D. Auto, öffne dich. Sicherheitslücken bei BMWs ConnectedDrive C 2015, 5, 15.

- Verdult, R.; Garcia, F.D.; Balasch, J. Gone in 360 seconds: Hijacking with Hitag2. In Proceedings of the 21st USENIX Security Symposium (USENIX Security 12), Bellevue, WA, USA, 8–10 August 2012; pp. 237–252.

- Verdult, R.; Garcia, F.D.; Ege, B. Dismantling Megamos Crypto: Wirelessly Lockpicking a Vehicle Immobilizer. In Proceedings of the USENIX Security Symposium, Washington, DC, USA, 14–16 August 2013; pp. 703–718.

- Woo, S.; Jo, H.J.; Lee, D.H. A Practical Wireless Attack on the Connected Car and Security Protocol for In-Vehicle CAN. IEEE Trans. Intell. Transp. Syst. 2014, 1–14.

- Miller, C.; Valasek, C. Adventures in automotive networks and control units. Def Con 2013, 21, 260–264.

- Miller, C.; Valasek, C. Remote exploitation of an unaltered passenger vehicle. Black Hat USA 2015, 2015, 91.

- Koscher, K.; Czeskis, A.; Roesner, F.; Patel, S.; Kohno, T.; Checkoway, S.; McCoy, D.; Kantor, B.; Anderson, D.; Shacham, H.; et al. Experimental Security Analysis of a Modern Automobile. In Proceedings of the 2010 IEEE Symposium on Security and Privacy, Berkeley/Oakland, CA, USA, 16–19 May 2010; pp. 447–462.

- Checkoway, S.; McCoy, D.; Kantor, B.; Anderson, D.; Shacham, H.; Savage, S.; Koscher, K.; Czeskis, A.; Roesner, F.; Kohno, T.; et al. Comprehensive Experimental Analyses of Automotive Attack Surfaces. In Proceedings of the USENIX Security Symposium, San Francisco, CA, USA, 8–9 August 2011.

- SAE Vehicle Electrical System Security Committee. SAE J3061-Cybersecurity Guidebook for Cyber-Physical Automotive Systems; SAE International: Warrendale, PA, USA, 2016.

- Barber, A. Status of work in Process on ISO/SAE 21434 Automotive Cybersecurity Standard; ISO SAE International: Geneva, Switzerland, 2018; Volume 10.

- Upstream Security Ltd. Smart Mobility Cyber Attacks Repository. 2019. Available online: https://www.upstream.auto/research/automotive-cybersecurity/ (accessed on 28 March 2019).

- Hochschule Karlsruhe - Technik und Wirtschaft. Sichere Datenverarbeitung beim autonomen Fahren: Starke IT-Sicherheit für das Auto der Zukunft—Forschungsverbund entwickelt neue Ansätze. Available online: https://www.hs-karlsruhe.de/presse/secforcars/ (accessed on 28 March 2019).

- Bundesministerium für Bildung und Forschung. SecForCARs: Sicherheit für vernetzte, autonome Fahrzeuge. Available online: https://www.forschung-it-sicherheit-kommunikationssysteme.de/projekte/sicherheit-fuer-vernetzte-autonome-fahrzeuge (accessed on 28 March 2019).

- Lee, E.A. Cyber Physical Systems: Design Challenges. In Proceedings of the IEEE Symposium on Object/Component/Service-Oriented Real-Time Distributed Computing, Orlando, FL, USA, 5–7 May 2008; pp. 363–369.

- King, J.D. Passive Remote Keyless Entry System. US Patent 6,236,333, 22 May 2001.

- Eisenbarth, T.; Kasper, T.; Moradi, A.; Paar, C.; Salmasizadeh, M.; Shalmani, M.T.M. On the Power of Power Analysis in the Real World: A Complete Break of the KeeLoq Code Hopping Scheme. In Advances in Cryptology, CRYPTO 2008; Lecture Notes in Computer Science, Volume 5157; Springer: Berlin, Germany, 2008; pp. 203–220.

- Rüsberg, K. Keyless Gone: Autodiebe Tricksen Kontaktlose Schließsysteme aus. 2015. Available online: https://www.heise.de/ct/ausgabe/2015-26-Autodiebe-tricksen-kontaktlose-Schliesssysteme-aus-3013915.html (accessed on 28 March 2019).

- Courtois, N.T.; Bard, G.V.; Wagner, D. Algebraic and Slide Attacks on KeeLoq. In Fast Software Encryption; Nyberg, K., Ed.; Springer: Berlin/Heidelberg, Germany, 2008; Volume 5086, pp. 97–115.

- Miller, C.; Valasek, C. Can message injection. In OG Dynamite Edition; 2016; Available online: http://illmatics.com/can%20message%20injection.pdf (accessed on 17 April 2019).

- Hoppe, T.; Kiltz, S.; Dittmann, J. Security Threats to Automotive CAN Networks—Practical Examples and Selected Short-Term Countermeasures. In Computer Safety, Reliability, and Security; Harrison, M.D., Sujan, M.A., Eds.; Springer: Berlin, Germany; New York, NY, USA, 2008; Volume 5219, pp. 235–248.

- Miller, C.; Valasek, C. A survey of remote automotive attack surfaces. Black Hat USA 2014, 2014, 94.

- Foster, I.D.; Prudhomme, A.; Koscher, K.; Savage, S. Fast and Vulnerable: A Story of Telematic Failures. In Proceedings of the Workshop on Offensive Technologies (WOOT), Washington, DC, USA, 10–11 August 2015.

- Mahaffey, K. Hacking a Tesla Model S: What We Found and What We Learned. 2015. Available online: https://blog.lookout.com/hacking-a-tesla (accessed on 28 March 2019).

- Spill, D.; Bittau, A. BlueSniff: Eve Meets Alice and Bluetooth. WOOT 2007, 7, 1–10.

- SAE On-Road Automated Vehicle Standards Committee. Taxonomy and Definitions for Terms Related to On-Road Motor Vehicle Automated Driving Systems; SAE International: Warrendale, PA, USA, 2014.

- Sitawarin, C.; Bhagoji, A.N.; Mosenia, A.; Chiang, M.; Mittal, P. DARTS: Deceiving Autonomous Cars with Toxic Signs. Available online: http://arxiv.org/pdf/1802.06430v3 (accessed on 28 March 2019).

- Eykholt, K.; Evtimov, I.; Fernandes, E.; Li, B.; Rahmati, A.; Xiao, C.; Prakash, A.; Kohno, T.; Song, D. Robust Physical-World Attacks on Deep Learning Models. Available online: http://arxiv.org/pdf/1707.08945v5 (accessed on 28 March 2019).

- Sommer, F.; Dürrwang, J. IEEM-HsKA/AAD: Automotive Attack Database (AAD). 2019. Available online: https://github.com/IEEM-HsKA/AAD (accessed on 28 March 2019).

- Booth, H.; Rike, D.; Witte, G. The National Vulnerability Database (NVD): Overview. Available online: https://nvd.nist.gov/ (accessed on 28 March 2019).

- MITRE Corporation. Common Vulnerabilities and Exposures. 2005. Available online: https://cve.mitre.org/ (accessed on 28 March 2019).

- Australia Cyber Emergency Response Team. Security Bulletins. Available online: https://www.auscert.org.au/bulletins/ (accessed on 28 March 2019).

- Computer Emergency Response Team Coordination Center of China. China National Vulnerability Database (CNVD). Available online: http://www.cnvd.org.cn/ (accessed on 2 March 2019).

- China Information Security Evaluation Center. China National Vulnerability Database of Information Security (CNNVD). Available online: http://www.cnnvd.org.cn/ (accessed on 2 March 2019).

- Packet Storm. Exploits. Available online: https://packetstormsecurity.com/ (accessed on 28 March 2019).

- Offensive Security. Exploit Database. Available online: https://www.exploit-db.com/ (accessed on 28 March 2019).

- Rapid7. Vulnerability and Exploit Database. Available online: https://www.rapid7.com/db/ (accessed on 28 March 2019).

- Smiraglia, R.P. The Elements of Knowledge Organization; Springer: Berlin, Germany, 2014.

- Bundesamt für Sicherheit in der Informationstechnik. Leitfaden IT-Forensik. Available online: https://www.bsi.bund.de/SharedDocs/Downloads/DE/BSI/Cyber-Sicherheit/Themen/Leitfaden_IT-Forensik.pdf?__blob=publicationFile&v=2 (accessed on 28 March 2019).

- Weber, S.; Karger, P.A.; Paradkar, A. A software flaw taxonomy: Aiming tools at security. ACM SIGSOFT Softw. Eng. Notes 2005, 30, 1–7.

- Abbott, R.P.; Chin, J.S.; Donnelley, J.E.; Konigsford, W.L.; Tokubo, S.; Webb, D.A. Security analysis and enhancements of computer operating systems. Available online: https://nvlpubs.nist.gov/nistpubs/Legacy/IR/nbsir76-1041.pdf (accessed on 17 April 2019).

- Aslam, T. A Taxonomy of Security Faults in the Unix Operating System. Master’s Thesis, Purdue University, West Lafayette, Indiana, 1995.

- Aslam, T.; Krsul, I.; Spafford, E.H. Use of a Taxonomy of Security Faults. 1996. Available online: http://citeseerx.ist.psu.edu/viewdoc/summary?doi=10.1.1.140.5631 (accessed on 17 April 2019).

- Bisbey, R.; Hollingworth, D. Protection Analysis: Final Report; ISI/SR-78-13, Information Sciences Inst; 1978; Volume 3. Available online: https://www.ncjrs.gov/App/Publications/abstract.aspx?ID=57682 (accessed on 17 April 2019).

- Landwehr, C.E.; Bull, A.R.; McDermott, J.P.; Choi, W.S. A taxonomy of computer program security flaws. ACM Comput. Surv. 1994, 26, 211–254.

- Novak, J.; Krajnc, A.; Žontar, R. Taxonomy of static code analysis tools. In Proceedings of the 33rd International Convention MIPRO, Opatija, Croatia, 24–28 May 2010; pp. 418–422.

- Lindqvist, U.; Jonsson, E. How to systematically classify computer security intrusions. In Proceedings of the IEEE Symposium on Security and Privacy, Oakland, CA, USA, 4–7 May 1997; pp. 154–163.

- Neumann, P.G.; Parker, D.B. A summary of computer misuse techniques. In Proceedings of the 12th National Computer Security Conference, Baltimore, MD, USA, 10–13 October 1989; pp. 396–407.

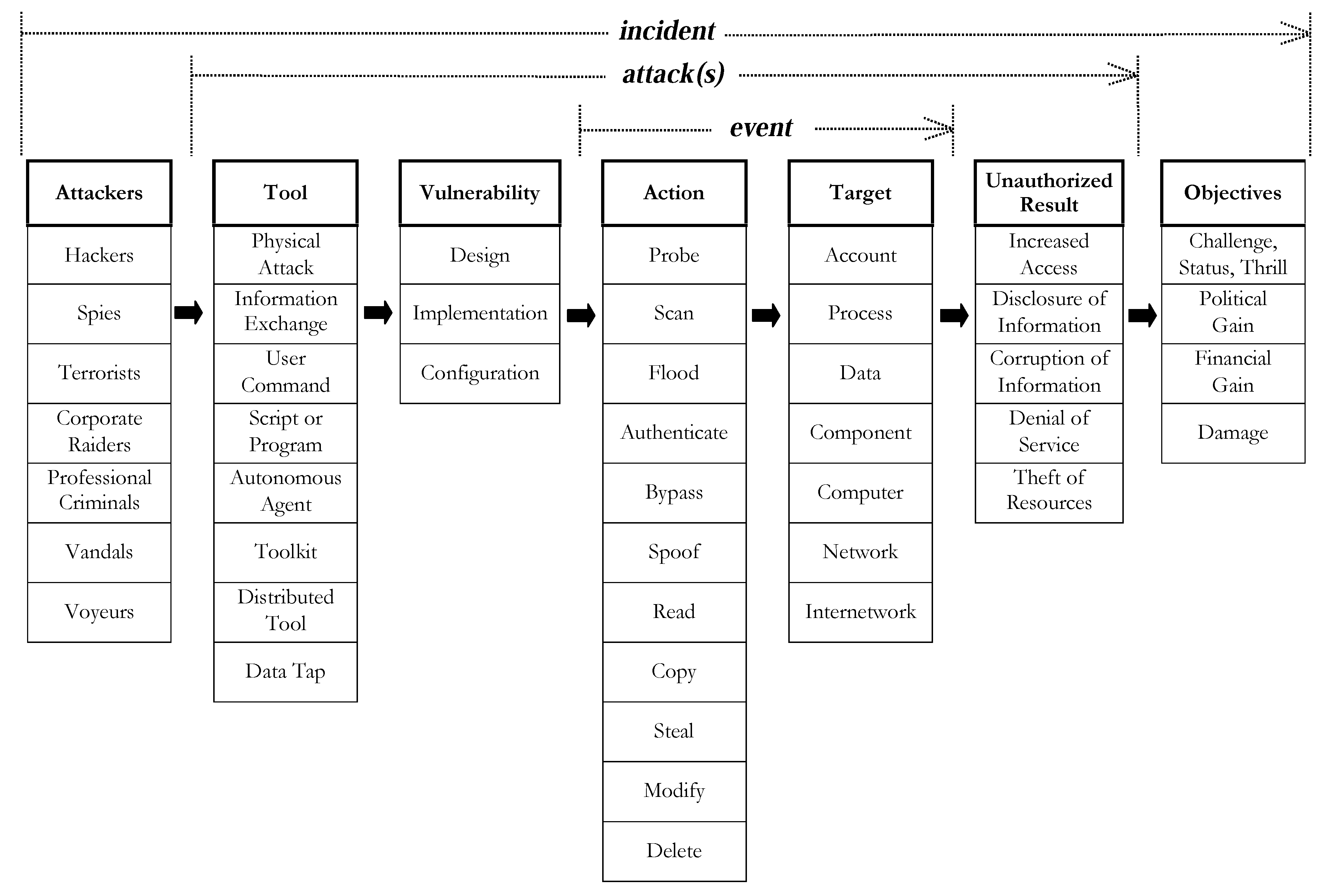

- Howard, J.D. An Analysis of Security Incidents on the Internet 1989–1995; Carnegie-Mellon Univ.: Pittsburgh, PA, USA, 1997.

- Howard, J.D.; Longstaff, T.A. A Common Language for Computer Security Incidents; Sandia National Labs.: Livermore, CA, USA, 1998.

- Hoppe, T.; Dittmann, J. Sniffing/Replay Attacks on CAN Buses: A simulated attack on the electric window lift classified using an adapted CERT taxonomy. In Proceedings of the 2nd Workshop on Embedded Systems Security (WESS), Salzburg, Austria, 4 October 2007; pp. 1–6.

- Thing, V.L.L.; Wu, J. Autonomous vehicle security: A taxonomy of attacks and defences. In Proceedings of the 2016 IEEE International Conference on Internet of Things (iThings) and IEEE Green Computing and Communications (GreenCom) and IEEE Cyber, Physical and Social Computing (CPSCom) and IEEE Smart Data (SmartData), Chengdu, China, 15–18 December 2016; pp. 164–170.

- Bundesamt für Sicherheit in der Informationstechnik. Cyber-Sicherheit: BSI—Leitfaden IT-Forensik. Available online: https://www.bsi.bund.de/DE/Themen/Cyber-Sicherheit/Dienstleistungen/IT-Forensik/forensik_node.html (accessed on 28 March 2019).

- Arkin, B.; Stender, S.; McGraw, G. Software penetration testing. IEEE Secur. Priv. 2005, 3, 84–87.

- Kohnfelder, L.; Garg, P. The STRIDE Threat Model. Available online: https://docs.microsoft.com/en-us/previous-versions/commerce-server/ee823878(v=cs.20) (accessed on 28 March 2019).

- ISO. 14229: 2006(E)–Road Vehicles—Unified Diagnostic Services (UDS)—Specification and Requirements. 2015. Available online: https://www.iso.org/standard/45293.html (accessed on 17 April 2019).

- ISO. 11898-1: 2015–Road Vehicles–Controller Area Network (CAN)—Part 1: Data Link Layer and Physical Signalling. 2015. Available online: https://www.iso.org/standard/63648.html (accessed on 17 April 2019).

- Katz, J.; Menezes, A.J.; van Oorschot, P.C.; Vanstone, S.A. Handbook of Applied Cryptography; CRC Press: Boca Raton, FL, USA, 1996.

- Charniak, E. Introduction to Artificial Intelligence; Pearson Education: Delhi, India, 1985.

- Huang, S.; Papernot, N.; Goodfellow, I.; Duan, Y.; Abbeel, P. Adversarial Attacks on Neural Network Policies. Available online: http://arxiv.org/pdf/1702.02284v1 (accessed on 28 March 2019).

- Shostack, A. STRIDE chart. 2007. Available online: https://www.microsoft.com/security/blog/2007/09/11/stride-chart/ (accessed on 28 March 2019).

- CVSS Special Interest Group. Common Vulnerability Scoring System v3.0: Specification Document. 2019. Available online: https://www.first.org/cvss/specification-document (accessed on 28 March 2019).

- Tsipenyuk, K.; Chess, B.; McGraw, G. Seven pernicious kingdoms: A taxonomy of software security errors. IEEE Secur. Priv. 2005, 3, 81–84.

- Christey, S. PLOVER- Preliminary List Of Vulnerability Examples for Researchers. 2006. Available online: https://cwe.mitre.org/documents/sources/PLOVER.pdf (accessed on 28 March 2019).

- MITRE Corporation. Common Weakness Enumeration. 2006. Available online: https://cwe.mitre.org/ (accessed on 28 March 2019).

- Zimmermann, W.; Schmidgall, R. Bussysteme in der Fahrzeugtechnik: Protokolle, Standards und Softwarearchitektur; Springer Vieweg: Wiesbaden, Germany, 2014.

- Popovici, K.; Jerraya, A. Hardware Abstraction Layer. In Hardware-Dependent Software; Springer: Berlin, Germany, 2009; pp. 67–94.

- Winter, M.; Goetz, H.; Brandes, C.; Roßner, T. Basiswissen Modellbasierter Test; dpunkt.verlag GmbH: Heidelberg, Germany, 2012.

- Ruddle, A.; Ward, D.; Weyl, B.; Idrees, S.; Roudier, Y.; Friedewald, M.; Leimbach, T.; Fuchs, A.; Gürgens, S.; Henniger, O.; et al. Deliverable D2.3: Security Requirements for Automotive On-Board Networks Based on Dark-Side Scenarios. 2009. Available online: https://evita-project.org/deliverables.html (accessed on 28 March 2019).

- Kerckhoffs, A. La cryptographie militaire. J. Sci. Mil. 1883, 9, 38.

- Offensive Security. Kali Linux Tools Listing. 2019. Available online: https://tools.kali.org/tools-listing (accessed on 28 March 2019).

- Kennedy, D.; O’Gorman, J.; Kearns, D.; Aharoni, M. Metasploit: The Penetration Tester’s Guide; No Starch Press: San Francisco, CA, USA, 2011.

- CERT Coordination Center. CERT Vulnerability Notes Database. 2001. Available online: https://www.kb.cert.org/vuls/ (accessed on 28 March 2019).

- ISO 26262. Road Vehicles—Functional Safety. 2018. Available online: https://www.iso.org/standard/68383.html (accessed on 28 March 2019).

- Ring, M.; Dürrwang, J. Angriffsklassifizierung. Available online: www.mmt.hs-karlsruhe.de/downloads/IEEM/Angriffsklassifizierung.ods (accessed on 28 March 2019).

- Bishop, M.; Bailey, D. A Critical Analysis of Vulnerability Taxonomies. 1996. Available online: http://www.selfsec.com/unclassified/hacking/A%20Critical%20Analysis%20of%20Vulnerability%20Taxonomies.pdf (accessed on 17 April 2019).

| Category | Level 1 | Level 2 | Level 3 |
|---|---|---|---|
| Description | Deactivation of the ECU communication over CAN by using a standard diagnostic function | | |
| Reference | Experimental Security Analysis of a Modern Automobile (Koscher et al.) | | |
| Year | 2010 | | |
| Attack Class | Denial of Service | None | None |
| Attack Base | Diagnostic Attack | | |
| Attack Type | Real Attack | | |
| Violated Security Property | Availability | | |
| Affected Asset | Reliability | | |
| Vulnerability | CWE-664: Improper Control of a Resource Through its Lifetime | CWE-284: Improper Access Control | Standard not met: the “disable CAN communication” command must not be executed while the vehicle is in motion |
| Interface | OBD | | |
| Consequence | ECU communication is disabled while the vehicle is being driven | | |
| Attack Path | Sending the standard command for disabling CAN communication via OBD | | |
| Requirement | Required Access/Connection | OBD | None |
| Restriction | None | None | None |
| Attack Level | Local Network | | |
| Acquired Privileges | Execute (Functional Component) | | |
| Vehicle | Vehicle brand not known | | |
| Component | Engine ECU | Electronic Brake ECU | Engine Control Module |
| | Electronic Brake ECU | Electronic Brake Control Module | None |
| | ... | ... | ... |
| Tool | Hardware Tool | Interface | CAN-to-USB-Converter |
| | Hardware Tool | Laptop/PC | None |
| | Measurement Tool | Oscilloscope | None |
| | Hardware Tool | Evaluation Board | Atmel AVR-CAN |
| | Hardware Tool | Interface | CANCapture ECOM Cable |
| | Software Tool | Communication Tool | CARSHARK |
| | Security Testing Tool | Reverse Engineering Tool | IDA Pro |
| Attack Motivation | Security Evaluation | | |
| Entry in Vulnerability Database | None | | |
| Rating | CVSS: 7.4 | | |
| Exploitability | CVSS Exploitability: 2.84 | | |

| Category | Level 1 | Level 2 | Level 3 |
|---|---|---|---|
| Description | Unauthorized flashing of malicious code on the engine ECU by using the diagnostic reprogramming routine | | |
| Reference | Adventures in Automotive Networks and Control Units (C. Miller and C. Valasek) | | |
| Year | 2013 | | |
| Attack Class | Tampering | Firmware Modification | None |
| Attack Base | Diagnostic Attack | | |
| Attack Type | Real Attack | | |
| Violated Security Property | Integrity | | |
| Affected Asset | Information Security | | |
| Vulnerability | CWE-693: Protection Mechanism Failure | CWE-287: Improper Authentication | Unauthorized reprogramming possible |
| Interface | OBD | | |
| Consequence | Flashing of malicious code on the ECU | | |
| Attack Path | Downloading a new calibration update for the ECU from the manufacturer and reverse engineering the Toyota Calibration Update Wizard (CUW). Monitoring the update process. Reverse engineering the update algorithm for calibration updates. Modifying the calibration update. Reflashing the ECU with the malicious update. | | |
| Requirement | Required Access/Connection | OBD | None |
| Restriction | Security Feature | Access Control | Security layer tied to the calibration version that allows overwriting only once |
| Attack Level | Local Network | | |
| Acquired Privileges | Full Control (Functional Component) | | |
| Vehicle | Toyota Prius (Year of Construction: 2010) | | |
| Component | Engine ECU | Engine Control Module | 2 CPUs, NEC v850, Renesas M16/C |
| Tool | Software Tool | Vehicle Diagnostic Software | Toyota Calibration Update Wizard (CUW) |
| | Hardware Tool | Interface | J2534 PassThru Device (CarDAQPlus) |
| | Hardware Tool | Interface | ECOM cable |
| | Hardware Tool | Laptop/PC | Windows PC |
| | Software Tool | Communication Tool | EcomCat Application |
| Attack Motivation | Security Evaluation | | |
| Entry in Vulnerability Database | None | | |
| Rating | CVSS: 6.8 | | |
| Exploitability | CVSS Exploitability: 1.62 | | |
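The Python sketch below makes the structure of the two classification tables concrete. It stores an abridged subset of their categories in a dataclass and treats each hierarchical category as a path of levels. A query for a general CWE entry therefore also returns attacks that are classified under a more specific child entry. The names `ClassifiedAttack`, `Path`, `under` and `query` are hypothetical. The sketch includes only a few categories and shortens the CWE chains to their IDs. It is not the format of the authors' Automotive Attack Database.

```python
from dataclasses import dataclass

# A hierarchical classification: (Level 1, Level 2, Level 3), trailing "None" levels dropped.
Path = tuple[str, ...]


@dataclass(frozen=True)
class ClassifiedAttack:
    """Hypothetical record holding a subset of the taxonomy's categories."""
    description: str
    year: int
    attack_class: Path
    violated_property: str
    vulnerability: Path  # CWE chain from general to specific
    interface: str
    attack_level: str
    cvss_base: float


def under(path: Path, prefix: Path) -> bool:
    """True if `path` lies at or below `prefix` in the category hierarchy."""
    return path[: len(prefix)] == prefix


def query(db: list[ClassifiedAttack], *, vulnerability: Path = (),
          interface: str = "") -> list[ClassifiedAttack]:
    """Filter by a CWE prefix and, if given, by an exact interface name."""
    return [
        attack for attack in db
        if under(attack.vulnerability, vulnerability)
        and (not interface or attack.interface == interface)
    ]


# The two classified examples from the tables above, abridged.
db = [
    ClassifiedAttack(
        description="Deactivation of the ECU communication over CAN by using a standard diagnostic function",
        year=2010,
        attack_class=("Denial of Service",),
        violated_property="Availability",
        vulnerability=("CWE-664", "CWE-284"),
        interface="OBD",
        attack_level="Local Network",
        cvss_base=7.4,
    ),
    ClassifiedAttack(
        description="Unauthorized flashing of malicious code on the engine ECU by using the diagnostic reprogramming routine",
        year=2013,
        attack_class=("Tampering", "Firmware Modification"),
        violated_property="Integrity",
        vulnerability=("CWE-693", "CWE-287"),
        interface="OBD",
        attack_level="Local Network",
        cvss_base=6.8,
    ),
]

print([a.year for a in query(db, interface="OBD")])             # [2010, 2013]
print([a.year for a in query(db, vulnerability=("CWE-664",))])  # [2010]
```

Prefix matching is what makes the multi-level layout useful for lookups. CWE-284 is recorded beneath CWE-664 in the first example, so a search on the broader weakness still finds that example.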

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Sommer, F.; Dürrwang, J.; Kriesten, R. Survey and Classification of Automotive Security Attacks. Information 2019, 10, 148. https://doi.org/10.3390/info10040148
