Learning Subword Embedding to Improve Uyghur Named-Entity Recognition

Abstract

1. Introduction

2. Related Works

3. Methodology

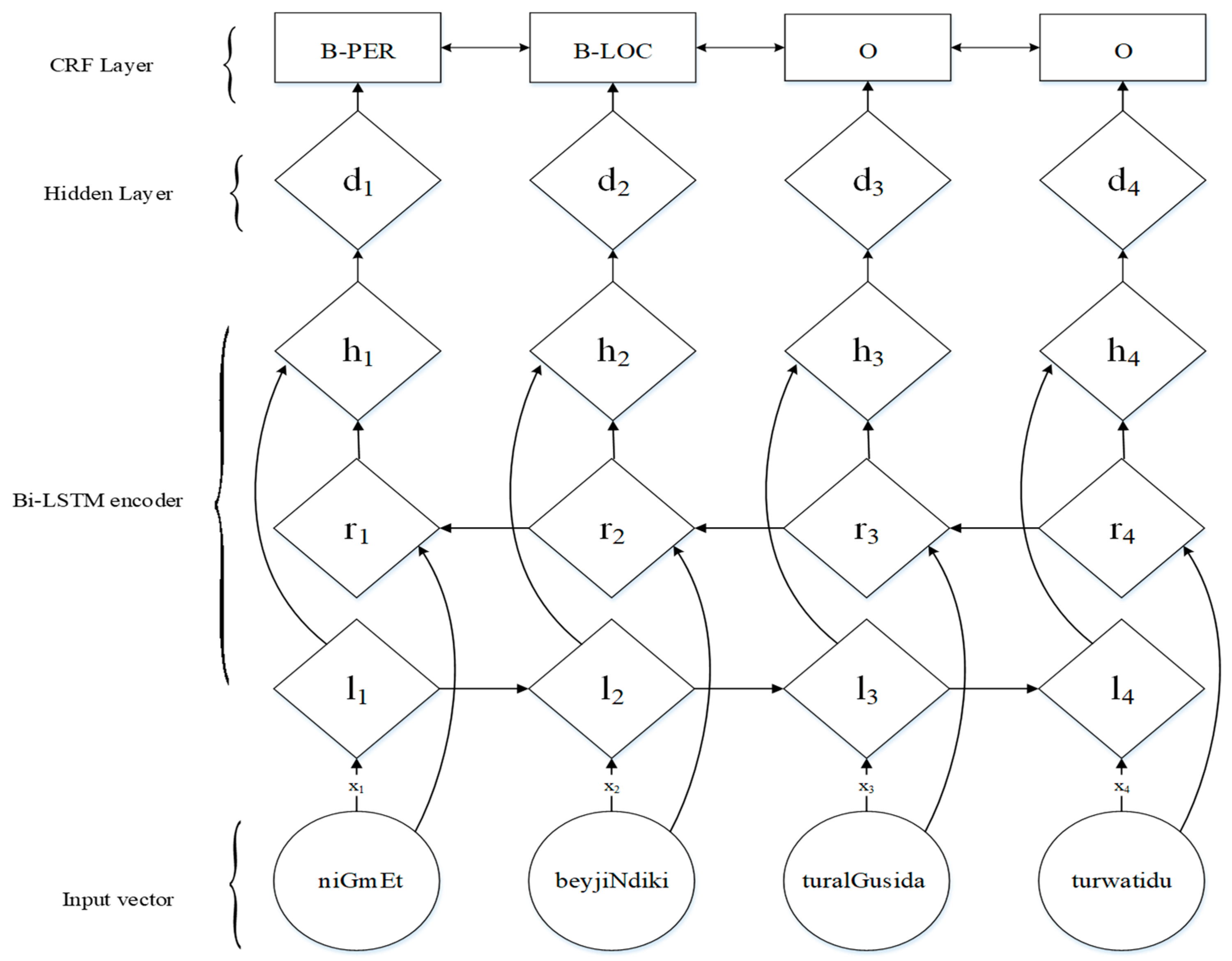

3.1. Word-Based Neural Model

3.2. Subword-Based Neural Model

Latin Uyghur: niGmEt beyjiNdiki turalGusida turwatidu. (niGmEt lives in Beijing.)

Single-point segmentation: niGmEt beyjiN/diki turalGu/sida tur/watidu

Multi-point segmentation: niGmEt beyjiN/diki turalGu/si/da tur/watidu
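The difference between the two segmentation styles in the example can be made concrete with a minimal sketch. It assumes only that `/` marks a subword boundary, as in the example; the helper name `split_subwords` is hypothetical and is not part of the authors' implementation.

```python
def split_subwords(segmented_sentence, boundary="/"):
    """Return one list of subword units per whitespace-separated word."""
    return [word.split(boundary) for word in segmented_sentence.split()]


# The two segmentations of the example sentence, copied from the text.
single_point = "niGmEt beyjiN/diki turalGu/sida tur/watidu"
multi_point = "niGmEt beyjiN/diki turalGu/si/da tur/watidu"

for name, sentence in (("single-point", single_point),
                       ("multi-point", multi_point)):
    words = split_subwords(sentence)
    # Flatten the per-word lists into the unit sequence a tagger would read.
    units = [unit for word in words for unit in word]
    max_units = max(len(word) for word in words)
    print(f"{name}: {units} (max units per word: {max_units})")
```

In this example, single-point segmentation yields at most two units per word, while multi-point segmentation splits turalGu/si/da into three.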

3.3. Features

3.3.1. Word Embedding

3.3.2. Subword Embedding

3.3.3. Character Embedding

4. Experiments

4.1. Datasets

4.2. Training and Evaluation

4.3. Experimental Results and Discussion

4.4. OOV Error Comparison with Different Models

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Lample, G.; Ballesteros, M.; Subramanian, S.; Kawakami, K.; Dyer, C. Neural architectures for named entity recognition. arXiv, 2016; arXiv:1603.01360.

- Ma, X.; Hovy, E. End-to-end sequence labeling via bi-directional LSTM-CNN-CRF. arXiv, 2016; arXiv:1603.01354.

- Dong, C.; Zhang, J.; Zong, C.; Hattori, M.; Di, H. Character-based LSTM-CRF with radical-level features for Chinese named entity recognition. In Natural Language Understanding and Intelligent Applications; Springer: Cham, Switzerland, 2016; pp. 239–250.

- Xiang, Y. Chinese Named Entity Recognition with Character-Word Mixed Embedding. In Proceedings of the 2017 ACM on Conference on Information and Knowledge Management, Singapore, 6–10 November 2017; pp. 2055–2058.

- Rozi, A.; Zong, C.; Mamateli, G.; Mahmut, R.; Hamdulla, A. Approach to recognizing Uyghur names based on conditional random fields. J. Tsinghua Univ. 2013, 53, 873–877.

- Tashpolat, N.; Wang, K.; Askar, H.; Palidan, T. Combination of statistical and rule-based approaches for Uyghur person-name recognition. Acta Autom. Sin. 2017, 43, 653–664.

- Maimaiti, M.; Abiderexiti, K.; Wumaier, A.; Yibulayin, T.; Wang, L. Uyghur location names recognition based on conditional random fields and rules. J. Chin. Inf. Process. 2017, 31, 110–118.

- Marcińczuk, M. Automatic construction of complex features in conditional random fields for named entities recognition. In Proceedings of the International Conference Recent Advances in Natural Language Processing, Hissar, Bulgaria, 7–9 September 2015.

- Gayen, V.; Sarkar, K. An HMM based named entity recognition system for Indian languages: JU system at ICON 2013. arXiv, 2014; arXiv:1405.7397.

- Kravalová, J.; Žabokrtský, Z. Czech Named Entity Corpus and SVM-Based Recognizer. In Proceedings of the 2009 Named Entities Workshop: Shared Task on Transliteration, Singapore, 7 August 2009; pp. 194–201.

- Collobert, R.; Weston, J.; Bottou, L.; Karlen, M.; Kavukcuoglu, K.; Kuksa, P. Natural language processing (almost) from scratch. J. Mach. Learn. Res. 2011, 12, 2493–2537.

- Huang, Z.; Xu, W.; Yu, K. Bidirectional LSTM-CRF models for sequence tagging. arXiv, 2015; arXiv:1508.01991.

- Chiu, J.P.C.; Nichols, E. Named entity recognition with bidirectional LSTM-CNNs. Trans. Assoc. Comput. Linguist. 2016, 4, 357–370.

- Rei, M.; Crichton, G.K.; Pyysalo, S. Attending to characters in neural sequence labeling models. arXiv, 2016; arXiv:1611.04361.

- Shen, Y.; Yun, H.; Lipton, Z.C.; Kronrod, Y.; Anandkumar, A. Deep active learning for named entity recognition. arXiv, 2017; arXiv:1707.05928.

- Gungor, O.; Yildiz, E.; Uskudarli, S.; Gungor, T. Morphological embeddings for named entity recognition in morphologically rich languages. arXiv, 2017; arXiv:1706.00506.

- Bharadwaj, A.; Mortensen, D.; Dyer, C.; Carbonell, J. Phonologically aware neural model for named entity recognition in low resource transfer settings. In Proceedings of the 2016 Conference on Empirical Methods in Natural Language Processing, Austin, TX, USA, 1–5 November 2016; pp. 1462–1472.

- Wang, W.; Bao, F.; Gao, G. Mongolian named entity recognition with bidirectional recurrent neural networks. In Proceedings of the 2016 IEEE 28th International Conference on Tools with Artificial Intelligence (ICTAI), San Jose, CA, USA, 6–8 November 2016; pp. 495–500.

- Maihefureti Rouzi, M.; Aili, M.; Yibulayin, T. Uyghur organization name recognition based on syntactic and semantic knowledge. Comput. Eng. Des. 2014, 35, 2944–2948.

- Halike, A.; Wumaier, H.; Yibulayin, T.; Abiderexiti, K.; Maimaiti, M. Research on recognition and translation of Chinese-Uyghur time and numeral and quantifier. J. Chin. Inf. Process. 2016, 30, 190–200.

- Bengio, Y.; Simard, P.; Frasconi, P. Learning long-term dependencies with gradient descent is difficult. IEEE Trans. Neural Netw. 1994, 5, 157–166.

- Hochreiter, S.; Schmidhuber, J. Long short-term memory. Neural Comput. 1997, 9, 1735–1780.

- Greff, K.; Srivastava, R.K.; Koutník, J.; Steunebrink, B.R.; Schmidhuber, J. LSTM: A Search Space Odyssey. IEEE Trans. Neural Netw. Learn. Syst. 2015, 28, 2222–2232.

- Graves, A.; Schmidhuber, J. Framewise phoneme classification with bidirectional LSTM and other neural network architectures. Neural Netw. 2005, 18, 602–610.

- Graves, A.; Mohamed, A.R.; Hinton, G. Speech recognition with deep recurrent neural networks. In Proceedings of the 2013 IEEE International Conference on Acoustics, Speech and Signal Processing, Vancouver, BC, Canada, 26–31 May 2013; pp. 6645–6649.

- Creutz, M.; Hirsimäki, T.; Kurimo, M.; Puurula, A.; Pylkkönen, J.; Siivola, V.; Varjokallio, M.; Arisoy, E.; Saraçlar, M.; Stolcke, A. Morph-based speech recognition and modeling of out-of-vocabulary words across languages. ACM Trans. Speech Lang. Process. 2007, 5, 3.

- Lai, S.; Liu, K.; He, S.; Zhao, J. How to generate a good word embedding. IEEE Intell. Syst. 2016, 31, 5–14.

- Maimaiti, M.; Wumaier, A.; Abiderexiti, K.; Wang, L.; Wu, H.; Yibulayin, T. Construction of Uyghur named entity corpus. In Proceedings of the Eleventh International Conference on Language Resources and Evaluation (LREC-2018), Miyazaki, Japan, 7–12 May 2018; p. 14.

- Wang, L.; Wumaier, A.; Maimaiti, M.; Abiderexiti, K.; Yibulayin, T. A semi-supervised approach to Uyghur named entity recognition based on CRF. J. Chin. Inf. Process. 2018, 32, 16–26, 33.

| Method | Segmentation Category | F1 |
|---|---|---|
| bi-LSTM | single-point | 90.61 |
| SRILM-Ngram | multi-point | 43.40 |
| MaxMatch | multi-point | 82 |

| Type | Sentence | Token | NE | PER | LOC | ORG |
|---|---|---|---|---|---|---|
| dataset | 39,027 | 1,152,645 (91,599) | 102,360 (48,792) | 28,469 (15,174) | 42,585 (14,842) | 31,306 (18,805) |
| train | 29,270 | 861,967 (77,665) | 76,787 (38,561) | 21,304 (12,061) | 32,011 (11,847) | 23,472 (14,652) |
| dev | 3902 | 115,689 (22,574) | 10,215 (6854) | 2842 (2142) | 4258 (2257) | 3115 (2457) |
| test | 5855 | 174,989 (29,639) | 15,358 (9713) | 4323 (3073) | 6316 (3166) | 4719 (3477) |
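As a quick consistency check on the dataset statistics, the following sketch sums the train, dev, and test counts and derives the split proportions from the sentence counts. Only the non-parenthesized sentence, token, and named-entity counts are used; what the parenthesized figures count is not stated in this excerpt, so they are left out. The variable names are illustrative.

```python
# (sentences, tokens, named entities) per split, copied from the table.
splits = {
    "train": (29270, 861967, 76787),
    "dev": (3902, 115689, 10215),
    "test": (5855, 174989, 15358),
}
# The "dataset" row of the same table.
total = (39027, 1152645, 102360)

# Column-wise sums over the three splits should reproduce the dataset row.
sums = tuple(sum(column) for column in zip(*splits.values()))
print("sums match dataset row:", sums == total)

# Share of sentences assigned to each split, rounded to two decimals.
ratios = {name: round(counts[0] / total[0], 2) for name, counts in splits.items()}
print(ratios)
```

The three splits add up exactly to the dataset row, and the sentence counts correspond to roughly a 75/10/15 split.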

| Segmentation Method | Dev PER | Dev LOC | Dev ORG | Dev AVE | Test PER | Test LOC | Test ORG | Test AVE |
|---|---|---|---|---|---|---|---|---|
| bi-LSTM | 93.70 | 88.75 | 87.43 | 89.72 | 93.46 | 87.66 | 86.79 | 89.02 |
| SRILM-Ngram | 93.42 | 88.73 | 87.46 | 89.65 | 92.72 | 87.16 | 86.16 | 88.42 |
| MaxMatch | 93.14 | 88.49 | 86.93 | 89.29 | 93.11 | 87.26 | 86.85 | 88.78 |

| Model | Input Embedding | DEV PER | DEV LOC | DEV ORG | DEV Total | TEST PER | TEST LOC | TEST ORG | TEST Total |
|---|---|---|---|---|---|---|---|---|---|
| CRF (Wang et al. 2018) | - | - | - | - | - | 91.65 | 85.72 | 85.91 | 87.43 |
| Word-based neural model | word embedding | 93.03 | 87.40 | 87.22 | 88.89 | 92.01 | 86.17 | 86.79 | 88.04 |
| | +char embedding | 94.47 | 89.19 | 87.82 | 90.24 | 94.63 | 87.80 | 87.04 | 89.49 |
| Subword-based neural model | subword embedding | 93.70 | 88.75 | 87.43 | 89.72 | 93.46 | 87.66 | 86.79 | 89.02 |
| | +char embedding | 95.00 | 89.83 | 87.59 | 90.57 | 94.17 | 88.45 | 86.79 | 89.55 |

| Datasets | Type | IV | OOTV | OOEV | OOBV |
|---|---|---|---|---|---|
| Word-Datasets | DEV | 10,581 | 4465 | 1607 | 1195 |
| | TEST | 15,718 | 6748 | 2569 | 1878 |
| Subword-Datasets | DEV | 13,818 | 4400 | 1844 | 1313 |
| | TEST | 20,497 | 6866 | 3045 | 2122 |
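The four column labels partition items by vocabulary membership. The sketch below shows one way such a partition could be computed, assuming the abbreviations follow the usage in Ma and Hovy's sequence-labeling work: IV (in both vocabularies), OOTV (out of the training vocabulary only), OOEV (out of the embedding vocabulary only), and OOBV (out of both). The function name and the toy vocabularies are hypothetical and are not taken from the authors' code or data.

```python
from collections import Counter


def oov_category(token, train_vocab, embedding_vocab):
    """Classify a token by membership in the training and embedding vocabularies."""
    in_train = token in train_vocab
    in_embedding = token in embedding_vocab
    if in_train and in_embedding:
        return "IV"    # seen in training and has a pretrained embedding
    if in_embedding:
        return "OOTV"  # has an embedding but was not seen in training
    if in_train:
        return "OOEV"  # seen in training but has no pretrained embedding
    return "OOBV"      # in neither vocabulary


# Toy vocabularies (hypothetical), built from units of the example sentence.
train_vocab = {"niGmEt", "tur"}
embedding_vocab = {"niGmEt", "beyjiN"}
tokens = ["niGmEt", "beyjiN", "tur", "watidu"]

counts = Counter(oov_category(t, train_vocab, embedding_vocab) for t in tokens)
print(dict(counts))
```

Applying the same function to word-level and subword-level vocabularies gives two separate partitions, one per segmentation level.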

| Model | Input Embedding | DEV IV | DEV OOTV | DEV OOEV | DEV OOBV | TEST IV | TEST OOTV | TEST OOEV | TEST OOBV |
|---|---|---|---|---|---|---|---|---|---|
| Baseline | - | - | - | - | - | 88.64 | 69.48 | 82.01 | 80.32 |
| Word-based neural model | word embedding | 97.33 | 79.63 | 79.04 | 77.42 | 96.82 | 77.37 | 74.47 | 75.35 |
| | +char embedding | 97.46 | 85.77 | 83.65 | 84.87 | 97.37 | 83.52 | 78.88 | 79.83 |
| Subword-based neural model | subword embedding | 97.54 | 82.26 | 81.33 | 79.87 | 97.32 | 81.13 | 75.90 | 76.79 |
| | +char embedding | 97.59 | 85.79 | 84.80 | 83.24 | 97.48 | 85.14 | 81.19 | 82.42 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Saimaiti, A.; Wang, L.; Yibulayin, T. Learning Subword Embedding to Improve Uyghur Named-Entity Recognition. Information 2019, 10, 139. https://doi.org/10.3390/info10040139
