2.2.2. The Neural Network Inversion Method

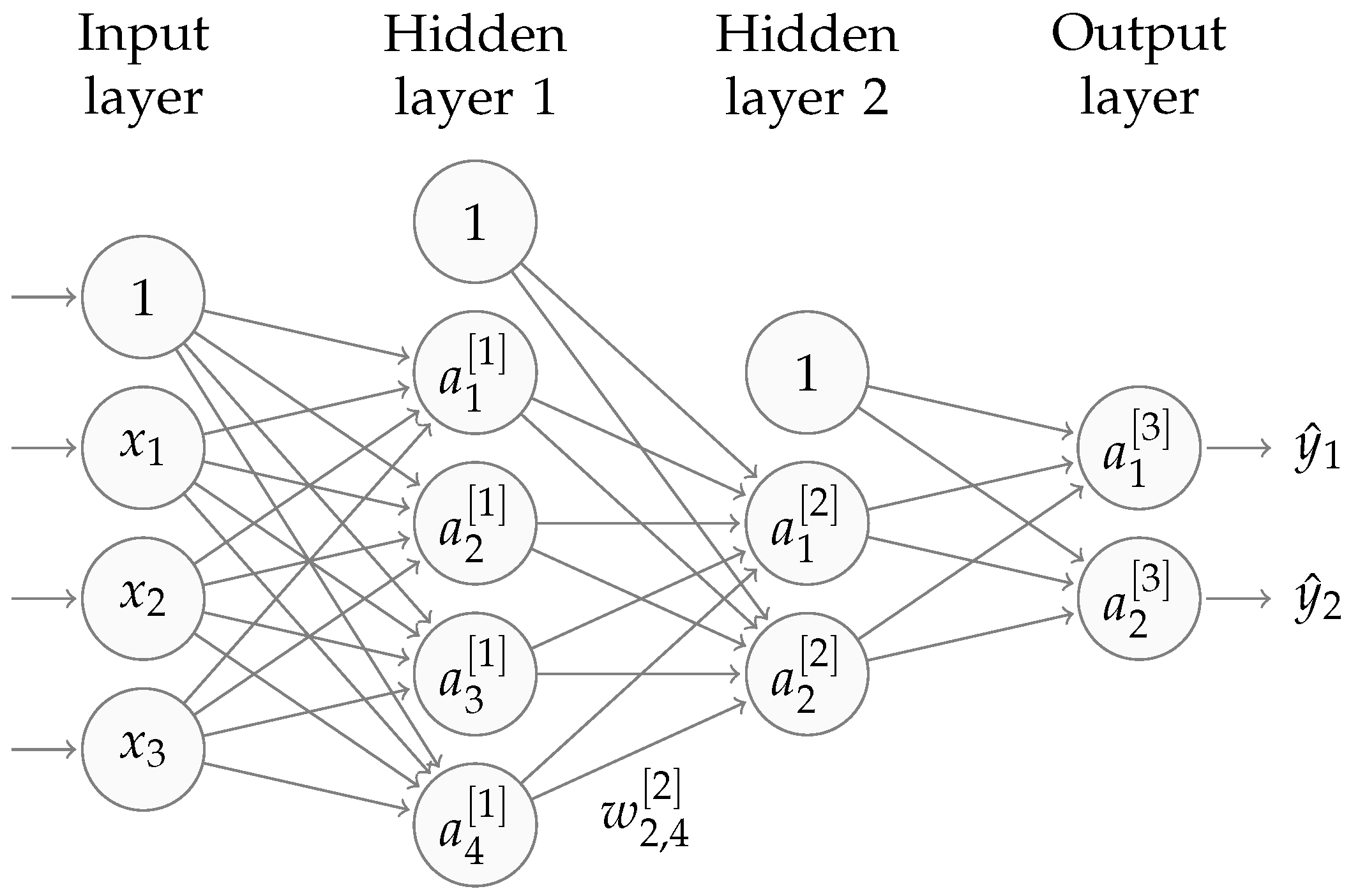

A neural network consists of an *input layer*, a set of *hidden layers* and an *output layer*, where each layer comprises a varying number of *nodes*. The number of nodes in the input and output layers depends on the specific problem, while the number in the hidden layers is set by the user. Furthermore, each node is connected to every node in the previous and following layers by a weight, $w_{ij}^{(l)}$, which denotes the weight between the $j$th node in layer $l-1$ and the $i$th node in layer $l$. At each layer, a bias vector, $\mathbf{b}^{(l)}$, is also added.

Figure 3 depicts a basic neural network.

The goal of a neural network is to learn appropriate values for the weights connecting the neurons, so that it accurately maps some input vector $\mathbf{x}$ to an output vector $\mathbf{y}$.

To train the neural network, a training set consisting of input and output data pairs, $(\mathbf{x}, \mathbf{y})$, was used. The training process included two steps: forward propagation and backward propagation.

In the forward propagation step, an input value $\mathbf{x}$ (which, in the following expression, is equivalent to $\mathbf{a}^{(0)}$) was propagated from left to right through the neural network. To do this, at hidden layer $l$, we calculated:

$$\mathbf{a}^{(l)} = g\left(\mathbf{z}^{(l)}\right), \tag{5}$$

where:

$$z_i^{(l)} = \sum_{j=1}^{n} w_{ij}^{(l)}\, a_j^{(l-1)} + b_i^{(l)},$$

for $i = 1, \dots, m$, where there are $m$ nodes in layer $l$ and $n$ in layer $l-1$. In Equation (5), $g$ is an *activation function*, usually a nonlinear function, which allows the algorithm to learn more complex nonlinear relationships; common choices include the sigmoid, ReLU, ELU [31] and tanh functions. This process was repeated until the output layer was reached; the generated value, $\hat{\mathbf{y}}$, was then compared with the training-set output value, $\mathbf{y}$, using a defined metric, here called the *cost function*, which measures the error in the prediction.
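The forward pass in Equation (5) can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the TensorFlow implementation used in this work: the layer sizes are hypothetical, and for simplicity the same activation $g$ is applied at every layer.

```python
import numpy as np


def forward(x, weights, biases, g=np.tanh):
    """Propagate an input vector through the network, layer by layer (Equation (5)).

    weights[l] has shape (m, n): m nodes in this layer, n nodes in the previous one.
    biases[l] has shape (m,). The same activation g is used at every layer (a simplification).
    """
    a = np.asarray(x, dtype=float)  # a^(0): the input vector
    for W, b in zip(weights, biases):
        z = W @ a + b  # z_i = sum_j w_ij * a_j + b_i
        a = g(z)       # a^(l) = g(z^(l))
    return a


# Usage with hypothetical layer sizes: 4 inputs, 8 hidden nodes, 3 outputs.
rng = np.random.default_rng(0)
sizes = [4, 8, 3]
weights = [rng.normal(size=(m, n)) for n, m in zip(sizes[:-1], sizes[1:])]
biases = [np.zeros(m) for m in sizes[1:]]
y_hat = forward(rng.normal(size=4), weights, biases)
print(y_hat.shape)  # (3,)
```

Each weight matrix is stored with one row per node in the current layer, so the sum over $j$ in Equation (5) becomes a single matrix-vector product.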

We then performed backpropagation, in which the error was propagated backwards (i.e., from right to left) through the neural network, using the chain rule, to find the derivatives of the cost function with respect to each weight and bias term. These derivatives were used by an optimisation algorithm, such as the Adam [32] or RMSProp optimisers, to update the weights and biases so that the prediction error decreased.
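As an illustration of the backward pass and the optimiser update, the sketch below applies the chain rule by hand to a hypothetical network with a single tanh hidden layer, a linear output layer and a mean-squared-error cost, then updates the parameters with the standard Adam rule using its commonly quoted default hyperparameters. In practice TensorFlow computes these derivatives automatically, so this is a minimal, illustrative sketch rather than the implementation used here.

```python
import numpy as np


def loss_and_grads(params, x, y):
    """MSE cost and its gradients for a one-hidden-layer network (tanh hidden, linear output)."""
    W1, b1, W2, b2 = params
    # Forward pass, keeping the intermediate values needed by the backward pass.
    a1 = np.tanh(W1 @ x + b1)
    y_hat = W2 @ a1 + b2
    M = y.size
    loss = float(np.sum((y_hat - y) ** 2) / M)
    # Backward pass: apply the chain rule from the output layer back towards the input.
    d_yhat = 2.0 * (y_hat - y) / M             # dC/dy_hat
    dW2, db2 = np.outer(d_yhat, a1), d_yhat
    d_z1 = (W2.T @ d_yhat) * (1.0 - a1 ** 2)   # tanh'(z) = 1 - tanh(z)^2
    dW1, db1 = np.outer(d_z1, x), d_z1
    return loss, [dW1, db1, dW2, db2]


class Adam:
    """Minimal Adam optimiser operating in place on a list of NumPy arrays."""

    def __init__(self, params, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
        self.lr, self.beta1, self.beta2, self.eps = lr, beta1, beta2, eps
        self.m = [np.zeros_like(p) for p in params]  # first-moment estimates
        self.v = [np.zeros_like(p) for p in params]  # second-moment estimates
        self.t = 0

    def step(self, params, grads):
        self.t += 1
        for i, (p, g) in enumerate(zip(params, grads)):
            self.m[i] = self.beta1 * self.m[i] + (1 - self.beta1) * g
            self.v[i] = self.beta2 * self.v[i] + (1 - self.beta2) * g ** 2
            m_hat = self.m[i] / (1 - self.beta1 ** self.t)  # bias correction
            v_hat = self.v[i] / (1 - self.beta2 ** self.t)
            p -= self.lr * m_hat / (np.sqrt(v_hat) + self.eps)  # updates the array in place


# Usage: fit a single hypothetical (x, y) pair and record the cost at each step.
rng = np.random.default_rng(1)
x, y = rng.normal(size=5), rng.normal(size=2)
params = [0.5 * rng.normal(size=(4, 5)), np.zeros(4), 0.5 * rng.normal(size=(2, 4)), np.zeros(2)]
opt = Adam(params, lr=0.01)
losses = []
for _ in range(500):
    loss, grads = loss_and_grads(params, x, y)
    losses.append(loss)
    opt.step(params, grads)
```

A finite-difference comparison on any single weight is a quick way to confirm that hand-written derivatives like these are correct.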

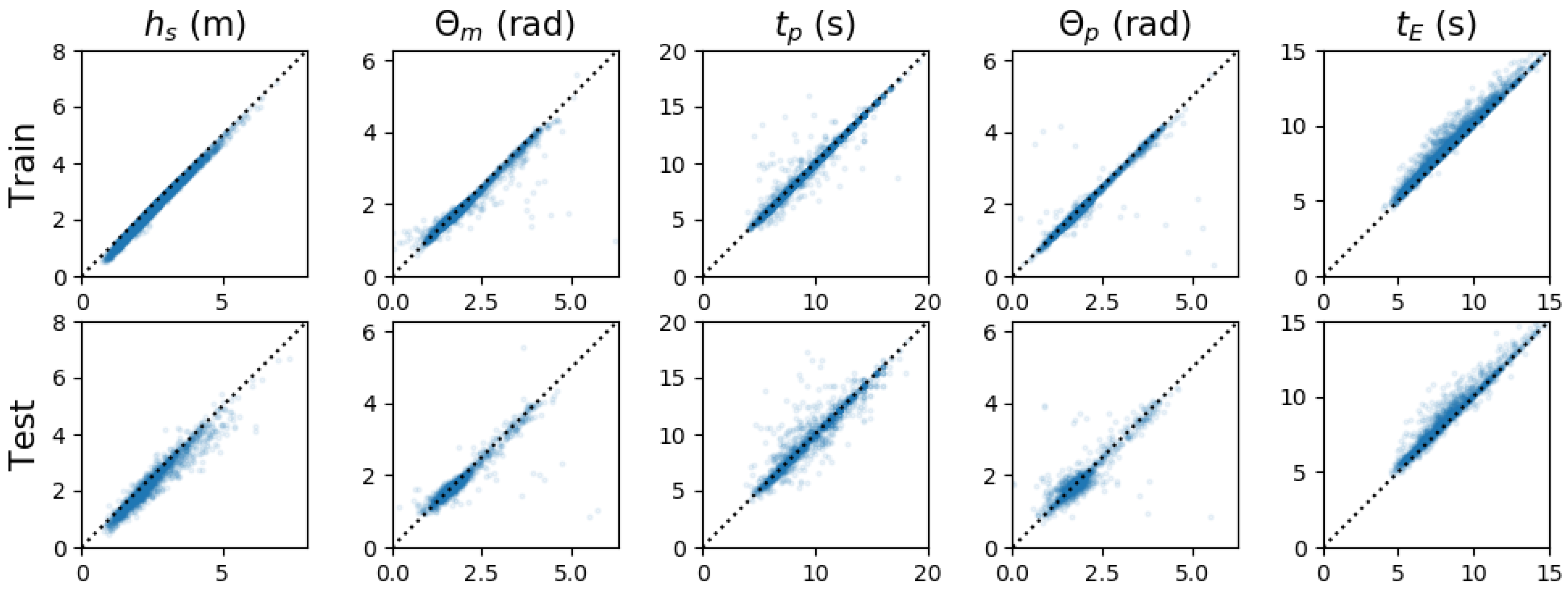

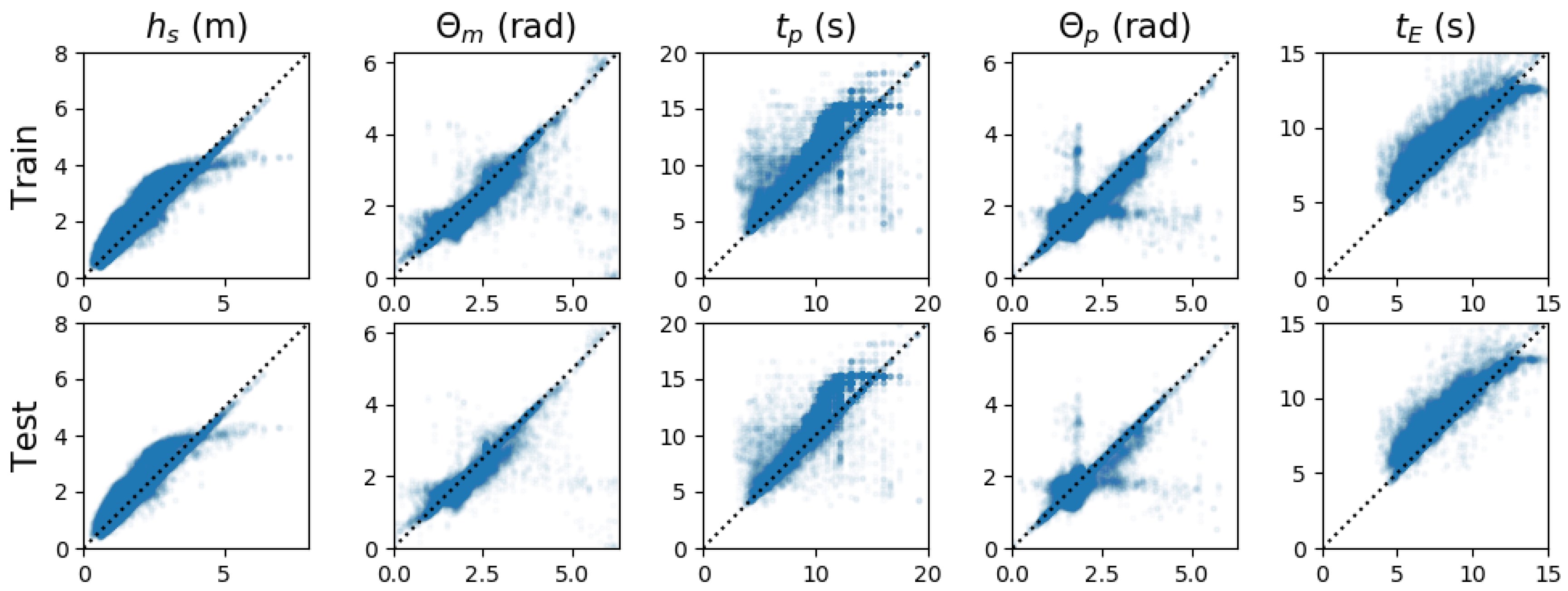

The forward/backward propagation process was repeated until a set number of iterations had been executed or a specified accuracy level had been reached. The learning phase was then stopped and the results were tested. To ensure that the test was unbiased, a test set was used: a reserved portion of the dataset on which the neural network has not been trained. Using the test set, we can identify whether the model is overfitting, i.e., whether the neural network performs significantly better on the training data than on the test data and so fails to generalise. In such a case, regularisation, which adds a penalty on model complexity (e.g., on large weight values) to the cost function, can be used to reduce overfitting. The test set is usually smaller than the training set, as the training phase requires as much data, with as much variation, as possible.
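A minimal sketch of the test-set procedure, assuming a random split of the data pairs: a fraction is held out, and overfitting shows up as a training cost much lower than the test cost. The L2 weight penalty shown is one common form of regularisation, used here as an illustrative assumption; the data below are placeholders with the SLNN dataset size.

```python
import numpy as np


def train_test_split(X, Y, test_fraction=0.33, seed=0):
    """Randomly reserve a fraction of the (x, y) pairs as a test set the network never trains on."""
    idx = np.random.default_rng(seed).permutation(len(X))
    n_test = int(round(test_fraction * len(X)))
    test, train = idx[:n_test], idx[n_test:]
    return X[train], Y[train], X[test], Y[test]


def mse(y_hat, y):
    return float(np.mean((np.asarray(y_hat, float) - np.asarray(y, float)) ** 2))


def l2_regularised_cost(y_hat, y, weights, lam=1e-3):
    """MSE plus an L2 penalty on the weights: one common form of regularisation."""
    return mse(y_hat, y) + lam * sum(float(np.sum(W ** 2)) for W in weights)


# Usage with placeholder data (4804 pairs, as for the SLNN dataset).
X = np.arange(4804, dtype=float).reshape(-1, 1)  # placeholder inputs (unique rows)
Y = 2.0 * X                                      # placeholder outputs
X_train, Y_train, X_test, Y_test = train_test_split(X, Y)
print(len(X_train), len(X_test))  # 3219 1585
```

After training, evaluating `mse` on both splits gives the comparison described above; a large gap between the two suggests overfitting.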

The performance of the neural network largely depends on its architecture; therefore, choosing the following parameters is important:

There is no fixed rule for choosing the optimal architecture; it is often a matter of investigating different combinations and finding one whose performance is within a given tolerance. A trade-off between training time and performance may arise, in which case the user must decide which is more important. A genetic algorithm can search a large set of combinations more efficiently than trying each one in turn, and one is used in this work; see Mitchell [33] for an introduction to the subject.
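The idea of a genetic search over architectures can be sketched as follows. The search space, the population settings and the fitness function are all hypothetical: in this work the fitness would be the test-set error of a trained network, which is replaced here by a cheap toy function so the sketch runs on its own. The operators (truncation selection, uniform crossover, random-reset mutation, elitism) are one simple choice among many.

```python
import random


def genetic_search(space, fitness, pop_size=10, generations=15, mutation_rate=0.2, seed=0):
    """Minimal genetic algorithm over a discrete hyperparameter space (lower fitness is better)."""
    rng = random.Random(seed)
    keys = list(space)
    cache = {}

    def score(genome):
        # Evaluating a genome means training a network, so cache repeated evaluations.
        key = tuple(genome[k] for k in keys)
        if key not in cache:
            cache[key] = fitness(genome)
        return cache[key]

    def crossover(a, b):
        # Uniform crossover: each gene is taken from either parent with equal probability.
        return {k: a[k] if rng.random() < 0.5 else b[k] for k in keys}

    def mutate(genome):
        # Each gene is resampled from the search space with probability mutation_rate.
        return {k: rng.choice(space[k]) if rng.random() < mutation_rate else v
                for k, v in genome.items()}

    population = [{k: rng.choice(space[k]) for k in keys} for _ in range(pop_size)]
    for _ in range(generations):
        ranked = sorted(population, key=score)
        parents = ranked[: max(2, pop_size // 2)]  # truncation selection
        children = [ranked[0]]                     # elitism: the best genome survives unchanged
        while len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            children.append(mutate(crossover(a, b)))
        population = children
    best = min(population, key=score)
    return best, score(best)


# Hypothetical search space and a toy stand-in for "train the network, return test-set MSE".
SPACE = {
    "hidden_layers": [1, 2, 3, 4],
    "nodes_per_layer": [128, 256, 512, 1024],
    "activation": ["relu", "elu", "tanh", "sigmoid"],
}


def toy_fitness(g):
    return (abs(g["hidden_layers"] - 3) + abs(g["nodes_per_layer"] - 512) / 512
            + (0.0 if g["activation"] == "elu" else 1.0))


best, best_score = genetic_search(SPACE, toy_fitness)
print(best, best_score)
```

Caching matters in the real setting, because every fitness evaluation is a full training run.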

In this work, the goal is to train a neural network (implemented in Python 3 using the TensorFlow framework, https://www.tensorflow.org/) that can map radar Doppler spectra to the corresponding ocean spectra. Although a neural network may be able to learn to resolve the directional ambiguity that exists for a single radar, this has yet to be tested. Therefore, to resolve the directional ambiguity, two radars will be used to provide two spectra for each location. Consequently, the neural network will have two radar Doppler spectra as its input and the corresponding directional ocean spectrum as its output.

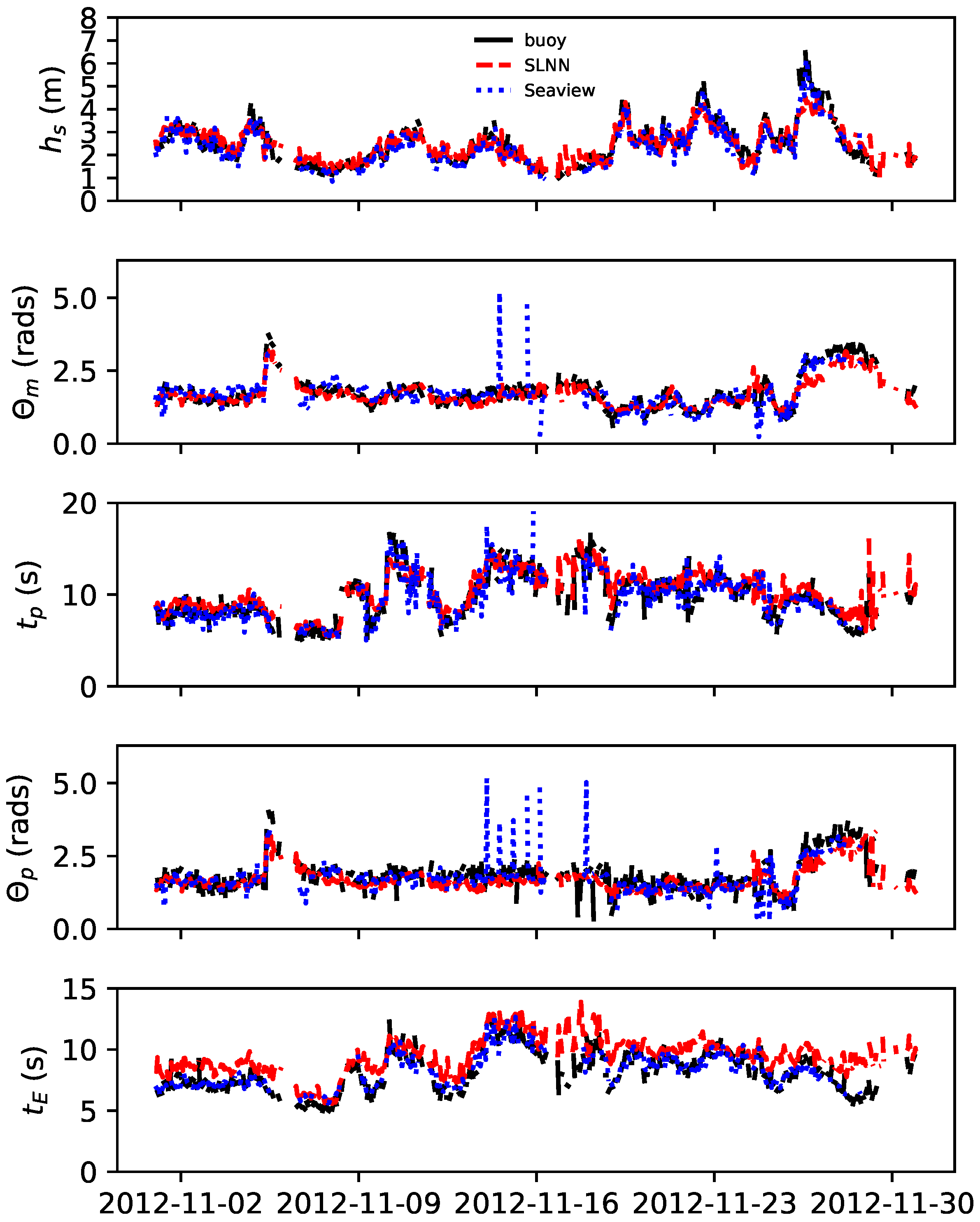

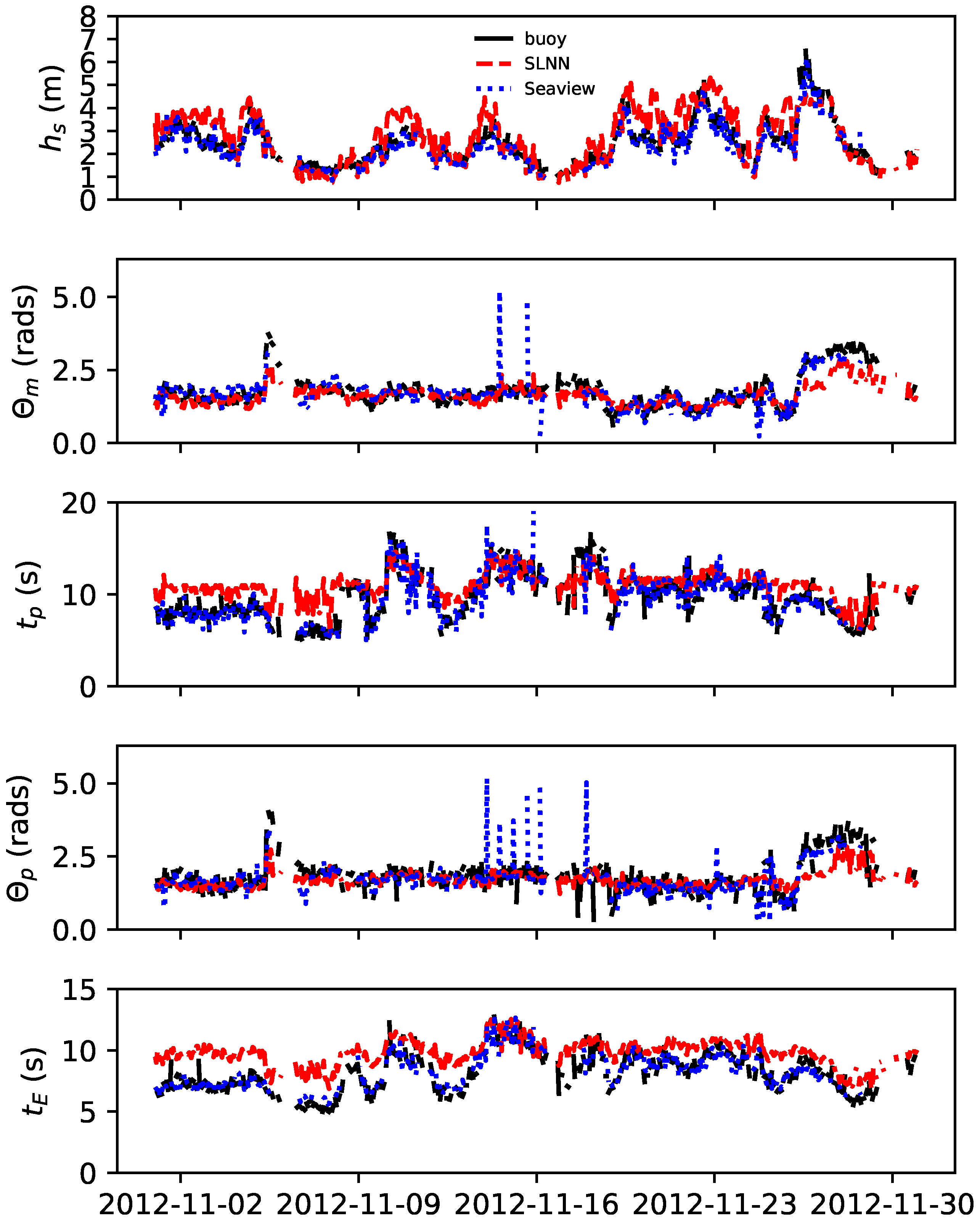

Depending on where the radars are situated, we define $\phi_1$ and $\phi_2$ as the beam angles, measured clockwise from north, from each radar to a measurement point. As the beam angles differ for each measurement location in the radar coverage area, the resulting Doppler spectra also differ. Therefore, either a different neural network must be trained for every desired location, or a single neural network must learn the relationship between $\phi_1$, $\phi_2$ and the related Doppler spectra. In this work, both methods will be tested:

- the single-location neural network, or SLNN, trained at one location;
- and the multi-location neural network, or MLNN, trained to learn the relationship between the radar beam angles $\phi_1$ and $\phi_2$ and the Doppler spectra.

For both neural network experiments, the training/test data were generated in the same way. Beginning with a large dataset of directional ocean spectra, from both the wave buoy and WW3 datasets described in Section 2.1, we used the radar cross-section expression from Equations (2) and (4) to simulate the corresponding Doppler spectra at a particular location for both radars. The radar frequencies, the ocean depth and the beam angles ($\phi_1$ and $\phi_2$) were fixed, and two 512-point Doppler spectra ranging from −1.27 Hz to 1.27 Hz were simulated. These simulations should mimic the real data as closely as possible to simplify the neural network's task; therefore, an appropriate noise floor and general noise levels, chosen after analysing the available measured radar data, were included in the simulations.
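The sketch below illustrates the two ingredients of this step under explicit assumptions: an evenly spaced 512-point frequency axis with inclusive end points, and a noise model made of a flat floor (in dB relative to the spectrum maximum) multiplied by a mean-one gamma-distributed fluctuation, which mimics an average of several periodograms. Both the noise model and the `n_averages` parameter are hypothetical stand-ins; the actual noise levels were chosen from the measured radar data.

```python
import numpy as np


def doppler_axis(n_points=512, f_max=1.27):
    """Evenly spaced Doppler frequency axis in Hz (inclusive end points are an assumption)."""
    return np.linspace(-f_max, f_max, n_points)


def add_noise(power, noise_floor_db, n_averages=8, seed=None):
    """Add a flat noise floor and multiplicative estimation noise to a linear-power spectrum.

    noise_floor_db is relative to the spectrum maximum. The mean-one gamma fluctuation
    mimics averaging n_averages periodograms; both choices are hypothetical stand-ins.
    """
    rng = np.random.default_rng(seed)
    floor = power.max() * 10.0 ** (noise_floor_db / 10.0)
    fluctuation = rng.gamma(shape=n_averages, scale=1.0 / n_averages, size=power.shape)
    return (power + floor) * fluctuation


# Usage: a toy spectrum with peaks at +-0.5 Hz and a noise floor 40 dB below the maximum.
f = doppler_axis()
clean = np.exp(-((np.abs(f) - 0.5) / 0.02) ** 2)
noisy = add_noise(clean, noise_floor_db=-40.0, seed=0)
```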

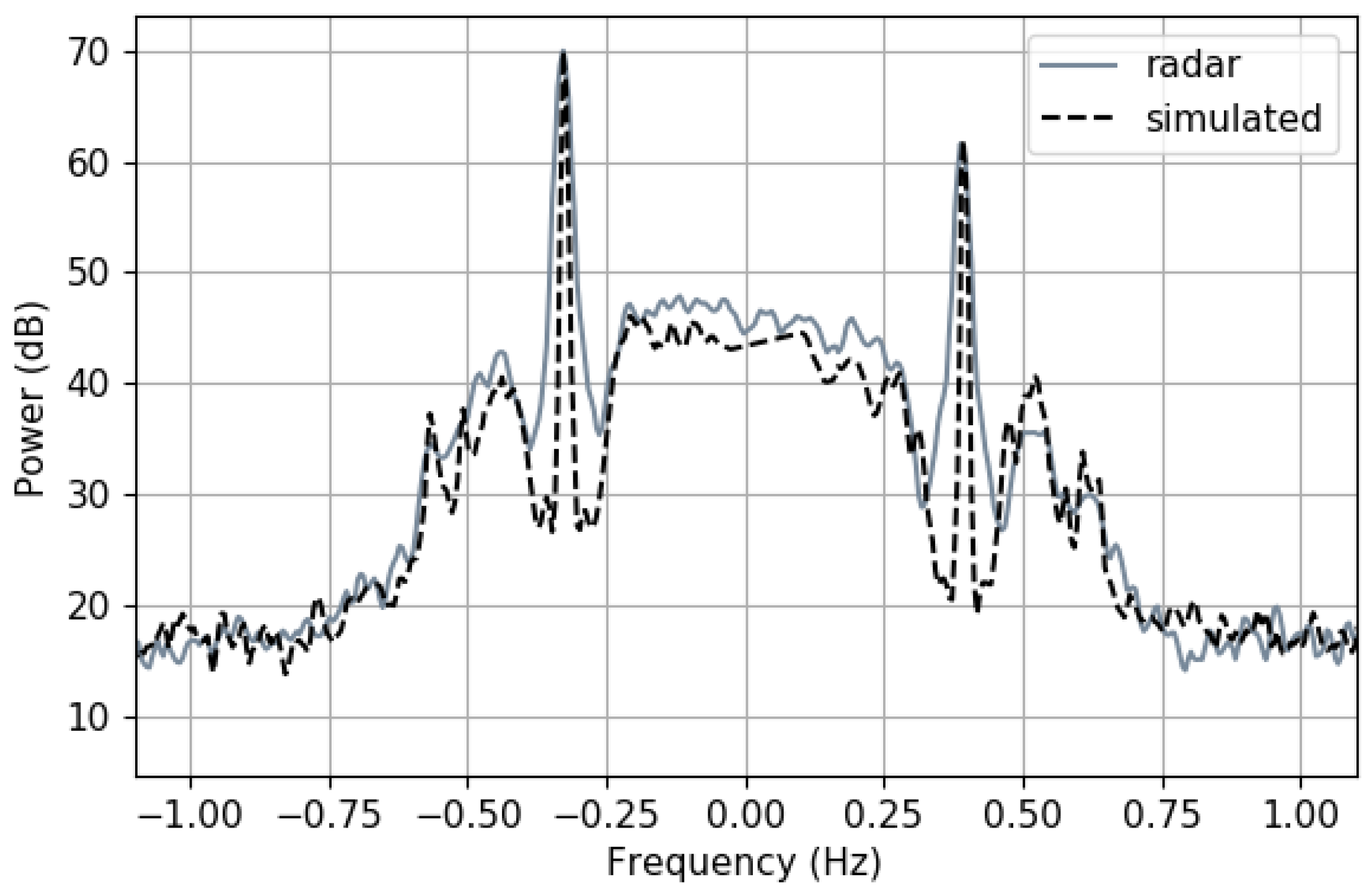

Then, any Doppler spectrum input to the neural network (i.e., either the simulated data or the measured validation data) was filtered and processed. A signal-to-noise ratio (SNR) of at least 10 dB in the second-order peaks was required for a Doppler spectrum to be used in the neural network, and any spectrum below this was discarded, as high noise levels may complicate the neural network model. Any current-induced Doppler shifts were removed (which in this case applies only to the measured radar data) before the resulting Doppler spectra were normalised, so that the first-order peaks lay at −1 and 1 and the highest Bragg peak was set to a fixed value, here 70 dB. Values within ±0.6 of each first-order peak were used as input to the neural network, which for this experiment gave a 174-point array for each radar, say $\mathbf{d}_1$ and $\mathbf{d}_2$ (where $\mathbf{d}_1, \mathbf{d}_2 \in \mathbb{R}^{174}$). This smaller subset of points fully encloses the second-order continuum while sparing the neural network from modelling the less important outer parts of the Doppler spectrum.
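The filtering and normalisation steps above can be sketched as a single function that returns the windowed input array for one radar, or `None` when the spectrum fails the SNR test. This is a minimal sketch under assumptions: the noise floor and second-order peak levels are taken as already estimated, the Bragg frequency is supplied by the caller, and the ±0.05 search window used to locate the Bragg peaks is a hypothetical choice. The number of retained points depends on the Bragg frequency and the frequency resolution.

```python
import numpy as np


def preprocess(freq, power_db, f_bragg, noise_floor_db, second_order_db,
               current_shift=0.0, peak_db=70.0, half_width=0.6, min_snr_db=10.0):
    """Filter and normalise one Doppler spectrum; returns None if it fails the SNR test.

    freq: Doppler frequencies (Hz); power_db: spectrum in dB; f_bragg: Bragg frequency (Hz).
    noise_floor_db and second_order_db are assumed to be estimated beforehand by the caller.
    """
    # 1. Discard spectra whose second-order SNR is below the threshold (10 dB).
    if second_order_db - noise_floor_db < min_snr_db:
        return None
    # 2. Remove any current-induced Doppler shift (zero for simulated spectra), then
    # 3. scale the frequency axis so that the first-order peaks lie at -1 and +1.
    f_norm = (np.asarray(freq, float) - current_shift) / f_bragg
    dist = np.abs(np.abs(f_norm) - 1.0)  # distance from the nearest first-order peak
    # 4. Shift the power so that the stronger Bragg peak sits at peak_db (70 dB).
    #    The 0.05 search window around each peak is a hypothetical choice.
    power = np.asarray(power_db, float)
    power = power - power[dist < 0.05].max() + peak_db
    # 5. Keep only the points within +-half_width of each first-order peak.
    return power[dist <= half_width]


# Usage with a toy spectrum whose Bragg peaks are at +-0.5 Hz.
freq = np.linspace(-1.27, 1.27, 512)
power_db = 40.0 - 100.0 * (np.abs(freq) - 0.5) ** 2
d = preprocess(freq, power_db, f_bragg=0.5, noise_floor_db=0.0, second_order_db=20.0)
print(d.max())  # 70.0
```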

The output values, $S(k, \theta)$, were the directional spectra, which were interpolated onto a 36 × 98 grid, with 36 directional values in the range [0, 2π] and 98 wavenumber values in the range [0.004, 0.986]. Each value was then multiplied by $k$ for scaling purposes, and the grid was flattened to form a vector of size 3528 (= 36 × 98) for processing.
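A minimal NumPy sketch of how an output vector of this kind could be built, assuming evenly spaced target grids (directions covering [0, 2π) without repeating 2π), linear interpolation first in wavenumber and then periodically in direction, and row-major flattening. These interpolation and ordering choices are assumptions for illustration.

```python
import numpy as np

N_DIR, N_K = 36, 98
# Assumed target grid: evenly spaced, with directions covering [0, 2*pi) without repeating 2*pi.
THETA = np.linspace(0.0, 2.0 * np.pi, N_DIR, endpoint=False)
K = np.linspace(0.004, 0.986, N_K)


def to_output_vector(theta_src, k_src, spec_src):
    """Interpolate S(k, theta) onto the 36 x 98 grid, multiply by k and flatten to 3528 values.

    spec_src has shape (len(theta_src), len(k_src)); directions are in radians and
    k_src must be increasing. Linear interpolation: first in k, then periodically in direction.
    """
    spec_src = np.asarray(spec_src, float)
    # Along wavenumber, for each source direction (zero outside the source k range).
    along_k = np.array([np.interp(K, k_src, row, left=0.0, right=0.0) for row in spec_src])
    # Along direction, treating direction as periodic with period 2*pi.
    grid = np.array([np.interp(THETA, theta_src, along_k[:, j], period=2.0 * np.pi)
                     for j in range(N_K)]).T  # shape (36, 98)
    return (grid * K[np.newaxis, :]).ravel()  # scale each wavenumber column by k, row-major


# Usage: a flat spectrum on a hypothetical 5-degree source grid.
theta_src = np.radians(np.arange(0, 360, 5))
k_src = np.linspace(0.0, 1.2, 200)
y = to_output_vector(theta_src, k_src, np.ones((theta_src.size, k_src.size)))
print(y.shape)  # (3528,)
```

Passing `period` to `np.interp` makes the interpolation wrap around between the last and first directions, which avoids an artificial edge at north.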

In the neural network, an *elu* function, i.e.,

$$\mathrm{elu}(x) = \begin{cases} x, & x > 0, \\ \alpha\left(e^{x} - 1\right), & x \le 0, \end{cases}$$

for constant $\alpha$, was used in the final layer to encourage the output spectrum values to be positive. The value of $\alpha$ was set to the TensorFlow default of 1, to avoid the cost of tuning another parameter. The cost function was the mean squared error between the predicted and actual values of $\mathbf{y}$; namely:

$$C = \frac{1}{M} \sum_{i=1}^{M} \left(\hat{y}_i - y_i\right)^2,$$

where $\hat{y}_i$ and $y_i$ are the $i$th predicted and actual values, respectively, out of a total of $M$ values. To find the optimal neural network architecture, a genetic algorithm was used, whose objective was to minimise the difference between the predicted and actual values in the test set.
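The output activation and the cost function can be written directly in NumPy as a check on the definitions above. In TensorFlow, the corresponding pieces would be the built-in `elu` activation (whose `alpha` defaults to 1.0) and the mean-squared-error loss; the NumPy version below is only an illustrative sketch of the same formulas.

```python
import numpy as np


def elu(x, alpha=1.0):
    """ELU: x for x > 0, alpha * (exp(x) - 1) otherwise; outputs are bounded below by -alpha."""
    x = np.asarray(x, dtype=float)
    # np.minimum avoids overflow in expm1 for large positive x (np.where evaluates both branches).
    return np.where(x > 0, x, alpha * np.expm1(np.minimum(x, 0.0)))


def mse_cost(y_pred, y_true):
    """Mean squared error over all M output values."""
    y_pred, y_true = np.asarray(y_pred, float), np.asarray(y_true, float)
    return float(np.mean((y_pred - y_true) ** 2))


print(elu([-1.0, 0.0, 2.0]))
print(mse_cost([1, 2, 3], [1, 2, 5]))
```

Because ELU is bounded below by $-\alpha$ rather than by zero, it encourages, but does not guarantee, non-negative spectrum values.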

Single-Location Neural Network

In training the SLNN, $\phi_1$ and $\phi_2$ were fixed and therefore unnecessary for the training process. Thus, we define $\mathbf{x}$ as the two radar Doppler spectra subsets joined together, $\mathbf{x} = [\mathbf{d}_1, \mathbf{d}_2]$, which forms a vector of size 348, and $\mathbf{y}$ as the related directional ocean spectrum, $S(k, \theta)$. To validate the method, the simulations were located at the position nearest to the wave buoy where operational radar data were available. At this position, the beam angles $\phi_1$ and $\phi_2$ were fixed at their values for that location, and the ocean depth was 53 m; the rest of the radar information used in the simulation process is given in Section 2.1.1.

In this experiment, we used both the WW3-modelled and wave buoy-measured directional spectra in the simulations, as described in Section 2.1. After filtering by the SNR limit, a total of 4804 examples were available, which were then split into 66%/33% training/test sets. After some experimentation, the values shown in Table 2 were chosen as the search space for the genetic algorithm.

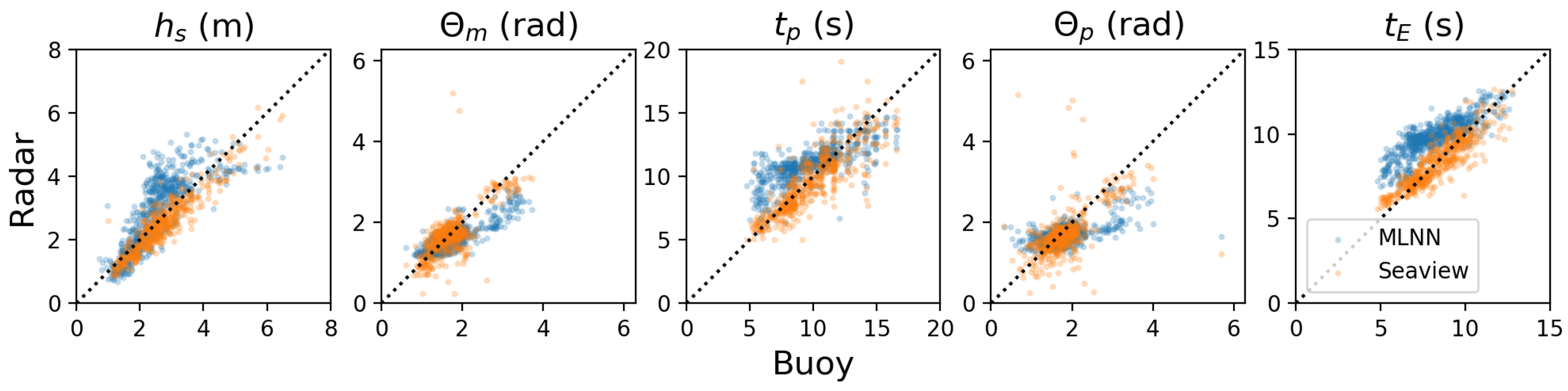

Multi-Location Neural Network

For a neural network that must take the location into account during the inversion, namely the MLNN, the beam angles $\phi_1$ and $\phi_2$ (scaled to be proportional to the range of the Doppler spectra) must also be passed into the algorithm. Thus, we define $\mathbf{x}$ as the 350-point vector formed from $\mathbf{d}_1$, $\mathbf{d}_2$, $\phi_1$ and $\phi_2$, and, again, $\mathbf{y}$ as the related $S(k, \theta)$.
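The input vectors for the two networks can be assembled as below. This is a sketch under stated assumptions: the angles are appended after the two 174-point Doppler arrays, and the hypothetical scale factor maps 0 to 360 degrees onto roughly the 0 to 70 dB range of the normalised spectra; the exact ordering and scaling used are not specified here.

```python
import numpy as np


def slnn_input(d1, d2):
    """SLNN input: the two 174-point Doppler subsets joined into one 348-point vector."""
    return np.concatenate([d1, d2])


def mlnn_input(d1, d2, phi1_deg, phi2_deg, scale=70.0 / 360.0):
    """MLNN input: the SLNN vector plus both beam angles, giving 350 points.

    Appending the angles at the end, and the value of scale, are hypothetical choices.
    """
    angles = np.array([phi1_deg, phi2_deg], dtype=float) * scale
    return np.concatenate([slnn_input(d1, d2), angles])


# Usage with placeholder Doppler arrays and beam angles of 9 and 261 degrees.
d1, d2 = np.zeros(174), np.ones(174)
x_slnn = slnn_input(d1, d2)
x_mlnn = mlnn_input(d1, d2, 9.0, 261.0)
print(x_slnn.shape, x_mlnn.shape)  # (348,) (350,)
```

Scaling the angles matters because unscaled values of several hundred degrees would dominate the weighted sums in the first layer.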

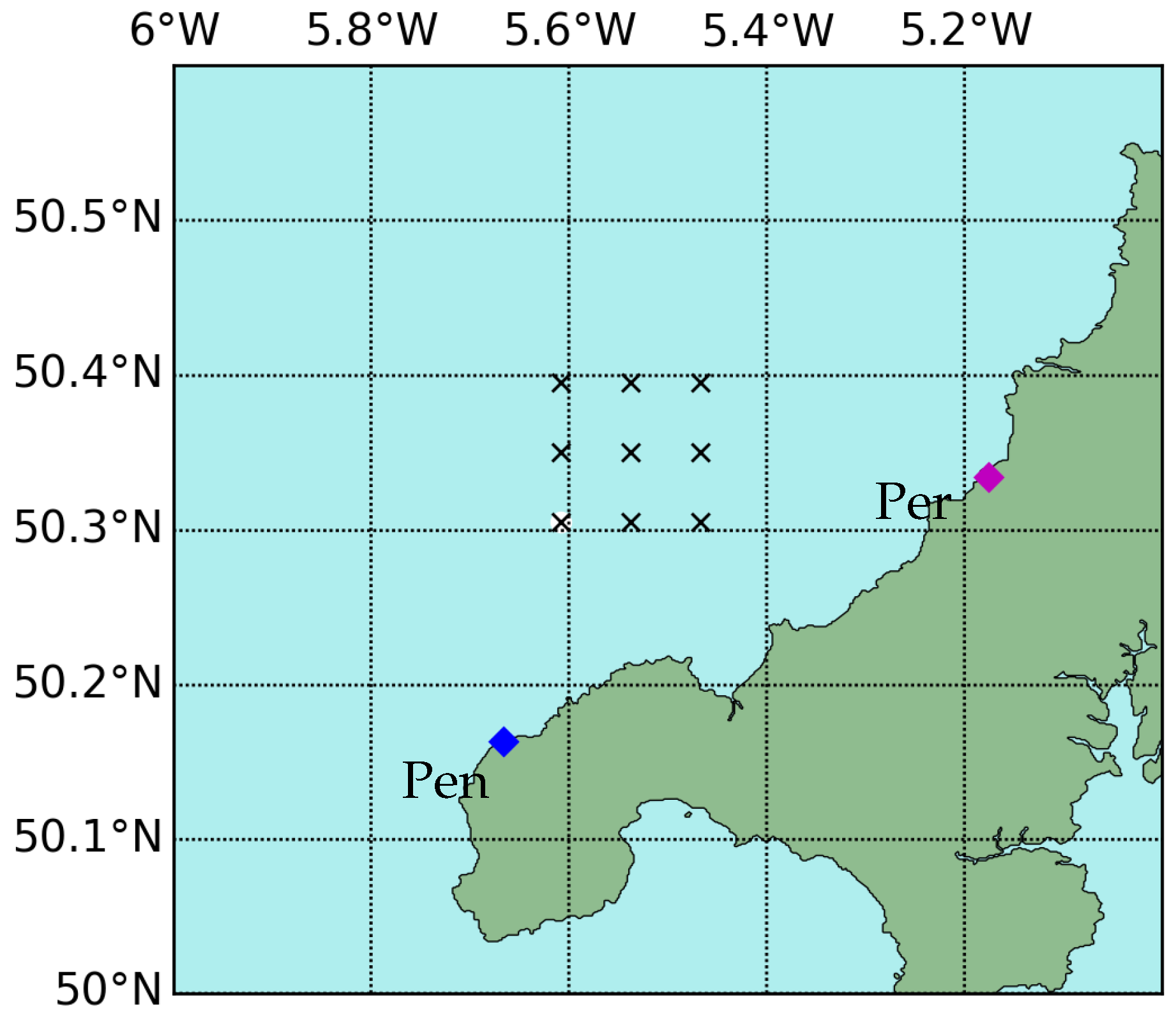

The test was carried out on a relatively small scale, using nine locations, including that of the wave buoy, as shown in Figure 2. Across these nine positions, $\phi_1$ ranged from 9° to 42° and $\phi_2$ from 261° to 288°.

In this experiment, as for the SLNN, both the wave buoy-measured and WW3-modelled values of $S(k, \theta)$ were used to simulate the training set. After filtering, the dataset contained 109,971 data pairs, which were divided into training and test sets using a 67%/33% split.

As for the SLNN, some experimentation was carried out to choose the parameters for the genetic algorithm; the resulting parameters are shown in Table 3.