1. Introduction

The spatio-temporal evolution of complex vortex flow fields surrounding underwater vehicles plays a critical role in determining their maneuverability, stealth performance, and operational safety. Therefore, accurately characterizing and predicting these vortex dynamics is essential to achieving optimal hydrodynamic design and overall engineering performance. Traditionally, investigations into such flow fields have relied on towing tank experiments and numerical simulations using computational fluid dynamics (CFD). However, physical experiments are often constrained by high costs, extended testing durations, and limitations in replicating extreme operational conditions. On the other hand, high-fidelity CFD simulations require tens to hundreds of millions of computational grid elements to capture large-scale, highly complex flow phenomena, thereby imposing substantial computational burdens.

In recent years, the rapid advancement of artificial intelligence has introduced a transformative paradigm for addressing CFD challenges, leading to the emergence of the interdisciplinary field of Artificial Intelligence for Computational Fluid Dynamics (AI for CFD) [1,2,3]. Among various approaches, Deep Neural Network (DNN)-based models have become a central research focus in flow field prediction, with substantial progress achieved using Recurrent Neural Networks (RNNs), Convolutional Neural Networks (CNNs), and Physics-Informed Neural Networks (PINNs). RNN-based models exhibit strong capabilities in capturing the temporal evolution of flow fields and have been successfully applied to turbulence prediction tasks [4,5,6]. CNNs, through hierarchical convolution and pooling operations, are effective at extracting spatial features and have shown promising results for reconstructing vortex structures [7,8,9]. To more precisely capture the spatio-temporal features of flow field data, several researchers have investigated hybrid neural network architectures integrating RNNs and CNNs for enhanced prediction. These studies have verified that RNN-CNN hybrid models can achieve high prediction accuracy and strong generalization performance when modeling the spatio-temporal evolution of complex, unsteady flow fields [10,11]. PINNs incorporate physical governing equations directly into the loss function, enabling the training process to adhere to physical laws and thereby improving model generalization, particularly under conditions of sparse flow field data [12,13,14]. Nevertheless, existing DNN architectures, including RNNs, CNNs, and PINNs, are fundamentally designed for data defined on regular Euclidean grids and therefore struggle to capture the complex spatial topologies of unstructured grid flow field data. Such unstructured representations are prevalent in large-scale, highly intricate systems, including underwater vehicles and submarines.

A Graph Neural Network (GNN) is a deep learning model designed for graph-structured data (nodes and edges) that effectively handles non-Euclidean data structures, thereby offering a novel approach for modeling and predicting unstructured flow fields. Researchers have applied GNN-based methods to predict flow fields on unstructured grids around geometries such as cylinders and airfoils. Pfaff et al. (2020) proposed a Mesh-Based Simulation with Graph Networks (MeshGraphNet) model to predict the flow fields of cylinders and NACA airfoils, as well as structural dynamic responses and the behavior of deformable materials, establishing an important foundation for applying GNNs to fluid and structural field prediction [15]. Subsequently, many scholars focused on improving and optimizing methods based on MeshGraphNet. Fortunato et al. (2022) introduced a multilevel approach that enhanced prediction accuracy and reduced computational costs compared to the original MeshGraphNet [16]. Yang et al. (2022) proposed Algebraic Multigrid Net (AMGNet), which integrates algebraic multigrid techniques into the MeshGraphNet architecture, and successfully applied it to predict flow fields around cylinders and airfoils [17]. Li et al. (2024) introduced the Finite Volume Graph Network (FVGN) and its improved model (Gen-FVGN), which enable prediction of cylinder and airfoil flow fields simulated using the finite volume method while retaining the “encoder–processor–decoder” core architecture of MeshGraphNet [18,19]. In the same year, a Finite Difference Informed Graph Network (FDGN) was introduced, which integrates graph networks with finite-difference techniques to predict flow fields with reduced dependence on labeled data while still preserving the core MeshGraphNet architecture [20].

Concurrently, significant research efforts have been directed towards applying fundamental GNN architectures, such as Graph Convolutional Networks (GCNs) and Graph Attention Networks (GATs), to fluid flow problems. These studies have demonstrated the versatility and effectiveness of GNNs in handling the unstructured data inherent to complex flow simulations. For instance, Ogoke et al. (2021) utilized a graph convolutional neural network (GCNN) to predict global properties like drag force from scattered, irregular velocity measurements around airfoils, showcasing the model’s invariance to node order and resolution [21]. Peng et al. (2022) developed a GCN-based reduced-order model demonstrating excellent adaptability to non-uniform meshes and achieving a three-order-of-magnitude speedup while maintaining high accuracy for internal flow cases [22]. Furthermore, Liu et al. (2022) proposed a Graph Attention network-based Fluid simulation Model that leverages attention mechanisms to handle non-equilibrium phenomena in vortices and turbulent flows, reporting a speedup of two to three orders of magnitude over traditional CFD solvers for 2D cylinder flow [23]. Beyond these foundational works on canonical geometries, recent advancements have expanded the application of GNNs to more complex and diverse scenarios. Covoni et al. (2024) demonstrated the capability of GNNs to predict explosion-induced transient flow in highly complex geometries, with strong generalization to domains significantly larger than those in the training set [24]. Hadizadeh et al. (2024) employed a GNN as a surrogate for multi-objective fluid-acoustic shape optimization, achieving high predictive accuracy for varied airfoil shapes and enabling drastic computational acceleration [25]. Gao et al. (2025) presented a reduced-dimensional deep learning approach leveraging GNN principles for fast 3D indoor flow field prediction with near-real-time inference [26]. Chen et al. (2025) introduced a Physics-Informed GNN for flow in porous media, rigorously enforcing physical constraints and outperforming traditional PINNs [27]. A comprehensive review by Cheng et al. (2025) further synthesized this progress, highlighting the pivotal role of GNNs and other advanced ML methods in tackling unstructured grid data across computational physics [28].

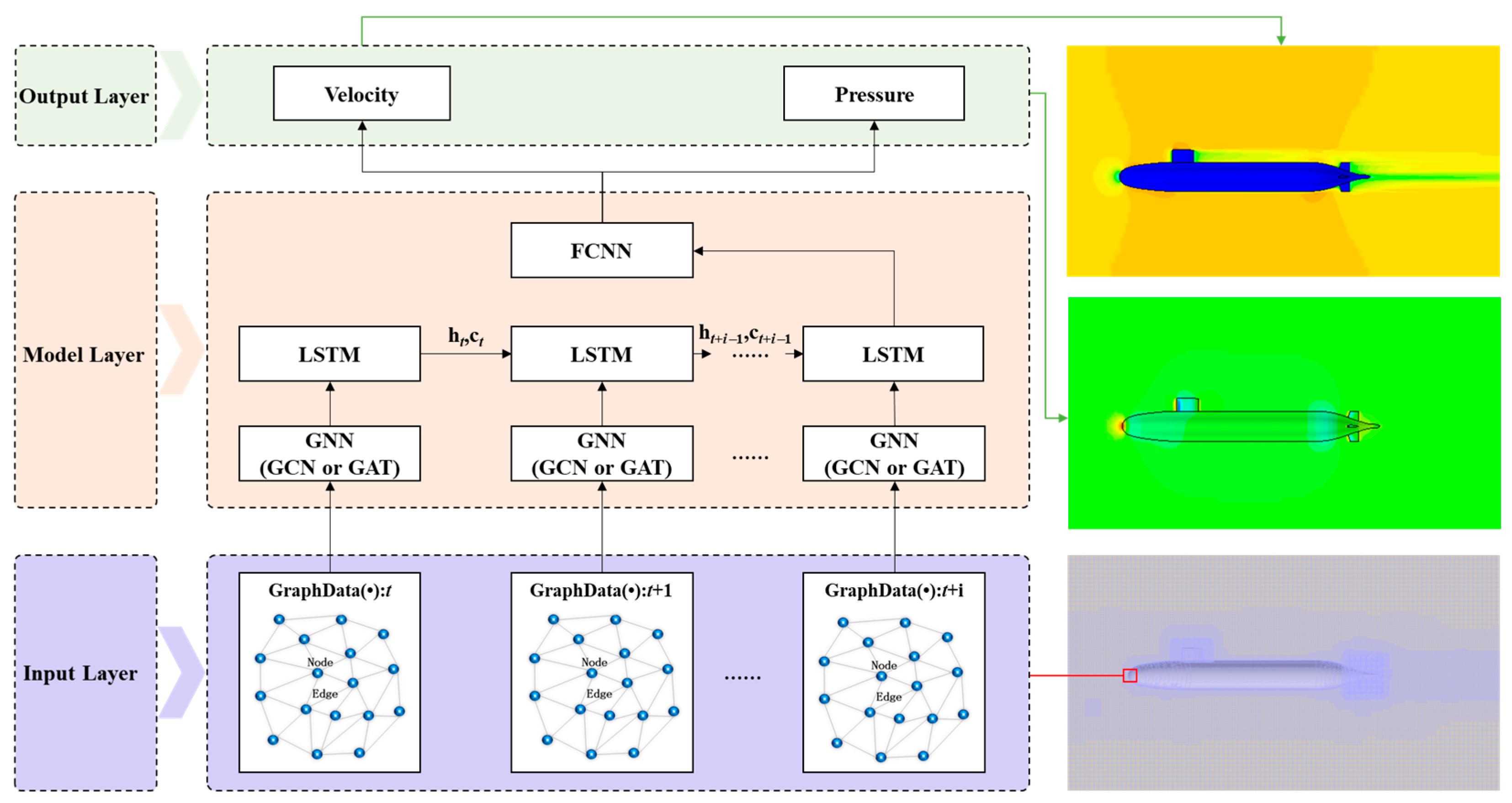

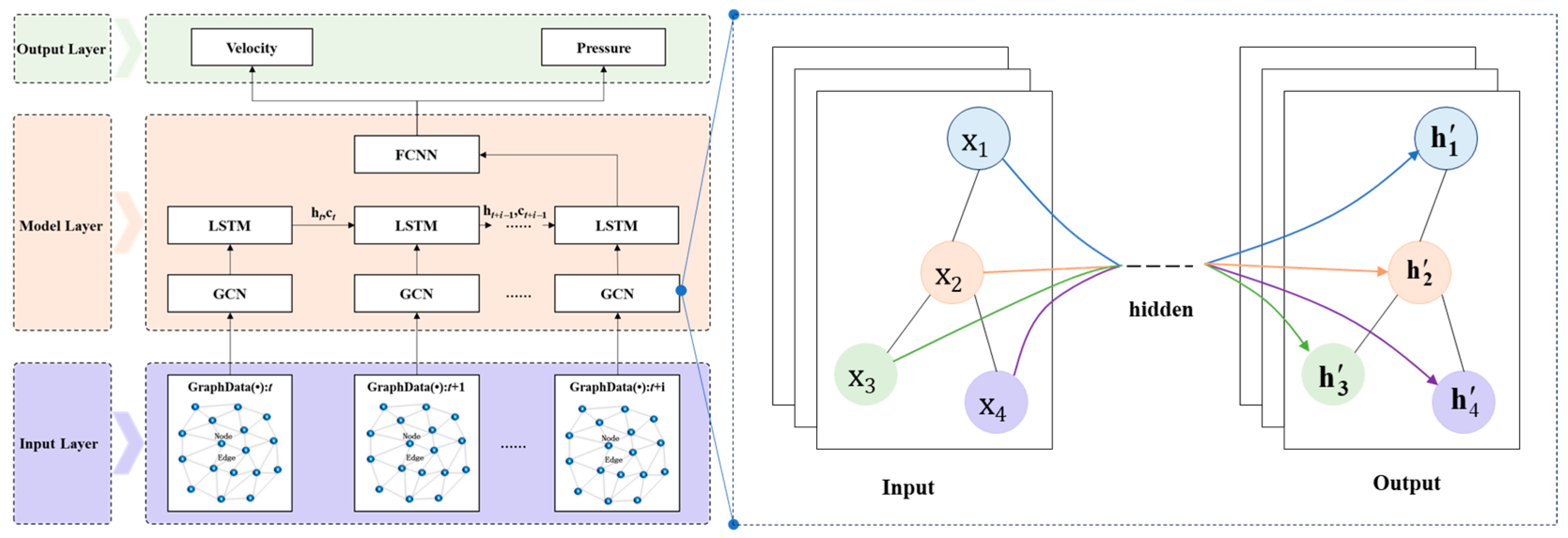

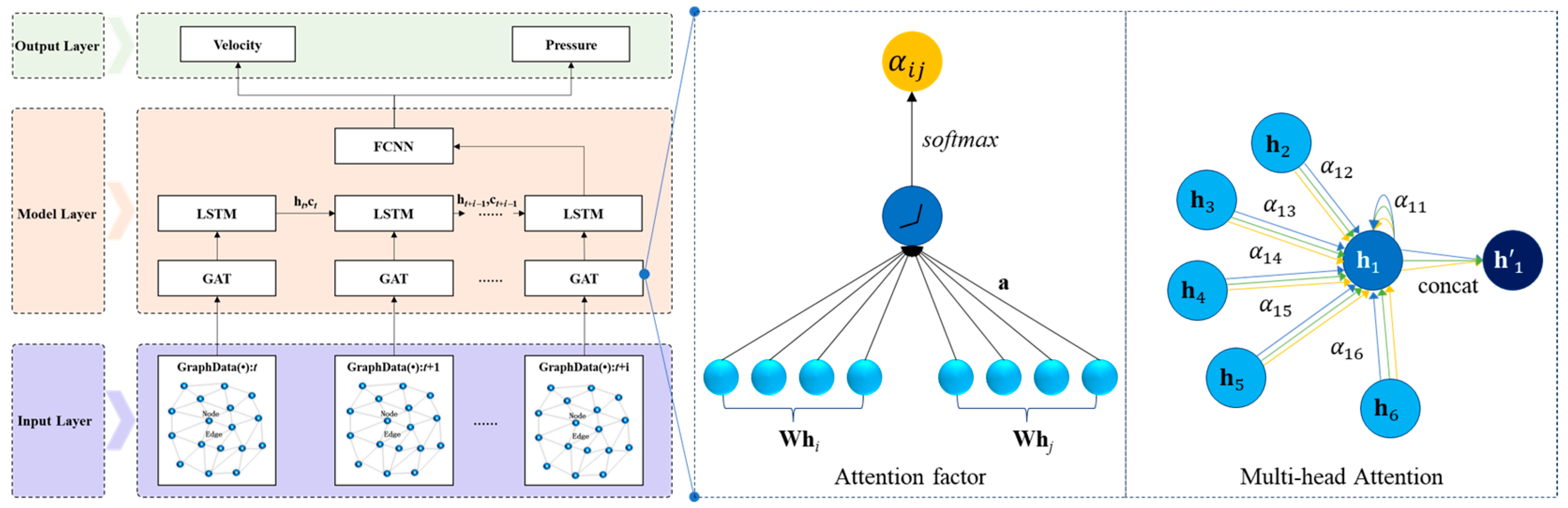

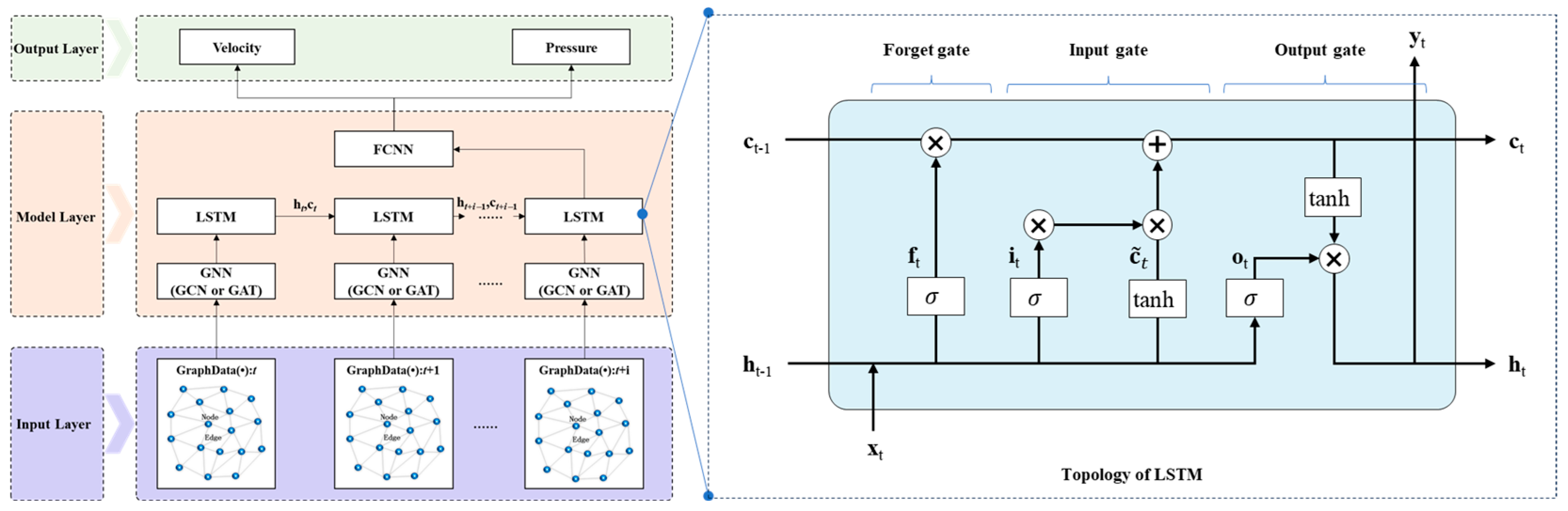

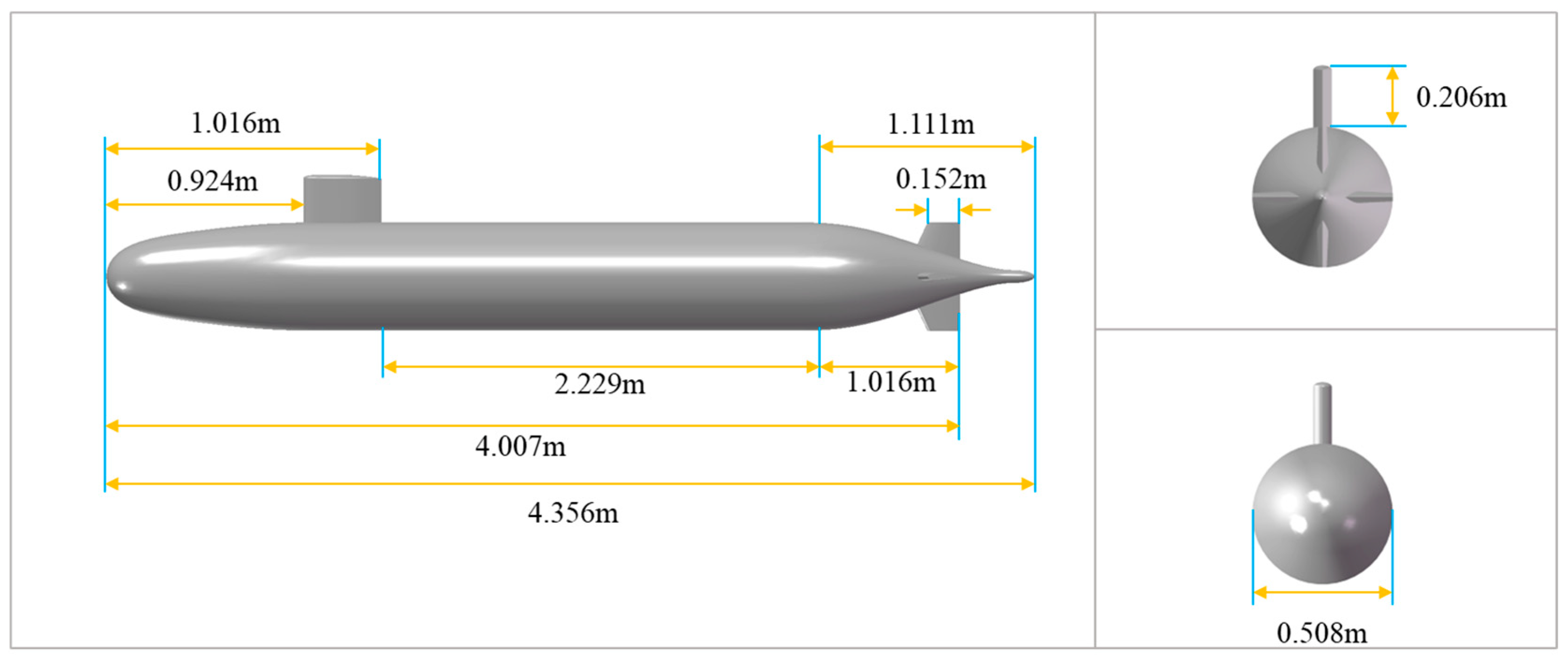

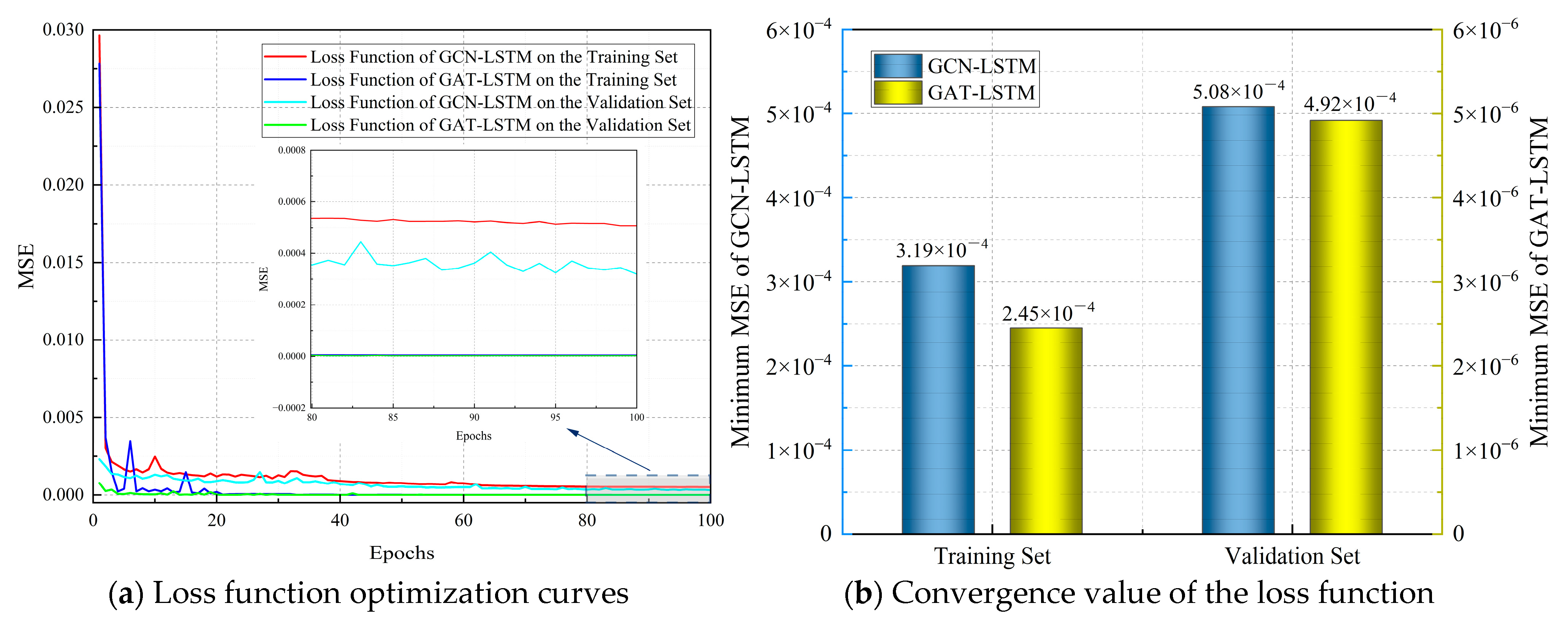

While single GNN-based models effectively extract spatial features from unstructured grid flow field data, they struggle with long-term temporal prediction because they neglect the temporal evolution inherent in unsteady flow fields. To address this limitation, we propose a Spatio-temporal Graph Neural Network (ST-GNN) model that integrates a Graph Neural Network (GNN) with a Long Short-Term Memory (LSTM) network. This model leverages the GNN’s message-passing mechanism to capture spatial relationships among unstructured grid nodes and employs the LSTM’s gated memory mechanism to learn temporal evolution patterns of physical quantities, thereby enabling simultaneous modeling of spatial and temporal features in complex flow fields. We evaluate the accuracy and efficiency of two variants, namely, GCN-LSTM and GAT-LSTM, for predicting the spatio-temporal evolution of the DARPA SUBOFF AFF-8 flow field, which is characterized by complex unstructured grids.
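To make the coupling of spatial message passing and gated temporal memory concrete, the following NumPy sketch runs one forward pass of a GCN-LSTM-style model on a toy graph. It is an illustration under stated assumptions, not the authors' implementation: the single graph-convolution layer, the LSTM cell shared across nodes, the layer sizes, the random weights, and all function and parameter names (`normalized_adjacency`, `lstm_step`, `st_gnn_forward`, `params`) are hypothetical.

```python
import numpy as np

def normalized_adjacency(edges, n_nodes):
    """Symmetric GCN normalization D^-1/2 (A + I) D^-1/2 of an undirected graph."""
    a = np.eye(n_nodes)
    for i, j in edges:
        a[i, j] = a[j, i] = 1.0
    d_inv_sqrt = 1.0 / np.sqrt(a.sum(axis=1))
    return a * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def lstm_step(x, h, c, w, u, b):
    """One LSTM step applied to all nodes at once (weights shared across nodes)."""
    z = x @ w + h @ u + b                       # shape (N, 4 * hidden)
    i, f, g, o = np.split(z, 4, axis=1)         # input, forget, candidate, output
    c = sigmoid(f) * c + sigmoid(i) * np.tanh(g)
    h = sigmoid(o) * np.tanh(c)
    return h, c

def st_gnn_forward(x_seq, a_hat, params):
    """Map a window of node features (T, N, F) to a next-step prediction (N, F)."""
    n_nodes = x_seq.shape[1]
    hidden = params["u"].shape[0]
    h = np.zeros((n_nodes, hidden))
    c = np.zeros((n_nodes, hidden))
    for x_t in x_seq:
        # Spatial step: each node aggregates its neighbours' features.
        z_t = np.tanh(a_hat @ x_t @ params["w_gcn"])
        # Temporal step: gated memory carries information across time steps.
        h, c = lstm_step(z_t, h, c, params["w"], params["u"], params["b"])
    return h @ params["w_out"]

# Toy problem: 4 mesh nodes, 3 features per node, a window of 5 time steps.
rng = np.random.default_rng(0)
n_nodes, n_feat, n_embed, n_hidden, window = 4, 3, 8, 16, 5
edges = [(0, 1), (1, 2), (2, 3), (0, 2)]
a_hat = normalized_adjacency(edges, n_nodes)
params = {
    "w_gcn": rng.normal(scale=0.1, size=(n_feat, n_embed)),
    "w": rng.normal(scale=0.1, size=(n_embed, 4 * n_hidden)),
    "u": rng.normal(scale=0.1, size=(n_hidden, 4 * n_hidden)),
    "b": np.zeros(4 * n_hidden),
    "w_out": rng.normal(scale=0.1, size=(n_hidden, n_feat)),
}
x_seq = rng.normal(size=(window, n_nodes, n_feat))
prediction = st_gnn_forward(x_seq, a_hat, params)
print(prediction.shape)  # (4, 3)
```

A GAT-LSTM-style variant would differ only in the spatial step, where the fixed matrix `a_hat` is replaced by attention coefficients computed from the node features.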

It is important to clarify the research scope and comparison design of this study. The core objective is to evaluate the feasibility and effectiveness of the ST-GNN architecture for predicting the spatio-temporal evolution of complex unstructured grid flow fields surrounding underwater vehicles, with the SUBOFF AFF-8 serving as a representative benchmark. Within this scope, we focus on two variants of the framework: GCN-LSTM and GAT-LSTM. This choice is motivated by the fact that the GCN and GAT are currently the most widely used and representative GNN architectures, and their integration with temporal modeling mechanisms (LSTM) is directly relevant to assessing the applicability of the ST-GNN framework in complex flow scenarios. By comparing these two variants, we aim to demonstrate how different spatial feature extraction mechanisms affect the accuracy of spatio-temporal modeling for complex underwater vehicle flow fields, an issue that remains insufficiently addressed in existing studies that primarily focus on simple geometries.

The key contributions of this paper are as follows:

(1) A novel ST-GNN framework integrating a GNN and an LSTM is proposed to jointly capture spatial dependencies and temporal dynamics in unstructured grid flow fields, addressing the limitation of traditional DNNs in handling non-Euclidean data.

(2) Two ST-GNN variants (GCN-LSTM and GAT-LSTM) are developed and compared on the SUBOFF AFF-8 benchmark; both reproduce the CFD reference flow fields with low relative error while running substantially faster than the conventional CFD solver.

(3) The temporal generalization of the proposed models is evaluated, verifying their potential for accurate long-term prediction in complex unsteady flow scenarios.

The rest of the paper is organized as follows. Section 2 outlines the fluid dynamics governing equations used in the numerical simulation of the SUBOFF AFF-8 flow field. Section 3 details the proposed ST-GNN model and its underlying principles. Section 4 presents the CFD modeling and simulation calibration results for SUBOFF AFF-8, including a case study comparing flow field predictions from GAT-LSTM and GCN-LSTM. Section 5 assesses the temporal generalization capability of GAT-LSTM and GCN-LSTM. Finally, Section 6 summarizes this study with key findings and conclusions.

2. Governing Equations

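The Reynolds-Averaged Navier–Stokes (RANS) method was employed to simulate the flow field around the SUBOFF AFF-8 model in this study. The governing equations of incompressible viscous fluids include the continuity equation and RANS equations, which can be expressed in the following form:

$$\frac{\partial \bar{u}_i}{\partial x_i} = 0$$

$$\frac{\partial \bar{u}_i}{\partial t} + \bar{u}_j \frac{\partial \bar{u}_i}{\partial x_j} = -\frac{1}{\rho}\frac{\partial \bar{p}}{\partial x_i} + \nu \frac{\partial^2 \bar{u}_i}{\partial x_j \partial x_j} - \frac{\partial \overline{u_i' u_j'}}{\partial x_j}$$

where $\rho$ is the fluid density, $\bar{u}_i$ is the time-averaged velocity component, $x_i$ is the Cartesian coordinate component, $\bar{p}$ is the time-averaged pressure, $\nu$ is the kinematic viscosity, and $\overline{u_i' u_j'}$ is the Reynolds stress tensor.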

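In this paper, the shear stress transport (SST) k-ω turbulence model was utilized to provide closure for these equations, and the governing equations are as follows:

Transport equation for turbulent kinetic energy ($k$):

$$\frac{\partial k}{\partial t} + \bar{u}_j \frac{\partial k}{\partial x_j} = P_k - \beta^* k \omega + \frac{\partial}{\partial x_j}\left[(\nu + \sigma_k \nu_t)\frac{\partial k}{\partial x_j}\right]$$

Transport equation for the specific dissipation rate ($\omega$):

$$\frac{\partial \omega}{\partial t} + \bar{u}_j \frac{\partial \omega}{\partial x_j} = \frac{\alpha}{\nu_t} P_k - \beta \omega^2 + \frac{\partial}{\partial x_j}\left[(\nu + \sigma_\omega \nu_t)\frac{\partial \omega}{\partial x_j}\right] + 2(1 - F_1)\frac{\sigma_{\omega 2}}{\omega}\frac{\partial k}{\partial x_j}\frac{\partial \omega}{\partial x_j}$$

where $P_k$ is the production term for turbulent kinetic energy, $\nu_t$ is the turbulent viscosity, and $F_1$ is the blending function.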

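The mathematical expression for the blending function and related terms is as follows:

$$F_1 = \tanh\left\{\left[\min\left(\max\left(\frac{\sqrt{k}}{\beta^* \omega y}, \frac{500\nu}{y^2 \omega}\right), \frac{4\sigma_{\omega 2} k}{CD_{k\omega} y^2}\right)\right]^4\right\}$$

$$\nu_t = \frac{a_1 k}{\max(a_1 \omega, S F_2)}$$

$$F_2 = \tanh\left\{\left[\max\left(\frac{2\sqrt{k}}{\beta^* \omega y}, \frac{500\nu}{y^2 \omega}\right)\right]^2\right\}$$

where $S$ is the magnitude of the strain rate tensor, $F_2$ is the function for shear stress correction, $y$ is the distance to the nearest wall, $\beta^*$ and $a_1$ are model constants, and $CD_{k\omega}$ is the coefficient for the cross-diffusion term, expressed as:

$$CD_{k\omega} = \max\left(\frac{2\sigma_{\omega 2}}{\omega}\frac{\partial k}{\partial x_j}\frac{\partial \omega}{\partial x_j}, 10^{-10}\right)$$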

The constants, such as the turbulent kinetic energy diffusion coefficient $\sigma_k$, the specific dissipation rate diffusion coefficient $\sigma_\omega$, the generation term coefficient $\alpha$, and the dissipation term coefficient $\beta$, were calculated using the following equation:

$$\phi = F_1 \phi_1 + (1 - F_1)\phi_2$$

where $\phi_1$ and $\phi_2$ represent sets of constants for the inner and outer layers in the blending function.
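The blending of inner-layer and outer-layer constants can be sketched in a few lines of Python. The numerical values below are the commonly quoted constants of the standard SST k-ω model and are an assumption here, since the values used in this study are not listed; the names `INNER`, `OUTER`, and `blend_constants` are hypothetical.

```python
# Commonly quoted SST k-omega constants (assumed, not taken from this paper).
INNER = {"sigma_k": 0.85, "sigma_omega": 0.5, "beta": 0.075, "alpha": 5.0 / 9.0}
OUTER = {"sigma_k": 1.0, "sigma_omega": 0.856, "beta": 0.0828, "alpha": 0.44}

def blend_constants(f1):
    """Blend each constant as phi = F1 * phi_1 + (1 - F1) * phi_2."""
    if not 0.0 <= f1 <= 1.0:
        raise ValueError("the blending function F1 must lie in [0, 1]")
    return {name: f1 * INNER[name] + (1.0 - f1) * OUTER[name] for name in INNER}

near_wall = blend_constants(1.0)    # F1 -> 1: inner-layer (k-omega) constants
free_stream = blend_constants(0.0)  # F1 -> 0: outer-layer (k-epsilon) constants
halfway = blend_constants(0.5)      # intermediate blend between the two sets
```

Because the blending function varies smoothly between 0 and 1 across the boundary layer, each constant transitions continuously from its inner-layer value to its outer-layer value.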

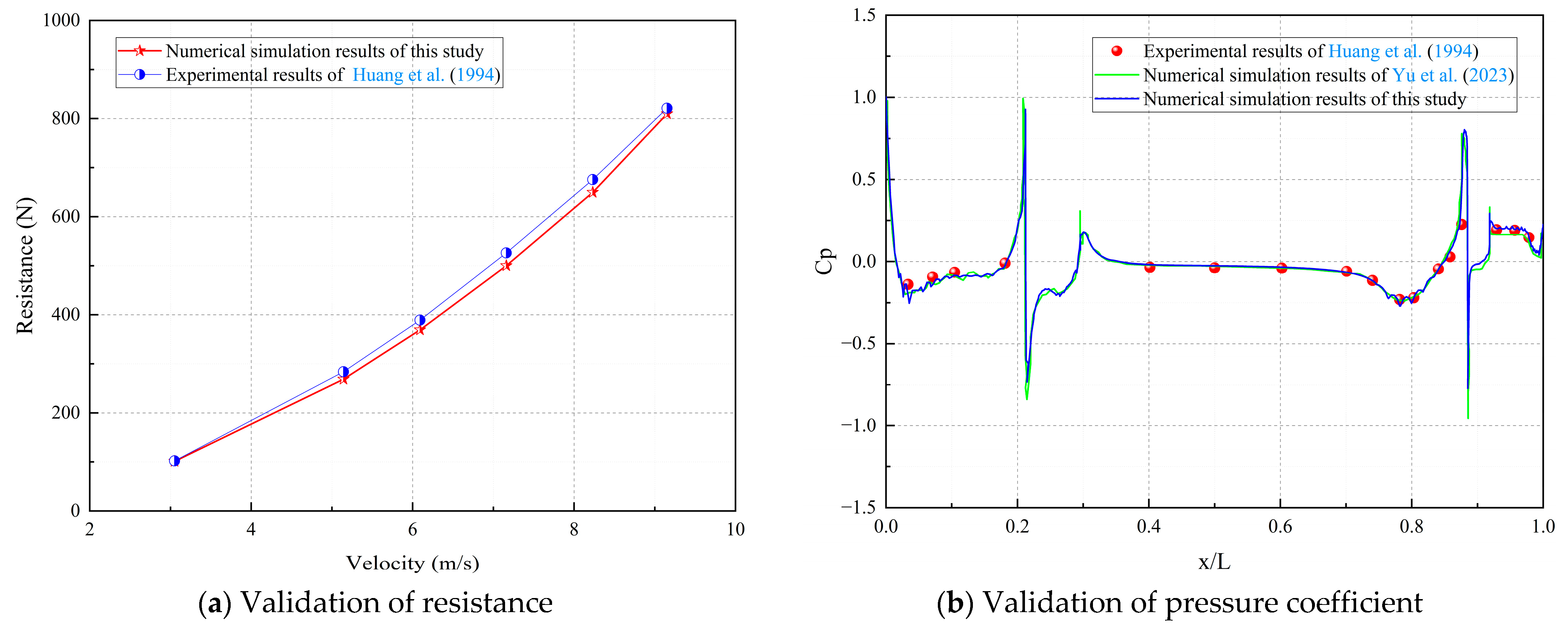

5. Results and Discussion

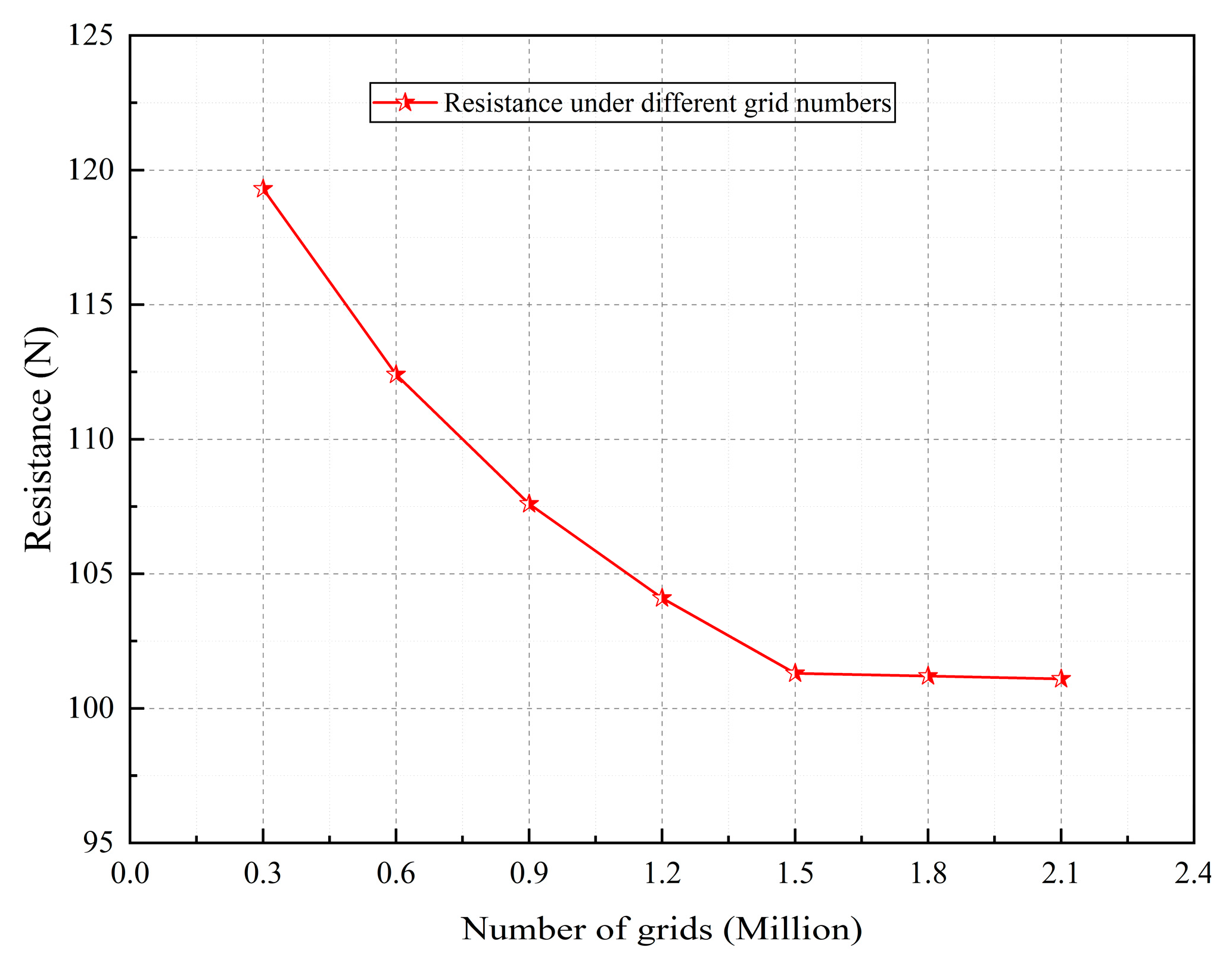

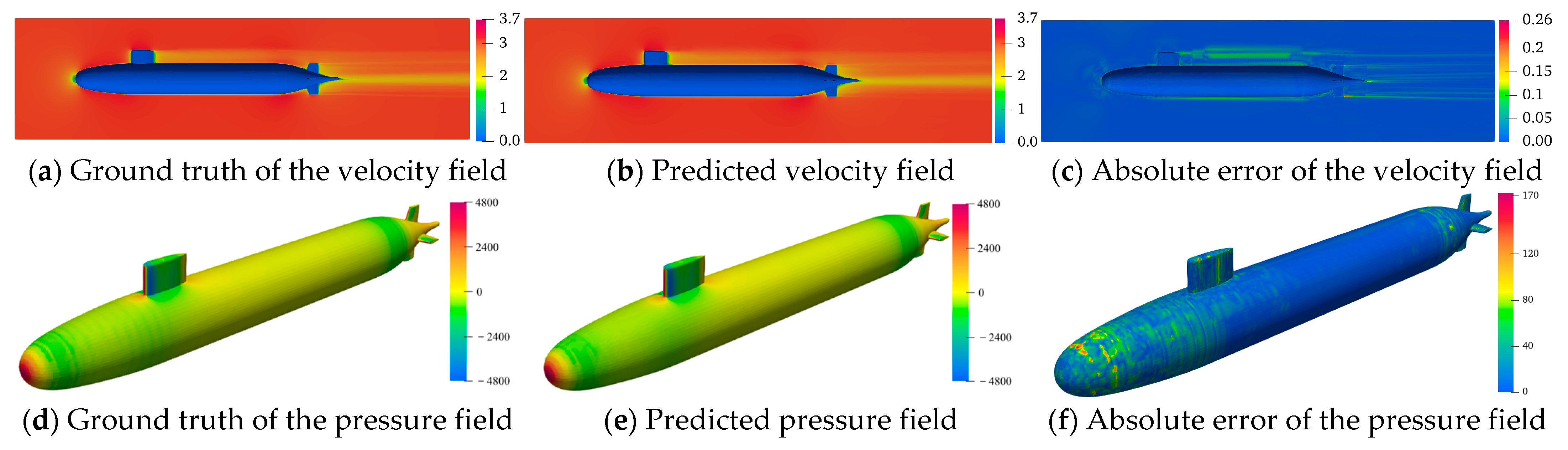

This section analyzes the ST-GNN prediction results from three perspectives: visual flow field contours, statistical error evolution, and pressure coefficient (Cp) accuracy. The computational efficiency of ST-GNN is then benchmarked against CFD to highlight its practical advantages.

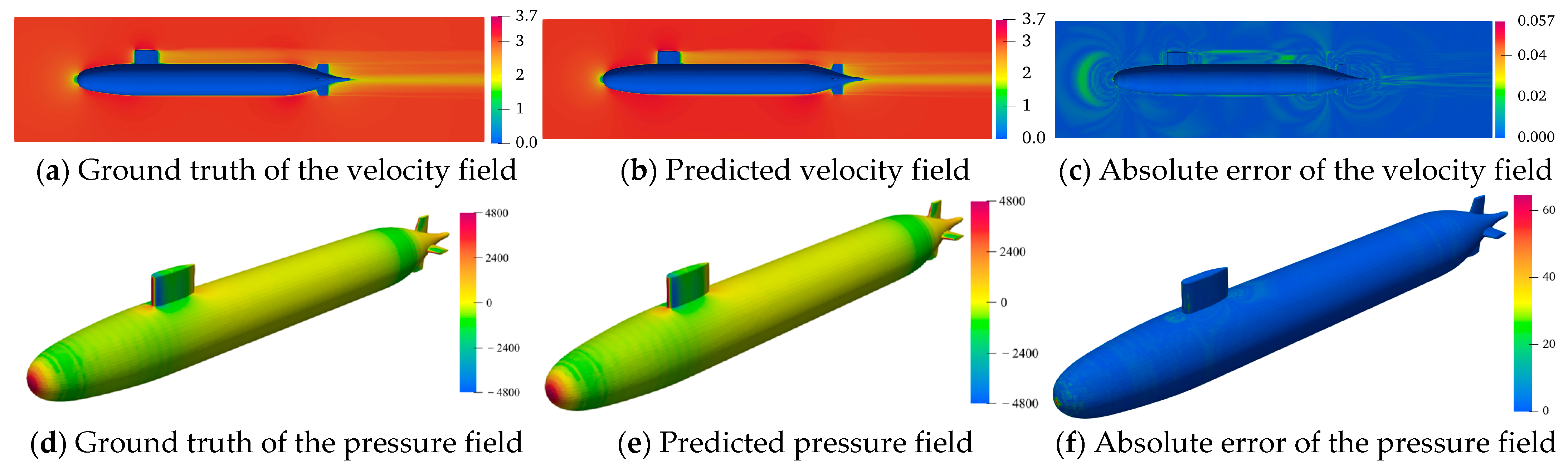

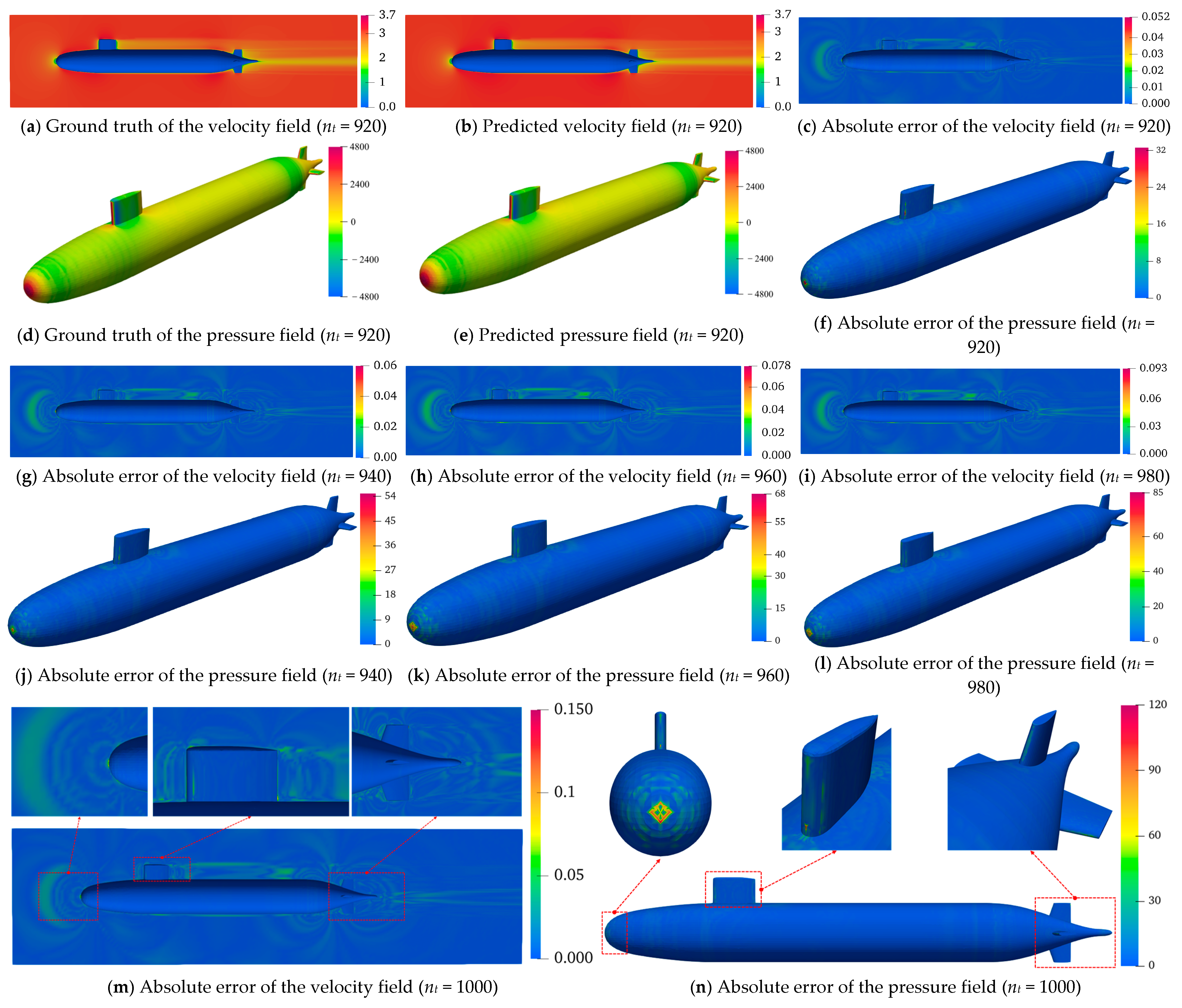

5.1. Visual Analysis of Predicted Flow Field Contours

A rigorous assessment of the temporal generalizability of the GCN-LSTM and GAT-LSTM architectures was conducted for velocity and pressure field predictions around the SUBOFF AFF-8 hull. Contour visualizations (Figure 12 and Figure 13) across five selected time step indices (nt = 920, 940, 960, 980, 1000, corresponding to physical times of 3.68 s, 3.76 s, 3.84 s, 3.92 s, 4.00 s) revealed a pronounced progressive accumulation of prediction errors in both models as time advances. This phenomenon arises from the autoregressive inference paradigm, wherein each sequential prediction depends recursively on the preceding output. Consequently, initial minor inaccuracies propagate and amplify through the prediction horizon, producing a “snowball” effect in which the predictions progressively diverge from the true spatio-temporal evolution of the flow field. This mechanistic insight underscores a core challenge inherent to data-driven approaches for long-term unsteady flow modeling.
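The difference between a small one-step error and the error of an autoregressive rollout can be illustrated with a toy system. The sketch below is not the flow solver or the ST-GNN: it assumes a two-dimensional state that rotates by a fixed angle per step and a surrogate whose rotation angle is off by a small, hypothetical amount (`angle_error`). Fed the true previous state, the surrogate's error stays constant; fed its own output, the error accumulates with every step.

```python
import numpy as np

def rotation(angle):
    """2D rotation matrix, used here as a stand-in for one time step."""
    c, s = np.cos(angle), np.sin(angle)
    return np.array([[c, -s], [s, c]])

def compare_rollouts(n_steps=80, angle=0.1, angle_error=0.001):
    true_step = rotation(angle)
    model_step = rotation(angle + angle_error)  # surrogate with a small bias
    x_true = np.array([1.0, 0.0])
    x_auto = x_true.copy()
    one_step_err, rollout_err = [], []
    for _ in range(n_steps):
        x_teacher = model_step @ x_true   # surrogate fed the true previous state
        x_auto = model_step @ x_auto      # surrogate fed its own previous output
        x_true = true_step @ x_true
        one_step_err.append(np.linalg.norm(x_teacher - x_true))
        rollout_err.append(np.linalg.norm(x_auto - x_true))
    return np.array(one_step_err), np.array(rollout_err)

one_step_err, rollout_err = compare_rollouts()
print(f"one-step error: {one_step_err[-1]:.2e}")
print(f"rollout error after 80 steps: {rollout_err[-1]:.2e}")
```

In this linear toy case the rollout error grows roughly in proportion to the number of steps; in a nonlinear flow model the growth can be faster, which is consistent with the accumulation seen in the contours.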

The comparative evaluation demonstrated a consistent performance advantage of GAT-LSTM over GCN-LSTM across the entire temporal domain for both velocity and pressure predictions. This superiority is particularly evident within critical flow regions characterized by steep gradients, such as shear layers and flow separation zones. The enhanced fidelity of GAT-LSTM is attributable to its graph attention mechanism, which overcomes the limitations of the fixed, topology-defined neighborhood aggregation inherent to GCNs. By dynamically computing attention coefficients between nodes, GAT-LSTM adaptively weights the most influential neighboring flow features. This enables the model to emphasize strongly nonlinear phenomena, including vortical structures and separation dynamics, thereby achieving a more precise characterization of sharp local flow structures and improving predictive robustness in complex flow regimes.
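The contrast between fixed and adaptive neighborhood aggregation can be sketched with NumPy. The example assumes the standard GCN symmetric normalization and a single-head GAT-style score of the form LeakyReLU(aᵀ[Wh_i ‖ Wh_j]) followed by a softmax over neighbors; the toy graph, feature sizes, and names (`gcn_weights`, `gat_weights`, `a_vec`) are hypothetical and do not reproduce the models evaluated here.

```python
import numpy as np

def gcn_weights(adj):
    """Fixed aggregation weights D^-1/2 (A + I) D^-1/2, set by topology alone."""
    a = adj + np.eye(len(adj))
    deg = a.sum(axis=1)
    return a / np.sqrt(np.outer(deg, deg))

def gat_weights(adj, h, w, a_vec, slope=0.2):
    """Attention coefficients that depend on the current node features h."""
    mask = (adj + np.eye(len(adj))) > 0          # neighbours plus self-loop
    z = h @ w                                    # projected features
    f_out = z.shape[1]
    # e_ij = LeakyReLU(a^T [z_i || z_j]), split into a source and a target part.
    scores = (z @ a_vec[:f_out])[:, None] + (z @ a_vec[f_out:])[None, :]
    scores = np.where(scores > 0, scores, slope * scores)
    scores = np.where(mask, scores, -np.inf)     # ignore non-neighbours
    scores = scores - scores.max(axis=1, keepdims=True)
    alpha = np.exp(scores)
    return alpha / alpha.sum(axis=1, keepdims=True)  # softmax over neighbours

rng = np.random.default_rng(0)
adj = np.array([[0, 1, 1, 0],
                [1, 0, 1, 0],
                [1, 1, 0, 1],
                [0, 0, 1, 0]], dtype=float)
h = rng.normal(size=(4, 3))
w = rng.normal(size=(3, 2))
a_vec = rng.normal(size=4)

h_sharp = h.copy()
h_sharp[2] *= 10.0   # mimic a steep local gradient at node 2

fixed = gcn_weights(adj)                      # identical for h and h_sharp
alpha_smooth = gat_weights(adj, h, w, a_vec)
alpha_sharp = gat_weights(adj, h_sharp, w, a_vec)
```

The GCN weights are the same for both feature fields, whereas the attention coefficients change when the features at node 2 change, which is the adaptivity the paragraph above attributes to GAT-LSTM.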

The spatial distribution of prediction accuracy exhibited marked heterogeneity, strongly correlated with geometric complexity. Regions featuring abrupt geometric discontinuities, notably the bow stagnation point, the conning tower–hull junction, and stern appendages, such as the rudder and propeller, suffered significantly elevated prediction errors in both models compared to hydrodynamically smooth hull sections. This spatial error concentration arises directly from the intense physical gradients (velocity, pressure) induced by sharp geometric features and high curvature. These topologically complex zones invariably host intricate flow physics, encompassing sustained flow separation, coherent vortex shedding, and adverse pressure gradients, where field variables manifest highly nonlinear, transient behavior. Critically, while GAT-LSTM maintains an overall accuracy advantage through its attention mechanism, neither model fully resolves the intricate transient dynamics generated by severe geometric forcing. The persistent error elevation in these regions highlights a fundamental limitation of current spatio-temporal graph networks in capturing geometry-driven flow nonlinearities, thereby establishing an essential trajectory for future model refinement.

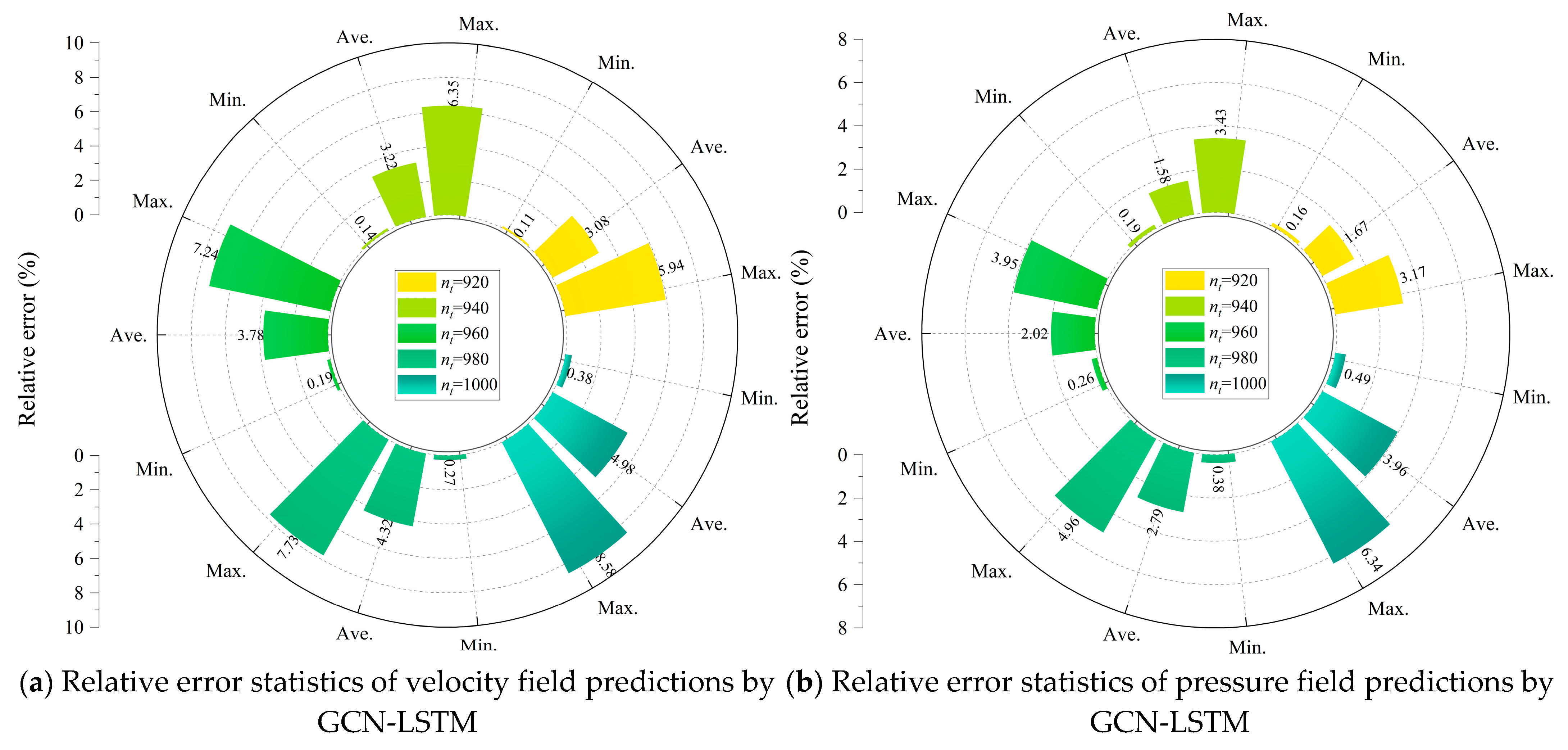

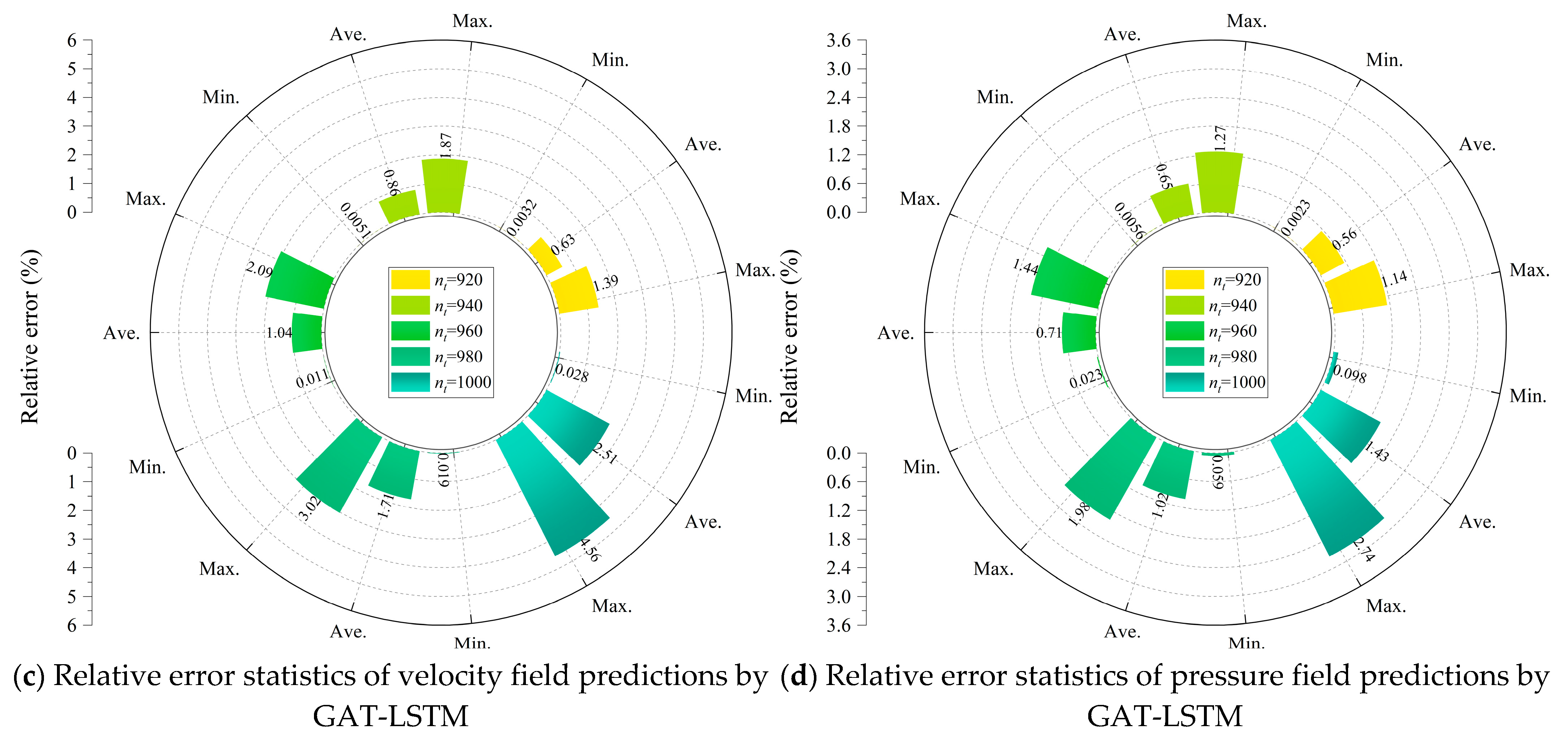

5.2. Statistical Analysis of Prediction Relative Errors

This subsection presents a rigorous quantitative assessment of the temporal evolution characteristics of relative errors in the flow field predictions obtained from the GCN-LSTM and GAT-LSTM models, with particular attention to the prediction horizon ranging from nt = 920 to nt = 1000 for the SUBOFF AFF-8 hydrodynamic configuration. For the GCN-LSTM model, a distinct and consistent upward trend was observed in the relative errors associated with both the velocity and pressure field predictions over time. As depicted in Figure 14a,b, the maximum relative error in the velocity field increased from 5.94% at nt = 920 to 8.58% at nt = 1000, representing a notable 44.4% increase. In parallel, the average relative error rose from 3.08% to 4.98%, constituting a substantial 61.7% escalation. The degradation in prediction accuracy was even more pronounced in the pressure field: the maximum relative error nearly doubled, surging from 3.17% to 6.34%, an increase of 99.7%, while the average relative error increased by 137.1%, climbing from 1.67% to 3.96%. These trends provide quantitative evidence of the error compounding inherent in autoregressive inference, which becomes especially significant in geometrically complex regions where capturing fine-scale flow structures proves more difficult.

In contrast, the GAT-LSTM model maintained markedly lower error levels throughout the prediction window, although its errors also accumulated. As illustrated in Figure 14c,d, the maximum velocity field error grew from a relatively low 1.39% at nt = 920 to 4.56% at nt = 1000, corresponding to a 228.0% increase, while the average error increased from 0.63% to 2.51%, a 298.4% rise. Although these relative increases appear substantial, they must be interpreted against significantly lower initial error magnitudes: the initial maximum velocity field error for GAT-LSTM is only 23.4% of that for GCN-LSTM. In the pressure field, the maximum and average relative errors increased from 1.14% to 2.74% and from 0.56% to 1.43%, reflecting increases of 140.4% and 155.4%, respectively. Across the entire prediction window, GAT-LSTM maintained consistently lower absolute errors, attesting to its greater predictive reliability.

A direct comparison at nt = 1000 reinforces the superior generalization performance of GAT-LSTM. At this time point, the model achieved reductions of 49.6% and 63.9% in the average relative errors of the velocity and pressure fields, respectively, compared to GCN-LSTM. Furthermore, the maximum relative errors remained well-constrained within 4.56% for the velocity field and 2.74% for the pressure field. These results clearly underscore the efficacy of incorporating attention mechanisms within the recurrent graph-based framework. The graph attention layers appear to effectively mitigate error propagation across time steps, particularly in scenarios where spatial and temporal dynamics interact nonlinearly, as is often the case in turbulent or complex flow regimes.
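The percentages quoted in this subsection follow from simple arithmetic on the reported errors, which the sketch below recomputes. The input values are the rounded errors stated in the text; the dictionary layout and function names are illustrative. Recomputing from rounded inputs matches the quoted growth rates to within about 0.3 percentage points (for example, 3.17% to 6.34% gives exactly 100%, against the quoted 99.7%), a gap that is presumably due to rounding of the tabulated errors.

```python
def pct_increase(start, end):
    """Relative growth from start to end, in percent."""
    return 100.0 * (end - start) / start

def pct_reduction(baseline, improved):
    """Relative reduction of improved with respect to baseline, in percent."""
    return 100.0 * (baseline - improved) / baseline

# (error at nt = 920, error at nt = 1000, growth quoted in the text), all in %.
growth = {
    "GCN-LSTM velocity max": (5.94, 8.58, 44.4),
    "GCN-LSTM velocity avg": (3.08, 4.98, 61.7),
    "GCN-LSTM pressure max": (3.17, 6.34, 99.7),
    "GCN-LSTM pressure avg": (1.67, 3.96, 137.1),
    "GAT-LSTM velocity max": (1.39, 4.56, 228.0),
    "GAT-LSTM velocity avg": (0.63, 2.51, 298.4),
    "GAT-LSTM pressure max": (1.14, 2.74, 140.4),
    "GAT-LSTM pressure avg": (0.56, 1.43, 155.4),
}

for name, (start, end, quoted) in growth.items():
    print(f"{name}: recomputed {pct_increase(start, end):.1f}%, quoted {quoted}%")

# GAT-LSTM versus GCN-LSTM average errors at nt = 1000.
velocity_reduction = pct_reduction(4.98, 2.51)
pressure_reduction = pct_reduction(3.96, 1.43)
print(f"velocity: {velocity_reduction:.1f}%, pressure: {pressure_reduction:.1f}%")
```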

In summary, both the GCN-LSTM and GAT-LSTM models maintained average relative errors below 5% throughout extended prediction intervals, thereby demonstrating their promising potential for long-term flow field forecasting on unstructured grids. Nevertheless, the GAT-LSTM architecture exhibited a clear advantage, not only in error minimization but also in generalization robustness, particularly in handling intricate flow patterns and challenging boundary conditions. This confirms the significant practical merit of attention-enhanced spatio-temporal models in advancing the frontier of data-driven fluid dynamics.

5.3. Analysis of Pressure Coefficient Prediction Results

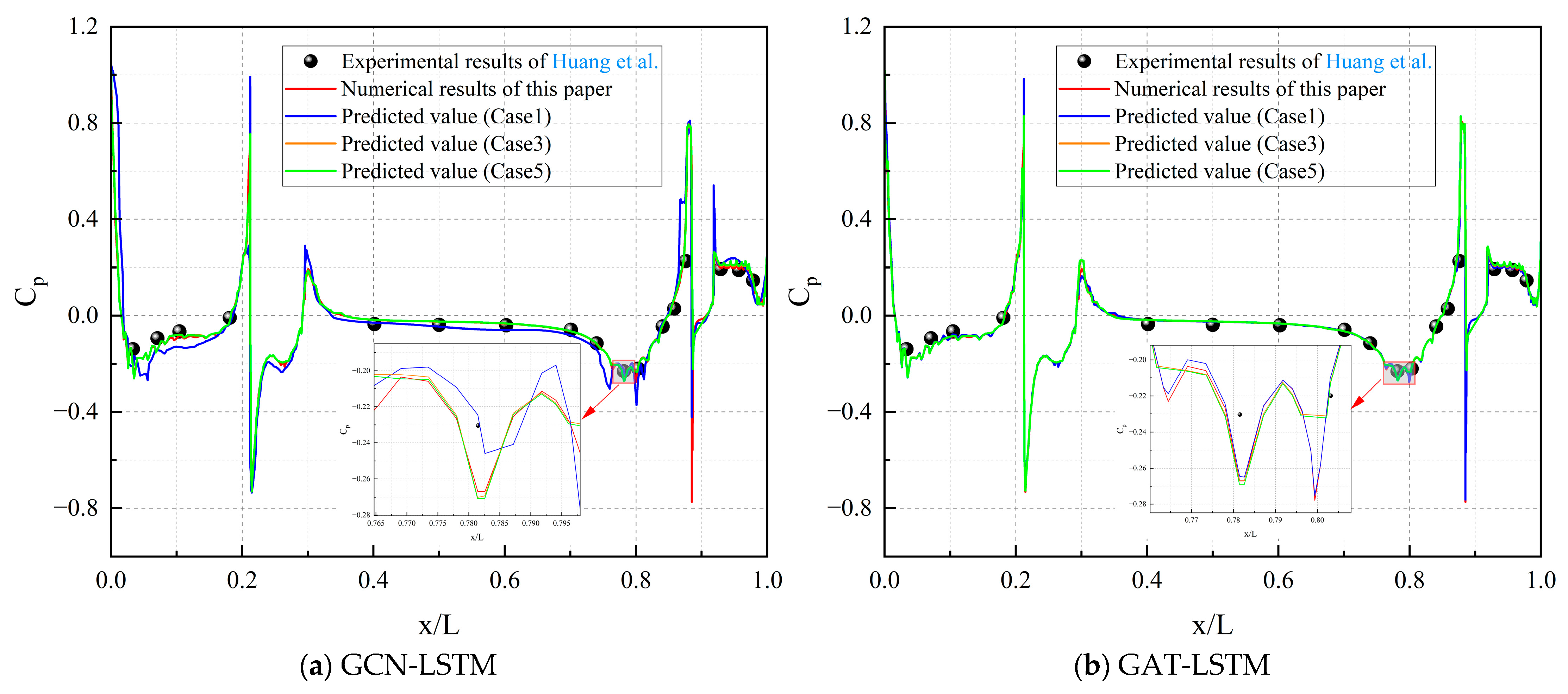

This subsection presents a rigorous comparative assessment of the Cp prediction performance between the GCN-LSTM and GAT-LSTM models for the SUBOFF AFF-8 hull configuration, leveraging the diagnostic insights from Figure 15. As depicted in Figure 15a, the GCN-LSTM model initially captured the overarching spatio-temporal trend of the Cp distribution at early stages (e.g., nt = 920). However, when progressing to nt = 1000, progressive deviations emerged. Specifically, the model exhibited subtle inaccuracies in locating peak pressures within critical regions: minor shifts manifested at high-pressure zones (e.g., the bow stagnation point) and low-pressure regions (e.g., aft of the sail and near the rudder). Additionally, differences in amplitudes were observed, particularly an underestimation of negative pressure peaks in separation-sensitive zones.

In stark contrast, the GAT-LSTM model demonstrated exceptional spatio-temporal predictive fidelity across all time steps, as illustrated in Figure 15b. Its Cp curves exhibited near-perfect quantitative and qualitative alignment with ground-truth data, maintaining remarkable precision in shape, peak localization, and amplitude magnitude. Key hydrodynamic regions, including the bow stagnation point, sail junction, and stern appendages, showed no measurable degradation in curve smoothness, peak integrity, or spatio-temporal coherence.

Collectively, these results show a clear performance difference between the two architectures. The GAT-LSTM’s attention mechanism enabled close Cp replication, supporting its robustness for long-term flow field forecasting. Conversely, the GCN-LSTM’s accuracy diminished progressively over time, particularly in resolving critical peak features, highlighting its limitations in capturing complex boundary layer and separation dynamics. This performance divergence further underscores the value of graph attention mechanisms for improving the accuracy of data-driven hydrodynamic predictions for engineering-relevant configurations.
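For reference, the pressure coefficient is conventionally defined as Cp = (p − p∞) / (½ρU∞²), and converting predicted surface pressures into Cp is a one-line operation. The sketch below assumes this standard definition; the free-stream pressure, density, and velocity values are hypothetical placeholders rather than the conditions of the SUBOFF cases.

```python
import math

def pressure_coefficient(p, p_inf, rho, u_inf):
    """Cp = (p - p_inf) / (0.5 * rho * u_inf**2), the standard definition."""
    if rho <= 0.0 or u_inf == 0.0:
        raise ValueError("density must be positive and free-stream speed nonzero")
    return (p - p_inf) / (0.5 * rho * u_inf ** 2)

# Hypothetical free-stream conditions, chosen only for illustration.
rho, u_inf, p_inf = 998.2, 3.0, 0.0
q_inf = 0.5 * rho * u_inf ** 2          # dynamic pressure

cp_stagnation = pressure_coefficient(p_inf + q_inf, p_inf, rho, u_inf)
cp_suction = pressure_coefficient(p_inf - 0.3 * q_inf, p_inf, rho, u_inf)
```

With this definition the stagnation point has Cp = 1 in incompressible flow, and suction regions such as those aft of the sail have negative Cp, which is why shifts in peak location and amplitude are the natural quantities to compare between the two models.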

To further assess the model’s generalizability across varying flow conditions, we expanded the evaluation to include three representative cases from Table 1: Case 1 (Re = 1.24 × 10⁷), Case 3 (Re = 2.481 × 10⁷), and Case 5 (Re = 3.354 × 10⁷). While training and validation were primarily conducted on Case 1, the models’ predictions of pressure coefficients (Cp) under these diverse Reynolds numbers and boundary conditions were systematically validated against both experimental data and high-fidelity CFD simulations. As shown in Figure 16a for GCN-LSTM and Figure 16b for GAT-LSTM, both models maintain strong alignment with the ground-truth Cp distributions across the tested cases, with GAT-LSTM again exhibiting superior fidelity in capturing peak locations and amplitudes, especially at higher Reynolds numbers, where flow separation and turbulence intensify. These results confirm robust performance under extrapolated operating conditions, with minimal degradation in accuracy, thereby strengthening the empirical evidence for the models’ generalizability and practical applicability in varied hydrodynamic scenarios.

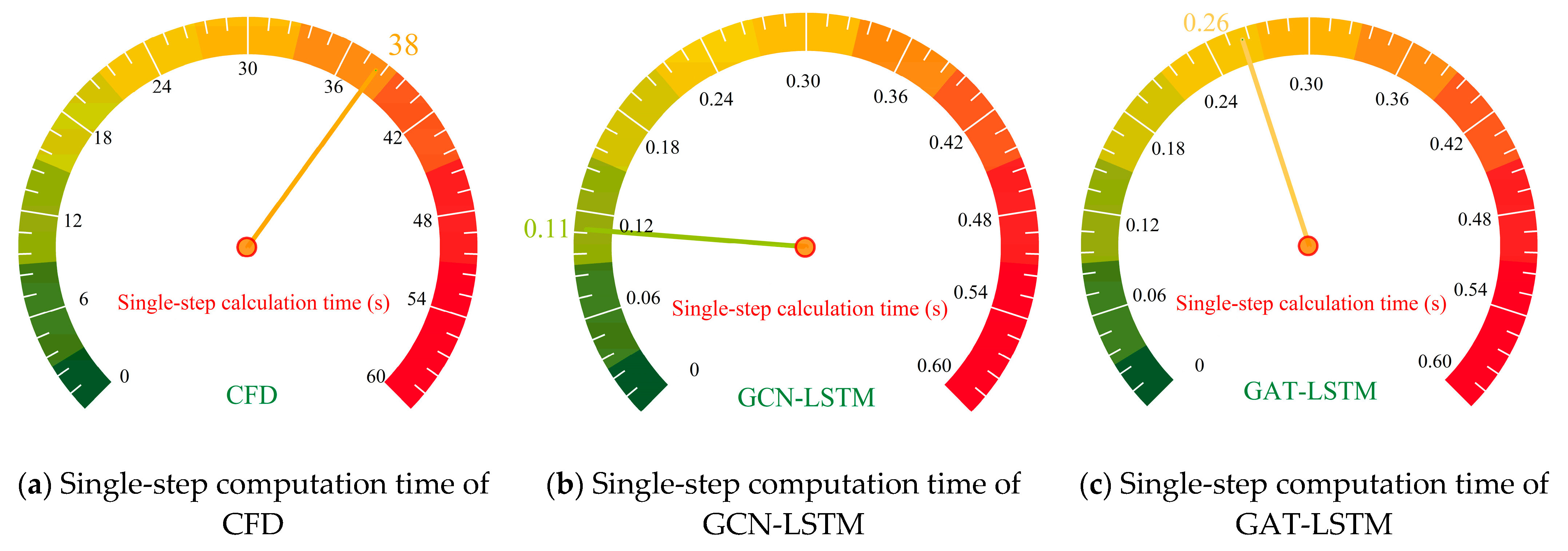

5.4. Solving Efficiency Analysis of ST-GNN and CFD

As illustrated in Figure 17, the single-step solution time of the CFD simulation for the SUBOFF AFF-8 model reached 38 s, with computational bottlenecks primarily arising from the iterative solution of partial differential equations and complex discretization of the computational domain. Specifically, CFD employed the finite volume method to spatially discretize governing equations and relied on the SIMPLE iterative algorithm to solve pressure–velocity coupling at each time step, leading to prolonged computation. In contrast, the ST-GNN models (GCN-LSTM and GAT-LSTM) achieved remarkable inference efficiency, with single-step prediction times of merely 0.11 s and 0.26 s, respectively, corresponding to speedups of approximately 350× and 150× over the CFD simulations. This dramatic acceleration is attributable to the parametric mapping capability inherent in GNNs, which, once trained, predict spatio-temporal flow-field evolution through a single, highly efficient forward propagation, thereby obviating the need for iterative equation solving. Comparing the two ST-GNN variants, GCN-LSTM was roughly twice as fast as GAT-LSTM. The GCN performs graph convolution with a fixed, precomputed adjacency matrix, so its cost scales as O(N) for a mesh with bounded connectivity (where N is the number of nodes), whereas the GAT must compute attention coefficients for every node–neighbor pair at each forward pass. This incurs additional computational overhead of O(N × K) (with K denoting the average number of neighbors, and K < N), contributing to a longer execution time for GAT. Collectively, these findings underscore the substantial potential of ST-GNN frameworks for real-time or large-scale flow-field analyses where rapid inference is mission-critical.
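The quoted speedups follow directly from the per-step timings, as the short calculation below shows. The timings are those reported in the text; the 80-step window (nt = 920 to 1000) is used only to illustrate cumulative cost and assumes that the per-step times stay constant over the window.

```python
CFD_STEP_S = 38.0
MODEL_STEP_S = {"GCN-LSTM": 0.11, "GAT-LSTM": 0.26}
N_STEPS = 80  # nt = 920 to nt = 1000

speedup = {name: CFD_STEP_S / t for name, t in MODEL_STEP_S.items()}
window_time_s = {name: N_STEPS * t for name, t in MODEL_STEP_S.items()}
cfd_window_time_s = N_STEPS * CFD_STEP_S

for name in MODEL_STEP_S:
    print(f"{name}: {speedup[name]:.0f}x faster per step, "
          f"{window_time_s[name]:.1f} s versus {cfd_window_time_s:.0f} s for CFD")
```

The ratios come out at about 345 and 146, which round to the quoted 350× and 150×, and both sit at the two-orders-of-magnitude level.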

6. Conclusions

This study proposed a novel ST-GNN framework for predicting unsteady flow fields on unstructured grids. By integrating graph encoders, specifically a GCN and a GAT, with LSTM decoders, the model effectively captures both spatial topology and temporal dynamics in complex fluid systems. Based on evaluation using the SUBOFF AFF-8 test case, the following conclusions are drawn:

(1) The ST-GNN framework demonstrates strong capability in modeling the spatio-temporal evolution of unsteady flow fields. GAT-LSTM outperforms GCN-LSTM by leveraging an adaptive graph attention mechanism that focuses on critical flow regions with high gradients, such as shear layers and separation zones, leading to more accurate resolution of fine-scale flow features.

(2) Although both ST-GNN variants exhibit gradually increasing prediction errors over time, due to the autoregressive inference process, their overall error levels remain low. GAT-LSTM shows notably better control over error propagation, achieving significantly lower average relative errors in velocity and pressure predictions by the final time step.

(3) The ST-GNN models provide a substantial improvement in computational efficiency compared to traditional CFD solvers. By using pre-trained parametric mappings rather than solving PDEs iteratively, both variants reduce computation time per step by two orders of magnitude. While GCN-LSTM is slightly faster, GAT-LSTM offers superior accuracy, making it more suitable for precision-sensitive applications, such as real-time flow analysis and design optimization.

(4) Both models encounter challenges near abrupt geometric features, for example, bow stagnation points and stern appendages, where strong nonlinearities occur due to flow separation and adverse pressure gradients. GAT-LSTM still achieves better accuracy in these regions, constraining maximum errors to lower levels, which suggests its stronger capability in handling geometry-induced flow complexities.

The ST-GNN framework, particularly GAT-LSTM, offers a promising solution for real-time simulation and engineering applications in complex flow fields. To further enhance accuracy in capturing local flow complexities, future work will incorporate physical constraints, such as Navier–Stokes equation terms, into the loss function. This physics-informed approach aims to strengthen adherence to fluid dynamics principles, improving predictive performance in challenging flow scenarios.