Class-Incremental Learning-Based Few-Shot Underwater-Acoustic Target Recognition

Abstract

1. Introduction

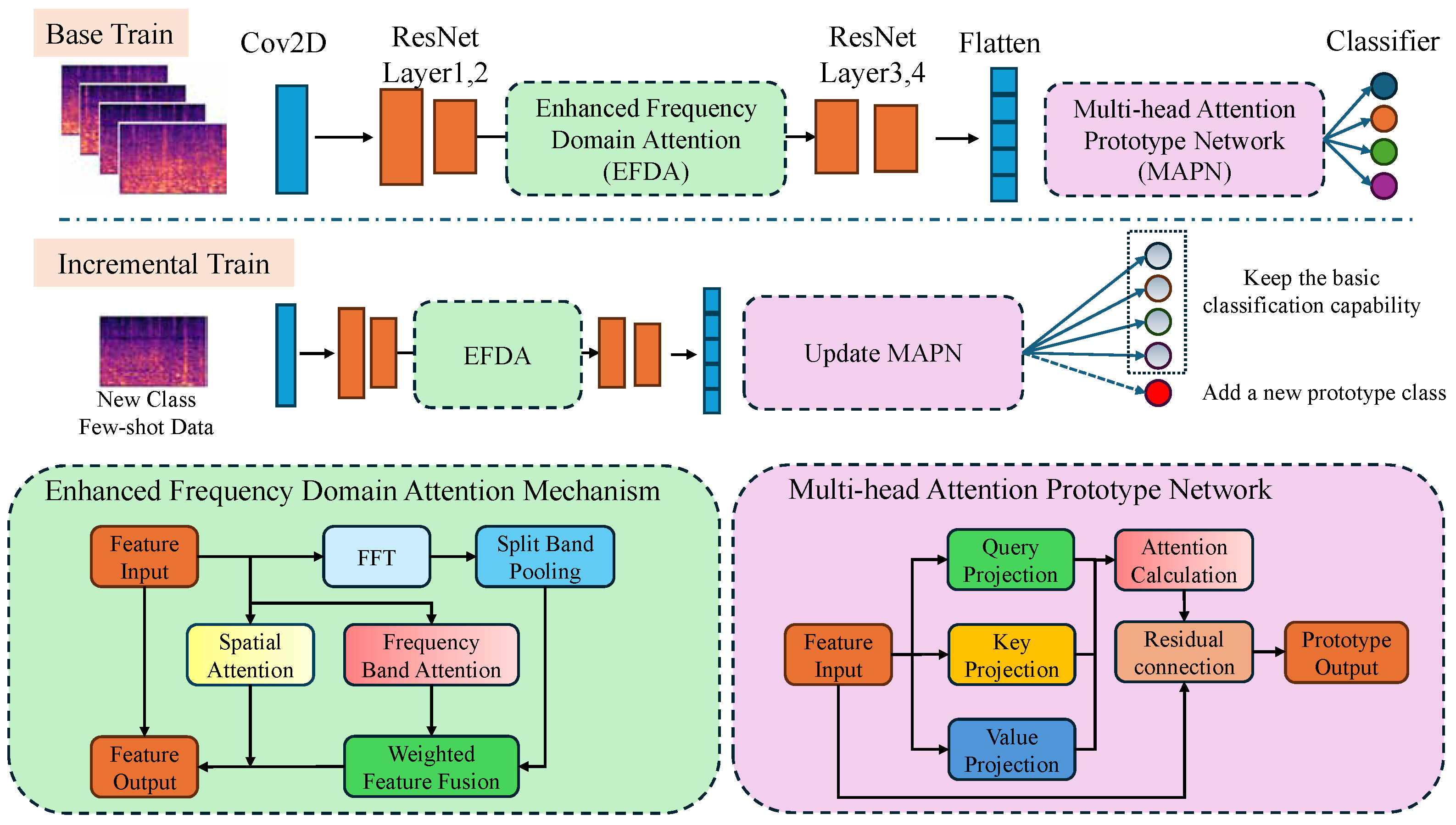

- (1) A few-shot incremental learning network for hydroacoustic target recognition is proposed, enabling rapid category expansion. The network first undergoes conventional classification training on the base-category database. When new-category data arrive, it learns to recognize all seen categories, including the new ones, from only a few shots of new-category data, which eliminates the need for repeated transfer learning and cross-domain training. The network thus acquires new knowledge autonomously and offers strong generalization and rapid deployment;

- (2) A prototype categorization method is introduced that integrates incremental learning into the training process through a prototype network structure and a multi-head attention mechanism. Retaining the instance-feature classification prototypes learned during base training and adding classification heads for new categories effectively alleviates catastrophic forgetting during incremental learning;

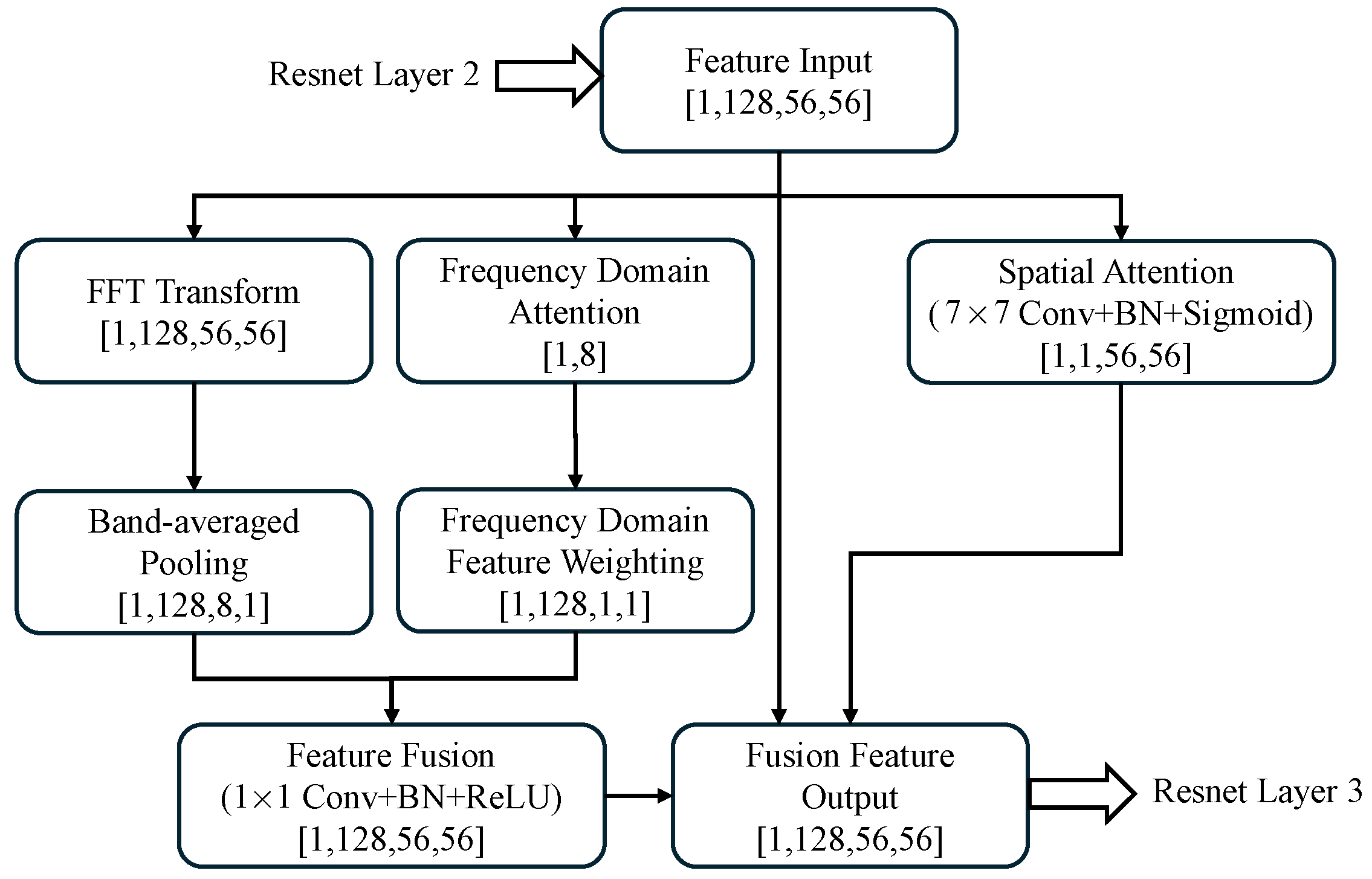

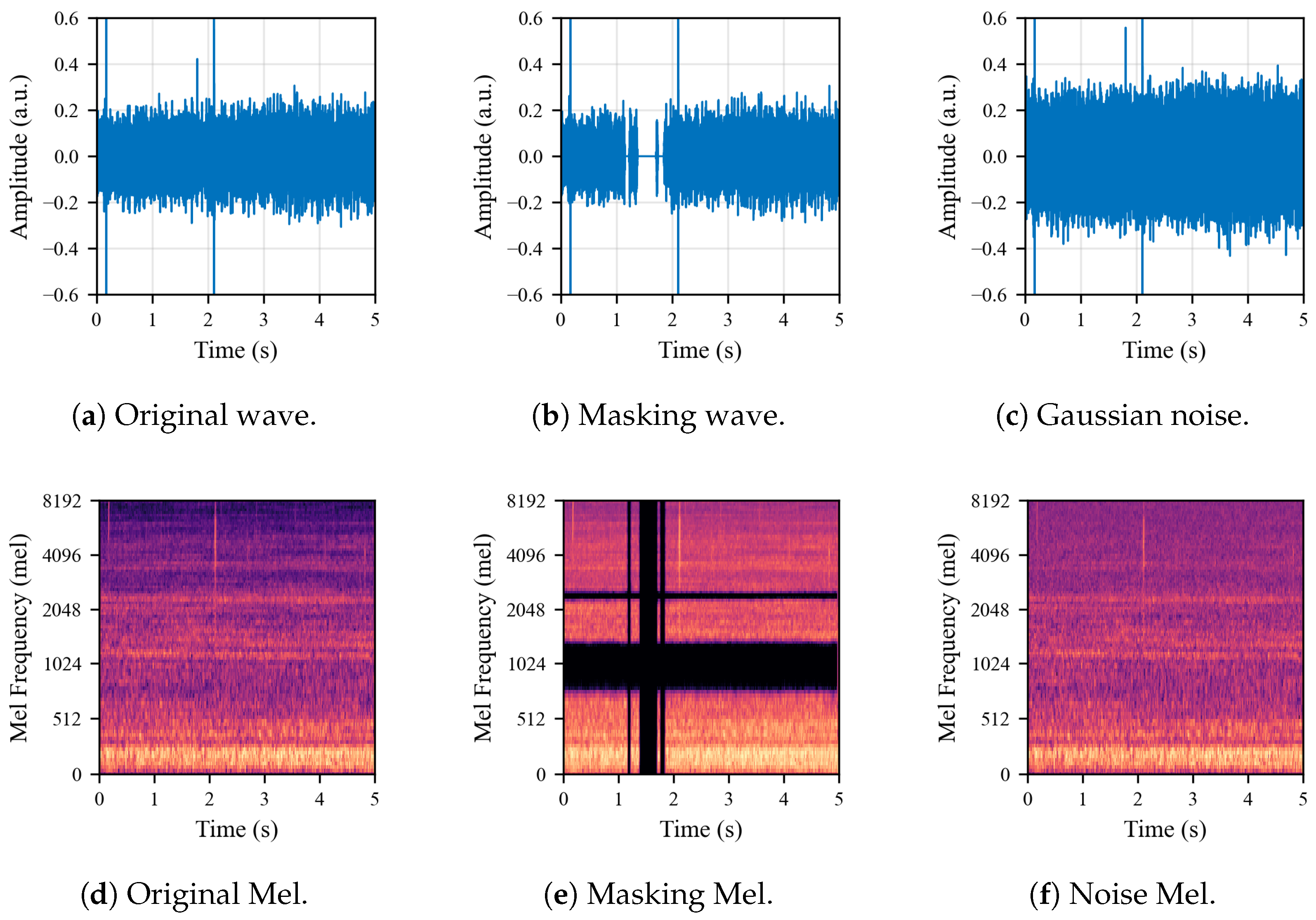

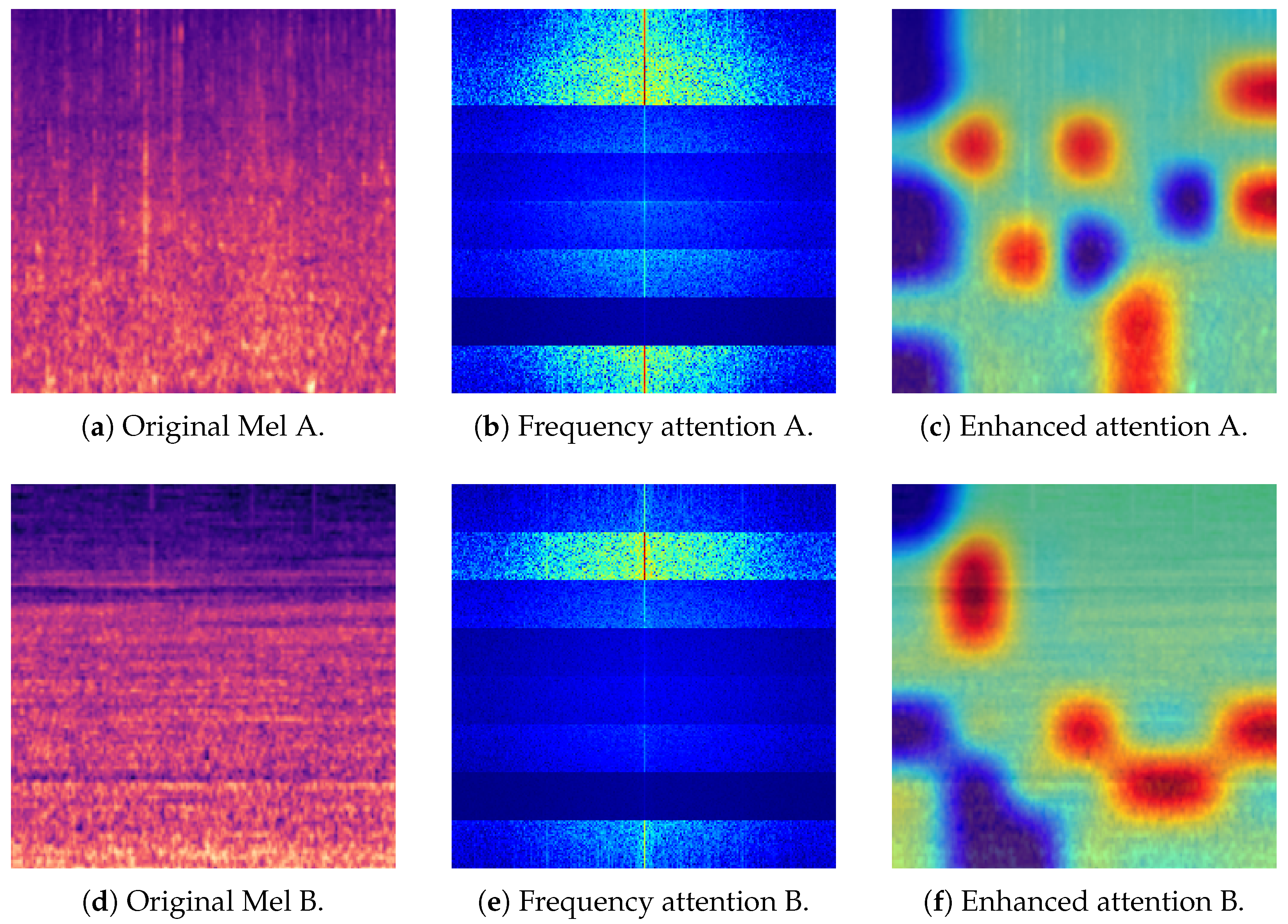

- (3) An enhanced frequency-domain attention mechanism is proposed that combines image-processing methods with the physical meaning of hydroacoustic Mel spectrograms. The mechanism lets the network attend to both the spatial locations and the variation patterns in a Mel spectrogram, which improves its discriminative power in classification and strengthens feature extraction and generalization for underwater-acoustic targets;

- (4) A two-stage learning strategy is introduced. In the base learning (pre-training) phase, few-shot simulation training with an episode-based paradigm mitigates overfitting and improves the generalization of the model. In the few-shot incremental learning phase, partial freeze training reduces catastrophic forgetting;

- (5) An incremental learning test dataset was constructed by pre-processing the public ShipsEar dataset [17]. Compared with classical UATR methods (CNN [2] and LSTM [2]), few-shot methods (TADAM [18] and Dense-CNN [19]), and few-shot incremental methods from the image domain (Prototypical [20], IPN [21], iCaRL [22], and CEC [23]), the proposed method achieved the highest accuracy in every session, from 92.89% in the base session to 68.44% after the final incremental session. This demonstrates the effectiveness and anti-forgetting capability of few-shot incremental learning in underwater-acoustic target classification.
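The episode-based paradigm in contribution (4) can be illustrated with a small sampler. This is a minimal sketch under assumptions: the function name `sample_episode`, the N-way/K-shot sizes, and the toy label list are all hypothetical and are not the paper's settings.

```python
import random
from collections import defaultdict


def sample_episode(labels, n_way, k_shot, n_query, rng=None):
    """Draw one N-way K-shot episode from a list of integer class labels.

    Returns (support, query): two lists of sample indices.
    All names and sizes here are illustrative assumptions.
    """
    rng = rng or random.Random()
    by_class = defaultdict(list)
    for idx, lab in enumerate(labels):
        by_class[lab].append(idx)

    # Only classes with enough samples can supply both support and query items.
    eligible = [c for c, idxs in by_class.items() if len(idxs) >= k_shot + n_query]
    if len(eligible) < n_way:
        raise ValueError("not enough classes with k_shot + n_query samples")

    support, query = [], []
    for c in rng.sample(eligible, n_way):
        picked = rng.sample(by_class[c], k_shot + n_query)
        support.extend(picked[:k_shot])   # K labelled examples per class
        query.extend(picked[k_shot:])     # held-out examples to classify
    return support, query


# Toy base set: 6 classes with 20 samples each (hypothetical sizes).
labels = [c for c in range(6) for _ in range(20)]
support, query = sample_episode(labels, n_way=5, k_shot=5, n_query=10,
                                rng=random.Random(0))
print(len(support), len(query))  # 25 50
```

Each training step would then fit the model on the support indices and score it on the query indices, so that base training already resembles the few-shot conditions met in later sessions.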

2. Methods

2.1. Feature Extraction

2.2. Classifier Design

2.3. Training Strategies

- (1) The diversity loss is the sum of the off-diagonal elements of the covariance matrix of the frequency-band attention weights. It encourages the attention weights to be distributed diversely across frequency bands and prevents all the band weights from converging to a uniform value;

- (2) The contrast loss measures the distances between categories and encourages larger distances, which makes the category features more discriminable;

- (3) The consistency loss computes the variance of features within the same category and encourages it to shrink, which compacts the feature distribution of each category and improves intra-class consistency;

- (4) The frequency prior loss manually guides the model to focus on specific frequency bands, which increases its sensitivity to critical frequency ranges.
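The four loss terms can be sketched in NumPy as follows. This is an illustration under stated assumptions, not the paper's formulation: the absolute value in the diversity term, the centroid-based Euclidean distance in the contrast term, the squared-error form of the frequency prior term, the prior vector, and the unit weights in the total are all hypothetical choices.

```python
import numpy as np


def diversity_loss(attn):
    """attn: (batch, bands) attention weights, bands >= 2.
    Sum of off-diagonal covariance magnitudes (absolute value is an assumption)."""
    cov = np.cov(attn, rowvar=False)                 # (bands, bands)
    off_diag = cov - np.diag(np.diag(cov))
    return np.abs(off_diag).sum()


def contrast_loss(feats, labels):
    """Negative mean pairwise distance between class centroids (assumed form).
    Minimising it pushes the categories apart."""
    mu = np.stack([feats[labels == c].mean(axis=0) for c in np.unique(labels)])
    diff = mu[:, None, :] - mu[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))         # zero on the diagonal
    n = len(mu)
    return -dist.sum() / (n * (n - 1))


def consistency_loss(feats, labels):
    """Mean within-class feature variance."""
    return np.mean([feats[labels == c].var(axis=0).mean()
                    for c in np.unique(labels)])


def frequency_prior_loss(attn, prior):
    """Squared error between the mean attention and a hand-set prior (assumed form)."""
    return np.mean((attn.mean(axis=0) - prior) ** 2)


rng = np.random.default_rng(0)
labels = np.repeat(np.arange(3), 4)                  # 3 classes, 4 samples each
feats = rng.normal(size=(12, 16))                    # toy embeddings
logits = rng.normal(size=(12, 8))
attn = np.exp(logits) / np.exp(logits).sum(axis=1, keepdims=True)
prior = np.linspace(2.0, 1.0, 8)                     # hypothetical low-band emphasis
prior /= prior.sum()

# Hypothetical unit weights; the paper's weighting is not reproduced here.
total = (diversity_loss(attn) + contrast_loss(feats, labels)
         + consistency_loss(feats, labels) + frequency_prior_loss(attn, prior))
print(float(total))
```

In a real training loop these terms would be written in an autodiff framework and added to the classification loss; NumPy is used here only to make the arithmetic explicit.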

3. Evaluation

3.1. Dataset

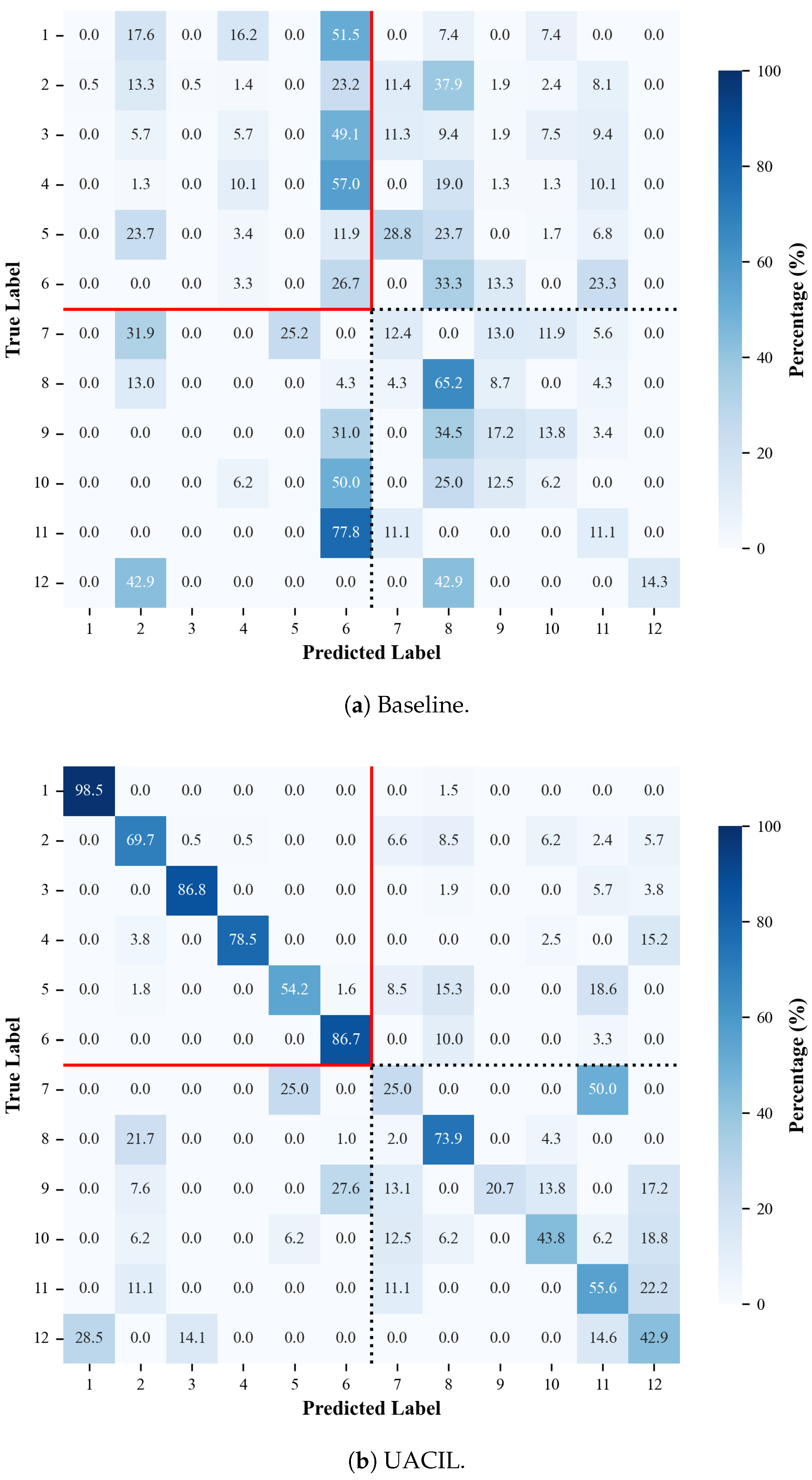

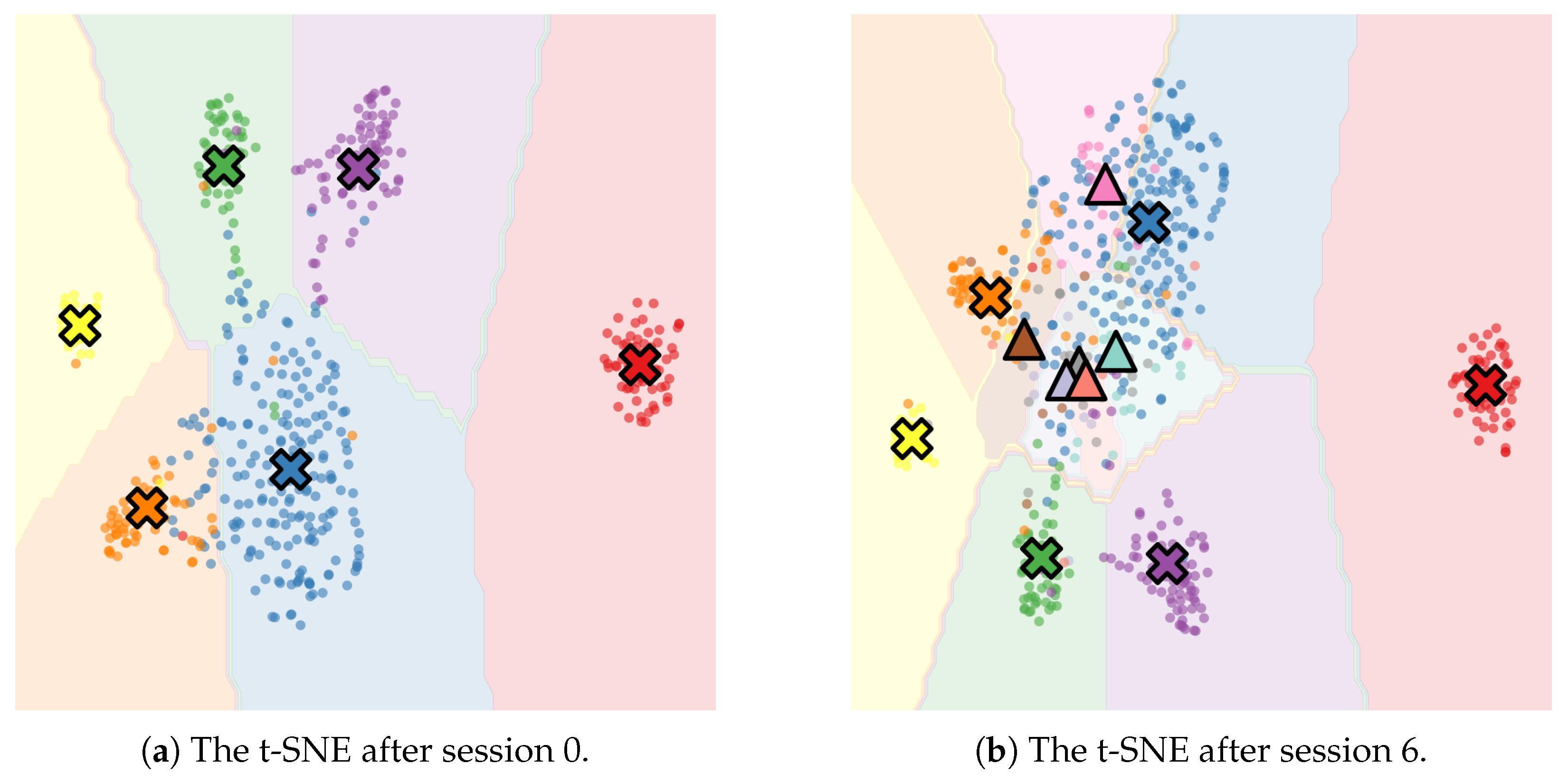

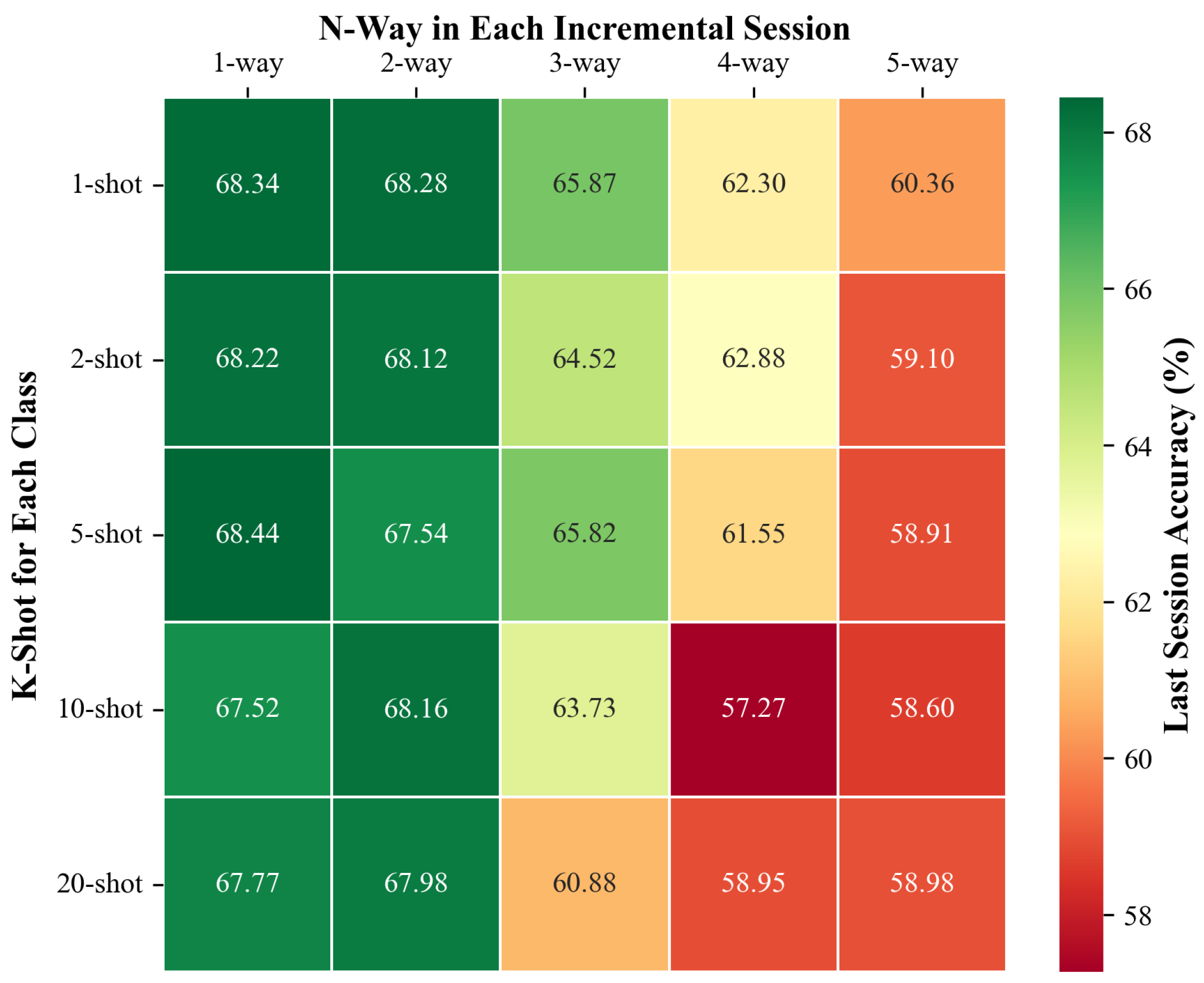

3.2. Results

4. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- [1] Luo, X.; Chen, L.; Zhou, H.; Cao, H. A Survey of Underwater Acoustic Target Recognition Methods Based on Machine Learning. J. Mar. Sci. Eng. 2023, 11, 384.

- [2] Liu, F.; Shen, T.; Luo, Z.; Zhao, D.; Guo, S. Underwater target recognition using convolutional recurrent neural networks with 3-D Mel-spectrogram and data augmentation. Appl. Acoust. 2021, 178, 107989.

- [3] Tang, J.; Ma, E.; Qu, Y.; Gao, W.; Zhang, Y.; Gan, L. UAPT: An underwater acoustic target recognition method based on pre-trained Transformer. Multimed. Syst. 2025, 31, 50.

- [4] Cui, X.; He, Z.; Xue, Y.; Tang, K.; Zhu, P.; Han, J. Cross-Domain Contrastive Learning-Based Few-Shot Underwater Acoustic Target Recognition. J. Mar. Sci. Eng. 2024, 12, 264.

- [5] Shi, B.; Sun, M.; Puvvada, K.C.; Kao, C.C.; Matsoukas, S.; Wang, C. Few-Shot Acoustic Event Detection Via Meta Learning. In Proceedings of the ICASSP 2020 - 2020 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Barcelona, Spain, 4–8 May 2020; pp. 76–80.

- [6] Wu, Y.; Li, Y.; Zhao, T.; Zhang, L.; Wei, B.; Liu, J.; Zheng, Q. Improved prototypical network for active few-shot learning. Pattern Recognit. Lett. 2023, 172, 188–194.

- [7] Cheng, H.; Wang, Y.; Li, H.; Kot, A.C.; Wen, B. Disentangled Feature Representation for Few-Shot Image Classification. IEEE Trans. Neural Netw. Learn. Syst. 2024, 35, 10422–10435.

- [8] Wen, W.; Liu, Y.; Lin, Q.; Ouyang, C. Few-shot Named Entity Recognition with Joint Token and Sentence Awareness. Data Intell. 2023, 5, 767–785.

- [9] Duan, R.; Li, D.; Tong, Q.; Yang, T.; Liu, X.; Liu, X. A Survey of Few-Shot Learning: An Effective Method for Intrusion Detection. Secur. Commun. Netw. 2021, 2021, 4259629.

- [10] Zeng, W.; Xiao, Z.-Y. Few-shot learning based on deep learning: A survey. Math. Biosci. Eng. 2024, 21, 679–711.

- [11] Cui, X.; He, Z.; Xue, Y.; Zhu, P.; Han, J.; Li, X. Few-Shot Underwater Acoustic Target Recognition Using Domain Adaptation and Knowledge Distillation. IEEE J. Ocean. Eng. 2025, 50, 637–653.

- [12] Fu, B.; Nie, J.; Wei, W.; Zhang, L. Constructing a Multi-Modal Based Underwater Acoustic Target Recognition Method with a Pre-Trained Language-Audio Model. IEEE Trans. Geosci. Remote Sens. 2025, 63, 1–14.

- [13] Tian, S.; Bai, D.; Zhou, J.; Fu, Y.; Chen, D. Few-shot learning for joint model in underwater acoustic target recognition. Sci. Rep. 2023, 13, 17502.

- [14] Yin, Q.; Shen, L. Underwater acoustic target recognition based on population balance-encoding classification. Ocean Eng. 2025, 337, 121899.

- [15] Bao, W.; Ren, Q.; Wang, W.; Huang, M.; Xiao, Z. A dual-label-reversed ensemble transfer learning strategy for underwater target detection. Appl. Acoust. 2025, 235, 110701.

- [16] Zhou, D.W.; Wang, Q.W.; Qi, Z.H.; Ye, H.J.; Zhan, D.C.; Liu, Z. Class-Incremental Learning: A Survey. IEEE Trans. Pattern Anal. Mach. Intell. 2024, 46, 9851–9873.

- [17] Santos-Dominguez, D.; Torres-Guijarro, S.; Cardenal-Lopez, A.; Pena-Gimenez, A. ShipsEar: An underwater vessel noise database. Appl. Acoust. 2016, 113, 64–69.

- [18] Oreshkin, B.; Rodríguez López, P.; Lacoste, A. TADAM: Task dependent adaptive metric for improved few-shot learning. In Proceedings of the Advances in Neural Information Processing Systems; Bengio, S., Wallach, H., Larochelle, H., Grauman, K., Cesa-Bianchi, N., Garnett, R., Eds.; Curran Associates, Inc.: Red Hook, NY, USA, 2018; Volume 31.

- [19] Doan, V.S.; Huynh-The, T.; Kim, D.S. Underwater Acoustic Target Classification Based on Dense Convolutional Neural Network. IEEE Geosci. Remote Sens. Lett. 2022, 19, 1–5.

- [20] Snell, J.; Swersky, K.; Zemel, R. Prototypical Networks for Few-shot Learning. In Proceedings of the Advances in Neural Information Processing Systems; Guyon, I., Luxburg, U.V., Bengio, S., Wallach, H., Fergus, R., Vishwanathan, S., Garnett, R., Eds.; Curran Associates, Inc.: Red Hook, NY, USA, 2017; Volume 30, pp. 4080–4090.

- [21] Ji, Z.; Chai, X.; Yu, Y.; Pang, Y.; Zhang, Z. Improved prototypical networks for few-shot learning. Pattern Recognit. Lett. 2020, 140, 81–87.

- [22] Rebuffi, S.A.; Kolesnikov, A.; Sperl, G.; Lampert, C.H. iCaRL: Incremental Classifier and Representation Learning. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 2001–2010.

- [23] Zhang, C.; Song, N.; Lin, G.; Zheng, Y.; Pan, P.; Xu, Y. Few-Shot Incremental Learning with Continually Evolved Classifiers. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 20–25 June 2021; pp. 12455–12464.

- [24] He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

- [25] Meng, X.; Liu, X.; Xu, Y.; Wu, Y.; Li, H.; Kim, K.W.; Liu, S.; Xu, Y. A Multi-Time-Frequency Feature Fusion Approach for Marine Mammal Sound Recognition. J. Mar. Sci. Eng. 2025, 13, 1101.

- [26] Xu, J.; Li, X.; Zhang, D.; Chen, Y.; Peng, Y.; Liu, W. Enhanced underwater acoustic target recognition using parallel dual-branch network with attention mechanism. Eng. Appl. Artif. Intell. 2025, 158, 111603.

- [27] Li, G.; Wu, M.; Yang, H. A new underwater acoustic signal recognition method: Fusion of cepstral feature and multi-path parallel joint neural network. Appl. Acoust. 2025, 239, 110809.

- [28] Hu, G.; Wang, K.; Peng, Y.; Qiu, M.; Shi, J.; Liu, L. Deep Learning Methods for Underwater Target Feature Extraction and Recognition. Comput. Intell. Neurosci. 2018, 2018, 1214301.

- [29] Yang, S.; Jin, A.; Zeng, X.; Wang, H.; Hong, X.; Lei, M. Underwater acoustic target recognition based on sub-band concatenated Mel spectrogram and multidomain attention mechanism. Eng. Appl. Artif. Intell. 2024, 133, 107983.

- [30] Jaderberg, M.; Simonyan, K.; Zisserman, A.; Kavukcuoglu, K. Spatial transformer networks. Adv. Neural Inf. Process. Syst. 2015, 28, 2017–2025.

- [31] Grave, E.; Joulin, A.; Cissé, M.; Grangier, D.; Jégou, H. Efficient softmax approximation for GPUs. In Proceedings of the 34th International Conference on Machine Learning (ICML’17), Sydney, Australia, 6–11 August 2017; JMLR.org; Volume 70, pp. 1302–1310.

- [32] Hu, J.; Shen, L.; Sun, G. Squeeze-and-Excitation Networks. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 7132–7141.

- [33] Qin, Z.; Zhang, P.; Wu, F.; Li, X. FcaNet: Frequency Channel Attention Networks. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10–17 October 2021; pp. 783–792.

- [34] Bridle, J.S. Training Stochastic Model Recognition Algorithms as Networks can Lead to Maximum Mutual Information Estimation of Parameters. In Proceedings of the Advances in Neural Information Processing Systems 2, NIPS Conference, Denver, CO, USA, 27–30 November 1989.

- [35] Ba, J.; Kiros, J.R.; Hinton, G.E. Layer Normalization. arXiv 2016, arXiv:1607.06450.

- [36] Krizhevsky, A.; Sutskever, I.; Hinton, G.E. ImageNet classification with deep convolutional neural networks. Commun. ACM 2017, 60, 84–90.

- [37] Chen, T.; Kornblith, S.; Norouzi, M.; Hinton, G. A Simple Framework for Contrastive Learning of Visual Representations. In Proceedings of the 37th International Conference on Machine Learning, Virtual, 13–18 July 2020; Daumé, H., III, Singh, A., Eds.; PMLR: 2020; Proceedings of Machine Learning Research, Volume 119, pp. 1597–1607.

- [38] Gess, B.; Kassing, S.; Konarovskyi, V. Stochastic Modified Flows, Mean-Field Limits and Dynamics of Stochastic Gradient Descent. J. Mach. Learn. Res. 2024, 25, 1518–1544.

- [39] Jung, S.; Dagobert, T.; Morel, J.M.; Facciolo, G. A Review of t-SNE. Image Process. Line 2024, 14, 250–270.

| Step | Description |
|---|---|
| 1 | Input: support-set features for each class and the query feature Q |
| 2 | Compute the prototype of each class by Equation (2) |
| 3 | Concatenate all the prototypes and the query feature into sequence S by Equation (3) |
| 4 | Apply self-attention to S by Equation (5) |
| 5 | Extract the enhanced prototypes and the enhanced query from the self-attention output |
| 6 | Compute the similarity for each class by Equation (6) |
| 7 | Apply softmax to the similarities to obtain class probabilities |
| 8 | Output: predicted class |
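The eight steps above can be traced in a short NumPy sketch. Equations (2), (3), (5), and (6) are not reproduced in this text, so several choices are assumptions: the prototype is taken as the mean of the support features, self-attention is single-head with identity projections and a residual connection (the paper describes a multi-head mechanism), and similarity is cosine similarity scaled by a hypothetical `temperature`.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)   # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def classify_query(support, query, temperature=10.0):
    """support: dict class_id -> (k, d) feature array; query: (d,) feature array."""
    classes = sorted(support)
    # Step 2: prototype = mean support feature (assumed form of Equation (2)).
    protos = np.stack([support[c].mean(axis=0) for c in classes])    # (n, d)
    # Step 3: sequence S = [prototypes; query].
    seq = np.vstack([protos, query[None, :]])                         # (n + 1, d)
    # Step 4: single-head scaled dot-product self-attention, identity projections.
    d = seq.shape[1]
    attn = softmax(seq @ seq.T / np.sqrt(d), axis=-1)
    enhanced = seq + attn @ seq                                        # residual
    # Step 5: split the enhanced sequence back into prototypes and query.
    e_protos, e_query = enhanced[:-1], enhanced[-1]
    # Step 6: cosine similarity (assumed form of Equation (6)).
    norms = np.linalg.norm(e_protos, axis=1) * np.linalg.norm(e_query) + 1e-12
    sims = e_protos @ e_query / norms
    # Step 7: softmax over similarities.
    probs = softmax(temperature * sims)
    # Step 8: predicted class.
    return classes[int(np.argmax(probs))], probs


# Toy data: three well-separated classes in an 8-dimensional feature space.
rng = np.random.default_rng(0)
centers = 5.0 * np.eye(3, 8)
support = {c: centers[c] + 0.1 * rng.normal(size=(5, 8)) for c in range(3)}
query = centers[1] + 0.1 * rng.normal(size=8)
pred, probs = classify_query(support, query)
print(pred, probs.round(3))
```

Adding a new class in an incremental session only requires adding one more entry to `support`; the prototypes of earlier classes are left untouched, which is the property the prototype design relies on to limit forgetting.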

| Session | Session 0 | Session 1 | Session 2 | Session 3 | Session 4 | Session 5 | Session 6 |
|---|---|---|---|---|---|---|---|
| Class | Natural noise, Passengers, Ocean liner, RORO, Motor boat, Trawler | Mussel boat | Sail boat | Tugboat | Fish boat | Dredger | Pilot ship |
| Samples | 1656 | 95 | 76 | 23 | 28 | 52 | 26 |

| Method | Session 0 | Session 1 | Session 2 | Session 3 | Session 4 | Session 5 | Session 6 | PD |
|---|---|---|---|---|---|---|---|---|
| Baseline | 81.75 | 9.77 | 9.69 | 9.50 | 9.15 | 6.17 | 6.21 | 74.54 |
| CNN [2] | 80.99 | 10.08 | 9.77 | 9.65 | 9.58 | 8.21 | 7.04 | 73.95 |
| LSTM [2] | 72.29 | 13.20 | 10.22 | 9.31 | 8.54 | 8.02 | 7.55 | 64.74 |
| TADAM [18] | 88.00 | 12.29 | 11.30 | 10.89 | 10.25 | 9.18 | 8.73 | 79.27 |
| Dense-CNN [19] | 84.55 | 9.47 | 9.69 | 9.49 | 9.15 | 6.21 | 6.16 | 78.39 |
| Prototypical [20] | 75.00 | 44.29 | 42.50 | 41.11 | 40.00 | 39.09 | 38.33 | 36.67 |
| IPN [21] | 72.93 | 56.72 | 55.78 | 55.41 | 54.22 | 52.19 | 46.90 | 26.03 |
| iCaRL [22] | 69.09 | 51.52 | 49.96 | 47.24 | 46.10 | 42.16 | 40.66 | 28.43 |
| CEC [23] | 84.85 | 71.03 | 69.28 | 66.44 | 63.75 | 60.28 | 58.81 | 26.04 |
| UACIL | 92.89 | 75.46 | 70.31 | 69.06 | 68.94 | 68.59 | 68.44 | 24.45 |
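The PD column is consistent with the performance-dropping measure commonly used in few-shot class-incremental learning: accuracy in Session 0 minus accuracy in the final session. That definition is an assumption here, since it is not stated in this text; the sketch below simply applies it to two rows of the table.

```python
def performance_drop(session_acc):
    """PD = first-session accuracy minus last-session accuracy, in percentage points.

    Assumed definition; lower values indicate less forgetting.
    """
    return round(session_acc[0] - session_acc[-1], 2)


# Per-session accuracies copied from the CEC and UACIL rows of the table above.
results = {
    "CEC": [84.85, 71.03, 69.28, 66.44, 63.75, 60.28, 58.81],
    "UACIL": [92.89, 75.46, 70.31, 69.06, 68.94, 68.59, 68.44],
}
for name, acc in results.items():
    print(name, performance_drop(acc))
```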

| Method | Baseline | CNN | LSTM | TADAM | Dense-CNN | Prototypical | IPN | iCaRL | CEC | UACIL |
|---|---|---|---|---|---|---|---|---|---|---|
| Accuracy | 23.70 | 19.14 | 10.26 | 30.55 | 21.85 | 39.08 | 43.21 | 41.66 | 45.29 | 47.65 |

| Feature | Session 0 | Session 1 | Session 2 | Session 3 | Session 4 | Session 5 | Session 6 |
|---|---|---|---|---|---|---|---|
| Waveform | 47.56 | 30.77 | 27.39 | 27.34 | 27.34 | 27.34 | 27.34 |
| STFT | 87.70 | 72.31 | 70.16 | 65.15 | 64.68 | 64.55 | 64.53 |
| MFCC | 89.60 | 70.66 | 68.43 | 68.13 | 68.02 | 67.95 | 67.95 |
| Mel | 92.89 | 75.46 | 70.31 | 69.06 | 68.94 | 68.59 | 68.44 |

| Bands | Session 0 | Session 1 | Session 2 | Session 3 | Session 4 | Session 5 | Session 6 |
|---|---|---|---|---|---|---|---|
| 1 | 87.60 | 70.77 | 65.89 | 64.34 | 63.52 | 63.24 | 62.95 |
| 2 | 90.46 | 74.65 | 68.46 | 68.37 | 65.95 | 65.80 | 65.66 |
| 4 | 91.85 | 74.28 | 69.81 | 68.31 | 67.95 | 67.81 | 67.48 |
| 8 | 92.89 | 75.46 | 70.31 | 69.06 | 68.94 | 68.59 | 68.44 |
| 16 | 89.35 | 74.23 | 69.85 | 66.57 | 64.52 | 64.10 | 63.84 |
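To make the "Bands" setting concrete, the sketch below splits a Mel spectrogram into equal-width frequency bands and re-weights each band with a softmax attention weight. It is only an illustration of the general idea: the paper's attention is learned, whereas here the weights come from mean band energy, and the function name `band_attention` and the 64 x 100 input size are hypothetical.

```python
import numpy as np


def band_attention(mel, n_bands=8):
    """mel: (n_mels, n_frames) log-Mel spectrogram; n_mels must divide by n_bands.

    Returns the re-weighted spectrogram and the per-band weights.
    The energy-based weighting is a stand-in for a learned attention module.
    """
    n_mels, n_frames = mel.shape
    if n_mels % n_bands:
        raise ValueError("n_mels must be divisible by n_bands")
    bands = mel.reshape(n_bands, n_mels // n_bands, n_frames)
    desc = bands.mean(axis=(1, 2))            # one scalar descriptor per band
    w = np.exp(desc - desc.max())             # softmax over bands
    w /= w.sum()
    weighted = bands * w[:, None, None]       # scale every bin in a band by its weight
    return weighted.reshape(n_mels, n_frames), w


rng = np.random.default_rng(0)
mel = rng.normal(size=(64, 100))              # toy spectrogram: 64 Mel bins, 100 frames
out, weights = band_attention(mel, n_bands=8)
print(out.shape, weights.round(3))
```

With `n_bands=1` the single weight is 1 and the spectrogram passes through unchanged, which corresponds to the first row of the table; larger values give the attention finer frequency resolution at the cost of fewer Mel bins per band.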

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Wang, W.; Li, Y.; Shen, T.; Zhao, D. Class-Incremental Learning-Based Few-Shot Underwater-Acoustic Target Recognition. J. Mar. Sci. Eng. 2025, 13, 1606. https://doi.org/10.3390/jmse13091606
