Development of a Low-Cost Semantic Monitoring System for Vineyards Using Autonomous Robots

Abstract

1. Introduction

- Grape growth: A viticulturist generally inspects the growth of grapes visually.

- Damage inspection: During the flowering of the vine, strong winds and hail can cause damage. Cold temperatures may cause millerandage, producing clusters whose berries vary in size or have no seeds [10]. Hot conditions, on the other hand, may cause coulure, in which grape clusters either drop or do not develop fully. This monitoring is often performed visually.

- Oidium inspection: Oidium is a fungal disease that can attack all the green parts of the vine, with devastating consequences [11]. It is inspected visually.

- Phylloxera inspection: Phylloxera [14] is a pest of commercial grapevines worldwide and can easily be inspected visually.

- Monitoring for green harvest: Green harvesting is the process in which immature, green grape bunches are purposefully removed so that the vine directs its nutrients to the remaining grapes. This helps the remaining grapes develop and ripen with good flavor.

- Estimation of harvest time and areas: Visual monitoring is important for estimating the appropriate harvest time and the areas of the vineyard to be harvested.

- Yield estimation: Visual inspection is used to obtain an approximate estimate of a vineyard's yield.

- A vineyard monitoring system that uses only an inexpensive camera sensor is proposed.

- We propose a novel way to semantically label image data in vineyards by detecting the pillars installed in the vineyard to support the grapevines. The system labels the image data by field name, lane number, and pillar number, which are identified automatically through image processing. Unlike traditional monitoring techniques, the proposed semantic labeling enables pinpoint monitoring of the vineyard: instead of accessing all the data, farmers can specify the exact location in the field that needs to be monitored, which saves considerable time. Feature detection is important for semantic labeling. While features such as walls, corners, and straight lines are relatively easy to extract in indoor environments, robust feature extraction in a vineyard is difficult because of the dynamic nature of the environment (viz. moving leaves, plant trunks, changing lighting conditions, etc.). Hence, robust feature extraction is a major challenge for mobile robots on farms, which generally lack static features. Consequently, many researchers use expensive sensors such as GPS, dense RGB-D sensors, and 3D Lidar (e.g., VLP-16/32), or sensor networks [34,35,36]. However, such sensors increase the system cost. In the proposed research, feature detection is performed using inexpensive cameras.

- A method to increase the robustness of the system by varying the detection range is proposed.

- An algorithm that automatically turns data logging on and off based on the motion of the robot is proposed.

- Interactive software through which farmers can monitor the vineyard has been developed.

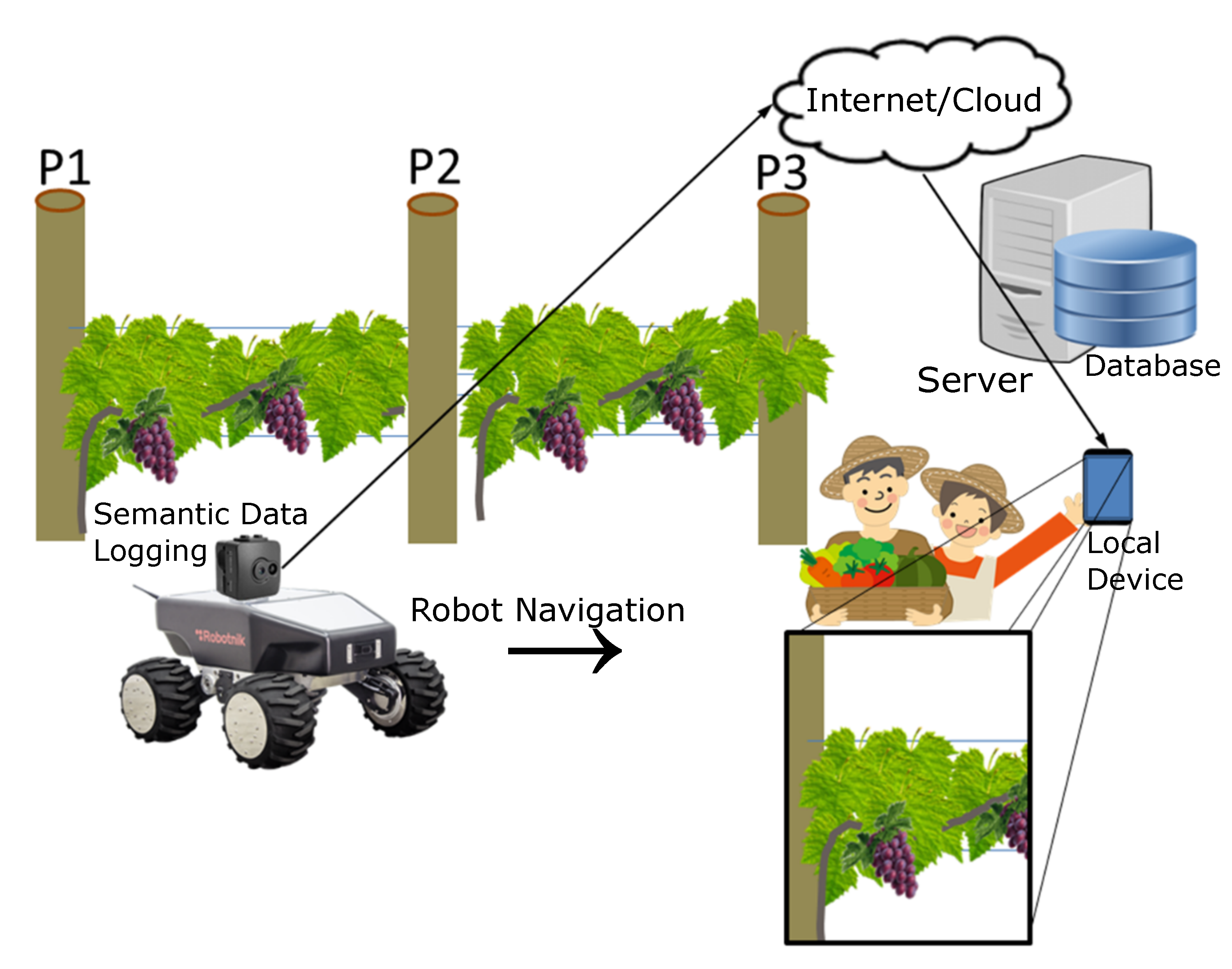

2. System Overview

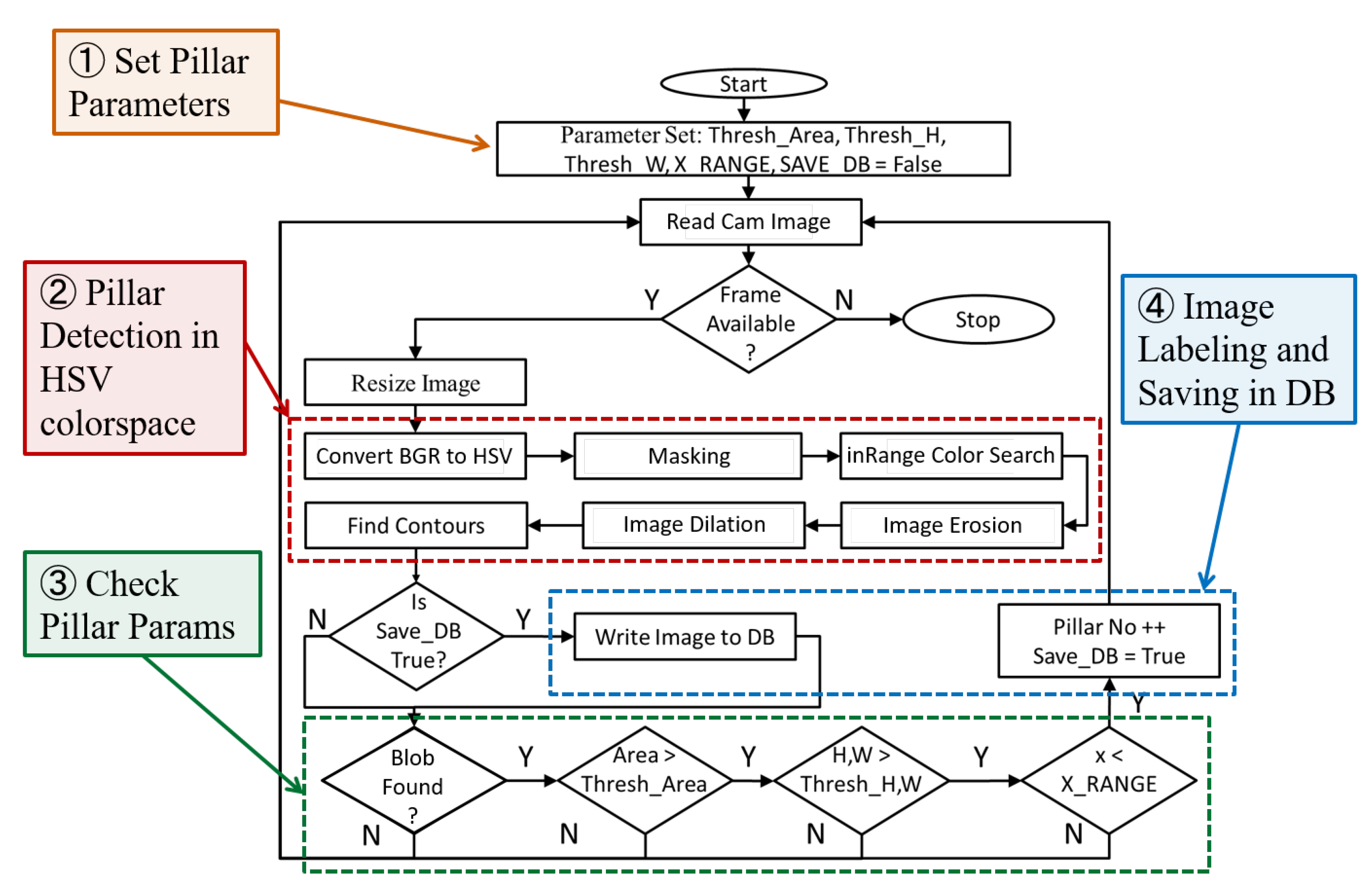

3. Pillar Detection Algorithm

- Setting Pillar Parameters: We first set the static parameters used for pillar detection. These include the approximate width () and height () of the pillars, the approximate threshold area for detection (), and the range along the horizontal axis of the image within which pillars should be detected (). At the start of the algorithm, a flag () that controls the labeling and saving of images in the database is set to .

- Pillar Detection in HSV Colorspace: The camera mounted on the robot captures RGB color images once the robot starts to move. Each image is captured at full HD (1920 × 1080 pixels) resolution and resized to 768 × 432 pixels for faster image processing. Pillars are then detected in the HSV colorspace through the steps explained below:

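- BGR to HSV Conversion: The resized RGB image () is converted to the HSV colorspace. Unlike RGB (Red, Green, and Blue channels), HSV (Hue, Saturation, and Value) separates the color information (chroma) from the image intensity (luma). This separation enables more robust color detection. The RGB to HSV color conversion is done using the following equations [40], with R, G, and B scaled to [0, 1]:

  $$V = \max(R, G, B)$$

  $$S = \begin{cases} \dfrac{V - \min(R, G, B)}{V}, & V \neq 0 \\ 0, & \text{otherwise} \end{cases}$$

  $$H = \begin{cases} \dfrac{60\,(G - B)}{V - \min(R, G, B)}, & V = R \\ 120 + \dfrac{60\,(B - R)}{V - \min(R, G, B)}, & V = G \\ 240 + \dfrac{60\,(R - G)}{V - \min(R, G, B)}, & V = B \\ 0, & R = G = B \end{cases}$$

  If $H < 0$, then $H \leftarrow H + 360$. The output ranges are $0 \le V \le 1$, $0 \le S \le 1$, and $0 \le H \le 360$.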
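As a hedged illustration of the conversion equations, the following pure-Python sketch converts a single 8-bit BGR pixel and then rescales the result to the 8-bit convention used by OpenCV (H halved to [0, 180), S and V stretched to [0, 255]). We assume this convention because the pillar thresholds used later (S up to 110, V up to 255) are on an 8-bit scale. The function name `bgr_to_hsv_pixel` is hypothetical; on a whole image, OpenCV performs the same conversion (up to rounding) with `cv2.cvtColor(image, cv2.COLOR_BGR2HSV)`.

```python
def bgr_to_hsv_pixel(b: int, g: int, r: int) -> tuple[int, int, int]:
    """Convert one 8-bit BGR pixel to 8-bit HSV in the OpenCV convention.

    H is returned in [0, 180) (degrees halved); S and V are in [0, 255].
    """
    # Normalize to [0, 1] so the textbook formulas apply directly.
    rf, gf, bf = r / 255.0, g / 255.0, b / 255.0
    v = max(rf, gf, bf)
    delta = v - min(rf, gf, bf)
    s = delta / v if v != 0 else 0.0
    if delta == 0:            # R = G = B: hue is undefined, use 0
        h = 0.0
    elif v == rf:
        h = 60.0 * (gf - bf) / delta
    elif v == gf:
        h = 120.0 + 60.0 * (bf - rf) / delta
    else:
        h = 240.0 + 60.0 * (rf - gf) / delta
    if h < 0:                 # wrap negative hues into [0, 360)
        h += 360.0
    # Scale to 8 bits: halve the hue so it fits in [0, 180), stretch S and V.
    return int(round(h / 2)) % 180, int(round(s * 255)), int(round(v * 255))


if __name__ == "__main__":
    for name, bgr in [("red", (0, 0, 255)), ("green", (0, 255, 0)),
                      ("blue", (255, 0, 0)), ("gray", (128, 128, 128))]:
        print(name, bgr_to_hsv_pixel(*bgr))
```

Halving the hue is what lets OpenCV store it in a single unsigned byte, which is why hue thresholds on 8-bit HSV images (such as the pillar color range) lie between 0 and 179.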

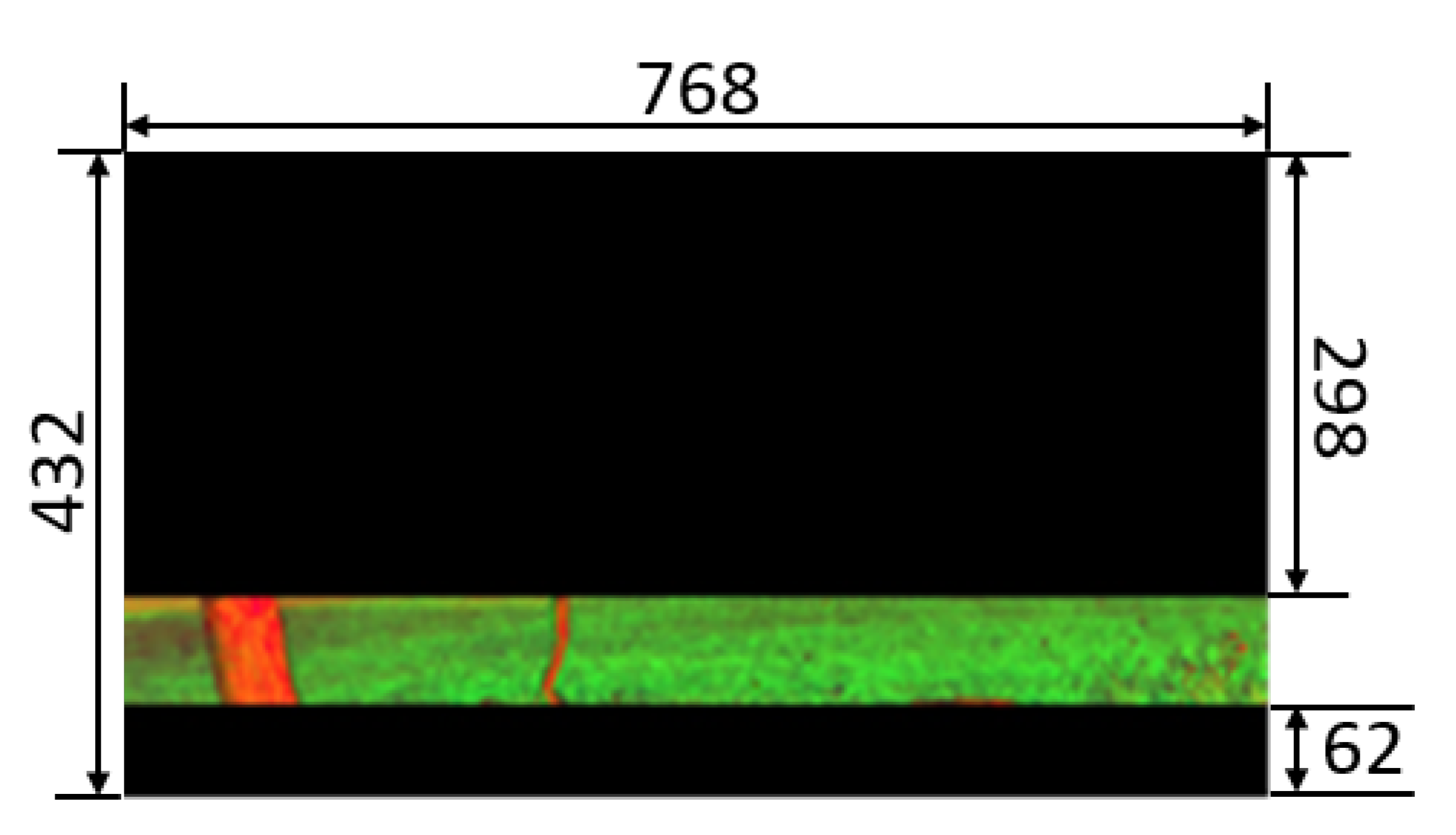

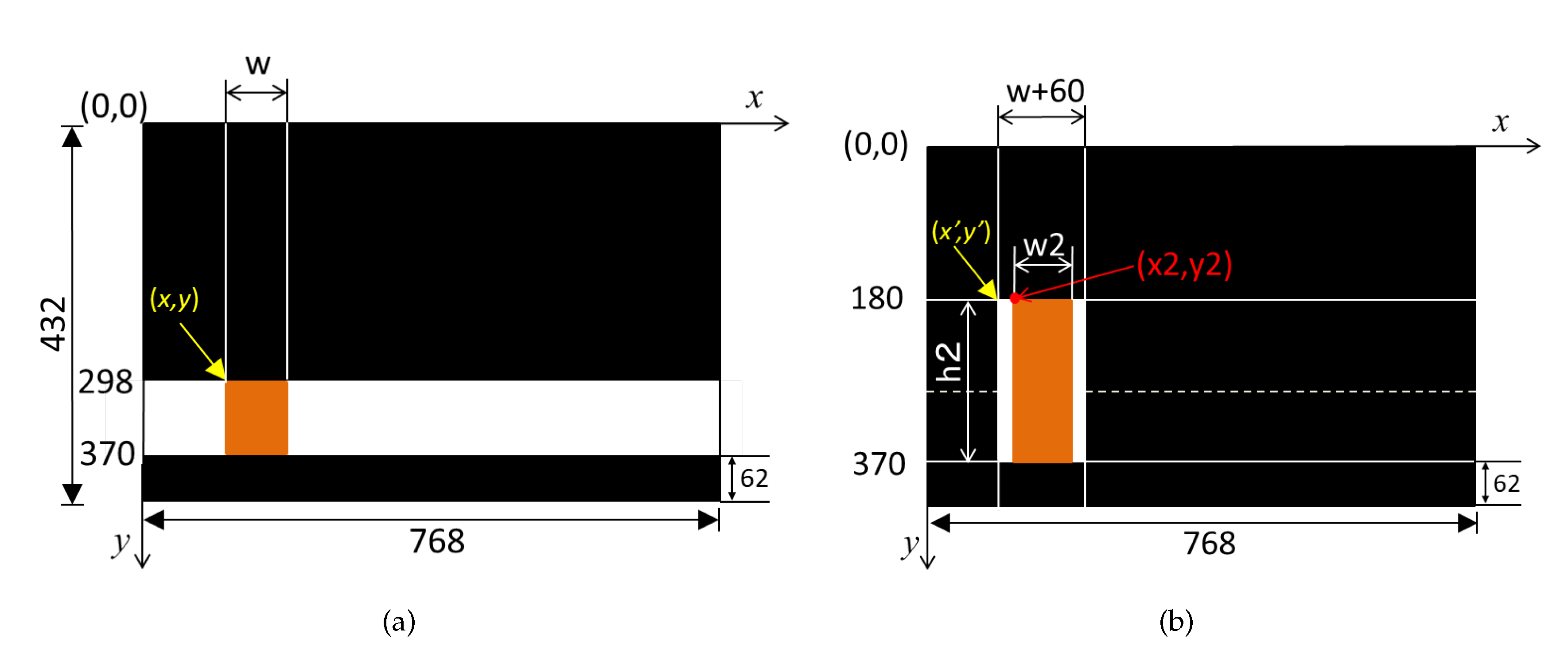

- Masking: The image contains information about the grapes (mainly in the middle), leaves, weeds (at the bottom), and soil (at the bottom). We mask the top and bottom areas to focus only on pillar detection. This masking is achieved by setting the pixels of the HSV image () within a certain range to zero, as given in Equation (2). In Equation (2), and represent the start and end points of the masking range in the vertical direction (along the y-axis), respectively. Similarly, and represent the start and end points of the masking range in the horizontal direction (along the x-axis), respectively. C represents the specific channel of the image . These values are set according to the height of the crop, the position at which the camera is mounted on the robot, and the height of the robot. In this work, full masking is applied in the horizontal direction (along the x-axis) by setting to 0 and to the width of the image, i.e., 768. Masking in the vertical direction (along the y-axis) was done at two levels. At the first level, the values of and were set to 0 and 298, respectively. At the second level, they were set to 370 and 432, respectively. Masking was applied to all three channels. The result of masking the 768 × 432 image is shown in Figure 3.

- Color Search: Next, we search for the pillar color within the HSV ranges and . These values are determined by taking snapshots of the pillars in the vineyard under different lighting conditions and finding the lower and upper bounds of their HSV values. In this work, was set to (34, 110, 255) and was set to (17, 26, 50) for the H, S, and V values; these are the upper and lower bounds, respectively. The result of the color search is a binary image (). If the value of a pixel of lies within the lower and upper ranges, the corresponding pixel in is set to white (255); otherwise, it is set to zero.

- Noise Removal Using Erosion and Dilation: Noise is removed from the binary image by applying the morphological operations [41] of erosion followed by dilation. Both operations process the image with a structuring element (). Erosion removes pixels from the boundaries of foreground regions, whereas dilation adds pixels to them, according to the structuring element [40]. We first apply erosion, which removes small, isolated noise pixels. Because erosion affects the entire image, it also shrinks the pillar regions; therefore, dilation is applied afterwards to restore them.

- Detecting Contours: The next step retrieves contours from the noise-removed binary image using the border-following algorithm of [42]. Each contour is stored as a vector of points. In this research, only the extreme outer contours are retrieved. Each contour is then described by five parameters: , and . Here, and are the x- and y-coordinates of the top-left corner of the contour (), respectively, is the contour area, and and are the width and height of the contour (), respectively.
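The masking, color-search, and noise-removal steps above can be sketched in pure Python on a small image stored as nested lists. This is an illustration under stated assumptions, not the authors' code: masking ranges are treated as half-open intervals [start, end), the structuring element is assumed to be a 3 × 3 square (its size is not given here), and all function names are hypothetical. With OpenCV and NumPy, the equivalent operations would be the slice assignment `hsv[y0:y1, x0:x1, :] = 0`, `cv2.inRange`, `cv2.erode`, and `cv2.dilate`.

```python
# A small HSV image as rows of (H, S, V) tuples, and a binary image as rows of 0/255.
HSVImage = list[list[tuple[int, int, int]]]
Binary = list[list[int]]


def mask_region(hsv: HSVImage, y_start: int, y_end: int,
                x_start: int, x_end: int) -> HSVImage:
    """Return a copy with pixels in [y_start, y_end) x [x_start, x_end) set to zero
    in all three channels (half-open ranges are an assumption)."""
    out = [row[:] for row in hsv]
    for y in range(max(0, y_start), min(len(out), y_end)):
        for x in range(max(0, x_start), min(len(out[y]), x_end)):
            out[y][x] = (0, 0, 0)
    return out


def in_range(hsv: HSVImage, lower: tuple[int, int, int],
             upper: tuple[int, int, int]) -> Binary:
    """255 where all three channels lie in [lower, upper] (inclusive), else 0.
    Masked pixels (0, 0, 0) fall outside any range whose lower bounds are above 0."""
    return [[255 if all(lo <= c <= hi for c, lo, hi in zip(px, lower, upper)) else 0
             for px in row]
            for row in hsv]


def _morph(img: Binary, k: int, keep) -> Binary:
    """Slide a k x k square structuring element over img.
    keep=all gives erosion, keep=any gives dilation; neighbours outside the image
    are ignored, matching the effect of OpenCV's default border value here."""
    r, h, w = k // 2, len(img), len(img[0])
    return [[255 if keep(img[yy][xx] == 255
                         for yy in range(max(0, y - r), min(h, y + r + 1))
                         for xx in range(max(0, x - r), min(w, x + r + 1)))
             else 0
             for x in range(w)]
            for y in range(h)]


def remove_noise(binary: Binary, k: int = 3) -> Binary:
    """Erosion followed by dilation (a morphological opening)."""
    eroded = _morph(binary, k, all)   # drops isolated noise, shrinks pillars
    return _morph(eroded, k, any)     # grows the surviving regions back
```

With the values reported in this work, the two vertical masking levels would correspond to `mask_region(hsv, 0, 298, 0, 768)` followed by `mask_region(hsv, 370, 432, 0, 768)`, and the color search to `in_range(hsv, (17, 26, 50), (34, 110, 255))`. Erosion before dilation (an opening) is what removes specks smaller than the structuring element while leaving larger pillar regions at roughly their original size.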

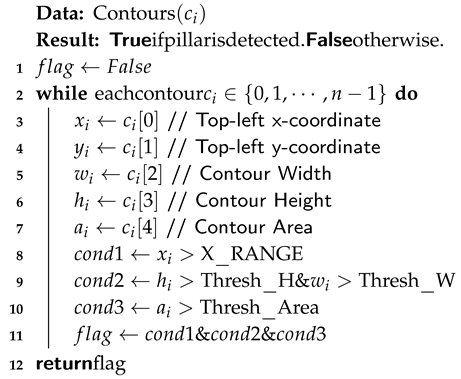
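For readers without OpenCV at hand, the following sketch computes the five per-region parameters (top-left x and y, area, width, height) from a binary image. Note that it swaps in a different technique: instead of the Suzuki–Abe border following [42] used by OpenCV's `cv2.findContours` with `cv2.RETR_EXTERNAL`, it labels 8-connected foreground regions with a breadth-first flood fill. For solid blobs the bounding boxes agree, but the area here is a pixel count, which is generally somewhat larger than the polygon area returned by `cv2.contourArea`, and regions with holes are handled differently. The function name `outer_blobs` is hypothetical.

```python
from collections import deque

Binary = list[list[int]]
# (x, y, area, w, h): bounding-box top-left corner, pixel area, box width and height.
Region = tuple[int, int, int, int, int]


def outer_blobs(binary: Binary) -> list[Region]:
    """Label 8-connected regions of 255-valued pixels and describe each one.

    This is connected-component labeling, not Suzuki-Abe border following;
    the area is a pixel count, not a polygon area.
    """
    h_img, w_img = len(binary), len(binary[0])
    seen = [[False] * w_img for _ in range(h_img)]
    regions: list[Region] = []
    for sy in range(h_img):
        for sx in range(w_img):
            if binary[sy][sx] != 255 or seen[sy][sx]:
                continue
            # Breadth-first flood fill from an unvisited foreground pixel.
            queue = deque([(sy, sx)])
            seen[sy][sx] = True
            xs, ys = [], []
            while queue:
                y, x = queue.popleft()
                ys.append(y)
                xs.append(x)
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        ny, nx = y + dy, x + dx
                        if (0 <= ny < h_img and 0 <= nx < w_img
                                and not seen[ny][nx] and binary[ny][nx] == 255):
                            seen[ny][nx] = True
                            queue.append((ny, nx))
            x0, y0 = min(xs), min(ys)
            regions.append((x0, y0, len(xs), max(xs) - x0 + 1, max(ys) - y0 + 1))
    return regions
```

Regions are reported in raster order of their first pixel, so a downstream check can simply iterate over the returned list.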

- Checking Detected Pillar’s Dimensions: If n contours are detected, the dimensions of each contour are checked. Using the contour parameters , and , the pillar-detection condition is evaluated with Algorithm 1.

- Image Labeling and Saving in Database: The flag () controls the semantic indexing of image data in the robot’s database. For each frame, the flag is set to the output of Algorithm 1. When the flag is , the pillar number is incremented, and subsequent images are logged under the new pillar index.

Algorithm 1: Contour check for pillar.

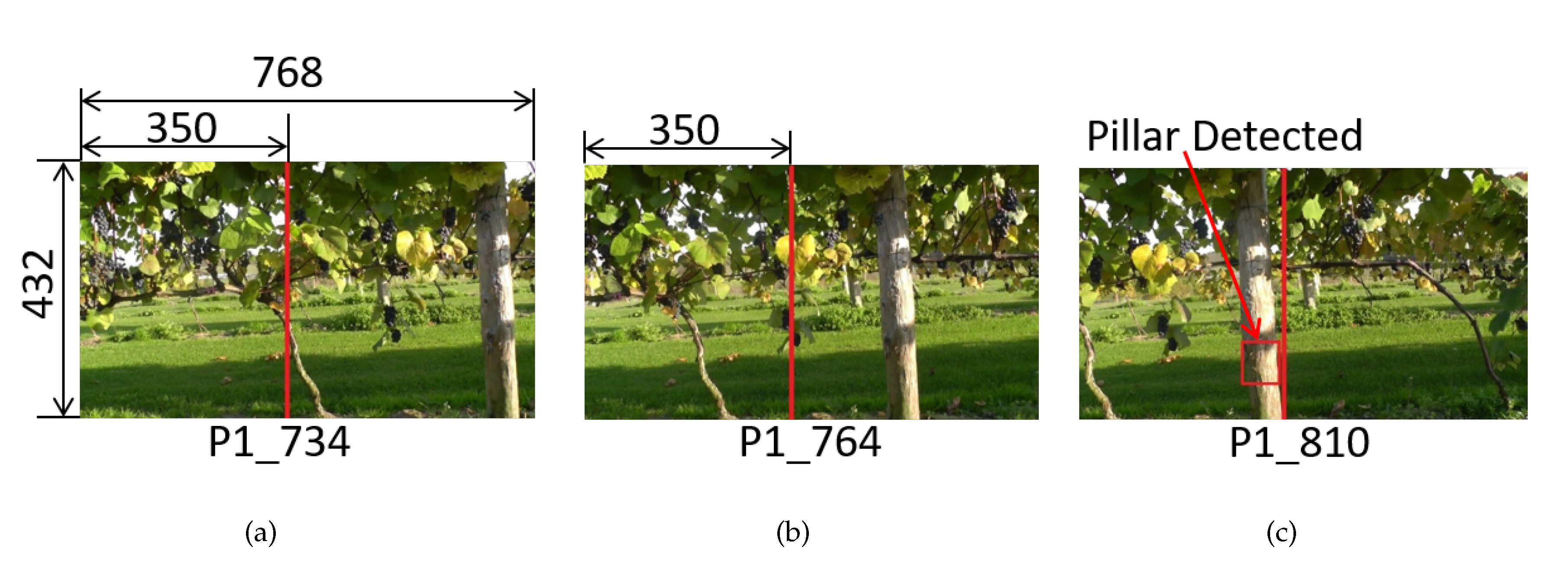
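Because the body of Algorithm 1 is not reproduced here, the following is only a plausible sketch of a contour check and of the flag-driven pillar counter described in the pillar-detection steps. Assumptions: a region counts as a pillar if its width, height, and area meet the thresholds and its horizontal center lies inside the x-range; the flag starts as False; and the pillar index is incremented only when the flag changes from False to True, so that a pillar that stays in view over consecutive frames is counted once. `PillarParams`, `is_pillar`, and `PillarTracker` are hypothetical names, and the numbers in the demo are illustrative, not values from the paper.

```python
from dataclasses import dataclass

# A detected region: (x, y, area, w, h), top-left corner first.
Blob = tuple[int, int, int, int, int]


@dataclass
class PillarParams:
    """Hypothetical container for the static pillar-detection parameters."""
    min_width: int   # width threshold, pixels
    min_height: int  # height threshold, pixels
    min_area: int    # area threshold, pixels
    x_min: int       # left edge of the horizontal detection range
    x_max: int       # right edge of the horizontal detection range


def is_pillar(blob: Blob, p: PillarParams) -> bool:
    """Assumed contour check: large enough, and centred inside the x-range."""
    x, _y, area, w, h = blob
    centre_x = x + w / 2
    return (w >= p.min_width and h >= p.min_height and area >= p.min_area
            and p.x_min <= centre_x <= p.x_max)


class PillarTracker:
    """Holds the logging flag and the current pillar index across frames."""

    def __init__(self) -> None:
        self.flag = False  # assumed initial state: no pillar in the x-range
        self.pillar = 0    # index of the latest pillar passed

    def update(self, blobs: list[Blob], p: PillarParams) -> int:
        """Process one frame's blobs and return the pillar index to log it under."""
        detected = any(is_pillar(b, p) for b in blobs)
        if detected and not self.flag:
            # Rising edge: a new pillar has just entered the x-range.
            self.pillar += 1
        self.flag = detected
        return self.pillar


if __name__ == "__main__":
    params = PillarParams(min_width=10, min_height=40, min_area=300, x_min=350, x_max=420)
    pillar = (375, 100, 600, 15, 50)
    frames = [[], [pillar], [pillar], [], [pillar]]
    tracker = PillarTracker()
    print([tracker.update(f, params) for f in frames])  # -> [0, 1, 1, 1, 2]
```

Narrowing the x-range makes a pillar satisfy the check for fewer frames, while widening it risks merging detections; the edge-triggered counter is one simple way to stay robust to either choice.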

Effect of X-Range Parameter

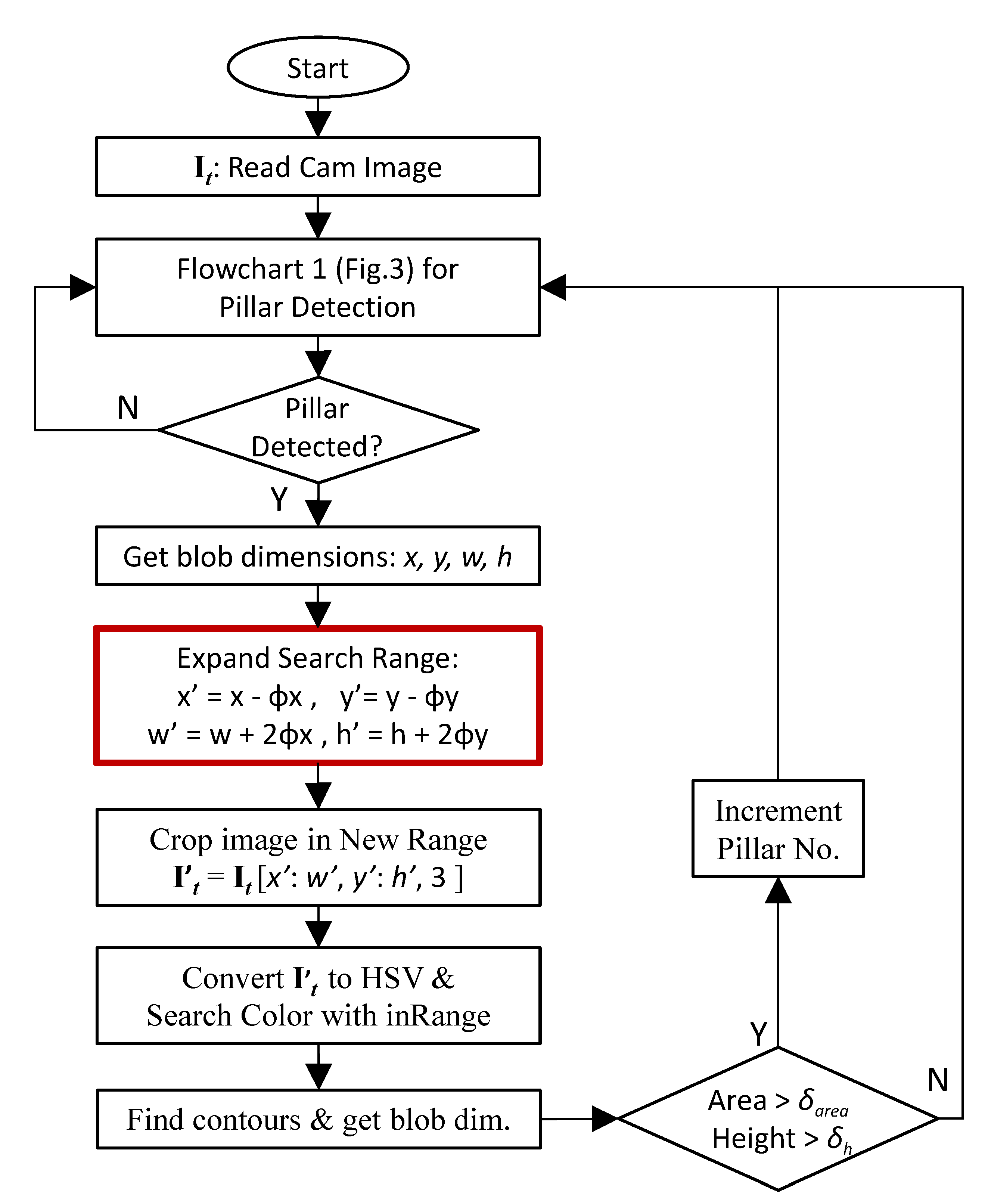

4. Improving the Robustness of Pillar Detection Algorithm

5. Semantic Data Logging and Monitoring System

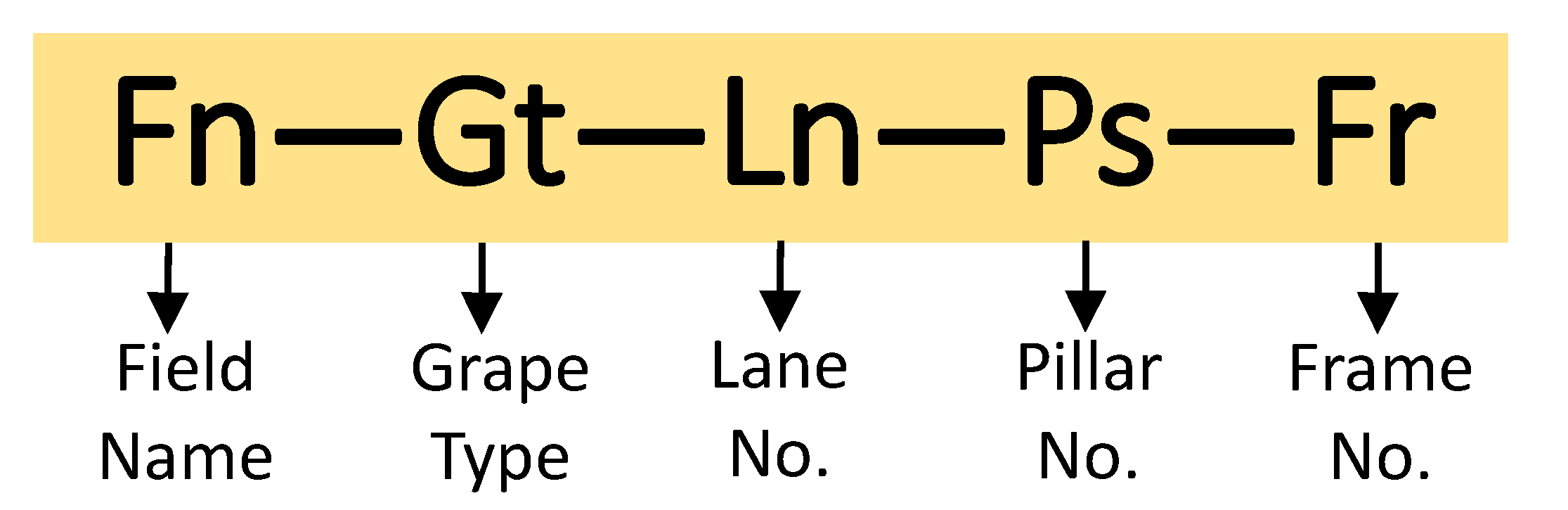

5.1. Semantic Data Logging in Vineyard

‘Kitami – Kiyomai – L5 – P3 – 108.jpg’
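The semantic file name above can be generated and parsed with a few lines of standard-library Python. This sketch assumes that the tokens are the site (Kitami), the field name (Kiyomai), the lane number (L5), the pillar number (P3), and a running image counter (108), joined by plain hyphens; the actual separator and the meaning of the first and last tokens are our assumptions. `SemanticLabel`, `make_filename`, and `parse_filename` are hypothetical names.

```python
import re
from typing import NamedTuple


class SemanticLabel(NamedTuple):
    """Hypothetical decomposition of one semantic image name."""
    site: str    # assumed: vineyard location, e.g. "Kitami"
    field: str   # field name, e.g. "Kiyomai"
    lane: int    # lane number, written as "L<n>"
    pillar: int  # pillar number, written as "P<n>"
    frame: int   # assumed: running image counter


# site-field-L<lane>-P<pillar>-<frame>.jpg, with hyphens as the assumed separator.
_NAME = re.compile(
    r"^(?P<site>[^-]+)-(?P<field>[^-]+)-L(?P<lane>\d+)-P(?P<pillar>\d+)-(?P<frame>\d+)\.jpg$"
)


def make_filename(label: SemanticLabel) -> str:
    """Build the file name under which an image is stored."""
    return f"{label.site}-{label.field}-L{label.lane}-P{label.pillar}-{label.frame}.jpg"


def parse_filename(name: str) -> SemanticLabel:
    """Recover the semantic label from a stored file name."""
    m = _NAME.match(name)
    if m is None:
        raise ValueError(f"not a semantic image name: {name!r}")
    return SemanticLabel(m["site"], m["field"], int(m["lane"]),
                         int(m["pillar"]), int(m["frame"]))


if __name__ == "__main__":
    name = make_filename(SemanticLabel("Kitami", "Kiyomai", 5, 3, 108))
    print(name)                  # Kitami-Kiyomai-L5-P3-108.jpg
    print(parse_filename(name))
```

Encoding the location in the file name itself means that pinpoint queries need no separate index: any image can be located by parsing its name.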

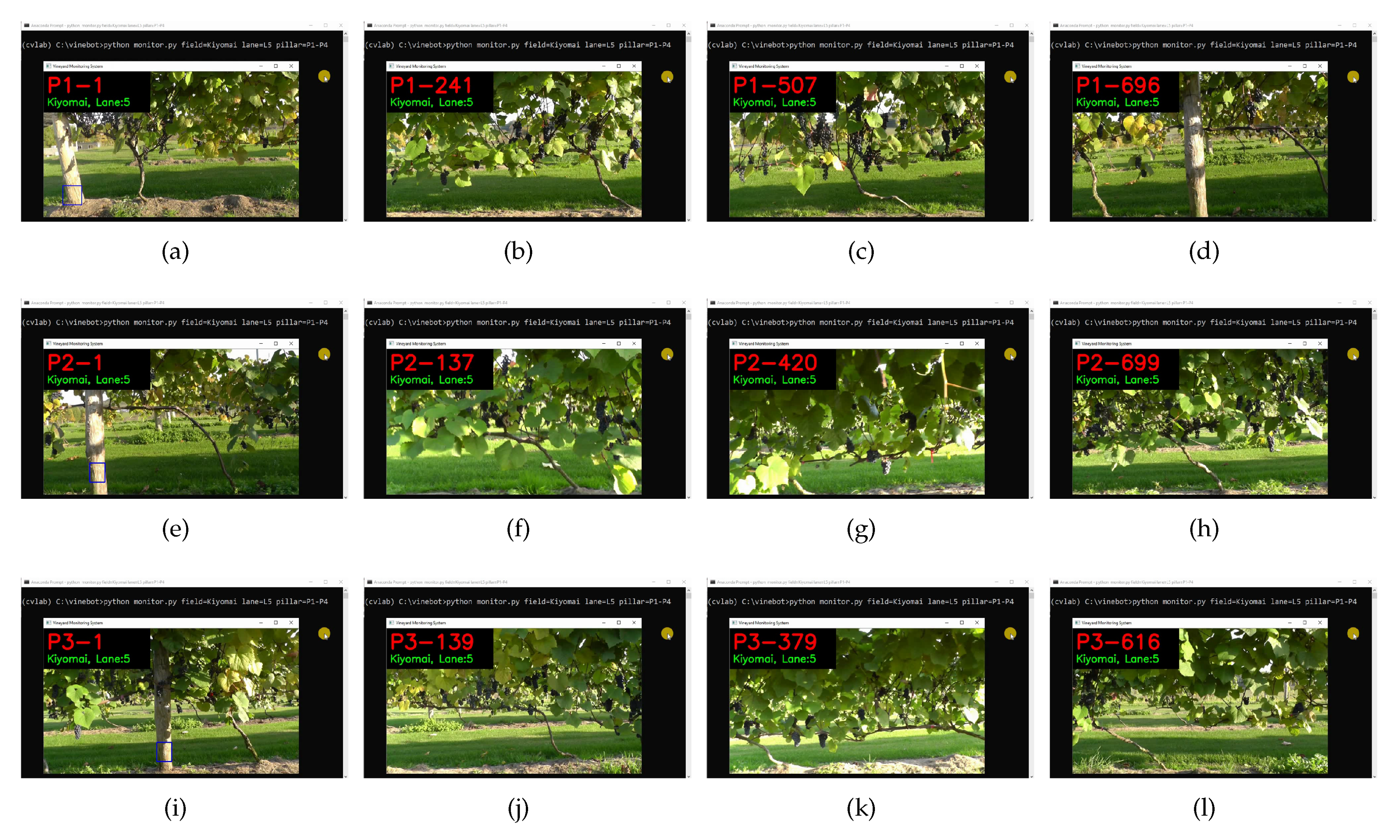

5.2. Monitoring System

6. Experiments in Real Field and Results

6.1. Results of Pillar Detection for Semantic Labeling

6.2. Processing Time for Pillar Detection

6.3. Vineyard Monitoring System

`C:\> python monitor.py field=<field-name> lane=<Lane-No.> pillar=<Pillar-No.>`

`C:\> python monitor.py field=Kiyomai lane=L5 pillar=P1-P4`
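The source of `monitor.py` is not shown, so the following is a hypothetical sketch of how its `key=value` arguments, including a pillar range such as `P1-P4`, could be parsed and used to select the matching images. It assumes image names of the form `Kitami-Kiyomai-L5-P3-108.jpg` (site, field, lane, pillar, counter, separated by hyphens); all function names are illustrative.

```python
def parse_query(args: list[str]) -> dict[str, str]:
    """Turn ['field=Kiyomai', 'lane=L5', 'pillar=P1-P4'] into a dictionary."""
    query = {}
    for arg in args:
        key, sep, value = arg.partition("=")
        if not sep:
            raise ValueError(f"expected key=value, got {arg!r}")
        query[key.strip()] = value.strip()
    return query


def pillar_range(spec: str) -> range:
    """'P3' -> pillars 3..3, 'P1-P4' -> pillars 1..4 (both ends inclusive)."""
    first, _, last = spec.partition("-")
    start = int(first.strip().lstrip("Pp"))
    stop = int((last or first).strip().lstrip("Pp"))
    return range(start, stop + 1)


def select_images(filenames: list[str], query: dict[str, str]) -> list[str]:
    """Keep images whose field, lane and pillar match the query.

    Assumes names such as 'Kitami-Kiyomai-L5-P3-108.jpg'
    (site-field-lane-pillar-counter, hyphen separated).
    """
    pillars = pillar_range(query["pillar"])
    selected = []
    for name in filenames:
        stem = name.rsplit(".", 1)[0]
        _site, field, lane, pillar, _counter = stem.split("-")
        if (field == query["field"] and lane == query["lane"]
                and int(pillar.lstrip("Pp")) in pillars):
            selected.append(name)
    return selected


if __name__ == "__main__":
    images = ["Kitami-Kiyomai-L5-P1-10.jpg", "Kitami-Kiyomai-L5-P4-90.jpg",
              "Kitami-Kiyomai-L5-P5-120.jpg", "Kitami-Kiyomai-L4-P2-30.jpg"]
    query = parse_query(["field=Kiyomai", "lane=L5", "pillar=P1-P4"])
    print(select_images(images, query))
```

In a real script, the argument list would come from `sys.argv[1:]`, so the second command above would list only the images from pillars 1 to 4 of lane 5 in the Kiyomai field.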

7. Conclusions

Supplementary Materials

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Description | Symbol |
|---|---|
| Width threshold for pillar detection | |
| Height threshold for pillar detection | |
| Area threshold for pillar detection | |
| Horizontal range within which pillars are detected | |
| Boolean flag to turn data logging ON or OFF | |
| Image in RGB colorspace | |
| Image in HSV colorspace | |
| Masking range’s start point along the y-axis | |
| Masking range’s end point along the y-axis | |
| Masking range’s start point along the x-axis | |
| Masking range’s end point along the x-axis | |
| Lower range of HSV values for pillar detection | |
| Upper range of HSV values for pillar detection | |
| Noise-removed binary image | |
| Contour number i | |
| Top-left x-coordinate of contour () | |
| Top-left y-coordinate of contour () | |
| Area of contour | |
| Width of contour | |
| Height of contour | |
| Expanded range in the left direction along the x-axis | |
| Expanded range in the right direction along the x-axis | |
| Expanded range in the upward direction along the y-axis | |
| Expanded range in the downward direction along the y-axis | |
| Height threshold in expanded search space | |
| Area threshold in expanded search space | |

References

1. Ye, F.; Qi, W. Design of wireless sensor node for drought monitoring in vineyards. In Proceedings of the International Conference on Advanced Infocomm Technology 2011 (ICAIT 2011), Wuhan, China, 11–14 July 2011; Volume 2011, pp. 1–4.

2. Sánchez, N.; Martínez-Fernández, J.; Aparicio, J.; Herrero-Jiménez, C.M. Field radiometry for vineyard status monitoring under Mediterranean conditions. In Proceedings of the 2014 IEEE Geoscience and Remote Sensing Symposium, Quebec City, QC, Canada, 13–18 July 2014; pp. 2094–2097.

3. Wigneron, J.; Dayan, S.; Kruszewski, A.; Aluome, C.; Al-Yaari, M.G.A.; Fan, L.; Guven, S.; Chipeaux, C.; Moisy, C.; Guyon, D.; et al. The Aqui Network: Soil Moisture Sites in the “Les Landes” Forest and Graves Vineyards (Bordeaux Aquitaine Region, France). In Proceedings of the IGARSS 2018: 2018 IEEE International Geoscience and Remote Sensing Symposium, Valencia, Spain, 22–27 July 2018; pp. 3739–3742.

4. Gay-Fernández, J.A.; Cuiñas, I. Deployment of a wireless sensor network in a vineyard. In Proceedings of the International Conference on Wireless Information Networks and Systems, Seville, Spain, 18–21 July 2011; pp. 35–40.

5. Galmes, S. Lifetime Issues in Wireless Sensor Networks for Vineyard Monitoring. In Proceedings of the 2006 IEEE International Conference on Mobile Ad Hoc and Sensor Systems, Vancouver, BC, Canada, 9–12 October 2006; pp. 542–545.

6. Medela, A.; Cendón, B.; González, L.; Crespo, R.; Nevares, I. IoT multiplatform networking to monitor and control wineries and vineyards. In Proceedings of the 2013 Future Network Mobile Summit, Lisboa, Portugal, 3–5 July 2013; pp. 1–10.

7. Mouakher, A.; Belkaroui, R.; Bertaux, A.; Labbani, O.; Hugol-Gential, C.; Nicolle, C. An Ontology-Based Monitoring System in Vineyards of the Burgundy Region. In Proceedings of the 2019 IEEE 28th International Conference on Enabling Technologies: Infrastructure for Collaborative Enterprises (WETICE), Napoli, Italy, 12–14 June 2019; pp. 307–312.

8. Ahumada-García, R.; Poblete-Echeverría, C.; Besoain, F.; Reyes-Suarez, J. Inference of foliar temperature profile of a vineyard using integrated sensors into a motorized vehicle. In Proceedings of the 2016 IEEE International Conference on Automatica (ICA-ACCA), Curico, Chile, 19–21 October 2016; pp. 1–6.

9. Pérez-Expósito, J.P.; Fernández-Caramés, T.M.; Fraga-Lamas, P.; Castedo, L. An IoT Monitoring System for Precision Viticulture. In Proceedings of the 2017 IEEE International Conference on Internet of Things (iThings) and IEEE Green Computing and Communications (GreenCom) and IEEE Cyber, Physical and Social Computing (CPSCom) and IEEE Smart Data (SmartData), Exeter, UK, 21–23 June 2017; pp. 662–669.

10. Stevenson, T. Sotheby’s Wine Encyclopedia; DK Publishers: London, UK, 2011; ISBN 0756686849.

11. Unwin, T. Wine and the Vine: An Historical Geography of Viticulture and the Wine Trade; Routledge Publishers: Abingdon, UK, 1991; ISBN 0415031206.

12. Peronospora. Wikipedia 2020. Available online: https://en.wikipedia.org/wiki/Peronospora (accessed on 31 March 2020).

13. Panagos, P.; Ballabio, C.; Lugato, E.; Jones, A.; Borrelli, P.; Scarpa, S.; Orgiazzi, A.; Montanarella, L. Potential Sources of Anthropogenic Copper Inputs to European Agricultural Soils. Sustainability 2018, 10, 2380.

14. Gale, G. Saving the vine from phylloxera: A never-ending battle. In Wine: A Scientific Exploration; CRC Press: Boca Raton, FL, USA, 2003; pp. 70–91.

15. Japan’s Ministry of Agriculture, Forestry and Fisheries (MAFF). Grapes in Japan 2015. Available online: https://www.maff.go.jp/e/data/publish/attach/pdf/index-115.pdf (accessed on 23 March 2020).

16. Association of Japan’s Wine Lovers. List of Grape Varieties in Japan. 2020. Available online: http://www.jp-wine.com/jp/kind.html (accessed on 15 April 2020).

17. Wikipedia. Grapes in Japan (Japanese). 2020. Available online: https://ja.wikipedia.org/wiki/%E3%83%96%E3%83%89%E3%82%A6 (accessed on 16 May 2020).

18. e-Stat. Statistics of Japan (Japanese). 2020. Available online: https://www.e-stat.go.jp/ (accessed on 16 May 2020).

19. Ly, O.; Gimbert, H.; Passault, G.; Baron, G. A Fully Autonomous Robot for Putting Posts for Trellising Vineyard with Centimetric Accuracy. In Proceedings of the 2015 IEEE International Conference on Autonomous Robot Systems and Competitions, Vila Real, Portugal, 8–10 April 2015; pp. 44–49.

20. Igawa, H.; Tanaka, T.; Kaneko, S.; Tada, T.; Suzuki, S. Visual and tactual recognition of trunk of grape for weeding robot in vineyards. In Proceedings of the 2009 35th Annual Conference of IEEE Industrial Electronics, Porto, Portugal, 3–5 November 2009; pp. 4274–4279.

21. Thayer, T.C.; Vougioukas, S.; Goldberg, K.; Carpin, S. Multi-Robot Routing Algorithms for Robots Operating in Vineyards. In Proceedings of the 2018 IEEE 14th International Conference on Automation Science and Engineering (CASE), Munich, Germany, 20–24 August 2018; pp. 14–21.

22. Gao, M.; Lu, T. Image Processing and Analysis for Autonomous Grapevine Pruning. In Proceedings of the 2006 International Conference on Mechatronics and Automation, Luoyang, China, 25–28 June 2006; pp. 922–927.

23. Thayer, T.C.; Vougioukas, S.; Goldberg, K.; Carpin, S. Routing Algorithms for Robot Assisted Precision Irrigation. In Proceedings of the 2018 IEEE International Conference on Robotics and Automation (ICRA), Brisbane, Australia, 21–25 May 2018; pp. 2221–2228.

24. Riggio, G.; Fantuzzi, C.; Secchi, C. A Low-Cost Navigation Strategy for Yield Estimation in Vineyards. In Proceedings of the 2018 IEEE International Conference on Robotics and Automation (ICRA), Brisbane, Australia, 21–25 May 2018; pp. 2200–2205.

25. Nuske, S.; Achar, S.; Bates, T.; Narasimhan, S.; Singh, S. Yield estimation in vineyards by visual grape detection. In Proceedings of the 2011 IEEE/RSJ International Conference on Intelligent Robots and Systems, San Francisco, CA, USA, 25–30 September 2011; pp. 2352–2358.

26. de Sousa Contente, O.M.; Lau, J.N.P.N.; Morgado, J.F.M.; dos Santos, R.M.P.M. Vineyard Skeletonization for Autonomous Robot Navigation. In Proceedings of the 2015 IEEE International Conference on Autonomous Robot Systems and Competitions, Vila Real, Portugal, 8–10 April 2015; pp. 50–55.

27. Reis, M.; Morais, R.; Peres, E.; Pereira, C.; Contente, O.; Soares, S.; Valente, A.; Baptista, J.; Ferreira, P.; Bulas Cruz, J. Automatic detection of bunches of grapes in natural environment from color images. J. Appl. Log. 2012, 10, 285–290.

28. dos Santos, F.B.N.; Sobreira, H.M.P.; Campos, D.F.B.; dos Santos, R.M.P.M.; Moreira, A.P.G.M.; Contente, O.M.S. Towards a Reliable Monitoring Robot for Mountain Vineyards. In Proceedings of the 2015 IEEE International Conference on Autonomous Robot Systems and Competitions, Vila Real, Portugal, 8–10 April 2015; pp. 37–43.

29. Ravankar, A.; Ravankar, A.A.; Hoshino, Y.; Kobayashi, Y. On Autonomous Navigation in Vineyards with Lidar Information. In Proceedings of the IEEE SICE Annual Conference 2019 (SICE 2019), IEEE, SICE, Hiroshima, Japan, 10–13 September 2019.

30. Roure, F.; Bascetta, L.; Soler, M.; Matteucci, M.; Faconti, D.; Gonzalez, J.P.; Serrano, D. Lessons Learned in Vineyard Monitoring and Protection from a Ground Autonomous Vehicle. In Advances in Robotics Research: From Lab to Market: ECHORD++: Robotic Science Supporting Innovation; Grau, A., Morel, Y., Puig-Pey, A., Cecchi, F., Eds.; Springer International Publishing: Cham, Switzerland, 2020; pp. 81–105.

31. Das, J.; Cross, G.; Qu, C.; Makineni, A.; Tokekar, P.; Mulgaonkar, Y.; Kumar, V. Devices, systems, and methods for automated monitoring enabling precision agriculture. In Proceedings of the 2015 IEEE International Conference on Automation Science and Engineering (CASE), Gothenburg, Sweden, 24–28 August 2015; pp. 462–469.

32. Lloret, J.; Bosch, I.; Sendra, S.; Serrano, A. A Wireless Sensor Network for Vineyard Monitoring That Uses Image Processing. Sensors 2011, 11, 6165–6196.

33. Vinbot. Vinbot 2020. Available online: http://vinbot.eu/ (accessed on 31 March 2020).

34. Ravankar, A.; Ravankar, A.; Kobayashi, Y.; Emaru, T. Hitchhiking Robots: A Collaborative Approach for Efficient Multi-Robot Navigation in Indoor Environments. Sensors 2017, 17, 1878.

35. Ravankar, A.; Ravankar, A.; Kobayashi, Y.; Hoshino, Y.; Peng, C.C.; Watanabe, M. Hitchhiking Based Symbiotic Multi-Robot Navigation in Sensor Networks. Robotics 2018, 7, 37.

36. Ravankar, A.; Ravankar, A.; Kobayashi, Y.; Emaru, T. Symbiotic Navigation in Multi-Robot Systems with Remote Obstacle Knowledge Sharing. Sensors 2017, 17, 1581.

37. Ravankar, A.A.; Hoshino, Y.; Ravankar, A.; Jixin, L.; Emaru, T.; Kobayashi, Y. Algorithms and a Framework for Indoor Robot Mapping in a Noisy Environment using Clustering in Spatial and Hough Domains. Int. J. Adv. Robot. Syst. 2015, 12, 27.

38. Ravankar, A.; Ravankar, A.A.; Hoshino, Y.; Emaru, T.; Kobayashi, Y. On a Hopping-points SVD and Hough Transform Based Line Detection Algorithm for Robot Localization and Mapping. Int. J. Adv. Robot. Syst. 2016, 13, 98.

39. Ravankar, A.A.; Ravankar, A.; Peng, C.; Kobayashi, Y.; Emaru, T. Task coordination for multiple mobile robots considering semantic and topological information. In Proceedings of the 2018 IEEE International Conference on Applied System Invention (ICASI), Chiba, Japan, 13–17 April 2018; pp. 1088–1091.

40. Bradski, G.; Kaehler, A. Learning OpenCV: Computer Vision with the OpenCV Library, 1st ed.; O’Reilly Media: Sebastopol, CA, USA, 2008.

41. Ravankar, A.; Kobayashi, Y.; Ravankar, A.; Emaru, T. A connected component labeling algorithm for sparse Lidar data segmentation. In Proceedings of the 2015 6th International Conference on Automation, Robotics and Applications (ICARA), Queenstown, New Zealand, 17–19 February 2015; pp. 437–442.

42. Suzuki, S.; Abe, K. Topological structural analysis of digitized binary images by border following. Comput. Vis. Graph. Image Process. 1985, 30, 32–46.

43. Robotnik Automation. Summit-XL Robot. 2020. Available online: https://www.robotnik.eu/mobile-robots/summit-xl/ (accessed on 21 May 2020).

44. UTM-30LX Technical Specifications. 2020. Available online: https://www.hokuyo-aut.jp/search/single.php?serial=169 (accessed on 2 March 2020).

45. Quigley, M.; Conley, K.; Gerkey, B.P.; Faust, J.; Foote, T.; Leibs, J.; Wheeler, R.; Ng, A.Y. ROS: An open-source Robot Operating System. In Proceedings of the ICRA Workshop on Open Source Software, Kobe, Japan, 12–17 May 2009.

46. Cost of Computing. Wikipedia 2020. Available online: https://en.wikipedia.org/wiki/FLOPS (accessed on 15 May 2020).

| Function | Time (ms) |
|---|---|
| Image resize | 6.71 |
| BGR to HSV Conversion | 2.41 |
| Masking | 0.88 |
| Pillar’s color search | 0.88 |
| Erode and Dilate | 4.08 |
| Contours Detection | 0.40 |
| Image Labeling and DB Save | 23.19 |
| Total | 38.55 |
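As a quick arithmetic check on the timing table, the per-stage times sum to the reported total of 38.55 ms. Assuming the stages run sequentially on each frame and nothing else contributes, this corresponds to roughly 26 frames per second; image labeling and database saving account for about 60% of the time, and the remaining image-processing stages take 15.36 ms.

```python
# Per-stage processing times in milliseconds, copied from the timing table.
stage_ms = {
    "Image resize": 6.71,
    "BGR to HSV Conversion": 2.41,
    "Masking": 0.88,
    "Pillar's color search": 0.88,
    "Erode and Dilate": 4.08,
    "Contours Detection": 0.40,
    "Image Labeling and DB Save": 23.19,
}

total = sum(stage_ms.values())
# Everything except labeling and saving, i.e., the pure image-processing stages.
processing_only = total - stage_ms["Image Labeling and DB Save"]
fps = 1000.0 / total                  # frames per second if stages run back to back
db_share = stage_ms["Image Labeling and DB Save"] / total

print(f"total: {total:.2f} ms (about {fps:.1f} frames/s)")
print(f"image processing only: {processing_only:.2f} ms")
print(f"labeling and DB save: {db_share:.0%} of the total")
```

Because saving to the database dominates, it is the natural target if a higher frame rate is ever needed.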

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Ravankar, A.; Ravankar, A.A.; Watanabe, M.; Hoshino, Y.; Rawankar, A. Development of a Low-Cost Semantic Monitoring System for Vineyards Using Autonomous Robots. Agriculture 2020, 10, 182. https://doi.org/10.3390/agriculture10050182
