1. Introduction

The growing access to telecommunications, together with the increasing adoption of online social networks, has given users a convenient way to share posts and comments on the Internet. However, although this is an improvement in human communication, this environment has also created conditions with serious negative consequences for the welfare of society, due to a type of user who posts offensive comments without regard for the psychological impact of his or her words, harming other users’ feelings. This phenomenon is called cyber-aggression [1]. Cyber-aggression is a term frequently used in the literature to describe a wide range of offensive behaviors other than cyber-bullying [2,3,4,5].

Unfortunately, this problem has spread across a wide variety of media. Bauman [6] notes that some of the digital media most used for cyber-aggression are social networks (e.g., Facebook and Twitter), short message services, forums, trash-polling sites, blogs, video sharing websites, and chat rooms, among others. Their accessibility and fast adoption are a double-edged sword, because it is impossible for moderators to keep an eye on every post and filter its content. Therefore, cyber-aggression [7] has become a threat to society’s welfare, generating electronic violence.

When cyber-aggression is constant, it becomes cyber-bullying, mainly characterized by invasion of privacy, harassment, and the use of obscene language against one user [8], in most cases a minor [9]. Unlike bullying, cyber-bullying can happen 24/7, and the consequences for the victim can be more dramatic, because it not only creates insecurity, trust issues, and depression, but can also lead to suicidal thoughts [10] with fatal consequences. Both cyber-bullying and cyber-aggression can harm a user by mocking and ridiculing them an indefinite number of times [11,12]; it is therefore crucial to support better detection of these social problems. According to Ditch the Label and its Cyber-Bullying Survey [13], some of the social networks with the highest number of users who have reported cases of cyber-aggression are Facebook, Twitter, Instagram, YouTube, and Ask.fm.

Currently, a wide variety of research aims to mitigate cyber-bullying. Work from psychology and the social sciences aims to detect criminal behavior and provide prevention strategies [14], while work from computer science seeks to develop effective techniques or tools to support the prevention of cyber-aggression and cyber-bullying [15]. Nevertheless, it remains difficult to identify a single behavior pattern of aggression. It is also worth highlighting that most of the research is carried out in English-speaking countries, so it is difficult to obtain free resources on the Internet for developing tools that analyze comments written in Spanish. The Spanish language has an extensive vocabulary with many expressions, synonyms, and colloquialisms that vary across Spanish-speaking countries and regions. For this reason, we consider it prudent to focus on the Spanish of Mexico. However, we do not rule out extending this research to other Spanish-speaking countries.

There are different types of cyber-aggression. Bauman [6] identifies and describes some forms of cyber-bullying used to attack victims by digital means, such as flaming, harassment, denigration, masquerading, outing, trickery, social exclusion, and cyber-stalking. Peter [16] highlights death threats, homophobia, sexual acts, threats of physical violence, and damage to existing relationships, among others. Ringrose and Walker [17,18] indicate that women and children are the most vulnerable groups in cases of cyber-aggression.

In Mexico, there has been a wave of hate crimes; in 2014, the World Health Organization reported that Mexico ranked second in the world in hate crimes attributed to factors such as “fear”. According to the National Survey on Discrimination [19], people targeted by violence based on sexual orientation are one of the most vulnerable groups in Mexico. As found in an investigation by a group of civil society organizations [20], in Mexico and Latin America, 84% of the lesbian, gay, bisexual, and transgender (LGBT) population has been verbally harassed. Violence against women, in turn, is a rapidly growing problem that in Mexico has affected young women between 18 and 30 [21]. Mexico has a public organization, the Instituto Nacional de Estadística y Geografía (INEGI) [22,23], responsible for regulating and coordinating the National System of Statistical and Geographic Information, as well as conducting a national census. INEGI [22] has reported that nine million Mexicans have suffered at least one incident of digital violence in one of its forms. In 2016, INEGI reported that of the approximately 61.5 million women in Mexico, at least 63% of those aged 15 and older had experienced acts of violence. Racism is another growing problem in Mexico, expressed through offensive messages related to “the wall” with the United States (US), the mobilization of immigrant caravans, and discrimination by skin color. According to an INEGI study, skin color can affect a person’s prospects for job growth, as well as their socioeconomic status [23].

The American Psychological Association [24] states that different combinations of contextual factors such as gender, race, social class, and other sources of identity can result in different coping styles, and urges psychologists to learn guidelines for psychological practice with diverse populations (e.g., lesbian, gay, and bisexual clients).

For many years, problems from various research areas have been addressed through Artificial Intelligence (AI) techniques. AI is a discipline that emerged in the 1950s and is broadly defined as the construction of automated models that can solve real problems by emulating human intelligence. Some recent applications include the following: in [25], two chemical biodegradability prediction models are evaluated against another commonly used biodegradability model; Li et al. [26] applied Multiscale Sample Entropy (MSE), Multiscale Permutation Entropy (MPE), and Multiscale Fuzzy Entropy (MFE) feature extraction methods along with a Support Vector Machines (SVMs) classifier to analyze Motor Imagery EEG (MI-EEG) data; Li and coworkers [27] proposed a new Temperature Sensor Clustering Method for thermal error modeling of machine tools, in which the weight coefficient in the distance matrix and the number of clusters (groups) were optimized by a genetic algorithm (GA); in [28], fuzzy theory and a genetic algorithm are combined to design a Motor Diagnosis System for rotor failures; the aim in [29] is to design a new method, based on a Deep Neural Network, to predict click-through rate in Internet advertising; and Ocaña and coworkers [30] proposed the evolutionary algorithm TS-MBFOA (Two-Swim Modified Bacterial Foraging Optimization Algorithm) and tested it on a real problem that seeks to optimize the synthesis of a four-bar mechanism in a mechatronic system.

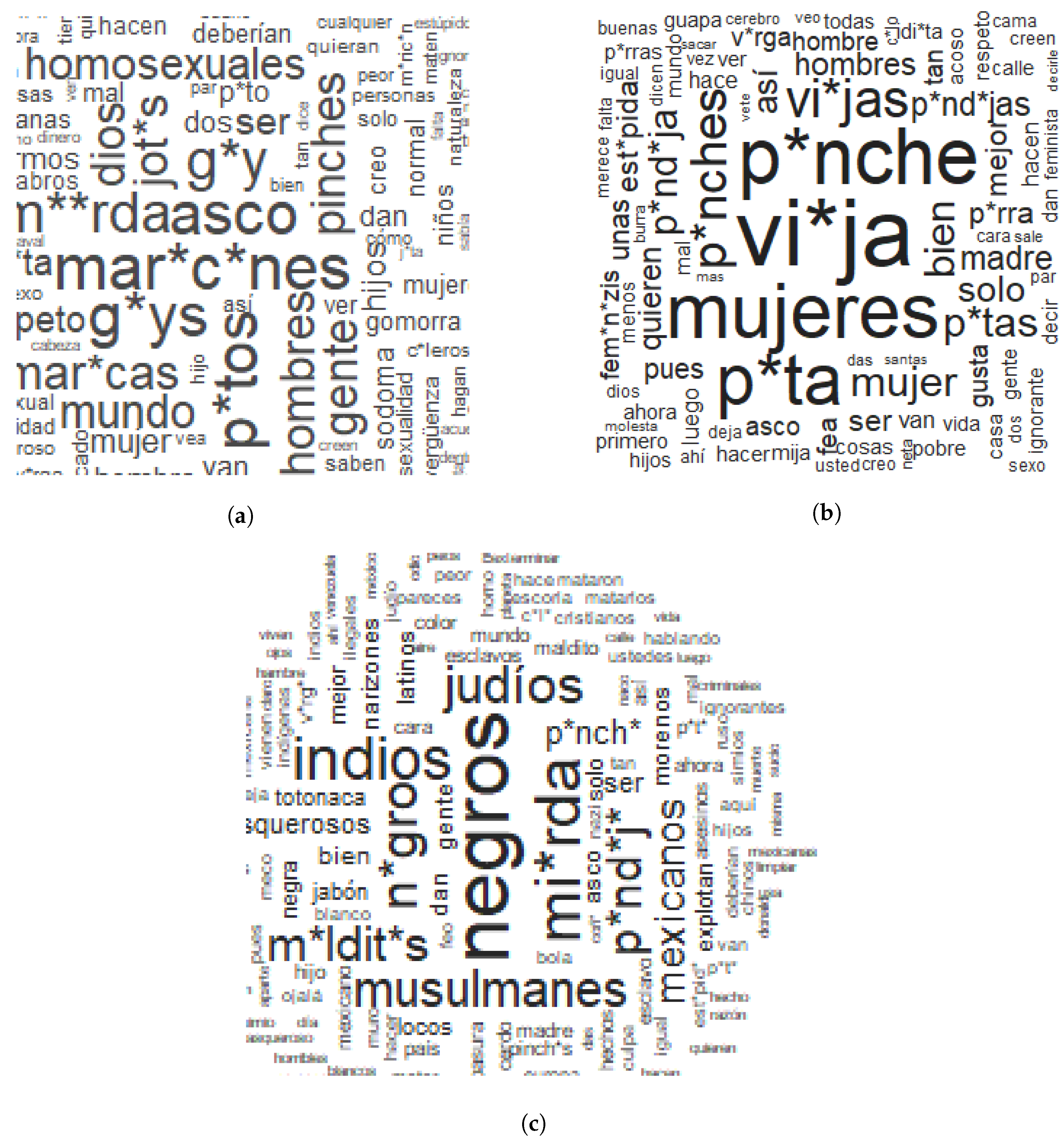

In this research, we used AI algorithms to classify comments into three cases of cyber-aggression: racism, violence based on sexual orientation, and violence against women. The accurate identification of cyber-aggression cases is the first step of a process to reduce the incidence of this phenomenon. We applied AI techniques, specifically Random Forest, Variable Importance Measures (VIMs), and OneR. The comments used to create the data set were collected from Facebook, from specific news items related to the cyber-aggression cases included in this study.
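As a rough illustration of how a one-rule classifier such as OneR arrives at an interpretable rule, the following minimal Python sketch picks the single feature whose value-to-class mapping makes the fewest training errors. It is not the implementation used in this study: the function name `one_r`, the term-presence features `wall` and `marriage`, and the six toy rows are hypothetical, and only two of the three cases appear for brevity.

```python
from collections import Counter, defaultdict


def one_r(rows, labels):
    """Return (feature, rule, errors) for the single best feature.

    rule maps each observed feature value to its majority class.
    """
    best = None
    for feature in rows[0]:
        # Count how often each class occurs for each value of this feature.
        counts = defaultdict(Counter)
        for row, label in zip(rows, labels):
            counts[row[feature]][label] += 1
        # One rule per feature: predict the majority class of each value.
        rule = {value: c.most_common(1)[0][0] for value, c in counts.items()}
        errors = sum(rule[row[feature]] != label
                     for row, label in zip(rows, labels))
        # Keep the feature with the fewest training errors.
        if best is None or errors < best[2]:
            best = (feature, rule, errors)
    return best


# Hypothetical toy data: 1 means the term occurs in the comment, 0 means it does not.
rows = [
    {"wall": 1, "marriage": 0},
    {"wall": 1, "marriage": 0},
    {"wall": 1, "marriage": 1},
    {"wall": 0, "marriage": 1},
    {"wall": 0, "marriage": 1},
    {"wall": 0, "marriage": 0},
]
labels = ["racism", "racism", "racism",
          "sexual_orientation", "sexual_orientation", "sexual_orientation"]

feature, rule, errors = one_r(rows, labels)
print(feature, rule, errors)
```

On this toy data the selected feature is `wall`, with a rule that maps presence of the term to one class and absence to the other. A rule over a binary feature can predict at most two classes, so separating all three cases with one rule would require a multi-valued or binned feature such as a term frequency.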

In line with the aims of this study, we propose to answer the following research questions:

Is it possible to create a model for the automatic detection of cyber-aggression cases with high precision? To answer this question, we will experiment with two classifiers that follow different approaches and compare their performance.

Which terms allow effective detection of the cyber-aggression cases included in this work? We will answer this question using methods that identify the relevant features for each cyber-aggression case.

The present work is organized as follows:

Section 2 presents a review of related research works,

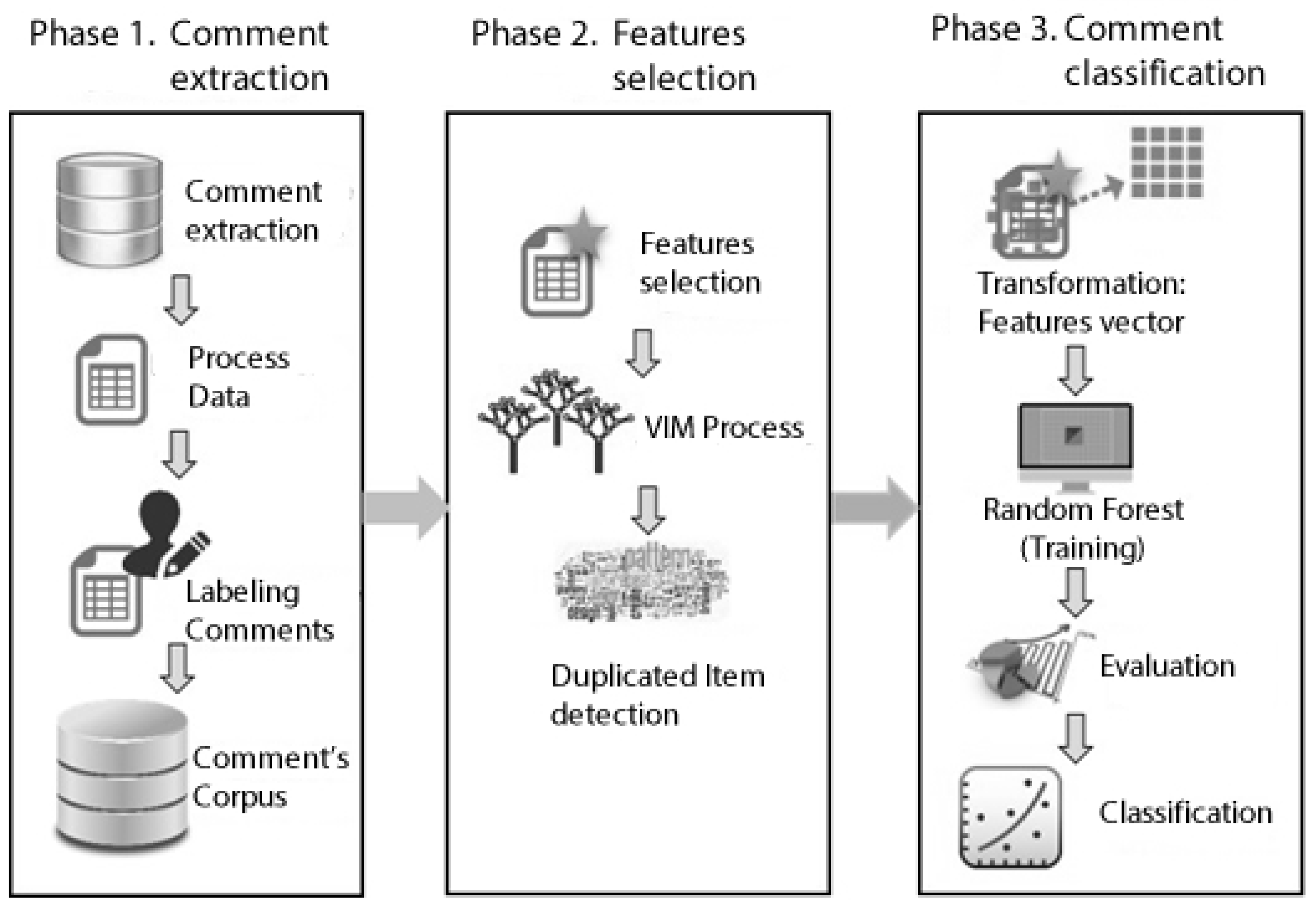

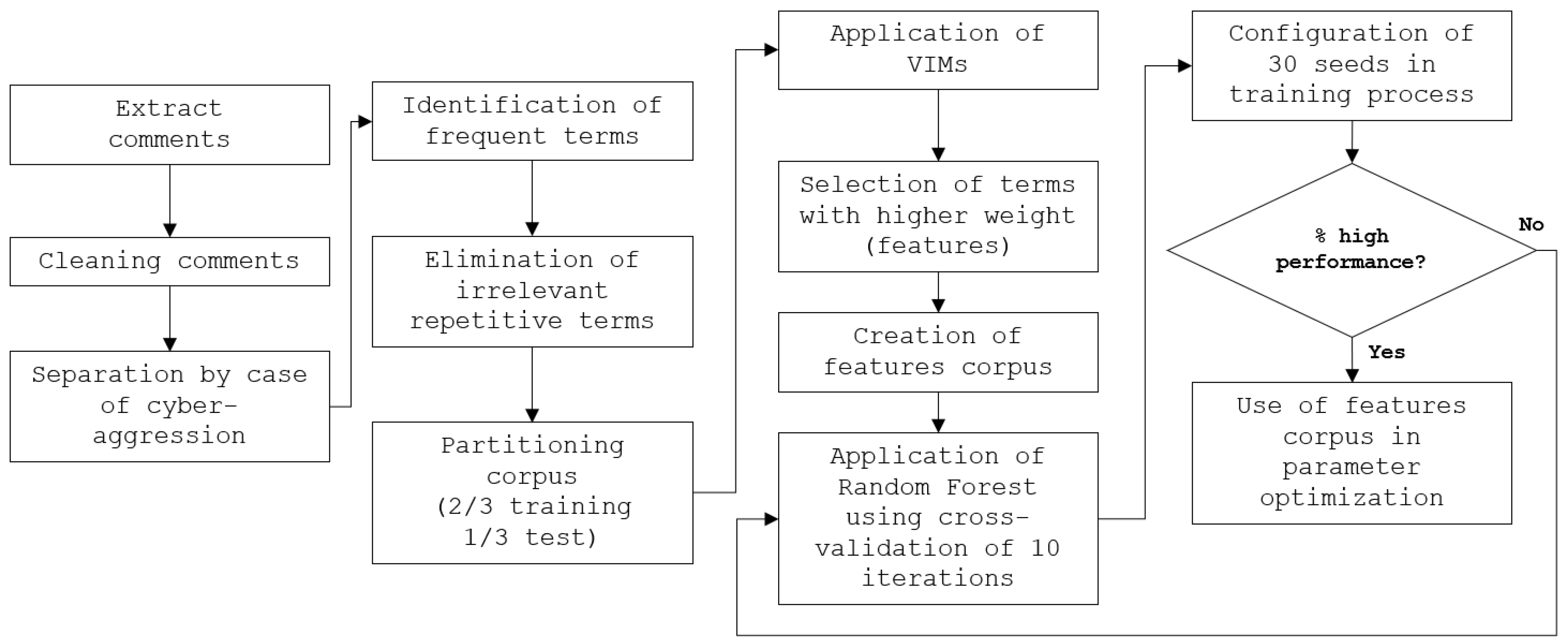

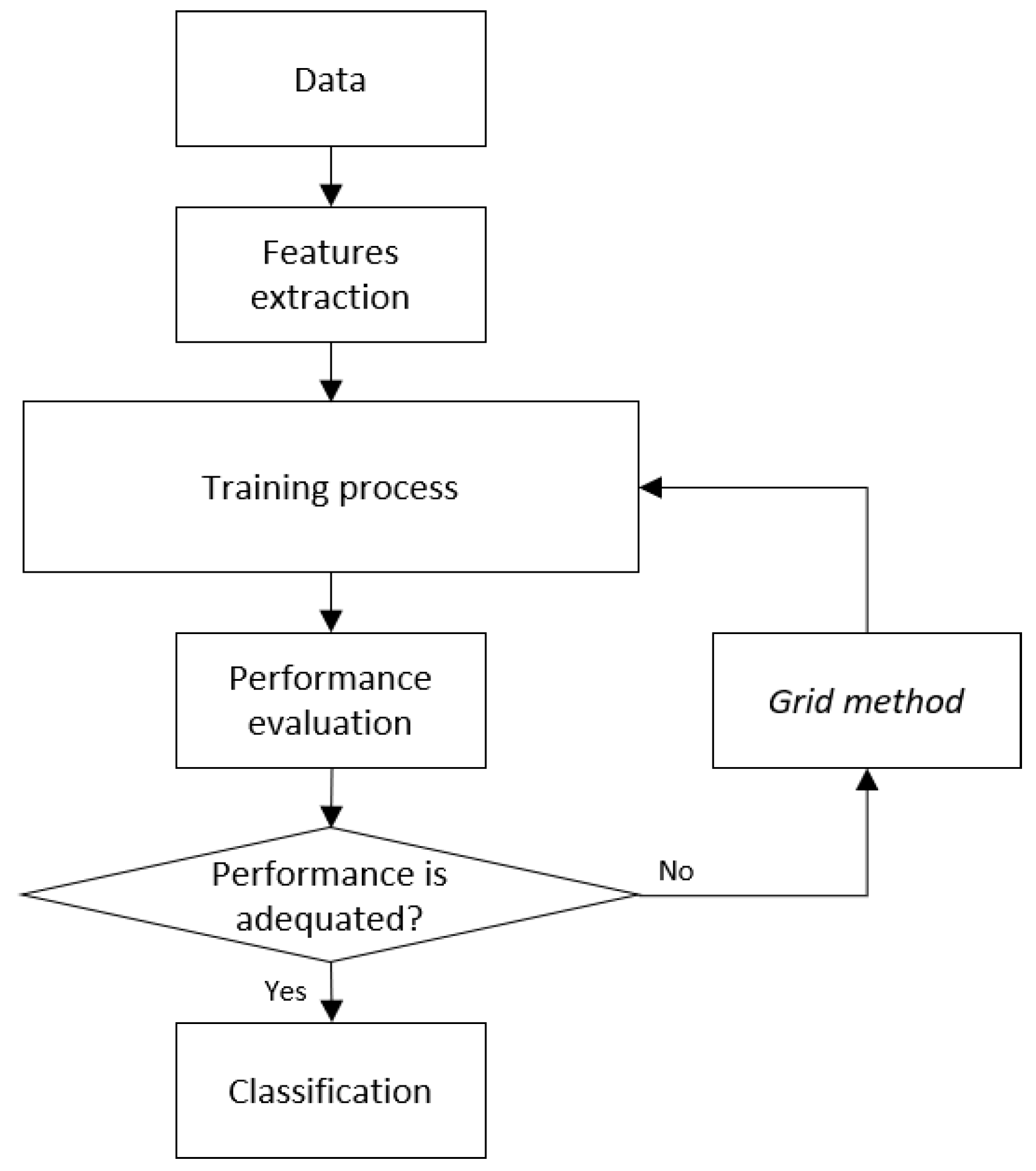

Section 3 describes the materials and methods used in this research,

Section 4 shows the architecture of the proposed computational model,

Section 5 describes the experiments and results obtained, and the last section concludes the article.

2. Related Research

Due to the numerous victims of cyber-bullying and the fatal consequences it can cause, there is a need to study this phenomenon in terms of its detection, prevention, and mitigation. The consequences of cyber-bullying are worrisome when victims cannot cope with the emotional stress of abusive, threatening, humiliating, and aggressive messages. Several lines of research currently aim to avoid or reduce online violence; even so, more precise techniques and online tools are needed to support the victims.

Raisi [31] proposes a model to detect offensive comments on social networks, in order to intervene by filtering or advising those involved. To train this model, they used comments with offensive words from Twitter and Ask.fm. Other authors [8,32] have developed conversation systems based on intelligent agents that provide supportive emotional feedback to victims of cyber-bullying. Reynolds [33] proposed a system to detect cyber-bullying in the Formspring social network, based on recognizing patterns of violence in user posts through the analysis of offensive words; in addition, it ranks the level of the detected threat. It obtained an accuracy of 81.7% with J48 decision trees.

Ptaszynski [34] describes the development of an online application in Japan for school staff and parents charged with detecting inappropriate content on unofficial secondary websites. The goal is to report cases of cyber-bullying to federal authorities; in this work they used SVMs and obtained 79.9% accuracy. Rybnicek [12] proposes an application for Facebook to protect minors from cyber-bullying and sex-teasing. This application aims to analyze the content of images and videos, as well as user activity, to record changes in behavior. In another study, a collection of bad words was built from 3915 published messages tracked from the website Formspring.me. The accuracy obtained in this study was only 58.5% [35].

Other studies fight cyber-bullying by classifying its situations, topics, or types. For example, researchers at the Massachusetts Institute of Technology [35] developed a system to detect cyber-bullying in YouTube video comments. The system can identify the topic of the message, such as sexuality, race, and intelligence. The overall accuracy in this experiment was 66.7% using SVMs. Similarly, the study carried out by Nandhini [36] proposes a system to detect cyber-bullying activities and classify them as flaming, harassment, racism, and terrorism. The author uses a fuzzy classification rule; the accuracy of the results is very low (around 40%), but the author increased the efficiency of the classifier up to 90% using a series of rules. In the same way, Chen [37] proposes an architecture to detect offensive content and identify potentially offensive users in social media. The system achieves an accuracy of 98.24% in sentence offensiveness detection and an accuracy of 77.9% in user offensiveness detection. Similar work is presented by Sood [38], in which comments from a news site were tagged using Amazon’s Mechanical Turk to create a profanity-labeled data set. In this study, they use SVMs combined with a profanity list and a Levenshtein edit distance tool, obtaining an accuracy of 90%.
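To make the list-plus-edit-distance idea concrete, the sketch below computes the Levenshtein distance between a token and the entries of a word list, so that lightly obfuscated spellings can still be matched. It is only an illustration under stated assumptions, not the tool used by Sood [38]: the helper `near_listed`, the threshold `max_distance`, and the two-word placeholder list are hypothetical.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of insertions, deletions, and substitutions turning a into b."""
    previous = list(range(len(b) + 1))  # distances from the empty prefix of a
    for i, ch_a in enumerate(a, start=1):
        current = [i]
        for j, ch_b in enumerate(b, start=1):
            cost = 0 if ch_a == ch_b else 1
            current.append(min(
                previous[j] + 1,         # delete ch_a
                current[j - 1] + 1,      # insert ch_b
                previous[j - 1] + cost,  # substitute (or match)
            ))
        previous = current
    return previous[-1]


def near_listed(token, word_list, max_distance=1):
    """Return the listed words within max_distance edits of the token."""
    token = token.lower()
    return [w for w in word_list if levenshtein(token, w) <= max_distance]


# Hypothetical placeholder list; a real lexicon would be curated for the target language.
WORD_LIST = ["idiot", "stupid"]

print(near_listed("id1ot", WORD_LIST))
print(near_listed("hello", WORD_LIST))
```

A small threshold keeps false matches down, at the cost of missing heavier obfuscation; in a pipeline like the one described, the resulting match could serve as one input feature to a classifier rather than as a decision on its own.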

In addition to studies that aim to detect messages with offensive content or classify messages into types of cyber-bullying, other studies try to show that a system dedicated to monitoring user behavior can reduce cyber-bullying situations. Bosse [8] performed an experiment consisting of a normative multi-agent game with children 6 to 12 years old. In the experiment, the author highlights a particular case: a girl who, regardless of the agent’s warnings, continued to violate rules and was removed from the game. However, she later changed her attitude and began to follow the rules of the game. Through this research, the author shows that in the long term, the system manages to reduce the number of rule violations. It is therefore reasonable to affirm that technological proposals using sentiment analysis, text mining, multi-agent systems, or other AI techniques can support the reduction of online violence.

6. Discussion and Conclusions

Cyber-aggression has grown as the use of social networks has increased, which is why in this work we sought to develop computational tools that analyze offensive comments and classify them into three categories.

A variety of software tools and applications already use AI techniques to detect offensive comments, filter them, or send messages of support to the victim. However, the performance of these tools still needs to improve to yield more effective predictions. Tools that work in the Spanish language are also required: since most of the research targets English-speaking countries, it is difficult to obtain resources for algorithm training, such as data sets, lexicons, and corpora. Moreover, it is crucial to consider the idioms and colloquialisms of the region where the model is applied, so translating the resources available on the Web is not always suitable. It is therefore necessary to create resources in the Spanish language. Faced with a similar need for data, the authors of related research have created their own data sets of comments using social networks such as Twitter [55], Formspring.me [35], Facebook [56], Ask.fm [31], and others where cyber-bullying has been increasing [57].

We decided to create a data set of offensive comments using Facebook. At first, Twitter was used to extract comments on the three cases of cyber-aggression, but most of the comments were irrelevant. The Twitter API allows comments to be downloaded by hashtag, but few users who make offensive comments tag them with a hashtag. For this reason, we decided to use Facebook news items about same-sex marriage, publications about women’s triumphs or reports of physical abuse, and news about Donald Trump’s wall. Besides, Facebook is the most-used social network in Mexico [58]. We believe that gathering comments on more relevant news topics, such as abortion, adoption by same-sex couples, feminicides, and other related news, not only from Mexico but also from the rest of Latin America, as well as including experts who study the Spanish language and its colloquialisms, can improve the performance of the classifier.

This paper describes the development of a model to classify cyber-aggression cases by applying Random Forest and OneR. We seek to have an initial impact in Mexico, where there has been a wave of hate crimes. Our contributions are as follows: (1) We created a data set with cyber-aggression cases from social networks. (2) We focused on cyber-aggression cases in our native language, i.e., Spanish. (3) Specifically, we were interested in the most representative types of cyber-aggression in our country of origin, Mexico. (4) We identified the most relevant terms for the detection of the cyber-aggression cases included in the study. (5) We created an automatic detection model of cyber-aggression cases that has high precision and is interpretable by humans (rule-based).

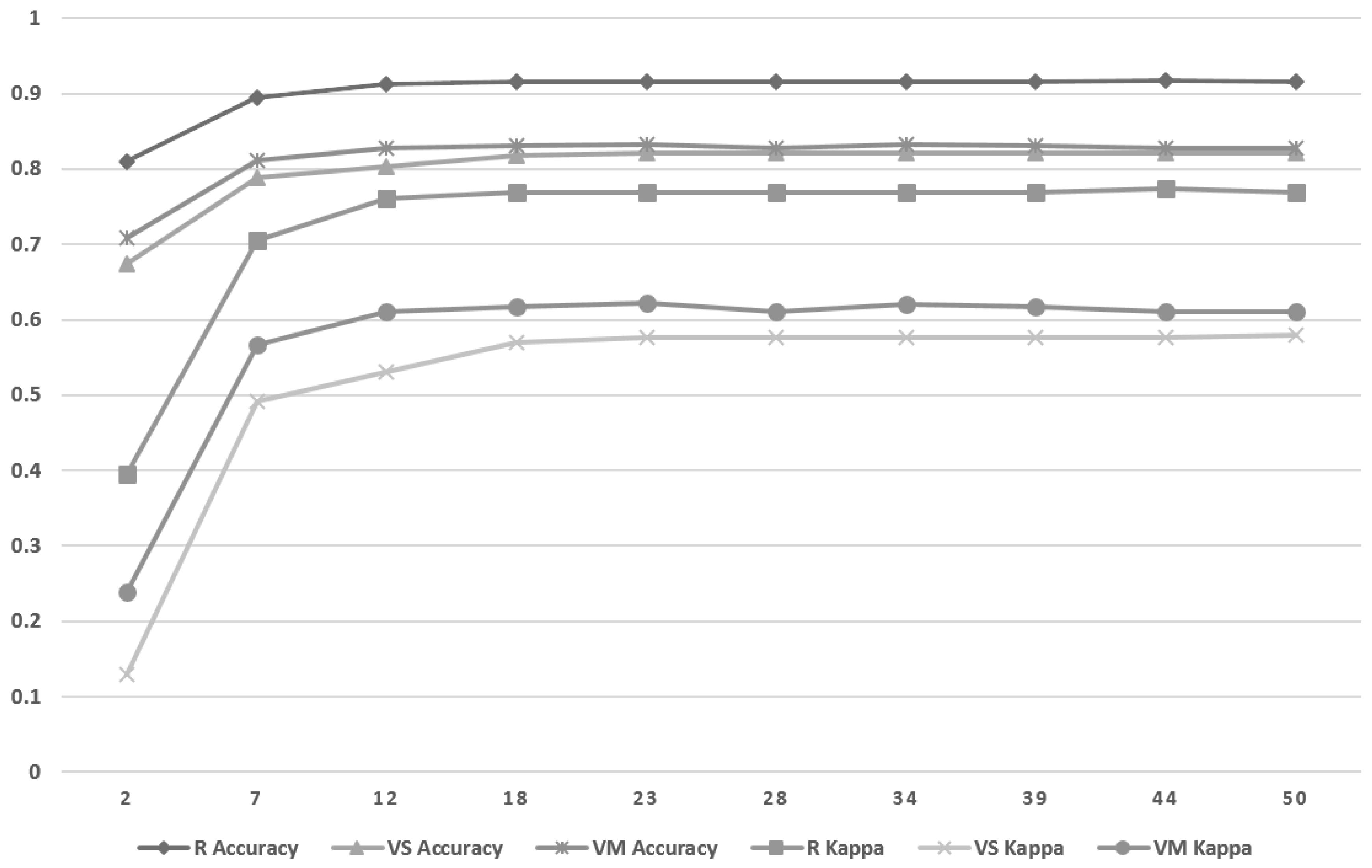

The results obtained in this work with Random Forest support the identification of relevant features to classify offensive comments into three cases: racism, violence based on sexual orientation, and violence against women. Nevertheless, OneR outperformed Random Forest in identifying types of cyber-aggression, in addition to providing a simple classification rule with the most relevant terms for each type of aggression. Although we obtained high-performance classification models with this particular data, it is essential to highlight that the classifiers used in this study perform better when classifying offensive comments against the LGBT population and racist comments. Therefore, exploring other machine-learning techniques and continuously updating the offensive data set cannot be ruled out in future work, which would allow the analysis of other kinds of cyber-aggression, e.g., those suffered by children. It is also important to continue improving the feature-selection process. In addition, building an automatic labeling system for offensive comments made by social network users, thus minimizing human error, will be of great help. Finally, we will seek to identify the victims of cyber-aggression in order to provide them with psychological attention according to the type of harassment they suffer.