A Survey of Feature Set Reduction Approaches for Predictive Analytics Models in the Connected Manufacturing Enterprise

Abstract

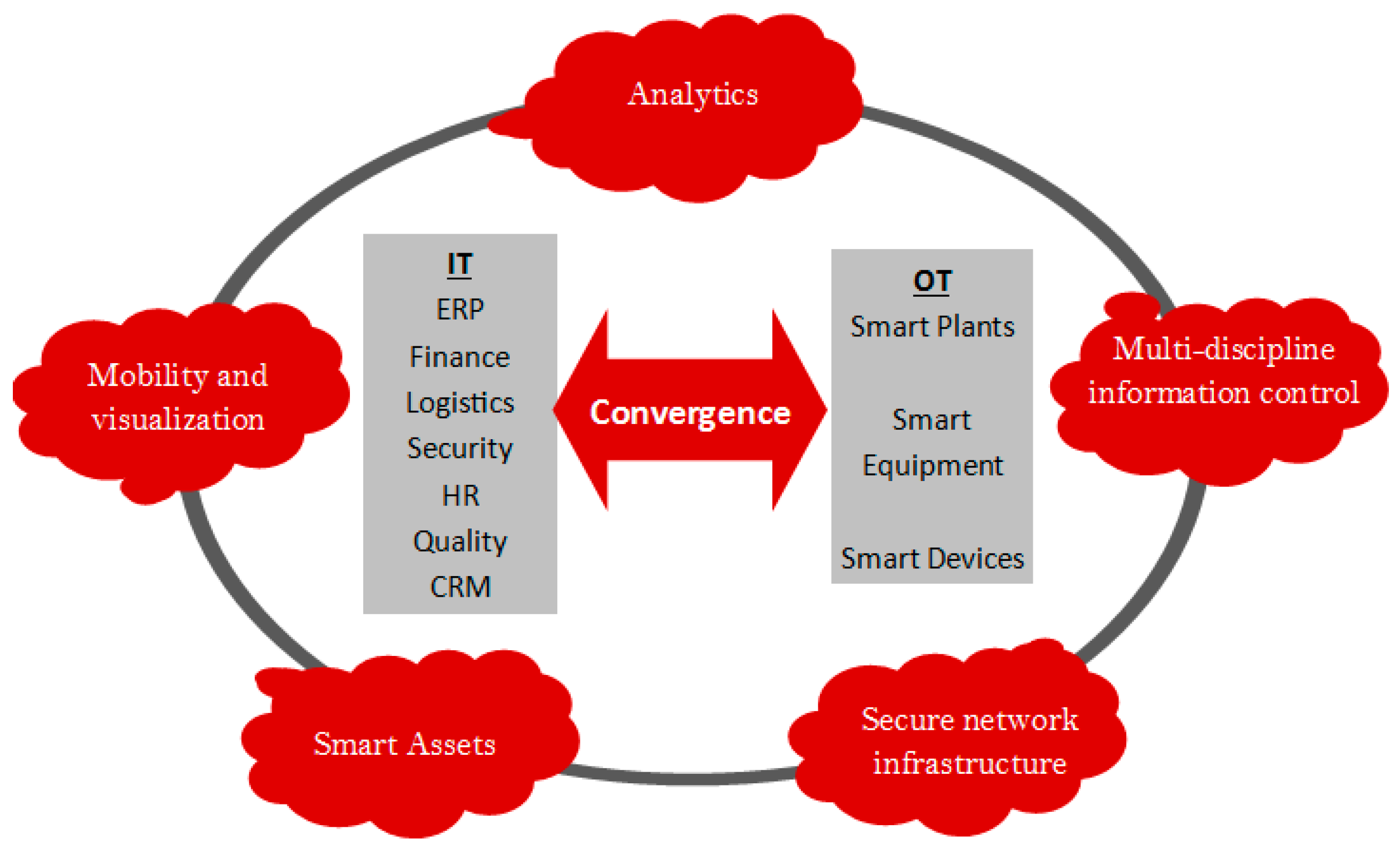

1. Introduction

- enterprise-wide visibility and collaboration;

- interconnected people, equipment, and processes;

- real-time learning of enterprise status;

- organizational agility through increased information that enables informed, adaptive, and proactive decisions.

2. Methodology

2.1. Data Collection

2.2. Data Analysis

- The first category explores big-data models for general industrial applications, specifically those featuring machine learning or deep learning.

- The second category focuses on big data analyses and frameworks applied to smart manufacturing scenarios. Two subtopics emerged in the search results: fault detection and fault prediction.

- The third category addresses data reduction tools and techniques.

3. Literature Survey

3.1. Big Data Approaches for General Industrial Applications

- Adoption of advanced manufacturing technologies

- Growing importance of manufacturing of high value-added products

- Utilizing advanced knowledge, information management, and AI systems

- Sustainable manufacturing processes and products

- Agile and flexible enterprise capabilities and supply chains

- Innovation in products, services, and processes

- Close collaboration between industry and research to adopt new technologies

- New manufacturing paradigms

3.2. Big Data Approaches for Specific Manufacturing Applications

3.2.1. Fault Detection

3.2.2. Fault Prediction

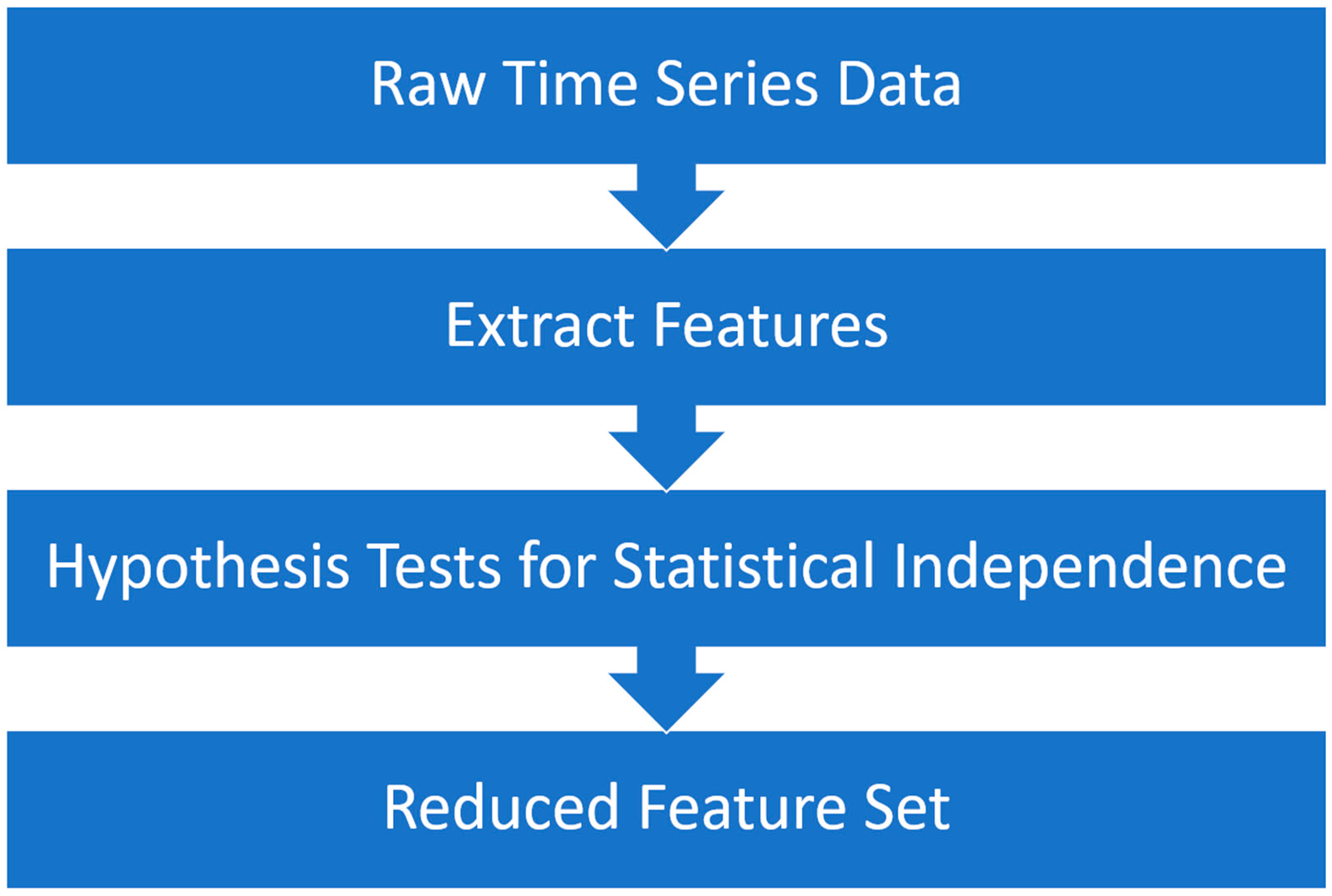

- Collect raw fault detection and classification (FDC), equipment tracking (ET), and metrology data

- Perform data reduction using a combination of principal component analysis (PCA) and subject matter expertise. In the semiconductor case study, this step reduces the set of candidate parameters from over 1000 to 16.

- Train model

- Display output to dashboard with a Maintenance/No Maintenance status
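The data-reduction step in the list above can be sketched as follows. This is a minimal illustration under stated assumptions, not the case study's implementation: the selection rule (keep the parameters flagged by subject matter experts, then take the parameter with the largest absolute loading on each leading principal component), the function names `principal_axes`, `reduce_parameters`, and `maintenance_status`, the 0.5 threshold, and the synthetic sensor data are all hypothetical. Subject matter expertise is represented only as a list of parameter names that are always kept.

```python
import numpy as np

# Hypothetical selection rule for illustration; the case study's exact
# PCA-plus-expertise procedure is not reproduced here.


def principal_axes(X):
    """Principal axes (rows, largest variance first) of the standardized data X."""
    std = X.std(axis=0)
    std[std == 0] = 1.0  # guard against constant (dead) sensor channels
    Z = (X - X.mean(axis=0)) / std  # put parameters with different units on one scale
    _, _, Vt = np.linalg.svd(Z, full_matrices=False)
    return Vt


def reduce_parameters(X, names, sme_keep, n_target):
    """Select up to n_target parameter names from the columns of X.

    Parameters flagged by subject matter experts (sme_keep) are kept first.
    Remaining slots are filled by walking the principal components in order
    and taking, from each, the not-yet-selected parameter with the largest
    absolute loading on that component.
    """
    selected = [name for name in sme_keep if name in names][:n_target]
    for axis in principal_axes(X):
        if len(selected) >= n_target:
            break
        for idx in np.argsort(-np.abs(axis)):  # strongest loading first
            if names[idx] not in selected:
                selected.append(names[idx])
                break
    return selected


def maintenance_status(failure_probability, threshold=0.5):
    """Map a trained model's failure probability to the dashboard label (hypothetical threshold)."""
    return "Maintenance" if failure_probability >= threshold else "No Maintenance"


# Synthetic demo: three groups of correlated sensors plus independent noise.
rng = np.random.default_rng(0)
n_runs = 500
columns, names = [], []
for label, size in {"A": 8, "B": 5, "C": 3}.items():
    driver = rng.normal(size=n_runs)  # one shared latent driver per group
    for i in range(size):
        columns.append(driver + rng.normal(scale=0.3, size=n_runs))
        names.append(f"{label}{i}")
for i in range(14):
    columns.append(rng.normal(size=n_runs))
    names.append(f"noise{i}")
X = np.column_stack(columns)

selected = reduce_parameters(X, names, sme_keep=["noise0"], n_target=4)
print(selected)
print(maintenance_status(0.8))  # prints "Maintenance"
```

Taking one parameter per leading component tends to keep a single representative from each group of strongly correlated sensors instead of several near-duplicates; in the demo, the expert-flagged `noise0` is kept first, followed by one sensor from each of the groups A, B, and C.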

3.3. Frameworks for Data Reduction

4. Discussion

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Kusiak, A. Smart manufacturing must embrace big data. Nature 2017, 544, 23–25.

- Tao, F.; Qi, Q.; Liu, A.; Kusiak, A. Data-driven smart manufacturing. J. Manuf. Syst. 2018, 48, 157–169.

- Everything You Need to Know about the Industrial Internet of Things. G.E. Digital. 2016. Available online: https://www.ge.com/digital/blog/everything-you-need-know-about-industrial-internet-things (accessed on 1 May 2018).

- Schneider, S. The industrial internet of things (IIoT): Applications and taxonomy. In Internet of Things and Data Analytics Handbook; Wiley: Hoboken, NJ, USA, 2017; pp. 41–81.

- Industrial Internet Consortium. Available online: https://www.iiconsortium.org/ (accessed on 2 May 2018).

- OpenFog. Available online: https://www.openfogconsortium.org/ (accessed on 2 May 2018).

- Lasi, H.; Fettke, P.; Kemper, H.G.; Feld, T.; Hoffmann, M. Industry 4.0. Bus. Inf. Syst. Eng. 2014, 6, 239–242.

- Gilchrist, A. Industry 4.0: The Industrial Internet of Things; Apress: New York, NY, USA, 2016.

- Li, L. China’s manufacturing locus in 2025: With a comparison of “Made-in-China 2025” and ‘Industry 4.0’. Technol. Forecast. Soc. Chang. 2018, 135, 66–74.

- METI, Connected Industries. Ministry of Economy, Trade and Industry. 2017. Available online: http://www.meti.go.jp/english/policy/mono_info_service/connected_industries/index.html (accessed on 10 January 2019).

- Granrath, L. Japan’s Society 5.0: Going Beyond Industry 4.0. Japan Industry News. 2017. Available online: https://www.japanindustrynews.com/2017/08/japans-society-5-0-going-beyond-industry-4-0/ (accessed on 10 January 2019).

- Rockwell Automation. The Connected Enterprise eBook: Bringing People, Processes, and Technology Together; Rockwell Automation: Milwaukee, WI, USA, 2015.

- Otieno, W.; Cook, M.; Campbell-Kyureghyan, N. Novel approach to bridge the gaps of industrial and manufacturing engineering education: A case study of the connected enterprise concepts. In Proceedings of the 2017 IEEE Frontiers in Education Conference (FIE), Indianapolis, IN, USA, 18–21 October 2017; pp. 1–5.

- Qin, S.J. Process data analytics in the era of big data. AIChE J. 2014, 60, 3092–3100.

- McKinsey & Company. Big Data: The Next Frontier for Innovation, Competition, and Productivity; McKinsey Global Institute: Washington, DC, USA, 2011; p. 156.

- Bollier, D.; Firestone, C.M. The Promise and Peril of Big Data; The Aspen Institute: Washington, DC, USA, 2010.

- Lenz, J.; Wuest, T.; Westkämper, E. Holistic approach to machine tool data analytics. J. Manuf. Syst. 2018, 48, 180–191.

- Thoben, K.; Wiesner, S.; Wuest, T. ‘Industrie 4.0’ and Smart Manufacturing—A Review of Research Issues and Application Examples. Int. J. Autom. Technol. 2017, 11, 4–19.

- Kaufman, E.L.; Lord, M.W.; Reese, T.W.; Volkmann, J. The Discrimination of Visual Number. Am. J. Psychol. 1949, 62, 498–525.

- Miller, G.A. The magical number seven, plus or minus two: Some limits on our capacity for processing information. Psychol. Rev. 1956, 63, 81–97.

- Simon, H.A. Designing organizations for an information-rich world. Comput. Commun. Public Interes. 1971, 72, 37.

- Oussous, A.; Benjelloun, F.; Lahcen, A.A.; Belfkih, S. Big Data technologies: A survey. J. King Saud Univ. Comput. Inf. Sci. 2018, 30, 431–448.

- Honest, N. A Survey of Big Data Analytics. Int. J. Inf. Sci. Tech. 2016, 6, 35–43.

- Tsai, C.-W.; Lai, C.-F.; Chao, H.-C.; Vasilakos, A.V. Big data analytics: A survey. J. Big Data 2015, 2, 21.

- Spangenberg, N.; Roth, M.; Franczyk, B. Evaluating new approaches of big data analytics frameworks. In Proceedings of the International Conference on Business Information Systems, Poznań, Poland, 24–26 June 2015.

- Wuest, T.; Weimer, D.; Irgens, C.; Thoben, K.-D. Machine learning in manufacturing: Advantages, challenges, and applications. Prod. Manuf. Res. 2016, 4, 23–45.

- Dingli, D.J. The Manufacturing Industry—Coping with Challenges; Working Paper No. 2012/05; 2012; p. 47. Available online: https://econpapers.repec.org/paper/msmwpaper/2012_2f05.htm (accessed on 27 February 2018).

- Gordon, J.; Sohal, A.S. Assessing manufacturing plant competitiveness—An empirical field study. Int. J. Oper. Prod. Manag. 2001, 21, 233–253.

- Shiang, L.E.; Nagaraj, S. Impediments to innovation: Evidence from Malaysian manufacturing firms. Asia Pac. Bus. Rev. 2011, 17, 209–223.

- Thomas, A.J.; Byard, P.; Evans, R. Identifying the UK’s manufacturing challenges as a benchmark for future growth. J. Manuf. Technol. Manag. 2012, 23, 142–156.

- Kotsiantis, S.B. Supervised Machine Learning: A Review of Classification Techniques. Informatica 2007, 31, 249–268.

- Yang, K.; Trewn, J. Multivariate Statistical Methods in Quality Management; McGraw-Hill: New York, NY, USA, 2004.

- Alpaydin, E. Introduction to Machine Learning, 3rd ed.; MIT Press: Cambridge, MA, USA, 2014.

- Doltsinis, S.; Ferreira, P.; Lohse, N. Reinforcement learning for production ramp-up: A Q-batch learning approach. In Proceedings of the 11th International Conference on Machine Learning and Applications, Boca Raton, FL, USA, 12–15 December 2012; pp. 610–615.

- Wang, J.; Ma, Y.; Zhang, L.; Gao, R.X.; Wu, D. Deep learning for smart manufacturing: Methods and applications. J. Manuf. Syst. 2018, 48, 144–156.

- Butler, B. What Is Edge Computing and How It’s Changing the Network. Network World. 2017. Available online: https://www.networkworld.com/article/3224893/internet-of-things/what-is-edge-computing-and-how-it-s-changing-the-network.html (accessed on 7 March 2018).

- Linthicum, D. Responsive Data Architecture for the Internet of Things. Computer 2016, 49, 72–75.

- Mahmud, R.; Kotagiri, R.; Buyya, R. Fog Computing: A Taxonomy, Survey and Future Directions. In Internet of Everything; Springer: Singapore, 2018; pp. 103–130.

- Flath, C.M.; Stein, N. Towards a data science toolbox for industrial analytics applications. Comput. Ind. 2018, 94, 16–25.

- Kumar, A.; Shankar, R.; Choudhary, A.; Thakur, L.S. A big data MapReduce framework for fault diagnosis in cloud-based manufacturing. Int. J. Prod. Res. 2016, 54, 7060–7073.

- Japkowicz, N.; Stephen, S. The class imbalance problem: A systematic study. Intell. Data Anal. 2002, 6, 429–449.

- Longadge, R.; Dongre, S.S.; Malik, L. Class imbalance problem in data mining: Review. Int. J. Comput. Sci. Netw. 2013, 2, 83–87.

- Bahga, A.; Madisetti, V.K. Analyzing massive machine maintenance data in a computing cloud. IEEE Trans. Parallel Distrib. Syst. 2012, 23, 1831–1843.

- Devaney, M.; Cheetham, B. Case-Based Reasoning for Gas Turbine Diagnostics. In Proceedings of the 18th International FLAIRS Conference (FLAIRS-05), Clearwater Beach, FL, USA, 16–18 May 2005.

- Timmerman, H. SKF WindCon Condition Monitoring System for Wind Turbines. In Proceedings of the New Zealand Wind Energy Conference, Wellington, New Zealand, 20–22 April 2009.

- Tamilselvan, P.; Wang, P. Failure diagnosis using deep belief learning based health state classification. Reliab. Eng. Syst. Saf. 2013, 115, 124–135.

- Hinton, G.E. A Practical Guide to Training Restricted Boltzmann Machines. Computer 2012, 9, 599–619.

- Jia, F.; Lei, Y.; Lin, J.; Zhou, X.; Lu, N. Deep neural networks: A promising tool for fault characteristic mining and intelligent diagnosis of rotating machinery with massive data. Mech. Syst. Signal Process. 2016, 72–73, 303–315.

- Schmidhuber, J. Deep Learning in neural networks: An overview. Neural Netw. 2015, 61, 85–117.

- Banerjee, T.; Das, S.; Roychoudhury, J.; Abraham, A. Implementation of a New Hybrid Methodology for Fault Signal Classification Using Short-Time Fourier Transform and Support Vector Machines. In Proceedings of the 5th International Workshop on Soft Computing Models in Industrial Environment Application (SOCO 2010), Guimarães, Portugal, 16–18 June 2010; Volume 73, pp. 219–225.

- Banerjee, T.P.; Das, S. Multi-sensor data fusion using support vector machine for motor fault detection. Inf. Sci. 2012, 217, 96–107.

- Jack, L.B.; Nandi, A.K. Fault detection using support vector machines and artificial neural networks, augmented by genetic algorithms. Mech. Syst. Signal Process. 2002, 16, 373–390.

- Rychetsky, M.; Ortmann, S.; Glesner, M. Support vector approaches for engine knock detection. In Proceedings of the IJCNN’99. International Joint Conference on Neural Networks, Washington, DC, USA, 10–16 July 1999; Volume 2.

- Altintas, Y. In-process detection of tool breakages using time series monitoring of cutting forces. Int. J. Mach. Tools Manuf. 1988, 28, 157–172.

- Wang, H.; Zhoui, J.; He, I.; Sha, J. An uncertain information fusion method for fault diagnosis of complex system. In Proceedings of the 2003 International Conference on Machine Learning and Cybernetics, Xi’an, China, 5 November 2003; pp. 1505–1510.

- Xiong, J.; Zhang, Q.; Sun, G.; Zhu, X.; Liu, M.; Li, Z. An Information Fusion Fault Diagnosis Method Based on Dimensionless Indicators with Static Discounting Factor and KNN. IEEE Sens. J. 2016, 16, 2060–2069.

- Dempster, A.P. A Generalization of Bayesian Inference. J. R. Stat. Soc. 1968, 30, 205–247.

- Khakifirooz, M.; Chien, C.F.; Chen, Y.J. Bayesian inference for mining semiconductor manufacturing big data for yield enhancement and smart production to empower industry 4.0. Appl. Soft Comput. J. 2017, 68, 990–999.

- Lee, H. Framework and development of fault detection classification using IoT device and cloud environment. J. Manuf. Syst. 2017, 43, 257–270.

- Gunes, V.; Peter, S.; Givargis, T.; Vahid, F. A Survey on Concepts, Applications, and Challenges in Cyber-Physical Systems. KSII Trans. Internet Inf. Syst. 2014, 8, 120–132.

- Rajkumar, R.; Lee, I.; Sha, L.; Stankovic, J. Cyber-physical systems. In Proceedings of the 47th Design Automation Conference on—DAC ’10, Anaheim, CA, USA, 13–18 June 2010; p. 731.

- Saez, M.; Maturana, F.; Barton, K.; Tilbury, D. Modeling and Analysis of Cyber-Physical Manufacturing Systems for Anomaly Detection and Diagnosis. 2018. Available online: https://www.nist.gov/sites/default/files/documents/2018/05/22/univ_michigan_miguel_saez.pdf (accessed on 25 February 2019).

- Saez, M.; Maturana, F.; Barton, K.; Tilbury, D. Anomaly detection and productivity analysis for cyber-physical systems in manufacturing. In Proceedings of the 2017 13th IEEE Conference on Automation Science and Engineering (CASE), Xi’an, China, 20–23 August 2017; pp. 23–29.

- Wan, J.; Tang, S.; Li, D.; Wang, S.; Liu, C.; Abbas, H.; Vasilakos, A.V. A Manufacturing Big Data Solution for Active Preventive Maintenance. IEEE Trans. Ind. Inform. 2017, 13, 2039–2047.

- Munirathinam, S.; Ramadoss, B. Big data predictive analytics for proactive semiconductor equipment maintenance. In Proceedings of the 2014 IEEE International Conference on Big Data (IEEE Big Data 2014), Washington, DC, USA, 27–30 October 2014; pp. 893–902.

- Franklin, J. Signalling and anti-proliferative effects mediated by gonadotrophin-releasing hormone receptors after expression in prostate cancer cells using recombinant adenovirus. J. Endocrinol. 2003, 176, 275–284.

- Ji, W.; Wang, L. Big data analytics based fault prediction for shop floor scheduling. J. Manuf. Syst. 2017, 43, 187–194.

- Rolfe, B.F.; Frayman, Y.; Kelly, G.L.; Nahavandi, S. Recognition of Lubrication Defects in Cold Forging Process with a Neural Network. In Artificial Neural Networks in Finance and Manufacturing; IGI Global: Hershey, PA, USA, 2006; pp. 262–275.

- Perzyk, M.; Kochański, A.W. Prediction of ductile cast iron quality by artificial neural networks. J. Mater. Process. Technol. 2001, 109, 305–307.

- Kilickap, E.; Yardimeden, A.; Çelik, Y.H. Mathematical Modelling and Optimization of Cutting Force, Tool Wear and Surface Roughness by Using Artificial Neural Network and Response Surface Methodology in Milling of Ti-6242S. Appl. Sci. 2017, 7, 1064.

- Huang, C.; Jia, X.; Zhang, Z. A modified back propagation artificial neural network model based on genetic algorithm to predict the flow behavior of 5754 aluminum alloy. Materials 2018, 11, 855.

- Arnaiz-González, Á.; Fernández-Valdivielso, A.; Bustillo, A.; de Lacalle, L.N.L. Using artificial neural networks for the prediction of dimensional error on inclined surfaces manufactured by ball-end milling. Int. J. Adv. Manuf. Technol. 2016, 83, 847–859.

- De Lacalle, L.N.L.; Lamikiz, A.; Salgado, M.A.; Herranz, S.; Rivero, A. Process planning for reliable high-speed machining of moulds. Int. J. Prod. Res. 2002, 40, 2789–2809.

- De Lacalle, L.N.L.; Lamikiz, A.; Sánchez, J.A.; Salgado, M.A. Effects of tool deflection in the high-speed milling of inclined surfaces. Int. J. Adv. Manuf. Technol. 2004, 24, 621–631.

- Lasemi, A.; Xue, D.; Gu, P. Recent development in CNC machining of freeform surfaces: A state-of-the-art review. CAD Comput. Aided Des. 2010, 42, 641–654.

- Liu, X.; Li, Y. Feature-based adaptive machining for complex freeform surfaces under cloud environment. Robot. Comput. Integr. Manuf. 2019, 56, 254–263.

- García, S.; Luengo, J.; Herrera, F. Tutorial on practical tips of the most influential data preprocessing algorithms in data mining. Knowl.-Based Syst. 2015, 98, 1–29.

- Liu, H.; Setiono, R. A Probabilistic Approach to Feature Selection—A Filter Solution. In Proceedings of the Thirteenth International Conference on Machine Learning, Bari, Italy, 3–6 July 1996; pp. 319–327.

- Battiti, R. Using Mutual Information for Selecting Features in Supervised Neural-Net Learning. IEEE Trans. Neural Netw. 1994, 5, 537–550.

- Kira, K.; Rendell, L. A practical approach to feature selection. In Proceedings of the Ninth International Conference on Machine Learning, Aberdeen, UK, 1–3 July 1992; pp. 249–256.

- Peng, H.; Long, F.; Ding, C. Feature selection based on mutual information: Criteria of Max-Dependency, Max-Relevance, and Min-Redundancy. IEEE Trans. Pattern Anal. Mach. Intell. 2005, 27, 1226–1238.

- Hart, P. The condensed nearest neighbor rule (Corresp.). IEEE Trans. Inf. Theory 1968, 14, 515–516.

- Wilson, D.L. Asymptotic Properties of Nearest Neighbor Rules Using Edited Data. IEEE Trans. Syst. Man Cybern. 1972, 2, 408–421.

- Wilson, D.R.; Martinez, T.R. Reduction Techniques for Instance-Based Learning Algorithms. Mach. Learn. 2000, 38, 257–286.

- Brighton, H.; Mellish, C. Advances in Instance Selection for Instance-Based Learning Algorithms. Data Min. Knowl. Discov. 2002, 6, 153–172.

- Stanula, P.; Ziegenbein, A.; Metternich, J. Machine learning algorithms in production: A guideline for efficient data source selection. Procedia CIRP 2018, 78, 261–266.

- Rehman, M.H.U.; Chang, V.; Batool, A.; Wah, T.Y. Big data reduction framework for value creation in sustainable enterprises. Int. J. Inf. Manag. 2016, 36, 917–928.

- Luan, T.H.; Gao, L.; Li, Z.; Xiang, Y.; Wei, G.; Sun, L. Fog Computing: Focusing on Mobile Users at the Edge. arXiv, 2015; arXiv:1502.01815.

- Ma, X.; Cripps, R.J. Shape preserving data reduction for 3D surface points. CAD Comput. Aided Des. 2011, 43, 902–909.

- Jeong, I.-S.; Kim, H.-K.; Kim, T.-H.; Lee, D.H.; Kim, K.J.; Kang, S.-H. A Feature Selection Approach Based on Simulated Annealing for Detecting Various Denial of Service Attacks. Converg. Secur. 2016, 2016, 1–18.

- Kang, S.-H.; Kim, K.J. A feature selection approach to find optimal feature subsets for the network intrusion detection system. Cluster Comput. 2016, 19, 325–333.

- Du, K.L.; Swamy, M.N.S. Search and Optimization by Metaheuristics: Techniques and Algorithms Inspired by Nature; Springer: Basel, Switzerland, 2016; pp. 1–434.

- Lalehpour, A.; Berry, C.; Barari, A. Adaptive data reduction with neighbourhood search approach in coordinate measurement of planar surfaces. J. Manuf. Syst. 2017, 45, 28–47.

- Haq, A.A.U.; Wang, K.; Djurdjanovic, D. Feature Construction for Dense Inline Data in Semiconductor Manufacturing Processes. IFAC-PapersOnLine 2016, 49, 274–279.

- Christ, M.; Kempa-Liehr, A.W.; Feindt, M. Distributed and parallel time series feature extraction for industrial big data applications. arXiv, 2016; arXiv:1610.07717.

- Benjamini, Y.; Yekutieli, D. The control of the false discovery rate in multiple testing under dependency. Ann. Stat. 2001, 29, 1165–1188.

- Dheeru, D.; Taniskidou, E.K. UCI Machine Learning Repository; School of Information and Computer Sciences, University of California: Irvine, CA, USA, 2017.

- Christ, M.; Braun, N.; Neuffer, J.; Kempa-Liehr, A.W. Time Series FeatuRe Extraction on basis of Scalable Hypothesis tests (tsfresh—A Python package). Neurocomputing 2018, 307, 72–77.

- Wang, W.; Liu, J.; Pitsilis, G.; Zhang, X. Abstracting massive data for lightweight intrusion detection in computer networks. Inf. Sci. 2018, 433–434, 1339–1351.

- Fan, J.; Han, F.; Liu, H. Challenges of Big Data analysis. Natl. Sci. Rev. 2014, 1, 293–314.

- Campos, J.; Sharma, P.; Gabiria, U.G.; Jantunen, E.; Baglee, D. A Big Data Analytical Architecture for the Asset Management. Procedia CIRP 2017, 64, 369–374.

- Nikolaidis, K.; Goulermas, J.Y.; Wu, Q.H. A class boundary preserving algorithm for data condensation. Pattern Recognit. 2011, 44, 704–715.

| Technique | Feature Learning | Model Construction | Model Training |
|---|---|---|---|
| Traditional Machine Learning | Features are identified, engineered and extracted manually through domain expert knowledge. | Models typically have shallow structures (few hidden layers) and are data-driven using selected features. | Modules are trained step by step. |
| Deep Learning | Features are learned by transforming the data into abstract representations. | Models are end-to-end, high hierarchies with nonlinear combinations of numerous hidden layers. | Model parameters are trained jointly. |

| Author(s) | Focus |
|---|---|
| Wuest et al. [26] | Key challenges for global manufacturing industry |
| Alpaydin [33] | Machine learning overview |
| Wang et al. [35] | Deep learning for smart manufacturing |
| Tao et al. [2] | Data-driven smart manufacturing |
| Flath and Stein [39] | Data science “toolbox” for industrial analytics |

| Author(s) | Focus | Explicit Data Reduction Step |
|---|---|---|
| Kumar et al. [40] | Enterprise-level architecture; methodology to address class imbalance | No |
| Bahga and Madisetti [43] | Enterprise-level architecture | No |
| Tamilselvan and Wang [46] | Case study: Machine health states—DBN | No |
| Jia et al. [48] | Case study: Fault characterization—DNN | No |
| Banerjee et al. [50] | Case study: Fault signal identification—SVM | Discussed, not implemented |
| Xiong et al. [56] | Methodology: Information fusion to reconcile conflicting evidence in fault detection | No |
| Khakifirooz et al. [58] | Case study: Yield enhancement–Bayesian inference | Yes |
| Lee [59] | Enterprise-level architecture; Case study: Fault detection and classification—SVR, RBF, DBL-DL | No |
| Wan et al. [64] | Enterprise-level architecture; Methodology: Real-time and offline components; Case study: Fault prediction—Neural Network | No |
| Munirathinam & Ramadoss [65] | Enterprise-level architecture | Yes |
| Ji and Wang [67] | Enterprise-level architecture; Simulated proof of concept case study: Fault prediction for shop floor scheduling | No |
| Rolfe et al. [68] | Case study: Lubrication defects in cold forging process—NN | No |
| Perzyk and Kochanski [69] | Ductile cast iron quality—NN | No |
| Kilickap et al. [70] | Micro-milling parameter optimization—NN | No |
| Huang et al. [71] | Alloy flow behavior-NN | No |
| Arnaiz-Gonzalez et al. [72] | Dimensional error in precision machining—NN | No |
| de Lacalle et al. [73] | High speed machining of moulds | No |
| Liu and Li [76] | Manufacturing freeform surfaces | No |

| Author(s) | Focus |
|---|---|
| Habib ur Rehman et al. [87] | High level/Institutional framework |
| Jeong et al. [90] | Feature selection meta-heuristic (simulated annealing) |
| Lalehpour, Berry, and Barari [93] | Sample reduction |
| Ma and Cripps [89] | Shape preservation with data reduction for 3D surface points |
| Ul Haq, Wang, and Djurdjanovic [94] | Feature extraction from streaming signal data |
| Christ, Kempa-Liehr, and Feindt [95] | Feature extraction and selection from time series data |
| Wang et al. [99] | Clustering algorithms to extract representative data instances |
| Nikolaidis, Goulermas, and Wu [102] | Instance reduction based on distance from class boundaries |
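The instance-reduction entries in the table above (Wang et al. [99]; Nikolaidis, Goulermas, and Wu [102]) shrink a data set by keeping a small number of representative rows rather than fewer columns. A minimal sketch of one generic variant follows: cluster each class with k-means and keep the real instance nearest each centroid. It is not the algorithm of either cited work; the function names `kmeans_centroids` and `representative_instances`, the per-class clustering, the plain Lloyd's iteration, and the synthetic two-class data are assumptions for illustration.

```python
import numpy as np

# Illustrative sketch only: a generic clustering-based instance reduction,
# not the algorithm of Wang et al. [99] or Nikolaidis et al. [102].


def kmeans_centroids(X, k, n_iter=100, seed=0):
    """Plain Lloyd's k-means on the rows of X; returns the k centroids."""
    rng = np.random.default_rng(seed)
    centroids = X[rng.choice(len(X), size=k, replace=False)].astype(float)
    for _ in range(n_iter):
        # Assign every instance to its nearest centroid (squared Euclidean distance).
        d2 = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
        labels = d2.argmin(axis=1)
        # Move each centroid to the mean of its members; keep it if the cluster is empty.
        updated = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        if np.allclose(updated, centroids):
            break
        centroids = updated
    return centroids


def representative_instances(X, y, per_class):
    """Indices of the real instances closest to per-class k-means centroids.

    Clustering each class separately guarantees every class keeps at least one
    instance; two centroids can share a nearest instance, so the result may
    hold fewer than per_class indices for a class.
    """
    keep = set()
    for cls in np.unique(y):
        idx = np.flatnonzero(y == cls)
        for c in kmeans_centroids(X[idx], min(per_class, len(idx))):
            keep.add(int(idx[((X[idx] - c) ** 2).sum(axis=1).argmin()]))
    return np.array(sorted(keep))


# Synthetic demo: two well-separated classes of 300 instances each.
rng = np.random.default_rng(1)
X = np.vstack([rng.normal(0.0, 1.0, (300, 2)), rng.normal(6.0, 1.0, (300, 2))])
y = np.array([0] * 300 + [1] * 300)
kept = representative_instances(X, y, per_class=10)
print(f"kept {len(kept)} of {len(X)} instances")
```

Keeping the real instance nearest each centroid, rather than the centroid itself, means the reduced set contains only observations that were actually recorded, which keeps each retained row traceable to a source measurement.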

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


LaCasse, P.M.; Otieno, W.; Maturana, F.P. A Survey of Feature Set Reduction Approaches for Predictive Analytics Models in the Connected Manufacturing Enterprise. Appl. Sci. 2019, 9, 843. https://doi.org/10.3390/app9050843
