A Gesture-Based Teleoperation System for Compliant Robot Motion

Abstract

1. Introduction

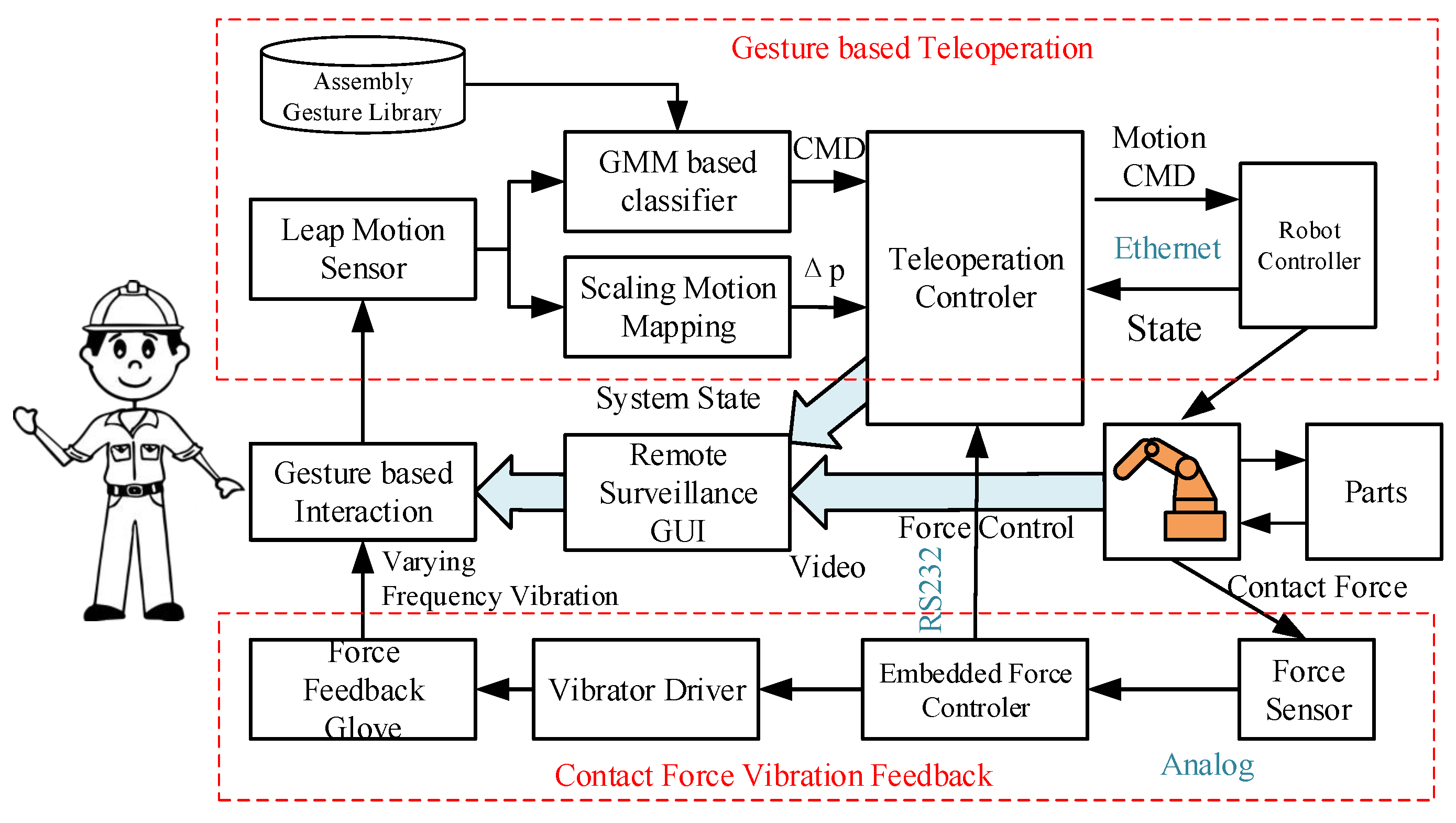

2. Gesture-Based Human-Robot Interface

2.1. Leap Motion Based Gesture Recognition

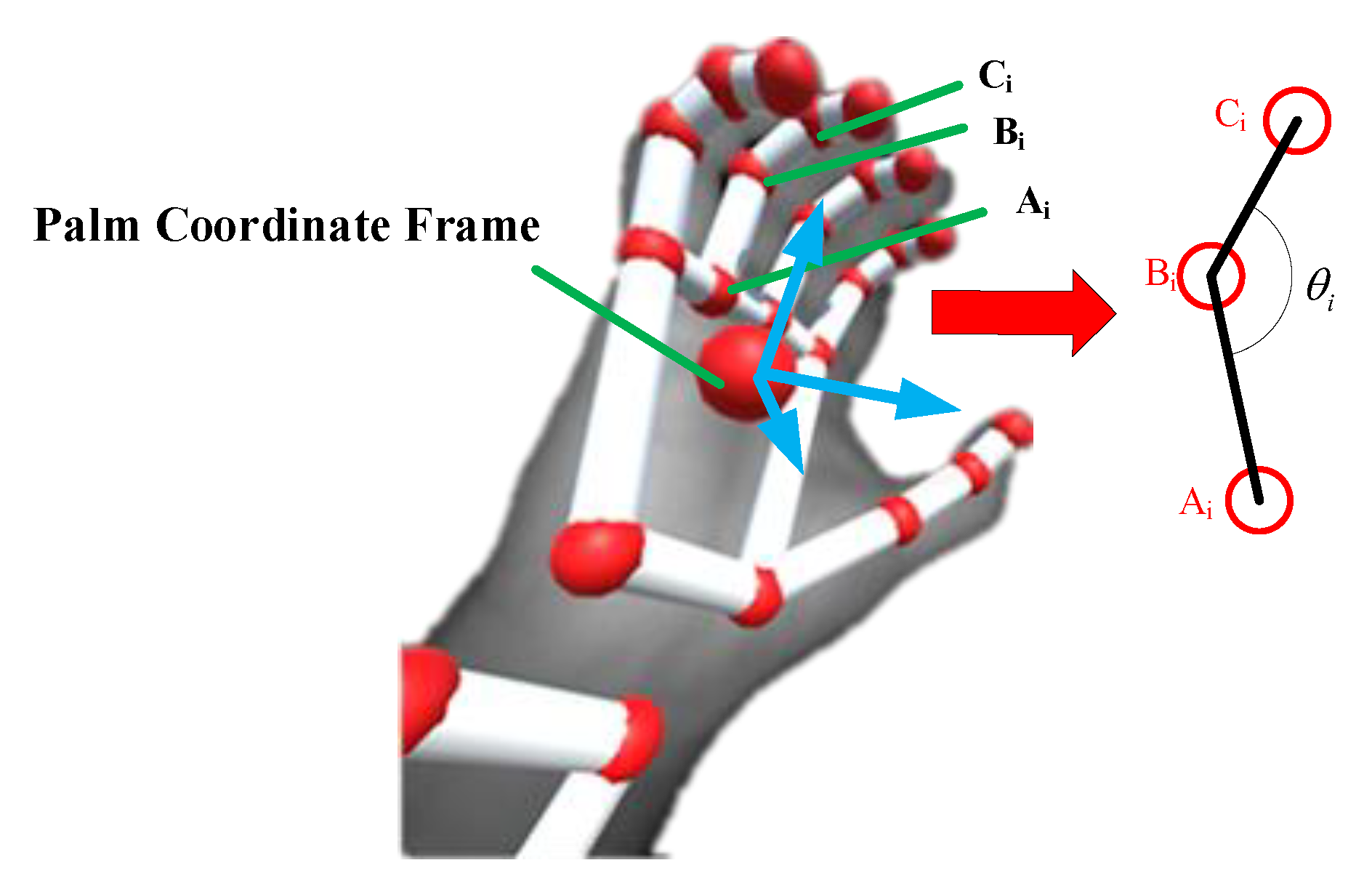

2.1.1. Feature Selection

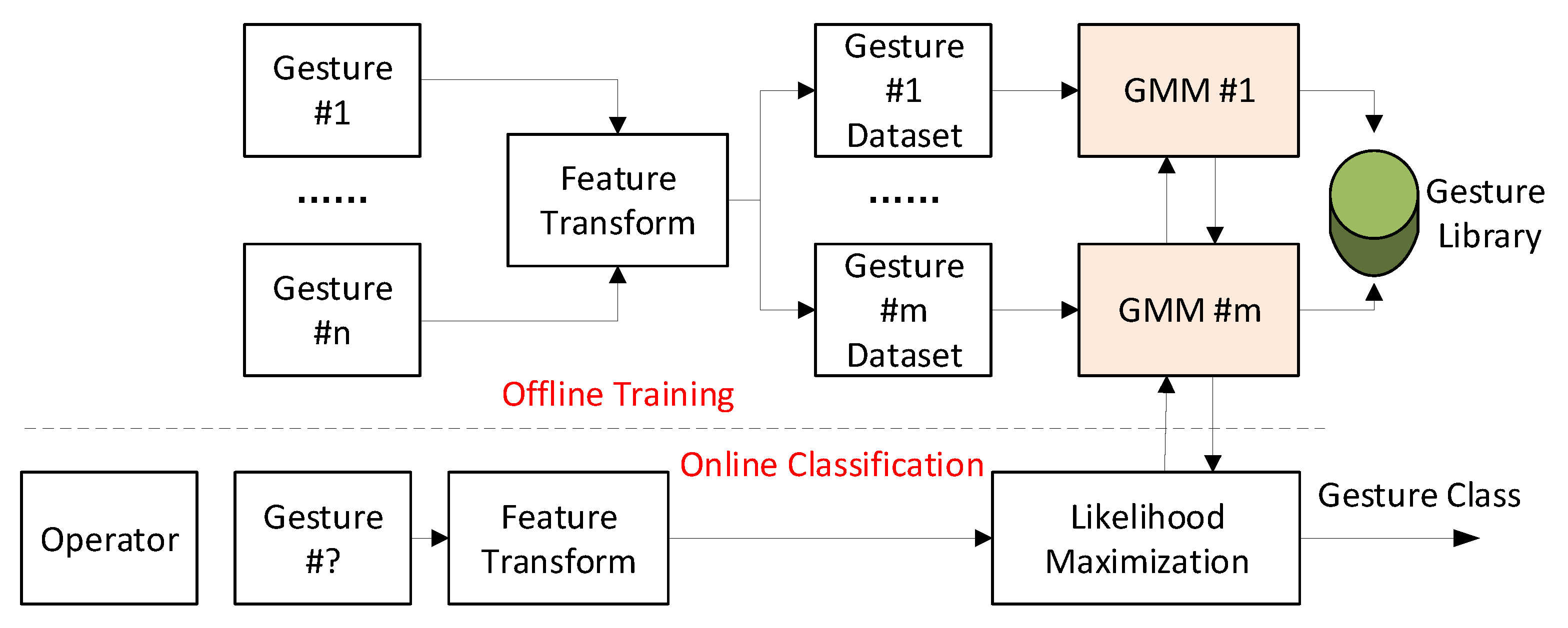

2.1.2. Gaussian Mixture Model (GMM) Based Classification

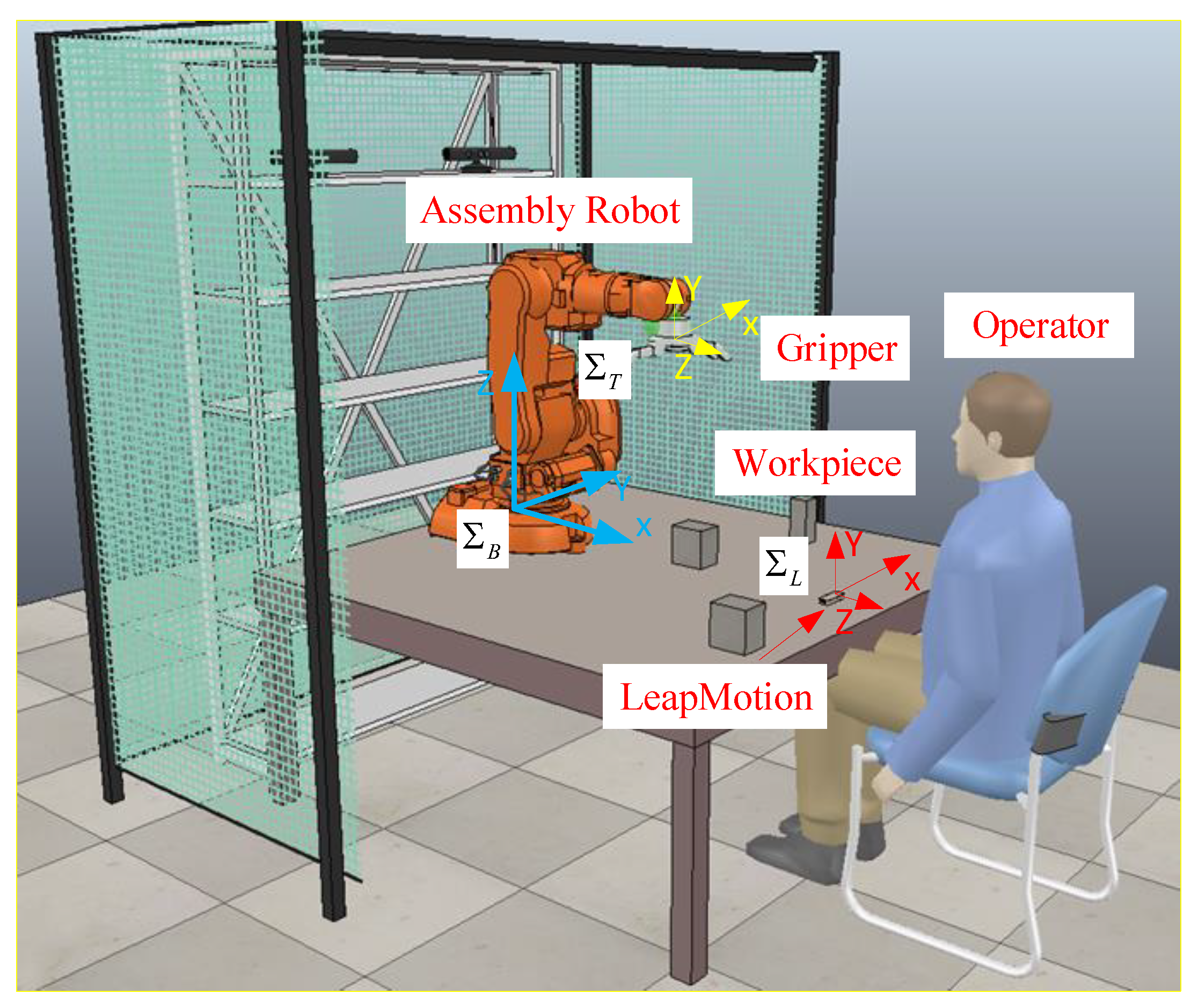

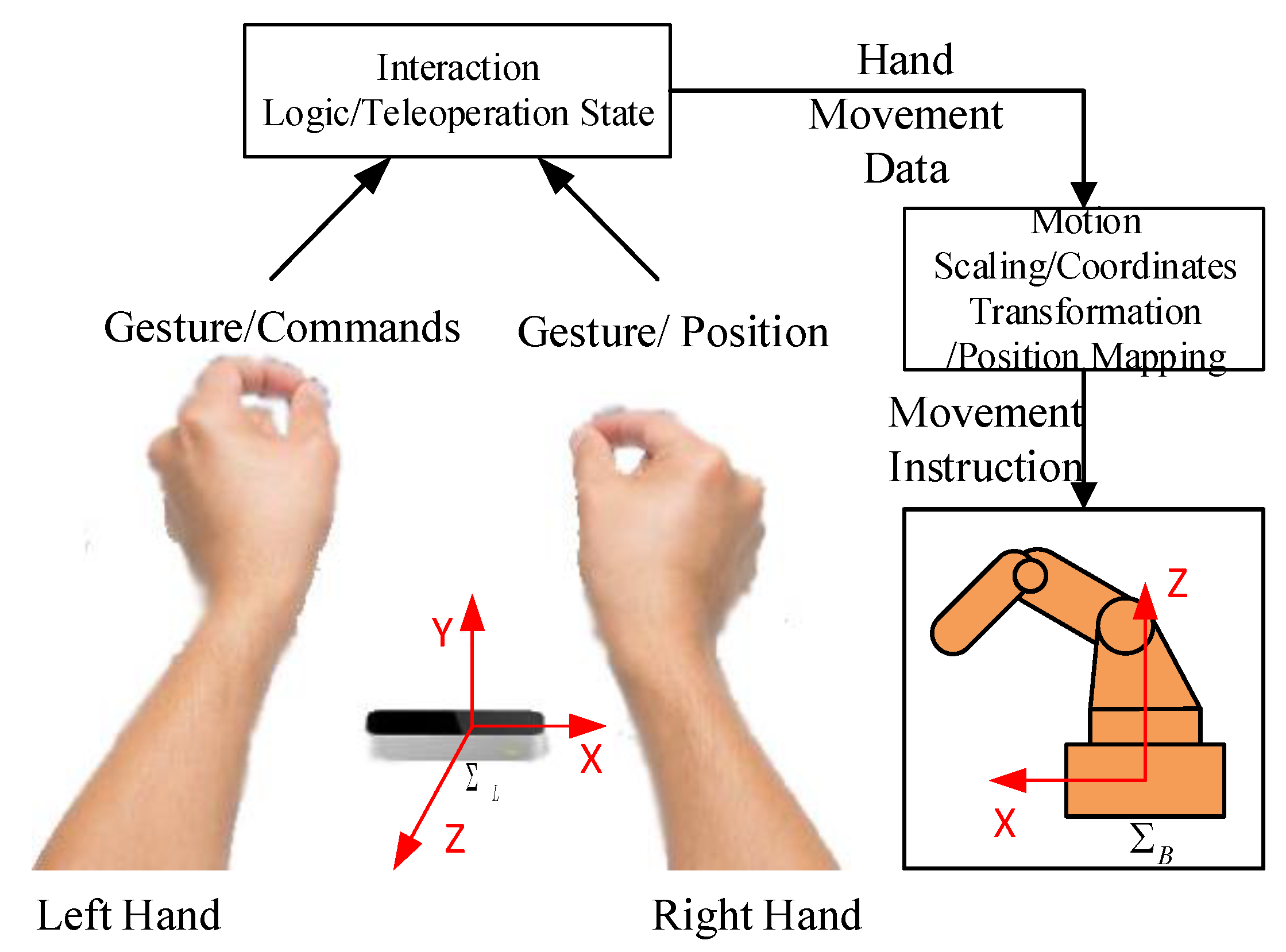
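The GMM-based classification named in this section's title can be illustrated with a minimal sketch of one common scheme: each gesture class has its own Gaussian mixture, fitted offline (typically with the EM algorithm), and a new feature vector is assigned to the class whose mixture gives it the highest log-likelihood. This is an assumption about the general technique, not the authors' implementation. The sketch also assumes diagonal covariances and already-fitted parameters. The names `DiagGMM` and `classify`, the gesture labels, and the 2-D feature values are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Sequence


@dataclass
class DiagGMM:
    """Gaussian mixture with diagonal covariances; parameters assumed already fitted (e.g. by EM)."""
    weights: List[float]          # mixing coefficients pi_k (sum to 1, all > 0)
    means: List[List[float]]      # mean vector mu_k of each component
    variances: List[List[float]]  # diagonal of covariance Sigma_k of each component

    def log_likelihood(self, x: Sequence[float]) -> float:
        """log p(x) = log sum_k pi_k N(x | mu_k, diag(var_k)), computed with log-sum-exp."""
        terms = []
        for w, mu, var in zip(self.weights, self.means, self.variances):
            log_pdf = sum(
                -0.5 * (math.log(2.0 * math.pi * v) + (xi - m) ** 2 / v)
                for xi, m, v in zip(x, mu, var)
            )
            terms.append(math.log(w) + log_pdf)
        peak = max(terms)  # subtract the maximum to avoid underflow in exp()
        return peak + math.log(sum(math.exp(t - peak) for t in terms))


def classify(x: Sequence[float], models: Dict[str, DiagGMM]) -> str:
    """Return the gesture label whose GMM gives x the highest log-likelihood."""
    return max(models, key=lambda label: models[label].log_likelihood(x))


# Hypothetical two-gesture example with 2-D feature vectors.
models = {
    "open_hand": DiagGMM([0.5, 0.5], [[1.0, 0.0], [1.2, 0.1]], [[0.01, 0.01], [0.01, 0.01]]),
    "fist": DiagGMM([1.0], [[0.2, 0.9]], [[0.02, 0.02]]),
}
print(classify([1.1, 0.05], models))  # open_hand
print(classify([0.2, 0.9], models))   # fist
```

Comparing log-likelihoods rather than raw densities keeps the decision numerically stable when feature dimensions grow or variances are small.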

2.2. Hand-Robot-Tool Mapping

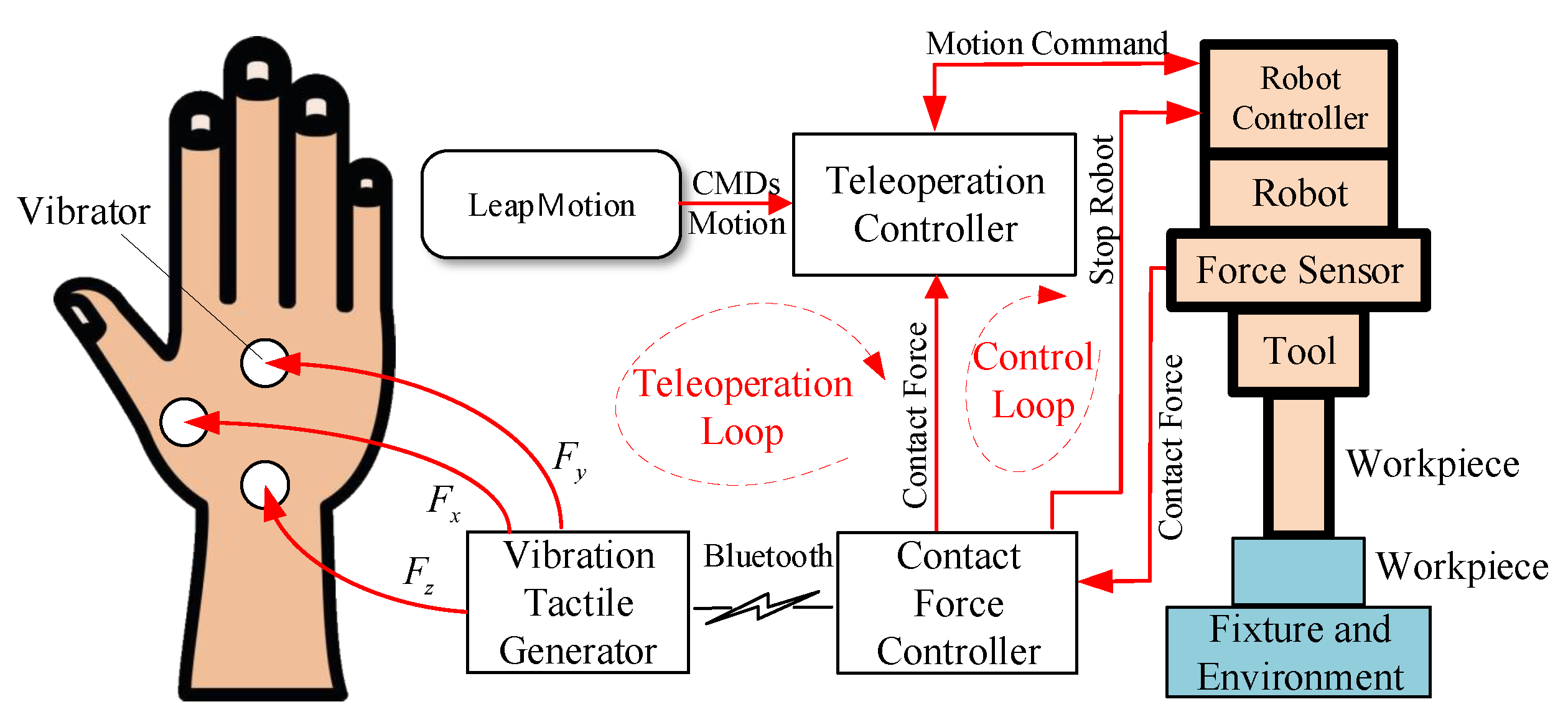

2.3. Force Feedback Loop

3. Gesture-Based Teleoperation System for Robot Motion

3.1. Gesture Language Library and Interaction Logic

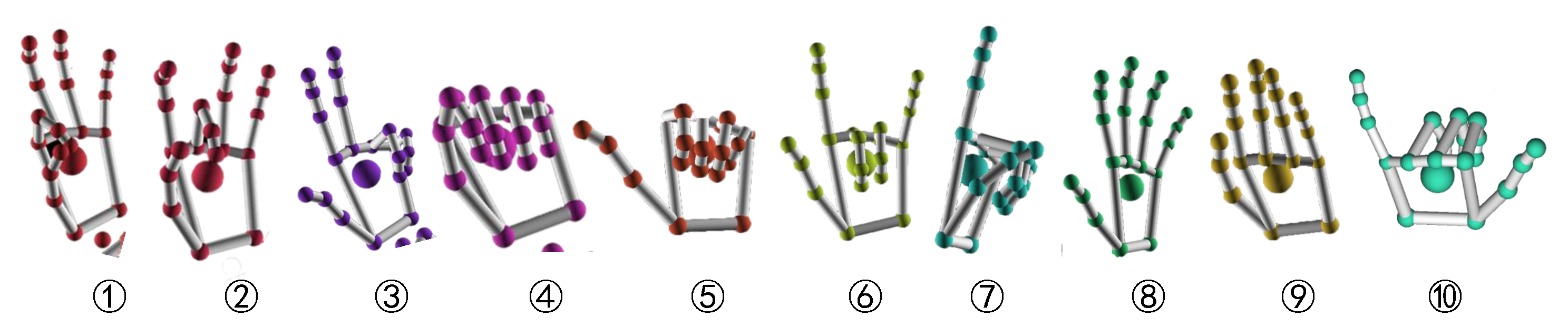

3.1.1. Gesture Library

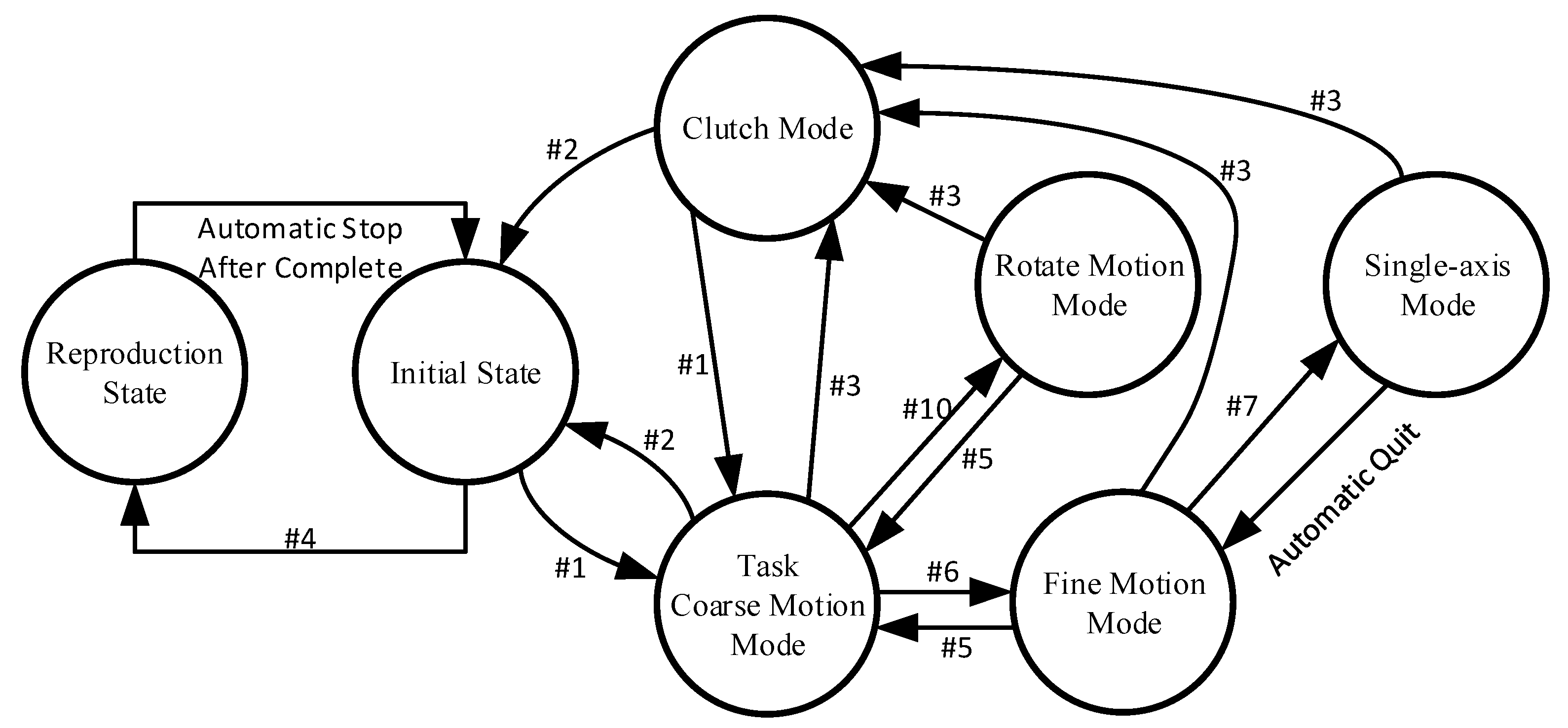

3.1.2. Interaction Logic

3.2. Hand/Tool Motion Scaling and Control

3.2.1. Motion Scaling

3.2.2. Single-Axis Motion
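The two operations named in Sections 3.2.1 and 3.2.2 can be sketched under stated assumptions. Motion scaling multiplies each hand displacement by a mode-dependent factor (a large one for the coarse mode and a small one for the fine mode of the gesture library). Single-axis motion keeps only one component of the displacement; here it is assumed to be the component with the largest magnitude. The factor values, the `SCALE` dictionary, and the function names are hypothetical, and the hand-robot-tool frame transformation of Section 2.2 is omitted by assuming the frames are already aligned.

```python
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]

# Hypothetical scaling factors (hand displacement -> tool displacement);
# the values actually used in the paper are not reproduced here.
SCALE = {"coarse": 2.0, "fine": 0.5}


def scale_motion(hand_delta: Sequence[float], mode: str) -> Vec3:
    """Scale a hand displacement into a tool displacement for the given motion mode.

    Assumes the hand (sensor) frame is already aligned with the tool frame.
    """
    k = SCALE[mode]
    return (k * hand_delta[0], k * hand_delta[1], k * hand_delta[2])


def single_axis(delta: Sequence[float]) -> Vec3:
    """Keep only the component with the largest magnitude and zero the other two."""
    axis = max(range(3), key=lambda i: abs(delta[i]))
    out = [0.0, 0.0, 0.0]
    out[axis] = delta[axis]
    return (out[0], out[1], out[2])


hand = (1.0, -3.0, 0.5)                  # hand displacement in mm since the last frame
fine = scale_motion(hand, "fine")
print(fine, single_axis(fine))           # (0.5, -1.5, 0.25) (0.0, -1.5, 0.0)
```

Applying the single-axis filter after scaling means the same dominant-axis rule holds in both coarse and fine modes.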

3.3. Active Force Control

4. Experiments

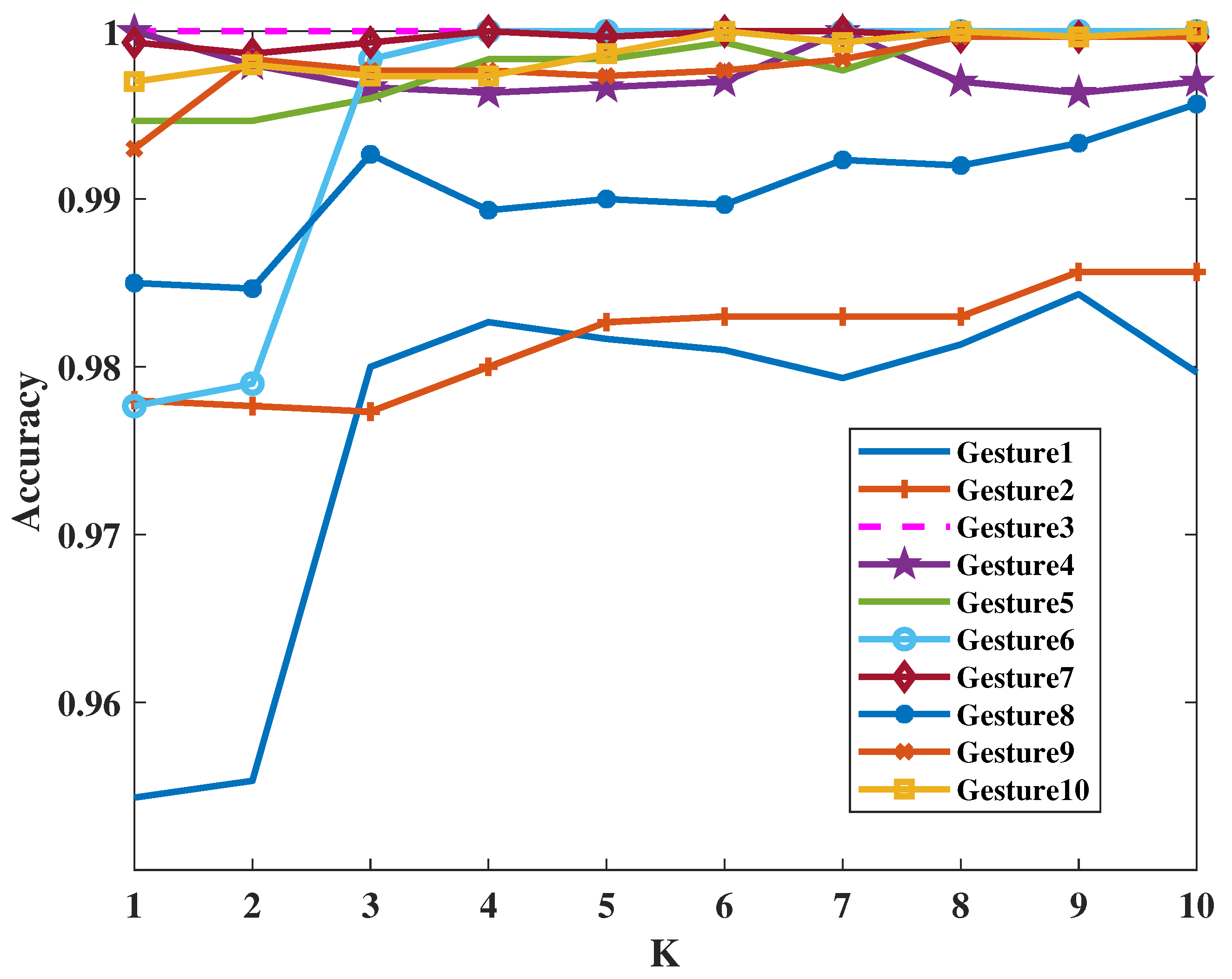

4.1. Gesture Recognition Results

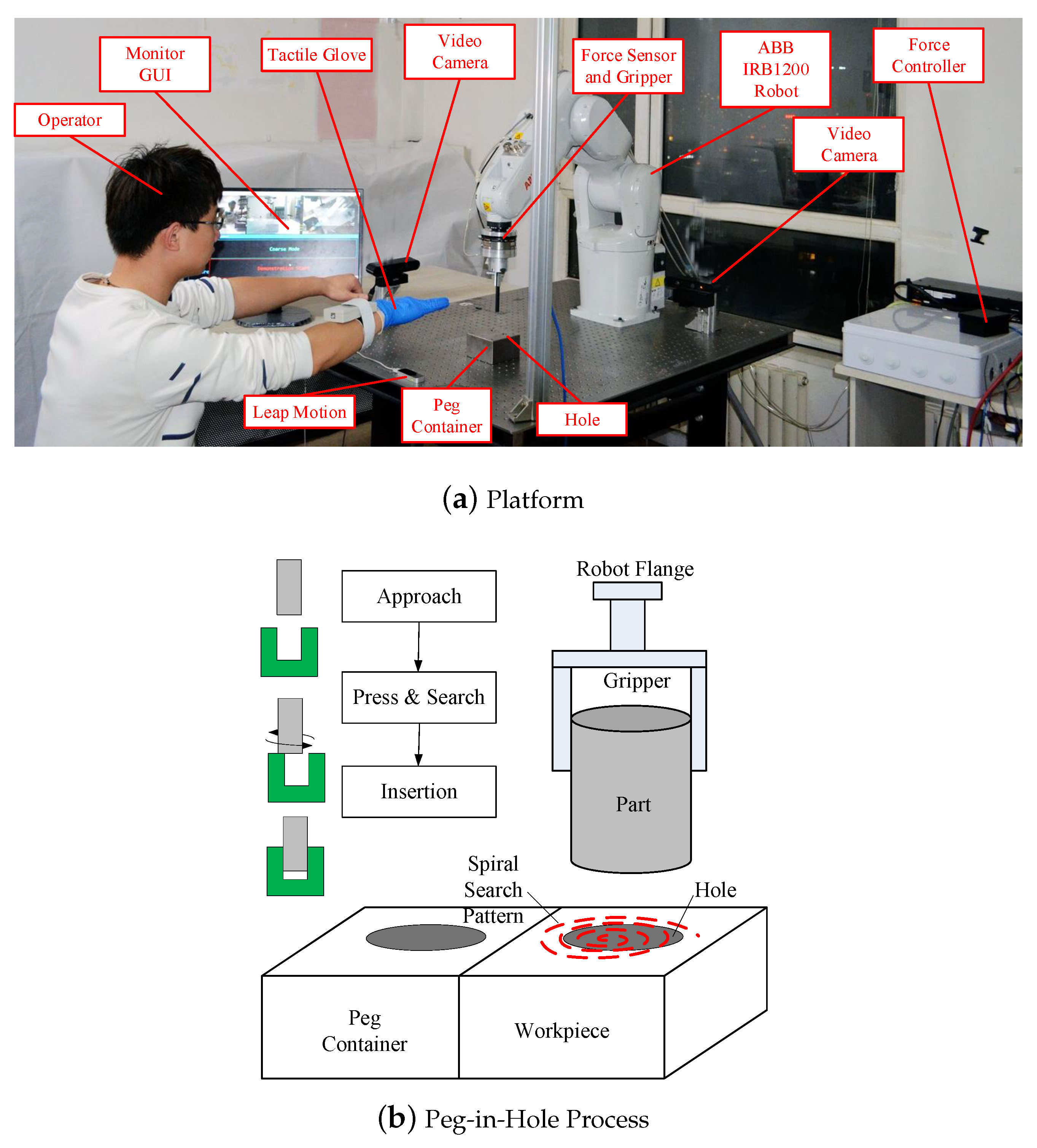

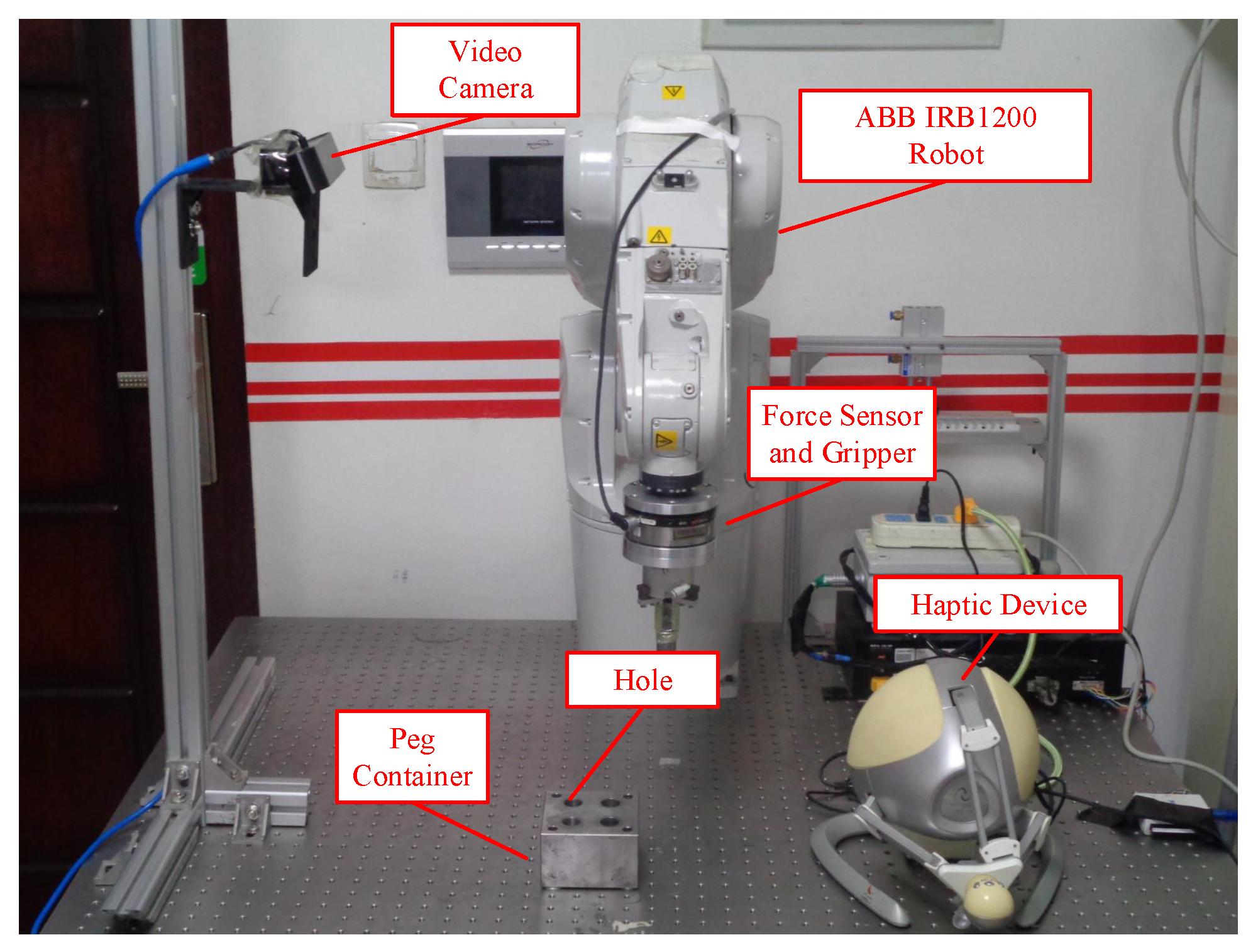

4.2. Case Study of Peg-In-Hole Process

- Move the robot gripper above the peg;

- Move the robot gripper downward until contact;

- Close the pneumatic gripper;

- Move the robot gripper upward until the peg is higher than the container;

- Move the peg above the hole;

- Move the peg downward until contact;

- Move along a spiral path to search for the hole while pressing against the surface; continue until the contact force disappears;

- Move the peg downward;

- Open the robot gripper to release the peg.
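The spiral-search step in the list above can be sketched as follows, assuming an Archimedean spiral r = p·θ/(2π) in the plane of the surface and a scalar vertical contact-force reading. The callback `contact_force`, the parameter names and defaults, and the simulated hole in the usage lines are hypothetical; on a real robot the callback would command the move while holding a downward pressing force and then return the force-sensor reading.

```python
import math
from typing import Callable, Iterator, Optional, Tuple

Point = Tuple[float, float]


def spiral_offsets(pitch: float, step: float, max_radius: float) -> Iterator[Point]:
    """Yield (dx, dy) offsets on the Archimedean spiral r = pitch * theta / (2*pi).

    `pitch` is the radial gap between successive turns and `step` the approximate
    spacing between waypoints along the curve.
    """
    theta = 0.0
    while True:
        r = pitch * theta / (2.0 * math.pi)
        if r > max_radius:
            return
        yield (r * math.cos(theta), r * math.sin(theta))
        theta += step / max(r, step)  # arc length ~ r * dtheta, so dtheta ~ step / r


def spiral_search(
    contact_force: Callable[[Point], float],
    threshold: float,
    pitch: float = 1.0,
    step: float = 0.5,
    max_radius: float = 10.0,
) -> Optional[Point]:
    """Follow the spiral until the contact force drops below `threshold`.

    Returns the offset where the force vanished (peg over the hole), or None if
    the hole is not found within `max_radius`.
    """
    for offset in spiral_offsets(pitch, step, max_radius):
        if contact_force(offset) < threshold:
            return offset
    return None


# Simulated surface: 10 N of contact force everywhere except over a hole
# of radius 0.6 mm centred 2.5 mm from the starting point.
HOLE = (2.0, 1.5)


def simulated_force(p: Point) -> float:
    return 0.0 if math.hypot(p[0] - HOLE[0], p[1] - HOLE[1]) < 0.6 else 10.0


found = spiral_search(simulated_force, threshold=1.0)
print(found)
```

Keeping the pitch below the hole's capture diameter, and the waypoint step small relative to it, helps ensure that the path actually passes over the hole instead of stepping across it.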

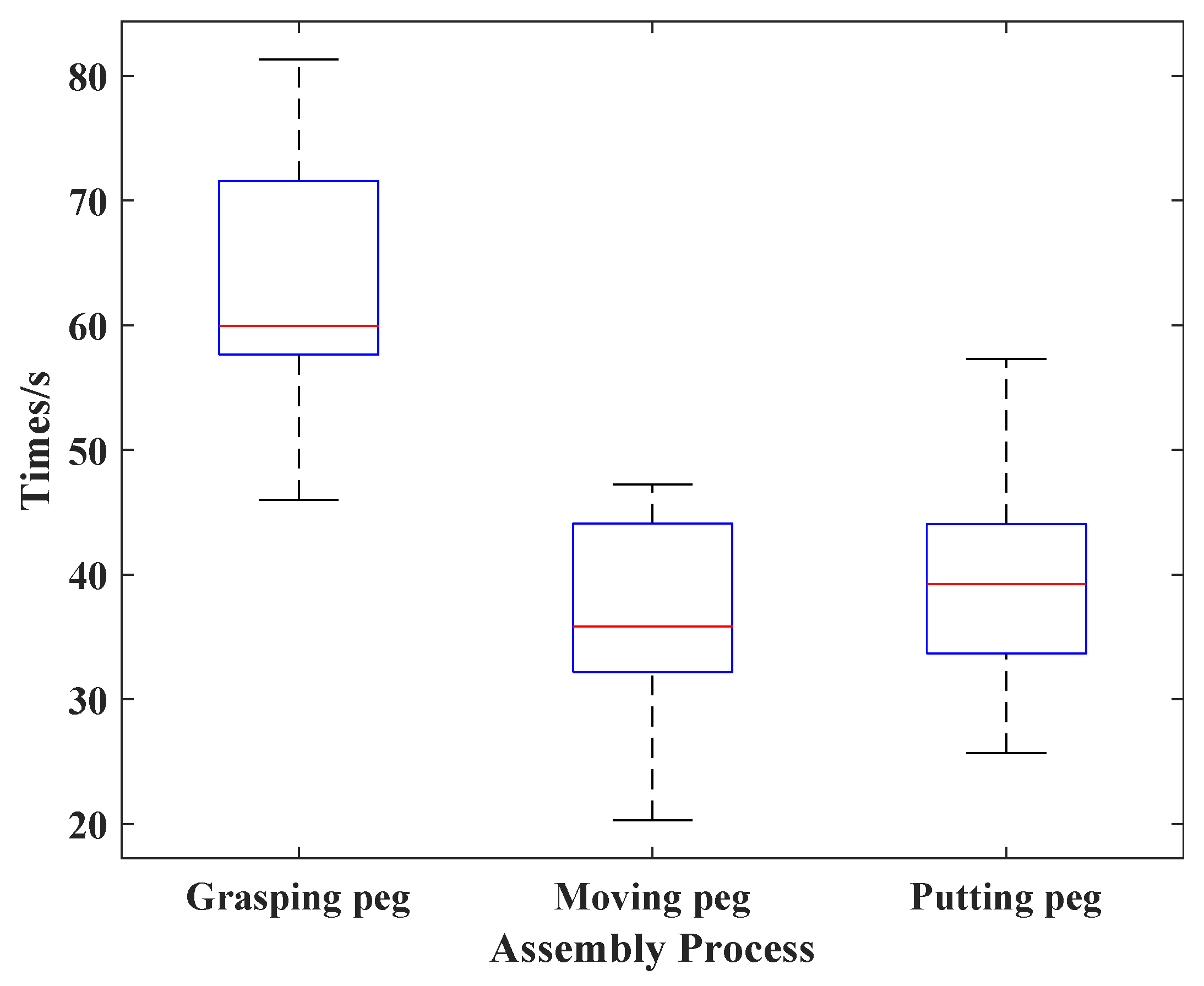

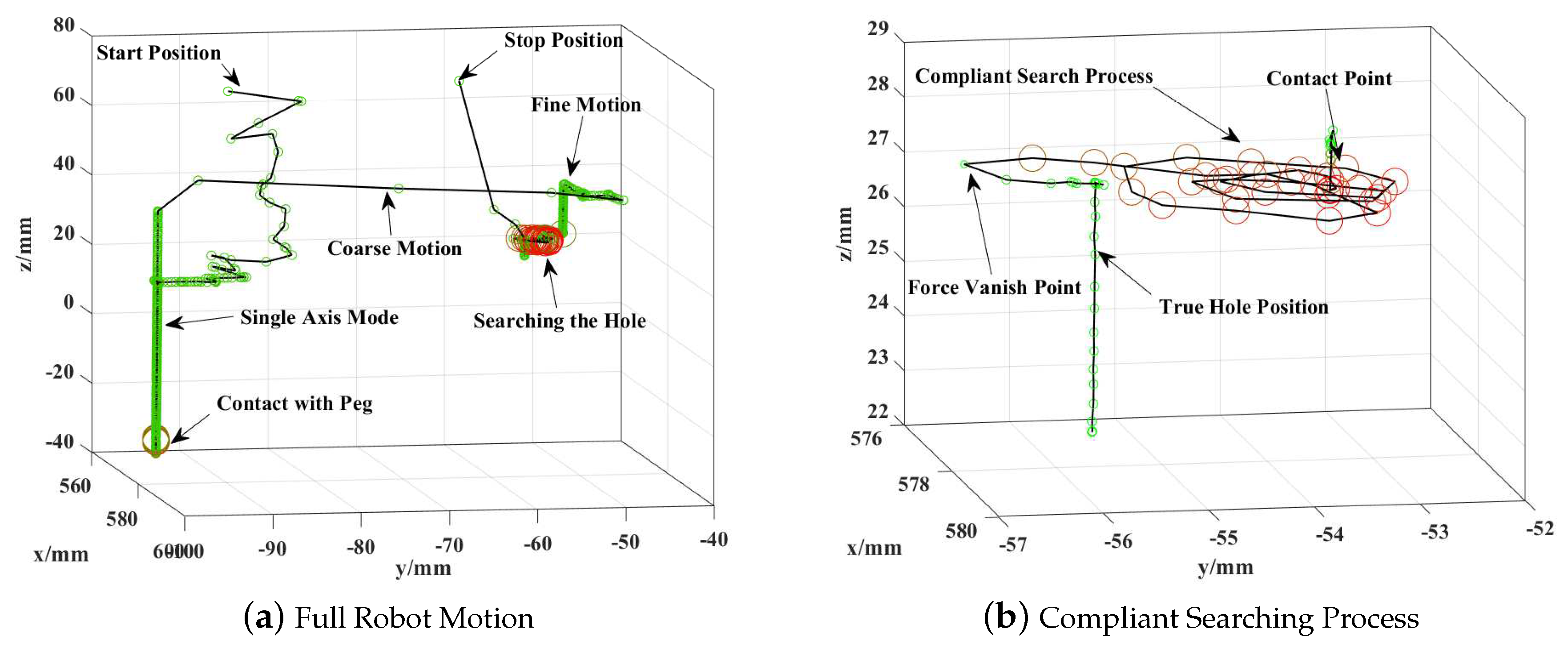

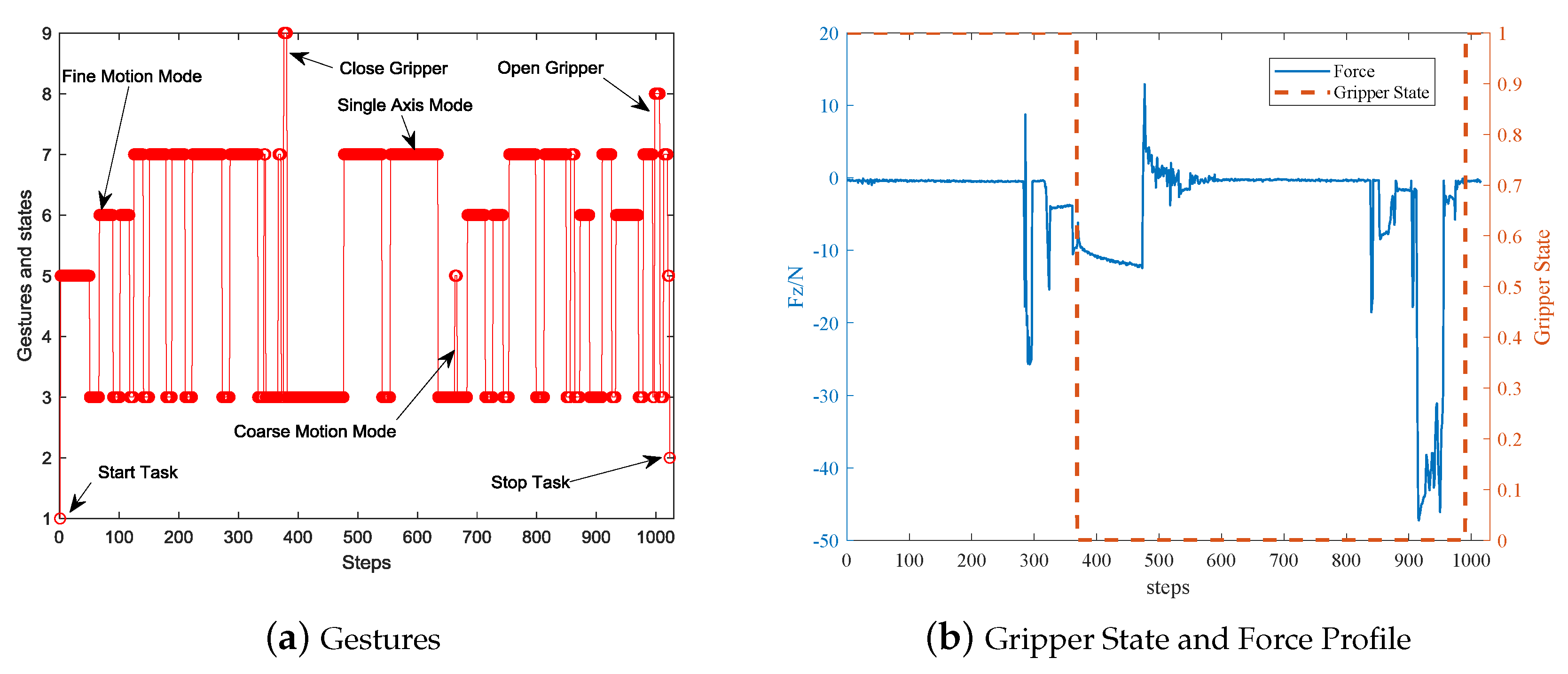

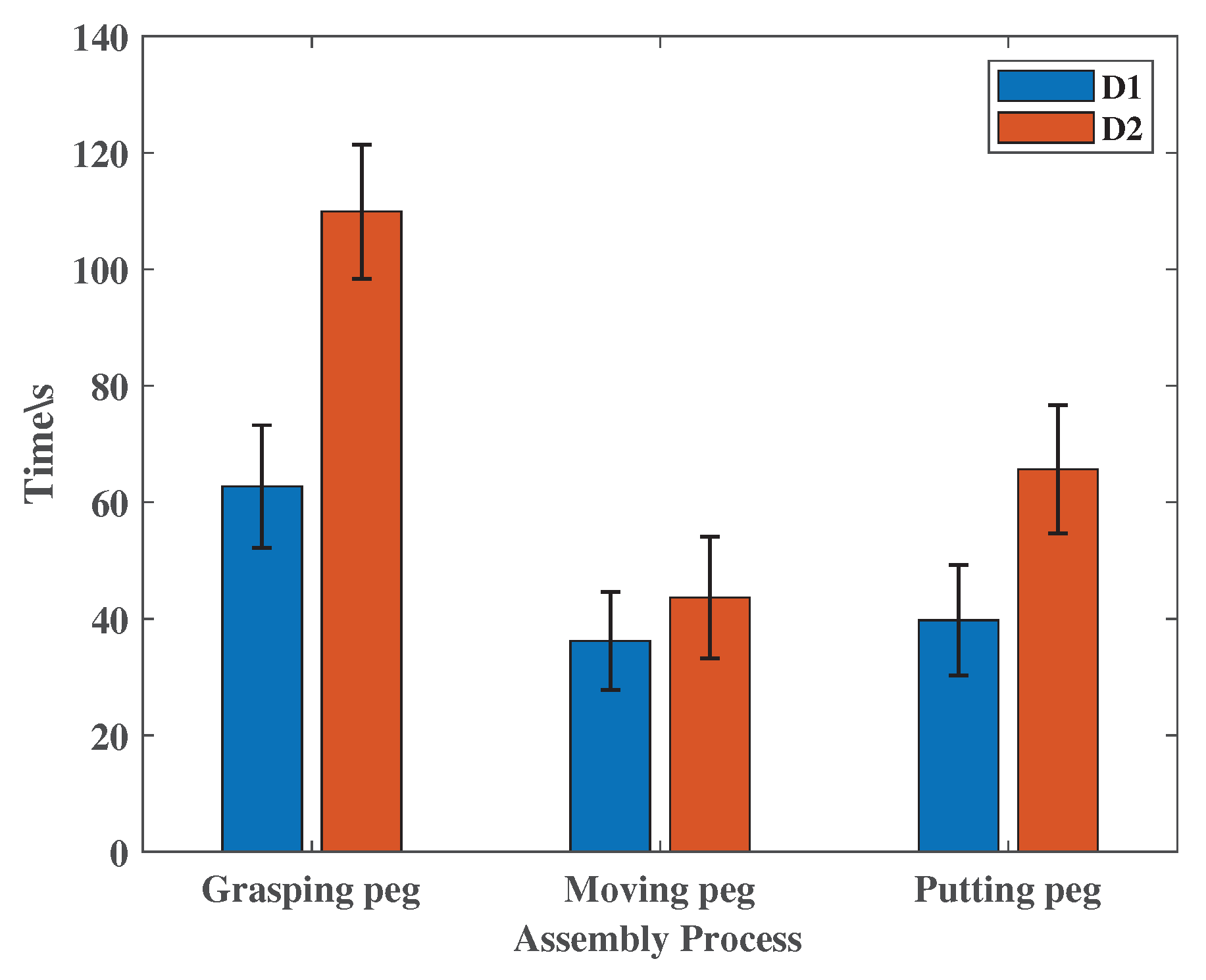

4.2.1. Results

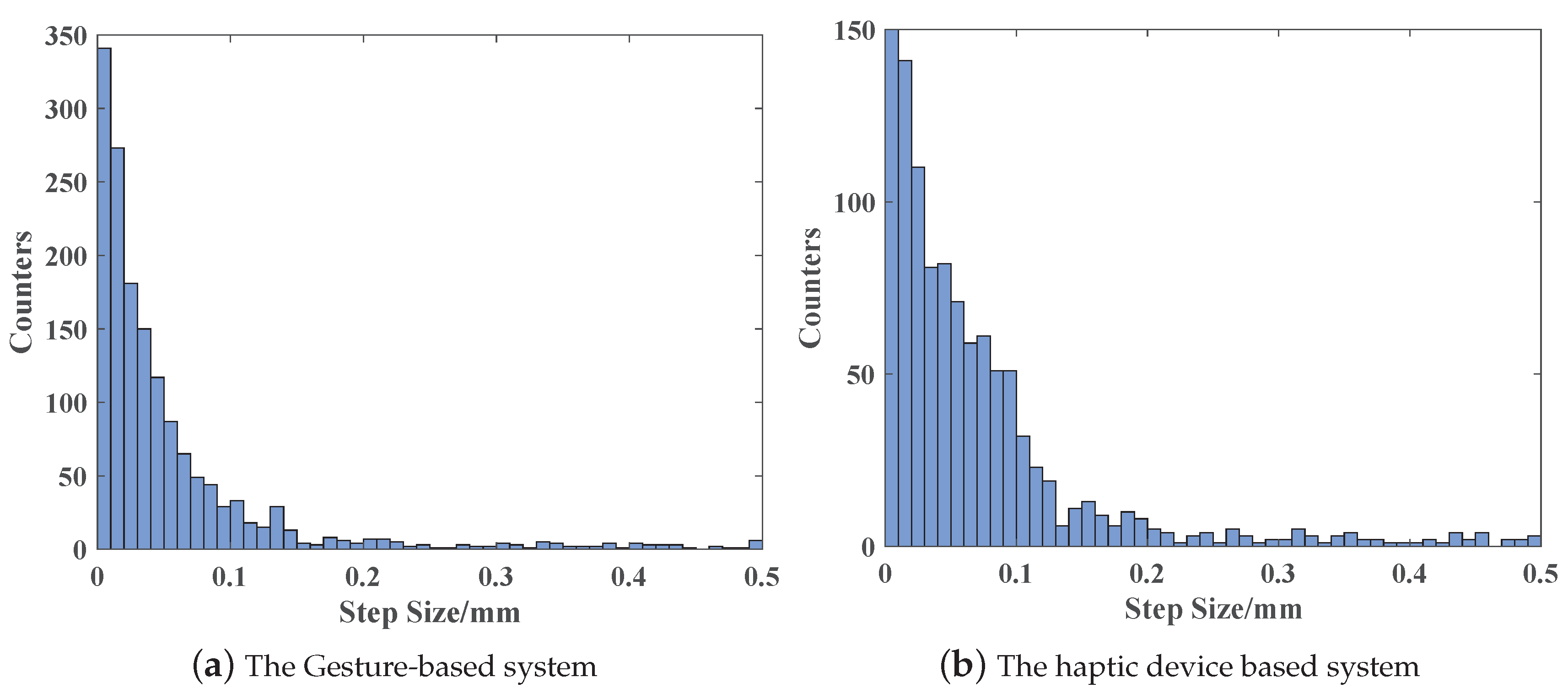

4.2.2. Analysis

5. Conclusions and Future Work

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| # | Name | Meaning |
|---|---|---|
| 1 | Task Start | Start a new task; switch from standby mode to task mode |
| 2 | Task Stop | End the task; switch from task mode to standby mode |
| 3 | Task Clutch | Clutch the task; the robot does not respond to motion commands |
| 4 | Start Reproduction | Autonomously repeat the learned motions |
| 5 | Coarse Motion Mode | Move with a large motion scaling factor |
| 6 | Fine Motion Mode | Move with a small motion scaling factor |
| 7 | Single-Axis Mode | Move only along the dominant direction |
| 8 | Open Gripper | Open the robot gripper or tool |
| 9 | Close Gripper | Close the robot gripper or tool |
| 10 | Rotation Motion Mode | Rotate the robot tool |
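The mode transitions in the gesture table above can be sketched as a small state machine. This is a hypothetical illustration, not the authors' interaction logic. It assumes that only Task Start is honored in standby mode, that Task Clutch toggles the clutch on and off, and that the coarse, fine, single-axis, and rotation gestures only select how subsequent hand motion is interpreted; Start Reproduction is left out. The names `Gesture`, `MOTION_MODES`, and `InteractionState` are invented for the example.

```python
from enum import Enum


class Gesture(Enum):
    """Gesture IDs, numbered as in the gesture table."""
    TASK_START = 1
    TASK_STOP = 2
    TASK_CLUTCH = 3
    START_REPRODUCTION = 4
    COARSE_MOTION = 5
    FINE_MOTION = 6
    SINGLE_AXIS = 7
    OPEN_GRIPPER = 8
    CLOSE_GRIPPER = 9
    ROTATION = 10


# Gestures that only switch how hand motion is interpreted.
MOTION_MODES = {
    Gesture.COARSE_MOTION: "coarse",
    Gesture.FINE_MOTION: "fine",
    Gesture.SINGLE_AXIS: "single_axis",
    Gesture.ROTATION: "rotation",
}


class InteractionState:
    """Hypothetical dispatcher from recognized gestures to interface state."""

    def __init__(self) -> None:
        self.mode = "standby"        # "standby" or "task"
        self.clutched = False        # when True, hand motion is not sent to the robot
        self.motion_mode = "coarse"  # assumed default sub-mode
        self.gripper_closed = False

    def accepts_motion(self) -> bool:
        """Hand motion drives the robot only in task mode and while not clutched."""
        return self.mode == "task" and not self.clutched

    def on_gesture(self, g: Gesture) -> None:
        if self.mode == "standby":
            if g is Gesture.TASK_START:
                self.mode = "task"
                self.clutched = False
            return  # every other gesture is ignored in standby (assumption)
        if g is Gesture.TASK_STOP:
            self.mode = "standby"
        elif g is Gesture.TASK_CLUTCH:
            self.clutched = not self.clutched  # assumed toggle semantics
        elif g in MOTION_MODES:
            self.motion_mode = MOTION_MODES[g]
        elif g is Gesture.OPEN_GRIPPER:
            self.gripper_closed = False
        elif g is Gesture.CLOSE_GRIPPER:
            self.gripper_closed = True
        # START_REPRODUCTION is not modelled: the replay mechanism is not described.


s = InteractionState()
s.on_gesture(Gesture.CLOSE_GRIPPER)  # ignored: still in standby
s.on_gesture(Gesture.TASK_START)
s.on_gesture(Gesture.FINE_MOTION)
s.on_gesture(Gesture.TASK_CLUTCH)
print(s.mode, s.motion_mode, s.accepts_motion(), s.gripper_closed)  # task fine False False
```

Separating "is motion accepted" from "which motion mode is active" lets a clutch pause the robot without losing the operator's chosen coarse or fine setting.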

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Zhang, W.; Cheng, H.; Zhao, L.; Hao, L.; Tao, M.; Xiang, C. A Gesture-Based Teleoperation System for Compliant Robot Motion. Appl. Sci. 2019, 9, 5290. https://doi.org/10.3390/app9245290
