Playing for a Virtual Audience: The Impact of a Social Factor on Gestures, Sounds and Expressive Intents

Abstract

1. Introduction

1.1. Controlling Environmental Variables in Virtual Reality

1.2. Music Performance: From Sound to Gesture

1.2.1. Emotional Intensity and Expressive Intents in Musical Performances

1.2.2. Authenticity in Musical Performances

1.3. Goal of This Study

2. Materials and Methods

2.1. Recording Session

2.2. Rating Experiment

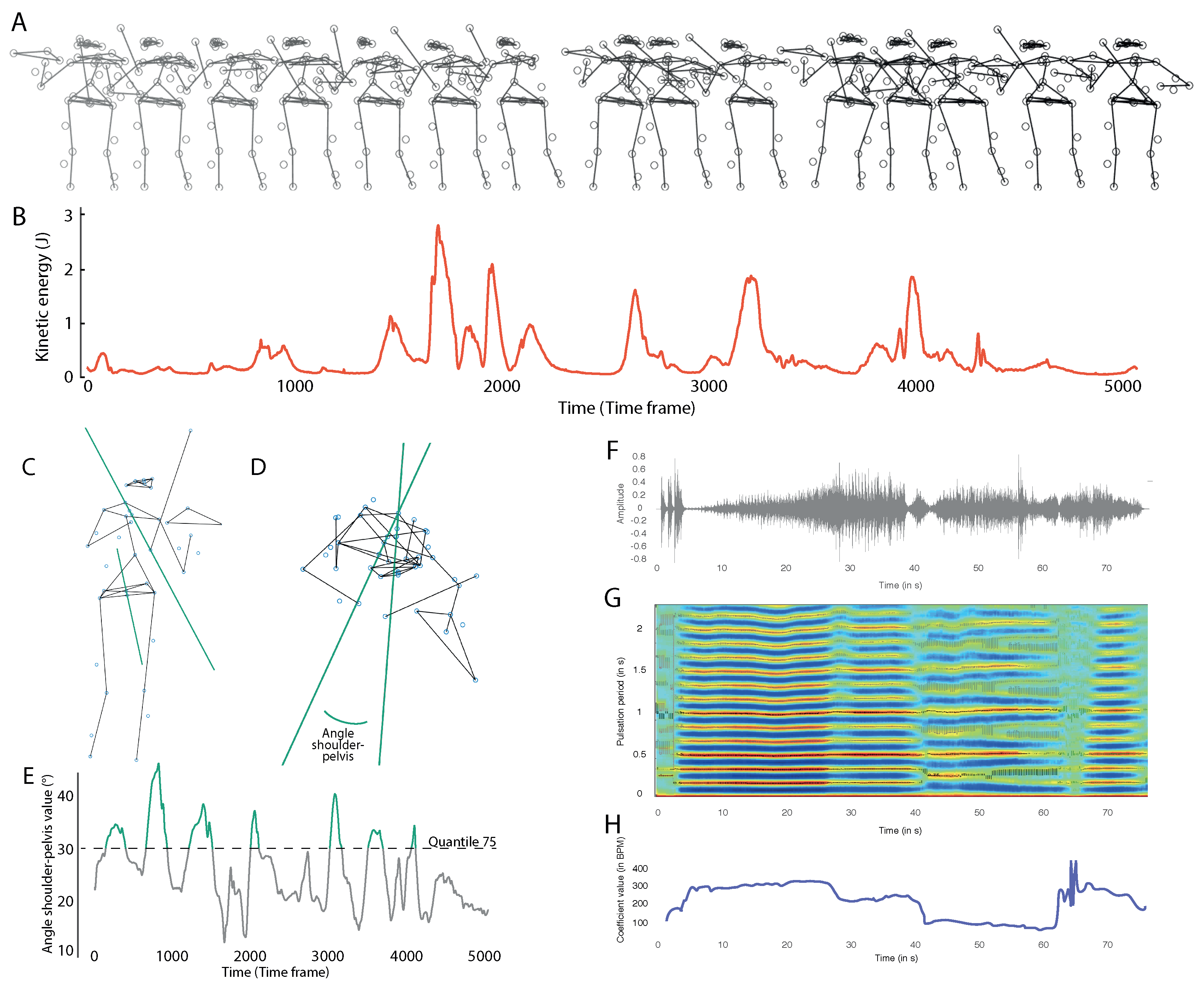

2.3. Multi-Modal Expressive Behavior Analysis

3. Results

3.1. Proximal Performance Data Analysis

3.2. Distal Participant Data Analysis

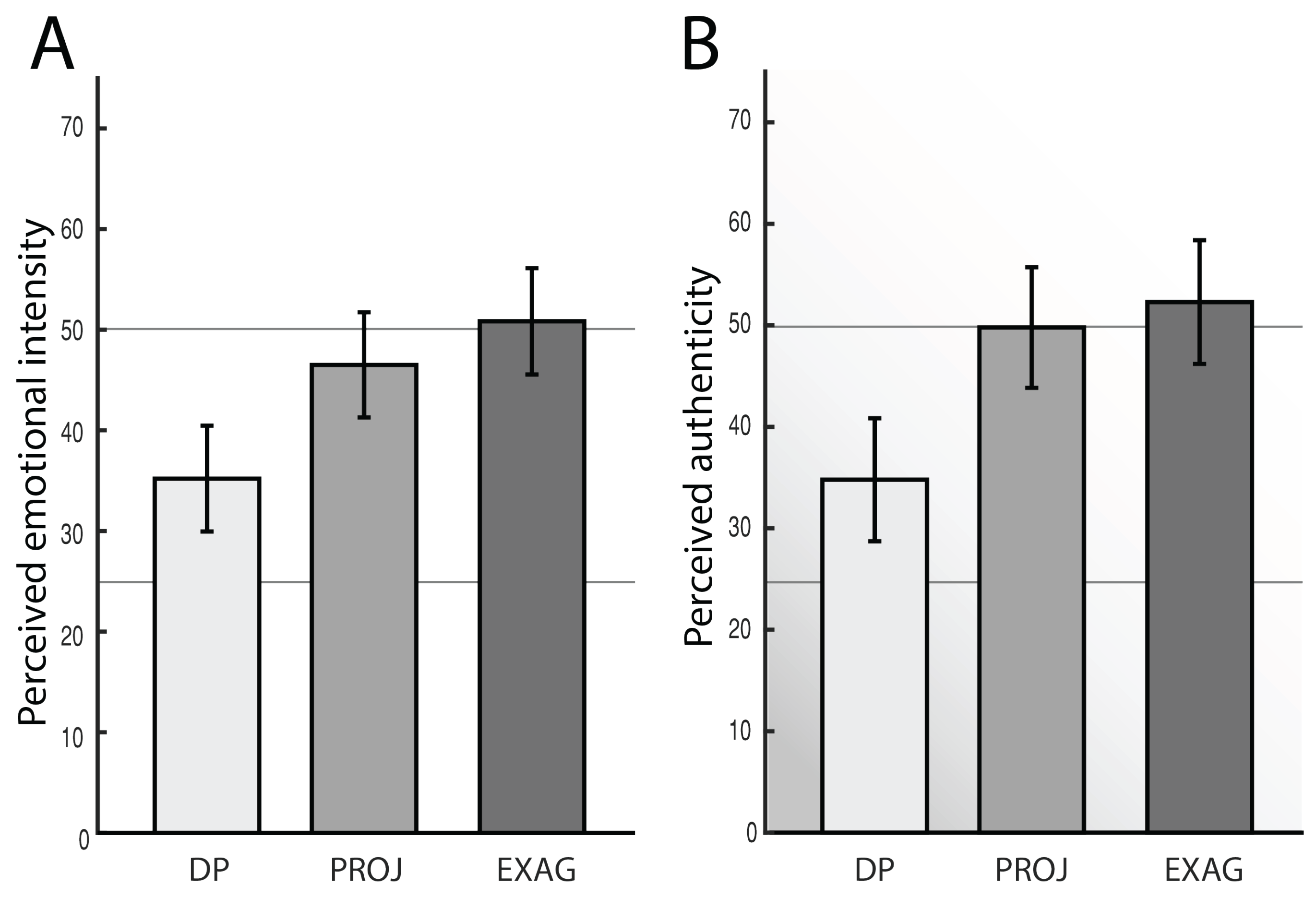

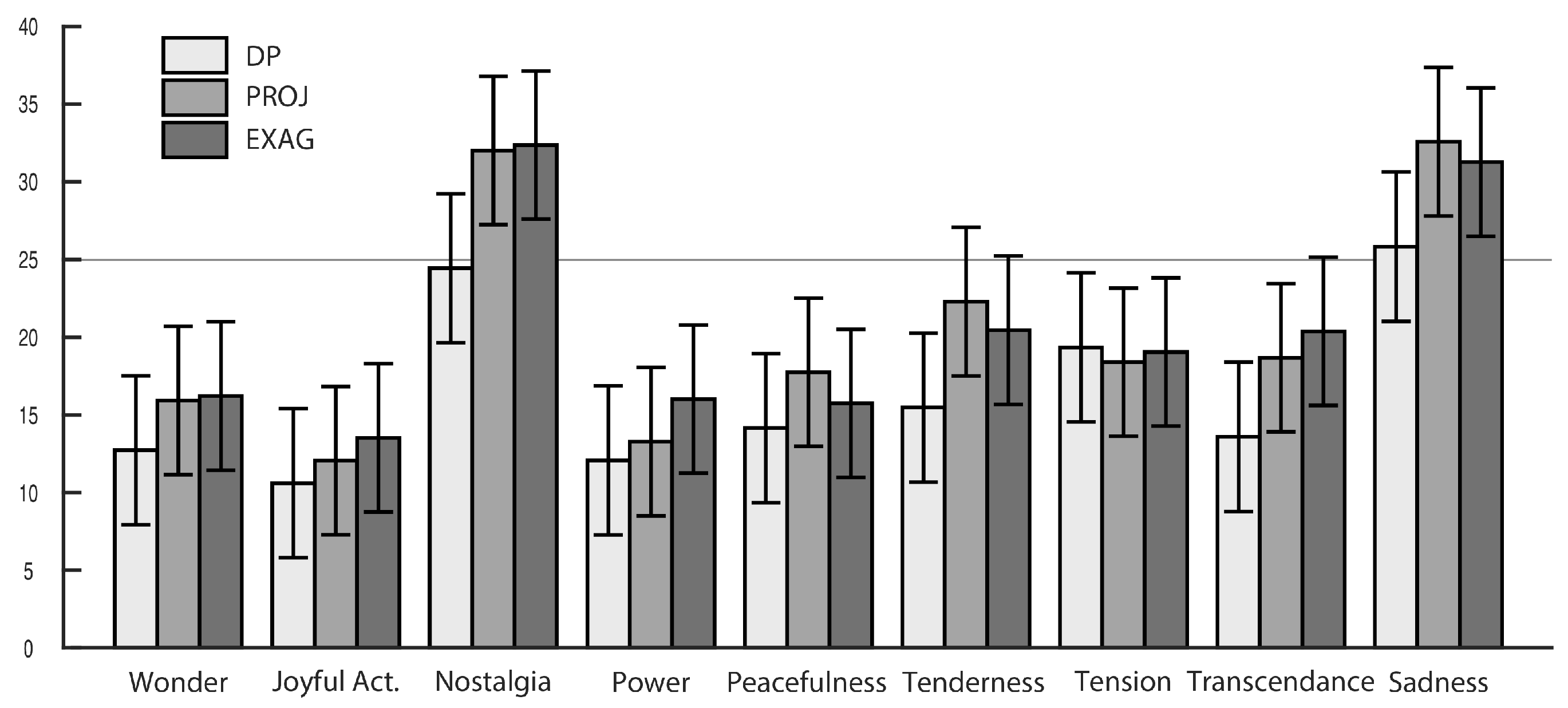

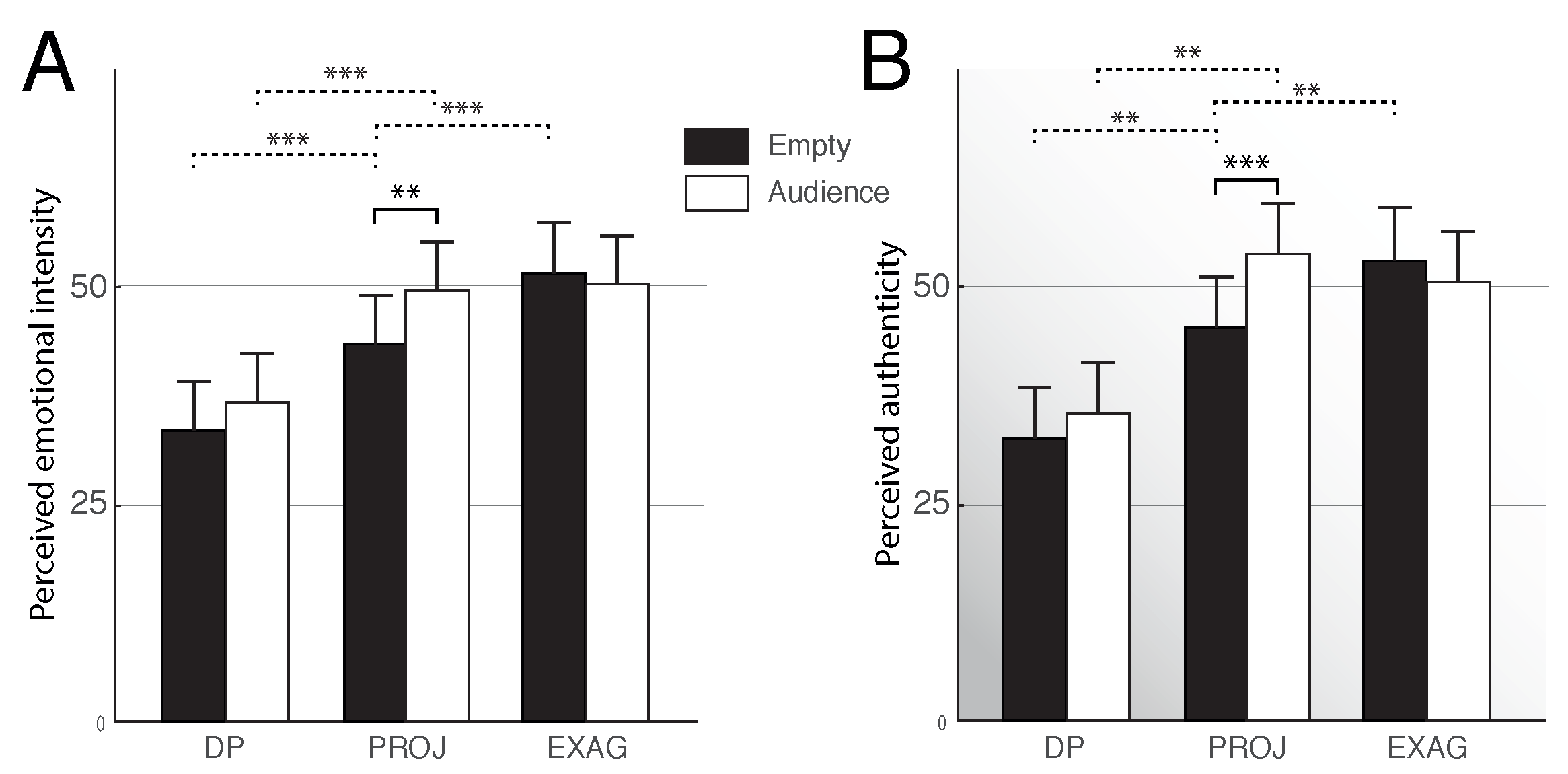

3.2.1. Interaction Effect of the Expressive Intents and the Presence of an Audience on Perceived Authenticity and Emotional Intensity

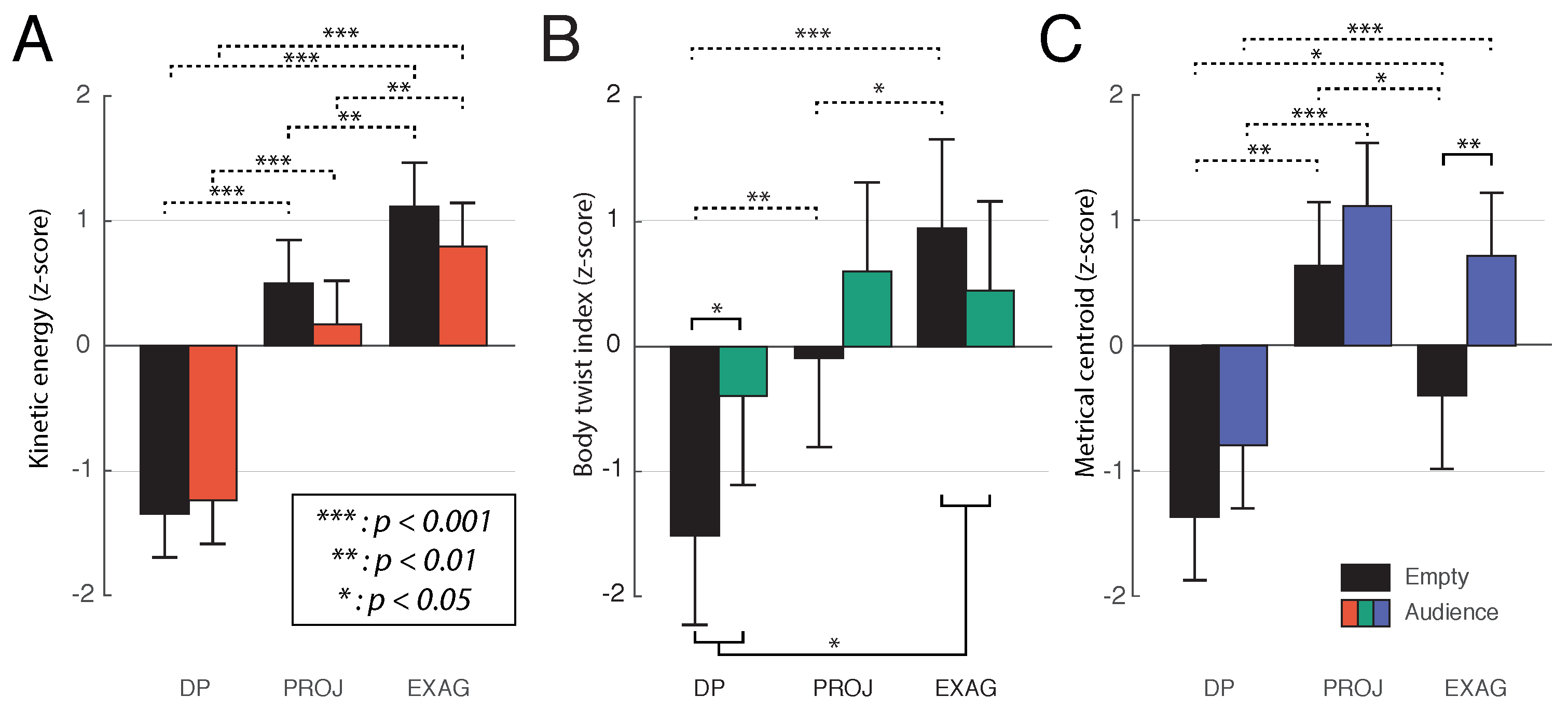

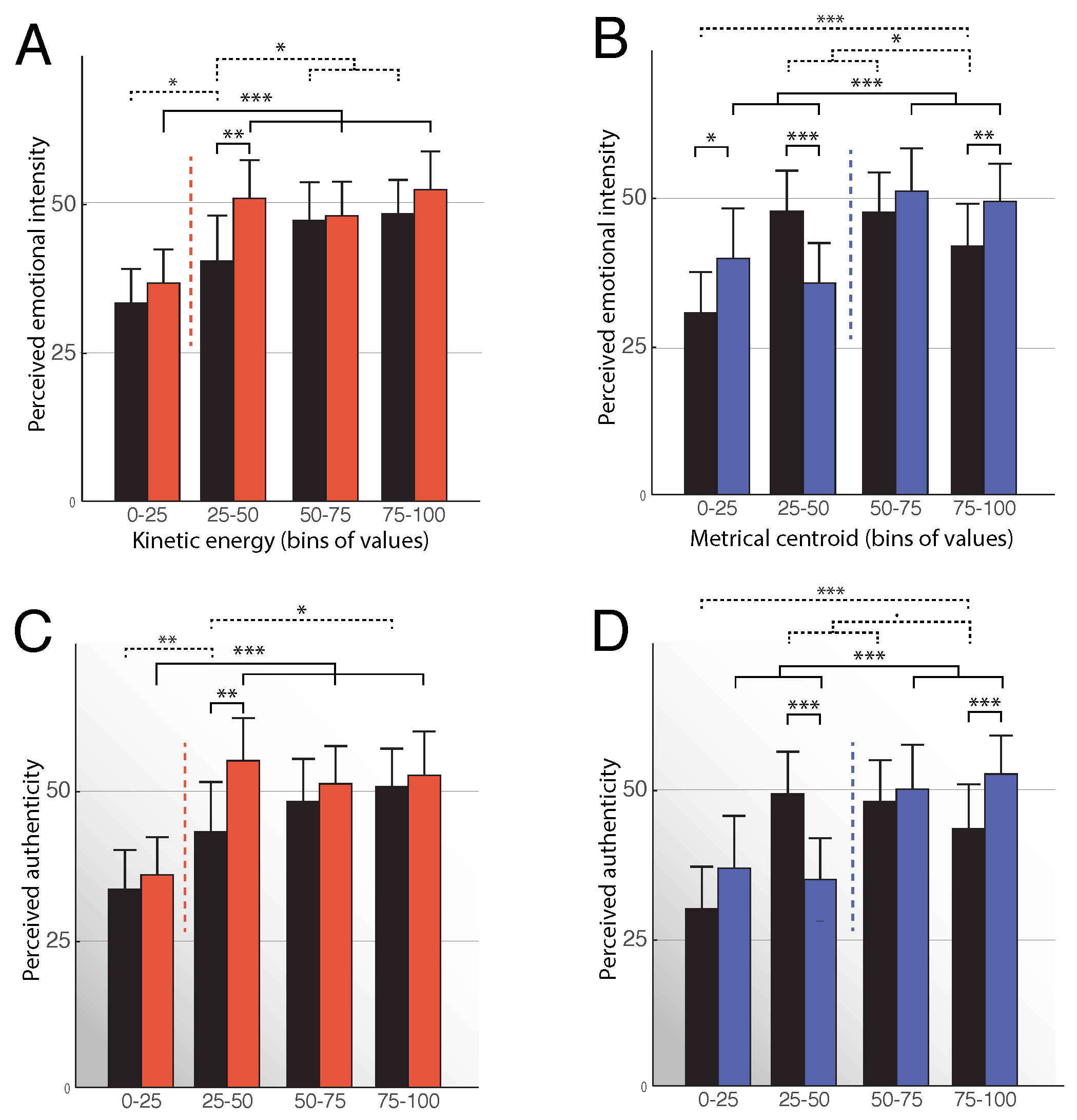

3.2.2. Effect of the Motion and Acoustic Features and the Presence of an Audience

4. Discussion

4.1. Absence of an Audience

4.2. Impact of the Presence of a Virtual Audience

4.3. Interactive Virtual Environments as a Tool to Study Musical Performances

5. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

Appendix A

Appendix B

Appendix C

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Schaerlaeken, S.; Grandjean, D.; Glowinski, D. Playing for a Virtual Audience: The Impact of a Social Factor on Gestures, Sounds and Expressive Intents. Appl. Sci. 2017, 7, 1321. https://doi.org/10.3390/app7121321
