A Survey on Formal Verification and Validation Techniques for Internet of Things

Abstract

1. Introduction

- Overview of FV&V techniques for IoT systems;

- Discussion of challenges and open issues in FV&V for IoT systems;

- Examination of formal methods and techniques for IoT systems;

- Exploration of the use of AI in software testing for IoT systems;

- Identification of areas for future research and development in FV&V for IoT systems.

2. Related Work

3. Preliminaries Related to IoT

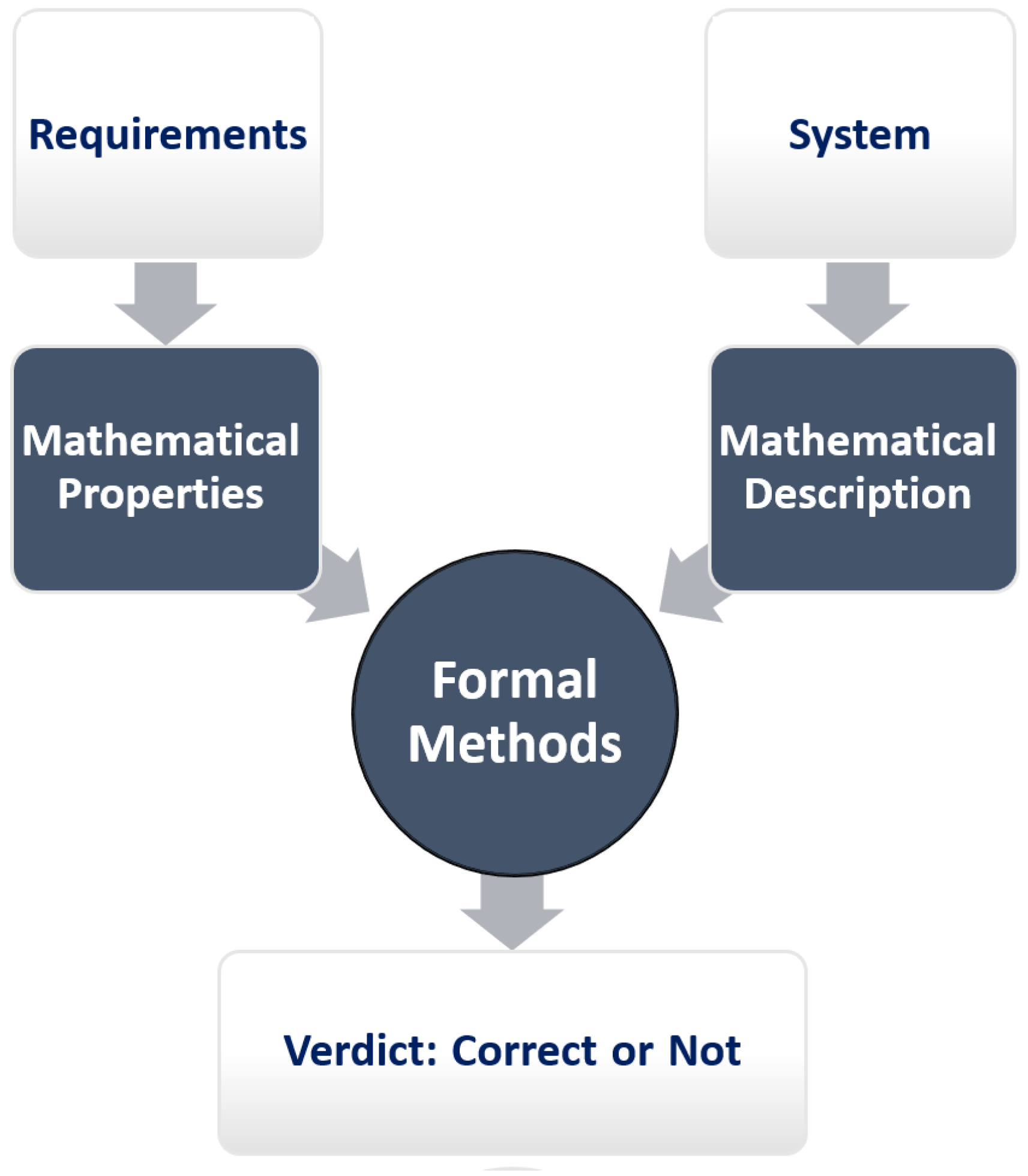

4. Formal Methods

- Abstract interpretation [53]: Abstract interpretation is a formal method used to analyze and verify the behavior of computer programs. It is a technique that involves approximating the behavior of a program by abstracting away some of its details. The goal of abstract interpretation is to prove that a program satisfies certain properties, such as safety, liveness, or termination. Abstract interpretation works by defining a set of abstract values that represent the possible states of a program. These abstract values are defined in such a way that they over-approximate the set of possible concrete values. This allows abstract interpretation to reason about the behavior of a program without actually executing it. One of the key benefits of abstract interpretation is that it can be used to analyze programs that are too complex to be analyzed using other methods. This is because abstract interpretation can reason about the behavior of a program at a higher level of abstraction, which makes it possible to handle a much larger state space.

- Semantic static analysis [54]: Semantic static analysis is a formal method used to analyze the behavior of computer programs by examining their source code. The goal of semantic static analysis is to detect errors and potential problems in a program before it is executed. Semantic static analysis works by analyzing the syntax and structure of a program to infer its meaning. This is done by constructing a mathematical model of the program’s behavior, which can then be used to reason about its properties. One of the advantages of semantic static analysis is that it can be applied early in the development process, which can save time and resources. By detecting errors before a program is executed, semantic static analysis can help to ensure that the final product is correct and reliable. However, semantic static analysis can be challenging because it relies on the ability to reason about complex mathematical models of program behavior. This requires specialized knowledge and expertise in formal methods and mathematical analysis. Additionally, the accuracy of semantic static analysis depends on the quality of the model used, which can be difficult to construct for complex programs [55,56].

- Model checking [57]: Model checking is a formal method used to verify the correctness of a system by exhaustively exploring its possible behaviors. It works by constructing a model of the system and specifying the desired properties that the system should satisfy. The model checker then systematically explores all possible states of the system to determine whether these properties hold for all possible behaviors. Model checking is particularly useful for verifying complex systems, such as concurrent and distributed systems, where traditional methods may not be sufficient. It can also be used to verify hardware designs and protocols. One of the main advantages of model checking is that it can provide complete coverage of all possible behaviors, making it a powerful tool for ensuring the correctness of critical systems.

- Proof Assistants [58]: Proof assistants are software tools that help users construct and verify mathematical proofs. They provide a formal language for expressing mathematical statements and a set of rules for manipulating these statements to construct proofs. Proof assistants are useful for formalizing mathematical theories and verifying their correctness. They can also be used to verify the correctness of software and hardware designs. One of the main advantages of proof assistants is that they provide a high level of assurance that the proof is correct, since the proof is constructed using formal rules and the software checks the proof for correctness.

- Deductive verification [59]: Deductive verification is a formal method used to verify the correctness of software by constructing a formal proof that the software satisfies its specifications. It works by starting with the specifications of the software and then systematically constructing a proof that the implementation of the software satisfies these specifications. Deductive verification is particularly useful for ensuring the correctness of safety-critical systems, such as those used in aviation and medical devices. One of the main advantages of deductive verification is that it can provide a high level of assurance that the software is correct, since the proof is constructed using formal rules and the proof can be checked by a computer.

- Design by refinement [60,61]: Design by refinement is a formal method used to develop correct software by iteratively refining an abstract specification of the software until a detailed implementation is obtained. It works by starting with a high-level specification of the software and then refining this specification step-by-step until a detailed implementation is obtained. Design by refinement can help to ensure that the software meets its specifications and is free of errors. It can also help to ensure that the software is maintainable and can be easily modified as requirements change. One of the main advantages of design by refinement is that it provides a systematic approach to software development, which can help ensure that the final product is correct and meets its specifications.

- Model-based testing (MBT) [62,63]: MBT is a formal method used to test software by generating test cases from a model of the software. It works by constructing a model of the software and then using this model to automatically generate test cases that exercise different parts of the software. MBT can help to ensure that the software meets its specifications and is free of errors. It can also help to reduce the time and effort needed to test the software. One of the main advantages of MBT is that it provides a systematic approach to testing that can help to ensure that the final product is correct and meets its specifications.

- Complete methods [64]: Complete methods are formal methods that are guaranteed to provide a definitive answer to a given problem. This means that, if a problem has a solution, a complete method will find it. For example, model checking is a complete method because it can systematically explore all possible behaviors of a system to determine whether a given property holds or not. Different types of complete FV exist, namely: SMT-based methods [65] and MILP-based methods.

- Partial methods [66]: Partial methods are formal methods that may not provide a definitive answer to a given problem. This means that a partial method may not be able to determine whether a problem has a solution or not. For example, abstract interpretation is a partial method because it can provide an over-approximation of the behavior of a program, but it may not be able to determine whether the program satisfies a given property or not.

- Asymptotically complete methods: Asymptotically complete methods are formal methods that are not guaranteed to provide a definitive answer to a problem, but as the size of the problem grows, the probability of finding a solution approaches 1. This means that, for very large problems, an asymptotically complete method will almost always find a solution. For example, heuristic search is an asymptotically complete method because, as the size of the search space grows, the probability of finding a solution approaches 1, even though there is no guarantee that a solution will be found for any given problem instance.

- Abstraction: Formal approaches allow abstraction, which means that they can provide a higher-level view of the software system. This can help to manage complexity by hiding irrelevant details and focusing on the essential characteristics of the system. Abstraction also makes it easier to reason about the behavior of the system and to identify potential errors and defects.

- Rigorous analysis: Formal methods provide a rigorous and systematic approach to analyzing software systems. This means that they use well-defined mathematical models and techniques to analyze the software, which can help to ensure that the analysis is accurate and complete. Rigorous analysis can identify defects and errors that may be missed by other methods, such as testing or informal reviews.

- Early defect discovery: Formal methods can be applied early in the software-development process, which can help to identify defects and errors before they become more difficult and expensive to fix. Early defect discovery can also help to improve the overall quality of the software and reduce the risk of defects, which could lead to system failures or safety hazards.

- Correctness guarantees: Formal methods can provide correctness guarantees, which means that they can prove that the software meets its specifications and behaves correctly. This can provide a high level of assurance that the software is correct and reliable. Correctness guarantees are particularly important for safety-critical systems, where errors or defects could have serious consequences.

- Reliability: Formal methods can improve the reliability of software systems by reducing the risk of errors and defects. This can help to ensure that the software behaves as expected and that it is robust and resilient to unexpected inputs or conditions. Reliability is particularly important for systems that need to operate continuously or that cannot be easily repaired or replaced.

- Efficient test scenarios: Formal methods can help to identify the most-efficient test scenarios for a software system. This can reduce the time and effort needed to test the software, while still ensuring that the software meets its specifications and behaves correctly. Efficient test scenarios can also help to improve the overall quality of the software and reduce the risk of defects that could lead to system failures or safety hazards [62,63].

- Maintainability: Formal methods can improve the maintainability of software systems by providing a clear and precise specification of the system’s behavior. This can make it easier to modify or refactor the software without introducing errors or defects. Formal methods can also help to ensure that modifications do not violate the system’s specifications or requirements.

- Reusability: Formal methods can improve the reusability of software components by providing a clear and precise specification of their behavior. This means that software components can be reused in different contexts without introducing errors or defects. Formal methods can also help to ensure that reused components behave correctly in all contexts.

- Standardization: Formal methods can provide a standardized approach to software development and verification. This means that software systems can be developed and verified using a common set of techniques and tools, which can improve interoperability and reduce the risk of errors or compatibility issues.

- Confidence: Formal methods can provide developers and stakeholders with confidence in the correctness and reliability of the software system. This can increase trust in the software system and reduce the risk of negative consequences, such as system failures or safety hazards.
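The model checking entry above describes exhaustive exploration of a model's states against a desired property. The following is a minimal sketch of that idea as an explicit-state invariant check. The sensor model, its phase names, and `CAPACITY` are hypothetical and chosen only for illustration; they are not taken from any tool or from the case study.

```python
from collections import deque

CAPACITY = 2  # hypothetical buffer size between a sensor and a collector


def successors(state, guarded=True):
    """Return the states reachable in one step from (phase, buffered)."""
    phase, buffered = state
    result = []
    if phase == "idle":
        result.append(("measuring", buffered))
    elif phase == "measuring":
        result.append(("sending", buffered))
    elif phase == "sending":
        # The guarded model only sends when the buffer has room.
        if not guarded or buffered < CAPACITY:
            result.append(("idle", buffered + 1))
    if buffered > 0:
        result.append((phase, buffered - 1))  # the collector consumes one item
    return result


def check_invariant(initial, step, invariant):
    """Breadth-first search over reachable states.

    Returns (True, None) if the invariant holds everywhere, otherwise
    (False, trace) where trace is a shortest path to a violating state.
    """
    parent = {initial: None}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        if not invariant(state):
            trace = []
            while state is not None:
                trace.append(state)
                state = parent[state]
            return False, trace[::-1]
        for nxt in step(state):
            if nxt not in parent:
                parent[nxt] = state
                queue.append(nxt)
    return True, None


def within_capacity(state):
    return state[1] <= CAPACITY


start = ("idle", 0)
ok, trace = check_invariant(start, lambda s: successors(s, True), within_capacity)
bad_ok, bad_trace = check_invariant(start, lambda s: successors(s, False), within_capacity)
```

With the guard in place, the search visits every reachable state and reports that the invariant holds. Without the guard, it returns a counterexample trace ending in a state whose buffer exceeds `CAPACITY`.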

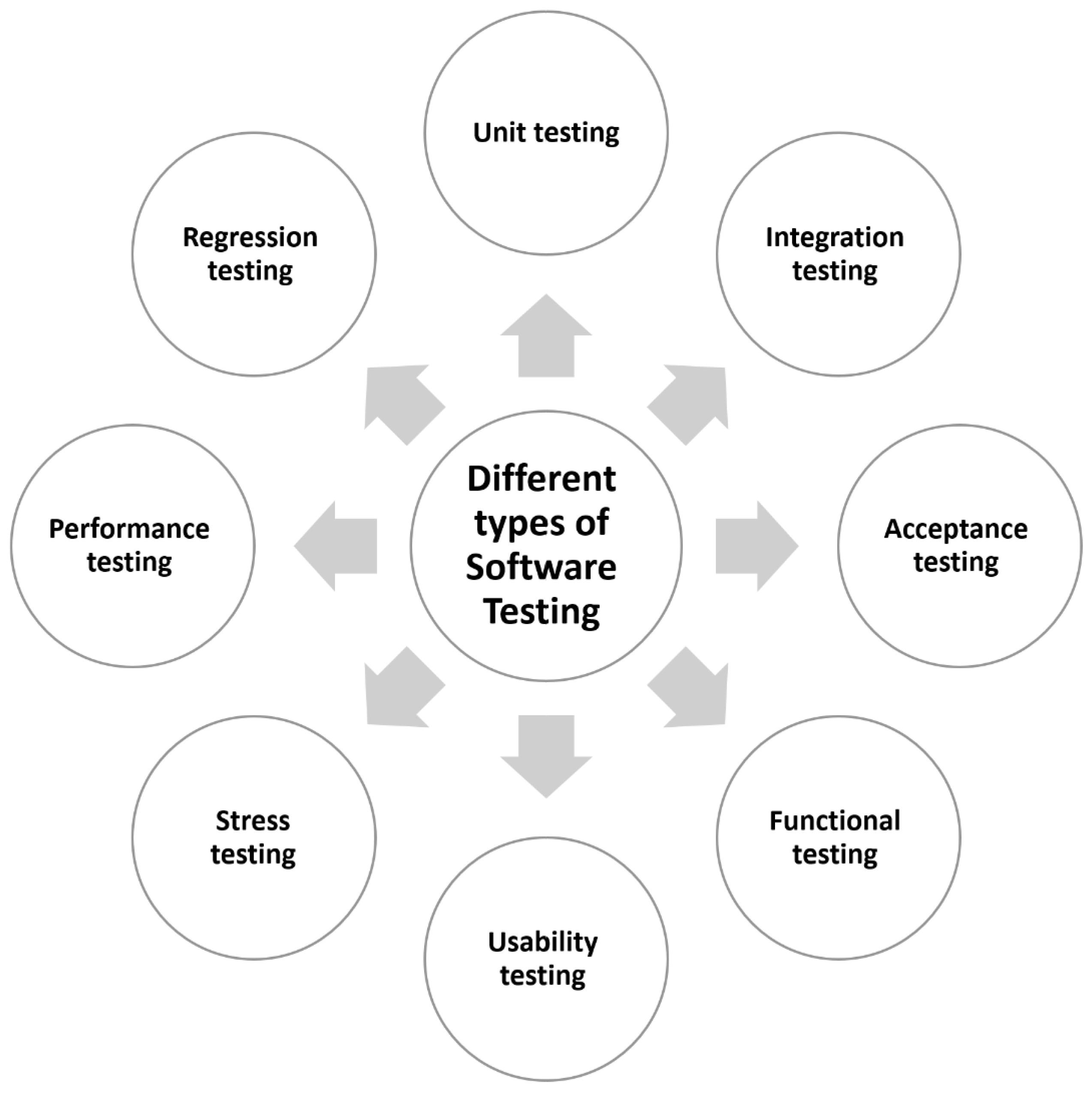
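The entries on abstract interpretation and partial methods in this section note that over-approximation allows reasoning without execution, but may leave a property undecided. The sketch below illustrates this with the interval domain. The `Interval` class, the helper names, and the sensor range of -40 to 85 are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interval:
    """Abstract value: every concrete value lies between lo and hi."""
    lo: int
    hi: int

    def __add__(self, other):
        return Interval(self.lo + other.lo, self.hi + other.hi)

    def __sub__(self, other):
        # Sound but imprecise: the operands are treated as unrelated.
        return Interval(self.lo - other.hi, self.hi - other.lo)


def proves_at_most(value, bound):
    """True only if every concrete value is guaranteed to be <= bound."""
    return value.hi <= bound


def proves_at_least(value, bound):
    """True only if every concrete value is guaranteed to be >= bound."""
    return value.lo >= bound


reading = Interval(-40, 85)          # hypothetical sensor range

total = reading + reading + reading  # sum of three readings
drift = reading - reading            # concretely always 0 for one reading

sum_is_bounded = proves_at_most(total, 255)
drift_is_nonnegative = proves_at_least(drift, 0)
```

The bound on `total` is proven. For `drift`, the analysis returns the interval from -125 to 125, so it cannot confirm that the value is never negative even though the concrete result is always zero. This is the inconclusive outcome that the partial methods entry describes.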

5. Testing Techniques

- Unit testing [67]: Unit testing is a method of testing that focuses on individual units or components of a software system. The goal of unit testing is to guarantee that each component of the system works as expected and meets its standards. Unit testing is normally accomplished by creating and executing test cases for each unit. Unit testing has the main advantage of detecting faults and defects early in the development process, making them easier and less expensive to rectify. The biggest disadvantage of unit testing is that it may fail to uncover flaws or faults that occur when units are merged.

- Integration testing [68]: Integration testing is a testing method that focuses on the interactions of several units or components of a software system. The goal of integration testing is to guarantee that the system as a whole works as planned and that the units work properly when joined. Integration testing is often accomplished by testing various combinations of units and ensuring that they function as expected. The primary benefit of integration testing is that it can uncover flaws and defects that occur when units are merged, making them easier and less expensive to correct. The biggest disadvantage of integration testing is that it may miss faults or problems that occur when the system is stressed or loaded.

- Acceptance testing [69]: Acceptance testing is a method of determining whether a software system meets its requirements and specifications. The goal of acceptance testing is to guarantee that the system is acceptable to its stakeholders and meets their requirements. Acceptance testing is often accomplished by exercising the system in a real-world setting and checking that it satisfies the requirements and specifications. Acceptance testing has the primary benefit of ensuring that the system meets the needs of its stakeholders. The fundamental shortcoming of acceptance testing is that it may fail to uncover mistakes or problems that occur when the system is stressed or loaded.

- Functional testing [70]: Functional testing is a testing approach that focuses on the functionality of a software system. The objective of functional testing is to ensure that the system functions correctly and that it meets its requirements and specifications. Functional testing is typically achieved by testing the system against a set of predefined test cases that cover all aspects of its functionality. The main advantage of functional testing is that it can ensure that the system functions correctly and that it meets its requirements and specifications.

- Usability testing [71]: Usability testing is a testing approach that focuses on how easy it is to use a software system. The objective of usability testing is to ensure that the system is usable and that it meets the needs of its users. Usability testing is typically achieved by testing the system with a group of representative users and observing how they interact with the system. The main advantage of usability testing is that it can ensure that the system is easy to use and that it meets the needs of its users.

- Stress testing [72]: Stress testing is a testing approach that focuses on how well a software system performs under stress or load. The objective of stress testing is to ensure that the system can handle high volumes of traffic or requests without crashing or failing. Stress testing is typically achieved by testing the system with a high volume of traffic or requests and observing how it performs. The main advantage of stress testing is that it can ensure that the system is reliable and can handle high volumes of traffic or requests.

- Performance testing [73]: Performance testing is a method of determining how well a software system operates under regular operating conditions. The goal of performance testing is to guarantee that the system is responsive and meets the needs of its users. Typically, performance testing is accomplished by subjecting the system to a representative load and evaluating how it performs. The primary benefit of performance testing is that it ensures that the system is responsive and works properly for its users. The fundamental disadvantage of performance testing is that it may miss mistakes or problems that occur when the system is stressed or loaded.

- Regression testing [74]: Regression testing is a method that focuses on determining whether changes to a software system have introduced new mistakes or faults. The goal of regression testing is to guarantee that the system continues to work appropriately after modifications have been made to it. Regression testing is often accomplished by retesting the system against a set of specified test cases following modifications to it. The primary benefit of regression testing is that it ensures that the system continues to function properly after modifications have been made to it. The fundamental shortcoming of regression testing is that it may fail to uncover mistakes or problems that occur when the system is under stress or pressure.
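To make the difference between unit and integration testing concrete, the following sketch uses Python's standard `unittest` module on a small temperature-reporting example. The functions `average`, `to_fahrenheit`, and `report` are hypothetical and exist only for this illustration.

```python
import unittest


def average(readings):
    """Unit under test: mean of a non-empty list of readings."""
    if not readings:
        raise ValueError("no readings")
    return sum(readings) / len(readings)


def to_fahrenheit(celsius):
    """Unit under test: Celsius to Fahrenheit conversion."""
    return celsius * 9 / 5 + 32


def report(readings):
    """Combines both units; exercised by the integration test."""
    return f"{to_fahrenheit(average(readings)):.1f} F"


class UnitTests(unittest.TestCase):
    def test_average(self):
        self.assertEqual(average([20, 22, 24]), 22)

    def test_average_rejects_empty_input(self):
        with self.assertRaises(ValueError):
            average([])

    def test_to_fahrenheit(self):
        self.assertEqual(to_fahrenheit(100), 212)


class IntegrationTests(unittest.TestCase):
    def test_report_combines_units(self):
        self.assertEqual(report([20, 22, 24]), "71.6 F")


def run_all():
    """Run both suites and return the unittest result object."""
    loader = unittest.defaultTestLoader
    suite = unittest.TestSuite()
    suite.addTests(loader.loadTestsFromTestCase(UnitTests))
    suite.addTests(loader.loadTestsFromTestCase(IntegrationTests))
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

Calling `run_all()` after every change to the three functions turns the same suites into a simple regression test: a modification that breaks existing behavior shows up as a failing case.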

6. Use of AI in Software Testing

6.1. Advantages

- Automatic writing of test cases: Automatic writing of test cases is one of the most significant advantages of using AI in software testing. AI can analyze code and identify potential areas of weakness, allowing it to generate test cases that can thoroughly test the software. This can save significant amounts of time and effort that would otherwise be spent writing test cases manually. Moreover, AI-generated test cases can often cover more scenarios and edge cases than human-written test cases, leading to more thorough testing and better software quality.

- Fast time-to-market: Using AI in software testing can help reduce the time-to-market for software products. By automating repetitive and time-consuming tasks, such as regression testing, AI can help speed up the testing process. This can help software companies release products more quickly, gaining an edge in the competitive marketplace. Additionally, a faster time-to-market can lead to increased revenue and improved customer satisfaction, as customers are more likely to choose products that are released quickly and regularly updated with new features and functionality.

- Earliest response/feedback: Another advantage of using AI in software testing is the ability to provide early feedback on software quality. AI can detect defects and vulnerabilities early in the development cycle, allowing developers to address them before they become major issues. This can help improve software quality and reliability, leading to better customer satisfaction. Additionally, early detection of issues can help reduce the cost and effort required for fixing them later in the development process.

- Prognostic analysis: Prognostic analysis is another advantage of using AI in software testing. AI can analyze historical and real-time data to predict the future behavior of a software system. This can help identify potential issues before they occur, allowing developers to take preventative measures to avoid downtime or system failures. Additionally, prognostic analysis can help optimize the performance and efficiency of software systems, leading to better user experiences and improved customer satisfaction.

- Integrated platform: Using AI in software testing can help integrate various testing tools and platforms. AI can help unify different testing methods, such as unit testing, integration testing, and system testing. This can help software companies save time and reduce costs by using a single, integrated testing platform. Additionally, an integrated platform can provide a holistic view of software quality, allowing developers to identify and address issues more effectively.

- Reduction of UI-based testing: AI can help reduce the need for UI-based testing, which is often time-consuming and expensive. By automating backend testing, AI can help identify issues without the need for extensive UI testing, reducing the overall testing effort required. Additionally, reducing the need for UI-based testing can help improve the efficiency of testing teams, allowing them to focus on more complex and critical tasks.

- Better code coverage: AI can help improve code coverage by identifying areas that are not adequately covered by existing test cases. This can help ensure that all parts of the software are thoroughly tested, reducing the risk of issues and vulnerabilities. Additionally, better code coverage can lead to better software quality and reliability, improving the overall user experience and customer satisfaction.

- Improved reliability: By automating testing tasks, AI can help improve the reliability of software products. Automated testing can detect defects and vulnerabilities that may be missed by manual testing, leading to more reliable and stable software products. Additionally, improved reliability can help reduce the cost and effort required for maintenance and support, improving the overall efficiency and effectiveness of software development teams.

- Improved quality: Using AI in software testing can help improve the overall quality of software products. By detecting defects and vulnerabilities early in the development cycle, AI can help ensure that software products are of high quality and meet customer expectations. Additionally, improved quality can lead to better customer satisfaction, increased revenue, and a competitive edge in the marketplace.

- Automated visual validation testing: AI can also be used for automated visual validation testing, which involves comparing the visual output of a software system with expected results. This can help identify visual defects and inconsistencies, improving the overall quality and user experience of the software. Additionally, automated visual validation can help reduce the effort required for manual visual testing, allowing testing teams to focus on more complex and critical tasks.

6.2. Examples of Tools

- Applitools is a visual testing tool that employs AI to automatically detect visual defects and inconsistencies in web and mobile applications. By utilizing computer vision algorithms, Applitools can compare screenshots of an application across various devices, browsers, and resolutions to identify differences that may indicate a defect. The tool can integrate with popular testing frameworks, such as Selenium and Appium, to seamlessly incorporate visual testing into existing testing processes. Applitools also provides a dashboard that highlights visual issues and streamlines defect tracking and management. Testers can leverage Applitools to enhance their visual testing coverage and accuracy, leading to better software products and increased customer satisfaction.

- Appvance IQ is an AI-based testing tool that utilizes machine learning algorithms to automatically generate and execute test cases across multiple platforms and environments. The tool can analyze user behavior to generate test cases that cover the most critical and most common use cases. Appvance IQ can also detect defects and vulnerabilities and provide recommendations for improving software quality. The tool provides a dashboard that simplifies defect tracking and management and offers detailed reports and analytics on testing activities. By utilizing Appvance IQ, testers can optimize their test coverage and accuracy while saving time and effort on test case creation and maintenance.

- Functionize is an AI-based testing tool that allows testers to autonomously generate and execute test cases and detect and prioritize defects. Using advanced machine learning algorithms, Functionize can analyze user behavior to generate test cases that cover critical and common use cases and automatically prioritize defects based on severity. Functionize provides a dashboard that simplifies defect tracking and management and offers detailed reports and analytics on testing activities.

- Mabl is an AI-based testing tool that enables testers to automatically identify and prioritize issues and generate and maintain test cases. The tool uses advanced machine learning algorithms to analyze user behavior and generate test cases that cover critical and common use cases. Mabl can also detect issues and vulnerabilities and prioritize them based on severity, reducing the effort required for manual defect triage. The tool provides a dashboard that simplifies defect tracking and management and offers detailed reports and analytics on testing activities.

- ReTest is an AI-based testing solution that allows testers to assess software requirements and produce test cases from them. The tool analyzes the requirements and generates test cases that cover all conceivable combinations of input parameters, ensuring complete test coverage. ReTest can also automatically find problems and vulnerabilities and provide insights and recommendations for improving software quality. The tool provides a dashboard that simplifies defect tracking and management and offers detailed reports and analytics on testing activities. Testers can increase their productivity and effectiveness while reducing the risk of faults and vulnerabilities.

- Sauce Labs is an AI-based tool that automates testing for web and mobile applications. The tool uses advanced machine learning algorithms to automatically generate and execute test cases across multiple platforms and environments, ensuring complete test coverage. Sauce Labs can also detect defects and vulnerabilities and provide recommendations for improving software quality. The tool provides a dashboard that simplifies defect tracking and management and offers detailed reports and analytics on testing activities. By utilizing Sauce Labs, testers can improve their efficiency and effectiveness while ensuring complete test coverage across multiple platforms and environments.

- Test.AI is an AI-powered testing platform that enables testers to create and execute test cases while detecting and prioritizing errors. The tool uses machine learning methods to analyze user activity and generate test cases that cover critical and common use cases. Test.AI can also detect flaws and vulnerabilities and prioritize them based on severity, minimizing the time and effort necessary for manual defect triage. The tool provides a dashboard that simplifies defect tracking and management and offers detailed reports and analytics on testing efforts. By using Test.AI, testers can increase their efficiency and effectiveness while reducing the time and effort required for test case generation and maintenance.

- Testim is an AI-driven testing tool that enables testers to create and execute test cases with ease. The tool uses advanced machine learning algorithms to analyze user behavior and generate test cases that cover critical and common use cases. Testim can also detect defects and vulnerabilities and provide recommendations for improving software quality. The tool provides a dashboard that simplifies defect tracking and management and offers detailed reports and analytics on testing activities.

- Tricentis Tosca is an AI-based testing tool that enables testers to generate, maintain, and execute test cases across multiple platforms and environments. The tool uses advanced machine learning algorithms to analyze user behavior and generate test cases that cover critical and common use cases. Tricentis Tosca can also detect defects and vulnerabilities and provide recommendations for improving software quality. The tool provides a dashboard that simplifies defect tracking and management and offers detailed reports and analytics on testing activities across multiple platforms and environments.

- Usetrace is an AI-powered testing tool that enables testers to automatically generate and execute test cases. The tool uses machine learning algorithms to analyze user behavior and generate test cases that cover critical and common use cases. Usetrace can also detect defects and vulnerabilities and provide recommendations for improving software quality. The tool provides a dashboard that simplifies defect tracking and management and offers detailed reports and analytics on testing activities.

7. Case Study: Temperature-Measuring System

- Abstraction: At this level, the various components of the system under consideration are described at a very high level. Many details are abstracted away, and the interactions between the various sensors are not taken into account either.

- Modularization and compositionality: The suggested system is represented as a network of eight finite state machines. Every finite state machine has three states and three transitions. Taking the product of these finite state machines yields a large finite state machine with around 3^8 = 6561 states. If we expand the number of sensors to eight, the product grows further, since each additional three-state machine multiplies the number of states by three. This demonstrates the significance of studying modularization and compositionality in order to reduce the size of the models under consideration.

- Symmetry detection: It is easy to observe that the various sensors and collecting elements play symmetric roles. As a result, the FV of the entire studied system may be simplified to the verification of the product of only two finite state machines: one representing each of the four sensors and one representing each of the four collecting elements (as shown in Figure 7).

- Data independence detection: We can suppose that the system's various sensors will measure additional quantities such as pressure and humidity. However, if there is no association between temperature and these additional variables, there is no need to include them in the system model. This reduces the complexity and size of the considered model.

- Eliminating functional dependencies: Assume that the average of the readings received from the various sensors is saved. In this situation, there is a direct relationship between the stored variable and the data measured by the sensors. Thus, to reduce the complexity of the verification process, we account for this relationship by removing the variable corresponding to the average value, because it can be determined from the other variables.

- Exploiting reversible rules: Consider the finite state machine that describes the behavior of one of the components. This finite state machine can be simplified further by merging two nodes connected by a transition that can be viewed as internal, as this operation has no effect on the FV of the entire system. Similarly, we can collapse other pairs of nodes connected by such transitions. After verification, the collapsed nodes can be separated as they were originally.

- Refinement techniques: This technique considers an untimed specification and a set of refinement rules that allow each high-level untimed action to be transformed into a sequence of low-level timed actions. Test cases are derived from the untimed specification and refined into timed test cases using the established refinement rules. For example, in Figure 8, a high-level operation is refined into a sequence of four timed actions: the temperature is measured by recording three successive values and then taking the average of these three values. This simplifies test generation and reduces the computation time and space requirements.

- Reducing the size of digital-clock tests: A digital-clock test can be thought of as a particular tree with a special action that mimics time progression. The purpose of this phase is to reduce the size of the test tree by compacting sequences of this action. This technique is presented in Figure 9, where a sequence of ten such actions is replaced with a single transition. The size of the tests is greatly decreased in this way.

- Timed automaton (TA) tester generation: When the system is presented as a non-deterministic timed automaton, there are two alternatives for test generation. The first option is to generate tests on the fly, that is, test generation and test execution are carried out concurrently. This first option is challenging in general since it requires high-performance computing resources. The second option is to determinize the considered timed automaton before running the test. In Figure 10, for example, we suggest a non-deterministic version of a section of the model of the investigated system. This automaton is non-deterministic since the same action leads to different successor nodes from the initial node. This non-determinism corresponds to the fact that the temperature may be estimated by averaging either three collected values or only two, depending on some internal choices (for example, available resources). The result of the determinization of the non-deterministic automaton is shown in the same figure. As previously stated, this method cannot always be applied exactly. As a result, we may need to make some assumptions.

- Test updating: As the system evolves, its model must be updated accordingly. The previously generated tests must then be updated as well, since regenerating them from scratch would be prohibitively expensive. Figure 11 shows a possible system evolution. Initially, one component sent an acknowledgment to its corresponding peer after storing data in the database. In the new version, the acknowledgment is transmitted once the storage is complete. To minimize the cost and duration of the test-regeneration phase, the previously generated tests must be updated appropriately. As previously described, this requires comparing the two system models (the old and the new) and classifying the available tests.

- Resource-aware tester component placement: In general, the test architecture may be centralized or decentralized. In the decentralized case, we must devise a method for distributing the test components across the system’s computational nodes. For instance, any node with sufficient resources can host test components devoted to verifying other nodes of the system. Finding a suitable placement is an optimization problem, so optimization techniques must be employed for this purpose.

- Coverage techniques: These techniques reduce the number of generated tests by applying explicit selection criteria. In our case, one criterion is to cover all nodes of the finite state machines in the model under consideration. Other criteria cover the set of transitions, the set of pairs of consecutive transitions, and so on. This can be accomplished by constructing the observable graph as previously described.
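The compaction of digital-clock tests described in the list above can be illustrated on a single branch of a test tree. The following is a minimal sketch, not the authors' implementation: the action name `tick`, the `("delay", n)` encoding of a compacted transition, and the function name `compact_ticks` are all assumptions introduced for illustration.

```python
from itertools import groupby

TICK = "tick"  # hypothetical name for the special time-progress action


def compact_ticks(actions):
    """Collapse each run of consecutive TICK actions into one ("delay", n) step."""
    compacted = []
    for action, run in groupby(actions):
        count = sum(1 for _ in run)
        if action == TICK:
            # A run of n ticks becomes a single transition carrying the delay n.
            compacted.append(("delay", count))
        else:
            # Other actions are kept one by one, even when repeated.
            compacted.extend([action] * count)
    return compacted


if __name__ == "__main__":
    branch = [TICK] * 10 + ["read"] + [TICK] * 2 + ["ack"]
    print(compact_ticks(branch))
```

On a branch that starts with ten time-progress actions, the ten steps shrink to one, which is the kind of reduction the digital-clock bullet describes. A full implementation would apply the same idea recursively over the branches of the test tree.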
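The determinization step mentioned for tester generation can be sketched with the classical subset construction. This sketch deliberately ignores clocks and guards, so it covers only the untimed skeleton of an automaton; determinizing a real timed automaton is harder and not always possible. The state and action names (`s0`, `collect`, `avg3`, `avg2`) are hypothetical and only echo the averaging example in the text.

```python
from collections import deque


def determinize(initial, transitions):
    """Subset construction for an untimed automaton without epsilon moves.

    transitions maps (state, action) -> set of successor states.
    Returns (start, det) where start is a frozenset of original states and
    det maps (subset, action) -> subset.
    """
    actions = sorted({action for (_, action) in transitions})
    start = frozenset([initial])
    det = {}
    seen = {start}
    queue = deque([start])
    while queue:
        subset = queue.popleft()
        for action in actions:
            target = frozenset(
                succ
                for state in subset
                for succ in transitions.get((state, action), ())
            )
            if not target:
                continue  # no move on this action from this subset
            det[(subset, action)] = target
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return start, det


if __name__ == "__main__":
    # Hypothetical fragment: "collect" leads either to a branch that averages
    # three samples (s1) or to one that averages two samples (s2).
    nfa = {
        ("s0", "collect"): {"s1", "s2"},
        ("s1", "avg3"): {"s3"},
        ("s2", "avg2"): {"s3"},
    }
    start, dfa = determinize("s0", nfa)
    for (src, action), dst in dfa.items():
        print(sorted(src), action, sorted(dst))
```

In the deterministic result, the two successors of `collect` are merged into a single node from which both averaging actions remain possible, so a tester no longer has to guess which internal choice the system made.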
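A transition-coverage criterion such as the one named under coverage techniques can be turned into a test-selection procedure. The sketch below uses a greedy heuristic, which is one common choice and not necessarily the one the authors use; it does not guarantee a minimum-size test suite. The test names, the `(source, action, target)` encoding of a transition, and the function `select_tests` are assumptions made for illustration.

```python
def select_tests(tests, required):
    """Greedily pick tests until every coverable required transition is covered.

    tests maps a test name to its list of (source, action, target) steps.
    Returns (chosen test names in pick order, transitions left uncovered).
    """
    remaining = set(required)
    chosen = []
    while remaining:
        # Pick the test covering the most still-uncovered transitions;
        # iterating in sorted order makes ties resolve by name.
        best = max(
            sorted(tests),
            key=lambda name: len(remaining & set(tests[name])),
        )
        gain = remaining & set(tests[best])
        if not gain:
            break  # the available tests cannot cover anything more
        chosen.append(best)
        remaining -= gain
    return chosen, remaining


if __name__ == "__main__":
    suite = {
        "t1": [("q0", "a", "q1"), ("q1", "b", "q2")],
        "t2": [("q0", "a", "q1")],
        "t3": [("q0", "c", "q3")],
    }
    goal = {step for steps in suite.values() for step in steps}
    print(select_tests(suite, goal))
```

Here `t2` is never selected because `t1` already covers its only transition. Replacing the transition triples with nodes or with pairs of consecutive transitions yields the other criteria mentioned in the text.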

8. Challenges and Open Issues

9. Conclusions and Future Work

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| Layer | Function | Examples | Protocols |
|---|---|---|---|
| Perception | Data collection | Sensors, actuators | Zigbee, WiFi, Bluetooth |
| Network | Communication | Cellular, satellite, ad hoc | TCP/IP, MQTT, CoAP |
| Middleware | Data management | Data brokers, message queues | AMQP, MQTT, DDS |
| Application | Service provision | Smart homes, healthcare monitoring | REST, SOAP, CoAP |

| Formal Method | Description | Main Benefits |
|---|---|---|
| Abstract Interpretation | Analyzes and verifies the behavior of computer programs by abstracting away some details and approximating the program’s behavior | Can analyze complex programs and reason about their behavior at a higher level of abstraction |
| Semantic Static Analysis | Analyzes the behavior of computer programs by examining their source code and inferring their meaning | Can detect errors early in the development process and ensure the final product is correct and reliable |
| Model Checking | Verifies the correctness of a system by exhaustively exploring its possible behaviors | Provides complete coverage of all possible behaviors and can ensure the correctness of critical systems |
| Proof Assistants | Software tools that help users construct and verify mathematical proofs | Provides a high level of assurance that the proof is correct and can verify the correctness of software and hardware designs |
| Deductive Verification | Verifies the correctness of software by constructing a formal proof that the software satisfies its specifications | Provides a high level of assurance that the software is correct and can ensure the correctness of safety-critical systems |
| Design by Refinement | Develops correct software by iteratively refining an abstract specification of the software until a detailed implementation is obtained | Provides a systematic approach to software development that can ensure the final product is correct and meets its specifications |
| MBT | Tests software by generating test cases from a model of the software | Can ensure the software meets its specifications and is free of errors and can reduce the time and effort needed to test the software |

| Class | Definition | Example | Advantages | Limitations |
|---|---|---|---|---|
| Complete Methods | Formal methods that are guaranteed to provide a definitive answer to a given problem | Model checking, theorem proving | Provide a definitive answer and can find all solutions | May be computationally expensive and may not scale well to large problems |
| Partial Methods | Formal methods that may not provide a definitive answer to a given problem | Abstract interpretation, type checking | Can handle complex problems and can provide useful information even if a definitive answer cannot be found | May not be able to detect all errors or find all solutions |
| Asymptotically Complete Methods | Formal methods that are not guaranteed to provide a definitive answer to a problem, but as the size of the problem grows, the probability of finding a solution approaches 1 | Heuristic search, stochastic methods | Can handle very large problems and can often find solutions quickly | May not always find a solution and may not be able to guarantee correctness |

| Advantage | Description |
|---|---|
| Abstraction | Formal methods provide a way to represent complex software systems in a simplified and abstract manner, which can help to reduce the complexity of the system and make it easier to reason about. |
| Rigorous Analysis | Formal methods provide a way to analyze software systems rigorously and to prove that they meet their specifications. This can help to ensure that the system behaves correctly and that it meets the needs of its stakeholders. |
| Early Defect Discovery | Formal methods can help detect errors and defects early in the development process, which can make them easier and less expensive to fix. |
| Correctness Guarantees | Formal methods can provide guarantees that a software system is correct and meets its specifications. This can help to increase the confidence in the system and reduce the risk of errors or defects. |
| Reliability | Formal methods can help to ensure that a software system is reliable and performs as expected under different conditions. This can help to increase the trust in the system and reduce the risk of failures or errors. |
| Efficient Test Scenarios | Formal methods can help to identify test scenarios that cover all possible system behaviors, which can reduce the number of tests needed and the time required for testing. This can result in more efficient testing and faster time-to-market for software systems. |
| Maintainability | Formal methods can improve the maintainability of software systems by providing a clear and precise specification of the system’s behavior. This can make it easier to modify or refactor the software without introducing errors or defects. Formal methods can also help to ensure that modifications do not violate the system’s specifications or requirements. |
| Reusability | Formal methods can improve the reusability of software components by providing a clear and precise specification of their behavior. This means that software components can be reused in different contexts without introducing errors or defects. Formal methods can also help to ensure that reused components behave correctly in all contexts. |
| Standardization | Formal methods can provide a standardized approach to software development and verification. This means that software systems can be developed and verified using a common set of techniques and tools, which can improve interoperability and reduce the risk of errors or compatibility issues. |
| Confidence | Formal methods can provide developers and stakeholders with confidence in the correctness and reliability of the software system. This can increase trust in the software system and reduce the risk of negative consequences, such as system failures or safety hazards. |

| Testing Approach | Objective | Main Procedures | Limitations |
|---|---|---|---|
| Unit | Ensures that each unit of the system is working as expected and meets its specifications. | Writing test cases for each unit and executing those test cases. | May not detect errors or defects that arise when units are combined. |
| Integration | Ensures that the system as a whole is working as expected and that the units are functioning correctly when combined. | Testing different combinations of units and verifying that they work together as expected. | May not detect errors or defects that arise when the system is under stress or load. |
| Acceptance | Ensures that the system meets its requirements and specifications and is acceptable to stakeholders. | Testing the system in a real-world environment and verifying that it meets the requirements and specifications. | May not detect errors or defects that arise when the system is under stress or load. |
| Functional | Ensures that the system functions correctly and meets its requirements and specifications. | Testing the system against a set of predefined test cases that cover all aspects of its functionality. | May not detect errors or defects that arise when the system is under stress or load. |
| Usability | Ensures that the system is easy to use and meets the needs of its users. | Testing the system with a group of representative users and observing how they interact with the system. | May not detect errors or defects that arise when the system is under stress or load. |
| Stress | Ensures that the system can handle high volumes of traffic or requests without crashing or failing. | Testing the system with a high volume of traffic or requests and observing how it performs. | May not detect errors or defects that arise under normal operating conditions. |
| Performance | Ensures that the system is responsive and performs well for its users. | Testing the system with a representative load and observing how it performs. | May not detect errors or defects that arise when the system is under stress or load. |
| Regression | Ensures that the system continues to function correctly after changes have been made to it. | Retesting the system against a set of predefined test cases after changes have been made to it. | May not detect errors or defects that arise when the system is under stress or load. |

| Tool Name | Functionality | Advantages |
|---|---|---|
| Applitools | AI-powered visual testing | Automatically detects visual defects and inconsistencies. Compares screenshots across multiple devices, browsers, and resolutions. Integrates with popular frameworks such as Selenium and Appium. Provides a dashboard for easy defect tracking and management. |
| Appvance IQ | AI-based test case generation and execution | Automatically generates and executes test cases across multiple platforms and environments. Analyzes user behavior to generate test cases covering the most critical and common use cases. Detects defects and vulnerabilities. Provides insights and recommendations for improving software quality. Offers a dashboard for easy defect tracking and management. |
| Functionize | AI-based test case generation, execution, and defect detection | Autonomously generates and executes test cases. Analyzes user behavior to generate test cases covering the most critical and common use cases. Automatically detects and prioritizes defects based on severity. Provides a dashboard for easy defect tracking and management. Reduces the time and effort required for test case creation and maintenance. |
| Mabl | AI-based issue identification and prioritization | Automatically identifies and prioritizes issues. Generates and maintains test cases. Automatically detects issues and vulnerabilities. Provides a dashboard for easy defect tracking and management. Reduces the time and effort required for test case creation and maintenance. |
| ReTest | AI-based test case generation and defect detection | Analyzes software requirements to generate test cases covering all possible combinations of input parameters. Automatically detects defects and vulnerabilities. Provides insights and recommendations for improving software quality. Offers a dashboard for easy defect tracking and management. Ensures complete test coverage. |
| Sauce Labs | AI-based automated testing for web and mobile applications | Provides automated testing across multiple platforms and environments. Detects defects and vulnerabilities. Provides insights and recommendations for improving software quality. Offers a dashboard for easy defect tracking and management. Ensures complete test coverage. |
| Test.AI | AI-based test case generation and defect detection | Generates and executes test cases. Analyzes user behavior to generate test cases covering the most critical and common use cases. Automatically detects and prioritizes defects based on severity. Provides a dashboard for easy defect tracking and management. Reduces the time and effort required for test case creation and maintenance. |
| Testim | AI-based test case generation and maintenance | Generates and maintains test cases. Automatically analyzes user behavior to generate test cases covering the most critical and common use cases. Provides a dashboard for easy defect tracking and management. Reduces the time and effort required for test case creation and maintenance. |
| Tricentis Tosca | AI-based end-to-end testing for web and mobile applications | Provides automated testing across multiple platforms and environments. Detects defects and vulnerabilities. Provides insights and recommendations for improving software quality. Offers a dashboard for easy defect tracking and management. Ensures complete test coverage. |
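
Several tools in the table compare screenshots to detect visual defects. The sketch below shows only the basic idea: a per-pixel comparison of a baseline image against a candidate image. It is not the algorithm used by any of the listed products, which is not described here. The function name `diff_ratio`, the nested-list image representation, and the `tolerance` parameter are all assumptions made for illustration.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]   # (R, G, B), each channel in 0..255
Image = List[List[Pixel]]      # rows of pixels

def diff_ratio(baseline: Image, candidate: Image, tolerance: int = 0) -> float:
    """Return the fraction of pixels whose largest channel difference exceeds `tolerance`."""
    same_shape = len(baseline) == len(candidate) and all(
        len(row_a) == len(row_b) for row_a, row_b in zip(baseline, candidate)
    )
    if not same_shape:
        raise ValueError("images must have the same dimensions")

    total = sum(len(row) for row in baseline)
    if total == 0:
        return 0.0

    changed = 0
    for row_a, row_b in zip(baseline, candidate):
        for pixel_a, pixel_b in zip(row_a, row_b):
            # Compare channel by channel; small differences can be tolerated
            # to absorb rendering noise such as anti-aliasing.
            if max(abs(a - b) for a, b in zip(pixel_a, pixel_b)) > tolerance:
                changed += 1
    return changed / total

if __name__ == "__main__":
    white = (255, 255, 255)
    base = [[white, white], [white, white]]
    cand = [[white, (250, 255, 255)], [white, (0, 0, 0)]]
    print(diff_ratio(base, cand))                # strict comparison
    print(diff_ratio(base, cand, tolerance=10))  # ignore minor rendering noise
```

A test harness could flag a visual regression when the ratio exceeds a chosen threshold. The threshold and tolerance would have to be tuned per device and browser.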

© 2023 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Krichen, M. A Survey on Formal Verification and Validation Techniques for Internet of Things. Appl. Sci. 2023, 13, 8122. https://doi.org/10.3390/app13148122
