Creating Audio Object-Focused Acoustic Environments for Room-Scale Virtual Reality

Abstract

1. Introduction

2. Background

2.1. 6-DoF Audio

2.2. Adaptive Audio

2.3. Rule-Based Systems

2.4. Spatial Implementation of Music in Room-Scale VR

2.5. Authors’ Contribution

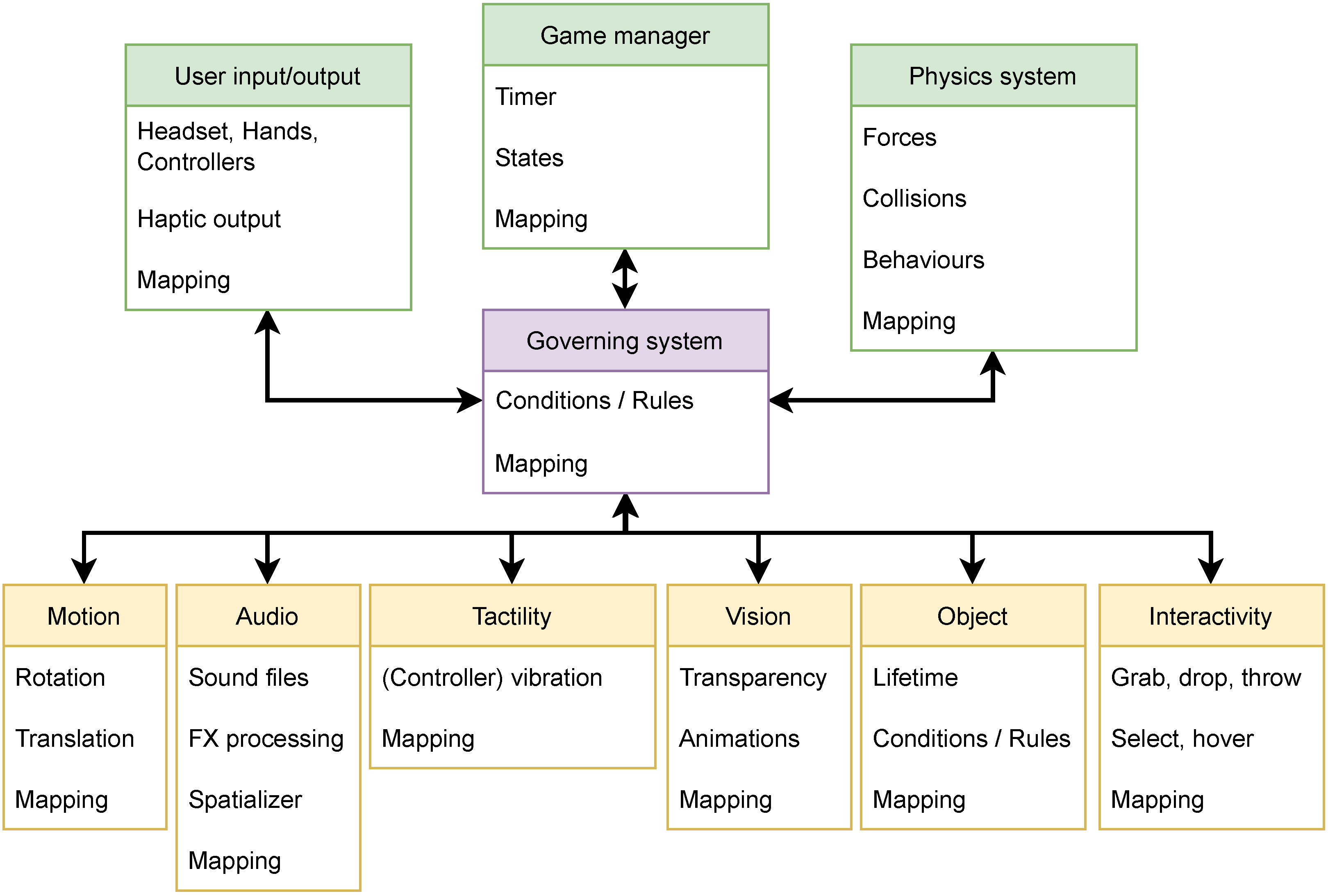

3. Methods

- The system should transition between its states over time to allow the player to observe these transitions.

- The system should affect, or be replicated across, several objects so that complex inter-object interactions arise and trigger subsequent state changes.

- The system should proactively initiate subsequent state changes so that it does not terminate or fall silent prematurely, depending on the scenario, narrative, and acoustic context.

- Methods of virtual room acoustics, such as reverberation and the simulation of (early) reflections, should be used wherever possible to give a sense of space. In outdoor environments, multiple diffuse-sounding Ambisonic field recordings without localizable sound sources can be used instead.
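The first three requirements in the list above can be illustrated with a small, engine-independent sketch. It is not the authors' implementation: the state names (`idle`, `excited`, `decaying`), the durations, the `SoundObject` class, the `step` function, and the `max_silence` threshold are all hypothetical and chosen only to show timed transitions, propagation between objects, and proactive re-triggering after a period of silence.

```python
from dataclasses import dataclass, field

# Hypothetical state names and timings, chosen only for illustration.
DURATIONS = {"excited": 2.0, "decaying": 3.0}  # seconds spent in each timed state
NEXT_STATE = {"excited": "decaying", "decaying": "idle"}


@dataclass
class SoundObject:
    name: str
    state: str = "idle"
    elapsed: float = 0.0
    neighbours: list = field(default_factory=list)

    def trigger(self):
        """Start the timed sequence unless it is already running."""
        if self.state == "idle":
            self.state, self.elapsed = "excited", 0.0

    def update(self, dt):
        """Advance the timer; move to the next state when the duration ends."""
        if self.state == "idle":
            return
        self.elapsed += dt
        if self.elapsed >= DURATIONS[self.state]:
            finished = self.state
            self.state, self.elapsed = NEXT_STATE[finished], 0.0
            if finished == "excited":
                # Inter-object interaction: pass the excitation on.
                for other in self.neighbours:
                    other.trigger()


def step(objects, dt, idle_time, max_silence=4.0):
    """Update all objects; re-trigger the first one if silence lasts too long."""
    for obj in objects:
        obj.update(dt)
    if all(obj.state == "idle" for obj in objects):
        idle_time += dt
        if idle_time >= max_silence:
            objects[0].trigger()  # proactive state change
            idle_time = 0.0
    else:
        idle_time = 0.0
    return idle_time
```

In a game engine, `step` would typically be called once per frame with the frame's delta time, and each state change would start or stop the corresponding audio event. Keeping the timing in a table rather than in the class makes it easy to vary per scenario or narrative context.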

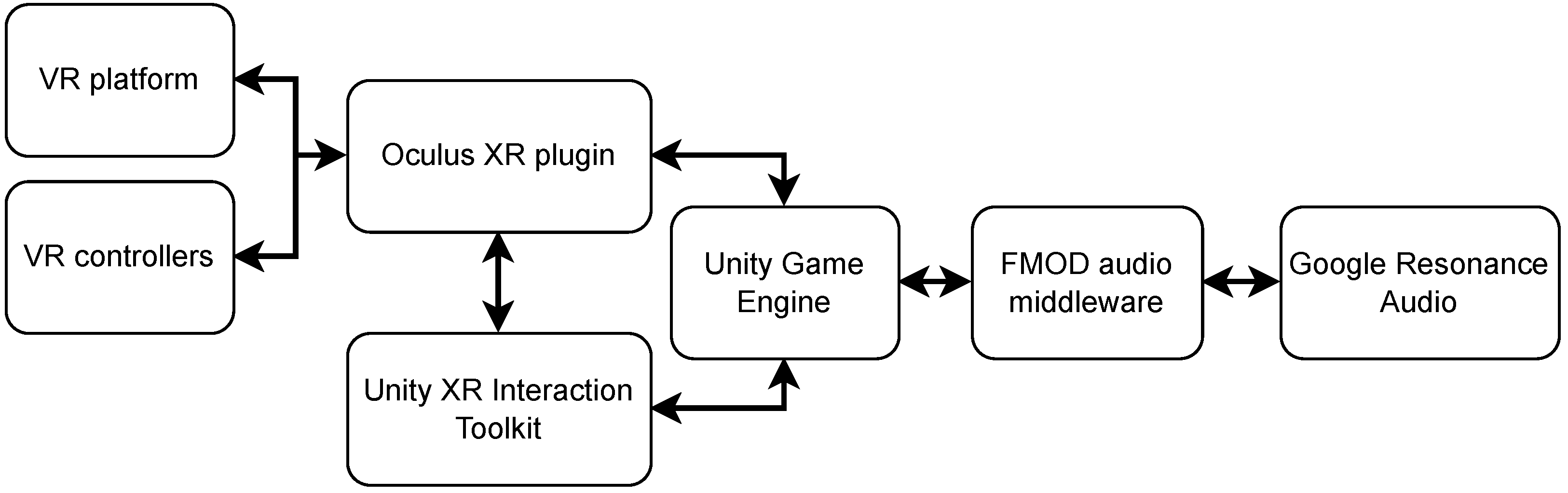

4. Implementation

4.1. Platform

4.2. Player Movement in Space

4.3. Multimodal Applications

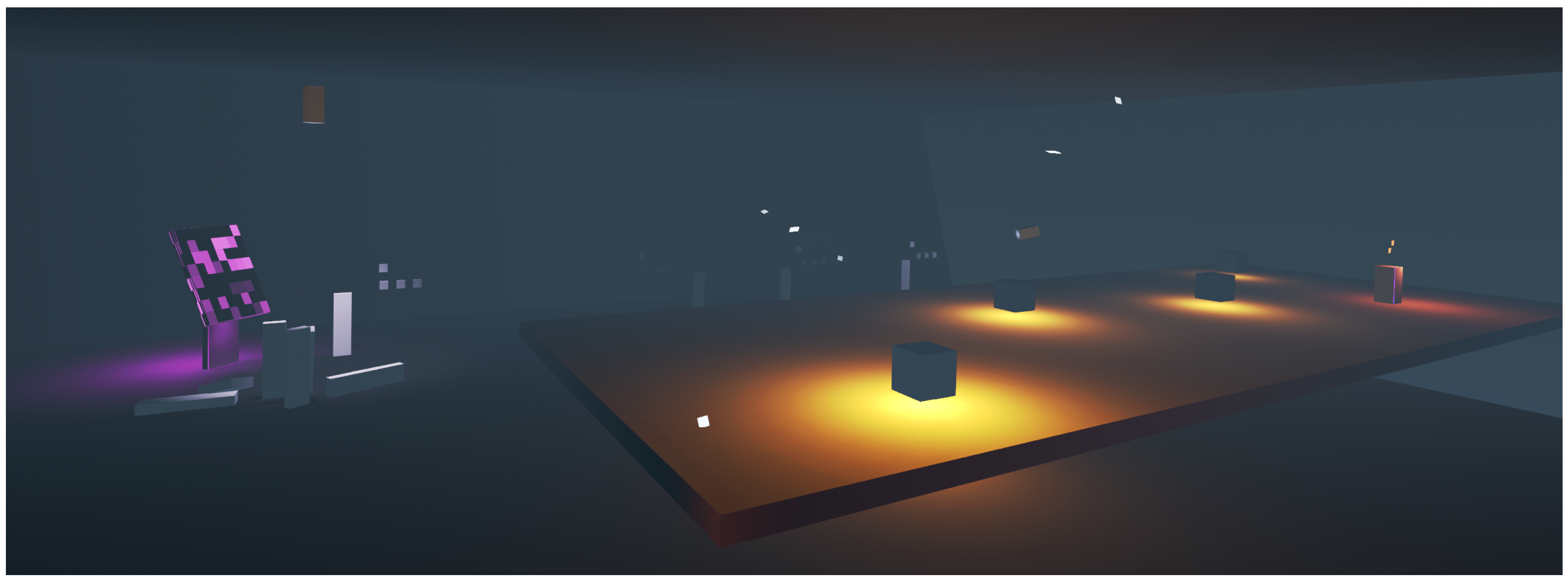

4.3.1. Collision-Based System

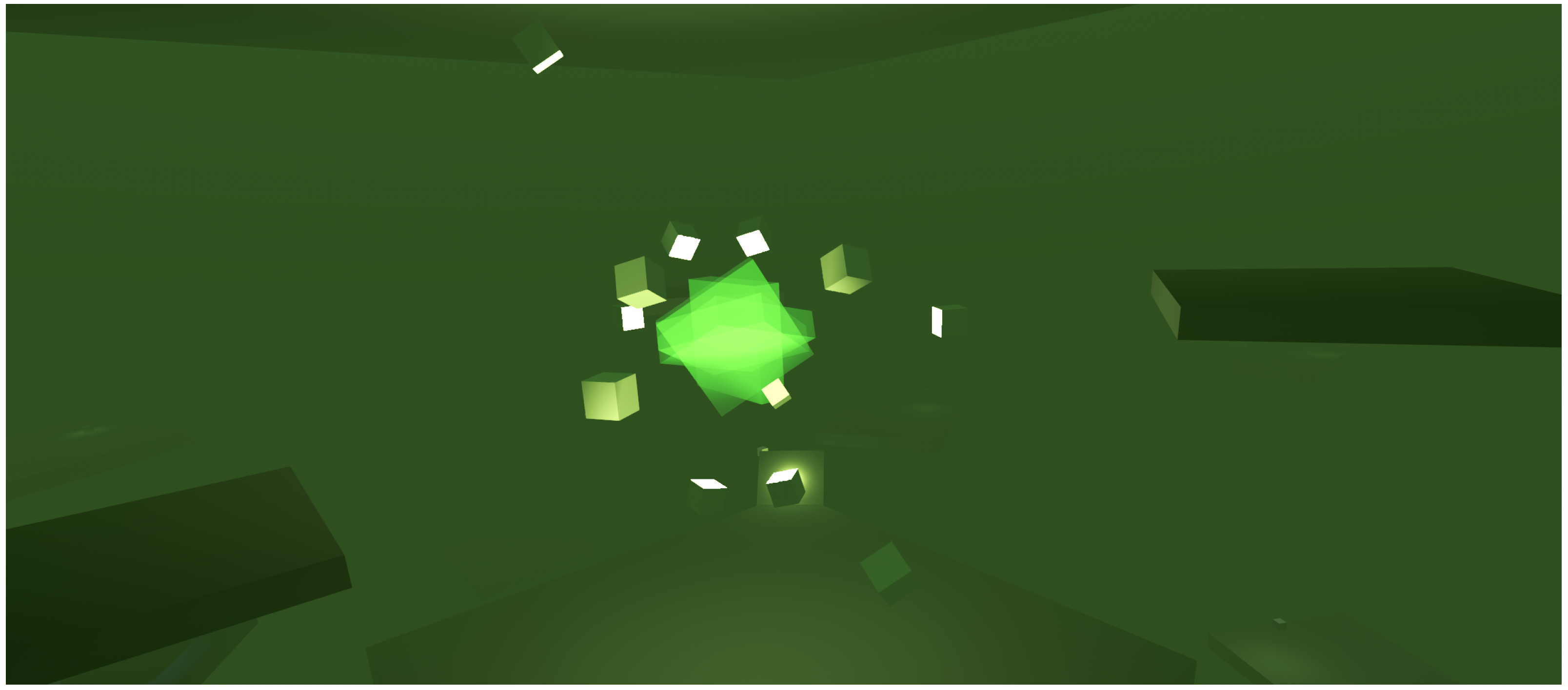

4.3.2. Motion-Pattern-Based System

4.3.3. List-Based System

5. Evaluation/Results

5.1. Collision-Based System

5.2. Motion-Pattern-Based System

5.3. List-Based System

6. Discussion

6.1. Multimodal Behavior Application Constraints

6.2. VR Platform Constraints

6.3. Implications of General-Purpose Programming Language

6.4. Implementation Complexities in Collision-Based Systems

6.5. Conversion of Ambisonic Field Recordings

7. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Schjerlund, J.; Hansen, M.R.P.; Jensen, J.G. Design Principles for Room-Scale Virtual Reality: A Design Experiment in Three Dimensions. In Designing for a Digital and Globalized World; Springer International Publishing: Cham, Switzerland, 2018; pp. 3–17.

- Aylett, R.; Louchart, S. Towards a narrative theory of virtual reality. Virtual Real. 2003, 7, 2–9.

- Facebook Technologies, LLC. Oculus Device Specifications. 2021. Available online: https://developer.oculus.com/resources/oculus-device-specs/ (accessed on 29 November 2021).

- Schütze, S.; Irwin-Schütze, A. New Realities in Audio: A Practical Guide for VR, AR, MR and 360 Video, 1st ed.; CRC Press: Boca Raton, FL, USA, 2018.

- Google LLC. Game Engine Integration. 2018. Available online: https://resonance-audio.github.io/resonance-audio/develop/fmod/game-engine-integration (accessed on 29 December 2021).

- Méndez, D.R.; Armstrong, C.; Stubbs, J.; Stiles, M.; Kearney, G. Practical Recording Techniques for Music Production with Six-Degrees of Freedom Virtual Reality. In Proceedings of the Audio Engineering Society Convention 145, New York, NY, USA, 17–20 October 2019; Audio Engineering Society: New York, NY, USA, 2019.

- Gorzel, M.; Allen, A.; Kelly, I.; Kammerl, J.; Gungormusler, A.; Yeh, H.; Boland, F. Efficient Encoding and Decoding of Binaural Sound with Resonance Audio. In Proceedings of the Audio Engineering Society Conference: 2019 AES International Conference on Immersive and Interactive Audio, York, UK, 27–29 March 2019; Audio Engineering Society: New York, NY, USA, 2019.

- Popp, C.; Murphy, D. Video Examples from: Creating Audio Object-Focused Acoustic Environments for Room-Scale Virtual Reality; Zenodo: Genève, Switzerland, 2022.

- Tender Claws. Virtual Virtual Reality 2. 2022. Available online: https://www.oculus.com/experiences/quest/2731491443600205 (accessed on 5 June 2022).

- Phillips, W. Composing Video Game Music for Virtual Reality: Diegetic Versus Non-Diegetic. 2018. Available online: https://www.gamedeveloper.com/audio/composing-video-game-music-for-virtual-reality-diegetic-versus-non-diegetic (accessed on 21 March 2022).

- Meta. Sound Design for VR. 2022. Available online: https://developer.oculus.com/resources/audio-intro-sounddesign/ (accessed on 27 March 2022).

- Smalley, D. Spectromorphology: Explaining Sound-Shapes. Organised Sound 1997, 2, 107–126.

- Song, X.; Lv, X.; Yu, D.; Wu, Q. Spatial-temporal change analysis of plant soundscapes and their design methods. Urban For. Urban Green. 2018, 29, 96–105.

- Chattopadhyay, B. Sonic Menageries: Composing the sound of place. Organised Sound 2012, 17, 223–229.

- Turchet, L.; Hamilton, R.; Çamci, A. Music in Extended Realities. IEEE Access 2021, 9, 15810–15832.

- Atherton, J.; Wang, G. Doing vs. Being: A philosophy of design for artful VR. J. New Music. Res. 2020, 49, 35–59.

- Valve Corporation. Half-Life: Alyx. 2020. Available online: https://www.half-life.com/en/alyx/ (accessed on 21 January 2022).

- Jain, D.; Junuzovic, S.; Ofek, E.; Sinclair, M.; Porter, J.; Yoon, C.; Machanavajhala, S.; Ringel Morris, M. A Taxonomy of Sounds in Virtual Reality. In Designing Interactive Systems Conference 2021; DIS ’21; Association for Computing Machinery: New York, NY, USA, 2021; pp. 160–170.

- Zylia Sp. z o.o. ZYLIA Concert Hall on Oculus Quest. 2021. Available online: https://www.oculus.com/experiences/quest/3901710823174931/ (accessed on 30 November 2021).

- Ciotucha, T.; Rumiński, A.; Żernicki, T.; Mróz, B. Evaluation of Six Degrees of Freedom 3D Audio Orchestra Recording and Playback using multi-point Ambisonic interpolation. In Audio Engineering Society Convention 150, Online, 25–28 May 2021; Audio Engineering Society: New York, NY, USA, 2021.

- Settel, Z.; Downs, G. Building Navigable Listening Experiences Based On Spatial Soundfield Capture: The Case of the Orchestre Symphonique de Montréal Playing Beethoven’s Symphony No. 6. In Proceedings of the 18th Sound and Music Computing Conference (SMC), Online, 29 June–1 July 2021; p. 8.

- Quackenbush, S.R.; Herre, J. MPEG Standards for Compressed Representation of Immersive Audio. Proc. IEEE 2021, 109, 1578–1589.

- Whitmore, G. Design With Music In Mind: A Guide to Adaptive Audio for Game Designers. 2003. Available online: https://www.gamedeveloper.com/audio/design-with-music-in-mind-a-guide-to-adaptive-audio-for-game-designers (accessed on 9 May 2022).

- Zdanowicz, G.; Bambrick, S. The Game Audio Strategy Guide: A Practical Course; Routledge: New York, NY, USA, 2020.

- Byrns, A.; Ben Abdessalem, H.; Cuesta, M.; Bruneau, M.A.; Belleville, S.; Frasson, C. Adaptive Music Therapy for Alzheimer’s Disease Using Virtual Reality. In Intelligent Tutoring Systems; Springer International Publishing: Cham, Switzerland, 2020; pp. 214–219.

- Crytek GmbH. The Climb. 2021. Available online: https://www.theclimbgame.com/ (accessed on 5 June 2022).

- inXile entertainment Inc. The Mage’s Tale on Oculus Rift. 2017. Available online: https://www.oculus.com/experiences/rift/1018772231550220/?locale=en_GB (accessed on 5 June 2022).

- Scioneaux, E., III. VR Sound Design for Touch Controllers. 2017. Available online: https://designingsound.org/2017/09/14/vr-sound-design-for-touch-controllers/ (accessed on 25 March 2022).

- Horswill, I.D. Lightweight Procedural Animation With Believable Physical Interactions. IEEE Trans. Comput. Intell. AI Games 2009, 1, 39–49.

- Shaker, N.; Togelius, J.; Nelson, M.J. Procedural Content Generation in Games; Springer: Cham, Switzerland, 2016.

- Plut, C.; Pasquier, P. Generative music in video games: State of the art, challenges, and prospects. Entertain. Comput. 2020, 33, 100337.

- Salamon, J.; MacConnell, D.; Cartwright, M.; Li, P.; Bello, J.P. Scaper: A library for soundscape synthesis and augmentation. In Proceedings of the 2017 IEEE Workshop on Applications of Signal Processing to Audio and Acoustics (WASPAA), New Paltz, NY, USA, 15–18 October 2017; pp. 344–348.

- Farnell, A. An introduction to procedural audio and its application in computer games. In Proceedings of the 2nd Conference on Interaction with Sound (Audio Mostly 2007), Ilmenau, Germany, 27–28 September 2007; Fraunhofer Institute for Digital Media Technology IDMT: Ilmenau, Germany, 2007.

- De Prisco, R.; Malandrino, D.; Zaccagnino, G.; Zaccagnino, R. An Evolutionary Composer for Real-Time Background Music. In Evolutionary and Biologically Inspired Music, Sound, Art and Design; Springer International Publishing: Cham, Switzerland, 2016; pp. 135–151.

- Washburn, M.; Khosmood, F. Dynamic Procedural Music Generation from NPC Attributes. In International Conference on the Foundations of Digital Games; Number Article 15 in FDG ’20; Association for Computing Machinery: New York, NY, USA, 2020; pp. 1–4.

- Electronic Arts Inc. Spore: What is Spore. 2009. Available online: https://www.spore.com/what/spore (accessed on 21 January 2022).

- Collins, N. Musical Form and Algorithmic Composition. Contemp. Music. Rev. 2009, 28, 103–114.

- Radvansky, G.A.; Krawietz, S.A.; Tamplin, A.K. Walking through doorways causes forgetting: Further explorations. Q. J. Exp. Psychol. 2011, 64, 1632–1645.

- Phillips, W. Game Music and Mood Attenuation: How Game Composers Can Enhance Virtual Presence (Pt. 4). 2019. Available online: https://www.gamedeveloper.com/audio/game-music-and-mood-attenuation-how-game-composers-can-enhance-virtual-presence-pt-4- (accessed on 5 June 2022).

- Phillips, W. Composing video game music for Virtual Reality: 3D versus 2D. 2018. Available online: https://www.gamedeveloper.com/audio/composing-video-game-music-for-virtual-reality-3d-versus-2d (accessed on 21 March 2022).

- Beat Games. Beat Saber. 2019. Available online: https://www.oculus.com/experiences/quest/2448060205267927 (accessed on 5 June 2022).

- Firelight Technologies Pty Ltd. FMOD Studio User Manual 2.02. 2021. Available online: https://www.fmod.com/resources/documentation-studio?version=2.02 (accessed on 24 January 2022).

- Owlchemy Labs. Vacation Simulator on Oculus Quest. 2019. Available online: https://www.oculus.com/experiences/quest/2393300320759737 (accessed on 5 June 2022).

- Acampora, G.; Loia, V.; Vitiello, A. Distributing emotional services in Ambient Intelligence through cognitive agents. Serv. Oriented Comput. Appl. 2011, 5, 17–35.

- Wen, X. Using deep learning approach and IoT architecture to build the intelligent music recommendation system. Soft Comput. 2021, 25, 3087–3096.

- De Prisco, R.; Guarino, A.; Lettieri, N.; Malandrino, D.; Zaccagnino, R. Providing music service in Ambient Intelligence: Experiments with gym users. Expert Syst. Appl. 2021, 177, 114951.

- Monstars Inc. and Resonair. Rez Infinite on Oculus Quest. 2020. Available online: https://www.oculus.com/experiences/quest/2610547289060480 (accessed on 5 June 2022).

- For Fun Labs. Eleven Table Tennis on Oculus Quest. 2020. Available online: https://www.oculus.com/experiences/quest/1995434190525828 (accessed on 5 June 2022).

- Forward Game Studios. Please, Don’t Touch Anything on Oculus Quest. 2019. Available online: https://www.oculus.com/experiences/quest/2706567592751319 (accessed on 5 June 2022).

- Robinson, C. Game Audio with FMOD and Unity; Routledge: New York, NY, USA, 2019.

- Brown, A.L.; Kang, J.; Gjestland, T. Towards standardization in soundscape preference assessment. Appl. Acoust. 2011, 72, 387–392.

- Kelly, I.; Gorzel, M.; Güngörmüsler, A. Efficient externalized audio reverberation with smooth transitioning. Tech. Discl. Commons 2017, 421.

- Stevens, F.; Murphy, D.T.; Savioja, L.; Välimäki, V. Modeling Sparsely Reflecting Outdoor Acoustic Scenes Using the Waveguide Web. IEEE/ACM Trans. Audio Speech Lang. Process. 2017, 25, 1566–1578.

- SideQuestVR Ltd. SideQuest: Oculus Quest Games & Apps including AppLab Games (Oculus App Lab). Available online: https://sidequestvr.com/ (accessed on 31 May 2022).

- Unity Technologies. Unity—Manual: Unity User Manual 2020.3 (LTS). 2021. Available online: https://docs.unity3d.com/Manual/ (accessed on 12 January 2021).

- Google LLC. FMOD. 2018. Available online: https://resonance-audio.github.io/resonance-audio/develop/fmod/getting-started.html (accessed on 7 December 2021).

- Gould, R. Let’s Test: 3D Audio Spatialization Plugins. 2018. Available online: https://designingsound.org/2018/03/29/lets-test-3d-audio-spatialization-plugins/ (accessed on 12 January 2021).

- Unity Technologies. About the Oculus XR Plugin. 2022. Available online: https://docs.unity3d.com/Packages/com.unity.xr.oculus@3.0/manual/index.html (accessed on 31 May 2022).

- Unity Technologies. XR Interaction Toolkit. 2021. Available online: https://docs.unity3d.com/Packages/com.unity.xr.interaction.toolkit@2.0/manual/index.html (accessed on 28 December 2021).

- ap Cenydd, L.; Headleand, C.J. Movement Modalities in Virtual Reality: A Case Study from Ocean Rift Examining the Best Practices in Accessibility, Comfort, and Immersion. IEEE Consum. Electron. Mag. 2019, 8, 30–35.

- Weißker, T.; Kunert, A.; Fröhlich, B.; Kulik, A. Spatial Updating and Simulator Sickness during Steering and Jumping in Immersive Virtual Environments. In Proceedings of the 2018 IEEE Conference on Virtual Reality and 3D User Interfaces (VR), Tuebingen/Reutlingen, Germany, 18–22 March 2018.

- Langbehn, E.; Steinicke, F. Space Walk: A Combination of Subtle Redirected Walking Techniques Integrated with Gameplay and Narration. ACM SIGGRAPH 2019 Emerging Technologies; Association for Computing Machinery: New York, NY, USA, 2019; Number Article 24 in SIGGRAPH ’19; pp. 1–2.

- Dear Reality. dearVR PRO. 2022. Available online: https://www.dear-reality.com/products/dearvr-pro (accessed on 16 May 2022).

- Çamci, A. Exploring the Effects of Diegetic and Non-diegetic Audiovisual Cues on Decision-making in Virtual Reality. In Proceedings of the 16th Sound & Music Computing Conference, Malaga, Spain, 28–31 May 2019; Isabel Barbancho, L.J., Tardón, A.P., Barbancho, A.M., Eds.; pp. 195–201.

- Elliott, B. Anything is possible: Managing feature creep in an innovation rich environment. In Proceedings of the 2007 IEEE International Engineering Management Conference, Lost Pines, TX, USA, 29 July–1 August 2007; pp. 304–307.

- Petrillo, F.; Pimenta, M.; Trindade, F.; Dietrich, C. What went wrong? A survey of problems in game development. Comput. Entertain. 2009, 7, 1–22.

- Arm Limited. Arm Guide for Unity Developers: Real-Time 3D Art Best Practices: Lighting. 2020. Available online: https://developer.arm.com/documentation/102109/latest (accessed on 11 May 2022).

- Unity Technologies. Coding in C# in Unity for Beginners. 2021. Available online: https://unity.com/how-to/learning-c-sharp-unity-beginners (accessed on 3 December 2021).

- Dannenberg, R.B. Languages for Computer Music. Front. Digit. Humanit. 2018, 5, 26.

- Lietzén, J.; Miettinen, J.; Kylliäinen, M.; Pajunen, S. Impact force excitation generated by an ISO tapping machine on wooden floors. Appl. Acoust. 2021, 175, 107821.

- Shekhar, G. Detect collision in Unity 3D. 2020. Available online: http://gyanendushekhar.com/2020/04/27/detect-collision-in-unity-3d/ (accessed on 21 January 2022).

- Li, H.; Chen, K.; Wang, L.; Liu, J.; Wan, B.; Zhou, B. Sound Source Separation Mechanisms of Different Deep Networks Explained from the Perspective of Auditory Perception. Appl. Sci. 2022, 12, 832.

| Title | Type | 6-DoF | 6-DoF Audio | Diegetic Music | Generative Music | Surrounding Ambiances | Music Mapping |
|---|---|---|---|---|---|---|---|
| Virtual Virtual Reality 2 [9] | Adventure | yes | partial | no | no | yes | no |
| Half-Life: Alyx [17] | FPS shooter | yes | partial | partial | no | yes | object |
| Zylia Concert Hall [19] | Music | yes | yes | yes | no | no | avatars |
| Settel et al., 2021 [21] | Music | yes | yes | yes | no | no | level meters |
| Méndez et al., 2018 [6] | Music | yes | yes | yes | no | no | avatars |
| Byrns et al., 2020 [25] | Music therapy | n/a | n/a | n/a | no | n/a | visualizations |
| The Climb 2 [26] | Sport | yes | partial | no | no | yes | no |
| The Mage’s Tale [27] | Action RPG | yes | partial | no | no | yes | no |
| De Prisco et al., 2016 [34] | n/a | n/a | n/a | no | evolutionary | n/a | visualizations |
| Washburn and Khosmood, 2020 [35] | n/a | n/a | n/a | n/a | multi-agent expert system | n/a | visualizations |
| Spore [36] | Simulation | no | no | no | rule-based | yes | objects |
| Beat Saber [41] | Music | yes | partial | no | no | no | multimodal |
| 12 Sentiments | Music | n/a | partial | partial | n/a | no | multimodal |
| Rez Infinite [47] | Music | no | no | partial | rule-based | music | multimodal |
| Eleven Table Tennis [48] | Sport | yes | yes | yes | no | n/a | object |
| Vacation Simulator [43] | Simulation | yes | yes | partial | no | yes | object |
| Please, Don’t Touch Anything! [49] | Puzzle | yes | partial | partial | no | yes | object |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Popp, C.; Murphy, D.T. Creating Audio Object-Focused Acoustic Environments for Room-Scale Virtual Reality. Appl. Sci. 2022, 12, 7306. https://doi.org/10.3390/app12147306
