1. Introduction

Existing for nearly 50 years, bike sharing systems (BSS) have recently grown sharply in both prevalence and popularity [1]. Similar to electric vehicles [2,3], bikes are environmentally friendly, and they have frequently been cited as a solution to the “last mile” problem [1,2,3,4,5]. Public bikes on the market are of two types: docked bikes and dockless bikes. The mainstream form of BSS in China is dockless, which allows users to pick up a bike and park it anywhere they want and is, therefore, highly convenient and flexible. However, dockless bikes create new urban issues. The first problem involves inappropriate parking behaviors. Some users park bikes at places that are unsuitable as parking spaces (e.g., on a pedestrian street or immediately next to a metro entrance), which has negative impacts [6,7,8]. The second problem is the imbalance caused by the tidal phenomenon [9]. Because parking locations are not restricted, bikes become spatially imbalanced over time. Moreover, the mismatch between users’ concentrated travel demand and the distribution of bikes is further exacerbated because the number of bikes fluctuates dramatically during rush hours [10,11,12].

To solve the imbalance problem mentioned above, vehicle-based methods and user-based methods have been proposed. In vehicle-based methods, operators deploy trucks to rebalance the bike inventory. For static rebalancing, Chemla et al. addressed the single-vehicle one-commodity capacitated pickup and delivery problem (SVOCPDP) by solving a relaxation of the original mixed integer programming (MIP) model with a branch-and-cut algorithm [13]. Dell’Amico et al. described three formulations based on the multiple traveling salesman problem [12] and solved the problem with a branch-and-cut algorithm that combines the three formulations and invokes separation procedures. Liu et al. studied a dynamic BSS with multiple heterogeneous vehicles, depots, and visits and concluded that enhanced chemical reaction optimization (CRO) yielded better solutions than preliminary CRO [14]. Schuijbroek et al. proposed a mixed-integer programming approach that decomposes the multivehicle rebalancing problem into single-vehicle problems [15]. They also provided a “cluster first, route second” heuristic to reduce the running time. Other static rebalancing studies can be found in [16,17,18,19,20]. Because a static rebalancing plan is difficult to adjust in real time to fluctuating demand [20], dynamic rebalancing solutions began to appear. For dynamic rebalancing, Caggiani et al. performed dynamic bike rebalancing at a constant time interval, aiming to increase user satisfaction while keeping rebalancing costs low [21]. To decide how many bikes to reposition and at which station to rebalance, Legros et al. developed an implementable decision-support tool [22]. Mellou et al. proposed a novel mixed-integer programming formulation for the dynamic rebalancing problem and provided a linear programming model to capture the bike flows from all trips [23,24]. A rebalancing framework for the dynamic bike sharing problem was presented in [25]. These methods predict upcoming critical statuses and plan the most effective rebalancing operations using an entirely data-driven approach. However, their ability to predict critical stations is limited because they do not model temporal sequences, especially for long-term predictions. Moreover, the rebalancing effects of vehicle-based approaches depend heavily on the accuracy of demand prediction [26]. Additionally, because of the maintenance, traveling, and labor costs of trucks, the truck-based approach can rapidly deplete a limited budget.

User-based methods reposition bikes from the perspective of demand/inventory management [27]. They usually provide incentives or implement regulations to encourage users to participate in bike rebalancing. Existing user-based rebalancing methods can be divided into three types: best-of-two regulation, parking space reservation, and dynamic pricing incentives [27]. Fricker et al. presented a two-choice model in which every user is offered two station choices at the time of a rental and is given an incentive if he/she chooses the less loaded station [28]. They showed that even if only a small portion of users make the intended choices, the number of unbalanced stations is dramatically reduced. Kaspi et al. investigated the parking space reservation problem by comparing the performance of complete, partial, and no parking space reservation policies, and they found that the complete parking reservation policy achieved the lowest total excess time [29]. Dynamic pricing is the most commonly used incentive for responding to rapid changes in bike inventory levels. Fricker et al. studied the V+ scheme, which induces users to avoid certain stations and prefer others [30]: users who return a bike to one of the hundred uphill stations receive fifteen minutes of additional free riding time. Using a fluid approximation, Waserhole et al. also developed a pricing strategy to solve the imbalance problem [31]. A graph-theoretic approach that rewards users was employed to solve the imbalance problem and maximize the profit of the system [26]. Chemla et al. dynamically determined the incentive price at each station to maximize the bike service level [32]. Pfrommer et al. and Singla et al. considered the current and projected demand–supply conditions in the incentive mechanism design [33,34]. Specifically, Pfrommer et al. combined the vehicle-based and user-based strategies by computing dynamically varying rewards for customers based on the current and predicted bike demand–supply conditions of the bike sharing system [33]. Singla et al. presented a crowdsourcing mechanism based on regret minimization in online learning [34]. Haider et al. also presented an incentive mechanism that encourages users to pick up/drop off bikes at neighboring stations so as to create hub stations, which reduces the need for vehicle-based strategies [35]. Li and Liu formulated a mixed-integer nonlinear and nonconvex problem to design the rebalancing strategy under a static scenario. Compared with vehicle-based approaches, user-based approaches offer more flexible ways to rebalance the system [36,37,38].

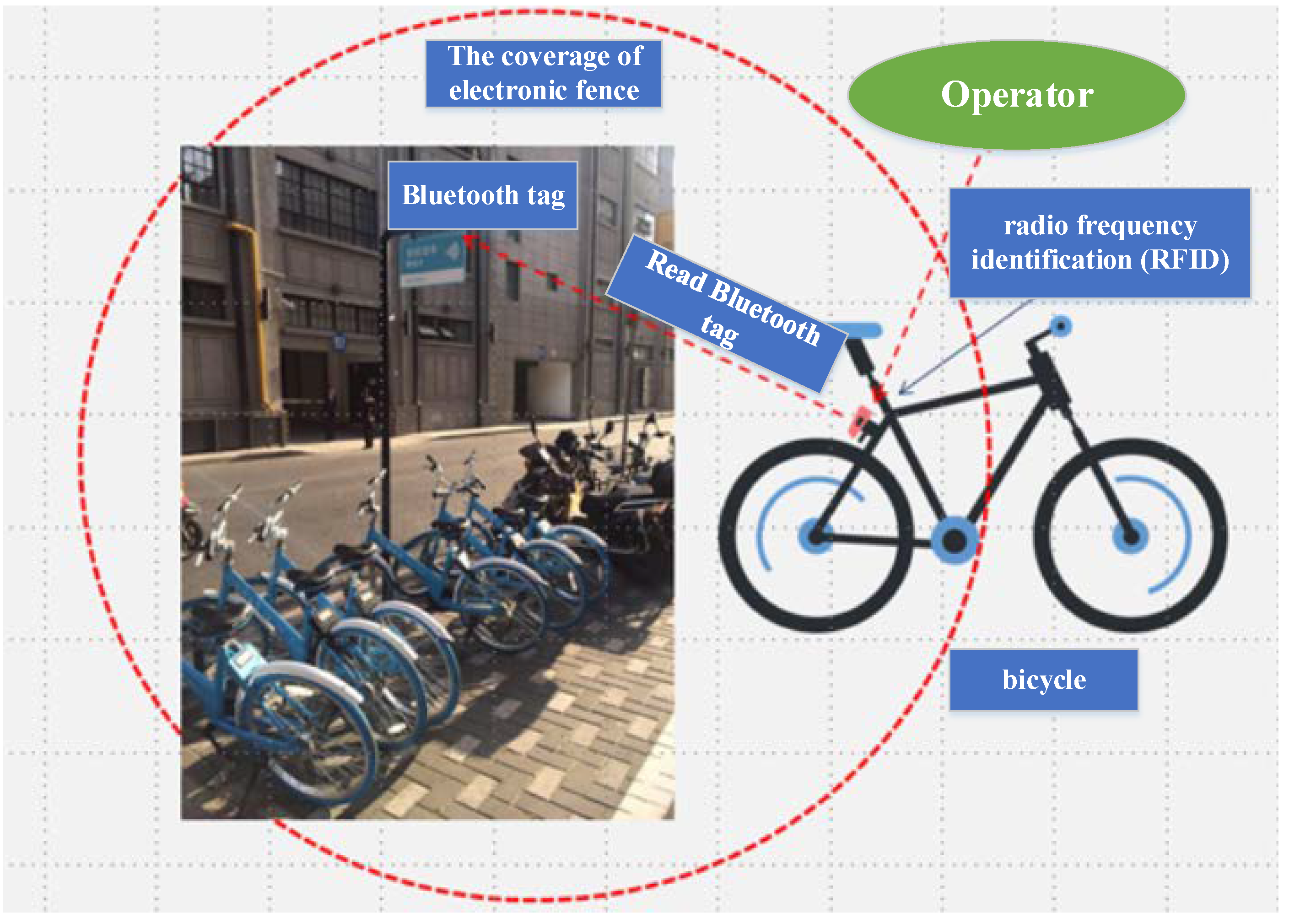

According to the literature above, current vehicle-based methods for bike rebalancing mainly focus on strategy design, either as a tactical problem (i.e., bike rebalancing under a static scenario) or as an operational problem (i.e., bike rebalancing under a dynamic scenario). However, vehicle-based methods operate on a routine basis, so unmet demand cannot be addressed until the next rebalancing operation. By incentivizing users to rebalance bikes in real time, user-based rebalancing strategies effectively improve the service level of a bike sharing system. However, the majority of existing user-based rebalancing studies employ a post-price model-based incentive mechanism, which increases the operating cost of the bike sharing system. To solve the inappropriate parking problem, electric fences have been built to regulate parking behaviors. An electric fence is a predetermined “virtual fence” that requires no physical installation. Users who park bikes outside the allowed areas cannot lock them and continue to be charged [13,39]. In this way, the application guides users to proper parking locations. Electric fence policies and technology have been recommended in several important government documents, such as the “National Guidance to Encourage and Regulate the Development of the Internet-based Dockless Bike-sharing Service”, and they have been tested as pilot projects in several cities in China since early 2017 [40].
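The lock rule described above amounts to a point-in-polygon test. As a minimal sketch, assuming each fence is stored as a polygon in planar (projected) coordinates, the standard ray-casting algorithm below decides whether a bike may be locked. `inside_fence` and `can_lock` are hypothetical names, not any operator's actual API, and a real system would also have to tolerate GPS error.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def inside_fence(point: Point, fence: List[Point]) -> bool:
    """Ray-casting test: True if `point` lies inside the polygon `fence`.

    Points lying exactly on an edge may be classified either way.
    """
    x, y = point
    inside = False
    n = len(fence)
    for i in range(n):
        x1, y1 = fence[i]
        x2, y2 = fence[(i + 1) % n]
        # Only edges that straddle the horizontal line through the point count.
        if (y1 > y) != (y2 > y):
            # x-coordinate where this edge crosses that horizontal line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside  # each crossing to the right flips the parity
    return inside


def can_lock(position: Point, fences: List[List[Point]]) -> bool:
    """Hypothetical lock rule: a bike may be locked only inside some fence."""
    return any(inside_fence(position, fence) for fence in fences)
```

Each fence costs one pass over its vertices; a deployment might add a tolerance band around the boundary so that bikes parked right at a fence edge are handled consistently.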

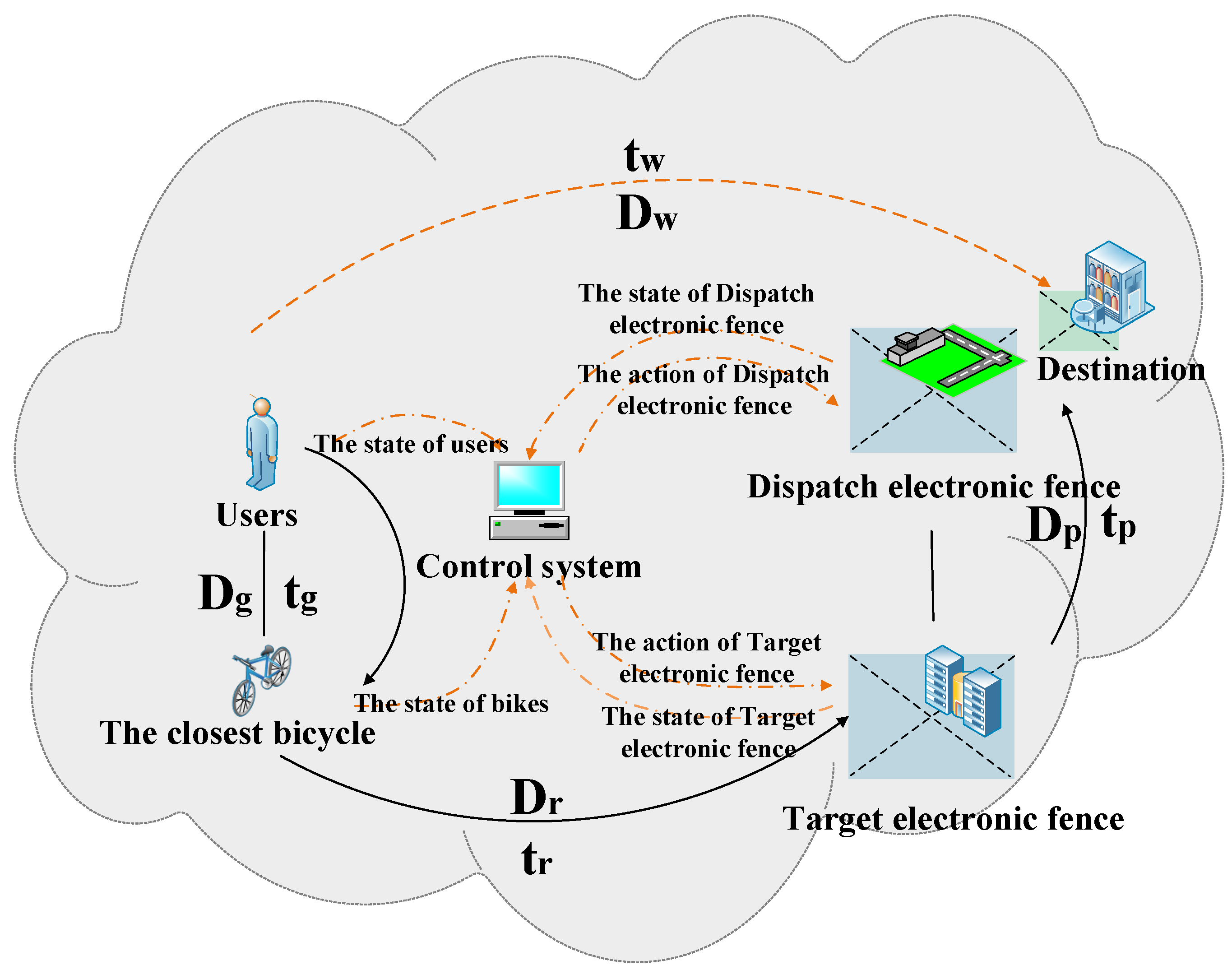

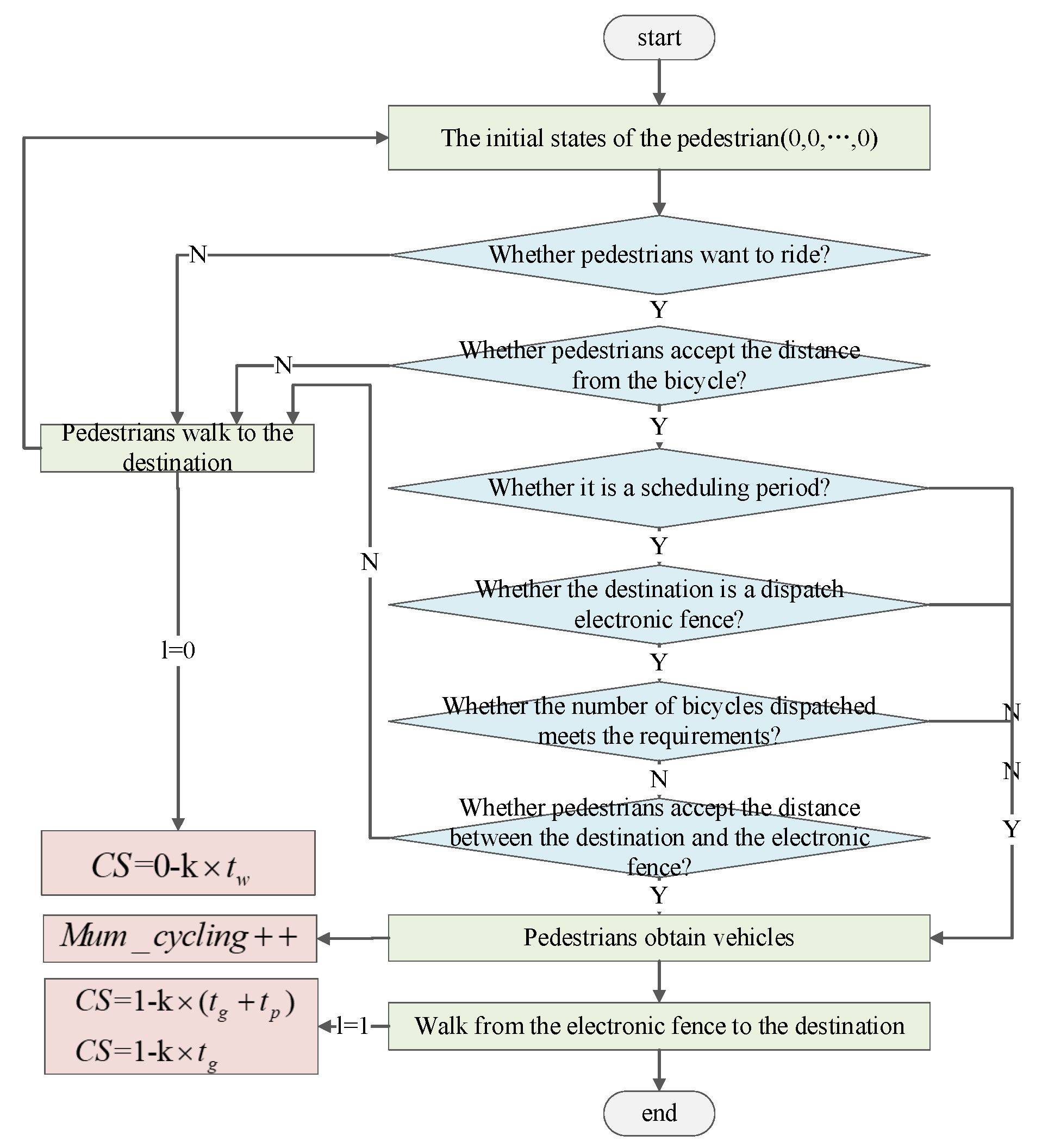

Considering the application of electric fences in recent years, we propose a scheduling method based on electric fences. As a dynamic method that considers the real-time usage of a BSS, it adjusts the capacities of electric fences based on real-time information, which guides users to return bikes to areas within dynamic electric fences where demand is more urgent. The proposed model-free intelligent scheduling approach is based on the deep Q-network (DQN), which can adapt to the changing distributions of customer arrivals, active bikes, bike locations, and users’ valuations of total travel time [41,42,43]. To the best of our knowledge, this is the first work that uses electric fences to address the imbalance problem faced by BSS and casts this problem as a reinforcement learning problem. The remainder of this paper is organized as follows. First, a bike sharing system modeling mechanism is provided to describe the structure and operations of BSS, and the goals and constraints of our optimization approach are proposed. Section 3 then presents an intelligent dispatching solution and optimization model based on dynamic electric fences. Finally, taking the BSS at Beihang University as an example, Section 4 derives the scheduling strategy for electric fences and verifies the effectiveness of the scheduling scheme in AnyLogic.

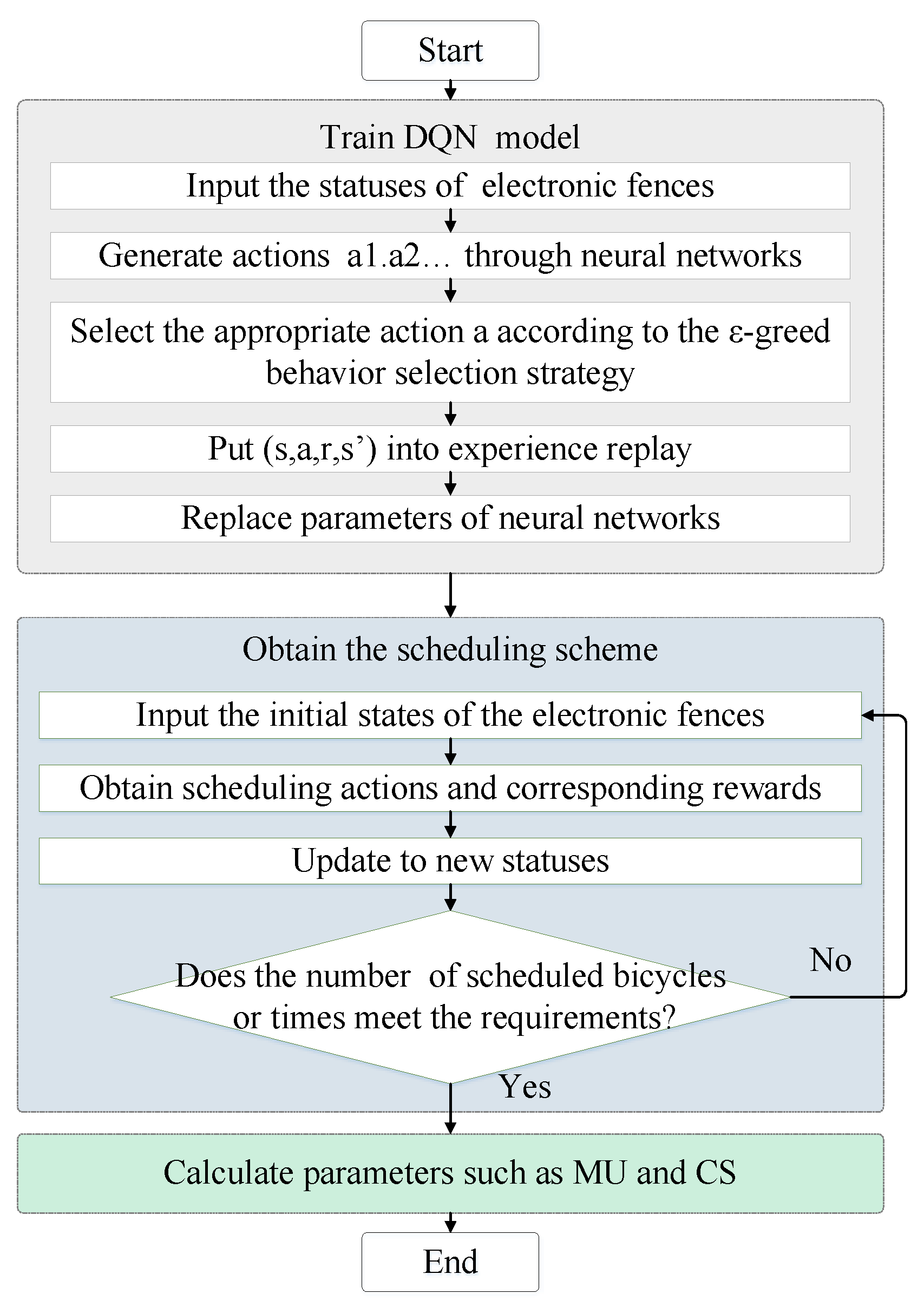

3. Intelligent Electric Fence Dispatching Algorithm Based on DQN

According to the description of the scheduling process above, the scheduling strategies given by the control system are the core of the scheduling method. We therefore need a set of electric fence scheduling schemes that rebalance the system by guiding users to park bikes in the areas with the greatest demand. Because DQN does not require solving a complex system model and adapts well to dynamic environments, an electric fence scheduling algorithm based on DQN is proposed in this section. The flow diagram of DQN is shown in Algorithm 1.

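| Algorithm 1 The flow diagram of DQN [41] |
|---|
| 1: Initialize the online network with weights $\theta$ |
| 2: Initialize the target network with weights $\theta^{-} = \theta$ |
| 3: Initialize the total number of scheduled bikes $num$ |
| 4: Initialize the total number of scheduling requests |
| 5: Initialize the capacity of the experience pool as $N$ |
| 6: Initialize the batch capacity as $m$ |
| 7: While the maximum number of episodes has not been reached do: |
| 8: Initialize the state of the electric fence as $s_t$ |
| 9: If $num$ has not reached the end condition and the number of scheduling requests has not reached $n$: |
| 10: With probability $\varepsilon$, select an action $a_t$ randomly according to the $\varepsilon$-greedy strategy; |
| 11: Otherwise, select $a_t = \arg\max_{a} Q(s_t, a; \theta)$; |
| 12: Execute $a_t$, observe the reward $r_t$, and set the next state as $s_{t+1}$; |
| 13: Store $(s_t, a_t, r_t, s_{t+1})$ in the experience replay; |
| 14: Randomly extract $m$ samples from the experience pool and obtain $Q_{\mathrm{target}} = r + \gamma \max_{a'} Q(s', a'; \theta^{-})$ from the target network; |
| 15: Obtain $Q(s, a; \theta)$ from the online network and calculate the value of the loss; |
| 16: Update parameter $\theta$ of the online network by gradient descent; |
| 17: |
| 18: Set $\theta^{-} \leftarrow \theta$ every $\tau$ steps; |
| 19: End if |
| 20: End while |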

Figure 4 is the flow chart of the electric fences’ training and scheduling processes. The proposed scheduling algorithm includes three parts: (1) training the reinforcement learning model (the DQN-based neural network); (2) obtaining the electric fence scheduling scheme; and (3) evaluating the effectiveness of the scheme.

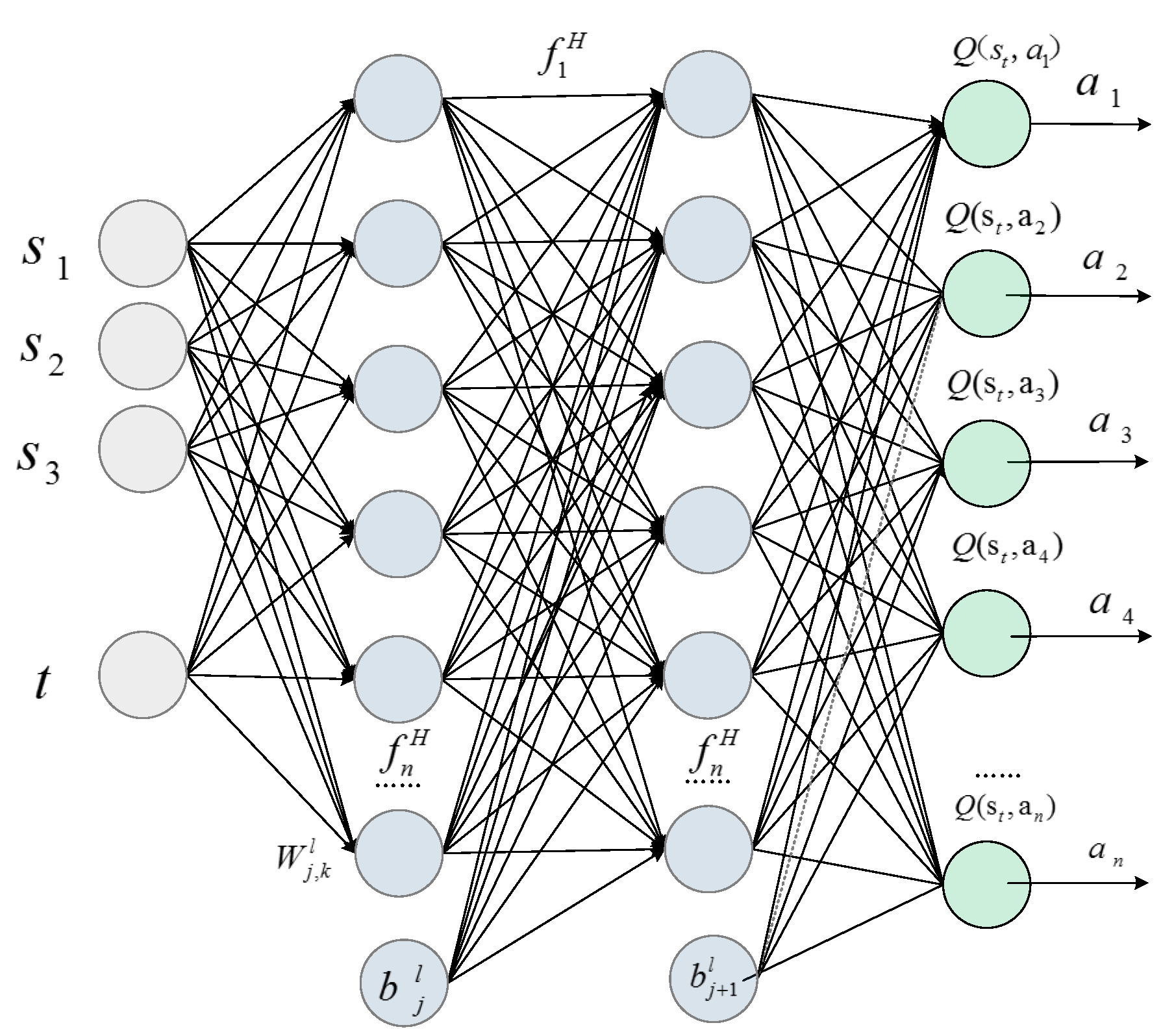

3.1. Generating Actions through Neural Networks

3.1.1. Forward Propagation

Figure 5 shows the neural network used to obtain the value function, in which the leftmost and rightmost columns are the input and output layers, respectively, and the columns in between are hidden layers. The depth of the neural network is 2. In the input, $s_1$ represents the number of scheduled bikes for the target electric fence at time $t$, and $s_2, s_3, \ldots, s_j$ represent the numbers of bikes dispatched by the dispatch electric fences. The network outputs action $a_k$ at time $t + 1$ according to the corresponding $Q$-value and the $\varepsilon$-greedy strategy. The output of the $j$th neuron in the $l$th layer is

$$x_j^{l} = f\left(\sum_{k} w_{jk}^{l}\, x_k^{l-1} + b_j^{l}\right)$$

where $w_{jk}^{l}$ represents the multiplying factor (weight) between the $k$th element in the $(l-1)$th layer and the $j$th element in the $l$th layer, $b_j^{l}$ is a constant (normally referred to as a threshold or bias), and $x_k^{l-1}$ is the input from the $k$th element of the previous layer. Because of its fast convergence and simple calculation, the activation function $f$ used in this case is the rectified linear unit (ReLU):

$$f(z) = \max(0, z)$$
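To make the layer equation concrete, the sketch below runs a forward pass with plain Python lists. It assumes the ReLU activation $f(z) = \max(0, z)$ on hidden layers and a linear output layer with one Q-value per action (the usual DQN choice, not stated explicitly in the text); the three-element state (target fence plus two dispatch fences) and all weights are made-up illustrative values, and the function names are hypothetical.

```python
from typing import List, Sequence, Tuple

Layer = Tuple[List[List[float]], List[float]]  # (weights W[j][k], biases b[j])


def relu(z: float) -> float:
    """Assumed activation f(z) = max(0, z)."""
    return max(0.0, z)


def affine(x_prev: Sequence[float], layer: Layer) -> List[float]:
    """Pre-activations sum_k w_jk * x_k + b_j for every neuron j in the layer."""
    W, b = layer
    return [sum(w_jk * x_k for w_jk, x_k in zip(row, x_prev)) + b_j
            for row, b_j in zip(W, b)]


def q_values(state: Sequence[float], layers: List[Layer]) -> List[float]:
    """Forward pass: ReLU on hidden layers, linear output (one Q-value per action)."""
    x = list(state)
    for layer in layers[:-1]:
        x = [relu(z) for z in affine(x, layer)]
    return affine(x, layers[-1])
```

With four output neurons, the returned list holds one Q-value per scheduling action; the ε-greedy rule then picks among them.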

3.1.2. Bias Calculation

Markov decision processes (MDPs) offer a standard formalism for describing multistage decision making in a probabilistic environment. More precisely, an MDP is a discrete-time stochastic control process: at each time step, the process is in some state $s$, and the decision maker chooses a feasible action $a$. The process then moves to a new state $s'$ and gives the decision maker a corresponding reward $R_a(s, s')$. Rewards provide feedback to the reinforcement learning model about the performance induced by the previous actions, so the reward must be defined carefully to guide the learning process toward the best action policy. In our system, the main goal is to increase customer satisfaction. Thus, we define the rewards as follows:

To perform dispatch actions under different vehicle states, we use deep Q-networks to dynamically generate optimized values. This learning technique is widely used in modern decision making because it adapts to the dynamic features of a system. The optimal action value function for an electric fence is defined as the maximum expected discounted reward achievable by any policy $\pi$. Thus, we have

$$Q^{*}(s, a) = \max_{\pi} \mathbb{E}\left[\sum_{k=0}^{\infty} \gamma^{k} r_{t+k} \,\middle|\, s_t = s,\ a_t = a,\ \pi\right]$$

where $\gamma$ is the discount factor for future rewards. At any time slot $t$, the dispatcher monitors the current state $s_t$ and feeds it to the neural network to generate an action. Note that we do not use a full representation of $s_t$ to compute the expectation; rather, we use a neural network to approximate the $Q$ function.
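The quantity inside the expectation is the discounted return. The hypothetical helper below computes it for one finite reward sequence, for example one reward per half-hour timeslot of a scheduling episode. It yields a single sample of that sum, not the expectation or the maximum over policies, and the reward values used with it are placeholders because the reward definition is specific to this paper.

```python
from typing import Sequence


def discounted_return(rewards: Sequence[float], gamma: float) -> float:
    """G_t = r_t + gamma * r_{t+1} + gamma^2 * r_{t+2} + ... for one episode.

    Accumulated backwards so that each reward is multiplied by gamma once
    for every step it lies in the future.
    """
    if not 0.0 <= gamma <= 1.0:
        raise ValueError("gamma must lie in [0, 1]")
    g = 0.0
    for r in reversed(rewards):
        g = r + gamma * g
    return g
```

For instance, three unit rewards with γ = 0.5 give 1 + 0.5 + 0.25 = 1.75, while γ = 0 keeps only the immediate reward.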

For each electric fence, the action that maximizes the output of the neural network is taken. The learning process starts with zero knowledge, and actions are chosen by following the $\varepsilon$-greedy method. After choosing the action and receiving the reward, the electric fence updates the $Q$-value with a learning factor $\alpha$ as follows:

$$Q(s_t, a_t) \leftarrow Q(s_t, a_t) + \alpha\left[r_t + \gamma \max_{a'} Q(s_{t+1}, a') - Q(s_t, a_t)\right]$$

where $\gamma \in [0, 1]$ is a discount factor that defines the discounted reward for the future. This technique is known as the value iteration algorithm, and it converges to the optimal action value function, $Q_i \rightarrow Q^{*}$ as $i \rightarrow \infty$. The action value function can be represented with a neural network, which takes the current system state and action as input and outputs the corresponding $Q$-value.
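A tabular version makes the update rule easy to check by hand. This is a minimal sketch, assuming states and actions are hashable labels and that unseen state–action pairs start at zero (the "zero knowledge" start); in the proposed method the table is replaced by the neural network, and `q_update` is a hypothetical name.

```python
from collections import defaultdict
from typing import Dict, Hashable, Sequence, Tuple

QTable = Dict[Tuple[Hashable, Hashable], float]


def q_update(Q: QTable, s, a, r: float, s_next, actions: Sequence,
             alpha: float, gamma: float) -> float:
    """One tabular Q-learning step; returns the temporal-difference error.

    Q(s, a) <- Q(s, a) + alpha * (r + gamma * max_a' Q(s', a') - Q(s, a))
    """
    best_next = max(Q[(s_next, a2)] for a2 in actions)
    td_error = r + gamma * best_next - Q[(s, a)]
    Q[(s, a)] += alpha * td_error
    return td_error


# Usage: a defaultdict(float) returns 0.0 for pairs that have never been visited.
Q = defaultdict(float)
```

The learning factor α scales how far each estimate moves toward the one-step target; α = 1 would overwrite the old estimate entirely.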

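To update the parameters in the neural network, a target value is defined to guide the update process:

$$Q_{\mathrm{target}}(s, a) = r + \gamma \max_{a'} Q(s', a'; \theta^{-})$$

Let $Q_{\mathrm{target}}(s, a)$ denote the target $Q$-value at state $s$ when taking action $a$, where $s'$ is the next state and $\theta^{-}$ denotes the parameters of the target network (Section 3.4). The neural network is updated by minimizing the mean square error (MSE):

$$L(\theta) = \mathbb{E}\left[\left(Q_{\mathrm{target}}(s, a) - Q(s, a; \theta)\right)^{2}\right]$$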
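For a sampled minibatch, the target values and the MSE can be computed as below. `next_q_target` is assumed to hold the target network's Q-values for each next state. Treating the last timeslot of an episode as terminal (`done=True`, so the target is the reward alone) is a common DQN convention that we assume here; the text does not specify it. Both function names are hypothetical.

```python
from typing import List, Sequence


def td_targets(rewards: Sequence[float],
               next_q_target: Sequence[Sequence[float]],
               gamma: float,
               done: Sequence[bool]) -> List[float]:
    """Q_target = r + gamma * max_a' Q(s', a'; theta^-), or just r at episode end."""
    return [r if d else r + gamma * max(q_next)
            for r, q_next, d in zip(rewards, next_q_target, done)]


def mse_loss(targets: Sequence[float], predictions: Sequence[float]) -> float:
    """Mean of (Q_target - Q(s, a; theta))^2 over the minibatch."""
    return sum((y - q) ** 2 for y, q in zip(targets, predictions)) / len(targets)
```

Averaging over the batch is the sample estimate of the expectation in the MSE definition.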

3.1.3. Bias Reduction

Since the output of the neural network is $Q$ but the expected target value is $\mathit{TargetQ}$, the loss function of the value network is $loss = (\mathit{TargetQ} - Q)^{2}$. The objective is to find the set of network weights that makes $loss$ as small as possible. This is accomplished by gradient descent, which repeatedly computes the gradient and updates the network's weights toward a minimum of the loss (in general a local one, because the loss is nonconvex in the weights). Differentiating the loss function with respect to the network parameters $\theta_i$ at iteration $i$ gives the gradient, as expressed in (11):

$$\nabla_{\theta_i} L_i(\theta_i) = -2\,\mathbb{E}\left[\left(r + \gamma \max_{a'} Q(s', a'; \theta^{-}) - Q(s, a; \theta_i)\right)\nabla_{\theta_i} Q(s, a; \theta_i)\right] \tag{11}$$
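To show what one gradient-descent step does, the sketch below swaps the neural network for a linear approximator $Q(s, a; \theta) = \theta_a^{\top} s$, for which $\nabla_{\theta_a} Q = s$ and the gradient in (11) can be written out by hand. It uses a single sample instead of the expectation and treats the target as a constant, as when it comes from the target network; `sgd_step` is a hypothetical helper, not the authors' implementation.

```python
from typing import Dict, List, Sequence


def sgd_step(theta: Dict[int, List[float]], s: Sequence[float], a: int,
             target: float, lr: float) -> float:
    """One gradient-descent step on (target - Q(s, a; theta))^2 for a linear Q.

    With Q(s, a; theta) = theta[a] . s, the gradient w.r.t. theta[a] is
    -2 * (target - Q) * s, so descending it adds lr * 2 * (target - Q) * s.
    The target is treated as a constant, as when it comes from theta^-.
    Returns the squared error before the update.
    """
    q = sum(w * x for w, x in zip(theta[a], s))
    error = target - q
    # Only the weights of the action actually taken receive a gradient.
    theta[a] = [w + lr * 2.0 * error * x for w, x in zip(theta[a], s)]
    return error ** 2
```

Repeating the step on the same sample shrinks the squared error, which is the behavior the loss curves in Section 4 are meant to show at scale.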

3.2. ε-Greedy Strategy

To prevent electric fences from always (i.e., with 100% probability) selecting the action with the maximum $Q$-value, the DQN algorithm uses a random exploration strategy, the $\varepsilon$-greedy scheme, under which electric fences select actions randomly with probability $\varepsilon$. This exploration reduces the risk of the algorithm settling on a locally optimal solution instead of a globally optimal one. Under this policy, the agent chooses the action with the highest $Q$-value with probability $1 - \varepsilon$; otherwise, it selects a random action.
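A minimal ε-greedy selector over a list of Q-values might look as follows; passing an explicit `random.Random` instance keeps runs reproducible. Mapping indices 0 to 3 to actions a to d of Section 4.3 would be one natural choice, but that mapping and the function name are our assumptions.

```python
import random
from typing import Sequence


def epsilon_greedy(q_values: Sequence[float], epsilon: float,
                   rng: random.Random) -> int:
    """Return a random action index with probability epsilon, else the argmax."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))  # explore
    return max(range(len(q_values)), key=lambda i: q_values[i])  # exploit
```

Many DQN implementations also decay ε during training so that exploration shrinks as the estimates improve; the text does not say whether ε is fixed or decayed here.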

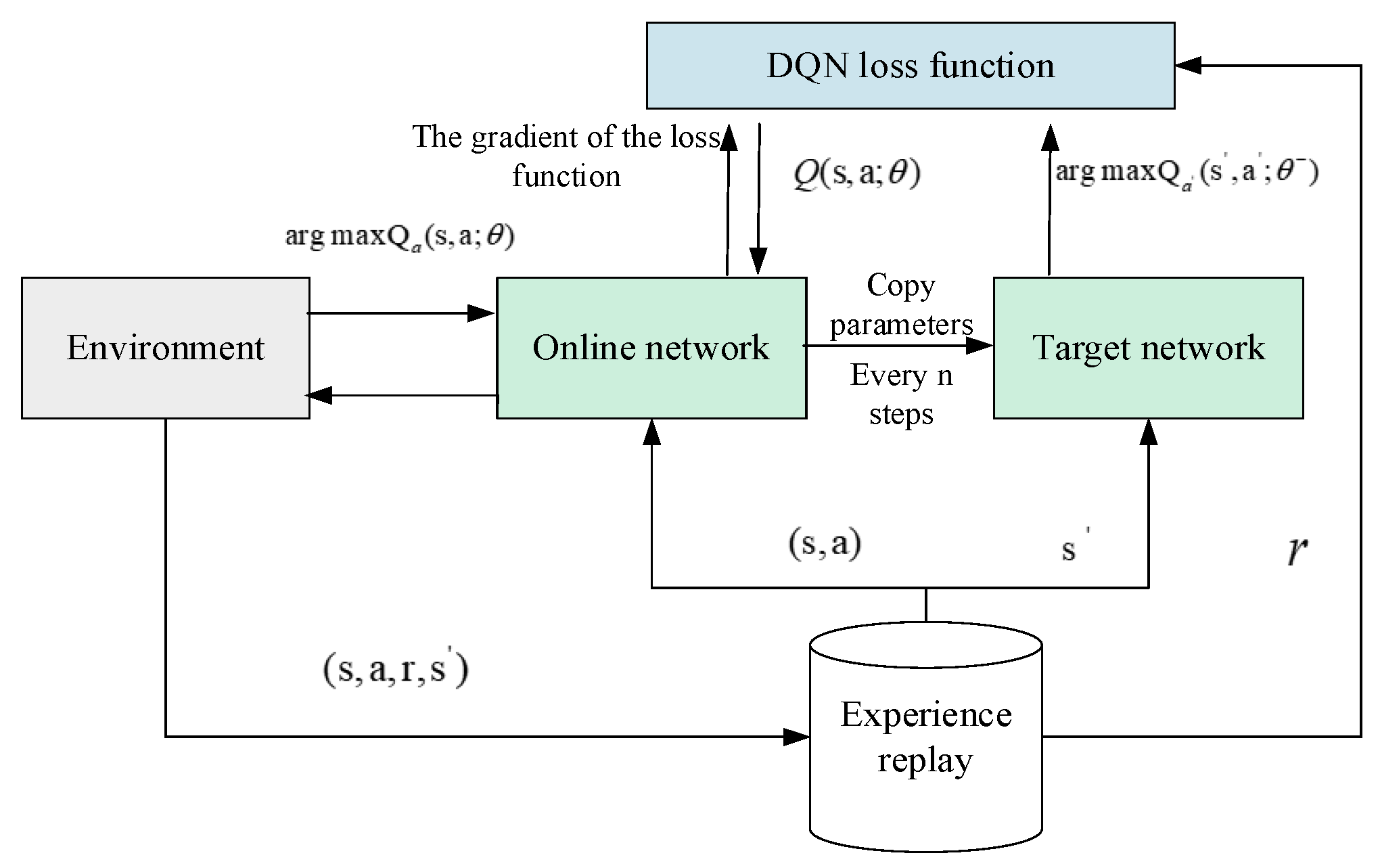

3.3. Experience Replay

In a DQN, the target value of a sample is computed from the target formula, whose parameters depend on the predicted $Q$-values, so the target value of $Q$ depends on the predicted value of $Q$. In addition, consecutive samples collected while interacting with the environment are strongly correlated. To break the correlations between consecutive training samples, experience replay is used. For any initial state, the corresponding action is selected according to the $\varepsilon$-greedy strategy to obtain an immediate reward, and the system moves to the new state; then, the transition $(s_t, a_t, r_t, s_{t+1})$ is stored in the experience replay. Once the experience replay contains enough samples, a batch of samples is drawn from it at random to update the online $Q$ network.
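The experience pool can be sketched as a bounded queue of transitions $(s_t, a_t, r_t, s_{t+1})$ from which minibatches are drawn uniformly at random. The class below is a hypothetical illustration using only the standard library; its capacity plays the role of the experience-pool capacity and `sample(m)` that of drawing a batch of size $m$ in Algorithm 1.

```python
import random
from collections import deque, namedtuple
from typing import List

Transition = namedtuple("Transition", ["state", "action", "reward", "next_state"])


class ReplayBuffer:
    """Fixed-capacity experience pool; the oldest transitions are evicted first."""

    def __init__(self, capacity: int) -> None:
        self.memory = deque(maxlen=capacity)

    def push(self, state, action, reward, next_state) -> None:
        """Store one transition (s_t, a_t, r_t, s_{t+1})."""
        self.memory.append(Transition(state, action, reward, next_state))

    def ready(self, batch_size: int) -> bool:
        """True once enough samples exist to draw a minibatch."""
        return len(self.memory) >= batch_size

    def sample(self, batch_size: int) -> List[Transition]:
        """Uniformly sample a minibatch without replacement."""
        return random.sample(self.memory, batch_size)

    def __len__(self) -> int:
        return len(self.memory)
```

Sampling uniformly from a large pool mixes transitions from different times of day, which is what decorrelates the minibatch.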

3.4. Parameter Update

Because the target value depends on the same kind of parameters that are being updated, two neural networks with the same structure but different parameters are established to stabilize training. The parameters of the online network are always up to date, and its training samples come from the experience replay. The target network with parameters $\theta^{-}$ is identical to the online network, except that its parameters are copied from the online network every $\tau$ steps, so that $\theta^{-} = \theta$, and are kept fixed during all other steps. The training process of the DQN is shown in Figure 6.
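The periodic copy $\theta^{-} \leftarrow \theta$ can be illustrated without any neural-network library. In this sketch, parameters are plain dictionaries, `gradient_step` is a hypothetical stand-in for one online-network update, and `copy.deepcopy` ensures the target network does not alias the online parameters between synchronizations.

```python
import copy
from typing import Callable, Dict, List, Tuple

Params = Dict[str, List[float]]


def train_with_target_sync(online: Params, total_steps: int, tau: int,
                           gradient_step: Callable[[Params], None]) -> Tuple[Params, int]:
    """Update the online parameters every step; copy them to the target every tau steps.

    Returns the final target parameters and the number of synchronizations.
    """
    target = copy.deepcopy(online)  # theta^- = theta at initialization
    syncs = 0
    for step in range(1, total_steps + 1):
        gradient_step(online)  # only theta changes here; theta^- stays fixed
        if step % tau == 0:
            target = copy.deepcopy(online)  # theta^- <- theta
            syncs += 1
    return target, syncs
```

With 10 steps and τ = 4, the target is refreshed after steps 4 and 8, so it lags the online network by two updates at the end.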

4. Case Study

A campus is a densely populated place with a very serious bike imbalance problem due to the tidal characteristics of people's travel, especially during the noon peak period. Taking the bike sharing system at Beihang University as an example, we obtain a scheduling strategy using the proposed intelligent scheduling method and verify its effectiveness in this case study.

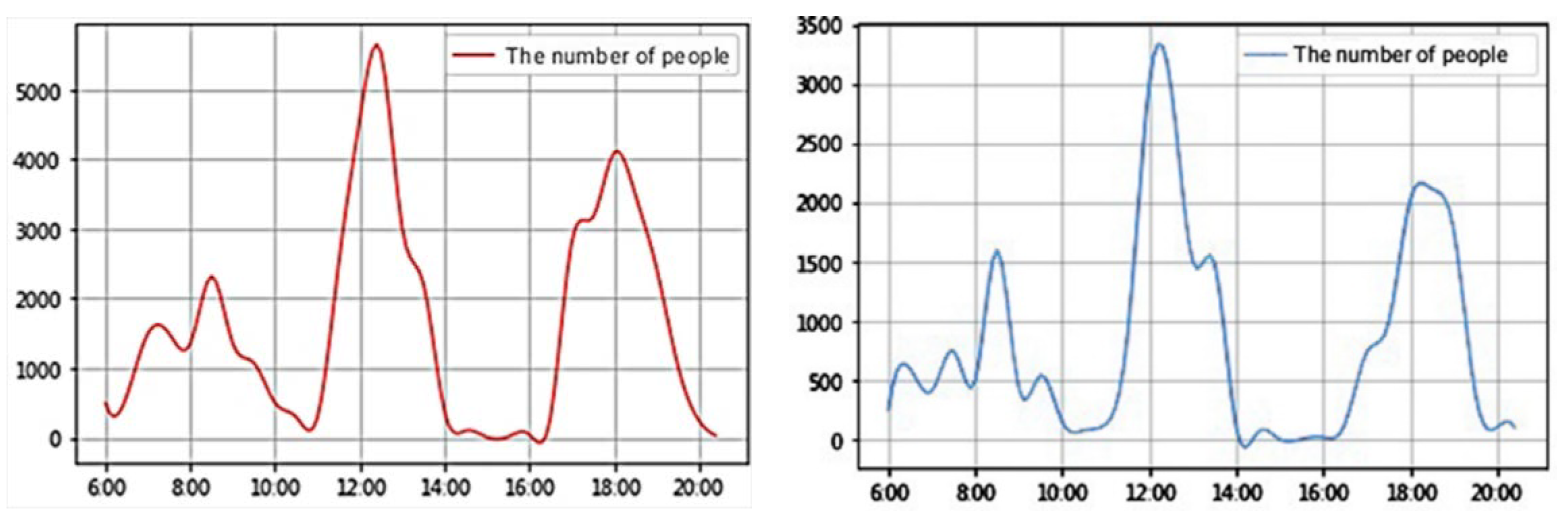

4.1. User Travel Data

By analyzing the data from questionnaire surveys, a data review, and field observations, users’ travel patterns can be determined.

Figure 7 shows the traffic flows of users from classrooms to canteens on working days and weekends. According to Figure 7, students' travel demand is concentrated and tidal because their daily schedules are similar. The travel flows increase significantly during the breakfast, lunch, and dinner periods, and the tidal phenomenon is very obvious on both weekdays and weekends. The tidal phenomenon during lunch is more serious than that during breakfast and dinner. Therefore, a scheduling scheme is formulated to alleviate the bike imbalance during lunch.

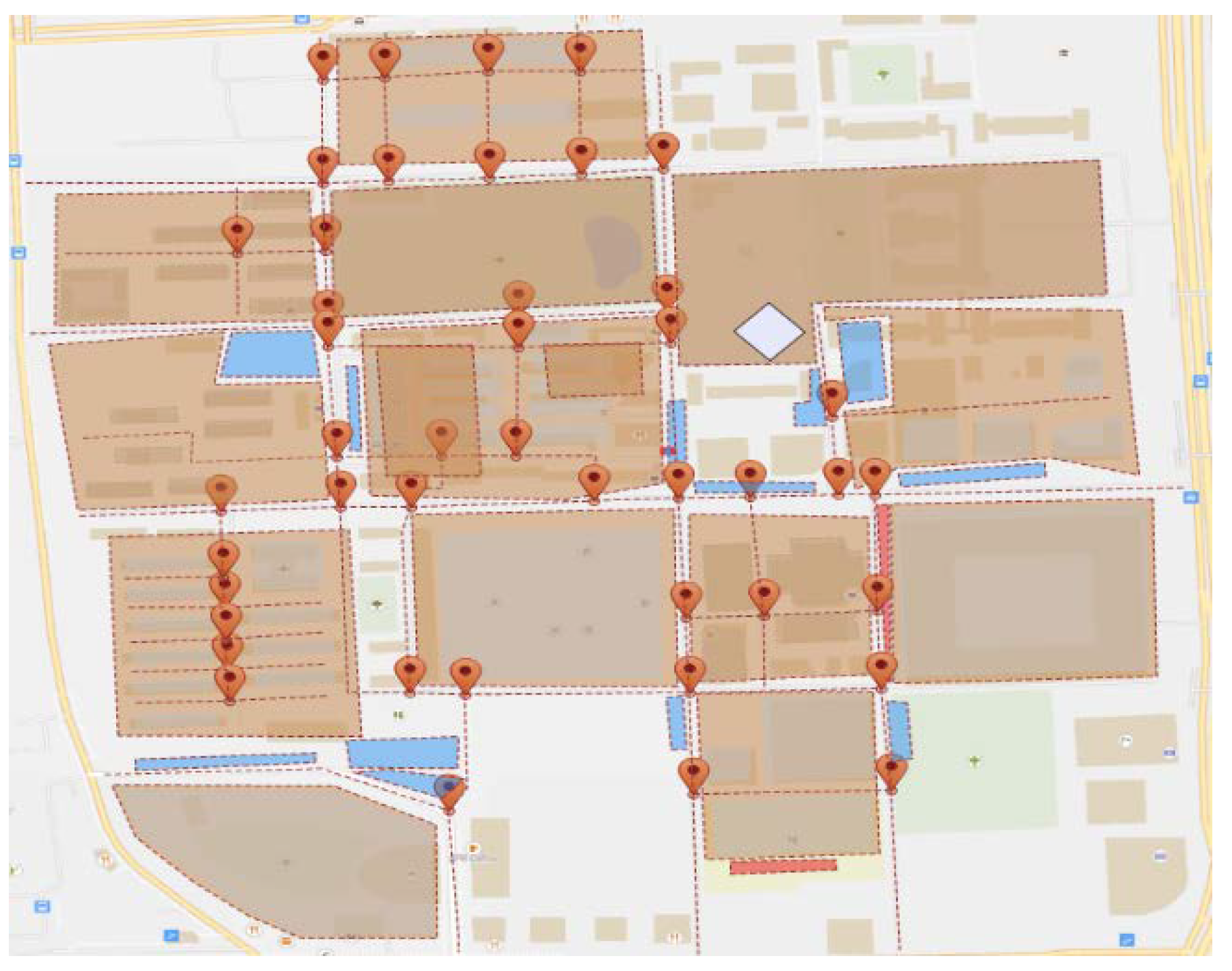

4.2. Simulation Model

Because applying our scheduling strategy in the real system would require substantial financial resources, an AnyLogic model of the bike sharing system is built. Applying the scheduling strategy in this simulation model allows its effectiveness to be verified. The simulation model interface, which also shows the layout of Beihang University, is shown in Figure 8. By modeling the operations of the BSS at Beihang University, the effects of a scheduling strategy, such as the improvements in CS and MU, can be observed. The details of the model, including riding speed, walking speed, etc., are shown in Table 2.

4.3. Scheduling Strategy

Taking the bike imbalance problem on working days as an example, we simulate the peak travel patterns of students in the simulation model. At noon, a large number of students go from teaching buildings to canteens, which makes it hard for students near the teaching buildings to find available bikes. Our scheduling strategies guide users to park their bikes at electric fences near the teaching buildings. In our scheduling strategy, the status of the electric fence is (in_num, out_num1, out_num2), where in_num is the number of bikes that the target electric fence near the teaching building obtains from the dispatch electric fences, and out_num1 and out_num2 are the numbers of bikes obtained from the two dispatch electric fences (in_num = out_num1 + out_num2). There are four scheduling actions for the electric fences: a: (+10, −5, −5), b: (+20, −10, −10), c: (+30, −15, −15), and d: (+40, −20, −20). On working days, the scheduling period runs from 9:00 a.m. to 11:30 a.m. and consists of five half-hour timeslots. On weekends, the scheduling period from 10:00 a.m. to 11:30 a.m. is divided into three timeslots. If n denotes the maximum number of scheduling iterations, the end condition of a schedule is n = 5 on working days and n = 3 on weekends.
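The action set and timeslots described above can be encoded directly. The sketch stores each action as (in_num, out_num1, out_num2) with the signs used in the text, checks that every action conserves bikes, and splits a scheduling period into half-hour slots; `ACTIONS` and `timeslots` are hypothetical names.

```python
from datetime import datetime, timedelta
from typing import List, Tuple

# Scheduling actions from Section 4.3 as (in_num, out_num1, out_num2).
ACTIONS = {
    "a": (+10, -5, -5),
    "b": (+20, -10, -10),
    "c": (+30, -15, -15),
    "d": (+40, -20, -20),
}

# Every action moves as many bikes into the target fence as it takes out of the
# two dispatch fences (in_num = out_num1 + out_num2 in magnitude).
assert all(inc == -(out1 + out2) for inc, out1, out2 in ACTIONS.values())


def timeslots(start: str, end: str, minutes: int = 30) -> List[Tuple[str, str]]:
    """Split a scheduling period such as 09:00-11:30 into fixed-length slots."""
    fmt = "%H:%M"
    t, stop = datetime.strptime(start, fmt), datetime.strptime(end, fmt)
    slots = []
    while t < stop:
        nxt = t + timedelta(minutes=minutes)
        slots.append((t.strftime(fmt), nxt.strftime(fmt)))
        t = nxt
    return slots
```

`timeslots("09:00", "11:30")` yields the five working-day slots and `timeslots("10:00", "11:30")` the three weekend slots, matching n = 5 and n = 3.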

The parameters of the DQN model are shown in Table 3. With the end condition for weekend dispatches set to 50 bikes, the scheduling scheme for the weekend tidal phenomenon caused by students traveling from classrooms to cafeterias is shown in Table 4; the scheme is a→a→c. The MU and CS values obtained with and without scheduling on weekends are shown in Table 5.
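As a quick consistency check, the weekend scheme a→a→c moves 10 + 10 + 30 = 50 bikes, exactly the 50-bike end condition. The sketch below also enumerates every scheme whose total in_num equals the end condition after exactly n timeslots; requiring an exact match after all n slots is our interpretation, not something the text states. Under that assumption, 6 of the 4³ = 64 weekend schemes and 35 of the 4⁵ = 1024 weekday schemes qualify. The enumeration only describes the search space; choosing among these schemes is the DQN's job, and the helper names are hypothetical.

```python
from itertools import product
from typing import Iterable, List, Tuple

# (in_num, out_num1, out_num2) per action, from Section 4.3.
ACTIONS = {"a": (10, -5, -5), "b": (20, -10, -10), "c": (30, -15, -15), "d": (40, -20, -20)}


def scheme_totals(scheme: Iterable[str]) -> Tuple[int, int, int]:
    """Cumulative (in_num, out_num1, out_num2) over a scheme such as 'aac'."""
    steps = [ACTIONS[x] for x in scheme]
    return tuple(sum(step[i] for step in steps) for i in range(3))


def schemes_meeting(end_condition: int, n_slots: int) -> List[str]:
    """All schemes of exactly n_slots actions whose total in_num equals end_condition."""
    return ["".join(p) for p in product(ACTIONS, repeat=n_slots)
            if scheme_totals(p)[0] == end_condition]
```

For a→a→c, each dispatch fence gives up 25 bikes, so the target fence gains 50 while the two dispatch fences share the cost evenly.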

With the end condition for weekday dispatches set to 80 bikes, the scheduling strategy for the tidal phenomenon observed on working days is shown in Table 6. The MU and CS values obtained on working days with this scheduling strategy are shown in Table 7.

4.4. Results Analysis

4.4.1. The Effect of Scheduling Strategies on CS

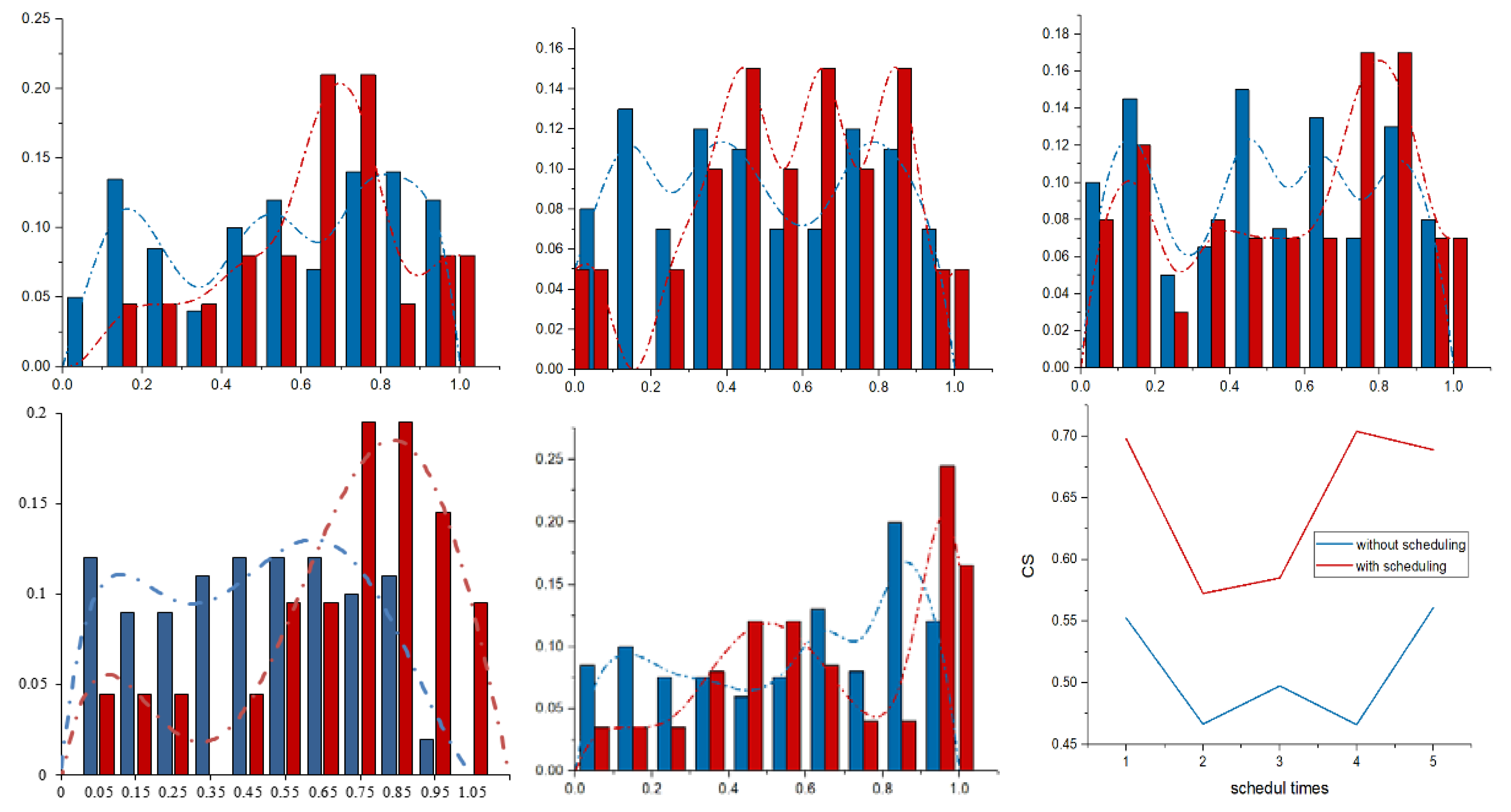

To show the effect of the scheduling strategy, we apply it to the BSS model in AnyLogic. The distributions of user satisfaction before and after executing five dispatches and the average CS values with and without scheduling are shown in Figure 9, where the red line represents the distribution with dispatches. The average CS values with and without scheduling in Table 8 show that user satisfaction is higher with scheduling than without it, which indicates that the scheduling strategies help solve the imbalance problem and improve CS.

4.4.2. The Effect of Scheduling Strategies on the MU

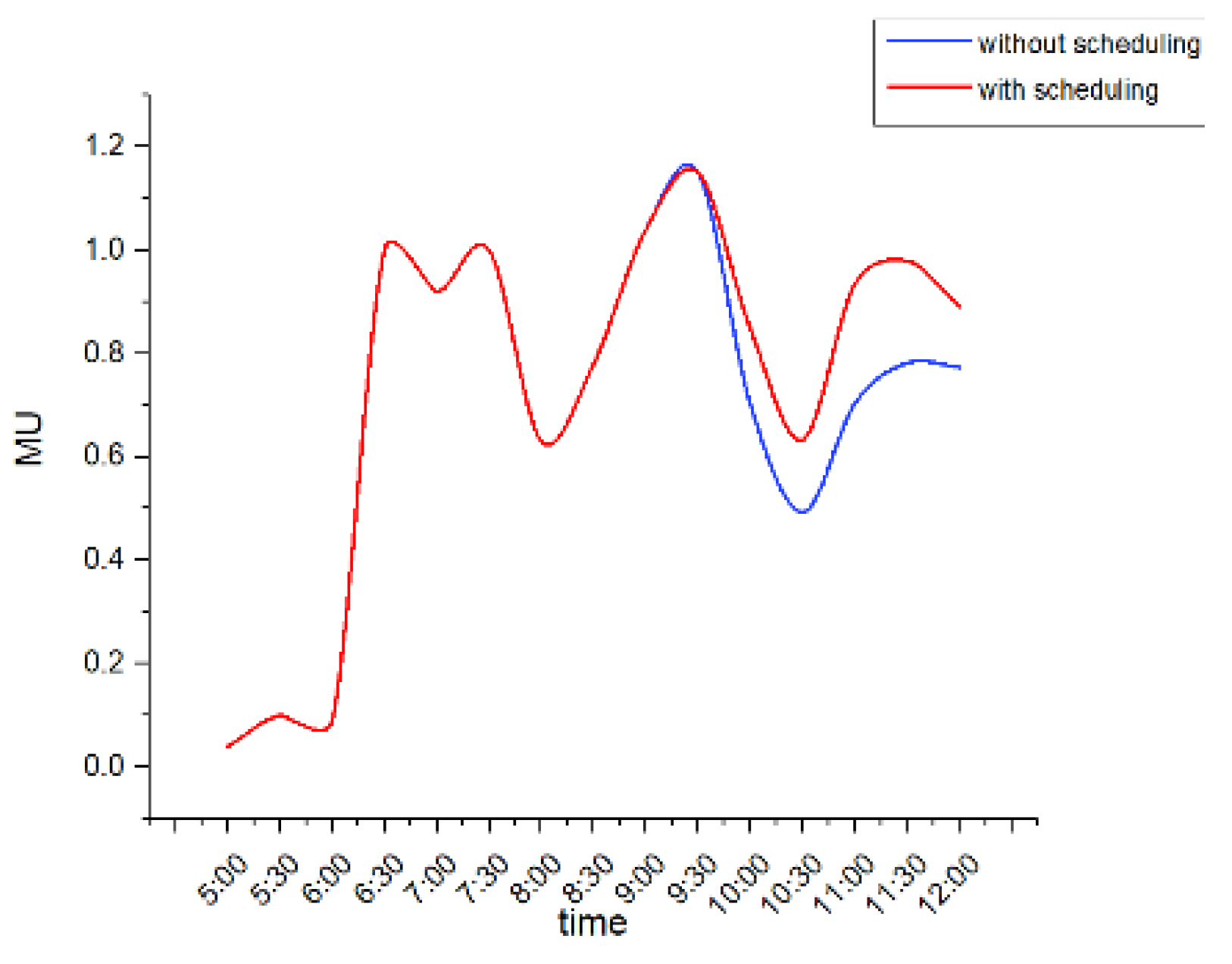

Taking the scheduling strategy with an end condition of 80 bikes as an example, the bike utilization rates with and without scheduling are shown in Figure 10. Consistent with the travel flows shown in Figure 7, bike utilization starts to increase at 6:00 a.m. It then decreases because students' similar travel flows from dormitories to canteens result in bike imbalance. After the morning peak, students' travel becomes more random, which alleviates the bike imbalance. According to Figure 7, the travel flow from classrooms to cafeterias decreases from 9:30 a.m. to 10:30 a.m., which results in a decline in MU. Starting at approximately 10:30 a.m., the travel flow from classrooms to cafeterias increases. However, because the number of bikes at the electric fence near the classrooms is limited, users near the teaching buildings cannot find available bikes, and an imbalance begins to appear during the peak period, which keeps the MU without dispatching below 0.8 during peak periods. With the dispatching strategy, the MU remains higher than that without scheduling.

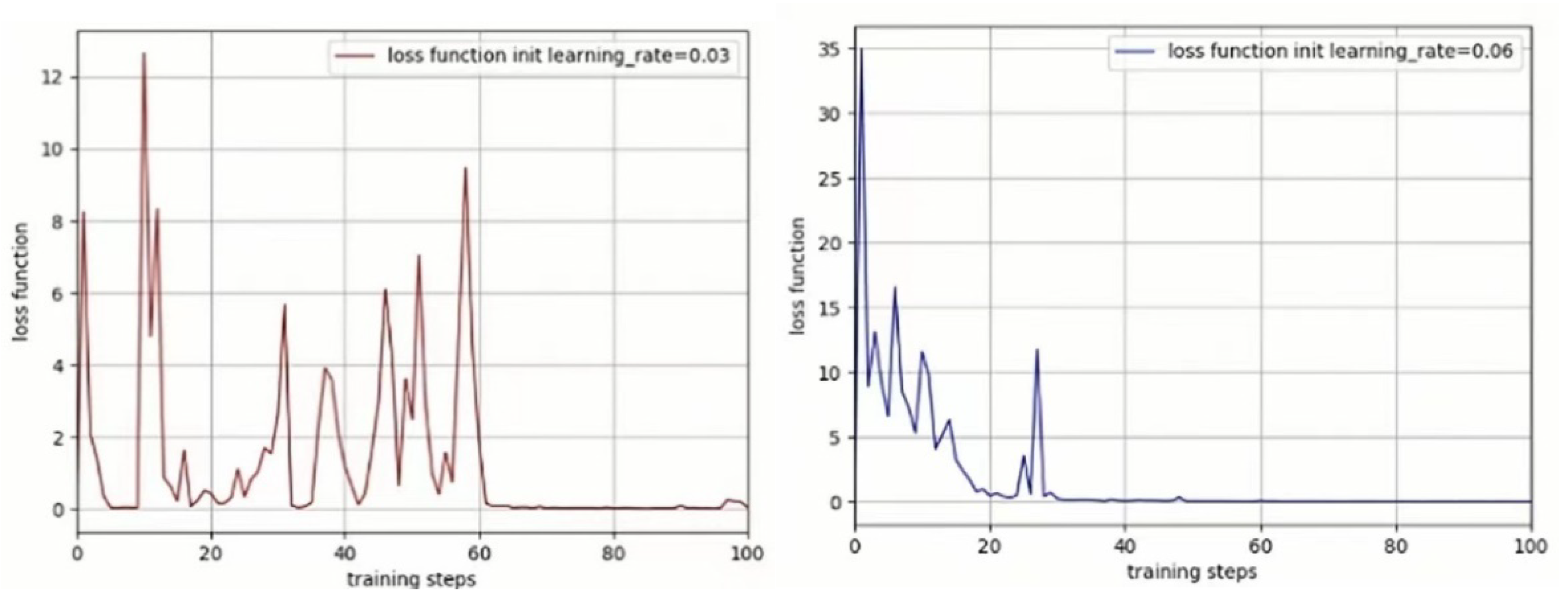

4.4.3. The Loss Function

The loss function of the DQN with different learning rates is shown in Figure 11. During training, the value of the loss function decreases rapidly in the early iterations and then gradually approaches zero, with some oscillation. This indicates that the network gradually approximates the Q-value function and that the proposed method converges to a stable strategy. Furthermore, the loss converges better with a learning rate of 0.06 than with a learning rate of 0.03.

4.5. Discussion

Without scheduling, MU on weekdays is higher than that on weekends because of the greater demand for cycling on weekdays. However, CS on weekdays is lower than that on weekends because the imbalance problem on weekdays is more serious. With the proposed method, MU increases by roughly 18–19% and CS by roughly 7–9% relative to the unscheduled case on both weekdays and weekends. These results indicate that the proposed method alleviates the imbalance problem caused by tidal phenomena and improves both MU and CS.
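The relative improvements can be recomputed directly from the MU and CS values reported in Section 5; the short script below does so. Only the four before/after pairs come from the paper; the dictionary layout and the helper name are illustrative.

```python
# MU and CS without and with scheduling, as reported in Section 5.
RESULTS = {
    ("working days", "MU"): (0.505, 0.603),
    ("working days", "CS"): (0.501, 0.544),
    ("weekends", "MU"): (0.412, 0.487),
    ("weekends", "CS"): (0.528, 0.563),
}


def relative_gain(before: float, after: float) -> float:
    """Percentage improvement of `after` over `before`."""
    return (after - before) / before * 100.0


if __name__ == "__main__":
    for (period, metric), (before, after) in RESULTS.items():
        print(f"{period:<12} {metric}: {before:.3f} -> {after:.3f} "
              f"(+{relative_gain(before, after):.1f}%)")
```

It reports gains of about 19.4% (MU) and 8.6% (CS) on working days and 18.2% (MU) and 6.6% (CS) on weekends.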

5. Conclusions

This work focuses on the imbalance problem caused by tidal phenomena in BSS while attempting to improve the mean utilization of and customer satisfaction with such services. Considering the role of electric fences in restricting user parking behaviors, we propose an electric fence-based intelligent scheduling method that uses deep neural networks and reinforcement learning to learn optimal dispatch policies via interactions with the external environment. As a dynamic method that considers the real-time usage of a BSS, the proposed approach efficiently incorporates travel demand statistics and deep learning models to manage electric fences for achieving improved mean utilization and customer satisfaction. By adjusting electric fences’ capacities, users are guided to return bikes to dynamic electric fences with the largest demands. Taking the campus of Beihang University as an example, we find that the proposed method performs well in alleviating the imbalance problem. During the working days, MU is improved from 0.505 to 0.603, and CS is improved from 0.501 to 0.544. During weekends, MU is improved from 0.412 to 0.487, and CS is improved from 0.528 to 0.563.

Based on electric fences, a new management method to rebalance bike sharing systems is proposed in this paper, in which MU and CS are taken as the goals of the rebalancing strategy. In future work, we plan to add operators' profits to our optimization objectives to achieve more reasonable management of bike sharing systems. In addition, with the rapid development of the sharing economy, shared electric vehicles and shared e-scooters have also emerged. Future work will also include applying this method to shared electric vehicle and e-scooter systems.