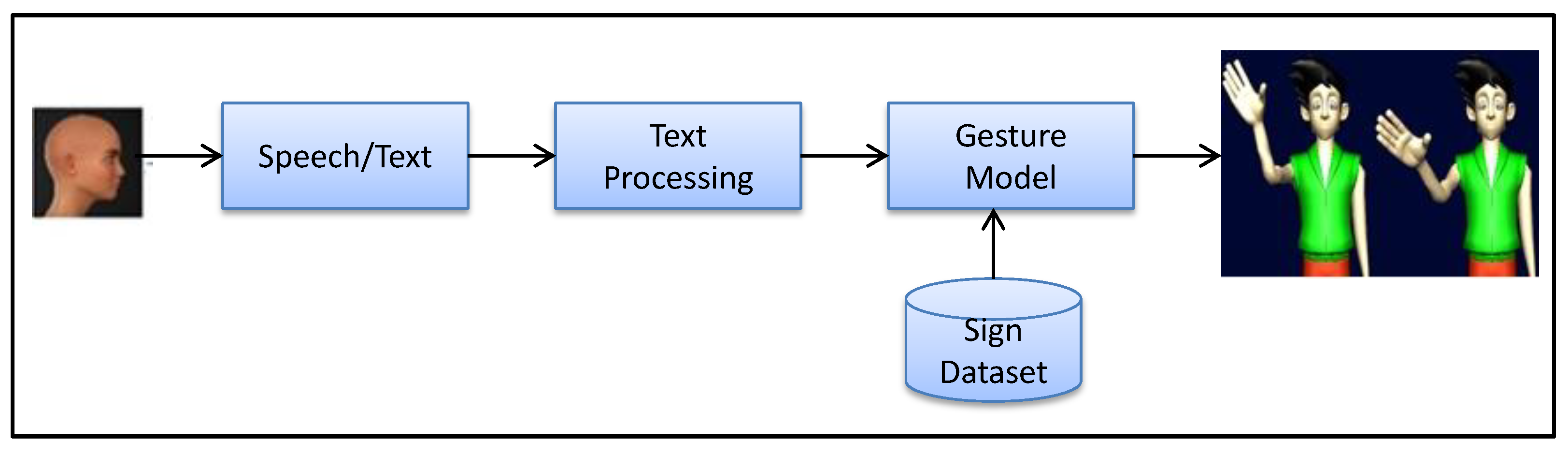

3D Avatar Approach for Continuous Sign Movement Using Speech/Text

Abstract

1. Introduction

- Our first contribution is the development of a 3D avatar model for Indian Sign Language (ISL).

- The proposed 3D avatar model can generate sign movements from three kinds of input: speech, text, and complete sentences. For a complete sentence, the output is a sequence of continuous signs corresponding to the spoken-language sentence.

2. Related Work

2.1. Sign Language Translation Systems

2.2. Performance Analysis of the Sign Language Translation System

3. Materials and Methods

3.1. Speech to English Sentence Conversion

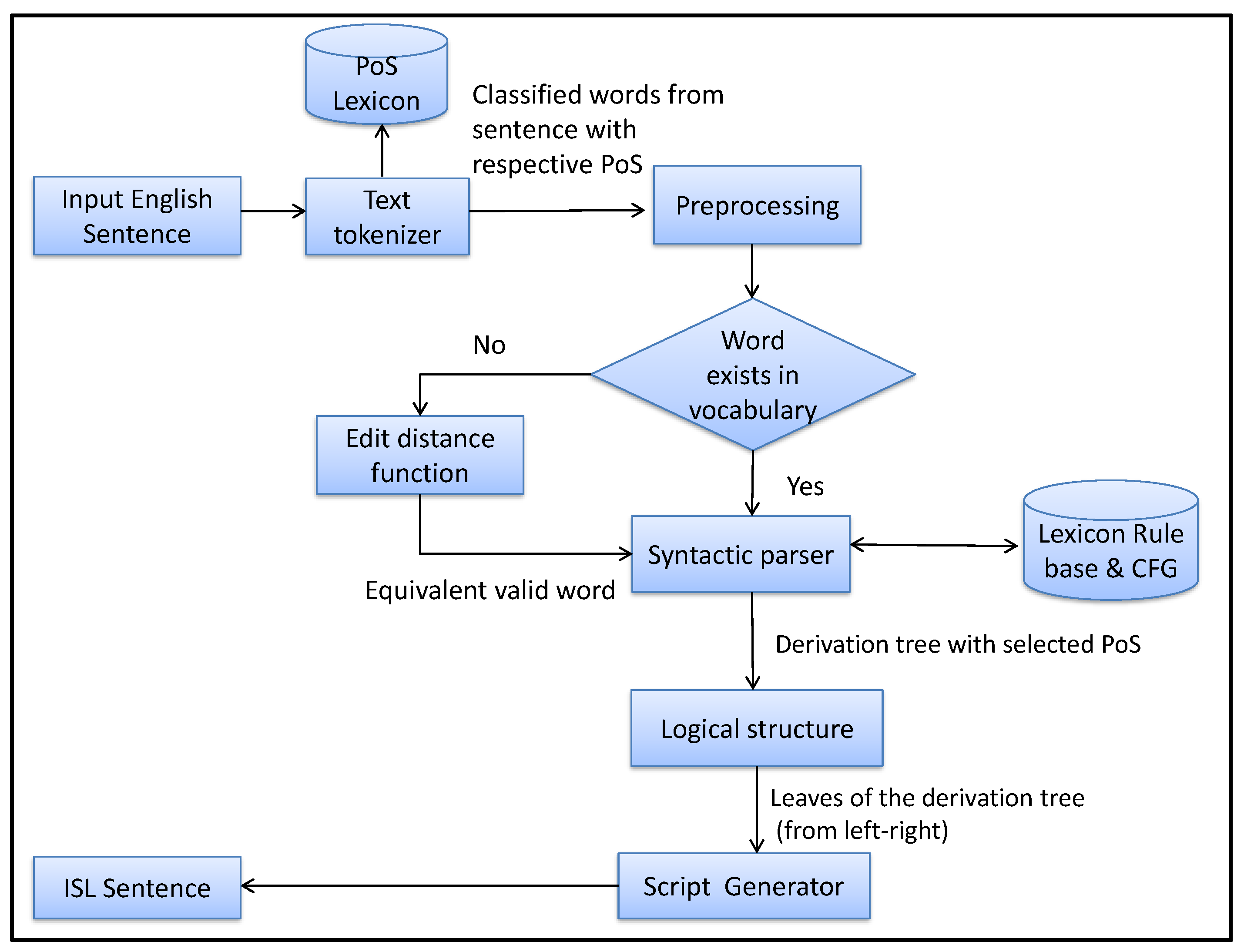

3.2. Translation of ISL Sentence from English Sentence

3.2.1. Preprocessing of Input Text Using Regular Expression

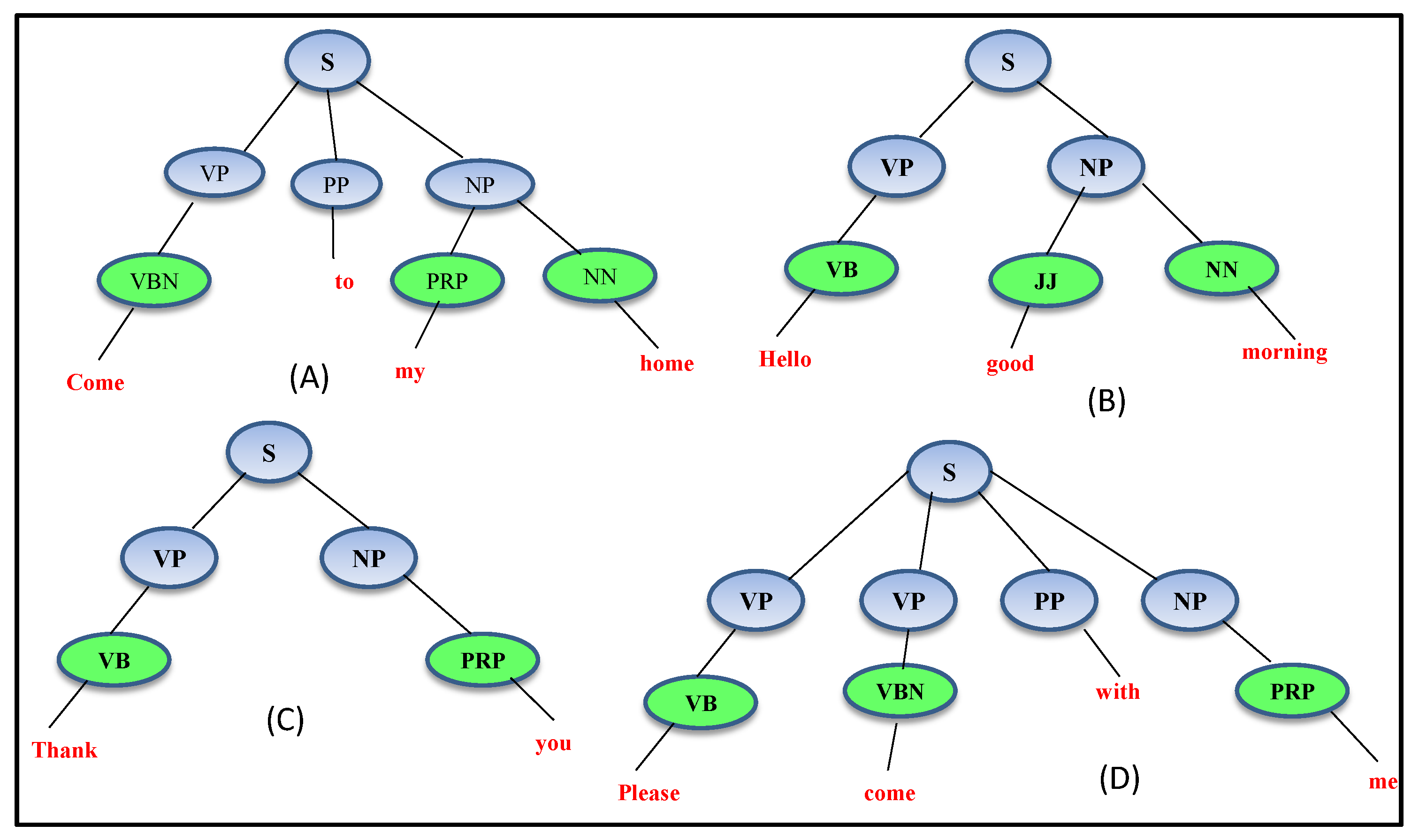

3.2.2. Syntactic Parsing and Logical Structure

- Context-free grammar

- S→VP PP NP|VP NP|VP VP PP NP

- VP→VB|VBN

- PP→“to”|“with”

- NP→PRP NN|JJ NN|PRP

- VB→“hello”|“Thank”|“please”

- VBN→“Come”

- PRP→“my”|“you”|“me”

- JJ→“Good”

- NN→“home”|“morning”

- where (“hello”, “Thank”, “please”, “Come”, “my”, “you”, “me”, “Good”, “morning”, “to”, “with”, “home”) ∈ terminals and (S, VP, PP, NP, VB, VBN, PRP, NN, JJ) ∈ nonterminals of the context-free grammar, with S as the start symbol.
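The grammar above is small enough to check by hand, but a short recognizer makes it easier to see which word sequences it accepts. The following is a minimal sketch in plain Python, not the parser used in the paper; the names `GRAMMAR`, `ends`, and `accepts` are hypothetical, and terminals are matched case-sensitively, exactly as they are written in the productions.

```python
# Productions copied from the context-free grammar listed above.
# Any symbol that is not a key of GRAMMAR is treated as a terminal word.
GRAMMAR = {
    "S": [["VP", "PP", "NP"], ["VP", "NP"], ["VP", "VP", "PP", "NP"]],
    "VP": [["VB"], ["VBN"]],
    "PP": [["to"], ["with"]],
    "NP": [["PRP", "NN"], ["JJ", "NN"], ["PRP"]],
    "VB": [["hello"], ["Thank"], ["please"]],
    "VBN": [["Come"]],
    "PRP": [["my"], ["you"], ["me"]],
    "JJ": [["Good"]],
    "NN": [["home"], ["morning"]],
}


def ends(symbol, tokens, start):
    """Return every index at which a derivation of `symbol` starting at `start` can end."""
    if symbol not in GRAMMAR:
        # Terminal: it must match the token at `start` exactly.
        if start < len(tokens) and tokens[start] == symbol:
            return {start + 1}
        return set()
    results = set()
    for production in GRAMMAR[symbol]:
        positions = {start}
        for part in production:
            # Advance every partial match through the next symbol of the production.
            positions = {e for p in positions for e in ends(part, tokens, p)}
            if not positions:
                break
        results |= positions
    return results


def accepts(sentence):
    """True if the whitespace-tokenized sentence is derivable from S."""
    tokens = sentence.split()
    return len(tokens) in ends("S", tokens, 0)


print(accepts("Come to my home"))      # True  (S -> VP PP NP)
print(accepts("please Come with me"))  # True  (S -> VP VP PP NP)
print(accepts("home Come"))            # False
```

Because the grammar has no left-recursive productions, this simple top-down search terminates without memoization.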

3.2.3. Script Generator and ISL Sentence

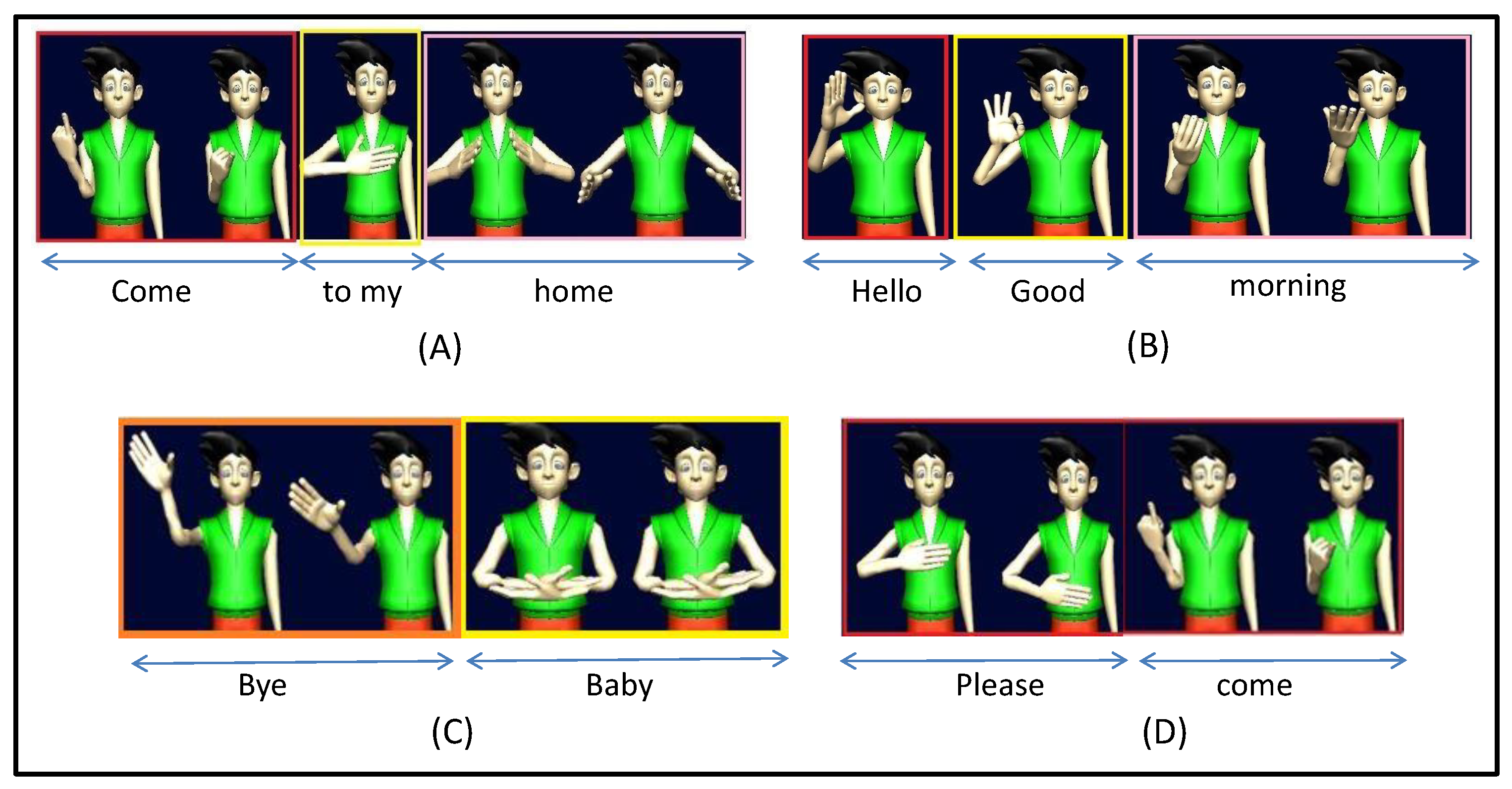

3.3. Generation of Sign Movement

4. Results

4.1. Sign Database

4.2. Speech Recognition Results

4.3. Results of Translation Process

4.4. Generation of Sign Movement from ISL Sentence

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Kumar, P.; Roy, P.P.; Dogra, D.P. Independent bayesian classifier combination based sign language recognition using facial expression. Inf. Sci. 2018, 428, 30–48.

2. Mittal, A.; Kumar, P.; Roy, P.P.; Balasubramanian, R.; Chaudhuri, B.B. A modified LSTM model for continuous sign language recognition using leap motion. IEEE Sens. J. 2019, 19, 7056–7063.

3. Kumar, P.; Gauba, H.; Roy, P.P.; Dogra, D.P. A multimodal framework for sensor based sign language recognition. Neurocomputing 2017, 259, 21–38.

4. Kumar, P. Sign Language Recognition Using Depth Sensors. Ph.D. Thesis, Indian Institute of Technology Roorkee, Roorkee, India, 2018.

5. Kumar, P.; Saini, R.; Behera, S.K.; Dogra, D.P.; Roy, P.P. Real-time recognition of sign language gestures and air-writing using leap motion. In Proceedings of the IEEE 2017 Fifteenth IAPR International Conference on Machine Vision Applications (MVA), Nagoya, Japan, 8–12 May 2017; pp. 157–160.

6. Krishnaraj, N.; Kavitha, M.; Jayasankar, T.; Kumar, K.V. A Glove based approach to recognize Indian Sign Languages. Int. J. Recent Technol. Eng. IJRTE 2019, 7, 1419–1425.

7. Zeshan, U.; Vasishta, M.N.; Sethna, M. Implementation of Indian Sign Language in educational settings. Asia Pac. Disab. Rehabil. J. 2005, 16, 16–40.

8. Dasgupta, T.; Basu, A. Prototype machine translation system from text-to-Indian sign language. In Proceedings of the ACM 13th International Conference on Intelligent User Interfaces, Gran Canaria, Spain, 13–16 January 2008; pp. 313–316.

9. Kishore, P.; Kumar, P.R. A video based Indian sign language recognition system (INSLR) using wavelet transform and fuzzy logic. Int. J. Eng. Technol. 2012, 4, 537.

10. Goyal, L.; Goyal, V. Automatic translation of English text to Indian sign language synthetic animations. In Proceedings of the 13th International Conference on Natural Language Processing, Varanasi, India, 17–20 December 2016; pp. 144–153.

11. Al-Barahamtoshy, O.H.; Al-Barhamtoshy, H.M. Arabic Text-to-Sign Model from Automatic SR System. Proc. Comput. Sci. 2017, 117, 304–311.

12. San-Segundo, R.; Barra, R.; Córdoba, R.; D’Haro, L.; Fernández, F.; Ferreiros, J.; Lucas, J.M.; Macías-Guarasa, J.; Montero, J.M.; Pardo, J.M. Speech to sign language translation system for Spanish. Speech Commun. 2008, 50, 1009–1020.

13. López-Ludeña, V.; San-Segundo, R.; Morcillo, C.G.; López, J.C.; Muñoz, J.M.P. Increasing adaptability of a speech into sign language translation system. Exp. Syst. Appl. 2013, 40, 1312–1322.

14. Halawani, S.M.; Zaitun, A. An avatar based translation system from arabic speech to arabic sign language for deaf people. Int. J. Inf. Sci. Educ. 2012, 2, 13–20.

15. Papadogiorgaki, M.; Grammalidis, N.; Tzovaras, D.; Strintzis, M.G. Text-to-sign language synthesis tool. In Proceedings of the IEEE 13th European Signal Processing Conference, Antalya, Turkey, 4–8 September 2005; pp. 1–4.

16. Elliott, R.; Glauert, J.R.; Kennaway, J.; Marshall, I.; Safar, E. Linguistic modelling and language-processing technologies for Avatar-based sign language presentation. Univers. Access Inf. Soc. 2008, 6, 375–391.

17. Elghoul, M.J.O. An avatar based approach for automatic interpretation of text to Sign language. Chall. Assist. Technol. AAATE 2007, 20, 266.

18. Loke, P.; Paranjpe, J.; Bhabal, S.; Kanere, K. Indian sign language converter system using an android app. In Proceedings of the IEEE 2017 International conference of Electronics, Communication and Aerospace Technology (ICECA), Coimbatore, India, 20–22 April 2017; Volume 2, pp. 436–439.

19. Nair, M.S.; Nimitha, A.; Idicula, S.M. Conversion of Malayalam text to Indian sign language using synthetic animation. In Proceedings of the IEEE 2016 International Conference on Next Generation Intelligent Systems (ICNGIS), Kottayam, India, 1–3 September 2016; pp. 1–4.

20. Vij, P.; Kumar, P. Mapping Hindi Text To Indian sign language with Extension Using Wordnet. In Proceedings of the International Conference on Advances in Information Communication Technology & Computing, Bikaner, India, 12–13 August 2016; p. 38.

21. Adamo-Villani, N.; Doublestein, J.; Martin, Z. The MathSigner: An interactive learning tool for American sign language. In Proceedings of the IEEE Eighth International Conference on Information Visualisation, London, UK, 16 July 2004; pp. 713–716.

22. Li, K.; Zhou, Z.; Lee, C.H. Sign transition modeling and a scalable solution to continuous sign language recognition for real-world applications. ACM Trans. Access. Comput. 2016, 8, 7.

23. López-Ludeña, V.; González-Morcillo, C.; López, J.C.; Barra-Chicote, R.; Córdoba, R.; San-Segundo, R. Translating bus information into sign language for deaf people. Eng. Appl. Artif. Intell. 2014, 32, 258–269.

24. Bouzid, Y.; Jemni, M. An Avatar based approach for automatically interpreting a sign language notation. In Proceedings of the IEEE 13th International Conference on Advanced Learning Technologies, Beijing, China, 15–18 July 2013; pp. 92–94.

25. Duarte, A.C. Cross-modal neural sign language translation. In Proceedings of the 27th ACM International Conference on Multimedia, Nice, France, 21–25 October 2019; pp. 1650–1654.

26. Patel, B.D.; Patel, H.B.; Khanvilkar, M.A.; Patel, N.R.; Akilan, T. ES2ISL: An advancement in speech to sign language translation using 3D avatar animator. In Proceedings of the 2020 IEEE Canadian Conference on Electrical and Computer Engineering (CCECE), London, ON, Canada, 30 August–2 September 2020; pp. 1–5.

27. Stoll, S.; Camgöz, N.C.; Hadfield, S.; Bowden, R. Text2Sign: Towards Sign Language Production Using Neural Machine Translation and Generative Adversarial Networks. Int. J. Comput. Vis. 2020, 128, 891–908.

28. Kaur, K.; Kumar, P. HamNoSys to SiGML conversion system for sign language automation. Proc. Comput. Sci. 2016, 89, 794–803.

29. Hanke, T. HamNoSys-representing sign language data in language resources and language processing contexts. LREC 2004, 4, 1–6.

30. Kennaway, R. Avatar-independent scripting for real-time gesture animation. arXiv 2015, arXiv:1502.02961.

31. Zhang, Y.; Vogel, S.; Waibel, A. Interpreting bleu/nist scores: How much improvement do we need to have a better system? In Proceedings of the Fourth International Conference on Language Resources and Evaluation, Lisbon, Portugal, 26–28 May 2004; pp. 1650–1654.

32. Larson, M.; Jones, G.J. Spoken content retrieval: A survey of techniques and technologies. Found. Trends Inf. Retriev. 2012, 5, 235–422.

33. Lee, L.S.; Glass, J.; Lee, H.Y.; Chan, C.A. Spoken content retrieval—Beyond cascading speech recognition with text retrieval. IEEE/ACM Trans. Audio Speech Lang. Process. 2015, 23, 1389–1420.

34. Bird, S.; Klein, E.; Loper, E. Natural Language Processing with Python: Analyzing Text with the Natural Language Toolkit; O’Reilly Media Inc.: Newton, MA, USA, 2009.

35. Mehta, N.; Pai, S.; Singh, S. Automated 3D sign language caption generation for video. Univers. Access Inf. Soc. 2020, 19, 725–738.

36. Zeshan, U. Indo-Pakistani Sign Language grammar: A typological outline. Sign Lang. Stud. 2003, 3, 157–212.

37. Roosendaal, T.; Wartmann, C. The Official Blender Game Kit: Interactive 3d for Artists; No Starch Press: San Francisco, CA, USA, 2003.

38. Tominaga, T.; Hayashi, T.; Okamoto, J.; Takahashi, A. Performance comparisons of subjective quality assessment methods for mobile video. In Proceedings of the IEEE 2010 Second international workshop on quality of multimedia experience (QoMEX), Trondheim, Norway, 21–23 June 2010; pp. 82–87.

| Study | Sign Language | Input: Speech | Input: Text | 3D Avatar | Sentence-Wise Sign |
|---|---|---|---|---|---|
| Al-Barahamtoshy et al. [11] | ArSL | ✗ | ✓ | ✓ | ✗ |
| Li et al. [22] | CSL | ✓ | ✗ | ✗ | ✓ |
| Halawani et al. [14] | ArSL | ✓ | ✗ | ✗ | ✗ |
| López-Ludeña et al. [23] | SSL | ✗ | ✓ | ✓ | ✗ |
| Bouzid et al. [24] | ASL | ✗ | ✗ | ✓ | ✗ |
| Dasgupta et al. [8] | ISL | ✗ | ✓ | ✗ | ✗ |
| Nair et al. [19] | ISL | ✗ | ✓ | ✗ | ✓ |
| Vij et al. [20] | ISL | ✗ | ✓ | ✗ | ✗ |
| Krishnaraj et al. [6] | ISL | ✓ | ✓ | ✗ | ✗ |
| Duarte et al. [25] | ASL | ✓ | ✗ | ✗ | ✓ |
| Patel et al. [26] | ISL | ✓ | ✗ | ✓ | ✓ |
| Proposed | ISL | ✓ | ✓ | ✓ | ✓ |

| Misspelled/Invalid Word | Equivalent Valid Word |
|---|---|
| “Hellllo” | “Hello” |
| “Halo” | “Hello” |
| “Hapyyyy” | “Happy” |
| “Happppyyy” | “Happy” |
| “Noooooooo” | “No” |
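The mappings in this table can be reproduced with a handful of regular expressions, one per valid word. The sketch below is only an illustration of that idea; the patterns, the `PATTERNS` list, and the `normalize_word` function are assumptions made for this example and are not taken from the paper.

```python
import re

# Hypothetical patterns: each one tolerates repeated letters (and, for "Hello",
# an "a" in place of "e") and maps every match to a single valid word.
PATTERNS = [
    (re.compile(r"h+[ae]+l+o+", re.IGNORECASE), "Hello"),
    (re.compile(r"h+a+p+y+", re.IGNORECASE), "Happy"),
    (re.compile(r"n+o+", re.IGNORECASE), "No"),
]


def normalize_word(word):
    """Return the valid word whose pattern matches the whole input, else the input unchanged."""
    for pattern, valid in PATTERNS:
        if pattern.fullmatch(word):
            return valid
    return word


for raw in ["Hellllo", "Halo", "Hapyyyy", "Happppyyy", "Noooooooo", "Home"]:
    print(raw, "->", normalize_word(raw))
```

`re.fullmatch` is used rather than `re.search` so that an unrelated word containing a matching fragment (for example "Snow") is left untouched.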

| English Sentence | ISL Sentence |
|---|---|
| I have a pen. | I pen have. |
| The child is playing. | Child playing. |
| The woman is blind. | Woman blind. |
| It is cloudy outside. | Outside cloudy. |
| I see a dog. | I dog see. |
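The sentence pairs in this table show two regularities: function words such as articles and the copula are dropped, and the verb moves to the end of the sentence (subject-object-verb order). The toy sketch below reproduces only these five rows. The word lists `DROPPED`, `TRANSITIVE_VERBS`, and `PLACE_WORDS`, and the function `to_isl`, are hand-made assumptions for this example; the system described in the paper works from a syntactic parse rather than fixed word lists.

```python
# Tiny hand-made lexicon (an assumption for this example only).
DROPPED = {"a", "an", "the", "is", "am", "are", "it"}  # words with no sign in the output
TRANSITIVE_VERBS = {"have", "see"}                      # moved to the end (SVO -> SOV)
PLACE_WORDS = {"outside"}                               # moved to the front


def to_isl(sentence):
    """Reorder a simple English sentence into ISL-style word order."""
    words = [w for w in sentence.rstrip(".?!").split() if w.lower() not in DROPPED]
    place = [w for w in words if w.lower() in PLACE_WORDS]
    rest = [w for w in words if w.lower() not in PLACE_WORDS]
    verbs = [w for w in rest if w.lower() in TRANSITIVE_VERBS]
    others = [w for w in rest if w.lower() not in TRANSITIVE_VERBS]
    ordered = place + others + verbs
    if not ordered:
        return ""
    # Lowercase everything except the pronoun "I", then capitalize the first letter.
    text = " ".join(w if w == "I" else w.lower() for w in ordered)
    return text[0].upper() + text[1:] + "."


print(to_isl("I have a pen."))          # I pen have.
print(to_isl("It is cloudy outside."))  # Outside cloudy.
```

Fixed word lists do not generalize beyond these examples, which is why a part-of-speech-aware parse is the more realistic basis for the reordering step.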

| Total Sentences | Total Words | Vocabulary | Running Words |
|---|---|---|---|
| 150 | 763 | 365 | 50 |

| English Word | Sign Movement | English Word | Sign Movement |
|---|---|---|---|
| Home |  | Night |  |
| Morning |  | Work |  |
| Welcome |  | Bye |  |
| Rain |  | Baby |  |
| Please |  | Sorry |  |

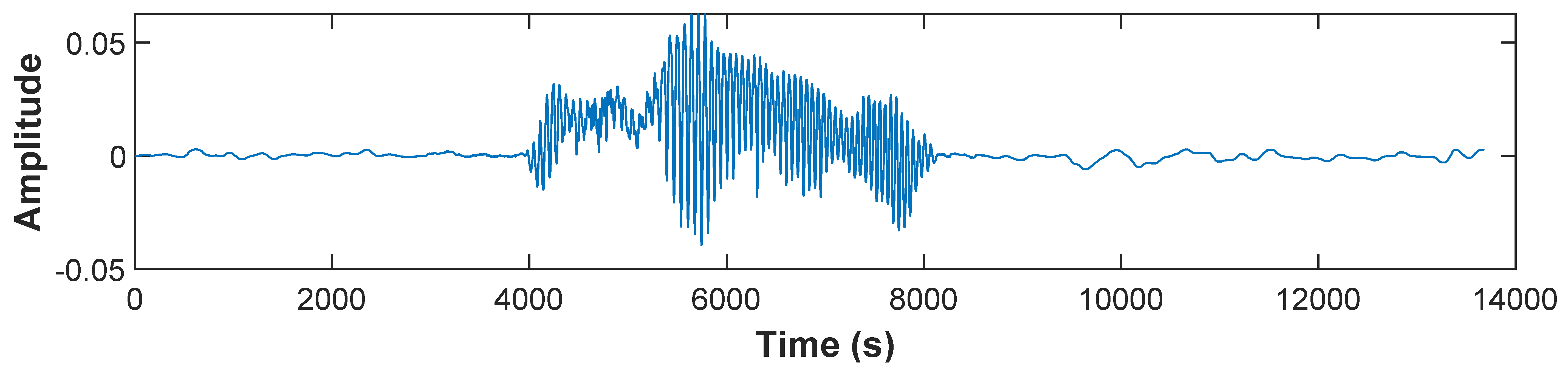

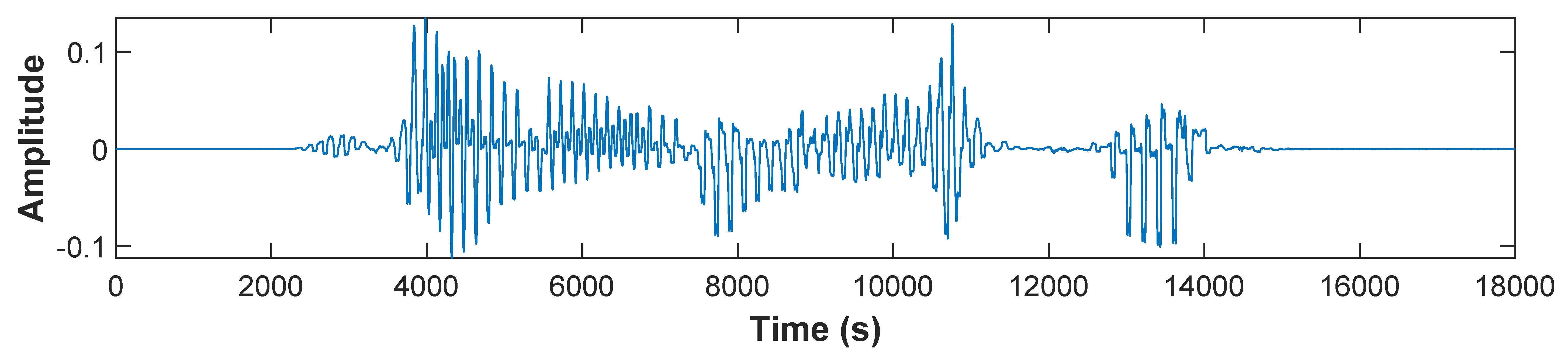

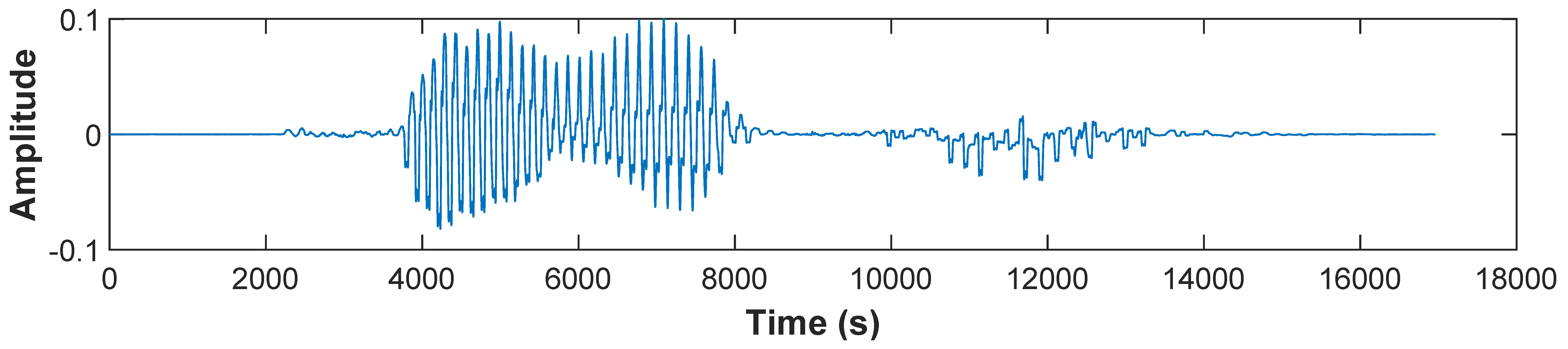

| Speech Type | Speech Signal | Output Text (Using IBM-Watson Service) |
|---|---|---|
| Discrete |  | Hello |
| Discrete |  | Come |
| Continuous |  | Do you like it? |
| Continuous |  | Thank you |

| WER (%) | Ins (%) | Del (%) | Sub (%) |
|---|---|---|---|
| 25.2 | 3.3 | 7.1 | 14.8 |
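Word error rate is conventionally defined as WER = (S + D + I) / N, where S, D, and I are the substitutions, deletions, and insertions in a minimum-edit alignment of the recognized text against the reference, and N is the number of reference words. The figures in the table are consistent with this definition: 3.3 + 7.1 + 14.8 = 25.2. The sketch below computes the three counts with a standard dynamic-programming alignment; the function names and the whitespace tokenization are assumptions for this example, and the tool actually used for the reported numbers is not stated here.

```python
def wer_counts(reference, hypothesis):
    """Return (substitutions, deletions, insertions, reference_length) for a minimum-edit alignment."""
    ref, hyp = reference.split(), hypothesis.split()
    # dp[i][j] = (total_cost, subs, dels, ins) for aligning ref[:i] with hyp[:j]
    dp = [[None] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    dp[0][0] = (0, 0, 0, 0)
    for i in range(1, len(ref) + 1):
        dp[i][0] = (i, 0, i, 0)  # every reference word deleted
    for j in range(1, len(hyp) + 1):
        dp[0][j] = (j, 0, 0, j)  # every hypothesis word inserted
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            if ref[i - 1] == hyp[j - 1]:
                diagonal = dp[i - 1][j - 1]  # exact match, no cost
            else:
                c, s, d, n = dp[i - 1][j - 1]
                diagonal = (c + 1, s + 1, d, n)  # substitution
            c, s, d, n = dp[i - 1][j]
            deletion = (c + 1, s, d + 1, n)
            c, s, d, n = dp[i][j - 1]
            insertion = (c + 1, s, d, n + 1)
            # Tuples compare by total cost first, so min() picks a cheapest alignment.
            dp[i][j] = min(diagonal, deletion, insertion)
    _, subs, dels, ins = dp[-1][-1]
    return subs, dels, ins, len(ref)


def wer(reference, hypothesis):
    """Word error rate as a fraction of the reference length."""
    subs, dels, ins, n = wer_counts(reference, hypothesis)
    return (subs + dels + ins) / n


print(wer_counts("do you like it", "do you like"))  # (0, 1, 0, 4)
print(wer("do you like it", "do you like"))         # 0.25
```

When several alignments share the same total cost, the split between substitutions, deletions, and insertions can differ between tools, so only the total is guaranteed to agree.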

| SER | BLEU | NIST |
|---|---|---|
| 10.50 | 82.30 | 86.80 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Das Chakladar, D.; Kumar, P.; Mandal, S.; Roy, P.P.; Iwamura, M.; Kim, B.-G. 3D Avatar Approach for Continuous Sign Movement Using Speech/Text. Appl. Sci. 2021, 11, 3439. https://doi.org/10.3390/app11083439
