Using Mixed Reality (MR) to Improve On-Site Design Experience in Community Planning

Abstract

1. Introduction

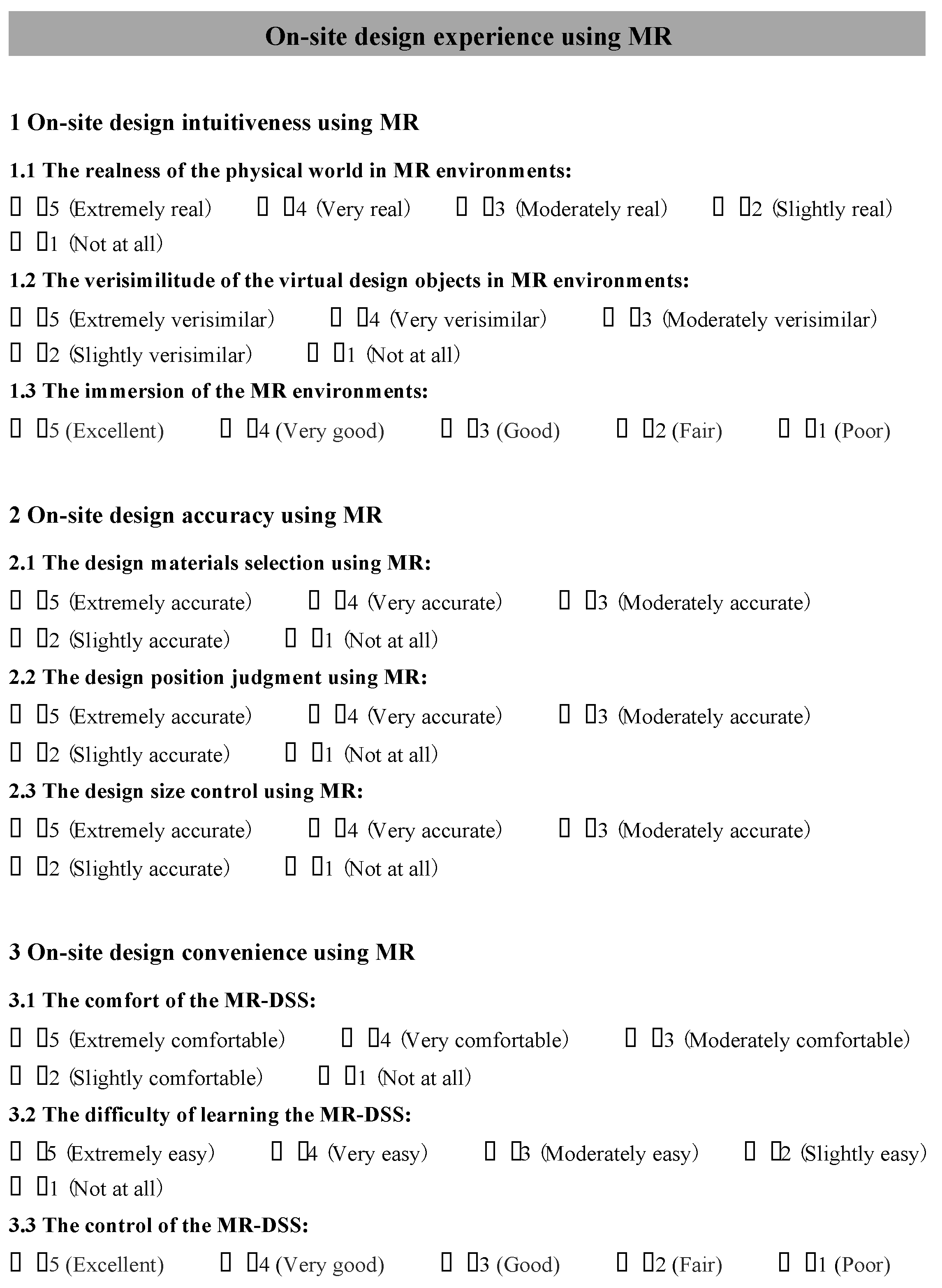

2. Materials and Methods

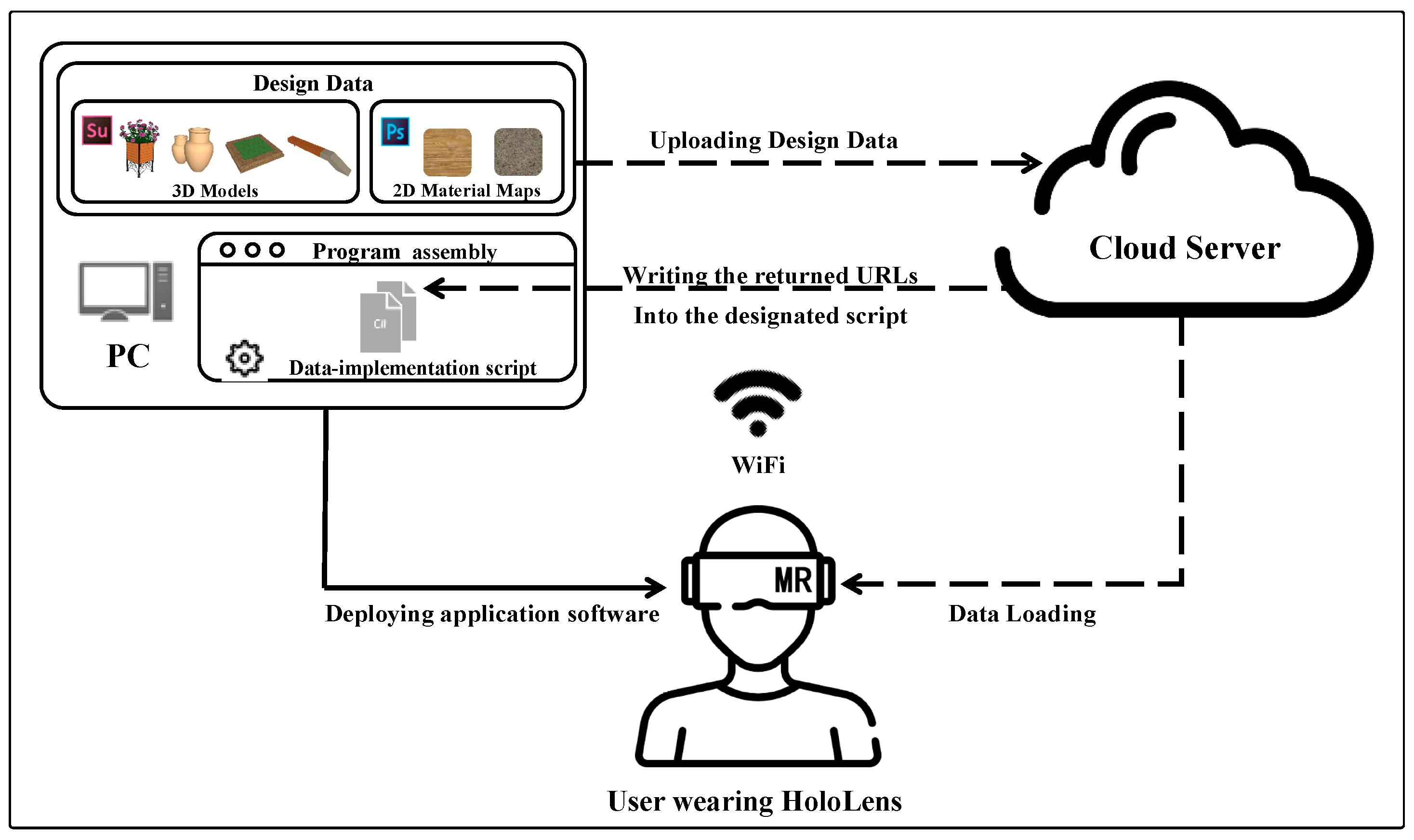

2.1. MR-DSS

2.1.1. Hardware

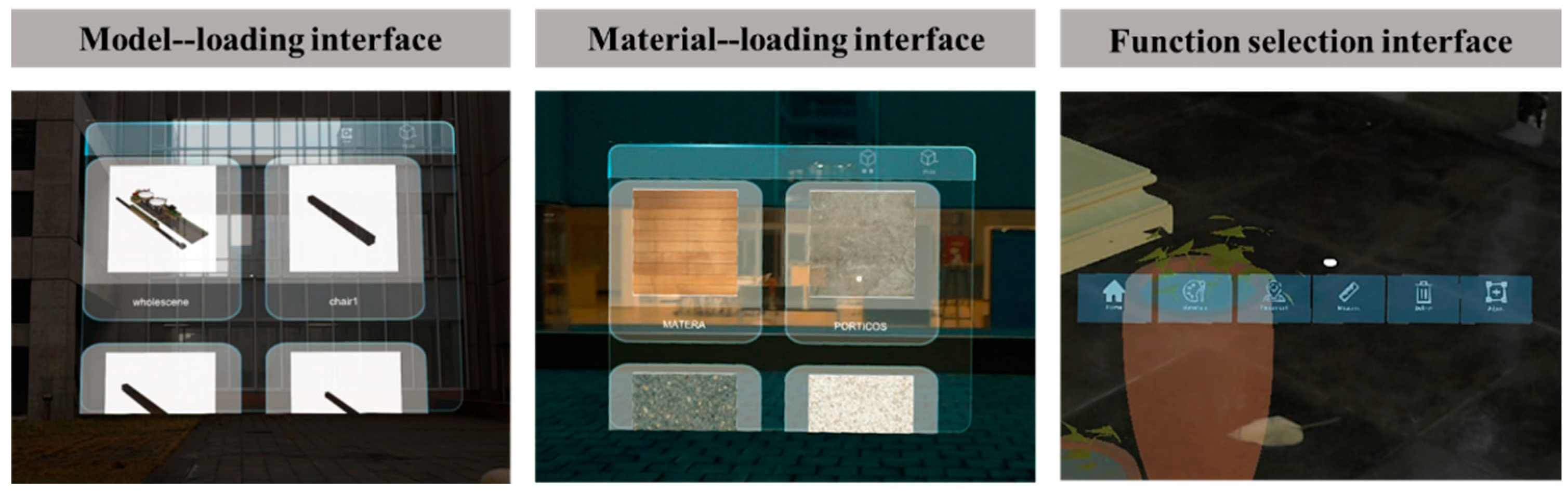

2.1.2. Software

2.2. Case Selection and Data Preparation

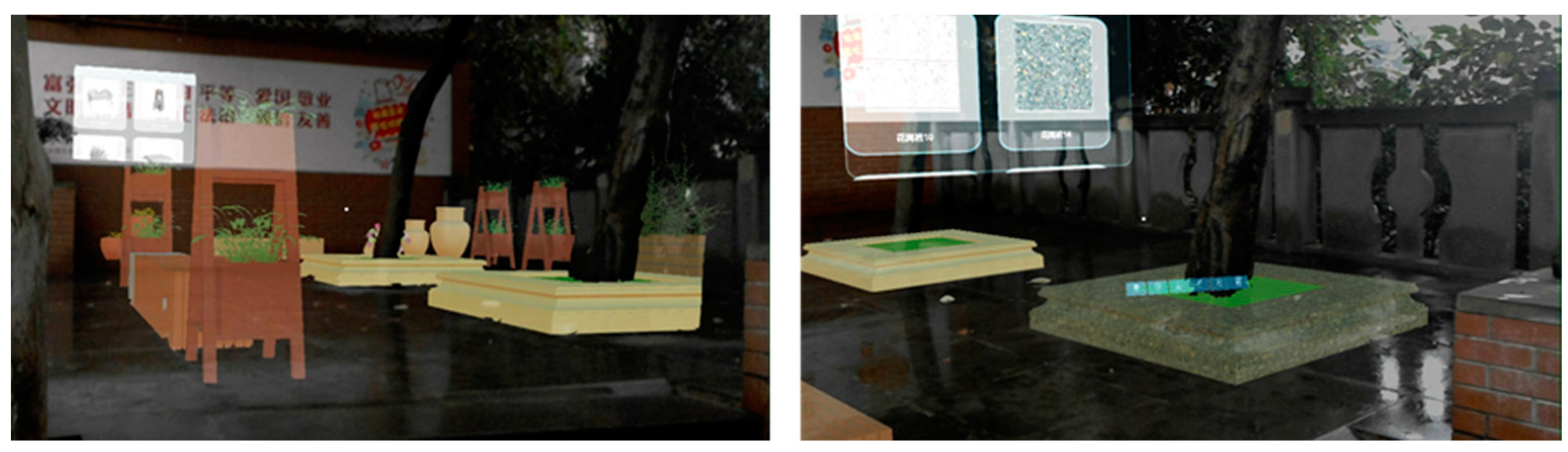

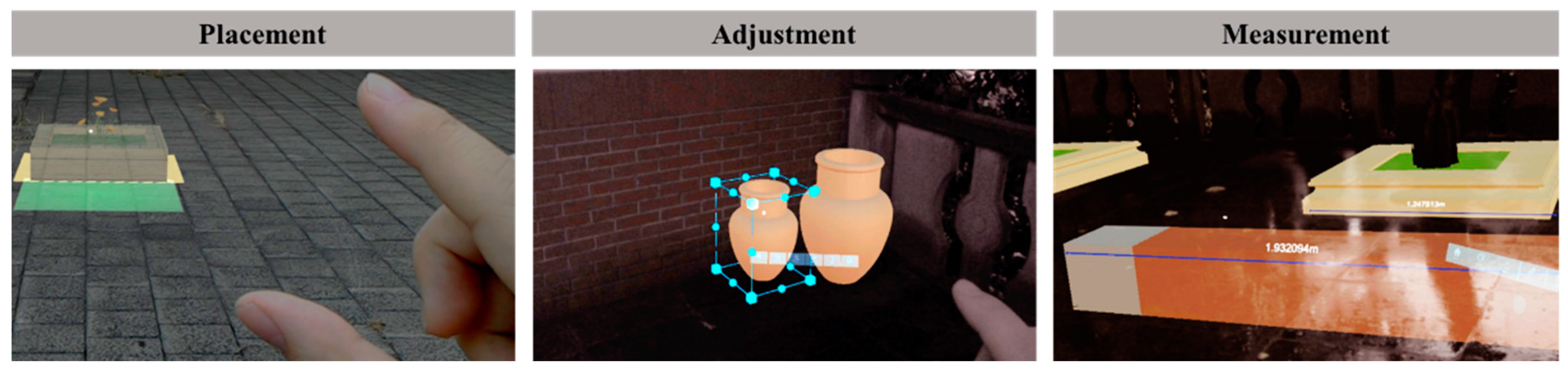

2.3. On-Site Design Experiment

2.3.1. Participants

2.3.2. Experimental Procedures

3. Results and Analysis

3.1. On-Site Design Intuitiveness Using MR

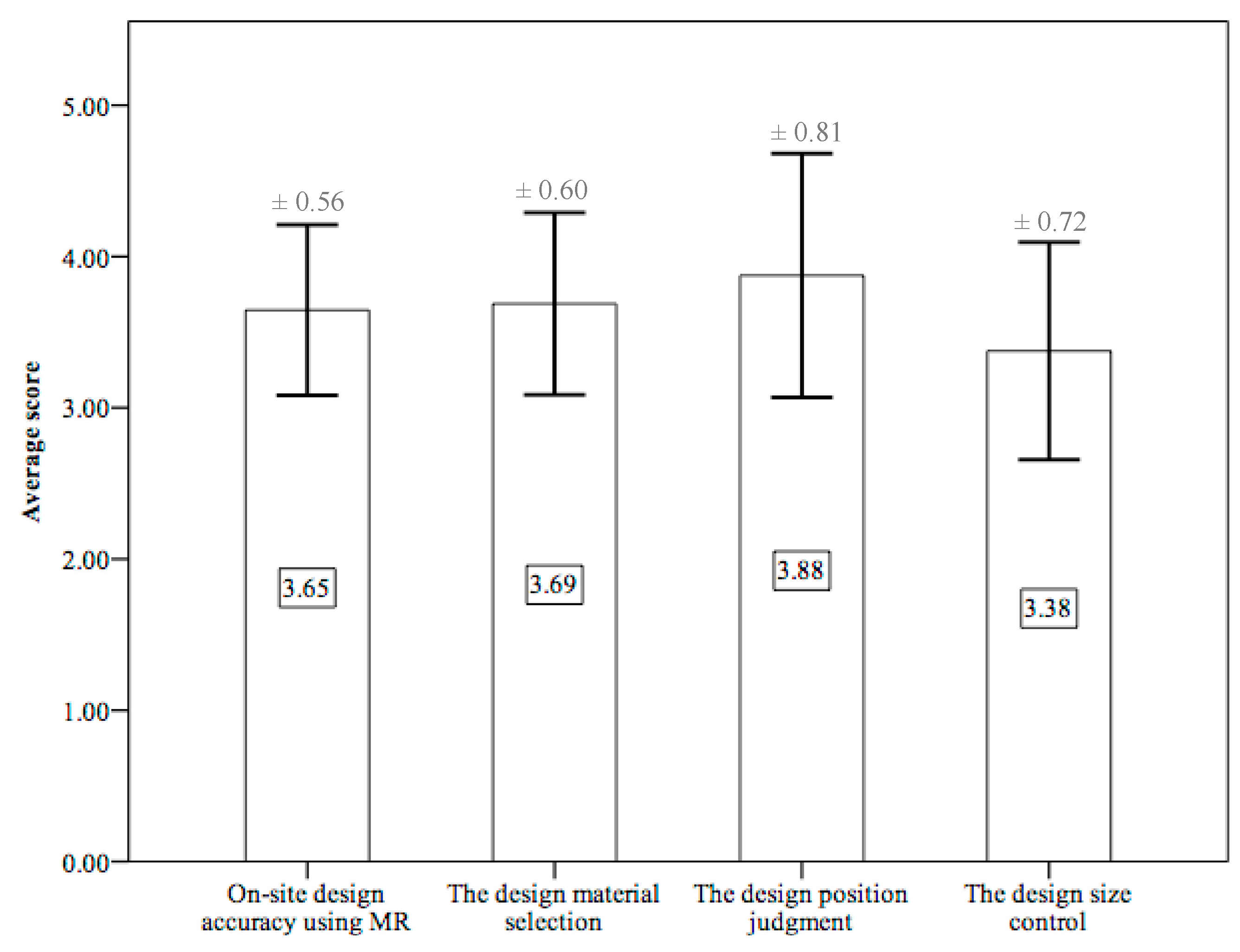

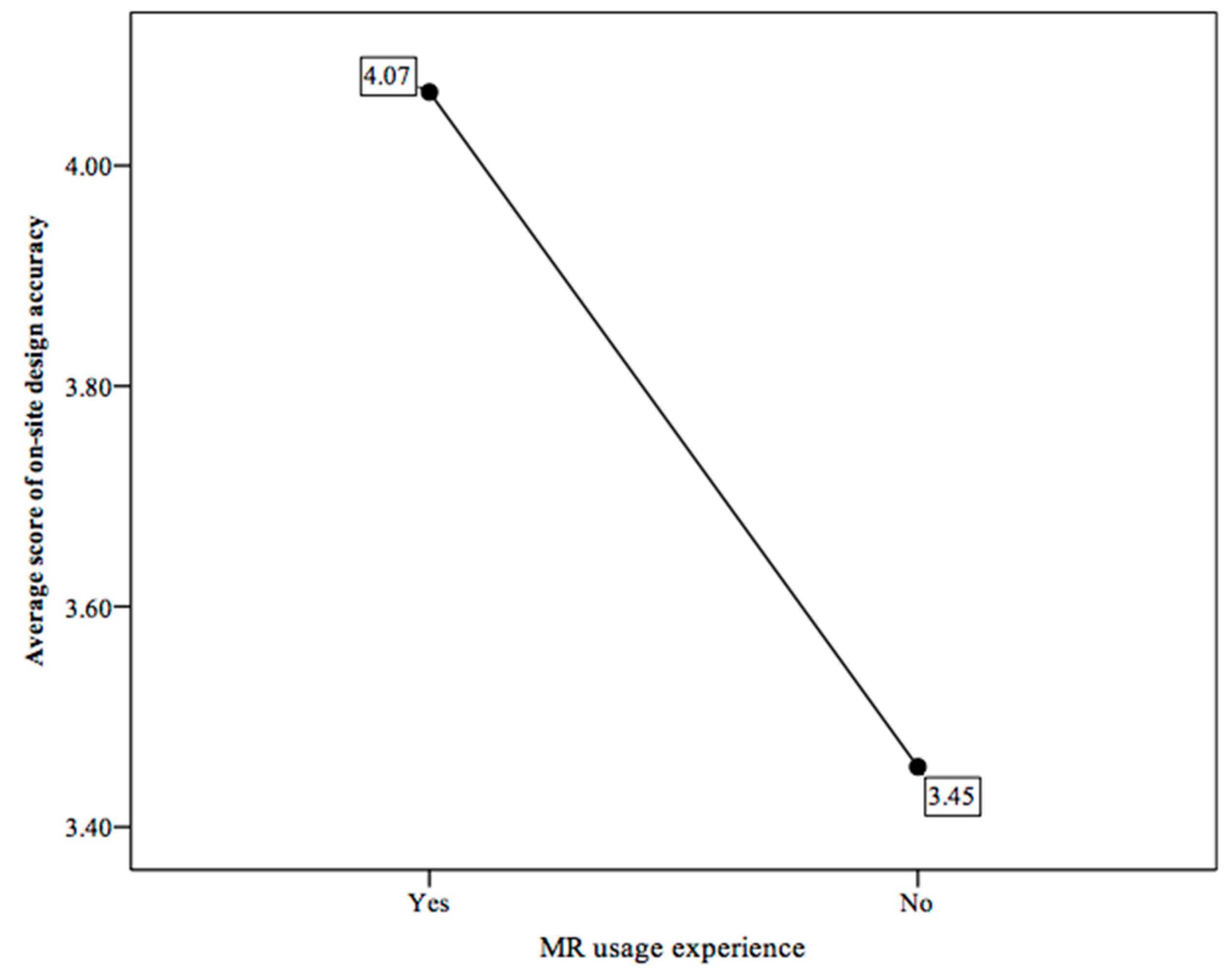

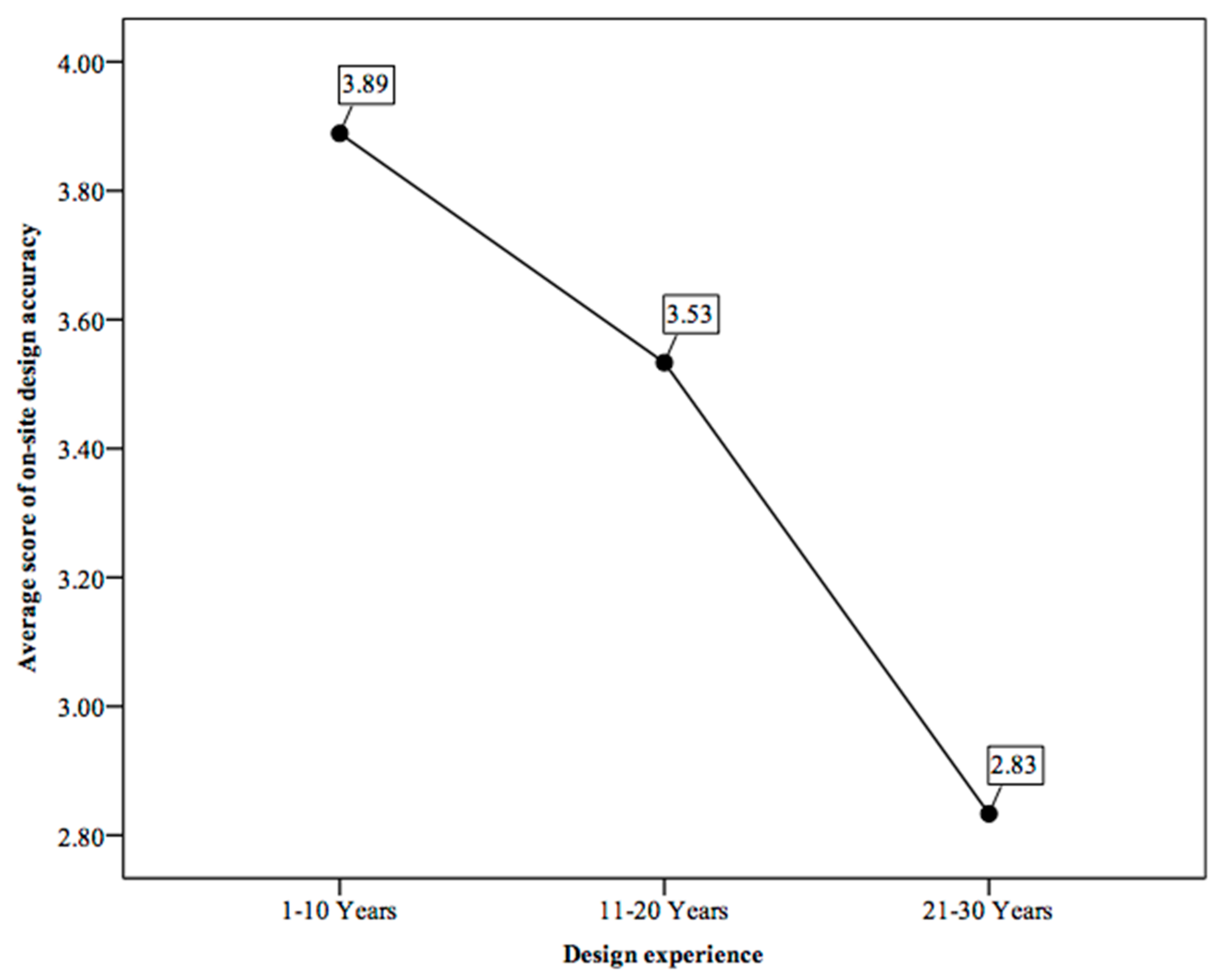

3.2. On-Site Design Accuracy Using MR

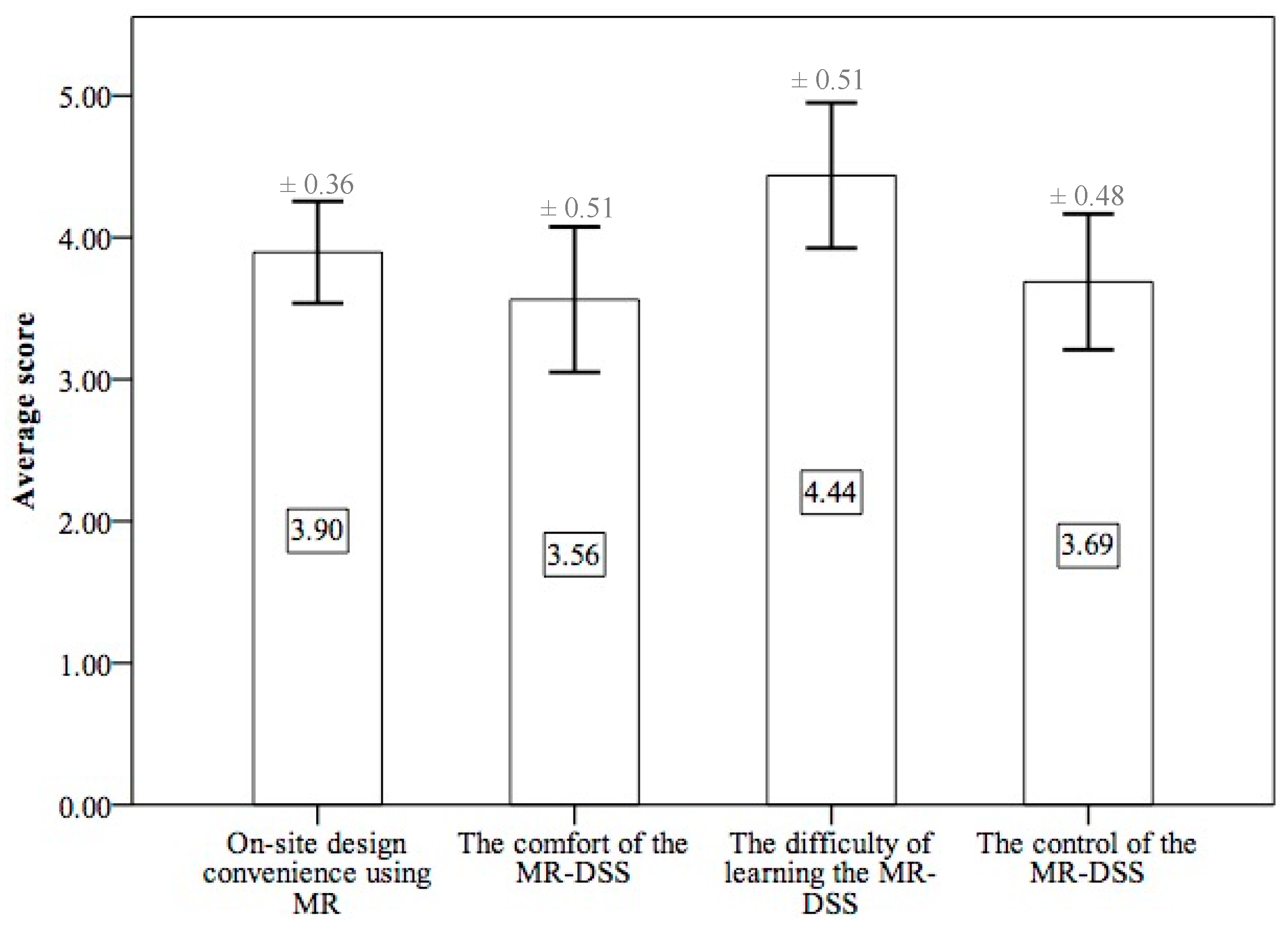

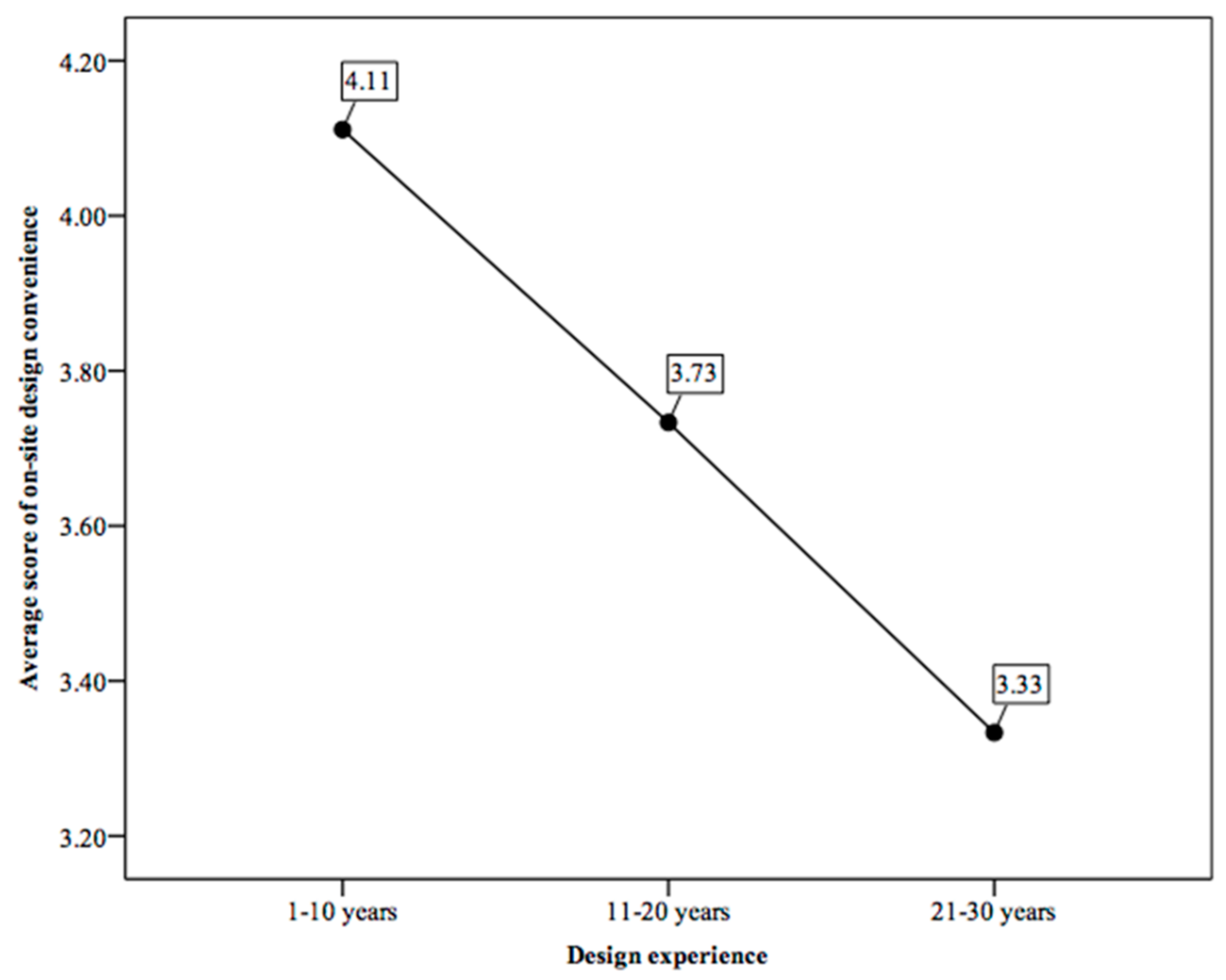

3.3. On-Site Design Convenience Using MR

4. Discussion and Conclusions

Author Contributions

Funding

Conflicts of Interest

| Measure | Mean rank, with MR usage experience | Mean rank, without MR usage experience | p-value (Kruskal–Wallis) |
|---|---|---|---|
| On-site design intuitiveness using MR | 11.00 | 7.36 | 0.112 |

| Measure | Mean rank, 1–10 years of design experience | Mean rank, 11–20 years of design experience | Mean rank, 21–30 years of design experience | p-value (Kruskal–Wallis) |
|---|---|---|---|---|
| On-site design intuitiveness using MR | 9.17 | 9.50 | 3.00 | 0.145 |

| Measure | Mean rank, with MR usage experience | Mean rank, without MR usage experience | p-value (Kruskal–Wallis) |
|---|---|---|---|
| On-site design accuracy using MR | 11.90 | 6.95 | 0.049 |

| Measure | Mean rank, 1–10 years of design experience | Mean rank, 11–20 years of design experience | Mean rank, 21–30 years of design experience | p-value (Kruskal–Wallis) |
|---|---|---|---|---|
| On-site design accuracy using MR | 10.56 | 7.50 | 1.75 | 0.036 |

| Measure | Mean rank, 1–10 years of design experience | Mean rank, 11–20 years of design experience | Mean rank, 21–30 years of design experience | p-value (Kruskal–Wallis) |
|---|---|---|---|---|
| On-site design convenience using MR | 11.22 | 6.20 | 2.00 | 0.014 |

| Measure | Mean rank, with MR usage experience | Mean rank, without MR usage experience | p-value (ANOVA) |
|---|---|---|---|
| On-site design convenience using MR | 11.50 | 7.14 | 0.077 |
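As a rough illustration of how the reported mean ranks relate to a Kruskal–Wallis p-value, the following is a minimal sketch, not the authors' analysis. It computes the H statistic without tie correction directly from group sizes and mean ranks. The group sizes (5 and 11 for MR usage experience; 9, 5 and 2 for design experience) are assumptions: they are not stated in the tables and were chosen only because they are consistent with the reported mean ranks under the rank-sum identity Σ nᵢ·R̄ᵢ = N(N+1)/2 with N = 16. The function names are hypothetical.

```python
import math


def kruskal_h_from_mean_ranks(sizes, mean_ranks):
    """Kruskal-Wallis H (no tie correction) from group sizes and mean ranks."""
    n_total = sum(sizes)
    grand_mean_rank = (n_total + 1) / 2  # mean of the ranks 1..N
    # Weighted squared deviation of each group's mean rank from the grand mean.
    spread = sum(n * (r - grand_mean_rank) ** 2
                 for n, r in zip(sizes, mean_ranks))
    return 12.0 / (n_total * (n_total + 1)) * spread


def chi2_sf(h, df):
    """Upper-tail chi-square probability; closed forms for df = 1 and df = 2 only."""
    if df == 1:
        return math.erfc(math.sqrt(h / 2))
    if df == 2:
        return math.exp(-h / 2)
    raise ValueError("this sketch only supports df = 1 or df = 2")


# ASSUMED group sizes (not given in the tables); mean ranks taken from the tables.
h_usage = kruskal_h_from_mean_ranks([5, 11], [11.00, 7.36])            # intuitiveness
h_design = kruskal_h_from_mean_ranks([9, 5, 2], [11.22, 6.20, 2.00])   # convenience

print(f"usage experience:  H = {h_usage:.3f}, p = {chi2_sf(h_usage, 1):.3f}")
print(f"design experience: H = {h_design:.3f}, p = {chi2_sf(h_design, 2):.3f}")
```

Because this sketch omits the tie correction and uses the chi-square approximation, its p-values come out somewhat larger than those reported in the tables. That is what one would expect if the original scores contained ties, but the raw scores are needed to confirm it.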

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Dan, Y.; Shen, Z.; Zhu, Y.; Huang, L. Using Mixed Reality (MR) to Improve On-Site Design Experience in Community Planning. Appl. Sci. 2021, 11, 3071. https://doi.org/10.3390/app11073071