Applications of Smart Glasses in Applied Sciences: A Systematic Review

Abstract

1. Introduction

2. Methods

- RQ1: What are the research trends of smart glasses by year and application fields?

- RQ2: Which product and operating system are widely used in smart glass applications?

- RQ3: Which sensors are mainly used depending on the purpose of application of the smart glasses?

- RQ4: Which data visualization, processing, and transfer methods are widely used in such applications?

2.1. Search Method

2.2. Selection Criteria

3. Results

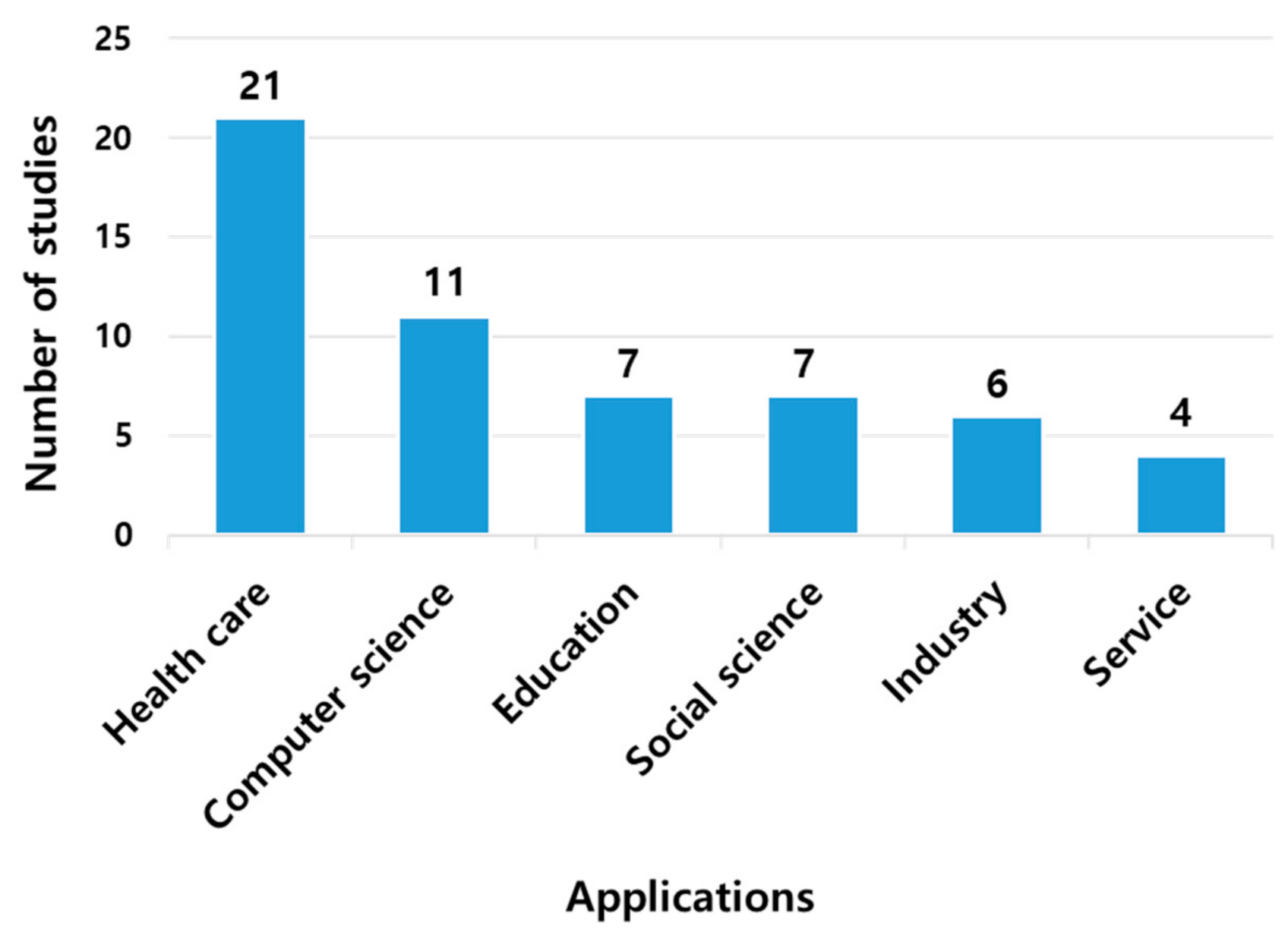

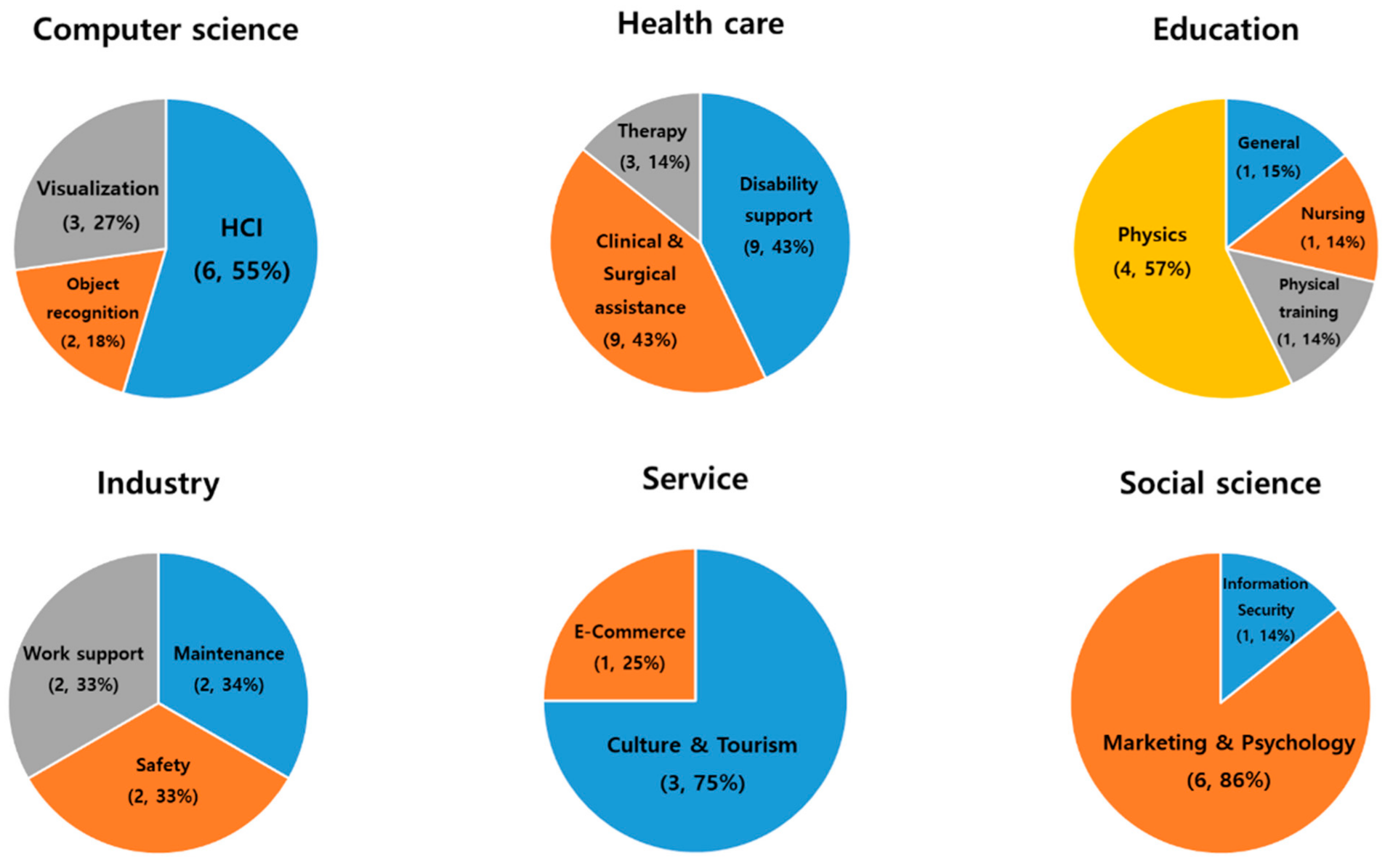

3.1. Smart Glasses Research Trend

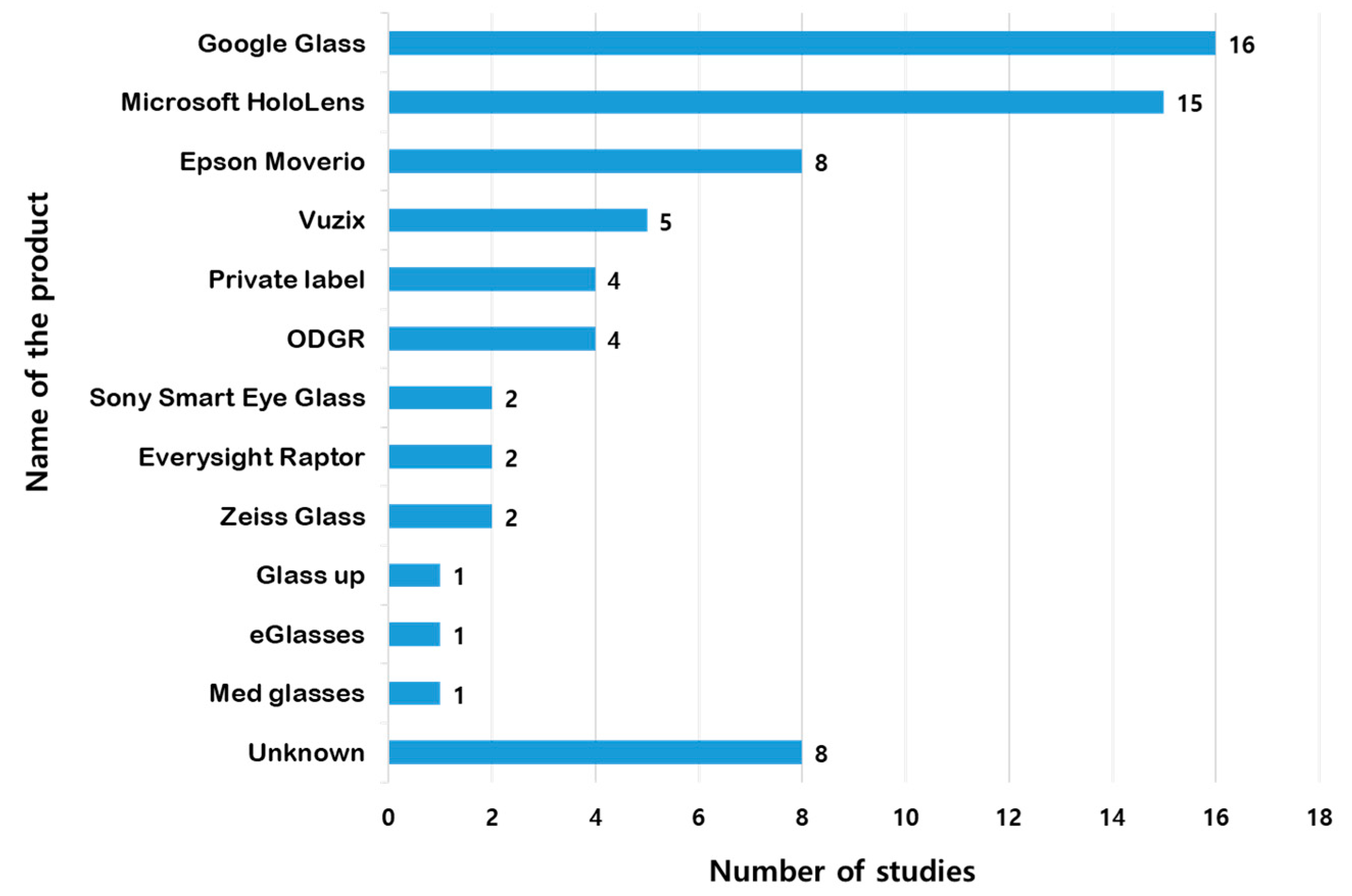

3.2. Product and Operating System

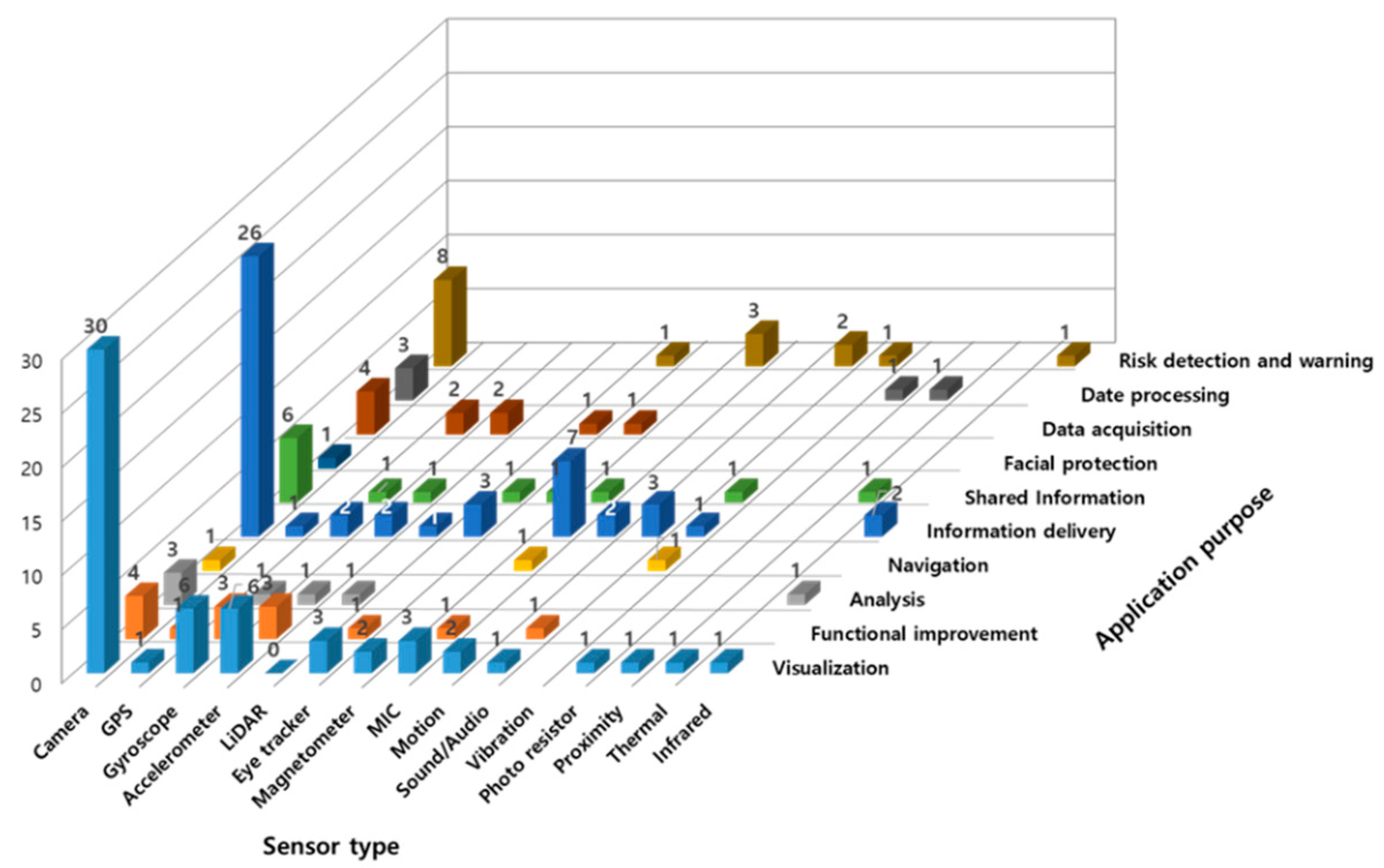

3.3. Sensors Depending on the Application Purpose

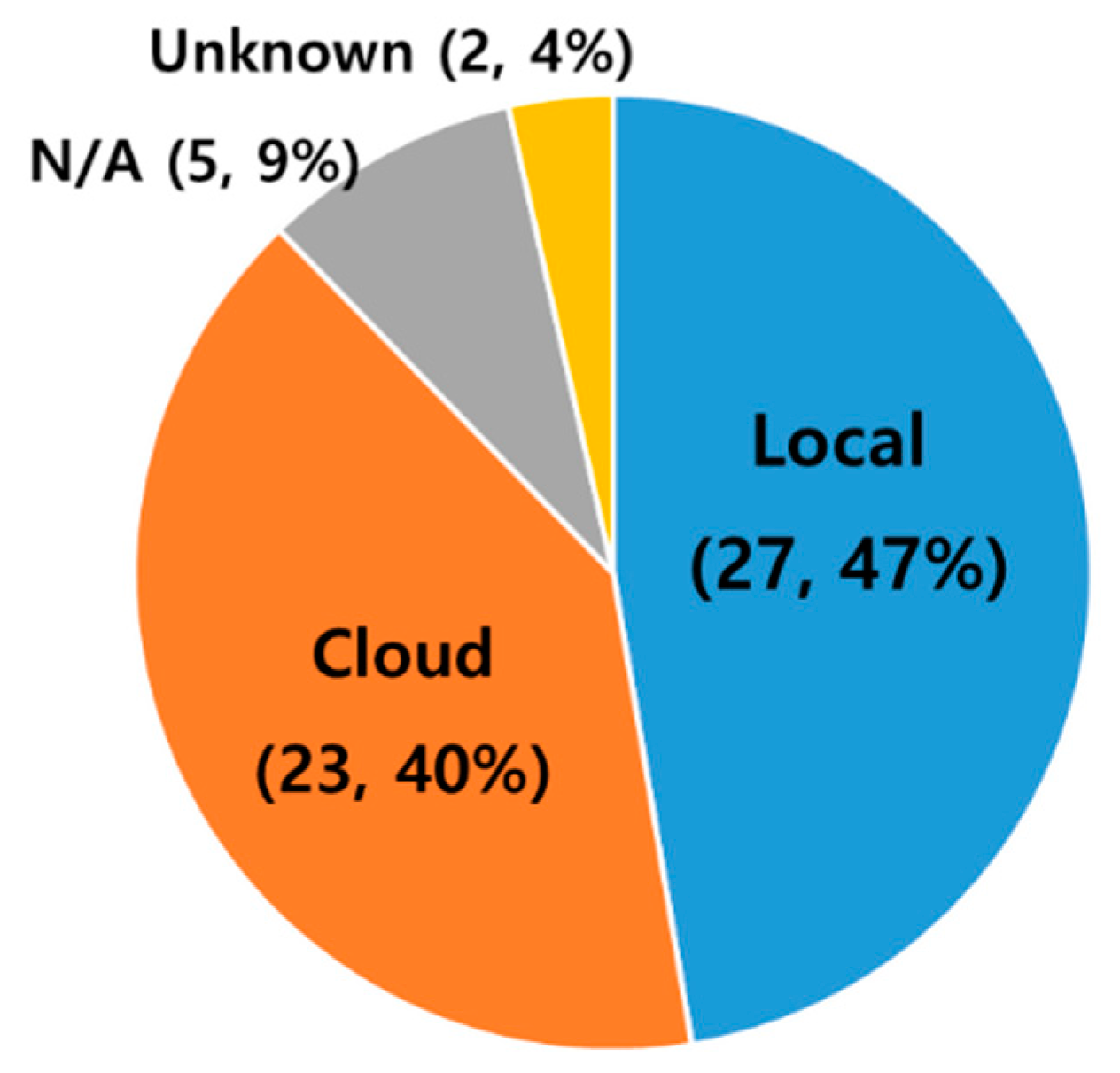

3.4. Data Visualization, Processing, and Transfer Methods

4. Discussion

- RQ 1: Research related to smart glasses has been actively conducted since the launch of Google Glass in 2014. Healthcare has been the most actively researched field, with studies focusing on clinical and surgical assistance for healthcare professionals and on assisting persons with mental or physical disabilities.

- RQ 2: Google Glass products were used the most, followed by Microsoft’s HoloLens. Google Glass is an Android-based product, and HoloLens is a Windows-based product. Thus, Android OS is used the most, followed by Windows.

- RQ 3: Most studies aimed to find ways of visually presenting information on smart glasses, and the transmission of data acquired through the glasses was described most frequently. The camera was the most commonly used sensor.

- RQ 4: Most of the acquired data were presented in AR form. The acquired data are mainly processed on the device, and Wi-Fi is the most frequently used data transmission method.

- Users can view digital images from the front without switching their line of sight to their smartphones.

- Visual and head-direction sensing, which identifies where the user’s interest is directed, allows information to be provided according to precise head orientation and position and gives a more immersive and personalized experience.

- Owing to touch-free interaction between the device and the user (e.g., voice recognition and air motion), both hands remain free, with no need for buttons or touchpads.

- Users do not need to memorize difficult or complicated procedures in the field; they can learn accurately and quickly from virtual videos displayed in front of them, which improves work efficiency [53].

- Efficiency can be improved through these advantages.

- Cameras and GPS-like sensors attached to smart glasses pose a potential risk of privacy breaches.

- Adding sensors and batteries to improve functionality makes a universal smart glass design harder to achieve, so further design effort is required.

- The appropriate interaction technology (e.g., speech recognition or air motion) must be chosen according to the user’s environment.

- Smart glasses can be used in reading visual media and articles with long texts, and can technically complement works of fiction, such as novels.

- We must continually develop technologies that can modify existing types of content used in mobile apps and implement dynamic forms of content allowing apps on smartphones to be used successfully when applied in AR [52].

- Next-generation smart glass applications must adopt specific strategies for tailoring content to specific types of smart glasses.

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Kang, J.Y. Study on the Content Design for Wearable Device—Focus on User Centered Wearable Infotainment Design. J. Digit. Des. 2015, 15, 325–333. [Google Scholar]

- Michalski, R.S.; Carbonell, J.G.; Mitchell, T.M. Machine learning: An artificial intelligence approach. Artif. Intell. 1985, 25, 236–238. [Google Scholar] [CrossRef]

- Sutherland, I.E. The Ultimate Display. In Proceedings of the IFIP Congress, New York, NY, USA, 24–28 May 1965; pp. 506–508. [Google Scholar]

- Sutherland, I.E. A head-mounted three dimensional display. In Proceedings of the AFIPS 68, San Francisco, CA, USA, 9–11 December 1968. [Google Scholar]

- Kress, B.; Starner, T. A Review of Head-Mounted Displays (HMD) Technologies and Applications for Consumer Electronics. In Photonic Applications for Aerospace, Commercial, and Harsh Environments IV; International Society for Optics and Photonics: Bellingham, WA, USA, 2013; Volume 8720, p. 87200A. [Google Scholar]

- Due, B.L. The Future of Smart Glasses: An Essay about Challenges and Possibilities with Smart Glasses; Centre of Interaction Research and Communication Design, University of Copenhagen: København, Denmark, 2014; Volume 1, pp. 1–21. [Google Scholar]

- Seifabadi, R.; Li, M.; Long, D.; Xu, S.; Wood, B.J. Accuracy Study of Smartglasses/Smartphone AR Systems for Percutaneous Needle Interventions. In Proceedings of the SPIE Medical Imaging, Houston, TX, USA, 16 March 2020; Volume 11315. [Google Scholar] [CrossRef]

- Aiordăchioae, A.; Vatavu, R.-D. Life-Tags: A Smartglasses-Based System for Recording and Abstracting Life with Tag Clouds. ACM Hum. Comput. Interact. 2019, 3, 1–22. [Google Scholar] [CrossRef]

- Han, D.-I.D.; Tom Dieck, M.C.; Jung, T. Augmented Reality Smart Glasses (ARSG) Visitor Adoption in Cultural Tourism. Leis. Stud. 2019, 38, 618–633. [Google Scholar] [CrossRef]

- Miller, A.; Malasig, J.; Castro, B.; Hanson, V.L.; Nicolau, H.; Brandão, A. The Use of Smart Glasses for Lecture Comprehension by Deaf and Hard of Hearing Students. In Proceedings of the 2017 CHI Conference Extended Abstracts on Human Factors in Computing Systems, CHI EA ’17, Denver, CO, USA, 6–11 May 2017; pp. 1909–1915. [Google Scholar] [CrossRef]

- Baek, J.; Choi, Y. Smart Glasses-Based Personnel Proximity Warning System for Improving Pedestrian Safety in Construction and Mining Sites. Int. J. Environ. Res. Public Health 2020, 17, 1422. [Google Scholar] [CrossRef]

- Aiordachioae, A.; Schipor, O.-A.; Vatavu, R.-D. An Inventory of Voice Input Commands for Users with Visual Impairments and Assistive Smartglasses Applications. In Proceedings of the 2020 International Conference on Development and Application Systems (DAS), Suceava, Romania, 21–23 May 2020; pp. 146–150. [Google Scholar] [CrossRef]

- Mitrasinovic, S.; Camacho, E.; Trivedi, N.; Logan, J.; Campbell, C.; Zilinyi, R.; Lieber, B.; Bruce, E.; Taylor, B.; Martineau, D. Clinical and Surgical Applications of Smart Glasses. Technol. Health Care 2015, 23, 381–401. [Google Scholar] [CrossRef]

- Klinker, K.; Berkemeier, L.; Zobel, B.; Wüller, H.; Huck, V.; Wiesche, M.; Remmers, H.; Thomas, O.; Krcmar, H. Structure for Innovations: A Use Case Taxonomy for Smart Glasses in Service Processes. In Proceedings of the Multikonferenz Wirtschaftsinformatik 2018, Lüneburg, Germany, 6–9 March 2018; pp. 1599–1610. [Google Scholar]

- Hofmann, B.; Haustein, D.; Landeweerd, L. Smart-Glasses: Exposing and Elucidating the Ethical Issues. Sci. Eng. Ethics 2017, 23, 701–721. [Google Scholar] [CrossRef]

- Lee, L.-H.; Hui, P. Interaction Methods for Smart Glasses: A Survey. IEEE Access 2018, 6, 28712–28732. [Google Scholar] [CrossRef]

- Klinker, K.; Obermaier, J.; Wiesche, M. Conceptualizing passive trust: The case of smart glasses in healthcare. In Proceedings of the 27th European Conference on Information Systems (ECIS), Stockholm & Uppsala, Sweden, 8–14 June 2019; p. 12. [Google Scholar]

- Belkacem, I.; Pecci, I.; Martin, B.; Faiola, A. TEXTile: Eyes-Free Text Input on Smart Glasses Using Touch Enabled Textile on the Forearm. In Human-Computer Interaction—INTERACT 2019; Lamas, D., Loizides, F., Nacke, L., Petrie, H., Winckler, M., Zaphiris, P., Eds.; Computer Science, Lecture Notes; Springer International Publishing: Cham, Switzerland, 2019; pp. 351–371. [Google Scholar] [CrossRef]

- Zhang, L.; Li, X.-Y.; Huang, W.; Liu, K.; Zong, S.; Jian, X.; Feng, P.; Jung, T.; Liu, Y. It Starts with Igaze: Visual Attention Driven Networking with Smart Glasses. In Proceedings of the 20th Annual International Conference on Mobile Computing and Networking, Maui, HI, USA, 7–11 September 2014; pp. 91–102. [Google Scholar]

- Lee, L.H.; Yung Lam, K.; Yau, Y.P.; Braud, T.; Hui, P. HIBEY: Hide the Keyboard in Augmented Reality. In Proceedings of the 2019 IEEE International Conference on Pervasive Computing and Communications (PerCom), Kyoto, Japan, 11–15 March 2019. [Google Scholar] [CrossRef]

- Lee, L.H.; Braud, T.; Bijarbooneh, F.H.; Hui, P. Tipoint: Detecting Fingertip for Mid-Air Interaction on Computational Resource Constrained Smartglasses. In Proceedings of the 23rd ACM Annual International Symposium on Wearable Computers (ISWC2019), London, UK, 11–13 September 2019; pp. 118–122. [Google Scholar] [CrossRef]

- Lee, L.H.; Braud, T.; Lam, K.Y.; Yau, Y.P.; Hui, P. From Seen to Unseen: Designing Keyboard-Less Interfaces for Text Entry on the Constrained Screen Real Estate of Augmented Reality Headsets. Pervasive Mob. Comput. 2020, 64, 101148. [Google Scholar] [CrossRef]

- Lee, L.H.; Braud, T.; Bijarbooneh, F.H.; Hui, P. UbiPoint: Towards Non-Intrusive Mid-Air Interaction for Hardware Constrained Smart Glasses. In Proceedings of the 11th ACM Multimedia Systems Conference, Istanbul, Turkey, 8–11 June 2020; pp. 190–201. [Google Scholar] [CrossRef]

- Park, K.-B.; Kim, M.; Choi, S.H.; Lee, J.Y. Deep Learning-Based Smart Task Assistance in Wearable Augmented Reality. Robot. Comput. Integr. Manuf. 2020, 63, 101887. [Google Scholar] [CrossRef]

- Chauhan, J.; Asghar, H.J.; Mahanti, A.; Kaafar, M.A. Gesture-Based Continuous Authentication for Wearable Devices: The Smart Glasses Use Case. In Applied Cryptography and Network Security; Manulis, M., Sadeghi, A.-R., Schneider, S., Eds.; Computer Science, Lecture Notes; Springer International Publishing: Cham, Switzerland, 2016; pp. 648–665. [Google Scholar] [CrossRef]

- Pamparau, C.I. A System for Hierarchical Browsing of Mixed Reality Content in Smart Spaces. In Proceedings of the 2020 International Conference on Development and Application Systems (DAS), Suceava, Romania, 21–23 May 2020; pp. 194–197. [Google Scholar] [CrossRef]

- Riedlinger, U.; Oppermann, L.; Prinz, W. Tango vs. Hololens: A Comparison of Collaborative Indoor AR Visualisations Using Hand-Held and Hands-Free Devices. Multimodal Technol. Interact. 2019, 3, 23. [Google Scholar] [CrossRef]

- Yong, M.; Pauwels, J.; Kozak, F.K.; Chadha, N.K. Application of Augmented Reality to Surgical Practice: A Pilot Study Using the ODG R7 Smartglasses. Clin. Otolaryngol. 2020, 45, 130–134. [Google Scholar] [CrossRef]

- van Doormaal, T.P.C.; van Doormaal, J.A.M.; Mensink, T. Clinical Accuracy of Holographic Navigation Using Point-Based Registration on Augmented-Reality Glasses. Oper. Neurosurg. 2019, 17, 588–593. [Google Scholar] [CrossRef]

- Salisbury, J.P.; Keshav, N.U.; Sossong, A.D.; Sahin, N.T. Concussion Assessment with Smartglasses: Validation Study of Balance Measurement toward a Lightweight, Multimodal, Field-Ready Platform. JMIR mHealth uHealth 2018, 6, e15. [Google Scholar] [CrossRef]

- Maruyama, K.; Watanabe, E.; Kin, T.; Saito, K.; Kumakiri, A.; Noguchi, A.; Nagane, M.; Shiokawa, Y. Smart Glasses for Neurosurgical Navigation by Augmented Reality. Oper. Neurosurg. 2018, 15, 551–556. [Google Scholar] [CrossRef]

- Klueber, S.; Wolf, E.; Grundgeiger, T.; Brecknell, B.; Mohamed, I.; Sanderson, P. Supporting Multiple Patient Monitoring with Head-Worn Displays and Spearcons. Appl. Ergon. 2019, 78, 86–96. [Google Scholar] [CrossRef]

- García-Cruz, E.; Bretonnet, A.; Alcaraz, A. Testing Smart Glasses in Urology: Clinical and Surgical Potential Applications. Actas Urológicas Españolas 2018, 42, 207–211. [Google Scholar] [CrossRef]

- Ruminski, J.; Bujnowski, A.; Kocejko, T.; Andrushevich, A.; Biallas, M.; Kistler, R. The Data Exchange between Smart Glasses and Healthcare Information Systems Using the HL7 FHIR Standard. In Proceedings of the 2016 9th International Conference on Human System Interactions (HSI), Portsmouth, UK, 6–8 July 2016; pp. 525–531. [Google Scholar]

- Nag, A.; Haber, N.; Voss, C.; Tamura, S.; Daniels, J.; Ma, J.; Chiang, B.; Ramachandran, S.; Schwartz, J.; Winograd, T. Toward Continuous Social Phenotyping: Analyzing Gaze Patterns in an Emotion Recognition Task for Children with Autism through Wearable Smart Glasses. J. Med. Internet Res. 2020, 22, e13810. [Google Scholar] [CrossRef]

- Rowe, F. A Hazard Detection and Tracking System for People with Peripheral Vision Loss Using Smart Glasses and Augmented Reality. Int. J. Adv. Comput. Sci. Appl. 2019, 10, 1–9. [Google Scholar]

- Meza-de-Luna, M.E.; Terven, J.R.; Raducanu, B.; Salas, J. A Social-Aware Assistant to Support Individuals with Visual Impairments during Social Interaction: A Systematic Requirements Analysis. Int. J. Hum. Comput. Stud. 2019, 122, 50–60. [Google Scholar] [CrossRef]

- Schipor, O.; Aiordăchioae, A. Engineering Details of a Smartglasses Application for Users with Visual Impairments. In Proceedings of the 2020 International Conference on Development and Application Systems (DAS), Suceava, Romania, 21–23 May 2020; pp. 157–161. [Google Scholar] [CrossRef]

- Chang, W.; Chen, L.; Hsu, C.; Chen, J.; Yang, T.; Lin, C. MedGlasses: A Wearable Smart-Glasses-Based Drug Pill Recognition System Using Deep Learning for Visually Impaired Chronic Patients. IEEE Access 2020, 8, 17013–17024. [Google Scholar] [CrossRef]

- Lausegger, G.; Spitzer, M.; Ebner, M. OmniColor—A Smart Glasses App to Support Colorblind People. Int. J. Interact. Mob. Technol. 2017, 11, 161–177. [Google Scholar] [CrossRef]

- Janssen, S.; Bolte, B.; Nonnekes, J.; Bittner, M.; Bloem, B.R.; Heida, T.; Zhao, Y.; van Wezel, R.J.A. Usability of Three-Dimensional Augmented Visual Cues Delivered by Smart Glasses on (Freezing of) Gait in Parkinson’s Disease. Front. Neurol. 2017, 8, 279. [Google Scholar] [CrossRef]

- Sandnes, F.E. What Do Low-Vision Users Really Want from Smart Glasses? Faces, Text and Perhaps No Glasses at All. In Computers Helping People with Special Needs; Miesenberger, K., Bühler, C., Penaz, P., Eds.; Computer Science, Lecture Notes; Springer International Publishing: Cham, Switzerland, 2016; pp. 187–194. [Google Scholar] [CrossRef]

- Ruminski, J.; Smiatacz, M.; Bujnowski, A.; Andrushevich, A.; Biallas, M.; Kistler, R. Interactions with Recognized Patients Using Smart Glasses. In Proceedings of the 2015 8th International Conference on Human System Interaction (HSI), Warsaw, Poland, 25–27 June 2015; pp. 187–194. [Google Scholar]

- Machado, E.; Carrillo, I.; Saldana, D.; Chen, F.; Chen, L. An Assistive Augmented Reality-Based Smartglasses Solution for Individuals with Autism Spectrum Disorder. In Proceedings of the 2019 IEEE Intl Conf on Dependable, Autonomic and Secure Computing, Intl Conf on Pervasive Intelligence and Computing, Intl Conf on Cloud and Big Data Computing, Intl Conf on Cyber Science and Technology Congress (DASC/PiCom/CBDCom/CyberSciTech), Fukuoka, Japan, 5–8 August 2019; pp. 245–249. [Google Scholar]

- Liu, R.; Salisbury, J.P.; Vahabzadeh, A.; Sahin, N.T. Feasibility of an Autism-Focused Augmented Reality Smartglasses System for Social Communication and Behavioral Coaching. Front. Pediatr. 2017, 5, 145. [Google Scholar] [CrossRef]

- Vahabzadeh, A.; Keshav, N.U.; Abdus-Sabur, R.; Huey, K.; Liu, R.; Sahin, N.T. Improved Socio-Emotional and Behavioral Functioning in Students with Autism Following School-Based Smartglasses Intervention: Multi-Stage Feasibility and Controlled Efficacy Study. Behav. Sci. 2018, 8, 85. [Google Scholar] [CrossRef]

- Wolfartsberger, J.; Zenisek, J.; Wild, N. Data-Driven Maintenance: Combining Predictive Maintenance and Mixed Reality-Supported Remote Assistance. Procedia Manuf. 2020, 45, 307–312. [Google Scholar] [CrossRef]

- Siltanen, S.; Heinonen, H. Scalable and Responsive Information for Industrial Maintenance Work: Developing XR Support on Smart Glasses for Maintenance Technicians. In Proceedings of the 23rd International Conference on Academic Mindtrek, AcademicMindtrek ’20, Tampere, Finland, 29–30 January 2020; pp. 100–109. [Google Scholar] [CrossRef]

- Chang, W.-J.; Chen, L.-B.; Chiou, Y.-Z. Design and Implementation of a Drowsiness-Fatigue-Detection System Based on Wearable Smart Glasses to Increase Road Safety. IEEE Trans. Consum. Electron. 2018, 64, 461–469. [Google Scholar] [CrossRef]

- Kirks, T.; Jost, J.; Uhlott, T.; Püth, J.; Jakobs, M. Evaluation of the Application of Smart Glasses for Decentralized Control Systems in Logistics. In Proceedings of the 2019 IEEE Intelligent Transportation Systems Conference (ITSC), Auckland, New Zealand, 27–30 October 2019; pp. 4470–4476. [Google Scholar]

- Gensterblum, C. An Analysis of Smart Glasses and Commercial Drones’ Ability to Become Disruptive Technologies in the Construction Industry. Ph.D. Thesis, Kalamazoo College, Kalamazoo, MI, USA, 1 January 2020. [Google Scholar]

- tom Dieck, M.C.; Jung, T.; Han, D.I. Mapping Requirements for the Wearable Smart Glasses Augmented Reality Museum Application. J. Hosp. Tour. Technol. 2016, 7, 230–253. [Google Scholar] [CrossRef]

- Mason, M. The MIT Museum Glassware Prototype: Visitor Experience Exploration for Designing Smart Glasses. J. Comput. Cult. Herit. 2016, 9, 12:1–12:28. [Google Scholar] [CrossRef]

- Ho, C.C.; Tseng, B.-Y.; Ho, M.-C. Typingless Ticketing Device Input by Automatic License Plate Recognition Smartglasses. ICIC Express Lett. Part B Appl. 2018, 9, 325–330. [Google Scholar]

- Kopetz, J.P.; Wessel, D.; Jochems, N. User-Centered Development of Smart Glasses Support for Skills Training in Nursing Education. i-com 2019, 18, 287–299. [Google Scholar] [CrossRef]

- Berkemeier, L.; Menzel, L.; Remark, F.; Thomas, O. Acceptance by Design: Towards an Acceptable Smart Glasses-Based Information System Based on the Example of Cycling Training. In Proceedings of the Multikonferenz Wirtschaftsinformatik, Lüneburg, Germany, 6–9 March 2018; p. 12. [Google Scholar]

- Cao, Y.; Tang, Y.; Xie, Y. A Novel Augmented Reality Guidance System for Future Informatization Experimental Teaching. In Proceedings of the 2018 IEEE International Conference on Teaching, Assessment, and Learning for Engineering (TALE), Wollongong, Australia, 4–7 December 2018; pp. 900–905. [Google Scholar] [CrossRef]

- Spitzer, M.; Nanic, I.; Ebner, M. Distance Learning and Assistance Using Smart Glasses. Educ. Sci. 2018, 8, 21. [Google Scholar] [CrossRef]

- Kapp, S.; Thees, M.; Strzys, M.P.; Beil, F.; Kuhn, J.; Amiraslanov, O.; Javaheri, H.; Lukowicz, P.; Lauer, F.; Rheinländer, C.; et al. Augmenting Kirchhoff’s Laws: Using Augmented Reality and Smartglasses to Enhance Conceptual Electrical Experiments for High School Students. Phys. Teach. 2018, 57, 52–53. [Google Scholar] [CrossRef]

- Thees, M.; Kapp, S.; Strzys, M.P.; Beil, F.; Lukowicz, P.; Kuhn, J. Effects of Augmented Reality on Learning and Cognitive Load in University Physics Laboratory Courses. Comput. Hum. Behav. 2020, 108, 106316. [Google Scholar] [CrossRef]

- Strzys, M.P.; Kapp, S.; Thees, M.; Klein, P.; Lukowicz, P.; Knierim, P.; Schmidt, A.; Kuhn, J. Physics Holo.Lab Learning Experience: Using Smartglasses for Augmented Reality Labwork to Foster the Concepts of Heat Conduction. Eur. J. Phys. 2018, 39, 035703. [Google Scholar] [CrossRef]

- Rauschnabel, P.A.; He, J.; Ro, Y.K. Antecedents to the Adoption of Augmented Reality Smart Glasses: A Closer Look at Privacy Risks. J. Bus. Res. 2018, 92, 374–384. [Google Scholar] [CrossRef]

- Adapa, A.; Nah, F.F.-H.; Hall, R.H.; Siau, K.; Smith, S.N. Factors Influencing the Adoption of Smart Wearable Devices. Int. J. Hum. Comput. Interact. 2018, 34, 399–409. [Google Scholar] [CrossRef]

- Rauschnabel, P.A.; Brem, A.; Ivens, B.S. Who Will Buy Smart Glasses? Empirical Results of Two Pre-Market-Entry Studies on the Role of Personality in Individual Awareness and Intended Adoption of Google Glass Wearables. Comput. Hum. Behav. 2015, 49, 635–647. [Google Scholar] [CrossRef]

- Rauschnabel, P.A.; Hein, D.W.E.; He, J.; Ro, Y.K.; Rawashdeh, S.; Krulikowski, B. Fashion or Technology? A Fashnology Perspective on the Perception and Adoption of Augmented Reality Smart Glasses. i-com 2016, 15, 179–194. [Google Scholar] [CrossRef]

- Rallapalli, S.; Ganesan, A.; Chintalapudi, K.; Padmanabhan, V.N.; Qiu, L. Enabling Physical Analytics in Retail Stores Using Smart Glasses. In Proceedings of the 20th Annual International Conference on Mobile Computing and Networking, Maui, HI, USA, 7–11 September 2014; pp. 115–126. [Google Scholar]

- Hoogsteen, K.M.P.; Osinga, S.A.; Steenbekkers, B.L.P.A.; Szpiro, S.F.A. Functionality versus Inconspicuousness: Attitudes of People with Low Vision towards OST Smart Glasses. In Proceedings of the 22nd International ACM SIGACCESS Conference on Computers and Accessibility, Virtual Event, Greece, 26–28 October 2020; pp. 1–4. [Google Scholar]

- Ok, A.E.; Basoglu, N.A.; Daim, T. Exploring the Design Factors of Smart Glasses. In Proceedings of the 2015 Portland International Conference on Management of Engineering and Technology (PICMET), Portland, OR, USA, 2–6 August 2015; pp. 1657–1664. [Google Scholar]

- Caria, M.; Todde, G.; Sara, G.; Piras, M.; Pazzona, A. Performance and Usability of Smartglasses for Augmented Reality in Precision Livestock Farming Operations. Appl. Sci. 2020, 10, 2318. [Google Scholar] [CrossRef]

- Vuzix. Available online: https://www.vuzix.com/support/legacy-product/m100-smart-glasses (accessed on 29 March 2021).

- Google Enterprise Edition Help. Available online: https://support.google.com/glass-enterprise/customer/answer/9220200?hl=en&ref_topic=9235678 (accessed on 29 March 2021).

- Epson. Available online: https://www.epson.co.kr/%EB%B9%84%EC%A6%88%EB%8B%88%EC%8A%A4%EC%9A%A9-%EC%A0%9C%ED%92%88/%EC%8A%A4%EB%A7%88%ED%8A%B8%EA%B8%80%EB%9D%BC%EC%8A%A4/Moverio-BT-350/p/V11H837051 (accessed on 29 March 2021).

- Microsoft. Available online: https://www.microsoft.com/ko-kr/hololens (accessed on 29 March 2021).

- Gintautas, V.; Hübler, A.W. Experimental Evidence for Mixed Reality States in an Interreality System. Phys. Rev. E 2007, 75, 057201. [Google Scholar] [CrossRef]

- Milgram, P.; Kishino, F. A Taxonomy of Mixed Reality Visual Displays. IEICE Trans. Inf. Syst. 1994, E77-D, 1321–1329. [Google Scholar]

- Kiyokawa, K. An Introduction to Head-Mounted Displays for Augmented Reality. In Emerging Technologies of Augmented Reality: Interfaces and Design; IGI Global: Hershey, PA, USA, 2007; pp. 43–63. [Google Scholar]

- Mason, M. The dimensions of the mobile visitor experience: Thinking beyond the technology design. Int. J. Incl. Mus. 2012, 5, 51–72. [Google Scholar] [CrossRef]

| Sub-Field | References | Year | Aim of Study |
|---|---|---|---|
| HCI (Human–Computer Interaction) | Belkacem et al. [18] | 2019 | Inputting text into smart glasses using touch fiber |
| HCI (Human–Computer Interaction) | Zhang et al. [19] | 2014 | Exploring the user’s visual interest through smart glasses |
| HCI (Human–Computer Interaction) | Lee et al. [20] | 2019 | Development of technology to enter text through fingertip detection technology in the air without using a keyboard |
| HCI (Human–Computer Interaction) | Lee et al. [21] | 2019 | Development of technology to enter text through fingertip detection technology in the air without using a keyboard |
| HCI (Human–Computer Interaction) | Lee et al. [22] | 2020 | Development of technology to enter text through fingertip detection technology in the air without using a keyboard |
| HCI (Human–Computer Interaction) | Lee et al. [23] | 2020 | Development of technology to enter text through fingertip detection technology in the air without using a keyboard |
| Object recognition | Park et al. [24] | 2020 | Effective object detection and object segmentation verification through wearable AR technology based on deep learning technology |
| Object recognition | Chauhan et al. [25] | 2016 | Verification of biometric accuracy of smart glasses |
| Visualization | Pamparau [26] | 2020 | Development of technology to visualize digital contents |
| Visualization | Riedlinger et al. [27] | 2019 | Visualization comparison of hands-free (smart glass) versus non-hands-free (tablet) devices |
| Visualization | Aiordăchioae et al. [8] | 2019 | Data cloud formation through tags extracted from images recorded with cameras in smart glasses |

| Sub-Field | References | Year | Aim of Study |

|---|---|---|---|

| Clinical and Surgical assistance | Yong et al. [28] | 2020 | Enhancing the learning experience for trainees practicing microsurgery techniques for science and surgery |

| van Doormaal et al. [29] | 2019 | To determine feasibility and accuracy of holographic neuronavigation (HN) using AR smart glasses. | |

| Salisbury et al. [30] | 2018 | Objective measurement of concussion-related disorders through smart glasses | |

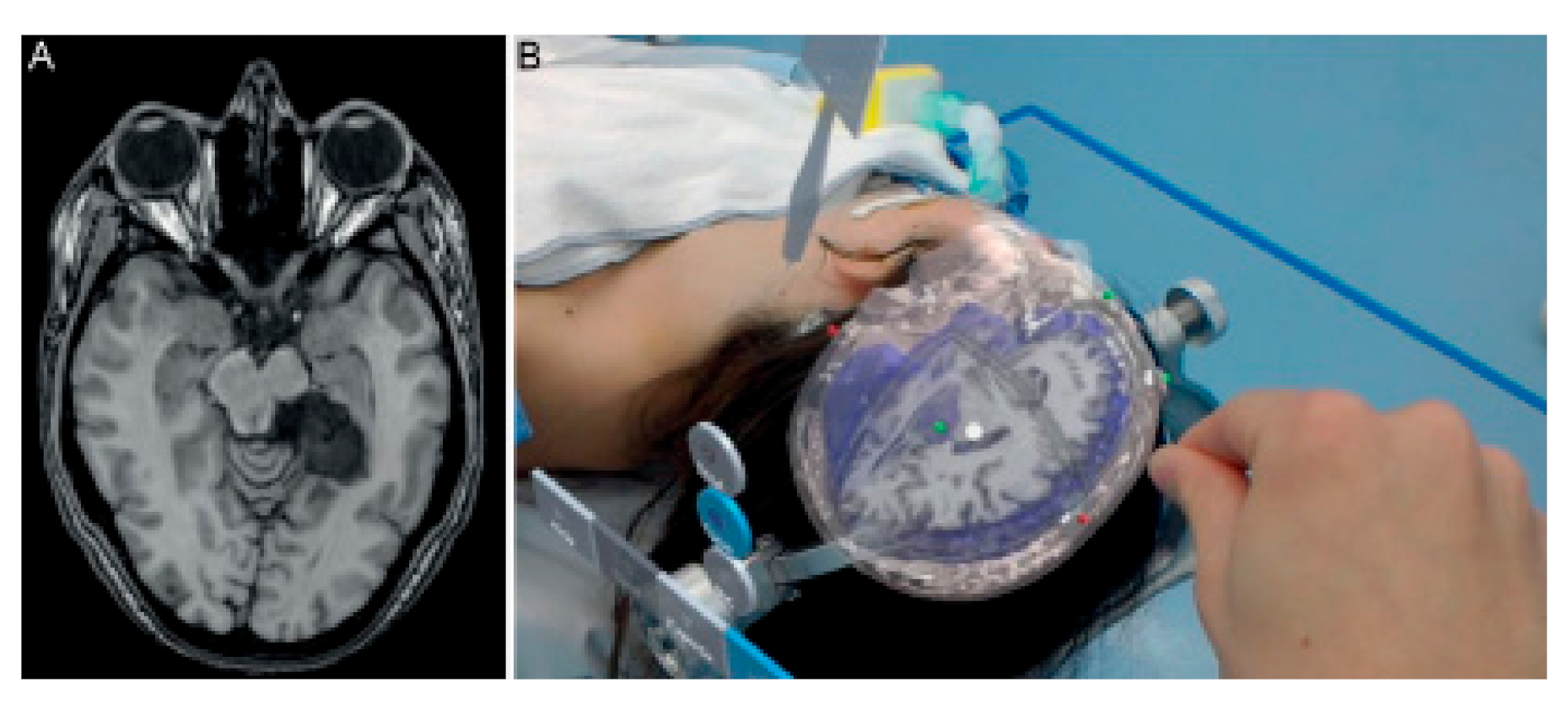

| Maruyama et al. [31] | 2018 | Assessing the accuracy of visualizing 3D graphics in neurosurgery surgery | |

| Klueber et al. [32] | 2019 | Multiple patient monitoring | |

| García-Cruz et al. [33] | 2018 | Potential evaluation of smart eyeglasses in urology | |

| Ruminski et al. [34] | 2016 | Data exchange between medical information systems | |

| Salisbury et al. [7] | 2020 | Verification of the AR system accuracy of smart glasses in percutaneous needle interventions surgery | |

| Nag et al. [35] | 2020 | Analysis of emotional awareness of autistic children | |

| Disability support | Rowe [36] | 2019 | Assisting people with peripheral vision loss through a risk detection and tracking system |

| Meza-de-Luna et al. [37] | 2019 | Supporting social interaction (conversation) of people with visual impairments | |

| Schipor et al. [38] | 2020 | Improved convenience for visually impaired users through voice command input and control | |

| Chang et al. [39] | 2020 | Development of a system to improve the safety of the visually impaired when using drugs | |

| Lausegger et al. [40] | 2017 | Visual aid for people with color blindness | |

| Miller et al. [10] | 2017 | To improve the understanding of lectures for the hearing impaired | |

| Janssen et al. [41] | 2017 | Validation of gait assist effect in Parkinson’s disease patients | |

| Sandnes [42] | 2016 | Investigating the features that people with low vision need in smart glasses | |

| Ruminski et al. [43] | 2015 | Identifying the potential use of smart glasses in medical activities | |

| Therapy | Machado et al. [44] | 2019 | Assisting people with autism to acquire daily life skills |

| Liu et al. [45] | 2017 | System application using smart glasses for coaching to improve autism disorder | |

| Vahabzadeh et al. [46] | 2018 | Socio-emotional and behavioral function treatment of autistic students by intervening using smart glasses |

| Sub-Field | References | Year | Aim of Study |
|---|---|---|---|
| Maintenance | Wolfartsberger et al. [47] | 2020 | Development of technology that can support maintenance work on the job site |
| Maintenance | Siltanen and Heinonen [48] | 2020 | Development of technology that can support maintenance work on the job site |
| Safety | Chang et al. [49] | 2018 | Design and implementation of drowsiness fatigue monitoring system to improve road safety |
| Safety | Baek and Choi [11] | 2020 | Development of smart glass-based proximity warning system for pedestrians at a mining site |
| Work support | Kirks et al. [50] | 2019 | Development of distributed control system based on smart glasses |
| Work support | Gensterblum [51] | 2020 | Examining the possibility that commercial drones and smart glasses are disruptive technologies in the construction industry |

| Sub-Field | References | Year | Aim of Study |
|---|---|---|---|
| Culture and Tourism | Han et al. [9] | 2019 | Providing a framework for adopting smart glasses in cultural tourism |
| Culture and Tourism | tom Dieck et al. [52] | 2016 | Improving the understanding of art and analyzing the effects of using smart glasses in museums |
| Culture and Tourism | Mason [53] | 2016 | Improving the understanding of art and analyzing the effects of using smart glasses in museums |
| E-Commerce | Ho et al. [54] | 2018 | Ticketing without input after automatically recognizing the license plate using smart glasses |

| Sub-Field | References | Year | Aim of Study |
|---|---|---|---|
| Nursing | Kopetz et al. [55] | 2019 | User-oriented development of smart glasses for nursing education boarding training |
| Physical training | Berkemeier et al. [56] | 2018 | Consumer perception survey for application to cycling training |
| General | Cao et al. [57] | 2019 | Development of new augmented reality learning system for future informatization experiment |
| Physics | Spitzer et al. [58] | 2018 | Distance learning and support using smart glasses |
| Physics | Kapp et al. [59] | 2018 | Enhance understanding of physics experiments through smart glasses |
| Physics | Thees et al. [60] | 2020 | Enhance understanding of physics experiments through smart glasses |
| Physics | Strzys et al. [61] | 2018 | Enhance understanding of physics experiments through smart glasses |

| Sub-Field | References | Year | Aim of Study |
|---|---|---|---|
| Information security | Rauschnabel et al. [62] | 2018 | Research on consumer perception of smart glasses and personal information risk |
| Marketing and Psychology | Adapa et al. [63] | 2018 | Investigating factors influencing the selection of smart wearable devices |
| Marketing and Psychology | Rauschnabel et al. [64] | 2015 | Investigating factors influencing the selection of smart wearable devices |
| Marketing and Psychology | Rauschnabel et al. [65] | 2016 | Consumer perception of smart glasses as a fashion |
| Marketing and Psychology | Rallapalli et al. [66] | 2014 | Physical analysis of (indoor) retail stores using smart glasses |
| Marketing and Psychology | Hoogsteen et al. [67] | 2020 | Perception of smart glasses by persons with low vision |
| Marketing and Psychology | Ok et al. [68] | 2015 | Analysis of harmfulness and social perception in purchasing smart glasses |

| Item | Google Glass Enterprise Edition 2 | Microsoft HoloLens 2 | Epson Moverio BT-350 | Vuzix Blade M100 |
|---|---|---|---|---|
| Storage | 32 GB | 64 GB | 48 GB | 8 GB |
| CPU | Qualcomm XR1 1.7 GHz Quad-core | Qualcomm Snapdragon 850 | Intel Atom X5 (1.44 GHz Quad-Core) | Quad Core ARM |
| RAM | DDR4 3 GB | 4 GB LPDDR4x system DRAM | 2 GB | 1 GB |
| Battery | 800 mA⋅h | 16,500 mA⋅h | 2950 mA⋅h | 550 mA⋅h |
| Camera | 8-megapixel camera | 8-megapixel camera, 1080p video recording | 5-megapixel camera | 5-megapixel camera, 1080p video recording |
| OS | Android Open Source Project 8.1 (Oreo) | Windows 10 | Android 5.1 | Android ICS 4.04 |
| Weight | ~46 g | 566 g | 151 g | 372 g |
| Sensor | Wi-Fi, Bluetooth, GPS, 6-axis gyroscope | Azure Kinect sensor, accelerometer, gyroscope, magnetometer, 6-DoF (Degrees of Freedom), GPS | Wi-Fi, Bluetooth, GPS, 3-axis gyroscope | Wi-Fi, Bluetooth, 3-axis gyroscope, 3-axis accelerometer, 3-axis magnetometer, Pressure sensor |
| See-through method | Curved mirror (+Reflective waveguide): Google Light pipe | Holographic waveguide | Reflective waveguide: Epson light guide | Waveguide optics |

| Type | Number of Studies | References |
|---|---|---|
| AR | 52 (91.2%) | [7,8,9,10,11,18,19,20,21,22,23,24,25,26,27,28,29,30,31,32,33,34,35,36,38,39,40,41,42,43,44,45,46,48,50,51,52,53,54,55,56,57,58,60,62,63,64,65,66,67,68,69] |
| MR | 2 (3.5%) | [47,59] |
| N/A | 3 (5.3%) | [37,49,61] |
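The shares in the table above follow directly from the study counts. As a minimal sketch, the arithmetic can be reproduced as shown here; the names `counts` and `shares` are illustrative only and do not come from the review itself.

```python
# Study counts per display type, taken from the table above.
counts = {"AR": 52, "MR": 2, "N/A": 3}


def shares(counts):
    """Return each category's share of the total in percent, rounded to one decimal."""
    total = sum(counts.values())
    return {name: round(100 * n / total, 1) for name, n in counts.items()}


if __name__ == "__main__":
    # Print one line per display type with its count and share.
    for name, pct in shares(counts).items():
        print(f"{name}: {counts[name]} studies ({pct}%)")
```

Rounding each share independently to one decimal place is a presentation choice; with other counts the rounded values may not sum to exactly 100%.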

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Kim, D.; Choi, Y. Applications of Smart Glasses in Applied Sciences: A Systematic Review. Appl. Sci. 2021, 11, 4956. https://doi.org/10.3390/app11114956
