Virtual Reality Research: Design Virtual Education System for Epidemic (COVID-19) Knowledge to Public

Abstract

1. Introduction

- 360-degree: Speicher concludes that a 360-degree experience is the key feature of VR [2,4]. A 360-degree experience does not merely refer to the number of degrees; it means that the VR device can provide an immersive virtual environment (VE) to users [2]. Furthermore, users are largely immersed in the VE through both visualization and motion [2].

- The VR education system uses Virtual Storytelling Technology (VST) to build the system structure and provide users with active exploratory experiences.

- The system balances entertainment and education elements. The research covers fundamental epidemic (COVID-19) knowledge drawn from various medical articles. Meanwhile, entertainment interactions are integrated into the exploration process to enhance the user's immersive experience.

- The system uses Unreal Engine 4 as the development platform. By writing Blueprint scripts, the developers can efficiently design VR and desktop interactions that follow each platform's criteria.

2. Related Work

2.1. User Experience Design

2.2. COVID-19 Knowledge Storyline

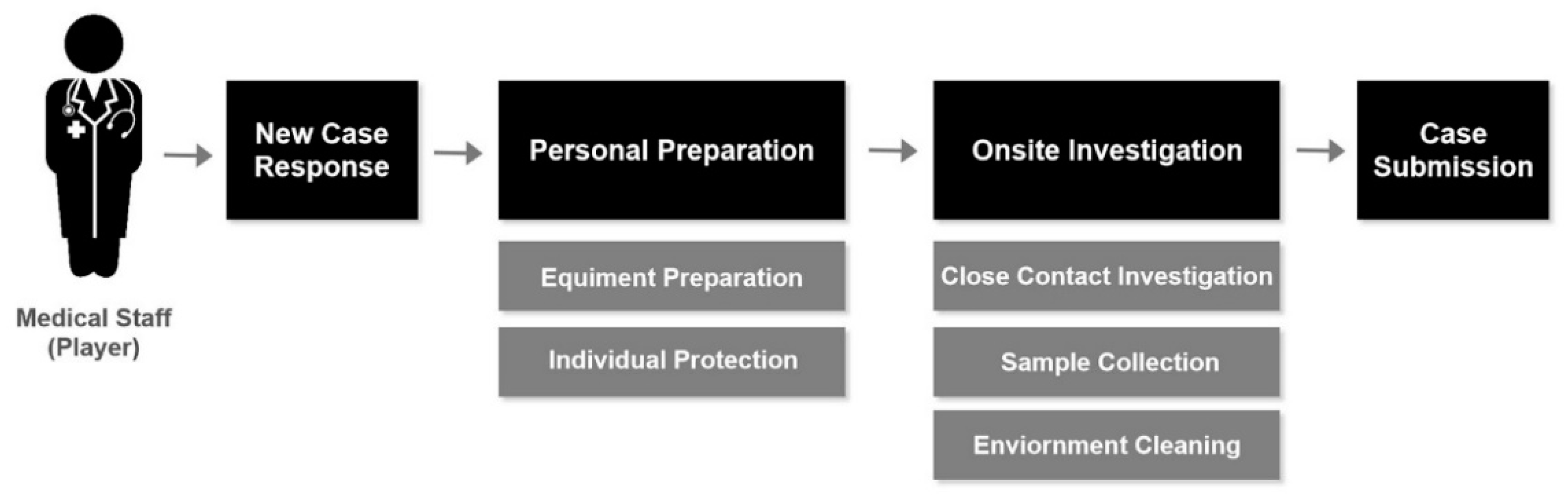

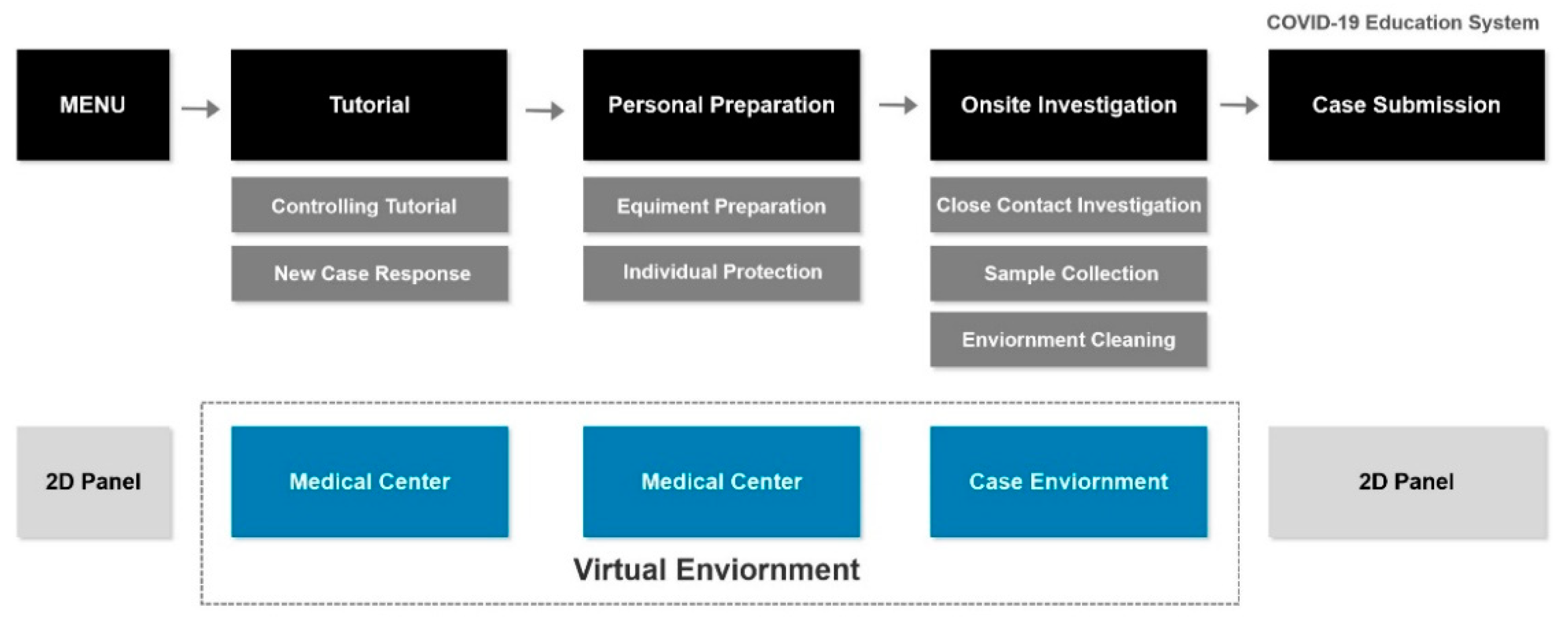

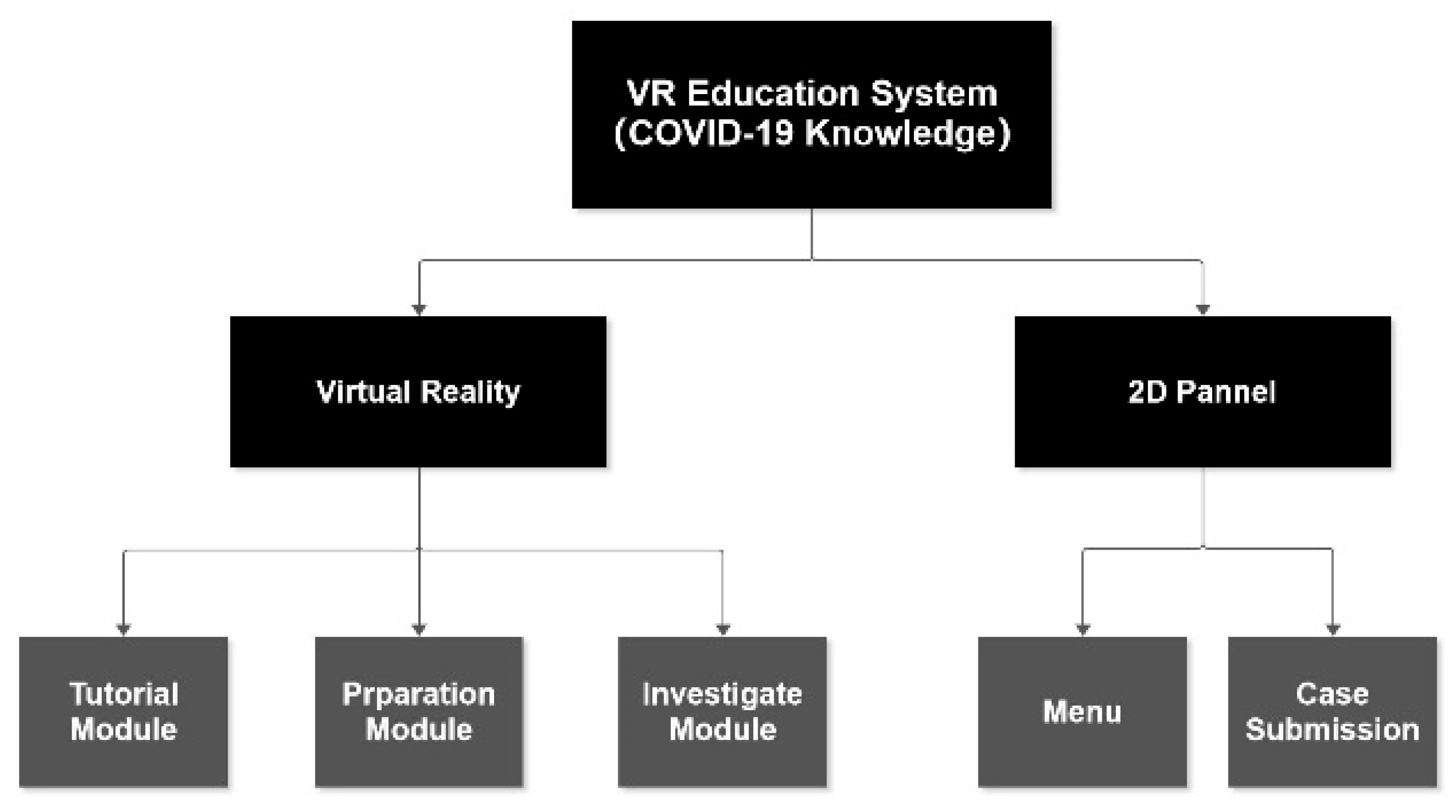

3. System Overview

3.1. Interactive Mode

3.1.1. Operation Mode

3.1.2. User Interface

3.2. Function Modules

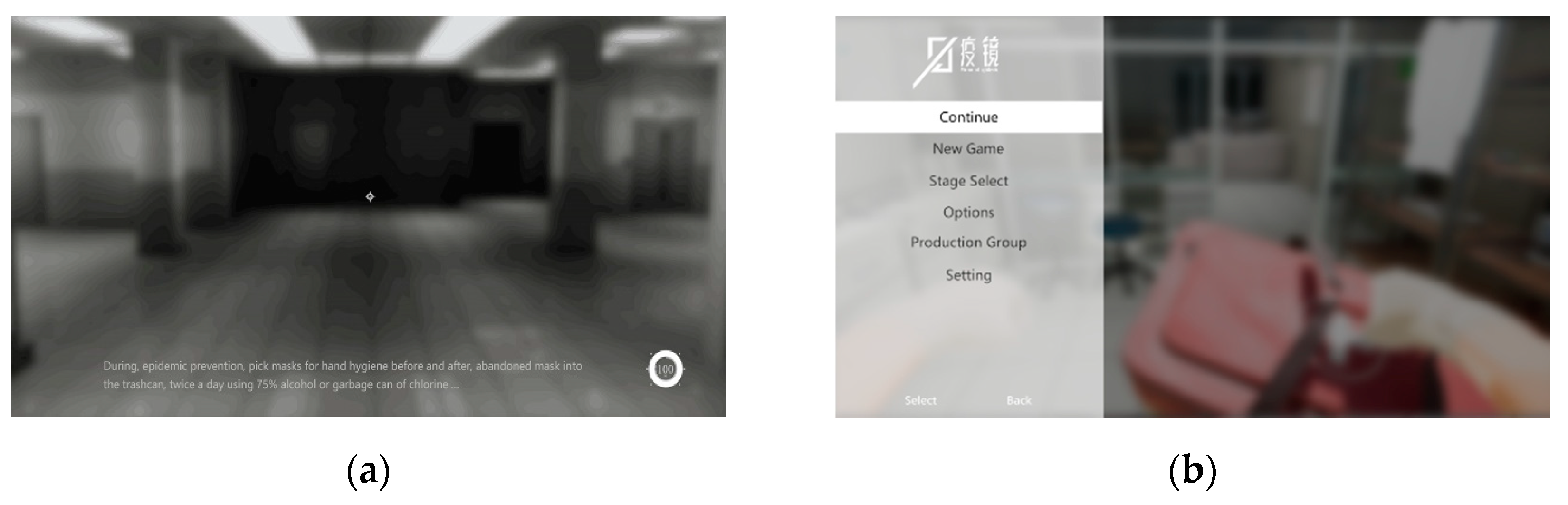

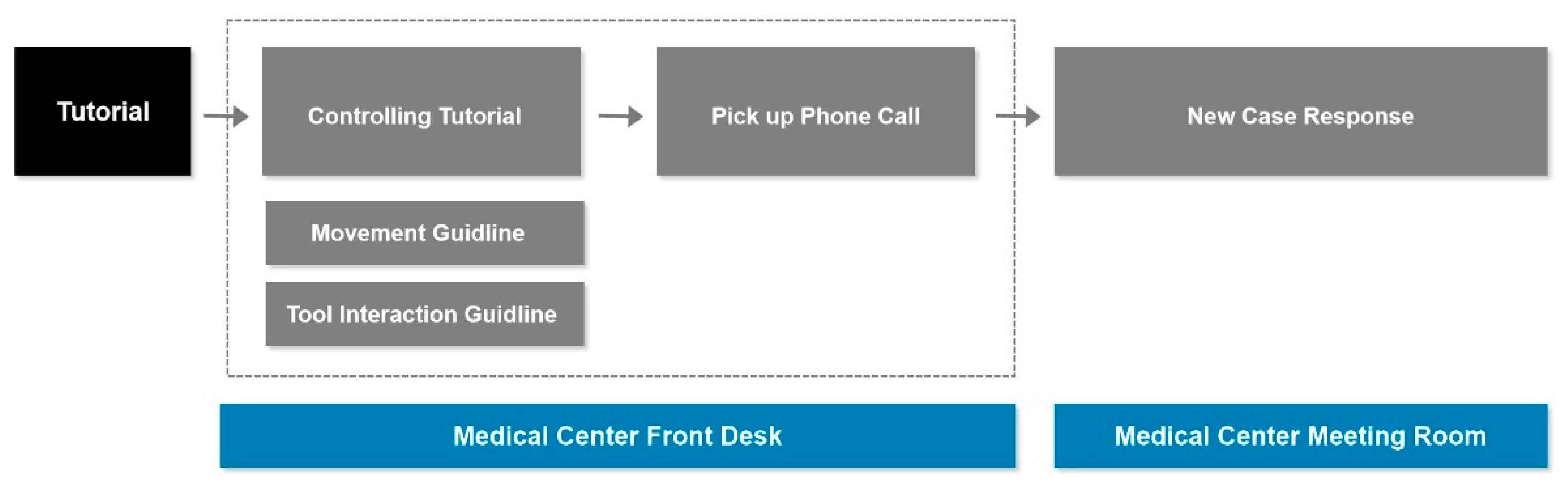

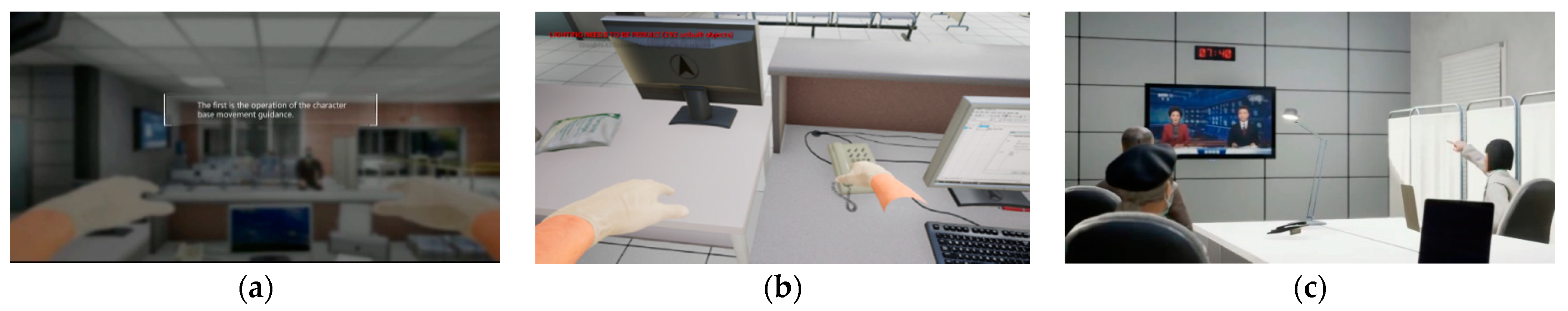

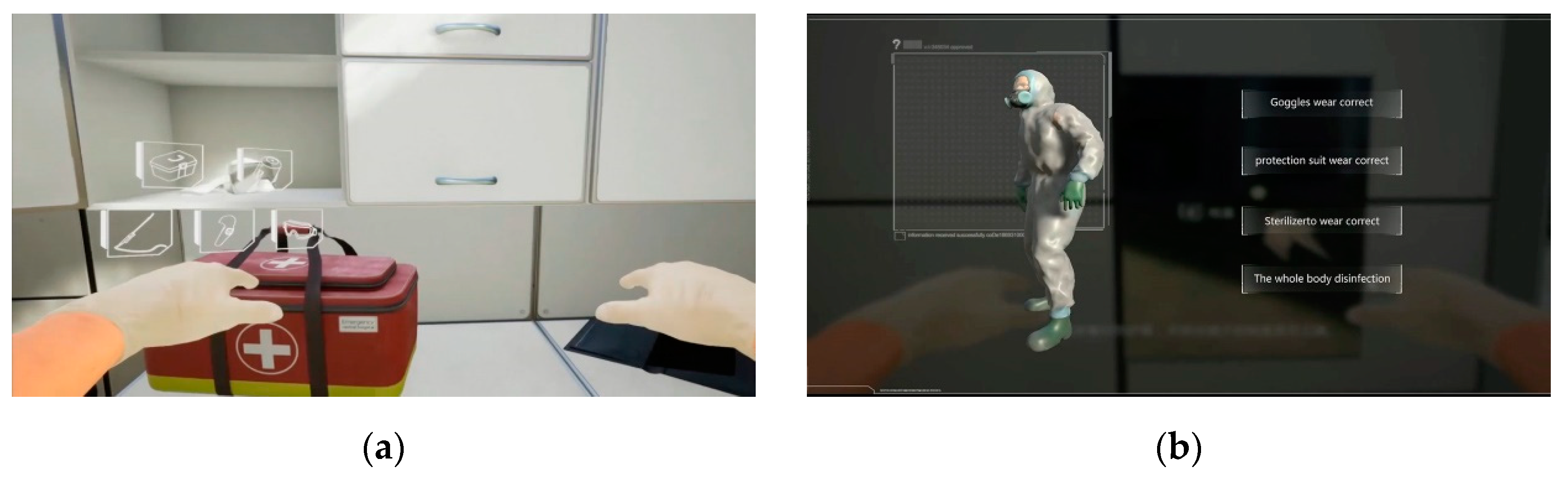

3.2.1. Tutorial Module

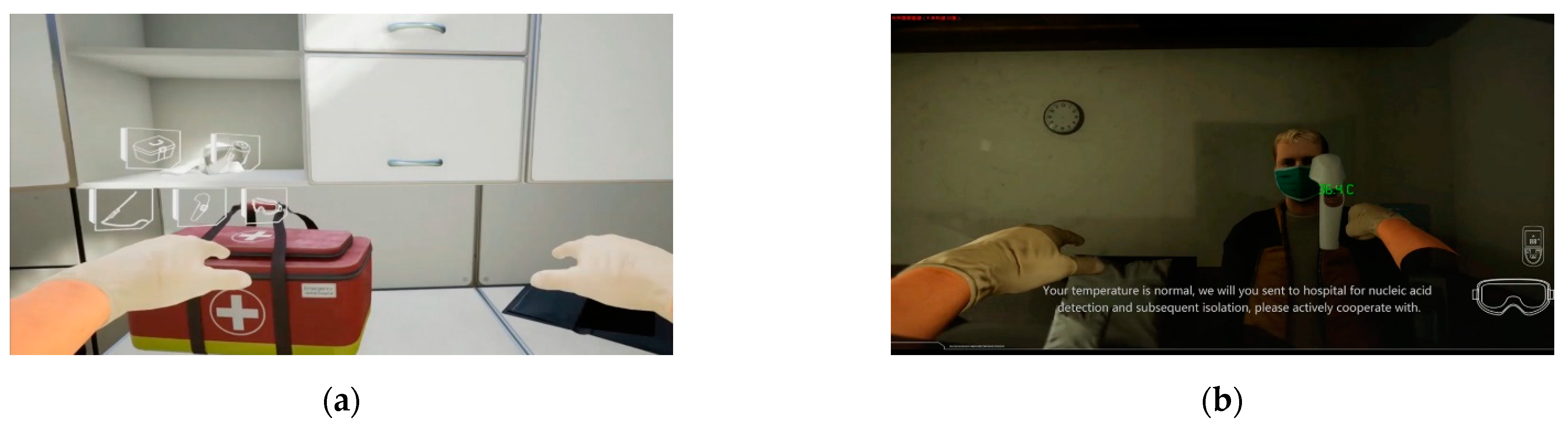

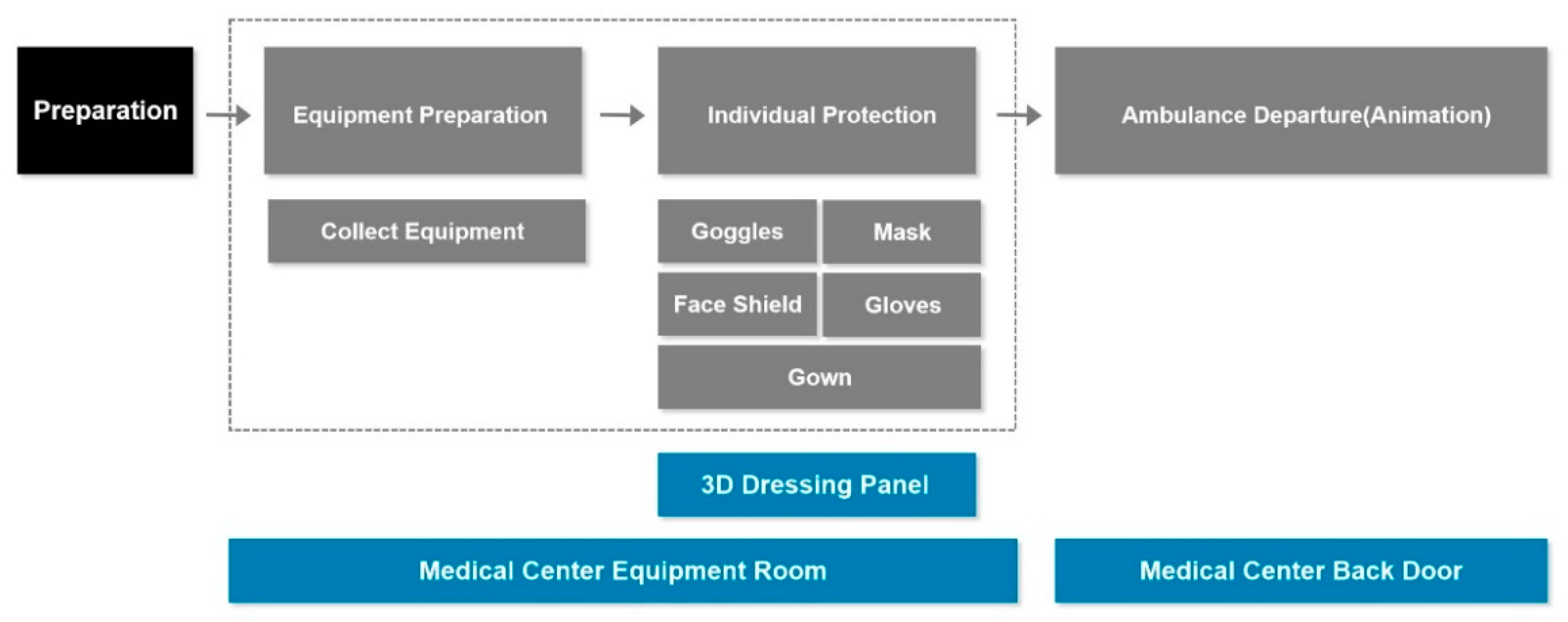

3.2.2. Preparation Module

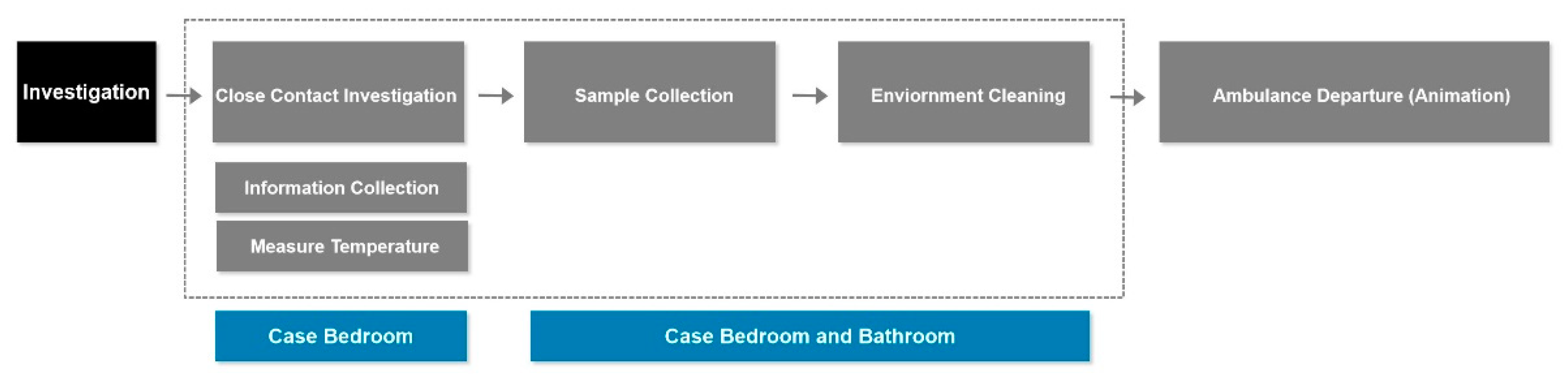

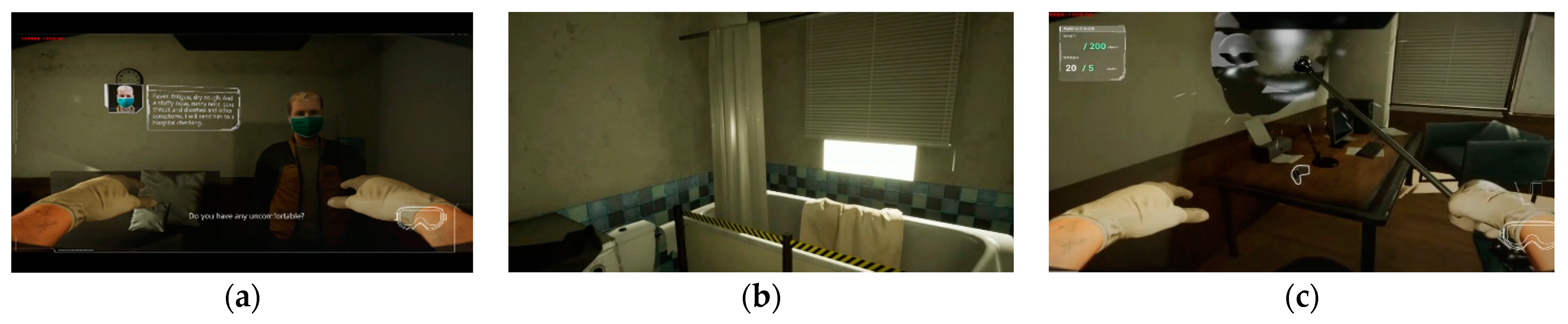

3.2.3. Investigation Module

4. Evaluation

- Measure the effectiveness of COVID-19 knowledge education (all media).

- Compare the effectiveness of digital and traditional education.

- Compare the effectiveness of VR devices and desktops.

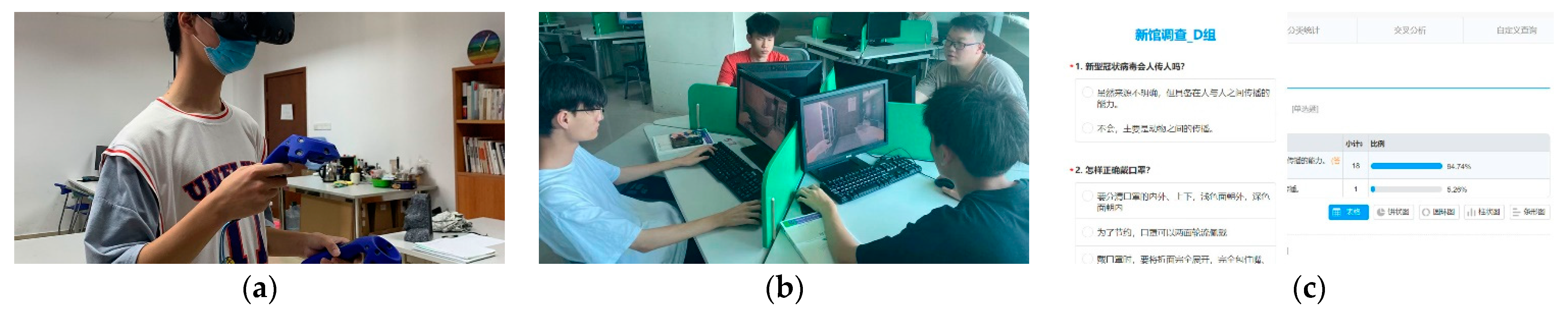

4.1. Participant

4.2. Tasks

4.2.1. Interactive Experiment

4.2.2. Questionnaire

4.3. Result and Analysis

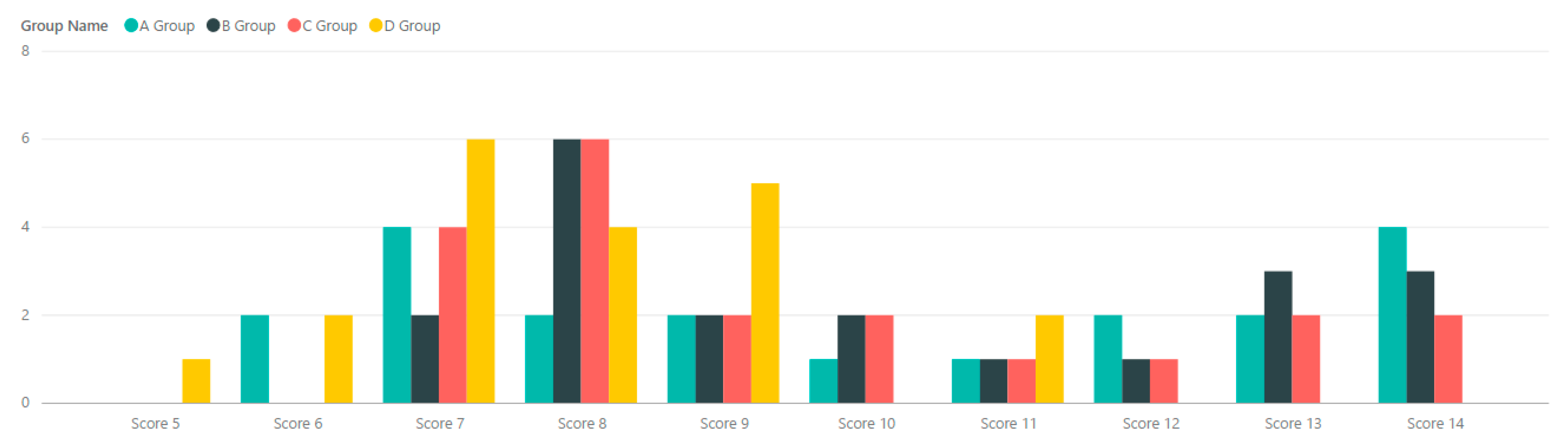

- The p-value of the user score is 0.0068 for Groups A and D, 0.0014 for Groups B and D, and 0.0148 for Groups C and D. Each value is below 0.05, which indicates that the difference in user score between each experimental group and the control group is significant.

- The p-value of the user score for Groups A and B is 0.865, which indicates that the difference in user score between Group A and Group B is not significant.

- It is encouraging that Groups A, B, and C all achieve a higher average correct rate than Group D (the control group). Both digital and traditional media can improve the knowledge level effectively.

- Groups A and B (digital media) score higher than Group C (traditional media). Interactive technologies can enhance the education experience and increase the correct rate.

- Group A uses VR devices with interactive hardware, yet its correct rate is 1 percentage point lower than that of Group B (desktop).

- Compared with Group A, which has four failure samples, Group B has a high completion rate: only one user could not complete the exploration.

- Group A has the best performance if the failure samples are filtered from the data. Its correct rate is then 73.75%, which is higher than the Group B figure (69.12%) among completed samples.
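As a rough consistency check on the last three points, the overall and completed-only correct rates imply an average for the users who did not finish. The sketch below assumes that the overall rate is an unweighted mean over all 20 participants in a group and that the reported percentages are exact; the study states neither, and the function name is illustrative.

```python
def implied_failed_rate(overall_pct, completed_pct, n_total, n_completed):
    """Average correct rate (%) implied for users who did not complete.

    Assumes overall_pct is an unweighted mean over all n_total users.
    """
    n_failed = n_total - n_completed
    if n_failed <= 0:
        raise ValueError("no failed users in this group")
    total_points = overall_pct * n_total
    completed_points = completed_pct * n_completed
    return (total_points - completed_points) / n_failed


# Group A: 20 users, 80% completed (16 users).
# Group B: 20 users, 95% completed (19 users).
group_a = implied_failed_rate(67.0, 73.75, n_total=20, n_completed=16)
group_b = implied_failed_rate(68.0, 69.12, n_total=20, n_completed=19)

print(f"Group A non-completers: {group_a:.2f}%")
print(f"Group B non-completers: {group_b:.2f}%")
```

Under these assumptions the Group A users who did not finish average roughly 40%, which is in line with the observation that incomplete sessions lower the overall Group A figure. Because the published rates are rounded, the result is only indicative.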

5. Conclusions and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Flavián, C.; Ibáñez-Sánchez, S.; Orús, C. The impact of virtual, augmented and mixed reality technologies on the customer experience. J. Bus. Res. 2019, 100, 547–560.

2. Xing, Y.; Liang, Z.; Shell, J.; Fahy, C.; Guan, K.; Liu, B. Historical Data Trend Analysis in Extended Reality Education Field. In Proceedings of the 2021 IEEE 7th International Conference on Virtual Reality, Foshan, China, 20–22 May 2021; pp. 434–440.

3. Arnaldi, B.; Guitton, P.; Moreau, G. (Eds.) Virtual Reality and Augmented Reality: Myths and Realities; John Wiley & Sons: Hoboken, NJ, USA, 2018.

4. Speicher, M.; Hall, B.D.; Nebeling, M. What is mixed reality? In Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems, Glasgow, UK, 4–9 May 2019; pp. 1–15.

5. Kirkley, S.E.; Kirkley, J.R. Creating next generation blended learning environments using mixed reality, video games and simulations. TechTrends 2005, 49, 42–53.

6. Garrett, M. Developing Knowledge for Real World Problem Scenarios: Using 3D Gaming Technology within a Problem-Based Learning Framework. 2012. Available online: https://ro.ecu.edu.au/theses/527/ (accessed on 1 September 2021).

7. Nincarean, D.; Alia, M.B.; Halim, N.D.A.; Rahman, M.H.A. Mobile Augmented Reality: The potential for education. Procedia-Soc. Behav. Sci. 2013, 103, 657–664.

8. Philippe, S.; Souchet, A.D.; Lameras, P.; Petridis, P.; Caporal, J.; Coldeboeuf, G.; Duzan, H. Multimodal teaching, learning and training in virtual reality: A review and case study. Virtual Real. Intell. Hardw. 2020, 2, 421–442.

9. Liang, Z.; Xing, Y.; Guan, K.; Da, Z.; Fan, J.; Wu, G. Design Virtual Reality Simulation System for Epidemic (Covid-19) Education to Public. In Proceedings of the 4th International Conference on Control and Computer Vision (ICCCV 2021), Macau, China, 20–22 May 2021; pp. 147–152.

10. Negera, E.; Demissie, T.M.; Tafess, K. Inadequate Level of Knowledge, Mixed Outlook and Poor Adherence to COVID-19 Prevention Guideline among Ethiopians. 2020. Available online: https://www.biorxiv.org/content/10.1101/2020.07.22.215590v2/s (accessed on 10 September 2021).

11. Yang, D.Y.; Cheng, S.Y.; Wang, S.Z.; Wang, J.S.; Kuang, M.; Wang, T.H.; Xiao, H.P. Preparedness of medical education in China: Lessons from the COVID-19 outbreak. Med. Teach. 2020, 42, 787–790.

12. Ahir, K.; Govani, K.; Gajera, R.; Shah, M. Application on Virtual Reality for Enhanced Education Learning. Mil. Train. Sports 2020, 5, 1–9.

13. World Meter Covid-19 Live Update. Reported Cases and Deaths by Country or Territory. Available online: https://www.worldometers.info/coronavirus/#countries (accessed on 27 August 2021).

14. Geldsetzer, P. Knowledge and perceptions of COVID-19 among the general public in the United States and the United Kingdom: A cross-sectional online survey. Ann. Intern. Med. 2020, 173, 157–160.

15. CDC Healthy Schools. Infectious Disease Epidemiology. Available online: https://www.cdc.gov/healthyschools/bam/teachers/epi.html (accessed on 28 October 2021).

16. Danilicheva, P.; Klimenko, S.; Baturin, Y.; Serebrov, A. Education in virtual worlds: Virtual storytelling. In Proceedings of the 2009 International Conference on CyberWorlds, Bradford, UK, 7–11 September 2009; pp. 333–338.

17. Hartson, R.; Pyla, P. The UX Book: Process and Guidelines for Ensuring a Quality User Experience; Elsevier: Amsterdam, The Netherlands, 2012.

18. Hassenzahl, M.; Tractinsky, N. User experience-a research agenda. Behav. Inf. Technol. 2006, 25, 91–97.

19. Charles, F.; Lemercier, S.; Vogt, T.; Bee, N.; Mancini, M.; Urbain, J.; Price, M.; André, E.; Pélachaud, C.; Cavazza, M. Affective Interactive Narrative in the CALLAS Project. In Proceedings of the 2007 4th International Conference on Virtual Storytelling, Saint-Malo, France, 5–7 December 2007; pp. 210–213.

20. Rizvic, S.; Boskovic, D.; Okanovic, V.; Sljivo, S.; Zukic, M. Interactive digital storytelling: Bringing cultural heritage in a classroom. J. Comput. Educ. 2019, 6, 143–166.

21. Tran, B.X.; Dang, A.K.; Thai, P.K.; Le, H.T.; Le, X.T.T.; Do, T.T.T.; Nguyen, T.H.; Pham, H.Q.; Phan, H.T.; Vu, G.T. Coverage of health information by different sources in communities: Implication for COVID-19 epidemic response. Int. J. Environ. Res. Public Health 2020, 17, 3577.

22. Ağalar, C.; Engin, D.Ö. Protective measures for COVID-19 for healthcare providers and laboratory personnel. Turk. J. Med. Sci. 2020, 50, 578–584.

23. 360 Rumors. VR Users Percentage Increased 63% in the Past Year. Valve’s Hardware Survey Shows. Available online: https://360rumors.com/vr-users-percentage-increased-63-past-year-valves-hardware-survey-shows/ (accessed on 27 May 2021).

24. Hussain, J.; Hassan, A.U.; Bilal, H.S.M.; Ali, R.; Afzal, M.; Hussain, S.; Bang, J.; Banos, O.; Lee, S. Model-based adaptive user interface based on context and user experience evaluation. J. Multimodal User Interfaces 2018, 12, 1–16.

| Desktop Control Mode | VR Control Mode (Oculus Touch) | Interactive Result |
|---|---|---|
| Mouse X, Y, Z axis | User Head Movement | Camera Direction Movement |
| Mouse Right Click | Right Hand Controller First Trigger | Right Hand Grasp |
| Mouse Left Click | Left Hand Controller First Trigger | Left Hand Grasp |
| Keyboard W/A/S/D button | Controller Movement | User Movement |
| Keyboard Left Shift button & Mouse Movement | Left Hand Controller Thumbstick | Left Hand Movement |
| Keyboard Left Alt button & Mouse Movement | Right Hand Controller Thumbstick | Right Hand Movement |
| Keyboard ESC button | Reserved Button | Pause System |
| Keyboard F button | Button.One | Turn on Selected Medical Tool |
| Keyboard Q button | Button.Two | Wear Protective Glasses |
| Keyboard Tab button | Button.Three | Check Medical Backpack |
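The mapping in the table above can be read as a lookup from a platform-specific input to a shared interactive result. The Python sketch below only illustrates that idea: the system itself is built with Unreal Engine 4 Blueprint scripts, and the names `BINDINGS` and `resolve_action` and the mode keys `"desktop"` and `"vr"` are hypothetical. Only a subset of rows is shown.

```python
from typing import Optional

# Each interactive result lists the input that triggers it in each control mode.
# Input labels are copied from the table; the data structure is an assumption.
BINDINGS = {
    "Camera Direction Movement": {
        "desktop": "Mouse X, Y, Z axis",
        "vr": "User Head Movement",
    },
    "Right Hand Grasp": {
        "desktop": "Mouse Right Click",
        "vr": "Right Hand Controller First Trigger",
    },
    "User Movement": {
        "desktop": "Keyboard W/A/S/D button",
        "vr": "Controller Movement",
    },
    "Pause System": {
        "desktop": "Keyboard ESC button",
        "vr": "Reserved Button",
    },
    "Turn on Selected Medical Tool": {
        "desktop": "Keyboard F button",
        "vr": "Button.One",
    },
}


def resolve_action(mode: str, raw_input: str) -> Optional[str]:
    """Return the interactive result bound to raw_input in the given mode."""
    for action, inputs in BINDINGS.items():
        if inputs.get(mode) == raw_input:
            return action
    return None  # unbound input or unknown mode


print(resolve_action("desktop", "Keyboard F button"))
print(resolve_action("vr", "Button.One"))
```

Keying the table by interactive result keeps the gameplay logic identical across platforms; only the input column differs between the desktop and VR modes.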

| | Group A | Group B | Group C | Group D |
|---|---|---|---|---|
| Participants | 20 | 20 | 20 | 20 |
| Media (Condition) | HTC Vive | Desktop | Brochure | None |
| Background | Bachelor Student | Bachelor Student | Bachelor Student | Bachelor Student |

| | Single Choices | Multiple Choices | Judgment Question |
|---|---|---|---|
| Tutorial Module | 1 | 1 | 1 |
| Preparation Module | 2 | 1 | 1 |
| Investigation Module | 1 | 2 | 1 |

| Compared Group | SS | df | MS | F | p-value | F crit |
|---|---|---|---|---|---|---|
| Group A and Group D | 46.225 | 1 | 46.225 | 8.17 | 0.0068 | 4.0981 |
| Group B and Group D | 52.9 | 1 | 52.9 | 11.75 | 0.0014 | 4.0981 |
| Group C and Group D | 27.225 | 1 | 27.225 | 6.51 | 0.0148 | 4.0981 |
| Group A and Group B | 0.225 | 1 | 0.225 | 0.029 | 0.865 | 4.0981 |
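The p-values in the ANOVA results above can be reproduced approximately from the reported F statistics. The sketch below assumes a one-way ANOVA on two groups of 20 participants each, giving 1 and 38 degrees of freedom; the within-group degrees of freedom are not reported, although 38 is consistent with the F crit value of 4.0981 at the 0.05 level. With one between-group degree of freedom, F equals the square of a t statistic, so the p-value is a two-sided Student's t tail probability. The code uses only the standard library with Simpson's rule, and the function names are illustrative.

```python
import math


def t_pdf(x: float, df: int) -> float:
    """Density of Student's t distribution with df degrees of freedom."""
    log_norm = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
                - 0.5 * math.log(df * math.pi))
    return math.exp(log_norm - (df + 1) / 2 * math.log1p(x * x / df))


def p_value_from_f(f_stat: float, df_within: int, steps: int = 2000) -> float:
    """p-value of an F(1, df_within) statistic via the equivalent t test.

    Integrates the t density from 0 to sqrt(F) with Simpson's rule
    (steps must be even) and returns the two-sided tail area.
    """
    t = math.sqrt(f_stat)
    h = t / steps
    total = t_pdf(0.0, df_within) + t_pdf(t, df_within)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df_within)
    central_area = total * h / 3  # P(0 <= T <= t)
    return 1.0 - 2.0 * central_area


DF_WITHIN = 2 * 20 - 2  # assumed: two groups of 20 participants each

# F statistics as reported in the table.
reported_f = {"A vs D": 8.17, "B vs D": 11.75, "C vs D": 6.51, "A vs B": 0.029}
p_values = {pair: p_value_from_f(f, DF_WITHIN) for pair, f in reported_f.items()}

for pair, p in p_values.items():
    print(f"Group {pair}: F = {reported_f[pair]}, p = {p:.4f}")
```

Because the F values in the table are rounded, the recomputed p-values should agree with the reported ones only approximately.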

| | Group A | Group B | Group C | Group D |
|---|---|---|---|---|
| Average Correct Rate (%) | 67% | 68% | 63.66% | 52.98% |
| Completed Rate | 80% | 95% | 100% | / |
| Average Correct Rate (Completed Units) | 73.75% | 69.12% | / | / |

| Failure User | User Group | Fail Reason | Ending Module |
|---|---|---|---|
| User 1 | Group B | Not enough time | Investigation Module |
| User 2 | Group A | Not enough time | Investigation Module |
| User 3 | Group A | Not enough time | Investigation Module |
| User 4 | Group A | Hard to control | Preparation Module |
| User 5 | Group A | Hard to control | Preparation Module |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Xing, Y.; Liang, Z.; Fahy, C.; Shell, J.; Guan, K.; Liu, Y.; Zhang, Q. Virtual Reality Research: Design Virtual Education System for Epidemic (COVID-19) Knowledge to Public. Appl. Sci. 2021, 11, 10586. https://doi.org/10.3390/app112210586
